Monte Carlo Tree Search A Tutorial Supported by

Monte Carlo Tree Search A Tutorial Supported by the Engineering and Physical Sciences Research Council (EPSRC) Peter Cowling University of York, UK peter. cowling@york. ac. uk Simon Lucas University of Essex, UK sml@essex. ac. uk

Further Reading Browne C. , Powley E. , Whitehouse D. , Lucas S. , Cowling P. I. , Rohlfshagen P. , Tavener S. , Perez D. and Samothrakis S. , Colton S. (2012): "A Survey of Monte Carlo Tree Search Methods" IEEE Transactions on Computational Intelligence and AI in Games, IEEE, 4 (1): 1 -43.

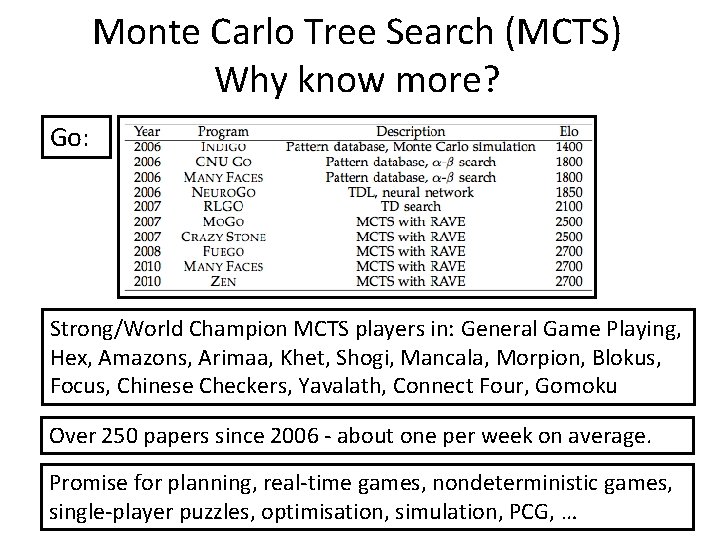

Monte Carlo Tree Search (MCTS) Why know more? Go: Strong/World Champion MCTS players in: General Game Playing, Hex, Amazons, Arimaa, Khet, Shogi, Mancala, Morpion, Blokus, Focus, Chinese Checkers, Yavalath, Connect Four, Gomoku Over 250 papers since 2006 - about one per week on average. Promise for planning, real-time games, nondeterministic games, single-player puzzles, optimisation, simulation, PCG, …

Tutorial Structure • Monte Carlo search – The multi-armed bandit problem • Monte Carlo Tree Search (MCTS) – Selection, expansion, simulation, backpropagation • • Enhancements to the basic algorithm Handling uncertainty in MCTS for real-time games Conclusions, future research directions

Monte Carlo Search for Decision Making in Games No trees yet …

The Multi-Armed Bandit Problem At each step pull one arm Noisy/random reward signal In order to: Find the best arm Minimise regret Maximise expected return

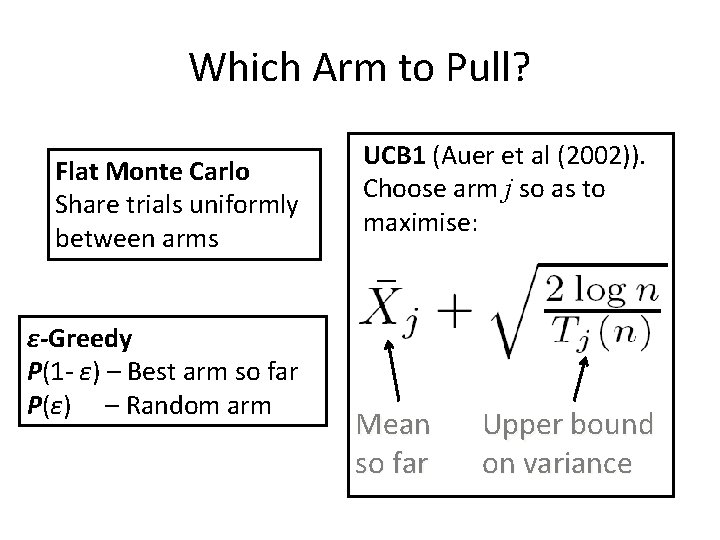

Which Arm to Pull? Flat Monte Carlo Share trials uniformly between arms ε-Greedy P(1 - ε) – Best arm so far P(ε) – Random arm UCB 1 (Auer et al (2002)). Choose arm j so as to maximise: Mean so far Upper bound on variance

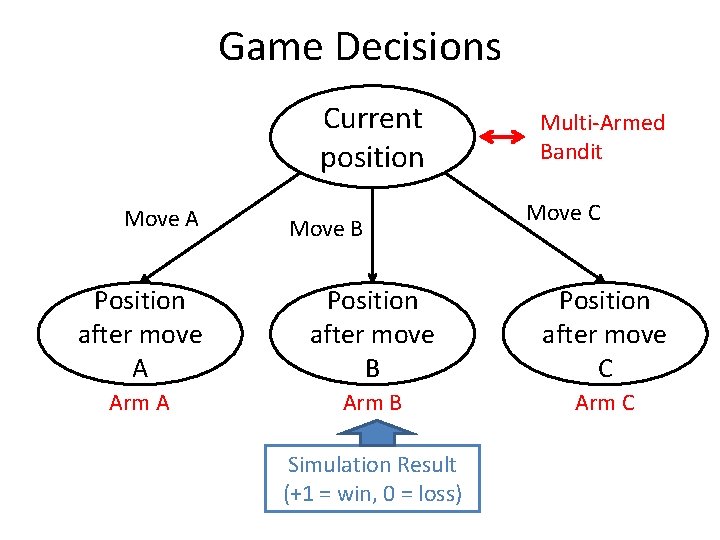

Game Decisions Current position Move A Move B Multi-Armed Bandit Move C Position after move A Position after move B Position after move C Arm A Arm B Arm C Simulation Result (+1 = win, 0 = loss)

Discussion • Anytime – stop whenever you like • UCB 1 formula minimises regret – Grows like log(n) • Needs only game rules: – Move generation – Terminal state evaluation • Surprisingly effective, but… … doesn’t look ahead

MCTS / UCT Basics

Attractive Features • Anytime • Scalable – Tackle complex games better than before – May be logarithmically better with increased CPU • No need for heuristic function – Though usually better with one • Next we’ll look at: – General MCTS – UCT in particular

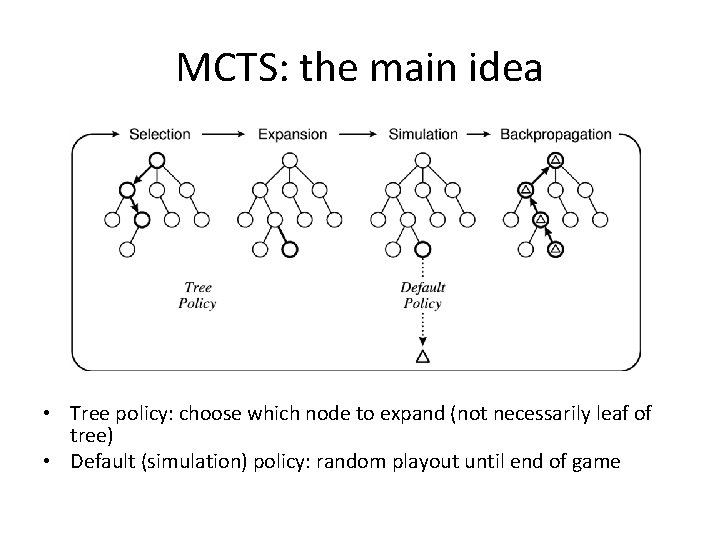

MCTS: the main idea • Tree policy: choose which node to expand (not necessarily leaf of tree) • Default (simulation) policy: random playout until end of game

MCTS Algorithm • Decompose into 6 parts: • MCTS main algorithm – Tree policy • Expand • Best Child (UCT Formula) – Default Policy – Back-propagate • We’ll run through these then show demos

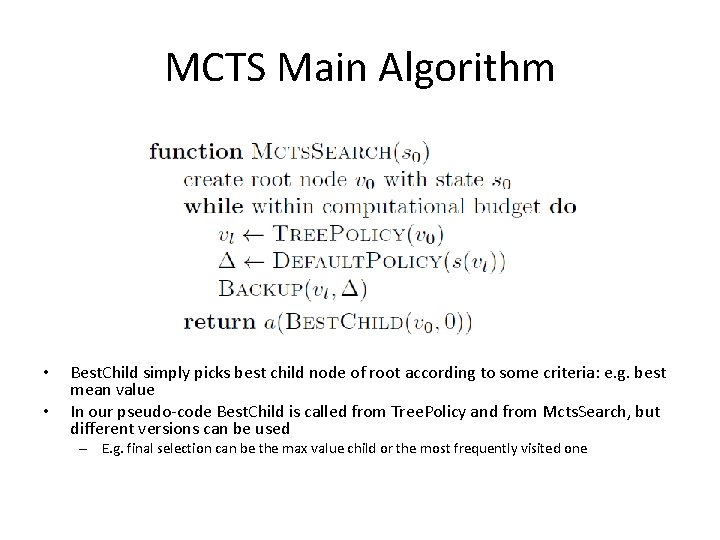

MCTS Main Algorithm • • Best. Child simply picks best child node of root according to some criteria: e. g. best mean value In our pseudo-code Best. Child is called from Tree. Policy and from Mcts. Search, but different versions can be used – E. g. final selection can be the max value child or the most frequently visited one

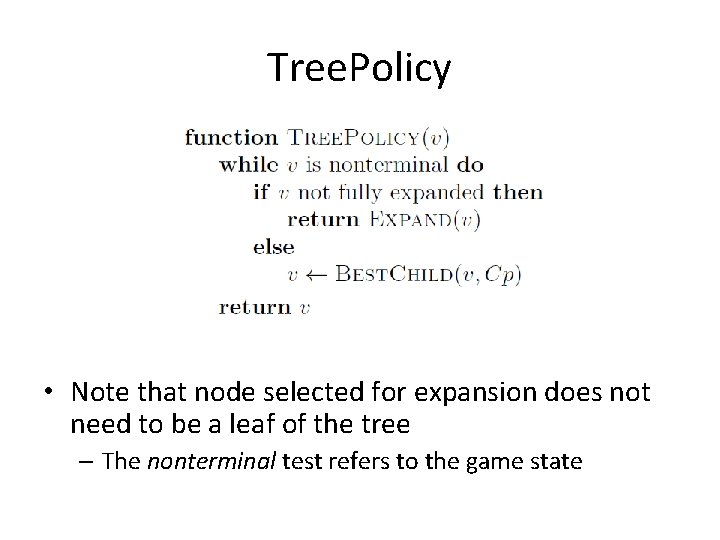

Tree. Policy • Note that node selected for expansion does not need to be a leaf of the tree – The nonterminal test refers to the game state

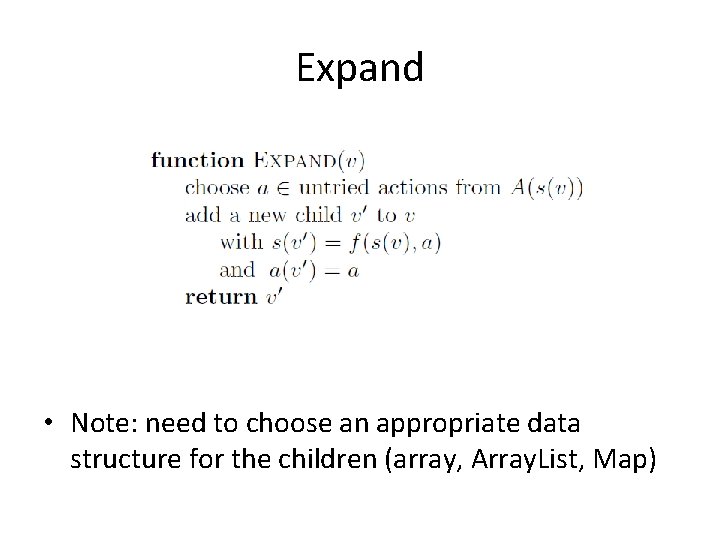

Expand • Note: need to choose an appropriate data structure for the children (array, Array. List, Map)

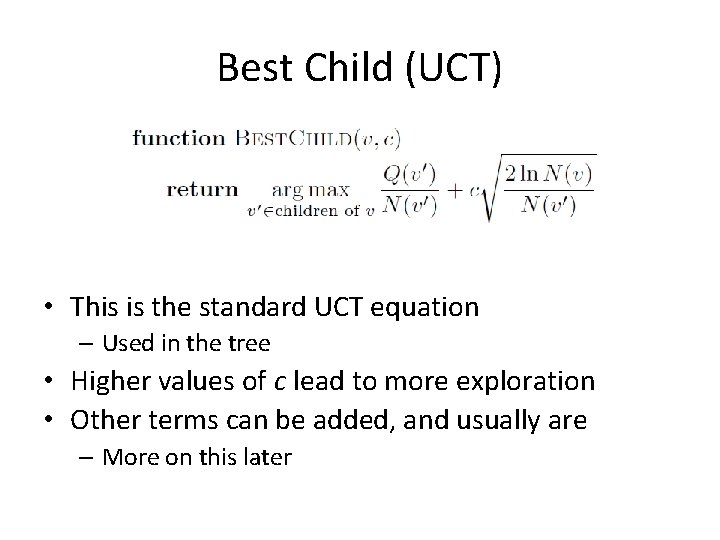

Best Child (UCT) • This is the standard UCT equation – Used in the tree • Higher values of c lead to more exploration • Other terms can be added, and usually are – More on this later

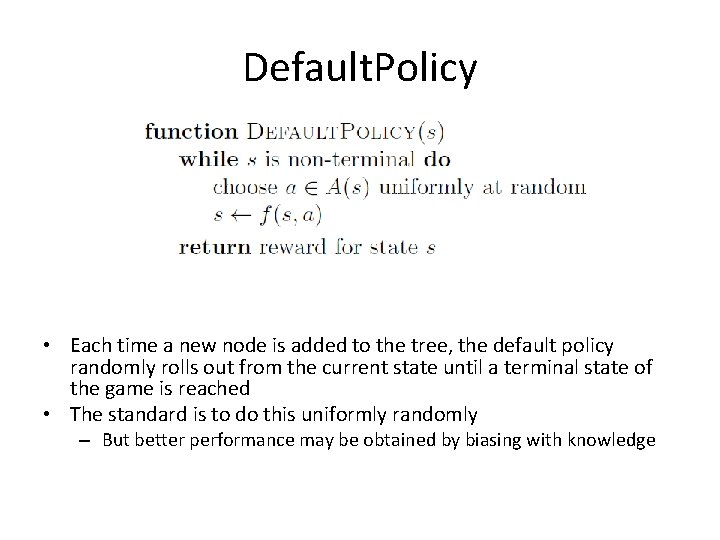

Default. Policy • Each time a new node is added to the tree, the default policy randomly rolls out from the current state until a terminal state of the game is reached • The standard is to do this uniformly randomly – But better performance may be obtained by biasing with knowledge

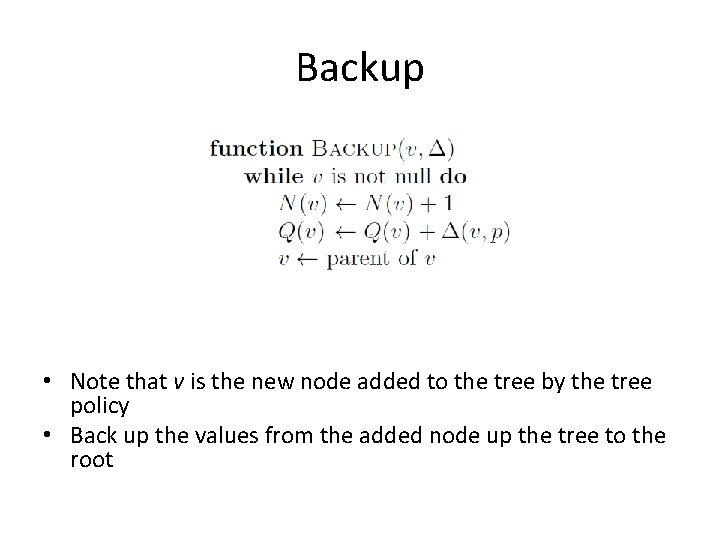

Backup • Note that v is the new node added to the tree by the tree policy • Back up the values from the added node up the tree to the root

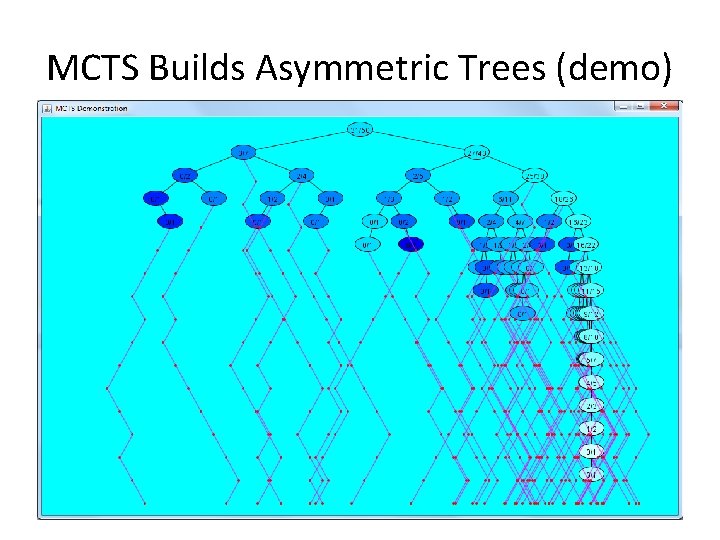

MCTS Builds Asymmetric Trees (demo)

Connect 4 Demo

Enhancements to the Basic MCTS Algorithm Tree Policy Default Policy Domain: independent or specific

Selection/Expansion Enhancements (just a sample …) • AMAF / RAVE • First Play Urgency • Learning – E. g. Temporal Difference Learning to bias tree policy or default policy • Bandit enhancements – E. g. Bayesian selection • Parameter Tuning

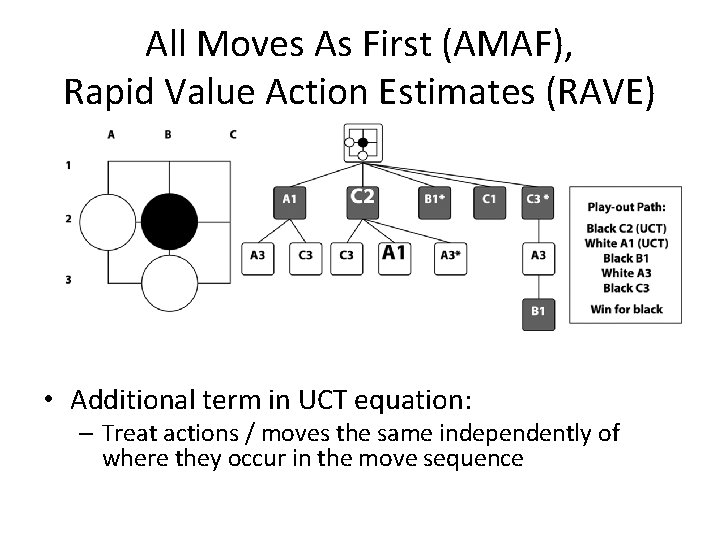

All Moves As First (AMAF), Rapid Value Action Estimates (RAVE) • Additional term in UCT equation: – Treat actions / moves the same independently of where they occur in the move sequence

Simulation Enhancements • Nudge the simulation to be more realistic – Without introducing bias – At very low CPU cost • Examples: – Move-Average Sampling Technique (MAST) – Last Good Reply (LGR) – Patterns e. g. Bridge Completion: – Heuristic value functions • Paradoxically better simulation policy sometimes yields weaker play

Hex Demo

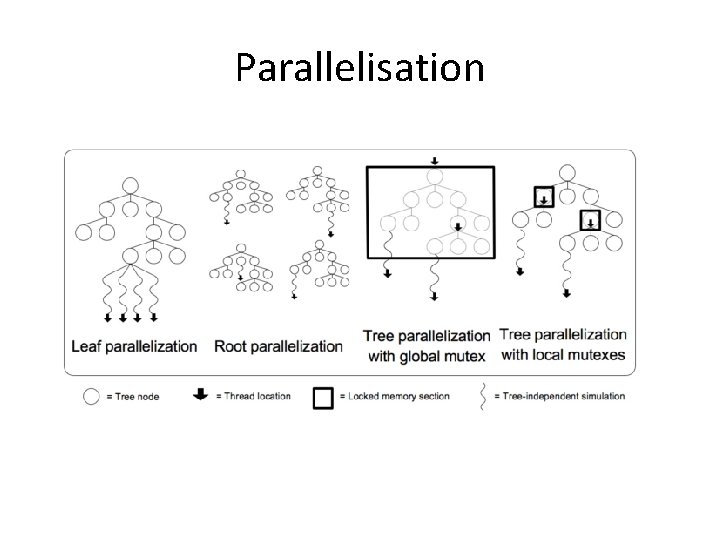

Parallelisation

Handling Uncertainty and Incomplete Information in MCTS

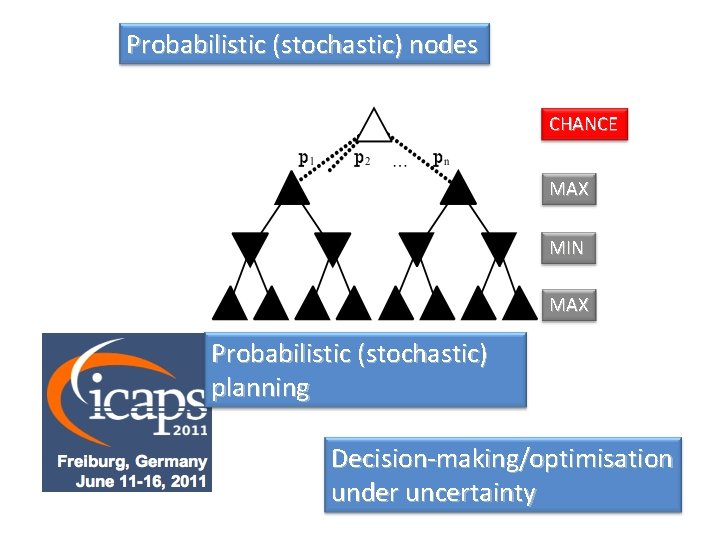

Probabilistic (stochastic) nodes CHANCE MAX MIN MAX Probabilistic (stochastic) planning Decision-making/optimisation under uncertainty

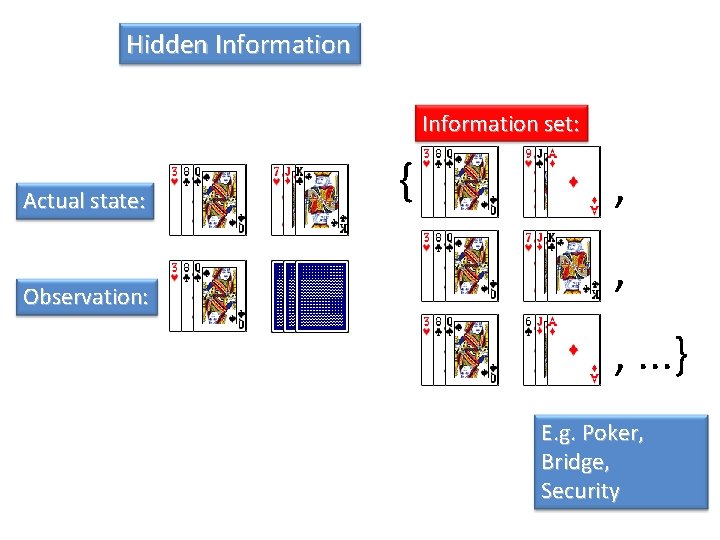

Hidden Information set: Actual state: Observation: { , , , . . . } E. g. Poker, Bridge, Security

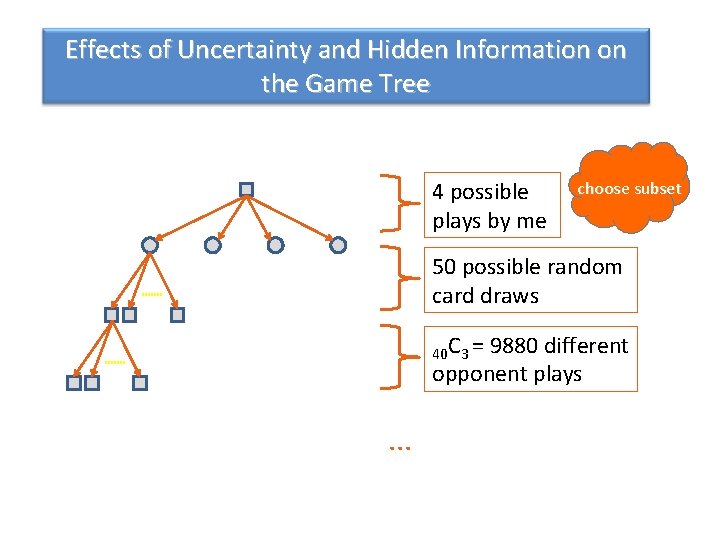

Effects of Uncertainty and Hidden Information on the Game Tree 4 possible plays by me choose subset 50 possible random card draws 40 C 3 = 9880 different opponent plays . . .

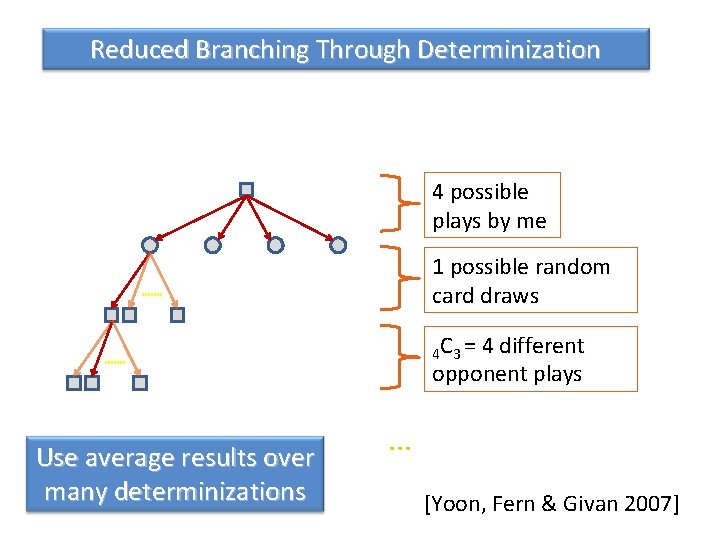

Reduced Branching Through Determinization 4 possible plays by me 1 possible random card draws 4 C 3 = 4 different opponent plays Use average results over many determinizations . . . [Yoon, Fern & Givan 2007]

Determinization • • Sample states from the information set Analyse the individual perfect information games Combine the results at the end Successes – Bridge (Ginsberg), Scrabble (Sheppard) – Klondike solitaire (Bjarnason, Fern and Tadepalli) – Probabilistic planning (Yoon, Fern and Givan) • Problems – Never tries to gather or hide information – Suffers from strategy fusion and non-locality (Frank and Basin) M. L. Ginsberg, “GIB: Imperfect information in a computationally challenging game, ” Journal of Artificial Intelligence Research, vol. 14, 2002, p. 313– 368. R. Bjarnason, A. Fern, and P. Tadepalli, “Lower bounding Klondike solitaire with Monte-Carlo planning, ” Proc. ICAPS-2009, p. 26– 33. S. Yoon, A. Fern, and R. Givan, “FF-Replan: A Baseline for Probabilistic Planning, ” 1 Proc. ICAPS-2007, p. 352– 359. I. Frank and D. Basin, “Search in games with incomplete information: a case study using Bridge card play, ” Artificial Intelligence, vol. 100, 1998, pp. 87 -123. 33

Cheating • Easiest approach to AI for games with imperfect information: cheat and look at the hidden information and outcomes of future chance events • This gives a deterministic game of perfect information, which can be searched with standard techniques (minimax, UCT, …) • Obviously not a reasonable approach in practice, but gives a useful bound on performance • Much used in commercial games

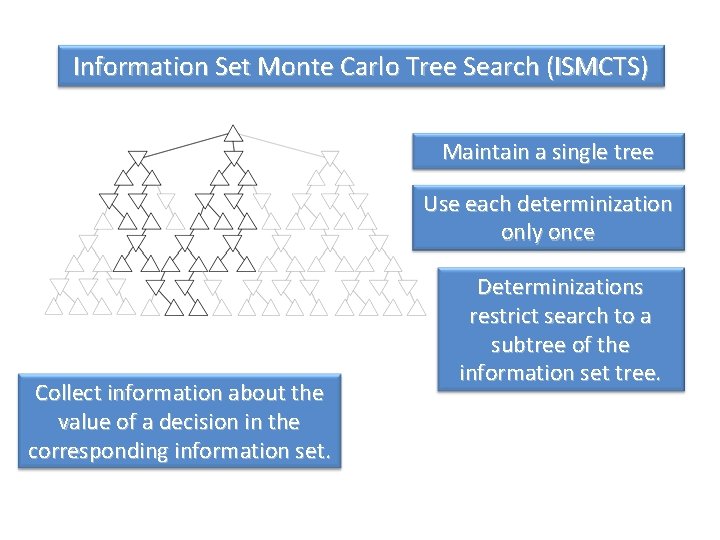

Information Set Monte Carlo Tree Search (ISMCTS) Maintain a single tree Use each determinization only once Collect information about the value of a decision in the corresponding information set. Determinizations restrict search to a subtree of the information set tree.

Lord of the Rings: The Confrontation • Board game designed by Reiner Knizia • Hidden information: can’t see the identities of opponent’s pieces • Simultaneous moves: combat is resolved by both players simultaneously choosing a card Also Phantom 4, 4, 4, Dou Di Zhu and several other card games…

MCTS for Real-Time Games • Limited roll-out budget – Heuristic knowledge becomes important • Action space may be too fine-grained – Take Macro-actions – Otherwise planning will be very short-term • May be no terminal node in sight – Again, use heuristic – Tune simulation depth • Next-state function may be expensive – Consider making a simpler abstraction

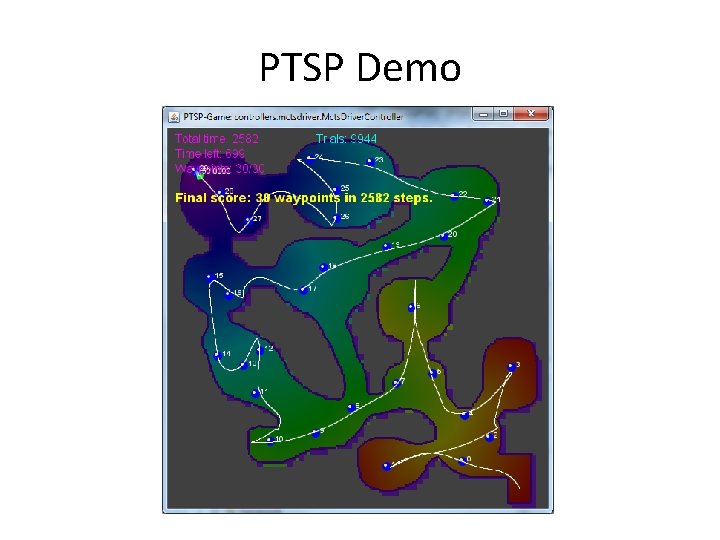

PTSP Demo

Summary • MCTS: exciting area of research • Many impressive achievements already – With many more to come • Some interesting directions: – Applications beyond games – Comparisons with other search methods – Heuristic knowledge is important – more work needed on learning it – Efficient parameter tuning – POMDPs – Uncertainty – Macro-actions – Hybridisation with other CI methods e. g. Evolution

Questions? Browne C. , Powley E. , Whitehouse D. , Lucas S. , Cowling P. I. , Rohlfshagen P. , Tavener S. , Perez D. and Samothrakis S. , Colton S. (2012): "A Survey of Monte Carlo Tree Search Methods" IEEE Transactions on Computational Intelligence and AI in Games, IEEE, 4 (1): 1 -43.

Advertising Break • Sep 28: Essex workshop on AI and Games – Strong MCTS Theme – Organised by Essex Game Intelligence Group • Next week: AIGAMEDEV Vienna AI summit – Find out what games industry people think – Resistance competition and MCTS tutorial (!)

- Slides: 41