
XROOTD tests
Max Baak & Matthias Schott
ADP meeting, 18 Jan '09
Thanks to Andreas Peters!

Outline
§ Castor background & changes
§ What changes for you?
§ XROOTD speed tests

Castor
§ CASTOR default pool: the place to copy data to with commands like:
  • rfcp MyFileName /castor/cern.ch/user/<letter>/<UID>/MyDirectory/MyFileName
§ Default CASTOR pool: 60 TB disk pool with a tape back-end.
  • When the disk is full, older files are migrated to tape automatically to make space for newer files arriving on disk.
§ CERN has only one tape system, managed by the Central Computing Operations group.
  • Shared by all experiments.

Castor problems
§ CASTOR:
  • In theory:
    Ø "Pool with infinite space". Sometimes delays to get files back from tape.
  • In practice:
    Ø Source of many problems and user frustration.
§ CASTOR problems:
  • Tape systems are not designed for small files: very inefficient.
    Ø Preferred file size >= 1 GB
  • If the CERN tape system is used heavily, data takes a long time to be migrated back to disk, and applications time out.
§ Typical user: uncontrolled/chaotic access to the tape system
  • 'Lock-up' when there are too many open network connections.
  • Could easily lead to situations which endanger data taking.
    Ø Several such situations have already occurred, even without the LHC running.

Changes to Castor
§ LHC data-taking mode:
  • Protect the tape system from users, to make sure it performs well and in a controlled way when taking data.
§ Consequence:
  • The CASTOR pool becomes a disk pool only.
  • The default CASTOR tape back-end will be closed down for users.
    Ø Write access to tape is disabled for users.
§ Full information:
  • https://twiki.cern.ch/twiki/bin/view/Atlas/CastorDefaultPoolRestrictions

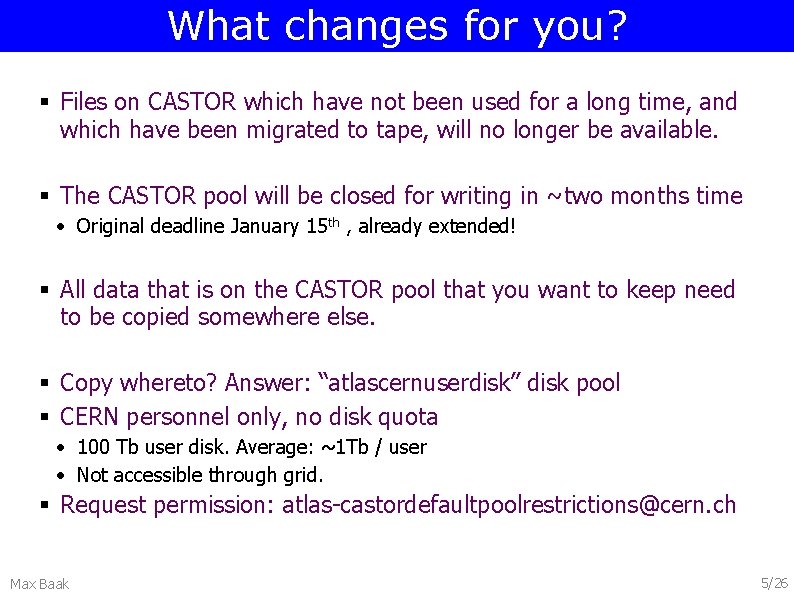

What changes for you?
§ Files on CASTOR which have not been used for a long time, and which have been migrated to tape, will no longer be available.
§ The CASTOR pool will be closed for writing in ~two months' time.
  • The original deadline was January 15th; already extended!
§ All data on the CASTOR pool that you want to keep needs to be copied somewhere else.
§ Copy where to? Answer: the "atlascernuserdisk" disk pool
§ CERN personnel only, no disk quota
  • 100 TB user disk. Average: ~1 TB / user
  • Not accessible through the grid.
§ Request permission: atlas-castordefaultpoolrestrictions@cern.ch

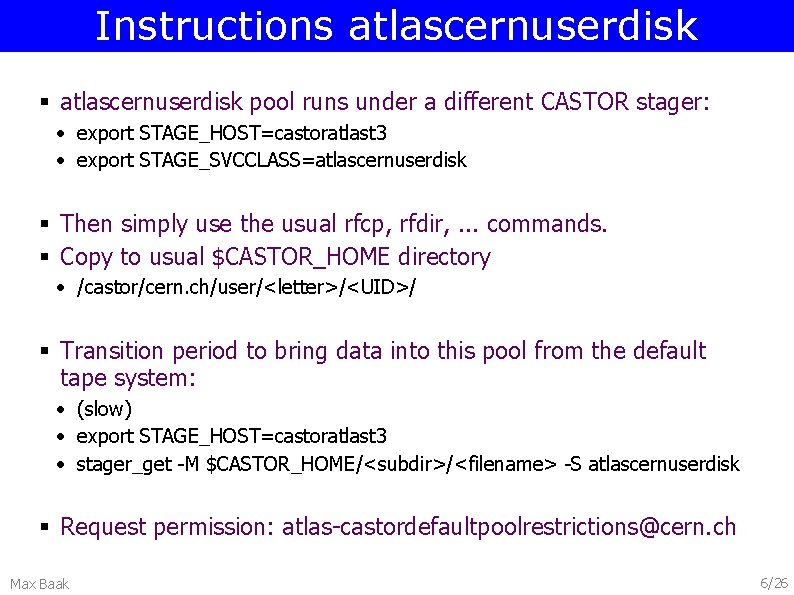

Instructions atlascernuserdisk
§ The atlascernuserdisk pool runs under a different CASTOR stager:
  • export STAGE_HOST=castoratlast3
  • export STAGE_SVCCLASS=atlascernuserdisk
§ Then simply use the usual rfcp, rfdir, ... commands.
§ Copy to the usual $CASTOR_HOME directory
  • /castor/cern.ch/user/<letter>/<UID>/
§ Transition period: to bring data into this pool from the default tape system (slow):
  • export STAGE_HOST=castoratlast3
  • stager_get -M $CASTOR_HOME/<subdir>/<filename> -S atlascernuserdisk
§ Request permission: atlas-castordefaultpoolrestrictions@cern.ch
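The copy step above can be wrapped in a small helper. This is a minimal sketch, assuming Python is available on the client machine; the function names `castor_home` and `castor_copy_command`, the file name `ntuple.root`, and the subdirectory are illustrative and not part of the CASTOR tooling. It only builds the environment and the `rfcp` argument list and does not run anything.

```python
import os

# Stager settings quoted on the slide for the atlascernuserdisk pool.
POOL_ENV = {
    "STAGE_HOST": "castoratlast3",
    "STAGE_SVCCLASS": "atlascernuserdisk",
}


def castor_home(user: str) -> str:
    """Return /castor/cern.ch/user/<letter>/<UID> for a user name."""
    return f"/castor/cern.ch/user/{user[0]}/{user}"


def castor_copy_command(local_file: str, user: str, subdir: str = ""):
    """Build (env, argv) for an rfcp copy into the user's CASTOR home (hypothetical helper)."""
    env = dict(os.environ, **POOL_ENV)
    target_dir = castor_home(user)
    if subdir:
        target_dir = f"{target_dir}/{subdir.strip('/')}"
    target = f"{target_dir}/{os.path.basename(local_file)}"
    return env, ["rfcp", local_file, target]


env, argv = castor_copy_command("ntuple.root", "mbaak", "MyDirectory")
print(" ".join(argv))
```

On a machine with the CASTOR client installed, the pair could be handed to `subprocess.run(argv, env=env)`; keeping the build step separate makes it easy to inspect the command first.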

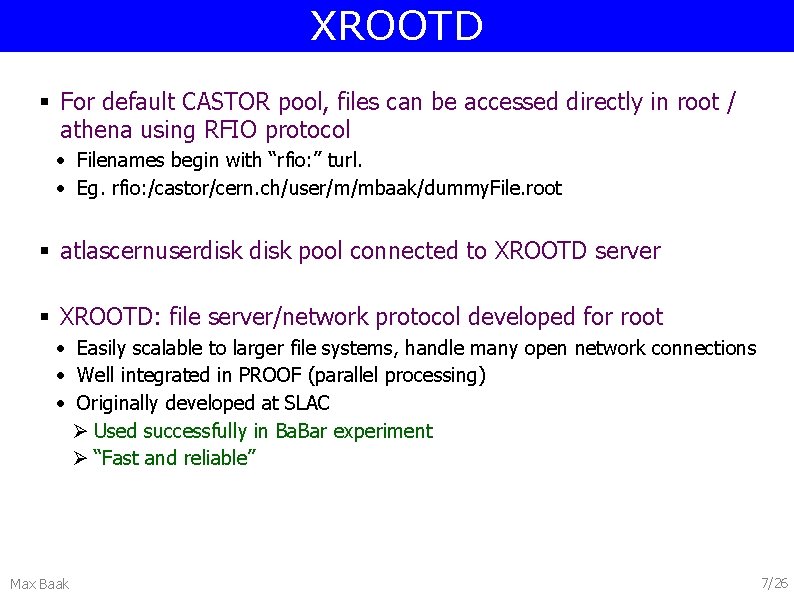

XROOTD
§ For the default CASTOR pool, files can be accessed directly in ROOT / Athena using the RFIO protocol.
  • File names (TURLs) begin with "rfio:".
  • E.g. rfio:/castor/cern.ch/user/m/mbaak/dummyFile.root
§ The atlascernuserdisk pool is connected to an XROOTD server.
§ XROOTD: file server / network protocol developed for ROOT
  • Easily scalable to larger file systems; handles many open network connections
  • Well integrated in PROOF (parallel processing)
  • Originally developed at SLAC
    Ø Used successfully in the BaBar experiment
    Ø "Fast and reliable"

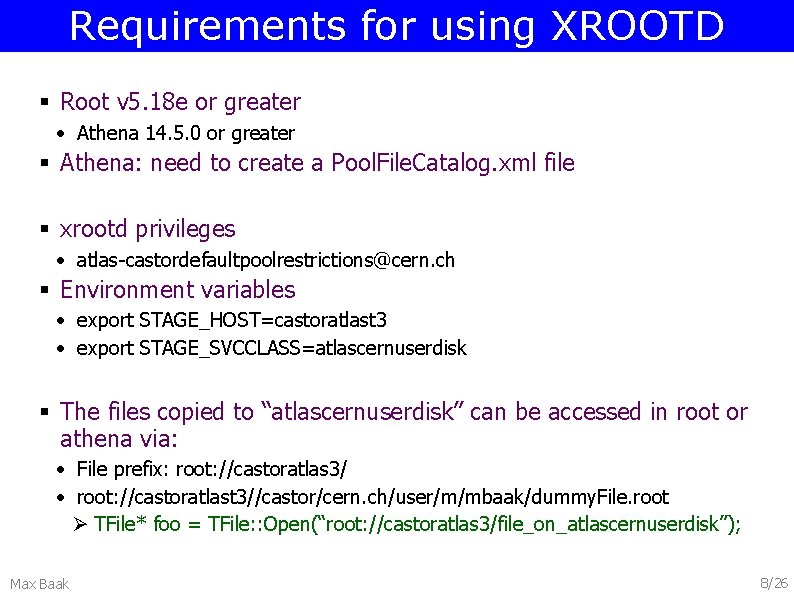

Requirements for using XROOTD
§ ROOT v5.18e or greater
  • Athena 14.5.0 or greater
§ Athena: need to create a PoolFileCatalog.xml file
§ xrootd privileges
  • atlas-castordefaultpoolrestrictions@cern.ch
§ Environment variables
  • export STAGE_HOST=castoratlast3
  • export STAGE_SVCCLASS=atlascernuserdisk
§ The files copied to "atlascernuserdisk" can be accessed in ROOT or Athena via:
  • File prefix: root://castoratlast3/
  • root://castoratlast3//castor/cern.ch/user/m/mbaak/dummyFile.root
    Ø TFile* foo = TFile::Open("root://castoratlast3/file_on_atlascernuserdisk");
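The file prefix rule above amounts to a simple string transformation. The following is a minimal sketch, assuming the host name `castoratlast3` used in the environment variables; `to_xrootd_url` is a hypothetical helper, not part of ROOT or Athena.

```python
XROOTD_HOST = "castoratlast3"  # host name as quoted for STAGE_HOST on the slide


def to_xrootd_url(castor_path: str, host: str = XROOTD_HOST) -> str:
    """Prefix an absolute CASTOR path with root://<host>/ (hypothetical helper)."""
    if castor_path.startswith("rfio:"):
        castor_path = castor_path[len("rfio:"):]   # accept old rfio-style names too
    if not castor_path.startswith("/castor/"):
        raise ValueError(f"not an absolute CASTOR path: {castor_path!r}")
    # The prefix ends in '/' and the path starts with '/', hence the '//'.
    return f"root://{host}/{castor_path}"


url = to_xrootd_url("rfio:/castor/cern.ch/user/m/mbaak/dummyFile.root")
print(url)
```

The resulting string is what would be passed to `TFile::Open` in ROOT.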

XROOTD test results
Outline
§ Single job performance
§ Stress test results
  • (Multiple simultaneous jobs)

Test setup
Five file-transfer configurations:
§ Local disk (no file transfer)
§ FileStager
  • Effectively: running over files from local disk
§ Xrootd
  • Buffered
  • Non-buffered
§ Rfio
Files used:
§ Z→ee, Z→mumu AOD collections
§ ~37 MB/file, 190 kB/event
§ 200 events per file

Intelligent FileStager
§ cmt co -r FileStager-00-00-19 Database/FileStager
  • https://twiki.cern.ch/twiki/bin/view/Main/FileStager
§ The intelligent file stager copies files one-by-one to local disk while running over the previous file(s).
  • Run semi-interactive analysis over files nearby, e.g. on Castor.
  • File pre-staging to improve wall-time performance.
  • Works in ROOT and in Athena.
§ Actual processing over local files in cache = fast!
  • The only time loss is due to staging the first file.
  • In many cases: pre-staging is as fast as running over local files!
  • Minimum number of network connections kept open.
  • Spreads the network load of accessing data over the length of the job.
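The pre-staging idea described above can be illustrated with a short pipeline. This is a sketch of the general technique only, not the FileStager implementation; `run_with_prestaging`, `fake_stage` and `fake_process` are hypothetical names, and the stand-in functions replace a real copy command and a real event loop.

```python
from concurrent.futures import ThreadPoolExecutor


def run_with_prestaging(files, stage, process):
    """Process files in order while the next one is staged in the background."""
    results = []
    if not files:
        return results
    # One worker: at most one copy (one network connection) is active at a time.
    with ThreadPoolExecutor(max_workers=1) as pool:
        pending = pool.submit(stage, files[0])     # only this first copy is waited for in full
        for nxt in list(files[1:]) + [None]:
            local = pending.result()               # block until the current file is local
            if nxt is not None:
                pending = pool.submit(stage, nxt)  # start copying the next file
            results.append(process(local))         # runs while that copy proceeds
    return results


# Stand-ins for a real copy command and a real event loop.
def fake_stage(remote):
    return "/tmp/" + remote.rsplit("/", 1)[-1]


def fake_process(local):
    return local.upper()


out = run_with_prestaging(["/castor/a.root", "/castor/b.root"], fake_stage, fake_process)
print(out)
```

If staging one file takes no longer than processing one, the only visible delay is the first copy, which is the behaviour the slide describes.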

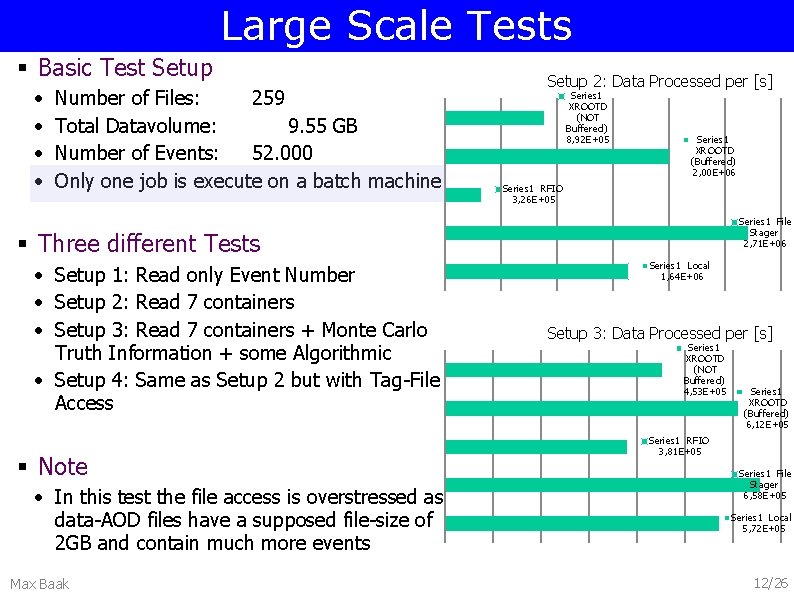

Large Scale Tests
§ Basic test setup
  • Number of files: 259
  • Total data volume: 9.55 GB
  • Number of events: 52,000
  • Only one job is executed on a batch machine
§ Four different tests
  • Setup 1: Read only the event number
  • Setup 2: Read 7 containers
  • Setup 3: Read 7 containers + Monte Carlo truth information + some algorithmic work
  • Setup 4: Same as Setup 2 but with tag-file access
§ Note
  • In this test the file access is overstressed, as data AOD files are expected to be 2 GB in size and to contain many more events.

Data processed per second:

| Protocol | Setup 2 | Setup 3 |
|---|---|---|
| XROOTD (not buffered) | 8.92E+05 | 4.53E+05 |
| XROOTD (buffered) | 2.00E+06 | 6.12E+05 |
| RFIO | 3.26E+05 | 3.81E+05 |
| FileStager | 2.71E+06 | 6.58E+05 |
| Local | 1.64E+06 | 5.72E+05 |
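As a quick consistency check, the basic setup numbers can be compared with the per-file figures from the test-setup slide (~37 MB/file, 190 kB/event, 200 events per file). The sketch below assumes GB means 10^9 bytes; the variable names are illustrative.

```python
n_files = 259
total_bytes = 9.55e9        # "Total data volume: 9.55 GB", taken as 10^9 bytes
events_per_file = 200       # from the test-setup slide

mb_per_file = total_bytes / n_files / 1e6
n_events = n_files * events_per_file
kb_per_event = total_bytes / n_events / 1e3

print(f"{mb_per_file:.1f} MB/file, {n_events} events, {kb_per_event:.0f} kB/event")
```

The results land close to the rounded values quoted on the slides.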

![Large Scale Tests: reading only the event number](http://slidetodoc.com/presentation_image_h2/9e174bdd44052e34f579694eff15e852/image-13.jpg)

Large Scale Tests
§ Reading only the event number
  • Reading 10% of the file content
§ Timing:
  • Comparable timing for local access, xrootd and FileStager
  • RFIO 5 times slower
§ Data transfer
  • RFIO: 45x larger data transfer than needed
  • xrootd (not buffered): 1% overhead of file transfer
  • xrootd (buffered): 32x larger data transfer than needed
  • FileStager: copies the whole file and hence 12x larger data transfer than needed

| Protocol | Time (s) | Data transfer (bytes) |
|---|---|---|
| XROOTD (not buffered) | 699 | 7.92E+08 |
| XROOTD (buffered) | 455 | 2.57E+10 |
| RFIO | 2530 | 3.61E+10 |
| FileStager | 519 | 1.01E+10 |
| Local | 401 | 7.10E+06 |

ROOT read data volume: 7.88E+08 bytes
Total data volume: 9.55E+09 bytes
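The "x larger than needed" factors above follow from dividing each protocol's transferred bytes by the volume ROOT actually read. A minimal sketch of that arithmetic, using only the numbers quoted on this slide (the dictionary keys are illustrative labels):

```python
# Bytes transferred in the "read only event number" test, as quoted on the slide.
transferred = {
    "xrootd (not buffered)": 7.92e8,
    "xrootd (buffered)": 2.57e10,
    "rfio": 3.61e10,
    "FileStager": 1.01e10,
}
root_read = 7.88e8   # bytes ROOT actually asked for
total = 9.55e9       # size of the full dataset

factors = {name: nbytes / root_read for name, nbytes in transferred.items()}
for name, factor in factors.items():
    print(f"{name:22s} {factor:5.1f}x")
print(f"fraction of dataset read: {root_read / total:.1%}")
```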

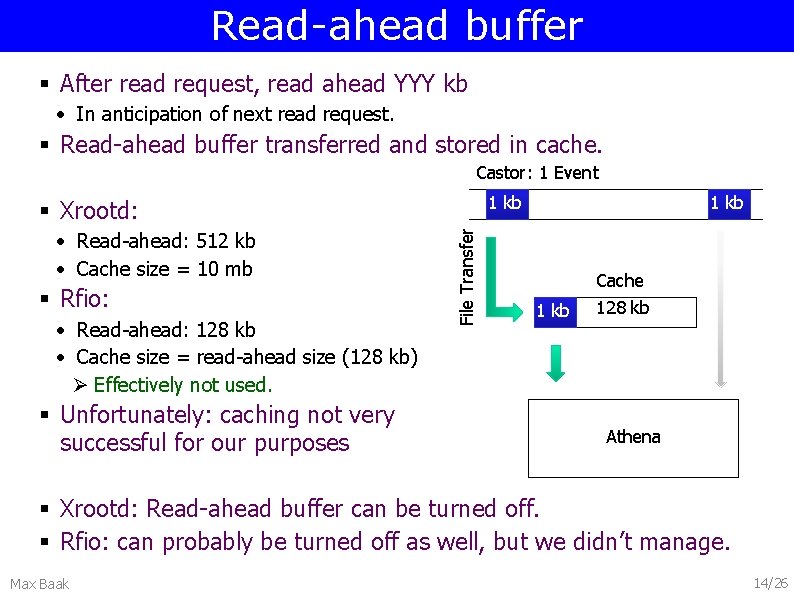

Read-ahead buffer
§ After a read request, read ahead YYY kB
  • In anticipation of the next read request.
§ The read-ahead buffer is transferred and stored in a cache.
§ Xrootd:
  • Read-ahead: 512 kB
  • Cache size = 10 MB
§ Rfio:
  • Read-ahead: 128 kB
  • Cache size = read-ahead size (128 kB)
    Ø Effectively not used.
§ Unfortunately: caching is not very successful for our purposes.
§ Xrootd: the read-ahead buffer can be turned off.
§ Rfio: can probably be turned off as well, but we didn't manage.
[Diagram: Castor, file transfer, cache, Athena; labels: 1 event, 1 kB, 128 kB]
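Why read-ahead can hurt is easiest to see in a toy model. The sketch below assumes a single read-ahead window that is replaced on every miss; it is not how xrootd or rfio implement their caches, and the 1 MB spacing of the scattered reads is an arbitrary choice for illustration.

```python
def bytes_transferred(reads, read_ahead):
    """Bytes fetched for a list of (offset, size) reads with one read-ahead window."""
    window_start, window_end = 0, 0      # currently cached byte range [start, end)
    fetched = 0
    for offset, size in reads:
        if window_start <= offset and offset + size <= window_end:
            continue                      # served from the read-ahead window
        length = max(size, read_ahead)    # a miss fetches the request plus read-ahead
        window_start, window_end = offset, offset + length
        fetched += length
    return fetched


KB = 1024
# 100 reads of 1 kB each: once back-to-back, once spread 1 MB apart.
sequential = [(i * KB, KB) for i in range(100)]
scattered = [(i * 1024 * KB, KB) for i in range(100)]

for label, reads in (("sequential", sequential), ("scattered", scattered)):
    wanted = sum(size for _, size in reads)
    print(label, bytes_transferred(reads, 128 * KB) / wanted)
```

In this model, small reads that jump around the file pull in a full read-ahead block each time, while turning read-ahead off (`read_ahead=0`) transfers only what was requested.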

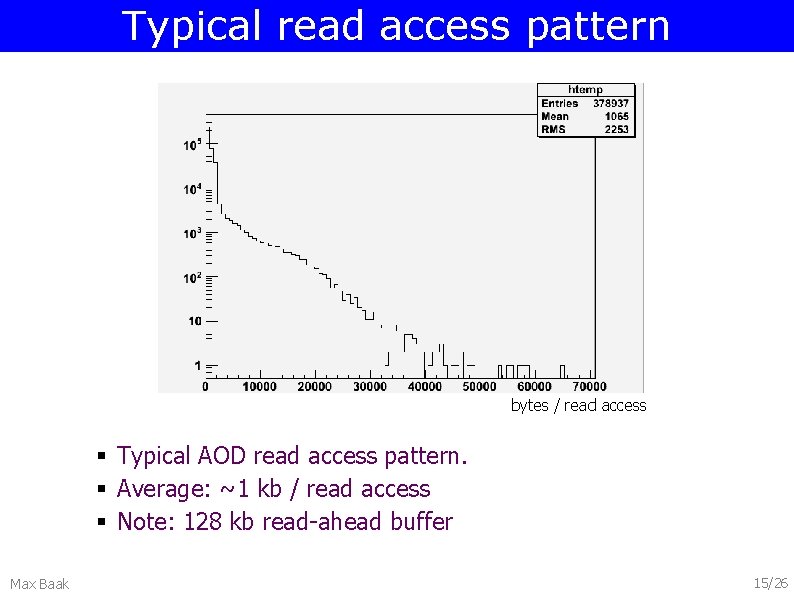

Typical read access pattern
§ Typical AOD read access pattern.
§ Average: ~1 kB / read access
§ Note: 128 kB read-ahead buffer
[Plot: bytes / read access]

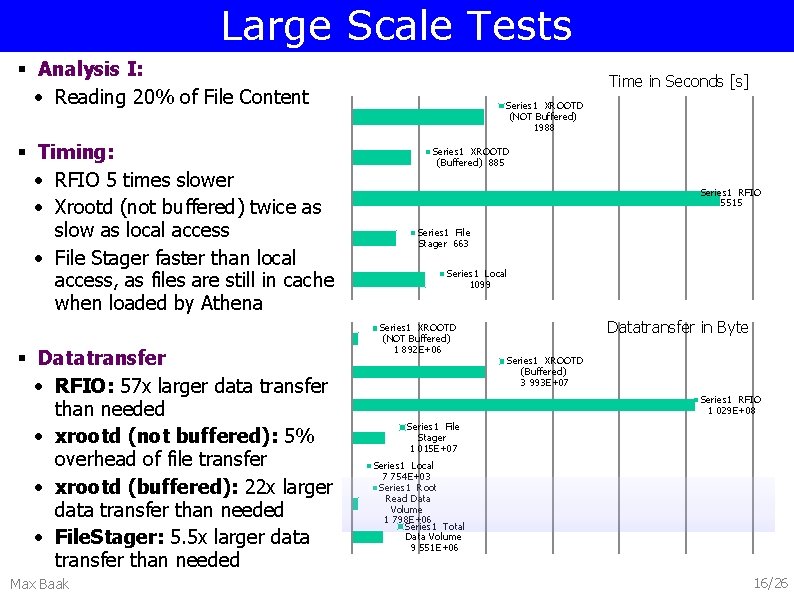

Large Scale Tests
§ Analysis I:
  • Reading 20% of the file content
§ Timing:
  • RFIO 5 times slower
  • Xrootd (not buffered) twice as slow as local access
  • FileStager faster than local access, as files are still in cache when loaded by Athena
§ Data transfer
  • RFIO: 57x larger data transfer than needed
  • xrootd (not buffered): 5% overhead of file transfer
  • xrootd (buffered): 22x larger data transfer than needed
  • FileStager: 5.5x larger data transfer than needed

| Protocol | Time (s) | Data transfer (chart label: Byte) |
|---|---|---|
| XROOTD (not buffered) | 1988 | 1.892E+06 |
| XROOTD (buffered) | 885 | 3.993E+07 |
| RFIO | 5515 | 1.029E+08 |
| FileStager | 663 | 1.015E+07 |
| Local | 1099 | 7.754E+03 |

ROOT read data volume: 1.798E+06
Total data volume: 9.551E+06
Note: the total of 9.551E+06 matches the 9.55 GB dataset only if these values are read as kB.

![Large Scale Tests: Analysis II](http://slidetodoc.com/presentation_image_h2/9e174bdd44052e34f579694eff15e852/image-17.jpg)

Large Scale Tests
§ Analysis II:
  • Reading 35% of the file content, with more algorithmic work inside the analysis
§ Timing:
  • Overall comparable timing, as the algorithmic part becomes dominant
  • Xrootd (not buffered) is 20% faster than RFIO.
  • FileStager faster than local access, as files are still in cache when loaded by Athena
§ Data transfer
  • Similar to previous analysis

| Protocol | Time (s) | Data transfer (chart label: Byte) |
|---|---|---|
| XROOTD (not buffered) | 7170 | 3.474E+06 |
| XROOTD (buffered) | 5300 | 4.106E+07 |
| RFIO | 8590 | 1.160E+08 |
| FileStager | 4970 | 1.014E+07 |
| Local | 5720 | 0.000E+00 |

ROOT read data volume: 3.272E+06
Total data volume: 9.551E+06

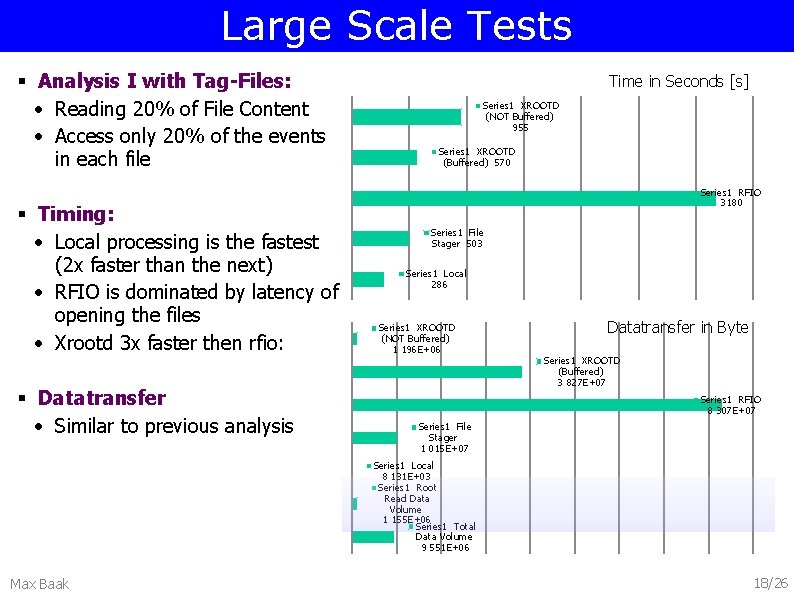

Large Scale Tests
§ Analysis I with tag files:
  • Reading 20% of the file content
  • Access only 20% of the events in each file
§ Timing:
  • Local processing is the fastest (2x faster than the next)
  • RFIO is dominated by the latency of opening the files
  • Xrootd is 3x faster than rfio
§ Data transfer
  • Similar to previous analysis

| Protocol | Time (s) | Data transfer (chart label: Byte) |
|---|---|---|
| XROOTD (not buffered) | 955 | 1.196E+06 |
| XROOTD (buffered) | 570 | 3.827E+07 |
| RFIO | 3180 | 8.307E+07 |
| FileStager | 503 | 1.015E+07 |
| Local | 286 | 8.131E+03 |

ROOT read data volume: 1.155E+06
Total data volume: 9.551E+06

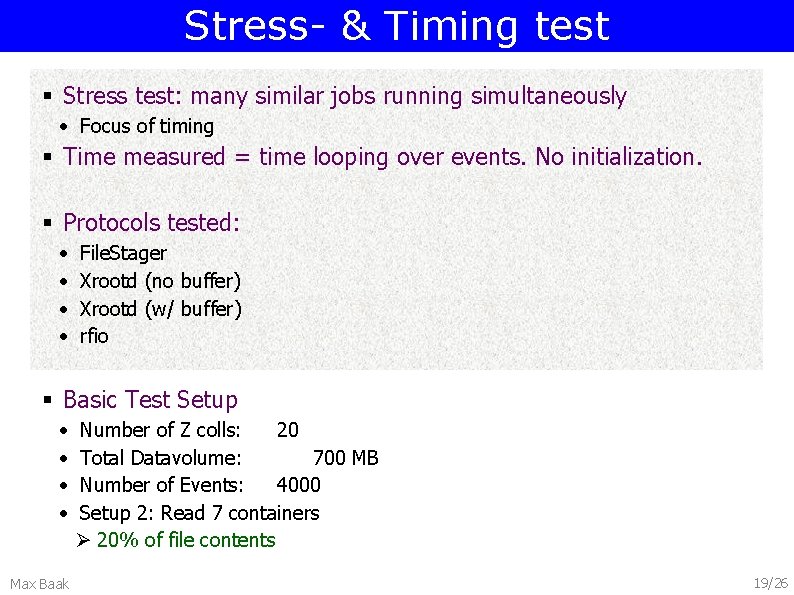

Stress & timing test
§ Stress test: many similar jobs running simultaneously
  • Focus on timing
§ Time measured = time looping over events. No initialization.
§ Protocols tested:
  • FileStager
  • Xrootd (no buffer)
  • Xrootd (w/ buffer)
  • rfio
§ Basic test setup
  • Number of Z collections: 20
  • Total data volume: 700 MB
  • Number of events: 4000
  • Setup 2: Read 7 containers
    Ø 20% of file contents

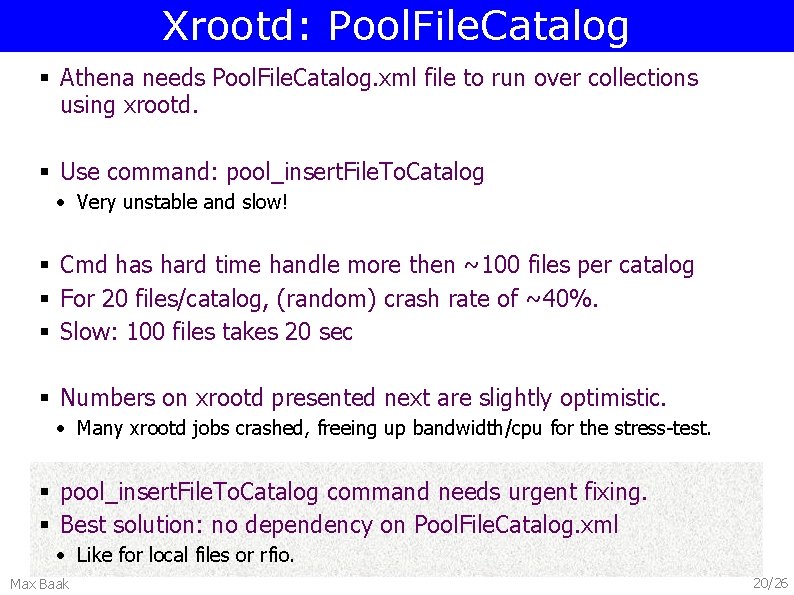

Xrootd: PoolFileCatalog
§ Athena needs a PoolFileCatalog.xml file to run over collections using xrootd.
§ Use command: pool_insertFileToCatalog
  • Very unstable and slow!
§ The command has a hard time handling more than ~100 files per catalog.
§ For 20 files/catalog, (random) crash rate of ~40%.
§ Slow: 100 files take 20 s.
§ The xrootd numbers presented next are slightly optimistic.
  • Many xrootd jobs crashed, freeing up bandwidth/CPU for the stress test.
§ The pool_insertFileToCatalog command needs urgent fixing.
§ Best solution: no dependency on PoolFileCatalog.xml
  • Like for local files or rfio.

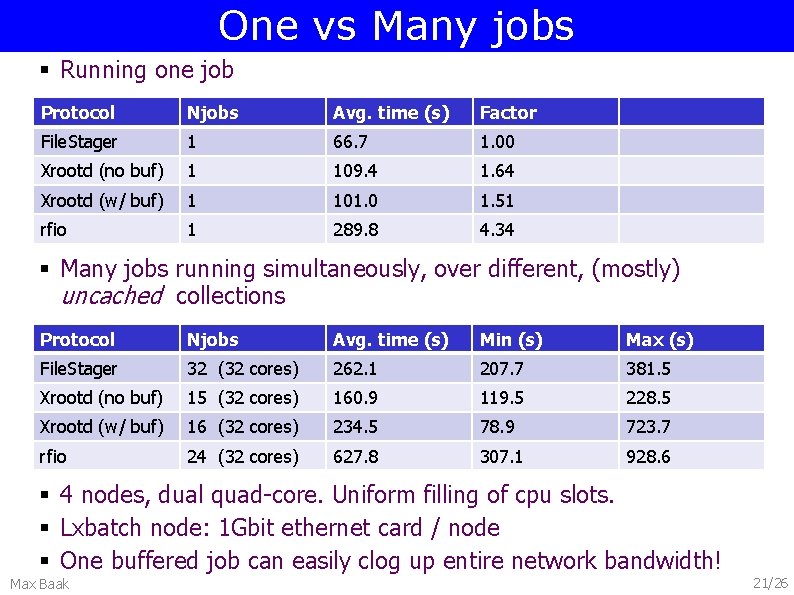

One vs many jobs
§ Running one job

| Protocol | Njobs | Avg. time (s) | Factor |
|---|---|---|---|
| FileStager | 1 | 66.7 | 1.00 |
| Xrootd (no buf) | 1 | 109.4 | 1.64 |
| Xrootd (w/ buf) | 1 | 101.0 | 1.51 |
| rfio | 1 | 289.8 | 4.34 |

§ Many jobs running simultaneously, over different, (mostly) uncached collections

| Protocol | Njobs | Avg. time (s) | Min (s) | Max (s) |
|---|---|---|---|---|
| FileStager | 32 (32 cores) | 262.1 | 207.7 | 381.5 |
| Xrootd (no buf) | 15 (32 cores) | 160.9 | 119.5 | 228.5 |
| Xrootd (w/ buf) | 16 (32 cores) | 234.5 | 78.9 | 723.7 |
| rfio | 24 (32 cores) | 627.8 | 307.1 | 928.6 |

§ 4 nodes, dual quad-core. Uniform filling of CPU slots.
§ Lxbatch node: 1 Gbit Ethernet card / node
§ One buffered job can easily clog up the entire network bandwidth!
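The remark about a single buffered job clogging the network can be made plausible with rough arithmetic. The sketch below borrows the buffered xrootd figures from the "read only event number" large-scale test (2.57E+10 bytes in 455 s) as a stand-in for a typical buffered job; that choice is an assumption, and the rate is an average over the whole job including CPU time, so peak rates would be higher.

```python
link_mb_per_s = 1e9 / 8 / 1e6     # 1 Gbit/s Ethernet card = 125 MB/s
cores_per_node = 8                # dual quad-core

# Single buffered xrootd job from the "read only event number" test (assumed representative).
bytes_moved = 2.57e10
seconds = 455
job_mb_per_s = bytes_moved / seconds / 1e6

jobs_to_saturate = link_mb_per_s / job_mb_per_s
print(f"one buffered job: {job_mb_per_s:.0f} MB/s on average")
print(f"link saturates at about {jobs_to_saturate:.1f} such jobs")
print(f"fair share with {cores_per_node} jobs: {link_mb_per_s / cores_per_node:.1f} MB/s")
```

Under these assumptions, a node with eight job slots runs out of network bandwidth well before its CPU slots are filled, which is consistent with the large spread in buffered job times in the table.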

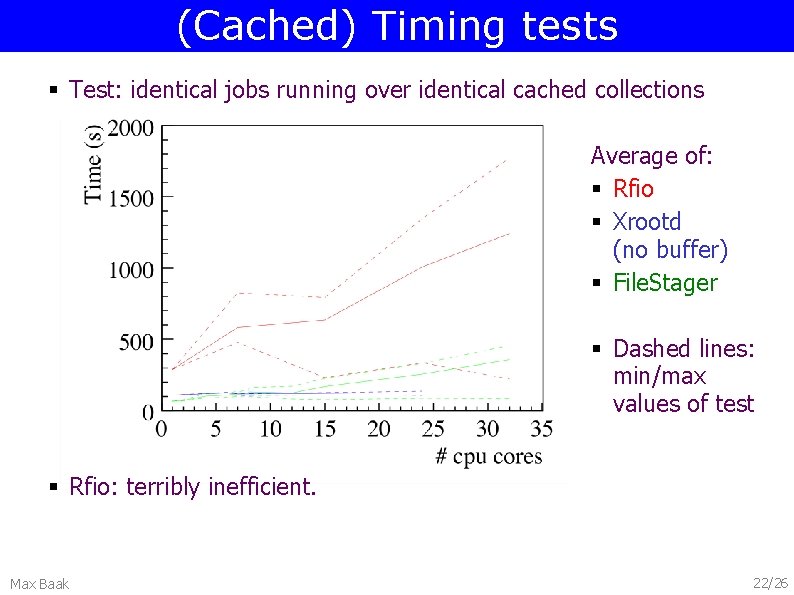

(Cached) Timing tests
§ Test: identical jobs running over identical cached collections
Average of:
§ Rfio
§ Xrootd (no buffer)
§ FileStager
§ Dashed lines: min/max values of test
§ Rfio: terribly inefficient.

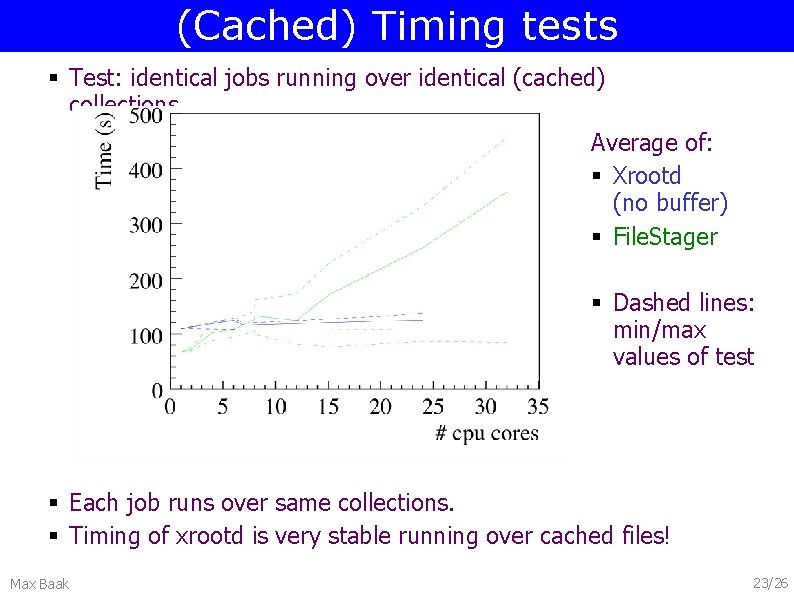

(Cached) Timing tests
§ Test: identical jobs running over identical (cached) collections
Average of:
§ Xrootd (no buffer)
§ FileStager
§ Dashed lines: min/max values of test
§ Each job runs over the same collections.
§ Timing of xrootd is very stable when running over cached files!

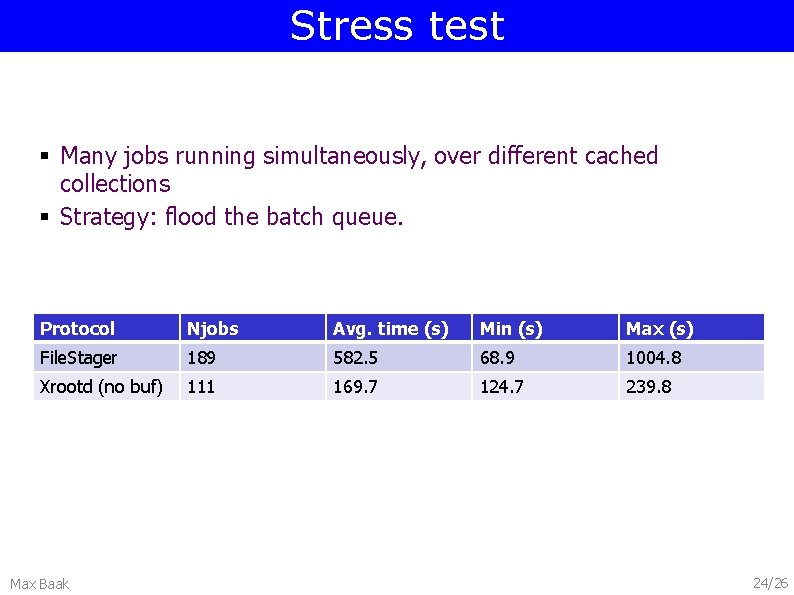

Stress test
§ Many jobs running simultaneously, over different cached collections
§ Strategy: flood the batch queue.

| Protocol | Njobs | Avg. time (s) | Min (s) | Max (s) |
|---|---|---|---|---|
| FileStager | 189 | 582.5 | 68.9 | 1004.8 |
| Xrootd (no buf) | 111 | 169.7 | 124.7 | 239.8 |

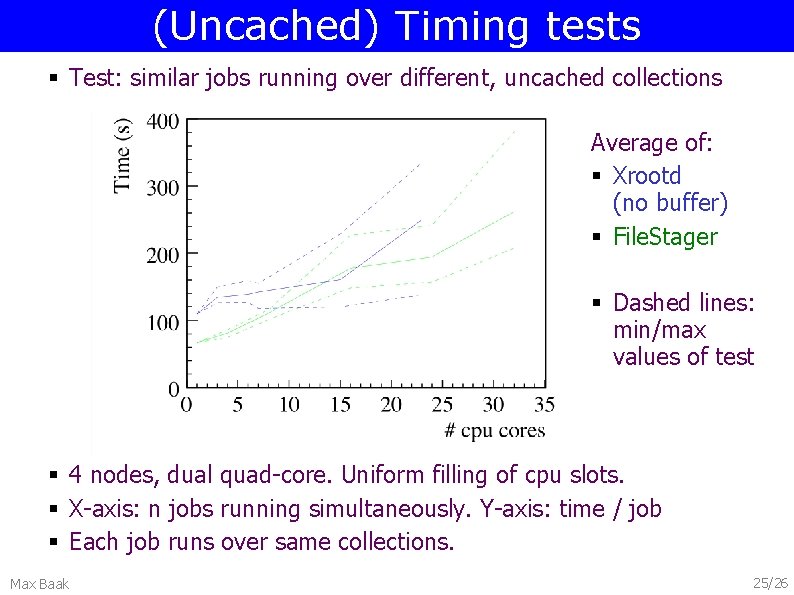

(Uncached) Timing tests
§ Test: similar jobs running over different, uncached collections
Average of:
§ Xrootd (no buffer)
§ FileStager
§ Dashed lines: min/max values of test
§ 4 nodes, dual quad-core. Uniform filling of CPU slots.
§ X-axis: number of jobs running simultaneously. Y-axis: time / job
§ Each job runs over same collections.

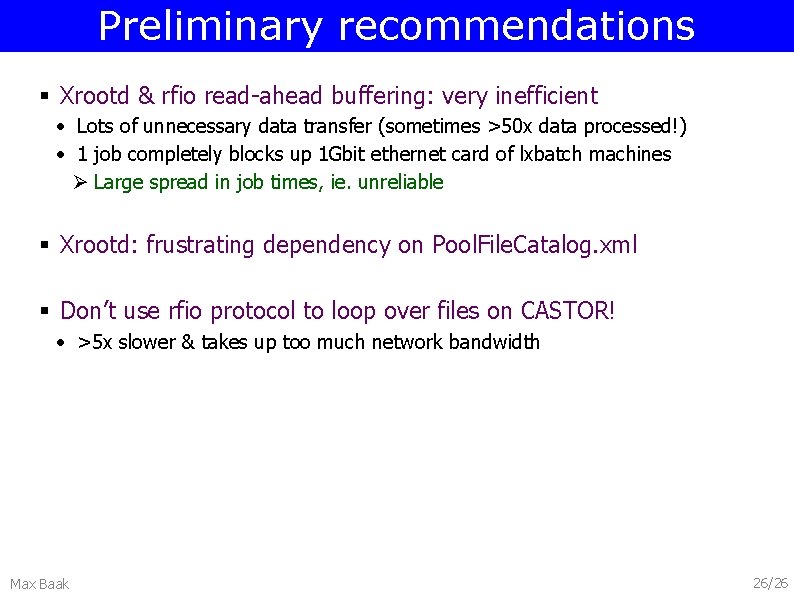

Preliminary recommendations
§ Xrootd & rfio read-ahead buffering: very inefficient
  • Lots of unnecessary data transfer (sometimes >50x the data processed!)
  • 1 job completely blocks up the 1 Gbit Ethernet card of lxbatch machines
    Ø Large spread in job times, i.e. unreliable
§ Xrootd: frustrating dependency on PoolFileCatalog.xml
§ Don't use the rfio protocol to loop over files on CASTOR!
  • >5x slower & takes up too much network bandwidth

Preliminary recommendations
Different recommendations for single / multiple jobs
§ Single jobs: FileStager does very well.
§ Multiple, production-style jobs
  • Xrootd (no buffer) is extremely stable & fast on files in the disk pool cache.
    Ø Factor ~2 slow-down when the read-ahead buffer is turned on.
  • Two recommendations:
    Ø Xrootd, no buffer, for cached files
    Ø FileStager or Xrootd for uncached files
