Word Representation (word embedding): SVD, Word2Vec and GloVe (Draft)
KH Wong
GloVe ver. 0.1.b4

Overview
• Introduction
• Why we need word representation
• We will study 3 methods: SVD, Word2Vec and GloVe

Introduction
• SVD: count-based method
• Word2Vec: context prediction methods
  • Continuous Bag of Words (CBOW)
  • Skip-Gram Model (SGM)
  • Word2Vec uses CBOW or SGM to build a neural net
• GloVe: count-based plus prediction-based
  • Found to be effective, with performance comparable to Word2Vec
• To be used in text understanding, sentiment analysis, machine translation, etc.
• Practical issue: a word vector typically has 50 to 300 dimensions

The SVD method
• Reference: https://en.wikipedia.org/wiki/Latent_semantic_analysis
• Step 1: Build the co-occurrence matrix $X$
• Step 2: Apply singular value decomposition (SVD) to the co-occurrence matrix, i.e. $[U, \Sigma, V] = \mathrm{SVD}(X)$
• Step 3: From $U, \Sigma, V$, find the reduced-dimension matrix $U_{reduced}$
• Step 4: Word vector table = $U_{reduced}$

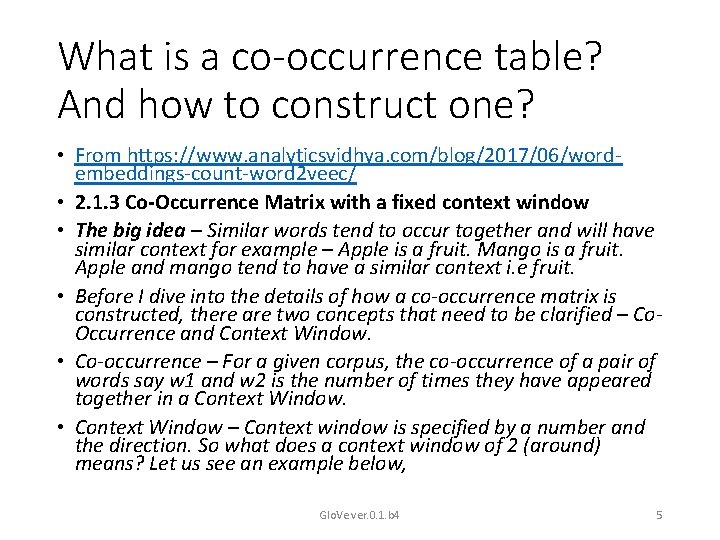

What is a co-occurrence table, and how do we construct one?
• From https://www.analyticsvidhya.com/blog/2017/06/word-embeddings-count-word2veec/, section 2.1.3, Co-Occurrence Matrix with a fixed context window
• The big idea: similar words tend to occur together and will have similar context. For example: Apple is a fruit. Mango is a fruit. Apple and mango tend to have a similar context, i.e. fruit.
• Before I dive into the details of how a co-occurrence matrix is constructed, there are two concepts that need to be clarified: co-occurrence and context window.
• Co-occurrence: for a given corpus, the co-occurrence of a pair of words, say w1 and w2, is the number of times they have appeared together in a context window.
• Context window: a context window is specified by a number and a direction. So what does a context window of 2 (around) mean? Let us see an example below.

Co-occurrence table examples
• Quick Brown Fox Jump Over The Lazy Dog
• The green words (Quick, Brown, Jump, Over) form a 2 (around) context window for the word ‘Fox’; only these words are counted when calculating the co-occurrence.
• Let us see the context window for the word ‘Over’: it contains Fox, Jump, The and Lazy.
• Quick Brown Fox Jump Over The Lazy Dog

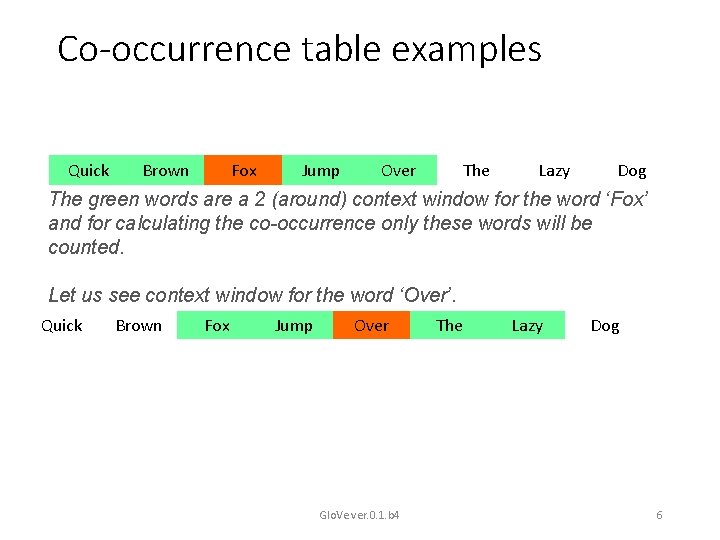

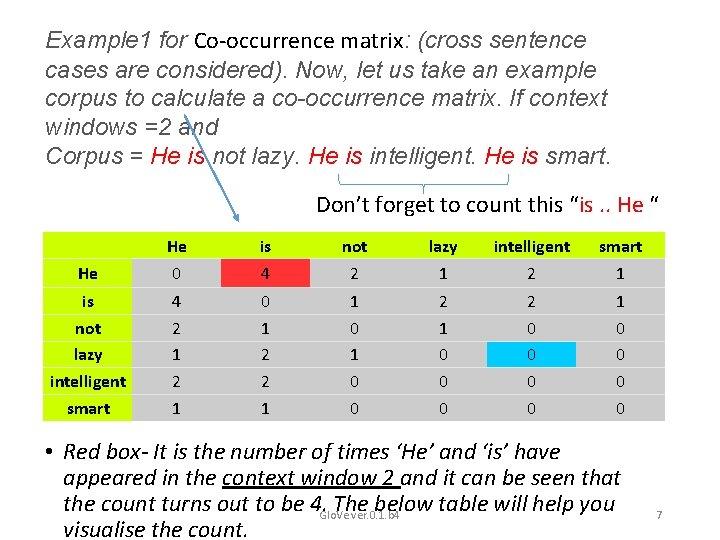

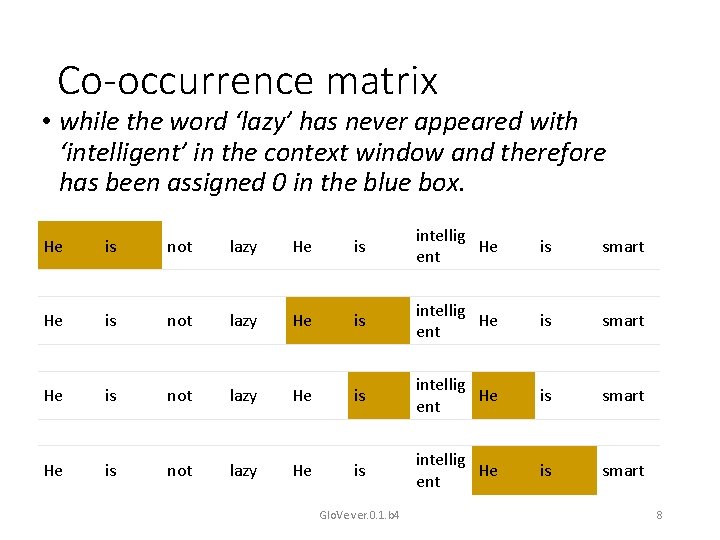

Example 1 for co-occurrence matrix (cross-sentence cases are considered)
• Now, let us take an example corpus to calculate a co-occurrence matrix, with context window = 2.
• Corpus = He is not lazy. He is intelligent. He is smart.
• Don't forget to count the cross-sentence pair “is ... He”.

| | He | is | not | lazy | intelligent | smart |
|---|---|---|---|---|---|---|
| He | 0 | 4 | 2 | 1 | 2 | 1 |
| is | 4 | 0 | 1 | 2 | 2 | 1 |
| not | 2 | 1 | 0 | 1 | 0 | 0 |
| lazy | 1 | 2 | 1 | 0 | 0 | 0 |
| intelligent | 2 | 2 | 0 | 0 | 0 | 0 |
| smart | 1 | 1 | 0 | 0 | 0 | 0 |

• Red box: the number of times ‘He’ and ‘is’ have appeared together within a context window of 2; the count turns out to be 4.
• The table below will help you visualise the count.

Co-occurrence matrix
• The word ‘lazy’ has never appeared with ‘intelligent’ in the context window and has therefore been assigned 0 in the blue box.
• The corpus as one token sequence: He is not lazy He is intelligent He is smart
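The counting rule of Example 1 can be sketched in a few lines of Python. This is a minimal illustration, assuming the corpus is treated as one token stream with the full stops removed (so windows cross sentence boundaries); the function name `cooccurrence` is hypothetical and not part of the original slides.

```python
from collections import Counter

def cooccurrence(tokens, window):
    """Count, for every ordered pair (word, neighbour), how often the
    neighbour lies within `window` positions of the word."""
    counts = Counter()
    for i, word in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:
                counts[(word, tokens[j])] += 1
    return counts

corpus = "He is not lazy . He is intelligent . He is smart ."
# Assumption: punctuation is dropped, so the window spans sentence boundaries.
tokens = [t for t in corpus.split() if t != "."]
counts = cooccurrence(tokens, window=2)

print(counts[("He", "is")])             # 4
print(counts[("lazy", "intelligent")])  # 0
```

Because every window is symmetric (2 words on each side), `counts[(a, b)]` always equals `counts[(b, a)]`, which is why the co-occurrence matrix is symmetric.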

Example 2 for co-occurrence matrix (cross-sentence cases are not considered)
• Let window size = 1: the context words for each word are the one word to its left and the one word to its right.
• Let our corpus contain the following three sentences:
  1) I enjoy flying
  2) I like NLP
  3) I like deep learning
• The resulting co-occurrence matrix A with fixed window size 1 is shown below.
• Source: https://medium.com/@apargarg99/co-occurrence-matrix-singular-value-decomposition-svd-31b3d3deb305

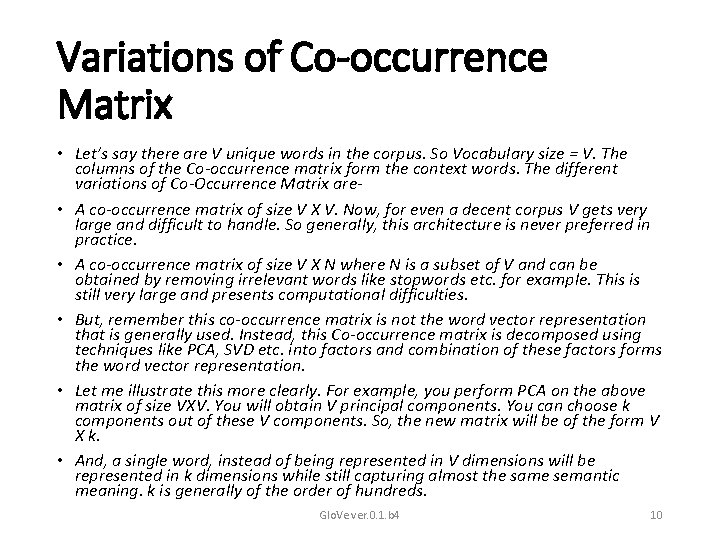
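When cross-sentence pairs are not counted, the window simply stops at the sentence boundary. The sketch below builds the matrix for the three sentences of Example 2. The helper name `cooccurrence_matrix` and the alphabetical vocabulary order are assumptions for illustration, so the row order may differ from the figure.

```python
def cooccurrence_matrix(sentences, window=1):
    """Build a symmetric co-occurrence matrix, one sentence at a time."""
    vocab = sorted({w for s in sentences for w in s})
    index = {w: k for k, w in enumerate(vocab)}
    matrix = [[0] * len(vocab) for _ in vocab]
    for sentence in sentences:
        for i, word in enumerate(sentence):
            lo = max(0, i - window)
            hi = min(len(sentence), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    matrix[index[word]][index[sentence[j]]] += 1
    return vocab, matrix

sentences = [s.split() for s in ("I enjoy flying", "I like NLP", "I like deep learning")]
vocab, A = cooccurrence_matrix(sentences)

# Row of the matrix for the word "I", keyed by context word.
row_i = dict(zip(vocab, A[vocab.index("I")]))
print(row_i["like"], row_i["enjoy"])  # 2 1
```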

Variations of co-occurrence matrix
• Let's say there are V unique words in the corpus, so the vocabulary size is V. The columns of the co-occurrence matrix form the context words. The different variations of the co-occurrence matrix are:
  • A co-occurrence matrix of size V x V. Even for a modest corpus, V gets very large and difficult to handle, so this architecture is rarely preferred in practice.
  • A co-occurrence matrix of size V x N, where N is a subset of V obtained by removing irrelevant words such as stopwords. This is still very large and presents computational difficulties.
• But remember, this co-occurrence matrix is not the word vector representation that is generally used. Instead, the co-occurrence matrix is decomposed into factors using techniques like PCA or SVD, and a combination of these factors forms the word vector representation.
• Let me illustrate this more clearly. For example, you perform PCA on the above matrix of size V x V. You will obtain V principal components. You can choose k components out of these V components, so the new matrix will be of size V x k.
• A single word, instead of being represented in V dimensions, will be represented in k dimensions while still capturing almost the same semantic meaning. k is generally of the order of hundreds.

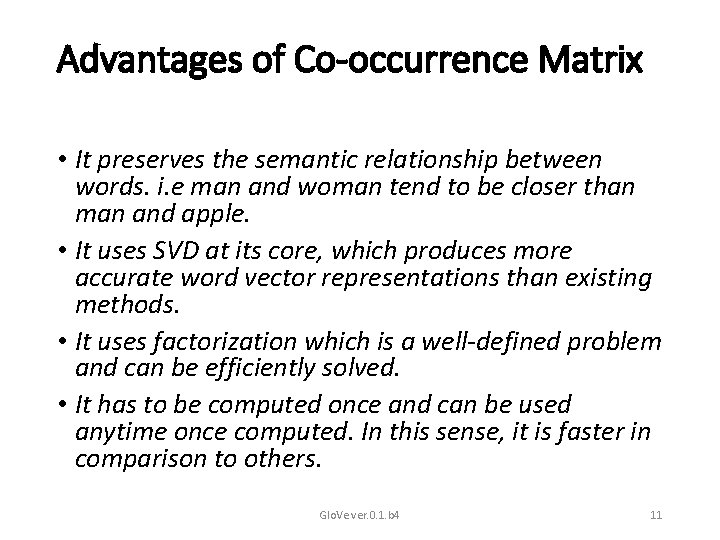

Advantages of co-occurrence matrix
• It preserves the semantic relationship between words, i.e. man and woman tend to be closer than man and apple.
• It uses SVD at its core, which produces more accurate word vector representations than existing methods.
• It uses factorization, which is a well-defined problem and can be solved efficiently.
• It has to be computed only once and can be used any time after that. In this sense, it is faster than the other methods.

Disadvantages of co-occurrence matrix
• It requires huge memory to store the co-occurrence matrix. This problem can be circumvented by factorizing the matrix outside the system, for example on a Hadoop cluster, and saving the result.

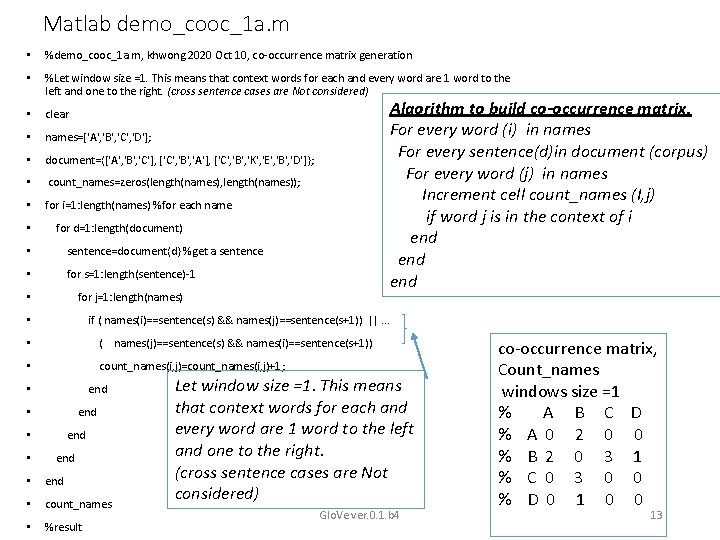

Matlab demo_cooc_1a.m
• Algorithm to build the co-occurrence matrix:
  • For every word (i) in names
  • For every sentence (d) in document (corpus)
  • For every word (j) in names
  • Increment cell count_names(i, j) if word j is in the context of i

    % demo_cooc_1a.m, khwong 2020 Oct 10, co-occurrence matrix generation
    % Let window size = 1: the context words for each word are the one word
    % to its left and the one word to its right.
    % (Cross-sentence cases are not considered.)
    clear
    names = ['A', 'B', 'C', 'D'];
    document = {['A', 'B', 'C'], ['C', 'B', 'A'], ['C', 'B', 'K', 'E', 'B', 'D']};
    count_names = zeros(length(names), length(names));
    for i = 1:length(names)              % for each name
      for d = 1:length(document)
        sentence = document{d};          % get a sentence
        for s = 1:length(sentence)-1     % each pair of adjacent words
          for j = 1:length(names)
            if (names(i)==sentence(s) && names(j)==sentence(s+1)) || ...
               (names(j)==sentence(s) && names(i)==sentence(s+1))
              count_names(i, j) = count_names(i, j) + 1;
            end
          end
        end
      end
    end
    count_names
    % Result: co-occurrence matrix count_names, window size = 1
    %     A  B  C  D
    % A   0  2  0  0
    % B   2  0  3  1
    % C   0  3  0  0
    % D   0  1  0  0

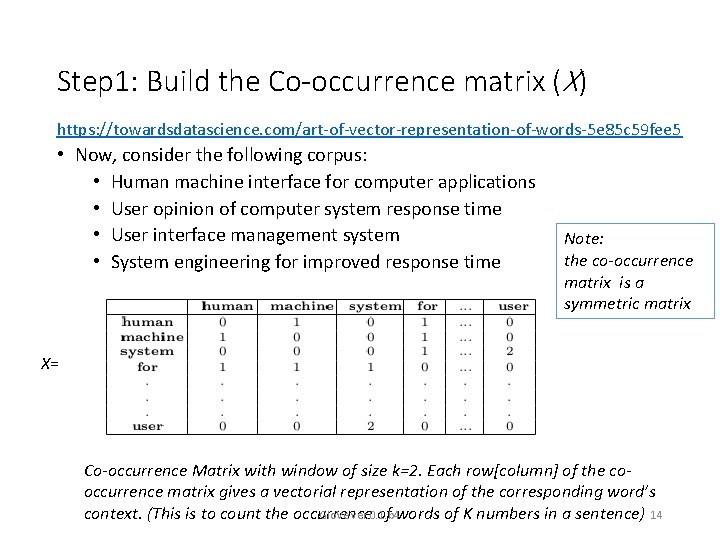

Step 1: Build the co-occurrence matrix (X)
• Source: https://towardsdatascience.com/art-of-vector-representation-of-words-5e85c59fee5
• Now, consider the following corpus:
  • Human machine interface for computer applications
  • User opinion of computer system response time
  • User interface management system
  • System engineering for improved response time
• X = co-occurrence matrix with a window of size k = 2 (that is, count how often two words occur within k positions of each other in a sentence).
• Each row [column] of the co-occurrence matrix gives a vectorial representation of the corresponding word's context.
• Note: the co-occurrence matrix is symmetric.

![Step 2: Perform SVD on X](http://slidetodoc.com/presentation_image_h2/5763423c977af0f01418ff904d3301c8/image-15.jpg)

Step 2: Perform SVD on X
• By definition: $[U, \Sigma, V] = \mathrm{SVD}(X)$, then $X = U \Sigma V^T$

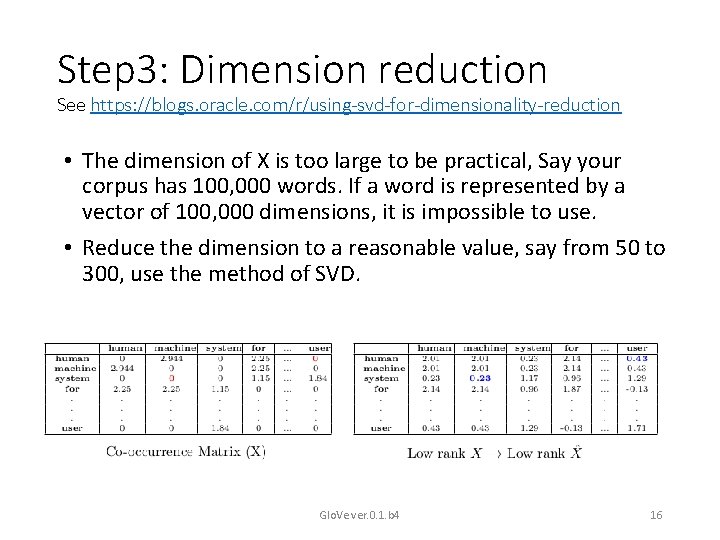

Step 3: Dimension reduction
• See https://blogs.oracle.com/r/using-svd-for-dimensionality-reduction
• The dimension of X is too large to be practical. Say your corpus has a vocabulary of 100,000 words: representing each word by a vector of 100,000 dimensions is impractical.
• Use SVD to reduce the dimension to a reasonable value, say 50 to 300.

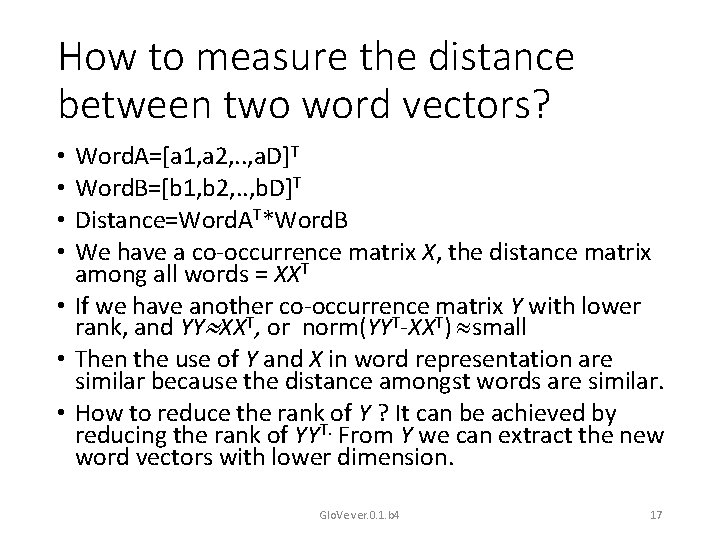

How to measure the distance between two word vectors?
• $Word_A = [a_1, a_2, \dots, a_D]^T$
• $Word_B = [b_1, b_2, \dots, b_D]^T$
• Distance $= Word_A^T \, Word_B$ (an inner product, so a larger value means the two words are more similar)
• We have a co-occurrence matrix X; the distance matrix among all words is $XX^T$
• Suppose we have another matrix Y with lower rank, and $YY^T \approx XX^T$, i.e. norm($YY^T - XX^T$) is small.
• Then using Y in place of X for word representation gives similar results, because the distances among words are similar.
• How to reduce the rank of Y? It can be achieved by reducing the rank of $YY^T$. From Y we can extract the new word vectors with lower dimension.

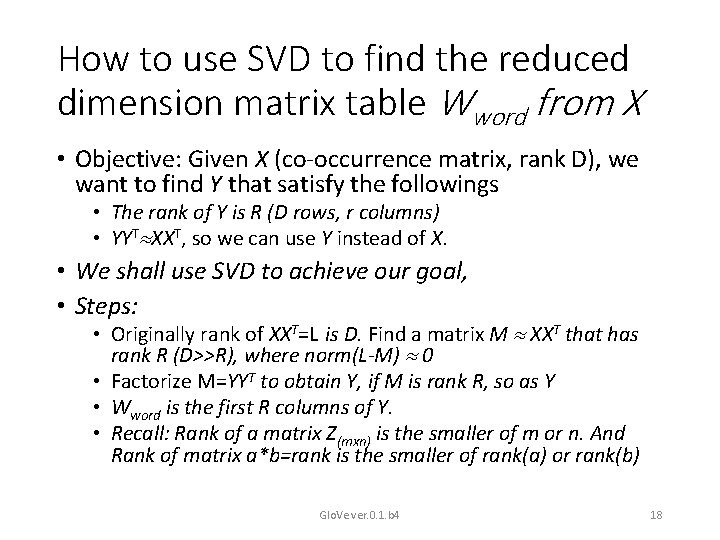
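The inner-product measure can be tried on rows of a co-occurrence matrix. The sketch below uses three rows computed from the Example 1 corpus (window 2, column order He, is, not, lazy, intelligent, smart). The cosine variant is an addition not shown on the slide; it is a common normalisation that removes the effect of vector length. Function names are hypothetical.

```python
import math

def dot(a, b):
    """Inner product Word_A^T * Word_B."""
    return sum(x * y for x, y in zip(a, b))

def cosine_similarity(a, b):
    """Inner product divided by both vector lengths (assumed extension)."""
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))

# Rows of the Example 1 co-occurrence matrix.
word_he = [0, 4, 2, 1, 2, 1]
word_is = [4, 0, 1, 2, 2, 1]
word_smart = [1, 1, 0, 0, 0, 0]

print(dot(word_he, word_is))                # 9
print(cosine_similarity(word_he, word_is))
print(cosine_similarity(word_he, word_smart))
```

Stacking all word vectors as the rows of X and computing $XX^T$ produces every such inner product at once, which is why $XX^T$ serves as the distance matrix on the slide.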

How to use SVD to find the reduced-dimension word table $W_{word}$ from X
• Objective: given X (co-occurrence matrix, rank D), we want to find Y that satisfies the following:
  • Y has rank R (D rows, R non-zero columns)
  • $YY^T \approx XX^T$, so we can use Y instead of X.
• We shall use SVD to achieve this goal.
• Steps:
  • Originally the rank of $L = XX^T$ is D. Find a matrix $M \approx XX^T$ that has rank R (D >> R), where norm($L - M$) $\approx 0$
  • Factorize $M = YY^T$ to obtain Y; if M has rank R, so does Y
  • $W_{word}$ is the first R columns of Y.
• Recall: the rank of an m×n matrix Z is at most the smaller of m and n, and the rank of a product a*b is at most the smaller of rank(a) and rank(b).

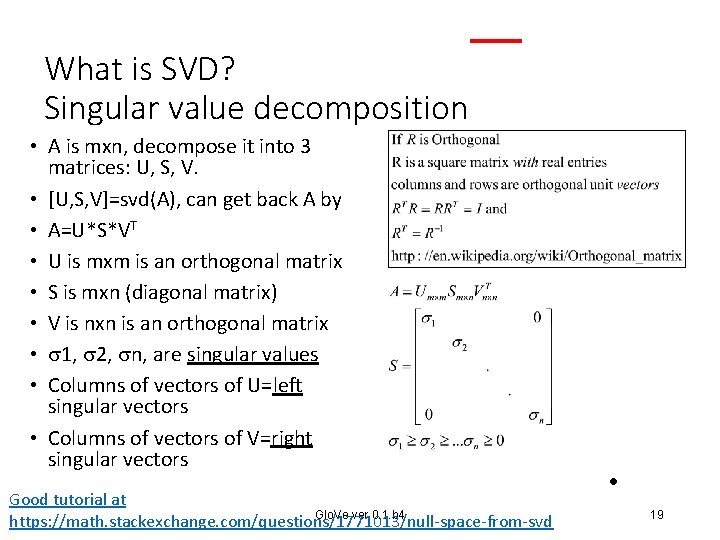

What is SVD? Singular value decomposition
• A is m×n; decompose it into 3 matrices: U, S, V.
• [U, S, V] = svd(A); we can get back A by $A = U S V^T$
• U is m×m, an orthogonal matrix
• S is m×n, a diagonal matrix
• V is n×n, an orthogonal matrix
• $\sigma_1, \sigma_2, \dots, \sigma_n$ are the singular values
• Columns of U = left singular vectors
• Columns of V = right singular vectors
• Good tutorial at https://math.stackexchange.com/questions/1771013/null-space-from-svd

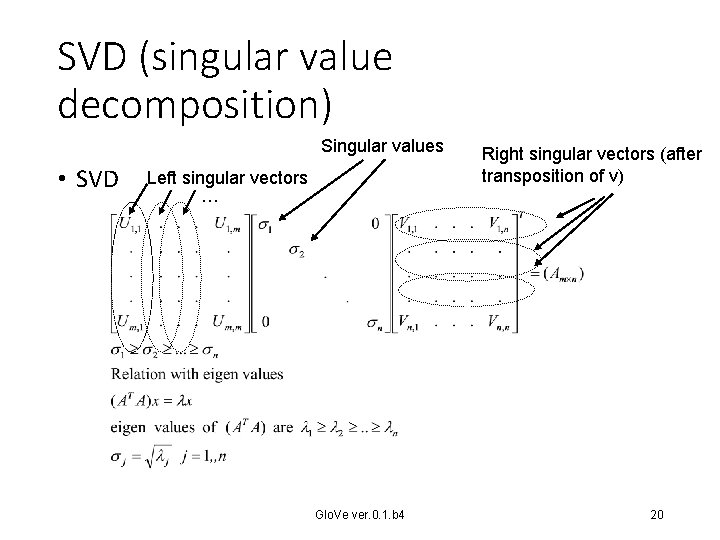

SVD (singular value decomposition)
• Figure labels: left singular vectors, singular values, right singular vectors (after transposition of V)

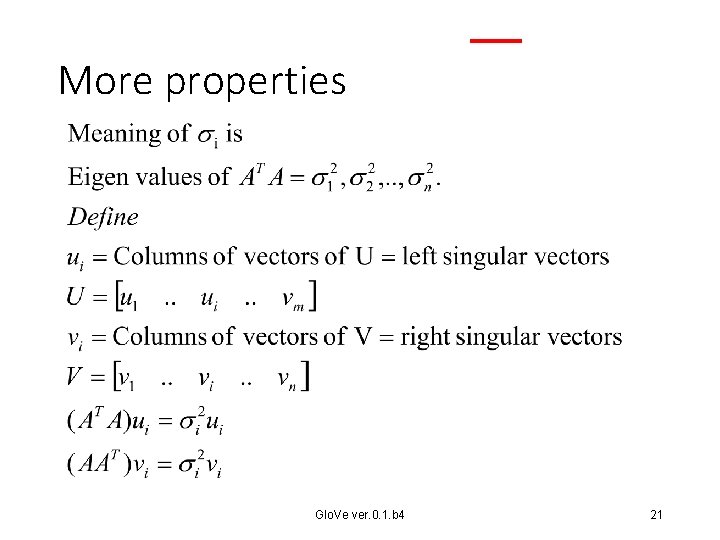

More properties

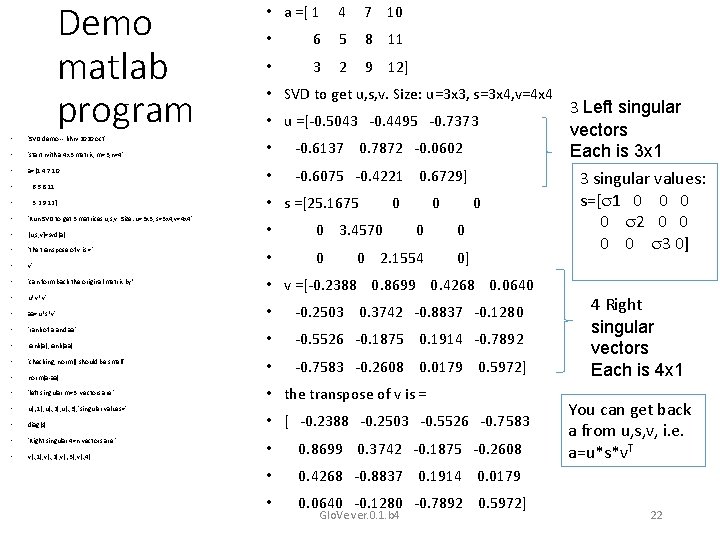

Demo Matlab program

    'SVD demo -- khw 2020 oct'
    'start with a 3 x 4 matrix, m=3, n=4'
    a = [1 4 7 10
         6 5 8 11
         3 2 9 12]
    'Run SVD to get 3 matrices u, s, v. Size: u=3x3, s=3x4, v=4x4'
    [u, s, v] = svd(a)
    'the transpose of v is ='
    v'
    'can form back the original matrix by u*s*v'''
    aa = u*s*v'
    'rank of a and aa'
    rank(a), rank(aa)
    'checking, norm() should be small'
    norm(a-aa)
    'left singular m=3 vectors are '
    u(:,1), u(:,2), u(:,3)
    'singular values='
    diag(s)
    'Right singular 4=n vectors are '
    v(:,1), v(:,2), v(:,3), v(:,4)

Output:

    a = [ 1  4  7 10
          6  5  8 11
          3  2  9 12]
    % SVD to get u, s, v. Size: u=3x3, s=3x4, v=4x4
    u = [-0.5043 -0.4495 -0.7373
         -0.6137  0.7872 -0.0602
         -0.6075 -0.4221  0.6729]
    s = [25.1675       0       0  0
               0  3.4570       0  0
               0       0  2.1554  0]
    v = [-0.2388  0.8699  0.4268  0.0640
         -0.2503  0.3742 -0.8837 -0.1280
         -0.5526 -0.1875  0.1914 -0.7892
         -0.7583 -0.2608  0.0179  0.5972]
    % the transpose of v is =
        [-0.2388 -0.2503 -0.5526 -0.7583
          0.8699  0.3742 -0.1875 -0.2608
          0.4268 -0.8837  0.1914  0.0179
          0.0640 -0.1280 -0.7892  0.5972]

• 3 left singular vectors (the columns of u); each is 3 x 1
• 3 singular values on the diagonal of s: $s = [\sigma_1\ 0\ 0\ 0;\ 0\ \sigma_2\ 0\ 0;\ 0\ 0\ \sigma_3\ 0]$
• 4 right singular vectors (the columns of v); each is 4 x 1
• You can get back a from u, s, v, i.e. $a = u \, s \, v^T$

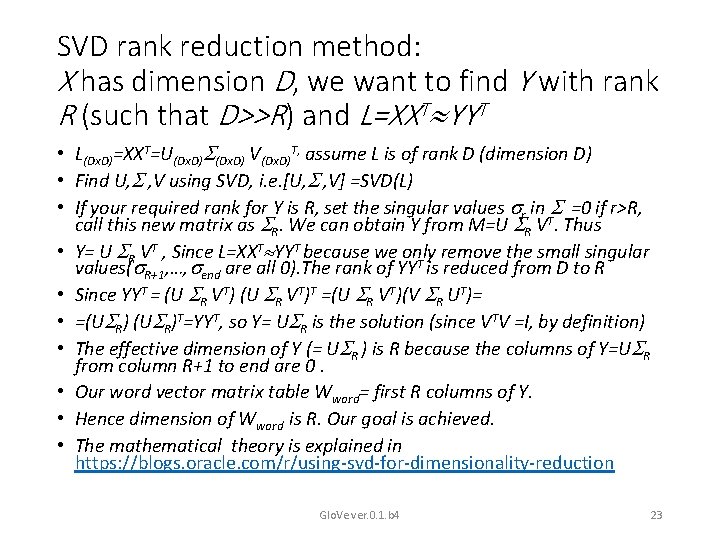

SVD rank reduction method: X has dimension D; we want to find Y with rank R (such that D >> R) and $L = XX^T \approx YY^T$
• Apply SVD to the co-occurrence matrix: $[U, \Sigma, V] = \mathrm{SVD}(X)$, so $X_{(D \times D)} = U_{(D \times D)} \, \Sigma \, V_{(D \times D)}^T$. Assume X has rank D (dimension D).
• If the required rank for Y is R, set the singular values $\sigma_r$ in $\Sigma$ to 0 for $r > R$, and call this new matrix $\Sigma_R$. This gives the rank-R matrix $M = U \Sigma_R V^T \approx X$.
• Take $Y = U \Sigma_R V^T$ as a first candidate. Then $L = XX^T \approx YY^T$, because we only removed the small singular values ($\sigma_{R+1}, \dots$ are all set to 0). The rank of $YY^T$ is reduced from D to R.
• Since $YY^T = (U \Sigma_R V^T)(U \Sigma_R V^T)^T = (U \Sigma_R V^T)(V \Sigma_R^T U^T) = (U \Sigma_R)(U \Sigma_R)^T$ (because $V^T V = I$ by definition), the simpler choice $Y = U \Sigma_R$ gives the same $YY^T$ and is the solution.
• The effective dimension of $Y = U \Sigma_R$ is R, because the columns of Y from column R+1 to the end are 0.
• Our word vector table $W_{word}$ = the first R columns of Y.
• Hence the dimension of $W_{word}$ is R. Our goal is achieved.
• The mathematical theory is explained in https://blogs.oracle.com/r/using-svd-for-dimensionality-reduction

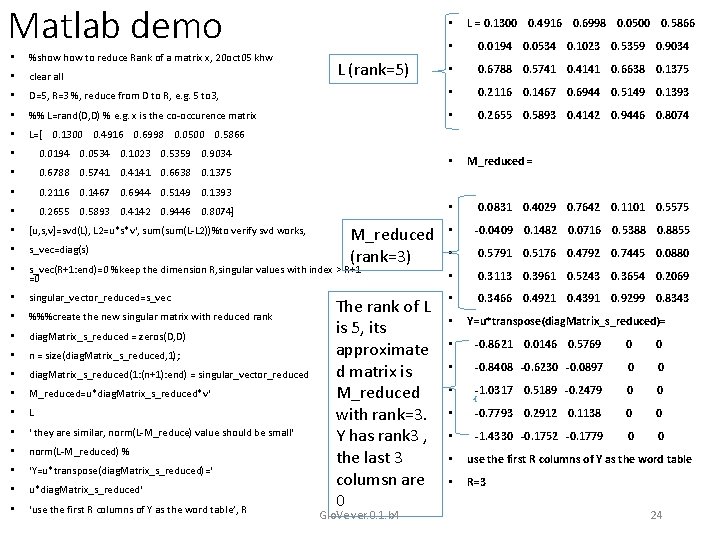

Matlab demo

    % show how to reduce the rank of a matrix, 20 oct 05 khw
    clear all
    D=5, R=3   % reduce from D to R, e.g. 5 to 3
    %% L=rand(D,D)   % e.g. the co-occurrence matrix
    L=[0.1300 0.4916 0.6998 0.0500 0.5866
       0.0194 0.0534 0.1023 0.5359 0.9034
       0.6788 0.5741 0.4141 0.6638 0.1375
       0.2116 0.1467 0.6944 0.5149 0.1393
       0.2655 0.5893 0.4142 0.9446 0.8074]
    [u,s,v]=svd(L), L2=u*s*v', sum(sum(L-L2))   % to verify svd works
    s_vec=diag(s)
    s_vec(R+1:end)=0   % keep the first R singular values; those with index > R are set to 0
    singular_vector_reduced=s_vec
    %%% create the new singular matrix with reduced rank
    diagMatrix_s_reduced = zeros(D,D)
    n = size(diagMatrix_s_reduced,1);
    diagMatrix_s_reduced(1:(n+1):end) = singular_vector_reduced
    M_reduced=u*diagMatrix_s_reduced*v'
    L
    'they are similar, norm(L-M_reduced) value should be small'
    norm(L-M_reduced)
    'Y=u*transpose(diagMatrix_s_reduced)='
    u*diagMatrix_s_reduced'
    'use the first R columns of Y as the word table', R

Output:

    L (rank=5) =
        0.1300 0.4916 0.6998 0.0500 0.5866
        0.0194 0.0534 0.1023 0.5359 0.9034
        0.6788 0.5741 0.4141 0.6638 0.1375
        0.2116 0.1467 0.6944 0.5149 0.1393
        0.2655 0.5893 0.4142 0.9446 0.8074
    M_reduced (rank=3) =
        0.0831 0.4029 0.7642 0.1101 0.5575
       -0.0409 0.1482 0.0716 0.5388 0.8855
        0.5791 0.5176 0.4792 0.7445 0.0880
        0.3113 0.3961 0.5243 0.3654 0.2069
        0.3466 0.4921 0.4391 0.9299 0.8343
    Y=u*transpose(diagMatrix_s_reduced)=
       -0.8621  0.0146  0.5769 0 0
       -0.8408 -0.6230 -0.0897 0 0
       -1.0317  0.5189 -0.2479 0 0
       -0.7793  0.2912  0.1138 0 0
       -1.4330 -0.1752 -0.1779 0 0
    use the first R columns of Y as the word table
    R=3

• The rank of L is 5; its approximation M_reduced has rank 3. Y has rank 3, and its last 2 columns are 0.
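The MATLAB demo relies on the built-in svd(). To show what "keeping only the largest singular values" means without any library, the sketch below finds the largest singular value and its two singular vectors by power iteration and forms the rank-1 approximation. Power iteration is a swapped-in technique chosen for brevity, not what svd() does internally, and the 2 x 2 matrix and all function names are made up for illustration.

```python
import math

def matvec(m, v):
    """Matrix-vector product for a list-of-rows matrix."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]

def transpose(m):
    return [list(col) for col in zip(*m)]

def norm(v):
    return math.sqrt(sum(x * x for x in v))

def top_singular_triplet(a, iterations=200):
    """Power iteration on A^T A: returns (sigma_1, u_1, v_1)."""
    at = transpose(a)
    v = [1.0] * len(a[0])          # arbitrary non-zero start vector
    for _ in range(iterations):
        v = matvec(at, matvec(a, v))
        n = norm(v)
        v = [x / n for x in v]
    av = matvec(a, v)
    sigma = norm(av)               # largest singular value
    u = [x / sigma for x in av]    # matching left singular vector
    return sigma, u, v

a = [[3.0, 0.0], [0.0, 1.0]]       # toy matrix with singular values 3 and 1
sigma, u, v = top_singular_triplet(a)

# Rank-1 approximation sigma_1 * u_1 * v_1^T (all other singular values set to 0).
rank1 = [[sigma * ui * vj for vj in v] for ui in u]
residual = [[a[r][c] - rank1[r][c] for c in range(2)] for r in range(2)]
print(sigma)
print(rank1)
print(residual)
```

For this toy matrix the part that is thrown away is exactly the contribution of the second singular value, which is the same effect as `s_vec(R+1:end)=0` in the MATLAB demo with R = 1.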

![The SVD algorithm](http://slidetodoc.com/presentation_image_h2/5763423c977af0f01418ff904d3301c8/image-25.jpg)

The SVD algorithm
• Given the co-occurrence matrix X, compute $[U, \Sigma, V] = \mathrm{svd}(X)$.
• Set the singular values $\sigma_r$ in $\Sigma$ to 0 for $r > R$; call this new singular-value matrix $\Sigma_R$.
• $M_{reduced} = U \Sigma_R V^T$, such that $X \approx M_{reduced}$ and $L = XX^T \approx M_{reduced} M_{reduced}^T = YY^T$; therefore $Y = U \Sigma_R$.
• The SVD word-vector table is the first R columns of Y. It has dimension R (D >> R) for each word, e.g. D = 100,000 and R = 300.
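How much is lost by the truncation can be stated precisely. The block below is a sketch of the standard result (the Eckart-Young theorem), assuming the SVD is taken of X itself and the singular values are sorted in decreasing order; the symbol $X_R$ for the truncated matrix is introduced here and does not appear on the slides.

```latex
\begin{align}
X &= U \Sigma V^T, \qquad \sigma_1 \ge \sigma_2 \ge \dots \ge \sigma_D \ge 0 \\
X_R &= U \Sigma_R V^T \quad \text{(keep } \sigma_1, \dots, \sigma_R \text{, set the rest to 0)} \\
\lVert X - X_R \rVert_2 &= \sigma_{R+1}, \qquad
\lVert X - X_R \rVert_F = \sqrt{\textstyle\sum_{r > R} \sigma_r^2} \\
X_R X_R^T &= U \Sigma_R V^T V \Sigma_R^T U^T = (U \Sigma_R)(U \Sigma_R)^T
\end{align}
```

No other matrix of rank R is closer to X in either norm, so if the discarded singular values are small, replacing X by $Y = U \Sigma_R$ changes the inner products between word vectors only slightly.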

The context prediction method (To be added)
• Continuous Bag of Words (CBOW)
• Skip-Gram Model (SGM)
• Word2Vec uses CBOW or SGM to build a neural net

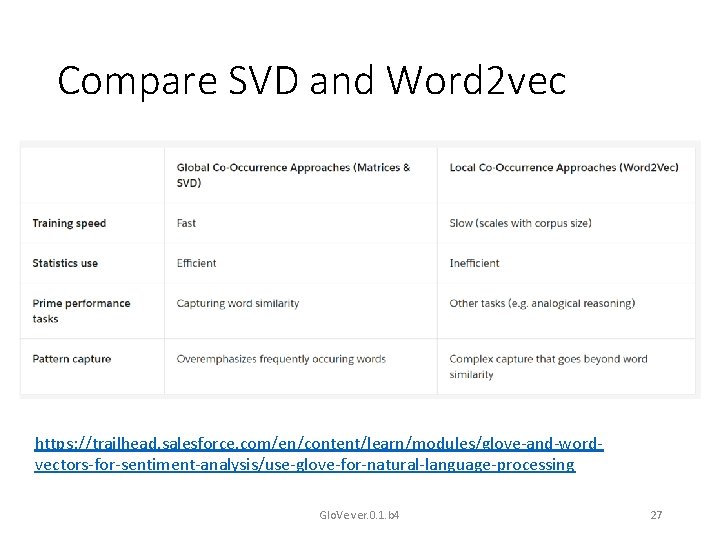

Compare SVD and Word2Vec
• https://trailhead.salesforce.com/en/content/learn/modules/glove-and-word-vectors-for-sentiment-analysis/use-glove-for-natural-language-processing

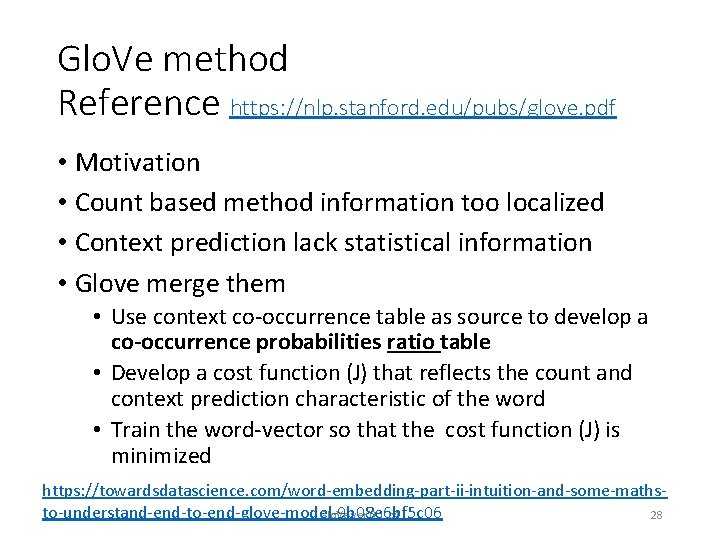

GloVe method
• Reference: https://nlp.stanford.edu/pubs/glove.pdf
• Motivation
  • Count-based methods: the information is too localized
  • Context prediction methods lack statistical information
  • GloVe merges them
• Use the context co-occurrence table as the source to develop a table of co-occurrence probability ratios
• Develop a cost function (J) that reflects the count and context prediction characteristics of the words
• Train the word vectors so that the cost function (J) is minimized
• https://towardsdatascience.com/word-embedding-part-ii-intuition-and-some-maths-to-understand-end-to-end-glove-model-9b08e6bf5c06

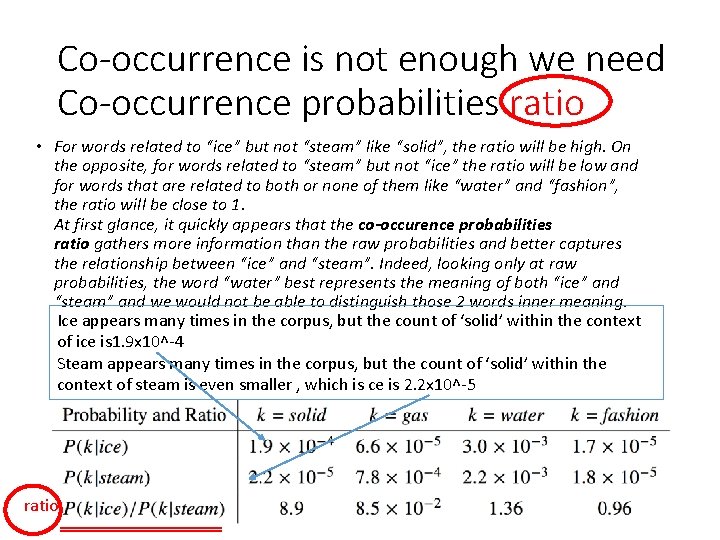

Co-occurrence is not enough; we need the co-occurrence probabilities ratio
• For words related to “ice” but not “steam”, like “solid”, the ratio will be high. Conversely, for words related to “steam” but not “ice” the ratio will be low, and for words that are related to both or neither of them, like “water” and “fashion”, the ratio will be close to 1.
• The co-occurrence probabilities ratio therefore carries more information than the raw probabilities and better captures the relationship between “ice” and “steam”. Indeed, looking only at raw probabilities, the word “water” best represents the meaning of both “ice” and “steam”, and we would not be able to distinguish the inner meaning of those 2 words.
• “Ice” appears many times in the corpus, but the probability of ‘solid’ appearing within the context of ice is 1.9 x 10^-4.
• “Steam” appears many times in the corpus, but the probability of ‘solid’ appearing within the context of steam is even smaller, 2.2 x 10^-5, so the ratio is high (much greater than 1).

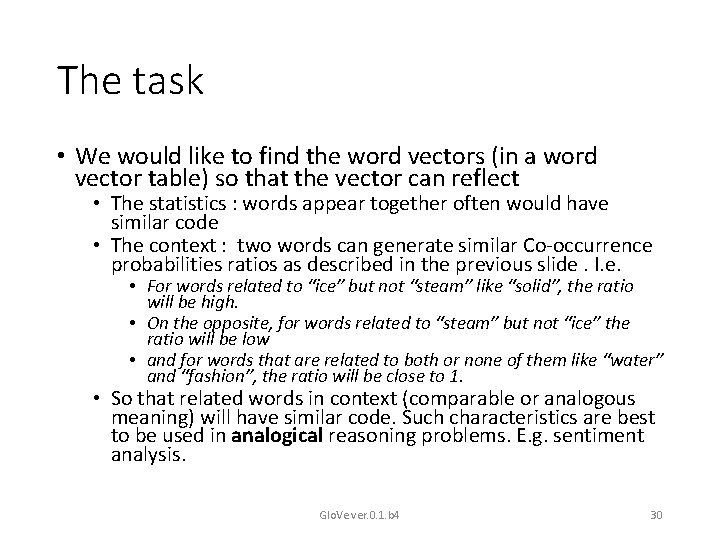
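The three regimes of the ratio (high, low, close to 1) can be checked numerically. The sketch below uses the rounded probabilities from Table 1 of the GloVe paper cited above; only the ‘solid’ values are quoted in the slide text, so the other entries are taken from the paper. The ratios printed here differ slightly from the ones reported in the paper, which were computed before rounding.

```python
# P(k | ice) and P(k | steam) for four probe words k (rounded values, GloVe paper Table 1).
p_given_ice = {"solid": 1.9e-4, "gas": 6.6e-5, "water": 3.0e-3, "fashion": 1.7e-5}
p_given_steam = {"solid": 2.2e-5, "gas": 7.8e-4, "water": 2.2e-3, "fashion": 1.8e-5}

ratios = {k: p_given_ice[k] / p_given_steam[k] for k in p_given_ice}

for word, ratio in ratios.items():
    print(f"{word:8s} P(k|ice)/P(k|steam) = {ratio:.3f}")
```

‘solid’ gives a ratio well above 1, ‘gas’ a ratio well below 1, and both ‘water’ (related to both) and ‘fashion’ (related to neither) give a ratio near 1, even though their raw probabilities differ by two orders of magnitude.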

The task
• We would like to find the word vectors (in a word vector table) so that the vectors reflect:
  • The statistics: words that often appear together should have similar codes
  • The context: two words should generate co-occurrence probability ratios as described in the previous slide, i.e.
    • For words related to “ice” but not “steam”, like “solid”, the ratio will be high.
    • Conversely, for words related to “steam” but not “ice” the ratio will be low,
    • and for words that are related to both or neither of them, like “water” and “fashion”, the ratio will be close to 1.
• In this way, words related in context (comparable or analogous meaning) will have similar codes. Such characteristics are well suited to analogical reasoning problems, e.g. sentiment analysis.

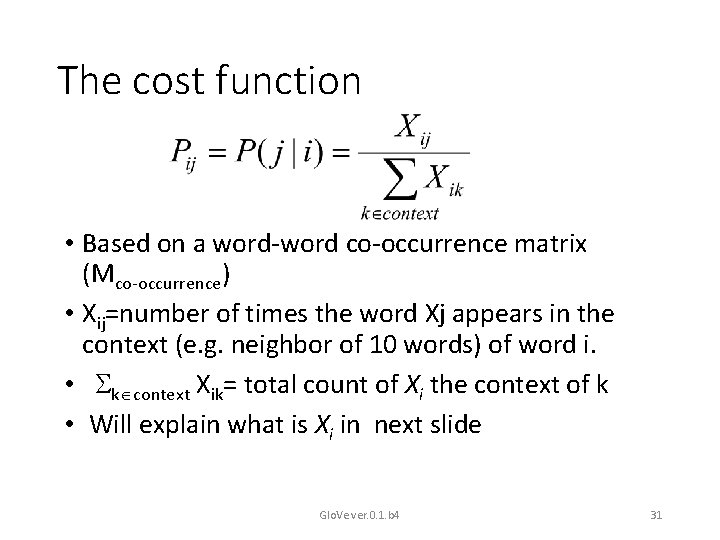

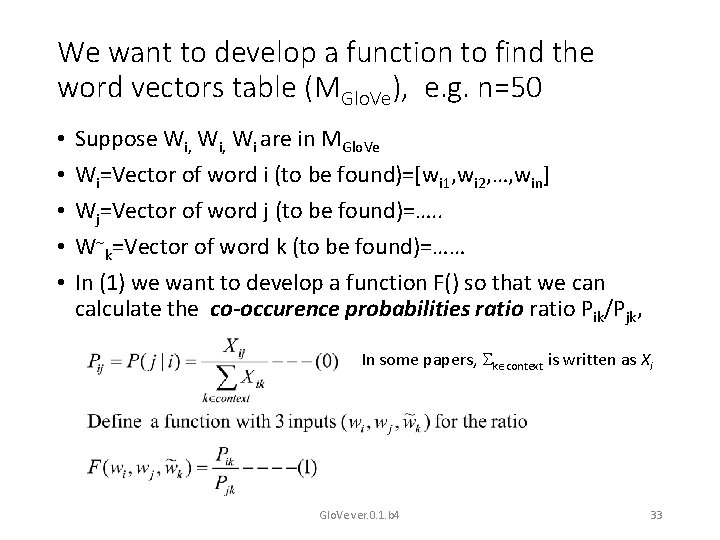

The cost function
• Based on a word-word co-occurrence matrix ($M_{co\text{-}occurrence}$)
• $X_{ij}$ = number of times word j appears in the context (e.g. a neighborhood of 10 words) of word i.
• $\sum_{k \in context} X_{ik}$ = total count of all words k that appear in the context of word i
• What $X_i$ is will be explained in the next slide

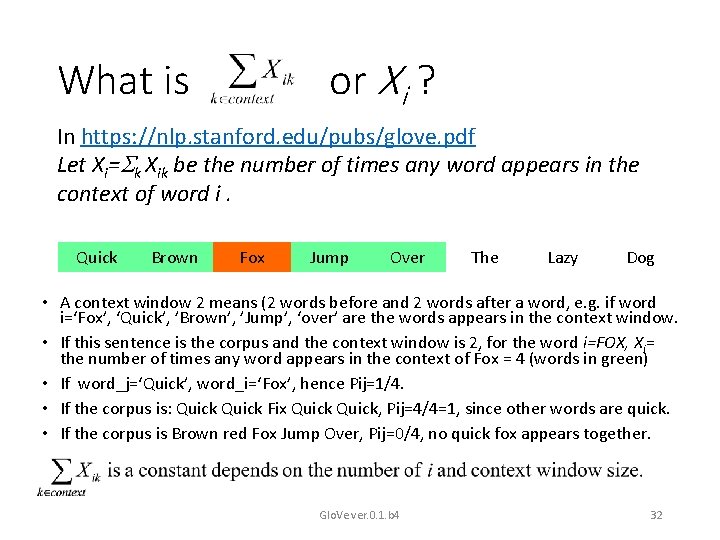

What is $\sum_k X_{ik}$, or $X_i$?
• In https://nlp.stanford.edu/pubs/glove.pdf: let $X_i = \sum_k X_{ik}$ be the number of times any word appears in the context of word i.
• Example sentence: Quick Brown Fox Jump Over The Lazy Dog
• A context window of 2 means 2 words before and 2 words after a word; e.g. if word i = ‘Fox’, then ‘Quick’, ‘Brown’, ‘Jump’ and ‘Over’ are the words that appear in the context window.
• If this sentence is the corpus and the context window is 2, then for word i = ‘Fox’, $X_i$ = the number of times any word appears in the context of ‘Fox’ = 4 (the words in green).
• With $P_{ij} = X_{ij} / X_i$: if word j = ‘Quick’ and word i = ‘Fox’, then $P_{ij} = 1/4$.
• If the corpus is ‘Quick Quick Fox Quick Quick’, $P_{ij} = 4/4 = 1$, since all the other words are ‘Quick’.
• If the corpus is ‘Brown Red Fox Jump Over’, $P_{ij} = 0/4 = 0$, since ‘Quick’ and ‘Fox’ never appear together.
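The quantities $X_{ij}$, $X_i$ and $P_{ij}$ for the example sentence can be computed directly. This is a minimal sketch, assuming the single sentence is the whole corpus and a symmetric window of 2; the helper name `context_counts` is hypothetical.

```python
from collections import Counter

def context_counts(tokens, target, window=2):
    """Return X_ij for a fixed word i = target: how often each word j
    appears within `window` positions of the target."""
    counts = Counter()
    for i, word in enumerate(tokens):
        if word == target:
            lo = max(0, i - window)
            hi = min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    counts[tokens[j]] += 1
    return counts

tokens = "Quick Brown Fox Jump Over The Lazy Dog".split()
x_fox = context_counts(tokens, "Fox")      # X_ij for i = 'Fox'
x_i = sum(x_fox.values())                  # X_i = sum over k of X_ik
p_quick_given_fox = x_fox["Quick"] / x_i   # P_ij = X_ij / X_i

print(x_i)                # 4
print(p_quick_given_fox)  # 0.25
```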

We want to develop a function to find the word vector table ($M_{GloVe}$), e.g. n = 50
• Suppose $W_i$, $W_j$, $W_k$ are in $M_{GloVe}$
• $W_i$ = vector of word i (to be found) = $[w_{i1}, w_{i2}, \dots, w_{in}]$
• $W_j$ = vector of word j (to be found) = …
• $W_k$ = vector of word k (to be found) = …
• In (1), $F(w_i, w_j, w_k) = P_{ik} / P_{jk}$, we want to develop a function F() so that we can calculate the co-occurrence probabilities ratio $P_{ik}/P_{jk}$
• In some papers, $\sum_{k \in context} X_{ik}$ is written as $X_i$

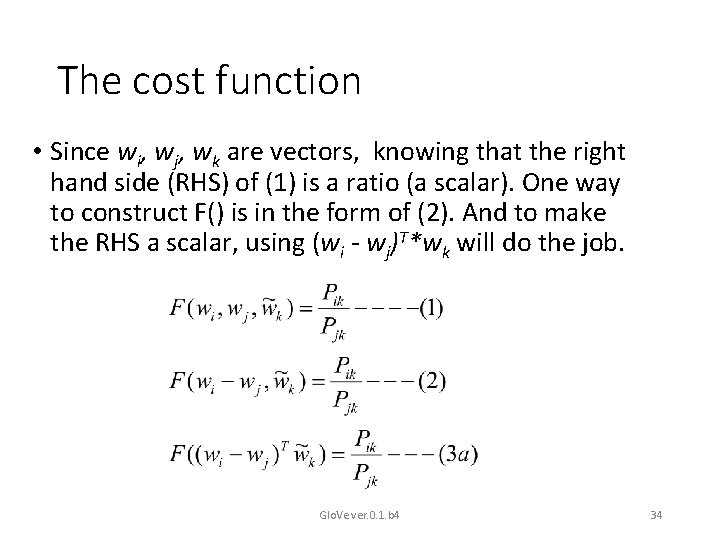

The cost function
• $w_i$, $w_j$, $w_k$ are vectors, while the right-hand side (RHS) of (1) is a ratio (a scalar). One way to construct F() is the form in (2).
• To make the argument of F() a scalar, using $(w_i - w_j)^T w_k$ will do the job.

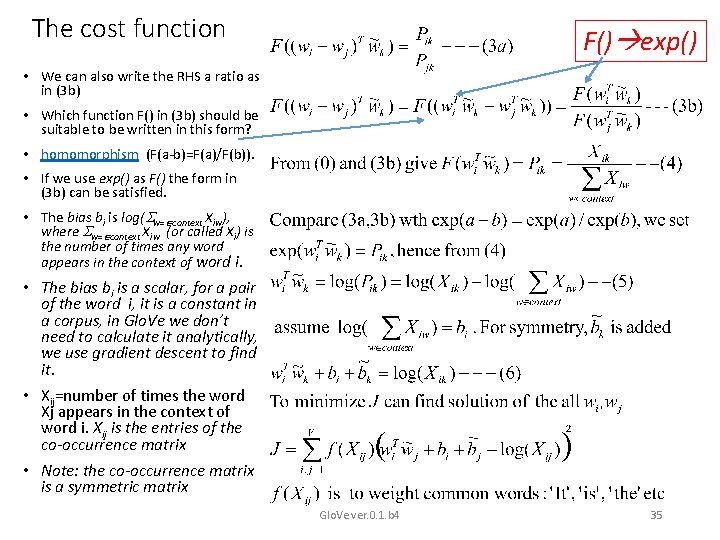

The cost function: F() = exp()
• We can also write the RHS as a ratio, as in (3b)
• Which function F() is suitable to be written in the form of (3b)?
• A homomorphism: F(a − b) = F(a)/F(b).
• If we use exp() as F(), the form in (3b) is satisfied.
• The bias $b_i$ is $\log(\sum_{w \in context} X_{iw})$, where $\sum_{w \in context} X_{iw}$ (also called $X_i$) is the number of times any word appears in the context of word i.
• The bias $b_i$ is a scalar; for a given word i it is a constant in a corpus. In GloVe we don't need to calculate it analytically; we use gradient descent to find it.
• $X_{ij}$ = number of times word j appears in the context of word i. The $X_{ij}$ are the entries of the co-occurrence matrix.
• Note: the co-occurrence matrix is symmetric.

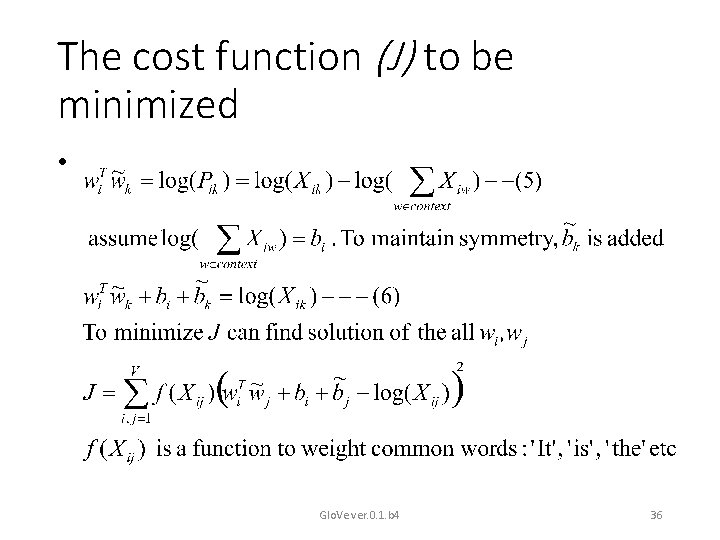
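The step from (3b) to a model that can be trained can be written out as follows. This is a sketch following the derivation in the GloVe paper cited above; the tilde on the context vector $\tilde{w}_k$ and the context bias $\tilde{b}_k$ are the paper's notation and are assumptions relative to the slides, which write $w_k$.

```latex
\begin{align}
F\big((w_i - w_j)^T \tilde{w}_k\big)
  &= \frac{F(w_i^T \tilde{w}_k)}{F(w_j^T \tilde{w}_k)}
   = \frac{P_{ik}}{P_{jk}} \\
F = \exp \;\Rightarrow\; w_i^T \tilde{w}_k
  &= \log P_{ik} = \log X_{ik} - \log X_i \\
w_i^T \tilde{w}_k + b_i + \tilde{b}_k &= \log X_{ik}
\end{align}
```

In the last line $\log X_i$ does not depend on k, so it is absorbed into the bias $b_i$; the second bias $\tilde{b}_k$ is added so that the roles of word and context word can be exchanged.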

The cost function (J) to be minimized

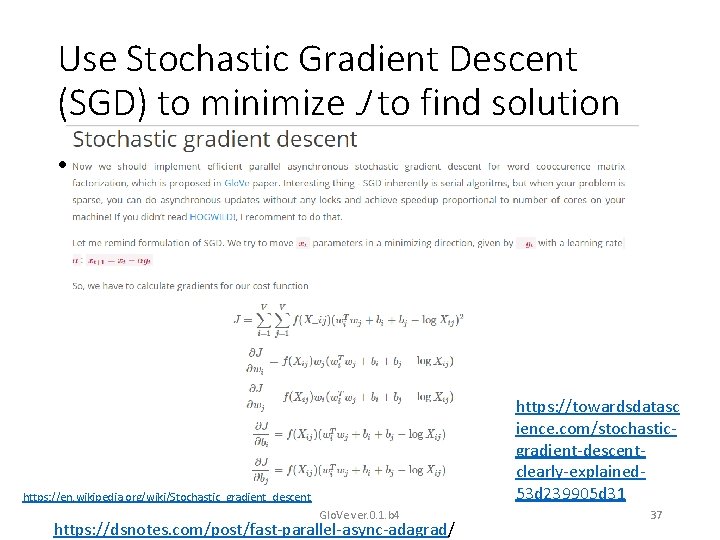
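In the GloVe paper the cost is $J = \sum_{i,j} f(X_{ij}) \, (w_i^T \tilde{w}_j + b_i + \tilde{b}_j - \log X_{ij})^2$, with the weighting $f(x) = (x / x_{max})^{\alpha}$ for $x < x_{max}$ and 1 otherwise. A minimal sketch of evaluating J follows, assuming the paper's values $x_{max} = 100$ and $\alpha = 0.75$. The function names, the toy 2 x 2 count matrix and the parameter values are made up for illustration, and zero counts are skipped because $f(0) = 0$.

```python
import math

def weight(x, x_max=100.0, alpha=0.75):
    """Weighting function f(x): damps rare pairs, caps frequent ones at 1."""
    return (x / x_max) ** alpha if x < x_max else 1.0

def glove_cost(X, W, W_ctx, b, b_ctx):
    """Weighted least-squares cost J over all non-zero counts X[i][j]."""
    total = 0.0
    for i, row in enumerate(X):
        for j, x_ij in enumerate(row):
            if x_ij > 0:
                pred = sum(p * q for p, q in zip(W[i], W_ctx[j])) + b[i] + b_ctx[j]
                total += weight(x_ij) * (pred - math.log(x_ij)) ** 2
    return total

X = [[0, 4], [4, 0]]                  # toy co-occurrence counts
zeros = [[0.0, 0.0], [0.0, 0.0]]      # all-zero word and context vectors

# All parameters zero: every prediction is 0, so each term is f(4) * log(4)^2.
j_zero = glove_cost(X, zeros, zeros, [0.0, 0.0], [0.0, 0.0])

# Biases chosen so that b_i + b_j = log(4): the model fits exactly and J = 0.
half = math.log(4) / 2
j_fit = glove_cost(X, zeros, zeros, [half, half], [half, half])

print(j_zero, j_fit)
```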

Use Stochastic Gradient Descent (SGD) to minimize J to find the solution
• https://towardsdatascience.com/stochastic-gradient-descent-clearly-explained-53d239905d31
• https://en.wikipedia.org/wiki/Stochastic_gradient_descent
• https://dsnotes.com/post/fast-parallel-async-adagrad/
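A plain SGD loop for the GloVe cost can be sketched as below. This is an illustration under several assumptions, not the reference implementation: the GloVe authors train with AdaGrad (the subject of the last link above), whereas this sketch uses a fixed learning rate. The 3 x 3 count matrix, the vector dimension of 2, the learning rate and the toy value $x_{max} = 10$ are all made up so that the example runs instantly.

```python
import math
import random

def weight(x, x_max=10.0, alpha=0.75):
    """Weighting f(x); x_max=10 is a toy value (the paper uses 100)."""
    return (x / x_max) ** alpha if x < x_max else 1.0

def dot(a, b):
    return sum(p * q for p, q in zip(a, b))

def cost(X, W, C, b, c):
    """J summed over all non-zero counts."""
    return sum(
        weight(x) * (dot(W[i], C[j]) + b[i] + c[j] - math.log(x)) ** 2
        for i, row in enumerate(X) for j, x in enumerate(row) if x > 0
    )

def sgd_epoch(X, W, C, b, c, lr, rng):
    """One pass over the non-zero entries in random order."""
    pairs = [(i, j) for i, row in enumerate(X) for j, x in enumerate(row) if x > 0]
    rng.shuffle(pairs)
    for i, j in pairs:
        diff = dot(W[i], C[j]) + b[i] + c[j] - math.log(X[i][j])
        g = 2.0 * weight(X[i][j]) * diff          # common gradient factor
        for d in range(len(W[i])):
            w_old, c_old = W[i][d], C[j][d]       # use old values for both updates
            W[i][d] -= lr * g * c_old
            C[j][d] -= lr * g * w_old
        b[i] -= lr * g
        c[j] -= lr * g

rng = random.Random(0)
X = [[0, 4, 2], [4, 0, 1], [2, 1, 0]]             # toy co-occurrence counts
dim = 2
W = [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in X]   # word vectors
C = [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in X]   # context vectors
b = [0.0] * len(X)
c = [0.0] * len(X)

cost_before = cost(X, W, C, b, c)
for _ in range(200):
    sgd_epoch(X, W, C, b, c, lr=0.05, rng=rng)
cost_after = cost(X, W, C, b, c)
print(cost_before, cost_after)
```

After training, the rows of W (or, as the GloVe paper suggests, the sum of W and C) play the role of the word vector table $M_{GloVe}$.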

Summary
• Studied methods for word representation (embedding), including:
  • Count-based method using SVD
  • Context prediction methods: Word2Vec
  • Count-based plus prediction-based: GloVe

References
1. https://medium.com/ai-society/jkljlj-7d6e699895c4
2. GloVe: Global Vectors for Word Representation, by Jeffrey Pennington et al., https://towardsdatascience.com/glove-research-paper-clearly-explained-7d2c3641b8a6
3. https://nlp.stanford.edu/projects/glove/ (download pre-trained word vectors, e.g. glove.6B.50d.txt)
4. https://towardsdatascience.com/light-on-math-ml-intuitive-guide-to-understanding-glove-embeddings-b13b4f19c010
5. https://towardsdatascience.com/understanding-feature-engineering-part-4-deep-learning-methods-for-text-data-96c44370bbfa
6. https://medium.com/sciforce/word-vectors-in-natural-language-processing-global-vectors-glove-51339db89639
7. http://text2vec.org/glove.html
