Word2Vec

Sivan Biham & Adam Yaari

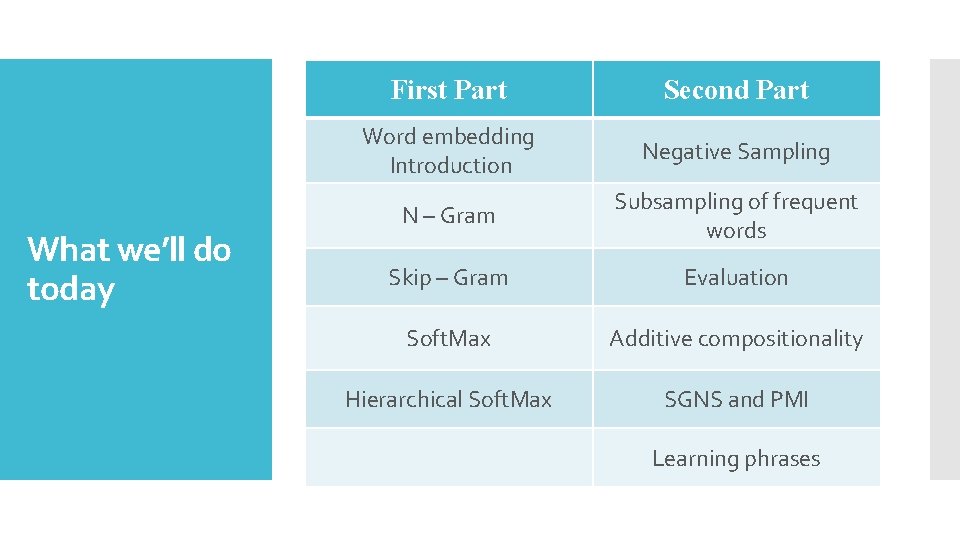

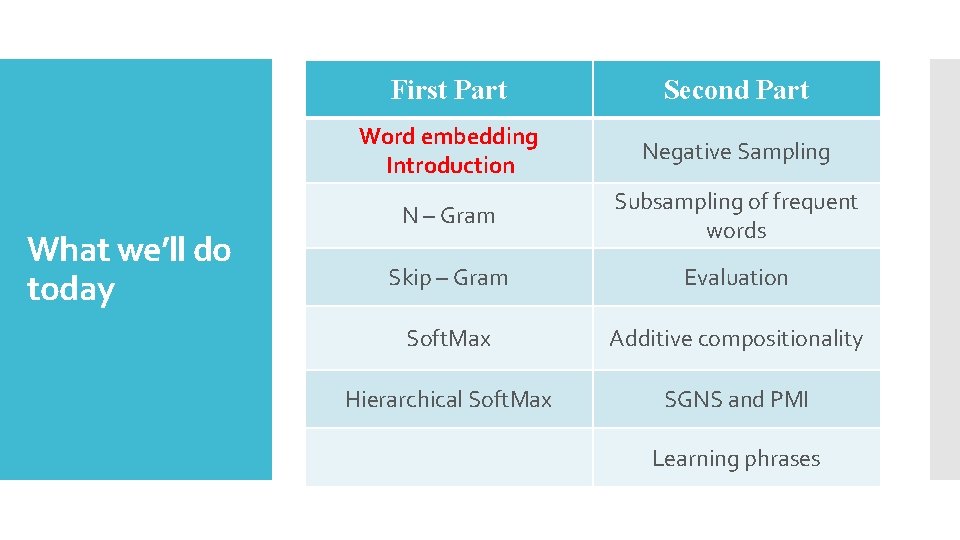

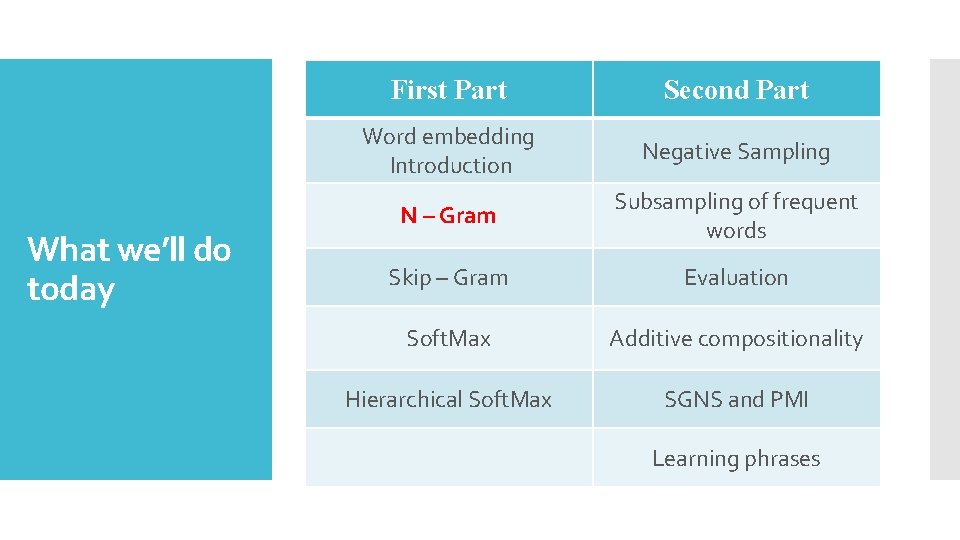

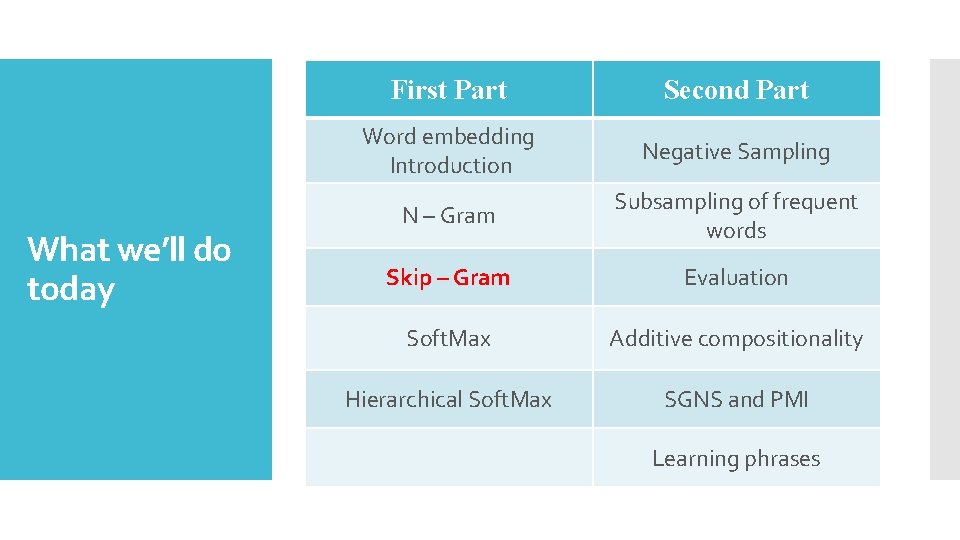

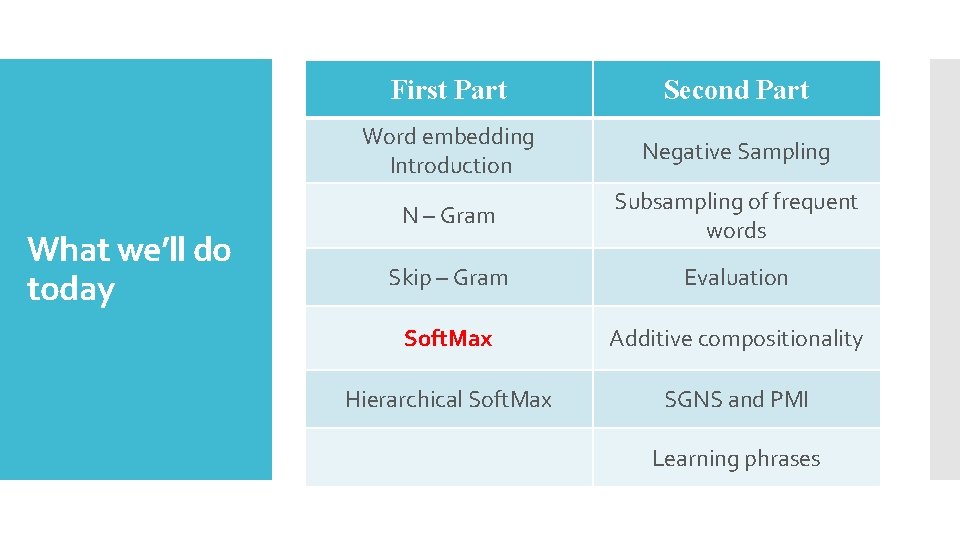

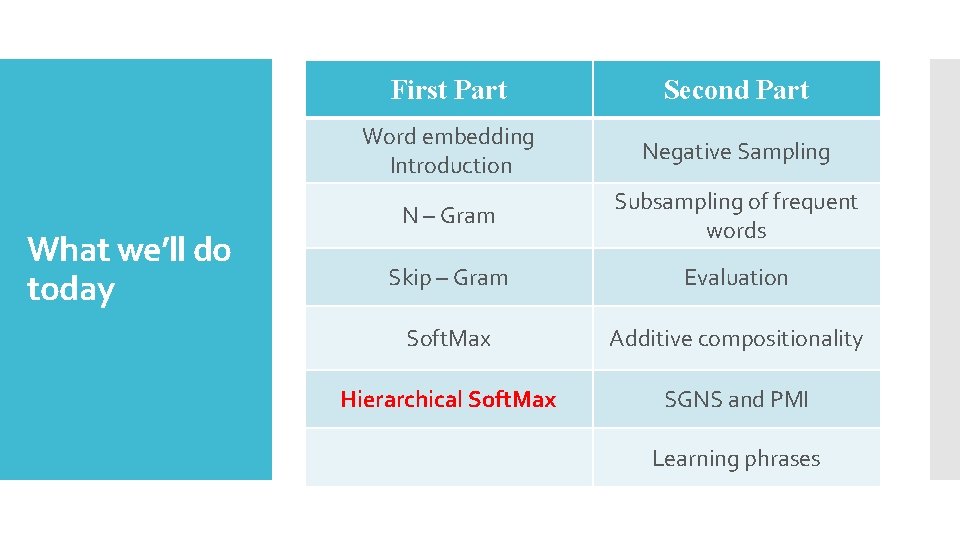

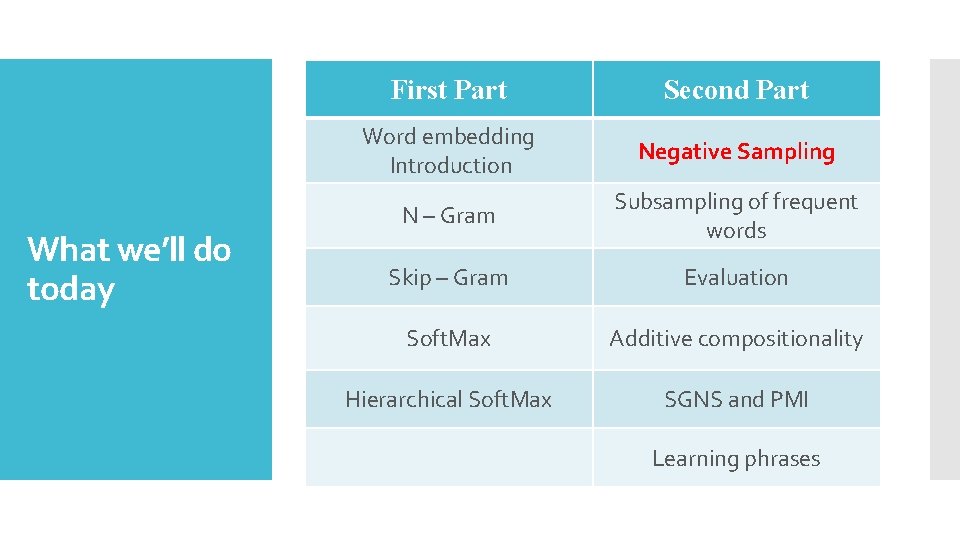

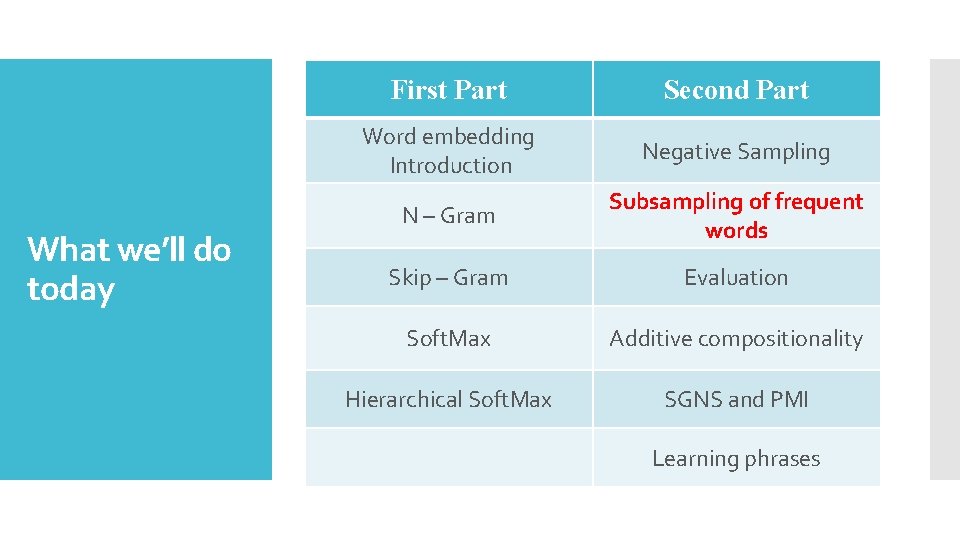

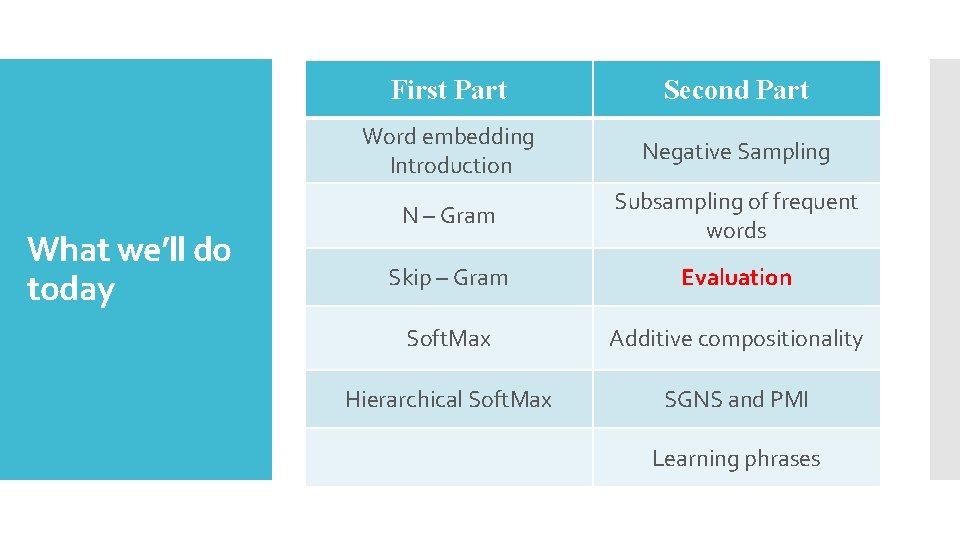

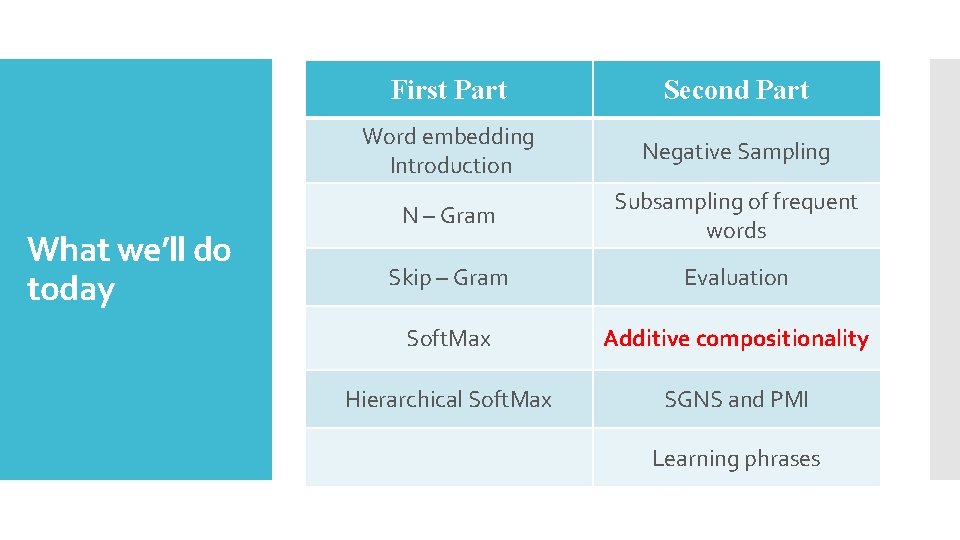

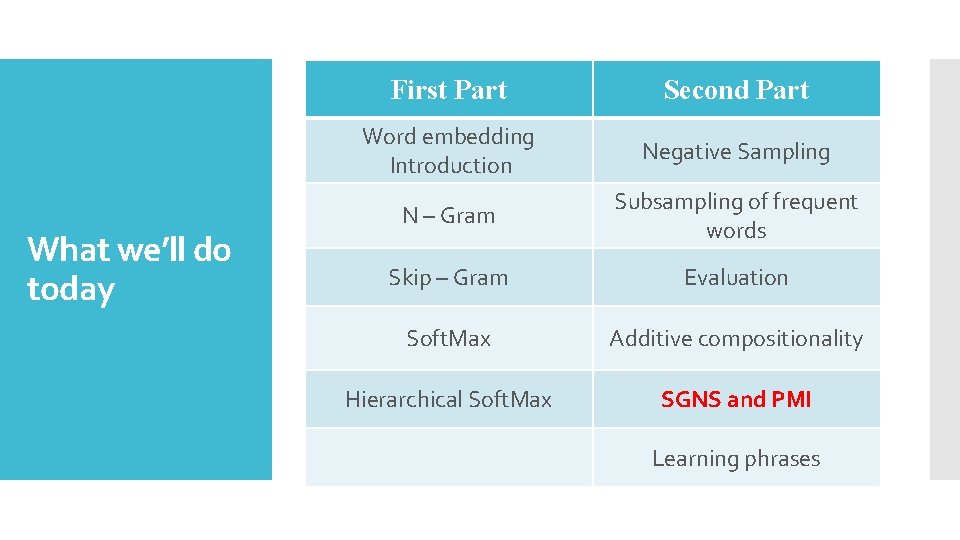

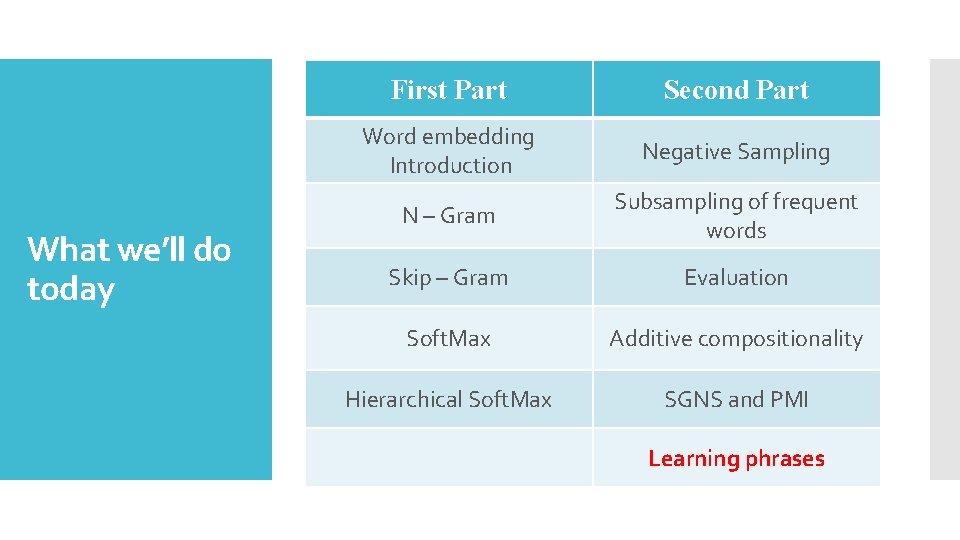

What we’ll do today

First Part
• Word embedding introduction
• N-Gram
• Skip-Gram
• SoftMax
• Hierarchical SoftMax

Second Part
• Negative Sampling
• Subsampling of frequent words
• Evaluation
• Additive compositionality
• SGNS and PMI
• Learning phrases

Motivation

Natural Language Processing (NLP) applications and products:

What is NLP?

A way for computers to analyze, understand, and derive meaning from human language.

• Text mining
• Machine translation
• Automated question answering
• Automatic text summarization
• And many more…

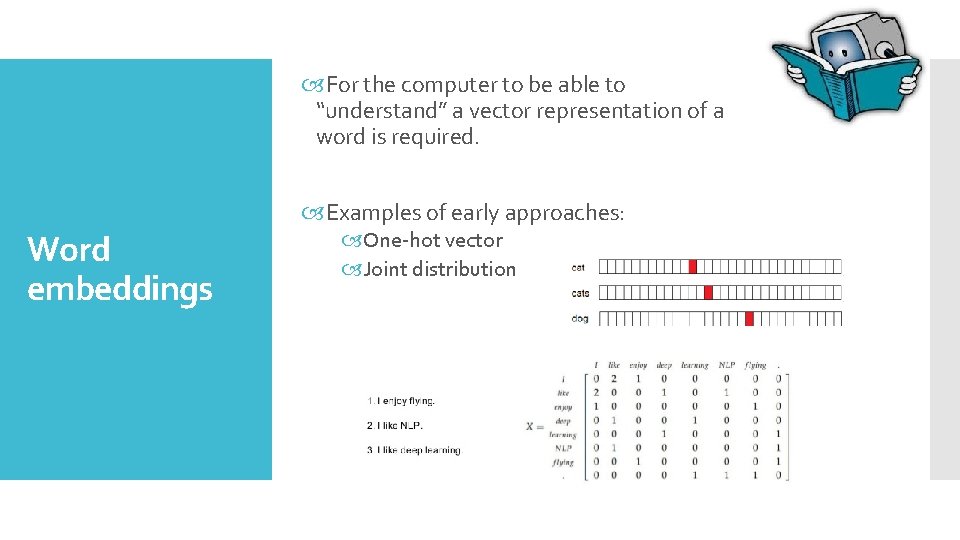

Word embeddings

For the computer to be able to “understand” a word, a vector representation of that word is required. Examples of early approaches:

• One-hot vector
• Joint distribution

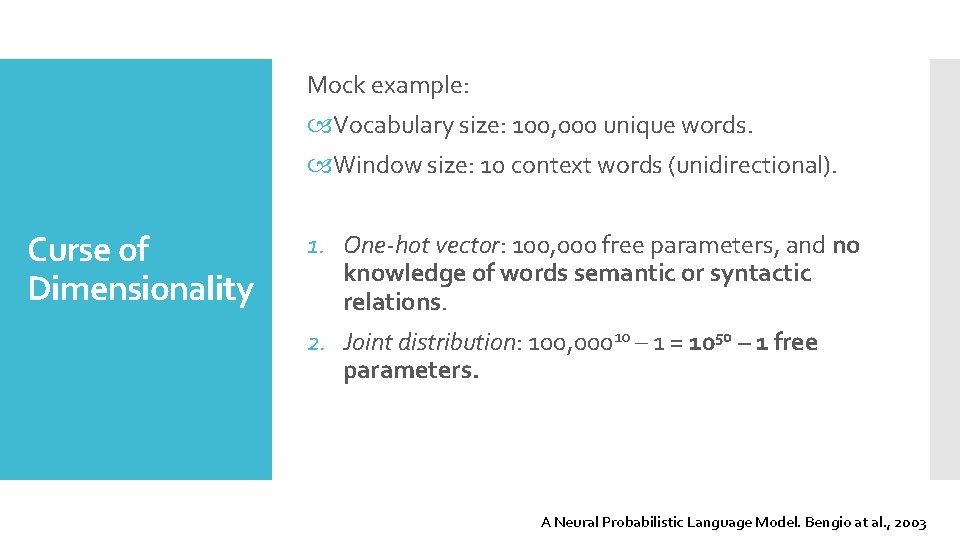

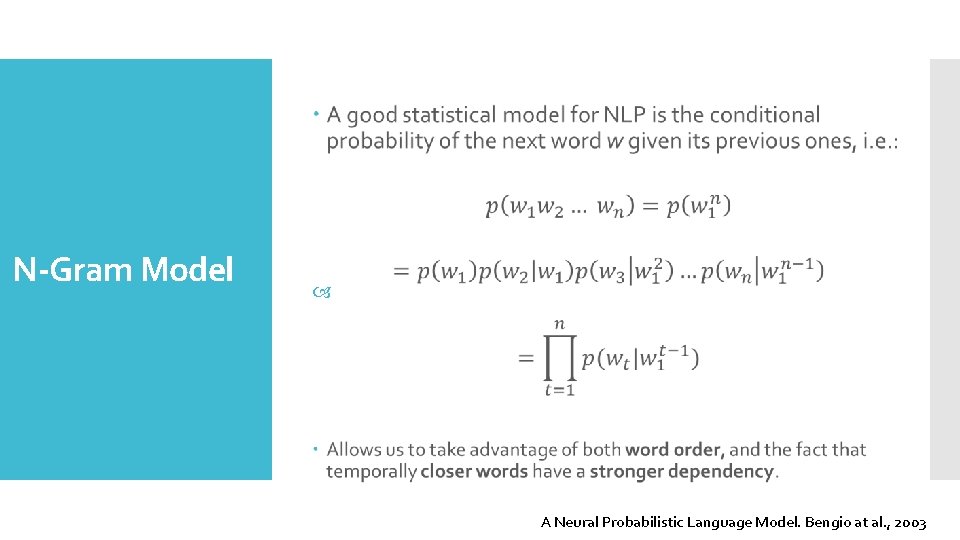

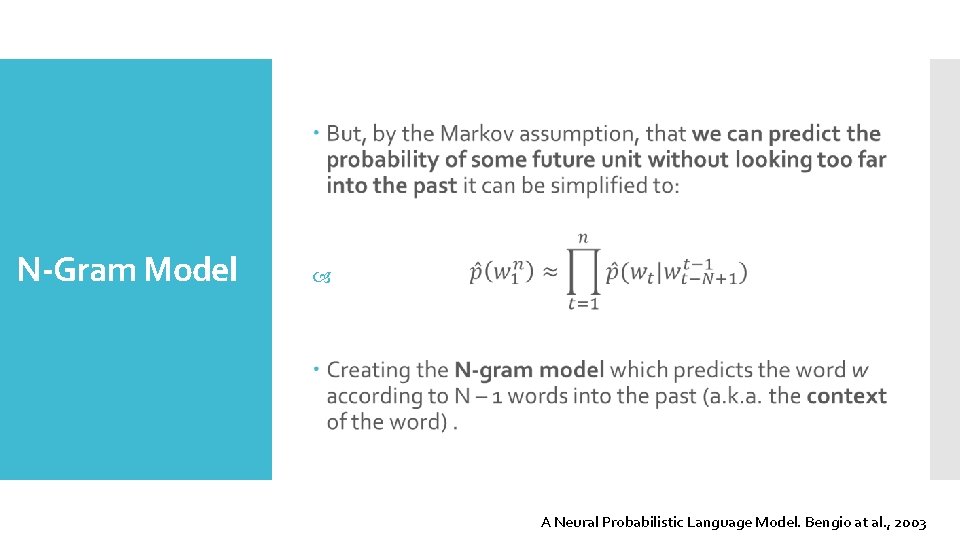

Curse of Dimensionality

Mock example:
• Vocabulary size: 100,000 unique words.
• Window size: 10 context words (unidirectional).

1. One-hot vector: 100,000 free parameters, and no knowledge of the words' semantic or syntactic relations.
2. Joint distribution: $100{,}000^{10} - 1 = 10^{50} - 1$ free parameters.

A Neural Probabilistic Language Model. Bengio et al., 2003
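The two counts in the mock example can be checked with a few lines of Python. This is only an arithmetic sketch; the variable names are illustrative and not from the slides.

```python
# Free-parameter counts for the mock example (names are illustrative).
vocab_size = 100_000   # unique words in the vocabulary
window = 10            # context words in the unidirectional window

# One-hot: one dimension per vocabulary word.
one_hot_params = vocab_size

# Joint distribution over `window` consecutive words: one probability per
# possible word sequence, minus one because the probabilities sum to 1.
joint_params = vocab_size ** window - 1

print(one_hot_params)
print(joint_params == 10 ** 50 - 1)
```

Python integers have arbitrary precision, so the $10^{50}$ figure is computed exactly rather than approximated.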

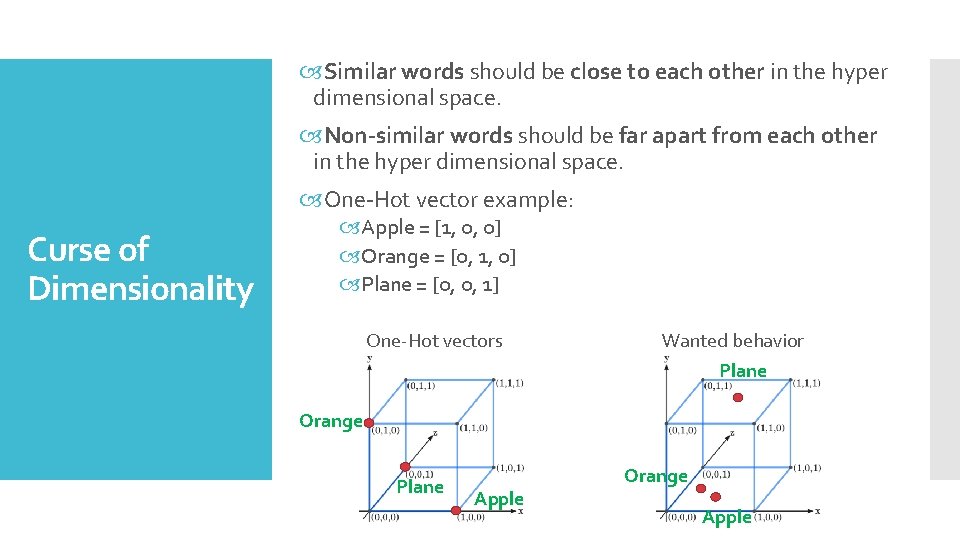

Curse of Dimensionality

Wanted behavior:
• Similar words should be close to each other in the high-dimensional space.
• Dissimilar words should be far apart from each other in the high-dimensional space.

One-hot vector example:
• Apple = [1, 0, 0]
• Orange = [0, 1, 0]
• Plane = [0, 0, 1]

[Figure: two plots of Apple, Orange, and Plane, labeled "One-Hot vectors" and "Wanted behavior".]
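A small sketch of why one-hot vectors miss the wanted behavior: every pair of distinct words is equally dissimilar, so Apple is no closer to Orange than to Plane. The helper `cosine_similarity` is written here for illustration only.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# The one-hot vectors from the slide.
one_hot = {"Apple": [1, 0, 0], "Orange": [0, 1, 0], "Plane": [0, 0, 1]}

sim_apple_orange = cosine_similarity(one_hot["Apple"], one_hot["Orange"])
sim_apple_plane = cosine_similarity(one_hot["Apple"], one_hot["Plane"])

# Both similarities are identical: the encoding carries no notion of "fruit".
print(sim_apple_orange, sim_apple_plane)
```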

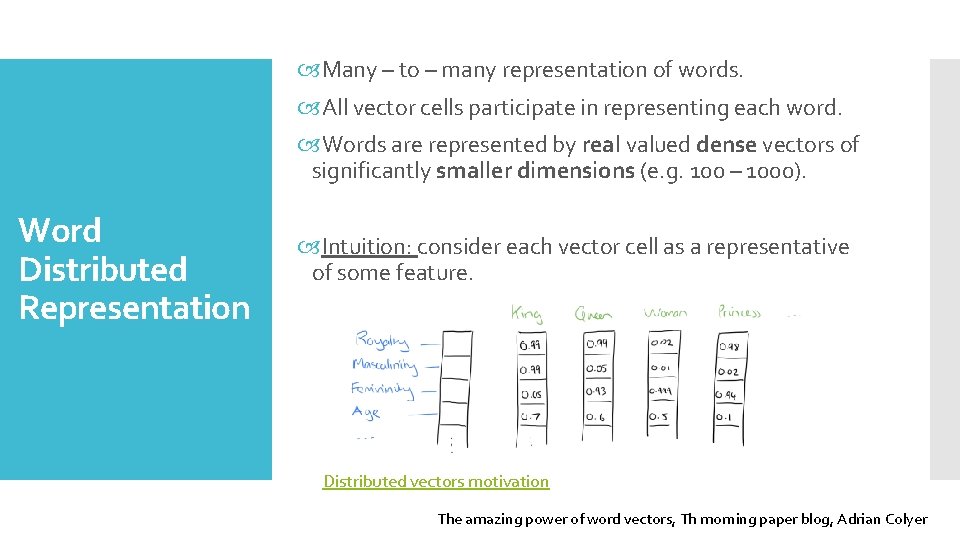

Word Distributed Representation

• Many-to-many representation of words.
• All vector cells participate in representing each word.
• Words are represented by real-valued dense vectors of significantly smaller dimensions (e.g. 100 to 1000).
• Intuition: consider each vector cell as a representative of some feature.

Distributed vectors motivation: The amazing power of word vectors, The Morning Paper blog, Adrian Colyer


N-Gram Model

A Neural Probabilistic Language Model. Bengio et al., 2003

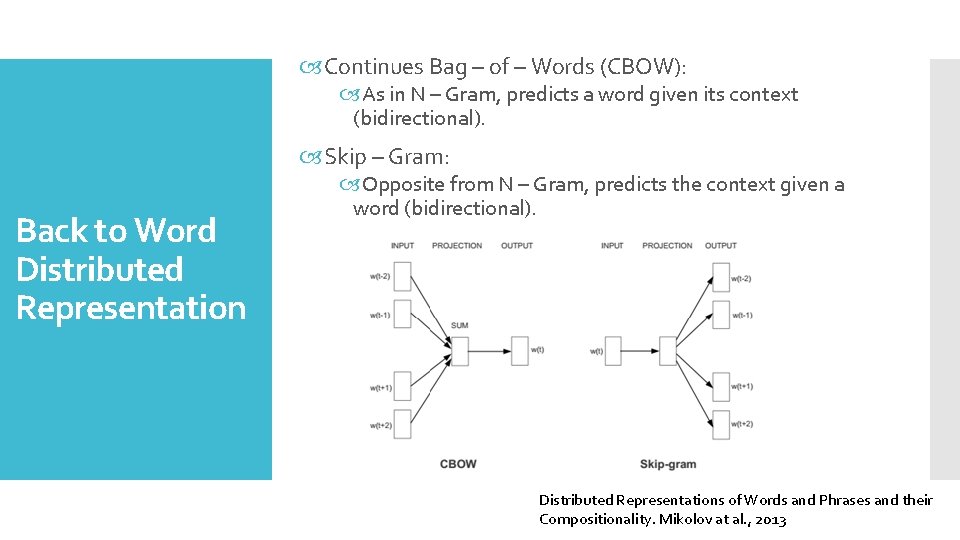

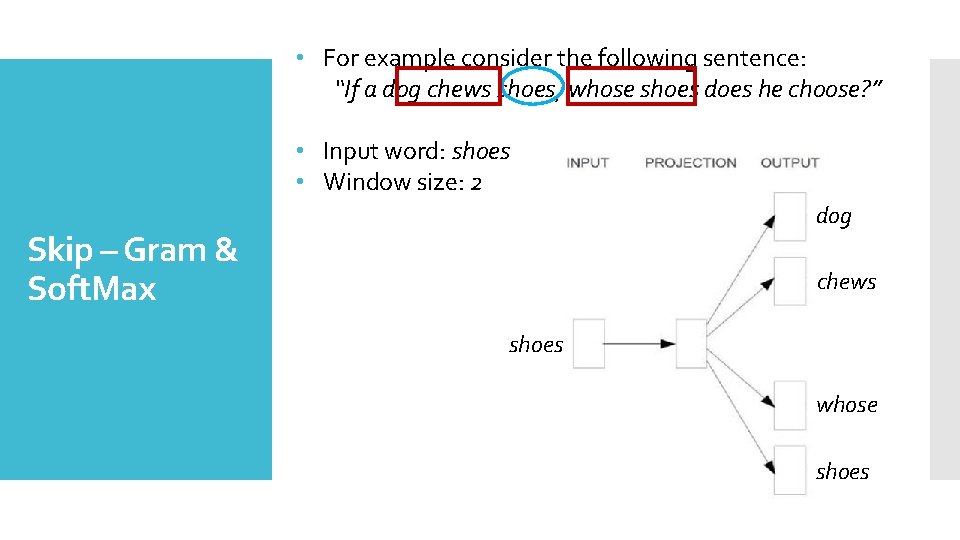

Back to Word Distributed Representation

• Continuous Bag-of-Words (CBOW): as in N-Gram, predicts a word given its context (bidirectional).
• Skip-Gram: the opposite of N-Gram, predicts the context given a word (bidirectional).

Distributed Representations of Words and Phrases and their Compositionality. Mikolov et al., 2013


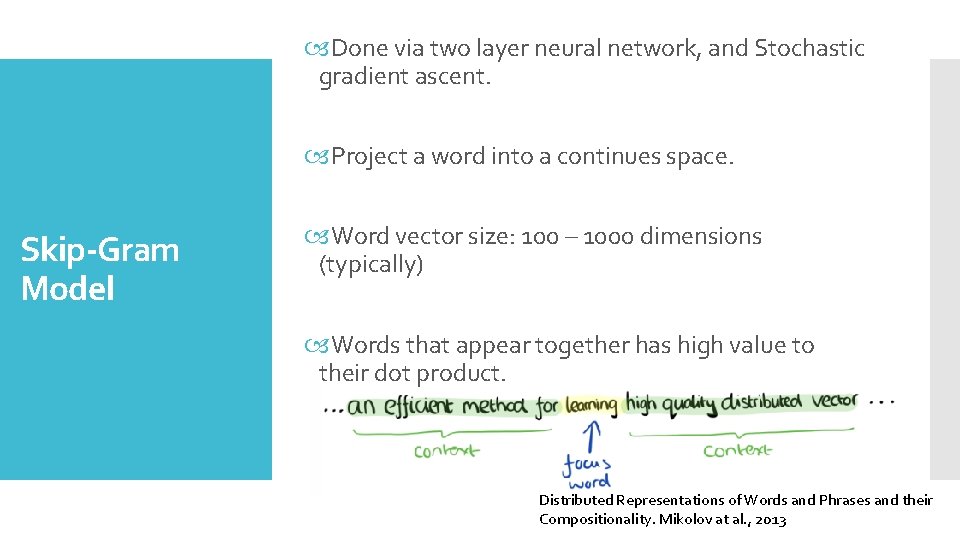

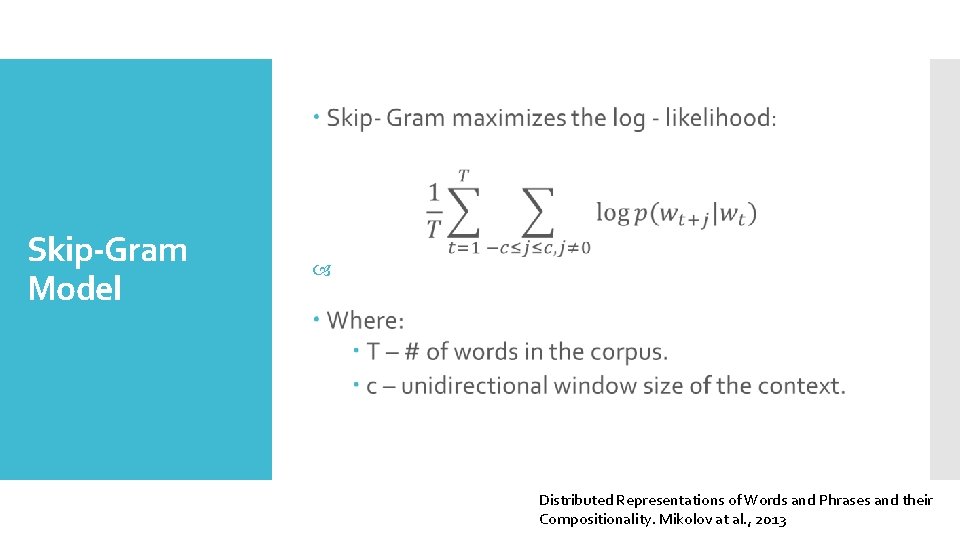

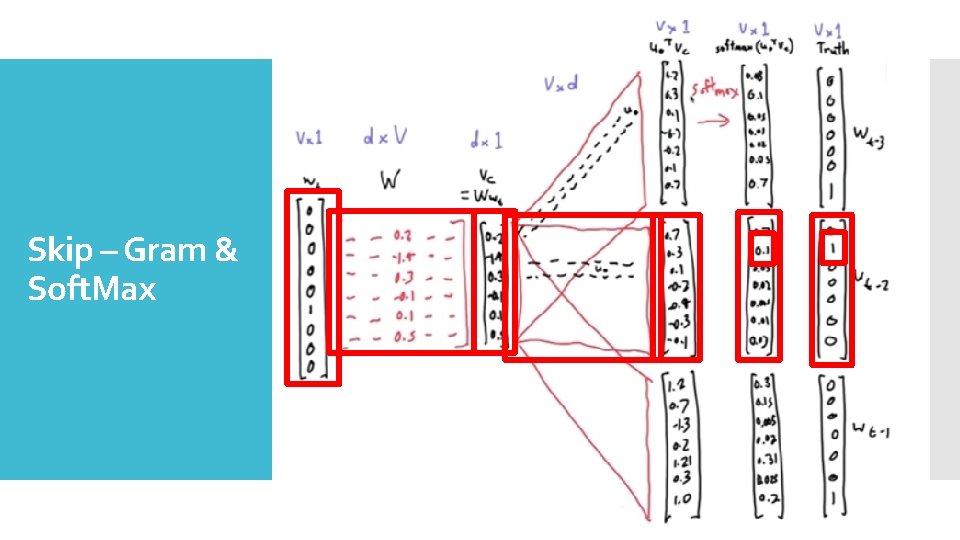

Skip-Gram Model

• Projects a word into a continuous space.
• Trained via a two-layer neural network and stochastic gradient ascent.
• Word vector size: typically 100 to 1000 dimensions.
• Words that appear together have a high dot product.

Distributed Representations of Words and Phrases and their Compositionality. Mikolov et al., 2013

Skip-Gram Model

Distributed Representations of Words and Phrases and their Compositionality. Mikolov et al., 2013


![SoftMax: table of word input and output vectors](http://slidetodoc.com/presentation_image_h/a449a43a4d432db954076e52fb0d6092/image-18.jpg)

SoftMax

| Word | Input | Output |
|---|---|---|
| King | [0.2, 0.9, 0.1] | [0.5, 0.4, 0.5] |
| Queen | [0.2, 0.8, 0.2] | [0.4, 0.5] |
| Apple | [0.9, 0.5, 0.8] | [0.3, 0.9, 0.1] |
| Orange | [0.9, 0.4, 0.9] | [0.1, 0.7, 0.2] |

Distributed Representations of Words and Phrases and their Compositionality. Mikolov et al., 2013
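A minimal sketch of the SoftMax step, assuming the usual Skip-Gram form: the score of a context word is the dot product of its output vector with the input vector of the center word, and scores are normalized with an exponential. Only the rows of the table with complete vectors are used (Queen's output vector is truncated in the source), and the function name `softmax_probs` is an illustrative choice.

```python
import math

def softmax_probs(input_vec, output_vecs):
    """P(context | center) for every candidate context word."""
    scores = {
        word: sum(a * b for a, b in zip(input_vec, vec))
        for word, vec in output_vecs.items()
    }
    # Subtract the maximum score for numerical stability; the result is unchanged.
    max_score = max(scores.values())
    exps = {word: math.exp(s - max_score) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}

king_input = [0.2, 0.9, 0.1]
output_vecs = {
    "King": [0.5, 0.4, 0.5],
    "Apple": [0.3, 0.9, 0.1],
    "Orange": [0.1, 0.7, 0.2],
}

probs = softmax_probs(king_input, output_vecs)
for word, p in probs.items():
    print(word, round(p, 3))
```

Note that the denominator sums over every word in the vocabulary, which is what makes the full SoftMax expensive for large vocabularies.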

Skip-Gram & SoftMax

• For example, consider the following sentence: “If a dog chews shoes, whose shoes does he choose?”
• Input word: shoes
• Window size: 2
• Window: dog chews [shoes] whose shoes
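The window in this example can be reproduced with a short sketch. The tokenization (lowercasing and dropping punctuation) and the helper name `context_words` are assumptions made for illustration.

```python
import re

def context_words(tokens, center_index, window):
    """Words within `window` positions on either side of the center word."""
    start = max(0, center_index - window)
    end = min(len(tokens), center_index + window + 1)
    return [tokens[i] for i in range(start, end) if i != center_index]

sentence = "If a dog chews shoes, whose shoes does he choose?"
tokens = re.findall(r"[a-z]+", sentence.lower())

center = tokens.index("shoes")          # first occurrence of the input word
contexts = context_words(tokens, center, window=2)

# Skip-Gram training pairs: (input word, context word to predict).
pairs = [(tokens[center], c) for c in contexts]
print(pairs)
```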

![SoftMax: table of word input and output vectors](http://slidetodoc.com/presentation_image_h/a449a43a4d432db954076e52fb0d6092/image-21.jpg)



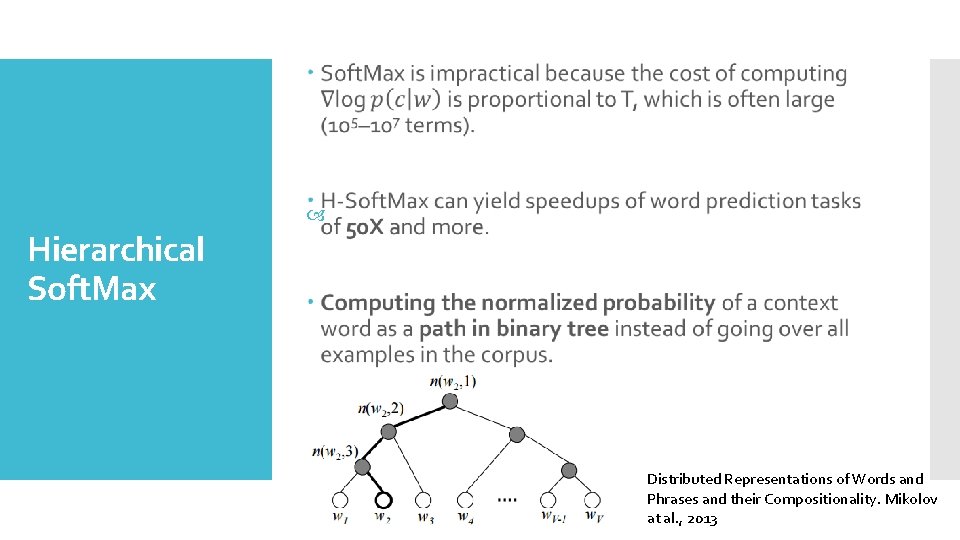

Hierarchical SoftMax

Distributed Representations of Words and Phrases and their Compositionality. Mikolov et al., 2013

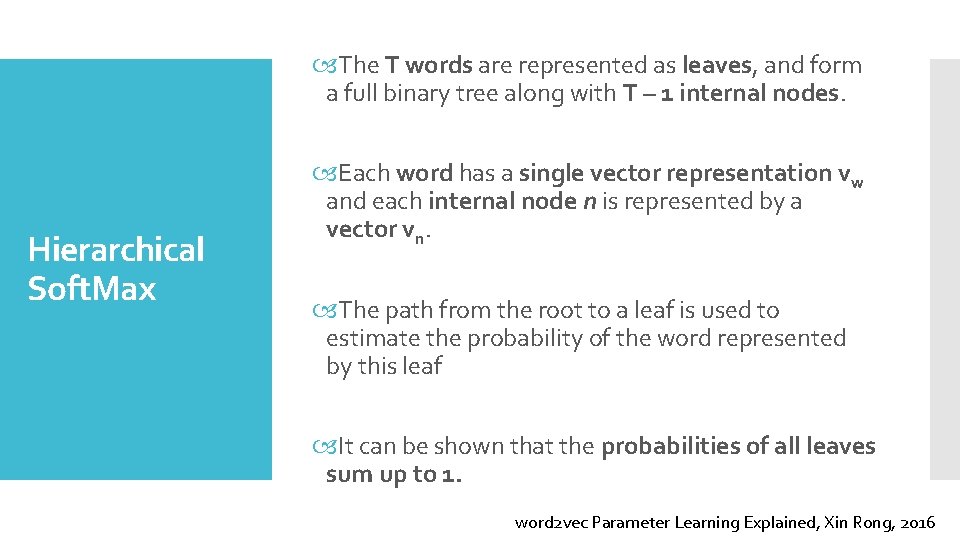

Hierarchical SoftMax

• The T words are represented as leaves, and form a full binary tree along with T - 1 internal nodes.
• Each word has a single vector representation $v_w$, and each internal node n is represented by a vector $v_n$.
• The path from the root to a leaf is used to estimate the probability of the word represented by this leaf.
• It can be shown that the probabilities of all leaves sum up to 1.

word2vec Parameter Learning Explained. Xin Rong, 2016
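The path probability can be sketched as a product of sigmoids, one per internal node on the way to the leaf: going one way contributes σ(v_n · v_w) and going the other way contributes σ(-v_n · v_w). The tree shape, the node names, and all vector values below are hypothetical toy values, not taken from the slides.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

# Hypothetical tree over T = 4 words with T - 1 = 3 internal nodes.
internal_vectors = {"root": [0.4, -0.2], "n1": [0.1, 0.7], "n2": [-0.5, 0.3]}

# Path to each leaf: (internal node, direction), +1 = left child, -1 = right child.
paths = {
    "king":   [("root", +1), ("n1", +1)],
    "queen":  [("root", +1), ("n1", -1)],
    "apple":  [("root", -1), ("n2", +1)],
    "orange": [("root", -1), ("n2", -1)],
}

def leaf_probability(word, input_vec):
    """Product of the branch probabilities along the root-to-leaf path."""
    p = 1.0
    for node, direction in paths[word]:
        p *= sigmoid(direction * dot(internal_vectors[node], input_vec))
    return p

input_vec = [0.2, 0.9]  # hypothetical vector of the input word
probs = {word: leaf_probability(word, input_vec) for word in paths}
total = sum(probs.values())
print(probs)
print(total)
```

Because σ(x) + σ(-x) = 1 at every internal node, the leaf probabilities sum to 1 without any normalization over the vocabulary, and each word needs only as many sigmoid evaluations as its path is long.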

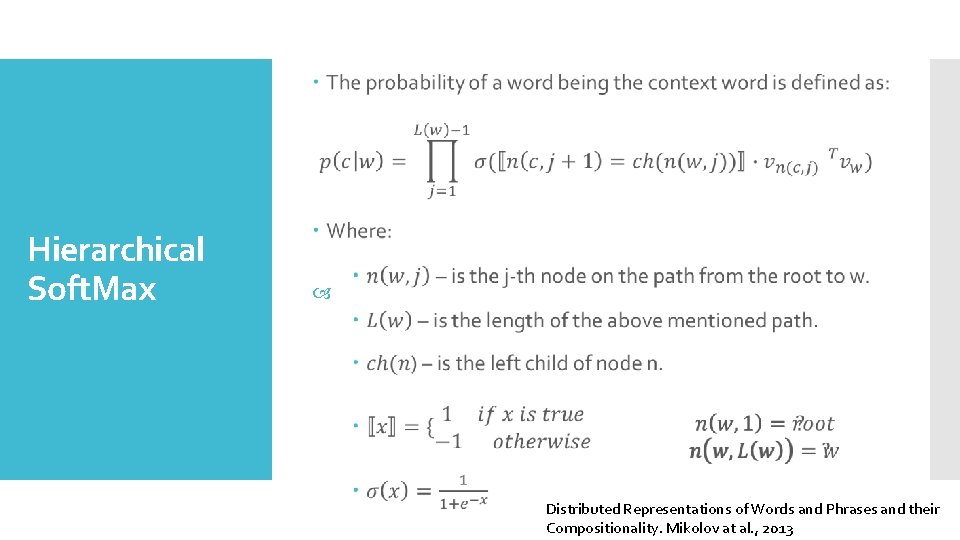


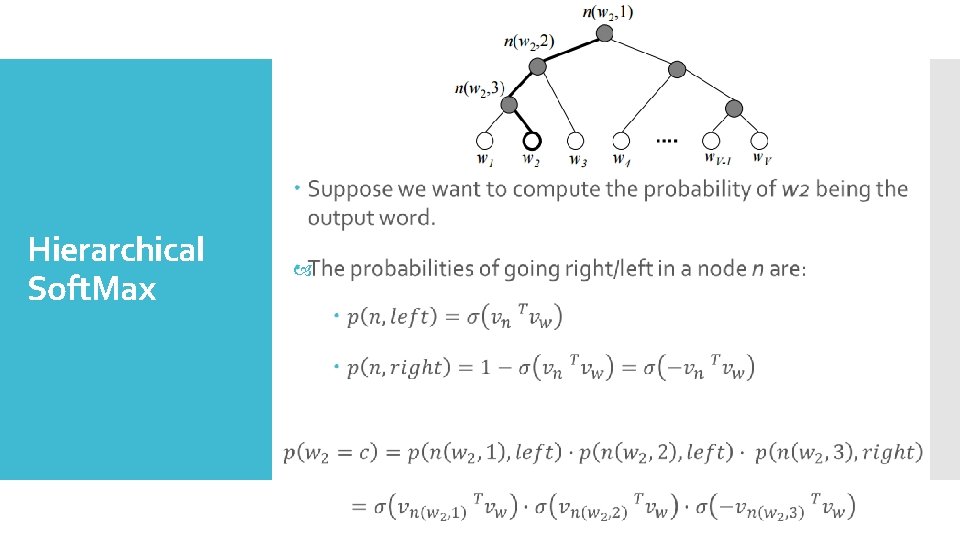

Hierarchical SoftMax

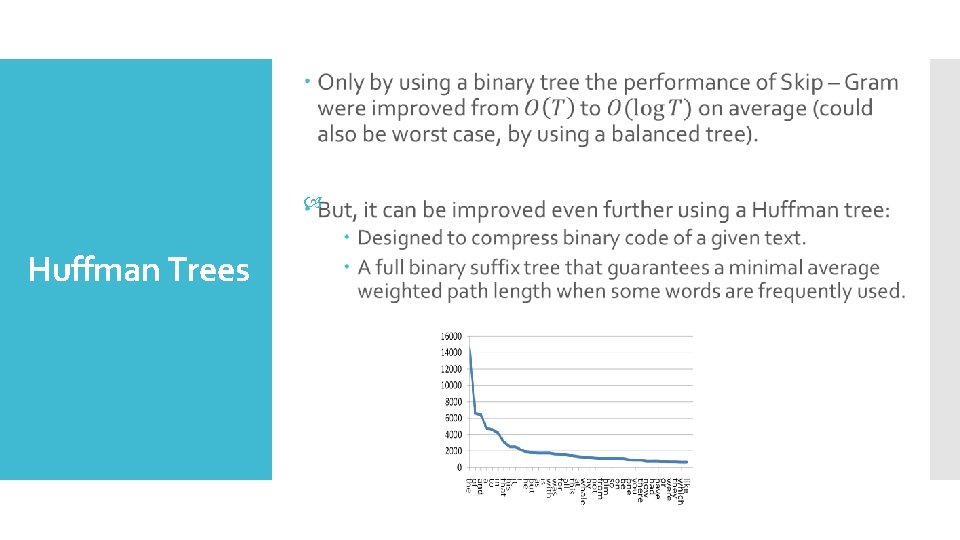

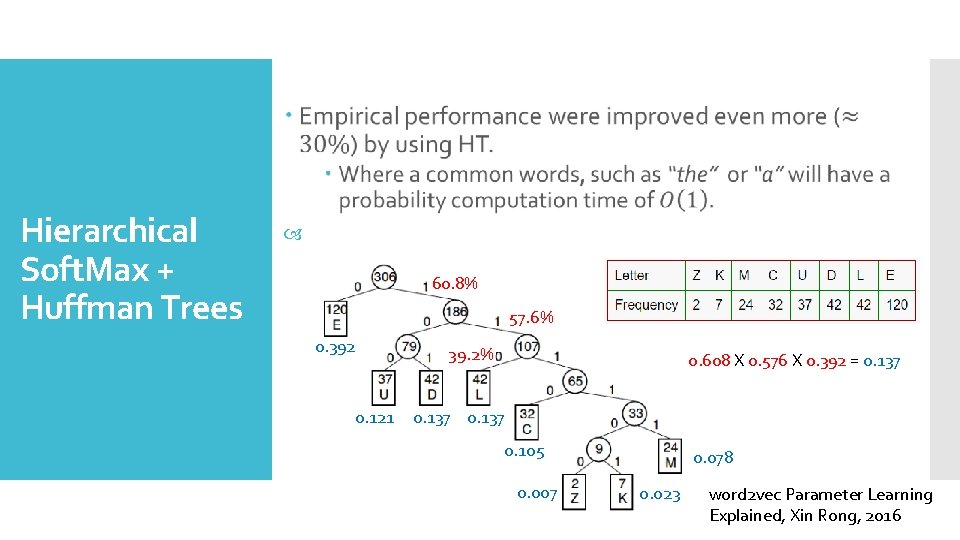

Huffman Trees

Hierarchical SoftMax + Huffman Trees

[Figure: Huffman tree annotated with branch and node probabilities. Worked path: 0.608 × 0.576 × 0.392 = 0.137.]

word2vec Parameter Learning Explained. Xin Rong, 2016
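A sketch of how a Huffman tree assigns short paths to frequent words, which keeps the average number of sigmoid evaluations low. The word counts are hypothetical, and the function returns only the binary code of each word (its root-to-leaf path) rather than an explicit tree structure.

```python
import heapq
import itertools

def huffman_codes(frequencies):
    """Map each word to its binary path in a Huffman tree built from counts."""
    tie_breaker = itertools.count()  # keeps heap entries comparable on equal counts
    heap = [(freq, next(tie_breaker), {word: ""}) for word, freq in frequencies.items()]
    heapq.heapify(heap)
    while len(heap) > 1:
        # Merge the two least frequent subtrees under a new internal node.
        freq_a, _, codes_a = heapq.heappop(heap)
        freq_b, _, codes_b = heapq.heappop(heap)
        merged = {word: "0" + code for word, code in codes_a.items()}
        merged.update({word: "1" + code for word, code in codes_b.items()})
        heapq.heappush(heap, (freq_a + freq_b, next(tie_breaker), merged))
    return heap[0][2]

# Hypothetical word counts.
counts = {"the": 50, "dog": 10, "barked": 3, "york": 2}
codes = huffman_codes(counts)
for word in counts:
    print(word, codes[word])
```

With these counts the most frequent word sits one step below the root, while the rarest words sit at the deepest level.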

Break Time!!!


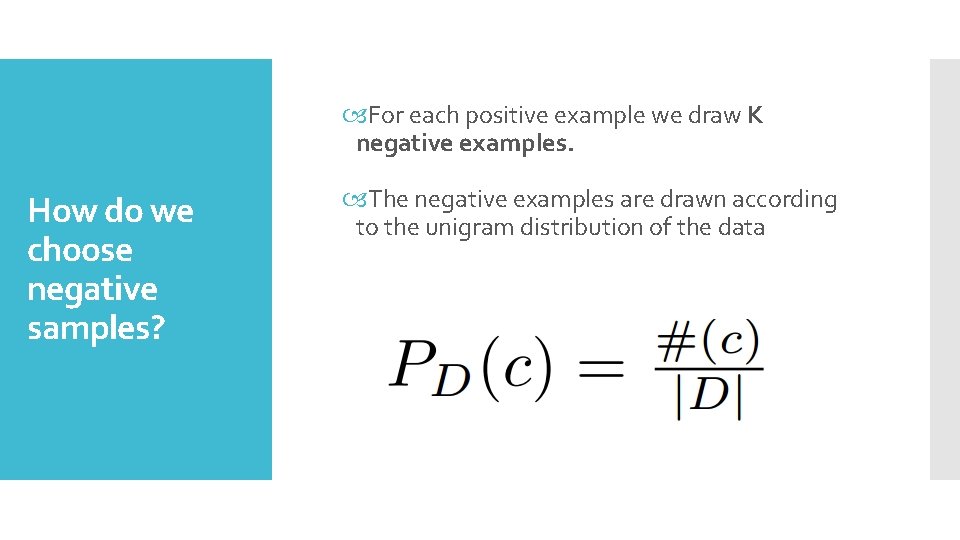

How do we choose negative samples?

• For each positive example we draw K negative examples.
• The negative examples are drawn according to the unigram distribution of the data.
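A minimal sketch of drawing K negatives from the unigram distribution. The `power` parameter is an addition for illustration: with `power=1.0` this is the plain unigram distribution described above, while Mikolov et al. report that raising the counts to the 3/4 power worked better in their experiments. Excluding the positive context word from the candidates is a simplifying assumption, and the toy corpus is hypothetical.

```python
import random
from collections import Counter

def draw_negatives(corpus_tokens, positive_context, k, power=1.0, rng=random):
    """Draw k negative context words, weighted by (count ** power)."""
    counts = Counter(corpus_tokens)
    # Assumption: the positive context word itself is never used as a negative.
    candidates = [w for w in counts if w != positive_context]
    weights = [counts[w] ** power for w in candidates]
    return rng.choices(candidates, weights=weights, k=k)

corpus = "the dog barked in new york city a pretty dog walked in the street".split()
rng = random.Random(0)  # fixed seed so the sketch is repeatable
negatives = draw_negatives(corpus, positive_context="dog", k=5, rng=rng)
print(negatives)
```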

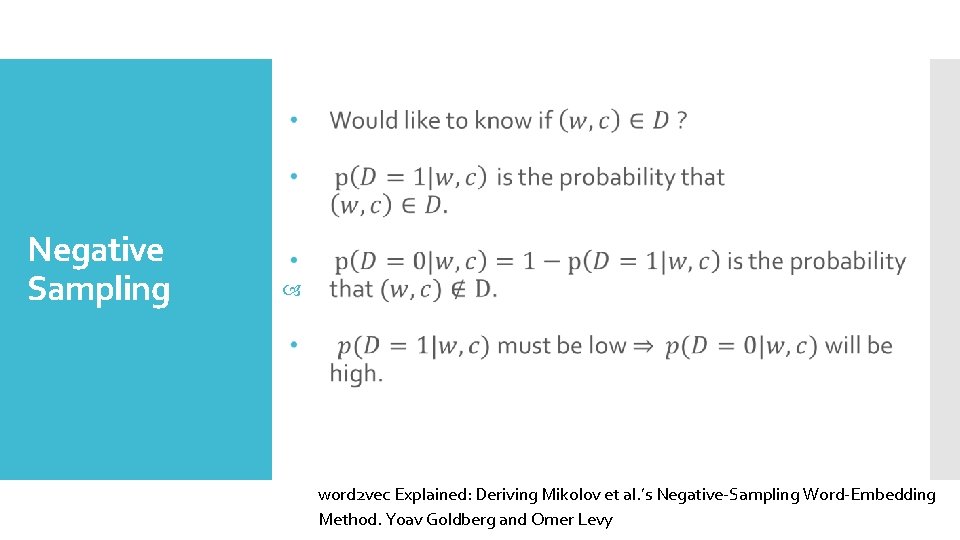

Negative Sampling

word2vec Explained: Deriving Mikolov et al.’s Negative-Sampling Word-Embedding Method. Yoav Goldberg and Omer Levy

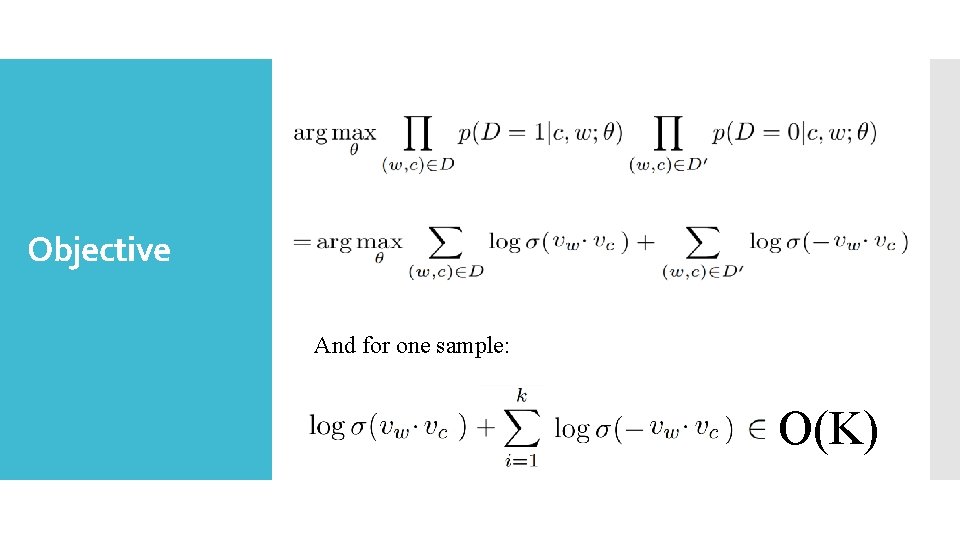

Objective

And for one sample, the cost is O(K).
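A sketch of the per-sample objective, assuming the standard negative-sampling form from Mikolov et al.: log σ(v_c · v_w) for the positive pair plus the sum of log σ(-v_n · v_w) over the K negatives. The vectors are hypothetical toy values and the function names are illustrative.

```python
import math

def log_sigmoid(x):
    """Numerically stable log(1 / (1 + exp(-x)))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def sgns_objective(word_vec, context_vec, negative_vecs):
    """Objective for one (word, context) pair with K sampled negatives."""
    positive_term = log_sigmoid(dot(context_vec, word_vec))
    negative_term = sum(log_sigmoid(-dot(neg, word_vec)) for neg in negative_vecs)
    return positive_term + negative_term

# Hypothetical vectors: one positive context and K = 2 negatives.
word_vec = [0.2, 0.9, 0.1]
context_vec = [0.5, 0.4, 0.5]
negative_vecs = [[-0.3, -0.6, 0.1], [0.1, -0.7, 0.2]]

value = sgns_objective(word_vec, context_vec, negative_vecs)
print(value)
```

The loop touches only K + 1 vectors, which is where the O(K) cost per sample comes from, compared with a sum over the whole vocabulary in the full SoftMax.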


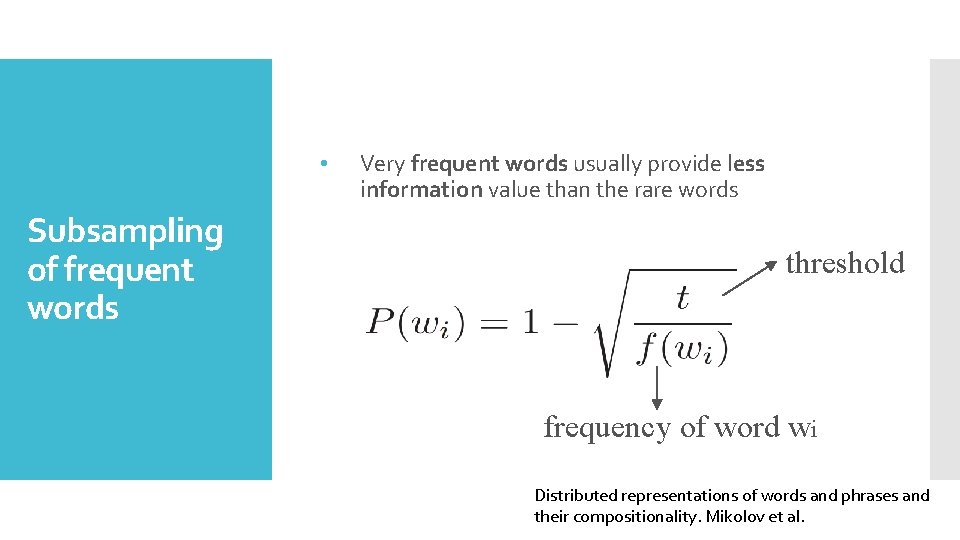

Subsampling of frequent words

• Very frequent words usually provide less information value than rare words.
• In the formula, t is the threshold and $f(w_i)$ is the frequency of word $w_i$.

Distributed Representations of Words and Phrases and their Compositionality. Mikolov et al.
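A sketch of the subsampling rule as given in Mikolov et al.: each occurrence of word $w_i$ is discarded with probability $1 - \sqrt{t / f(w_i)}$. The default threshold of 1e-5 is the typical value mentioned in that paper; the function name and the example frequencies are hypothetical.

```python
import math

def discard_probability(word_frequency, threshold=1e-5):
    """Probability of dropping one occurrence of a word.

    word_frequency is the word's relative frequency in the corpus (0 to 1].
    Words rarer than the threshold are never discarded.
    """
    return max(0.0, 1.0 - math.sqrt(threshold / word_frequency))

# Hypothetical relative frequencies.
p_frequent = discard_probability(0.05)   # a very frequent word such as "the"
p_rare = discard_probability(1e-6)       # a rare word

print(p_frequent, p_rare)
```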


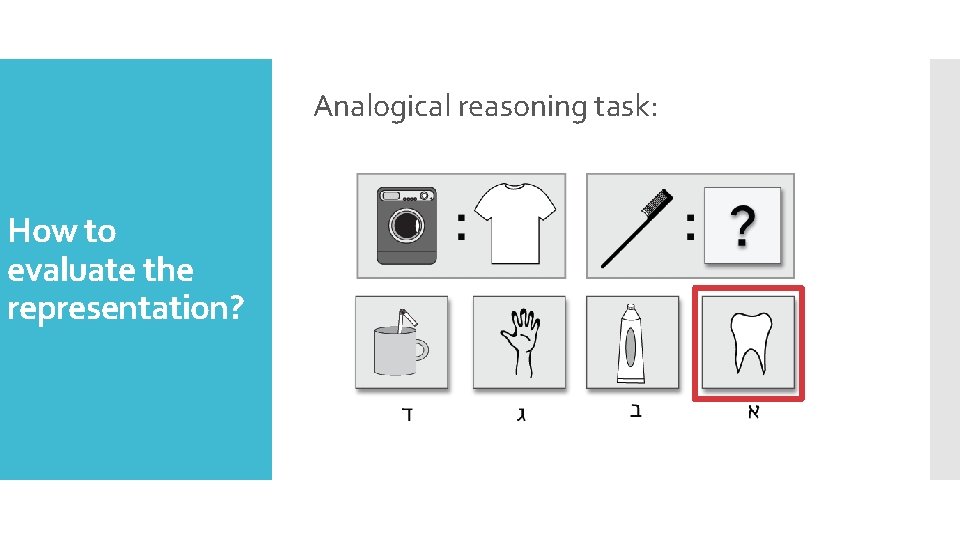

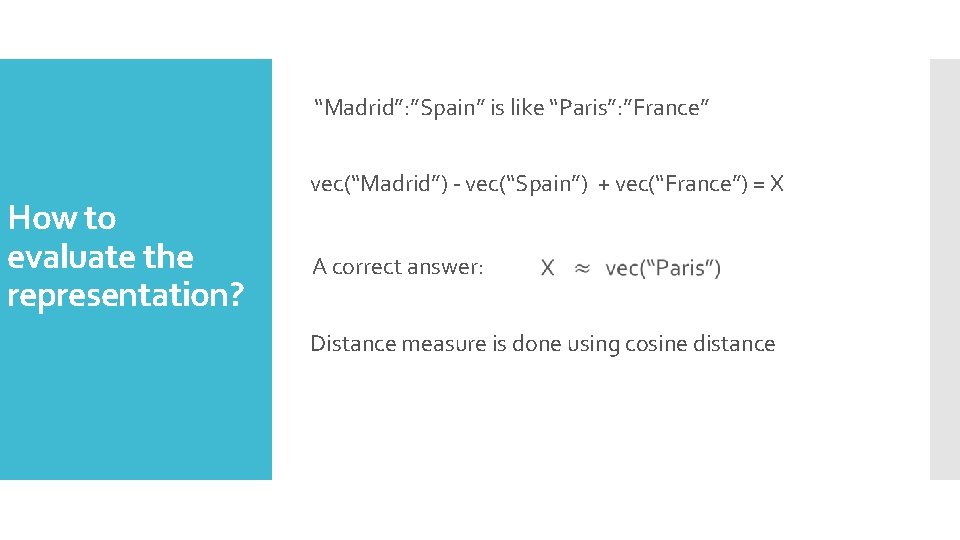

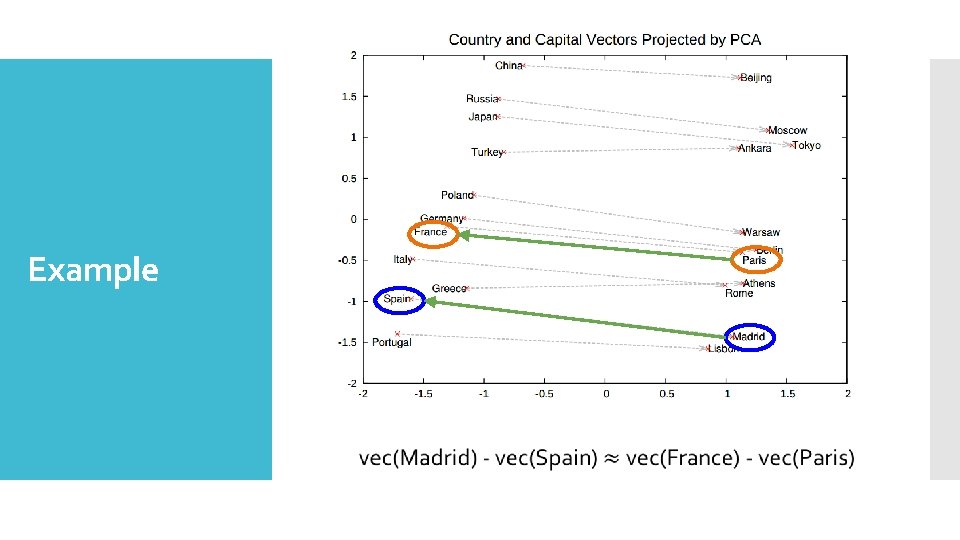

How to evaluate the representation?

Analogical reasoning task:

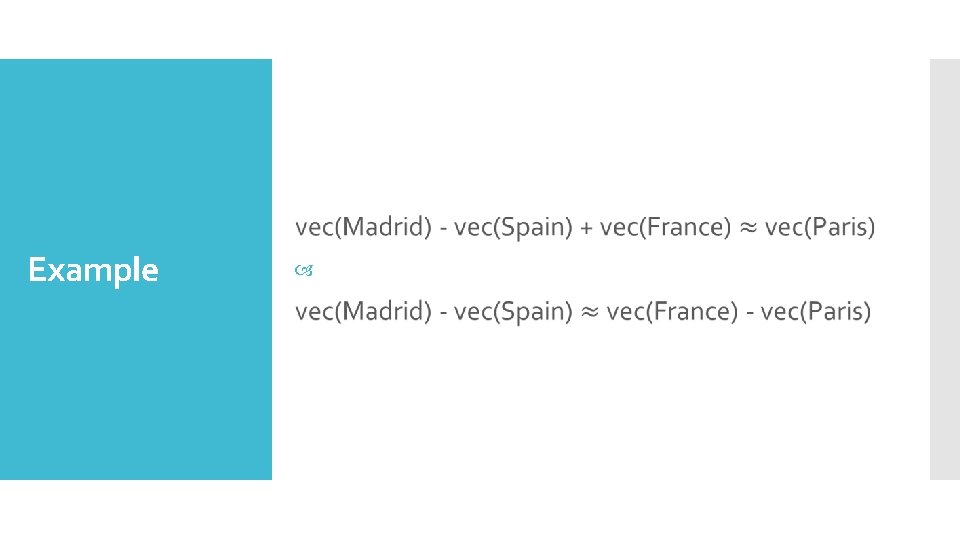

How to evaluate the representation?

“Madrid” : “Spain” is like “Paris” : “France”

vec(“Madrid”) - vec(“Spain”) + vec(“France”) = X

A correct answer: the word vector closest to X is vec(“Paris”). Distance is measured using cosine distance.
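The analogy test can be sketched with toy vectors. The three-dimensional vectors below are hand-made for illustration (roughly "is a capital", "Spain-ness", "France-ness") and are not trained embeddings; excluding the three query words from the candidates is a common convention assumed here.

```python
import math

def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def solve_analogy(a, b, c, vectors):
    """Word closest (by cosine) to vec(a) - vec(b) + vec(c), excluding a, b, c."""
    target = [x - y + z for x, y, z in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine_similarity(target, vectors[w]))

# Hypothetical toy vectors.
vectors = {
    "Madrid": [1.0, 1.0, 0.0],
    "Spain":  [0.0, 1.0, 0.0],
    "Paris":  [1.0, 0.0, 1.0],
    "France": [0.0, 0.0, 1.0],
    "Apple":  [0.2, 0.1, 0.1],
}

answer = solve_analogy("Madrid", "Spain", "France", vectors)
print(answer)
```

Maximizing cosine similarity is the same as minimizing cosine distance, since the distance is one minus the similarity.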

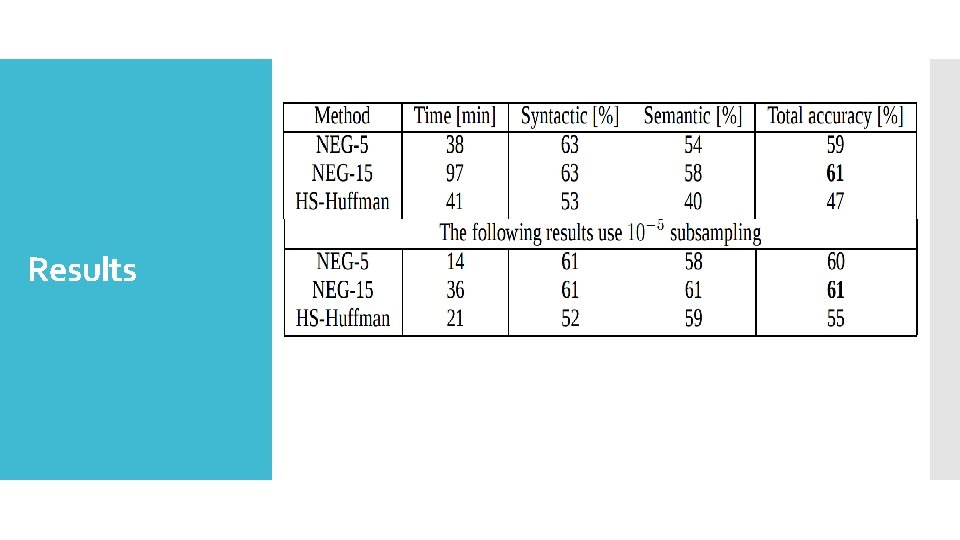

Results


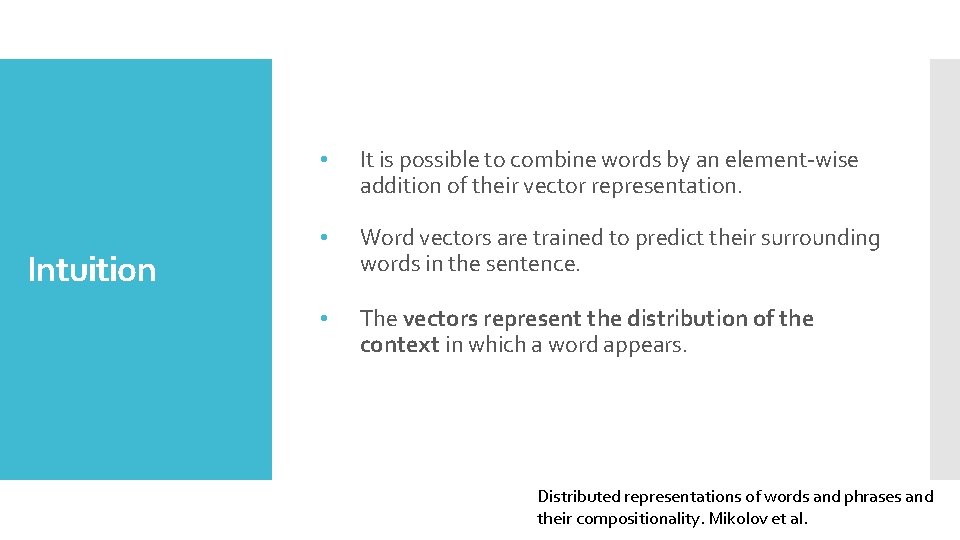

Intuition

• It is possible to combine words by an element-wise addition of their vector representations.
• Word vectors are trained to predict their surrounding words in the sentence.
• The vectors represent the distribution of the context in which a word appears.

Distributed Representations of Words and Phrases and their Compositionality. Mikolov et al.

Example


Results

Distributed Representations of Words and Phrases and their Compositionality. Mikolov et al.


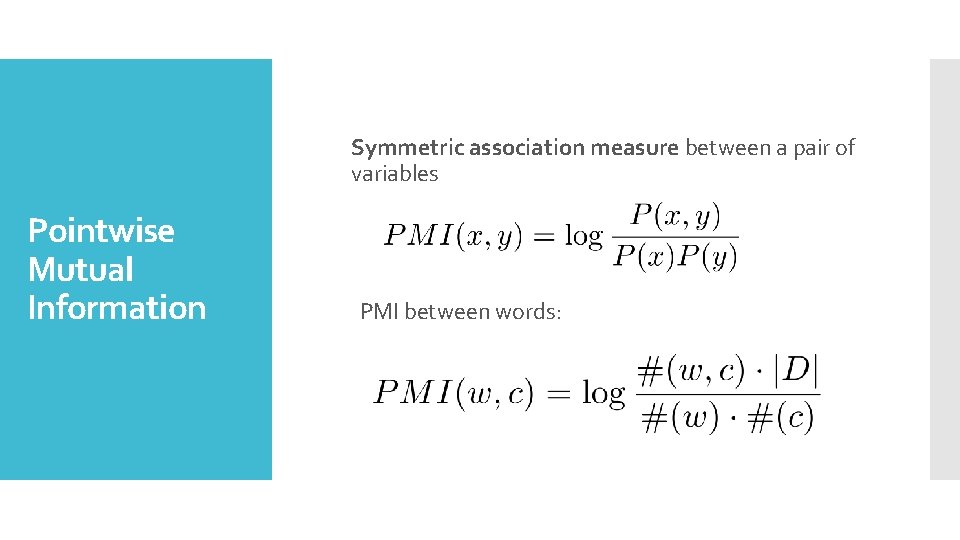

Pointwise Mutual Information

A symmetric association measure between a pair of variables.

PMI between words:

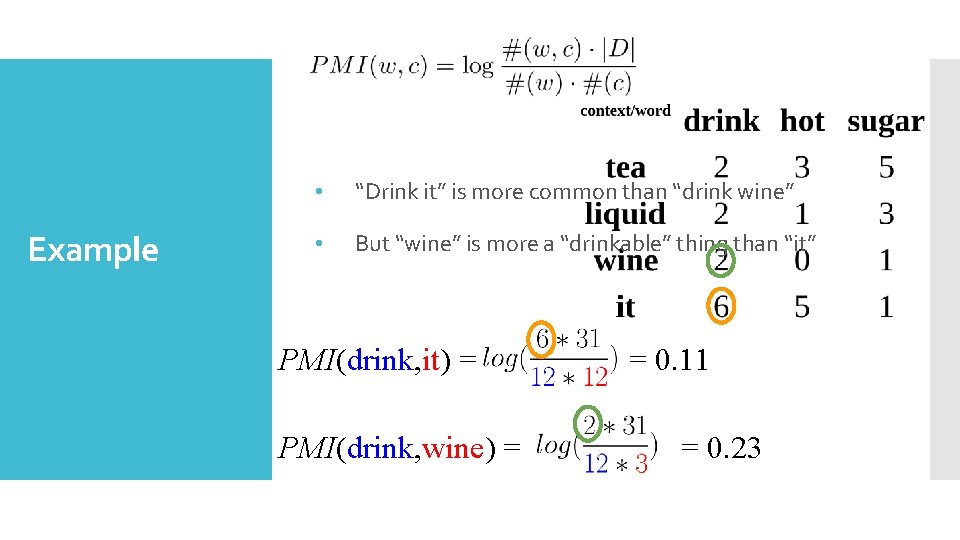

Example

• “Drink it” is more common than “drink wine”.
• But “wine” is more of a “drinkable” thing than “it”.

PMI(drink, it) = 0.11
PMI(drink, wine) = 0.23
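A sketch of the PMI computation, assuming the standard definition PMI(w, c) = log( P(w, c) / (P(w) P(c)) ) with probabilities estimated from co-occurrence counts. All counts below are hypothetical and are not meant to reproduce the values on the slide; the natural logarithm is also an assumption.

```python
import math

def pmi(pair_count, word_count, context_count, total_pairs):
    """Pointwise mutual information estimated from raw counts."""
    p_joint = pair_count / total_pairs
    p_word = word_count / total_pairs
    p_context = context_count / total_pairs
    return math.log(p_joint / (p_word * p_context))

# Hypothetical counts: "drink it" occurs more often than "drink wine",
# but "it" is so common overall that the association is weak.
total = 1_000_000
pmi_it = pmi(pair_count=300, word_count=1_000, context_count=200_000, total_pairs=total)
pmi_wine = pmi(pair_count=50, word_count=1_000, context_count=2_000, total_pairs=total)

print(pmi_it, pmi_wine)
```

The raw pair count favors "it", yet dividing by how often each context occurs anyway gives "wine" the higher score, which matches the intuition of the example.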

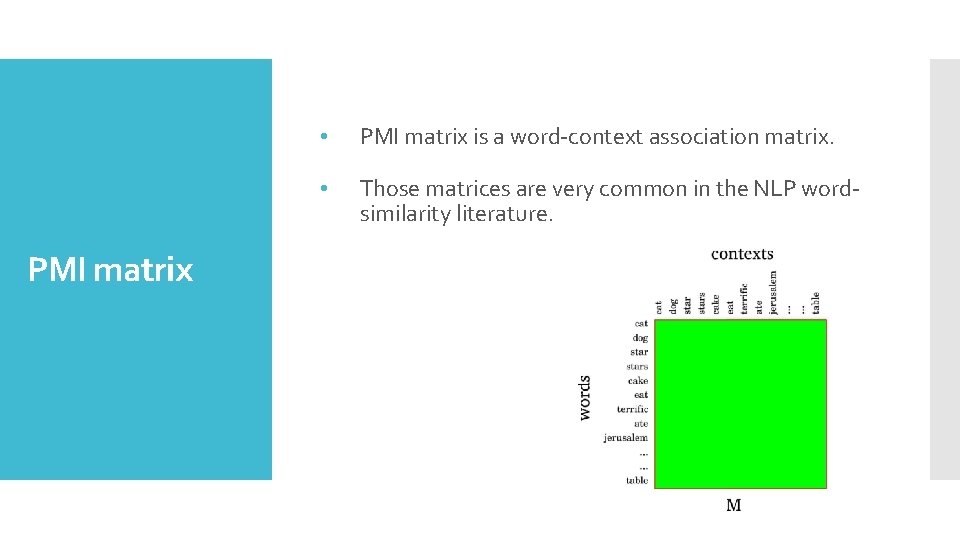

PMI matrix

• The PMI matrix is a word-context association matrix.
• Such matrices are very common in the NLP word-similarity literature.

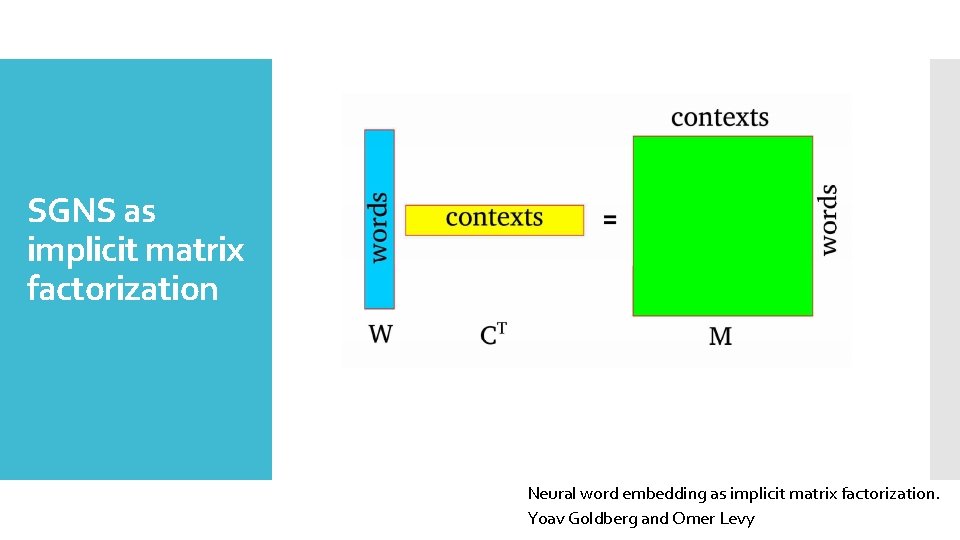

SGNS as implicit matrix factorization

Neural Word Embedding as Implicit Matrix Factorization. Omer Levy and Yoav Goldberg

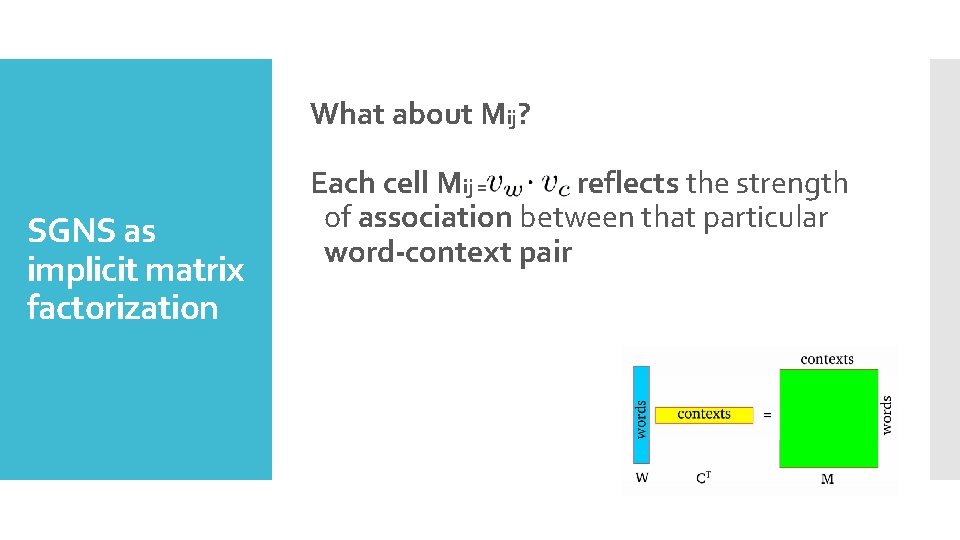

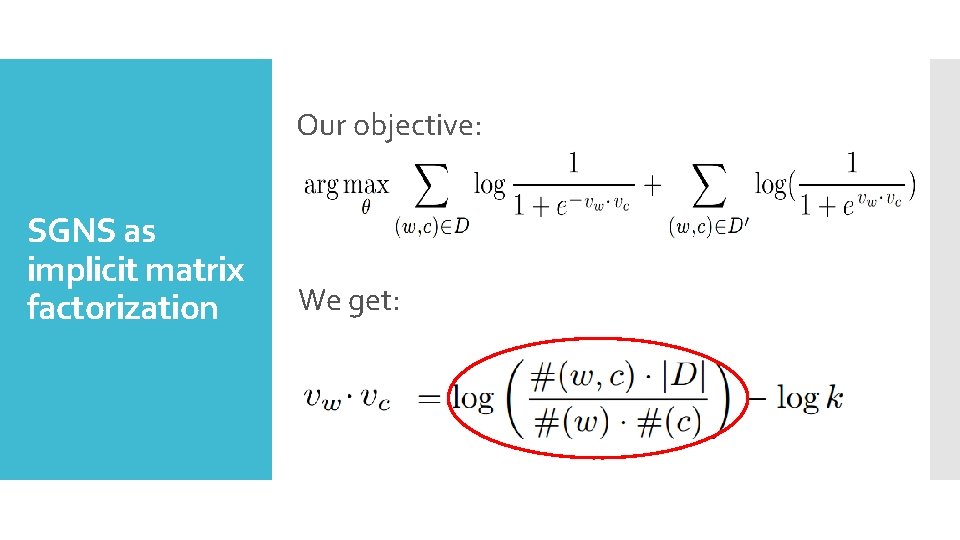

SGNS as implicit matrix factorization

What about $M_{ij}$? Each cell $M_{ij} = \vec{w}_i \cdot \vec{c}_j$ reflects the strength of association between that particular word-context pair.

SGNS as implicit matrix factorization

Our objective:

We get:


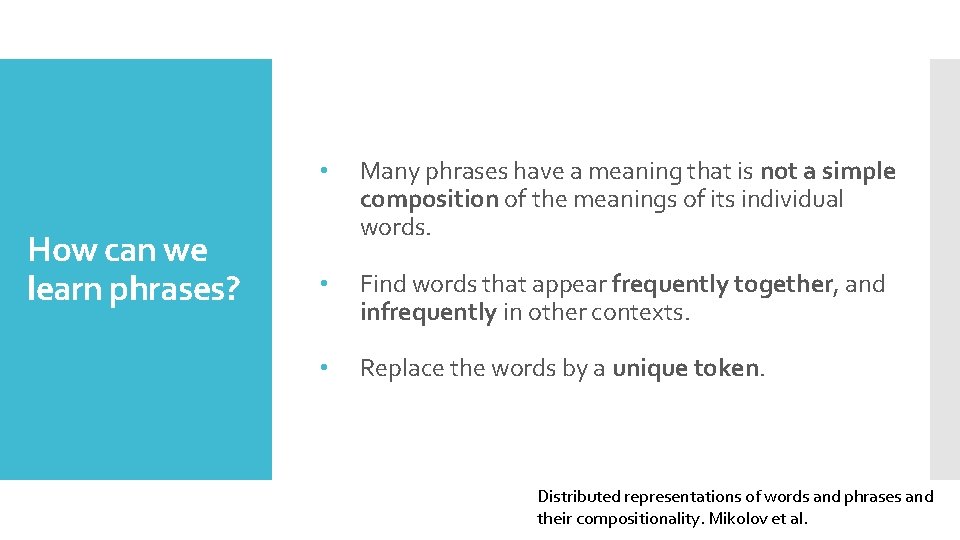

How can we learn phrases?

• Many phrases have a meaning that is not a simple composition of the meanings of their individual words.
• Find words that appear frequently together, and infrequently in other contexts.
• Replace the words by a unique token.

Distributed Representations of Words and Phrases and their Compositionality. Mikolov et al.

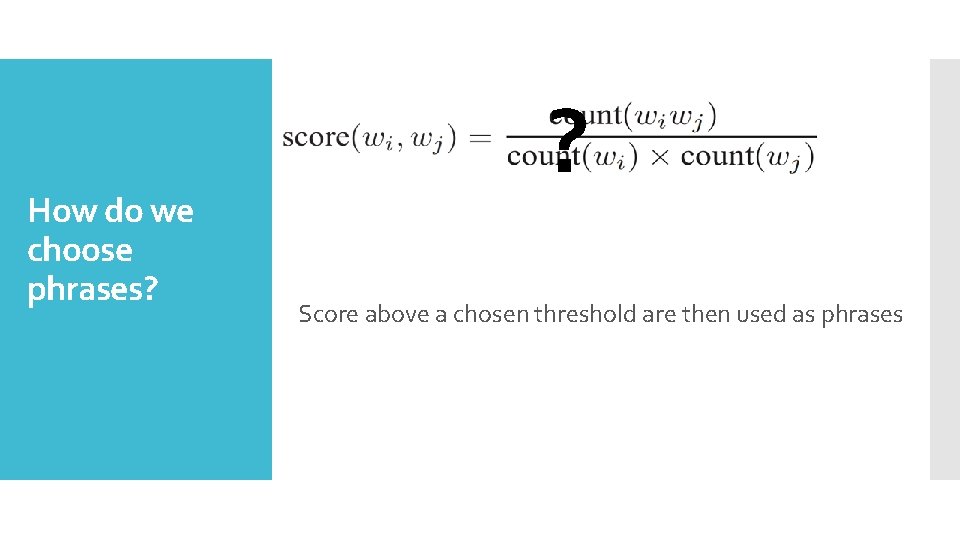

How do we choose phrases?

Bigrams with a score above a chosen threshold are then used as phrases.

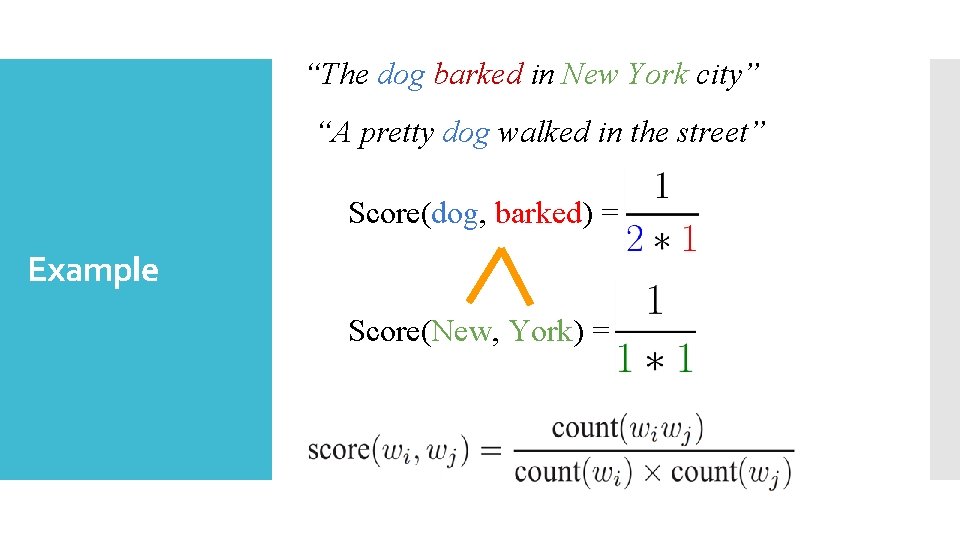

Example

“The dog barked in New York city”
“A pretty dog walked in the street”

Score(dog, barked) =
Score(New, York) =
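A sketch of the phrase score on these two sentences, assuming the formula from Mikolov et al.: score(a, b) = (count(a b) - δ) / (count(a) × count(b)). The discounting coefficient δ is set to 0 here as a simplifying assumption, so the values below need not match the ones on the original slide; whitespace tokenization is also an assumption.

```python
from collections import Counter

def phrase_scores(sentences, delta=0.0):
    """Score every adjacent word pair: (count(a b) - delta) / (count(a) * count(b))."""
    unigrams = Counter()
    bigrams = Counter()
    for sentence in sentences:
        tokens = sentence.split()
        unigrams.update(tokens)
        bigrams.update(zip(tokens, tokens[1:]))
    return {
        (a, b): (count - delta) / (unigrams[a] * unigrams[b])
        for (a, b), count in bigrams.items()
    }

sentences = [
    "The dog barked in New York city",
    "A pretty dog walked in the street",
]
scores = phrase_scores(sentences)

print(scores[("dog", "barked")])   # 0.5: "dog" also appears without "barked"
print(scores[("New", "York")])     # 1.0: "New" and "York" only appear together
```

"New York" scores higher because neither word occurs in any other context, which is the property the previous slide asks for.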

Evaluation

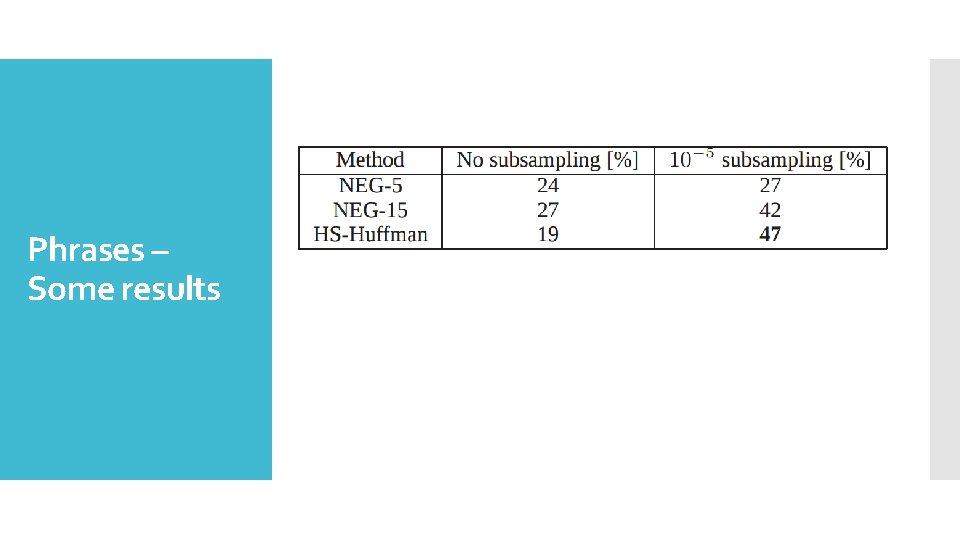

Phrases – Some results

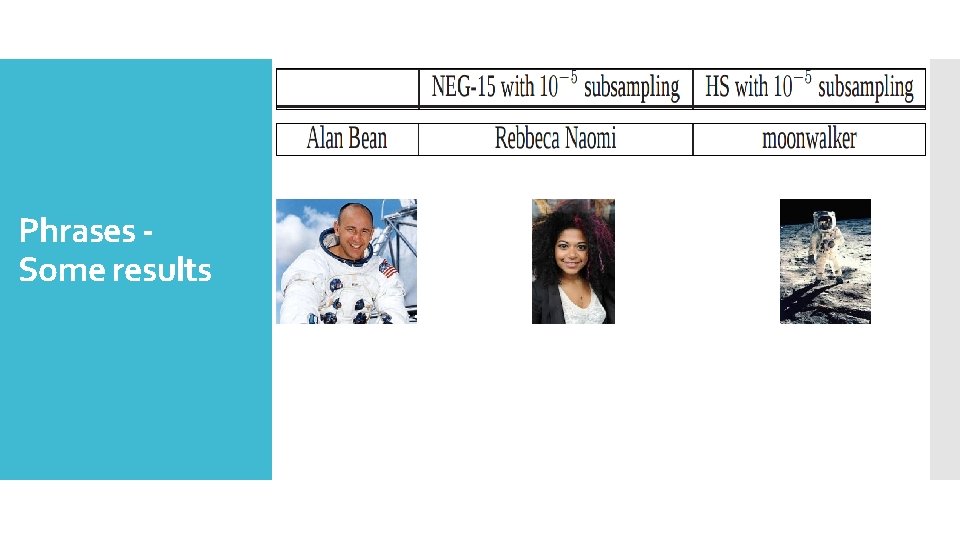

Phrases – Some results
