Unknown Word Acquisition by Reasoning Word Meanings Incrementally and Evolutionarily

Hiroyuki Kameda, Tokyo University of Technology
Chiaki Kubomura, Yamano College of Aesthetics

Overview
1. Research background
2. Basic ideas of knowledge acquisition
3. Demonstrations
4. Conclusions

Exchange of Information & Knowledge
• Language etc.
• Natural & smooth communication

A robot talking with a human

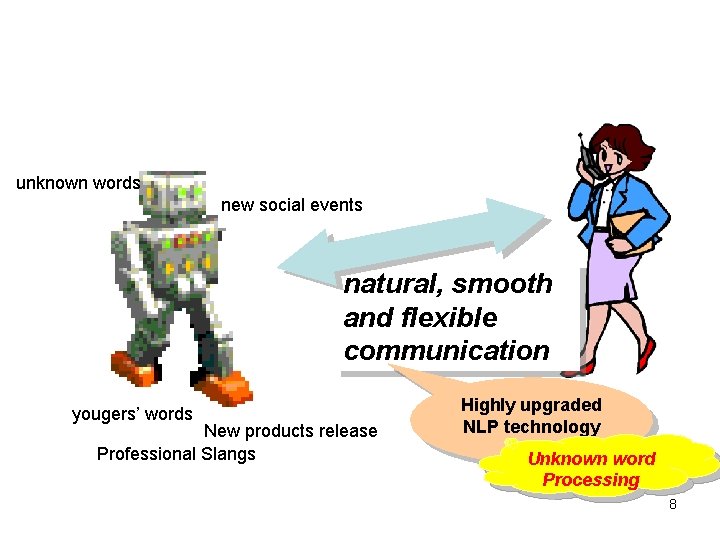

Unknown words
Natural, smooth, and flexible dialogue (communication on various topics) requires highly upgraded NLP technology, in particular unknown word processing. Sources of unknown words:
• New social events
• Young people's words
• New product releases
• Professional slang

Unknown Word Acquisition: Our Basic Ideas

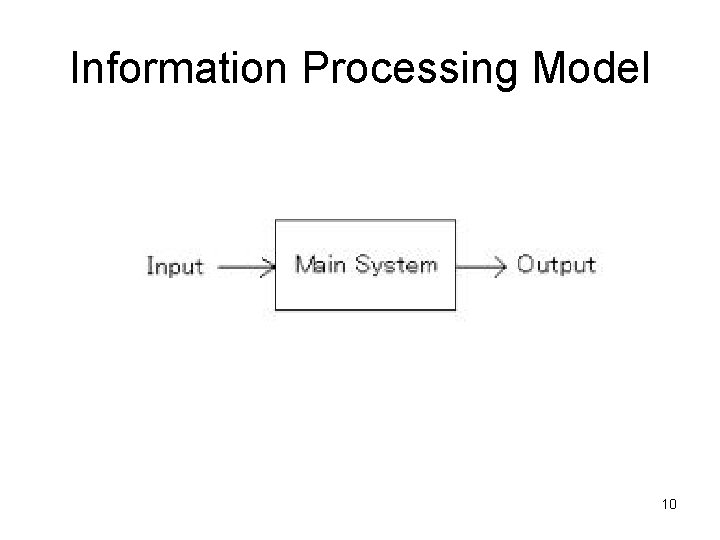

Information Processing Model

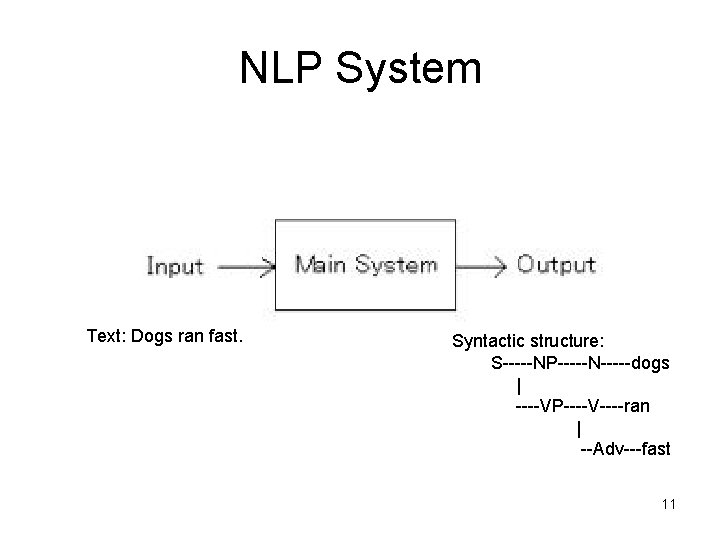

NLP System
Text: Dogs ran fast.
Syntactic structure:
S --- NP --- N --- dogs
| --- VP --- V --- ran
| --- Adv --- fast

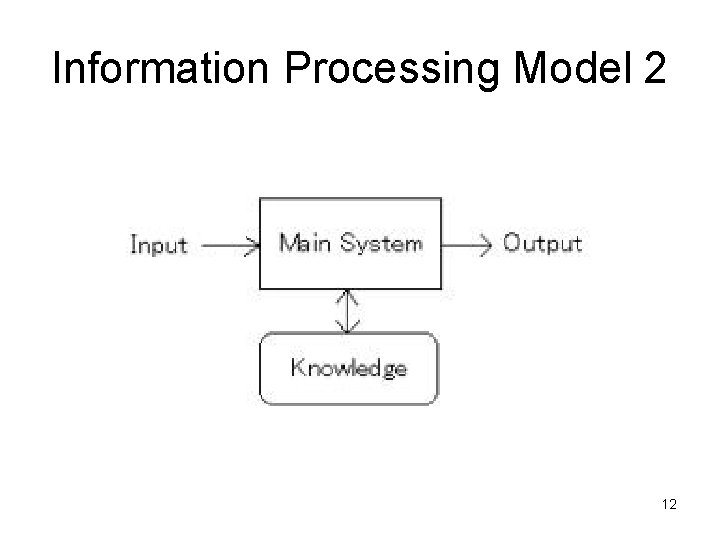

Information Processing Model 2

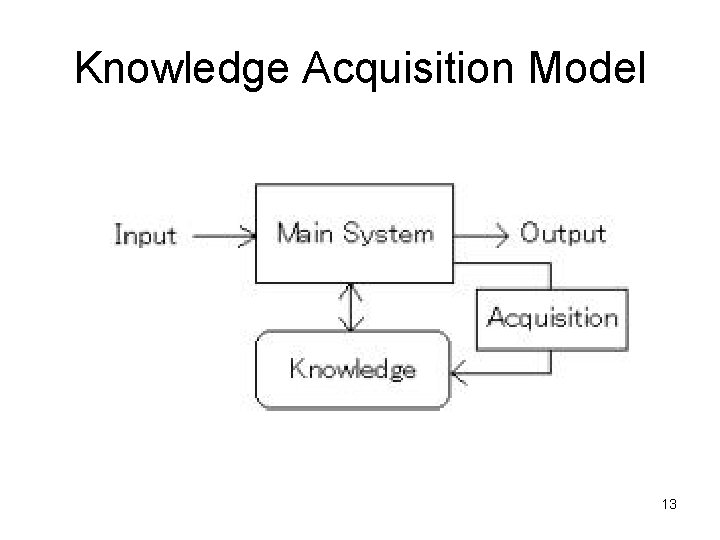

Knowledge Acquisition Model

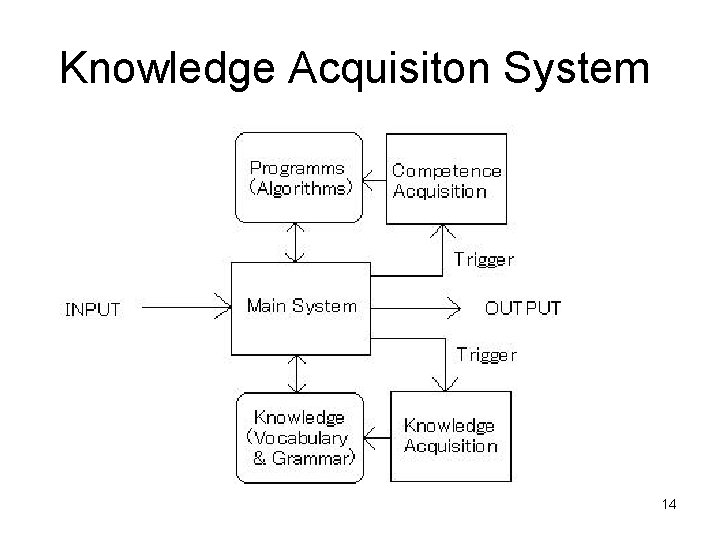

Knowledge Acquisition System

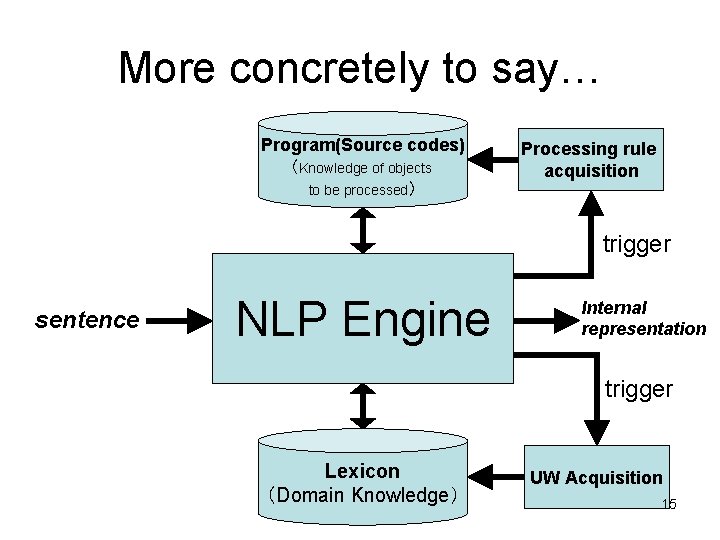

More concretely…
• Program (source code): knowledge of objects to be processed
• Processing rule acquisition (via trigger)
• Input: sentence → NLP Engine → output: internal representation
• Lexicon (domain knowledge)
• UW (unknown word) acquisition (via trigger)

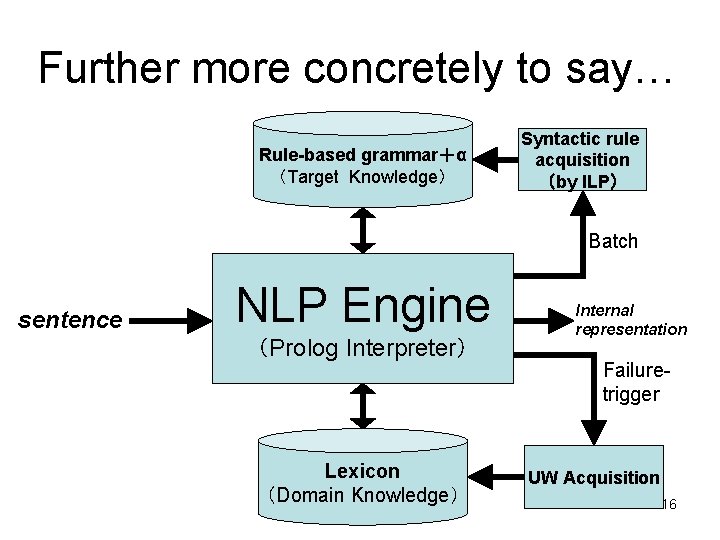

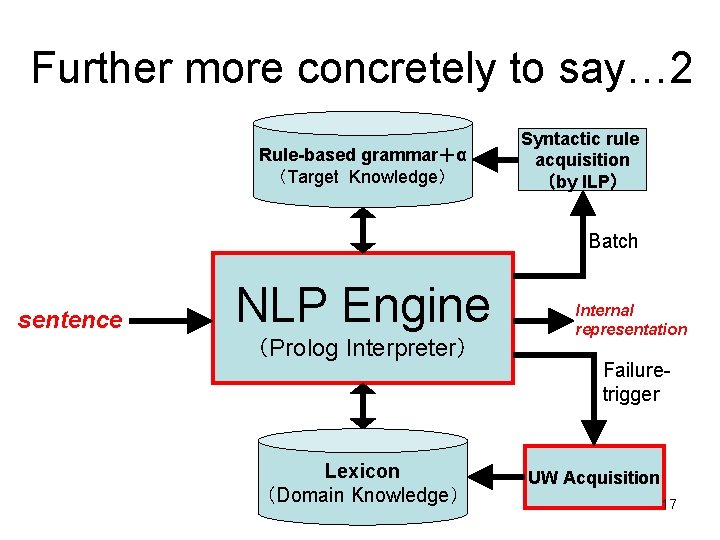

Even more concretely…
• Rule-based grammar + α (target knowledge)
• Syntactic rule acquisition (by ILP), batch
• Input: sentence → NLP Engine (Prolog interpreter) → output: internal representation
• Lexicon (domain knowledge)
• UW (unknown word) acquisition, triggered by failure

Main topics

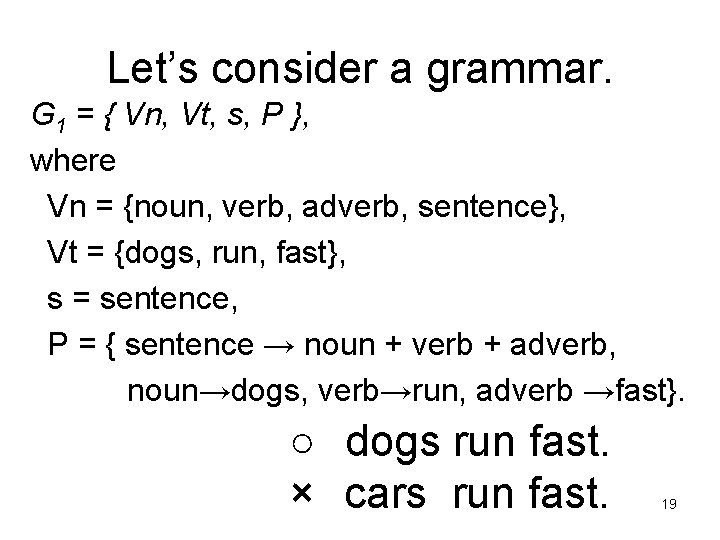

Let's consider a grammar.
G1 = { Vn, Vt, s, P }, where
• Vn = {noun, verb, adverb, sentence}
• Vt = {dogs, run, fast}
• s = sentence
• P = { sentence → noun + verb + adverb, noun → dogs, verb → run, adverb → fast }

○ dogs run fast.
× cars run fast.

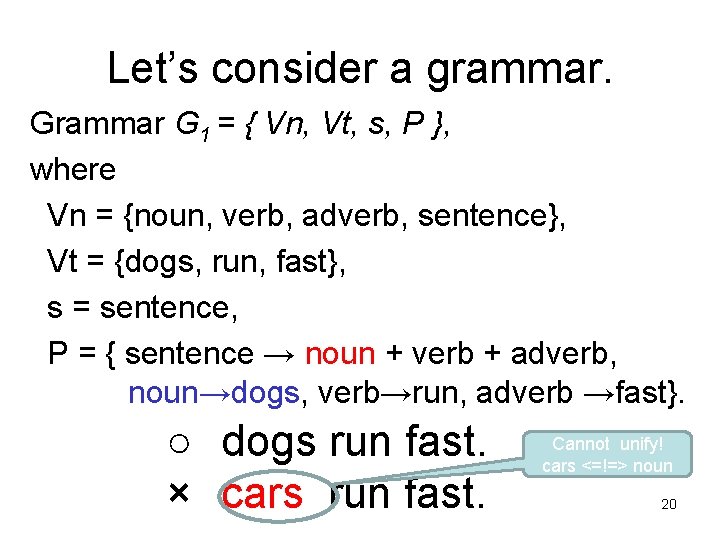

Under grammar G1, "dogs run fast." is accepted (○) but "cars run fast." is rejected (×): "cars" is not in Vt, so it cannot unify with noun.
Cannot unify! cars <=!=> noun

Our ideas
1. Processing modes
2. Processing strategies

Processing Modes

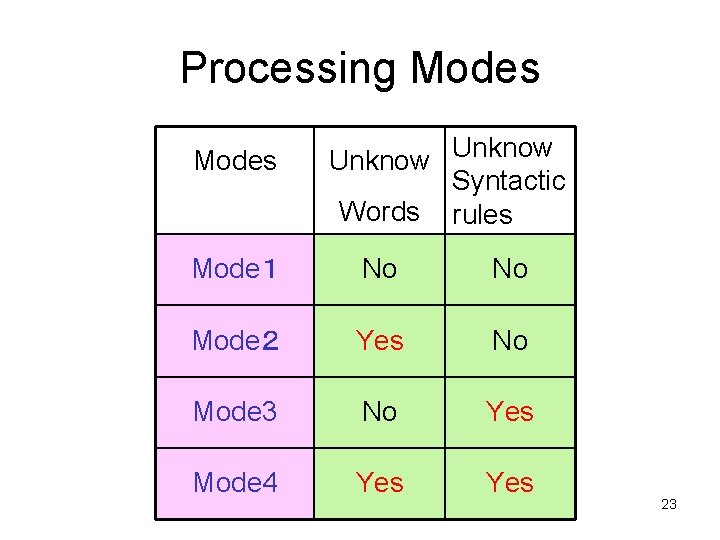

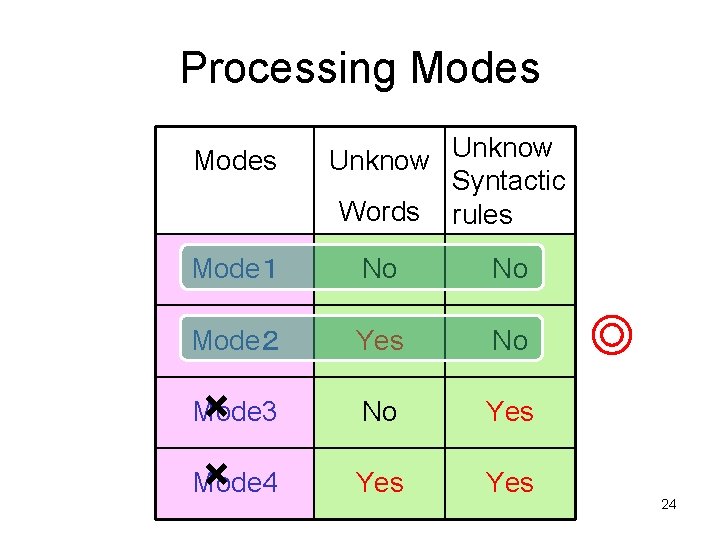

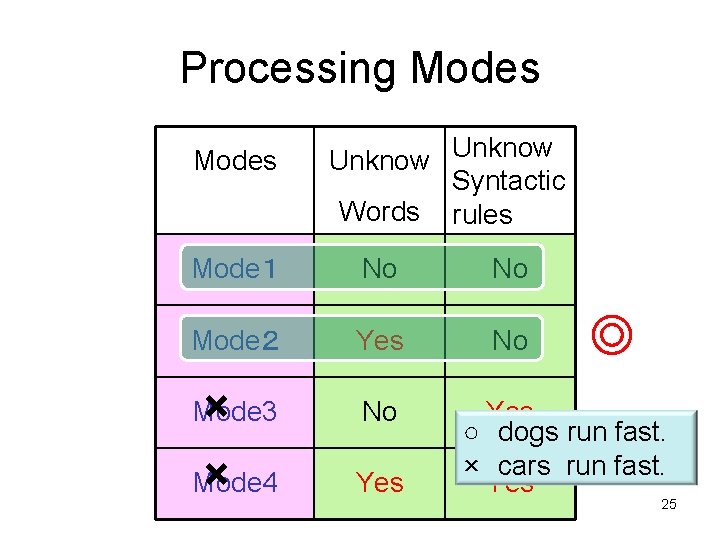

Processing Modes

| Mode | Unknown words | Unknown syntactic rules |
|---|---|---|
| Mode 1 | No | No |
| Mode 2 | Yes | No |
| Mode 3 | No | Yes |
| Mode 4 | Yes | Yes |

Examples under G1:
○ dogs run fast. (no unknown words)
× cars run fast. ("cars" is an unknown word)

Processing Strategies

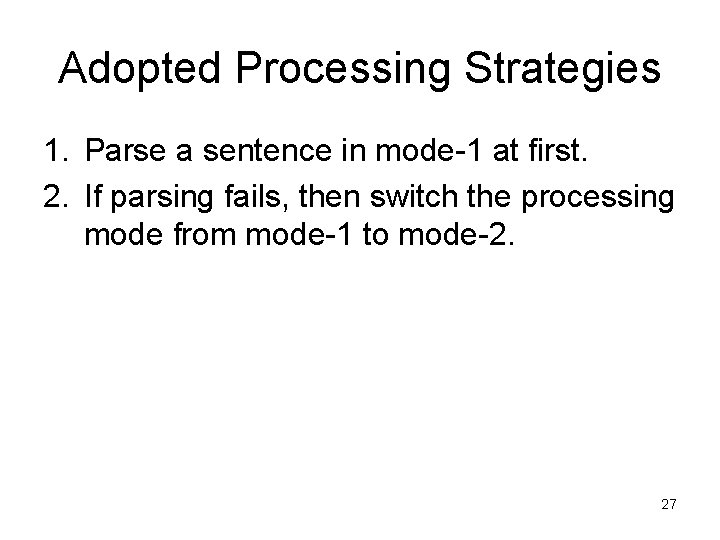

Adopted Processing Strategies
1. Parse a sentence in mode-1 first.
2. If parsing fails, switch the processing mode from mode-1 to mode-2.

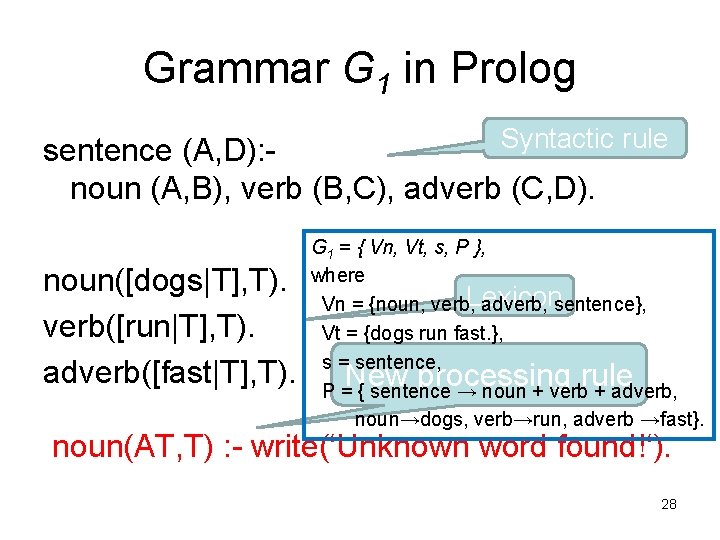

Grammar G1 in Prolog

G1 = { Vn, Vt, s, P }, where Vn = {noun, verb, adverb, sentence}, Vt = {dogs, run, fast}, s = sentence, P = { sentence → noun + verb + adverb, noun → dogs, verb → run, adverb → fast }.

Syntactic rule:

    sentence(A, D) :- noun(A, B), verb(B, C), adverb(C, D).

Lexicon:

    noun([dogs|T], T).
    verb([run|T], T).
    adverb([fast|T], T).

New processing rule:

    noun([_|T], T) :- write('Unknown word found!').
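As a minimal sketch of how the new processing rule behaves, the program below puts G1 and a catch-all noun rule in one file. It assumes SWI-Prolog; the guard `Word \== dogs` and the message format are illustrative additions, not taken from the slides.

```prolog
% Grammar G1, written with difference lists as on the slide.
sentence(A, D) :- noun(A, B), verb(B, C), adverb(C, D).

% Lexicon entry for the only known noun.
noun([dogs|T], T).
% Catch-all rule for unknown words in noun position.
% The guard (an assumption of this sketch) keeps the rule from
% firing on words that are already in the lexicon.
noun([Word|T], T) :-
    Word \== dogs,
    format('Unknown word found: ~w~n', [Word]).

verb([run|T], T).
adverb([fast|T], T).
```

With this file loaded, `?- sentence([dogs, run, fast], []).` succeeds through the lexicon, and `?- sentence([cars, run, fast], []).` succeeds through the catch-all rule after printing the message.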

References
• Kameda, Sakurai and Kubomura: ACAI '99 Machine Learning and Applications, Proceedings of Workshop W01: Machine learning in human language technology, pp. 62-67 (1999).
• Kameda & Kubomura: Proc. of PACLING 2001, pp. 146-152 (2001).

Let's explain in more detail!

Example sentence
Tom broke the cup with the hammer. (Okada 1991)
Word sequence: tom broke the cup with the hammer

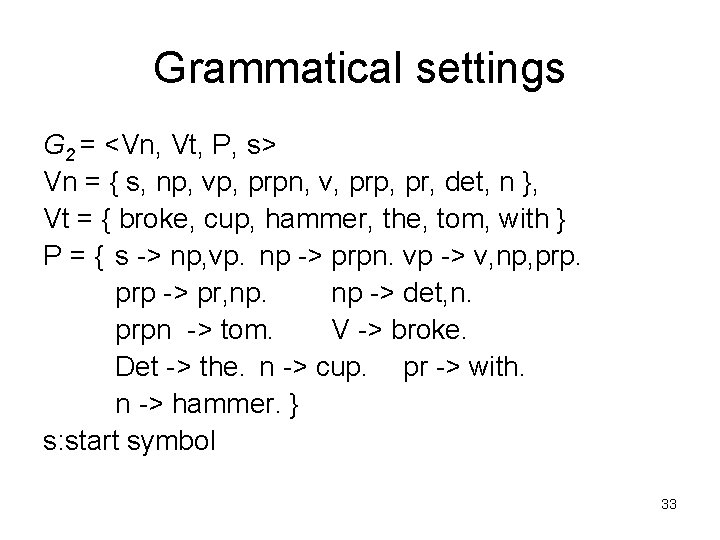

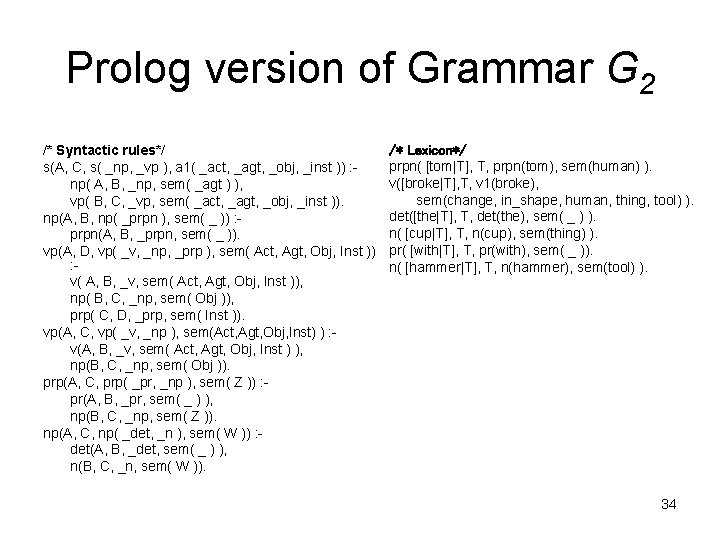

Grammatical settings
G2 = <Vn, Vt, P, s>
• Vn = { s, np, vp, prpn, v, prp, pr, det, n }
• Vt = { broke, cup, hammer, the, tom, with }
• P = {
  s -> np, vp.
  np -> prpn.
  np -> det, n.
  vp -> v, np, prp.
  vp -> v, np.
  prp -> pr, np.
  prpn -> tom.
  v -> broke.
  det -> the.
  n -> cup.
  n -> hammer.
  pr -> with.
  }
• s: start symbol
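The production rules of G2 map almost one-to-one onto Prolog's DCG notation. The following is a syntax-only sketch (no parse tree, no semantics), assuming a Prolog system with standard DCG support such as SWI-Prolog; it is not the authors' program.

```prolog
% Syntactic rules of G2 in DCG notation.
s   --> np, vp.
np  --> prpn.
np  --> det, n.
vp  --> v, np, prp.
vp  --> v, np.
prp --> pr, np.

% Lexicon: one terminal per rule.
prpn --> [tom].
v    --> [broke].
det  --> [the].
n    --> [cup].
n    --> [hammer].
pr   --> [with].
```

The query `?- phrase(s, [tom, broke, the, cup, with, the, hammer]).` succeeds, while the same sentence with `glass` in place of `cup` fails because `glass` is not in the lexicon.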

Prolog version of Grammar G2

    /* Syntactic rules */
    s(A, C, s(_np, _vp), a1(_act, _agt, _obj, _inst)) :-
        np(A, B, _np, sem(_agt)),
        vp(B, C, _vp, sem(_act, _agt, _obj, _inst)).
    np(A, B, np(_prpn), sem(_)) :-
        prpn(A, B, _prpn, sem(_)).
    np(A, C, np(_det, _n), sem(W)) :-
        det(A, B, _det, sem(_)),
        n(B, C, _n, sem(W)).
    vp(A, D, vp(_v, _np, _prp), sem(Act, Agt, Obj, Inst)) :-
        v(A, B, _v, sem(Act, Agt, Obj, Inst)),
        np(B, C, _np, sem(Obj)),
        prp(C, D, _prp, sem(Inst)).
    vp(A, C, vp(_v, _np), sem(Act, Agt, Obj, Inst)) :-
        v(A, B, _v, sem(Act, Agt, Obj, Inst)),
        np(B, C, _np, sem(Obj)).
    prp(A, C, prp(_pr, _np), sem(Z)) :-
        pr(A, B, _pr, sem(_)),
        np(B, C, _np, sem(Z)).

    /* Lexicon */
    prpn([tom|T], T, prpn(tom), sem(human)).
    v([broke|T], T, v1(broke), sem(change_in_shape, human, thing, tool)).
    det([the|T], T, det(the), sem(_)).
    n([cup|T], T, n(cup), sem(thing)).
    n([hammer|T], T, n(hammer), sem(tool)).
    pr([with|T], T, pr(with), sem(_)).

![Demonstration (Mode 1)](http://slidetodoc.com/presentation_image_h2/82caa2669ffa24db4757d899efc9f77c/image-34.jpg)

Demonstration (Mode 1)
• Input 1: [tom, broke, the, cup, with, the, hammer]
• Input 2: [tom, broke, the, glass, with, the, hammer]

Problem
• Parsing fails when a sentence contains unknown words.

Unknown Word Processing
• Switch the processing mode from mode-1 to mode-2 when parsing fails.
• Execute Prolog's assert predicate to change the mode.
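The mode switch described above can be sketched as follows. This is a hedged illustration on the small grammar G1, assuming SWI-Prolog; the predicate names `current_mode/1`, `noun_word/1`, `parse/1`, `try/1`, and `switch_to_mode/1` are hypothetical and do not come from the slides.

```prolog
:- dynamic current_mode/1, noun_word/1.

current_mode(1).          % start in mode 1: all words assumed known

% Lexicon. noun_word/1 is dynamic so that new nouns can be acquired.
noun_word(dogs).
verb_word(run).
adverb_word(fast).

% sentence(+Words, -Rest, -New): New lists lexicon facts to acquire.
sentence(A, D, New) :- noun(A, B, New), verb(B, C), adverb(C, D).

noun([W|T], T, []) :- noun_word(W).
% Mode-2 rule: accept an unknown word in noun position.
noun([W|T], T, [noun_word(W)]) :-
    current_mode(2),
    \+ noun_word(W).
verb([W|T], T)   :- verb_word(W).
adverb([W|T], T) :- adverb_word(W).

% parse(+Words): mode 1 first; on failure, switch to mode 2 and retry.
parse(Words) :-
    switch_to_mode(1),
    (   try(Words)
    ->  true
    ;   switch_to_mode(2),
        try(Words)
    ).

% Register acquired words only after the whole sentence has parsed.
try(Words) :-
    sentence(Words, [], New),
    !,
    forall(member(Fact, New), assertz(Fact)).

% The mode is changed by rewriting a fact in the Prolog database.
switch_to_mode(M) :-
    retractall(current_mode(_)),
    assertz(current_mode(M)).
```

After `?- parse([cars, run, fast]).` succeeds, `noun_word(cars)` is in the lexicon. Deferring `assertz/1` until the parse succeeds is a design choice of this sketch: it avoids registering a word from a sentence that ultimately fails.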

![Demonstration (Mode 2)](http://slidetodoc.com/presentation_image_h2/82caa2669ffa24db4757d899efc9f77c/image-37.jpg)

Demonstration (Mode 2)
• Input:
  – P1: [tom, broke, the, cup, with, the, hammer]
  – P2: [tom, broke, the, glass, with, the, hammer]
  – P3: [tom, broke, the, glass, with, the, stone]
  – P4: [tom, vvv, the, glass, with, the, hammer]

Problem
• Learning is sometimes imperfect.
• Learning order influences learning results.
• Solution: the influence of learning order is compensated for by introducing an evolutionary learning function.

More Explanations
• All information about an unknown word should be guessed when the word is registered in the lexicon. In practice, spelling and POS are guessed, but not pronunciation (imperfect knowledge).
• If the pronunciation can be guessed later, the information is added to the lexicon. → Evolutionary learning!

Solution
• Setting: some knowledge may be revised, but some must not.
  – A priori knowledge (initial knowledge): must not change
  – Posterior knowledge (acquired knowledge):
    • Must not change, if perfect
    • May change, if imperfect
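The revision policy above can be sketched with a tagged lexicon. This is only an illustration under stated assumptions: the `lex/4` layout, the atom `unknown` as a marker for imperfect knowledge, and the pronunciation atoms are all hypothetical, and SWI-Prolog is assumed.

```prolog
:- dynamic lex/4.

% lex(Word, POS, Pronunciation, Origin)
% Origin is initial (a priori) or acquired (posterior).
% A pronunciation that has not been guessed yet is stored as the
% atom unknown, which marks the entry as imperfect.
lex(cup,   n, pron_cup, initial).    % pron_cup is a placeholder value
lex(glass, n, unknown,  acquired).

% revisable(+Word): only acquired, imperfect entries may change.
revisable(Word) :-
    lex(Word, _, unknown, acquired).

% add_pronunciation(+Word, +Pron): fill in information guessed later.
% Fails for initial knowledge and for acquired entries that are perfect.
add_pronunciation(Word, Pron) :-
    revisable(Word),
    retract(lex(Word, POS, unknown, acquired)),
    assertz(lex(Word, POS, Pron, acquired)).
```

Here `?- add_pronunciation(glass, pron_glass).` succeeds once and makes the entry perfect, so a second attempt fails, and `?- add_pronunciation(cup, anything).` fails because initial knowledge must not change.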

Demonstration of Final Version
• Input:
  – P4: [tom, vvv, the, glass, with, the, hammer]
  – P2: [tom, broke, the, glass, with, the, hammer]
  – P3: [tom, broke, the, glass, with, the, stone]

Conclusions
1. Research background
2. Basic ideas of knowledge acquisition
   1. Some models
      1. Information processing model
      2. Unknown word acquisition model
   2. Modes and strategies
3. Demonstrations

Future Works
• Applying to more real-world domains
  – Therapeutic robots
  – Robots for schizophrenia rehabilitation
