
TSBK 01 Image Coding and Data Compression Lecture 4: Data Compression Techniques Jörgen Ahlberg Div. of Sensor Technology Swedish Defence Research Agency (FOI)

Outline § Huffman coding § Arithmetic coding § Application: JBIG § Universal coding § LZ coding § LZ77, LZ78, LZW § Applications: GIF and PNG

Repetition § Coding: Assigning binary codewords to (blocks of) source symbols. § Variable-length codes (VLC) and fixed-length codes. § Instantaneous codes ⊂ Uniquely decodable codes ⊂ Non-singular codes ⊂ All codes § Tree codes are instantaneous. § Tree code ⇔ Kraft's inequality.

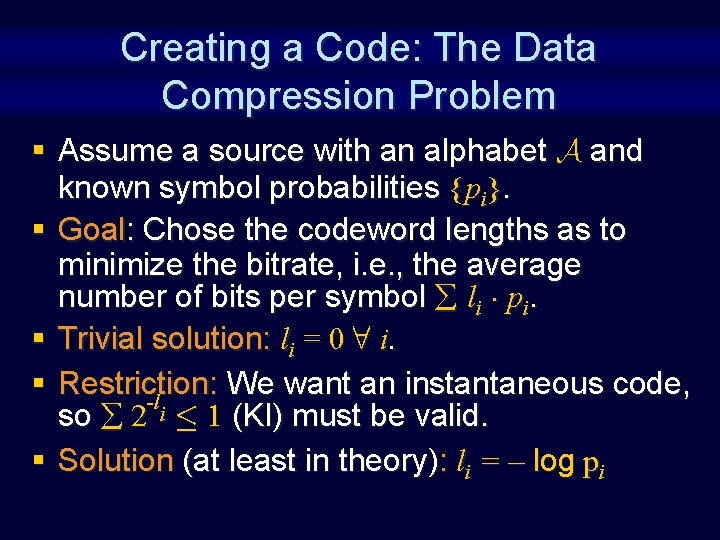

Creating a Code: The Data Compression Problem § Assume a source with an alphabet A and known symbol probabilities $\{p_i\}$. § Goal: Choose the codeword lengths $l_i$ so as to minimize the bitrate, i.e., the average number of bits per symbol $\sum_i l_i p_i$. § Trivial solution: $l_i = 0 \;\forall i$. § Restriction: We want an instantaneous code, so $\sum_i 2^{-l_i} \le 1$ (Kraft's inequality, KI) must hold. § Solution (at least in theory): $l_i = -\log_2 p_i$.
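The relation between the ideal lengths, Kraft's inequality, and the bitrate can be checked numerically. The sketch below is only an illustration: the probabilities are an assumed example, and the helper names `ideal_lengths`, `kraft_sum`, and `bitrate` are hypothetical. The ideal lengths are rounded up to integers so that they can serve as codeword lengths.

```python
import math

def ideal_lengths(probs):
    """Theoretical codeword lengths l_i = -log2(p_i); not necessarily integers."""
    return [-math.log2(p) for p in probs]

def kraft_sum(lengths):
    """Left-hand side of Kraft's inequality: sum of 2^(-l_i)."""
    return sum(2.0 ** -l for l in lengths)

def bitrate(probs, lengths):
    """Average number of bits per symbol: sum of l_i * p_i."""
    return sum(p * l for p, l in zip(probs, lengths))

# Assumed example distribution (dyadic, so the ideal lengths are integers).
probs = [0.5, 0.25, 0.125, 0.125]
lengths = [math.ceil(l) for l in ideal_lengths(probs)]

print(lengths)                  # integer codeword lengths
print(kraft_sum(lengths))       # must not exceed 1 for an instantaneous code
print(bitrate(probs, lengths))  # average bits per symbol
```

For probabilities that are not powers of 1/2, rounding up still satisfies Kraft's inequality but costs up to one extra bit per symbol, which is one motivation for coding blocks of symbols.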

In practice… § Use some nice algorithm to find the code tree – Huffman coding – Tunstall coding

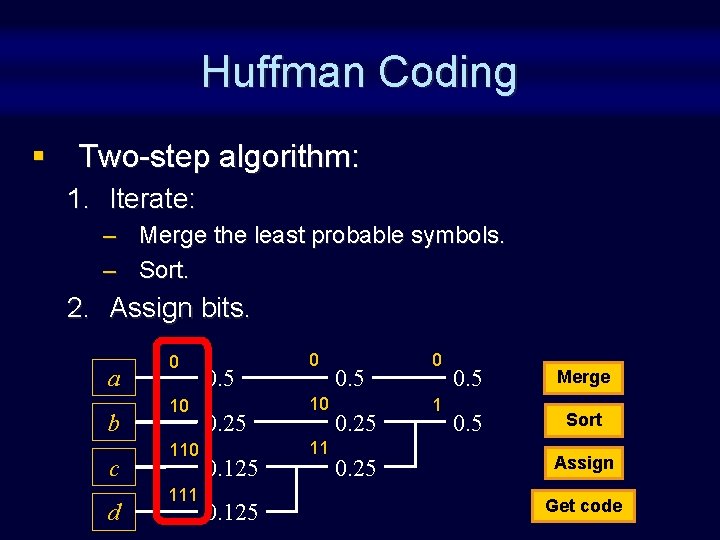

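Huffman Coding § Two-step algorithm: 1. Iterate: – Merge the two least probable symbols. – Sort. 2. Assign bits. § Example: symbols a, b, c, d with probabilities 0.5, 0.25, 0.125, 0.125. Merge c and d (0.25) and sort; merge the result with b (0.5) and sort; finally merge with a. Assigning bits back down the tree gives the code a = 0, b = 10, c = 110, d = 111.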
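The merge-and-sort loop can be sketched with a priority queue in place of explicit sorting. This is a minimal illustration rather than a reference implementation: the function name `huffman_code` is hypothetical, and the exact codewords depend on how ties between equal probabilities are broken, although the codeword lengths do not in this example.

```python
import heapq
from itertools import count

def huffman_code(probs):
    """probs: dict symbol -> probability. Returns dict symbol -> bit string."""
    if len(probs) == 1:
        return {s: "0" for s in probs}
    tiebreak = count()  # unique counter so the heap never compares dicts
    heap = [(p, next(tiebreak), {s: ""}) for s, p in probs.items()]
    heapq.heapify(heap)
    while len(heap) > 1:
        # Merge the two least probable nodes.
        p0, _, c0 = heapq.heappop(heap)
        p1, _, c1 = heapq.heappop(heap)
        # Assign bits: prefix one branch with 0 and the other with 1.
        merged = {s: "0" + w for s, w in c0.items()}
        merged.update({s: "1" + w for s, w in c1.items()})
        heapq.heappush(heap, (p0 + p1, next(tiebreak), merged))
    return heap[0][2]

code = huffman_code({"a": 0.5, "b": 0.25, "c": 0.125, "d": 0.125})
print(code)
```

The heap replaces the "sort" step: popping twice always yields the two least probable nodes, so no full re-sort is needed after each merge.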

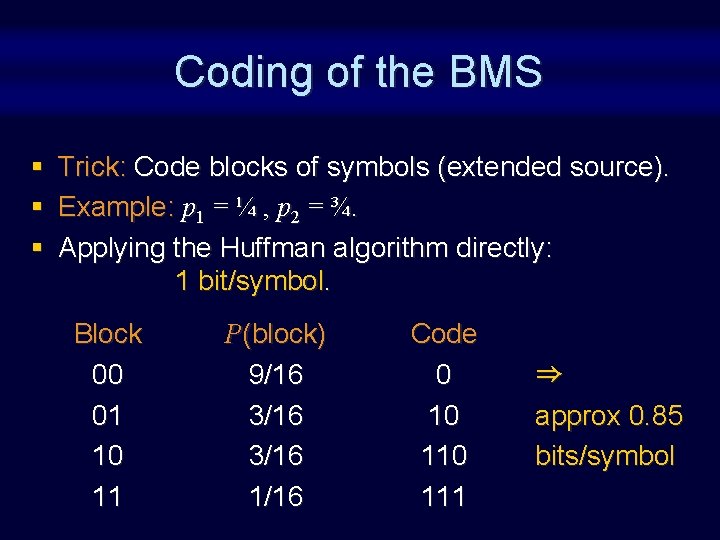

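Coding of the BMS (binary memoryless source) § Trick: Code blocks of symbols (extended source). § Example: p1 = ¼, p2 = ¾. § Applying the Huffman algorithm directly: 1 bit/symbol. § Applying it to blocks of two symbols:

| Block | P(block) | Code |
|---|---|---|
| 00 | 9/16 | 0 |
| 01 | 3/16 | 10 |
| 10 | 3/16 | 110 |
| 11 | 1/16 | 111 |

⇒ approx. 0.85 bits/symbol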
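The figure of roughly 0.85 bits/symbol can be reproduced with exact fractions. The sketch below assumes, as the block probability 9/16 for block 00 suggests, that symbol 0 has probability ¾ and symbol 1 has probability ¼, and that the block code is 0, 10, 110, 111. The variable names are illustrative.

```python
import math
from fractions import Fraction
from itertools import product

# Assumed symbol probabilities of the binary memoryless source.
p = {"0": Fraction(3, 4), "1": Fraction(1, 4)}

# Memoryless source: the probability of a block is the product of its symbols.
block_prob = {a + b: p[a] * p[b] for a, b in product(p, repeat=2)}

# Huffman code for the blocks of two symbols (assumed codeword assignment).
code = {"00": "0", "01": "10", "10": "110", "11": "111"}

bits_per_block = sum(block_prob[b] * len(w) for b, w in code.items())
bits_per_symbol = bits_per_block / 2

# Entropy of the source: the lower bound for any block length.
entropy = -sum(float(q) * math.log2(float(q)) for q in p.values())

print(bits_per_symbol, float(bits_per_symbol))
print(entropy)
```

The rate for blocks of two lies between the 1 bit/symbol of the direct code and the entropy, and longer blocks would move it closer to the entropy at the cost of a larger code tree.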

Huffman Coding: Pros and Cons + Fast implementations. + Error resilient: resynchronizes in ~ $l^2$ steps. - The code tree grows exponentially when the source is extended. - The symbol probabilities are built into the code. § Hard to use Huffman coding for extended sources / large alphabets or when the symbol probabilities vary over time.


Arithmetic Coding § Shannon-Fano-Elias § Basic idea: Split the interval [0, 1] according to the symbol probabilities. § Example: A = {a, b, c, d}, P = {½, ¼, 1/8, 1/8}.
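The interval splitting can be sketched for a memoryless source with exact fractions. This is a minimal illustration that assumes the fourth probability is 1/8, so that the probabilities sum to one. The names `build_model`, `encode_interval`, and `decode_value` are hypothetical, and a practical coder would use finite-precision arithmetic and emit bits incrementally.

```python
from fractions import Fraction

def build_model(probs):
    """Map each symbol to its subinterval [low, high) of [0, 1)."""
    model, low = {}, Fraction(0)
    for s, p in probs.items():
        model[s] = (low, low + p)
        low += p
    return model

def encode_interval(seq, model):
    """Narrow [0, 1) once per symbol; the final interval identifies the sequence."""
    low, high = Fraction(0), Fraction(1)
    for s in seq:
        width = high - low
        s_low, s_high = model[s]
        low, high = low + width * s_low, low + width * s_high
    return low, high

def decode_value(value, n, model):
    """Recover n symbols from any value inside the final interval."""
    out = []
    low, high = Fraction(0), Fraction(1)
    for _ in range(n):
        width = high - low
        for s, (s_low, s_high) in model.items():
            if low + width * s_low <= value < low + width * s_high:
                out.append(s)
                low, high = low + width * s_low, low + width * s_high
                break
    return "".join(out)

model = build_model({"a": Fraction(1, 2), "b": Fraction(1, 4),
                     "c": Fraction(1, 8), "d": Fraction(1, 8)})
low, high = encode_interval("ab", model)
print(low, high)                    # width equals P(a) * P(b)
print(decode_value(low, 2, model))
```

The width of the final interval equals the probability of the sequence, so about $-\log_2$ of that width bits are enough to point into it.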

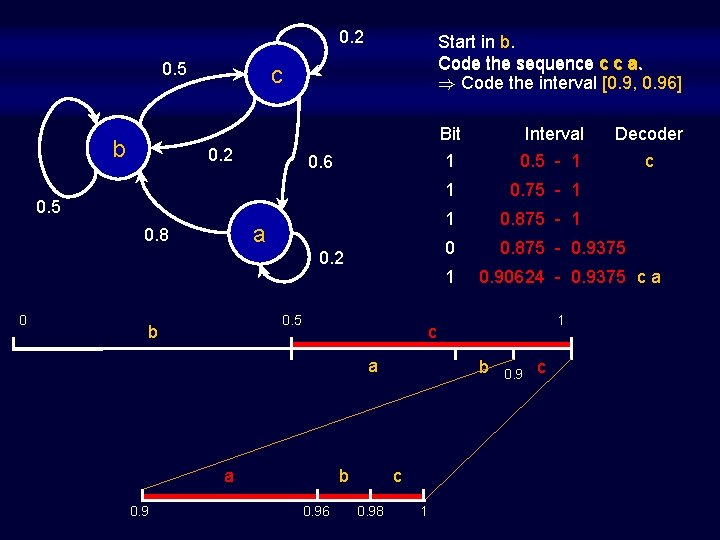

[Figure: interval subdivision for a source with states a, b, c.] Start in b. Code the sequence c c a. The interval narrows from [0, 1] to [0.5, 1], then [0.9, 1], then [0.9, 0.96]. ⇒ Code the interval [0.9, 0.96]. Decoder:

| Bit | Interval |
|---|---|
| 1 | 0.5 – 1 |
| 1 | 0.75 – 1 |
| 1 | 0.875 – 1 |
| 0 | 0.875 – 0.9375 |
| 1 | 0.90625 – 0.9375 |
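The bits sent for the interval [0.9, 0.96] can be reproduced by halving [0, 1] until the resulting binary interval fits inside the target. The rule used here, following the half that contains the midpoint of the target, is an assumption chosen for simplicity, and `bits_for_interval` is a hypothetical helper name.

```python
from fractions import Fraction

def bits_for_interval(low, high):
    """Halve [0, 1] until the binary interval lies inside [low, high]."""
    target_mid = (low + high) / 2
    lo, hi = Fraction(0), Fraction(1)
    bits = []
    while not (low <= lo and hi <= high):
        mid = (lo + hi) / 2
        if target_mid >= mid:
            bits.append(1)  # keep the upper half
            lo = mid
        else:
            bits.append(0)  # keep the lower half
            hi = mid
    return bits, (lo, hi)

bits, interval = bits_for_interval(Fraction(9, 10), Fraction(96, 100))
print(bits, [float(x) for x in interval])
```

Each bit halves the binary interval, so the number of bits needed grows as the target interval shrinks, that is, as the coded sequence becomes less probable.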

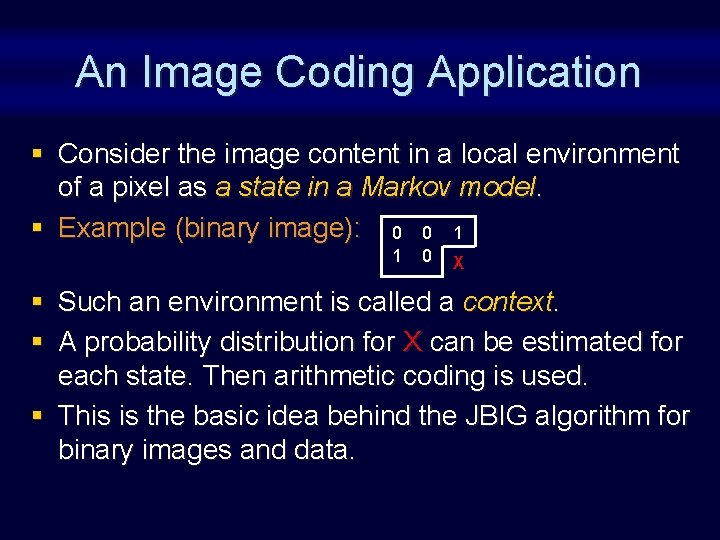

An Image Coding Application § Consider the image content in a local environment of a pixel as a state in a Markov model. § Example (binary image): 0 0 1 1 0 X § Such an environment is called a context. § A probability distribution for X can be estimated for each state. Then arithmetic coding is used. § This is the basic idea behind the JBIG algorithm for binary images and data.
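Estimating one distribution per context can be sketched by counting. The three-pixel neighbourhood below (upper-left, upper, left) is a hypothetical template chosen to keep the example small and is not the template used by JBIG. The initial count of one per outcome is likewise an assumption, made so that no probability is ever zero.

```python
from collections import defaultdict

def context_of(img, r, c):
    """Context of pixel (r, c): upper-left, upper, and left neighbours.
    Pixels outside the image are treated as 0."""
    def px(rr, cc):
        inside = 0 <= rr < len(img) and 0 <= cc < len(img[0])
        return img[rr][cc] if inside else 0
    return (px(r - 1, c - 1), px(r - 1, c), px(r, c - 1))

def estimate_context_probs(img):
    """Return the estimate of P(X = 1 | context) for every context seen."""
    counts = defaultdict(lambda: [1, 1])  # [count of 0s, count of 1s]
    for r in range(len(img)):
        for c in range(len(img[0])):
            counts[context_of(img, r, c)][img[r][c]] += 1
    return {ctx: n1 / (n0 + n1) for ctx, (n0, n1) in counts.items()}

image = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
for ctx, p1 in sorted(estimate_context_probs(image).items()):
    print(ctx, round(p1, 3))
```

Only already coded pixels (above and to the left) are used, so a decoder scanning in the same order can form the same context before decoding X.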

Flushing the Coder § The coding process is ended (restarted) and the coder flushed – after a given number of symbols (FIVO: fixed input, variable output), or – when the interval is too small for a fixed number of output bits (VIFO: variable input, fixed output).

Universal Coding § A universal coder doesn’t need to know the statistics in advance. Instead, estimate from data. § Forward estimation: Estimate statistics in a first pass and transmit to the decoder. § Backward estimation: Estimate from already transmitted (received) symbols.
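Backward estimation can be sketched with running symbol counts that encoder and decoder update in the same way after each symbol. The sketch below does not produce a bit stream; it only sums the ideal code lengths $-\log_2 p$ that an arithmetic coder driven by these estimates would approach. Starting every count at one is an assumption, and `adaptive_code_length` is a hypothetical name.

```python
import math

def adaptive_code_length(seq, alphabet):
    """Total ideal code length in bits when each probability is estimated
    from the symbols seen so far (backward estimation)."""
    counts = {s: 1 for s in alphabet}  # assumed initial counts
    total_bits = 0.0
    for s in seq:
        p = counts[s] / sum(counts.values())  # estimate before seeing s
        total_bits += -math.log2(p)
        counts[s] += 1                        # update after coding s
    return total_bits

print(adaptive_code_length("aaaa", "ab"))
print(adaptive_code_length("abababab", "ab"))
```

Because the estimate for each symbol uses only earlier symbols, no statistics need to be transmitted, in contrast to forward estimation.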

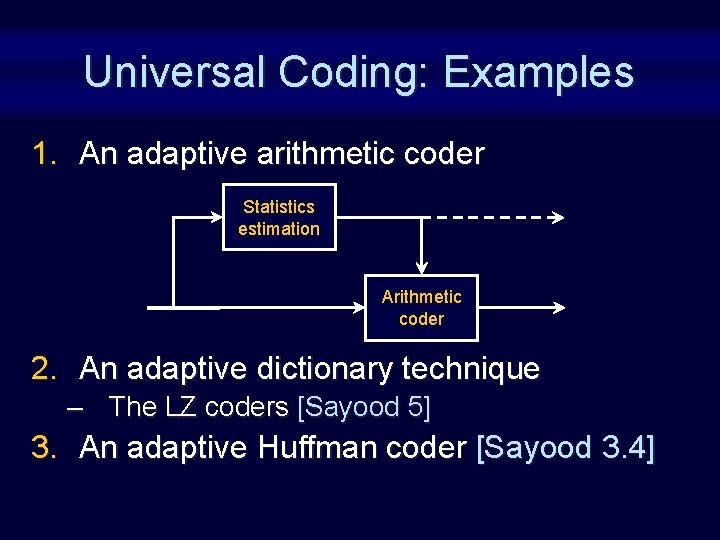

Universal Coding: Examples 1. An adaptive arithmetic coder (a statistics estimation block feeding an arithmetic coder) 2. An adaptive dictionary technique – The LZ coders [Sayood 5] 3. An adaptive Huffman coder [Sayood 3.4]

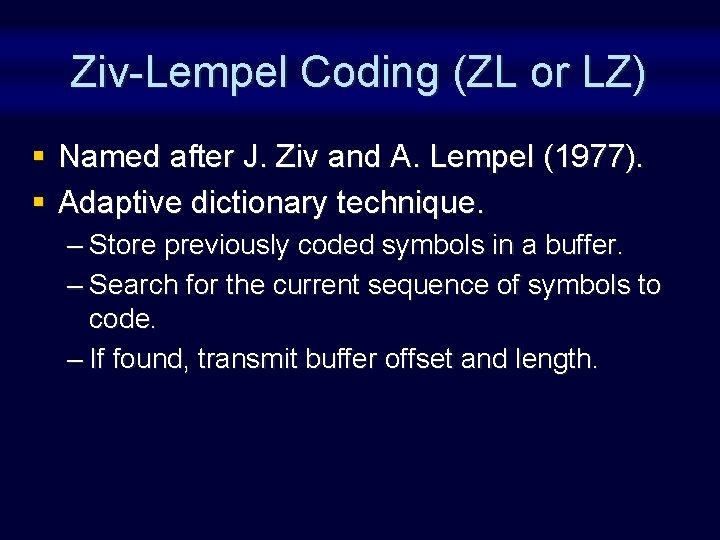

Ziv-Lempel Coding (ZL or LZ) § Named after J. Ziv and A. Lempel (1977). § Adaptive dictionary technique. – Store previously coded symbols in a buffer. – Search for the current sequence of symbols to code. – If found, transmit buffer offset and length.

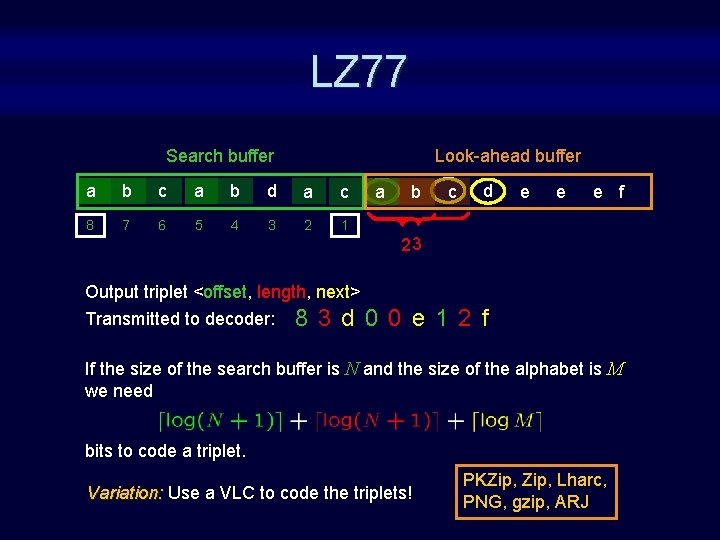

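LZ77 § Example: the search buffer holds a b c a b d a c (offsets 8 down to 1) and the look-ahead buffer holds a b c d e e e f. § Output triplet <offset, length, next>. § Transmitted to decoder: <8, 3, d>, <0, 0, e>, <1, 2, f>. § If the size of the search buffer is N and the size of the alphabet is M, a fixed-length code needs about $\log_2 N$ bits for the offset and $\log_2 M$ bits for the next symbol, plus the bits for the length, to code a triplet. § Variation: Use a VLC to code the triplets! § Used in: PKZip, LHarc, PNG, gzip, ARJ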
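The triplet output can be sketched with a brute-force search over the search buffer. This is a minimal illustration, not an efficient or standard implementation: the `start` and `history` parameters are hypothetical conveniences for preloading the search buffer, and the example input assumes the search buffer a b c a b d a c followed by the look-ahead symbols a b c d e e e f.

```python
def lz77_encode(data, window=8, start=0):
    """Encode data[start:] as (offset, length, next) triplets.
    data[:start] is treated as already transmitted (preloaded search buffer)."""
    i, out = start, []
    while i < len(data):
        best_off, best_len = 0, 0
        for off in range(1, min(window, i) + 1):
            length = 0
            # A match may run on into the look-ahead buffer (overlapping copy);
            # the last symbol is always kept back for the 'next' field.
            while (i + length < len(data) - 1
                   and data[i + length - off] == data[i + length]):
                length += 1
            if length > best_len:
                best_off, best_len = off, length
        out.append((best_off, best_len, data[i + best_len]))
        i += best_len + 1
    return out

def lz77_decode(triplets, history=""):
    """Rebuild the sequence; history is the preloaded search buffer."""
    out = list(history)
    for off, length, nxt in triplets:
        for _ in range(length):
            out.append(out[-off])  # copy one symbol at a time from the buffer
        out.append(nxt)
    return "".join(out)

data = "abcabdac" + "abcdeeef"
triplets = lz77_encode(data, window=8, start=8)
print(triplets)
print(lz77_decode(triplets, history=data[:8]))
```

Copying one symbol at a time in the decoder is what makes a length larger than the offset possible, as in the triplet for the run of e's.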

Drawback with LZ77 § Repetitive patterns with a period longer than the search buffer size are not found. § If the search buffer size is 4, the sequence abcdeabcde… will be expanded, not compressed.

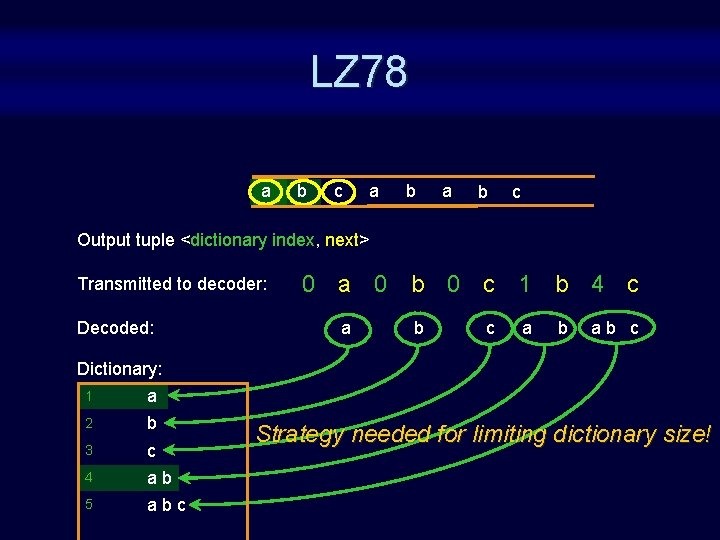

LZ78 § Store patterns in a dictionary. § Transmit a tuple <dictionary index, next>.

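LZ78 § Input sequence: a b c a b a b c § Output tuple <dictionary index, next>

| Transmitted to decoder | Decoded | New dictionary entry |
|---|---|---|
| <0, a> | a | 1: a |
| <0, b> | b | 2: b |
| <0, c> | c | 3: c |
| <1, b> | ab | 4: ab |
| <4, c> | abc | 5: abc |

§ Strategy needed for limiting dictionary size!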
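The dictionary build-up can be sketched as follows. This is an illustration under simple assumptions: the dictionary grows without limit, so the strategy for bounding its size is left out, and the input a b c a b a b c is assumed for the example. The function names are hypothetical.

```python
def lz78_encode(data):
    """Return a list of (dictionary index, next symbol) tuples; index 0 means
    'no prefix'."""
    dictionary = {}  # phrase -> index, starting at 1
    out, phrase = [], ""
    for ch in data:
        if phrase + ch in dictionary:
            phrase += ch  # keep extending the longest known phrase
        else:
            out.append((dictionary.get(phrase, 0), ch))
            dictionary[phrase + ch] = len(dictionary) + 1
            phrase = ""
    if phrase:
        # Input ended inside a known phrase: send its prefix plus last symbol.
        out.append((dictionary.get(phrase[:-1], 0), phrase[-1]))
    return out

def lz78_decode(pairs):
    """Rebuild the sequence while growing the same dictionary as the encoder."""
    phrases = [""]  # index 0 is the empty phrase
    out = []
    for index, ch in pairs:
        phrase = phrases[index] + ch
        phrases.append(phrase)
        out.append(phrase)
    return "".join(out)

pairs = lz78_encode("abcababc")
print(pairs)
print(lz78_decode(pairs))
```

The decoder needs no side information: every tuple it receives both yields a decoded phrase and defines the next dictionary entry.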

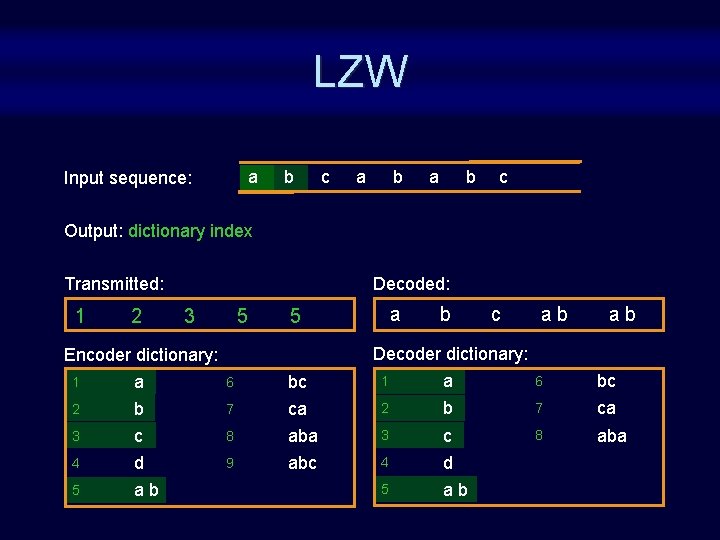

LZW § Modification to LZ78 by Terry Welch, 1984. § Applications: GIF, V.42bis § Patented by Unisys Corp. § Transmit only the dictionary index. § The alphabet is stored in the dictionary in advance.

LZW § Input sequence: a b c a b a b c § Output: dictionary index § Transmitted: 1 2 3 5 5 § Decoded: a b c ab ab § Encoder dictionary: 1 a, 2 b, 3 c, 4 d, 5 ab, 6 bc, 7 ca, 8 aba, 9 abc § Decoder dictionary (one entry behind the encoder): 1 a, 2 b, 3 c, 4 d, 5 ab, 6 bc, 7 ca, 8 aba
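Encoder and decoder can be sketched as below, assuming the alphabet {a, b, c, d} is stored as entries 1 to 4 and the input is a b c a b a b c. The dictionary is unbounded here, and the function names are hypothetical. Note that the sketch also flushes the final pending symbol, so it emits one more index than the five listed as transmitted.

```python
def lzw_encode(data, alphabet):
    """Return dictionary indices; the alphabet occupies entries 1..len(alphabet)."""
    dictionary = {s: i + 1 for i, s in enumerate(alphabet)}
    w, out = "", []
    for ch in data:
        if w + ch in dictionary:
            w += ch
        else:
            out.append(dictionary[w])
            dictionary[w + ch] = len(dictionary) + 1
            w = ch
    if w:
        out.append(dictionary[w])  # flush the pending phrase
    return out

def lzw_decode(codes, alphabet):
    """Rebuild the sequence; the decoder dictionary lags one entry behind."""
    dictionary = {i + 1: s for i, s in enumerate(alphabet)}
    if not codes:
        return ""
    prev = dictionary[codes[0]]
    out = [prev]
    for code in codes[1:]:
        if code in dictionary:
            entry = dictionary[code]
        else:
            # The index refers to the entry the encoder has just created:
            # it must be the previous phrase plus its own first symbol.
            entry = prev + prev[0]
        dictionary[len(dictionary) + 1] = prev + entry[0]
        out.append(entry)
        prev = entry
    return "".join(out)

codes = lzw_encode("abcababc", "abcd")
print(codes)
print(lzw_decode(codes, "abcd"))
```

The special case in the decoder arises exactly because its dictionary is one entry behind the encoder's. An input such as aaaa triggers it.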

And now for some applications: GIF & PNG

GIF § CompuServe Graphics Interchange Format (1987, 1989). § Features: – Designed for up/downloading images to/from BBSes via PSTN. – 1-, 4-, or 8-bit colour palettes. – Interlace for progressive decoding (four passes, starts with every 8th row). – Transparent colour for non-rectangular images. – Supports multiple images in one file ("animated GIFs").
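The row order of the four interlace passes can be sketched as follows. The start rows and steps of the later passes are not given above; they are taken here from the GIF89a specification (rows 0, 8, 16, … then 4, 12, … then 2, 6, 10, … then all odd rows). The function name is hypothetical.

```python
def gif_interlace_order(height):
    """Return the order in which image rows are stored in an interlaced GIF."""
    passes = [(0, 8), (4, 8), (2, 4), (1, 2)]  # (first row, step) per pass
    return [row for first, step in passes for row in range(first, height, step)]

print(gif_interlace_order(16))
```

After the first pass a decoder has one row in eight and can already show a coarse preview, which each later pass refines.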

GIF: Method § Compression by LZW. § Dictionary size $2^{b+1}$ 8-bit symbols – b is the number of bits in the palette. § Dictionary size doubled if filled (max 4096). § Works well on computer-generated images.

GIF: Problems § Unsuitable for natural images (photos): – Maximum 256 colours (⇒ bad quality). – Repetitive patterns uncommon (⇒ bad compression). § LZW patented by Unisys Corp. § Alternative: PNG

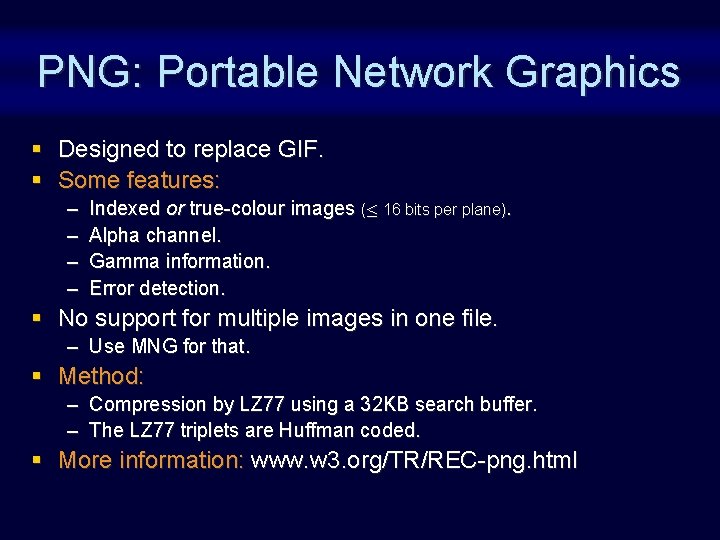

PNG: Portable Network Graphics § Designed to replace GIF. § Some features: – Indexed or true-colour images (≤ 16 bits per plane). – Alpha channel. – Gamma information. – Error detection. § No support for multiple images in one file. – Use MNG for that. § Method: – Compression by LZ77 using a 32 KB search buffer. – The LZ77 triplets are Huffman coded. § More information: www.w3.org/TR/REC-png.html

Summary § Huffman coding – Simple, easy, fast – Complexity grows exponentially with the block length – Statistics built into the code § Arithmetic coding – Complexity grows linearly with the block size – Easily adapted to variable statistics ⇒ used for coding of Markov sources § Universal coding – Adaptive Huffman or arithmetic coder – LZ77: Buffer with previously sent sequences <offset, length, next> – LZ78: Dictionary instead of buffer <index, next> – LZW: Modification to LZ78 <index>

Summary, cont. § Where are the algorithms used? – Huffman coding: JPEG, MPEG, PNG, … – Arithmetic coding: JPEG, JBIG, MPEG-4, … – LZ77: PNG, PKZip, gzip, … – LZW: compress, GIF, V.42bis, …

Finally § These methods work best if the source alphabet is small and the distribution skewed. – Text – Graphics § Analog sources (images, sound) require other methods – complex dependencies – accepted distortion
