TRAINING MODELS IN TENSORFLOW WITH OVERSUBSCRIBED GPU MEMORY

TRAINING MODELS IN TENSORFLOW WITH OVERSUBSCRIBED GPU MEMORY AND NVLINK 2.0
JASON FURMANEK@US.IBM.COM
KRIS MURPHY KRISKEND@US.IBM.COM
IBM

OVERVIEW
• GPU memory limits
• TensorFlow graph modifications for tensor swapping
• What’s possible with NVIDIA NVLink 2.0 connections between CPU and GPU

DEEP LEARNING IS MEMORY CONSTRAINED
• GPUs have limited memory
• Neural networks are growing deeper and wider
• The amount and size of data to process are always growing

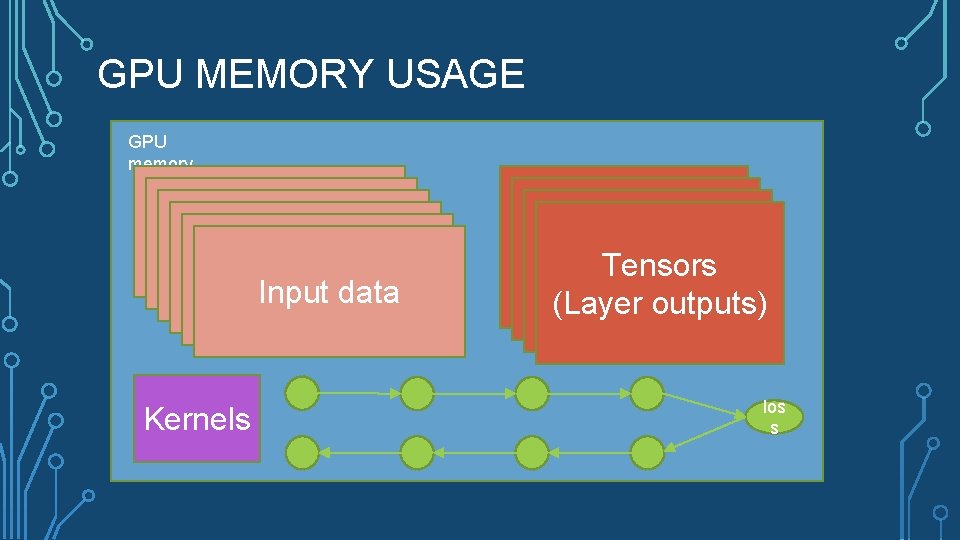

GPU MEMORY USAGE
Figure labels: GPU memory; Input data; Kernels; Tensors (layer outputs); loss

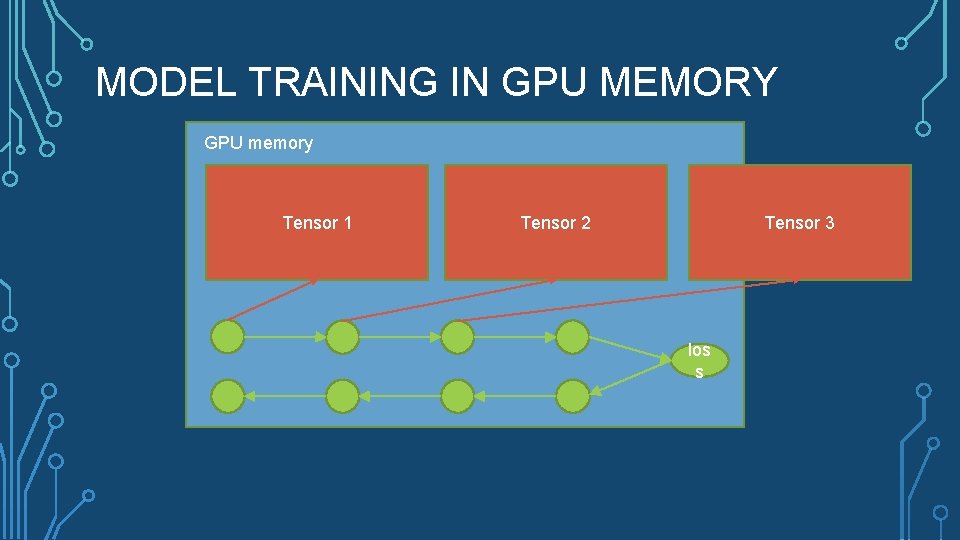

MODEL TRAINING IN GPU MEMORY
Figure labels: GPU memory; Tensor 1; Tensor 2; Tensor 3; loss

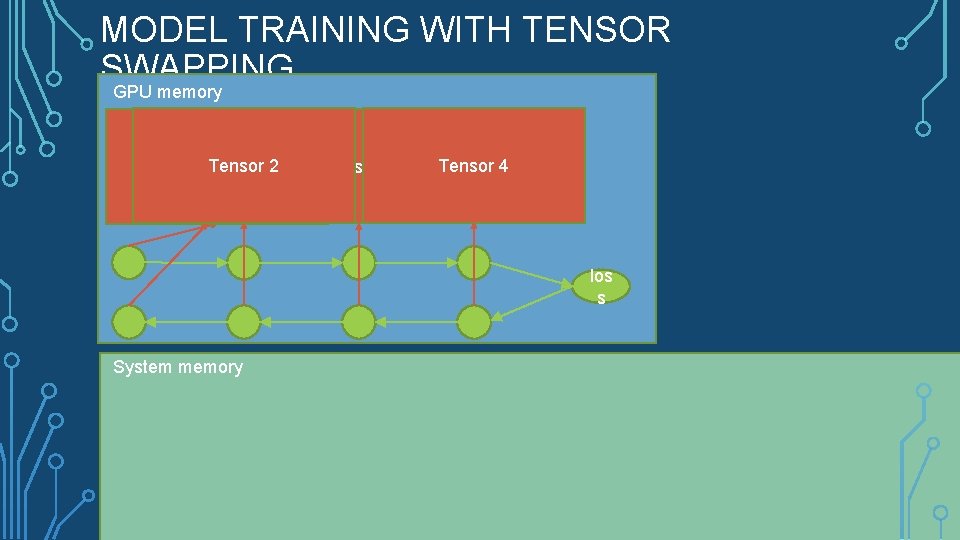

MODEL TRAINING WITH TENSOR SWAPPING
Figure labels: GPU memory; Tensor 1; Tensor 2; Tensor 3; Tensor 4; loss; System memory

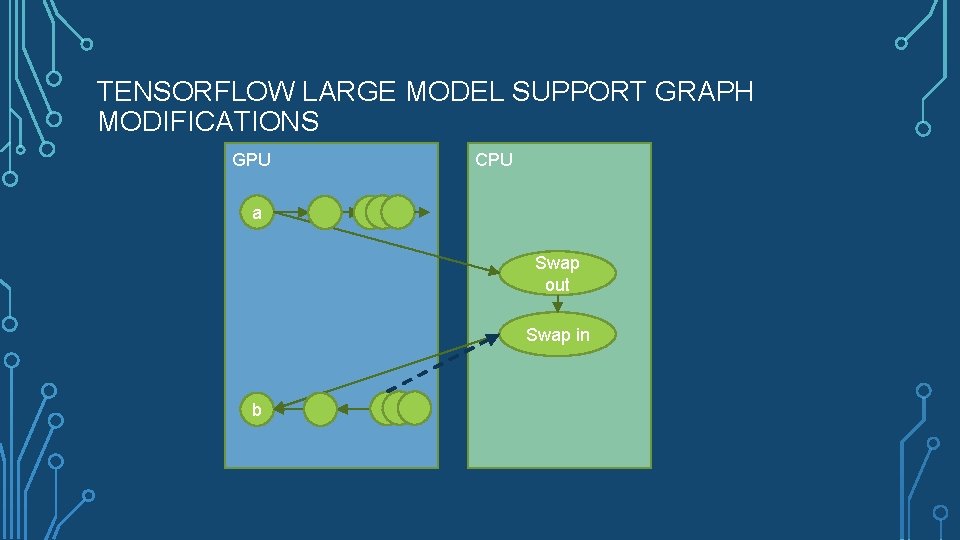
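The swapping idea on this slide can be illustrated with a deliberately simplified memory model. The sketch below is not how TensorFlow Large Model Support accounts for memory: it ignores input data, kernels, and gradients, and it assumes that with swapping only a small window of consecutive layer outputs has to be resident on the GPU at once. The function name, the `keep_resident` parameter, and the tensor sizes are hypothetical.

```python
def peak_gpu_memory(tensor_sizes, swap=False, keep_resident=2):
    """Peak GPU memory for a chain of layer outputs (toy model).

    tensor_sizes:  size of each layer's output, in forward order (any unit).
    swap:          if False, every tensor stays on the GPU until backprop.
    keep_resident: with swapping, how many consecutive tensors are assumed
                   to be on the GPU at the same time; the rest are assumed
                   to live in system memory.
    """
    if not swap:
        # Every layer output is held for the backward pass.
        return sum(tensor_sizes)
    window = min(keep_resident, len(tensor_sizes))
    # The peak is the largest sum over any window of consecutive tensors.
    return max(
        sum(tensor_sizes[i:i + window])
        for i in range(len(tensor_sizes) - window + 1)
    )


# Hypothetical layer output sizes in GiB.
sizes_gib = [4, 4, 2, 2]
without_swapping = peak_gpu_memory(sizes_gib)          # 12
with_swapping = peak_gpu_memory(sizes_gib, swap=True)  # 8
```

Under these assumptions the peak is set by the largest neighbouring tensors rather than by the depth of the network, which is why swapping leaves room for deeper models or larger inputs.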

TENSORFLOW LARGE MODEL SUPPORT GRAPH MODIFICATIONS
Figure labels: GPU; CPU; operations a and b; Swap out; Swap in

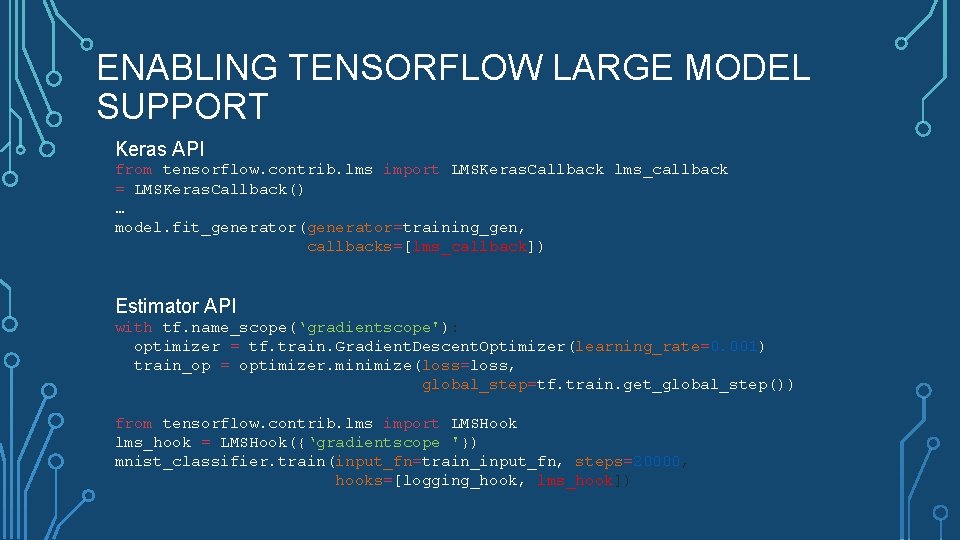
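The graph modification pictured here, where a swap-out and a swap-in are placed between operations a and b, can be sketched on a toy graph. This is an illustration only, not the TensorFlow Large Model Support code: the graph is a plain dictionary mapping each node to its inputs, and `insert_swap` and the `swap_out_`/`swap_in_` node names are hypothetical.

```python
def insert_swap(graph, producer, consumer):
    """Return a copy of `graph` with swap nodes on the producer -> consumer edge.

    graph maps each node name to the list of node names it reads from.
    """
    if producer not in graph.get(consumer, []):
        raise ValueError(f"{consumer!r} does not consume {producer!r}")
    swap_out = f"swap_out_{producer}"
    swap_in = f"swap_in_{producer}"
    new_graph = {node: list(inputs) for node, inputs in graph.items()}
    # Swap out: copy the producer's output from GPU to system memory.
    new_graph[swap_out] = [producer]
    # Swap in: copy it back to the GPU before the consumer needs it.
    new_graph[swap_in] = [swap_out]
    # The consumer now reads the swapped-in copy instead of the original.
    new_graph[consumer] = [
        swap_in if name == producer else name for name in new_graph[consumer]
    ]
    return new_graph


graph = {"a": [], "b": ["a"]}
rewritten = insert_swap(graph, "a", "b")
# rewritten == {"a": [], "b": ["swap_in_a"],
#               "swap_out_a": ["a"], "swap_in_a": ["swap_out_a"]}
```

The original graph is left untouched, so the rewritten version can be compared against it or discarded if the extra copies are not wanted.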

ENABLING TENSORFLOW LARGE MODEL SUPPORT

Keras API

    from tensorflow.contrib.lms import LMSKerasCallback
    lms_callback = LMSKerasCallback()
    …
    model.fit_generator(generator=training_gen, callbacks=[lms_callback])

Estimator API

    with tf.name_scope('gradientscope'):
        optimizer = tf.train.GradientDescentOptimizer(learning_rate=0.001)
        train_op = optimizer.minimize(loss=loss, global_step=tf.train.get_global_step())

    from tensorflow.contrib.lms import LMSHook
    lms_hook = LMSHook({'gradientscope'})
    mnist_classifier.train(input_fn=train_input_fn, steps=20000, hooks=[logging_hook, lms_hook])

WHAT’S POSSIBLE WITH LARGE MODEL SUPPORT?
• GoogleNet: 2.5x increase in the max image resolution
• Resnet50: 5x increase in batch size
• Resnet152: 4.6x increase in batch size
• 3D-Unet brain MRIs: 2.4x increase in the max image resolution

3D-UNET IMAGE SEGMENTATION
• 3D-Unet generally has high memory usage requirements
• International Multimodal Brain Tumor Segmentation Challenge (BraTS)
• Existing Keras model with TensorFlow backend

EFFECT OF 2X RESOLUTION ON DICE COEFFICIENTS (HIGHER IS BETTER)
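For readers unfamiliar with the metric, the Dice coefficient of a predicted mask P and a ground-truth mask T is 2|P ∩ T| / (|P| + |T|), ranging from 0 (no overlap) to 1 (identical masks). The sketch below computes the standard definition on flat binary masks. It is not taken from the slides' Keras model, which may use a smoothed or differentiable variant, and treating two empty masks as a perfect match is an assumed convention.

```python
def dice_coefficient(predicted, truth):
    """Dice coefficient of two equal-length binary masks (sequences of 0/1)."""
    if len(predicted) != len(truth):
        raise ValueError("masks must have the same length")
    # Voxels marked positive in both masks.
    intersection = sum(1 for p, t in zip(predicted, truth) if p and t)
    # Total positives in each mask, counted separately.
    total = sum(1 for p in predicted if p) + sum(1 for t in truth if t)
    if total == 0:
        # Both masks are empty; assumed here to count as perfect agreement.
        return 1.0
    return 2.0 * intersection / total


# Hypothetical four-voxel masks: one voxel in common, two positives each.
score = dice_coefficient([1, 1, 0, 0], [1, 0, 1, 0])  # 0.5
```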

“SWAPPING MAKES EVERYTHING SLOW”

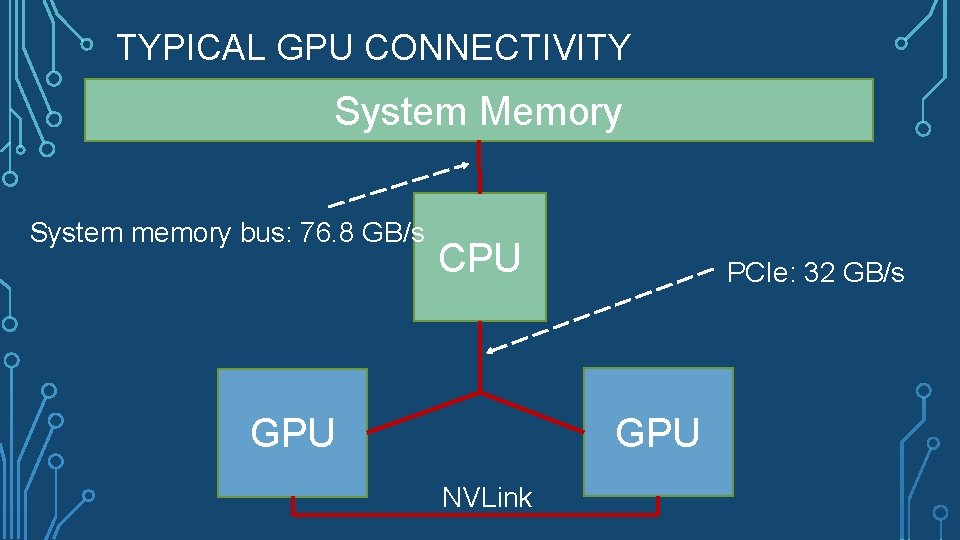

TYPICAL GPU CONNECTIVITY
Figure labels: System memory; system memory bus: 76.8 GB/s; CPU; PCIe: 32 GB/s; GPU; NVLink

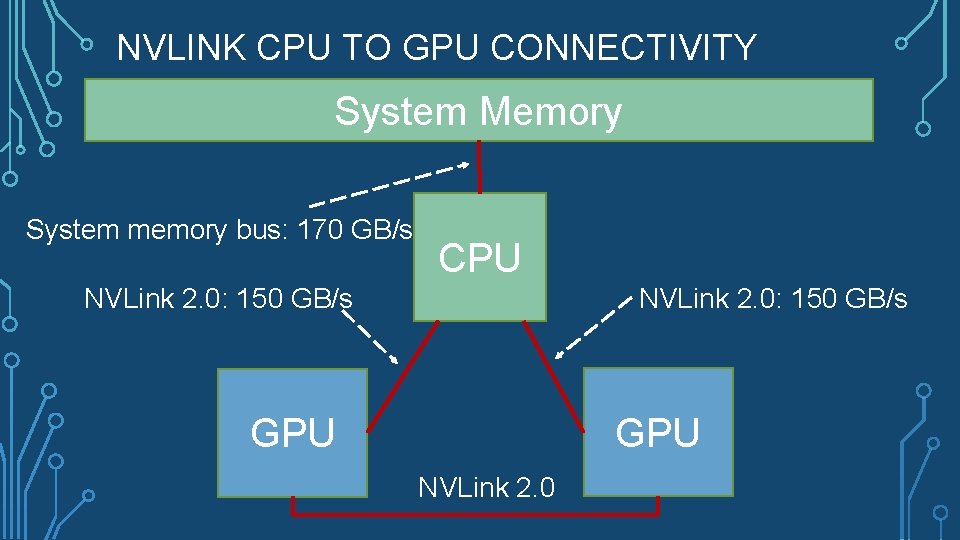

NVLINK CPU TO GPU CONNECTIVITY
Figure labels: System memory; system memory bus: 170 GB/s; CPU; NVLink 2.0: 150 GB/s; GPU; NVLink 2.0

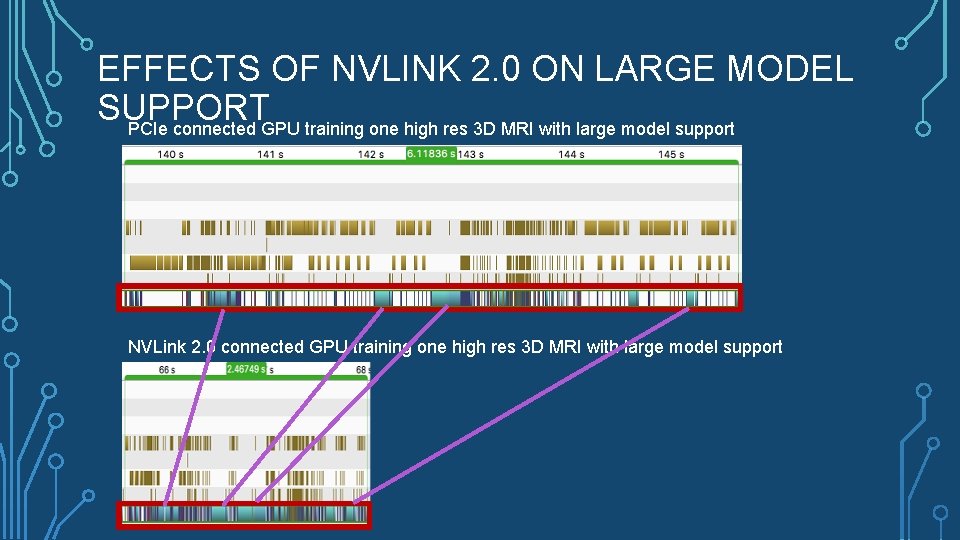
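A back-of-the-envelope calculation shows why the CPU-to-GPU link matters for swapping. The sketch below uses the link figures quoted on the two connectivity slides (32 GB/s for PCIe, 150 GB/s for NVLink 2.0) and assumes they are fully achieved, which real transfers generally are not. The 1 GB tensor size and the function name are hypothetical.

```python
def transfer_seconds(tensor_gb, link_gb_per_s):
    """Idealized time to copy a tensor across a link at its quoted bandwidth."""
    return tensor_gb / link_gb_per_s


PCIE_GB_PER_S = 32.0      # CPU-GPU link on the typical connectivity slide
NVLINK2_GB_PER_S = 150.0  # CPU-GPU link on the NVLink 2.0 slide

tensor_gb = 1.0  # hypothetical tensor size
pcie_s = transfer_seconds(tensor_gb, PCIE_GB_PER_S)       # 0.03125 s
nvlink_s = transfer_seconds(tensor_gb, NVLINK2_GB_PER_S)  # about 0.0067 s
speedup = pcie_s / nvlink_s                               # about 4.7
```

Each swap-out and swap-in pays this cost, so the ratio gives a rough upper bound on how much less time swapping can take on the faster link.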

EFFECTS OF NVLINK 2.0 ON LARGE MODEL SUPPORT
• PCIe connected GPU training one high res 3D MRI with large model support
• NVLink 2.0 connected GPU training one high res 3D MRI with large model support

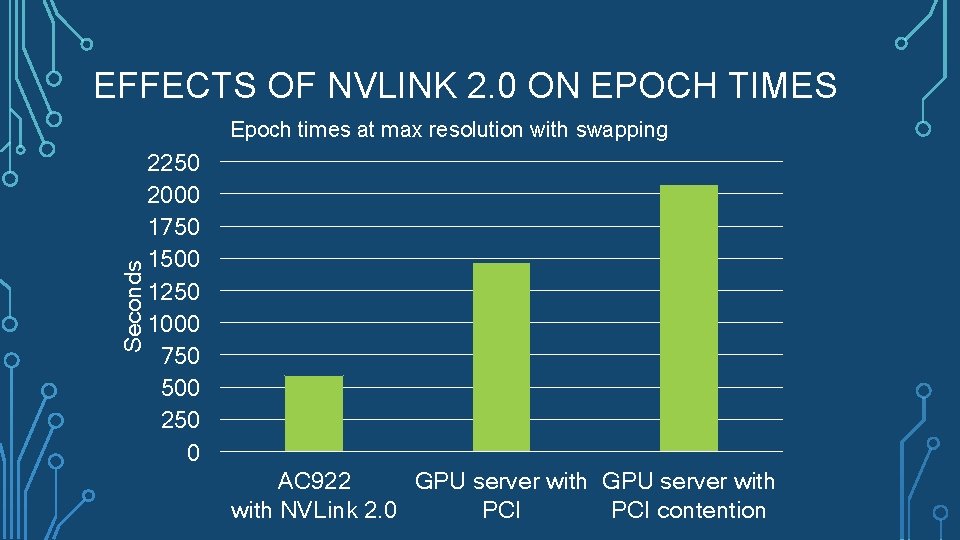

EFFECTS OF NVLINK 2.0 ON EPOCH TIMES
Chart: Epoch times at max resolution with swapping. Axis: seconds (0 to 2250). Bars: AC922 with NVLink 2.0; GPU server, PCI contention.

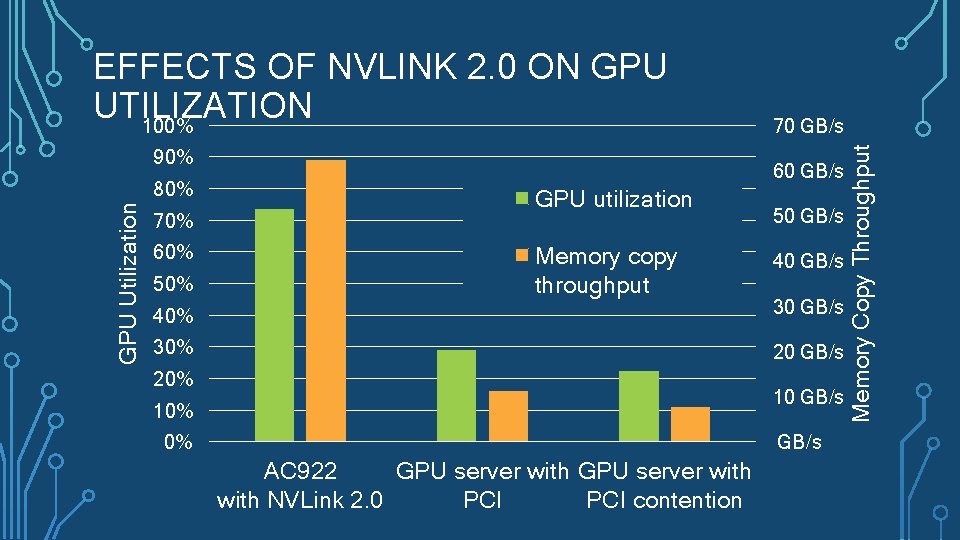

EFFECTS OF NVLINK 2.0 ON GPU UTILIZATION
Chart: GPU utilization (0% to 100%) and memory copy throughput (0 to 70 GB/s). Bars: AC922 with NVLink 2.0; GPU server, PCI contention.

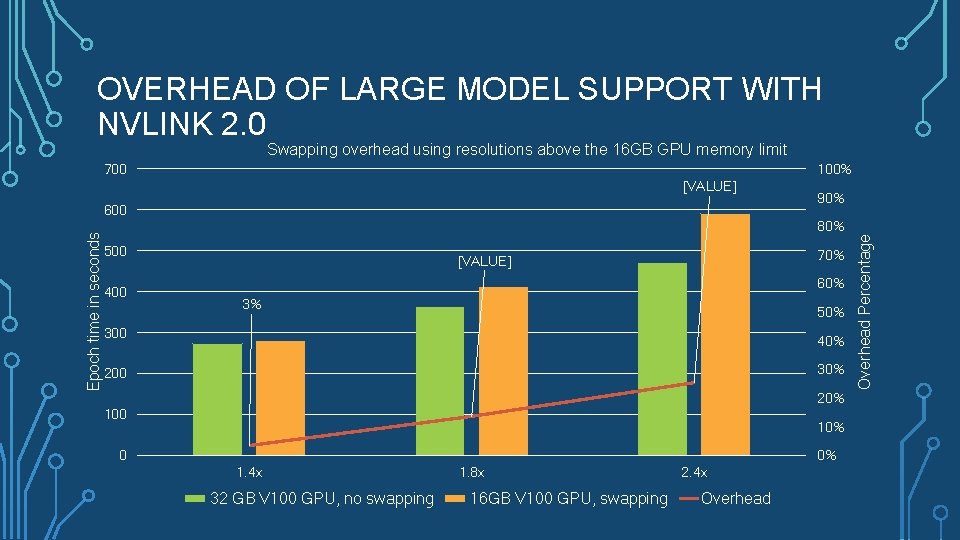

OVERHEAD OF LARGE MODEL SUPPORT WITH NVLINK 2.0
Chart: Swapping overhead using resolutions above the 16 GB GPU memory limit. Axes: epoch time in seconds (0 to 700) and overhead percentage (0% to 100%). Series: 32 GB V100 GPU, no swapping; 16 GB V100 GPU, swapping. Resolutions: 1.4x, 1.8x, 2.4x. Recoverable data label: 3%.

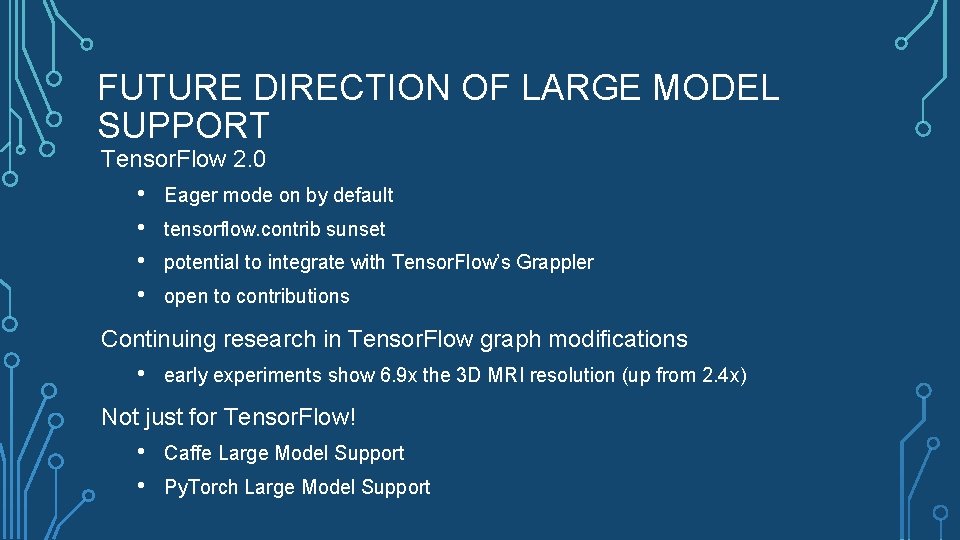
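The overhead percentage on this chart is presumably the extra epoch time on the 16 GB GPU with swapping, relative to the 32 GB GPU without swapping. That reading is an assumption, since the slide does not give the formula. The sketch below computes it that way, and the epoch times in the example are made up for illustration, not taken from the chart.

```python
def swapping_overhead_percent(epoch_s_swapping, epoch_s_no_swapping):
    """Extra epoch time caused by swapping, as a percentage of the baseline."""
    extra = epoch_s_swapping - epoch_s_no_swapping
    return 100.0 * extra / epoch_s_no_swapping


# Made-up epoch times in seconds, not values from the slide.
overhead = swapping_overhead_percent(110.0, 100.0)  # 10.0
```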

FUTURE DIRECTION OF LARGE MODEL SUPPORT
TensorFlow 2.0
• Eager mode on by default
• tensorflow.contrib sunset
• potential to integrate with TensorFlow’s Grappler
• open to contributions
Continuing research in TensorFlow graph modifications
• early experiments show 6.9x the 3D MRI resolution (up from 2.4x)
Not just for TensorFlow!
• Caffe Large Model Support
• PyTorch Large Model Support

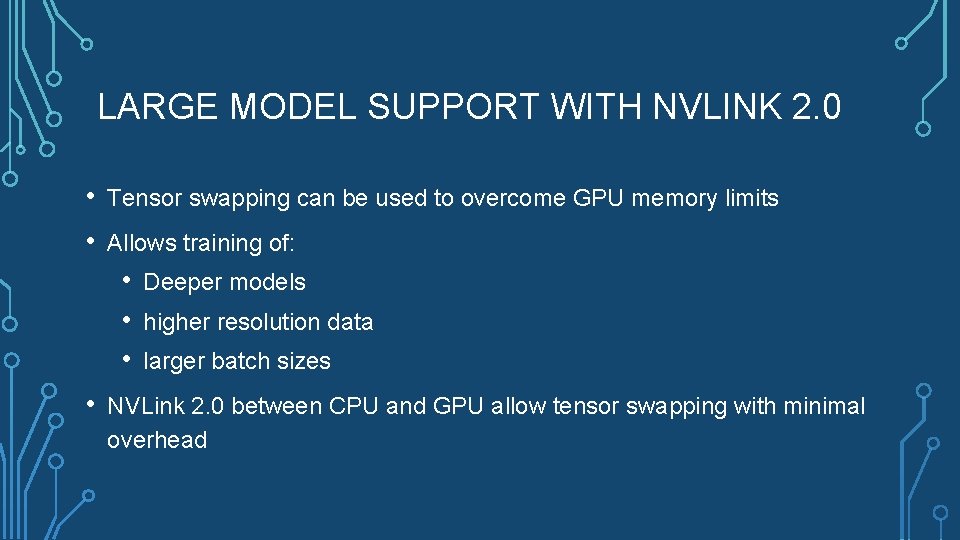

LARGE MODEL SUPPORT WITH NVLINK 2.0
• Tensor swapping can be used to overcome GPU memory limits
• Allows training of:
  • deeper models
  • higher resolution data
  • larger batch sizes
• NVLink 2.0 between CPU and GPU allows tensor swapping with minimal overhead

MORE INFORMATION
• TensorFlow Large Model Support Code / Pull Request: https://github.com/tensorflow/pull/19845/
• TensorFlow Large Model Support Research Paper: https://arxiv.org/pdf/1807.02037.pdf
• TensorFlow Large Model Support Case Study: https://developer.ibm.com/linuxonpower/2018/07/27/tensorflow-large-model-support-case-study-3d-image-segmentation/
• IBM AC922 with NVLink 2.0 connections between CPU and GPU: https://www.ibm.com/us-en/marketplace/power-systems-ac922
