The world’s best chatbot Marcus Liwicki EISLAB Machine Learning (chair) Luleå University of Technology EISLAB: Embedded Intelligent Systems LAB Marcus Liwicki: Machine Learning Meetup @LTU 1

What is the Biggest Breakthrough of AI?

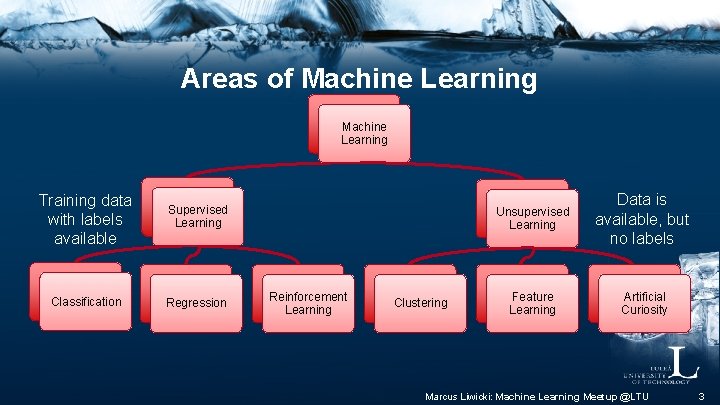

Areas of Machine Learning
§ Supervised Learning: training data with labels available
– Classification
– Regression
§ Reinforcement Learning
– Artificial Curiosity
§ Unsupervised Learning: data is available, but no labels
– Clustering
– Feature Learning

Machine Learning @ LTU
§ Fundamental Research
§ Document Analysis
§ eHealth
§ Space
§ Speech
And of course: natural language processing
http://bit.ly/liwicki-vdl-17 (all my lecture material)

Overview of Today
§ Background: small overview of ML
§ Tools, and links
– just for your reference (slides available)
§ Creating the world's best chatbot
– Natural language processing
– Semantic hashing
§ Conclusion

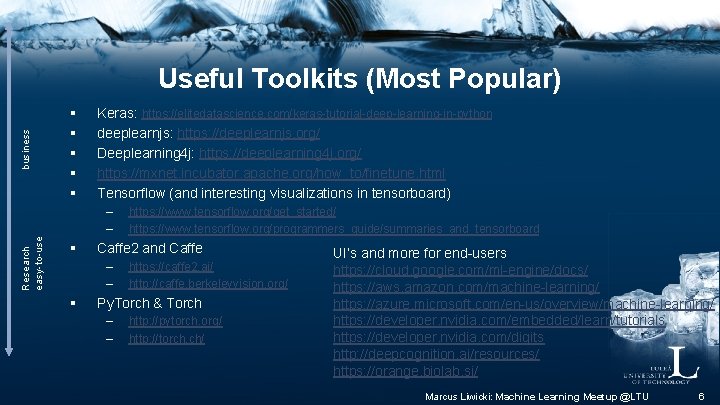

Useful Toolkits (Most Popular)
(Figure labels: Research, business, easy-to-use)
§ Keras: https://elitedatascience.com/keras-tutorial-deep-learning-in-python
§ deeplearnjs: https://deeplearnjs.org/
§ Deeplearning4j: https://deeplearning4j.org/
§ https://mxnet.incubator.apache.org/how_to/finetune.html
§ TensorFlow (and interesting visualizations in TensorBoard)
– https://www.tensorflow.org/get_started/
– https://www.tensorflow.org/programmers_guide/summaries_and_tensorboard
§ Caffe2 and Caffe
– https://caffe2.ai/
– http://caffe.berkeleyvision.org/
§ PyTorch & Torch
– http://pytorch.org/
– http://torch.ch/
§ UIs and more for end-users
– https://cloud.google.com/ml-engine/docs/
– https://aws.amazon.com/machine-learning/
– https://azure.microsoft.com/en-us/overview/machine-learning/
– https://developer.nvidia.com/embedded/learn/tutorials
– https://developer.nvidia.com/digits
– http://deepcognition.ai/resources/
– https://orange.biolab.si/

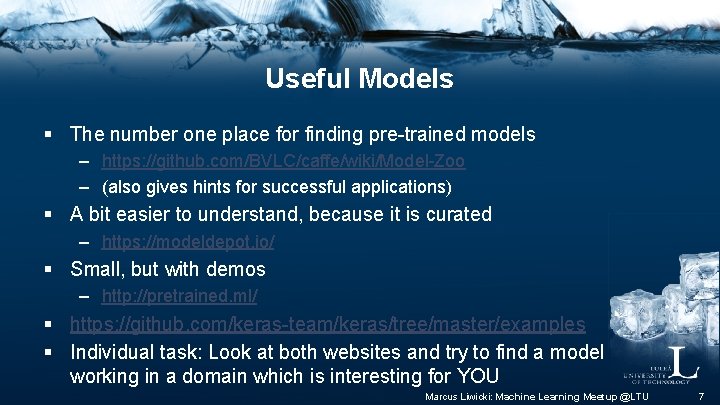

Useful Models
§ The number one place for finding pre-trained models
– https://github.com/BVLC/caffe/wiki/Model-Zoo
– (also gives hints for successful applications)
§ A bit easier to understand, because it is curated
– https://modeldepot.io/
§ Small, but with demos
– http://pretrained.ml/
§ https://github.com/keras-team/keras/tree/master/examples
§ Individual task: Look at both websites and try to find a model working in a domain which is interesting for YOU

Other Useful Links
§ https://teachablemachine.withgoogle.com/
§ http://playground.tensorflow.org
§ https://experiments.withgoogle.com/ai
§ https://transcranial.github.io/keras-js/#/imdb-bidirectional-lstm
§ https://transcranial.github.io/keras-js/#/mnist-acgan
§ https://quickdraw.withgoogle.com/

We can Learn From Failures & Success
§ Deep Learning and AI are not the answer to everything
– https://www.techrepublic.com/article/top-10-ai-failures-of-2016/
§ An extension of reinforcement learning is Artificial Curiosity
– Could (and definitely would) go terribly wrong
§ https://blog.statsbot.co/deep-learning-achievements-4c563e034257

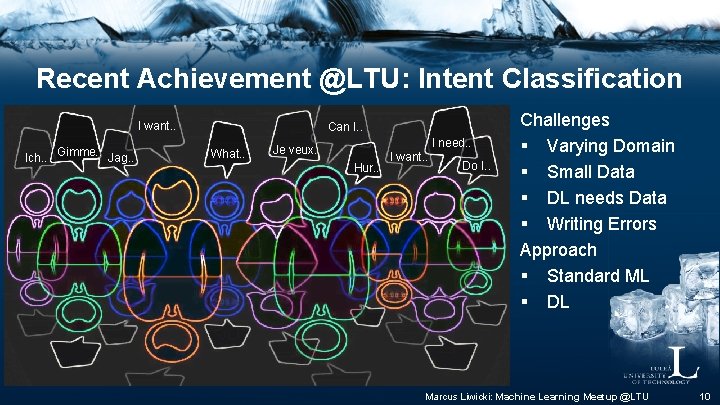

Recent Achievement @LTU: Intent Classification
Example inputs: I want.., Ich.., Gimme.., Jag.., Can I.., What.., I need.., Je veux.., Hur.., I want.., Do I..
Challenges
§ Varying Domain
§ Small Data
§ DL needs Data
§ Writing Errors
Approach
§ Standard ML
§ DL

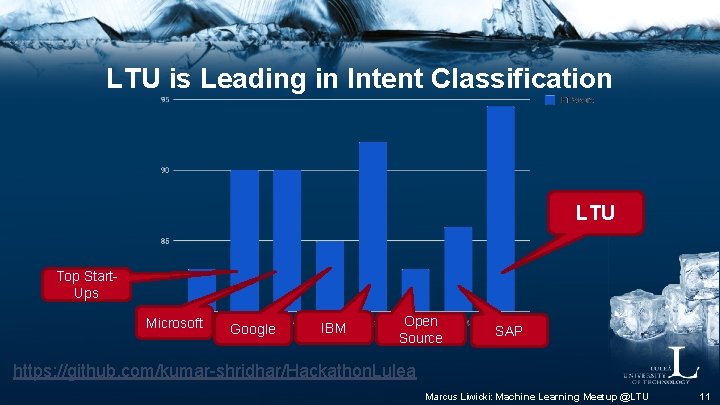

LTU is Leading in Intent Classification
(Figure labels: LTU, Top Startups, Microsoft, Google, IBM, Open Source, SAP)
https://github.com/kumar-shridhar/HackathonLulea

Natural Language Processing

Natural Language Processing
§ Languages often seem to behave in arbitrary ways and forms
– cabz, cats
§ Ambiguity, sarcasm and irony are often not apparent from purely textual information
§ Domain-specific terms and phrases that may not even be grammatically correct
– to short a stock
– no woman no cry

Differences in Principles
§ Word order
– English: John ate apples
– Japanese: Jon wa ringo o tabeta
§ Null Subject
– English: It is raining
– Spanish: Está lloviendo
§ Ambiguity
– John and Henry’s parents arrived at the house. -> how many people?
§ Recursion
– He said that _ she knew that _ they are there _ where we have been _ when

Discussion
§ What is better?
– Rule-based NLP (Grammar, like software constructs)
– Deep Learning based NLP (much text available)

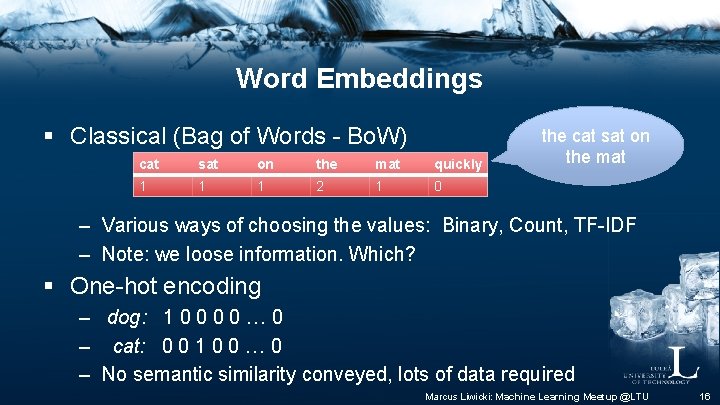

Word Embeddings
§ Classical (Bag of Words, BoW), e.g. for the sentence "the cat sat on the mat":

| cat | sat | on | the | mat | quickly |
|-----|-----|----|-----|-----|---------|
| 1   | 1   | 1  | 2   | 1   | 0       |

– Various ways of choosing the values: Binary, Count, TF-IDF
– Note: we lose information. Which?
§ One-hot encoding
– dog: 1 0 0 … 0
– cat: 0 0 1 0 0 … 0
– No semantic similarity conveyed, lots of data required
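
The Count and Binary variants of the bag-of-words vector above can be sketched in a few lines of plain Python. This is only an illustration: the helper name `bag_of_words` and the whitespace tokenisation are assumptions, not part of the slides. The last line hints at one kind of information that gets lost, namely word order.

```python
from collections import Counter

def bag_of_words(sentence, vocabulary):
    """Return one count per vocabulary word (the 'Count' variant of BoW)."""
    # Hypothetical helper: lowercases and splits on whitespace only.
    counts = Counter(sentence.lower().split())
    return [counts[word] for word in vocabulary]

vocabulary = ["cat", "sat", "on", "the", "mat", "quickly"]

counts = bag_of_words("the cat sat on the mat", vocabulary)
binary = [1 if c > 0 else 0 for c in counts]  # the 'Binary' variant

print(counts)  # [1, 1, 1, 2, 1, 0]
print(binary)  # [1, 1, 1, 1, 1, 0]

# A reordered sentence produces exactly the same vector.
reordered = bag_of_words("the mat sat on the cat", vocabulary)
print(reordered == counts)  # True
```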

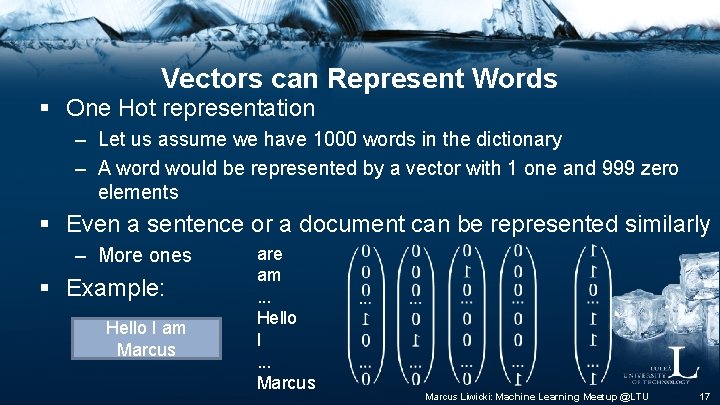

Vectors can Represent Words
§ One Hot representation
– Let us assume we have 1000 words in the dictionary
– A word would be represented by a vector with 1 one and 999 zero elements
§ Even a sentence or a document can be represented similarly
– More ones
§ Example: Hello I am Marcus
(Figure: vector positions labelled are, am, ..., Hello, I, ...)
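
As a rough sketch of the representation described above, the following builds a one-hot vector for a word and a "more ones" vector for a sentence. The 1000-word dictionary is filled with made-up placeholder entries (`word0`, `word1`, ...) and the function names are hypothetical.

```python
def one_hot(word, word_to_index):
    """Vector with a single 1 at the word's dictionary position."""
    vector = [0] * len(word_to_index)
    vector[word_to_index[word]] = 1
    return vector

def multi_hot(text, word_to_index):
    """Vector with a 1 for every dictionary word that occurs in the text."""
    vector = [0] * len(word_to_index)
    for word in text.split():
        vector[word_to_index[word]] = 1
    return vector

# Hypothetical dictionary: four real words plus 996 placeholder entries.
vocabulary = ["Hello", "I", "am", "Marcus"] + [f"word{i}" for i in range(996)]
word_to_index = {word: i for i, word in enumerate(vocabulary)}

hello = one_hot("Hello", word_to_index)
sentence = multi_hot("Hello I am Marcus", word_to_index)

print(len(hello), sum(hello))        # 1000 1
print(len(sentence), sum(sentence))  # 1000 4
```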

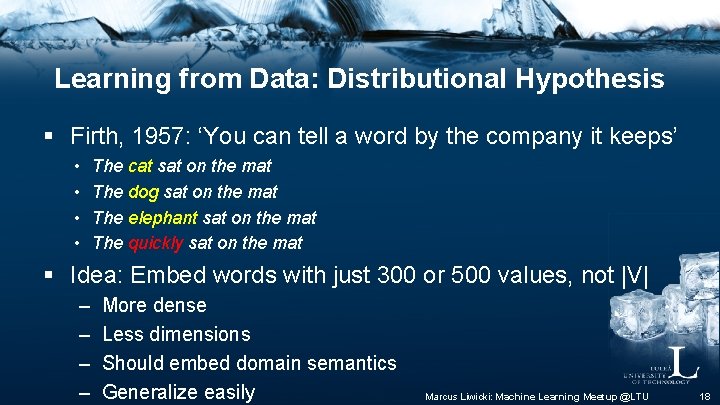

Learning from Data: Distributional Hypothesis
§ Firth, 1957: ‘You shall know a word by the company it keeps’
• The cat sat on the mat
• The dog sat on the mat
• The elephant sat on the mat
• The quickly sat on the mat
§ Idea: Embed words with just 300 or 500 values, not |V|
– More dense
– Fewer dimensions
– Should embed domain semantics
– Generalize easily
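
To make the contrast between |V|-sized one-hot vectors and dense embeddings concrete, here is a small sketch using cosine similarity. The three-dimensional dense vectors are invented for illustration (real embeddings would have 300 or 500 learned values). The point is only that one-hot vectors of different words are always orthogonal, while dense vectors can place similar words close together.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# One-hot vectors: every pair of distinct words has similarity 0.
one_hot = {
    "cat":     [1, 0, 0],
    "dog":     [0, 1, 0],
    "quickly": [0, 0, 1],
}

# Made-up dense vectors (assumption): animals share two large components.
dense = {
    "cat":     [0.9, 0.8, 0.1],
    "dog":     [0.8, 0.9, 0.2],
    "quickly": [0.1, 0.0, 0.9],
}

print(cosine(one_hot["cat"], one_hot["dog"]))  # 0.0
print(cosine(dense["cat"], dense["dog"]) > cosine(dense["cat"], dense["quickly"]))  # True
```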

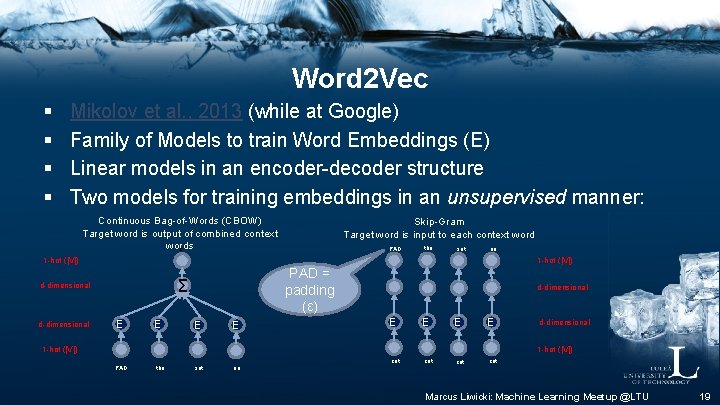

Word2Vec
§ Mikolov et al., 2013 (while at Google)
§ Family of models to train word embeddings (E)
§ Linear models in an encoder-decoder structure
§ Two models for training embeddings in an unsupervised manner:
– Continuous Bag-of-Words (CBOW): the target word is the output predicted from the combined context words
– Skip-Gram: the target word is the input used to predict each context word
(Figure, CBOW: the context words "PAD the sat on" enter as 1-hot (|V|) vectors, are mapped by E to d-dimensional vectors and summed (Σ) to predict the target "cat". Skip-Gram: the target "cat" is mapped by E to a d-dimensional vector that predicts each 1-hot (|V|) context word "PAD the sat on". PAD = padding (ε).)
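
The difference between the two training setups is easiest to see in the training examples they produce. The sketch below only builds those examples and trains no embedding. The window size of 2 and the function names are assumptions, chosen so that the output matches the "PAD the sat on" / "cat" example on the slide.

```python
PAD = "PAD"

def context_windows(tokens, window=2):
    """Yield (context, target) with `window` words per side, padded at the edges."""
    padded = [PAD] * window + tokens + [PAD] * window
    for i, target in enumerate(tokens):
        center = i + window
        left = padded[center - window:center]
        right = padded[center + 1:center + window + 1]
        yield left + right, target

def cbow_examples(tokens, window=2):
    """CBOW: all context words together are the input, the target is the output."""
    return list(context_windows(tokens, window))

def skipgram_examples(tokens, window=2):
    """Skip-Gram: the target is the input, each context word is one output."""
    return [(target, c)
            for context, target in context_windows(tokens, window)
            for c in context]

tokens = "the cat sat on the mat".split()

print(cbow_examples(tokens)[1])
# (['PAD', 'the', 'sat', 'on'], 'cat')
print(skipgram_examples(tokens)[4:8])
# [('cat', 'PAD'), ('cat', 'the'), ('cat', 'sat'), ('cat', 'on')]
```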

And These can be Really Useful and Fun
§ Task: test the word2vec online demo
– https://rare-technologies.com/word2vec-tutorial/#app
§ Try out your own word combinations
§ Are there cases where it is particularly good/bad?

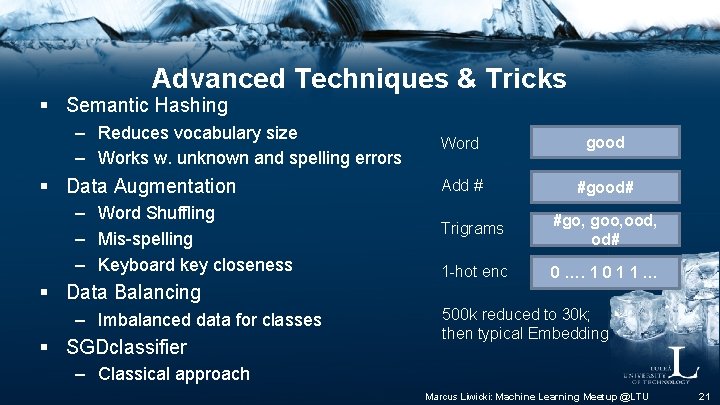

Advanced Techniques & Tricks
§ Semantic Hashing
– Reduces vocabulary size
– Works with unknown words and spelling errors
§ Data Augmentation
– Word Shuffling
– Mis-spelling
– Keyboard key closeness
§ Data Balancing
– Imbalanced data for classes
§ SGDClassifier

Semantic hashing example:

| Step      | Result             |
|-----------|--------------------|
| Word      | good               |
| Add #     | #good#             |
| Trigrams  | #go, goo, ood, od# |
| 1-hot enc | 0 … 1 0 1 1 …      |

500k reduced to 30k; then typical Embedding – Classical approach
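
The trigram step of semantic hashing shown above can be sketched as follows. The function names and the tiny two-word "training vocabulary" are assumptions made for illustration, and the encoding marks each known trigram with a 1. In this toy setup the misspelling "goood" ends up with the same vector as "good", which suggests why the approach tolerates spelling errors.

```python
def char_trigrams(word):
    """Wrap the word in '#' markers and return its character trigrams."""
    marked = f"#{word}#"
    return [marked[i:i + 3] for i in range(len(marked) - 2)]

def trigram_vector(word, trigram_index):
    """Binary vector over known trigrams; unknown trigrams are ignored."""
    vector = [0] * len(trigram_index)
    for trigram in char_trigrams(word):
        if trigram in trigram_index:
            vector[trigram_index[trigram]] = 1
    return vector

print(char_trigrams("good"))  # ['#go', 'goo', 'ood', 'od#']

# Hypothetical training vocabulary; its trigrams define the vector positions.
training_words = ["good", "food"]
all_trigrams = sorted({t for w in training_words for t in char_trigrams(w)})
trigram_index = {t: i for i, t in enumerate(all_trigrams)}

good = trigram_vector("good", trigram_index)
misspelled = trigram_vector("goood", trigram_index)  # never seen in training

print(good == misspelled)  # True
```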

Marvin 2025 is happy!
§ Most of our work is Open Source
§ Including data and documentation
– https://diva-dia.github.io/DeepDIVAweb/
– https://diuf.unifr.ch/main/hisdoc/divaservices
§ As interactive iPython Notebook with tutorial-like explanation
– https://github.com/kumar-shridhar/HackathonLulea

Engaging Education with Music
§ iMuSciCA www.imuscica.eu
– Ongoing EU project
– Try it out with Chrome: https://workbench.imuscica.eu/
§ Team Teaching with STEAM
– Science, Technology, Engineering & Mathematics combined with Arts
§ Workshop & Concert in Luleå (2019-03-02)
– http://www.kulturenshus.com/evenemang/imuscica/
– http://www.ltu.se/eu-steam-2019

Conclusion
§ Deep Learning is really good (SotA) in many tasks
– Speech, image, handwriting, video recognition
– Intent recognition, sentiment analysis
– Stock market prediction, big data forecast
§ However, it does not solve everything
– More than 1000 classes?
– Often biased to training set
– https://arxiv.org/ftp/arxiv/papers/1801.00631.pdf
– And: https://medium.com/@GaryMarcus/in-defense-of-skepticism-about-deep-learning-6e8bfd5ae0f1

Thank You + Lab Members & Beyond
Marcus, Gustav, Fotini, Pedro, Rajkumar, Priamvada, György, Oluwatosin
And colleagues
- LTU
- Kaiserslautern
- Fribourg
- International
