
Safe and Robust Deep Learning

Gagandeep Singh
Ph.D. Student, Department of Computer Science

SafeAI @ ETH Zurich (safeai.ethz.ch)

Joint work with Martin Vechev, Markus Püschel, Timon Gehr, Matthew Mirman, Mislav Balunovic, Maximilian Baader, Petar Tsankov, Dana Drachsler

Publications:
[1] AI2: Safety and Robustness Certification of Neural Networks with Abstract Interpretation, S&P’18
[2] Differentiable Abstract Interpretation for Provably Robust Neural Networks, ICML’18
[3] Fast and Effective Robustness Certification, NeurIPS’18
[4] An Abstract Domain for Certifying Neural Networks, POPL’19
[5] Boosting Robustness Certification of Neural Networks, ICLR’19

Deep Learning Systems

• Self-driving cars (https://waymo.com/tech/)
• Chatbots (https://yekaliva.ai)
• Voice assistants (https://www.amazon.com/Amazon-Echo-And-Alexa-Device)

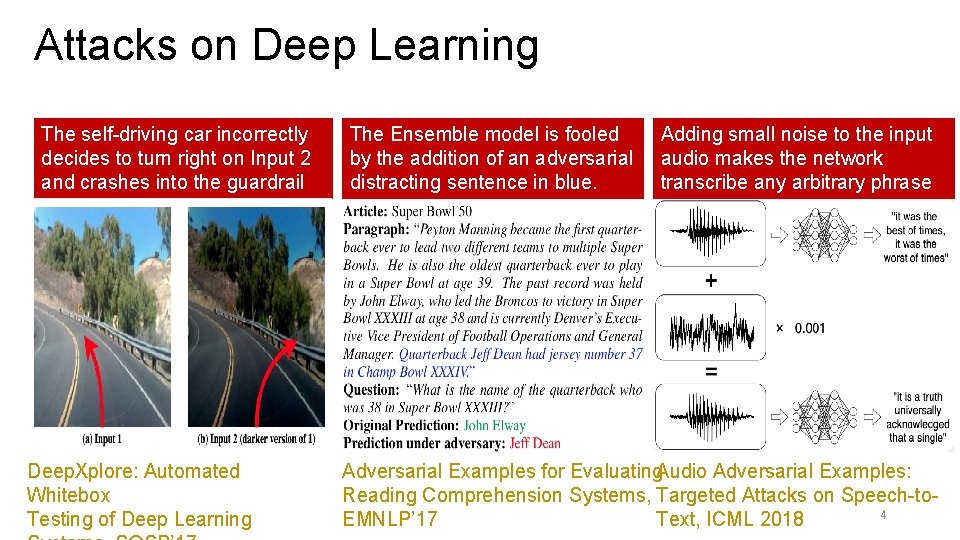

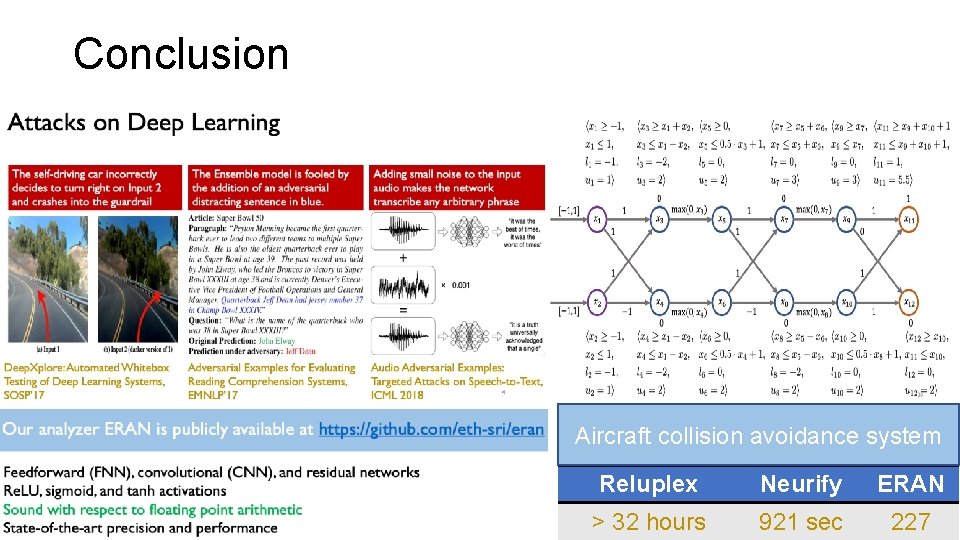

Attacks on Deep Learning

• The self-driving car incorrectly decides to turn right on Input 2 and crashes into the guardrail (DeepXplore: Automated Whitebox Testing of Deep Learning Systems).
• The Ensemble model is fooled by the addition of an adversarial distracting sentence, shown in blue (Adversarial Examples for Evaluating Reading Comprehension Systems, EMNLP’17).
• Adding small noise to the input audio makes the network transcribe any arbitrary phrase (Audio Adversarial Examples: Targeted Attacks on Speech-to-Text, ICML 2018).

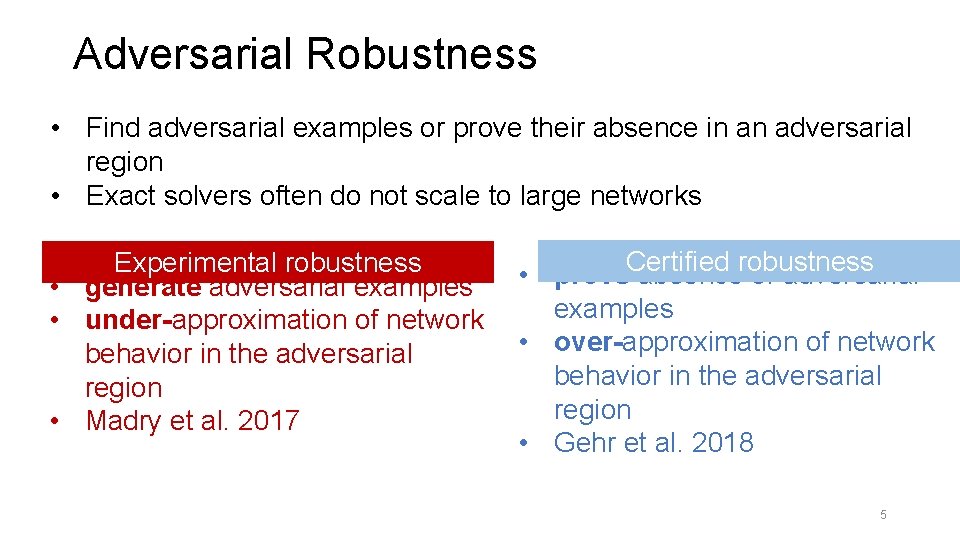

Adversarial Robustness

• Find adversarial examples or prove their absence in an adversarial region
• Exact solvers often do not scale to large networks

Certified robustness:
• Prove the absence of adversarial examples
• Over-approximation of network behavior in the adversarial region
• Gehr et al. 2018

Experimental robustness:
• Generate adversarial examples
• Under-approximation of network behavior
• Madry et al. 2017
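To make the under- vs. over-approximation distinction concrete, here is a minimal Python sketch. It assumes, purely for illustration, an L∞ adversarial region of radius `eps` clipped to [0, 1] and a single hypothetical linear output `w·x + b`; the names `linf_region`, `certified_min`, and `sampled_min` are made up for this example. Random sampling, like an attack, only visits some points of the region, so its minimum can never be lower than the true minimum; the interval bound accounts for every point, so it is a sound lower bound.

```python
import random
from typing import List, Tuple

def linf_region(x: List[float], eps: float) -> Tuple[List[float], List[float]]:
    """Box of all inputs within L-infinity distance eps of x, clipped to [0, 1]."""
    lo = [max(0.0, xi - eps) for xi in x]
    hi = [min(1.0, xi + eps) for xi in x]
    return lo, hi

def score(w: List[float], b: float, x: List[float]) -> float:
    """Hypothetical linear network output w . x + b."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def certified_min(w, b, lo, hi) -> float:
    """Over-approximation side: a sound lower bound over the whole box
    (exact for a linear function: use lo_i if w_i >= 0, else hi_i)."""
    return b + sum(wi * (l if wi >= 0 else h) for wi, l, h in zip(w, lo, hi))

def sampled_min(w, b, lo, hi, n: int = 1000, seed: int = 0) -> float:
    """Under-approximation side: minimum over random points, as an attack would find."""
    rng = random.Random(seed)
    return min(score(w, b, [rng.uniform(l, h) for l, h in zip(lo, hi)])
               for _ in range(n))

lo, hi = linf_region([0.2, 0.9], eps=0.1)
w, b = [1.0, -2.0], 0.5
print(certified_min(w, b, lo, hi))  # no input in the region scores lower than this
print(sampled_min(w, b, lo, hi))    # never below the certified bound
```

If the certified lower bound were positive, the property would hold on the entire region; a sampled value alone could never establish that.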

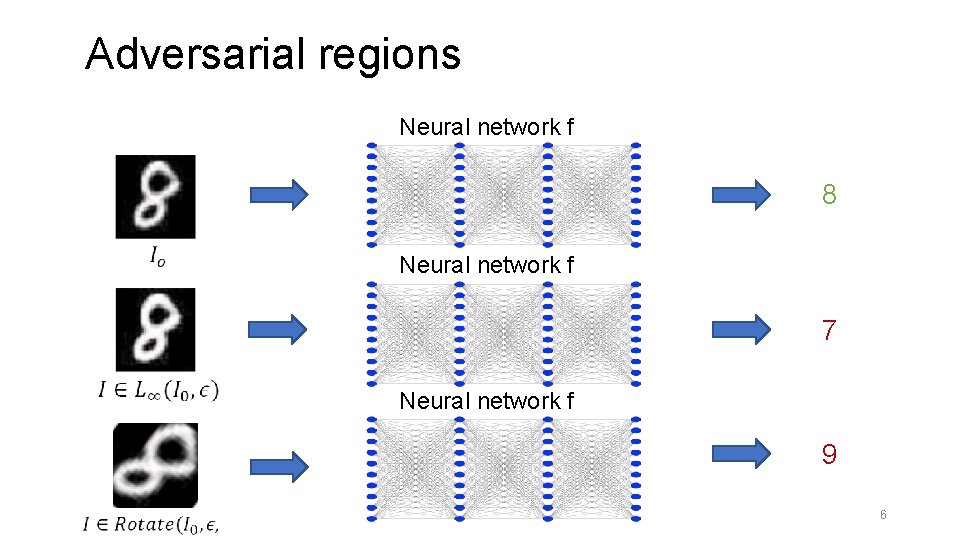

Adversarial regions

(Figure: three panels, each showing neural network f, labeled 8, 7, and 9.)

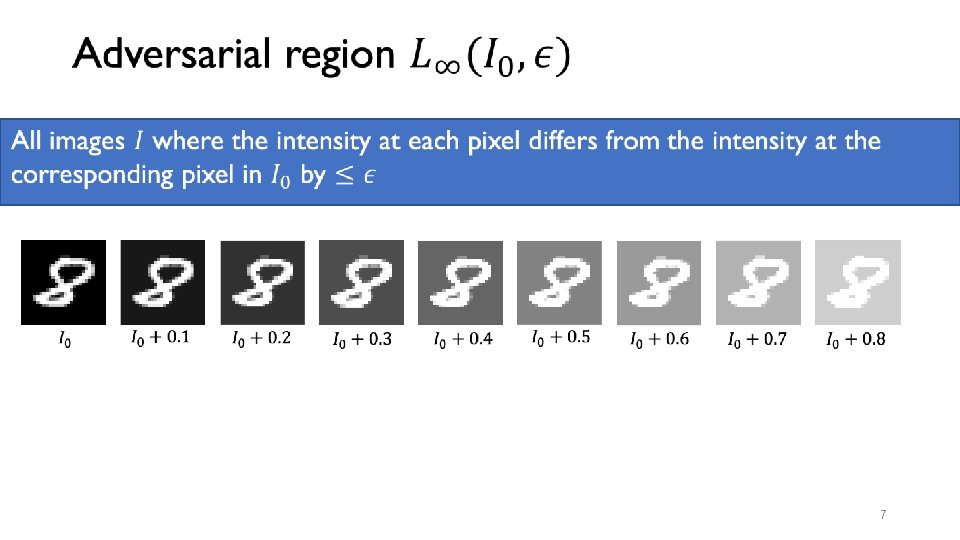


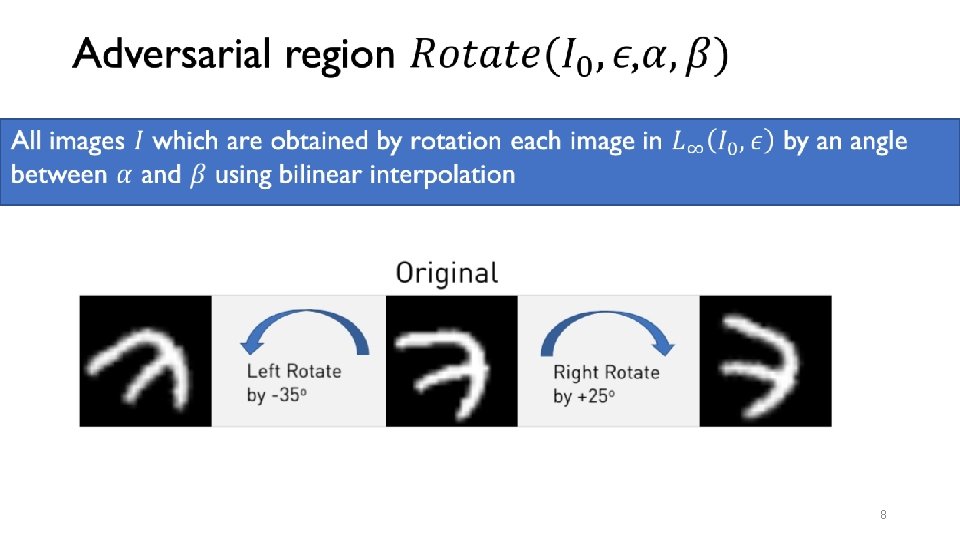


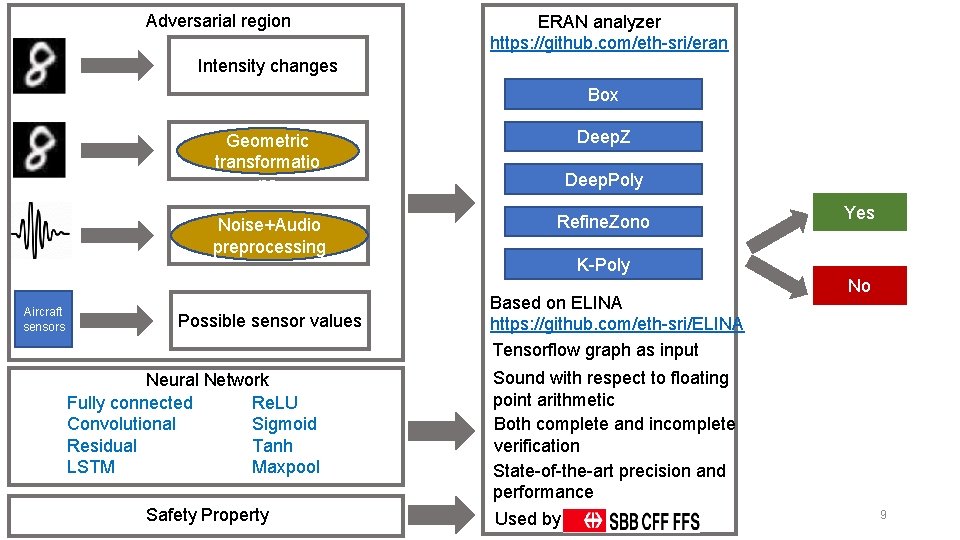

ERAN Analyzer

Adversarial region → ERAN analyzer (https://github.com/eth-sri/eran) → Safety property: Yes / No

• Adversarial regions: intensity changes (Box), geometric transformations, noise + audio preprocessing, aircraft sensors (possible sensor values)
• Neural network layers: ReLU, Sigmoid, Tanh, Maxpool, Fully connected, Convolutional, Residual, LSTM
• Domains: DeepZ, DeepPoly, RefineZono, K-Poly; based on ELINA (https://github.com/eth-sri/ELINA)
• TensorFlow graph as input
• Sound with respect to floating-point arithmetic
• Both complete and incomplete verification
• State-of-the-art precision and performance

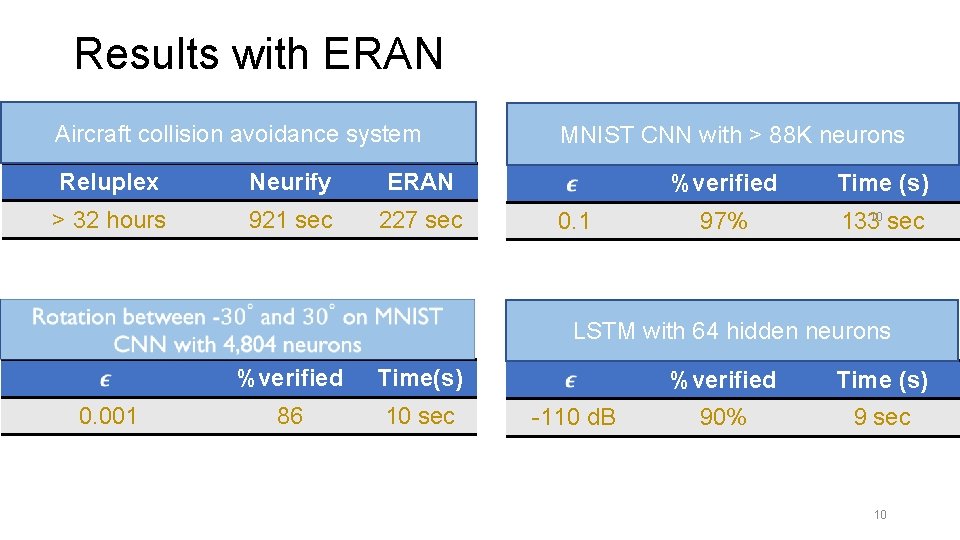

Results with ERAN

| Aircraft collision avoidance system | Reluplex | Neurify | ERAN |
|---|---|---|---|
| Time | > 32 hours | 921 s | 227 s |

| Network | ε | % verified | Time (s) |
|---|---|---|---|
| MNIST CNN with > 88K neurons | 0.1 | 97% | 133 |
| LSTM with 64 hidden neurons | 0.001 | 86% | 10 |
| | -110 dB | 90% | 9 |

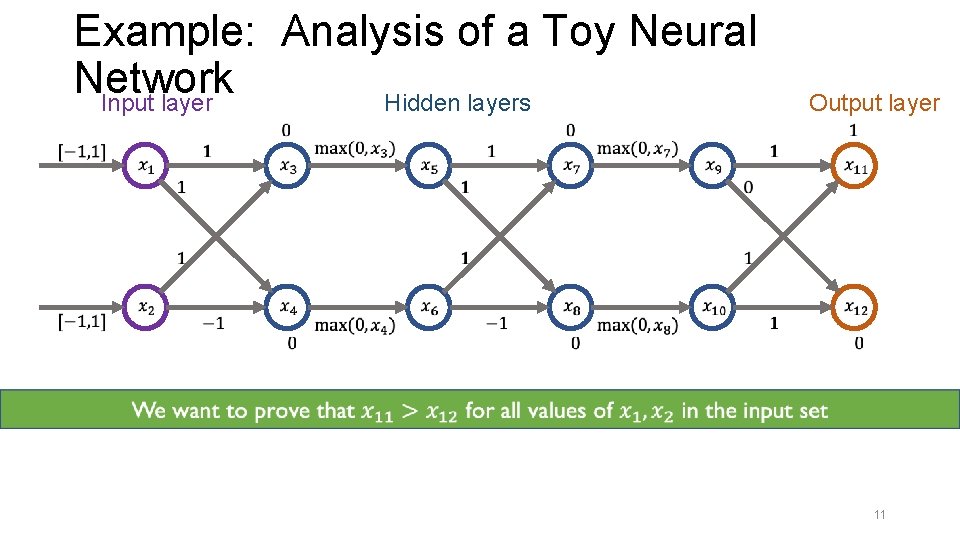

Example: Analysis of a Toy Neural Network

(Figure: input layer, hidden layers, output layer.)

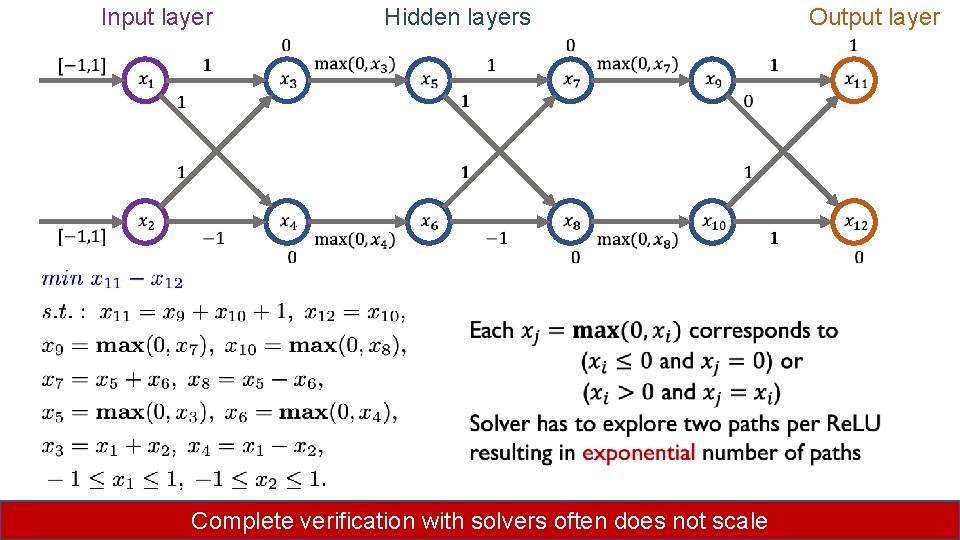

(Figure: input layer, hidden layers, output layer.)

Complete verification with solvers often does not scale.

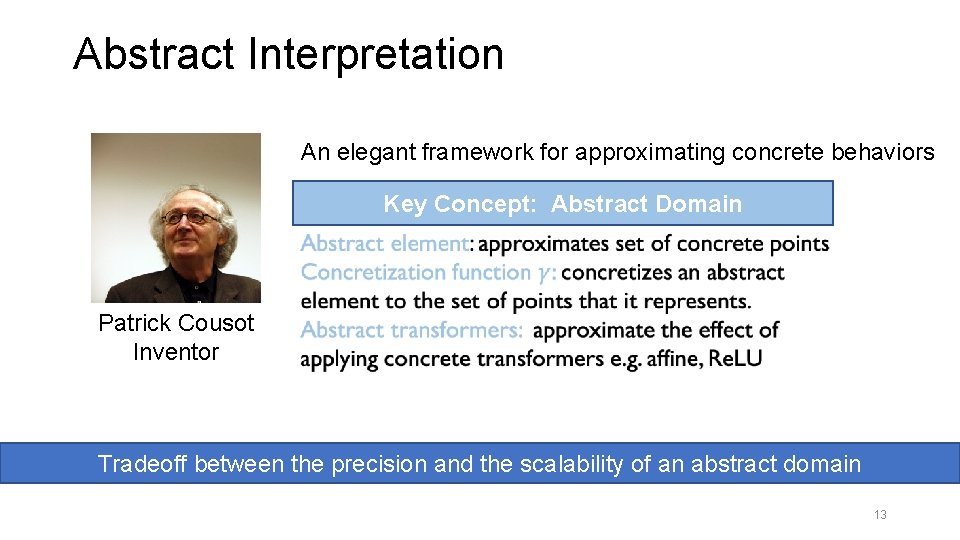

Abstract Interpretation

• An elegant framework for approximating concrete behaviors
• Key concept: the abstract domain
• Inventor: Patrick Cousot
• Trade-off between the precision and the scalability of an abstract domain

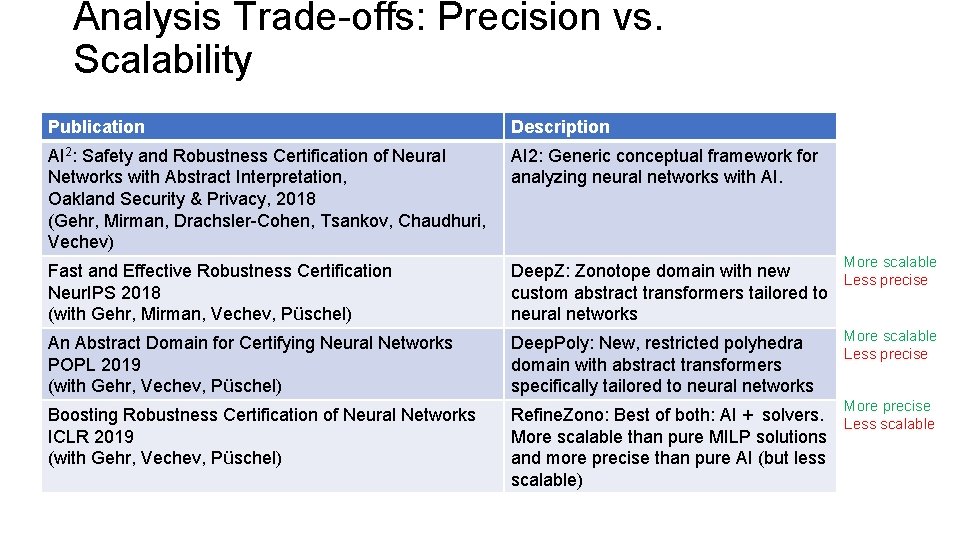

Analysis Trade-offs: Precision vs. Scalability

| Publication | Description |
|---|---|
| AI2: Safety and Robustness Certification of Neural Networks with Abstract Interpretation, IEEE S&P (Oakland) 2018 (Gehr, Mirman, Drachsler-Cohen, Tsankov, Chaudhuri, Vechev) | AI2: generic conceptual framework for analyzing neural networks with abstract interpretation (AI) |
| Fast and Effective Robustness Certification, NeurIPS 2018 (with Gehr, Mirman, Vechev, Püschel) | DeepZ: Zonotope domain with new custom abstract transformers tailored to neural networks |
| An Abstract Domain for Certifying Neural Networks, POPL 2019 (with Gehr, Vechev, Püschel) | DeepPoly: new, restricted polyhedra domain with abstract transformers specifically tailored to neural networks |
| Boosting Robustness Certification of Neural Networks, ICLR 2019 (with Gehr, Vechev, Püschel) | RefineZono: best of both, AI + solvers; more scalable than pure MILP solutions and more precise than pure AI (but less scalable) |

The slide arranges these analyses along an axis from less precise and more scalable to more precise and less scalable.

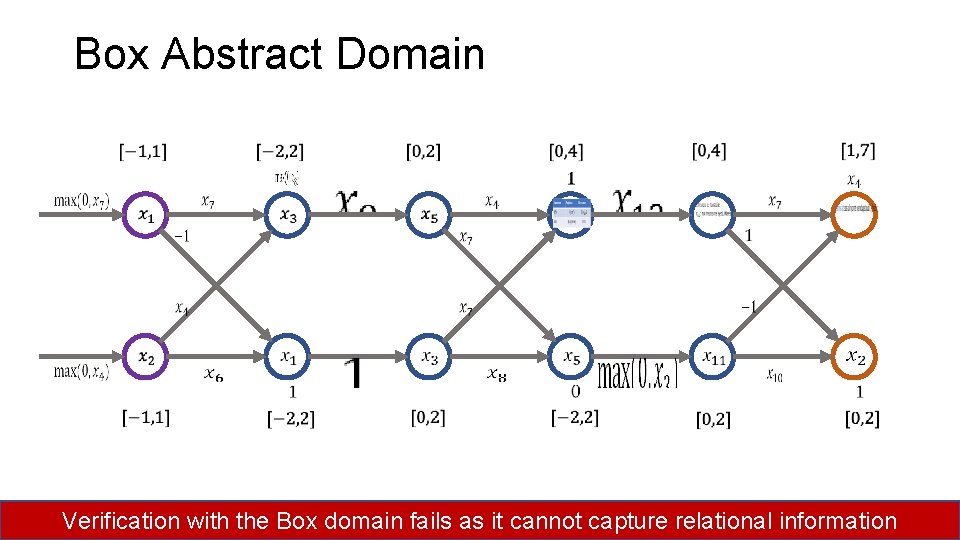

Box Abstract Domain

Verification with the Box domain fails because it cannot capture relational information.
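A minimal sketch of Box (interval) propagation, assuming a hypothetical two-layer network in which both hidden neurons copy the same input `x ∈ [0, 1]` and the output subtracts them. The true output is always 0, but Box tracks every neuron independently and forgets that the two hidden neurons are equal, so it can only report [-1, 1]. The function names `affine` and `relu` are illustrative, not ERAN's API.

```python
from typing import List, Tuple

Box = List[Tuple[float, float]]  # one (lower, upper) interval per neuron

def affine(W: List[List[float]], b: List[float], box: Box) -> Box:
    """Interval transformer for y = W x + b: for every weight, pick the
    input endpoint that minimizes (or maximizes) w * x_j independently."""
    out = []
    for row, bi in zip(W, b):
        lo = bi + sum(w * (l if w >= 0 else h) for w, (l, h) in zip(row, box))
        hi = bi + sum(w * (h if w >= 0 else l) for w, (l, h) in zip(row, box))
        out.append((lo, hi))
    return out

def relu(box: Box) -> Box:
    """ReLU is monotone, so applying it to both endpoints is exact per neuron."""
    return [(max(0.0, l), max(0.0, h)) for l, h in box]

x = [(0.0, 1.0)]
hidden = relu(affine([[1.0], [1.0]], [0.0, 0.0], x))  # h1 = h2 = x
y = affine([[1.0, -1.0]], [0.0], hidden)              # y = h1 - h2, always 0
print(y)  # [(-1.0, 1.0)]
```

Any property that needs `y` to be close to 0 therefore cannot be proven with Box, even though it holds for every input.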

![DeepPoly Abstract Domain (POPL’19)](http://slidetodoc.com/presentation_image_h/c0f7c469d2ddfc6dfcb731b5ec81508c/image-16.jpg)

DeepPoly Abstract Domain [POPL’19]

• Less precise than Polyhedra; the restriction is needed to ensure scalability
• Captures affine transformations precisely, unlike Octagon and TVPI
• Custom transformers for ReLU, sigmoid, tanh, and maxpool activations

(Table: Affine and ReLU transformers, Polyhedra vs. our domain.)

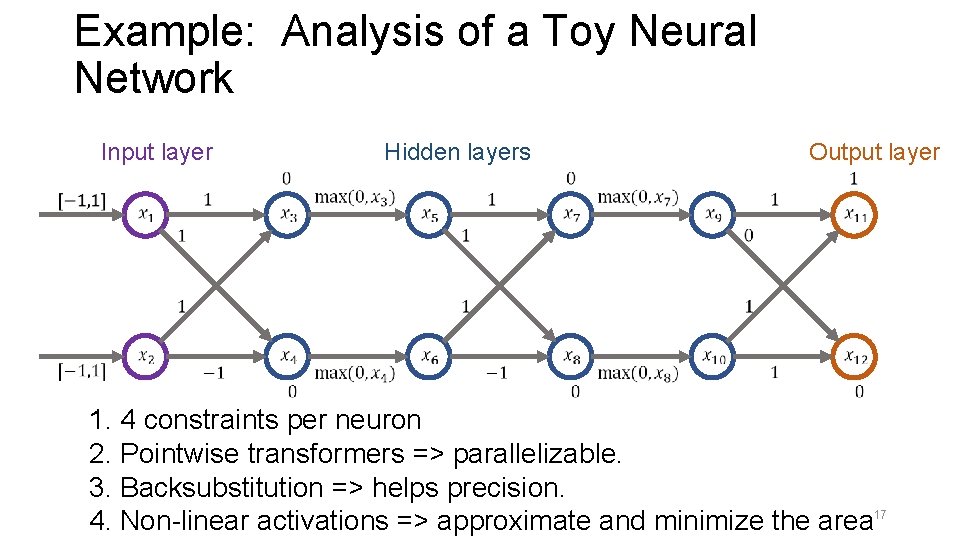

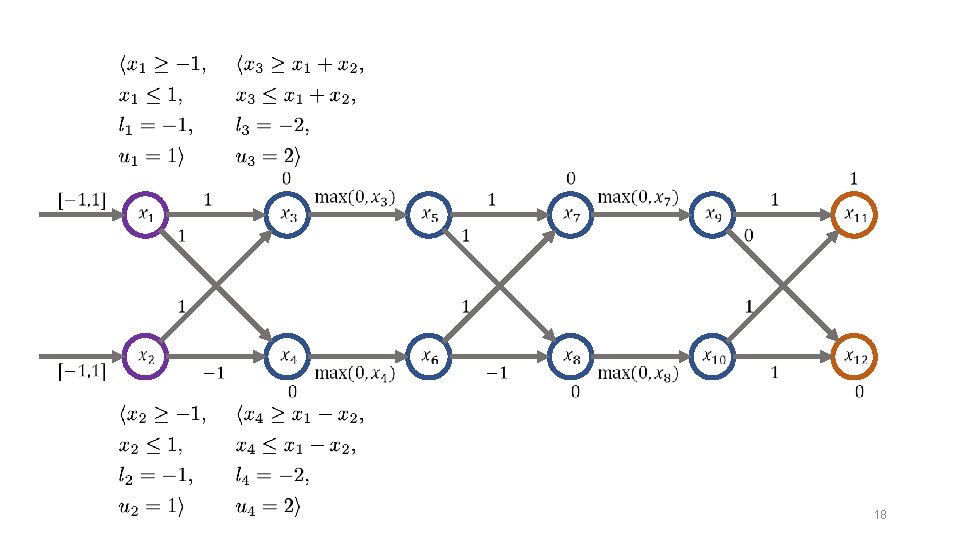

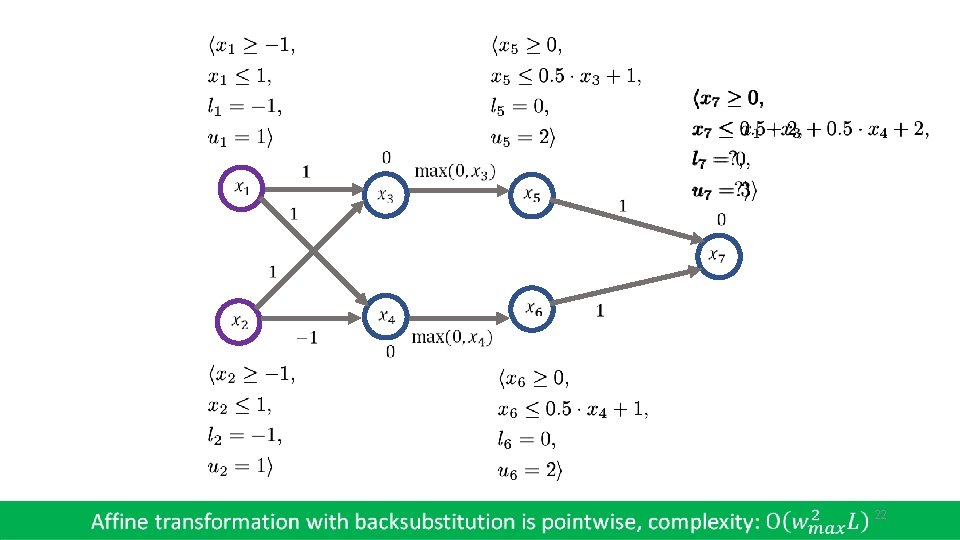

Example: Analysis of a Toy Neural Network

(Figure: input layer, hidden layers, output layer.)

1. 4 constraints per neuron: a symbolic lower and upper bound (affine in earlier neurons) plus a concrete lower and upper bound
2. Pointwise transformers => parallelizable
3. Backsubstitution => helps precision
4. Non-linear activations => approximate and minimize the area
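The four constraints per neuron can be pictured as a small data structure. This is a hypothetical Python rendering, not ERAN's implementation: each neuron keeps a symbolic lower and upper bound that are affine expressions over earlier neurons, plus a concrete interval `[l, u]`. The example neuron is a ReLU output `x5 = max(0, x3)` with `x3 ∈ [-2, 2]`, abstracted (as an assumed illustration) by `0 ≤ x5 ≤ 0.5·x3 + 1` and `x5 ∈ [0, 2]`.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

# Affine expression over earlier neurons: ({neuron name: coefficient}, constant).
Expr = Tuple[Dict[str, float], float]

def evaluate(expr: Expr, env: Dict[str, float]) -> float:
    coeffs, const = expr
    return const + sum(c * env[v] for v, c in coeffs.items())

@dataclass
class DeepPolyNeuron:
    lower: Expr  # constraint 1: x_j >= lower (symbolic)
    upper: Expr  # constraint 2: x_j <= upper (symbolic)
    l: float     # constraint 3: x_j >= l (concrete)
    u: float     # constraint 4: x_j <= u (concrete)

    def contains(self, value: float, env: Dict[str, float]) -> bool:
        """Does the concrete value satisfy all four constraints, given
        concrete values for the earlier neurons in env?"""
        return (evaluate(self.lower, env) <= value <= evaluate(self.upper, env)
                and self.l <= value <= self.u)

x5 = DeepPolyNeuron(lower=({}, 0.0), upper=({"x3": 0.5}, 1.0), l=0.0, u=2.0)
print(x5.contains(1.0, {"x3": 1.0}))  # True: the real ReLU output max(0, 1)
print(x5.contains(1.6, {"x3": 1.0}))  # False: violates x5 <= 0.5 * x3 + 1 = 1.5
```

Because each neuron's constraints mention only earlier layers, a transformer can compute them neuron by neuron, which is what makes the pointwise transformers easy to parallelize.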


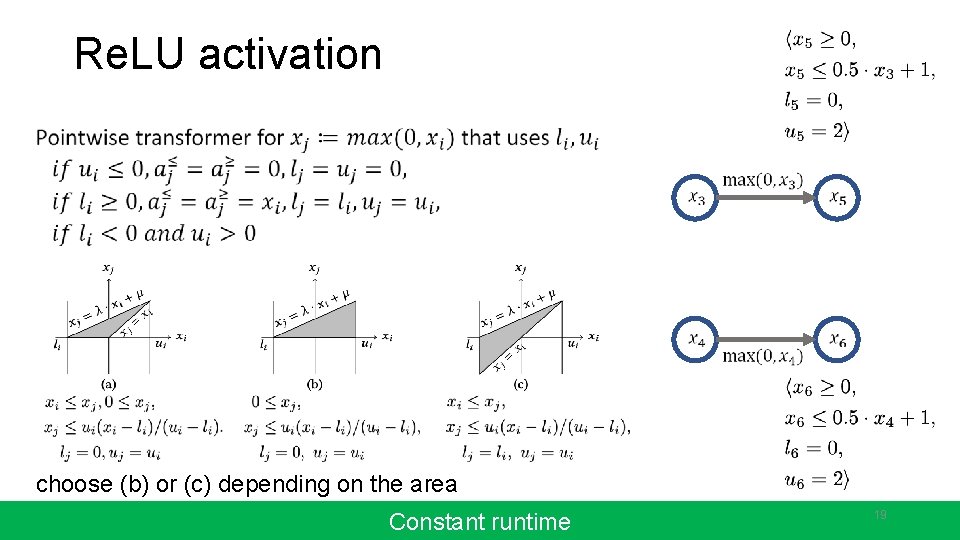

ReLU activation

Choose lower bound (b) or (c), whichever yields the smaller area; the transformer has constant runtime.
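A hedged sketch of such a ReLU transformer, assuming (b) denotes the lower bound `y ≥ 0` and (c) denotes `y ≥ x` (the figure itself is not part of this text). For an input `x ∈ [l, u]` with `l < 0 < u`, the upper bound is the line through `(l, 0)` and `(u, u)`. Choosing `y ≥ 0` leaves an area of `u(u − l)/2` between the bounds, while `y ≥ x` leaves `−l(u − l)/2`, so `y ≥ 0` wins exactly when `u ≤ −l`. The names `ReluBounds` and `relu_transformer` are illustrative.

```python
from dataclasses import dataclass

@dataclass
class ReluBounds:
    lower_slope: float  # y >= lower_slope * x + lower_const
    lower_const: float
    upper_slope: float  # y <= upper_slope * x + upper_const
    upper_const: float
    l: float            # concrete lower bound of y
    u: float            # concrete upper bound of y

def relu_transformer(l: float, u: float) -> ReluBounds:
    """Abstract y = max(0, x) for x in [l, u] with O(1) work."""
    if u <= 0:  # always inactive: y = 0
        return ReluBounds(0.0, 0.0, 0.0, 0.0, 0.0, 0.0)
    if l >= 0:  # always active: y = x
        return ReluBounds(1.0, 0.0, 1.0, 0.0, l, u)
    # Crossing case l < 0 < u: upper line through (l, 0) and (u, u).
    slope = u / (u - l)
    upper_const = -slope * l
    if u <= -l:  # (b) y >= 0 has the smaller area
        return ReluBounds(0.0, 0.0, slope, upper_const, 0.0, u)
    return ReluBounds(1.0, 0.0, slope, upper_const, l, u)  # (c) y >= x

print(relu_transformer(-2.0, 2.0))  # tie u == -l picks (b); upper bound y <= 0.5x + 1
print(relu_transformer(-1.0, 3.0))  # u > -l picks (c); upper bound y <= 0.75x + 0.75
```

Each case is a fixed number of arithmetic operations on `l` and `u`, which is where the constant runtime per neuron comes from.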

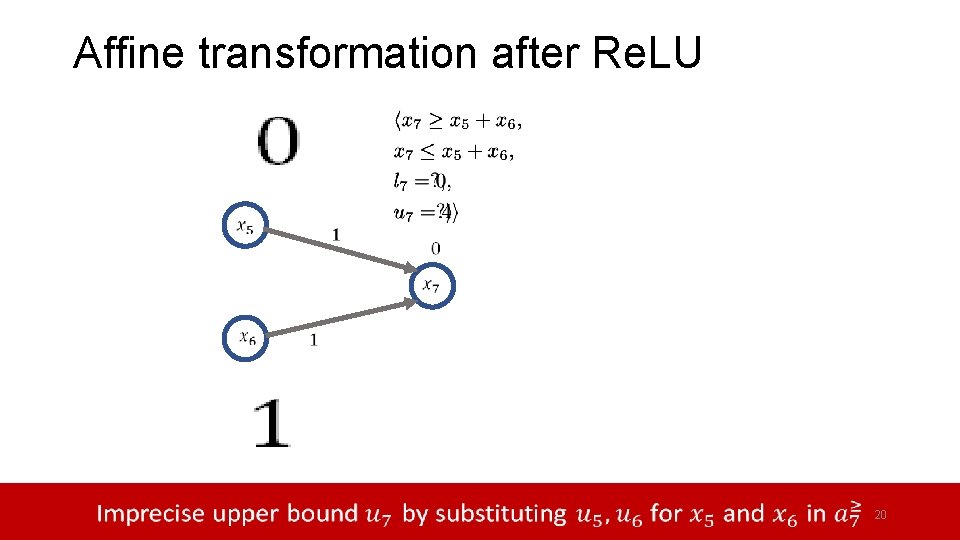

Affine transformation after ReLU
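A minimal sketch of an affine step, assuming a hypothetical neuron `x7 = x5 + x6` whose inputs are ReLU outputs with concrete bounds `x5, x6 ∈ [0, 2]`. Because an affine map is represented exactly, its defining expression serves as both the symbolic lower and upper bound; the concrete bounds below are read off the previous layer's intervals only, which is sound but can be loose. The helper names are illustrative, not ERAN's API.

```python
from typing import Dict, Tuple

Expr = Tuple[Dict[str, float], float]  # ({neuron: coefficient}, constant)

def concretize(expr: Expr, bounds: Dict[str, Tuple[float, float]]) -> Tuple[float, float]:
    """Interval of an affine expression, using each variable's concrete bounds."""
    coeffs, const = expr
    lo = const + sum(c * (bounds[v][0] if c >= 0 else bounds[v][1]) for v, c in coeffs.items())
    hi = const + sum(c * (bounds[v][1] if c >= 0 else bounds[v][0]) for v, c in coeffs.items())
    return lo, hi

def affine_transformer(expr: Expr, bounds: Dict[str, Tuple[float, float]]) -> dict:
    """Symbolic lower = upper = expr (exact); concrete bounds from one
    step of interval reasoning over the previous layer."""
    l, u = concretize(expr, bounds)
    return {"lower": expr, "upper": expr, "l": l, "u": u}

relu_bounds = {"x5": (0.0, 2.0), "x6": (0.0, 2.0)}
x7 = affine_transformer(({"x5": 1.0, "x6": 1.0}, 0.0), relu_bounds)
print(x7["l"], x7["u"])  # 0.0 4.0
```

The stored symbolic expression is what later allows these concrete bounds to be tightened by substituting the ReLU constraints.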

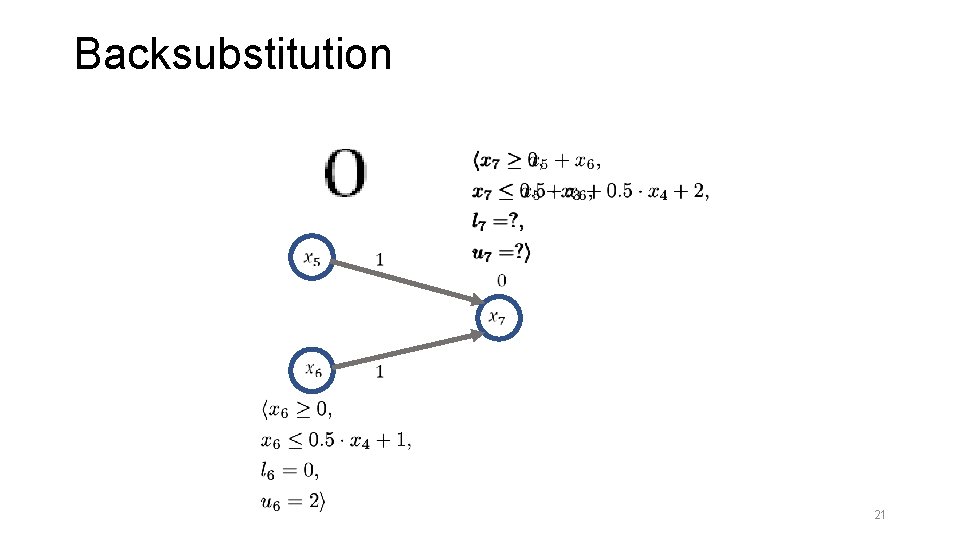

Backsubstitution
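A minimal backsubstitution sketch on a hypothetical network: inputs `x1, x2 ∈ [-1, 1]`, `x3 = x1 + x2`, `x4 = x1 - x2`, `x5 = ReLU(x3)`, `x6 = ReLU(x4)` with assumed crossing-case constraints `0 ≤ x5 ≤ 0.5·x3 + 1` and `0 ≤ x6 ≤ 0.5·x4 + 1`, and output `x7 = x5 + x6`. To bound `x7` from above, each variable is replaced by its symbolic upper bound when its coefficient is non-negative (its lower bound otherwise), layer by layer, until only inputs remain. This yields `x7 ≤ x1 + 2`, so `u7 = 3`, whereas reading `x5, x6 ∈ [0, 2]` off their intervals would give 4; the true maximum is 2, so both bounds are sound.

```python
from typing import Dict, Tuple

Expr = Tuple[Dict[str, float], float]  # ({neuron: coefficient}, constant)

def substitute(expr: Expr, lower: Dict[str, Expr], upper: Dict[str, Expr],
               want_upper: bool) -> Expr:
    """Replace every non-input variable by its symbolic bound, one layer back."""
    coeffs, const = expr
    new: Dict[str, float] = {}
    for v, c in coeffs.items():
        if v not in upper:  # input variable: keep it
            new[v] = new.get(v, 0.0) + c
            continue
        # For an upper bound, c >= 0 needs v's upper bound, c < 0 its lower
        # bound; for a lower bound it is the other way around.
        d_coeffs, d_const = upper[v] if (c >= 0) == want_upper else lower[v]
        const += c * d_const
        for w, dc in d_coeffs.items():
            new[w] = new.get(w, 0.0) + c * dc
    return new, const

def backsubstitute(expr: Expr, lower, upper, inputs: Dict[str, Tuple[float, float]],
                   want_upper: bool) -> float:
    while any(v in upper for v in expr[0]):
        expr = substitute(expr, lower, upper, want_upper)
    coeffs, const = expr
    # Concretize over the input box.
    return const + sum(c * (inputs[v][1] if (c >= 0) == want_upper else inputs[v][0])
                       for v, c in coeffs.items())

lower = {"x3": ({"x1": 1.0, "x2": 1.0}, 0.0), "x4": ({"x1": 1.0, "x2": -1.0}, 0.0),
         "x5": ({}, 0.0), "x6": ({}, 0.0)}
upper = {"x3": lower["x3"], "x4": lower["x4"],
         "x5": ({"x3": 0.5}, 1.0), "x6": ({"x4": 0.5}, 1.0)}
inputs = {"x1": (-1.0, 1.0), "x2": (-1.0, 1.0)}
x7 = ({"x5": 1.0, "x6": 1.0}, 0.0)
print(backsubstitute(x7, lower, upper, inputs, want_upper=True))   # 3.0
print(backsubstitute(x7, lower, upper, inputs, want_upper=False))  # 0.0
```

The gain comes from cancellation: the `x2` terms contributed by `x3` and `x4` cancel once both are expressed over the inputs, which an interval analysis cannot see.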


Checking for robustness
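A hedged sketch of the robustness check in the spirit of DeepPoly: the network is robust for label `c` if a lower bound of `y_c − y_j` is positive for every other class `j`. To keep it short, this assumes each output has already been reduced to an exact affine function of the inputs (for example, when no ReLU crosses zero); in general, the lower bound of the difference is built from `y_c`'s symbolic lower bound and `y_j`'s symbolic upper bound and then backsubstituted. The comparison with a purely interval-based check is illustrative, and all names are hypothetical.

```python
from typing import List, Tuple

Expr = Tuple[List[float], float]  # output as an affine function of the inputs
Box = List[Tuple[float, float]]

def lower_bound(expr: Expr, box: Box) -> float:
    coeffs, const = expr
    return const + sum(c * (lo if c >= 0 else hi) for c, (lo, hi) in zip(coeffs, box))

def upper_bound(expr: Expr, box: Box) -> float:
    coeffs, const = expr
    return const + sum(c * (hi if c >= 0 else lo) for c, (lo, hi) in zip(coeffs, box))

def robust_by_intervals(outputs: List[Expr], box: Box, label: int) -> bool:
    """Compare concrete bounds only: l_label > u_j for every other class j."""
    lc = lower_bound(outputs[label], box)
    return all(lc > upper_bound(o, box) for j, o in enumerate(outputs) if j != label)

def robust_by_differences(outputs: List[Expr], box: Box, label: int) -> bool:
    """Bound y_label - y_j as one expression, so shared input terms cancel."""
    c_coeffs, c_const = outputs[label]
    for j, (coeffs, const) in enumerate(outputs):
        if j == label:
            continue
        diff = ([a - b for a, b in zip(c_coeffs, coeffs)], c_const - const)
        if lower_bound(diff, box) <= 0:
            return False
    return True

outputs = [([1.0], 1.0), ([1.0], 0.0)]  # y0 = x + 1, y1 = x
box = [(0.0, 1.0)]
print(robust_by_intervals(outputs, box, label=0))    # False: [1, 2] and [0, 1] touch at 1
print(robust_by_differences(outputs, box, label=0))  # True: y0 - y1 = 1 > 0
```

Bounding the difference directly keeps the relation between the two outputs, so it can prove robustness where comparing their intervals fails.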

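Benchmarks

| Dataset | Model | Type | #Neurons | #Layers |
|---|---|---|---|---|
| MNIST | | Feedforward | 610 | 6 |
| MNIST | | Feedforward | 1,210 | 6 |
| MNIST | | Feedforward | 1,810 | 9 |
| MNIST | ConvSmall | Convolutional | 3,604 | 3 |
| MNIST | ConvBig | Convolutional | 34,688 | 6 |
| MNIST | ConvSuper | Convolutional | 88,500 | 6 |
| CIFAR10 | ConvSmall | Convolutional | 4,852 | 3 |

Training defenses: None, DiffAI.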

Results

Dataset: MNIST. Models (ε): two models evaluated at ε = 0.02 and ε = 0.015, then ConvSmall (ε = 0.12), ConvBig (ε = 0.2), and ConvSuper (ε = 0.1). For each of DeepZ, DeepPoly, and RefineZono, the slide reports % verified and time (s); the values, in the order they appear, are:

31 0.6 13 1.8 12 3.7 47 0.2 67 194 32 0.5 39 567 30 0.9 38 826 7 1.4 13 6.0 21 748 79 97 7 133 78 97 61 400 80 97 193 665

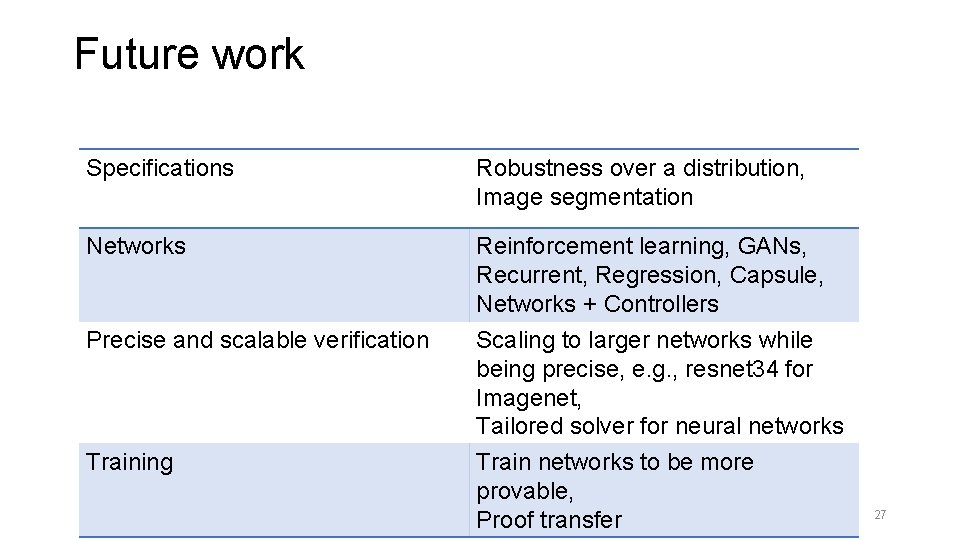

Future work

• Specifications: robustness over a distribution, image segmentation
• Networks: reinforcement learning, GANs, recurrent, regression, capsule, networks + controllers
• Precise and scalable verification: scaling to larger networks while being precise (e.g., ResNet-34 for ImageNet), tailored solver for neural networks
• Training: train networks to be more provable, proof transfer

Conclusion

Aircraft collision avoidance system: Reluplex > 32 hours, Neurify 921 s, ERAN 227 s.
