
Pattern-based Write Scheduling and Read Balance-oriented Wear-leveling for Solid State Drivers

Jun Li, Xiaofei Xu, Xiaoning Peng, Jianwei Liao

College of Computer and Information Science, Southwest University, Chongqing, China, 400715

College of Computer Science and Engineering, Huaihua University, Huaihua, Hunan, China, 418000

2019 35th Symposium on Mass Storage Systems and Technologies (MSST)

Outline Introduction Motivation Design Evaluation Conclusion

Introduction(1/2) SSDs feature small size, high random-access performance, and low energy consumption. However, once flash units (e.g., blocks of SSDs) have been written, they must be erased before they can be written again. Generally, the basic unit of NAND flash memory has an erase limit, and the unit becomes unreliable once its erase count reaches that limit. Consequently, some SSD blocks might wear out before the rest of the device does, which leads to a smaller available capacity in the late stage of the device's lifetime.

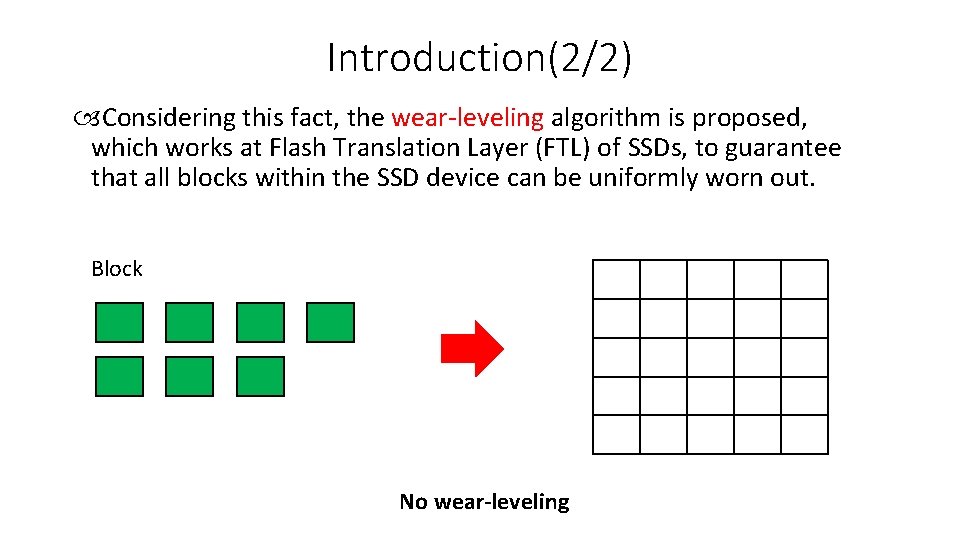

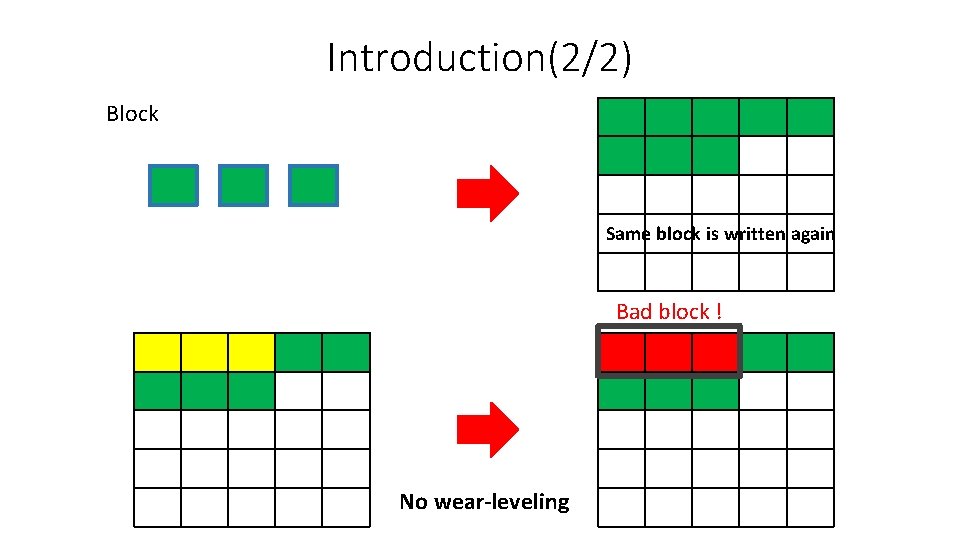

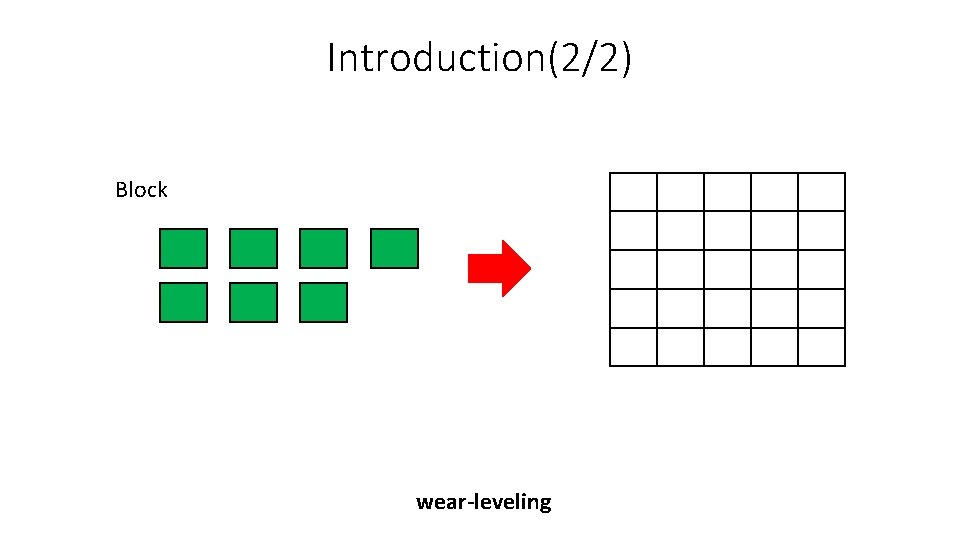

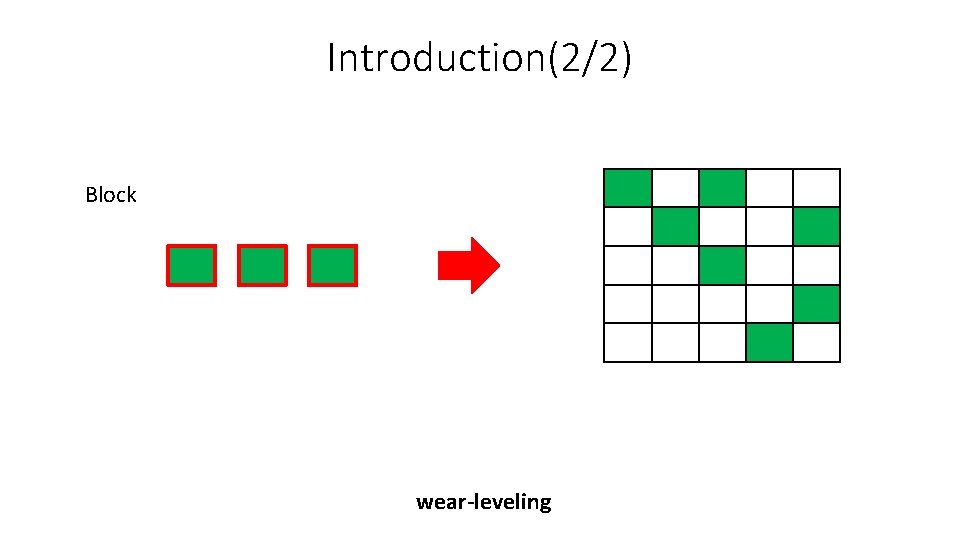

Introduction(2/2) Considering this fact, wear-leveling algorithms have been proposed. They work at the Flash Translation Layer (FTL) of SSDs and aim to ensure that all blocks within the SSD device wear out uniformly.

Figure: without wear-leveling, the same block is written again and again until it becomes a bad block; with wear-leveling, writes are spread over the blocks.
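The contrast between the two cases can be illustrated with a toy simulation. This is a minimal sketch, not the FTL algorithm from the talk: the function name `simulate`, the block count, and the erase limit are illustrative assumptions, and "always pick the least-worn block" stands in for a real wear-leveling policy.

```python
def simulate(num_blocks, num_writes, erase_limit, wear_leveling):
    """Return how many overwrites are served before the first block wears out.

    Hypothetical model: every overwrite costs exactly one erase of the
    chosen block, and a block fails when it reaches `erase_limit` erases.
    """
    erase_counts = [0] * num_blocks
    for served in range(num_writes):
        if wear_leveling:
            # Assumed policy: direct the write to the least-worn block.
            target = min(range(num_blocks), key=erase_counts.__getitem__)
        else:
            # No wear-leveling: the same block is written again and again.
            target = 0
        erase_counts[target] += 1
        if erase_counts[target] >= erase_limit:
            return served + 1  # first bad block appears here
    return num_writes


without_wl = simulate(num_blocks=4, num_writes=100, erase_limit=10, wear_leveling=False)
with_wl = simulate(num_blocks=4, num_writes=100, erase_limit=10, wear_leveling=True)
print(without_wl, with_wl)
```

In this toy setting the first bad block appears after 10 overwrites without wear-leveling and after 37 with it, because the erases are spread over all four blocks before any one of them hits the limit.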


Motivation(1/2) Existing wear-leveling work includes an adaptive approach that allows varied wear-leveling policies at different stages of the device lifespan, and a scheme based on hot/cold data swapping that improves wear-leveling effectiveness after categorizing data blocks into several sets. By their access characteristics, the data stored in SSD blocks can be divided into three categories: hot read data, cold read data, and hot write data. These schemes commonly migrate a block of (valid) data to another free SSD block in the same SSD plane, so that even erase counts across all blocks can be guaranteed.

Motivation(2/2) In other words, the mix of hot read data and cold read data within the same SSD block is not taken into account. Migrating hot read data to different chips of the SSD can help exploit chip-level internal parallelism for real-time responses. To address this issue, we propose a read balance-oriented wear-leveling mechanism.


Design (1/6)

1. We first identify hot write requests with frequent access patterns, and then map these requests to the same SSD block. This can boost garbage collection efficiency and benefit wear-leveling at the late stages.
2. After that, when conducting wear-leveling, we re-distribute the frequently read data, together with the less accessed data, onto different chips (or dies, or planes).
3. Consequently, the hot read data can be accessed concurrently, taking advantage of the internal parallelism of SSD devices to achieve better system performance.

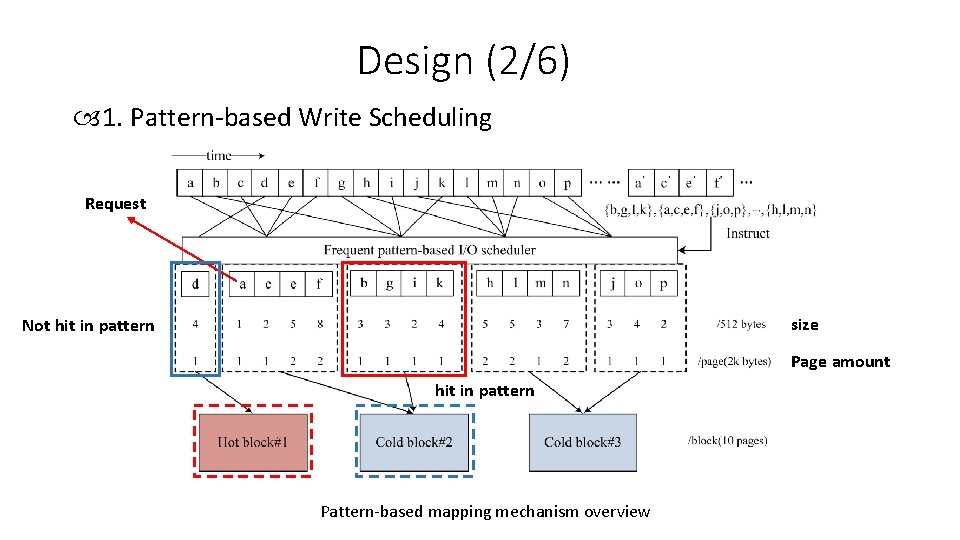

Design (2/6) 1. Pattern-based Write Scheduling

Figure: overview of the pattern-based mapping mechanism (labels: request size, not hit in pattern, page amount hit in pattern).

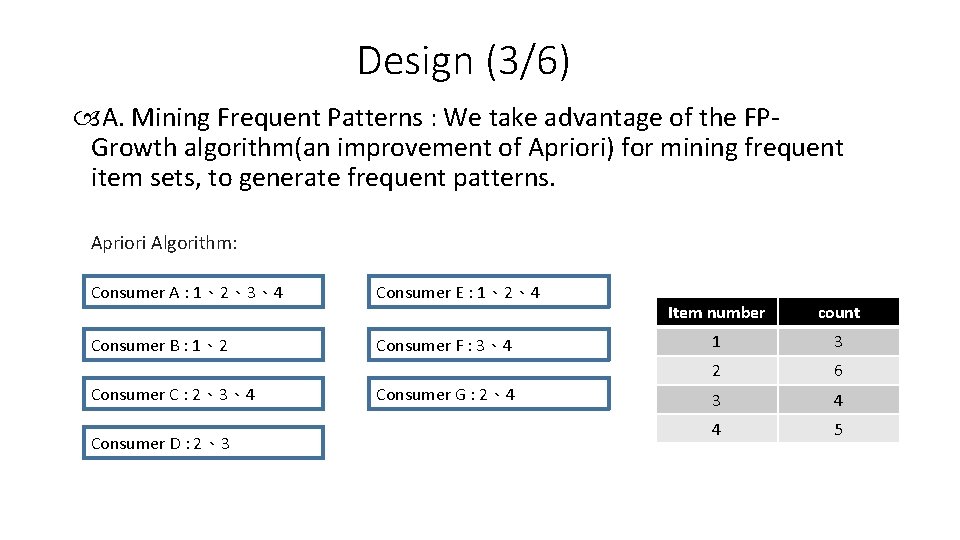

Design (3/6) A. Mining Frequent Patterns: We take advantage of the FP-Growth algorithm (an improvement over Apriori) for mining frequent item sets, to generate frequent patterns.

Apriori algorithm example:

| Consumer | Items |
| --- | --- |
| A | 1, 2, 3, 4 |
| B | 1, 2 |
| C | 2, 3, 4 |
| D | 2, 3 |
| E | 1, 2, 4 |
| F | 3, 4 |
| G | 2, 4 |

| Item number | Count |
| --- | --- |
| 1 | 3 |
| 2 | 6 |
| 3 | 4 |
| 4 | 5 |

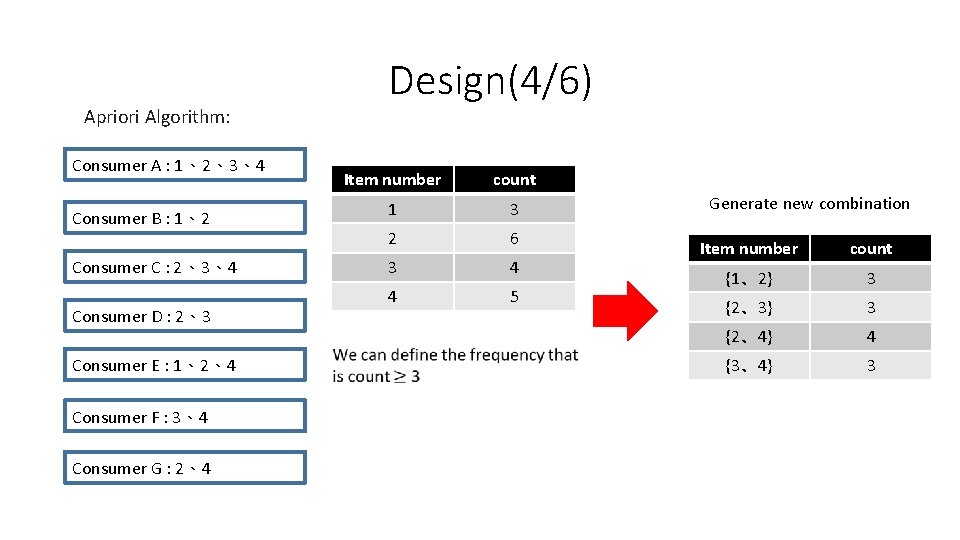

Design(4/6) Apriori algorithm example, continued. Starting from the same seven consumers and the single-item counts (item 1: 3, item 2: 6, item 3: 4, item 4: 5), new two-item combinations are generated:

| Item set | Count |
| --- | --- |
| {1, 2} | 3 |
| {2, 3} | 3 |
| {2, 4} | 4 |
| {3, 4} | 3 |
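The counting in this example can be reproduced with a short level-wise (Apriori-style) sketch. The slides state that FP-Growth is what the scheduler actually uses; the code below is only the simpler Apriori procedure the example illustrates. The minimum support of 3 is an assumption, chosen because it is consistent with the four pairs listed, and the names `support` and `frequent_itemsets` are hypothetical.

```python
from itertools import combinations

# The seven transactions from the slide (consumer -> purchased items).
TRANSACTIONS = {
    "A": {1, 2, 3, 4},
    "B": {1, 2},
    "C": {2, 3, 4},
    "D": {2, 3},
    "E": {1, 2, 4},
    "F": {3, 4},
    "G": {2, 4},
}
MIN_SUPPORT = 3  # assumed threshold, not stated on the slide


def support(itemset, transactions):
    """Count the transactions that contain every item of `itemset`."""
    return sum(1 for items in transactions.values() if itemset <= items)


def frequent_itemsets(transactions, min_support):
    """Level-wise search: count k-item sets, keep the frequent ones, grow to k+1."""
    items = sorted(set().union(*transactions.values()))
    candidates = [frozenset([item]) for item in items]
    result = {}
    size = 1
    while candidates:
        counted = {c: support(c, transactions) for c in candidates}
        frequent = {c: n for c, n in counted.items() if n >= min_support}
        result.update(frequent)
        size += 1
        # Join step: build larger candidates from items that are still frequent.
        survivors = sorted(set().union(*frequent))
        candidates = [frozenset(c) for c in combinations(survivors, size)]
        # Prune step: every (size-1)-subset of a candidate must be frequent.
        candidates = [
            c for c in candidates
            if all(frozenset(s) in frequent for s in combinations(c, size - 1))
        ]
    return result


patterns = frequent_itemsets(TRANSACTIONS, MIN_SUPPORT)
for itemset, count in sorted(patterns.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(itemset), count)
```

With this threshold the sketch yields the four single items and the four pairs shown in the tables; {1, 3} and {1, 4} are dropped at supports 1 and 2, and the only surviving three-item candidate, {2, 3, 4}, has support 2.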

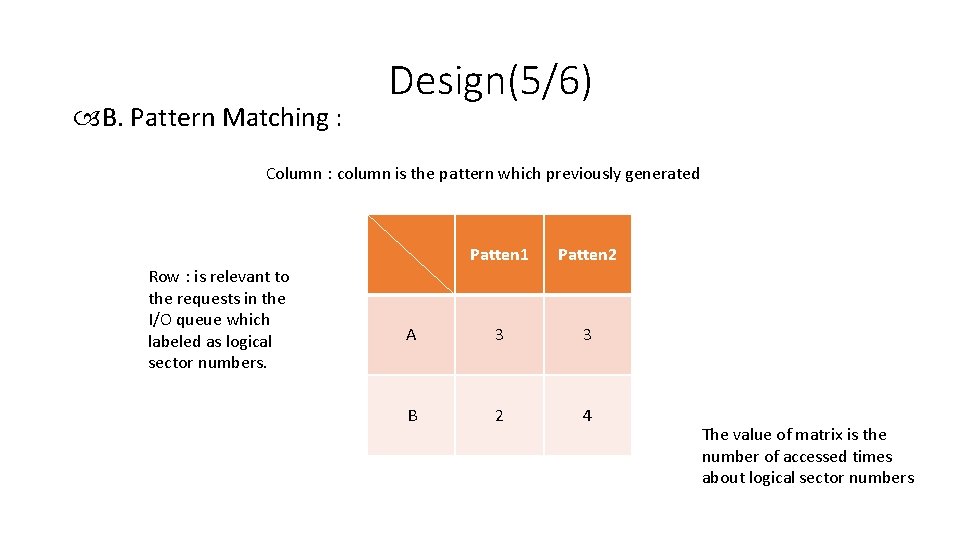

Design(5/6) B. Pattern Matching: Each column of the matrix is a previously generated pattern. Each row corresponds to a request in the I/O queue, labeled by its logical sector number. Each matrix value is the number of times the logical sector number has been accessed.

| | Pattern 1 | Pattern 2 |
| --- | --- | --- |
| A | 3 | 3 |
| B | 2 | 4 |
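The slide does not spell out how the matrix is turned into a mapping decision. As a minimal sketch under that caveat, one plausible rule is to assign each queued request to the pattern with the highest access count, so that requests grouped under the same pattern can be written to the same block. The selection rule, the tie-breaking, and the names `best_pattern` and `group_by_pattern` are all assumptions.

```python
# Access-count matrix from the slide: rows are queued requests (by logical
# sector number), columns are mined patterns.
MATRIX = {
    "A": {"Pattern 1": 3, "Pattern 2": 3},
    "B": {"Pattern 1": 2, "Pattern 2": 4},
}


def best_pattern(counts):
    """Hypothetical rule: highest count wins; ties go to the first column."""
    return max(counts, key=counts.get)


def group_by_pattern(matrix):
    """Group request labels by the pattern each one matches best."""
    groups = {}
    for request, counts in matrix.items():
        groups.setdefault(best_pattern(counts), []).append(request)
    return groups


groups = group_by_pattern(MATRIX)
print(groups)
```

Under this assumed rule, request A (a 3/3 tie) falls to Pattern 1 and request B goes to Pattern 2.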

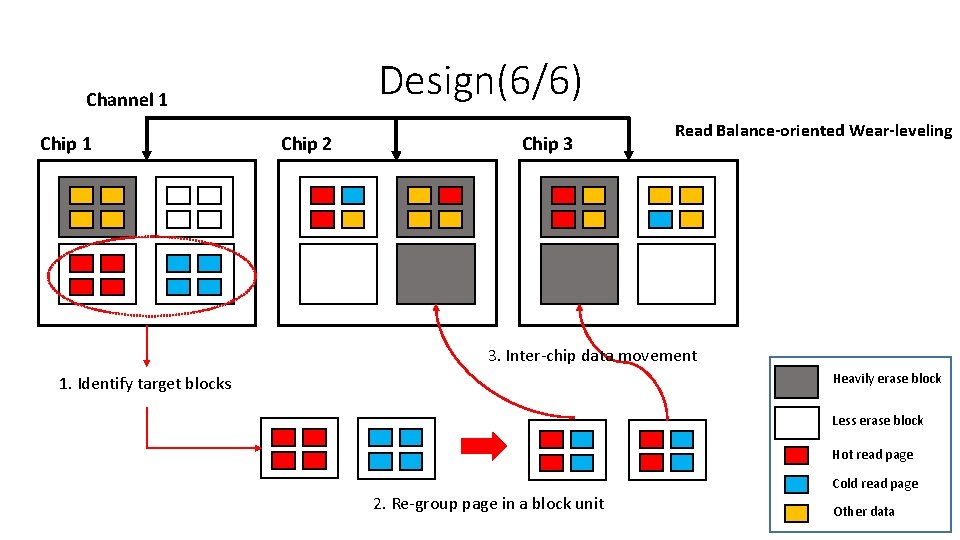

Design(6/6) Read Balance-oriented Wear-leveling

1. Identify target blocks
2. Re-group pages in a block unit
3. Inter-chip data movement

Figure labels: Channel 1, Chip 2, Chip 3; heavily erased block, less erased block; hot read page, cold read page, other data.
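The three steps can be sketched as follows. This is an illustrative outline only, not the authors' implementation: the `Block` structure, the read-count threshold for "hot", and the round-robin placement across chips are assumptions introduced here.

```python
from dataclasses import dataclass, field


@dataclass
class Block:
    chip: int
    erase_count: int
    pages: list = field(default_factory=list)  # (page_id, read_count) tuples


def pick_targets(blocks):
    """Step 1: pick the most-erased and the least-erased block."""
    worn = max(blocks, key=lambda b: b.erase_count)
    fresh = min(blocks, key=lambda b: b.erase_count)
    return worn, fresh


def regroup(pages, hot_threshold):
    """Step 2: split pages into hot read pages and cold read pages."""
    hot = [p for p in pages if p[1] >= hot_threshold]
    cold = [p for p in pages if p[1] < hot_threshold]
    return hot, cold


def spread_hot_pages(hot, chips):
    """Step 3: place hot read pages round-robin over chips (assumed policy)."""
    placement = {chip: [] for chip in chips}
    hottest_first = sorted(hot, key=lambda p: -p[1])
    for i, (page_id, _) in enumerate(hottest_first):
        placement[chips[i % len(chips)]].append(page_id)
    return placement


blocks = [
    Block(chip=2, erase_count=90, pages=[("p0", 50), ("p1", 2), ("p2", 40)]),
    Block(chip=3, erase_count=10, pages=[("p3", 45), ("p4", 1)]),
    Block(chip=2, erase_count=50),
]
worn, fresh = pick_targets(blocks)
hot, cold = regroup(worn.pages + fresh.pages, hot_threshold=30)
placement = spread_hot_pages(hot, chips=[2, 3])
print(placement)
```

In this example the hot read pages end up split between chips 2 and 3, so reads to them can be served in parallel, which is the effect the read balance-oriented scheme aims for.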


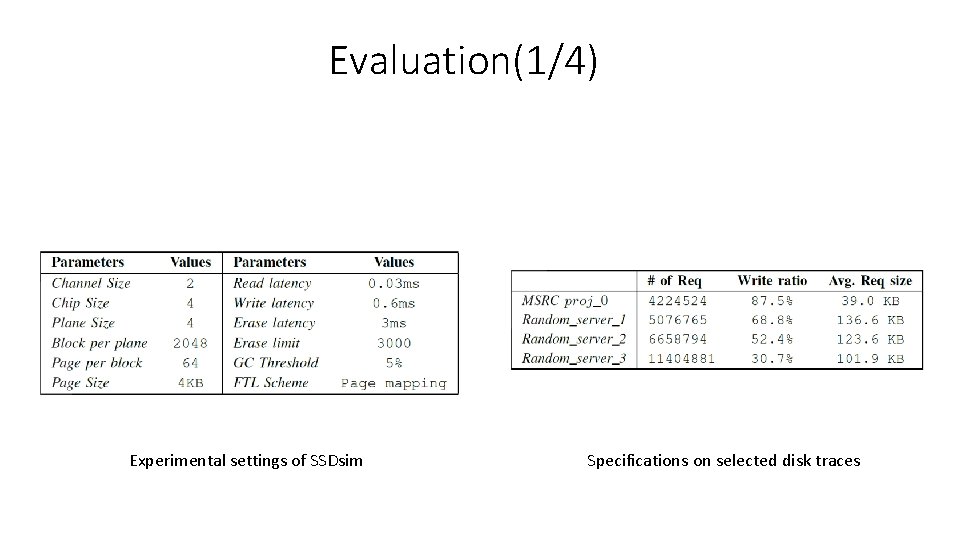

Evaluation(1/4) Experimental settings of SSDsim; specifications of the selected disk traces

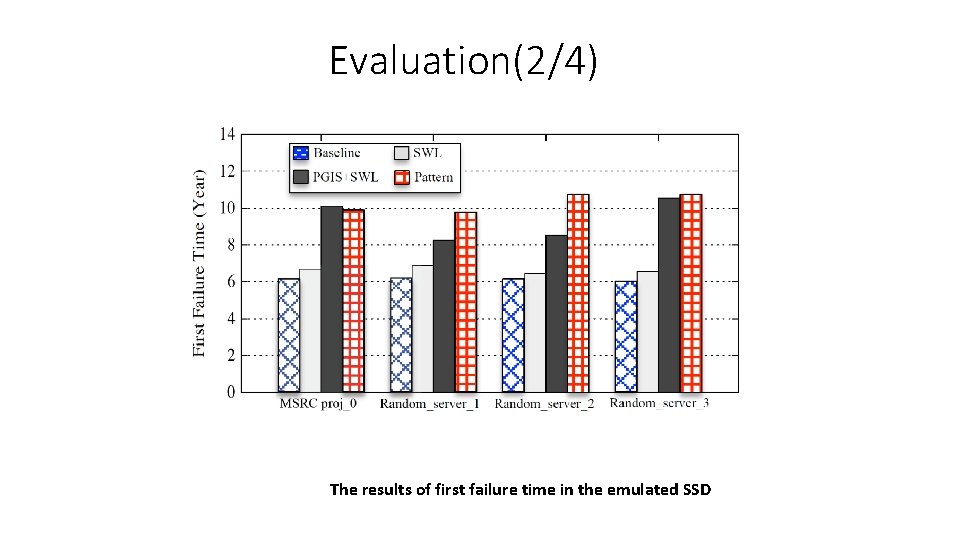

Evaluation(2/4) The results of first failure time in the emulated SSD

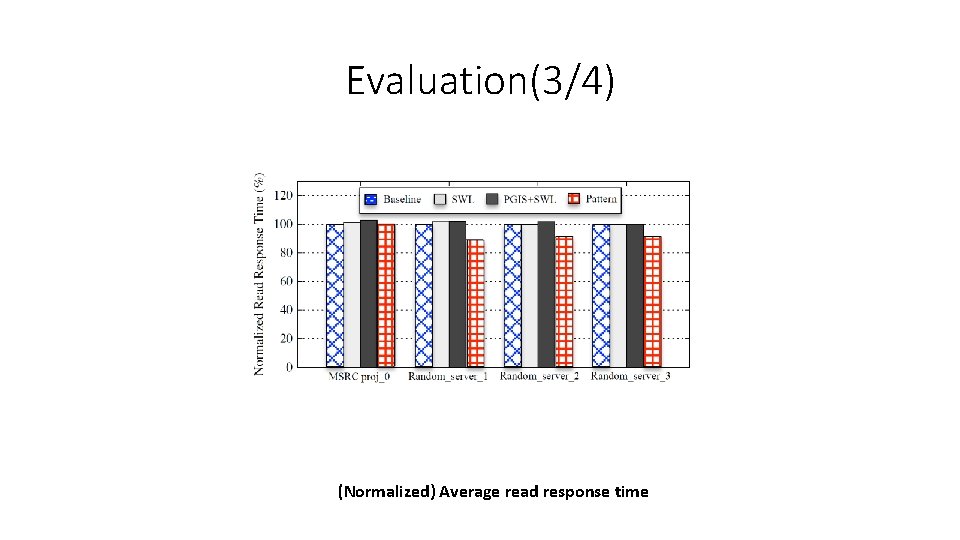

Evaluation(3/4) (Normalized) Average read response time

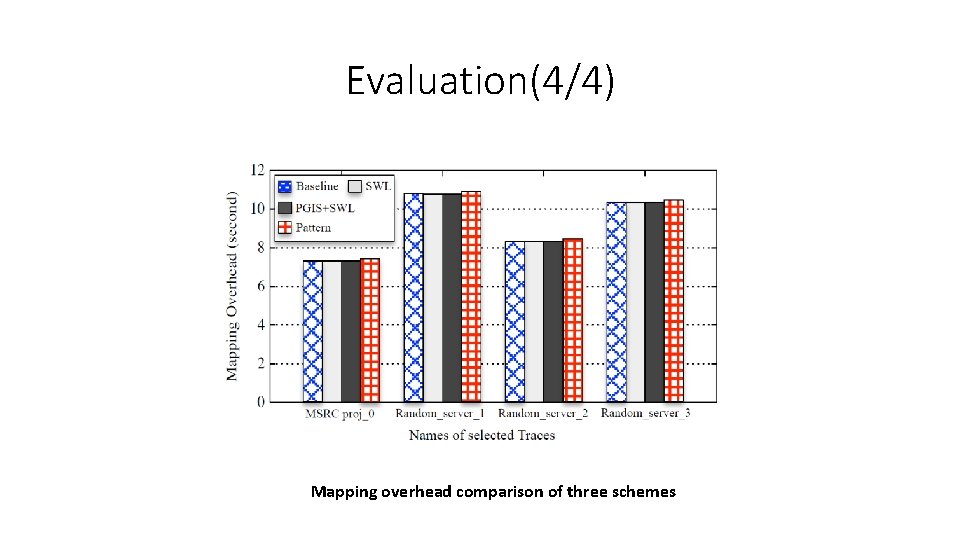

Evaluation(4/4) Mapping overhead comparison of three schemes


Conclusion In this paper, we first proposed a frequent pattern-based I/O scheduler for dispatching write requests, to cut down the garbage collection overhead. We then introduced a read balance-oriented wear-leveling method. It aims not only to extend the lifetime of SSDs by maintaining an even block erasure distribution, but also to exploit the internal parallelism of SSDs to speed up read responses.

Thank you for listening
