Parallel Programming with Java Aamir Shafi National University

- Slides: 37

Parallel Programming with Java

Aamir Shafi, National University of Sciences and Technology (NUST)

http://hpc.seecs.edu.pk/~aamir

http://mpj-express.org

Two Important Concepts

- Two fundamental concepts of parallel programming are:
  - Domain decomposition
  - Functional decomposition

Domain Decomposition

Image taken from https://computing.llnl.gov/tutorials/parallel_comp/
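A minimal sketch of domain decomposition, assuming a one-dimensional array split into contiguous blocks, one block per process. The class `BlockDecomposition` and its method names are illustrative and not part of MPJ Express; the loop over `rank` in `main` only simulates what each of `size` separate processes would compute on its own block.

```java
final class BlockDecomposition {
    /** First index (inclusive) of the block owned by the given rank. */
    static int blockStart(int rank, int size, int n) {
        int base = n / size;   // every rank gets at least this many elements
        int extra = n % size;  // the first `extra` ranks get one more
        return rank * base + Math.min(rank, extra);
    }

    /** One past the last index owned by the given rank. */
    static int blockEnd(int rank, int size, int n) {
        return blockStart(rank + 1, size, n);
    }

    /** Sum of the elements in the block owned by the given rank. */
    static long partialSum(int[] data, int rank, int size) {
        long sum = 0;
        int end = blockEnd(rank, size, data.length);
        for (int i = blockStart(rank, size, data.length); i < end; i++) {
            sum += data[i];
        }
        return sum;
    }

    public static void main(String[] args) {
        int[] data = {1, 2, 3, 4, 5, 6, 7, 8, 9, 10};
        int size = 4; // assumed number of processes
        for (int rank = 0; rank < size; rank++) {
            System.out.println("rank " + rank + " owns ["
                    + blockStart(rank, size, data.length) + ", "
                    + blockEnd(rank, size, data.length) + ") partial sum = "
                    + partialSum(data, rank, size));
        }
    }
}
```

Spreading the remainder over the first ranks keeps block sizes within one element of each other, which is one common way to balance the load.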

Functional Decomposition

Image taken from https://computing.llnl.gov/tutorials/parallel_comp/

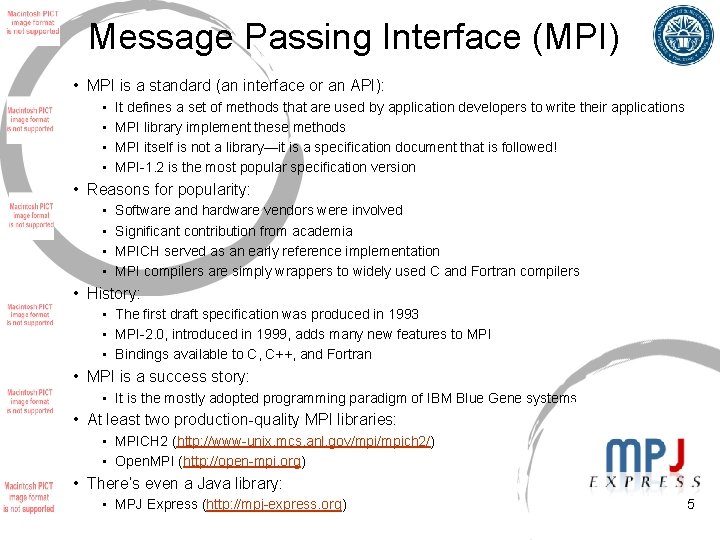

Message Passing Interface (MPI)

- MPI is a standard (an interface or an API):
  - It defines a set of methods that application developers use to write their applications
  - MPI libraries implement these methods
  - MPI itself is not a library; it is a specification document that implementations follow
  - MPI-1.2 is the most popular specification version
- Reasons for popularity:
  - Software and hardware vendors were involved
  - Significant contribution from academia
  - MPICH served as an early reference implementation
  - MPI compilers are simply wrappers around widely used C and Fortran compilers
- History:
  - The first draft specification was produced in 1993
  - MPI-2.0, introduced in 1997, adds many new features to MPI
  - Bindings available for C, C++, and Fortran
- MPI is a success story:
  - It is the most widely adopted programming paradigm on IBM Blue Gene systems
  - At least two production-quality MPI libraries:
    - MPICH2 (http://www-unix.mcs.anl.gov/mpich2/)
    - Open MPI (http://open-mpi.org)
  - There's even a Java library:
    - MPJ Express (http://mpj-express.org)

Message Passing Model

- The message passing model allows processors to communicate by passing messages:
  - Processors do not share memory
- Data transfer between processors requires cooperative operations to be performed by each processor:
  - One processor sends the message while the other receives it
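The cooperative send and receive pair can be imitated inside a single JVM. The sketch below is an analogy only, not MPJ Express code: two threads stand in for two processes, and a `BlockingQueue` (a hypothetical "mailbox") is the only thing they have in common. The sender puts a copy of its data, so the receiver never touches the sender's memory.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;

final class MessagePassingSketch {
    /** Runs one sender and one receiver; returns what the receiver got. */
    static int[] exchange(int[] payload) {
        BlockingQueue<int[]> mailbox = new ArrayBlockingQueue<>(1);
        int[][] received = new int[1][];

        Thread sender = new Thread(() -> {
            try {
                mailbox.put(payload.clone()); // send a copy, not a shared reference
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        Thread receiver = new Thread(() -> {
            try {
                received[0] = mailbox.take(); // blocks until a message arrives
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });

        sender.start();
        receiver.start();
        try {
            sender.join();
            receiver.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return received[0];
    }

    public static void main(String[] args) {
        int[] got = exchange(new int[] {1, 2, 3});
        System.out.println(java.util.Arrays.toString(got));
    }
}
```

Neither side can complete the transfer alone: the data moves only when one thread puts and the other takes, which is the cooperation the slide describes.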

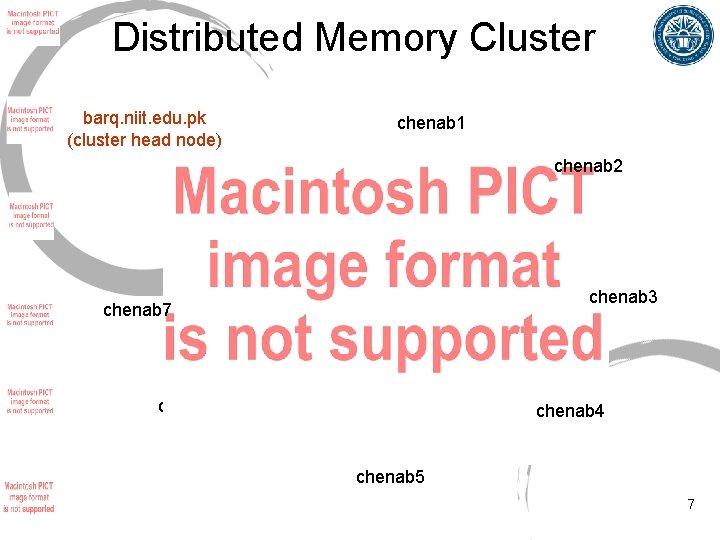

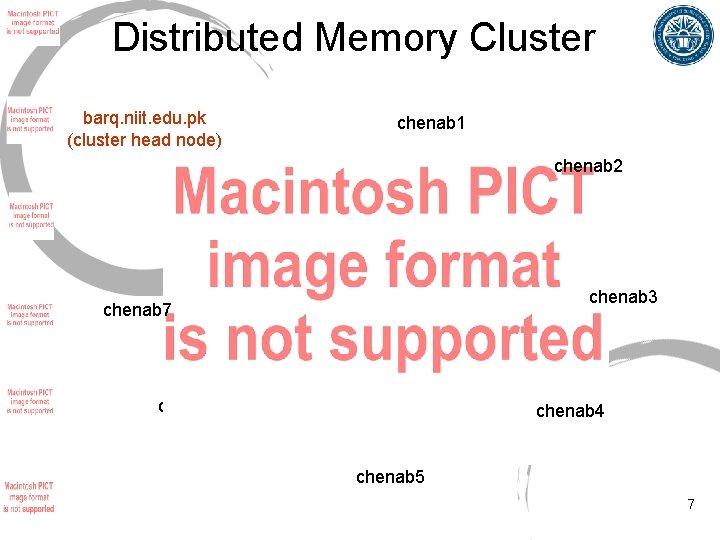

Distributed Memory Cluster

- Cluster head node: barq.niit.edu.pk
- Compute nodes: chenab1, chenab2, chenab3, chenab4, chenab5, chenab6, chenab7

Steps involved in executing the "Hello World!" program

1. Log on to the cluster head node
2. Write the Hello World program
3. Compile the program
4. Write the machines file
5. Start MPJ Express daemons
6. Execute the parallel program
7. Stop MPJ Express daemons

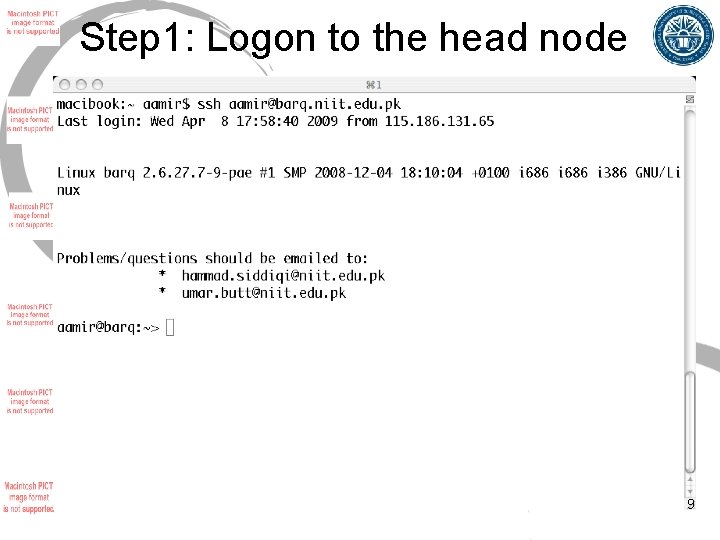

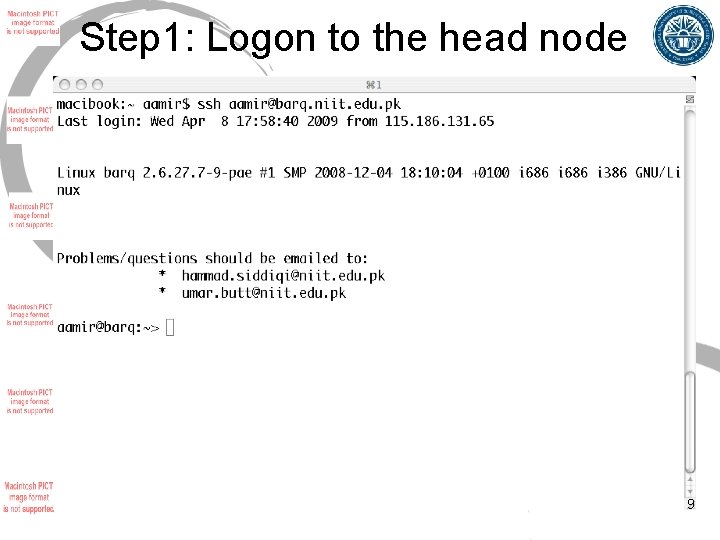

Step 1: Log on to the head node

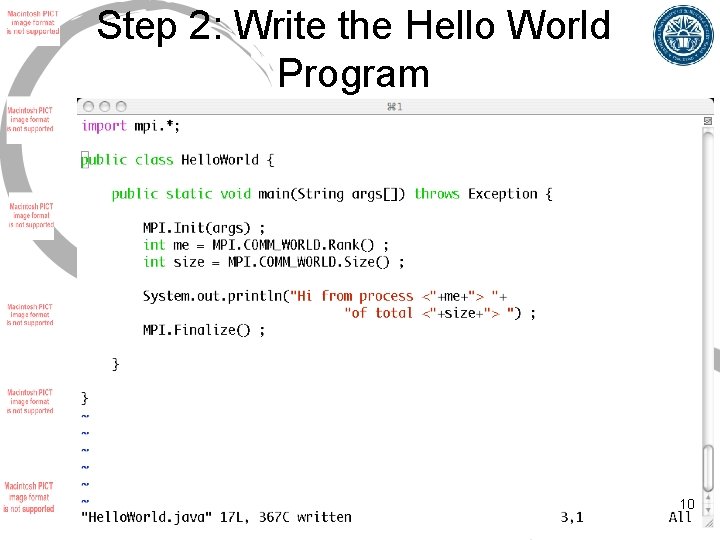

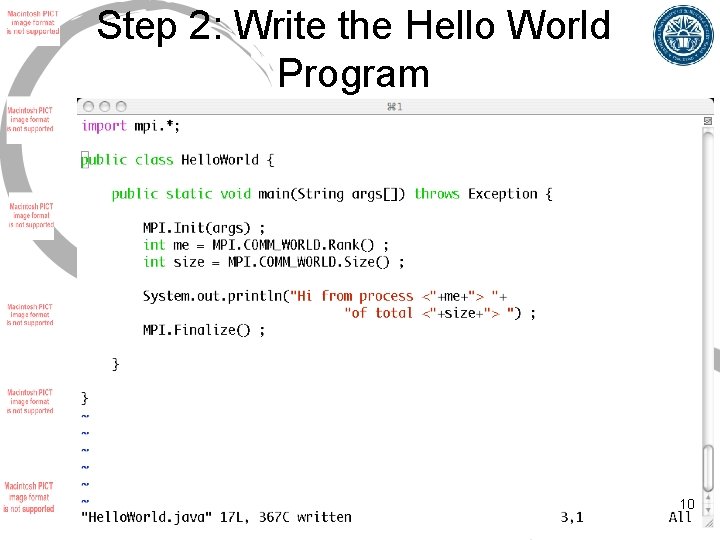

Step 2: Write the Hello World program

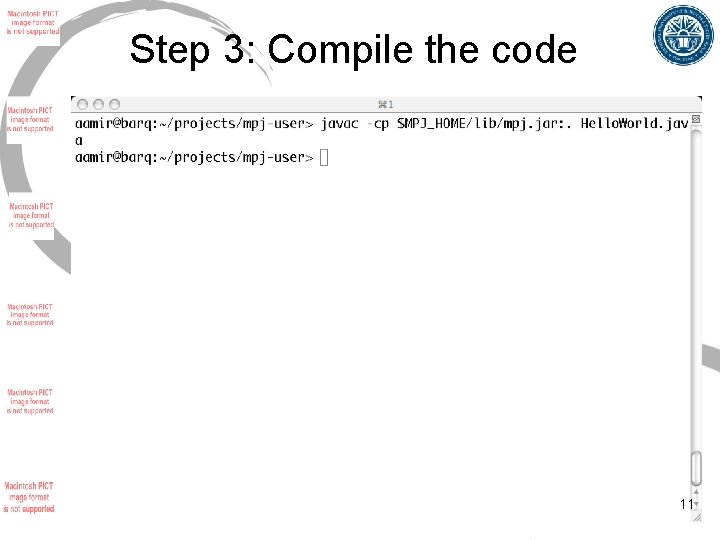

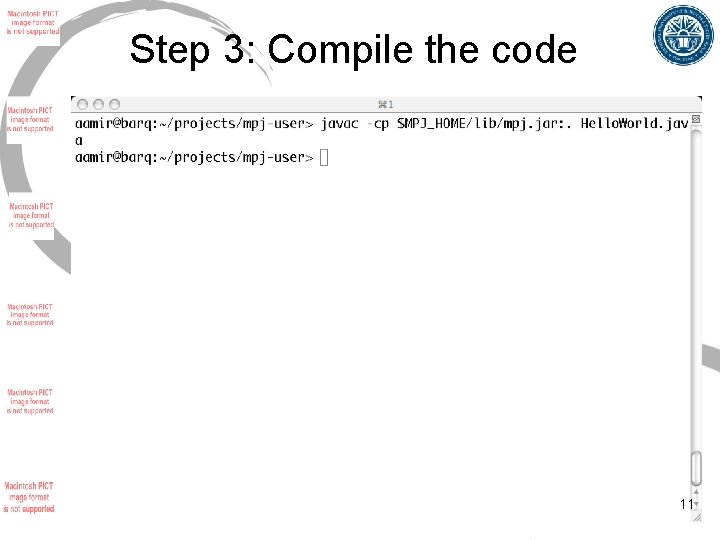

Step 3: Compile the code

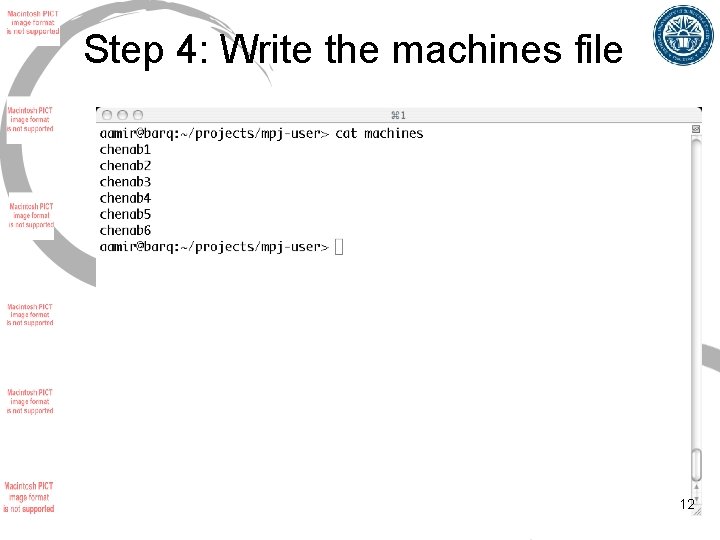

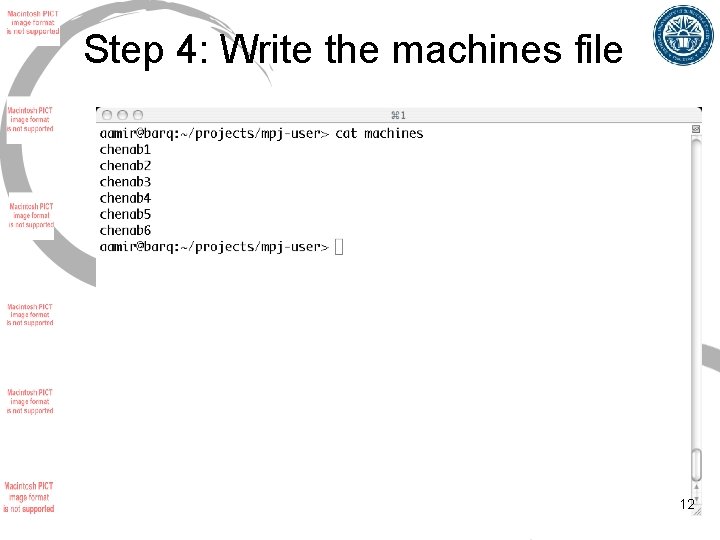

Step 4: Write the machines file

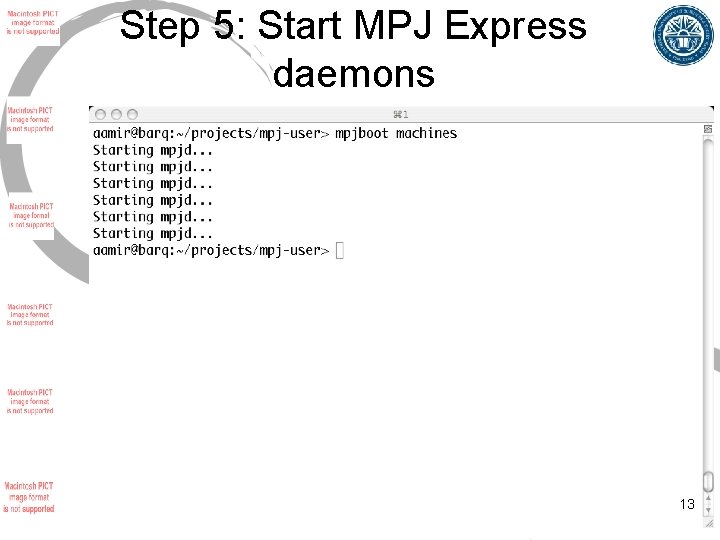

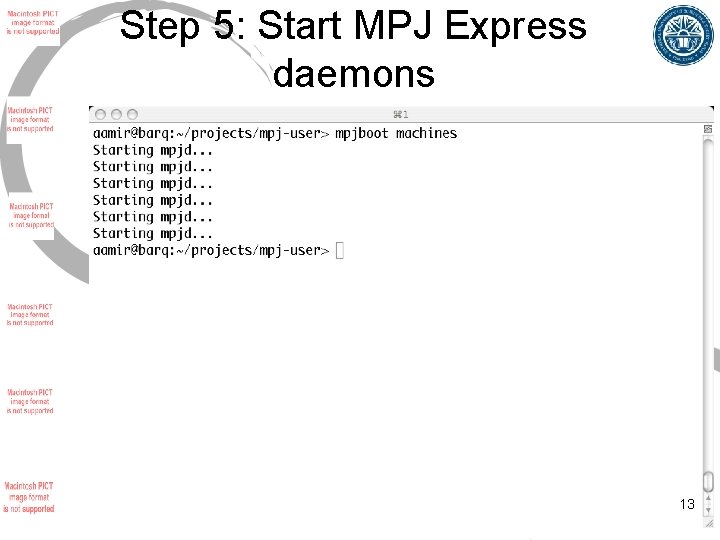

Step 5: Start MPJ Express daemons

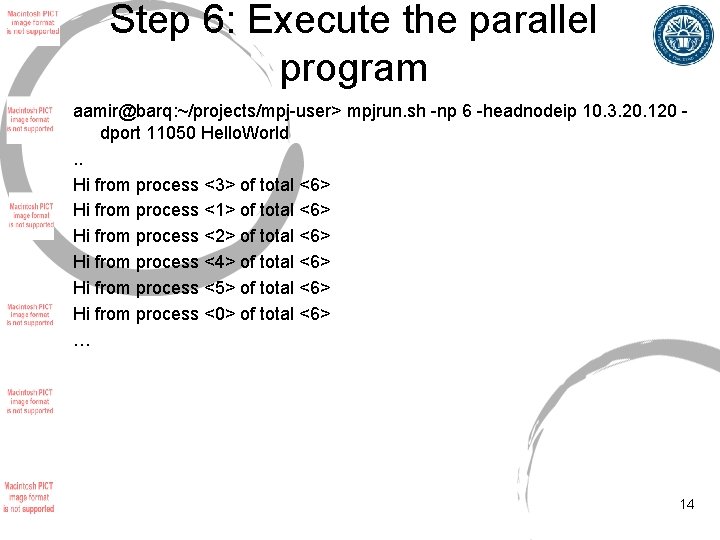

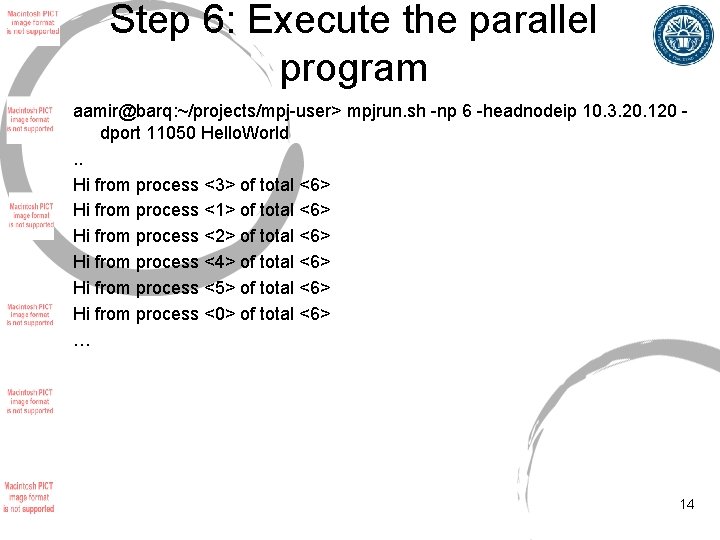

Step 6: Execute the parallel program

    aamir@barq:~/projects/mpj-user> mpjrun.sh -np 6 -headnodeip 10.3.20.120 -dport 11050 HelloWorld
    ..
    Hi from process <3> of total <6>
    Hi from process <1> of total <6>
    Hi from process <2> of total <6>
    Hi from process <4> of total <6>
    Hi from process <5> of total <6>
    Hi from process <0> of total <6>
    …

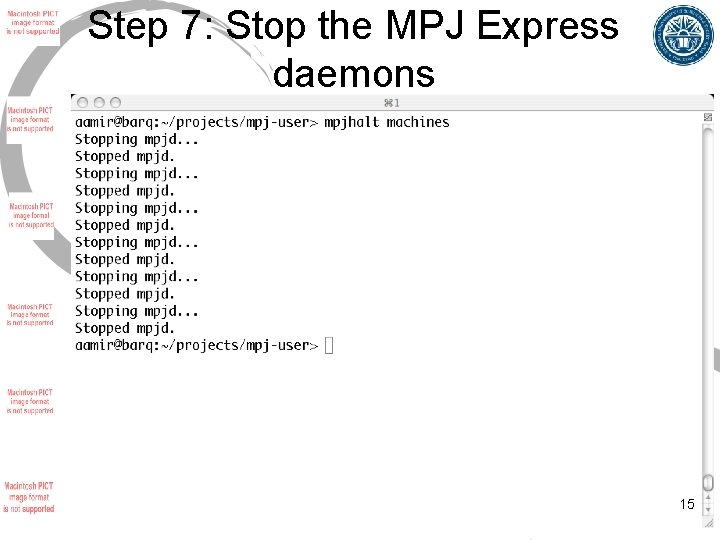

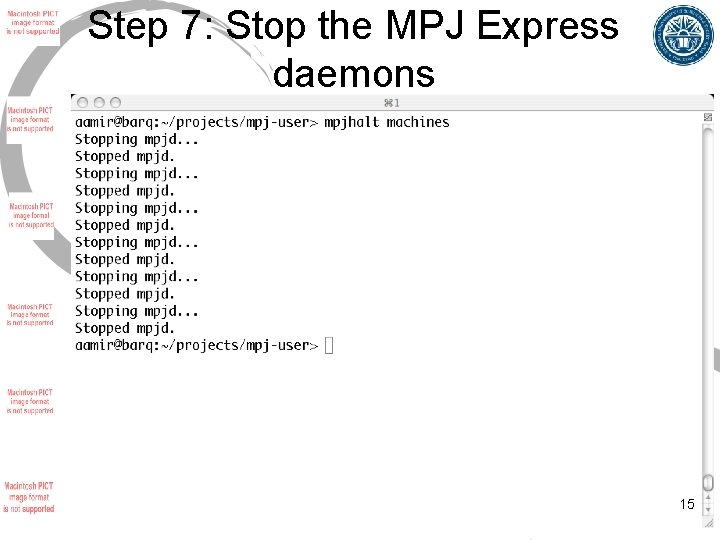

Step 7: Stop the MPJ Express daemons

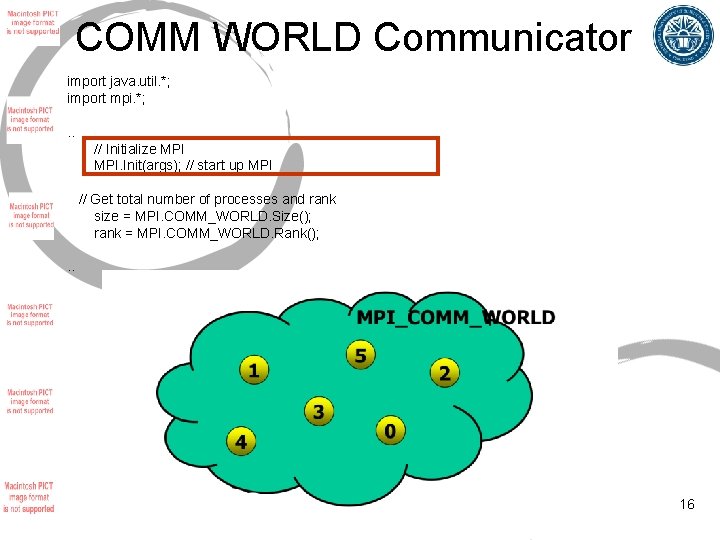

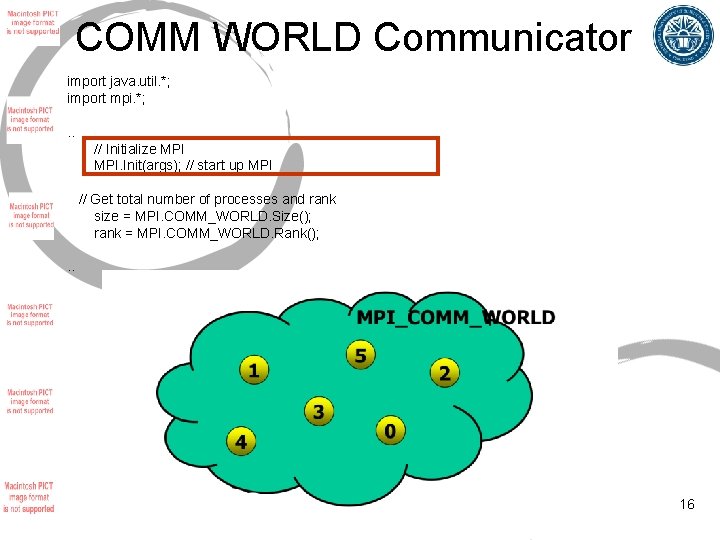

COMM_WORLD Communicator

    import java.util.*;
    import mpi.*;
    ..
    // Initialize
    MPI.Init(args); // start up MPI
    // Get total number of processes and rank
    size = MPI.COMM_WORLD.Size();
    rank = MPI.COMM_WORLD.Rank();
    ..

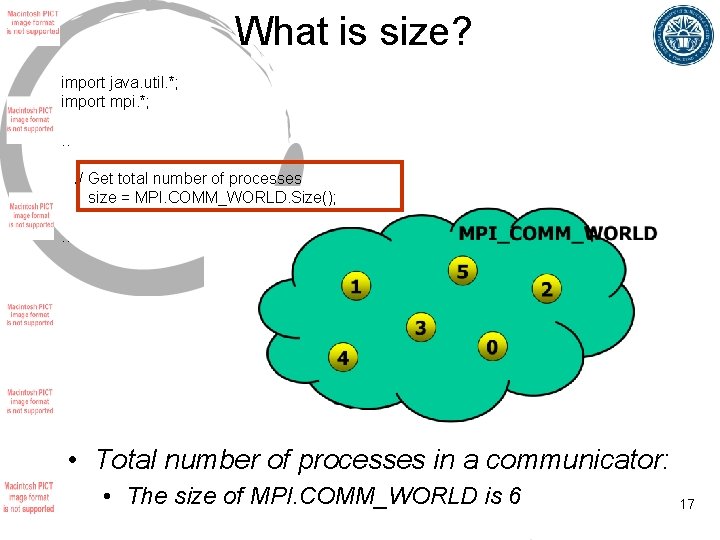

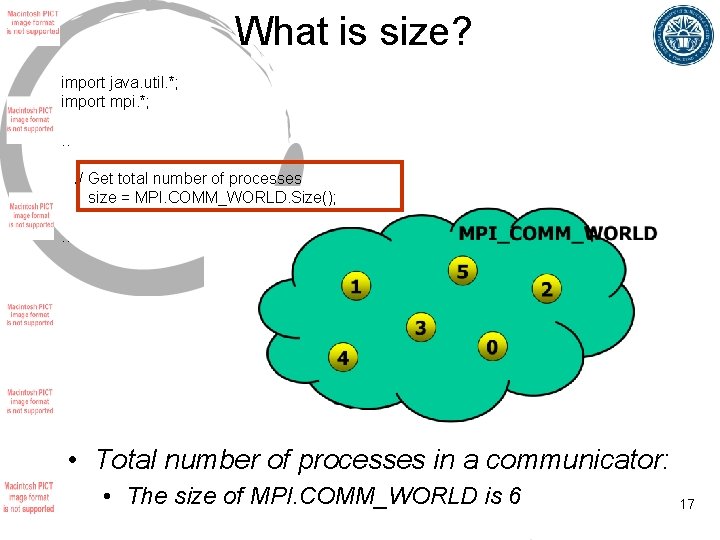

What is size?

    import java.util.*;
    import mpi.*;
    ..
    // Get total number of processes
    size = MPI.COMM_WORLD.Size();
    ..

- Total number of processes in a communicator:
  - In this example, the size of MPI.COMM_WORLD is 6

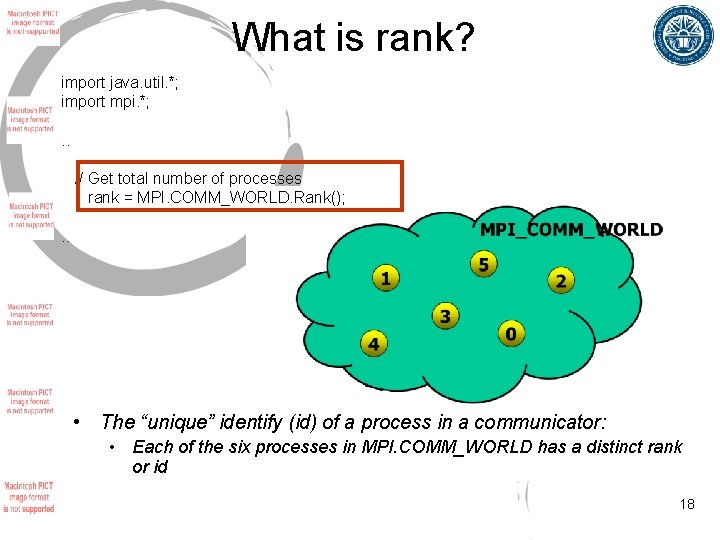

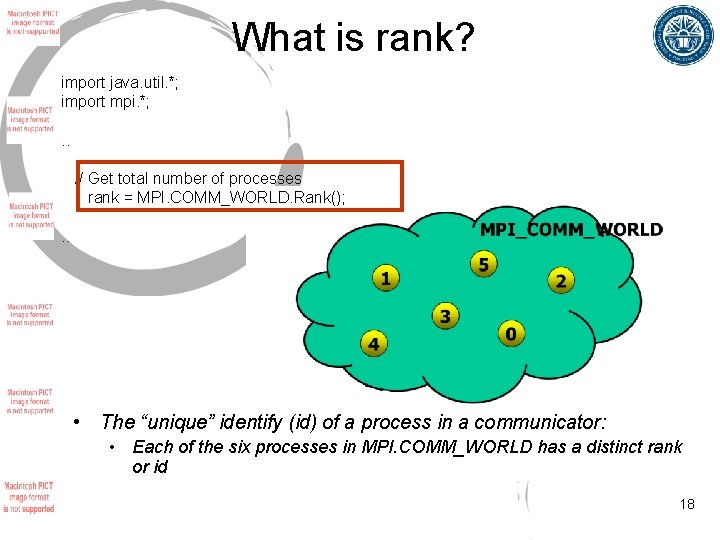

What is rank?

    import java.util.*;
    import mpi.*;
    ..
    // Get the rank of this process
    rank = MPI.COMM_WORLD.Rank();
    ..

- The "unique" identity (id) of a process in a communicator:
  - Each of the six processes in MPI.COMM_WORLD has a distinct rank or id

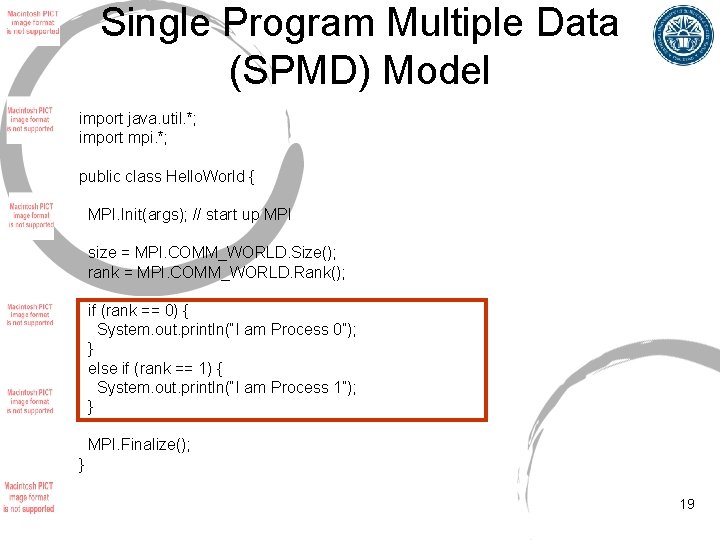

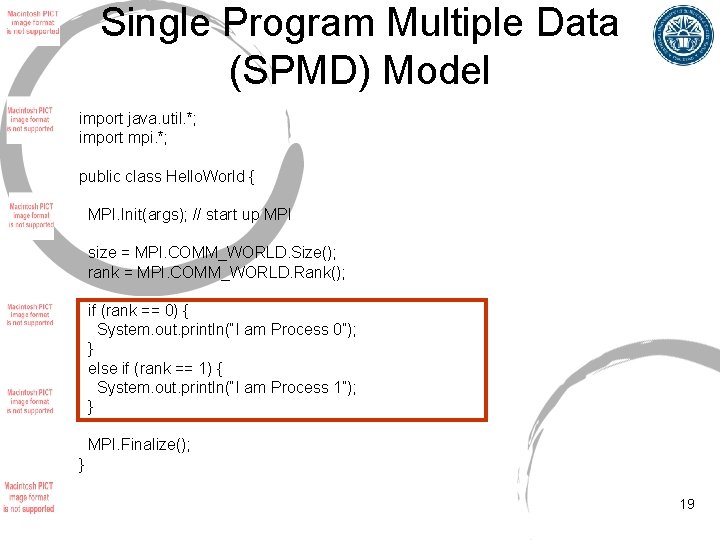

Single Program Multiple Data (SPMD) Model

    import java.util.*;
    import mpi.*;

    public class HelloWorld {
        public static void main(String[] args) throws Exception {
            MPI.Init(args); // start up MPI
            int size = MPI.COMM_WORLD.Size();
            int rank = MPI.COMM_WORLD.Rank();
            // Every process runs this same program; the rank selects the branch
            if (rank == 0) {
                System.out.println("I am Process 0");
            } else if (rank == 1) {
                System.out.println("I am Process 1");
            }
            MPI.Finalize();
        }
    }

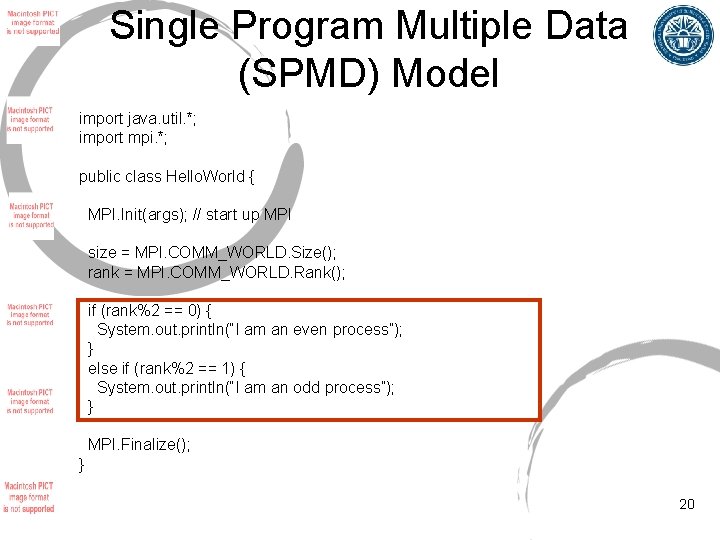

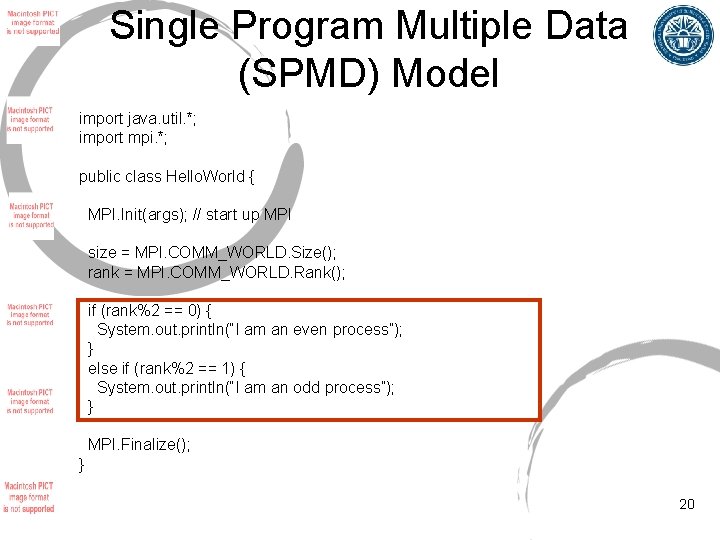

Single Program Multiple Data (SPMD) Model

    import java.util.*;
    import mpi.*;

    public class HelloWorld {
        public static void main(String[] args) throws Exception {
            MPI.Init(args); // start up MPI
            int size = MPI.COMM_WORLD.Size();
            int rank = MPI.COMM_WORLD.Rank();
            // Same program on every process; behaviour depends on rank parity
            if (rank % 2 == 0) {
                System.out.println("I am an even process");
            } else if (rank % 2 == 1) {
                System.out.println("I am an odd process");
            }
            MPI.Finalize();
        }
    }

Point to Point Communication

- The most fundamental facility provided by MPI
- Basically "exchange messages between two processes":
  - One process (source) sends the message
  - The other process (destination) receives the message

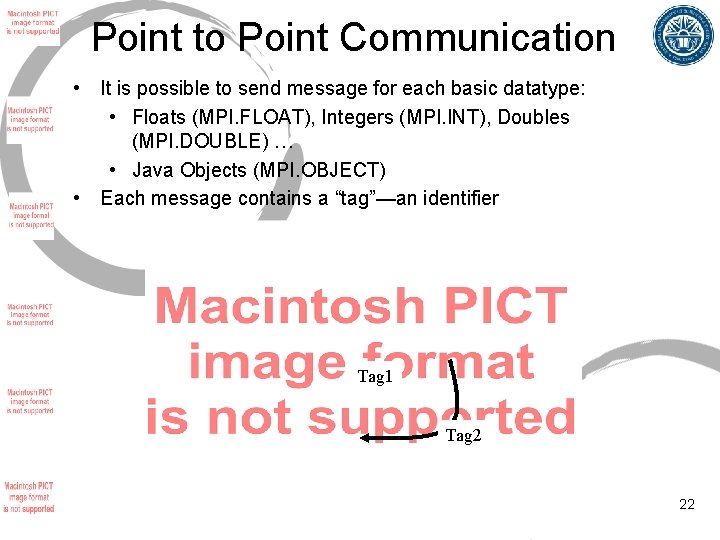

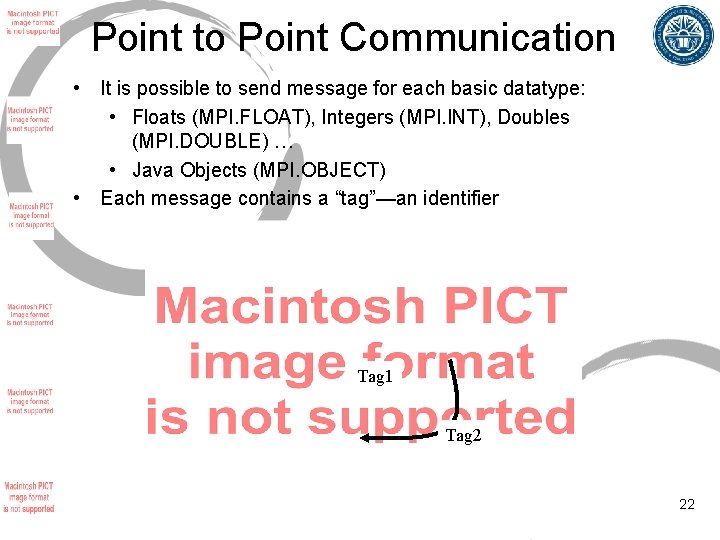

Point to Point Communication

- It is possible to send messages of each basic datatype:
  - Floats (MPI.FLOAT), Integers (MPI.INT), Doubles (MPI.DOUBLE) …
  - Java Objects (MPI.OBJECT)
- Each message contains a "tag", an identifier that distinguishes it from other messages (for example, Tag 1 and Tag 2)

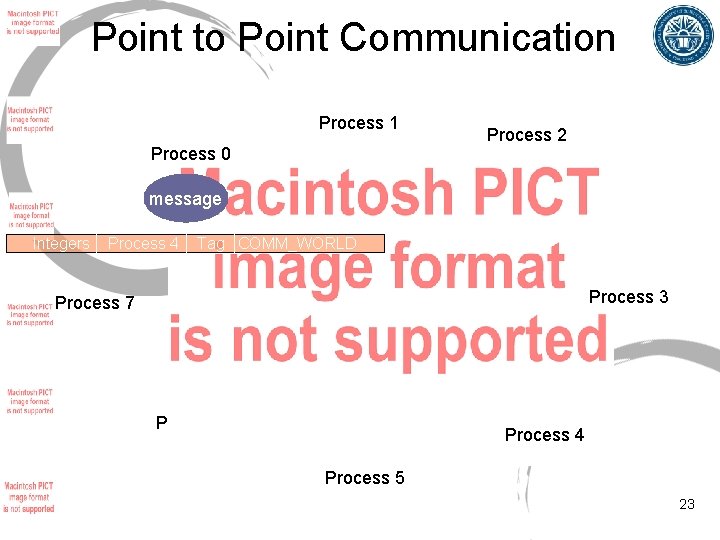

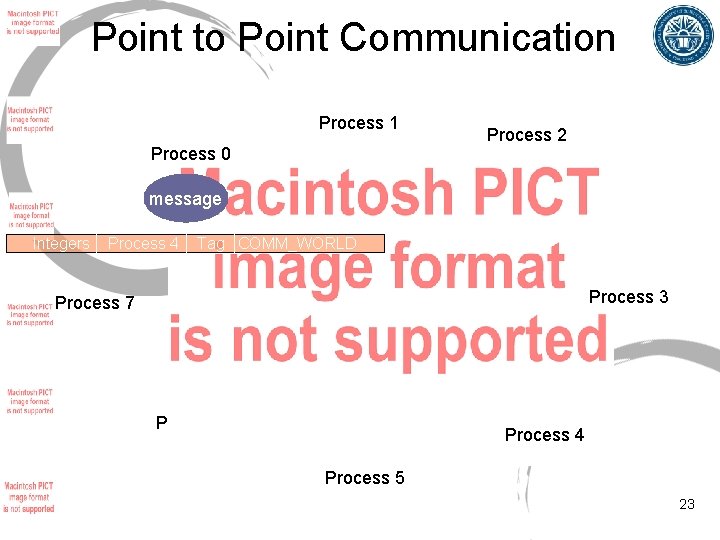

Point to Point Communication

[Figure: processes 0 to 7 inside COMM_WORLD; one process sends a message of integers, labelled with a tag, to another process.]

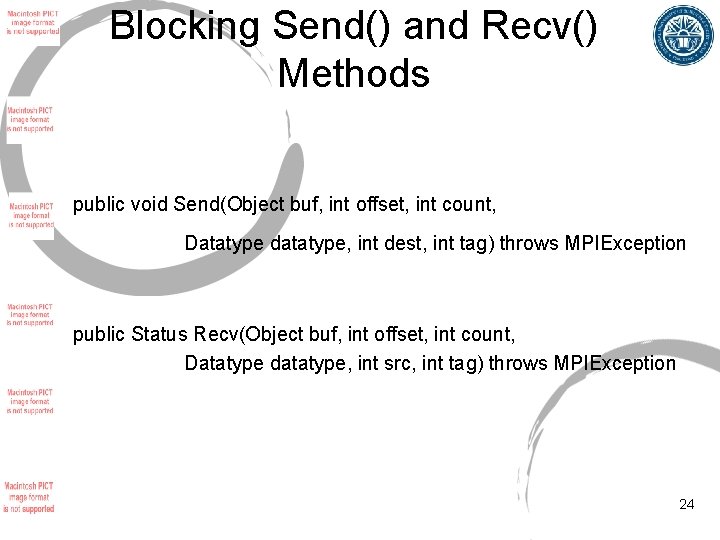

Blocking Send() and Recv() Methods

    public void Send(Object buf, int offset, int count,
                     Datatype datatype, int dest, int tag)
                     throws MPIException

    public Status Recv(Object buf, int offset, int count,
                       Datatype datatype, int src, int tag)
                       throws MPIException
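The `buf`, `offset`, and `count` parameters select a slice of an array: `count` elements starting at index `offset`. The sketch below shows only that data movement for a matched pair, using plain arrays in one JVM. It does not call MPJ Express; `BufferSliceSketch` and `transfer` are hypothetical names introduced for illustration.

```java
final class BufferSliceSketch {
    /**
     * Moves count elements from sendBuf[sendOffset..] into recvBuf[recvOffset..],
     * the effect a sender's (buf, offset, count) and a receiver's (buf, offset, count)
     * have on the two arrays once the message has been delivered.
     */
    static void transfer(int[] sendBuf, int sendOffset,
                         int[] recvBuf, int recvOffset, int count) {
        System.arraycopy(sendBuf, sendOffset, recvBuf, recvOffset, count);
    }

    public static void main(String[] args) {
        int[] sendBuf = {10, 20, 30, 40, 50};
        int[] recvBuf = new int[5];
        // Sender side: offset 1, count 3. Receiver side: offset 2, count 3.
        transfer(sendBuf, 1, recvBuf, 2, 3);
        System.out.println(java.util.Arrays.toString(recvBuf));
    }
}
```

Elements of the receive buffer outside the selected slice are left untouched, so a process can receive several messages into different regions of one array.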

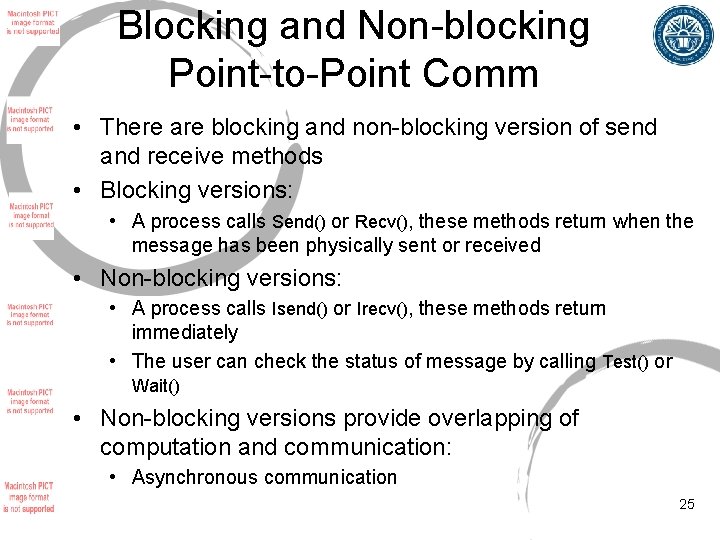

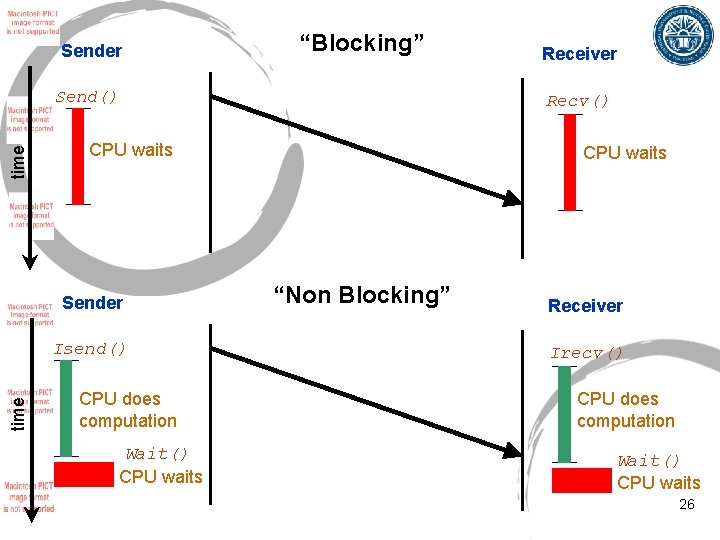

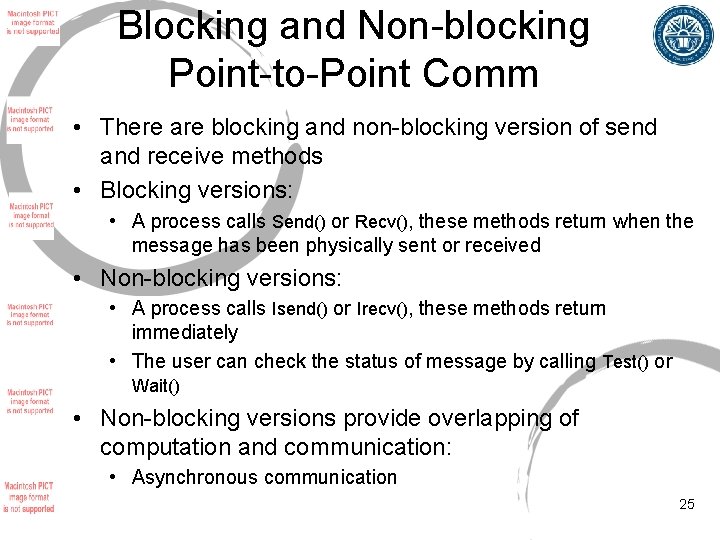

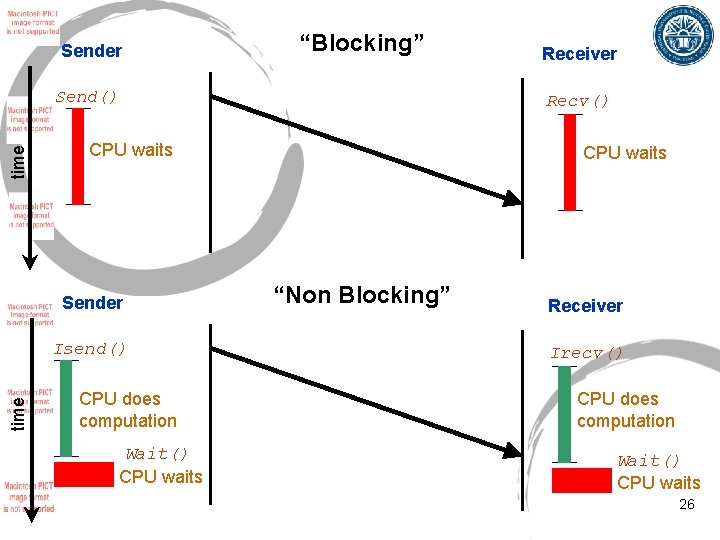

Blocking and Non-blocking Point-to-Point Comm

- There are blocking and non-blocking versions of the send and receive methods
- Blocking versions:
  - A process calls Send() or Recv(); these methods return when the message has been physically sent or received
- Non-blocking versions:
  - A process calls Isend() or Irecv(); these methods return immediately
  - The user can check the status of the message by calling Test() or Wait()
- Non-blocking versions allow computation and communication to overlap:
  - Asynchronous communication

[Figure: timelines for sender and receiver. "Blocking": the CPU waits inside Send() on the sender and inside Recv() on the receiver. "Non Blocking": after Isend() or Irecv() the CPU does computation, then waits in Wait().]

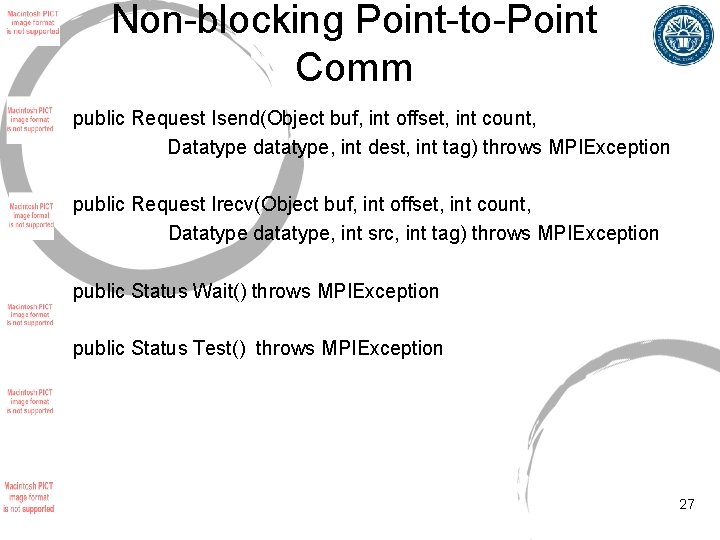

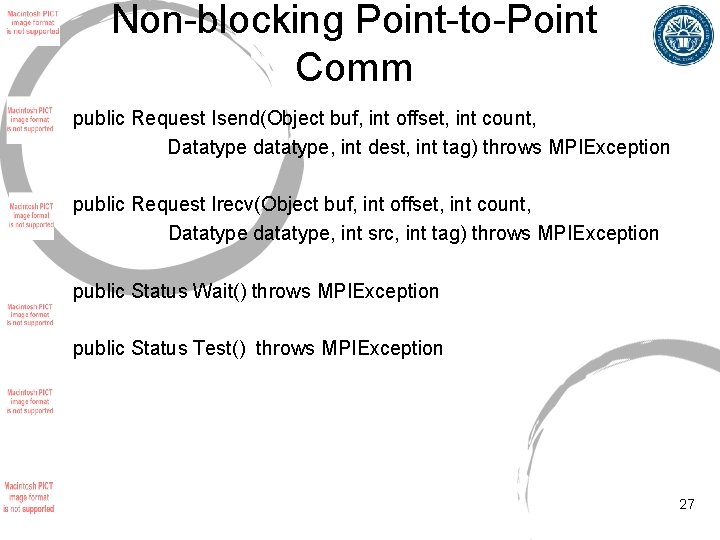

Non-blocking Point-to-Point Comm

    public Request Isend(Object buf, int offset, int count,
                         Datatype datatype, int dest, int tag)
                         throws MPIException

    public Request Irecv(Object buf, int offset, int count,
                         Datatype datatype, int src, int tag)
                         throws MPIException

    public Status Wait() throws MPIException

    public Status Test() throws MPIException
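The pattern of starting an operation, computing, and then waiting can be illustrated with the standard library alone. This is an analogy under stated assumptions, not MPJ Express code: a `CompletableFuture` plays the role of the `Request` returned by Irecv(), and `join()` plays the role of Wait(). The class and method names are hypothetical.

```java
import java.util.concurrent.CompletableFuture;

final class OverlapSketch {
    /** Overlaps a simulated incoming transfer with independent local work. */
    static long overlappedSum(int[] incoming, int[] local) {
        // Like Irecv(): start the transfer and get a handle back immediately.
        CompletableFuture<int[]> request =
                CompletableFuture.supplyAsync(() -> incoming.clone());

        // Computation that does not depend on the message runs in the meantime.
        long localSum = 0;
        for (int v : local) {
            localSum += v;
        }

        // Like Wait(): block until the message is available.
        int[] received = request.join();
        long remoteSum = 0;
        for (int v : received) {
            remoteSum += v;
        }
        return localSum + remoteSum;
    }

    public static void main(String[] args) {
        System.out.println(overlappedSum(new int[] {1, 2, 3}, new int[] {4, 5}));
    }
}
```

The benefit depends on how much independent work is available between the start of the operation and the wait; if the very next statement needs the message, a blocking call is just as good.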

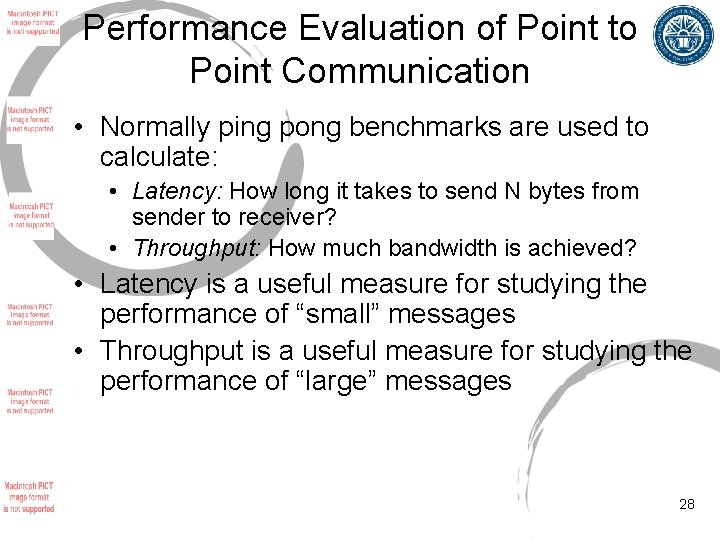

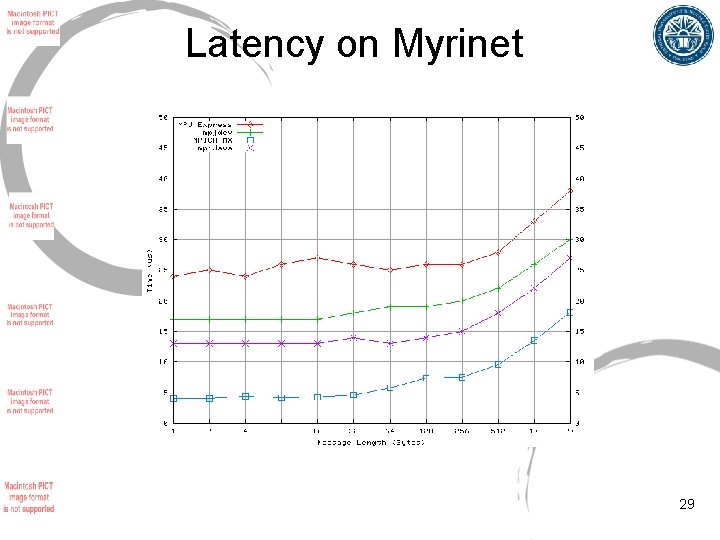

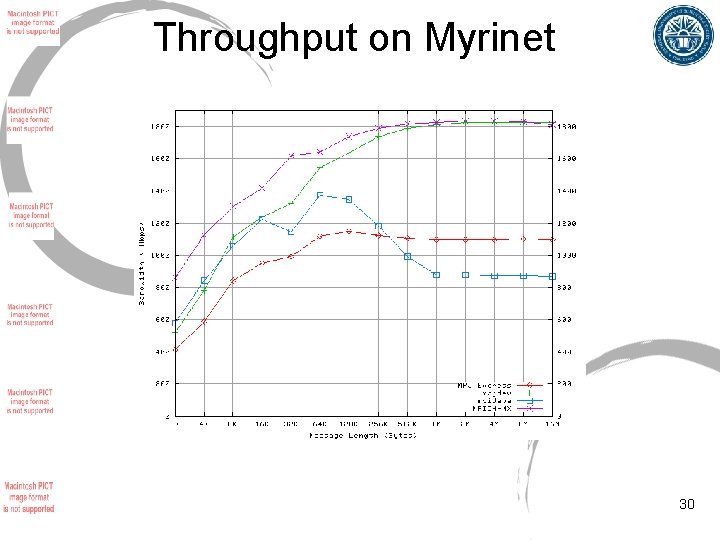

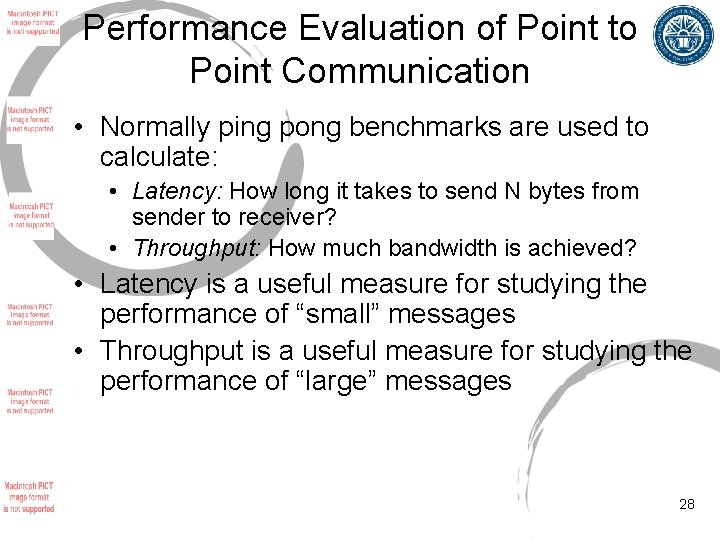

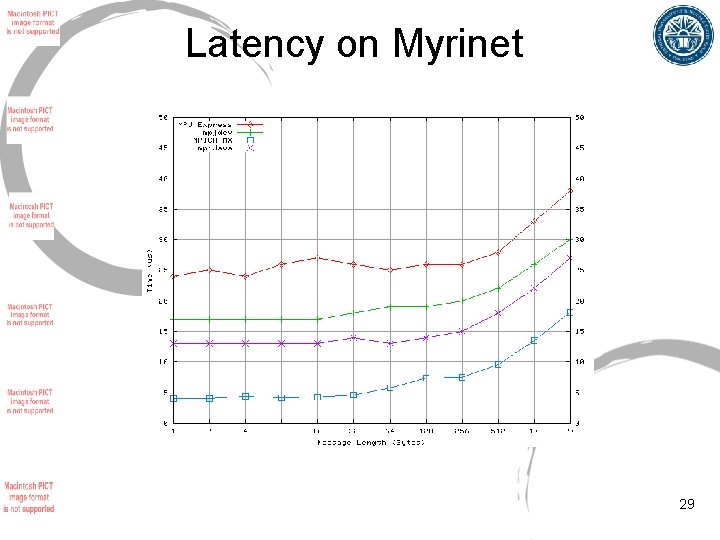

Performance Evaluation of Point to Point Communication

- Normally ping pong benchmarks are used to calculate:
  - Latency: how long does it take to send N bytes from sender to receiver?
  - Throughput: how much bandwidth is achieved?
- Latency is a useful measure for studying the performance of "small" messages
- Throughput is a useful measure for studying the performance of "large" messages
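The slides do not give the formulas, so the sketch below assumes the conventional ping pong calculation: a message is bounced back and forth many times, one-way latency is taken as half the average round-trip time, and throughput is the message size divided by that one-way time. `PingPongMetrics` and its method names are illustrative.

```java
final class PingPongMetrics {
    /** One-way latency in microseconds from the total time of all round trips. */
    static double oneWayLatencyMicros(long totalNanos, int iterations) {
        double roundTripNanos = totalNanos / (double) iterations;
        return roundTripNanos / 2.0 / 1_000.0;
    }

    /** Throughput in Mbit/s; bits per microsecond equals megabits per second. */
    static double throughputMbps(int messageBytes, double oneWayMicros) {
        return (messageBytes * 8.0) / oneWayMicros;
    }

    public static void main(String[] args) {
        // Assumed measurement: 1000 round trips of a 1 MB message took 16 seconds.
        long totalNanos = 16_000_000_000L;
        int iterations = 1000;
        int messageBytes = 1_000_000;

        double latency = oneWayLatencyMicros(totalNanos, iterations);
        System.out.println("one-way time (us): " + latency);
        System.out.println("throughput (Mbit/s): " + throughputMbps(messageBytes, latency));
    }
}
```

In a real benchmark the timed loop would wrap a Send() followed by a Recv() on one process and the mirror image on the other, usually after a few untimed warm-up iterations.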

Latency on Myrinet

Throughput on Myrinet

Collective communications

- Provided as a convenience for application developers:
  - Save significant development time
  - Efficient algorithms may be used
  - Stable (tested)
- Built on top of point-to-point communications
- These operations include:
  - Broadcast, Barrier, Reduce, Allreduce, Alltoall, Scatter, Scan, Allgather
  - Versions that allow displacements between the data

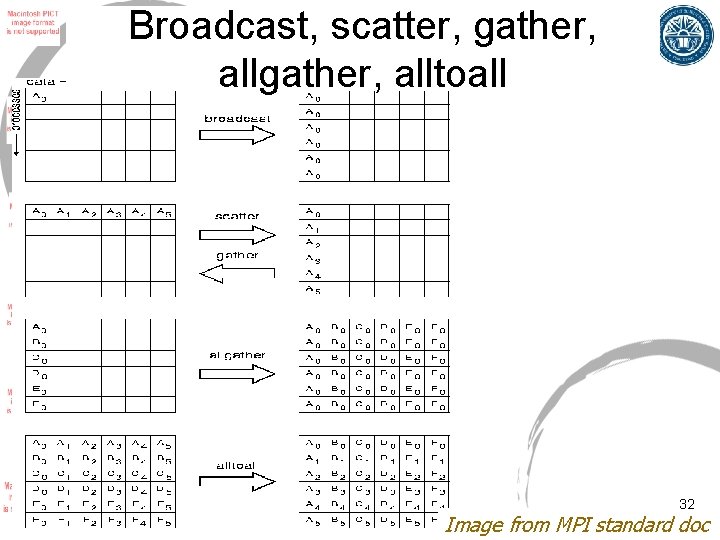

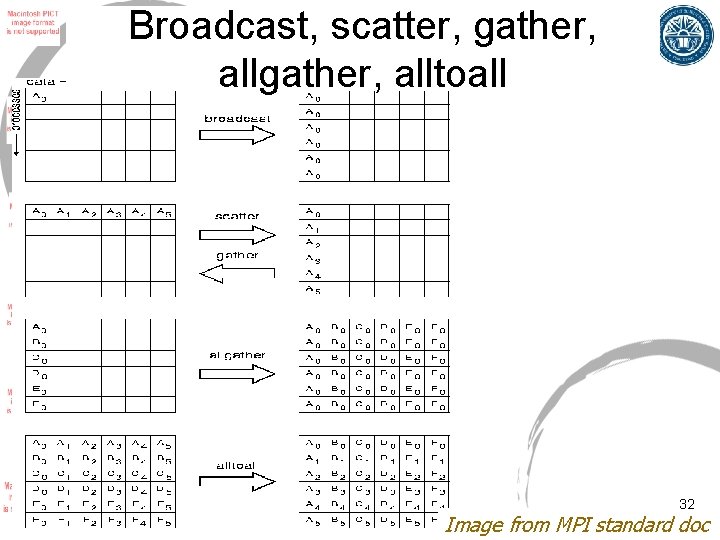

Broadcast, scatter, gather, alltoall

Image from the MPI standard document

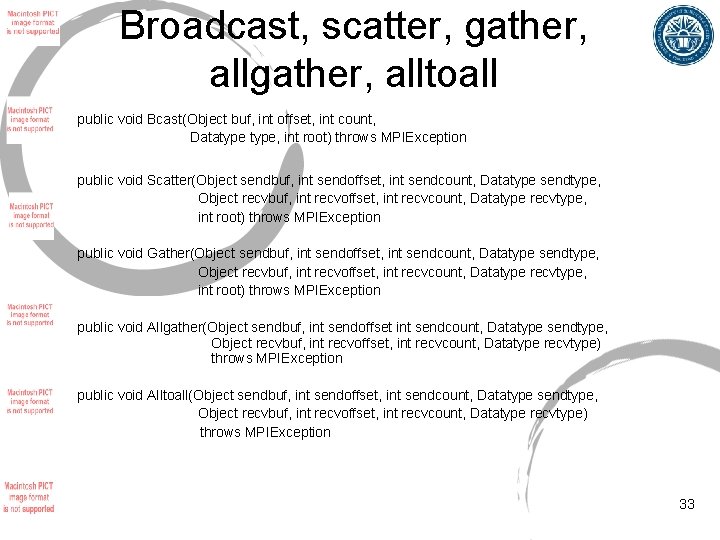

Broadcast, scatter, gather, alltoall

    public void Bcast(Object buf, int offset, int count,
                      Datatype type, int root)
                      throws MPIException

    public void Scatter(Object sendbuf, int sendoffset, int sendcount, Datatype sendtype,
                        Object recvbuf, int recvoffset, int recvcount, Datatype recvtype,
                        int root)
                        throws MPIException

    public void Gather(Object sendbuf, int sendoffset, int sendcount, Datatype sendtype,
                       Object recvbuf, int recvoffset, int recvcount, Datatype recvtype,
                       int root)
                       throws MPIException

    public void Allgather(Object sendbuf, int sendoffset, int sendcount, Datatype sendtype,
                          Object recvbuf, int recvoffset, int recvcount, Datatype recvtype)
                          throws MPIException

    public void Alltoall(Object sendbuf, int sendoffset, int sendcount, Datatype sendtype,
                         Object recvbuf, int recvoffset, int recvcount, Datatype recvtype)
                         throws MPIException
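To make the data layout of scatter and gather concrete, the sketch below simulates both in one JVM with plain arrays: row `r` of a two-dimensional array stands for the buffer held by rank `r`. It illustrates the index arithmetic only and does not call MPJ Express; `ScatterGatherSketch` and its methods are hypothetical names.

```java
final class ScatterGatherSketch {
    /** Scatter: rank r receives elements [r*count, (r+1)*count) of the root buffer. */
    static int[][] scatter(int[] rootBuf, int size, int count) {
        int[][] recvBufs = new int[size][count];
        for (int r = 0; r < size; r++) {
            System.arraycopy(rootBuf, r * count, recvBufs[r], 0, count);
        }
        return recvBufs;
    }

    /** Gather: the root concatenates count elements from every rank, in rank order. */
    static int[] gather(int[][] sendBufs, int count) {
        int[] rootBuf = new int[sendBufs.length * count];
        for (int r = 0; r < sendBufs.length; r++) {
            System.arraycopy(sendBufs[r], 0, rootBuf, r * count, count);
        }
        return rootBuf;
    }

    public static void main(String[] args) {
        int[] rootBuf = {1, 2, 3, 4, 5, 6};
        int[][] chunks = scatter(rootBuf, 3, 2);
        System.out.println(java.util.Arrays.deepToString(chunks));
        System.out.println(java.util.Arrays.toString(gather(chunks, 2)));
    }
}
```

Gather is the inverse of scatter here, so scattering a buffer and gathering the pieces returns the original contents.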

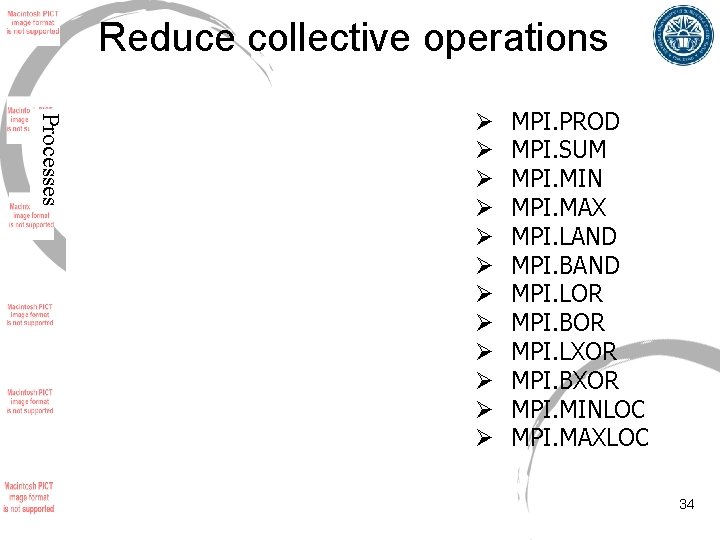

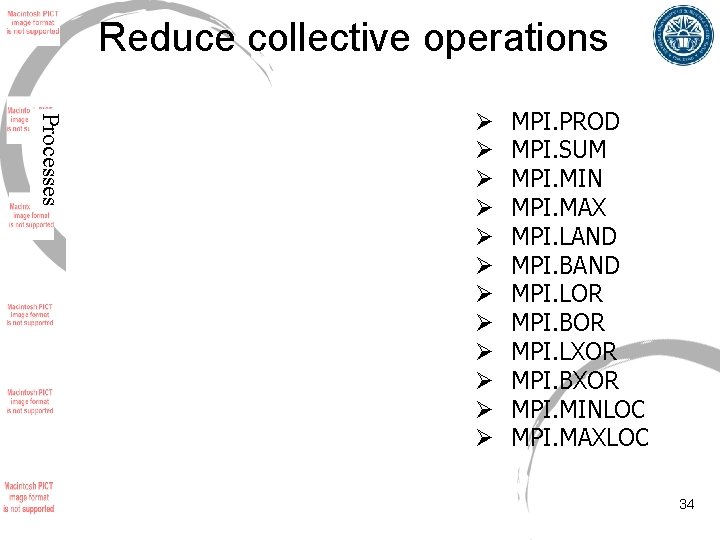

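Reduce collective operations

[Figure: values contributed by the processes are combined into a single result.]

Predefined reduction operations:

- MPI.MAX, MPI.MIN
- MPI.SUM, MPI.PROD
- MPI.LAND, MPI.BAND
- MPI.LOR, MPI.BOR
- MPI.LXOR, MPI.BXOR
- MPI.MINLOC, MPI.MAXLOC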

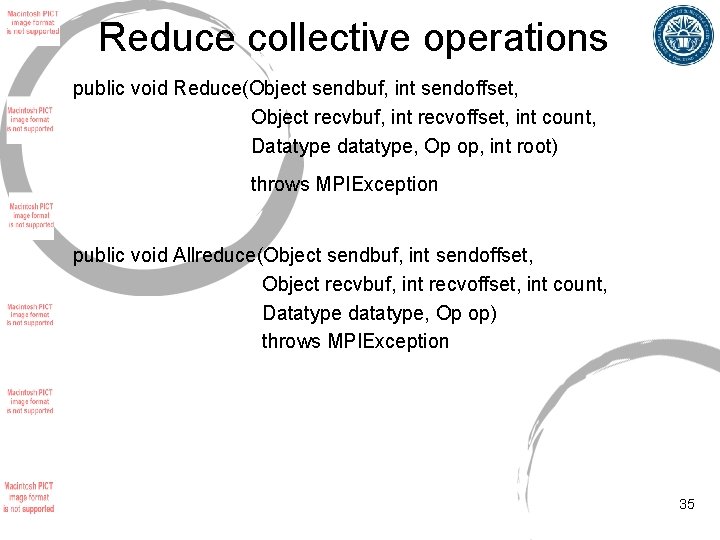

Reduce collective operations

    public void Reduce(Object sendbuf, int sendoffset,
                       Object recvbuf, int recvoffset,
                       int count, Datatype datatype, Op op, int root)
                       throws MPIException

    public void Allreduce(Object sendbuf, int sendoffset,
                          Object recvbuf, int recvoffset,
                          int count, Datatype datatype, Op op)
                          throws MPIException
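A reduction combines the buffers of all processes element by element. The sketch below simulates that result in one JVM, with row `r` standing for the send buffer of rank `r` and a standard `IntBinaryOperator` standing in for the `Op` argument. It is an illustration of the semantics, not MPJ Express code, and the names are hypothetical.

```java
import java.util.function.IntBinaryOperator;

final class ReduceSketch {
    /** Element-wise reduction over all ranks; result[i] combines sendBufs[r][i] for every r. */
    static int[] reduce(int[][] sendBufs, IntBinaryOperator op) {
        int[] result = sendBufs[0].clone(); // start from rank 0's contribution
        for (int r = 1; r < sendBufs.length; r++) {
            for (int i = 0; i < result.length; i++) {
                result[i] = op.applyAsInt(result[i], sendBufs[r][i]);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        int[][] sendBufs = {{1, 5}, {2, 3}, {4, 0}}; // three ranks, count = 2
        System.out.println(java.util.Arrays.toString(reduce(sendBufs, Integer::sum)));
        System.out.println(java.util.Arrays.toString(reduce(sendBufs, Math::max)));
    }
}
```

With Reduce() only the root ends up holding this result, whereas with Allreduce() every process does.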

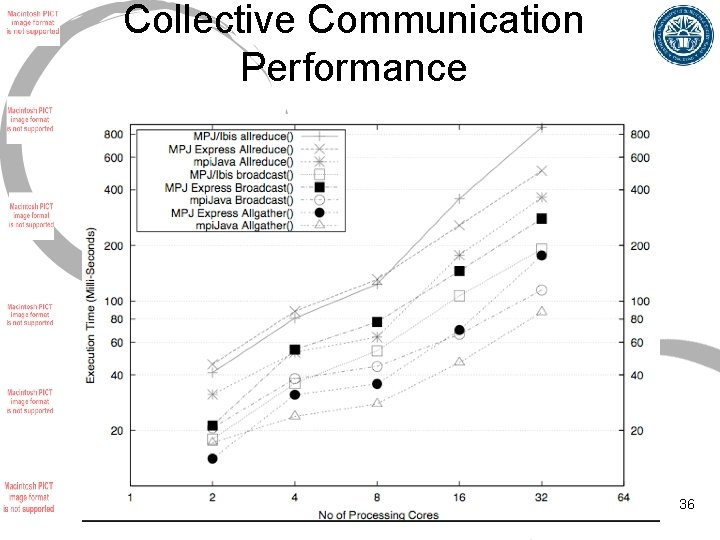

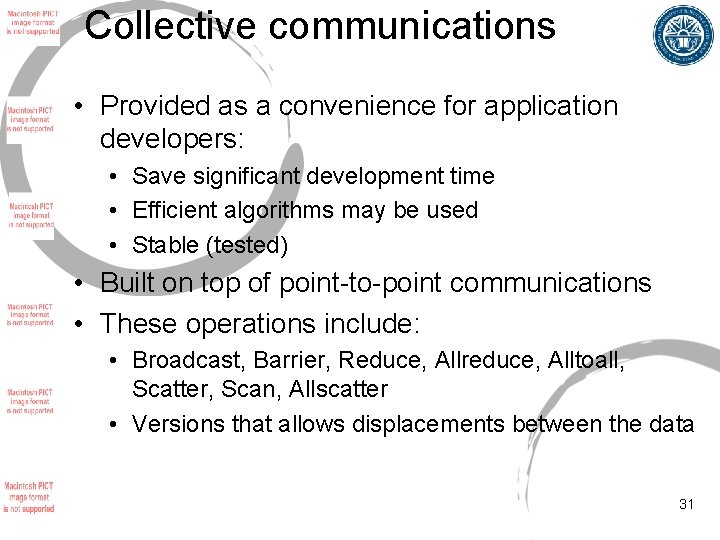

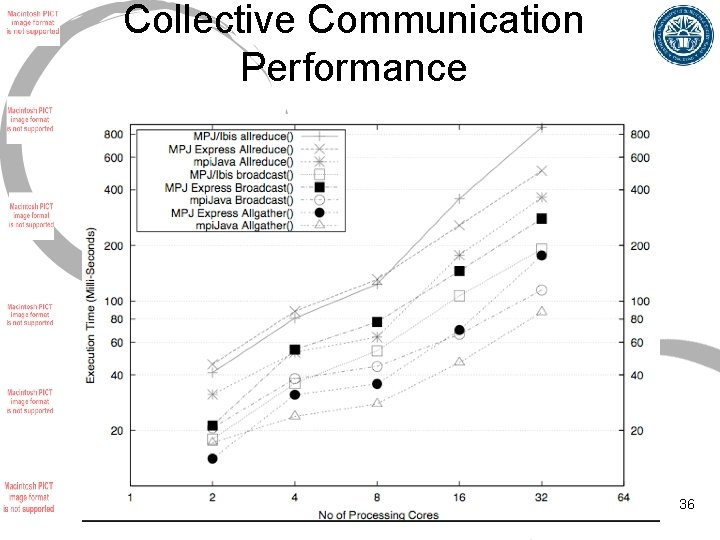

Collective Communication Performance

Summary

- MPJ Express is a Java messaging system that can be used to write parallel applications:
  - MPJ/Ibis and mpiJava are other similar software packages
- MPJ Express provides point-to-point communication methods like Send() and Recv():
  - Blocking and non-blocking versions
- Collective communications are also supported
- Feel free to contact me if you have any queries