Parallel Programming Examples: Assignment 2

- Slides: 30


Parallel Programming

- Goal: parallelize some simple sequential programs
  - Understand some of the challenges involved
  - Not tied to any specific language
- Homework
  - Implement each parallel program and check its performance
  - Try to improve performance with better parallelization strategies

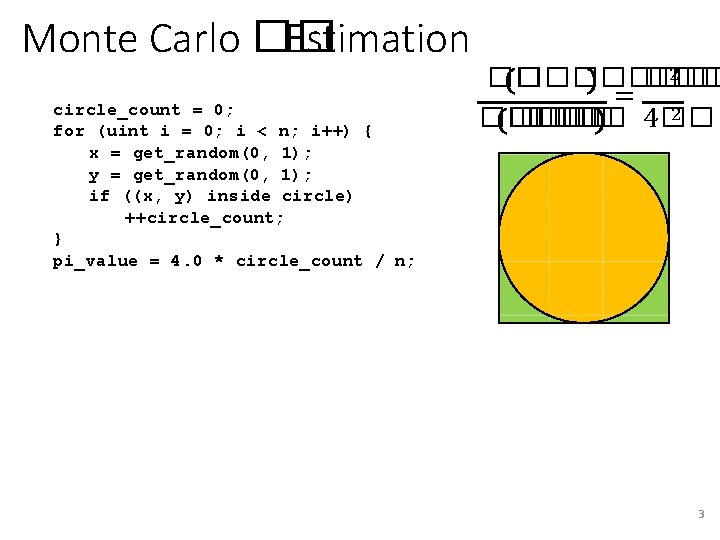

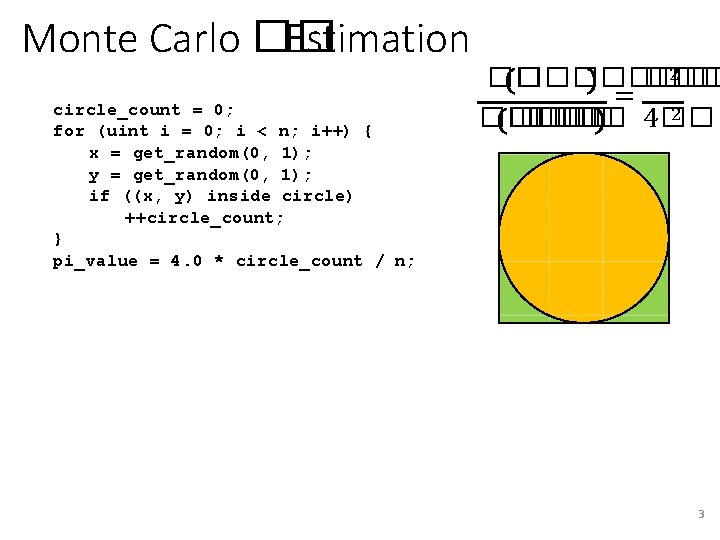

Monte Carlo π Estimation

$$\frac{\text{area of circle}}{\text{area of square}} = \frac{\pi r^2}{(2r)^2} = \frac{\pi}{4}$$

```
circle_count = 0;
for (uint i = 0; i < n; i++) {
    x = get_random(0, 1);
    y = get_random(0, 1);
    if ((x, y) inside circle)
        ++circle_count;
}
pi_value = 4.0 * circle_count / n;
```
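The sequential loop can be made concrete as a minimal C++ sketch. It assumes "inside circle" means the quarter circle $x^2 + y^2 \le 1$ within the unit square (which has the same $\pi/4$ area ratio) and uses `std::mt19937_64` with `std::uniform_real_distribution` in place of `get_random(0, 1)`; the function name and the fixed seed are illustrative choices.

```cpp
#include <cstdint>
#include <random>

// Sequential Monte Carlo estimate of pi (hypothetical helper name).
// Assumption: "inside circle" = quarter circle x^2 + y^2 <= 1 in the unit square.
double estimate_pi_sequential(std::uint64_t n, std::uint32_t seed = 42) {
    std::mt19937_64 rng(seed);                               // fixed seed: reproducible runs
    std::uniform_real_distribution<double> dist(0.0, 1.0);   // stands in for get_random(0, 1)
    std::uint64_t circle_count = 0;
    for (std::uint64_t i = 0; i < n; i++) {
        double x = dist(rng);
        double y = dist(rng);
        if (x * x + y * y <= 1.0) ++circle_count;            // (x, y) inside circle
    }
    return 4.0 * circle_count / n;                           // hit ratio approximates pi/4
}
```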

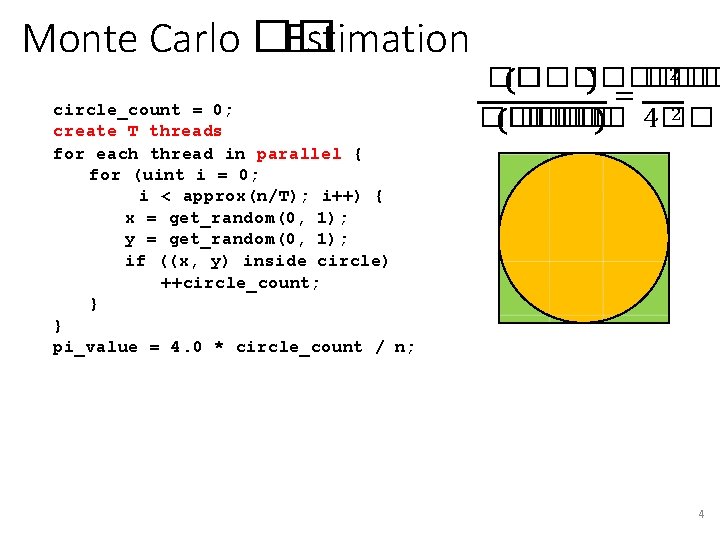

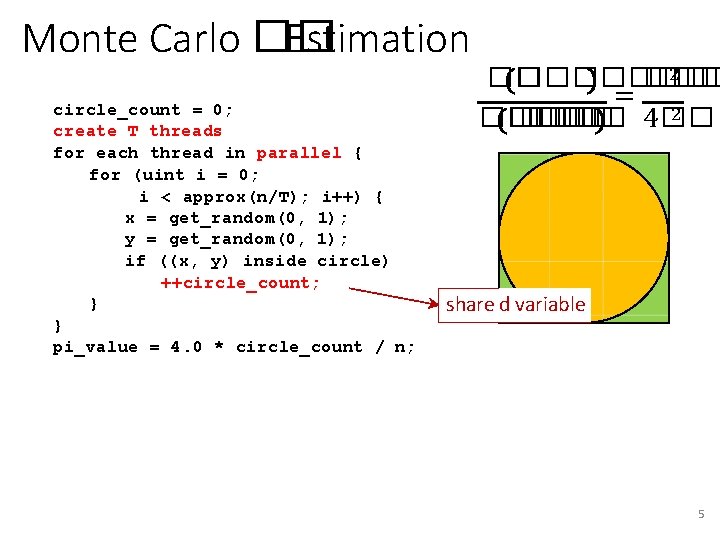

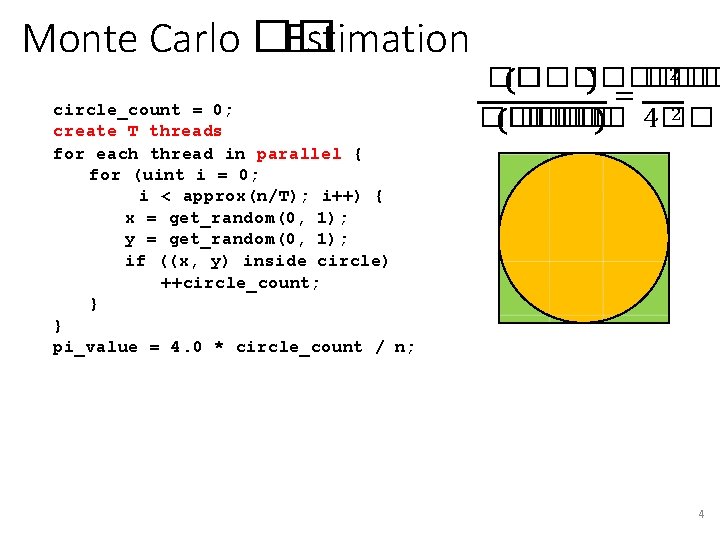

Monte Carlo π Estimation

```
circle_count = 0;
create T threads
for each thread in parallel {
    for (uint i = 0; i < approx(n/T); i++) {
        x = get_random(0, 1);
        y = get_random(0, 1);
        if ((x, y) inside circle)
            ++circle_count;
    }
}
pi_value = 4.0 * circle_count / n;
```

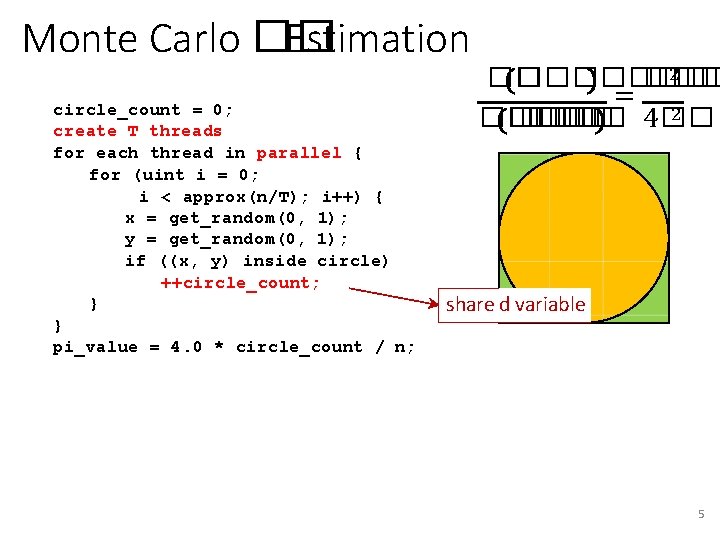

`circle_count` is a shared variable: all threads increment it concurrently without synchronization, so increments can be lost (a data race).

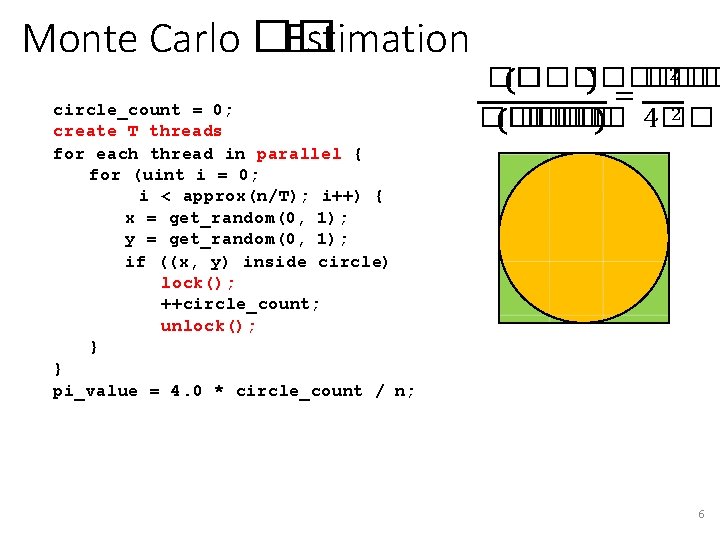

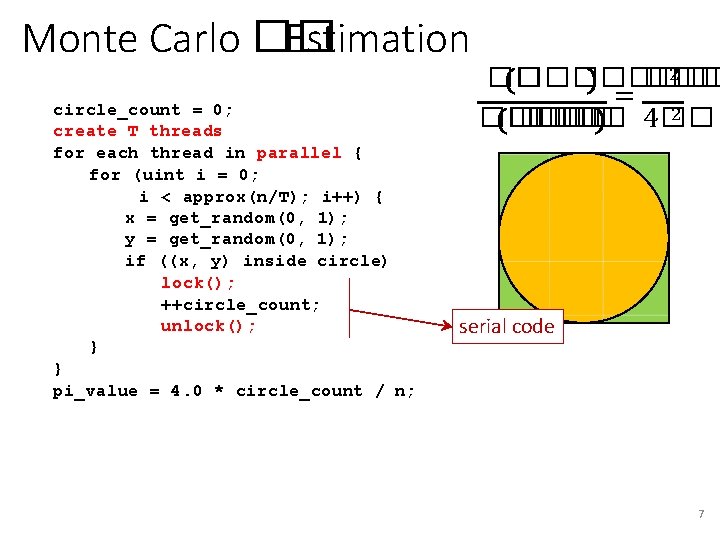

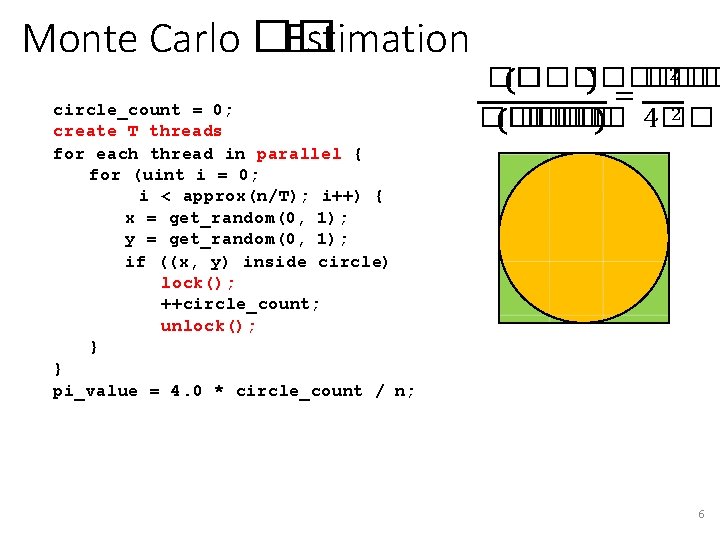

Monte Carlo π Estimation

```
circle_count = 0;
create T threads
for each thread in parallel {
    for (uint i = 0; i < approx(n/T); i++) {
        x = get_random(0, 1);
        y = get_random(0, 1);
        if ((x, y) inside circle) {
            lock();
            ++circle_count;
            unlock();
        }
    }
}
pi_value = 4.0 * circle_count / n;
```

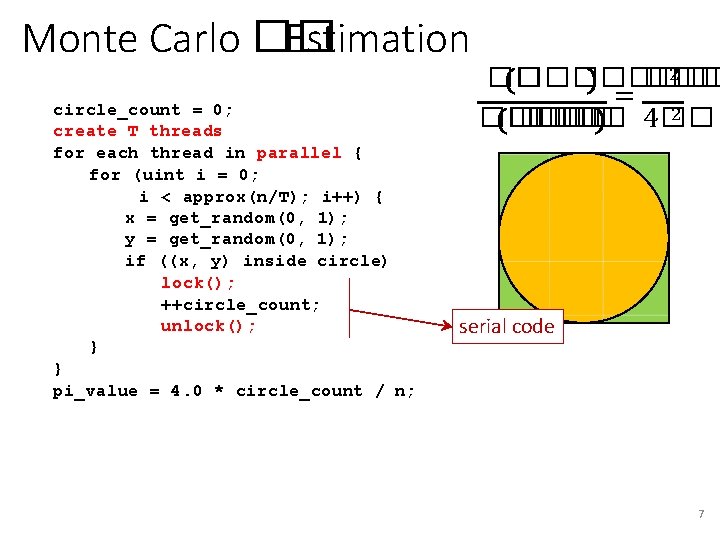

The locked increment is serial code: only one thread at a time can execute it, so every hit has to wait for the lock.
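A minimal C++ sketch of the lock-per-hit version, assuming `std::mutex` with `std::lock_guard` as `lock()`/`unlock()` and an even split of `n` across threads as `approx(n/T)`. The function name and the per-thread seeds are hypothetical. Each thread owns its random generator, since a shared generator would be a second shared variable.

```cpp
#include <cstdint>
#include <mutex>
#include <random>
#include <thread>
#include <vector>

// Parallel pi estimate: every hit updates the shared counter under a mutex.
// Correct, but the critical section serializes the hot path.
double estimate_pi_lock_per_hit(std::uint64_t n, unsigned T) {
    std::uint64_t circle_count = 0;  // shared variable, guarded by m
    std::mutex m;
    std::vector<std::thread> threads;
    for (unsigned tid = 0; tid < T; tid++) {
        threads.emplace_back([&, tid] {
            std::mt19937_64 rng(1234 + tid);  // per-thread generator (arbitrary seeds)
            std::uniform_real_distribution<double> dist(0.0, 1.0);
            // approx(n/T): the first n % T threads take one extra sample.
            std::uint64_t my_n = n / T + (tid < n % T ? 1 : 0);
            for (std::uint64_t i = 0; i < my_n; i++) {
                double x = dist(rng), y = dist(rng);
                if (x * x + y * y <= 1.0) {
                    std::lock_guard<std::mutex> guard(m);  // lock() ... unlock()
                    ++circle_count;
                }
            }
        });
    }
    for (auto& t : threads) t.join();
    return 4.0 * circle_count / n;
}
```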

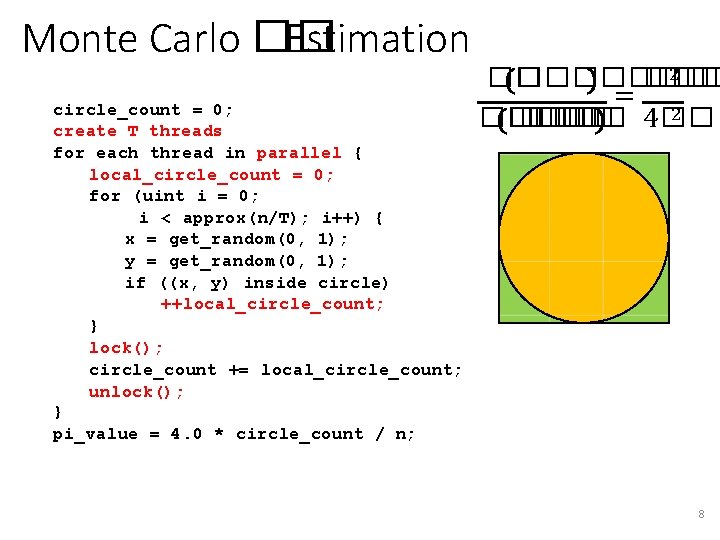

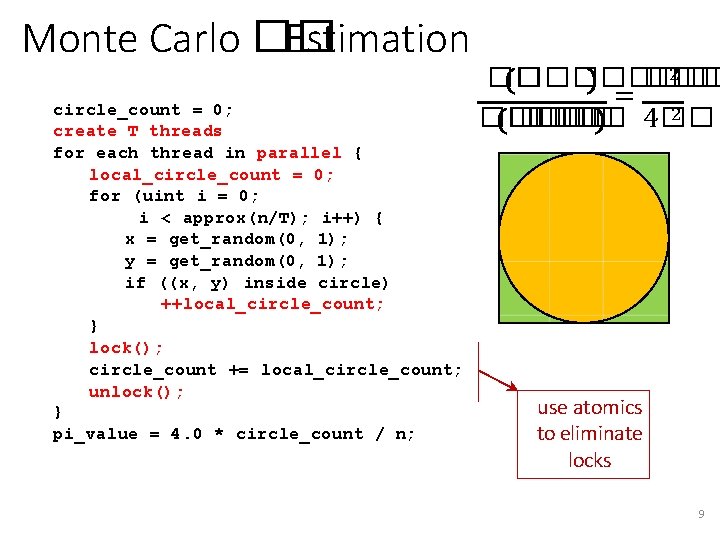

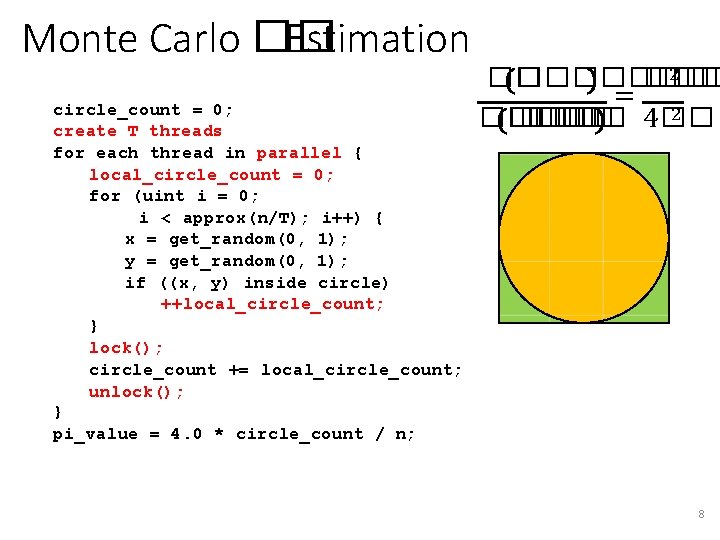

Monte Carlo π Estimation

```
circle_count = 0;
create T threads
for each thread in parallel {
    local_circle_count = 0;
    for (uint i = 0; i < approx(n/T); i++) {
        x = get_random(0, 1);
        y = get_random(0, 1);
        if ((x, y) inside circle)
            ++local_circle_count;
    }
    lock();
    circle_count += local_circle_count;
    unlock();
}
pi_value = 4.0 * circle_count / n;
```

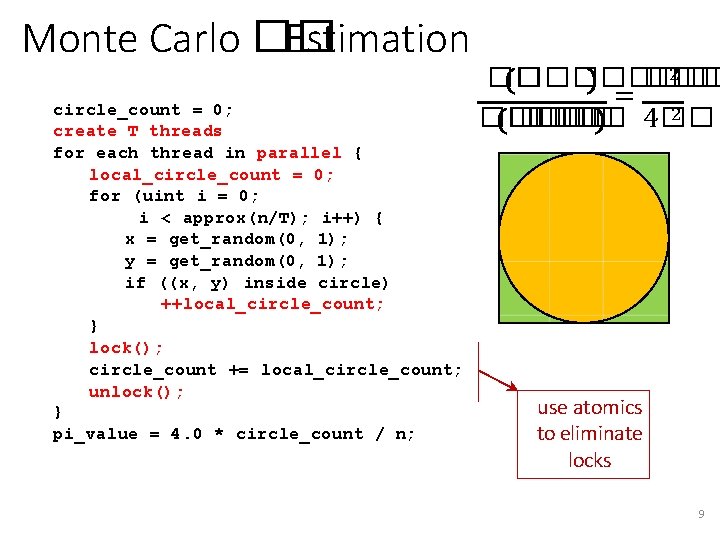

Next step: use atomics to eliminate the locks.
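The private-counter version as a hedged C++ sketch, under the same assumptions as a lock-based version (hypothetical function name and seeds, `std::mutex` as the lock, an even split of `n`): the lock is now taken once per thread instead of once per hit.

```cpp
#include <cstdint>
#include <mutex>
#include <random>
#include <thread>
#include <vector>

// Each thread counts hits in a private local_circle_count and takes the
// mutex only once, to merge its total into the shared circle_count.
double estimate_pi_local_then_lock(std::uint64_t n, unsigned T) {
    std::uint64_t circle_count = 0;  // shared, guarded by m
    std::mutex m;
    std::vector<std::thread> threads;
    for (unsigned tid = 0; tid < T; tid++) {
        threads.emplace_back([&, tid] {
            std::mt19937_64 rng(1234 + tid);  // per-thread generator (arbitrary seeds)
            std::uniform_real_distribution<double> dist(0.0, 1.0);
            std::uint64_t my_n = n / T + (tid < n % T ? 1 : 0);
            std::uint64_t local_circle_count = 0;  // private: no synchronization needed
            for (std::uint64_t i = 0; i < my_n; i++) {
                double x = dist(rng), y = dist(rng);
                if (x * x + y * y <= 1.0) ++local_circle_count;
            }
            std::lock_guard<std::mutex> guard(m);  // one lock per thread
            circle_count += local_circle_count;
        });
    }
    for (auto& t : threads) t.join();
    return 4.0 * circle_count / n;
}
```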

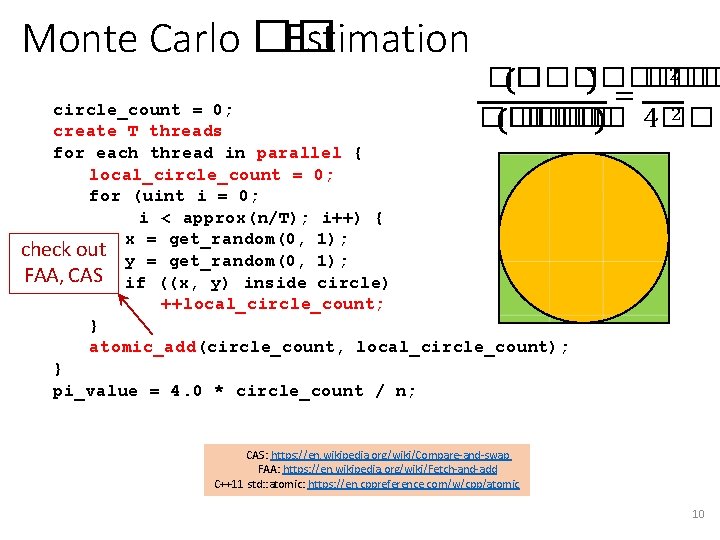

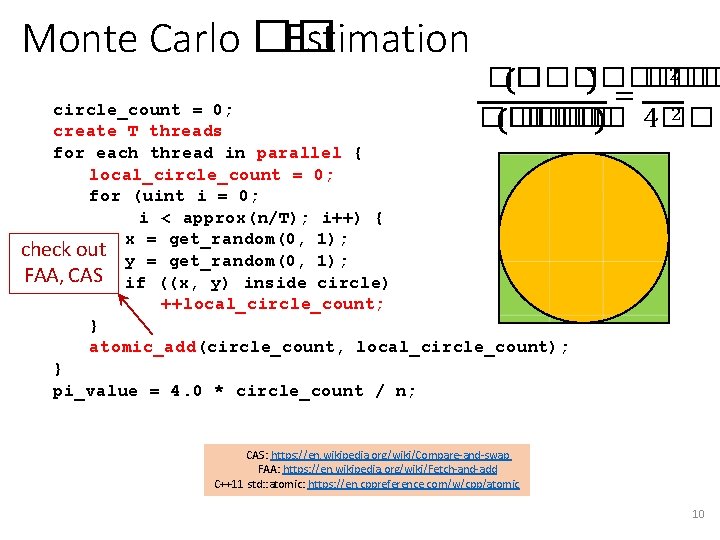

Monte Carlo π Estimation

```
circle_count = 0;
create T threads
for each thread in parallel {
    local_circle_count = 0;
    for (uint i = 0; i < approx(n/T); i++) {
        x = get_random(0, 1);
        y = get_random(0, 1);
        if ((x, y) inside circle)
            ++local_circle_count;
    }
    atomic_add(circle_count, local_circle_count);
}
pi_value = 4.0 * circle_count / n;
```

Check out FAA and CAS:

- CAS: https://en.wikipedia.org/wiki/Compare-and-swap
- FAA: https://en.wikipedia.org/wiki/Fetch-and-add
- C++11 `std::atomic`: https://en.cppreference.com/w/cpp/atomic
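A C++ sketch of the atomic version, assuming `std::atomic<std::uint64_t>` for `circle_count`. `fetch_add` is the FAA form of `atomic_add`; the hypothetical helper `cas_add` shows how the same update can be built from a compare-and-swap retry loop with `compare_exchange_weak`. Function names, seeds and the `use_cas` switch are illustrative.

```cpp
#include <atomic>
#include <cstdint>
#include <random>
#include <thread>
#include <vector>

// atomic_add built from CAS (hypothetical helper). On failure,
// compare_exchange_weak reloads `expected` with the current value; retry.
void cas_add(std::atomic<std::uint64_t>& target, std::uint64_t delta) {
    std::uint64_t expected = target.load();
    while (!target.compare_exchange_weak(expected, expected + delta)) {
    }
}

double estimate_pi_atomic(std::uint64_t n, unsigned T, bool use_cas = false) {
    std::atomic<std::uint64_t> circle_count{0};
    std::vector<std::thread> threads;
    for (unsigned tid = 0; tid < T; tid++) {
        threads.emplace_back([&, tid] {
            std::mt19937_64 rng(1234 + tid);  // per-thread generator (arbitrary seeds)
            std::uniform_real_distribution<double> dist(0.0, 1.0);
            std::uint64_t my_n = n / T + (tid < n % T ? 1 : 0);
            std::uint64_t local_circle_count = 0;
            for (std::uint64_t i = 0; i < my_n; i++) {
                double x = dist(rng), y = dist(rng);
                if (x * x + y * y <= 1.0) ++local_circle_count;
            }
            if (use_cas) cas_add(circle_count, local_circle_count);  // CAS loop
            else circle_count.fetch_add(local_circle_count);         // FAA
        });
    }
    for (auto& t : threads) t.join();
    return 4.0 * circle_count.load() / n;
}
```

With the same seeds, both paths merge the same per-thread counts, so they return the same estimate; only the merge mechanism differs.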

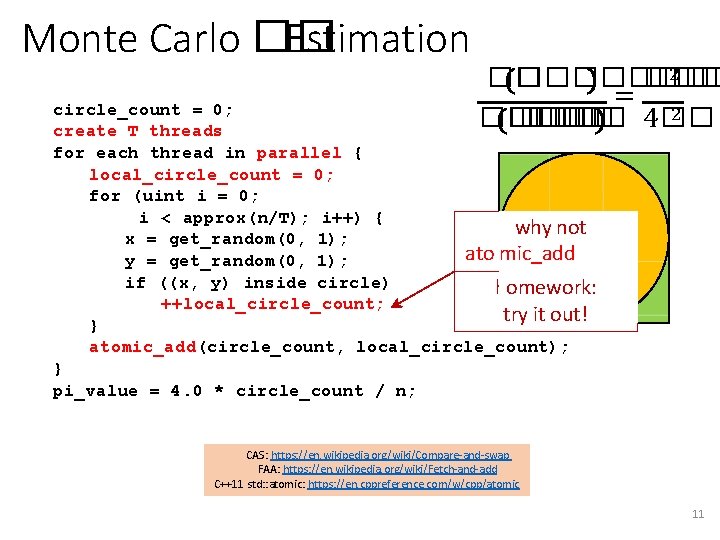

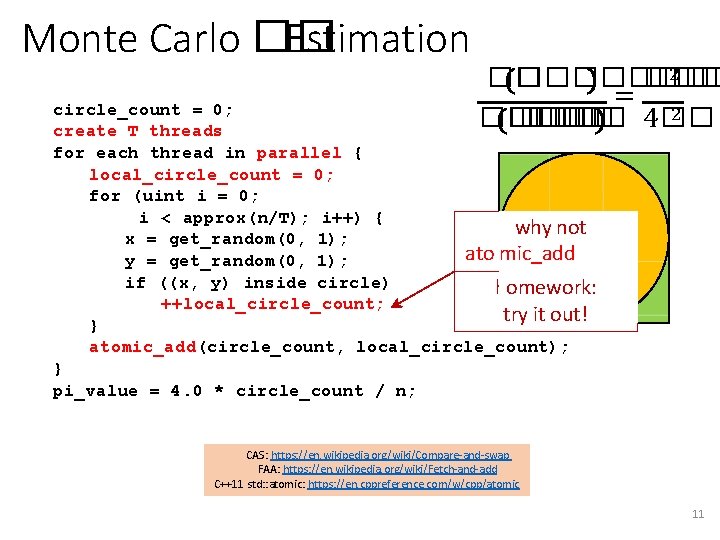

Why not call `atomic_add` directly on `circle_count` inside the loop, once per hit? Homework: try it out!
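For the homework question, a hedged C++ sketch of the alternative: every hit does its own `fetch_add` on the shared atomic, with no local counter. The hypothetical `time_ms` helper (built on `std::chrono::steady_clock`) is one way to compare this against the local-count version; no timing results are claimed here.

```cpp
#include <atomic>
#include <chrono>
#include <cstdint>
#include <random>
#include <thread>
#include <vector>

// One atomic fetch_add per hit (hypothetical function name).
// memory_order_relaxed is enough for a pure counter here, because
// join() makes every increment visible before the final load.
double estimate_pi_atomic_per_hit(std::uint64_t n, unsigned T) {
    std::atomic<std::uint64_t> circle_count{0};
    std::vector<std::thread> threads;
    for (unsigned tid = 0; tid < T; tid++) {
        threads.emplace_back([&, tid] {
            std::mt19937_64 rng(1234 + tid);
            std::uniform_real_distribution<double> dist(0.0, 1.0);
            std::uint64_t my_n = n / T + (tid < n % T ? 1 : 0);
            for (std::uint64_t i = 0; i < my_n; i++) {
                double x = dist(rng), y = dist(rng);
                if (x * x + y * y <= 1.0)
                    circle_count.fetch_add(1, std::memory_order_relaxed);
            }
        });
    }
    for (auto& t : threads) t.join();
    return 4.0 * circle_count.load() / n;
}

// Wall-clock time of one call to f, in milliseconds (hypothetical helper).
template <typename F>
double time_ms(F f) {
    auto start = std::chrono::steady_clock::now();
    f();
    std::chrono::duration<double, std::milli> elapsed =
        std::chrono::steady_clock::now() - start;
    return elapsed.count();
}
```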

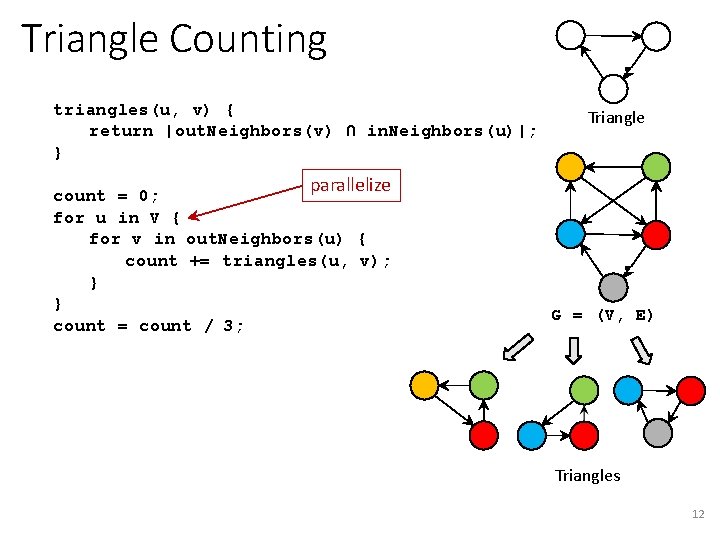

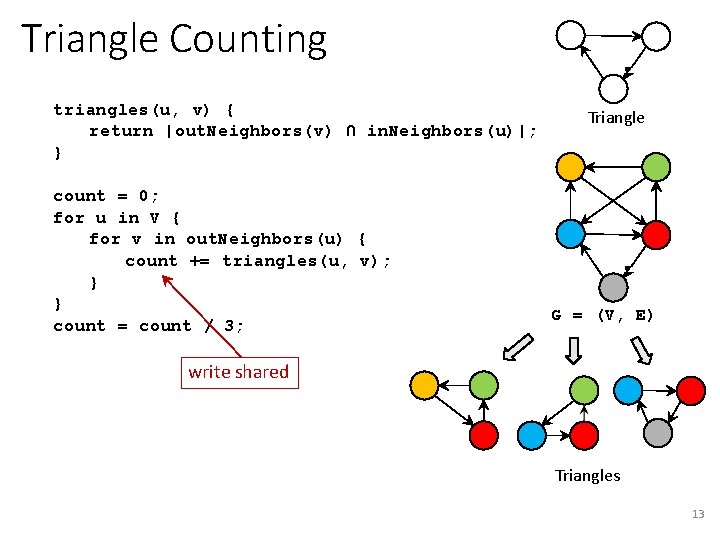

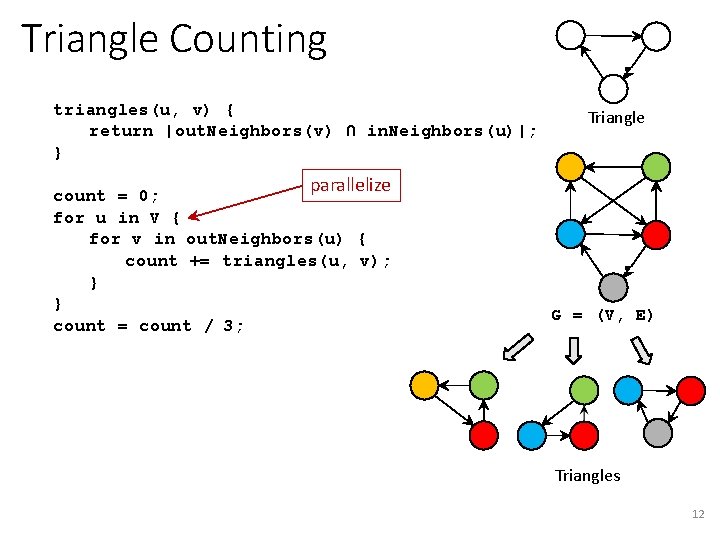

Triangle Counting

(Figure: an example graph G = (V, E) and its triangles.)

```
triangles(u, v) {
    return |outNeighbors(v) ∩ inNeighbors(u)|;
}

count = 0;
for u in V {
    for v in outNeighbors(u) {
        count += triangles(u, v);
    }
}
count = count / 3;
```

Task: parallelize this code.

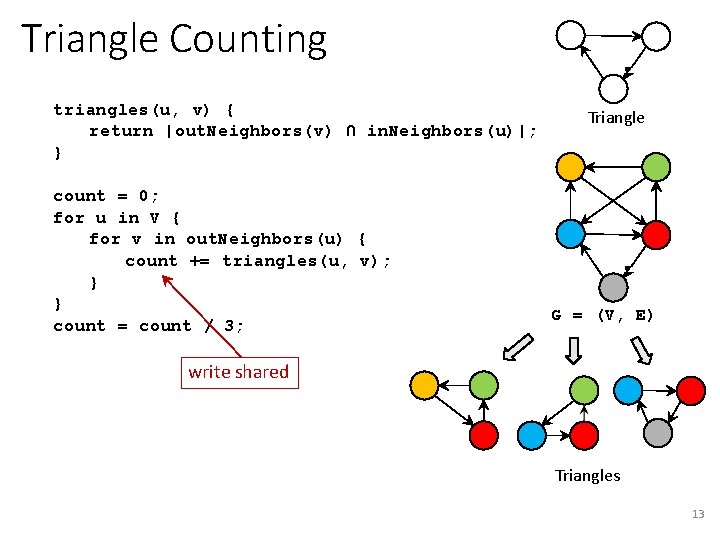

`count` is written by every iteration, so it becomes a shared write target as soon as the loop over V runs in parallel.
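A sequential C++ sketch of the counting loop, under assumptions the slides leave open: the graph is directed and stored as sorted out- and in-neighbor lists without duplicate edges, and a triangle is a directed 3-cycle u → v → w → u. That reading matches the formula, since w lies in outNeighbors(v) ∩ inNeighbors(u), and each cycle is found once per edge, hence the division by 3. `Graph`, `make_graph` and the merge-based intersection are illustrative.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

// Assumed representation: sorted out- and in-neighbor lists, no duplicate edges.
struct Graph {
    std::vector<std::vector<int>> out, in;
};

Graph make_graph(int num_vertices, const std::vector<std::pair<int, int>>& edges) {
    Graph g;
    g.out.resize(num_vertices);
    g.in.resize(num_vertices);
    for (const auto& e : edges) {
        g.out[e.first].push_back(e.second);  // edge e.first -> e.second
        g.in[e.second].push_back(e.first);
    }
    for (auto& list : g.out) std::sort(list.begin(), list.end());
    for (auto& list : g.in) std::sort(list.begin(), list.end());
    return g;
}

// |outNeighbors(v) intersect inNeighbors(u)|: vertices w with v -> w and
// w -> u, counted by merging the two sorted lists.
std::uint64_t triangles(const Graph& g, int u, int v) {
    const std::vector<int>& a = g.out[v];
    const std::vector<int>& b = g.in[u];
    std::uint64_t common = 0;
    std::size_t i = 0, j = 0;
    while (i < a.size() && j < b.size()) {
        if (a[i] < b[j]) ++i;
        else if (a[i] > b[j]) ++j;
        else { ++common; ++i; ++j; }
    }
    return common;
}

std::uint64_t count_triangles_sequential(const Graph& g) {
    std::uint64_t count = 0;
    for (int u = 0; u < static_cast<int>(g.out.size()); u++)
        for (int v : g.out[u]) count += triangles(g, u, v);
    return count / 3;  // each directed 3-cycle is found once per edge
}
```

For example, the edges 0 → 1, 1 → 2, 2 → 0 form one directed cycle and give a count of 1, while a transitive triple such as 2 → 3, 3 → 4, 2 → 4 contributes nothing.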

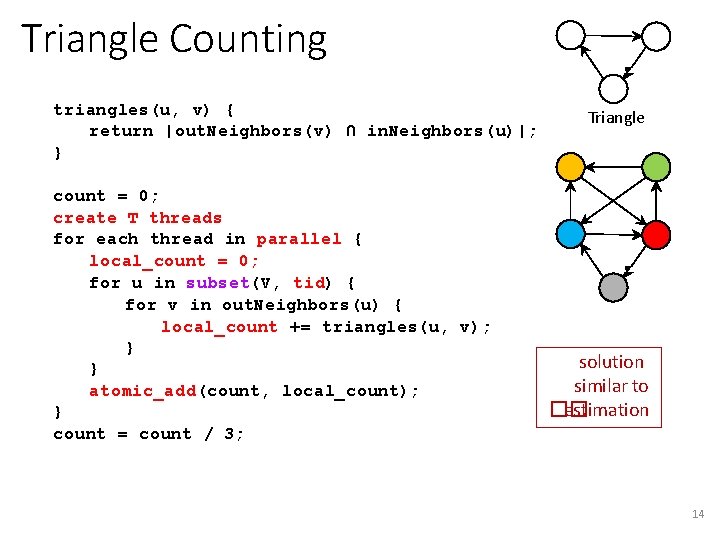

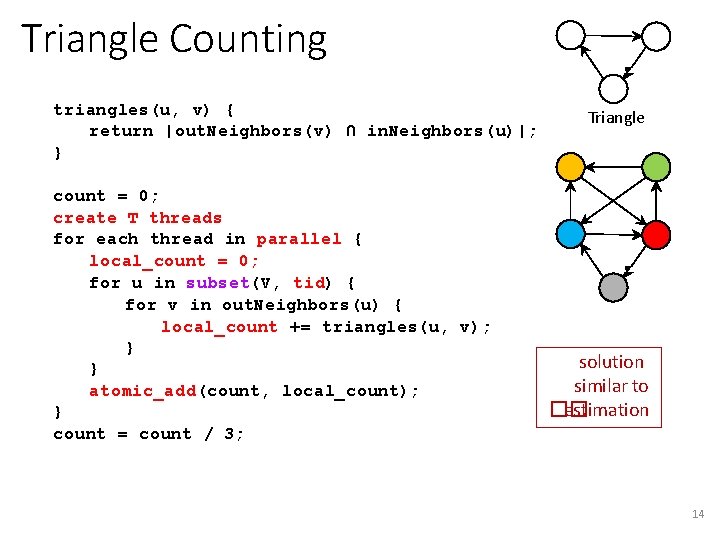

Triangle Counting

```
triangles(u, v) {
    return |outNeighbors(v) ∩ inNeighbors(u)|;
}

count = 0;
create T threads
for each thread in parallel {
    local_count = 0;
    for u in subset(V, tid) {
        for v in outNeighbors(u) {
            local_count += triangles(u, v);
        }
    }
    atomic_add(count, local_count);
}
count = count / 3;
```

The solution is similar to π estimation: each thread accumulates a private count and merges it once with `atomic_add`.
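A C++ sketch of the threaded version, assuming a directed graph stored as sorted out- and in-neighbor lists (the helpers are repeated so the block compiles on its own). `subset(V, tid)` is interpreted here as a round-robin split (u = tid, tid + T, ...), which is one simple choice; contiguous blocks would also work. Each thread accumulates `local_count` and performs a single `fetch_add`. All names are illustrative.

```cpp
#include <algorithm>
#include <atomic>
#include <cstddef>
#include <cstdint>
#include <thread>
#include <utility>
#include <vector>

// Assumed representation: sorted out- and in-neighbor lists, no duplicate edges.
struct Graph {
    std::vector<std::vector<int>> out, in;
};

Graph make_graph(int num_vertices, const std::vector<std::pair<int, int>>& edges) {
    Graph g;
    g.out.resize(num_vertices);
    g.in.resize(num_vertices);
    for (const auto& e : edges) {
        g.out[e.first].push_back(e.second);
        g.in[e.second].push_back(e.first);
    }
    for (auto& list : g.out) std::sort(list.begin(), list.end());
    for (auto& list : g.in) std::sort(list.begin(), list.end());
    return g;
}

// |outNeighbors(v) intersect inNeighbors(u)| via a merge of sorted lists.
std::uint64_t triangles(const Graph& g, int u, int v) {
    const std::vector<int>& a = g.out[v];
    const std::vector<int>& b = g.in[u];
    std::uint64_t common = 0;
    std::size_t i = 0, j = 0;
    while (i < a.size() && j < b.size()) {
        if (a[i] < b[j]) ++i;
        else if (a[i] > b[j]) ++j;
        else { ++common; ++i; ++j; }
    }
    return common;
}

std::uint64_t count_triangles_parallel(const Graph& g, unsigned T) {
    std::atomic<std::uint64_t> count{0};
    const int n = static_cast<int>(g.out.size());
    std::vector<std::thread> threads;
    for (unsigned tid = 0; tid < T; tid++) {
        threads.emplace_back([&, tid] {
            std::uint64_t local_count = 0;  // private to this thread
            // subset(V, tid): assumed round-robin split of the vertices.
            for (int u = static_cast<int>(tid); u < n; u += static_cast<int>(T))
                for (int v : g.out[u]) local_count += triangles(g, u, v);
            count.fetch_add(local_count);  // one atomic_add per thread
        });
    }
    for (auto& t : threads) t.join();
    return count.load() / 3;
}
```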

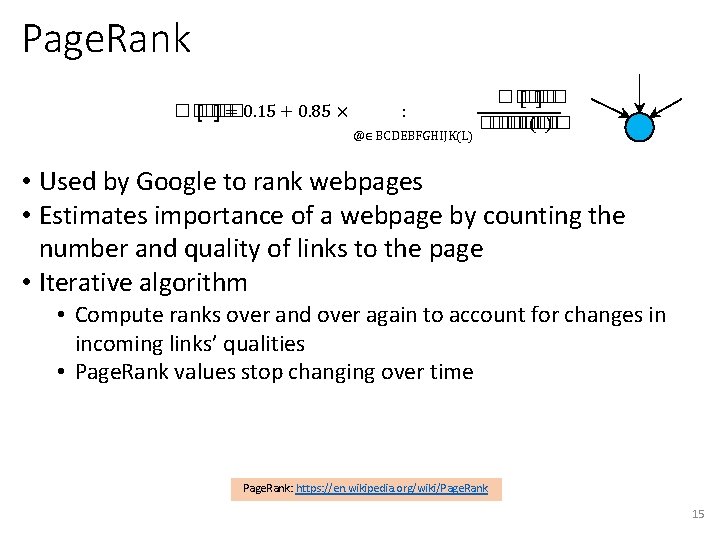

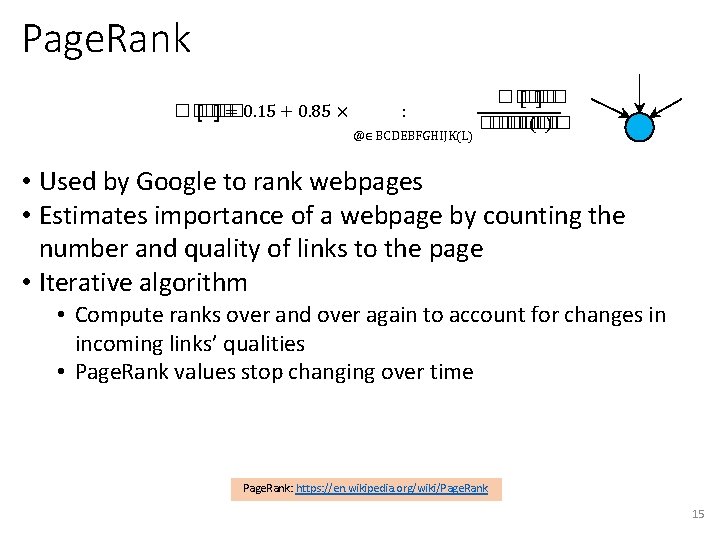

PageRank

$$PR(v) = 0.15 + 0.85 \times \sum_{u \in \mathit{inNeighbors}(v)} \frac{PR(u)}{\mathit{out\_degree}(u)}$$

- Used by Google to rank webpages
- Estimates the importance of a webpage by counting the number and quality of links to the page
- Iterative algorithm
  - Computes ranks over and over again to account for changes in the quality of incoming links
  - PageRank values stop changing over time

PageRank: https://en.wikipedia.org/wiki/PageRank
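A small worked example, assuming a two-page graph where pages 0 and 1 link only to each other (so each has out_degree 1), shows why the values stop changing. By symmetry both pages have the same rank $PR$ at the fixed point:

$$
\begin{aligned}
PR &= 0.15 + 0.85 \times \frac{PR}{1} \\
0.15\,PR &= 0.15 \\
PR &= 1
\end{aligned}
$$

Iterating instead of solving, $PR^{(t+1)} = 0.15 + 0.85\,PR^{(t)}$ gives $1 - PR^{(t+1)} = 0.85\,\bigl(1 - PR^{(t)}\bigr)$, so the distance to the fixed point shrinks by a factor of 0.85 per iteration. Starting from $PR^{(0)} = 0$: $PR^{(1)} = 0.15$, $PR^{(2)} = 0.2775$, and in general $PR^{(t)} = 1 - 0.85^t$.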

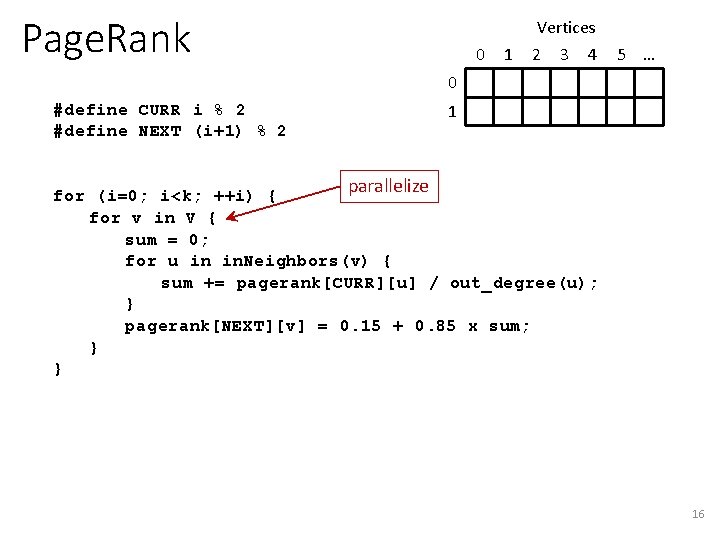

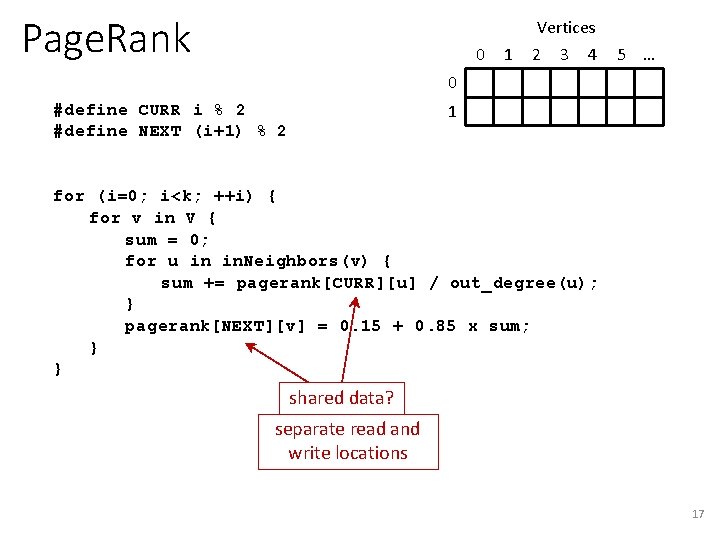

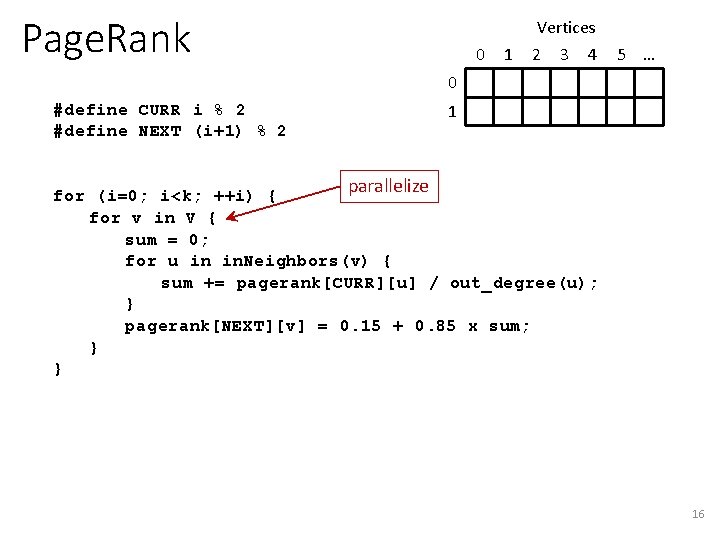

PageRank

(Figure: the `pagerank` array with two rows, 0 and 1, and one column per vertex: 0, 1, 2, 3, 4, 5, ….)

```
#define CURR (i % 2)
#define NEXT ((i + 1) % 2)

for (i = 0; i < k; ++i) {
    for v in V {
        sum = 0;
        for u in inNeighbors(v) {
            sum += pagerank[CURR][u] / out_degree(u);
        }
        pagerank[NEXT][v] = 0.15 + 0.85 * sum;
    }
}
```

Task: parallelize this code.

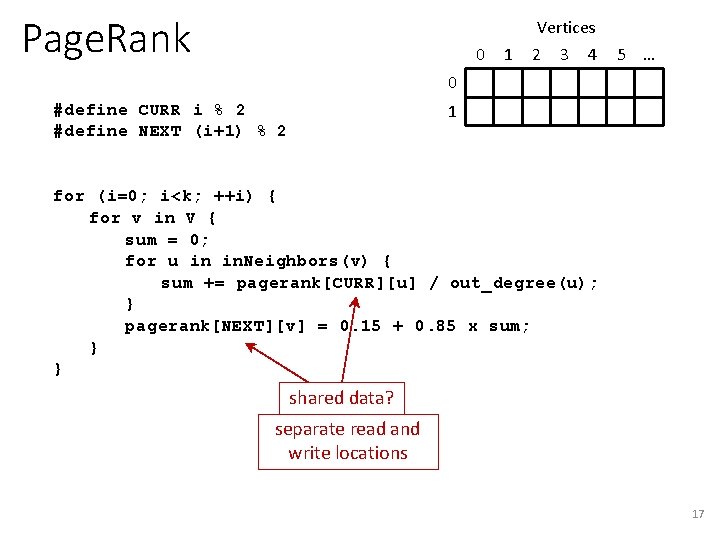

Is there shared data? The two rows keep read and write locations separate: iteration i only reads `pagerank[CURR]` and only writes `pagerank[NEXT]`.
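A sequential C++ sketch of the double-buffered loop, assuming the graph is given as in-neighbor lists plus out-degrees and that all ranks start at 1.0 (the slides do not specify an initial value). `PRGraph` and `pagerank_sequential` are hypothetical names. Row `curr` is only read and row `next` only written within an iteration, so after k iterations the result sits in row k % 2.

```cpp
#include <cstddef>
#include <vector>

// Assumed input: in[v] lists the in-neighbors of v; out_degree[u] >= 1 for
// every u that appears in some in-list.
struct PRGraph {
    std::vector<std::vector<int>> in;
    std::vector<int> out_degree;
};

std::vector<double> pagerank_sequential(const PRGraph& g, int k) {
    const std::size_t n = g.in.size();
    // Two buffers (rows 0 and 1); every rank starts at 1.0 (assumed).
    std::vector<std::vector<double>> pagerank(2, std::vector<double>(n, 1.0));
    for (int i = 0; i < k; ++i) {
        const int curr = i % 2;        // CURR: read-only in this iteration
        const int next = (i + 1) % 2;  // NEXT: write-only in this iteration
        for (std::size_t v = 0; v < n; v++) {
            double sum = 0.0;
            for (int u : g.in[v]) sum += pagerank[curr][u] / g.out_degree[u];
            pagerank[next][v] = 0.15 + 0.85 * sum;
        }
    }
    return pagerank[k % 2];  // row written last (the initial row if k == 0)
}
```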

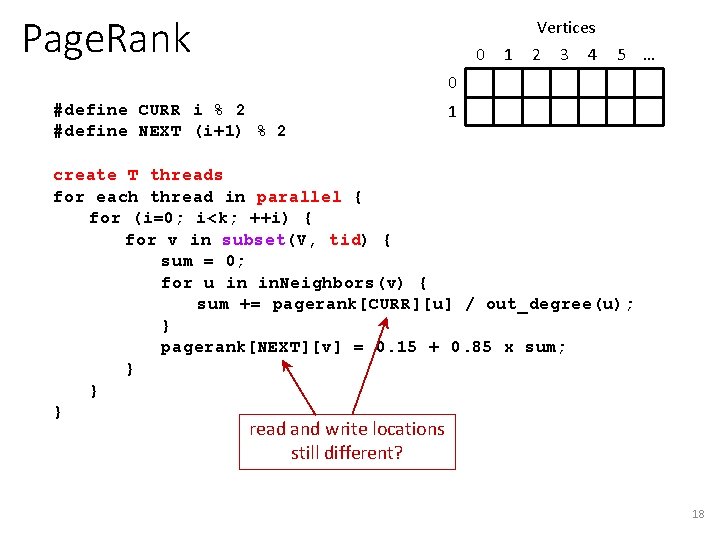

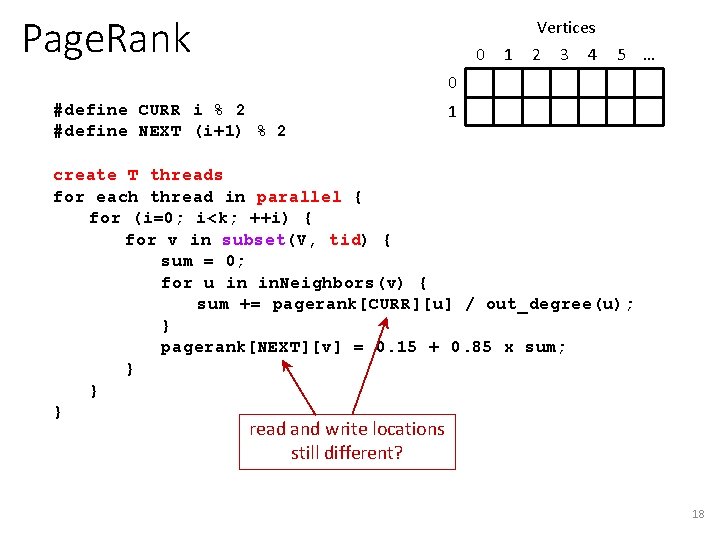

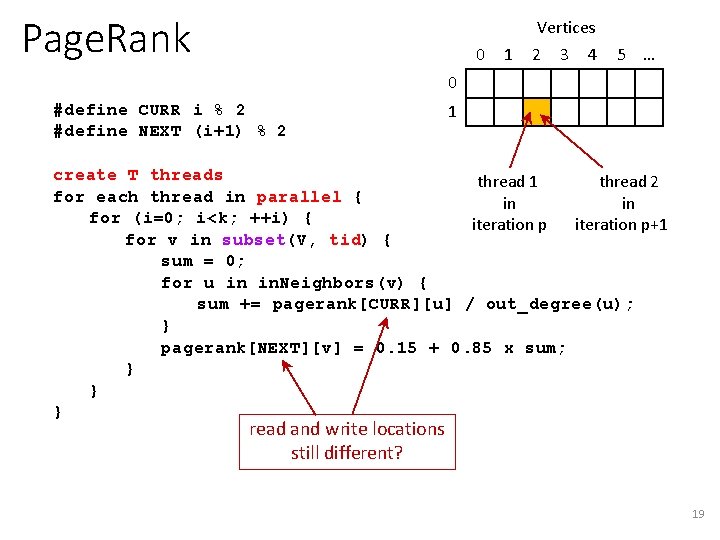

PageRank

```
#define CURR (i % 2)
#define NEXT ((i + 1) % 2)

create T threads
for each thread in parallel {
    for (i = 0; i < k; ++i) {
        for v in subset(V, tid) {
            sum = 0;
            for u in inNeighbors(v) {
                sum += pagerank[CURR][u] / out_degree(u);
            }
            pagerank[NEXT][v] = 0.15 + 0.85 * sum;
        }
    }
}
```

Are the read and write locations still different?

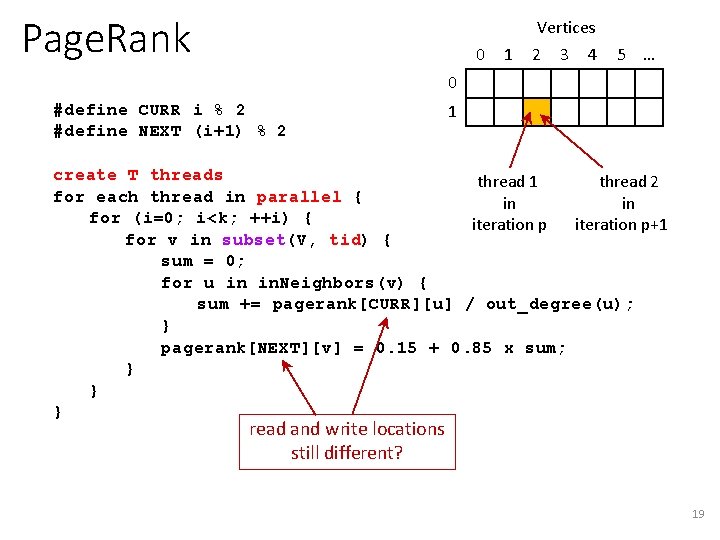

Not necessarily. Nothing keeps the threads in step: thread 1 may already be in iteration p+1, writing into the row that thread 2, still in iteration p, is reading from.

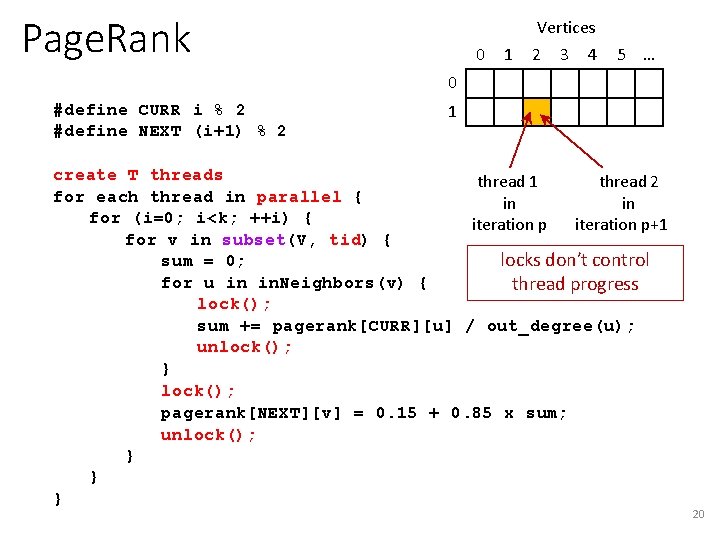

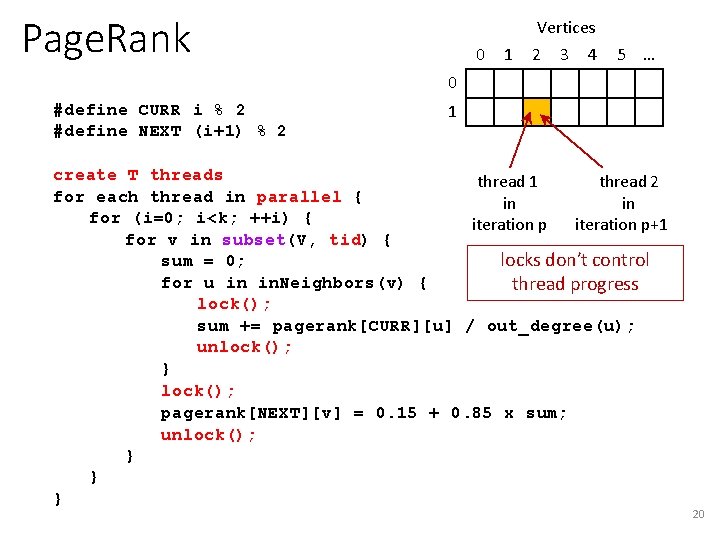

PageRank

```
#define CURR (i % 2)
#define NEXT ((i + 1) % 2)

create T threads
for each thread in parallel {
    for (i = 0; i < k; ++i) {
        for v in subset(V, tid) {
            sum = 0;
            for u in inNeighbors(v) {
                lock();
                sum += pagerank[CURR][u] / out_degree(u);
                unlock();
            }
            lock();
            pagerank[NEXT][v] = 0.15 + 0.85 * sum;
            unlock();
        }
    }
}
```

Locks don't control thread progress: they make each access mutually exclusive, but they do not stop thread 1 from running ahead into iteration p+1.

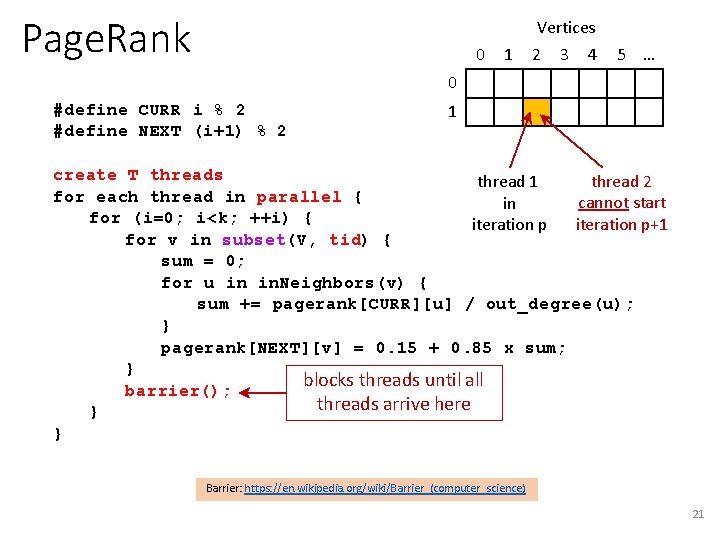

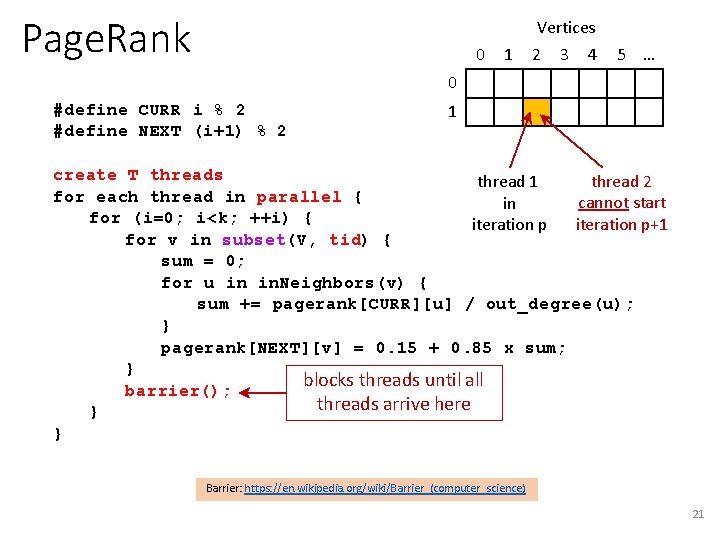

PageRank

```
#define CURR (i % 2)
#define NEXT ((i + 1) % 2)

create T threads
for each thread in parallel {
    for (i = 0; i < k; ++i) {
        for v in subset(V, tid) {
            sum = 0;
            for u in inNeighbors(v) {
                sum += pagerank[CURR][u] / out_degree(u);
            }
            pagerank[NEXT][v] = 0.15 + 0.85 * sum;
        }
        barrier();   // blocks threads until all threads arrive here
    }
}
```

With the barrier, thread 2 cannot start iteration p+1 while thread 1 is still in iteration p.

Barrier: https://en.wikipedia.org/wiki/Barrier_(computer_science)
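A C++ sketch of the barrier version, assuming in-neighbor lists, out-degrees and ranks starting at 1.0, with `subset(V, tid)` taken as a round-robin split. The small `Barrier` class is a hypothetical hand-rolled barrier built from `std::mutex` and `std::condition_variable`; C++20's `std::barrier` with `arrive_and_wait()` could replace it.

```cpp
#include <condition_variable>
#include <cstddef>
#include <mutex>
#include <thread>
#include <vector>

// Minimal reusable barrier (hypothetical). The generation counter lets the
// same object be reused in every iteration.
class Barrier {
public:
    explicit Barrier(unsigned count) : count_(count), waiting_(0), generation_(0) {}
    void arrive_and_wait() {
        std::unique_lock<std::mutex> lock(m_);
        const unsigned gen = generation_;
        if (++waiting_ == count_) {  // last thread to arrive releases everyone
            waiting_ = 0;
            ++generation_;
            cv_.notify_all();
        } else {
            cv_.wait(lock, [&] { return generation_ != gen; });
        }
    }
private:
    std::mutex m_;
    std::condition_variable cv_;
    const unsigned count_;
    unsigned waiting_;
    unsigned generation_;
};

struct PRGraph {
    std::vector<std::vector<int>> in;  // in-neighbor lists (assumed layout)
    std::vector<int> out_degree;
};

std::vector<double> pagerank_parallel(const PRGraph& g, int k, unsigned T) {
    const std::size_t n = g.in.size();
    std::vector<std::vector<double>> pagerank(2, std::vector<double>(n, 1.0));
    Barrier barrier(T);
    std::vector<std::thread> threads;
    for (unsigned tid = 0; tid < T; tid++) {
        threads.emplace_back([&, tid] {
            for (int i = 0; i < k; ++i) {
                const int curr = i % 2, next = (i + 1) % 2;
                // subset(V, tid): assumed round-robin split; each v has one writer.
                for (std::size_t v = tid; v < n; v += T) {
                    double sum = 0.0;
                    for (int u : g.in[v]) sum += pagerank[curr][u] / g.out_degree[u];
                    pagerank[next][v] = 0.15 + 0.85 * sum;
                }
                barrier.arrive_and_wait();  // nobody starts iteration i + 1 early
            }
        });
    }
    for (auto& t : threads) t.join();
    return pagerank[k % 2];
}
```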

End of the assignment programs. The following Dijkstra’s algorithm example is not part of Assignment 2.

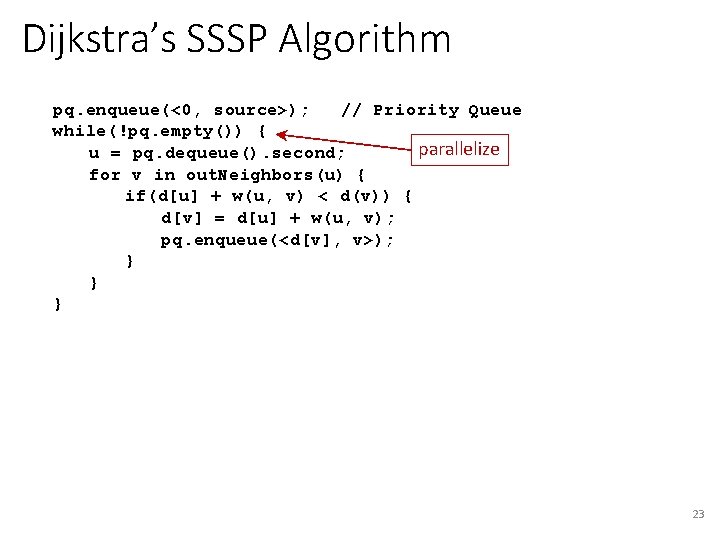

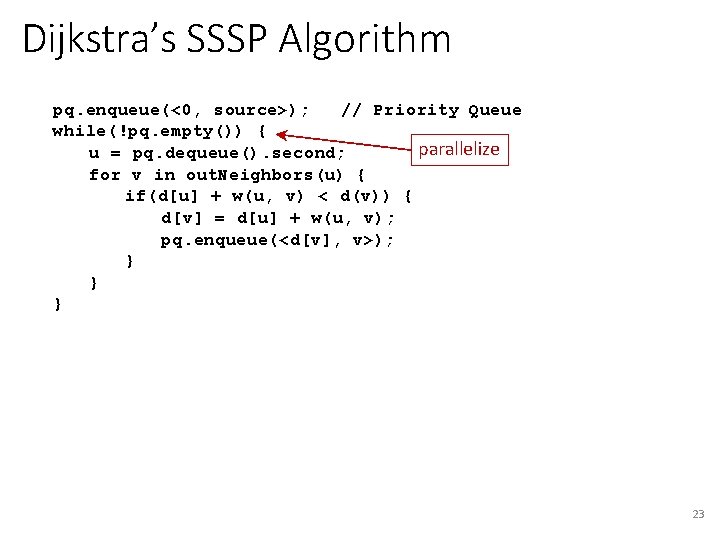

Dijkstra’s SSSP Algorithm

```
pq.enqueue(<0, source>);            // priority queue
while (!pq.empty()) {
    u = pq.dequeue().second;
    for v in outNeighbors(u) {
        if (d[u] + w(u, v) < d[v]) {
            d[v] = d[u] + w(u, v);
            pq.enqueue(<d[v], v>);
        }
    }
}
```

Task: parallelize this code.

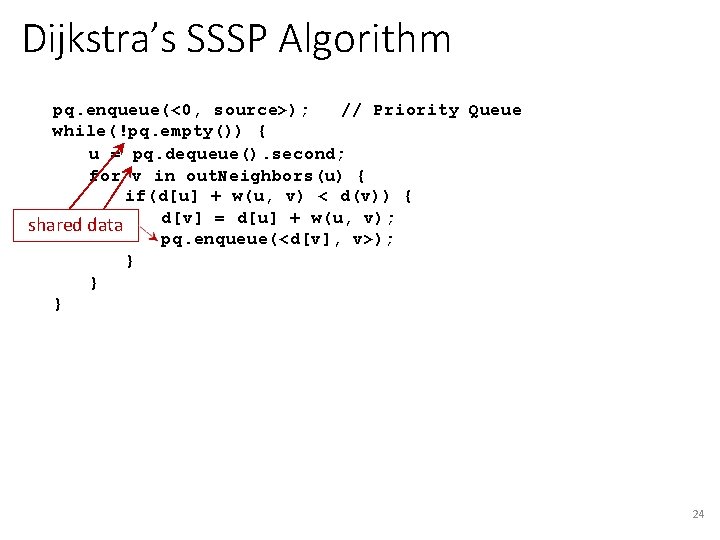

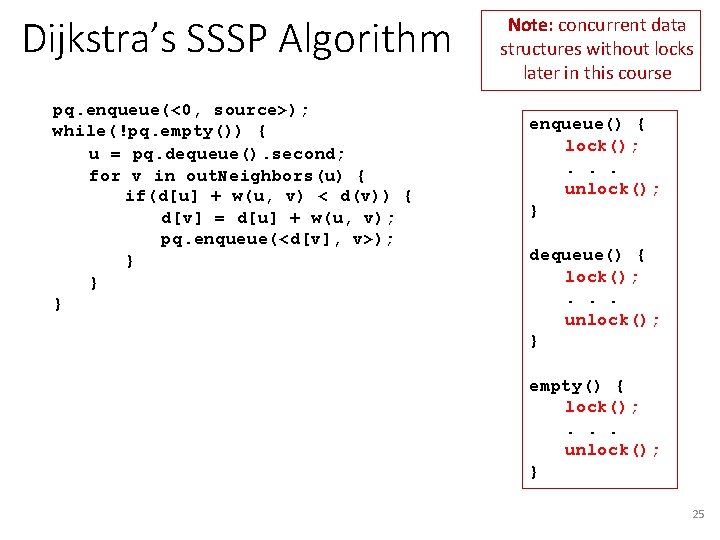

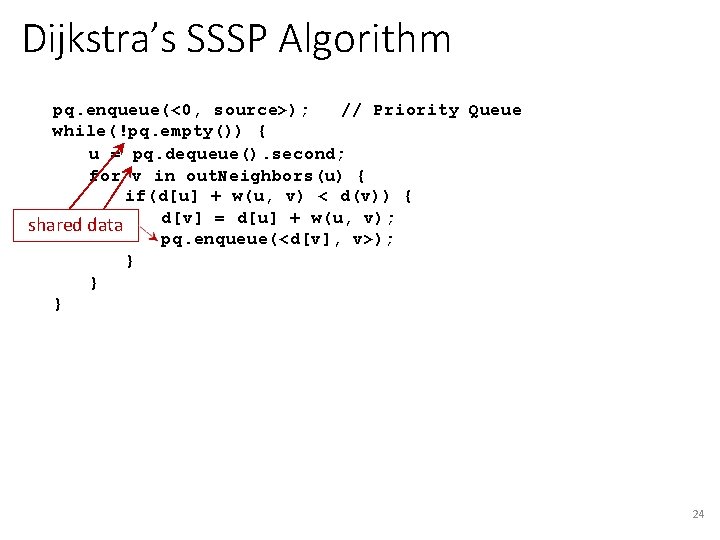

Shared data: the distance array `d[]` and the priority queue `pq` are read and written by every iteration.
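A sequential C++ sketch, assuming non-negative integer weights stored as `(v, w(u, v))` pairs per vertex and `std::priority_queue` with `std::greater` as the min-priority queue. Like the pseudocode, it re-enqueues a vertex whenever its distance improves and does not skip stale entries, so a vertex may be processed more than once. `WGraph`, `INF` and `sssp_sequential` are illustrative names.

```cpp
#include <cstdint>
#include <functional>
#include <limits>
#include <queue>
#include <utility>
#include <vector>

// Assumed layout: g[u] holds (v, w(u, v)) pairs with non-negative weights.
using WGraph = std::vector<std::vector<std::pair<int, std::uint64_t>>>;
constexpr std::uint64_t INF = std::numeric_limits<std::uint64_t>::max();

std::vector<std::uint64_t> sssp_sequential(const WGraph& g, int source) {
    std::vector<std::uint64_t> d(g.size(), INF);  // best distance found so far
    d[source] = 0;
    using Entry = std::pair<std::uint64_t, int>;  // <distance, vertex>
    // std::greater turns std::priority_queue into a min-queue.
    std::priority_queue<Entry, std::vector<Entry>, std::greater<Entry>> pq;
    pq.push({0, source});
    while (!pq.empty()) {
        int u = pq.top().second;  // pq.dequeue().second
        pq.pop();
        for (const auto& edge : g[u]) {
            int v = edge.first;
            std::uint64_t w = edge.second;
            if (d[u] + w < d[v]) {  // d[u] is finite: u was enqueued
                d[v] = d[u] + w;
                pq.push({d[v], v});
            }
        }
    }
    return d;
}
```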

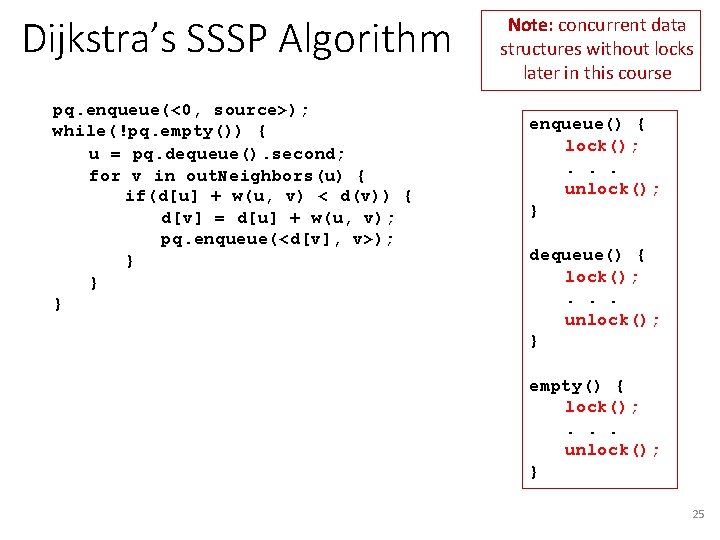

Each priority-queue operation can be protected by a lock:

```
enqueue() { lock(); ... unlock(); }
dequeue() { lock(); ... unlock(); }
empty()   { lock(); ... unlock(); }
```

Note: concurrent data structures without locks come later in this course.
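One way to write the locked wrappers in C++, as a sketch with assumed names (`LockedPQ`, `try_dequeue`): a single `std::mutex` guards every operation. `try_dequeue` combines the emptiness check and the removal in one critical section and reports failure instead of returning an element, which corresponds to the `u == NULL` case in the parallel version of the algorithm.

```cpp
#include <cstdint>
#include <functional>
#include <mutex>
#include <queue>
#include <utility>
#include <vector>

// Coarse-grained thread-safe min-priority queue (hypothetical class).
class LockedPQ {
public:
    using Entry = std::pair<std::uint64_t, int>;  // <distance, vertex>

    void enqueue(Entry e) {
        std::lock_guard<std::mutex> guard(m_);
        pq_.push(e);
    }
    // Returns false if the queue was empty (another thread may have drained it).
    bool try_dequeue(Entry& out) {
        std::lock_guard<std::mutex> guard(m_);
        if (pq_.empty()) return false;
        out = pq_.top();
        pq_.pop();
        return true;
    }
    // The answer may be stale as soon as the lock is released.
    bool empty() {
        std::lock_guard<std::mutex> guard(m_);
        return pq_.empty();
    }

private:
    std::mutex m_;
    std::priority_queue<Entry, std::vector<Entry>, std::greater<Entry>> pq_;
};
```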

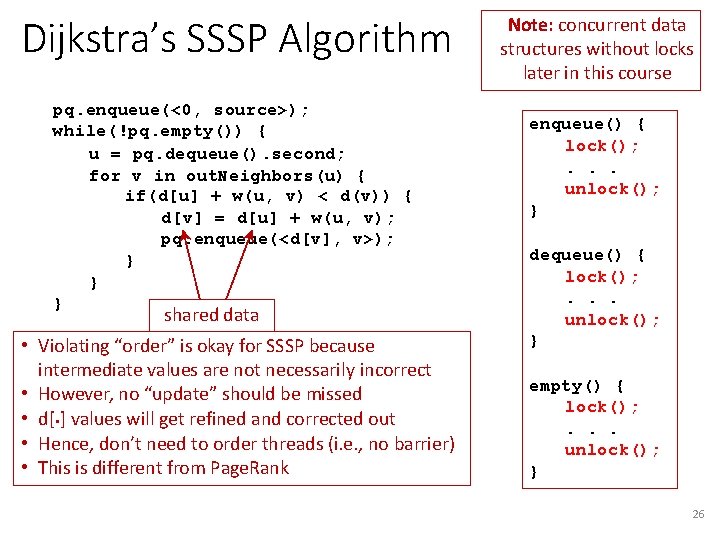

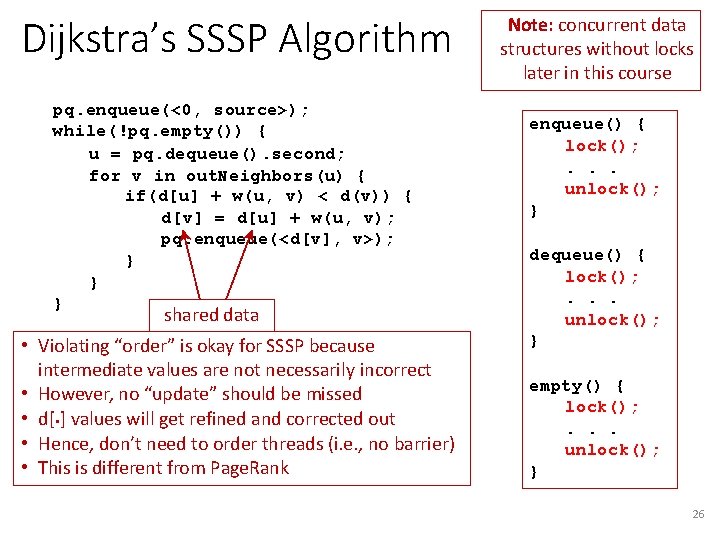

Why SSSP needs no thread ordering:

- Violating "order" is okay for SSSP because intermediate `d[.]` values are upper bounds on the true distances, not wrong answers
- However, no "update" may be missed
- `d[.]` values get refined and corrected as further updates arrive
- Hence, threads don't need to be ordered (i.e., no barrier)
- This is different from PageRank

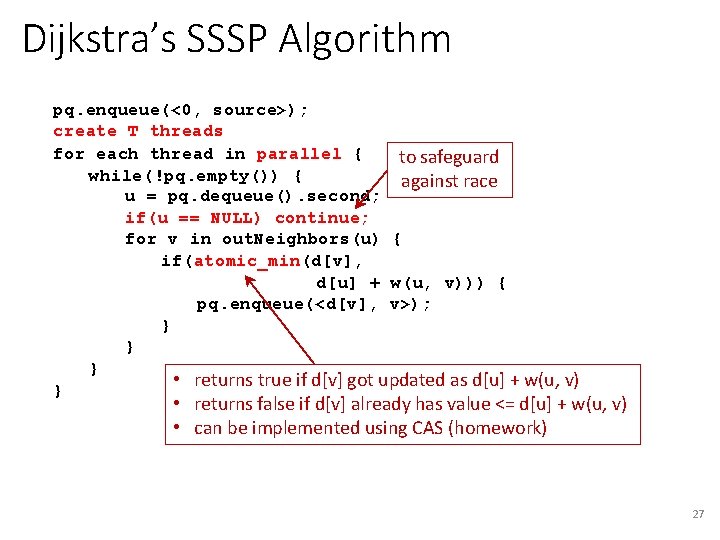

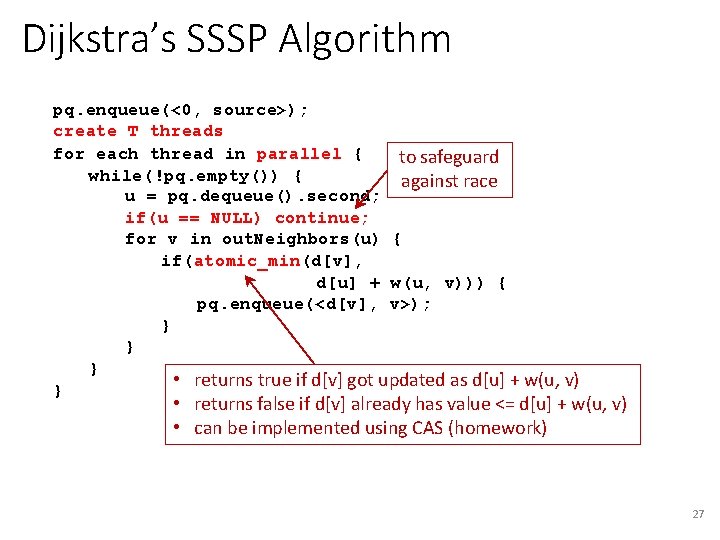

Dijkstra’s SSSP Algorithm

```
pq.enqueue(<0, source>);
create T threads
for each thread in parallel {
    while (!pq.empty()) {
        u = pq.dequeue().second;
        if (u == NULL) continue;    // safeguard against a race: another thread
                                    // may have emptied pq after the empty() check
        for v in outNeighbors(u) {
            if (atomic_min(d[v], d[u] + w(u, v))) {
                pq.enqueue(<d[v], v>);
            }
        }
    }
}
```

`atomic_min(d[v], d[u] + w(u, v))`:

- returns true if `d[v]` got updated to `d[u] + w(u, v)`
- returns false if `d[v]` already holds a value <= `d[u] + w(u, v)`
- can be implemented using CAS (homework)
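A sketch of the CAS homework in C++, assuming `d[v]` is a `std::atomic<std::uint64_t>`; `atomic_min` is not a standard library function, so the name and signature here are hypothetical. `compare_exchange_weak` writes the freshly observed value back into `current` on failure, so the loop re-checks whether the candidate is still smaller before retrying.

```cpp
#include <atomic>
#include <cstdint>

// Lowers target to candidate if candidate is smaller (hypothetical helper).
// Returns true if this call performed the update, false if the stored
// value was already <= candidate.
bool atomic_min(std::atomic<std::uint64_t>& target, std::uint64_t candidate) {
    std::uint64_t current = target.load();
    while (candidate < current) {
        // On failure (including spurious failure), `current` is refreshed
        // with the stored value and the loop condition is checked again.
        if (target.compare_exchange_weak(current, candidate)) return true;
    }
    return false;
}
```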

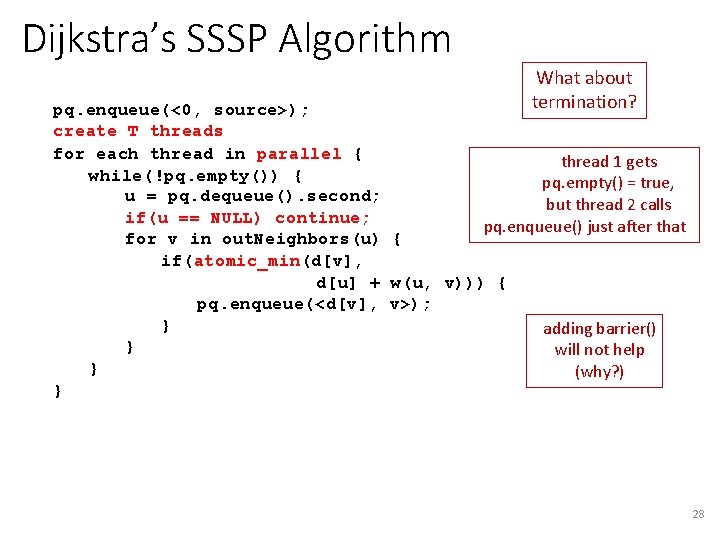

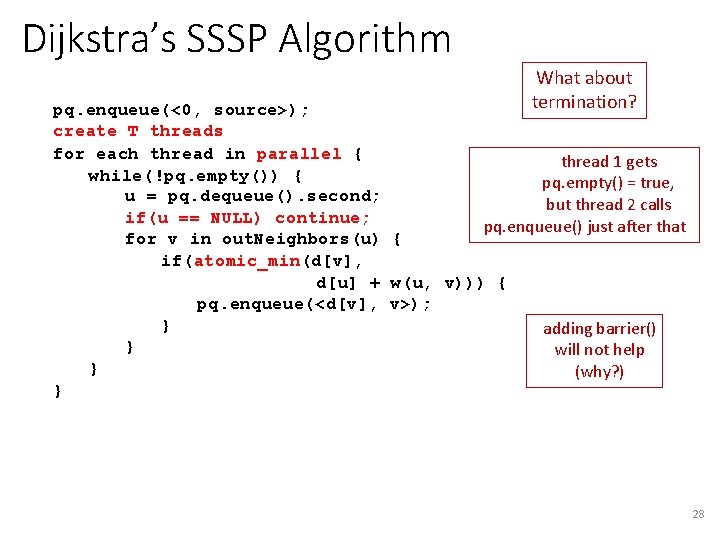

What about termination? Thread 1 may find `pq.empty() == true` and leave its loop, but thread 2 calls `pq.enqueue()` just after that, so thread 1 has quit while work remains. Adding `barrier()` will not help (why?).

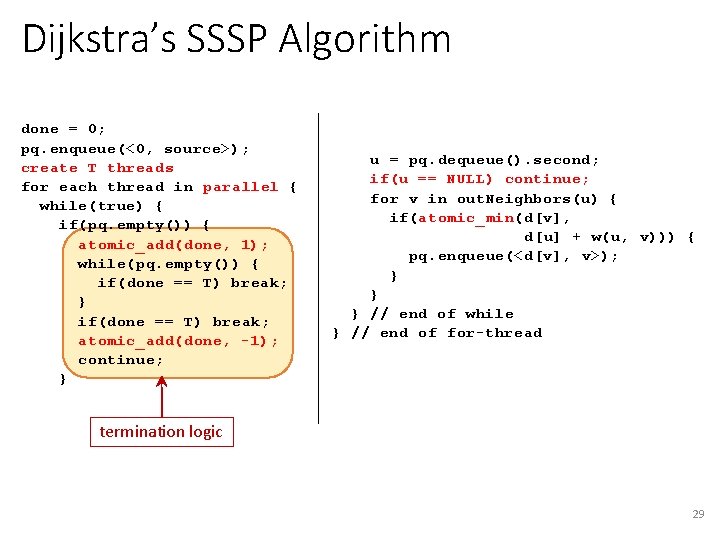

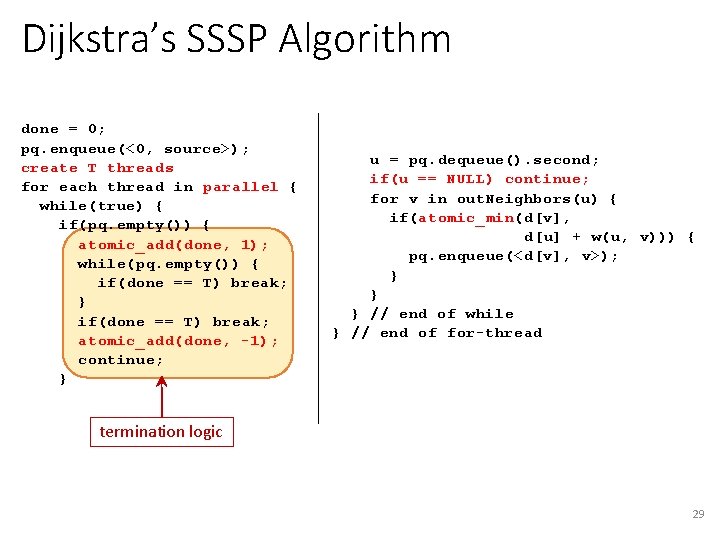

Dijkstra’s SSSP Algorithm

```
done = 0;
pq.enqueue(<0, source>);
create T threads
for each thread in parallel {
    while (true) {
        if (pq.empty()) {                  // termination logic
            atomic_add(done, 1);
            while (pq.empty()) {
                if (done == T) break;
            }
            if (done == T) break;
            atomic_add(done, -1);
            continue;
        }
        u = pq.dequeue().second;
        if (u == NULL) continue;
        for v in outNeighbors(u) {
            if (atomic_min(d[v], d[u] + w(u, v))) {
                pq.enqueue(<d[v], v>);
            }
        }
    } // end of while
} // end of for-thread
```
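Putting the pieces together, a C++ sketch of parallel SSSP with the termination counter. It includes its own locked priority queue and CAS-based `atomic_min` so the block compiles alone, and assumes a weighted-graph layout of `(v, w)` pairs with non-negative weights. Two deviations from the slide are deliberate and labeled in comments: the spin loop calls `std::this_thread::yield()`, and the enqueued priority is the candidate distance the thread just installed. All names are illustrative.

```cpp
#include <atomic>
#include <cstdint>
#include <functional>
#include <limits>
#include <mutex>
#include <queue>
#include <thread>
#include <utility>
#include <vector>

// Assumed layout: g[u] holds (v, w(u, v)) pairs with non-negative weights.
using WGraph = std::vector<std::vector<std::pair<int, std::uint64_t>>>;
using Entry = std::pair<std::uint64_t, int>;  // <distance, vertex>
constexpr std::uint64_t INF = std::numeric_limits<std::uint64_t>::max();

// Coarse-grained locked min-priority queue (hypothetical helper).
class LockedPQ {
public:
    void enqueue(Entry e) {
        std::lock_guard<std::mutex> guard(m_);
        pq_.push(e);
    }
    bool try_dequeue(Entry& out) {  // false plays the role of u == NULL
        std::lock_guard<std::mutex> guard(m_);
        if (pq_.empty()) return false;
        out = pq_.top();
        pq_.pop();
        return true;
    }
    bool empty() {
        std::lock_guard<std::mutex> guard(m_);
        return pq_.empty();
    }
private:
    std::mutex m_;
    std::priority_queue<Entry, std::vector<Entry>, std::greater<Entry>> pq_;
};

// CAS-based atomic_min: true if this call lowered target to candidate.
bool atomic_min(std::atomic<std::uint64_t>& target, std::uint64_t candidate) {
    std::uint64_t current = target.load();
    while (candidate < current)
        if (target.compare_exchange_weak(current, candidate)) return true;
    return false;
}

std::vector<std::uint64_t> sssp_parallel(const WGraph& g, int source, unsigned T) {
    std::vector<std::atomic<std::uint64_t>> d(g.size());
    for (auto& x : d) x.store(INF);
    d[source].store(0);
    LockedPQ pq;
    pq.enqueue({0, source});
    std::atomic<unsigned> done{0};  // threads currently idle (saw an empty queue)
    std::vector<std::thread> threads;
    for (unsigned t = 0; t < T; t++) {
        threads.emplace_back([&] {
            while (true) {
                if (pq.empty()) {  // termination logic
                    done.fetch_add(1);
                    while (pq.empty()) {
                        if (done.load() == T) break;
                        std::this_thread::yield();  // added: not in the slide
                    }
                    if (done.load() == T) break;  // all idle and queue empty
                    done.fetch_sub(1);            // work appeared: active again
                    continue;
                }
                Entry e;
                if (!pq.try_dequeue(e)) continue;  // another thread took the item
                int u = e.second;
                for (const auto& edge : g[u]) {
                    std::uint64_t candidate = d[u].load() + edge.second;
                    if (atomic_min(d[edge.first], candidate))
                        pq.enqueue({candidate, edge.first});  // candidate, not d[v]
                }
            }
        });
    }
    for (auto& th : threads) th.join();
    std::vector<std::uint64_t> result;
    for (auto& x : d) result.push_back(x.load());
    return result;
}
```

The counter works because a thread counts itself idle only after seeing an empty queue, and only an active thread can enqueue; once `done` reaches T, no thread is left that could produce new work.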

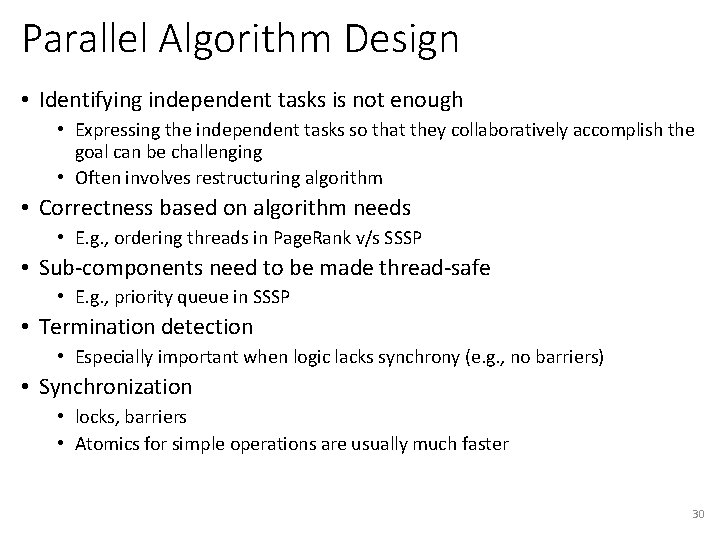

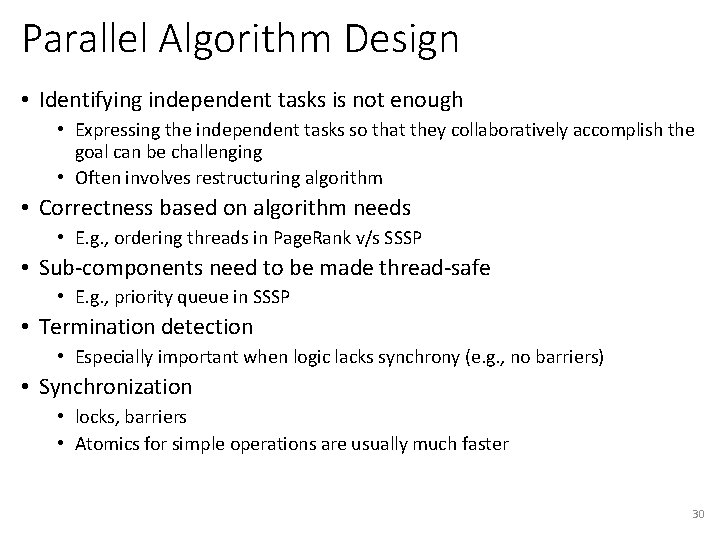

Parallel Algorithm Design

- Identifying independent tasks is not enough
- Expressing the independent tasks so that they collaboratively accomplish the goal can be challenging
  - Often involves restructuring the algorithm
- Correctness depends on what the algorithm needs
  - E.g., ordering threads in PageRank vs. SSSP
- Sub-components need to be made thread-safe
  - E.g., the priority queue in SSSP
- Termination detection
  - Especially important when the logic lacks synchrony (e.g., no barriers)
- Synchronization
  - Locks, barriers
  - Atomics for simple operations are usually much faster