Modular Layer 2 in OpenStack Neutron

Robert Kukura (Red Hat), Kyle Mestery (Cisco)

1. I’ve heard the Open vSwitch and Linuxbridge Neutron plugins are being deprecated.
2. I’ve heard ML2 does some cool stuff!
3. I don’t know what ML2 is but want to learn about it and what it provides.

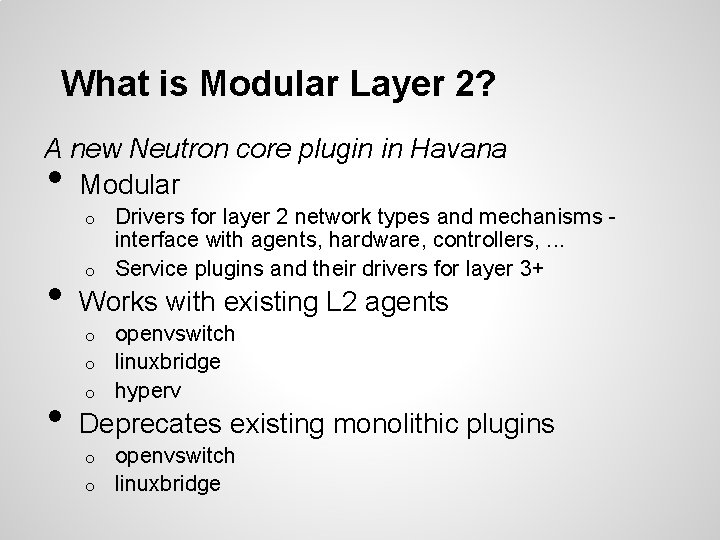

What is Modular Layer 2?

- A new Neutron core plugin in Havana
- Modular
  - Drivers for layer 2 network types and mechanisms, which interface with agents, hardware, controllers, ...
  - Service plugins and their drivers for layer 3+
- Works with existing L2 agents
  - openvswitch
  - linuxbridge
  - hyperv
- Deprecates existing monolithic plugins
  - openvswitch
  - linuxbridge

Motivations For a Modular Layer 2 Plugin

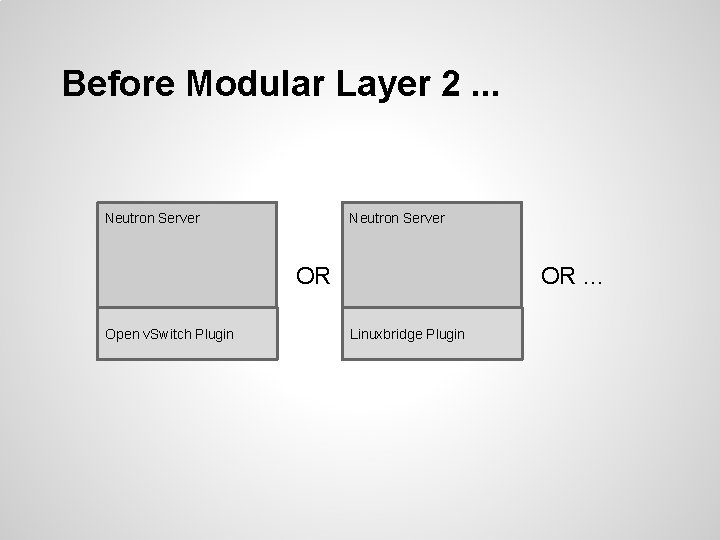

Before Modular Layer 2...

Diagram: the Neutron Server runs exactly one monolithic plugin: the Open vSwitch plugin OR the Linuxbridge plugin OR ...

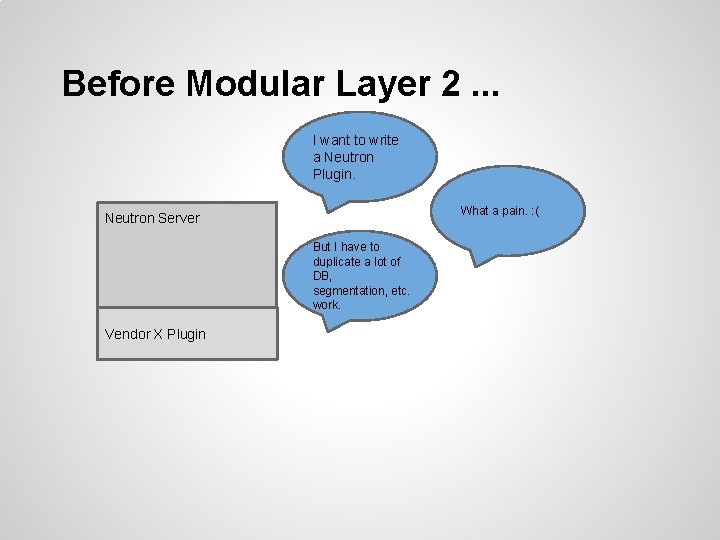

Before Modular Layer 2...

Diagram: a Vendor X plugin attached to the Neutron Server, with the plugin author's comments:

- "I want to write a Neutron plugin."
- "But I have to duplicate a lot of DB, segmentation, etc. work."
- "What a pain. :("

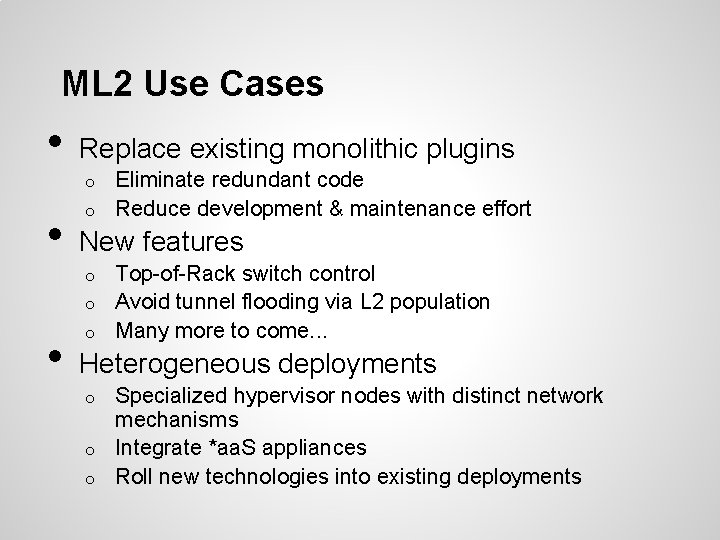

ML2 Use Cases

- Replace existing monolithic plugins
  - Eliminate redundant code
  - Reduce development & maintenance effort
- New features
  - Top-of-Rack switch control
  - Avoid tunnel flooding via L2 population
  - Many more to come...
- Heterogeneous deployments
  - Specialized hypervisor nodes with distinct network mechanisms
  - Integrate *aaS appliances
  - Roll new technologies into existing deployments

Modular Layer 2 Architecture

The Modular Layer 2 (ML2) plugin is a framework allowing OpenStack Neutron to simultaneously utilize the variety of layer 2 networking technologies found in complex real-world data centers.

What’s Similar?

ML2 is functionally a superset of the monolithic openvswitch, linuxbridge, and hyperv plugins:

- Based on NeutronDBPluginV2
- Models networks in terms of provider attributes
- RPC interface to L2 agents
- Extension APIs

What’s Different?

ML2 introduces several innovations to achieve its goals:

- Cleanly separates management of network types from the mechanisms for accessing those networks
  - Makes types and mechanisms pluggable via drivers
  - Allows multiple mechanism drivers to access the same network simultaneously
  - Optional features packaged as mechanism drivers
- Supports multi-segment networks
- Flexible port binding
- L3 router extension integrated as a service plugin

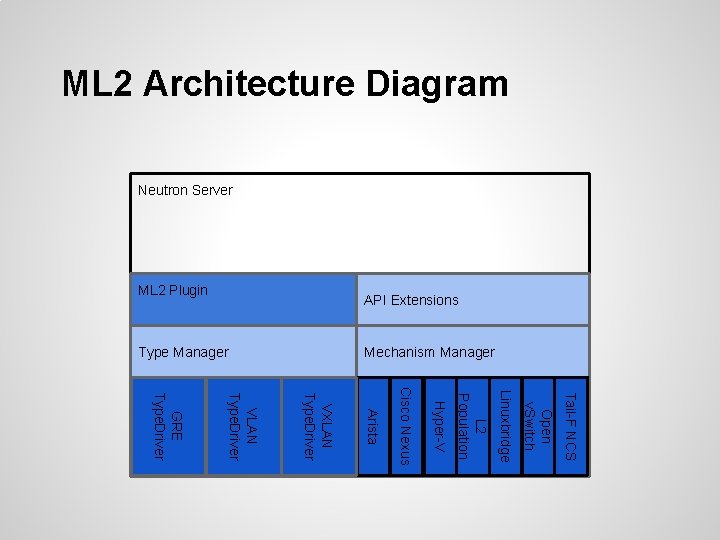

ML2 Architecture Diagram

- Neutron Server
  - API Extensions
  - ML2 Plugin
    - Type Manager: GRE TypeDriver, VLAN TypeDriver, VXLAN TypeDriver
    - Mechanism Manager: Arista, Cisco Nexus, Hyper-V, L2 Population, Linuxbridge, Open vSwitch, Tail-f NCS

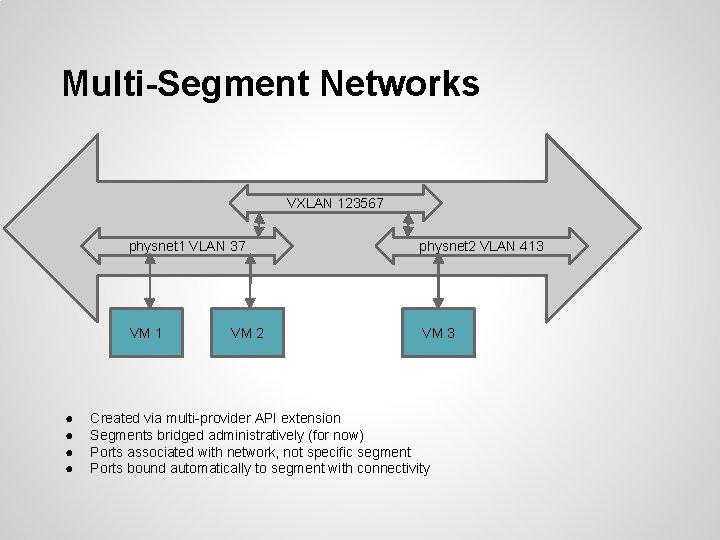

Multi-Segment Networks

Diagram: one network made of three segments (VXLAN 123567, physnet1 VLAN 37, physnet2 VLAN 413), with VM 1, VM 2, and VM 3 attached.

- Created via multi-provider API extension
- Segments bridged administratively (for now)
- Ports associated with network, not specific segment
- Ports bound automatically to segment with connectivity
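A request body for the three-segment network in the diagram can be sketched as a plain Python dictionary. This is only an illustration: the segment values come from the slide, the network name and the `describe_segments` helper are hypothetical, and the attribute names assume the `segments` list and `provider:*` keys used by the multi-provider extension.

```python
import json

# Hypothetical request body for creating the multi-segment network above.
network_request = {
    "network": {
        "name": "multi-segment-net",  # illustrative name, not from the slides
        "segments": [
            {"provider:network_type": "vxlan",
             "provider:segmentation_id": 123567},
            {"provider:network_type": "vlan",
             "provider:physical_network": "physnet1",
             "provider:segmentation_id": 37},
            {"provider:network_type": "vlan",
             "provider:physical_network": "physnet2",
             "provider:segmentation_id": 413},
        ],
    }
}


def describe_segments(body):
    """Return one short label per segment, e.g. 'vlan:physnet1:37'."""
    labels = []
    for seg in body["network"]["segments"]:
        parts = [seg["provider:network_type"]]
        if "provider:physical_network" in seg:
            parts.append(seg["provider:physical_network"])
        parts.append(str(seg["provider:segmentation_id"]))
        labels.append(":".join(parts))
    return labels


if __name__ == "__main__":
    print(json.dumps(network_request, indent=2))
    print(describe_segments(network_request))
```

Note that ports do not appear in the body at all: they are created against the network, and the segment is chosen later during port binding.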

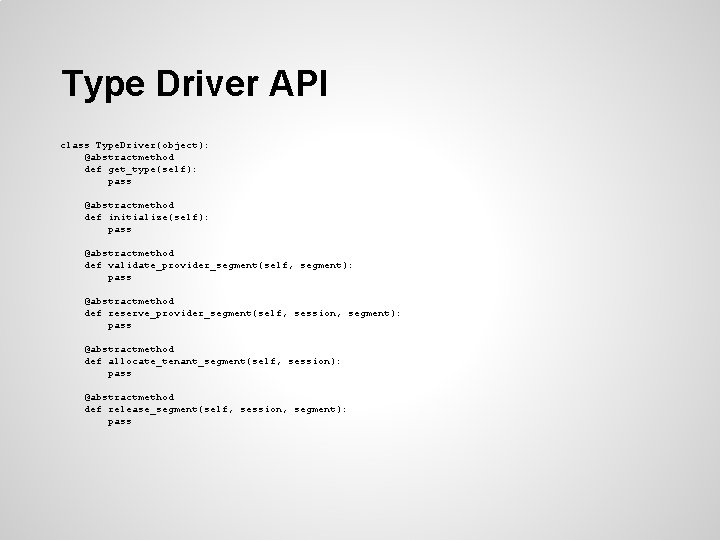

Type Driver API

    class TypeDriver(object):

        @abstractmethod
        def get_type(self):
            pass

        @abstractmethod
        def initialize(self):
            pass

        @abstractmethod
        def validate_provider_segment(self, segment):
            pass

        @abstractmethod
        def reserve_provider_segment(self, session, segment):
            pass

        @abstractmethod
        def allocate_tenant_segment(self, session):
            pass

        @abstractmethod
        def release_segment(self, session, segment):
            pass
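To make the role of each method concrete, here is a minimal sketch of a VLAN-style type driver that keeps its allocations in memory. It is not the Neutron implementation: the `TypeDriver` base class below is a local stand-in for the interface, `ToyVlanTypeDriver` is hypothetical, the `session` argument is ignored (a real driver would use it for database access), and the segment dictionary keys are assumptions.

```python
from abc import ABC, abstractmethod


class TypeDriver(ABC):
    """Local stand-in for the type driver interface (not the Neutron class)."""

    @abstractmethod
    def get_type(self): pass

    @abstractmethod
    def initialize(self): pass

    @abstractmethod
    def validate_provider_segment(self, segment): pass

    @abstractmethod
    def reserve_provider_segment(self, session, segment): pass

    @abstractmethod
    def allocate_tenant_segment(self, session): pass

    @abstractmethod
    def release_segment(self, session, segment): pass


class ToyVlanTypeDriver(TypeDriver):
    """Hypothetical driver tracking VLAN IDs in a set; `session` is unused."""

    def __init__(self, physical_network, vlan_min, vlan_max):
        self.physical_network = physical_network
        self.vlan_range = range(vlan_min, vlan_max + 1)
        self.allocated = set()

    def get_type(self):
        return "vlan"

    def initialize(self):
        self.allocated.clear()

    def validate_provider_segment(self, segment):
        # Reject segments an administrator specified incorrectly.
        if segment.get("physical_network") != self.physical_network:
            raise ValueError("unknown physical_network")
        vlan_id = segment.get("segmentation_id")
        if not isinstance(vlan_id, int) or not 1 <= vlan_id <= 4094:
            raise ValueError("segmentation_id must be in 1..4094")

    def reserve_provider_segment(self, session, segment):
        # Claim the exact VLAN requested through provider attributes.
        vlan_id = segment["segmentation_id"]
        if vlan_id in self.allocated:
            raise ValueError("VLAN %d already in use" % vlan_id)
        self.allocated.add(vlan_id)

    def allocate_tenant_segment(self, session):
        # Pick the first free VLAN from the tenant pool, or None if exhausted.
        for vlan_id in self.vlan_range:
            if vlan_id not in self.allocated:
                self.allocated.add(vlan_id)
                return {"network_type": "vlan",
                        "physical_network": self.physical_network,
                        "segmentation_id": vlan_id}
        return None

    def release_segment(self, session, segment):
        self.allocated.discard(segment["segmentation_id"])
```

The split between `reserve_provider_segment` (caller names the segment) and `allocate_tenant_segment` (driver chooses one) is what lets the same driver serve both provider networks and tenant networks.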

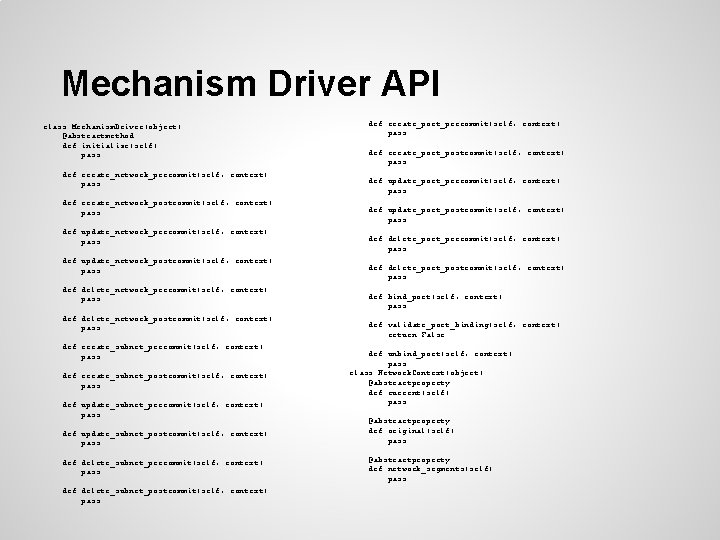

Mechanism Driver API

    class MechanismDriver(object):

        @abstractmethod
        def initialize(self): pass

        def create_network_precommit(self, context): pass
        def create_network_postcommit(self, context): pass
        def update_network_precommit(self, context): pass
        def update_network_postcommit(self, context): pass
        def delete_network_precommit(self, context): pass
        def delete_network_postcommit(self, context): pass

        def create_subnet_precommit(self, context): pass
        def create_subnet_postcommit(self, context): pass
        def update_subnet_precommit(self, context): pass
        def update_subnet_postcommit(self, context): pass
        def delete_subnet_precommit(self, context): pass
        def delete_subnet_postcommit(self, context): pass

        def create_port_precommit(self, context): pass
        def create_port_postcommit(self, context): pass
        def update_port_precommit(self, context): pass
        def update_port_postcommit(self, context): pass
        def delete_port_precommit(self, context): pass
        def delete_port_postcommit(self, context): pass

        def bind_port(self, context): pass
        def validate_port_binding(self, context): return False
        def unbind_port(self, context): pass


    class NetworkContext(object):

        @abstractproperty
        def current(self): pass

        @abstractproperty
        def original(self): pass

        @abstractproperty
        def network_segments(self): pass
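The paired `*_precommit` / `*_postcommit` methods suggest a two-phase call order: precommit hooks run while the plugin's database transaction is still open (so a driver can veto the change by raising), and postcommit hooks run once the change is committed (where a driver would talk to its switch or controller). The sketch below simulates that order for network creation only. `FakeNetworkContext`, `RecordingDriver`, and `create_network` are hypothetical stand-ins, not Neutron code.

```python
class MechanismDriver(object):
    """Reduced stand-in for the interface above: network-create hooks only."""

    def initialize(self): pass
    def create_network_precommit(self, context): pass
    def create_network_postcommit(self, context): pass


class FakeNetworkContext(object):
    """Hypothetical context exposing the three NetworkContext properties."""

    def __init__(self, current, network_segments, original=None):
        self.current = current
        self.original = original
        self.network_segments = network_segments


class RecordingDriver(MechanismDriver):
    """Hypothetical driver that records each hook call in a shared log."""

    def __init__(self, name, log):
        self.name = name
        self.log = log

    def create_network_precommit(self, context):
        # Validation only; raising here aborts the whole operation.
        if not context.network_segments:
            raise ValueError("network has no segments")
        self.log.append((self.name, "precommit", context.current["id"]))

    def create_network_postcommit(self, context):
        # A real driver would configure its device or controller here.
        self.log.append((self.name, "postcommit", context.current["id"]))


def create_network(drivers, context):
    """Assumed plugin-side order: all precommits, commit, all postcommits."""
    for driver in drivers:
        driver.create_network_precommit(context)
    # A real plugin would commit its database transaction at this point.
    for driver in drivers:
        driver.create_network_postcommit(context)


if __name__ == "__main__":
    log = []
    drivers = [RecordingDriver("openvswitch", log),
               RecordingDriver("cisco_nexus", log)]
    ctx = FakeNetworkContext({"id": "net-1"}, [{"network_type": "vlan"}])
    create_network(drivers, ctx)
    for entry in log:
        print(entry)
```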

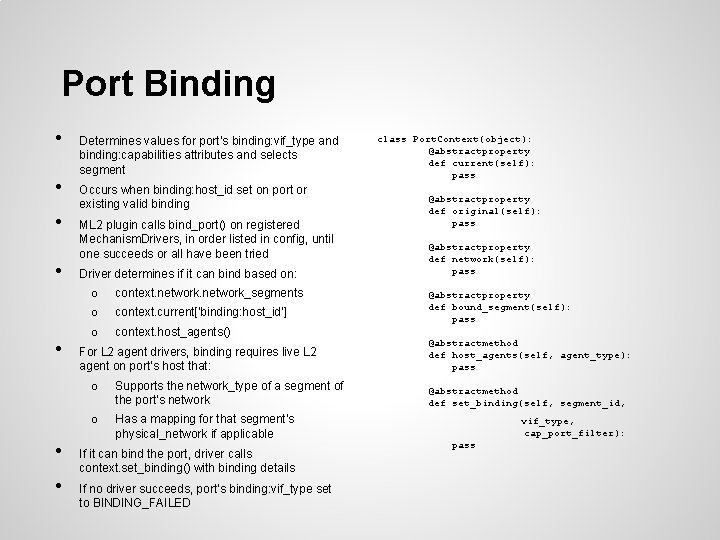

Port Binding

- Determines values for the port’s binding:vif_type and binding:capabilities attributes and selects the segment
- Occurs when binding:host_id is set on a port that has no existing valid binding
- ML2 plugin calls bind_port() on registered MechanismDrivers, in the order listed in config, until one succeeds or all have been tried
- Driver determines if it can bind based on:
  - context.network.network_segments
  - context.current['binding:host_id']
  - context.host_agents()
- For L2 agent drivers, binding requires a live L2 agent on the port’s host that:
  - Supports the network_type of a segment of the port’s network
  - Has a mapping for that segment’s physical_network, if applicable
- If it can bind the port, the driver calls context.set_binding() with the binding details
- If no driver succeeds, the port’s binding:vif_type is set to BINDING_FAILED

PortContext interface:

    class PortContext(object):

        @abstractproperty
        def current(self): pass

        @abstractproperty
        def original(self): pass

        @abstractproperty
        def network(self): pass

        @abstractproperty
        def bound_segment(self): pass

        @abstractmethod
        def host_agents(self, agent_type): pass

        @abstractmethod
        def set_binding(self, segment_id, vif_type, cap_port_filter): pass
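The binding procedure described on this slide can be sketched as a small simulation. Everything here is illustrative: `FakePortContext`, `ToyAgentDriver`, and `try_bind` are hypothetical, the agent records are plain dictionaries rather than Neutron agent objects, and the string value of `BINDING_FAILED` is an assumption. Segments are read through `context.network`, following the `network` property in the PortContext interface.

```python
from types import SimpleNamespace

BINDING_FAILED = "binding_failed"  # assumed value of the constant


class FakePortContext:
    """Hypothetical stand-in for PortContext, backed by plain dictionaries."""

    def __init__(self, port, segments, agents):
        self.current = port
        self.network = SimpleNamespace(network_segments=segments)
        self._agents = agents
        self.binding = None

    def host_agents(self, agent_type):
        # Live agents of the given type on the port's host.
        host = self.current["binding:host_id"]
        return [a for a in self._agents
                if a["host"] == host and a["agent_type"] == agent_type
                and a["alive"]]

    def set_binding(self, segment_id, vif_type, cap_port_filter):
        self.binding = {"segment_id": segment_id, "vif_type": vif_type,
                        "cap_port_filter": cap_port_filter}


class ToyAgentDriver:
    """Hypothetical L2-agent mechanism driver implementing only bind_port()."""

    def __init__(self, agent_type, vif_type):
        self.agent_type = agent_type
        self.vif_type = vif_type

    def bind_port(self, context):
        for agent in context.host_agents(self.agent_type):
            for seg in context.network.network_segments:
                if self._agent_can_reach(agent, seg):
                    context.set_binding(seg["id"], self.vif_type, True)
                    return

    @staticmethod
    def _agent_can_reach(agent, seg):
        if seg["network_type"] not in agent["network_types"]:
            return False
        physnet = seg.get("physical_network")
        return physnet is None or physnet in agent["mappings"]


def try_bind(drivers, context):
    """Call each driver in configured order until one sets a binding."""
    for driver in drivers:
        driver.bind_port(context)
        if context.binding is not None:
            return context.binding["vif_type"]
    return BINDING_FAILED


# Sample data: a two-segment network and one agent per host (all invented).
SEGMENTS = [
    {"id": "seg-vxlan", "network_type": "vxlan"},
    {"id": "seg-vlan", "network_type": "vlan", "physical_network": "physnet1"},
]
AGENTS = [
    {"host": "host-a", "agent_type": "ovs", "alive": True,
     "network_types": ["vxlan", "vlan"], "mappings": []},
    {"host": "host-b", "agent_type": "linuxbridge", "alive": True,
     "network_types": ["vlan"], "mappings": ["physnet1"]},
    {"host": "host-c", "agent_type": "ovs", "alive": False,
     "network_types": ["vxlan"], "mappings": []},
]
DRIVERS = [ToyAgentDriver("ovs", "ovs"), ToyAgentDriver("linuxbridge", "bridge")]
```

With this data, a port on `host-a` binds to the VXLAN segment through the first driver, a port on `host-b` falls through to the second driver and binds to the VLAN segment, and a port on `host-c` ends up with `BINDING_FAILED` because its only agent is not alive.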

Havana Features

Type Drivers in Havana

The following segmentation types are supported in ML2 for the Havana release:

- local
- flat
- VLAN
- GRE
- VXLAN

Mechanism Drivers in Havana

The following ML2 MechanismDrivers exist in Havana:

- Arista
- Cisco Nexus
- Hyper-V Agent
- L2 Population
- Linuxbridge Agent
- Open vSwitch Agent
- Tail-f NCS

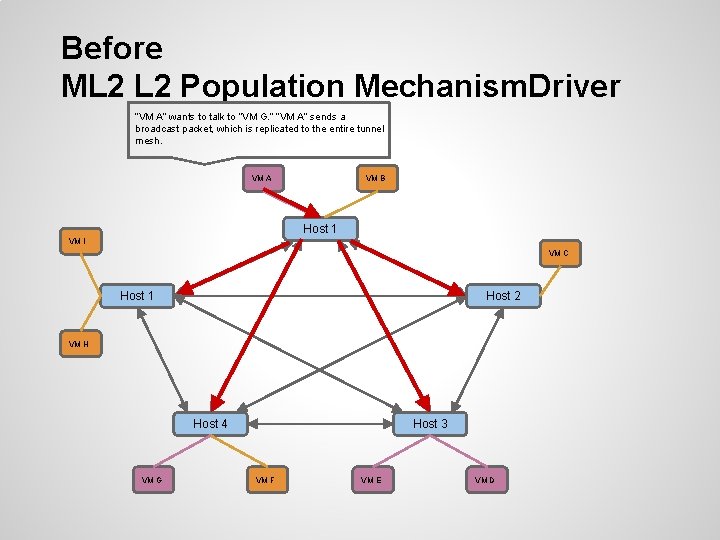

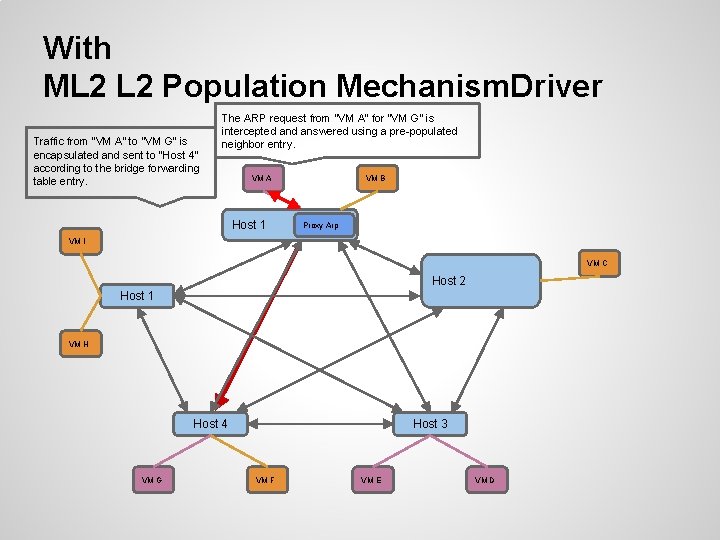

Before the ML2 L2 Population MechanismDriver

Diagram: VMs A through I spread across several hosts joined by a tunnel mesh.

“VM A” wants to talk to “VM G.” “VM A” sends a broadcast packet, which is replicated to the entire tunnel mesh.

With the ML2 L2 Population MechanismDriver

Diagram: the same hosts and VMs, with a proxy ARP responder on VM A's host.

The ARP request from “VM A” for “VM G” is intercepted and answered using a pre-populated neighbor entry. Traffic from “VM A” to “VM G” is encapsulated and sent to “Host 4” according to the bridge forwarding table entry.
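The difference between the two slides can be sketched as a lookup against pre-populated tables. This is a toy model only: the `TunnelEndpoint` class is hypothetical, the MAC and IP addresses are invented, and the host list is simplified to four hosts, with “VM G” placed on “Host 4” as in the text.

```python
class TunnelEndpoint:
    """Hypothetical model of one host's tunnel bridge."""

    def __init__(self, host, all_hosts):
        self.host = host
        self.peers = [h for h in all_hosts if h != host]
        self.fdb = {}        # destination MAC -> remote host
        self.arp_table = {}  # IP address -> MAC (proxy ARP entries)

    def populate(self, mac, ip, remote_host):
        """Install the entries that L2 population would push in advance."""
        self.fdb[mac] = remote_host
        self.arp_table[ip] = mac

    def resolve_arp(self, ip):
        """Return (mac, hosts_the_request_was_flooded_to)."""
        if ip in self.arp_table:
            return self.arp_table[ip], []   # answered locally, no flooding
        return None, list(self.peers)       # broadcast to the whole mesh

    def unicast_targets(self, dst_mac):
        """Return the hosts a frame for dst_mac is sent to."""
        if dst_mac in self.fdb:
            return [self.fdb[dst_mac]]
        return list(self.peers)             # unknown unicast is flooded


if __name__ == "__main__":
    hosts = ["Host 1", "Host 2", "Host 3", "Host 4"]
    endpoint = TunnelEndpoint("Host 1", hosts)

    # Before L2 population: the ARP request reaches every other host.
    print(endpoint.resolve_arp("10.0.0.7"))

    # With L2 population: entries for VM G (invented addresses) are pre-loaded.
    endpoint.populate("fa:16:3e:00:00:07", "10.0.0.7", "Host 4")
    print(endpoint.resolve_arp("10.0.0.7"))
    print(endpoint.unicast_targets("fa:16:3e:00:00:07"))
```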

Modular Layer 2 Futures

ML2 Futures: Deprecation Items

- The future of the Open vSwitch and Linuxbridge plugins
  - These are planned for deprecation in Icehouse
  - ML2 supports all their functionality
  - ML2 works with the existing OVS and Linuxbridge agents
  - No new features are being added in Icehouse to the OVS and Linuxbridge plugins
- Migration tool being developed

Plugin vs. ML2 MechanismDriver?

- Advantages of writing an ML2 driver instead of a new monolithic plugin
  - Much less code to write (or clone) and maintain
  - New Neutron features supported as they are added
  - Support for heterogeneous deployments
- Vendors integrating new plugins should consider an ML2 driver instead
  - Existing plugins may want to migrate to ML2 as well

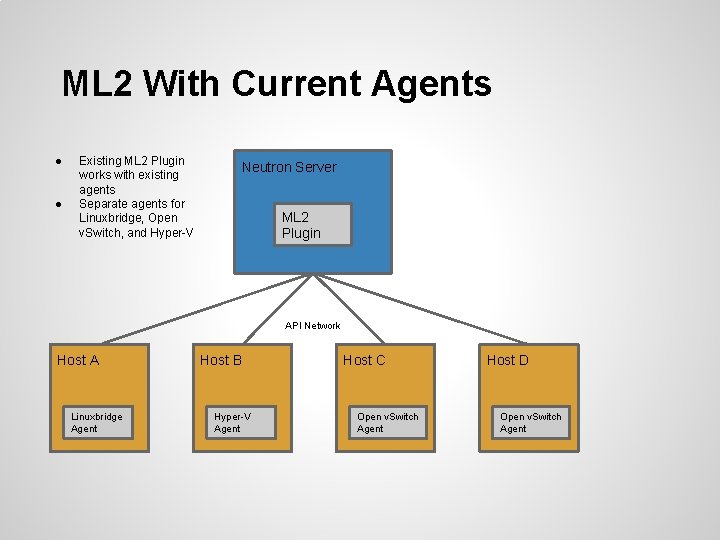

ML2 With Current Agents

- Existing ML2 plugin works with existing agents
- Separate agents for Linuxbridge, Open vSwitch, and Hyper-V

Diagram: the Neutron Server (API, ML2 Plugin) connected over the API network to Host A (Linuxbridge Agent), Host B (Hyper-V Agent), Host C (Open vSwitch Agent), and Host D (Open vSwitch Agent).

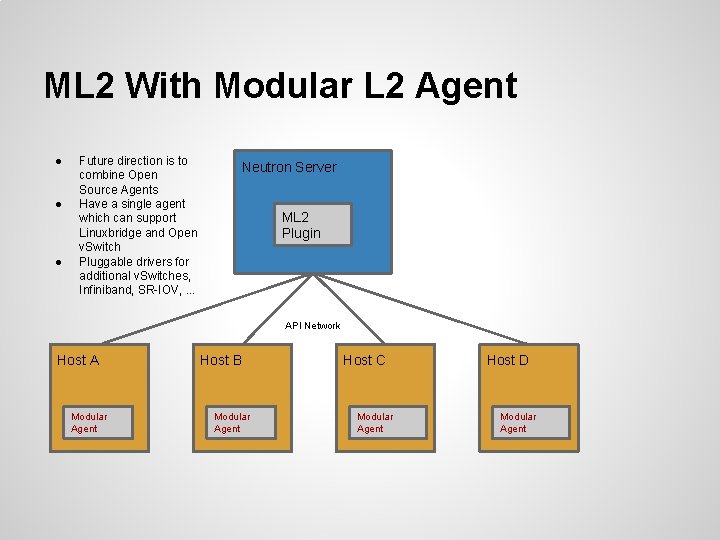

ML2 With Modular L2 Agent

- Future direction is to combine the open source agents
- Have a single agent which can support Linuxbridge and Open vSwitch
- Pluggable drivers for additional vSwitches, InfiniBand, SR-IOV, ...

Diagram: the Neutron Server (API, ML2 Plugin) connected over the API network to Hosts A, B, C, and D, each running the Modular Agent.

ML 2 Demo

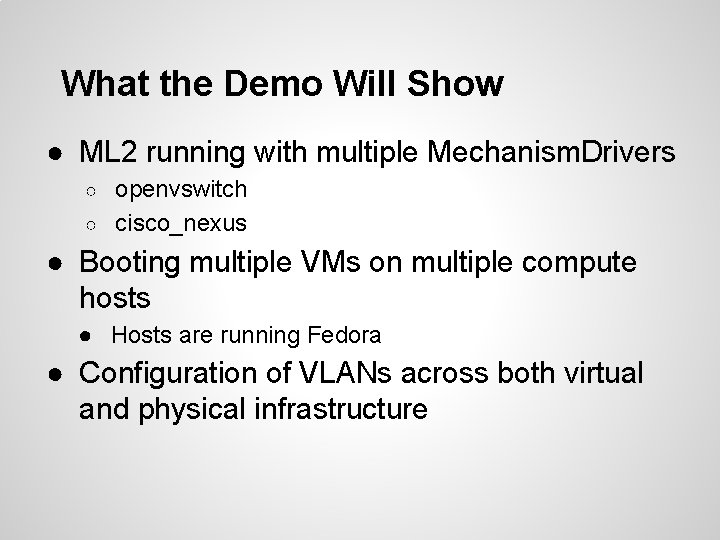

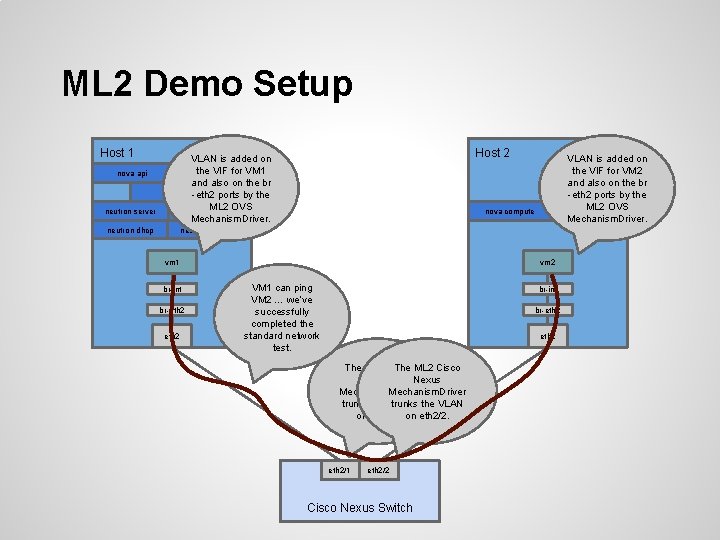

What the Demo Will Show

- ML2 running with multiple MechanismDrivers
  - openvswitch
  - cisco_nexus
- Booting multiple VMs on multiple compute hosts
- Hosts are running Fedora
- Configuration of VLANs across both virtual and physical infrastructure

ML2 Demo Setup

Diagram: Host 1 and Host 2 connected to a Cisco Nexus switch on ports eth2/1 and eth2/2. Services shown across the two hosts: nova api, neutron server, neutron dhcp, neutron l3 agent, nova compute, and the neutron ovs agent. vm1 and vm2 each attach through br-int and br-eth2.

- VLAN is added on the VIF for VM 1 and also on the br-eth2 ports by the ML2 OVS MechanismDriver.
- VLAN is added on the VIF for VM 2 and also on the br-eth2 ports by the ML2 OVS MechanismDriver.
- The ML2 Cisco Nexus MechanismDriver trunks the VLAN on eth2/1 and on eth2/2.
- VM 1 can ping VM 2 … we’ve successfully completed the standard network test.

Questions?
