Modern Information Retrieval Lecture 3: Boolean Retrieval

Lecture Overview
• Data Types
• Incidence Vectors
• Inverted Indexes
• Query Processing
• Query Optimization
• Discussion
• References
Marjan Ghazvininejad, Sharif University, Spring 2012

Lecture Overview: Data Types

Types of data
• Unstructured
• Semi-structured
• Structured

Unstructured data
• Typically refers to free text
• Allows
  - Keyword queries including operators
  - More sophisticated “concept” queries, e.g., find all web pages dealing with drug abuse
• Classic model for searching text documents

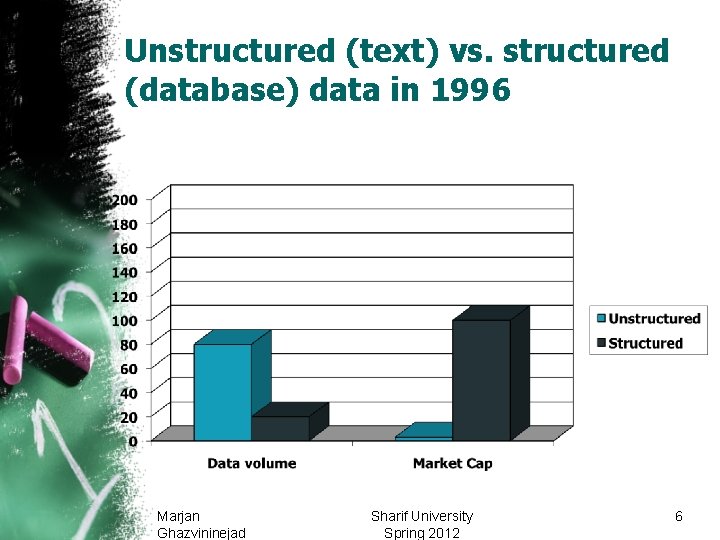

Unstructured (text) vs. structured (database) data in 1996

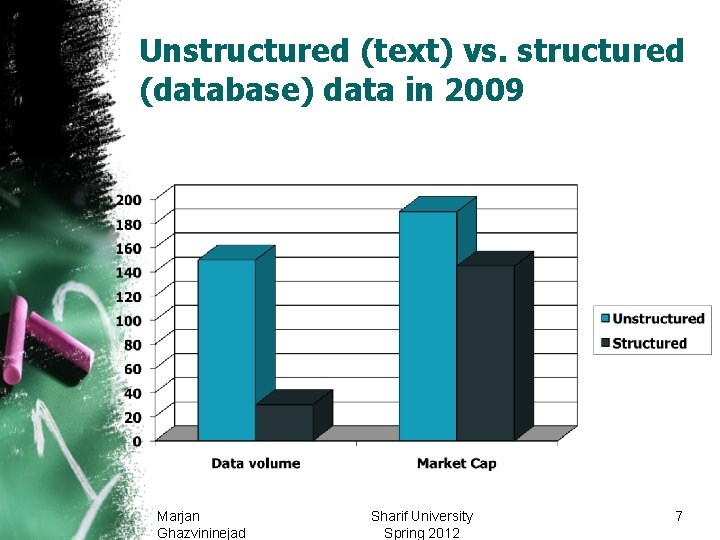

Unstructured (text) vs. structured (database) data in 2009

Sec. 1.1 Unstructured data in 1680
• Which plays of Shakespeare contain the words Brutus AND Caesar but NOT Calpurnia?
• One could grep all of Shakespeare’s plays for Brutus and Caesar, then strip out lines containing Calpurnia?
• Why is that not the answer?
  - Slow (for large corpora)
  - NOT Calpurnia is non-trivial
  - Other operations (e.g., find the word Romans near countrymen) are not feasible
  - Ranked retrieval (returning the best documents) is not possible; covered in later lectures

Semi-structured data
• In fact almost no data is “unstructured”
• E.g., this slide has distinctly identified zones such as the Title and Bullets
• Facilitates “semi-structured” search such as
  - Title contains data AND Bullets contain search
… to say nothing of linguistic structure

Lecture Overview: Incidence Vectors

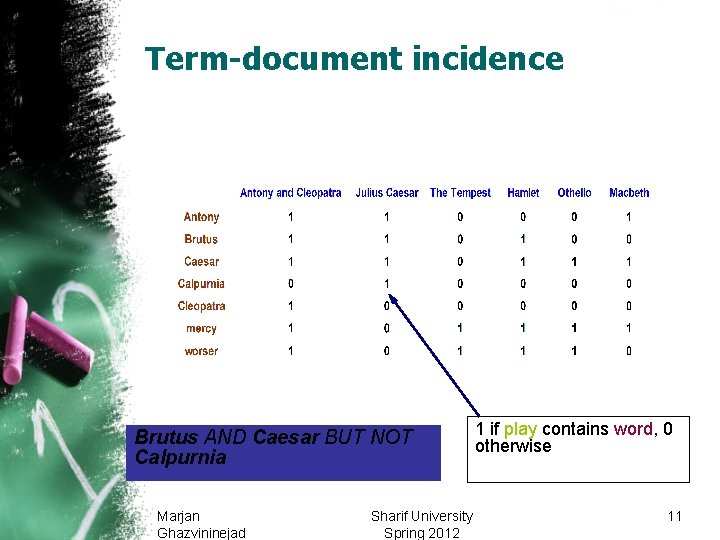

Sec. 1.1 Term-document incidence
Query: Brutus AND Caesar BUT NOT Calpurnia
Entry is 1 if the play contains the word, 0 otherwise

Sec. 1.1 Incidence vectors
• So we have a 0/1 vector for each term.
• To answer the query: take the vectors for Brutus, Caesar and Calpurnia (complemented), then bitwise AND them.
• 110100 AND 110111 AND 101111 = 100100.
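The bitwise computation above can be reproduced with Python integers used as bit vectors. This is a minimal sketch: the Calpurnia vector is stored uncomplemented (010000, whose complement is the 101111 on the slide), and treating the leftmost bit as play 1 is an assumption about the column order of the matrix figure.

```python
# Incidence vectors as integers; the leftmost of the 6 bits is play 1 (assumed order).
NUM_PLAYS = 6
ALL_PLAYS = (1 << NUM_PLAYS) - 1  # 0b111111, keeps the complement within 6 bits

brutus = 0b110100
caesar = 0b110111
calpurnia = 0b010000

# Brutus AND Caesar AND NOT Calpurnia; masking stops ~ from setting extra high bits.
result = brutus & caesar & (~calpurnia & ALL_PLAYS)

def matching_plays(bits: int, n: int = NUM_PLAYS) -> list[int]:
    """Return the 1-based positions of set bits, counting from the left."""
    return [i + 1 for i in range(n) if bits & (1 << (n - 1 - i))]

print(format(result, "06b"))   # 100100
print(matching_plays(result))  # [1, 4]
```

A single machine-word AND handles many documents at once, which is why incidence vectors are attractive for small collections.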

Sec. 1.1 Bigger collections
• Consider N = 1 million documents, each with about 1000 words.
• Avg 6 bytes/word including spaces/punctuation
  - 6 GB of data in the documents.
• Say there are M = 500K distinct terms among these.

Sec. 1.1 Can’t build the matrix
• A 500K × 1M matrix has half a trillion 0’s and 1’s.
• But it has no more than one billion 1’s.
  - The matrix is extremely sparse. Why?
• What’s a better representation?
  - We only record the 1 positions.
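The figures on the two collection-size slides can be checked with a short calculation that uses only the numbers they give (N = 10^6 documents of about 1000 words, 6 bytes per word, M = 5 × 10^5 terms). The bound on the 1's suggests one answer to the "Why?": a document of about 1000 tokens contains at most about 1000 distinct terms, so the matrix holds at most one 1 per token in the collection.

```latex
\begin{aligned}
\text{text size} &\approx 10^{6} \times 10^{3} \times 6\ \text{bytes} = 6 \times 10^{9}\ \text{bytes} \approx 6\ \text{GB} \\
\text{matrix cells} &= M \times N = (5 \times 10^{5}) \times 10^{6} = 5 \times 10^{11} \\
\text{number of 1's} &\le N \times 10^{3} = 10^{9} \\
\text{fraction of 1's} &\le \frac{10^{9}}{5 \times 10^{11}} = 2 \times 10^{-3} = 0.2\%
\end{aligned}
```

So at least 99.8% of the matrix entries would be 0, which is the waste that recording only the 1 positions avoids.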

Lecture Overview: Inverted Indexes

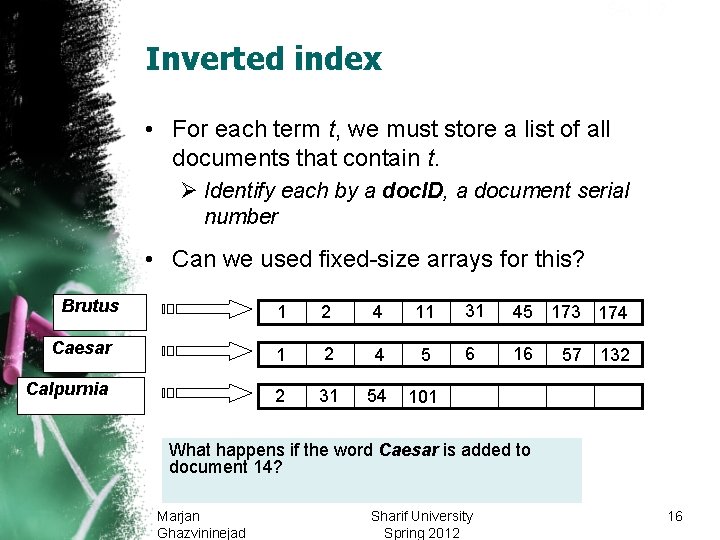

Sec. 1.2 Inverted index
• For each term t, we must store a list of all documents that contain t.
  - Identify each by a docID, a document serial number
• Can we use fixed-size arrays for this?
Brutus → 1 2 4 11 31 45 173 174
Caesar → 1 2 4 5 6 16 57 132
Calpurnia → 2 31 54 101
What happens if the word Caesar is added to document 14?

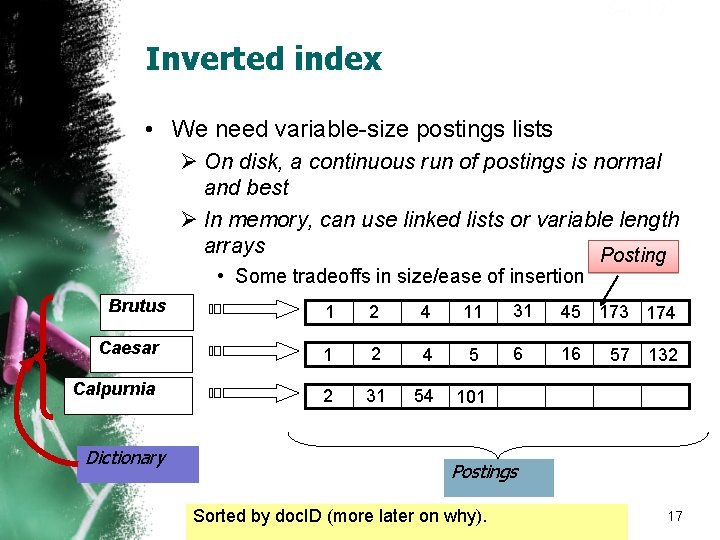

Sec. 1.2 Inverted index
• We need variable-size postings lists
  - On disk, a continuous run of postings is normal and best
  - In memory, can use linked lists or variable-length arrays
• Some tradeoffs in size/ease of insertion
Dictionary → Postings (each docID in a list is one posting):
Brutus → 1 2 4 11 31 45 173 174
Caesar → 1 2 4 5 6 16 57 132
Calpurnia → 2 31 54 101
Postings are sorted by docID (more later on why).
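One way to see what happens when Caesar is added to document 14, sketched in Python: each postings list is a variable-length array (a Python list) kept sorted by docID, and the standard `bisect` module finds the insertion point. The dictionary-of-lists layout and the lowercase term keys are illustrative in-memory choices, not the on-disk format the slide describes.

```python
import bisect

# Dictionary: term -> postings list, docIDs kept in increasing order.
index: dict[str, list[int]] = {
    "brutus": [1, 2, 4, 11, 31, 45, 173, 174],
    "caesar": [1, 2, 4, 5, 6, 16, 57, 132],
    "calpurnia": [2, 31, 54, 101],
}

def add_posting(index: dict[str, list[int]], term: str, doc_id: int) -> None:
    """Insert doc_id into term's postings, keeping the list sorted and duplicate-free."""
    postings = index.setdefault(term, [])
    pos = bisect.bisect_left(postings, doc_id)
    if pos == len(postings) or postings[pos] != doc_id:
        # The list grows by one; every later entry shifts right by one slot.
        postings.insert(pos, doc_id)

add_posting(index, "caesar", 14)
print(index["caesar"])  # [1, 2, 4, 5, 6, 14, 16, 57, 132]
```

Insertion costs time proportional to the list length because later entries shift, one side of the size/ease-of-insertion tradeoff; a linked list avoids the shift but spends extra space on pointers.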

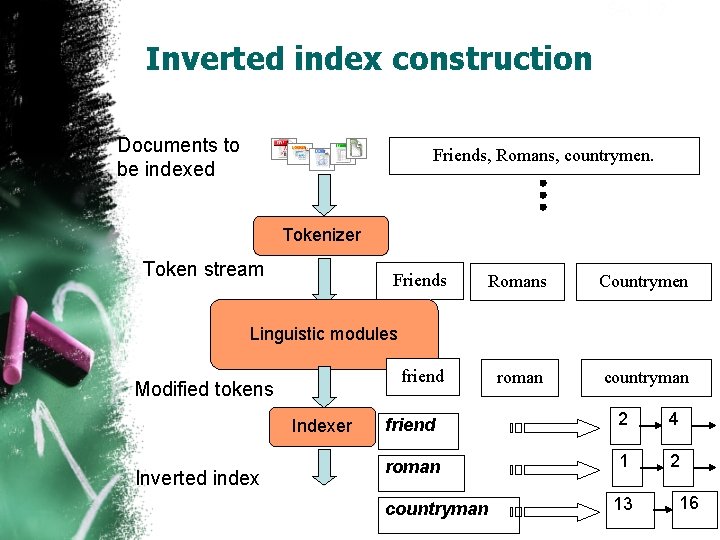

Sec. 1.2 Inverted index construction
Documents to be indexed: “Friends, Romans, countrymen.”
↓ Tokenizer
Token stream: Friends Romans Countrymen
↓ Linguistic modules
Modified tokens: friend roman countryman
↓ Indexer
Inverted index:
friend → 2 4
roman → 1 2
countryman → 13 16
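The first two stages of this pipeline can be sketched in Python as two small functions. Both are deliberately crude, hypothetical stand-ins chosen only to reproduce this slide's example: the tokenizer keeps runs of letters, and the "linguistic module" lowercases, rewrites a trailing "men" to "man" and drops a plural "s". Real systems use proper tokenizers and stemmers or lemmatizers.

```python
import re

def tokenize(text: str) -> list[str]:
    """Tokenizer: split raw text into runs of letters, dropping punctuation."""
    return re.findall(r"[A-Za-z]+", text)

def normalize(token: str) -> str:
    """Toy linguistic module (hypothetical): lowercase, map '-men' to '-man',
    and drop a plural '-s'."""
    term = token.lower()
    if term.endswith("men"):
        return term[:-3] + "man"
    if term.endswith("s") and len(term) > 3:
        return term[:-1]
    return term

tokens = tokenize("Friends, Romans, countrymen.")
print(tokens)                          # ['Friends', 'Romans', 'countrymen']
print([normalize(t) for t in tokens])  # ['friend', 'roman', 'countryman']
```

Rules this simple misfire on other words (for example "omen" would become "oman"), which is why the linguistic modules are a design problem of their own.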

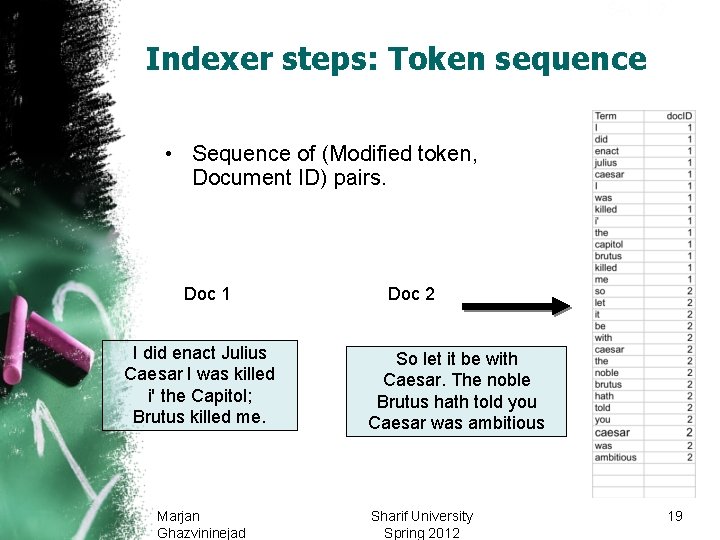

Sec. 1.2 Indexer steps: Token sequence
• Sequence of (modified token, document ID) pairs.
Doc 1: I did enact Julius Caesar I was killed i' the Capitol; Brutus killed me.
Doc 2: So let it be with Caesar. The noble Brutus hath told you Caesar was ambitious

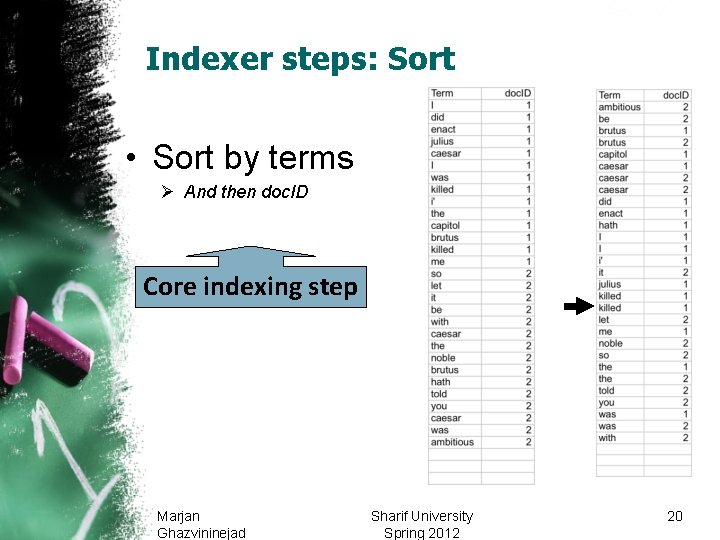

Sec. 1.2 Indexer steps: Sort
• Sort by terms
  - And then by docID
Core indexing step

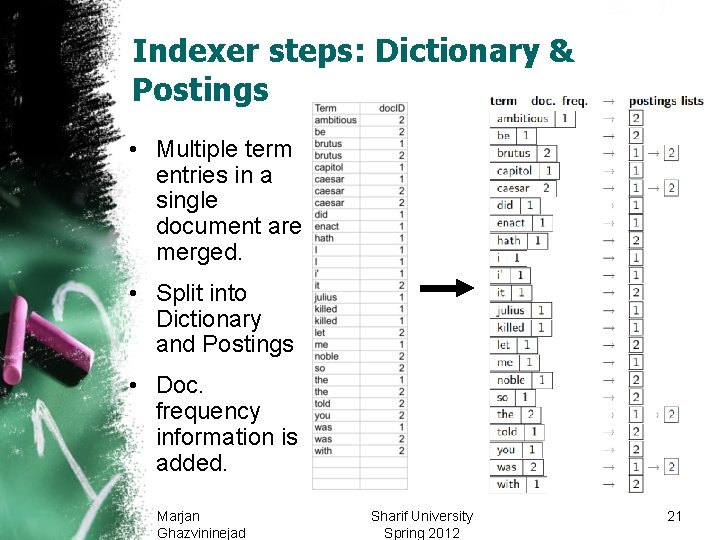

Sec. 1.2 Indexer steps: Dictionary & Postings
• Multiple term entries in a single document are merged.
• Split into Dictionary and Postings
• Document frequency information is added.
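The three indexer steps (token sequence, sort, merge into dictionary and postings) can be sketched in Python on the two documents from the token-sequence slide. The normalization here is an assumption made to keep the example short: strip punctuation from the ends of each whitespace-separated token and lowercase it, so the resulting terms may differ slightly from the slide figures.

```python
from itertools import groupby

# The two documents from the token-sequence slide.
docs: dict[int, str] = {
    1: "I did enact Julius Caesar I was killed i' the Capitol; Brutus killed me.",
    2: "So let it be with Caesar. The noble Brutus hath told you Caesar was ambitious",
}

def token_sequence(docs: dict[int, str]) -> list[tuple[str, int]]:
    """Step 1: emit (modified token, docID) pairs in document order."""
    pairs = []
    for doc_id, text in docs.items():
        for raw in text.split():
            term = raw.strip(".,;:!?").lower()  # assumed minimal normalization
            if term:
                pairs.append((term, doc_id))
    return pairs

def build_index(docs: dict[int, str]) -> dict[str, tuple[int, list[int]]]:
    """Steps 2 and 3: sort the pairs, then merge them into dictionary + postings."""
    pairs = sorted(token_sequence(docs))  # sort by term, then by docID
    index: dict[str, tuple[int, list[int]]] = {}
    for term, group in groupby(pairs, key=lambda pair: pair[0]):
        # Repeated entries of a term within one document collapse to one posting.
        postings = sorted({doc_id for _, doc_id in group})
        index[term] = (len(postings), postings)  # (document frequency, postings)
    return index

index = build_index(docs)
print(index["caesar"])  # (2, [1, 2])
print(index["killed"])  # (1, [1])
```

Because the pairs are sorted before grouping, the dictionary comes out in term order and every postings list comes out in docID order without any further sorting of the index.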

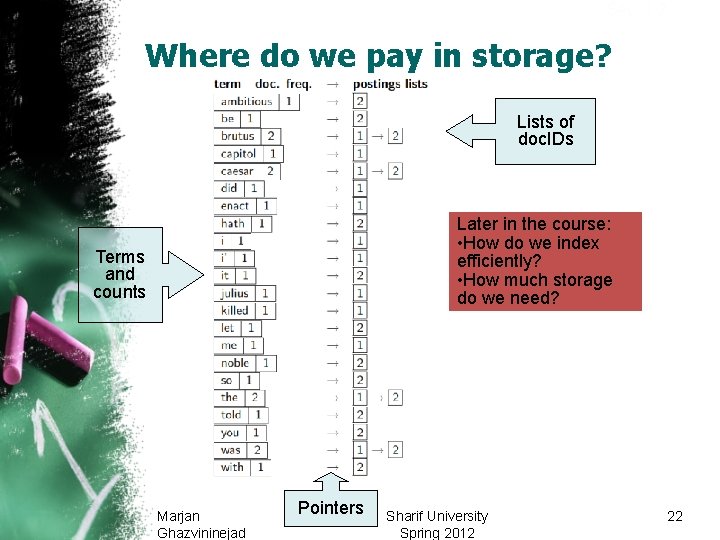

Sec. 1.2 Where do we pay in storage?
Storage goes to: terms and counts, pointers, lists of docIDs
Later in the course:
• How do we index efficiently?
• How much storage do we need?

Lecture Overview: Query Processing

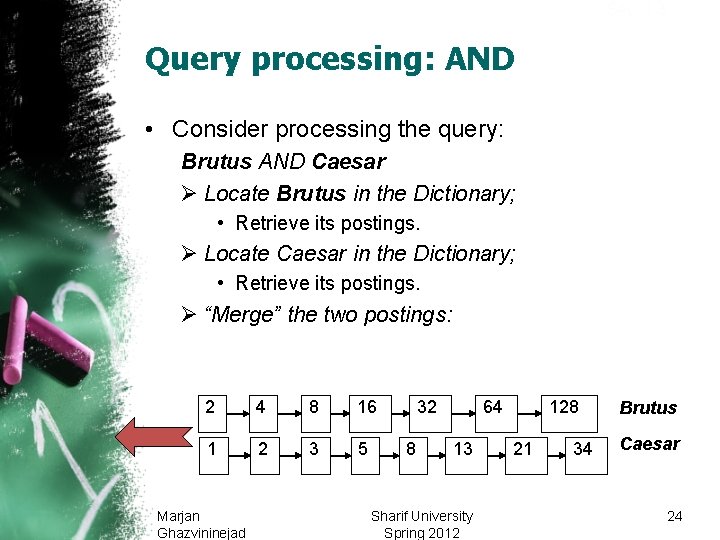

Sec. 1.3 Query processing: AND
• Consider processing the query: Brutus AND Caesar
  - Locate Brutus in the Dictionary; retrieve its postings.
  - Locate Caesar in the Dictionary; retrieve its postings.
  - “Merge” the two postings:
Brutus → 2 4 8 16 32 64 128
Caesar → 1 2 3 5 8 13 21 34

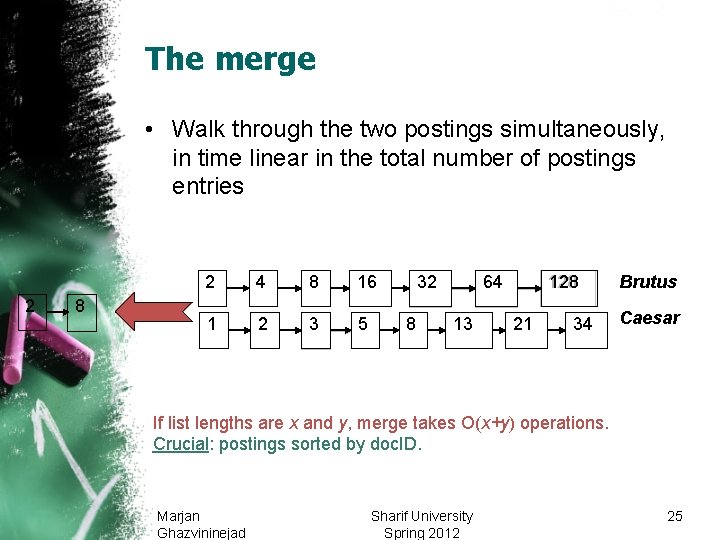

Sec. 1.3 The merge
• Walk through the two postings simultaneously, in time linear in the total number of postings entries
Brutus → 2 4 8 16 32 64 128
Caesar → 1 2 3 5 8 13 21 34
Result → 2 8
If the list lengths are x and y, the merge takes O(x+y) operations.
Crucial: postings sorted by docID.
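The merge can be written as a two-pointer walk; here is a Python sketch using the Brutus and Caesar postings from the slide. It assumes both lists are sorted by docID: each step advances past the smaller docID, which can only be correct if no later entry in that list could still match.

```python
def intersect(p1: list[int], p2: list[int]) -> list[int]:
    """Intersect two docID-sorted postings lists in O(x + y) steps."""
    answer = []
    i = j = 0
    while i < len(p1) and j < len(p2):
        if p1[i] == p2[j]:
            answer.append(p1[i])  # docID in both lists
            i += 1
            j += 1
        elif p1[i] < p2[j]:
            i += 1                # advance the list with the smaller docID
        else:
            j += 1
    return answer

brutus = [2, 4, 8, 16, 32, 64, 128]
caesar = [1, 2, 3, 5, 8, 13, 21, 34]
print(intersect(brutus, caesar))  # [2, 8]
```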

Sec. 1.3 Boolean queries: Exact match
• The Boolean retrieval model lets us pose any query that is a Boolean expression:
  - Boolean queries use AND, OR and NOT to join query terms
• Views each document as a set of words
• Is precise: a document either matches the condition or it does not.
  - Perhaps the simplest model to build an IR system on
• Primary commercial retrieval tool for 3 decades.
• Many search systems you still use are Boolean:
  - Email, library catalog, Mac OS X Spotlight

Boolean queries
• Many professional searchers still like Boolean search
  - You know exactly what you are getting
• But that doesn’t mean it actually works better…

Sec. 1.3 Boolean queries: More general merges
• Exercise: Adapt the merge for the queries:
  - Brutus AND NOT Caesar
  - Brutus OR NOT Caesar
Can we still run through the merge in time O(x+y)? What can we achieve?
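A possible adaptation, sketched in Python with the Brutus and Caesar postings from the merge slides: AND NOT is still a single linear walk over both lists, whereas OR NOT must list every document that lacks Caesar, so its cost depends on the collection size N rather than only on x + y. The collection size of 140 documents used below is a hypothetical value.

```python
def and_not(p1: list[int], p2: list[int]) -> list[int]:
    """p1 AND NOT p2 for docID-sorted lists: one linear walk, O(x + y)."""
    answer = []
    i = j = 0
    while i < len(p1):
        if j < len(p2) and p2[j] < p1[i]:
            j += 1                # skip p2 entries smaller than the current p1 entry
        elif j < len(p2) and p2[j] == p1[i]:
            i += 1                # docID appears in both lists: exclude it
            j += 1
        else:
            answer.append(p1[i])  # no matching p2 entry: keep it
            i += 1
    return answer

def or_not(p1: list[int], p2: list[int], num_docs: int) -> list[int]:
    """p1 OR NOT p2 over docIDs 1..num_docs.

    Every document outside p2 qualifies, so the output (and the work)
    grows with the collection size N = num_docs, not just with x + y.
    """
    excluded = set(p2) - set(p1)  # only docs in p2 but not in p1 are dropped
    return [d for d in range(1, num_docs + 1) if d not in excluded]

brutus = [2, 4, 8, 16, 32, 64, 128]
caesar = [1, 2, 3, 5, 8, 13, 21, 34]
print(and_not(brutus, caesar))                    # [4, 16, 32, 64, 128]
print(len(or_not(brutus, caesar, num_docs=140)))  # 134
```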

Sec. 1.3 Merging
What about an arbitrary Boolean formula?
(Brutus OR Caesar) AND NOT (Antony OR Cleopatra)
• Can we always merge in “linear” time?
  - Linear in what?
• Can we do better?
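One way to handle such a formula is to evaluate its parse tree bottom-up, running one linear merge per operator. The Python sketch below uses a single merge routine whose three flags select AND, OR or AND NOT. The tuple representation of queries is an illustrative choice, and the Antony and Cleopatra postings are hypothetical, made up for this example. Each merge is linear in the lengths of its two inputs; whether the whole formula runs in time linear in the total postings size is the question the slide leaves open.

```python
from typing import Union

# A query is a term, or a tuple (operator, left_subquery, right_subquery).
Query = Union[str, tuple]

# Flags: (keep docIDs only in left, keep docIDs in both, keep docIDs only in right)
OPS = {
    "AND": (False, True, False),
    "OR": (True, True, True),
    "AND_NOT": (True, False, False),
}

def merge(p1: list[int], p2: list[int], left_only: bool, both: bool, right_only: bool) -> list[int]:
    """One linear walk over two docID-sorted lists; the flags pick which cases to keep."""
    out = []
    i = j = 0
    while i < len(p1) or j < len(p2):
        if j == len(p2) or (i < len(p1) and p1[i] < p2[j]):
            if left_only:
                out.append(p1[i])
            i += 1
        elif i == len(p1) or p2[j] < p1[i]:
            if right_only:
                out.append(p2[j])
            j += 1
        else:  # same docID in both lists
            if both:
                out.append(p1[i])
            i += 1
            j += 1
    return out

def evaluate(query: Query, index: dict[str, list[int]]) -> list[int]:
    """Evaluate a query tree bottom-up, one merge per operator node."""
    if isinstance(query, str):
        return index.get(query, [])
    op, left, right = query
    return merge(evaluate(left, index), evaluate(right, index), *OPS[op])

index = {
    "brutus": [2, 4, 8, 16, 32, 64, 128],
    "caesar": [1, 2, 3, 5, 8, 13, 21, 34],
    "antony": [2, 13],      # hypothetical postings
    "cleopatra": [13, 64],  # hypothetical postings
}
query = ("AND_NOT", ("OR", "brutus", "caesar"), ("OR", "antony", "cleopatra"))
print(evaluate(query, index))  # [1, 3, 4, 5, 8, 16, 21, 32, 34, 128]
```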

Lecture Overview: Query Optimization

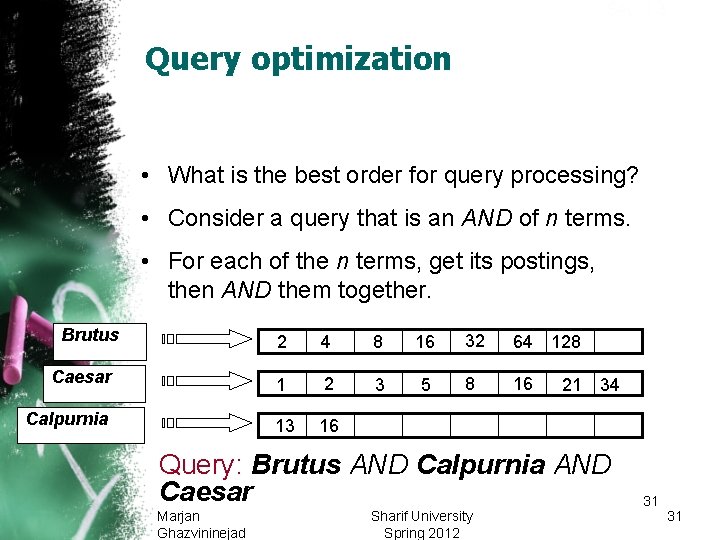

Sec. 1.3 Query optimization
• What is the best order for query processing?
• Consider a query that is an AND of n terms.
• For each of the n terms, get its postings, then AND them together.
Brutus → 2 4 8 16 32 64 128
Caesar → 1 2 3 5 8 16 21 34
Calpurnia → 13 16
Query: Brutus AND Calpurnia AND Caesar

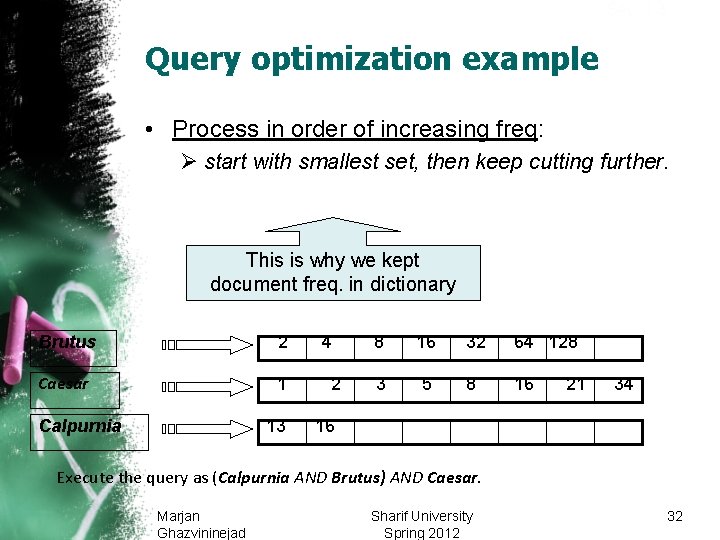

Sec. 1.3 Query optimization example
• Process in order of increasing freq:
  - start with the smallest set, then keep cutting further.
This is why we kept document freq. in the dictionary.
Brutus → 2 4 8 16 32 64 128
Caesar → 1 2 3 5 8 16 21 34
Calpurnia → 13 16
Execute the query as (Calpurnia AND Brutus) AND Caesar.
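A Python sketch of this heuristic, using the postings from the two query-optimization slides. It assumes a term's document frequency equals the length of its postings list (a real index would read it from the dictionary). For brevity, a set-membership filter stands in for the linear merge; the point here is the order of processing, not the merge itself.

```python
def processing_order(terms: list[str], index: dict[str, list[int]]) -> list[str]:
    """Order terms by increasing document frequency (postings-list length)."""
    return sorted(terms, key=lambda t: len(index.get(t, [])))

def intersect_in_freq_order(terms: list[str], index: dict[str, list[int]]) -> list[int]:
    """AND the terms together, starting from the rarest term and cutting further."""
    if not terms:
        return []
    order = processing_order(terms, index)
    result = list(index.get(order[0], []))
    for term in order[1:]:
        if not result:  # empty intermediate result: nothing left to cut
            break
        members = set(index.get(term, []))  # stands in for the linear merge
        result = [d for d in result if d in members]
    return result

index = {
    "brutus": [2, 4, 8, 16, 32, 64, 128],
    "caesar": [1, 2, 3, 5, 8, 16, 21, 34],
    "calpurnia": [13, 16],
}
query = ["brutus", "calpurnia", "caesar"]
print(processing_order(query, index))         # ['calpurnia', 'brutus', 'caesar']
print(intersect_in_freq_order(query, index))  # [16]
```

Starting from Calpurnia keeps every intermediate result at most two docIDs long, and the early exit means a rare term with no surviving matches ends the query immediately.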

Sec. 1.3 More general optimization
• E.g., (madding OR crowd) AND (ignoble OR strife)
• Get doc. freq.’s for all terms.
• Estimate the size of each OR by the sum of its doc. freq.’s (conservative).
• Process in increasing order of OR sizes.
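The estimate is conservative because the size of a union never exceeds the sum of its parts, |A OR B| ≤ |A| + |B|. A minimal Python sketch of the planning step follows; the document frequencies are hypothetical numbers invented for illustration, not statistics from any collection.

```python
def plan_or_groups(groups: list[list[str]], df: dict[str, int]) -> list[tuple[list[str], int]]:
    """Pair each OR-group with its estimated size and sort ascending.

    The estimate is the sum of the members' document frequencies,
    an upper bound on the true size of the OR.
    """
    estimates = [(group, sum(df.get(term, 0) for term in group)) for group in groups]
    return sorted(estimates, key=lambda pair: pair[1])

# Hypothetical document frequencies, for illustration only.
df = {"madding": 3, "crowd": 400, "ignoble": 50, "strife": 80}
plan = plan_or_groups([["madding", "crowd"], ["ignoble", "strife"]], df)
for group, size in plan:
    print(" OR ".join(group), size)
# ignoble OR strife 130
# madding OR crowd 403
```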

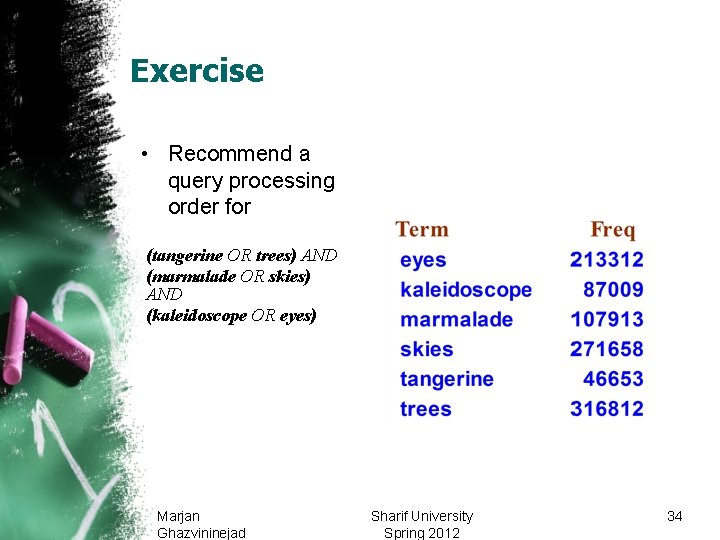

Exercise
• Recommend a query processing order for
(tangerine OR trees) AND (marmalade OR skies) AND (kaleidoscope OR eyes)

Query processing exercises
• Exercise: If the query is friends AND romans AND (NOT countrymen), how could we use the freq of countrymen?
• Exercise: Extend the merge to an arbitrary Boolean query. Can we always guarantee execution in time linear in the total postings size?
• Hint: Begin with the case of a Boolean formula query where each term appears only once in the query.

Exercise
• Try the search feature at http://www.rhymezone.com/shakespeare/
• Write down five search features you think it could do better

What’s ahead in IR? Beyond term search
• What about phrases?
  - Stanford University
• Proximity: Find Gates NEAR Microsoft.
  - Need index to capture position information in docs.
• Zones in documents: Find documents with (author = Ullman) AND (text contains automata).

Evidence accumulation
• 1 vs. 0 occurrence of a search term
  - 2 vs. 1 occurrence
  - 3 vs. 2 occurrences, etc.
  - Usually more seems better
• Need term frequency information in docs

Ranking search results
• Boolean queries give inclusion or exclusion of docs.
• Often we want to rank/group results
  - Need to measure proximity from query to each doc.
  - Need to decide whether docs presented to user are singletons, or a group of docs covering various aspects of the query.

Clustering, classification and ranking
• Clustering: Given a set of docs, group them into clusters based on their contents.
• Classification: Given a set of topics, plus a new doc D, decide which topic(s) D belongs to.
• Ranking: Can we learn how to best order a set of documents, e.g., a set of search results?

Lecture Overview: Discussion

Next Time
• ???
• Readings
  - Chapter ??? in IR text (????)
  - Joyce & Needham “The Thesaurus Approach to Information Retrieval” (in Readings book)
  - Luhn “The Automatic Derivation of Information Retrieval Encodements from Machine-Readable Texts” (in Readings)
  - Doyle “Indexing and Abstracting by Association, Pt I” (in Readings)

Lecture Overview: References

Resources for today’s lecture
• Introduction to Information Retrieval, chapter 1
• Shakespeare:
  - http://www.rhymezone.com/shakespeare/
  - Try the neat browse by keyword sequence feature!
• Managing Gigabytes, chapter 3.2
• Modern Information Retrieval, chapter 8.2
