
11-741: Information Retrieval Models: Boolean and Vector Space
Jamie Callan
Carnegie Mellon University
callan@cs.cmu.edu
© 2003, Jamie Callan

Lecture Outline
• Introduction to retrieval models
• Exact-match retrieval
  – Unranked Boolean retrieval model
  – Ranked Boolean retrieval model
• Best-match retrieval
  – Vector space retrieval model
    » Term weights
    » Boolean query operators (p-norm)

What is a Retrieval Model?
• A model is an abstract representation of a process or object
  – Used to study properties, draw conclusions, make predictions
  – The quality of the conclusions depends upon how closely the model represents reality
• A retrieval model describes the human and computational processes involved in ad-hoc retrieval
  – Example: A model of human information-seeking behavior
  – Example: A model of how documents are ranked computationally
  – Components: Users, information needs, queries, documents, relevance assessments, …
  – Retrieval models define relevance, explicitly or implicitly

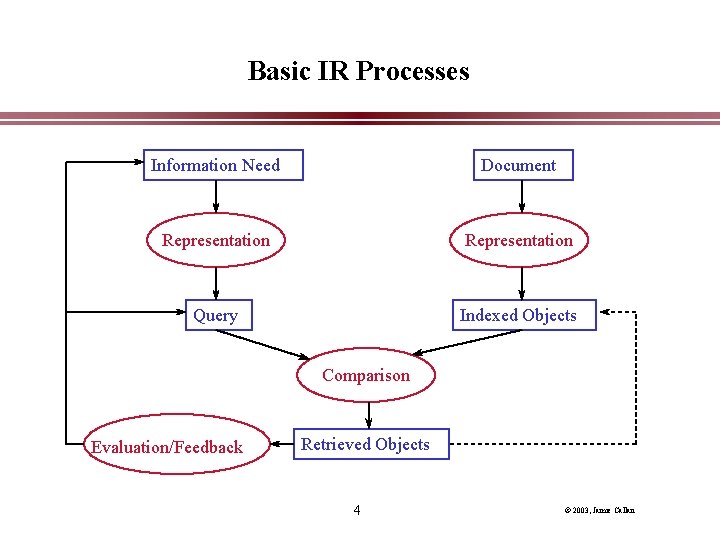

Basic IR Processes
[Figure: diagram of the basic IR processes, with components Information Need, Document, Representation, Query, Indexed Objects, Comparison, Retrieved Objects, and Evaluation/Feedback]

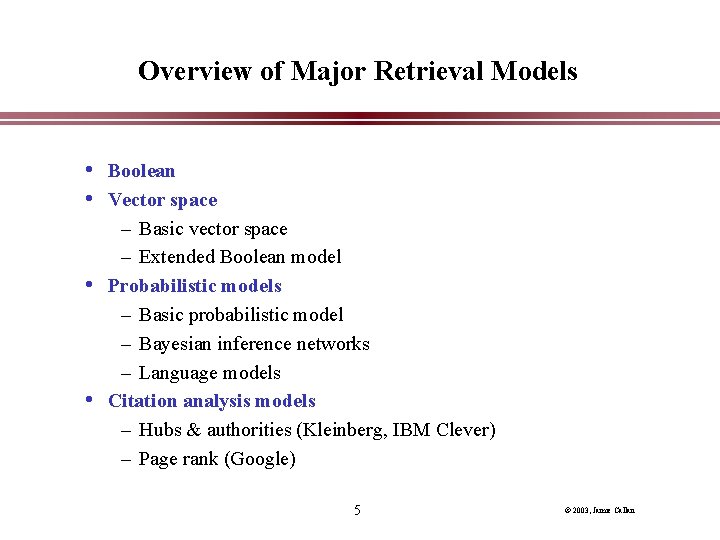

Overview of Major Retrieval Models
• Boolean
• Vector space
  – Basic vector space
  – Extended Boolean model
• Probabilistic models
  – Basic probabilistic model
  – Bayesian inference networks
  – Language models
• Citation analysis models
  – Hubs & authorities (Kleinberg, IBM Clever)
  – PageRank (Google)

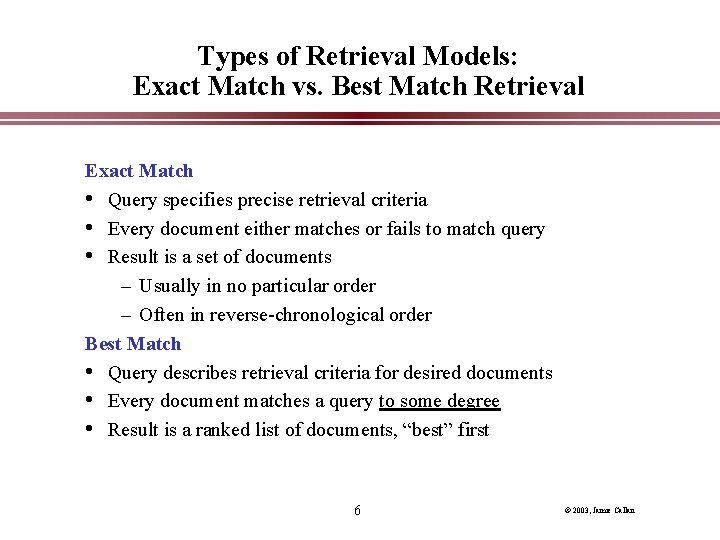

Types of Retrieval Models: Exact Match vs. Best Match Retrieval
Exact Match
• Query specifies precise retrieval criteria
• Every document either matches or fails to match the query
• Result is a set of documents
  – Usually in no particular order
  – Often in reverse-chronological order
Best Match
• Query describes retrieval criteria for desired documents
• Every document matches a query to some degree
• Result is a ranked list of documents, “best” first

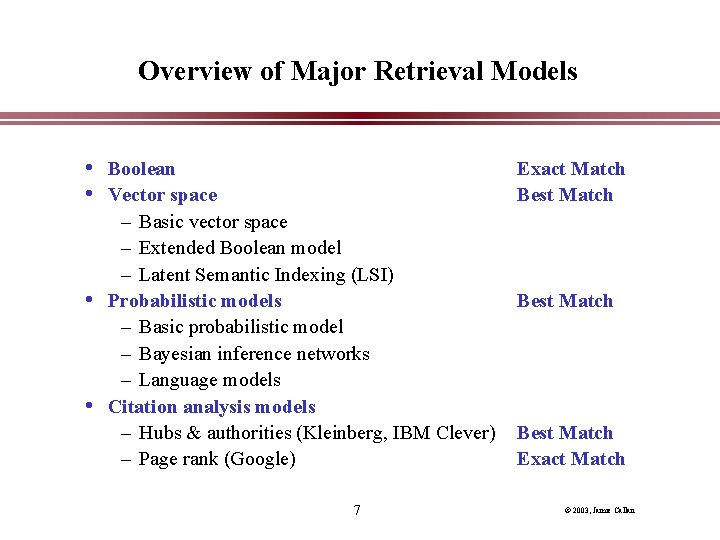

Overview of Major Retrieval Models
[Slide annotations group the models below into Exact Match and Best Match]
• Boolean
• Vector space
  – Basic vector space
  – Extended Boolean model
  – Latent Semantic Indexing (LSI)
• Probabilistic models
  – Basic probabilistic model
  – Bayesian inference networks
  – Language models
• Citation analysis models
  – Hubs & authorities (Kleinberg, IBM Clever)
  – PageRank (Google)

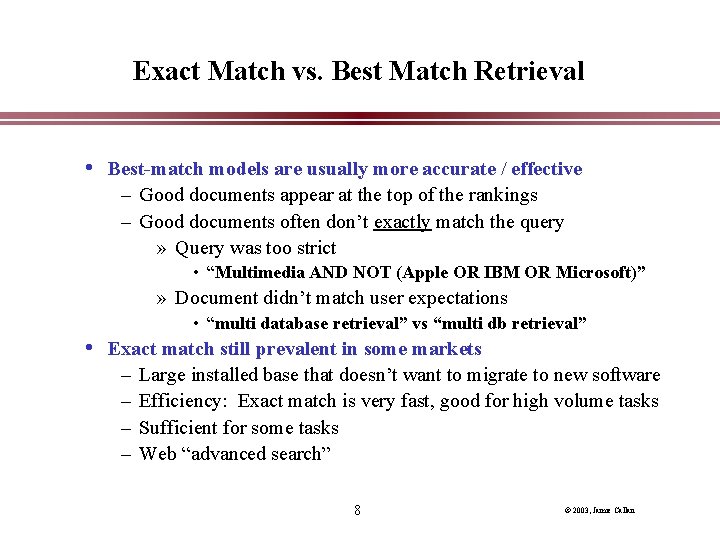

Exact Match vs. Best Match Retrieval
• Best-match models are usually more accurate / effective
  – Good documents appear at the top of the rankings
  – Good documents often don’t exactly match the query
    » Query was too strict
      • “Multimedia AND NOT (Apple OR IBM OR Microsoft)”
    » Document didn’t match user expectations
      • “multi database retrieval” vs “multi db retrieval”
• Exact match is still prevalent in some markets
  – Large installed base that doesn’t want to migrate to new software
  – Efficiency: Exact match is very fast, good for high-volume tasks
  – Sufficient for some tasks
  – Web “advanced search”

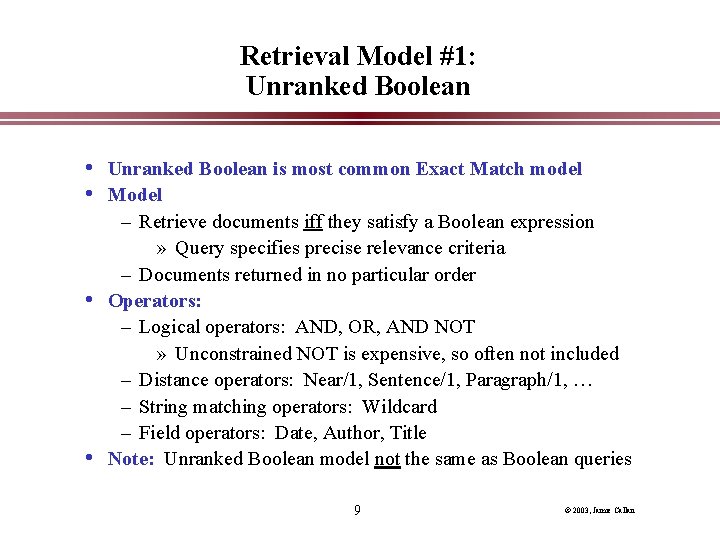

Retrieval Model #1: Unranked Boolean
• Unranked Boolean is the most common Exact Match model
• Model
  – Retrieve documents iff they satisfy a Boolean expression
    » Query specifies precise relevance criteria
  – Documents returned in no particular order
• Operators:
  – Logical operators: AND, OR, AND NOT
    » Unconstrained NOT is expensive, so often not included
  – Distance operators: Near/1, Sentence/1, Paragraph/1, …
  – String matching operators: Wildcard
  – Field operators: Date, Author, Title
• Note: The unranked Boolean model is not the same as Boolean queries

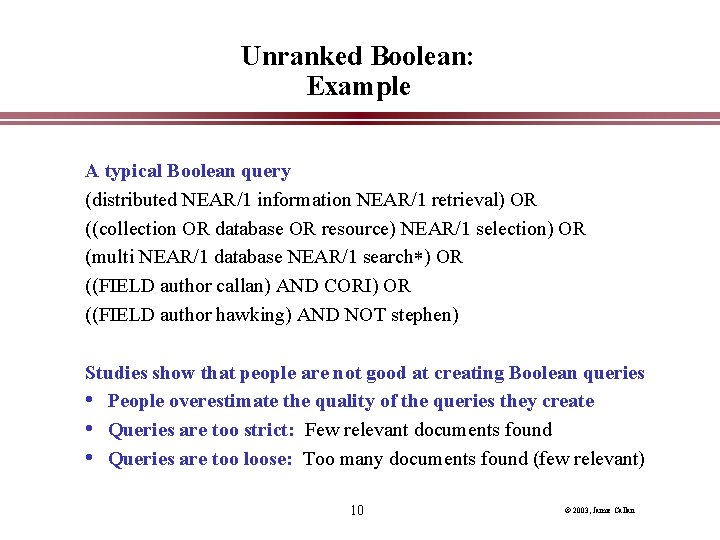
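A minimal sketch of the unranked Boolean model, assuming a toy in-memory inverted index; the documents and helper names (`build_index`, `postings`, `and_`, `or_`, `and_not`) are hypothetical, not the API of WESTLAW or any other system. Each term maps to the set of documents that contain it, so AND, OR, and AND NOT become set intersection, union, and difference, and the answer is an unordered set.

```python
from collections import defaultdict


def build_index(docs):
    """Map each lowercased term to the set of ids of documents containing it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in text.lower().split():
            index[term].add(doc_id)
    return index


def postings(index, term):
    """Documents containing `term` (empty set if the term is unknown)."""
    return index.get(term, set())


def and_(a, b):
    return a & b  # intersection


def or_(a, b):
    return a | b  # union


def and_not(a, b):
    # Only removes documents from `a`, so it never has to enumerate the
    # whole collection the way an unconstrained NOT would.
    return a - b


docs = {
    1: "multimedia retrieval on apple hardware",
    2: "multimedia database retrieval",
    3: "distributed information retrieval",
}
index = build_index(docs)

# multimedia AND NOT (apple OR microsoft)
result = and_not(postings(index, "multimedia"),
                 or_(postings(index, "apple"), postings(index, "microsoft")))
print(result)  # {2}: a set, so there is no ranking

both = and_(postings(index, "multimedia"), postings(index, "retrieval"))
print(both)    # {1, 2}
```

Restricting NOT to the binary AND NOT form mirrors the slide's note that unconstrained NOT is expensive: the difference `a - b` is bounded by the size of `a`.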

Unranked Boolean: Example
A typical Boolean query:
(distributed NEAR/1 information NEAR/1 retrieval) OR ((collection OR database OR resource) NEAR/1 selection) OR (multi NEAR/1 database NEAR/1 search) OR ((FIELD author callan) AND CORI) OR ((FIELD author hawking) AND NOT stephen)
Studies show that people are not good at creating Boolean queries
• People overestimate the quality of the queries they create
• Queries are too strict: Few relevant documents found
• Queries are too loose: Too many documents found (few relevant)

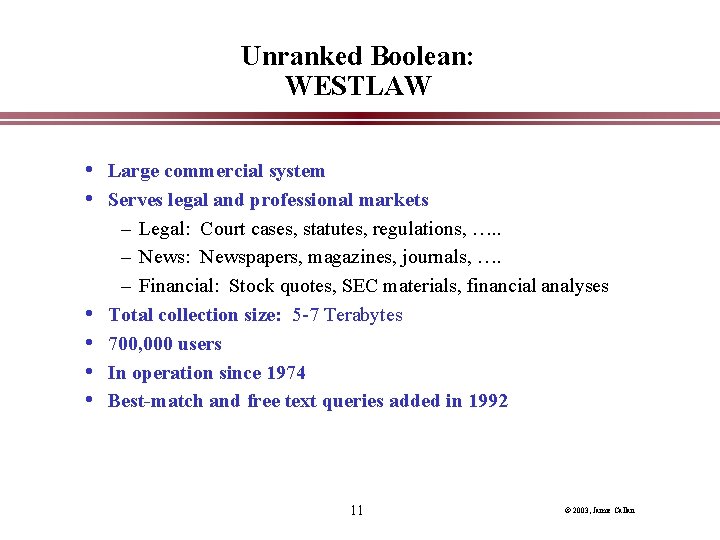
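Distance operators such as NEAR/1 need word positions, not just document sets. A minimal sketch, assuming NEAR/k means the two terms occur within k word positions of each other in either order (real systems differ: some are ordered, some count intervening words); only the binary form is shown, not chained NEAR, and the documents and function names are hypothetical.

```python
from collections import defaultdict


def build_positional_index(docs):
    """term -> {doc_id: [word positions where the term occurs]}"""
    index = defaultdict(lambda: defaultdict(list))
    for doc_id, text in docs.items():
        for pos, term in enumerate(text.lower().split()):
            index[term][doc_id].append(pos)
    return index


def near(index, t1, t2, k):
    """Ids of documents where some occurrence of t1 is within k positions of t2."""
    p1 = index.get(t1, {})
    p2 = index.get(t2, {})
    hits = set()
    for doc_id in p1.keys() & p2.keys():  # documents containing both terms
        if any(abs(i - j) <= k for i in p1[doc_id] for j in p2[doc_id]):
            hits.add(doc_id)
    return hits


docs = {
    1: "distributed information retrieval systems",
    2: "information about distributed systems",
    3: "retrieval of distributed and shared information",
}
index = build_positional_index(docs)
print(near(index, "distributed", "information", 1))  # {1}
print(near(index, "distributed", "information", 2))  # {1, 2}
```

The pairwise comparison is quadratic in the number of occurrences per document; a production index would merge the two sorted position lists instead.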

Unranked Boolean: WESTLAW
• Large commercial system
• Serves legal and professional markets
  – Legal: Court cases, statutes, regulations, …
  – News: Newspapers, magazines, journals, …
  – Financial: Stock quotes, SEC materials, financial analyses
• Total collection size: 5-7 terabytes
• 700,000 users
• In operation since 1974
• Best-match and free-text queries added in 1992

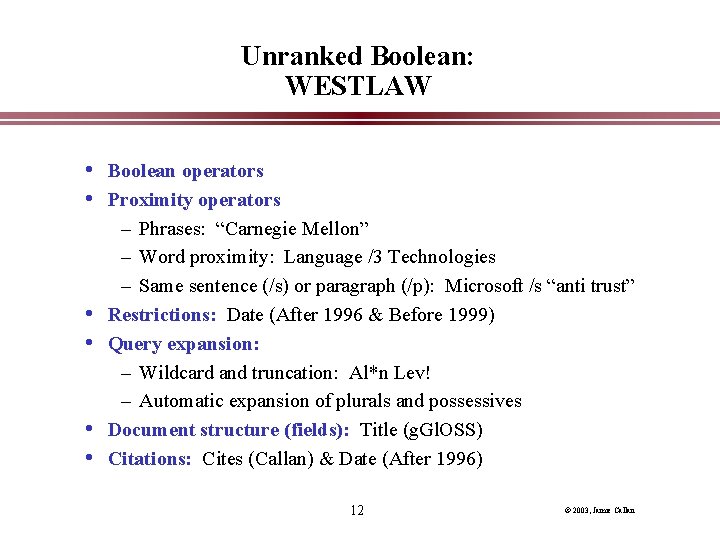

Unranked Boolean: WESTLAW
• Boolean operators
• Proximity operators
  – Phrases: “Carnegie Mellon”
  – Word proximity: Language /3 Technologies
  – Same sentence (/s) or paragraph (/p): Microsoft /s “anti trust”
• Restrictions: Date (After 1996 & Before 1999)
• Query expansion:
  – Wildcard and truncation: Al*n Lev!
  – Automatic expansion of plurals and possessives
• Document structure (fields): Title (gGlOSS)
• Citations: Cites (Callan) & Date (After 1996)

Unranked Boolean: WESTLAW
• Queries are typically developed incrementally
  – Implicit relevance feedback
    V1: machine AND learning
    V2: (machine AND learning) OR (neural AND networks) OR (decision AND tree)
    V3: (machine AND learning) OR (neural AND networks) OR (decision AND tree) AND C4.5 OR Ripper OR EG OR EM
• Queries are complex
  – Proximity operators used often
  – NOT is rare
• Queries are long (9-10 words, on average)

Unranked Boolean: WESTLAW
• Two stopword lists: 1 for indexing, 50 for queries
• Legal thesaurus: To help people expand queries
• Document ordering: Determined by user (often date)
• Query term highlighting: Helps people see how a document matched
• Performance:
  – Response time controlled by varying amount of parallelism
  – Average response time set to 7 seconds
    » Even for collections larger than 100 GB

Unranked Boolean: Summary
Advantages:
• Very efficient
• Predictable, easy to explain
• Structured queries
• Works well when searchers know exactly what is wanted
Disadvantages:
• Most people find it difficult to create good Boolean queries
  – Difficulty increases with size of collection
• Precision and recall usually have strong inverse correlation
• Predictability of results causes people to overestimate recall
  – Documents that are “close” are not retrieved

Term Weights: A Brief Introduction
• The words of a text are not equally indicative of its meaning
  “Most scientists think that butterflies use the position of the sun in the sky as a kind of compass that allows them to determine which way is north. Scientists think that butterflies may use other cues, such as the earth's magnetic field, but we have a lot to learn about monarchs' sense of direction.”
  – Important: Butterflies, monarchs, scientists, direction, compass
  – Unimportant: Most, think, kind, sky, determine, cues, learn
• Term weights reflect the (estimated) importance of each term
• There are many variations on how they are calculated
  – Historically it was tf weights
  – The standard approach for many IR systems is tf.idf weights
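To see why raw tf weights alone were superseded, this short sketch counts term frequencies in the butterfly passage, assuming a simple tokenizer that lowercases and keeps runs of letters (an assumption, not any particular system's tokenizer). The most frequent word is the function word “the”, while the important term “monarchs” occurs only once.

```python
import re
from collections import Counter

passage = (
    "Most scientists think that butterflies use the position of the sun in "
    "the sky as a kind of compass that allows them to determine which way is "
    "north. Scientists think that butterflies may use other cues, such as the "
    "earth's magnetic field, but we have a lot to learn about monarchs' sense "
    "of direction."
)

# Lowercase and keep runs of letters; "earth's" becomes "earth" + "s".
tokens = re.findall(r"[a-z]+", passage.lower())
tf = Counter(tokens)

print(tf.most_common(1))  # [('the', 4)]
for term in ("butterflies", "scientists", "monarchs", "compass"):
    print(term, tf[term])
```

Frequency within one text says nothing about how common a word is everywhere, which is the gap the idf factor of tf.idf is meant to fill.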

Retrieval Model #2: Ranked Boolean
• Ranked Boolean is another common Exact Match retrieval model
• Model
  – Retrieve documents iff they satisfy a Boolean expression
    » Query specifies precise relevance criteria
  – Documents returned ranked by frequency of query terms
• Operators:
  – Logical operators: AND, OR, AND NOT
    » Unconstrained NOT is expensive, so often not included
  – Distance operators: Proximity
  – String matching operators: Wildcard
  – Field operators: Date, Author, Title

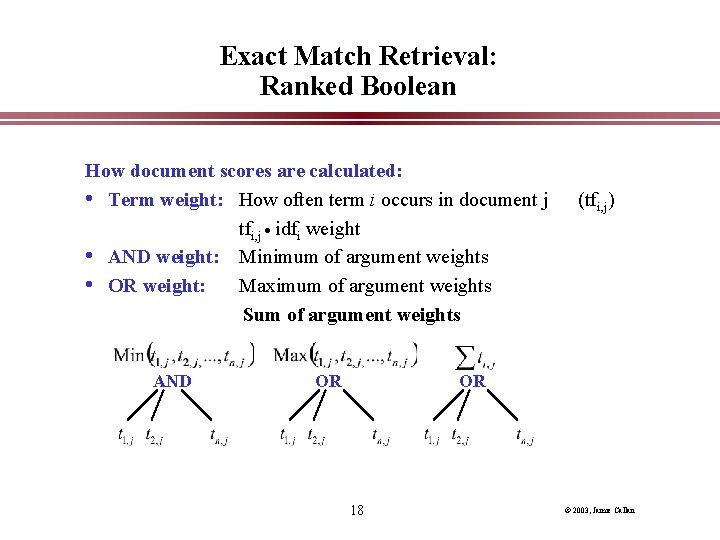

Exact Match Retrieval: Ranked Boolean
How document scores are calculated:
• Term weight: How often term $i$ occurs in document $j$ ($tf_{i,j}$), or a $tf_{i,j} \cdot idf_i$ weight
• AND weight: Minimum of argument weights
• OR weight: Maximum of argument weights, or sum of argument weights
[Figure: example query tree of AND and OR nodes with $tf_{i,j}$ weights at the leaves]
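A minimal sketch of ranked Boolean scoring, assuming tf as the term weight, a query written as nested tuples (a hypothetical representation), AND = minimum, and OR = maximum with the sum as an optional alternative. Because tf is 0 for an absent term, a score of 0 means the document fails the Boolean expression, so only documents with a positive score are returned; the rest are ranked by how redundantly they satisfy the query.

```python
from collections import Counter


def score(node, tf, or_combine=max):
    """Ranked Boolean score of one document.

    node: a term (str) or a tuple ("AND" | "OR", child, child, ...)
    tf:   Counter of term frequencies for the document (tf_{i,j})
    or_combine: max (default) or sum, applied at OR nodes
    """
    if isinstance(node, str):
        return tf[node]                # term weight = tf; 0 if absent
    op, *children = node
    weights = [score(child, tf, or_combine) for child in children]
    if op == "AND":
        return min(weights)            # minimum of argument weights
    if op == "OR":
        return or_combine(weights)     # maximum (or sum) of argument weights
    raise ValueError(f"unknown operator: {op}")


def rank(query, docs, or_combine=max):
    """(doc_id, score) pairs for documents that match, best first."""
    scores = {doc_id: score(query, Counter(text.lower().split()), or_combine)
              for doc_id, text in docs.items()}
    matches = [(d, s) for d, s in scores.items() if s > 0]
    return sorted(matches, key=lambda pair: pair[1], reverse=True)


docs = {
    "d1": "machine learning and more machine learning",
    "d2": "neural networks for machine learning",
    "d3": "decision tree learning",
}
query = ("OR", ("AND", "machine", "learning"), ("AND", "neural", "networks"))

print(rank(query, docs))       # [('d1', 2), ('d2', 1)]
print(rank(query, docs, sum))  # [('d1', 2), ('d2', 2)]
```

`d3` contains “learning” but not “machine”, so its AND branch scores 0 and it is excluded, exactly as in the unranked model; ranking only orders the documents that already match.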

Exact Match Retrieval: Ranked Boolean
Advantages:
• All of the advantages of the unranked Boolean model
  – Very efficient, predictable, easy to explain, structured queries, works well when searchers know exactly what is wanted
• Result set is ordered by how redundantly a document satisfies a query
  – Usually enables a person to find relevant documents more quickly
• Other term weighting methods can be used, too
  – Example: tf, tf.idf, …

Exact Match Retrieval: Ranked Boolean
Disadvantages:
• It’s still an Exact-Match model
  – Good Boolean queries are hard to create
  – Difficulty increases with size of collection
• Precision and recall usually have strong inverse correlation
• Predictability of results causes people to overestimate recall
  – The returned documents match expectations…
    …so it is easy to forget that many relevant documents are missed
  – Documents that are “close” are not retrieved

Are Boolean Retrieval Models Still Relevant?
• Many people prefer Boolean
  – Professional searchers (e.g., librarians, paralegals)
  – Some Web surfers (e.g., “Advanced Search” feature)
  – About 80% of WESTLAW & Dialog searches are Boolean
  – What do they like? Control, predictability, understandability
• Boolean and free-text queries find different documents
• Solution: Retrieval models that support free-text and Boolean queries
  – Recall that almost any retrieval model can be Exact Match
  – Extended Boolean (vector space) retrieval model
  – Bayesian inference networks

Retrieval Model #3: Vector Space Model
Retrieval Model:
• Any text object can be represented by a term vector
  – Examples: Documents, queries, sentences, …
• Similarity is determined by distance in a vector space
  – Example: The cosine of the angle between the vectors
• The SMART system:
  – Developed at Cornell University, 1960-1999
  – Still used widely

How Different Retrieval Models View Ad-hoc Retrieval
Boolean
• Query: A set of first-order logic (FOL) conditions a document must satisfy
• Retrieval: Deductive inference
Vector space
• Query: A short document
• Retrieval: Finding similar objects

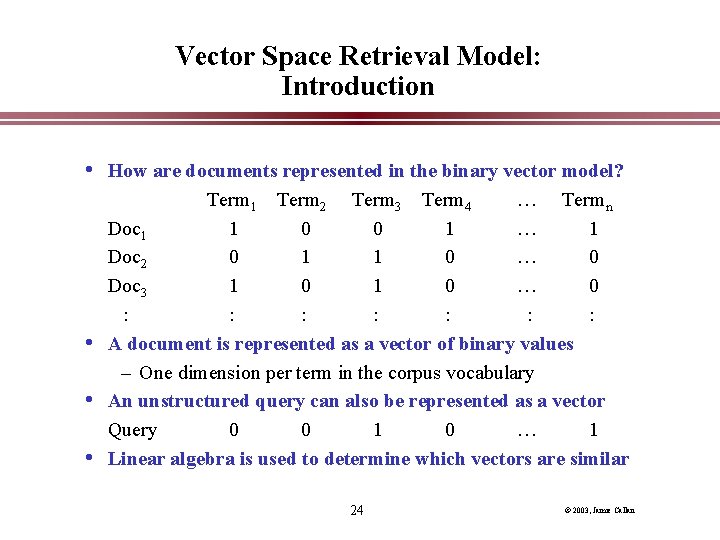

Vector Space Retrieval Model: Introduction
• How are documents represented in the binary vector model?

| | Term 1 | Term 2 | Term 3 | Term 4 | … | Term n |
|---|---|---|---|---|---|---|
| Doc 1 | 1 | 0 | 0 | 1 | … | 1 |
| Doc 2 | 0 | 1 | 1 | 0 | … | 0 |
| Doc 3 | 1 | 0 | | | … | 0 |
| ⋮ | | | | | | |

• A document is represented as a vector of binary values
  – One dimension per term in the corpus vocabulary
• An unstructured query can also be represented as a vector

| | Term 1 | Term 2 | Term 3 | Term 4 | … | Term n |
|---|---|---|---|---|---|---|
| Query | 0 | 0 | 1 | 0 | … | 1 |

• Linear algebra is used to determine which vectors are similar
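A sketch of the binary representation, assuming whitespace tokenization and a vocabulary built from a tiny hypothetical corpus (one dimension per distinct term, in sorted order). For binary vectors the inner product simply counts how many query terms a document contains; query terms outside the vocabulary are dropped.

```python
def vocabulary(texts):
    """Sorted list of distinct lowercased terms: one dimension per term."""
    return sorted({term for text in texts for term in text.lower().split()})


def binary_vector(text, vocab):
    """1 if the vocabulary term occurs in the text, else 0."""
    present = set(text.lower().split())
    return [1 if term in present else 0 for term in vocab]


def inner_product(x, y):
    return sum(a * b for a, b in zip(x, y))


docs = {
    "Doc 1": "apple pie recipe",
    "Doc 2": "apple computer hardware",
    "Doc 3": "cherry pie",
}
vocab = vocabulary(docs.values())
query = binary_vector("apple pie", vocab)

print(vocab)  # ['apple', 'cherry', 'computer', 'hardware', 'pie', 'recipe']
print("Query", query)
for name, text in docs.items():
    vec = binary_vector(text, vocab)
    print(name, vec, inner_product(query, vec))
```

Doc 1 shares both query terms and scores 2; Doc 2 and Doc 3 share one term each and tie at 1, which shows how coarse binary weights are.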

Vector Space Representation: Linear Algebra Review
• Formally, a vector space is defined by a set of linearly independent basis vectors.
• Basis vectors:
  – correspond to the dimensions or directions in the vector space;
  – determine what can be described in the vector space; and
  – must be orthogonal (and therefore linearly independent), i.e., a value along one dimension implies nothing about a value along another.
[Figure: basis vectors for 2 dimensions and for 3 dimensions]

Vector Space Representation
What should be the basis vectors for information retrieval?
• “Basic” concepts:
  – Difficult to determine (Philosophy? Cognitive science?)
  – Orthogonal (by definition)
  – A relatively static vector space (“there are no new ideas”)
• Terms (Words, Word stems):
  – Easy to determine
  – Not really orthogonal (orthogonal enough?)
  – A constantly growing vector space
    » new vocabulary creates new dimensions

Vector Space Representation: Vector Coefficients
• The coefficients (vector elements, term weights) represent term presence, importance, or “representativeness”
• The vector space model does not specify how to set term weights
• Some common elements:
  – Document term weight: Importance of the term in this document
  – Collection term weight: Importance of the term in this collection
  – Length normalization: Compensate for documents of different lengths
• Naming convention for term weight functions: DCL (one letter each for the document term weight, collection term weight, and length normalization)
  – First triple is document term weights, second triple is query term weights
  – n = none (no weighting on that factor)
  – Example: lnc.ltc

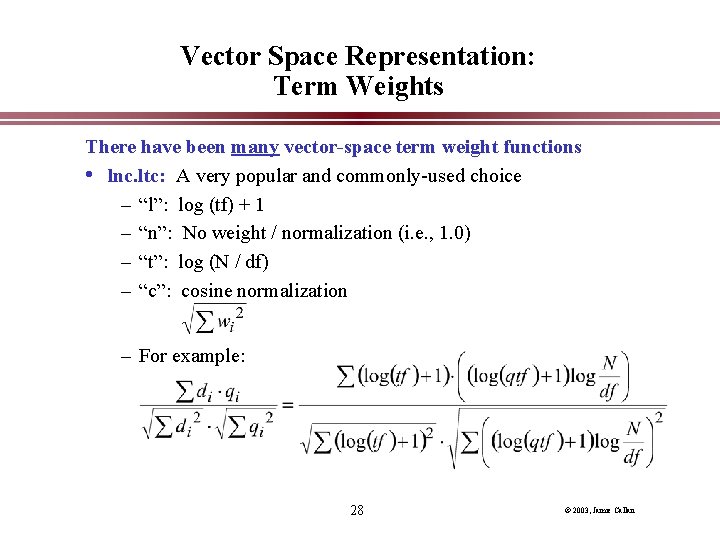

Vector Space Representation: Term Weights
There have been many vector-space term weight functions
• lnc.ltc: A very popular and commonly used choice
  – “l”: log(tf) + 1
  – “n”: No weight / normalization (i.e., 1.0)
  – “t”: log(N / df)
  – “c”: cosine normalization
  – For example:

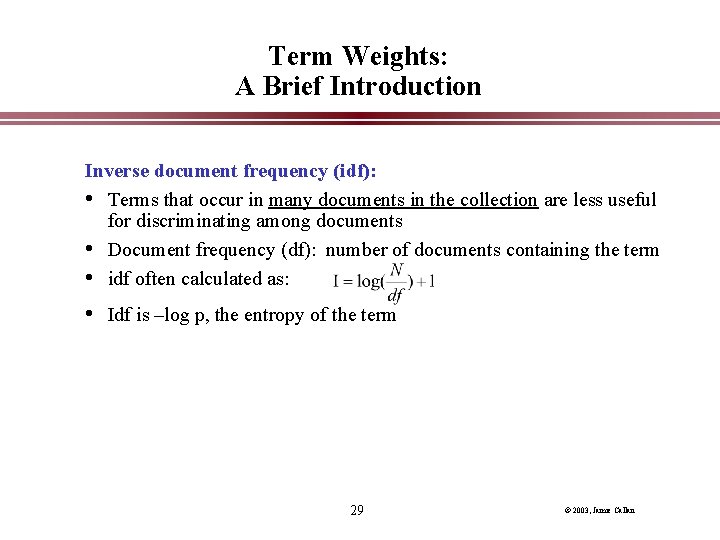
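A sketch of lnc.ltc scoring that follows the letter definitions above, assuming natural logarithms, whitespace tokenization, and a hypothetical three-document collection; the function names are illustrative only. Both vectors are scaled to unit length by the “c” step, so the score is their cosine.

```python
import math
from collections import Counter


def cosine_normalize(weights):
    """"c": divide every weight by the vector's Euclidean length."""
    norm = math.sqrt(sum(w * w for w in weights.values()))
    return {t: w / norm for t, w in weights.items()} if norm > 0 else {}


def lnc(doc_tf):
    # l: 1 + log(tf); n: no collection weight; c: cosine normalization
    return cosine_normalize({t: 1 + math.log(tf) for t, tf in doc_tf.items()})


def ltc(query_tf, df, n_docs):
    # l: 1 + log(tf); t: log(N / df); c: cosine normalization
    # Query terms that occur in no document are skipped.
    return cosine_normalize({t: (1 + math.log(tf)) * math.log(n_docs / df[t])
                             for t, tf in query_tf.items() if df.get(t, 0) > 0})


def lnc_ltc_score(doc_tf, query_tf, df, n_docs):
    d = lnc(doc_tf)
    q = ltc(query_tf, df, n_docs)
    return sum(w * d.get(t, 0.0) for t, w in q.items())


docs = [
    "information retrieval retrieval models",
    "boolean retrieval",
    "vector space models",
]
doc_tfs = [Counter(text.split()) for text in docs]
df = Counter(term for tf in doc_tfs for term in tf)  # document frequency
query_tf = Counter("vector models".split())

scores = [lnc_ltc_score(tf, query_tf, df, len(docs)) for tf in doc_tfs]
print([round(s, 3) for s in scores])
```

The third document matches both query terms and ranks first; the first matches only “models”, whose idf is low because it appears in two of the three documents.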

Term Weights: A Brief Introduction
Inverse document frequency (idf):
• Terms that occur in many documents in the collection are less useful for discriminating among documents
• Document frequency (df): number of documents containing the term
• idf is often calculated as $idf = \log(N / df)$, where $N$ is the number of documents in the collection
• Equivalently, idf is $-\log p$ with $p = df / N$, the information content (self-information) of the term

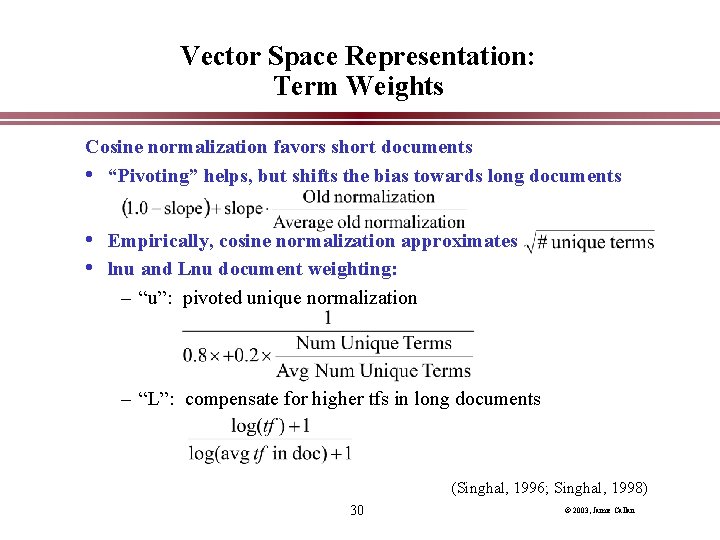
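A small sketch, assuming the common form idf = log(N / df) (the “t” factor of lnc.ltc) with natural logarithms and a hypothetical three-document collection. A term that appears in every document gets idf 0, and rarer terms get larger weights.

```python
import math
from collections import Counter

docs = ["the cat sat", "the dog sat", "the cat ran"]
n_docs = len(docs)

# df: number of documents containing each term (each term counted once per doc)
df = Counter(term for text in docs for term in set(text.split()))

# idf = log(N / df), which equals -log(p) with p = df / N
idf = {term: math.log(n_docs / count) for term, count in df.items()}

for term in sorted(idf, key=idf.get, reverse=True):
    print(f"{term:4s} df={df[term]} idf={idf[term]:.3f}")
```

“the” occurs in all three documents, so it cannot discriminate among them and its idf is exactly 0; “dog” and “ran” occur once and get the largest weight, log 3.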

Vector Space Representation: Term Weights
• Cosine normalization favors short documents
• “Pivoting” helps, but shifts the bias towards long documents
• Empirically, cosine normalization approximates
• lnu and Lnu document weighting:
  – “u”: pivoted unique normalization
  – “L”: compensate for higher tfs in long documents
(Singhal, 1996; Singhal, 1998)

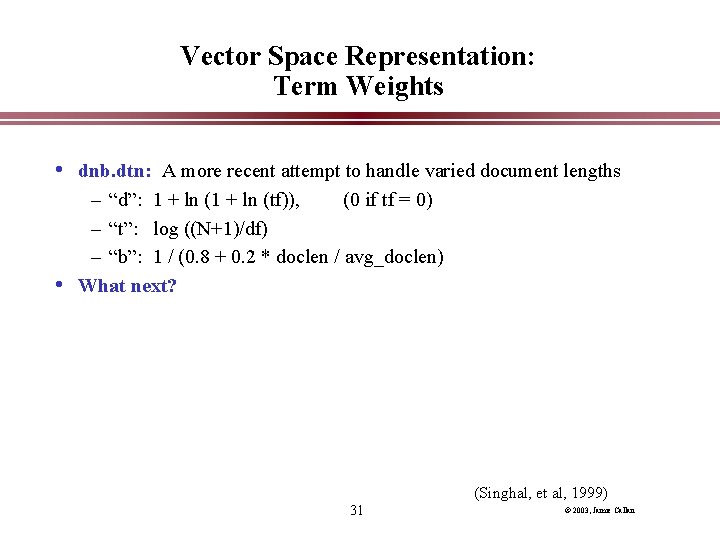
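A sketch of Lnu document weights, assuming the forms commonly cited from Singhal, Buckley & Mitra (1996); the slide's own formula is in the figure and may differ in detail. Assumed here: “L” = (1 + log tf) / (1 + log(average tf in the document)); “u” divides by (1 - slope) · pivot + slope · (number of unique terms), with slope 0.2 and the pivot set to the average number of unique terms per document. The function and documents are hypothetical.

```python
import math
from collections import Counter


def lnu_weights(doc_tf, pivot, slope=0.2, use_L=True):
    """Lnu (use_L=True) or lnu (use_L=False) weights for a non-empty document.

    Assumed forms:
      L: (1 + log tf) / (1 + log(average tf in the document))
      l: 1 + log tf
      u: divide by (1 - slope) * pivot + slope * (number of unique terms)
    """
    n_unique = len(doc_tf)
    avg_tf = sum(doc_tf.values()) / n_unique
    u_norm = (1 - slope) * pivot + slope * n_unique
    weights = {}
    for term, tf in doc_tf.items():
        w = 1 + math.log(tf)
        if use_L:
            w /= 1 + math.log(avg_tf)  # damp the higher tfs of long documents
        weights[term] = w / u_norm
    return weights


short_doc = Counter("pivoted length normalization".split())
long_doc = Counter(("pivoted length normalization " * 3
                    + "for long documents with many unique terms").split())
pivot = (len(short_doc) + len(long_doc)) / 2  # average unique terms per doc

print({t: round(w, 3) for t, w in lnu_weights(short_doc, pivot).items()})
print({t: round(w, 3) for t, w in lnu_weights(long_doc, pivot).items()})
```

The short document has fewer unique terms than the pivot, so its normalizer is smaller and its weights rise; the long document is penalized by both the larger normalizer and the “L” division by its average tf.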

Vector Space Representation: Term Weights
• dnb.dtn: A more recent attempt to handle varied document lengths
  – “d”: 1 + ln(1 + ln(tf)) (0 if tf = 0)
  – “t”: log((N+1)/df)
  – “b”: 1 / (0.8 + 0.2 * doclen / avg_doclen)
• What next?
(Singhal, et al., 1999)

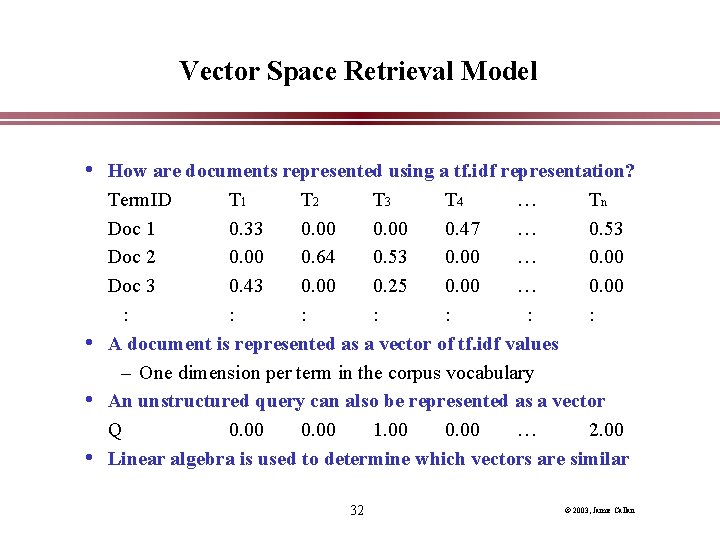
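A sketch of dnb.dtn scoring built from the three letter definitions above, assuming natural logarithms, document length measured in tokens, and a hypothetical toy collection; names are illustrative. The doubly logarithmic “d” grows very slowly with tf, and “b” scales a document of average length by exactly 1.

```python
import math
from collections import Counter


def d_tf(tf):
    """"d": 1 + ln(1 + ln(tf)), and 0 when tf = 0."""
    return 1 + math.log(1 + math.log(tf)) if tf > 0 else 0.0


def dnb(doc_tf, avg_doclen):
    """Document weights: d, no collection weight (n), length factor b."""
    doclen = sum(doc_tf.values())  # assumption: length in tokens
    b = 1 / (0.8 + 0.2 * doclen / avg_doclen)
    return {t: d_tf(tf) * b for t, tf in doc_tf.items()}


def dtn(query_tf, df, n_docs):
    """Query weights: d, t = log((N + 1) / df), no normalization (n)."""
    return {t: d_tf(tf) * math.log((n_docs + 1) / df[t])
            for t, tf in query_tf.items() if df.get(t, 0) > 0}


def dnb_dtn_score(doc_tf, query_tf, df, n_docs, avg_doclen):
    dw = dnb(doc_tf, avg_doclen)
    return sum(w * dw.get(t, 0.0) for t, w in dtn(query_tf, df, n_docs).items())


docs = [
    "retrieval retrieval retrieval models",
    "vector models",
    "boolean retrieval models for large collections",
]
doc_tfs = [Counter(text.split()) for text in docs]
df = Counter(term for tf in doc_tfs for term in tf)
avg_doclen = sum(sum(tf.values()) for tf in doc_tfs) / len(doc_tfs)
query_tf = Counter("retrieval models".split())

for tf in (1, 2, 10, 100):
    print(tf, round(d_tf(tf), 3))
scores = [dnb_dtn_score(tf, query_tf, df, len(docs), avg_doclen) for tf in doc_tfs]
print([round(s, 3) for s in scores])
```

The N + 1 in “t” keeps the idf positive even for “models”, which occurs in every document here, so such terms still contribute a little.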

Vector Space Retrieval Model
• How are documents represented using a tf.idf representation?

| Term ID | T1 | T2 | T3 | T4 | … | Tn |
|---|---|---|---|---|---|---|
| Doc 1 | 0.33 | 0.00 | 0.47 | | … | 0.53 |
| Doc 2 | 0.00 | 0.64 | 0.53 | 0.00 | … | 0.00 |
| Doc 3 | 0.43 | 0.00 | 0.25 | 0.00 | … | 0.00 |
| ⋮ | | | | | | |

• A document is represented as a vector of tf.idf values
  – One dimension per term in the corpus vocabulary
• An unstructured query can also be represented as a vector

| Term ID | T1 | T2 | T3 | T4 | … | Tn |
|---|---|---|---|---|---|---|
| Q | 0.00 | 1.00 | 0.00 | | … | 2.00 |

• Linear algebra is used to determine which vectors are similar

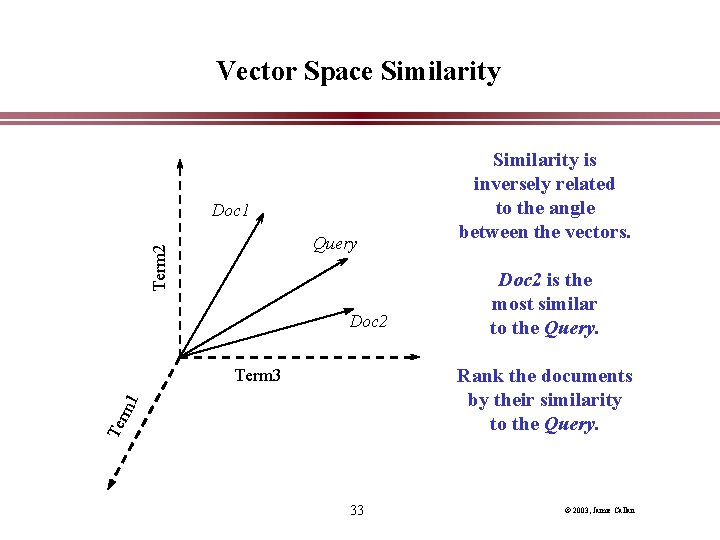

Vector Space Similarity
[Figure: Doc 1, Doc 2, and Query drawn as vectors in a space with axes Term 1, Term 2, and Term 3]
• Similarity is inversely related to the angle between the vectors.
• Doc 2 is the most similar to the Query.
• Rank the documents by their similarity to the Query.

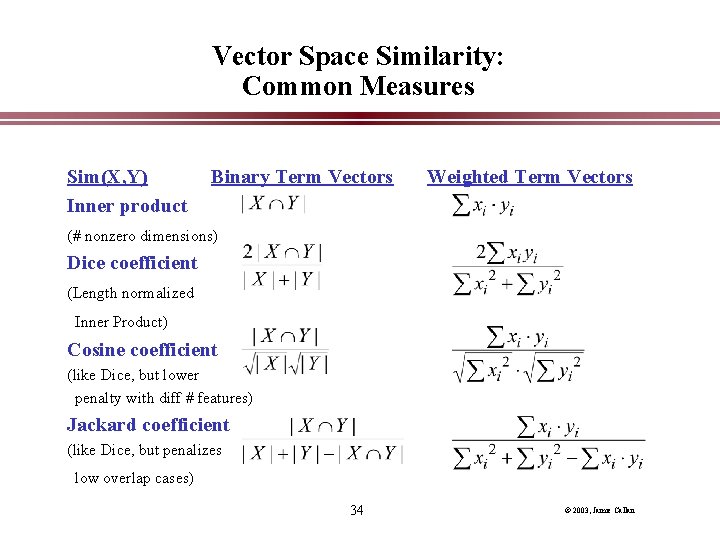
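A sketch of ranking by angle, using hypothetical coordinates along (Term 1, Term 2, Term 3) chosen so that, as in the figure, Doc 2 lies closest to the Query. A smaller angle means a larger cosine, so sorting by cosine in descending order ranks the documents.

```python
import math


def cosine(x, y):
    """Cosine of the angle between x and y (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(x, y))
    norm_x = math.sqrt(sum(a * a for a in x))
    norm_y = math.sqrt(sum(b * b for b in y))
    return dot / (norm_x * norm_y) if norm_x and norm_y else 0.0


# Hypothetical coordinates along (Term 1, Term 2, Term 3)
query = [1.0, 1.0, 0.0]
docs = {"Doc 1": [0.0, 1.0, 1.0], "Doc 2": [1.0, 0.8, 0.2]}

ranking = sorted(docs, key=lambda name: cosine(query, docs[name]), reverse=True)
for name in ranking:
    c = cosine(query, docs[name])
    angle = math.degrees(math.acos(max(-1.0, min(1.0, c))))  # clamp rounding error
    print(f"{name}: cosine={c:.3f}, angle={angle:.1f} degrees")
```

Doc 1 meets the query at a 60 degree angle (cosine 0.5), while Doc 2 is within about 11 degrees, so Doc 2 is ranked first.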

Vector Space Similarity: Common Measures
Sim(X, Y), for binary term vectors and for weighted term vectors:
• Inner product (for binary vectors: the number of nonzero dimensions shared by X and Y)
• Dice coefficient (length-normalized inner product)
• Cosine coefficient (like Dice, but lower penalty when the vectors have different numbers of features)
• Jaccard coefficient (like Dice, but penalizes low-overlap cases)

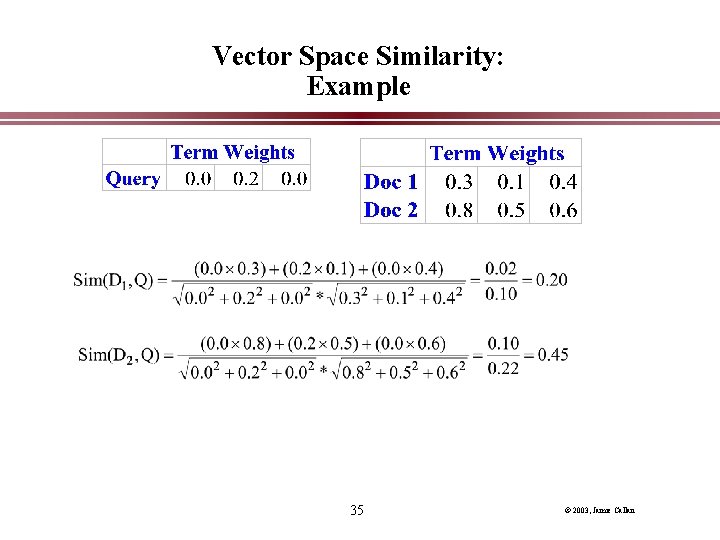
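A sketch of the four measures for weighted vectors, assuming the forms commonly given in IR textbooks (inner product x·y; Dice 2x·y / (x·x + y·y); cosine x·y / sqrt(x·x · y·y); Jaccard x·y / (x·x + y·y − x·y)); the slide's own formulas are in the figure and may differ in detail. On binary vectors x·x = |X| and x·y = |X ∩ Y|, so these reduce to the set versions. The sketch assumes neither vector is all zeros.

```python
import math


def _dot(x, y):
    return sum(a * b for a, b in zip(x, y))


def inner_product(x, y):
    return _dot(x, y)


def dice(x, y):
    return 2 * _dot(x, y) / (_dot(x, x) + _dot(y, y))


def cosine(x, y):
    return _dot(x, y) / math.sqrt(_dot(x, x) * _dot(y, y))


def jaccard(x, y):
    overlap = _dot(x, y)
    return overlap / (_dot(x, x) + _dot(y, y) - overlap)


# Binary example: X has 4 terms, Y has 2, and they share 2
x = [1, 1, 1, 1]
y = [1, 1, 0, 0]
for measure in (inner_product, dice, cosine, jaccard):
    print(f"{measure.__name__:13s} {measure(x, y):.3f}")
```

With this pair, Dice gives 0.667 and cosine 0.707 (a lower penalty for the difference in size), while Jaccard gives 0.5 (harsher on the low overlap), matching the parenthetical descriptions on the slide.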

Vector Space Similarity: Example

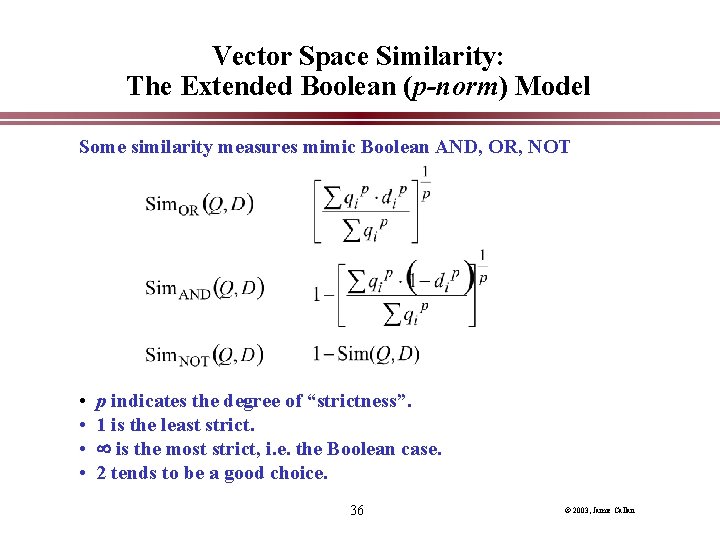

Vector Space Similarity: The Extended Boolean (p-norm) Model
• Some similarity measures mimic Boolean AND, OR, NOT
• p indicates the degree of “strictness”
  – 1 is the least strict
  – ∞ is the most strict, i.e., the Boolean case
  – 2 tends to be a good choice

Vector Space Similarity: The Extended Boolean (p-norm) Model
Characteristics:
• Full Boolean queries
  – (White AND House) OR (Bill AND Clinton AND (NOT Hillary))
• Ranked retrieval
• Handles tf.idf weighting
• Effective
• Boolean operators are not used often with the vector space model
  – It is not clear why
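A minimal sketch of p-norm operators, assuming the unweighted forms usually attributed to Fox's extended Boolean model: for term weights a_1 … a_n in [0, 1], OR_p = ((a_1^p + … + a_n^p) / n)^(1/p), AND_p = 1 - (((1 - a_1)^p + … + (1 - a_n)^p) / n)^(1/p), and NOT a = 1 - a. At p = 1 both reduce to the mean (least strict); as p grows they approach max and min, the strict Boolean-like behavior. The query tuples and document weights are hypothetical.

```python
import math


def p_or(values, p):
    """p-norm OR over term weights in [0, 1]."""
    if math.isinf(p):
        return max(values)  # limit as p -> infinity
    return (sum(v ** p for v in values) / len(values)) ** (1 / p)


def p_and(values, p):
    """p-norm AND over term weights in [0, 1]."""
    if math.isinf(p):
        return min(values)  # limit as p -> infinity
    return 1 - (sum((1 - v) ** p for v in values) / len(values)) ** (1 / p)


def evaluate(node, weights, p):
    """node: term (str), ("AND" | "OR", child, ...), or ("NOT", child)."""
    if isinstance(node, str):
        return weights.get(node, 0.0)
    op, *args = node
    if op == "NOT":
        return 1.0 - evaluate(args[0], weights, p)
    values = [evaluate(arg, weights, p) for arg in args]
    if op == "AND":
        return p_and(values, p)
    if op == "OR":
        return p_or(values, p)
    raise ValueError(f"unknown operator: {op}")


# (White AND House) OR (Bill AND Clinton AND (NOT Hillary))
query = ("OR", ("AND", "white", "house"),
               ("AND", "bill", "clinton", ("NOT", "hillary")))
# Hypothetical normalized term weights for two documents
doc_a = {"white": 0.8, "house": 0.7}
doc_b = {"white": 0.5, "bill": 0.9, "clinton": 0.9, "hillary": 0.6}

for p in (1, 2, math.inf):
    print(p, round(evaluate(query, doc_a, p), 3), round(evaluate(query, doc_b, p), 3))
```

At p = ∞ doc_b's first clause scores 0 because “house” is missing, exactly as a strict AND would; at p = 1 and 2 the partial match still earns credit, which is what makes the results rankable.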

Vector Space Retrieval Model: Summary
• Standard vector space
  – each dimension corresponds to a term in the vocabulary
  – vector elements are real-valued, reflecting term importance
  – any vector (document, query, …) can be compared to any other
  – cosine correlation is the similarity metric used most often
• Ranked Boolean
  – vector elements are binary
• Extended Boolean
  – multiple nested similarity measures

Vector Space Retrieval Model: Disadvantages
• Assumes that terms are independent of one another
• Lack of justification for some vector operations
  – e.g., choice of similarity function
  – e.g., choice of term weights
• Barely a retrieval model
  – Doesn’t explicitly model relevance, a person’s information need, language models, etc.
• Assumes a query and a document can be treated the same
• Lack of a cognitive (or other) justification

Vector Space Retrieval Model: Advantages
• Simplicity: Easy to implement
• Effectiveness: It works very well
• Ability to incorporate any kind of term weights
• Can measure similarities between almost anything:
  – documents and queries, documents and documents, queries and queries, sentences and sentences, etc.
• Used in a variety of IR tasks:
  – Retrieval, classification, summarization, SDI, visualization, …
• The vector space model is the most popular retrieval model (today)

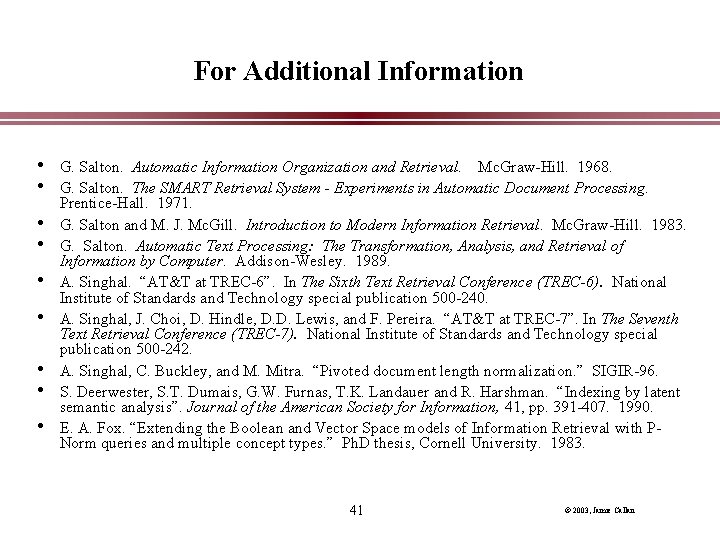

For Additional Information
• G. Salton. Automatic Information Organization and Retrieval. McGraw-Hill. 1968.
• G. Salton. The SMART Retrieval System - Experiments in Automatic Document Processing. Prentice-Hall. 1971.
• G. Salton and M. J. McGill. Introduction to Modern Information Retrieval. McGraw-Hill. 1983.
• G. Salton. Automatic Text Processing: The Transformation, Analysis, and Retrieval of Information by Computer. Addison-Wesley. 1989.
• A. Singhal. “AT&T at TREC-6”. In The Sixth Text Retrieval Conference (TREC-6). National Institute of Standards and Technology special publication 500-240.
• A. Singhal, J. Choi, D. Hindle, D. D. Lewis, and F. Pereira. “AT&T at TREC-7”. In The Seventh Text Retrieval Conference (TREC-7). National Institute of Standards and Technology special publication 500-242.
• A. Singhal, C. Buckley, and M. Mitra. “Pivoted document length normalization.” SIGIR-96.
• S. Deerwester, S. T. Dumais, G. W. Furnas, T. K. Landauer, and R. Harshman. “Indexing by latent semantic analysis.” Journal of the American Society for Information Science, 41, pp. 391-407. 1990.
• E. A. Fox. “Extending the Boolean and Vector Space models of Information Retrieval with P-Norm queries and multiple concept types.” Ph.D. thesis, Cornell University. 1983.
