Modeling Diversity in Information Retrieval, ChengXiang Zhai


Modeling Diversity in Information Retrieval

ChengXiang (“Cheng”) Zhai
Department of Computer Science, Graduate School of Library & Information Science, Institute for Genomic Biology, Department of Statistics
University of Illinois, Urbana-Champaign

Joint work with John Lafferty, William Cohen, and Xuehua Shen

ACM SIGIR 2009 Workshop on Redundancy, Diversity, and Interdependent Document Relevance, July 23, 2009, Boston, MA

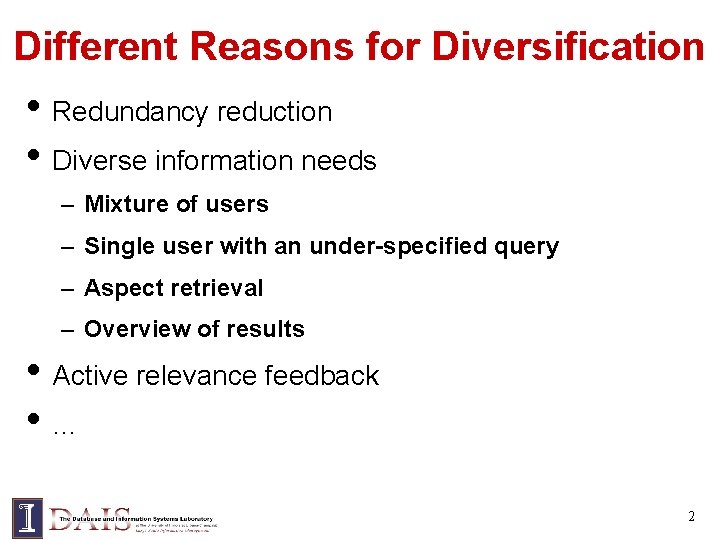

Different Reasons for Diversification

- Redundancy reduction
- Diverse information needs
  - Mixture of users
  - Single user with an under-specified query
  - Aspect retrieval
  - Overview of results
- Active relevance feedback
- …

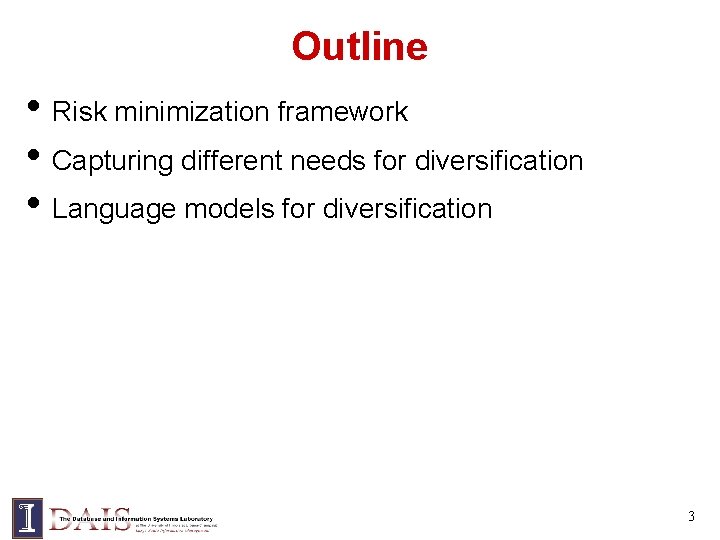

Outline

- Risk minimization framework
- Capturing different needs for diversification
- Language models for diversification

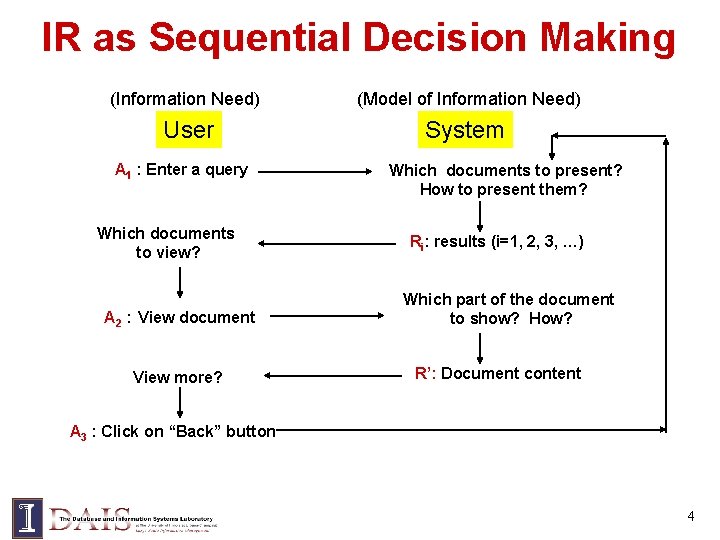

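IR as Sequential Decision Making

The user (who has the information need) and the system (which maintains a model of that information need) take turns:

- User action $A_1$: enter a query. System decisions: which documents to present, and how to present them? Response $R_i$: results ($i = 1, 2, 3, \dots$).
- User decision: which documents to view? User action $A_2$: view a document. System decisions: which part of the document to show, and how? Response $R'$: document content.
- User decision: view more? User action $A_3$: click on the “Back” button.
- …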

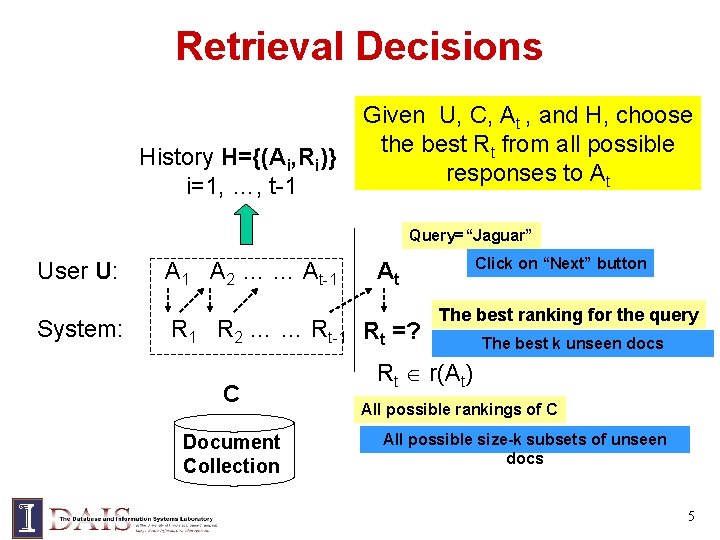

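Retrieval Decisions

Given the user $U$, the document collection $C$, the current user action $A_t$, and the interaction history $H = \{(A_i, R_i)\}_{i=1, \dots, t-1}$, choose the best response $R_t \in r(A_t)$ from all possible responses to $A_t$.

Example (query = “Jaguar”): the user has taken actions $A_1, A_2, \dots, A_{t-1}$ and the system has returned $R_1, R_2, \dots, R_{t-1}$; now $R_t = ?$

- If $A_t$ is the query, $r(A_t)$ is all possible rankings of $C$, and $R_t$ should be the best ranking for the query.
- If $A_t$ is a click on the “Next” button, $r(A_t)$ is all possible size-$k$ subsets of unseen docs, and $R_t$ should be the best $k$ unseen docs.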

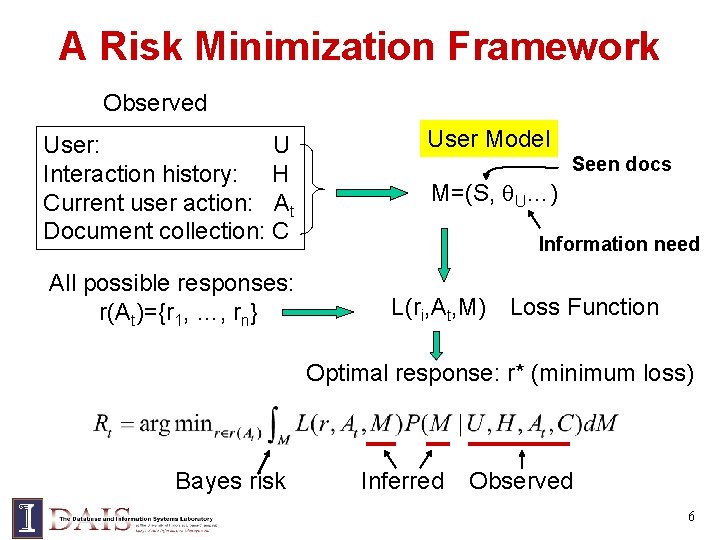

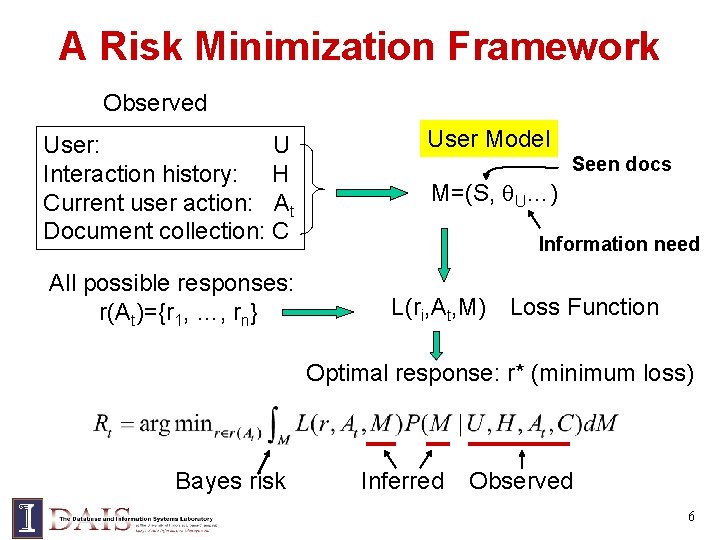

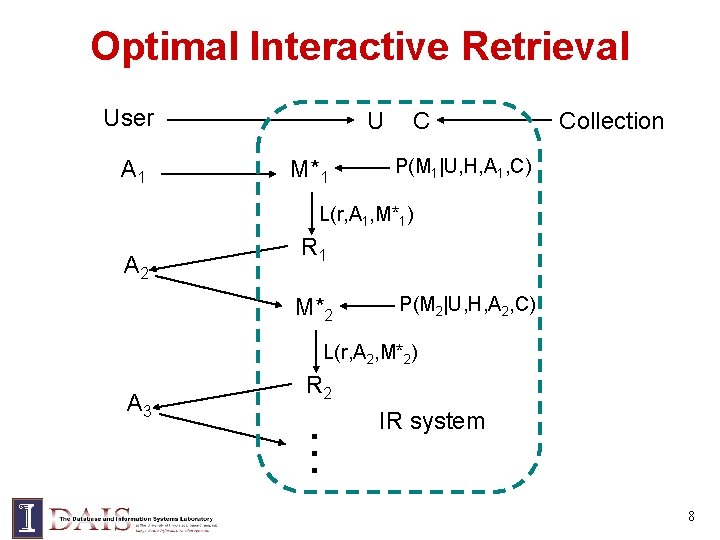

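A Risk Minimization Framework

- Observed: user $U$, interaction history $H$, current user action $A_t$, document collection $C$, and all possible responses $r(A_t) = \{r_1, \dots, r_n\}$.
- Inferred: the user model $M = (S, \theta_U, \dots)$, where $S$ is the set of seen docs and $\theta_U$ is the information need.
- Loss function: $L(r_i, A_t, M)$.

The optimal response $r^*$ is the one with minimum loss in expectation, i.e. the one that minimizes the Bayes risk:

$$r^* = \arg\min_{r \in r(A_t)} \int_M L(r, A_t, M)\, P(M \mid U, H, A_t, C)\, dM$$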

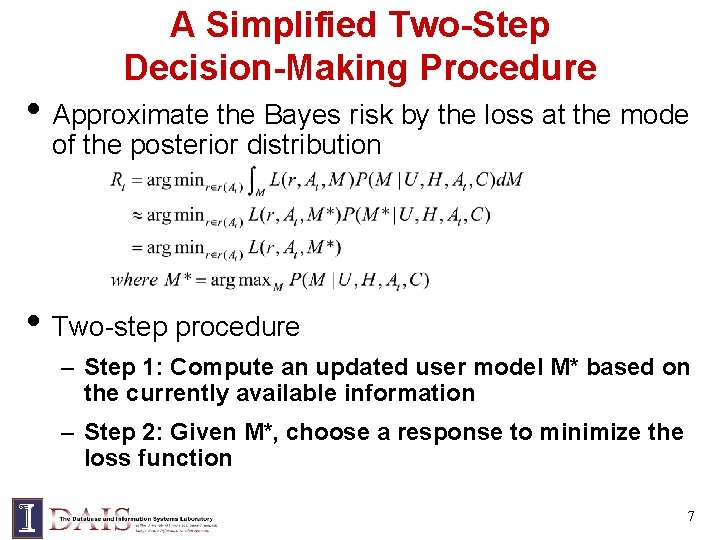

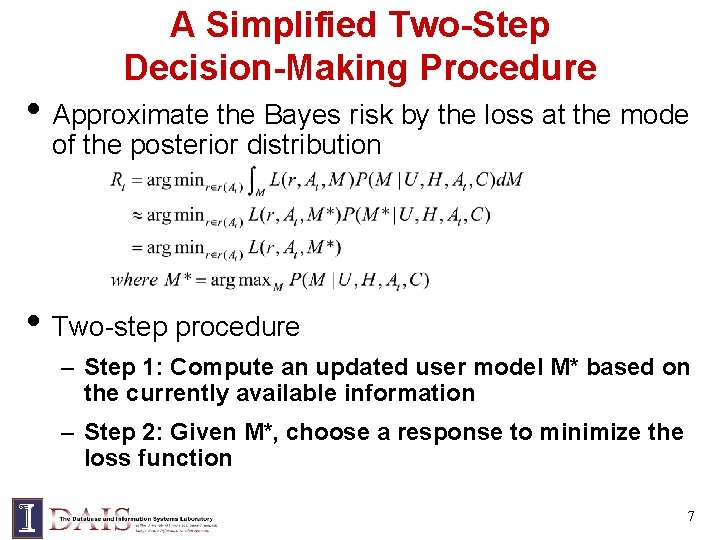

A Simplified Two-Step Decision-Making Procedure

- Approximate the Bayes risk by the loss at the mode of the posterior distribution.
- Two-step procedure:
  - Step 1: compute an updated user model $M^*$ based on the currently available information.
  - Step 2: given $M^*$, choose a response that minimizes the loss function.

$$M^* = \arg\max_M P(M \mid U, H, A_t, C), \qquad r^* \approx \arg\min_{r \in r(A_t)} L(r, A_t, M^*)$$
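To see what the mode approximation gives up, here is a minimal Python sketch over a finite set of user models. Everything in it is hypothetical: the three senses of “Jaguar” standing in for user models, their posterior probabilities, the candidate responses, and the loss values. The action $A_t$ is held fixed, so it is dropped from the loss signature.

```python
from typing import Callable, Dict, List

# Hypothetical posterior P(M | U, H, A_t, C) over three user models (senses of "Jaguar").
posterior: Dict[str, float] = {"car": 0.45, "cat": 0.35, "os": 0.20}
# Hypothetical candidate responses r in r(A_t).
responses: List[str] = ["car-only", "cat-only", "mixed"]

def loss(r: str, m: str) -> float:
    """Hypothetical loss L(r, A_t, M) with A_t fixed: a mixed page is always partly useful."""
    if r == "mixed":
        return 0.4
    return 0.0 if r == f"{m}-only" else 1.0

def bayes_optimal(responses: List[str], posterior: Dict[str, float],
                  loss: Callable[[str, str], float]) -> str:
    """Exact rule: minimize the expected loss sum_M L(r, M) * P(M | ...)."""
    def risk(r: str) -> float:
        return sum(p * loss(r, m) for m, p in posterior.items())
    return min(responses, key=risk)

def two_step(responses: List[str], posterior: Dict[str, float],
             loss: Callable[[str, str], float]) -> str:
    """Step 1: take the posterior mode M*. Step 2: minimize L(r, M*)."""
    m_star = max(posterior, key=posterior.get)
    return min(responses, key=lambda r: loss(r, m_star))

print(bayes_optimal(responses, posterior, loss))  # mixed
print(two_step(responses, posterior, loss))       # car-only
```

With these numbers the expected losses are 0.55, 0.65, and 0.4, so the exact rule hedges with the mixed page, while the two-step shortcut commits to the single most likely sense. This is one way to see why diversification depends on the uncertainty about $M$ that the mode approximation discards.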

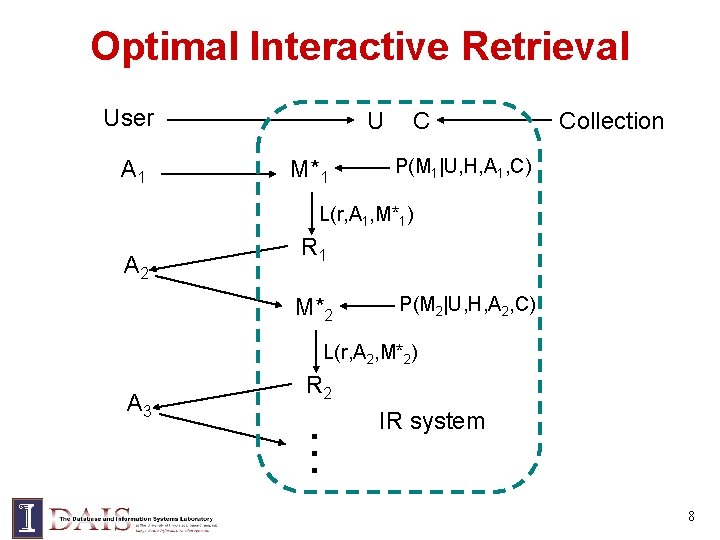

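Optimal Interactive Retrieval

The user $U$ interacts with the IR system over the collection $C$:

- User action $A_1$: the system infers $M^*_1$ from $P(M_1 \mid U, H, A_1, C)$ and returns the response $R_1$ that minimizes $L(r, A_1, M^*_1)$.
- User action $A_2$: the system infers $M^*_2$ from $P(M_2 \mid U, H, A_2, C)$ and returns $R_2$ minimizing $L(r, A_2, M^*_2)$.
- User action $A_3$: …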

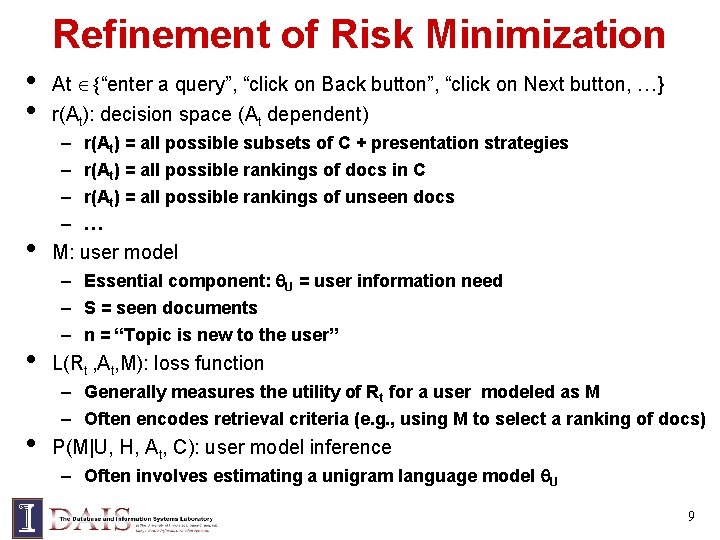

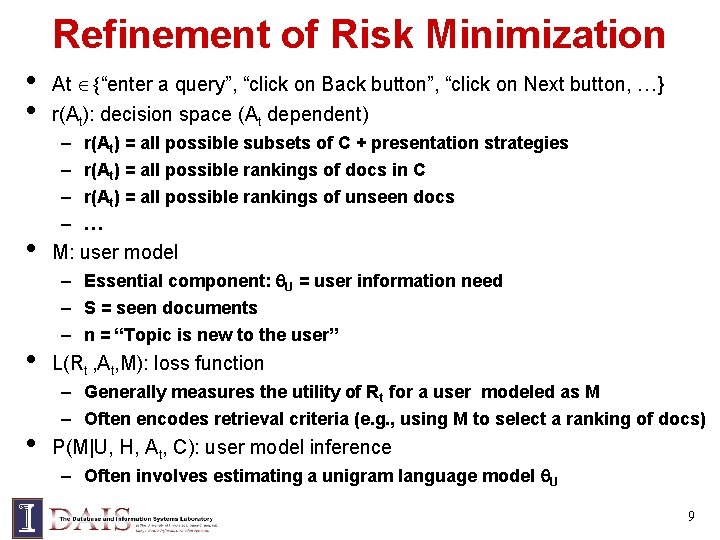

Refinement of Risk Minimization

- $A_t \in$ {“enter a query”, “click on Back button”, “click on Next button”, …}
- $r(A_t)$: the decision space (depends on $A_t$)
  - $r(A_t)$ = all possible subsets of $C$ + presentation strategies
  - $r(A_t)$ = all possible rankings of docs in $C$
  - $r(A_t)$ = all possible rankings of unseen docs
  - …
- $M$: user model
  - Essential component: $\theta_U$ = user information need
  - $S$ = seen documents
  - $n$ = “topic is new to the user”
- $L(R_t, A_t, M)$: loss function
  - Generally measures the utility of $R_t$ for a user modeled as $M$
  - Often encodes retrieval criteria (e.g., using $M$ to select a ranking of docs)
- $P(M \mid U, H, A_t, C)$: user model inference
  - Often involves estimating a unigram language model $\theta_U$

![Generative Model of Document & Query [Lafferty & Zhai 01]](https://slidetodoc.com/presentation_image_h2/663a83f1c563c4cc491d068b30e5b62e/image-10.jpg)

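Generative Model of Document & Query [Lafferty & Zhai 01]

The user $U$ generates the query $q$ through a query model $\theta_Q$, and the source $S$ generates the document $d$ through a document model $\theta_D$; relevance $R$ is modeled as depending on both. $U$ and $S$ are partially observed, the query $q$ and document $d$ are observed, and the models are inferred.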

![Risk Minimization with Language Models [Lafferty & Zhai 01, Zhai & Lafferty 06]](https://slidetodoc.com/presentation_image_h2/663a83f1c563c4cc491d068b30e5b62e/image-11.jpg)

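Risk Minimization with Language Models [Lafferty & Zhai 01, Zhai & Lafferty 06]

Given a query $q$ from user $U$ and a doc set $C$ from source $S$, each possible choice is a pair $(D_i, \pi_i)$ of a document subset and a presentation strategy, $(D_1, \pi_1), (D_2, \pi_2), \dots, (D_n, \pi_n)$, and each choice incurs a loss $L$. The optimal choice minimizes the expected loss over the inferred models $\theta$:

$$(D^*, \pi^*) = \arg\min_{D, \pi} \int_{\Theta} L(D, \pi, \theta)\, p(\theta \mid q, U, C, S)\, d\theta$$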

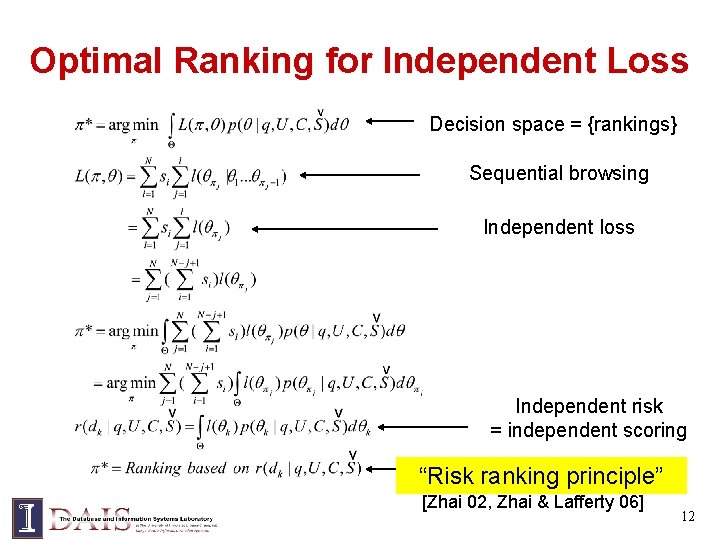

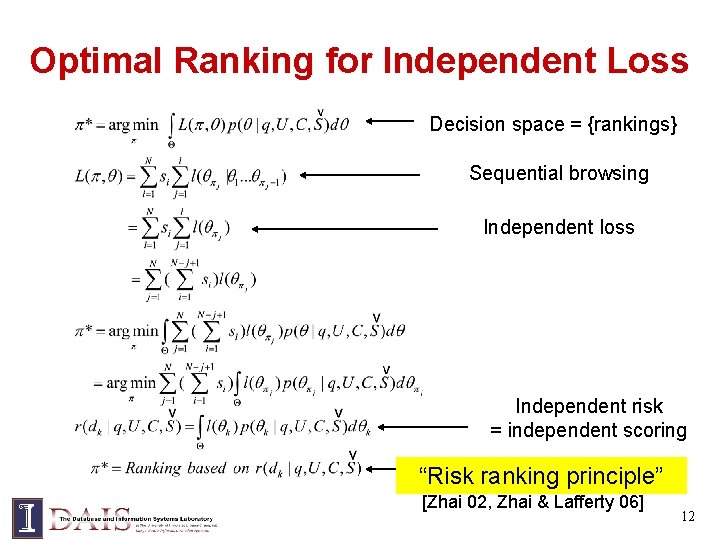

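Optimal Ranking for Independent Loss

- Decision space = {rankings}
- Sequential browsing
- Independent loss
- Independent risk = independent scoring

Together these give the “risk ranking principle” [Zhai 02, Zhai & Lafferty 06]: each document can be scored by its own expected risk, and documents are ranked in ascending order of that risk.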
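Under independent loss, ranking reduces to sorting by a per-document risk. A minimal sketch, assuming point estimates of the query and document models and a KL-divergence loss between them (the choice that leads to KL-divergence retrieval in [Lafferty & Zhai 01]). Dirichlet smoothing, the parameter `mu`, and the toy collection are assumptions made for illustration.

```python
import math
from collections import Counter
from typing import Callable, Dict, List

def dirichlet_doc_model(doc: List[str], p_coll: Dict[str, float],
                        mu: float) -> Callable[[str], float]:
    """Point estimate of theta_D with Dirichlet-prior smoothing (an assumption)."""
    counts = Counter(doc)
    n = len(doc)
    return lambda w: (counts[w] + mu * p_coll.get(w, 0.0)) / (n + mu)

def kl_risk(query_model: Dict[str, float], doc: List[str],
            p_coll: Dict[str, float], mu: float) -> float:
    """D(theta_Q || theta_D); every query word must have p_coll[w] > 0."""
    p_d = dirichlet_doc_model(doc, p_coll, mu)
    return sum(q * math.log(q / p_d(w)) for w, q in query_model.items() if q > 0)

def risk_rank(query_model: Dict[str, float], docs: Dict[str, List[str]],
              p_coll: Dict[str, float], mu: float = 2000.0) -> List[str]:
    """Score each doc independently and rank by ascending risk."""
    return sorted(docs, key=lambda d: kl_risk(query_model, docs[d], p_coll, mu))

# Hypothetical toy collection model, documents, and query model.
p_coll = {"jaguar": 0.1, "car": 0.1, "cat": 0.1, "jungle": 0.1,
          "speed": 0.1, "stock": 0.25, "market": 0.25}
docs = {"d1": ["jaguar", "car", "speed"],
        "d2": ["jaguar", "cat", "jungle"],
        "d3": ["stock", "market"]}
query = {"jaguar": 0.5, "car": 0.5}
print(risk_rank(query, docs, p_coll, mu=1.0))  # ['d1', 'd2', 'd3']
```

Because each score depends only on the query and one document, this ranking cannot reward a document for covering something the documents above it missed; the diversification methods that follow drop exactly that independence.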

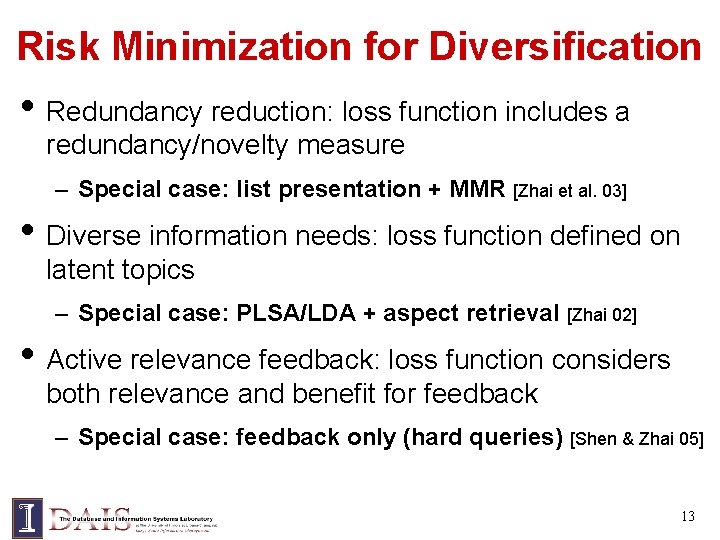

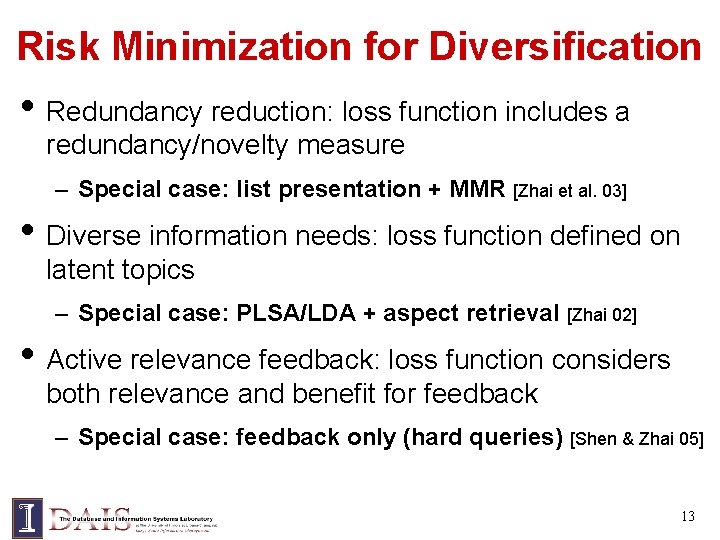

Risk Minimization for Diversification

- Redundancy reduction: the loss function includes a redundancy/novelty measure.
  - Special case: list presentation + MMR [Zhai et al. 03]
- Diverse information needs: the loss function is defined on latent topics.
  - Special case: PLSA/LDA + aspect retrieval [Zhai 02]
- Active relevance feedback: the loss function considers both relevance and benefit for feedback.
  - Special case: feedback only (hard queries) [Shen & Zhai 05]

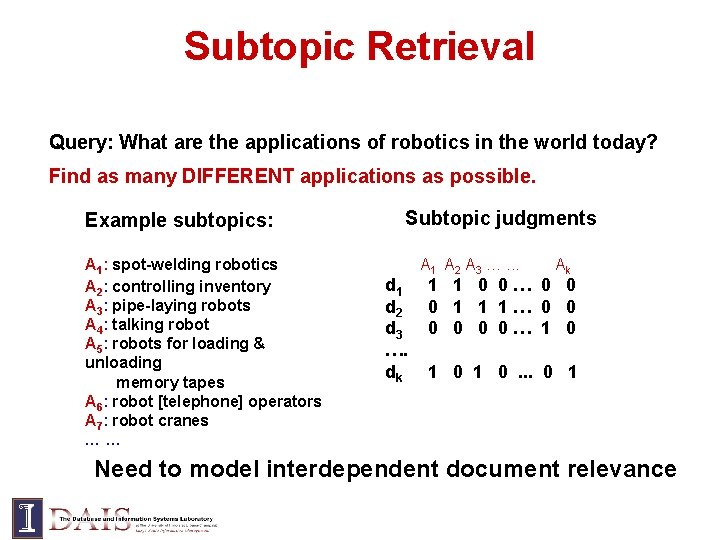

Subtopic Retrieval

Query: “What are the applications of robotics in the world today? Find as many DIFFERENT applications as possible.”

Example subtopics:

- A1: spot-welding robotics
- A2: controlling inventory
- A3: pipe-laying robots
- A4: talking robot
- A5: robots for loading & unloading memory tapes
- A6: robot [telephone] operators
- A7: robot cranes
- …

Subtopic judgments form a binary matrix with documents $d_1, d_2, d_3, \dots, d_k$ as rows and subtopics $A_1, A_2, A_3, \dots, A_k$ as columns, where 1 means the document covers the subtopic. Since the value of a document depends on which subtopics the documents ranked above it already cover, we need to model interdependent document relevance.

![Diversify = Remove Redundancy [Zhai et al. 03]](https://slidetodoc.com/presentation_image_h2/663a83f1c563c4cc491d068b30e5b62e/image-15.jpg)

Diversify = Remove Redundancy [Zhai et al. 03]

Greedy algorithm for ranking: Maximal Marginal Relevance (MMR). The cost parameters encode the “willingness to tolerate redundancy”: $C_2 < C_3$, since a redundant relevant doc is better than a non-relevant doc.
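The greedy loop itself can be sketched as follows. This is the common λ-weighted form of MMR, not the cost-based combination with $C_2$ and $C_3$ from [Zhai et al. 03]; the parameter `lam` loosely plays the role of the willingness to tolerate redundancy (a higher value penalizes redundancy less). The relevance scores, similarities, and `lam` value are hypothetical.

```python
from typing import Callable, Dict, List

def mmr_rerank(candidates: List[str], rel: Dict[str, float],
               sim: Callable[[str, str], float],
               lam: float = 0.5, k: int = 10) -> List[str]:
    """Greedy MMR: pick the doc maximizing lam*rel - (1 - lam)*max similarity to picked docs."""
    selected: List[str] = []
    pool = list(candidates)
    while pool and len(selected) < k:
        def marginal(d: str) -> float:
            redundancy = max((sim(d, s) for s in selected), default=0.0)
            return lam * rel[d] - (1 - lam) * redundancy
        best = max(pool, key=marginal)
        selected.append(best)
        pool.remove(best)
    return selected

# Hypothetical toy data: "a2" is a near-duplicate of "a".
rel = {"a": 0.9, "a2": 0.85, "b": 0.6}
pair_sim = {frozenset(("a", "a2")): 0.95}

def sim(x: str, y: str) -> float:
    return pair_sim.get(frozenset((x, y)), 0.1)

print(mmr_rerank(["a", "a2", "b"], rel, sim))  # ['a', 'b', 'a2']
```

With `lam=1.0` the redundancy term vanishes and the order falls back to pure relevance, `['a', 'a2', 'b']`.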

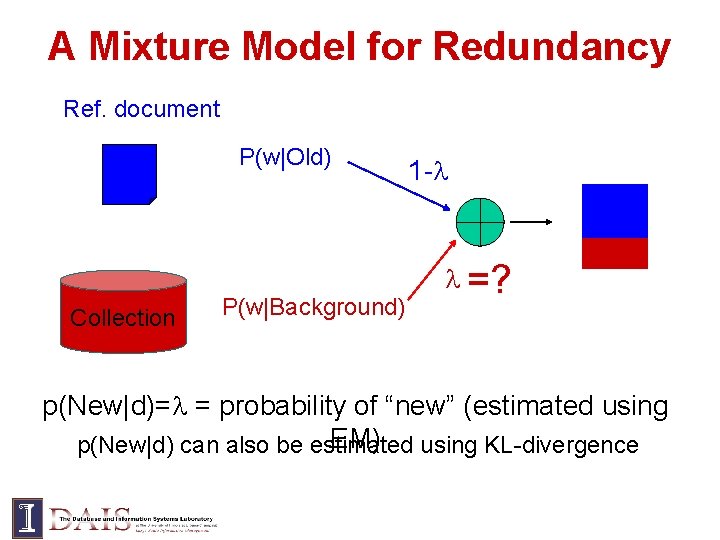

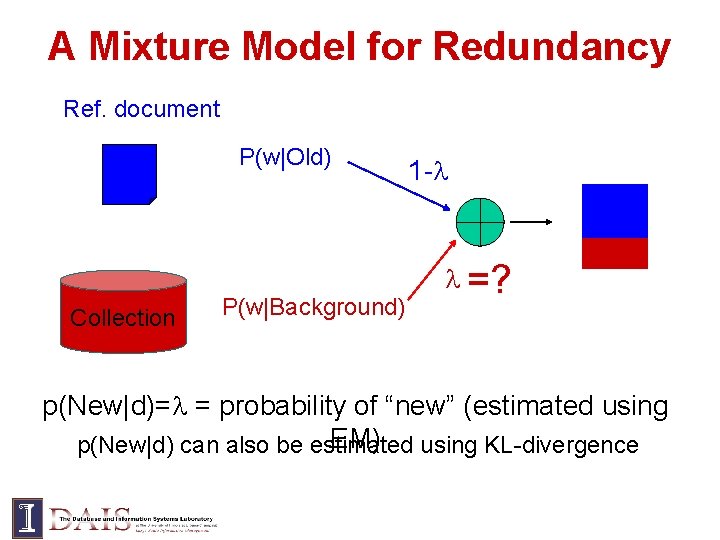

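A Mixture Model for Redundancy

A new document $d$ is modeled as a mixture of the reference (old) document model $P(w \mid \text{Old})$ and the collection background model $P(w \mid \text{Background})$:

$$P(w \mid d) = (1 - \lambda)\, P(w \mid \text{Old}) + \lambda\, P(w \mid \text{Background})$$

The mixing weight $\lambda = p(\text{New} \mid d)$ is the probability of “new” content and is estimated using EM. Alternatively, $p(\text{New} \mid d)$ can be estimated using KL-divergence.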
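A minimal sketch of the EM estimate of $\lambda$, assuming both component models are known and held fixed (for example, smoothed estimates from the reference document and the collection) so that only $\lambda$ is learned. The toy distributions are hypothetical, and the KL-divergence alternative is not shown.

```python
from typing import Dict

def estimate_novelty(doc_counts: Dict[str, int], p_old: Dict[str, float],
                     p_bg: Dict[str, float], lam: float = 0.5,
                     iters: int = 100) -> float:
    """EM for lam in p(w|d) = (1 - lam) p(w|Old) + lam p(w|Background)."""
    for _ in range(iters):
        expected_new = 0.0
        total = 0.0
        for w, c in doc_counts.items():
            new_part = lam * p_bg.get(w, 0.0)
            old_part = (1 - lam) * p_old.get(w, 0.0)
            if new_part + old_part == 0.0:
                continue  # word explained by neither model; ignore it
            # E-step: posterior probability that this word came from the "new" component.
            expected_new += c * new_part / (new_part + old_part)
            total += c
        if total == 0.0:
            break
        lam = expected_new / total  # M-step
    return lam

# Hypothetical old-document and background models.
p_old = {"robot": 0.5, "weld": 0.5}
p_bg = {"robot": 0.1, "weld": 0.1, "crane": 0.4, "the": 0.4}
print(estimate_novelty({"robot": 5, "weld": 5}, p_old, p_bg) < 0.01)  # True
print(estimate_novelty({"crane": 3, "the": 2}, p_old, p_bg))          # 1.0
```

A document that repeats the reference document drives $\lambda$ toward 0, while one made of words the reference document never generates gets $\lambda = 1$.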

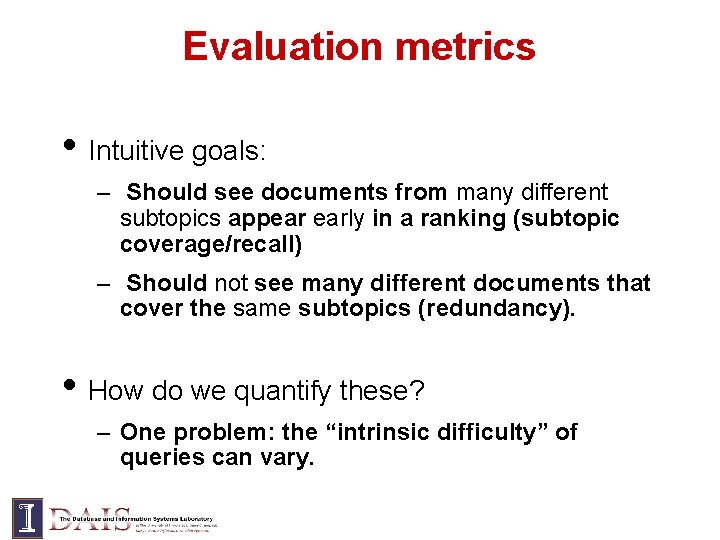

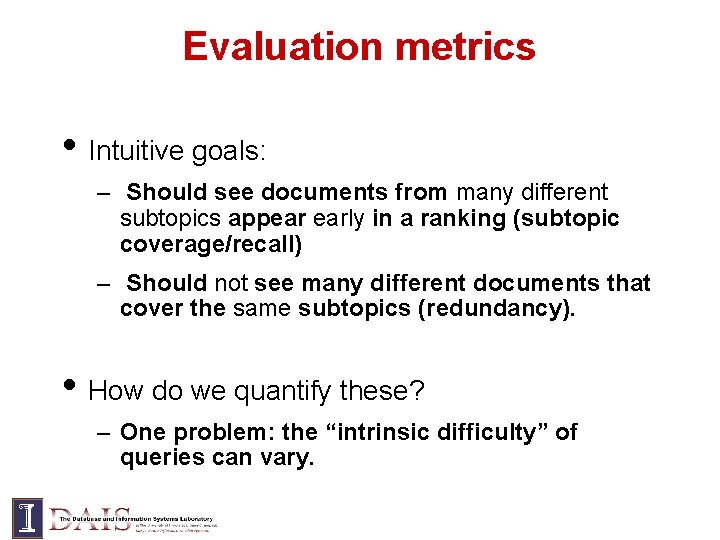

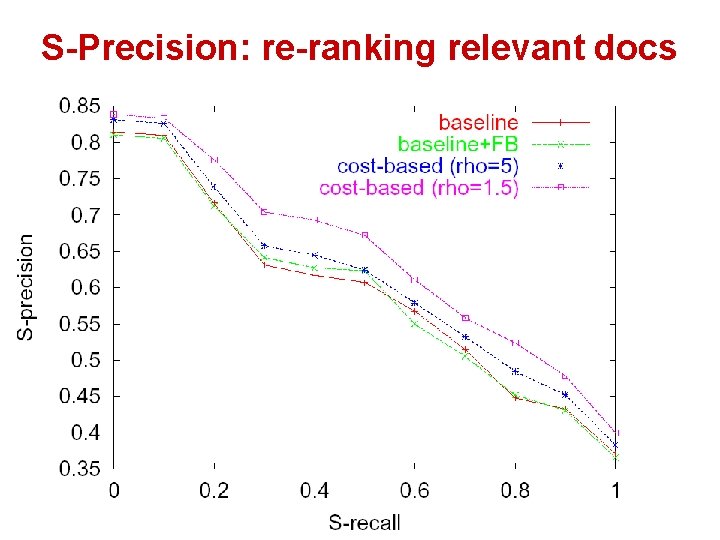

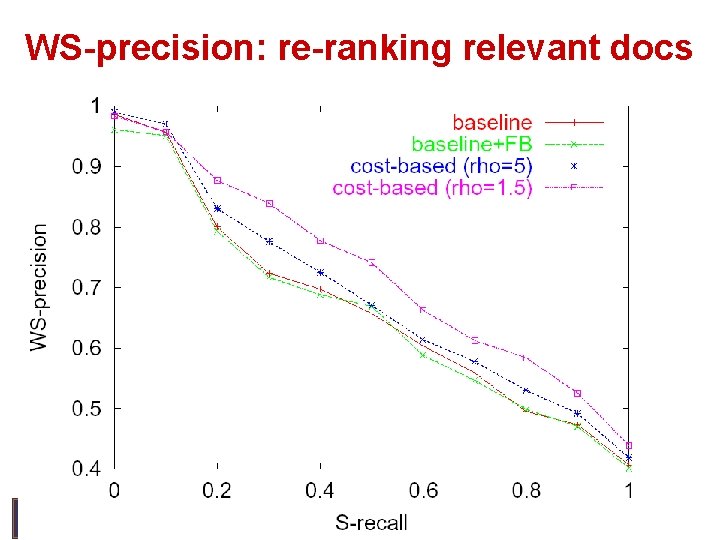

Evaluation metrics

- Intuitive goals:
  - Documents from many different subtopics should appear early in a ranking (subtopic coverage/recall).
  - We should not see many different documents that cover the same subtopics (redundancy).
- How do we quantify these?
  - One problem: the “intrinsic difficulty” of queries can vary.

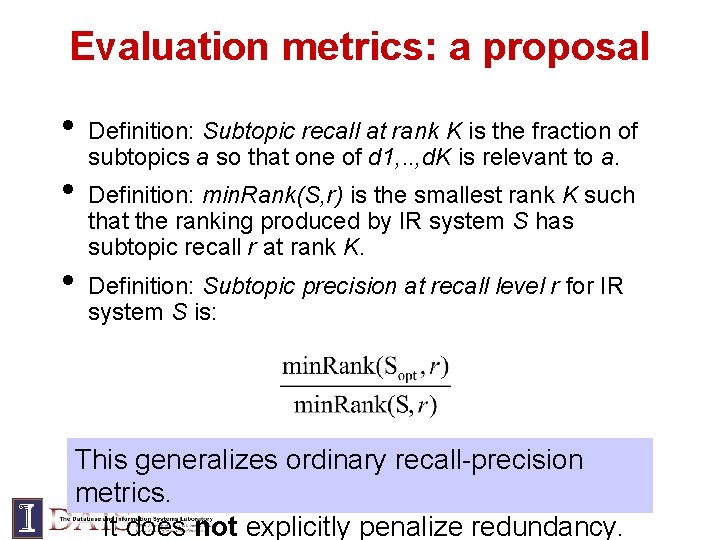

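Evaluation metrics: a proposal

- Definition: subtopic recall at rank $K$ is the fraction of subtopics $a$ such that at least one of $d_1, \dots, d_K$ is relevant to $a$. With $n_A$ subtopics in total:

$$\text{S-recall}(K) = \frac{\left|\bigcup_{i=1}^{K} \text{subtopics}(d_i)\right|}{n_A}$$

- Definition: $\text{minRank}(S, r)$ is the smallest rank $K$ such that the ranking produced by IR system $S$ has subtopic recall $r$ at rank $K$.
- Definition: subtopic precision at recall level $r$ for IR system $S$, relative to an optimal system $S_{opt}$, is:

$$\text{S-precision}(r) = \frac{\text{minRank}(S_{opt}, r)}{\text{minRank}(S, r)}$$

This generalizes ordinary recall-precision metrics. It does not explicitly penalize redundancy.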
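The definitions translate directly into code. A minimal sketch, assuming subtopic judgments are given as a map from document id to the set of subtopics it covers, and that an optimal ranking is supplied from outside (finding it exactly is a set-cover problem). The toy judgments are hypothetical; recomputing S-recall for each $K$ keeps the code close to the definition at the cost of quadratic time.

```python
from typing import Dict, List, Optional, Set

Subtopics = Dict[str, Set[str]]  # doc id -> subtopics the doc is relevant to

def s_recall(ranking: List[str], subtopics: Subtopics, n_subtopics: int, k: int) -> float:
    """Fraction of subtopics covered by the top-k documents."""
    covered: Set[str] = set()
    for d in ranking[:k]:
        covered |= subtopics.get(d, set())
    return len(covered) / n_subtopics

def min_rank(ranking: List[str], subtopics: Subtopics, n_subtopics: int,
             r: float) -> Optional[int]:
    """Smallest K with S-recall(K) >= r, or None if the ranking never reaches r."""
    for k in range(1, len(ranking) + 1):
        if s_recall(ranking, subtopics, n_subtopics, k) >= r:
            return k
    return None

def s_precision(ranking: List[str], opt_ranking: List[str], subtopics: Subtopics,
                n_subtopics: int, r: float) -> float:
    """minRank(S_opt, r) / minRank(S, r); 0.0 if either ranking never reaches r."""
    system = min_rank(ranking, subtopics, n_subtopics, r)
    best = min_rank(opt_ranking, subtopics, n_subtopics, r)
    if system is None or best is None:
        return 0.0
    return best / system

# Hypothetical judgments; opt_ranking is optimal for this toy data.
subtopics = {"d1": {"A1"}, "d2": {"A1"}, "d3": {"A2", "A3"}, "d4": {"A4"}}
system_ranking = ["d1", "d2", "d3", "d4"]
opt_ranking = ["d3", "d1", "d4"]
print(s_precision(system_ranking, opt_ranking, subtopics, 4, 0.75))  # 0.6666666666666666
```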

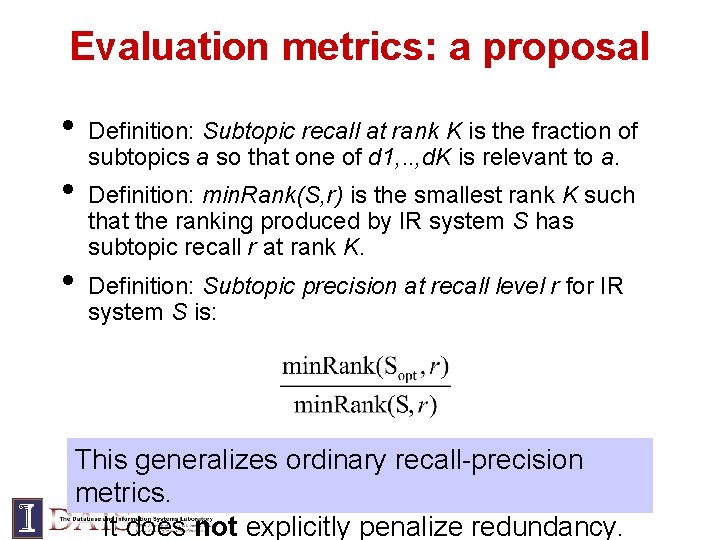

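Evaluation metrics: rationale

[Figure: rank $K$ for $\text{minRank}(S, r)$ and $\text{minRank}(S_{opt}, r)$ plotted against recall, with precision from 0.0 to 1.0.]

For ordinary relevance, $\text{minRank}(S_{opt}, r)$ grows linearly with recall. For subtopics, the shape of the $\text{minRank}(S_{opt}, r)$ curve is neither predictable nor linear, which is why precision is measured relative to the optimal system.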

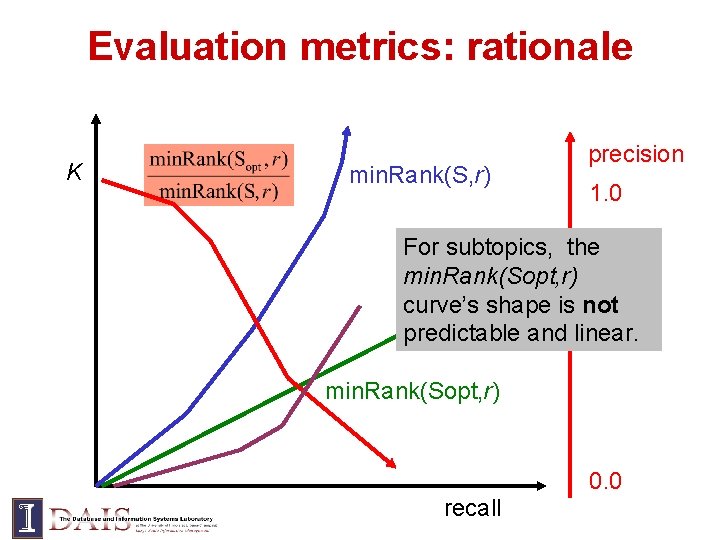

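Evaluating redundancy

- Definition: the cost of a ranking $d_1, \dots, d_K$ is

$$\text{cost}(d_1, \dots, d_K) = \sum_{i=1}^{K} \left(a \cdot |\text{subtopics}(d_i)| + b\right)$$

  where $b$ is the cost of seeing a document and $a$ is the cost of seeing a subtopic inside a document (the earlier metric corresponds to $a = 0$).
- Definition: $\text{minCost}(S, r)$ is the minimal cost at which recall $r$ is obtained.
- Definition: weighted subtopic precision at $r$ is

$$\text{WS-precision}(r) = \frac{\text{minCost}(S_{opt}, r)}{\text{minCost}(S, r)}$$

We will use $a = b = 1$.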
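A minimal sketch of the cost-based measures, under the same kind of setup: hypothetical judgments, $a = b = 1$, and an optimal ranking supplied from outside. On this toy data the supplied ranking minimizes both rank and cost, which need not hold in general.

```python
from typing import Dict, List, Optional, Set

Subtopics = Dict[str, Set[str]]  # doc id -> subtopics the doc is relevant to

def ranking_cost(ranking: List[str], subtopics: Subtopics, k: int,
                 a: float = 1.0, b: float = 1.0) -> float:
    """cost(d_1..d_K) = sum over i of (a * |subtopics(d_i)| + b)."""
    return sum(a * len(subtopics.get(d, set())) + b for d in ranking[:k])

def min_cost(ranking: List[str], subtopics: Subtopics, n_subtopics: int, r: float,
             a: float = 1.0, b: float = 1.0) -> Optional[float]:
    """Cost of the shortest prefix reaching subtopic recall r.

    With a, b >= 0 the cost never decreases as K grows, so that prefix is the cheapest one.
    """
    covered: Set[str] = set()
    for k, d in enumerate(ranking, start=1):
        covered |= subtopics.get(d, set())
        if len(covered) / n_subtopics >= r:
            return ranking_cost(ranking, subtopics, k, a, b)
    return None

def ws_precision(ranking: List[str], opt_ranking: List[str], subtopics: Subtopics,
                 n_subtopics: int, r: float, a: float = 1.0, b: float = 1.0) -> float:
    """minCost(S_opt, r) / minCost(S, r); 0.0 if either ranking never reaches r."""
    system = min_cost(ranking, subtopics, n_subtopics, r, a, b)
    best = min_cost(opt_ranking, subtopics, n_subtopics, r, a, b)
    if system is None or best is None:
        return 0.0
    return best / system

# Hypothetical judgments; opt_ranking is optimal for this toy data.
subtopics = {"d1": {"A1"}, "d2": {"A1"}, "d3": {"A2", "A3"}, "d4": {"A4"}}
system_ranking = ["d1", "d2", "d3", "d4"]
opt_ranking = ["d3", "d1", "d4"]
print(round(ws_precision(system_ranking, opt_ranking, subtopics, 4, 0.75), 3))  # 0.714
```

Here the system ranking pays 7 to reach recall 0.75 (the redundant `d2` adds cost but no new subtopic), while the optimal ranking pays 5.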

Evaluation Metrics Summary

- Measure performance (size of ranking via minRank, cost of ranking via minCost) relative to optimal.
- Generalizes ordinary precision/recall.
- Possible problems:
  - Computing minRank and minCost is NP-hard!
  - A greedy approximation seems to work well for our data set.
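One plausible reading of the greedy approximation is the classic greedy set-cover heuristic, sketched below; the slide does not spell out the exact procedure used, so treat this as an assumption. The resulting order can stand in for $S_{opt}$ when computing minRank. Greedy set cover is known to be within a logarithmic factor of optimal, not exact.

```python
from typing import Dict, List, Set

def greedy_opt_ranking(subtopics: Dict[str, Set[str]]) -> List[str]:
    """Greedy set cover: repeatedly take the doc covering the most uncovered subtopics.

    Stops once no remaining doc adds a new subtopic; ties go to the doc listed first.
    """
    covered: Set[str] = set()
    remaining = dict(subtopics)
    order: List[str] = []
    while remaining:
        best = max(remaining, key=lambda d: len(remaining[d] - covered))
        gain = remaining[best] - covered
        if not gain:
            break
        order.append(best)
        covered |= gain
        del remaining[best]
    return order

# Hypothetical judgments: doc id -> subtopics covered.
subtopics = {"d1": {"A1"}, "d2": {"A1"}, "d3": {"A2", "A3"}, "d4": {"A4"}}
print(greedy_opt_ranking(subtopics))  # ['d3', 'd1', 'd4']
```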

Experiment Design

- Dataset: TREC “interactive track” data
  - London Financial Times: 210k docs, 500 MB
  - 20 queries from TREC 6–8
    - Subtopics: average 20, min 7, max 56
    - Judged docs: average 40, min 5, max 100
    - Non-judged docs assumed not relevant to any subtopic
- Baseline: relevance-based ranking (using language models)
- Two experiments
  - Ranking only relevant documents
  - Ranking all documents

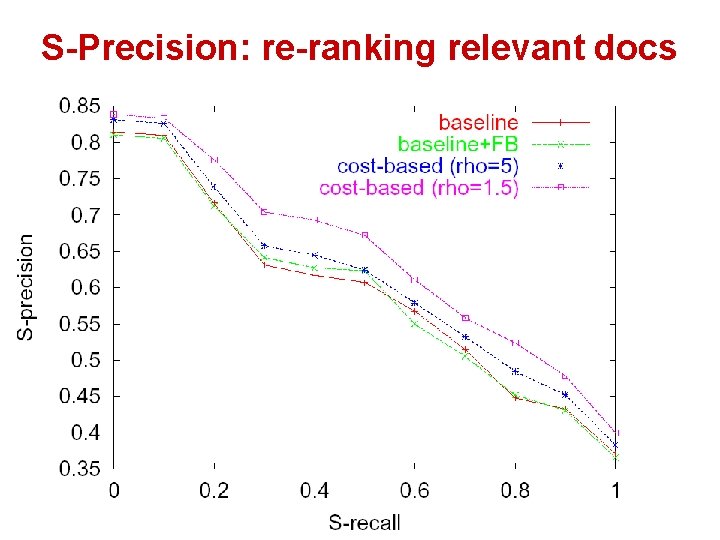

S-Precision: re-ranking relevant docs

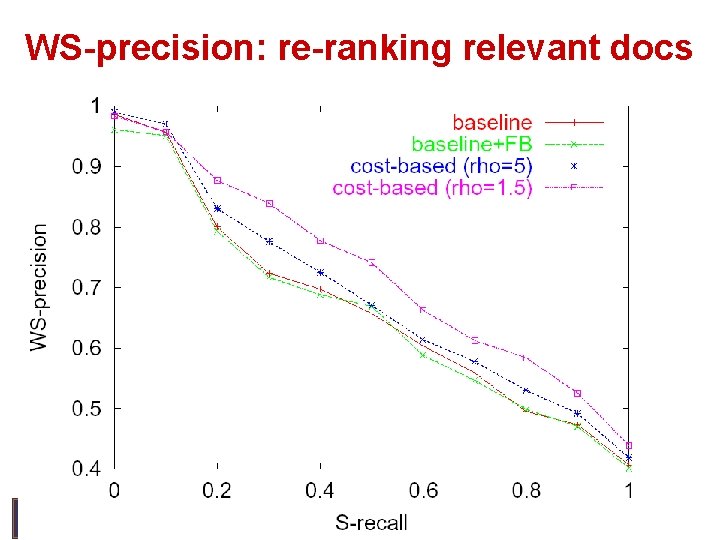

WS-precision: re-ranking relevant docs

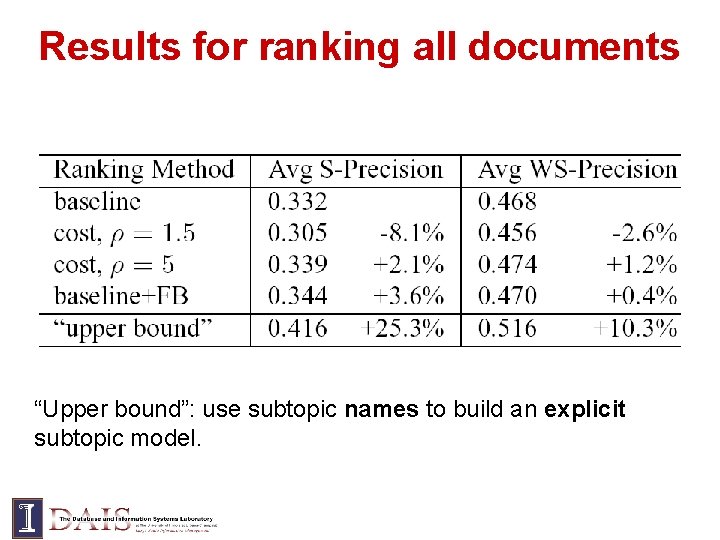

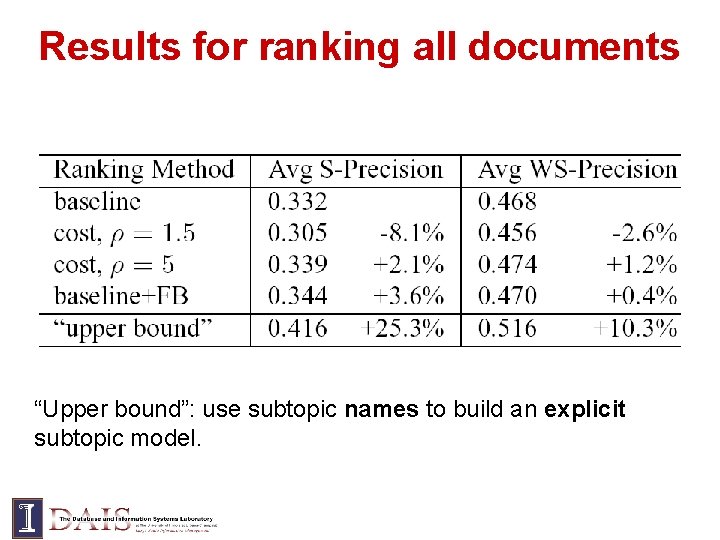

Results for ranking all documents

“Upper bound”: use subtopic names to build an explicit subtopic model.

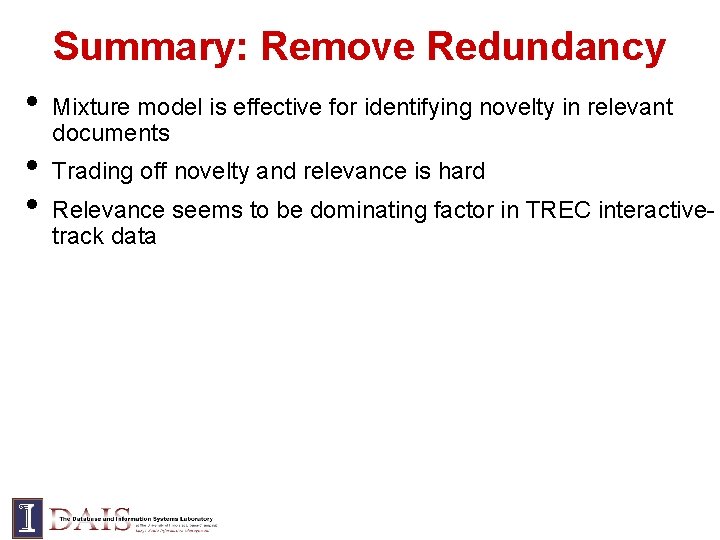

Summary: Remove Redundancy

- The mixture model is effective for identifying novelty in relevant documents.
- Trading off novelty and relevance is hard.
- Relevance seems to be the dominating factor in the TREC interactive-track data.

![Diversity = Satisfy Diverse Info. Need [Zhai 02]](https://slidetodoc.com/presentation_image_h2/663a83f1c563c4cc491d068b30e5b62e/image-27.jpg)

Diversity = Satisfy Diverse Information Need [Zhai 02]

- Need to directly model latent aspects and then optimize results based on aspect/topic matching.
- Reducing redundancy doesn't ensure complete coverage of diverse aspects.

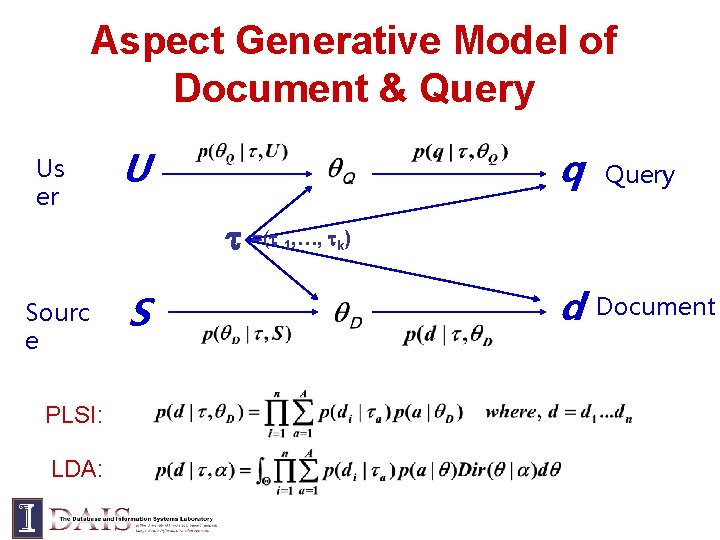

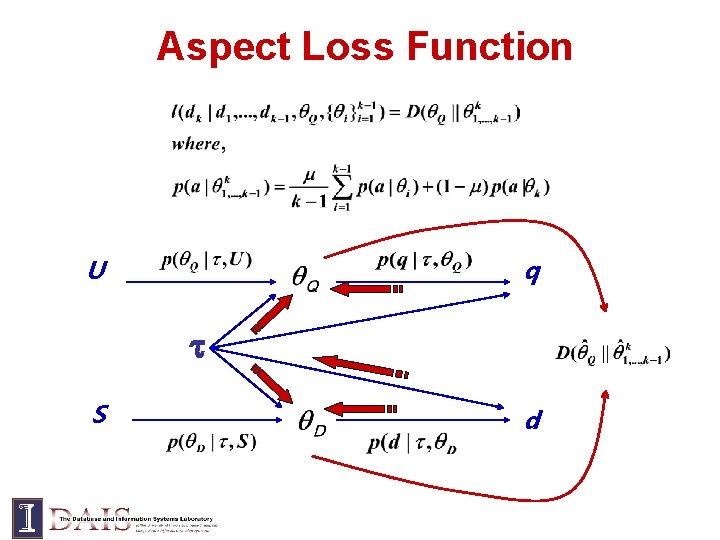

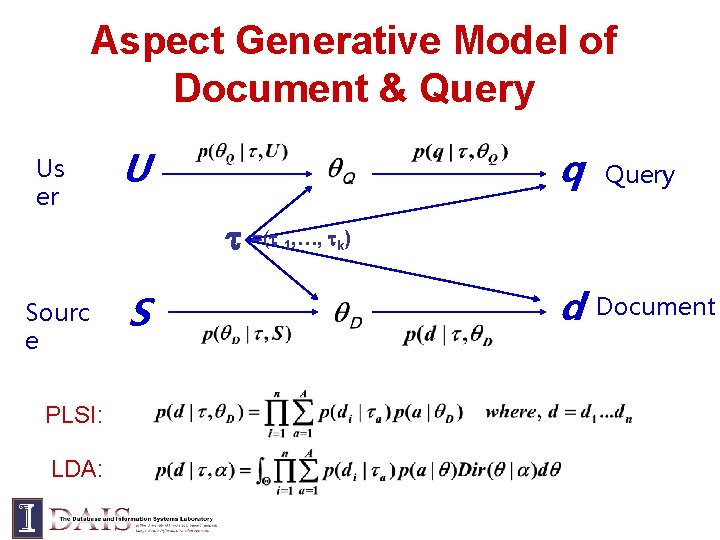

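Aspect Generative Model of Document & Query

As in the basic model, the user $U$ generates the query $q$ and the source $S$ generates the document $d$, but both are now generated from $k$ latent aspect (topic) models $\theta = (\theta_1, \dots, \theta_k)$, using either PLSI or LDA.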
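Both PLSI and LDA describe a text as a mixture of aspect word distributions. A minimal sketch of one building block, PLSI-style “folding in”: estimating a new document's aspect weights by EM while the aspect models stay fixed. The aspect models are hypothetical; LDA would instead put a Dirichlet prior on the weights and require approximate inference, which this sketch does not do.

```python
from typing import Dict, List

def fold_in(doc_counts: Dict[str, int], aspects: List[Dict[str, float]],
            iters: int = 50) -> List[float]:
    """EM for a document's aspect weights pi, with aspect models p(w|theta_a) held fixed.

    Maximizes sum_w c(w, d) * log sum_a pi_a * p(w|theta_a), as in PLSI fold-in.
    """
    k = len(aspects)
    pi = [1.0 / k] * k
    for _ in range(iters):
        expected = [0.0] * k
        for w, c in doc_counts.items():
            joint = [pi[a] * aspects[a].get(w, 0.0) for a in range(k)]
            z = sum(joint)
            if z == 0.0:
                continue  # word not generated by any aspect; skip it
            for a in range(k):
                expected[a] += c * joint[a] / z  # E-step: responsibility of aspect a
        total = sum(expected)
        if total == 0.0:
            break
        pi = [e / total for e in expected]  # M-step
    return pi

aspects = [{"weld": 0.6, "robot": 0.4},       # hypothetical "spot-welding" aspect
           {"inventory": 0.6, "robot": 0.4}]  # hypothetical "inventory" aspect
pi = fold_in({"weld": 4, "robot": 2}, aspects)
print([round(p, 3) for p in pi])  # [1.0, 0.0]
```

The shared word “robot” is split between aspects in proportion to the current weights, so the distinctive word “weld” ends up deciding the document's coverage.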

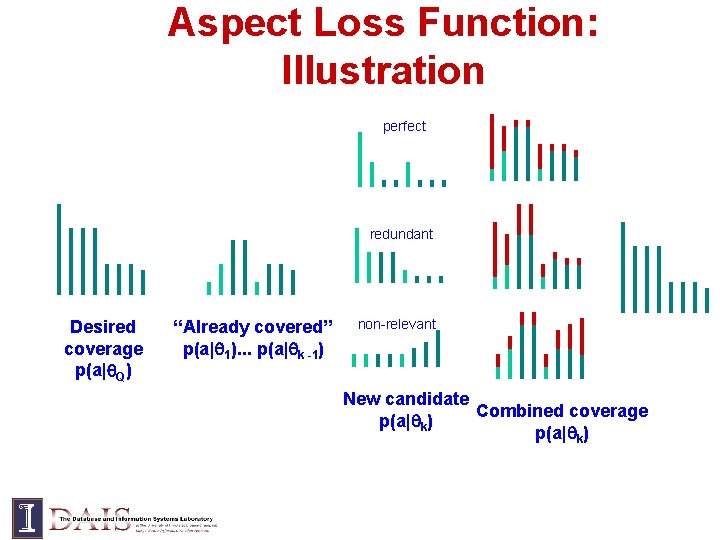

Aspect Loss Function

[Figure: user $U$, query $q$, source $S$, document $d$.]

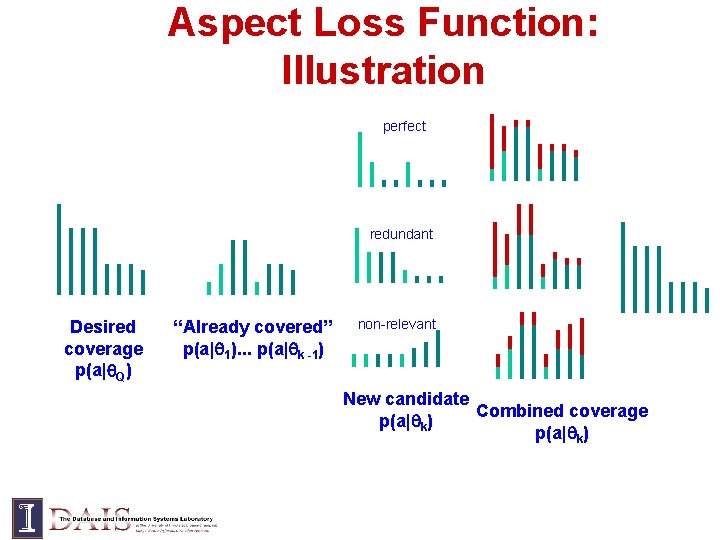

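Aspect Loss Function: Illustration

[Figure: the desired coverage $p(a \mid \theta_Q)$ is compared with the combined coverage of the “already covered” distributions $p(a \mid \theta_1), \dots, p(a \mid \theta_{k-1})$ plus a new candidate $p(a \mid \theta_k)$; the candidate cases shown are perfect, redundant, and non-relevant.]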
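The exact aspect loss of [Zhai 02] is not reproduced here. As an assumption, this sketch instantiates the illustration with two hypothetical choices: the combined coverage is the uniform average of the selected documents' aspect distributions plus the candidate's, and the loss is the KL divergence from the desired coverage to the combined coverage. The three candidates mirror the perfect, redundant, and non-relevant cases.

```python
import math
from typing import Dict, List, Sequence

def combined_coverage(dists: List[Sequence[float]]) -> List[float]:
    """Assumed combination rule: uniform average of the documents' aspect distributions."""
    n_aspects = len(dists[0])
    return [sum(d[a] for d in dists) / len(dists) for a in range(n_aspects)]

def aspect_loss(desired: Sequence[float], dists: List[Sequence[float]],
                eps: float = 1e-6) -> float:
    """Assumed loss: KL(desired || combined), with eps-smoothing to avoid log(0)."""
    q = combined_coverage(dists)
    norm = 1.0 + eps * len(q)
    return sum(p * math.log(p / ((qa + eps) / norm))
               for p, qa in zip(desired, q) if p > 0)

def pick_next(desired: Sequence[float], covered: List[Sequence[float]],
              candidates: Dict[str, Sequence[float]]) -> str:
    """Greedy step: the candidate whose addition brings combined coverage closest to desired."""
    return min(candidates, key=lambda c: aspect_loss(desired, covered + [candidates[c]]))

desired = [0.5, 0.5, 0.0]    # p(a | theta_Q) over three aspects (hypothetical)
covered = [[1.0, 0.0, 0.0]]  # one selected doc already covers aspect 1
candidates = {"perfect": [0.0, 1.0, 0.0],
              "redundant": [1.0, 0.0, 0.0],
              "non-relevant": [0.0, 0.0, 1.0]}
print(pick_next(desired, covered, candidates))  # perfect
```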

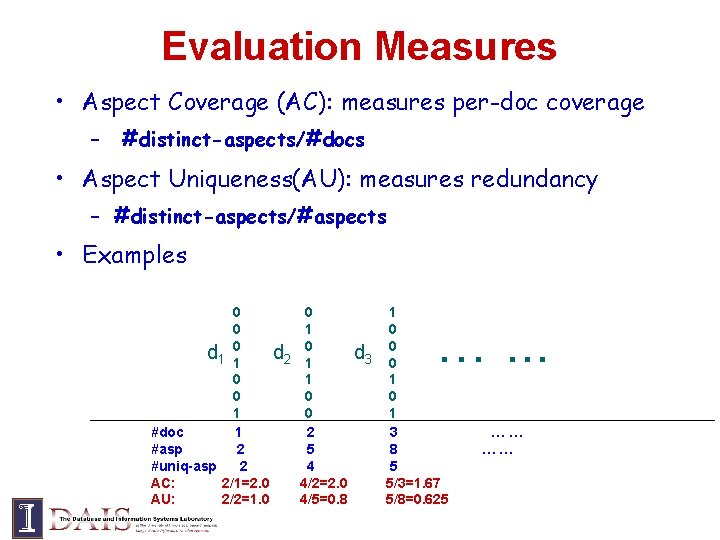

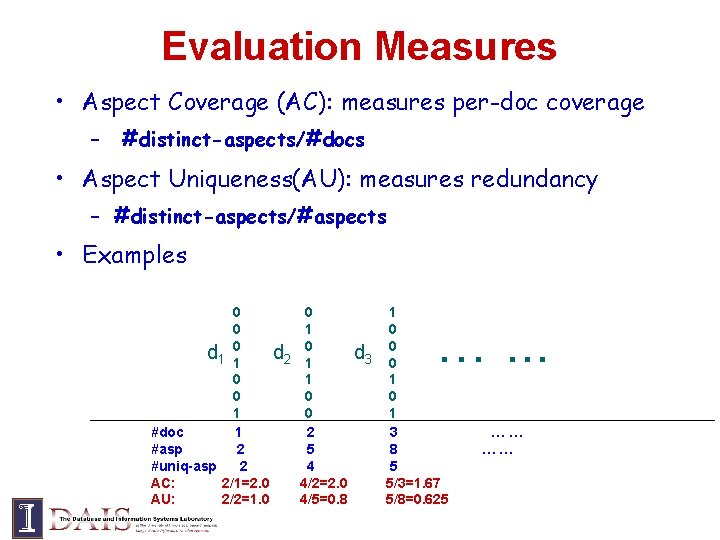

Evaluation Measures

- Aspect Coverage (AC) measures per-doc coverage: #distinct-aspects / #docs.
- Aspect Uniqueness (AU) measures redundancy: #distinct-aspects / #aspects.

Examples (cumulative over a ranking $d_1, d_2, d_3, \dots$):

| Ranking prefix | #docs | #aspects | #distinct aspects | AC | AU |
|---|---|---|---|---|---|
| $d_1$ | 1 | 2 | 2 | 2/1 = 2.0 | 2/2 = 1.0 |
| $d_1, d_2$ | 2 | 5 | 4 | 4/2 = 2.0 | 4/5 = 0.8 |
| $d_1, d_2, d_3$ | 3 | 8 | 5 | 5/3 = 1.67 | 5/8 = 0.625 |
| … | … | … | … | … | … |
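Both measures can be computed cumulatively along a ranking. A minimal sketch; the aspect sets below are hypothetical, chosen only so that the counts match the example table.

```python
from typing import List, Set, Tuple

def ac_au(ranking: List[Set[str]]) -> List[Tuple[int, int, int, float, float]]:
    """For each prefix d_1..d_n: (#docs, #aspects, #distinct aspects, AC, AU)."""
    rows = []
    seen: Set[str] = set()
    total = 0
    for n_docs, aspects in enumerate(ranking, start=1):
        seen |= aspects
        total += len(aspects)
        ac = len(seen) / n_docs                   # Aspect Coverage
        au = len(seen) / total if total else 0.0  # Aspect Uniqueness
        rows.append((n_docs, total, len(seen), ac, au))
    return rows

# Hypothetical aspect sets for d1, d2, d3.
ranking = [{"a1", "a2"}, {"a2", "a3", "a4"}, {"a1", "a4", "a5"}]
for row in ac_au(ranking):
    print(row)
```

AC rewards documents that each bring many aspects, whereas AU drops whenever a document repeats an aspect already seen, which is why the two can move in different directions.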

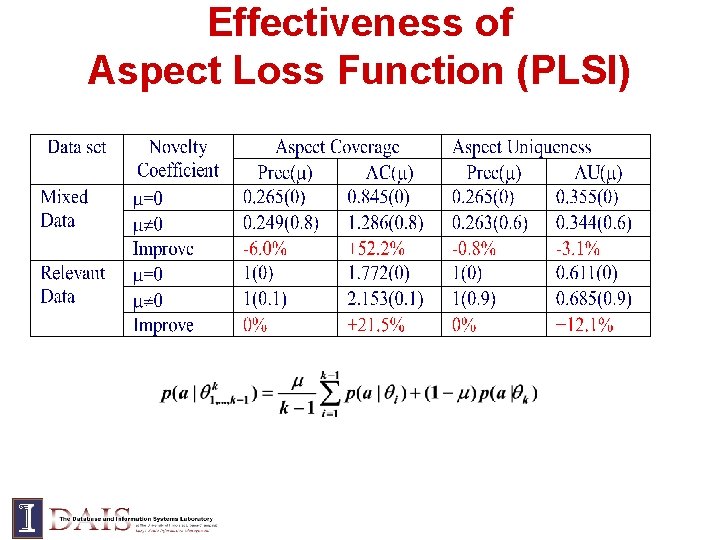

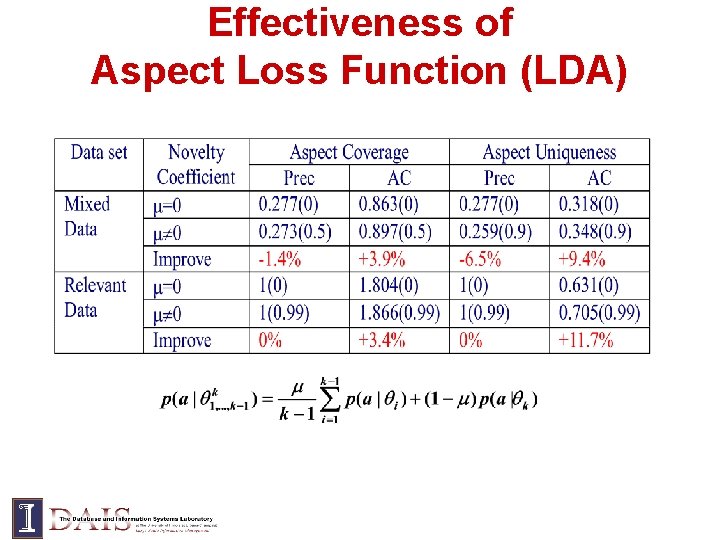

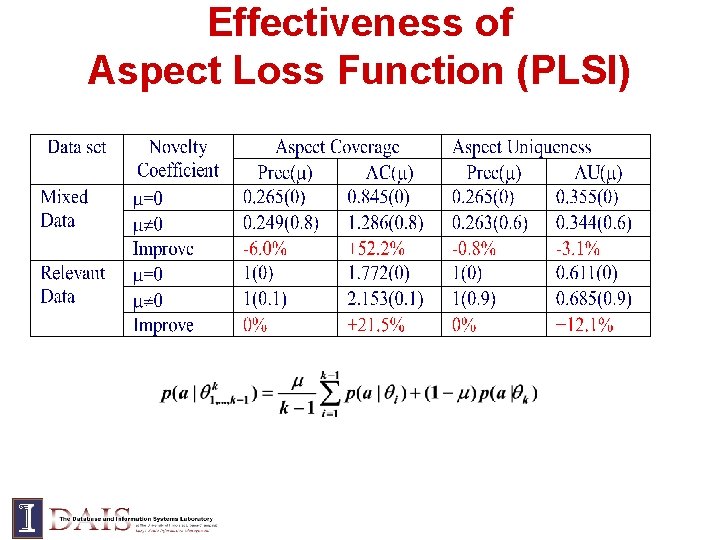

Effectiveness of Aspect Loss Function (PLSI)

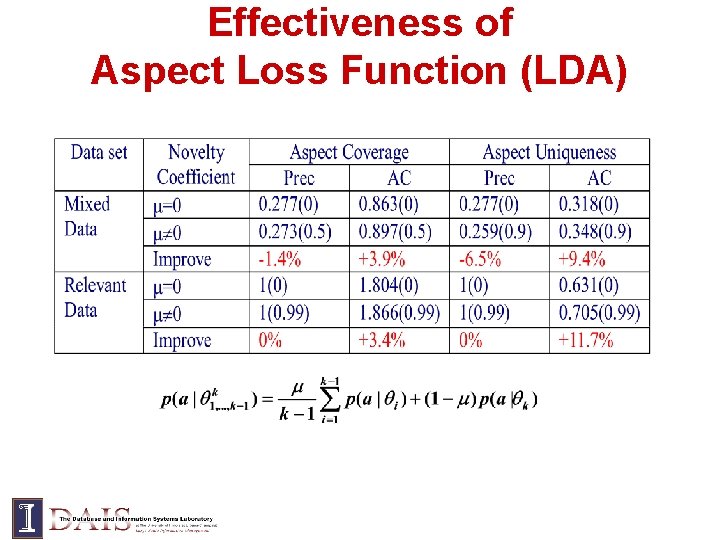

Effectiveness of Aspect Loss Function (LDA)

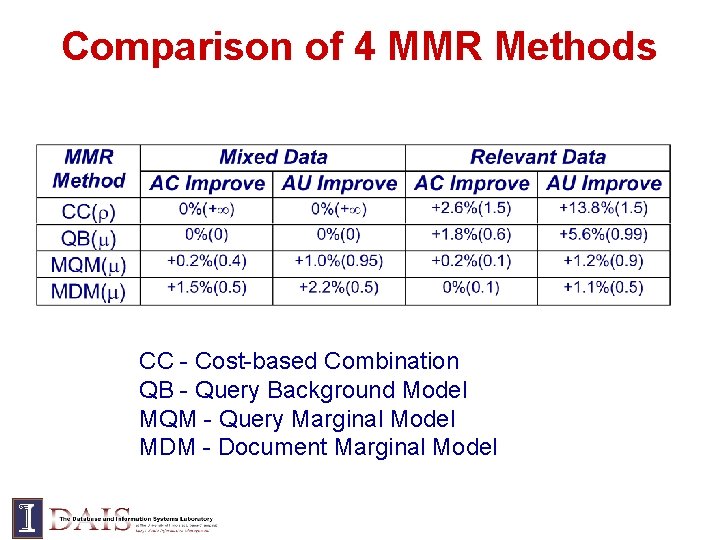

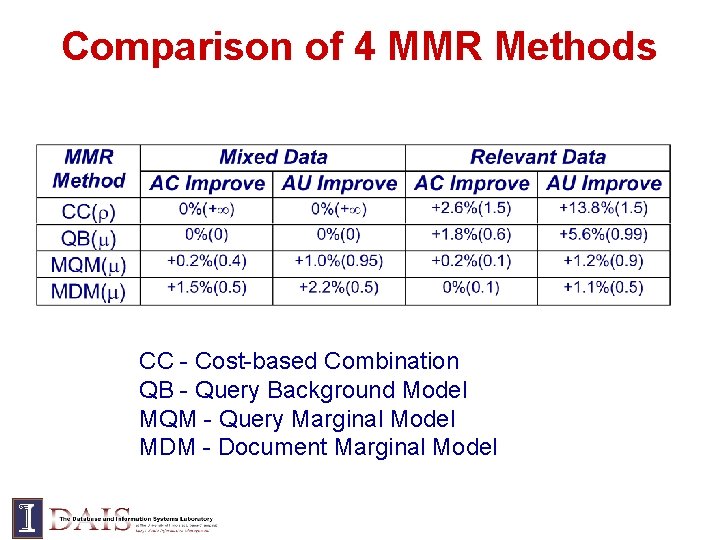

Comparison of 4 MMR Methods

- CC: Cost-based Combination
- QB: Query Background Model
- MQM: Query Marginal Model
- MDM: Document Marginal Model

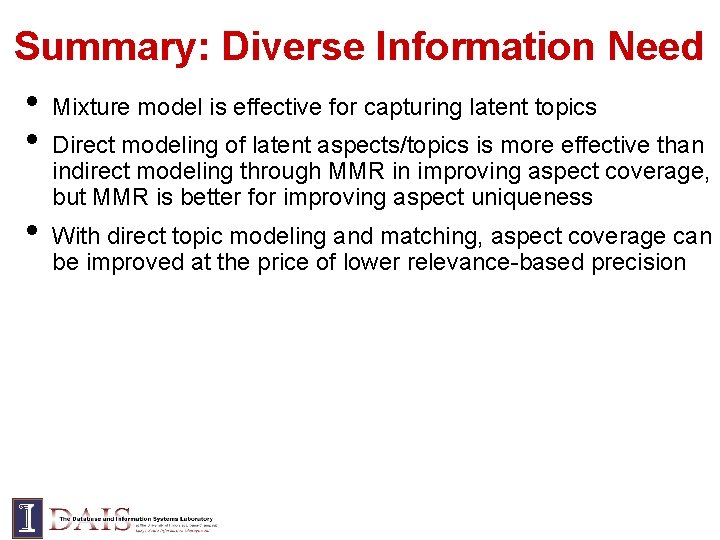

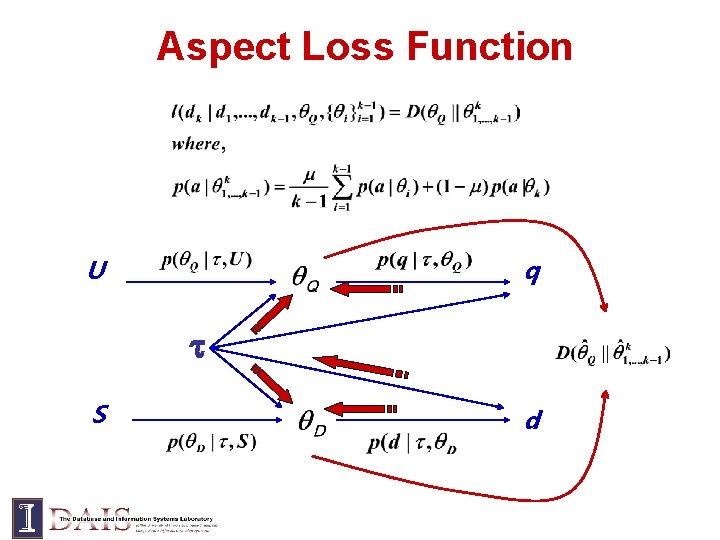

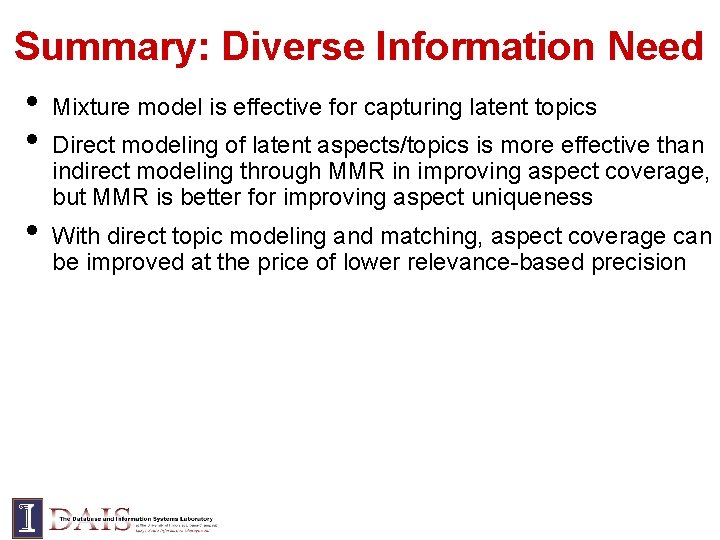

Summary: Diverse Information Need

- The mixture model is effective for capturing latent topics.
- Direct modeling of latent aspects/topics is more effective than indirect modeling through MMR in improving aspect coverage, but MMR is better for improving aspect uniqueness.
- With direct topic modeling and matching, aspect coverage can be improved at the price of lower relevance-based precision.

![Diversify = Active Feedback [Shen & Zhai 05]](https://slidetodoc.com/presentation_image_h2/663a83f1c563c4cc491d068b30e5b62e/image-36.jpg)

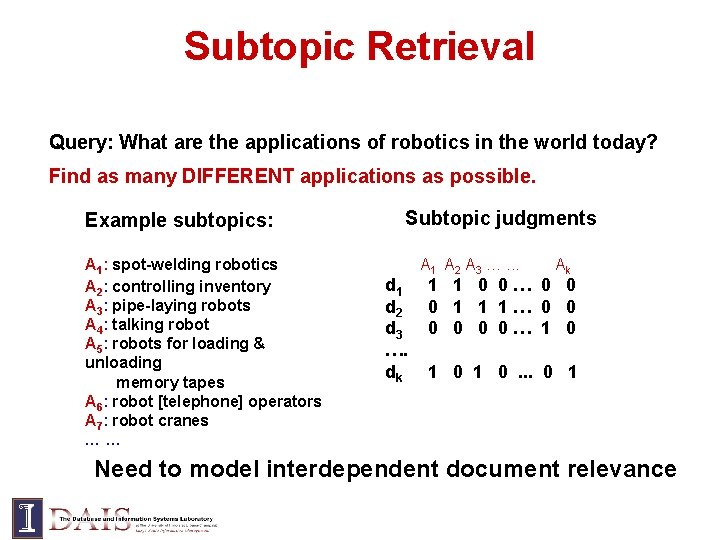

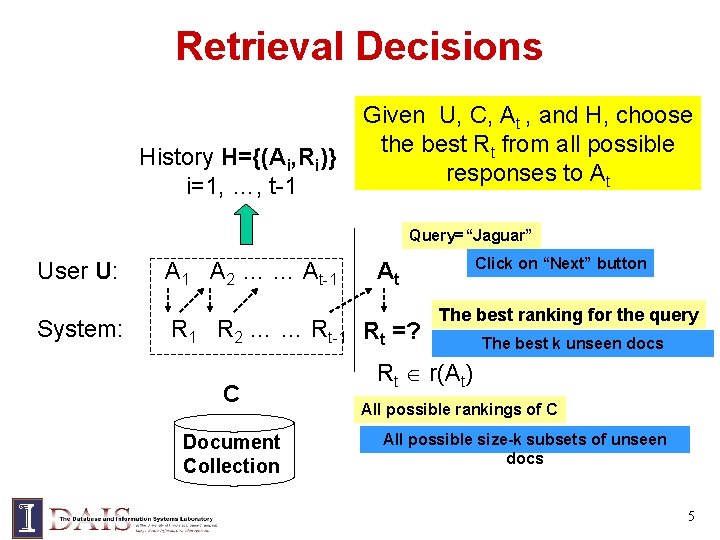

Diversify = Active Feedback [Shen & Zhai 05] Decision problem: Decide subset of documents for relevance judgment

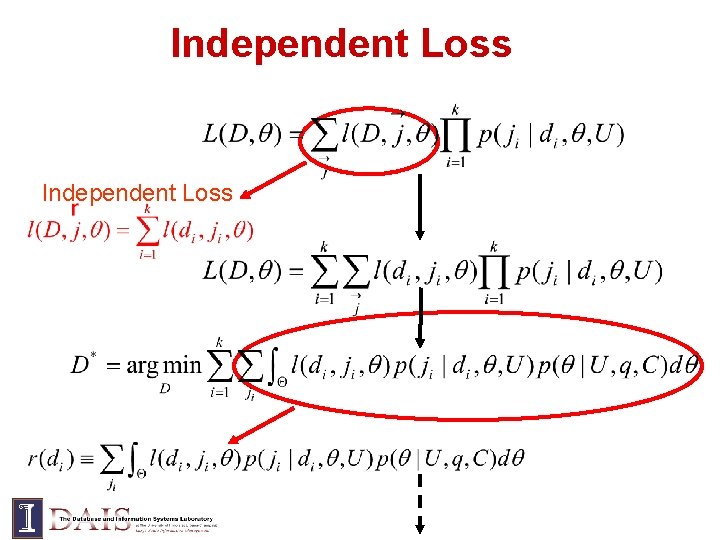

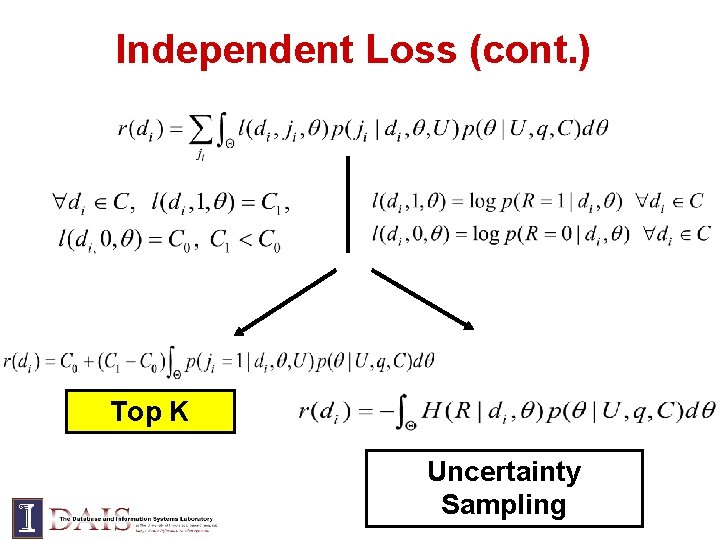

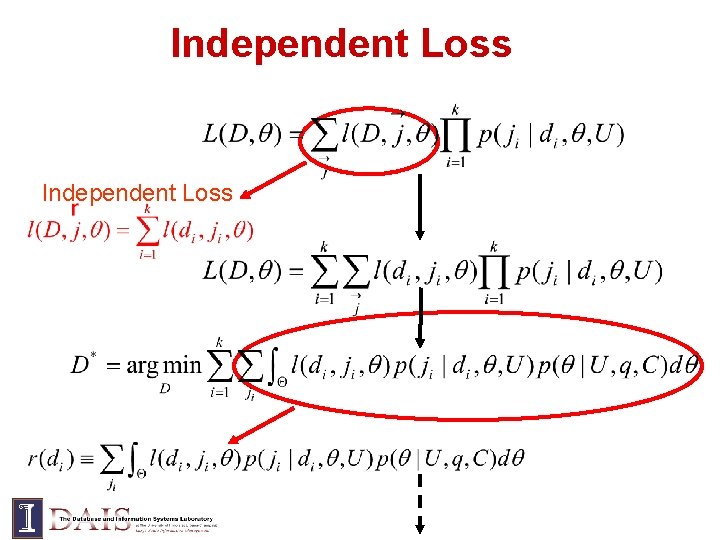

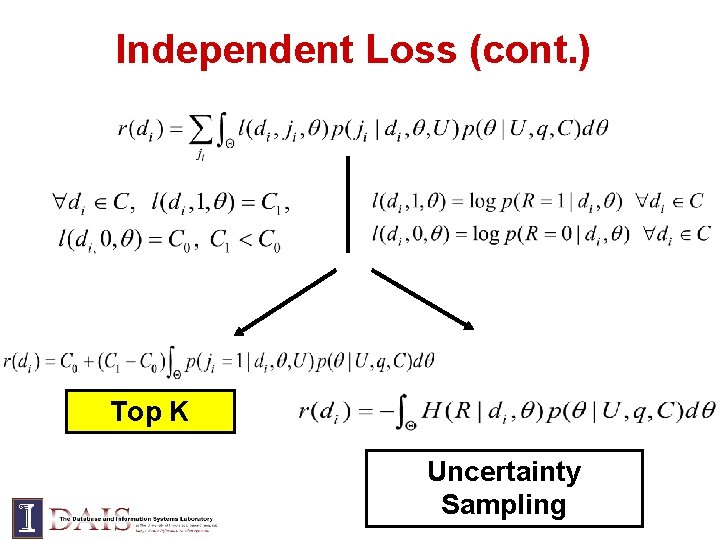

Independent Loss

Independent Loss (cont.): Top-K and Uncertainty Sampling

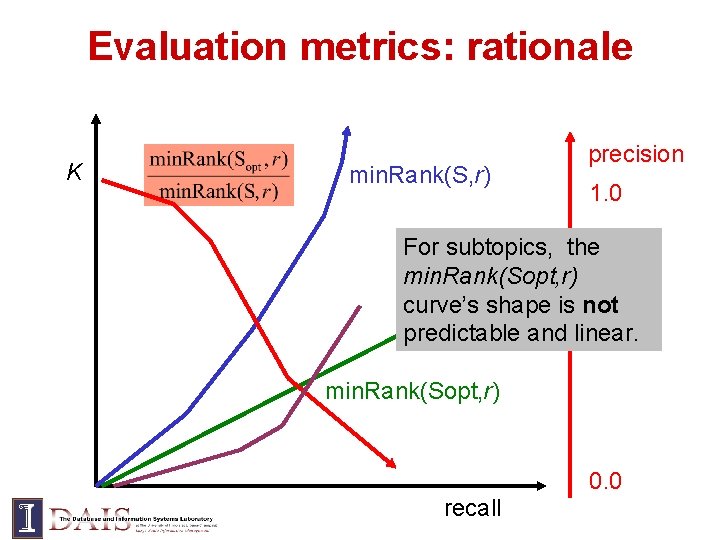

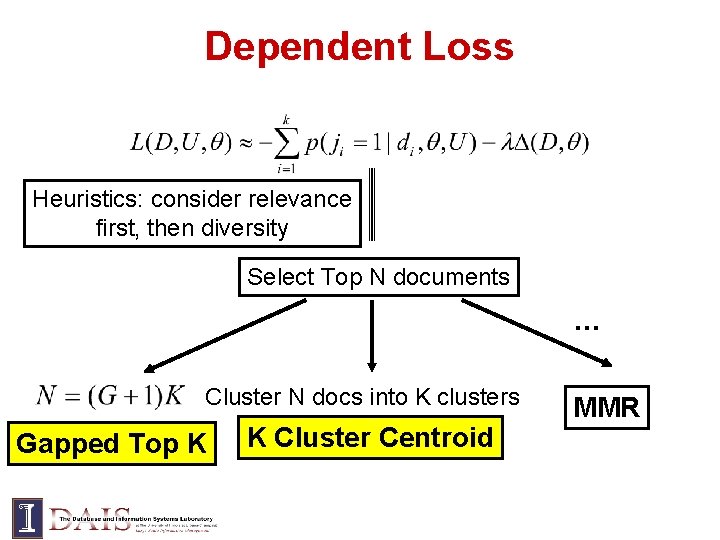

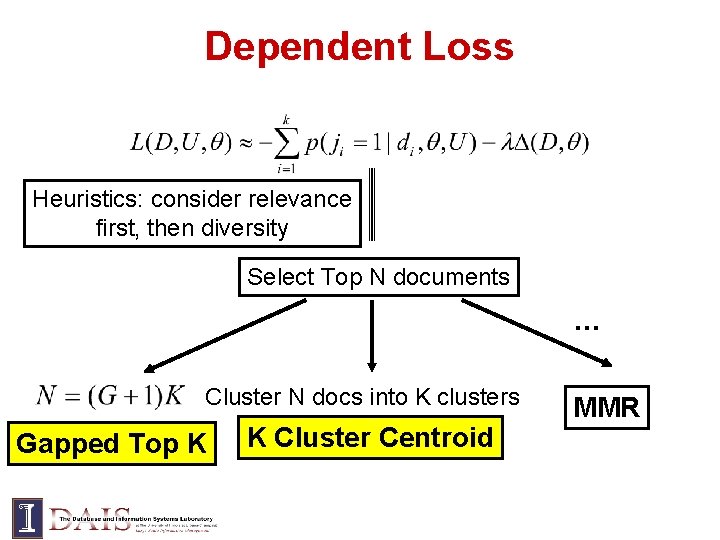

Dependent Loss

Heuristics: consider relevance first, then diversity.

- Select the top N documents, then choose K of them by:
  - Gapped Top-K
  - K-cluster centroid: cluster the N docs into K clusters and take one doc per cluster
  - MMR
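The relevance-then-diversity heuristics can be sketched as below. This is a minimal sketch, not the implementation from [Shen & Zhai 05]: the gap size, the document vectors, plain k-means with a farthest-first start, and returning the doc nearest each centroid are all assumptions made for illustration. MMR is omitted.

```python
import math
from typing import Dict, List, Sequence

def top_k(ranked: List[str], k: int) -> List[str]:
    """Normal feedback: the K highest-ranked docs."""
    return ranked[:k]

def gapped_top_k(ranked: List[str], k: int, gap: int) -> List[str]:
    """Take rank 1, then skip `gap` docs between consecutive picks."""
    return ranked[:: gap + 1][:k]

def k_cluster_centroid(ranked: List[str], vectors: Dict[str, Sequence[float]],
                       n: int, k: int, iters: int = 20) -> List[str]:
    """Cluster the top-N docs with k-means and return the doc nearest each centroid (k <= n)."""
    top = ranked[:n]
    pts = [vectors[d] for d in top]
    # Deterministic farthest-first initialization, starting from the top-ranked doc.
    centers = [list(pts[0])]
    while len(centers) < k:
        far = max(pts, key=lambda p: min(math.dist(p, c) for c in centers))
        centers.append(list(far))
    for _ in range(iters):
        groups: List[List[Sequence[float]]] = [[] for _ in range(k)]
        for p in pts:
            j = min(range(k), key=lambda i: math.dist(p, centers[i]))
            groups[j].append(p)
        for j, g in enumerate(groups):
            if g:
                centers[j] = [sum(xs) / len(g) for xs in zip(*g)]
    picks: List[str] = []
    for c in centers:
        nearest = min((d for d in top if d not in picks),
                      key=lambda d: math.dist(vectors[d], c))
        picks.append(nearest)
    return picks

# Hypothetical ranked list and 2-D document vectors forming two obvious groups.
ranked = ["d1", "d2", "d3", "d4", "d5", "d6"]
vectors = {"d1": (0.0, 0.0), "d2": (0.1, 0.0), "d3": (0.0, 0.1),
           "d4": (5.0, 5.0), "d5": (5.1, 5.0), "d6": (5.0, 5.1)}
print(top_k(ranked, 3))                                # ['d1', 'd2', 'd3']
print(gapped_top_k(ranked, 3, gap=1))                  # ['d1', 'd3', 'd5']
print(k_cluster_centroid(ranked, vectors, n=6, k=2))   # ['d1', 'd4']
```

Top-K spends every judgment on the first group, while the clustering variant asks about one representative of each group, which is the diversity the dependent loss is after.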

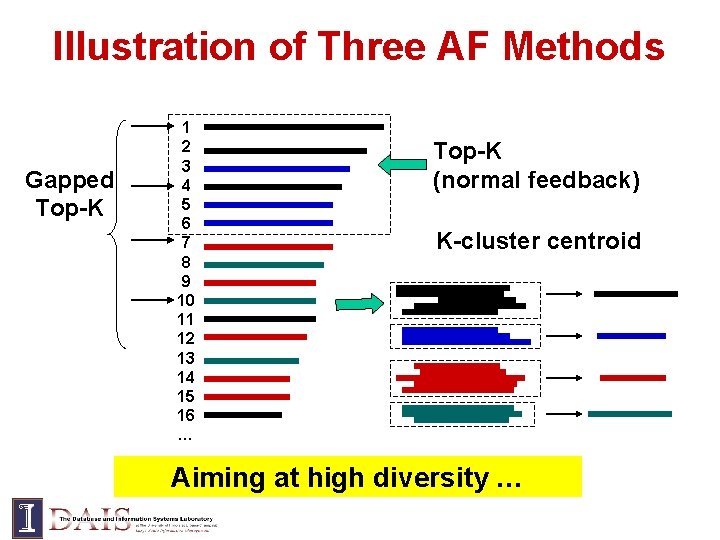

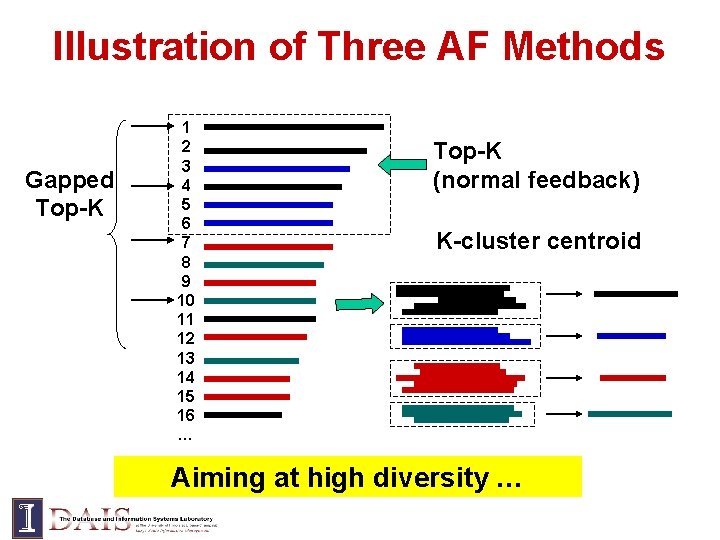

Illustration of Three AF Methods

Over a ranked list 1, 2, 3, …, 16, …:

- Top-K (normal feedback) takes the first K documents.
- Gapped Top-K skips documents between picks.
- K-cluster centroid aims at high diversity.

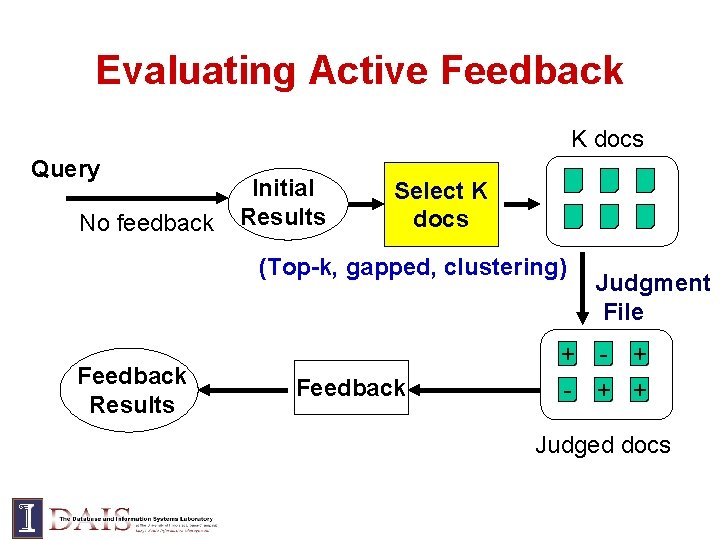

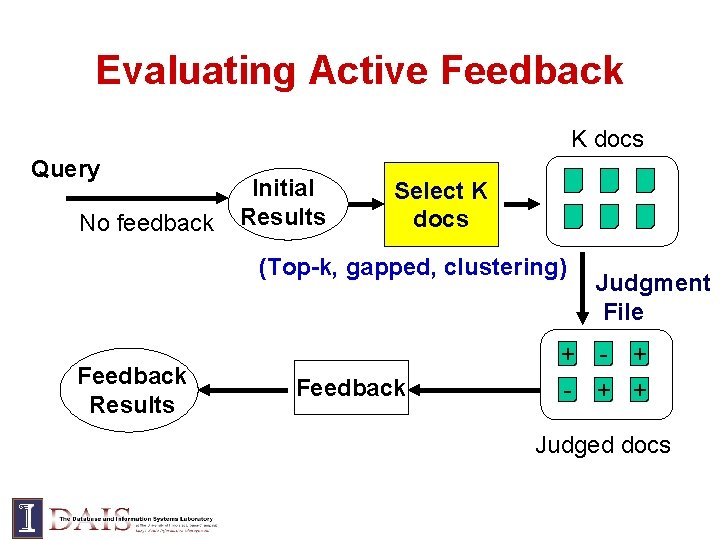

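Evaluating Active Feedback

1. Run the query with no feedback to get the initial results.
2. Select K docs for judgment (Top-K, gapped, or clustering).
3. Look up the judgments (+/−) of the K docs in the judgment file.
4. Use the judged docs for feedback to produce the feedback results.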

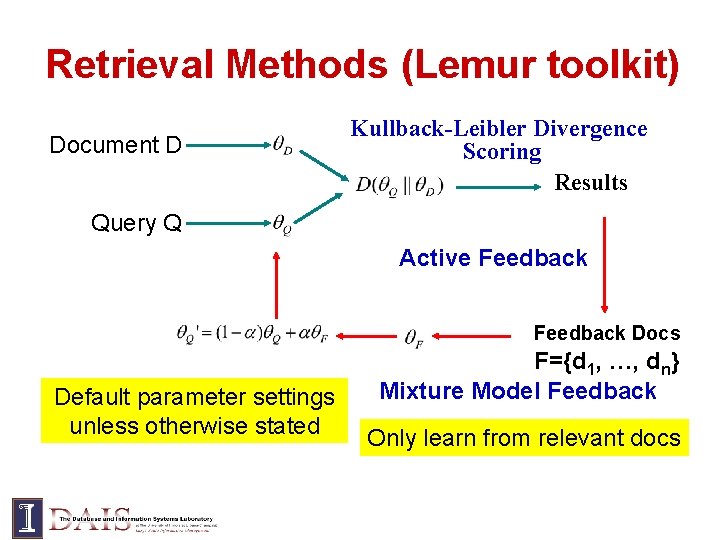

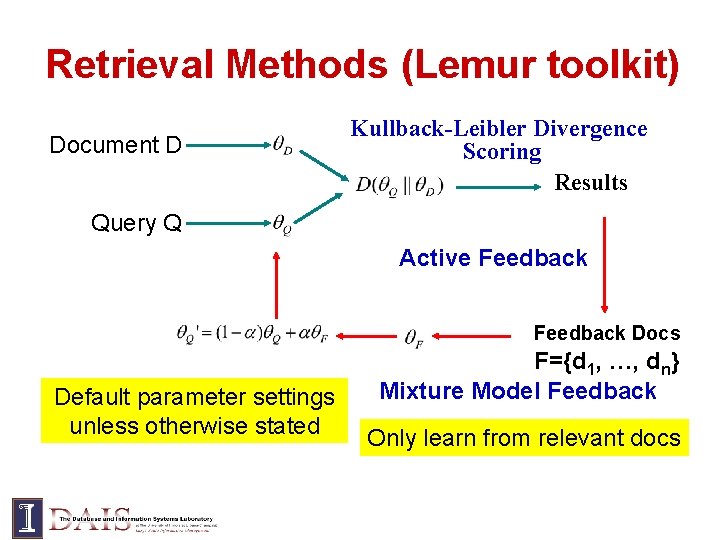

Retrieval Methods (Lemur toolkit)

- Scoring: query $Q$ and document $D$ are compared with Kullback-Leibler divergence scoring to produce the results.
- Feedback: the active feedback docs $F = \{d_1, \dots, d_n\}$ are used with mixture-model feedback, learning only from the relevant docs.
- Default parameter settings are used unless otherwise stated.
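The feedback step can be sketched with the usual two-component mixture model: each word in the relevant feedback docs is assumed to come either from a feedback topic model $\theta_F$ or from the collection model, EM estimates $\theta_F$, and the query model is then interpolated with $\theta_F$. This is a standalone Python sketch, not Lemur code; the noise weight, `alpha`, and the toy collection model are assumptions.

```python
from collections import Counter
from typing import Dict, List

def mixture_feedback_model(rel_docs: List[List[str]], p_coll: Dict[str, float],
                           noise: float = 0.5, iters: int = 50) -> Dict[str, float]:
    """EM for theta_F, where each feedback word comes from
    (1 - noise) * p(w | theta_F) + noise * p(w | C); requires 0 <= noise < 1."""
    counts = Counter(w for doc in rel_docs for w in doc)
    if not counts:
        return {}
    theta = {w: 1.0 / len(counts) for w in counts}
    for _ in range(iters):
        expected = {}
        for w, c in counts.items():
            topic = (1 - noise) * theta[w]
            background = noise * p_coll.get(w, 0.0)
            # E-step: expected count of w generated by the feedback topic model.
            expected[w] = c * topic / (topic + background)
        z = sum(expected.values())
        theta = {w: e / z for w, e in expected.items()}  # M-step
    return theta

def interpolate(query_model: Dict[str, float], feedback_model: Dict[str, float],
                alpha: float = 0.5) -> Dict[str, float]:
    """Updated query model: (1 - alpha) * theta_Q + alpha * theta_F."""
    vocab = set(query_model) | set(feedback_model)
    return {w: (1 - alpha) * query_model.get(w, 0.0) + alpha * feedback_model.get(w, 0.0)
            for w in vocab}

# Hypothetical collection model and relevant feedback docs.
p_coll = {"the": 0.5, "jaguar": 0.001, "car": 0.01, "zoo": 0.489}
rel_docs = [["jaguar", "car", "the", "the"], ["jaguar", "car", "the"]]
theta_f = mixture_feedback_model(rel_docs, p_coll)
new_q = interpolate({"jaguar": 1.0}, theta_f)
print(theta_f["jaguar"] > theta_f["the"])  # True
```

The background component absorbs common words such as “the”, so $\theta_F$ concentrates on the words that distinguish the relevant feedback docs.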

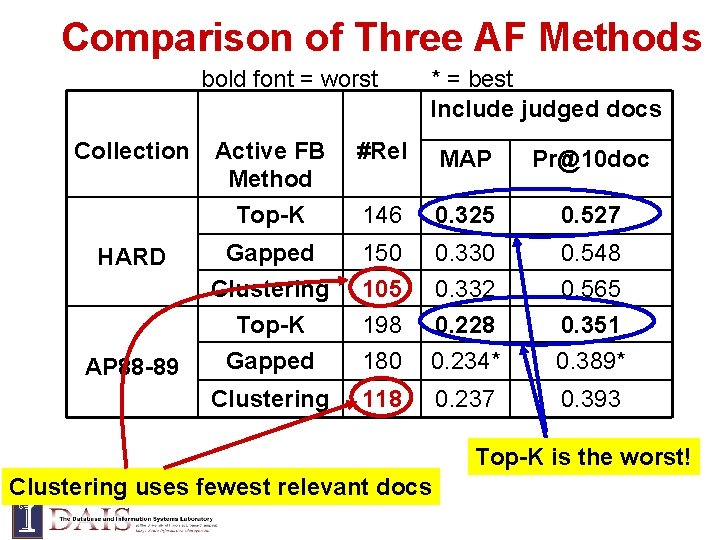

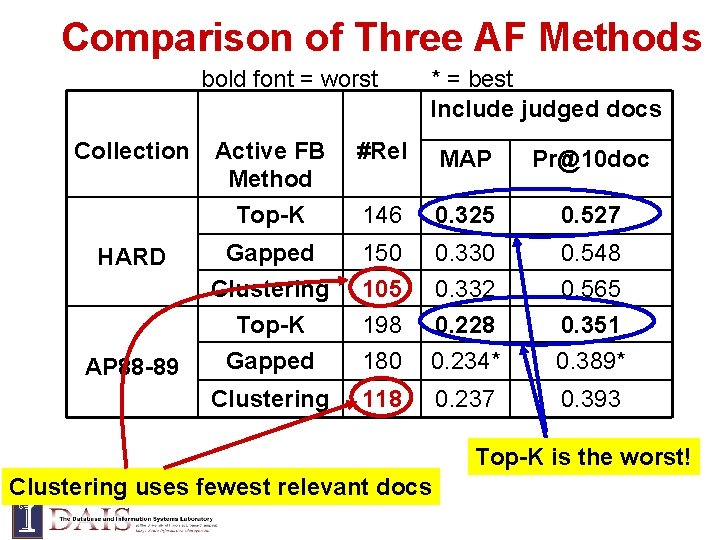

Comparison of Three AF Methods (evaluation includes the judged docs)

| Collection | Active FB method | #Rel docs | MAP | Pr@10 |
|---|---|---|---|---|
| HARD | Top-K | 146 | 0.325 | 0.527 |
| HARD | Gapped | 150 | 0.330 | 0.548 |
| HARD | Clustering | 105 | 0.332 | 0.565 |
| AP88-89 | Top-K | 198 | 0.228 | 0.351 |
| AP88-89 | Gapped | 180 | 0.234* | 0.389* |
| AP88-89 | Clustering | 118 | 0.237 | 0.393 |

Bold font = worst; * = best.

- Top-K is the worst!
- Clustering uses the fewest relevant docs.

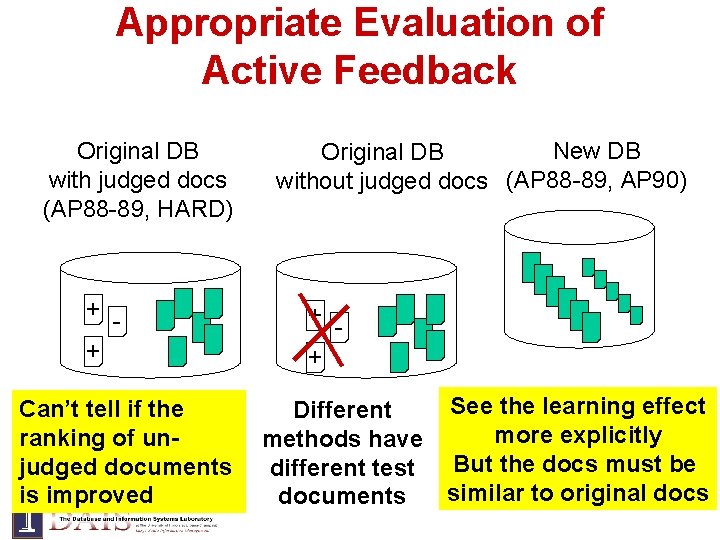

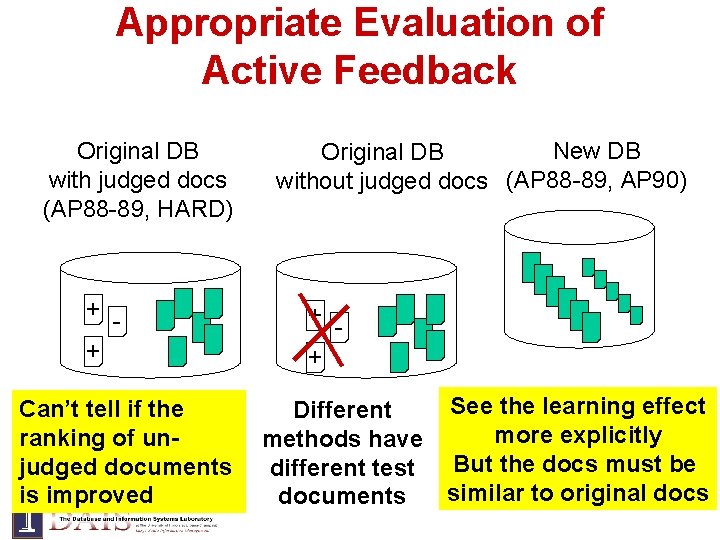

Appropriate Evaluation of Active Feedback

- Original DB with judged docs (AP88-89, HARD): can't tell whether the ranking of unjudged documents is improved.
- Original DB without judged docs: different methods have different test documents.
- New DB (feedback on AP88-89, testing on AP90): shows the learning effect more explicitly, but the docs must be similar to the original docs.

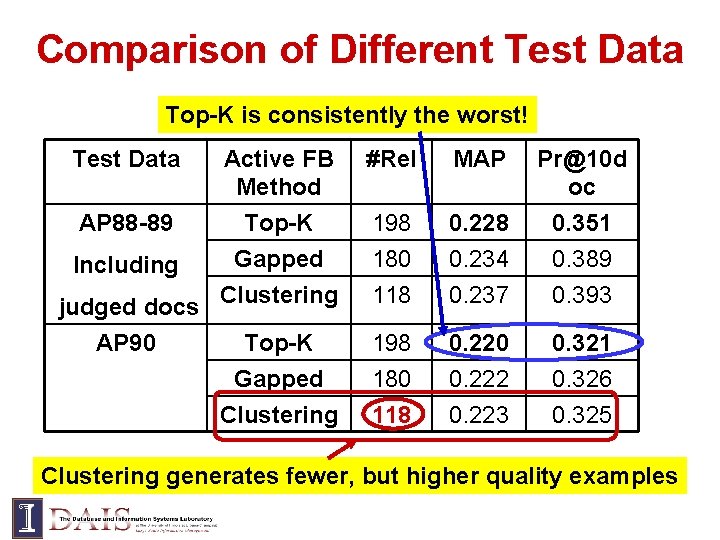

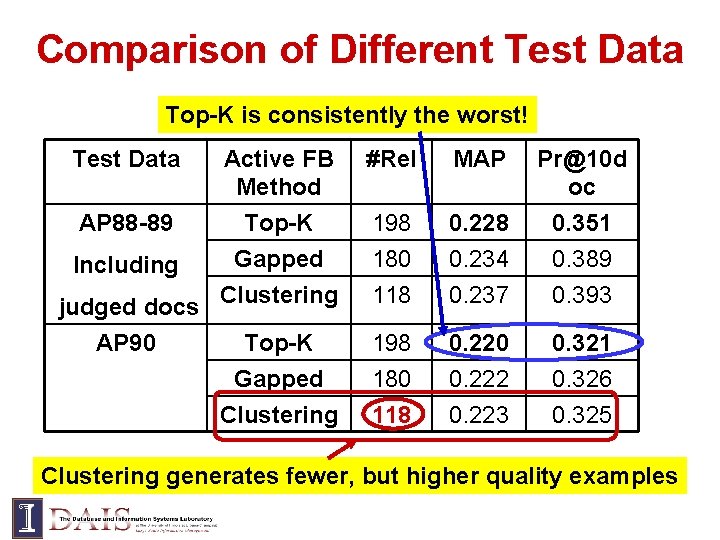

Comparison of Different Test Data

| Test data | Active FB method | #Rel docs | MAP | Pr@10 |
|---|---|---|---|---|
| AP88-89 (including judged docs) | Top-K | 198 | 0.228 | 0.351 |
| AP88-89 (including judged docs) | Gapped | 180 | 0.234 | 0.389 |
| AP88-89 (including judged docs) | Clustering | 118 | 0.237 | 0.393 |
| AP90 | Top-K | 198 | 0.222 | 0.321 |
| AP90 | Gapped | 180 | … | 0.326 |
| AP90 | Clustering | 118 | 0.223 | 0.325 |

- Top-K is consistently the worst!
- Clustering generates fewer, but higher-quality, examples.

Summary: Active Feedback

- Presenting the top-K is not the best strategy.
- Clustering can generate fewer, higher-quality feedback examples.

Conclusions

- There are many reasons for diversifying search results (redundancy, diverse information needs, active feedback).
- The risk minimization framework can model all these cases of diversification.
- Different scenarios may need different techniques and different evaluation measures.

![References](https://slidetodoc.com/presentation_image_h2/663a83f1c563c4cc491d068b30e5b62e/image-48.jpg)

References

- Risk Minimization
  - [Lafferty & Zhai 01] John Lafferty and ChengXiang Zhai. Document language models, query models, and risk minimization for information retrieval. In Proceedings of ACM SIGIR 2001, pages 111–119.
  - [Zhai & Lafferty 06] ChengXiang Zhai and John Lafferty. A risk minimization framework for information retrieval. Information Processing and Management, 42(1), January 2006, pages 31–55.
- Subtopic Retrieval
  - [Zhai et al. 03] ChengXiang Zhai, William Cohen, and John Lafferty. Beyond Independent Relevance: Methods and Evaluation Metrics for Subtopic Retrieval. In Proceedings of ACM SIGIR 2003.
  - [Zhai 02] ChengXiang Zhai. Language Modeling and Risk Minimization in Text Retrieval. PhD thesis, Carnegie Mellon University, 2002.
- Active Feedback
  - [Shen & Zhai 05] Xuehua Shen and ChengXiang Zhai. Active Feedback in Ad Hoc Information Retrieval. In Proceedings of the 28th Annual International ACM SIGIR Conference on Research and Development in Information Retrieval (SIGIR '05), pages 59–66, 2005.

Thank You!