Making fashion recommendations with human-in-the-loop machine learning: Four lessons

- Slides: 52

Making fashion recommendations with human-in-the-loop machine learning: Four lessons. O’Reilly AI | New York | September 2016. Jay Wang, jaywang@stitchfix.com; Jasmine Nettiksimmons, jnettiksimmons@stitchfix.com. Stitch Fix

Recommendations with humans in the loop: effortless, personalized fashion with humans in the loop
- Challenge 1: You have more than one feedback loop
- Challenge 2: Who gets credit? Human or Machine?
- Challenge 3: Human selection changes your objective function
- Challenge 4: Even humans benefit from feature selection

Humans in the loop at Stitch Fix

Stitch Fix

Styling at Stitch Fix Inventory Personal styling

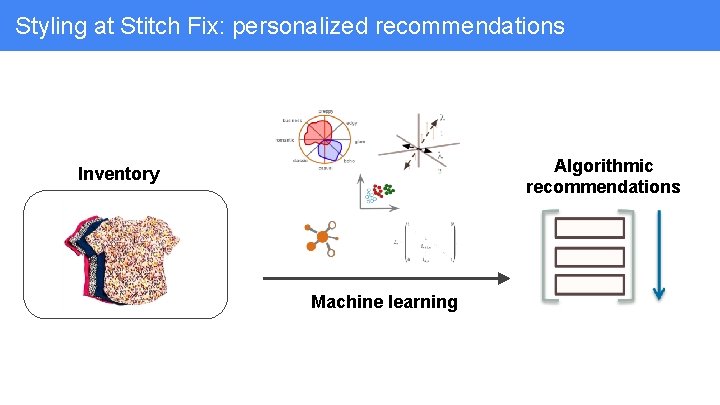

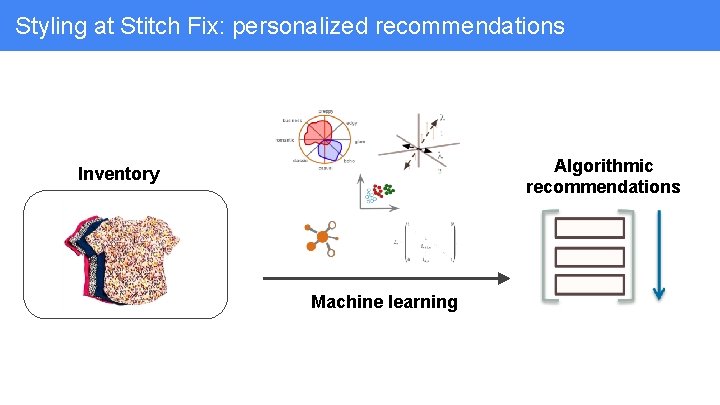

Styling at Stitch Fix: personalized recommendations Algorithmic recommendations Inventory Machine learning

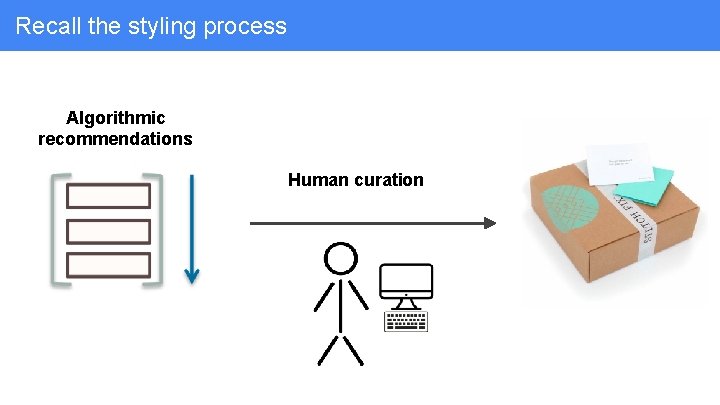

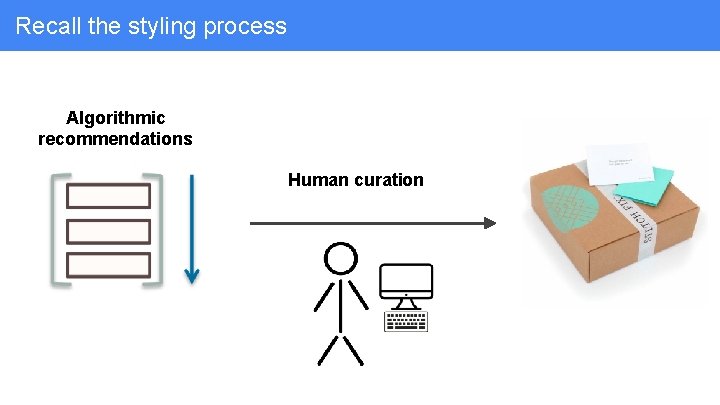

Styling at Stitch Fix: expert human curation Algorithmic recommendations Human curation

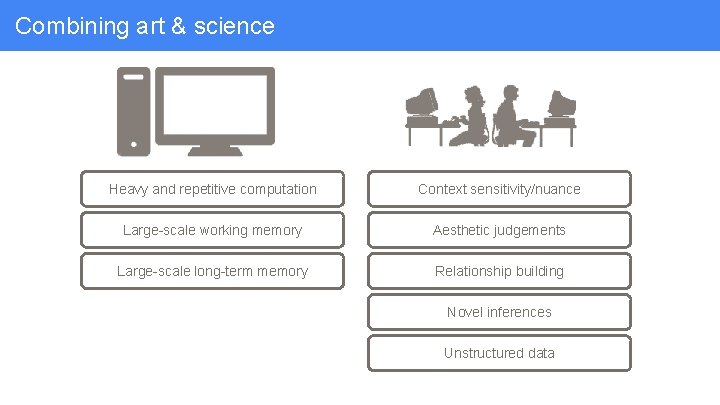

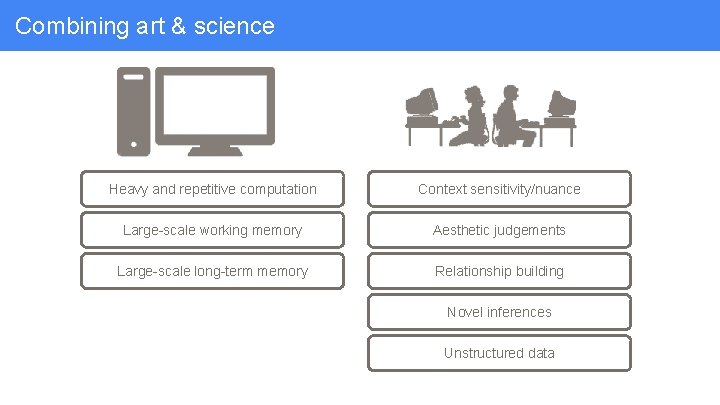

Combining art & science Stitch Fix = Augmented Intelligence

Combining art & science

| Machines | Humans |
| --- | --- |
| Heavy and repetitive computation | Context sensitivity/nuance |
| Large-scale working memory | Aesthetic judgements |
| Large-scale long-term memory | Relationship building |
| Novel inferences | Unstructured data |

Combining art & science: the machine
- Selects and ranks a huge volume of inventory
- Finds patterns in massive amounts of data
- Provides a baseline of consistency in our service at scale

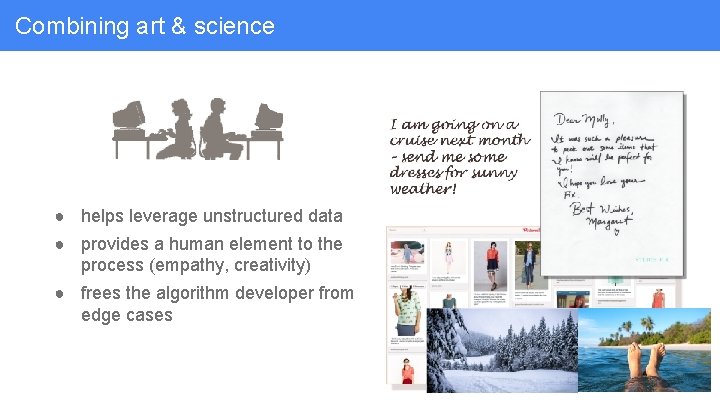

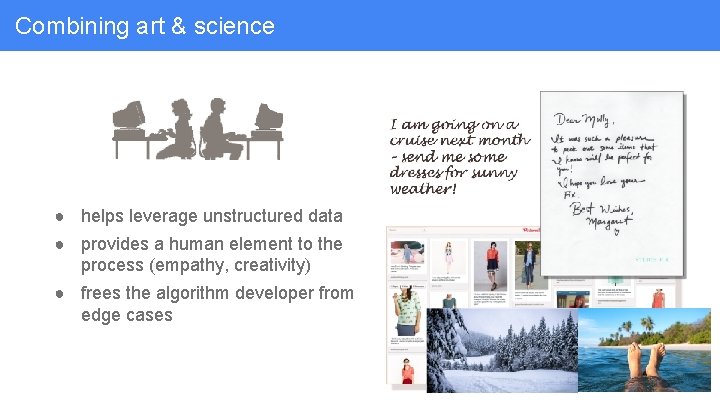

Combining art & science: the human
- Helps leverage unstructured data
- Provides a human element to the process (empathy, creativity)
- Frees the algorithm developer from edge cases

Challenge 1: You have more than one feedback loop

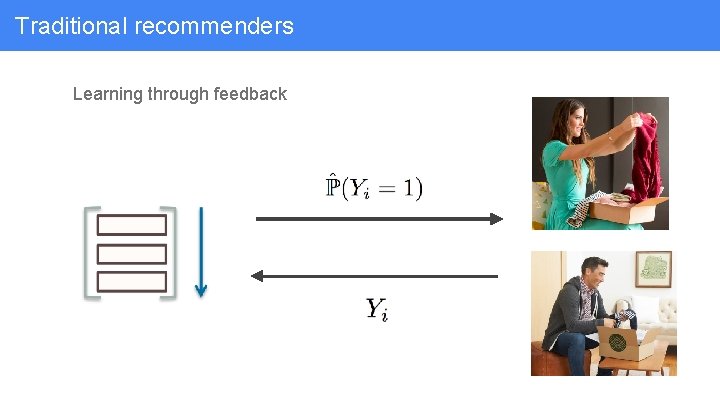

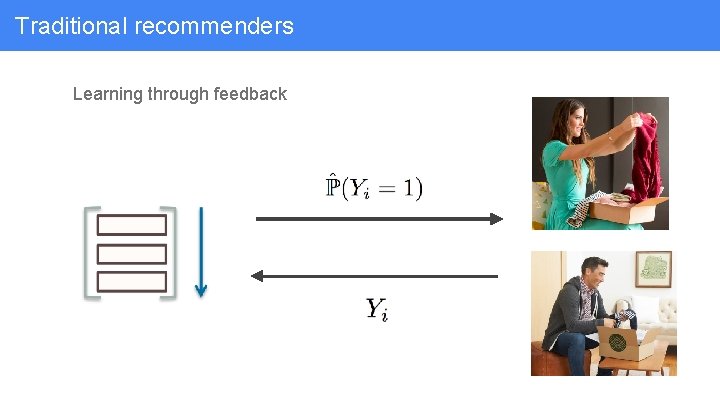

Traditional recommenders Learning through feedback

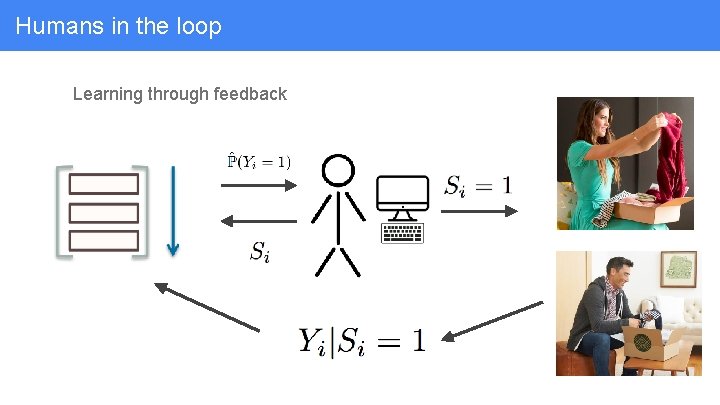

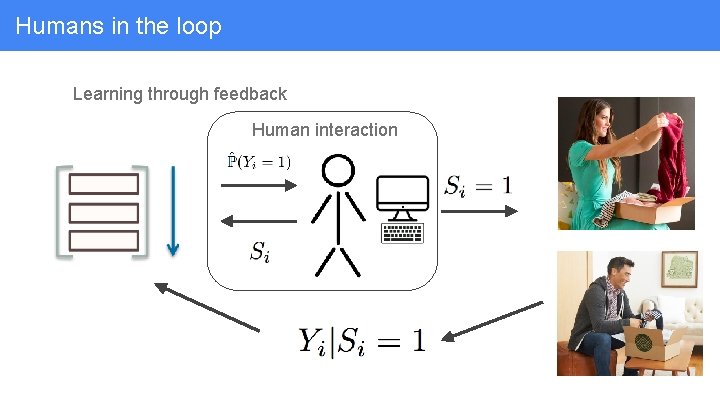

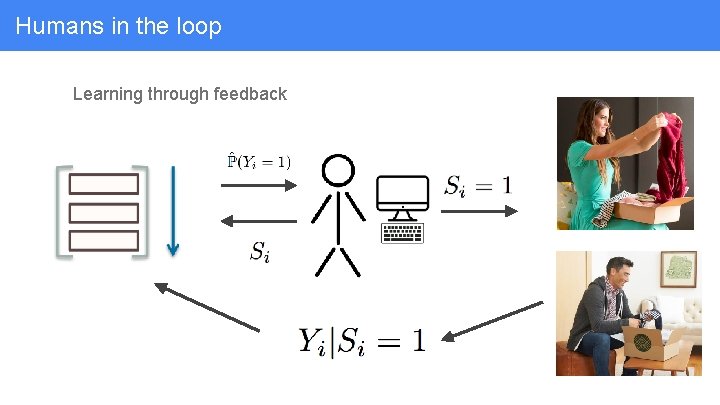

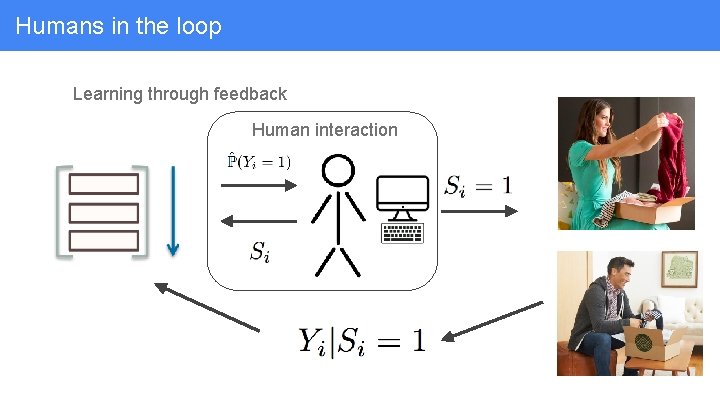

Humans in the loop Learning through feedback Human interaction

Challenge 2: Who gets credit? Machine or Human?

Who gets credit? When we delight (or disappoint) a client, who should get the credit (or the blame)?

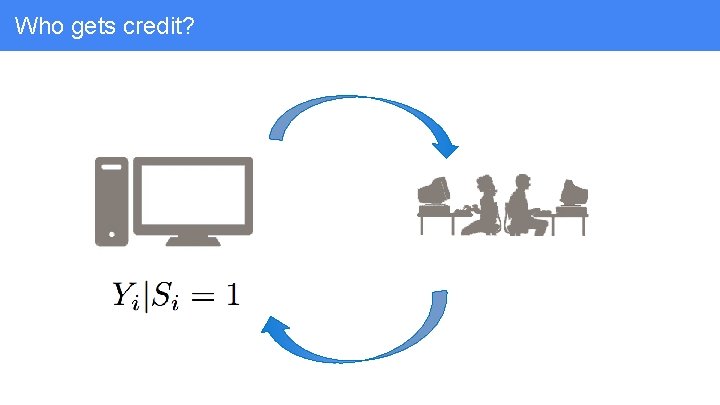

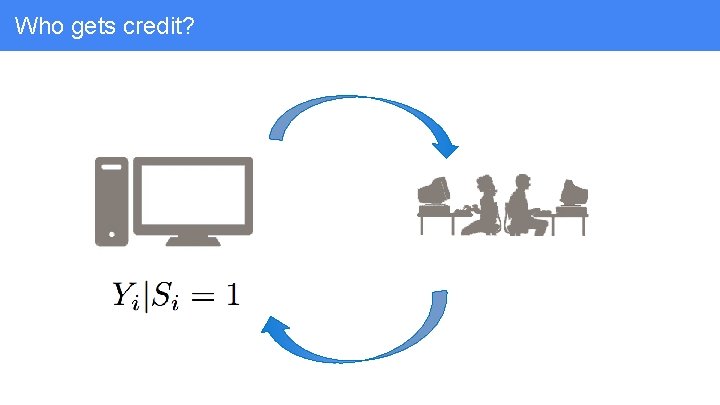

Who gets credit?
How do we identify stylists who consistently make great decisions?
- Noisy system
- Potential confounding

How do we identify good behaviors?
- Hypothesize, characterize and back-test
- Logging
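One way to cope with the "noisy system" problem is to shrink each stylist's raw success rate toward the overall rate, so that a stylist with only a handful of shipments is not ranked on luck alone. The sketch below is a minimal illustration under assumptions that are not in the slides: the `StylistRecord` fields, the Beta-Binomial shrinkage, and the `prior_strength` value are all hypothetical. It does not address confounding, which needs experimental or adjusted comparisons.

```python
from dataclasses import dataclass


@dataclass
class StylistRecord:
    # Hypothetical record layout, for illustration only.
    stylist_id: str
    items_sent: int
    items_successful: int


def shrunken_success_rate(record, prior_rate, prior_strength):
    """Posterior mean under a Beta prior centred on prior_rate.

    prior_strength acts like a count of pseudo-observations: the larger it is,
    the more small-sample stylists are pulled toward prior_rate.
    """
    alpha = prior_rate * prior_strength
    beta = (1.0 - prior_rate) * prior_strength
    return (record.items_successful + alpha) / (record.items_sent + alpha + beta)


# Made-up numbers: stylist A has a high raw rate on very little data.
records = [
    StylistRecord("A", items_sent=10, items_successful=9),
    StylistRecord("B", items_sent=2000, items_successful=1300),
]

overall_rate = sum(r.items_successful for r in records) / sum(r.items_sent for r in records)
scores = {
    r.stylist_id: shrunken_success_rate(r, overall_rate, prior_strength=100.0)
    for r in records
}
```

Stylist A's raw rate of 0.9 is pulled most of the way back toward the overall rate, while stylist B's estimate barely moves because it rests on far more data.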

Challenge 3: Human selection changes your objective function

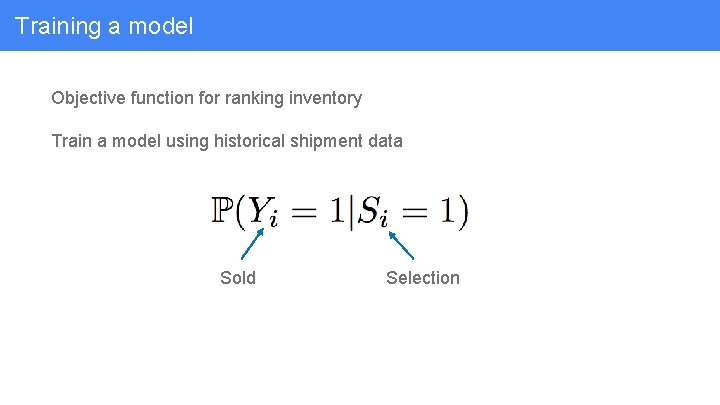

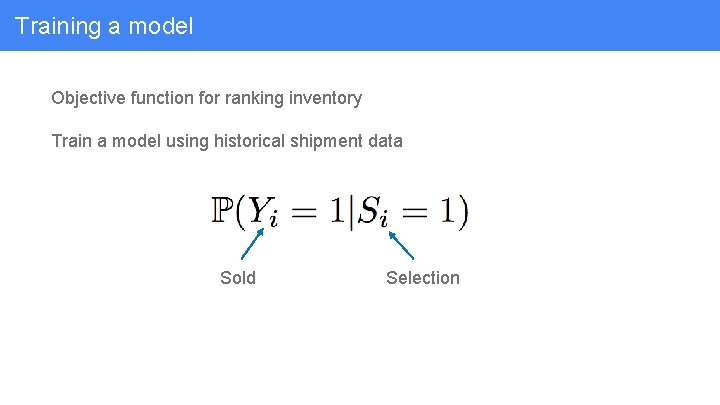

Role of algorithmic recommendations Filter and rank the inventory Objective function for ranking inventory

Training a model. Objective function for ranking inventory: train a model using historical shipment data. [Figure labels: Sold, Selection]

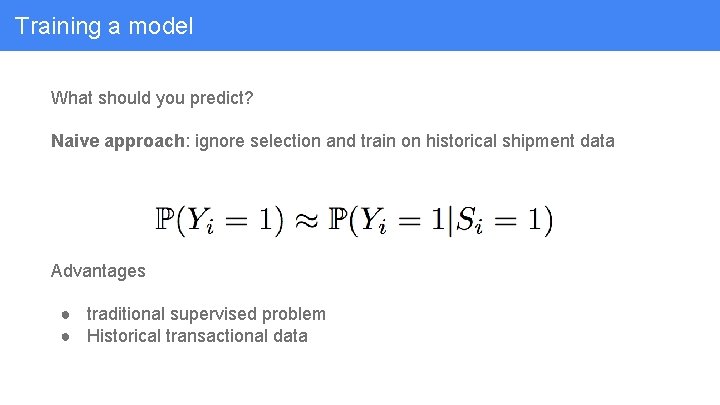

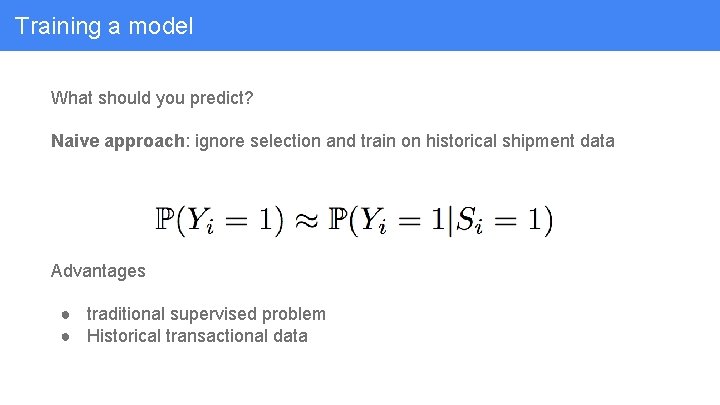

Training a model: what should you predict?
Naive approach: ignore selection and train on historical shipment data
Advantages
- Traditional supervised problem
- Historical transactional data

Training a model Problem 1: selection can censor your data

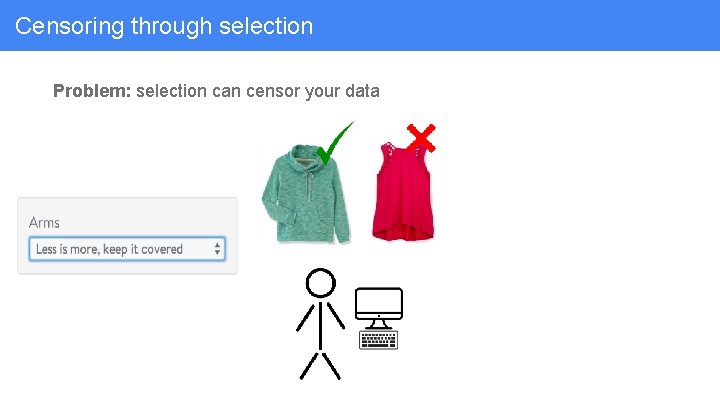

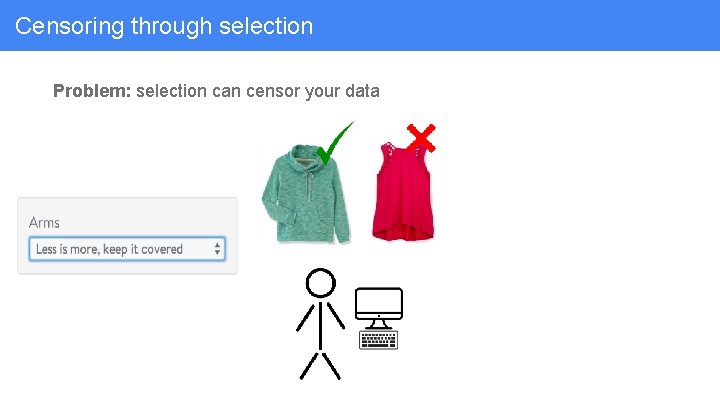

Censoring through selection Problem: selection can censor your data

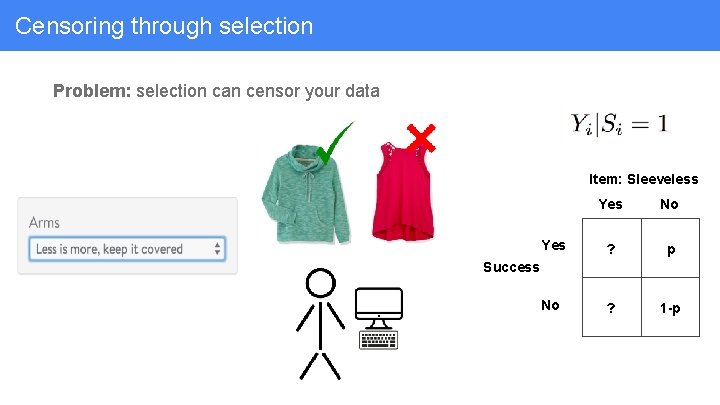

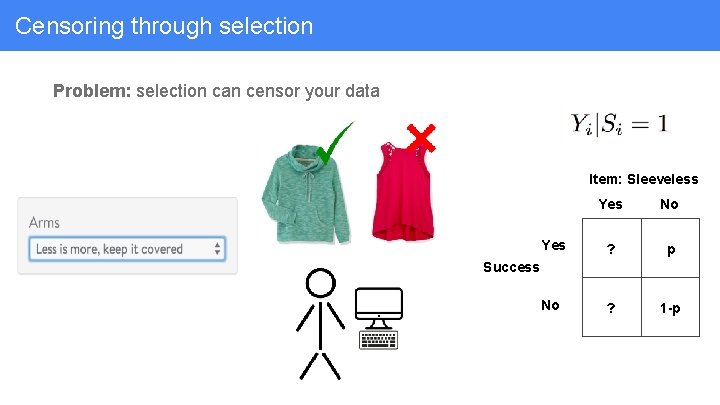

Censoring through selection
Problem: selection can censor your data
[Table, item: Sleeveless. Rows and columns are Yes/No; the Success cells read p and 1 - p where the outcome is observed and ? where it is censored.]
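The censoring effect can be reproduced with a small simulation. This is only a sketch: the 30% true appeal and the stylist selection probabilities are invented numbers, not Stitch Fix data. An outcome is recorded only when the item was selected, so the success rate in the shipment history can be far from the item's appeal in the whole client population.

```python
import random

random.seed(0)  # fixed seed so the sketch is repeatable

N_CLIENTS = 10_000
TRUE_APPEAL = 0.30           # assumed share of clients who would like the item
P_SELECT_IF_LIKES = 0.80     # assumed: stylists usually spot clients who like it
P_SELECT_IF_DISLIKES = 0.05  # assumed: stylists rarely send it otherwise

clients = []
for _ in range(N_CLIENTS):
    likes_item = random.random() < TRUE_APPEAL
    p_select = P_SELECT_IF_LIKES if likes_item else P_SELECT_IF_DISLIKES
    selected = random.random() < p_select
    # The outcome is only observed when the item was shipped; otherwise it is censored.
    outcome = likes_item if selected else None
    clients.append((likes_item, selected, outcome))

observed_outcomes = [outcome for _, selected, outcome in clients if selected]
naive_rate = sum(observed_outcomes) / len(observed_outcomes)
true_rate = sum(likes for likes, _, _ in clients) / N_CLIENTS

print(f"success rate in shipment history: {naive_rate:.2f}")
print(f"appeal across all clients:        {true_rate:.2f}")
```

A model trained only on the shipped rows sees the first number and never the second.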

Success given selection Problem 2: success probabilities can make for terrible recommendations

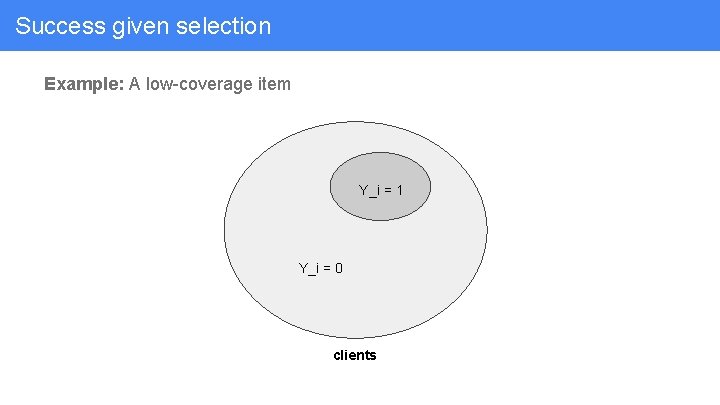

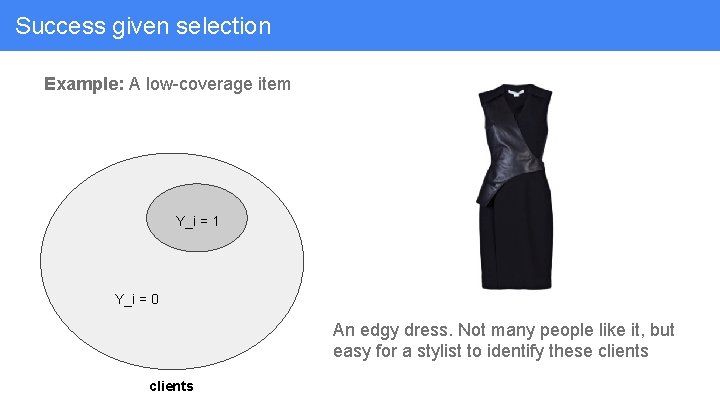

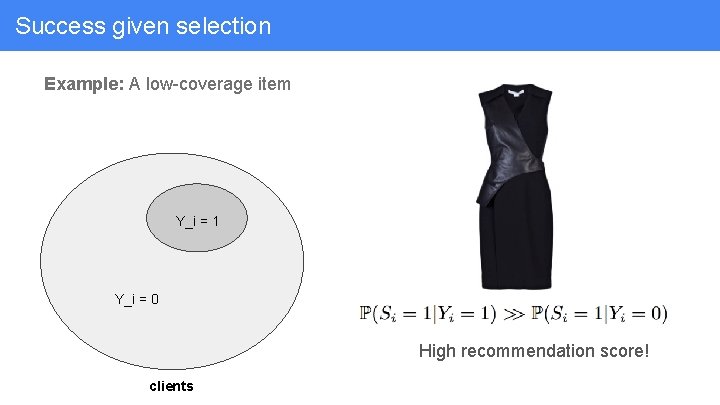

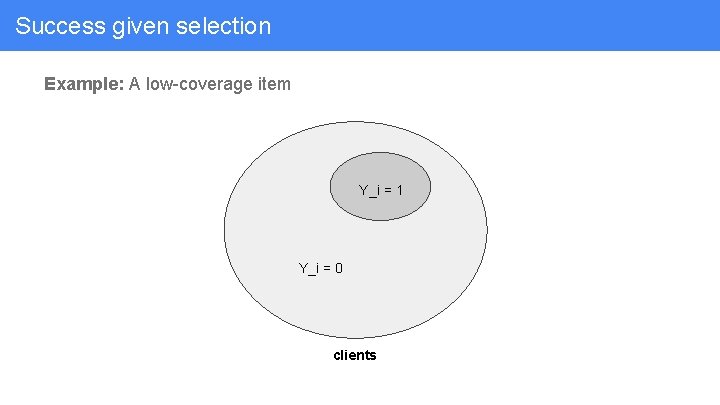

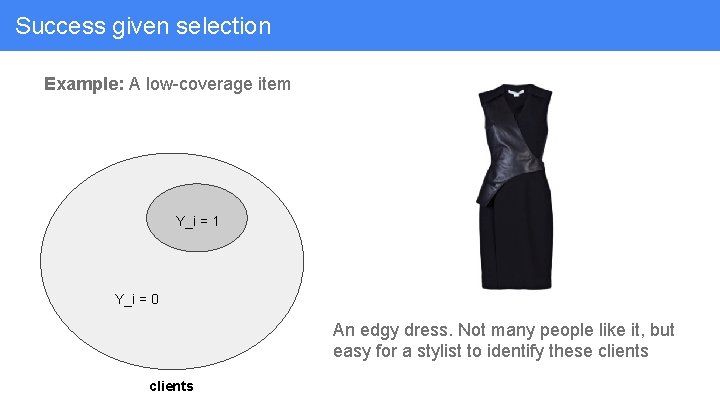

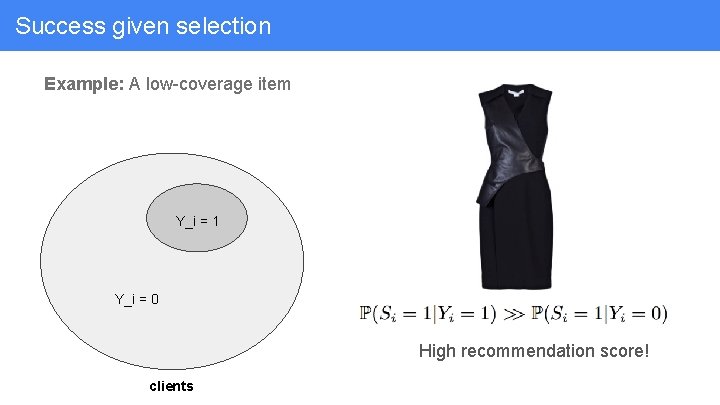

Success given selection. Example: a low-coverage item (outcomes Y_i = 1 or Y_i = 0 across clients). An edgy dress: not many clients like it, but it is easy for a stylist to identify the ones who do. Result: a high recommendation score!

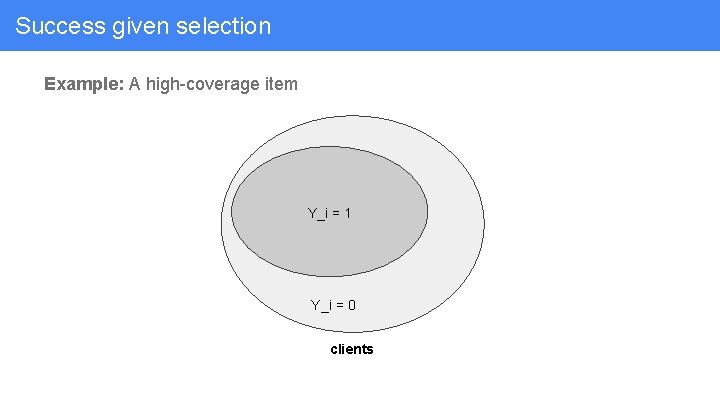

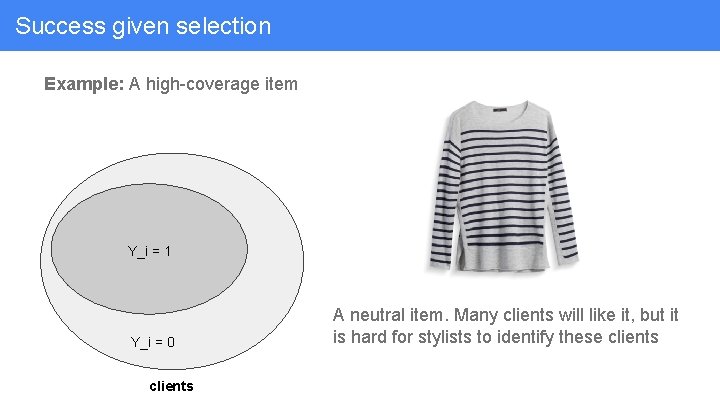

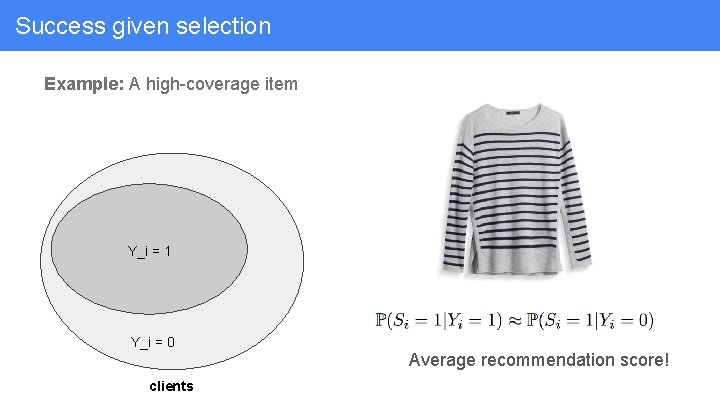

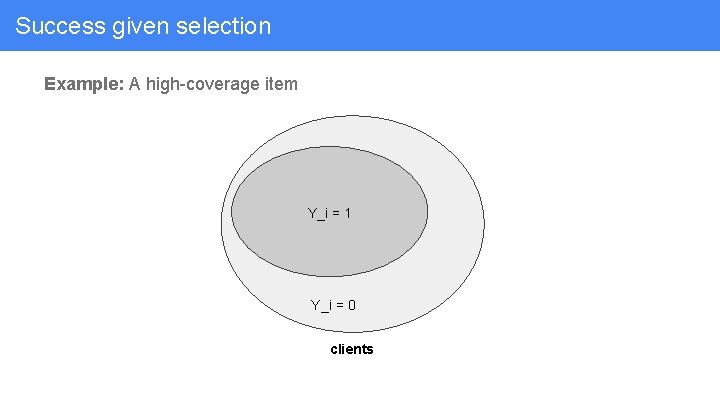

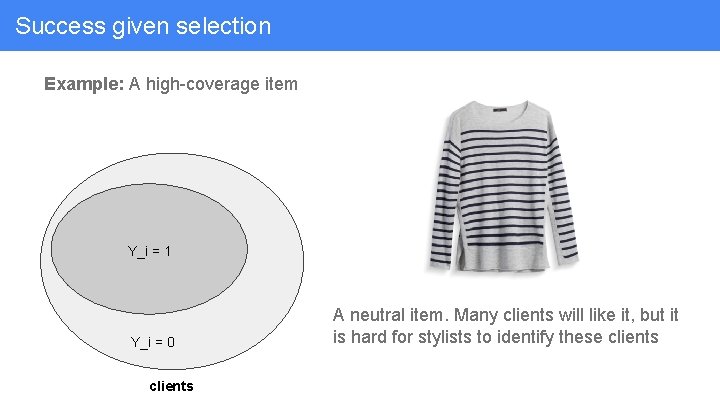

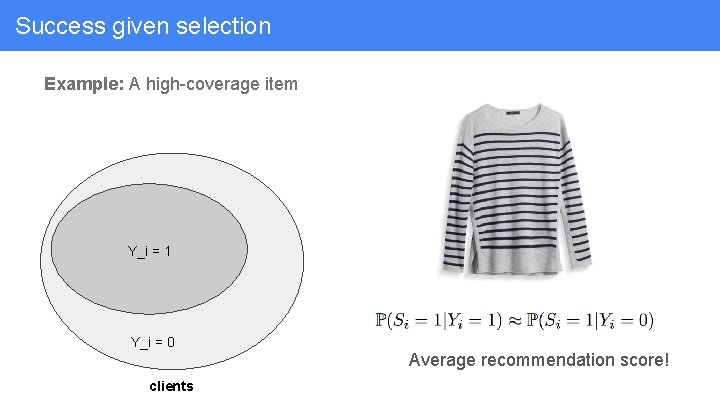

Success given selection. Example: a high-coverage item (outcomes Y_i = 1 or Y_i = 0 across clients). A neutral item: many clients will like it, but it is hard for stylists to identify which ones. Result: an average recommendation score!
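The two examples can be put side by side with Bayes' rule. The numbers below are assumptions chosen for illustration (appeal of 10% for the edgy dress and 60% for the neutral item, plus made-up stylist selection probabilities); only the qualitative contrast comes from the slides.

```python
def success_given_selection(appeal, p_select_if_like, p_select_if_dislike):
    """P(success | selected), assuming a client who likes the item always succeeds."""
    selected_and_like = appeal * p_select_if_like
    selected_and_dislike = (1.0 - appeal) * p_select_if_dislike
    return selected_and_like / (selected_and_like + selected_and_dislike)


# Low-coverage item: few clients like it, but stylists target them accurately.
edgy_score = success_given_selection(
    appeal=0.10, p_select_if_like=0.90, p_select_if_dislike=0.02
)

# High-coverage item: many clients like it, but stylists cannot tell who,
# so selection is unrelated to whether the client likes it.
neutral_score = success_given_selection(
    appeal=0.60, p_select_if_like=0.30, p_select_if_dislike=0.30
)

print(f"edgy dress:   {edgy_score:.2f}")
print(f"neutral item: {neutral_score:.2f}")
```

Under these assumptions the edgy dress scores about 0.83 and the neutral item 0.60, even though the neutral item would succeed with six times as many clients.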

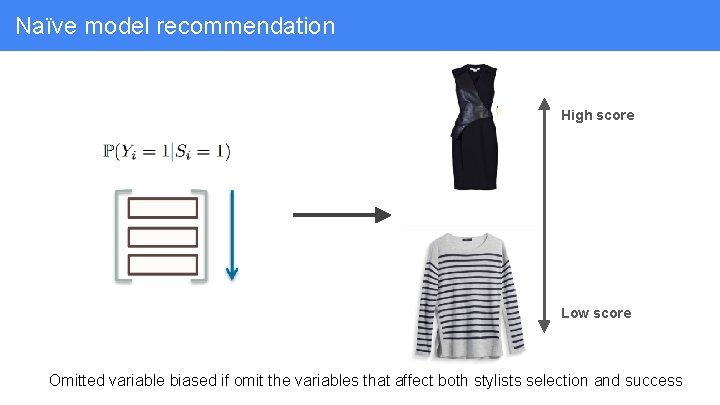

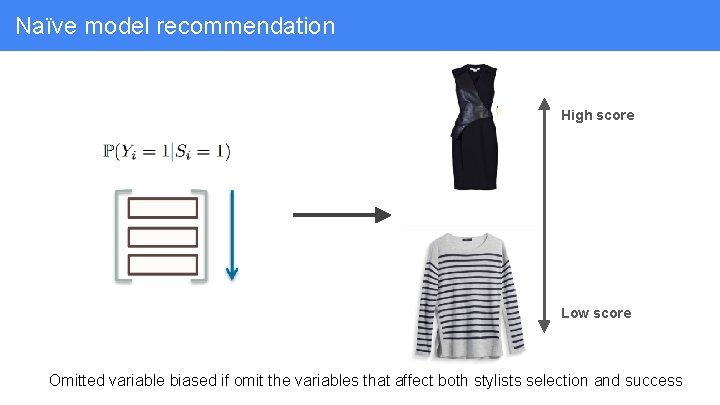

Naïve model recommendation. [Figure labels: High score, Low score] The naïve model suffers from omitted-variable bias if it omits the variables that affect both stylist selection and success.

The lesson: in both of these cases, effective recommendation requires understanding human selection.
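The gap between the naive score and true appeal can be written out explicitly. The notation is assumed for illustration and does not appear in the slides: $Y$ is success, $S$ is stylist selection, $x$ are the features the model sees, and $z$ are the variables the stylist sees but the model omits. The derivation also assumes that, given $x$ and $z$, success does not depend on whether the item was selected.

```latex
% Assumption (not in the slides): given x and z, Y is independent of S.
\begin{align}
P(Y=1 \mid S=1, x) &= \sum_{z} P(Y=1 \mid x, z)\, P(z \mid S=1, x), \\
P(Y=1 \mid x)      &= \sum_{z} P(Y=1 \mid x, z)\, P(z \mid x).
\end{align}
```

A model trained on shipment data estimates the first quantity. It equals the second only when $P(z \mid S=1, x) = P(z \mid x)$, that is, when selection carries no information about the omitted variables.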

How to understand human selection
Generally, selection data will be large-scale and complex to collect and work with
- Negative cases: logging the set of things that were available to be selected but were not selected
- Inventory dynamics
- Presentation effects (similar to search results and ad displays)
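Logging negative cases means recording the full candidate set a stylist saw, not just what was shipped. The sketch below shows one possible record shape; the `SelectionEvent` fields and the `to_training_rows` helper are hypothetical names, not a description of a production schema. Keeping the display rank is a simple way to retain information about presentation effects.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class SelectionEvent:
    # Hypothetical log record: everything shown to the stylist, in display order.
    client_id: str
    stylist_id: str
    shown_item_ids: List[str]
    selected_item_ids: List[str]


def to_training_rows(event: SelectionEvent) -> List[Dict[str, object]]:
    """Expand one event into one row per shown item, including the negatives."""
    selected = set(event.selected_item_ids)
    return [
        {
            "client_id": event.client_id,
            "stylist_id": event.stylist_id,
            "item_id": item_id,
            "rank_shown": rank,  # position in the list, for presentation effects
            "selected": int(item_id in selected),
        }
        for rank, item_id in enumerate(event.shown_item_ids, start=1)
    ]


event = SelectionEvent(
    client_id="c1",
    stylist_id="s7",
    shown_item_ids=["dress_12", "top_44", "jeans_03"],
    selected_item_ids=["top_44"],
)
rows = to_training_rows(event)
```

Each event becomes one row per shown item, so a selection model can be trained on both the chosen and the passed-over candidates.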

Challenge 4: Even humans benefit from feature selection

Recall the styling process Algorithmic recommendations Human curation

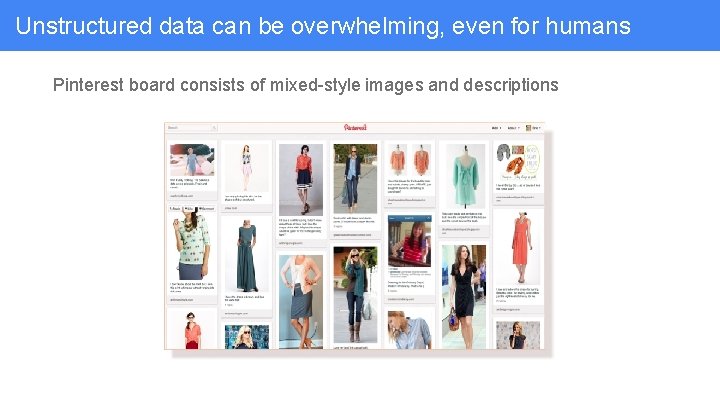

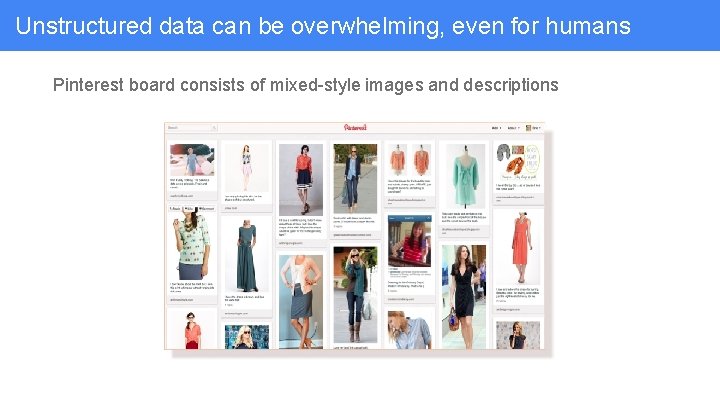

Unstructured data can be overwhelming, even for humans. A Pinterest board consists of mixed-style images and descriptions.

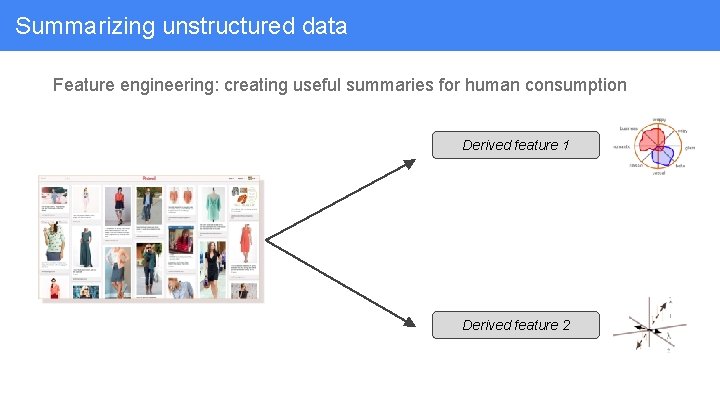

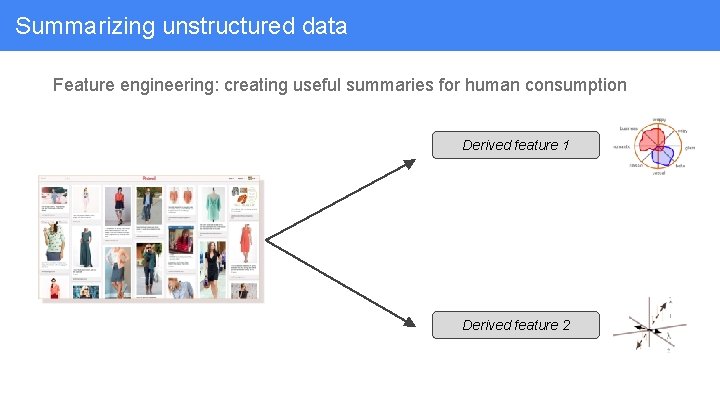

Summarizing unstructured data
Feature engineering: creating useful summaries for human consumption
- Derived feature 1: inventory images similar to the Pinterest board
- Derived feature 2
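A derived feature such as "inventory images similar to the Pinterest board" could be computed by comparing image embeddings. The sketch below assumes that each image has already been turned into a numeric vector by some upstream model; the three-dimensional vectors and item names are invented. Averaging the board into a single centroid is a simplification, and a mixed-style board may be better served by clustering first.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def mean_vector(vectors):
    """Element-wise mean of a non-empty list of vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def most_similar(board_vectors, inventory, k=2):
    """Return the ids of the k inventory items closest to the board centroid."""
    centroid = mean_vector(board_vectors)
    ranked = sorted(
        inventory.items(),
        key=lambda pair: cosine(centroid, pair[1]),
        reverse=True,
    )
    return [item_id for item_id, _ in ranked[:k]]


# Toy embeddings, for illustration only.
board = [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1]]
inventory = {
    "floral_dress": [1.0, 0.1, 0.0],
    "leather_jacket": [0.0, 0.2, 1.0],
    "striped_top": [0.6, 0.6, 0.1],
}
top_items = most_similar(board, inventory, k=2)
```

The stylist would then see a short list of matching inventory items rather than the raw board.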

Creating features for the human classifier
It is important to focus on
- Interpretability
- Evidence
- Orthogonality

For fashion data especially, this is hard!

Which features help stylists make the right choice? (A/B)
Ultimately, this is an empirical question. Run experiments with
- Production systems
- Simulations for human classifiers
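A production A/B experiment on a stylist-facing feature could be read with a standard two-proportion z-test. This is a sketch of one common analysis, not the method described in the talk, and the counts are invented. It also treats every shipment as independent, which ignores clustering by stylist.

```python
import math


def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Return (z, two-sided p-value) for the difference in success rates, B minus A."""
    rate_a = success_a / n_a
    rate_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1.0 - pooled) * (1.0 / n_a + 1.0 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided tail probability of a standard normal.
    p_value = math.erfc(abs(z) / math.sqrt(2.0))
    return z, p_value


# Made-up counts: arm A styles without the new feature, arm B with it.
z_score, p_value = two_proportion_z_test(
    success_a=520, n_a=1000, success_b=580, n_b=1000
)
print(f"z = {z_score:.2f}, p = {p_value:.4f}")
```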

Personal styling recommendations with humans in the loop
Recommendations with humans in the loop: it works really well, but it’s complicated
- Challenge 1: You have more than one feedback loop
- Challenge 2: Who gets credit? Human or Machine?
- Challenge 3: Human selection changes your objective function
- Challenge 4: Even humans benefit from feature selection

Thanks! Questions?