Lecture 23: SSD, Data Integrity and Protection, Distributed Systems

SSD • Basic flash operations: read, erase, and program • Bit, page, block, and bank • Wear-out • Flash translation layer (FTL): control logic that turns client reads and writes into flash operations • Direct mapping is bad. Why?

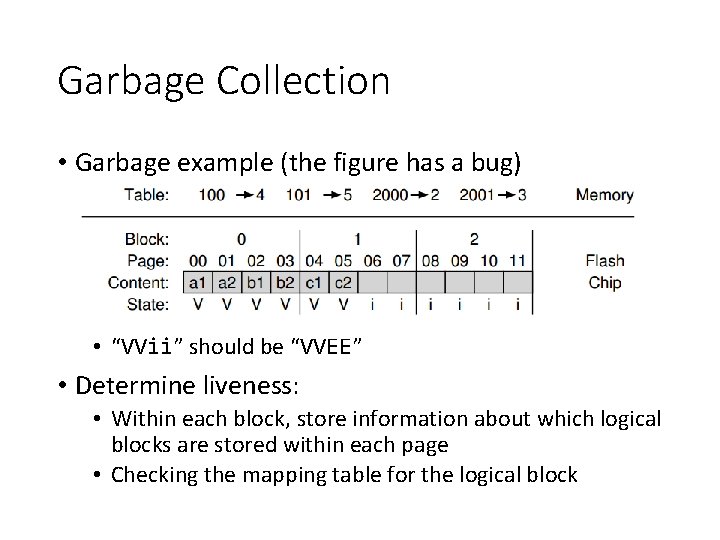
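To see why direct mapping is bad, here is a toy sketch. It assumes a device with 4 blocks of 4 pages each and uses hypothetical names (`direct_write`, `flash`, `erase_count`). Logical page N is pinned to physical page N, and flash cannot be overwritten in place, so every overwrite forces the FTL to read the whole block, erase it, and program every page back.

```c
#include <assert.h>
#include <string.h>

/* Toy flash geometry, assumed for illustration: 4 blocks x 4 pages. */
#define PAGES_PER_BLOCK 4
#define NUM_BLOCKS      4
#define NUM_PAGES       (PAGES_PER_BLOCK * NUM_BLOCKS)
#define ERASED          (-1)  /* stand-in for the all-ones erased state */

static int flash[NUM_PAGES];         /* page contents */
static int erase_count[NUM_BLOCKS];  /* erases per block (wear) */
static int page_programs;            /* total page programs issued */

/* Direct-mapped write: logical page lpn always lives at physical page lpn.
 * Flash cannot overwrite in place, so the whole block is read, erased,
 * and re-programmed even though only one page changed. */
void direct_write(int lpn, int value) {
    assert(lpn >= 0 && lpn < NUM_PAGES);
    int block = lpn / PAGES_PER_BLOCK;
    int first = block * PAGES_PER_BLOCK;
    int saved[PAGES_PER_BLOCK];

    memcpy(saved, &flash[first], sizeof saved);      /* 1. read the block    */
    saved[lpn - first] = value;                      /*    apply the update  */

    for (int i = 0; i < PAGES_PER_BLOCK; i++)        /* 2. erase the block   */
        flash[first + i] = ERASED;
    erase_count[block]++;

    memcpy(&flash[first], saved, sizeof saved);      /* 3. program all pages */
    page_programs += PAGES_PER_BLOCK;
}
```

In this sketch one logical page write costs one erase plus four page programs, a write amplification of 4. A hot page also keeps erasing the same block, so that block wears out long before the rest of the device.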

Garbage Collection • Garbage example (the figure has a bug) • “VVii” should be “VVEE” • Determine liveness: • Within each block, store information about which logical blocks are stored within each page • Check the mapping table to see whether that logical block still maps to this page

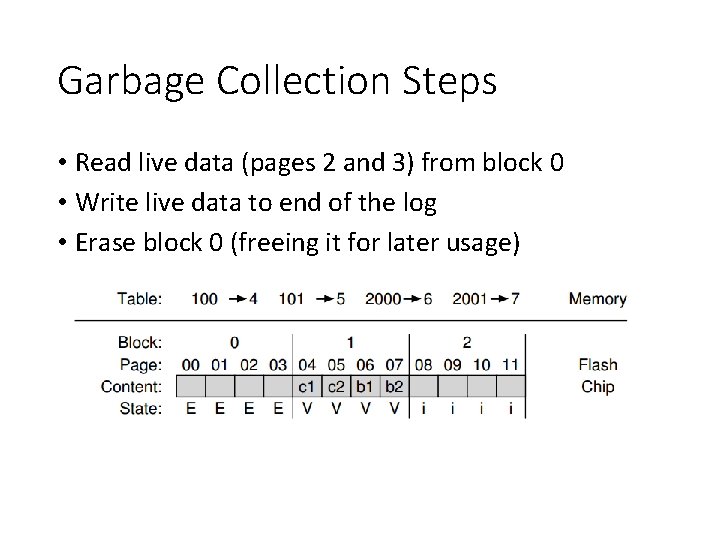

Garbage Collection Steps • Read live data (pages 2 and 3) from block 0 • Write live data to end of the log • Erase block 0 (freeing it for later usage)

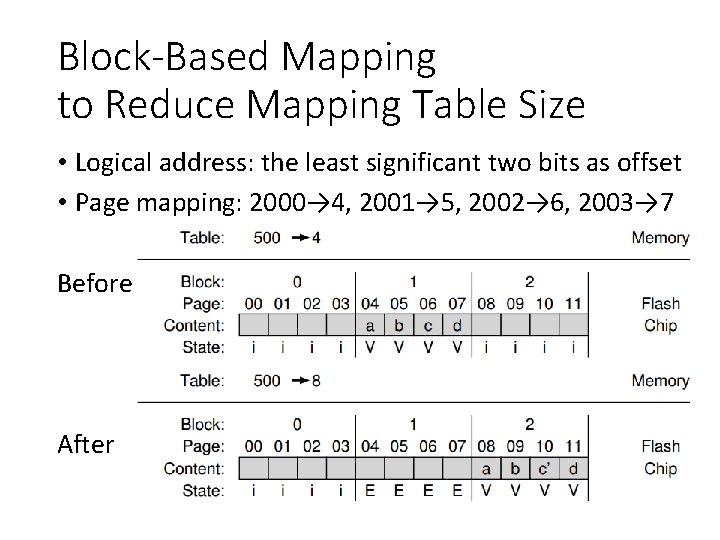
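The liveness rule and the three cleaning steps can be combined in a small log-structured FTL. This is a minimal sketch under stated assumptions: 3 blocks of 4 pages, an `owner[]` array standing in for the per-page information stored in each block, and hypothetical names (`ftl_write`, `is_live`, `gc_block`). A page counts as live only if the mapping table still points its logical page at it.

```c
#include <assert.h>
#include <stdbool.h>

#define PAGES_PER_BLOCK 4
#define NUM_BLOCKS      3
#define NUM_PAGES       (PAGES_PER_BLOCK * NUM_BLOCKS)
#define NUM_LOGICAL     8

static int  flash[NUM_PAGES];      /* page data */
static int  owner[NUM_PAGES];      /* per-page info: logical page stored here, -1 if none */
static bool programmed[NUM_PAGES]; /* false = erased and writable */
static int  map[NUM_LOGICAL];      /* mapping table: logical -> physical, -1 if unmapped */
static int  head;                  /* log head: next physical page to try */

void ftl_init(void) {
    for (int p = 0; p < NUM_PAGES; p++) { owner[p] = -1; programmed[p] = false; }
    for (int l = 0; l < NUM_LOGICAL; l++) map[l] = -1;
    head = 0;
}

/* Find the next erased page at or after the log head, never inside skip_block. */
static int alloc_page(int skip_block) {
    for (int i = 0; i < NUM_PAGES; i++) {
        int p = (head + i) % NUM_PAGES;
        if (!programmed[p] && p / PAGES_PER_BLOCK != skip_block) {
            head = (p + 1) % NUM_PAGES;
            return p;
        }
    }
    return -1;                     /* no free page: more GC needed */
}

static void write_at(int lpn, int value, int skip_block) {
    assert(lpn >= 0 && lpn < NUM_LOGICAL);
    int p = alloc_page(skip_block);
    assert(p >= 0);
    flash[p] = value; owner[p] = lpn; programmed[p] = true;
    map[lpn] = p;                  /* any older copy is now garbage */
}

void ftl_write(int lpn, int value) { write_at(lpn, value, -1); }

int ftl_read(int lpn) {
    assert(lpn >= 0 && lpn < NUM_LOGICAL && map[lpn] >= 0);
    return flash[map[lpn]];
}

/* Live iff the mapping table still points this page's logical page here. */
bool is_live(int p) { return owner[p] >= 0 && map[owner[p]] == p; }

/* Clean block b: copy live pages to the end of the log, then erase b.
 * Returns the number of pages moved. */
int gc_block(int b) {
    int moved = 0;
    for (int p = b * PAGES_PER_BLOCK; p < (b + 1) * PAGES_PER_BLOCK; p++)
        if (is_live(p)) { write_at(owner[p], flash[p], b); moved++; }
    for (int p = b * PAGES_PER_BLOCK; p < (b + 1) * PAGES_PER_BLOCK; p++) {
        programmed[p] = false;     /* erase: pages become writable again */
        owner[p] = -1;
    }
    return moved;
}
```

The usage mirrors the slide. Logical pages 0 to 3 fill block 0. Overwriting logical pages 0 and 1 leaves only physical pages 2 and 3 live. `gc_block(0)` then moves those two pages to the log head and erases block 0.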

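Block-Based Mapping to Reduce Mapping Table Size • Logical address: the two least significant bits are the offset within a block (4 pages per block) • Page mapping (four entries): 2000→4, 2001→5, 2002→6, 2003→7 • Block mapping (one entry): chunk 500 (2000 >> 2) → physical page 4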
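Translation under block-based mapping is a shift, a mask, and an add. This sketch assumes 4 pages per block, as on the slide, and a hypothetical `data_table` sized for 1024 chunks. Logical address 2000 falls in chunk 2000 >> 2 = 500 at offset 0, so a single entry (500 → 4) replaces the four page-level entries.

```c
#include <assert.h>

#define OFFSET_BITS 2                        /* 4 pages per block, as on the slide */
#define OFFSET_MASK ((1u << OFFSET_BITS) - 1)
#define NUM_CHUNKS  1024                     /* hypothetical table size */

/* Block-level mapping table: one entry per chunk of 4 logical pages,
 * holding the first physical page of the block that stores the chunk. */
static unsigned data_table[NUM_CHUNKS];

unsigned block_map_translate(unsigned lba) {
    unsigned chunk  = lba >> OFFSET_BITS;    /* high bits select the chunk    */
    unsigned offset = lba & OFFSET_MASK;     /* low two bits: page in block   */
    assert(chunk < NUM_CHUNKS);
    return data_table[chunk] + offset;
}
```

If `data_table[500] = 4`, then 2000 through 2003 translate to physical pages 4 through 7, matching the page mapping above with a quarter of the entries.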

Problem with Block-Based Mapping • Small writes (smaller than a physical block): the FTL must read a large amount of live data from the old block and copy it into a new one • What might be a good solution? • Page-based mapping is good at …, but bad at … • Block-based mapping is bad at …, but good at …

Hybrid Mapping • Log blocks: a few blocks that are mapped per page • This per-page mapping is called the log table • Data blocks: blocks that are mapped per block • This per-block mapping is called the data table • How to read and write? • How to switch between per-page mapping and per-block mapping?

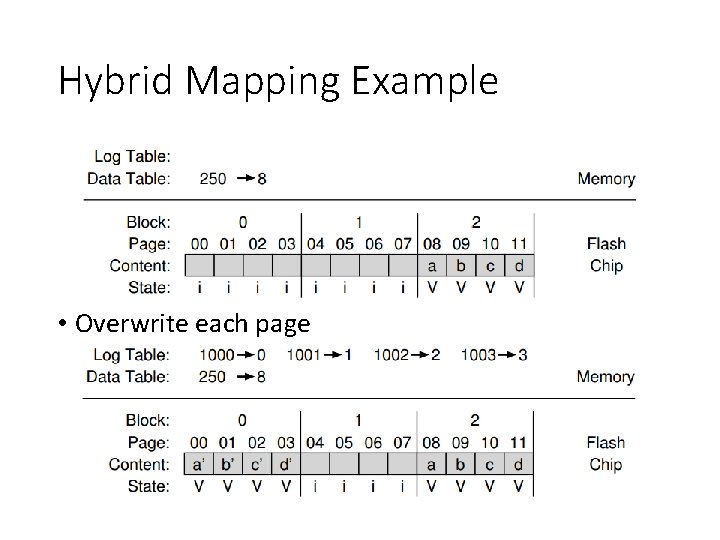
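One way to answer "how to read" is to check the small log table first, since it holds the newest copies, and only then fall back to the data table. This is a minimal sketch under stated assumptions: 4 pages per block, a linearly searched log table of 8 entries, and hypothetical names (`log_table_add`, `hybrid_translate`).

```c
#include <assert.h>

#define PAGES_PER_BLOCK 4
#define MAX_LOG_ENTRIES 8        /* assumed: a few log blocks' worth of pages */
#define NUM_CHUNKS      16       /* assumed data table size */

struct log_entry { unsigned lpn, ppn; };

static struct log_entry log_table[MAX_LOG_ENTRIES];  /* per-page mapping  */
static int log_len;
static unsigned data_table[NUM_CHUNKS];              /* per-block mapping */

/* Write path: record that logical page lpn now lives at log page ppn. */
void log_table_add(unsigned lpn, unsigned ppn) {
    for (int i = 0; i < log_len; i++)
        if (log_table[i].lpn == lpn) { log_table[i].ppn = ppn; return; }
    assert(log_len < MAX_LOG_ENTRIES);   /* full: the FTL would merge first */
    log_table[log_len++] = (struct log_entry){ lpn, ppn };
}

/* Read path: log table first (newest data), then the block-mapped table. */
unsigned hybrid_translate(unsigned lpn) {
    for (int i = 0; i < log_len; i++)
        if (log_table[i].lpn == lpn) return log_table[i].ppn;
    unsigned chunk = lpn / PAGES_PER_BLOCK;
    assert(chunk < NUM_CHUNKS);
    return data_table[chunk] + lpn % PAGES_PER_BLOCK;
}
```

Because the log table is kept small, the linear scan stays cheap. When the log blocks fill up, the FTL has to merge them back into data blocks, which is what the merge slides below cover.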

Hybrid Mapping Example • Overwrite each page

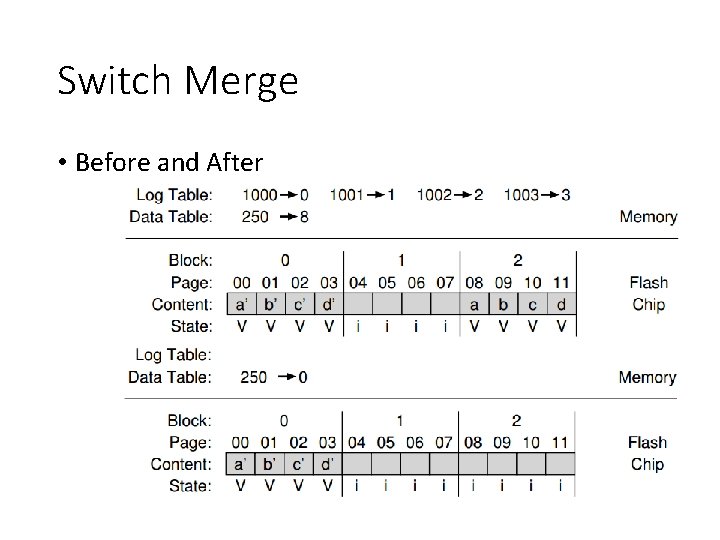

Switch Merge • Before and After

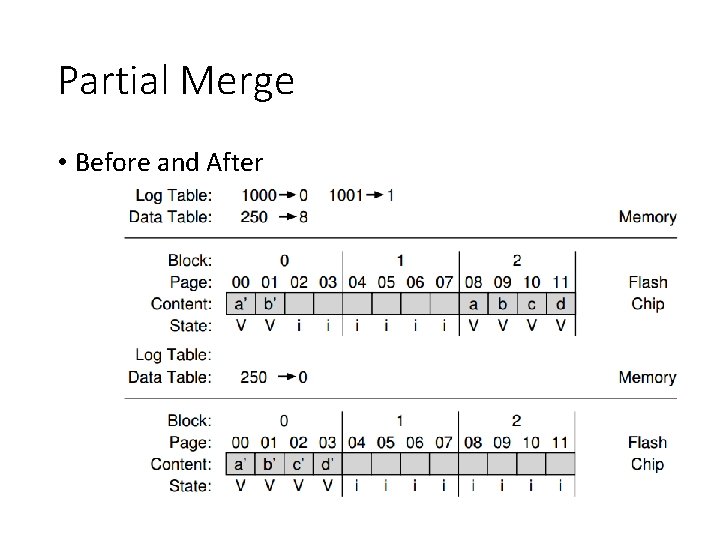

Partial Merge • Before and After

Full Merge • The FTL must pull together pages from many other blocks to perform cleaning • Imagine that pages 0, 4, 8, and 12 are written to log block A
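The three merge cases can be told apart by inspecting a log block's contents. This is a simplified sketch, not a description of any particular FTL. It assumes 4 pages per block, treats a page as "in place" only when logical page `chunk*4 + i` sits at page `i`, and uses the hypothetical name `classify_merge`.

```c
#include <assert.h>

#define PAGES_PER_BLOCK 4
#define EMPTY (-1)

enum merge_kind { SWITCH_MERGE, PARTIAL_MERGE, FULL_MERGE };

/* log_lpn[i] is the logical page stored in page i of the log block,
 * or EMPTY. Assumes at least one page of the log block is used. */
enum merge_kind classify_merge(const int log_lpn[PAGES_PER_BLOCK]) {
    int chunk = -1, used = 0;
    for (int i = 0; i < PAGES_PER_BLOCK; i++) {
        if (log_lpn[i] == EMPTY) continue;
        used++;
        if (chunk == -1) chunk = log_lpn[i] / PAGES_PER_BLOCK;
        /* Pages from different chunks, or a page at the wrong offset:
         * the FTL must gather pages from several blocks. */
        if (log_lpn[i] / PAGES_PER_BLOCK != chunk ||
            log_lpn[i] % PAGES_PER_BLOCK != i)
            return FULL_MERGE;
    }
    assert(used > 0);
    /* Whole chunk present in order: just repoint the data table entry. */
    if (used == PAGES_PER_BLOCK) return SWITCH_MERGE;
    /* Part of one chunk: copy the missing pages from the old data block. */
    return PARTIAL_MERGE;
}
```

With the slide's numbers, a log block holding 1000 to 1003 in order is a switch merge, one holding only 1000 and 1001 is a partial merge, and one holding 0, 4, 8, and 12 is a full merge.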

Wear Leveling • The FTL should try its best to spread erase/program work evenly across all the blocks of the device • The log-structuring approach does a good initial job • What if a block is filled with long-lived data that does not get overwritten? • Periodically read all the live data out of such blocks and rewrite it elsewhere

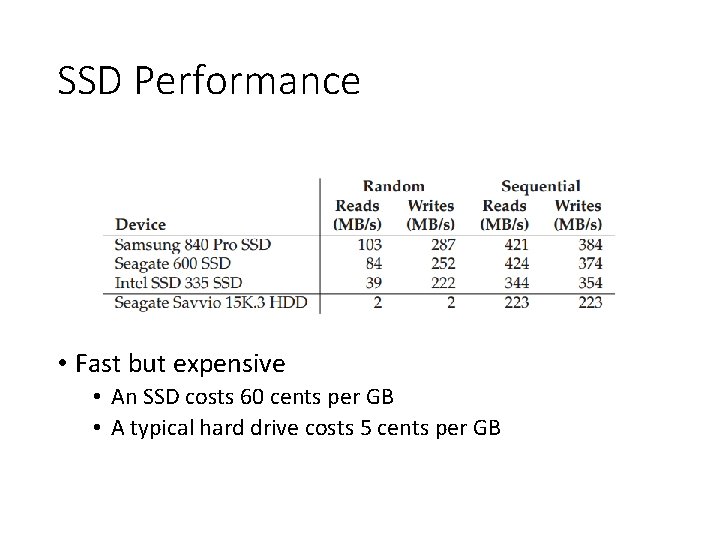
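Both ideas on the slide can be expressed as simple policies over per-block erase counts. This is a sketch under assumptions: 8 blocks, a hypothetical `WEAR_GAP_THRESHOLD` of 100 erases, and hypothetical function names. Real FTLs use more refined heuristics.

```c
#include <assert.h>

#define NUM_BLOCKS 8
#define WEAR_GAP_THRESHOLD 100   /* hypothetical tuning parameter */

static unsigned erase_count[NUM_BLOCKS];

/* Spread new writes: among free blocks, prefer the least-worn one.
 * Returns -1 if no block is free. */
int pick_free_block(const int is_free[NUM_BLOCKS]) {
    assert(is_free != 0);
    int best = -1;
    for (int b = 0; b < NUM_BLOCKS; b++)
        if (is_free[b] && (best < 0 || erase_count[b] < erase_count[best]))
            best = b;
    return best;
}

/* Long-lived data: if the least-worn block lags far behind the most-worn
 * one, it likely holds data that is never overwritten. Return it so the FTL
 * can read its live data out and rewrite it elsewhere; -1 if no action. */
int pick_cold_block_to_migrate(void) {
    int min_b = 0, max_b = 0;
    for (int b = 1; b < NUM_BLOCKS; b++) {
        if (erase_count[b] < erase_count[min_b]) min_b = b;
        if (erase_count[b] > erase_count[max_b]) max_b = b;
    }
    return erase_count[max_b] - erase_count[min_b] > WEAR_GAP_THRESHOLD ? min_b : -1;
}
```

Migrating cold data costs extra writes, which is why the threshold exists. Migrating too eagerly would add wear instead of evening it out.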

SSD Performance • Fast but expensive • An SSD costs 60 cents per GB • A typical hard drive costs 5 cents per GB

Data Integrity and Protection • Ensure that the data you put into your storage system is the same when the storage system returns it to you

Disk Failure Modes • Fail-stop, as assumed by RAID • Fail-partial: • Latent-sector errors (LSEs): a disk sector (or group of sectors) has been damaged in some way, e.g., head crash, cosmic rays • Silent faults, e.g., block corruption caused by buggy firmware or a faulty bus

Findings about LSEs • Costly drives with more than one LSE are as likely to develop additional errors as cheaper drives • For most drives, the annual error rate increases in year two • LSEs increase with disk size • Most disks with LSEs have fewer than 50 • Disks with LSEs are more likely to develop additional LSEs • There is a significant amount of spatial and temporal locality • Disk scrubbing is useful (most LSEs were found this way)

Findings about Corruption • Chance of corruption varies greatly across different drive models within the same drive class • Age effects differ across models • Workload and disk size have little impact on corruption • Most disks with corruption have only a few corruptions • Corruption is not independent within a disk or across disks in RAID • There is spatial locality, and some temporal locality • There is a weak correlation with LSEs

Latent Sector Errors • Causes: • head crash • cosmic rays flipping bits • How to detect: • A storage system tries to access a block, the drive's ECC detects the damage, and the disk returns an error • How to fix: • Use whatever redundancy mechanism the system has (e.g., a mirror or parity) to return the correct data

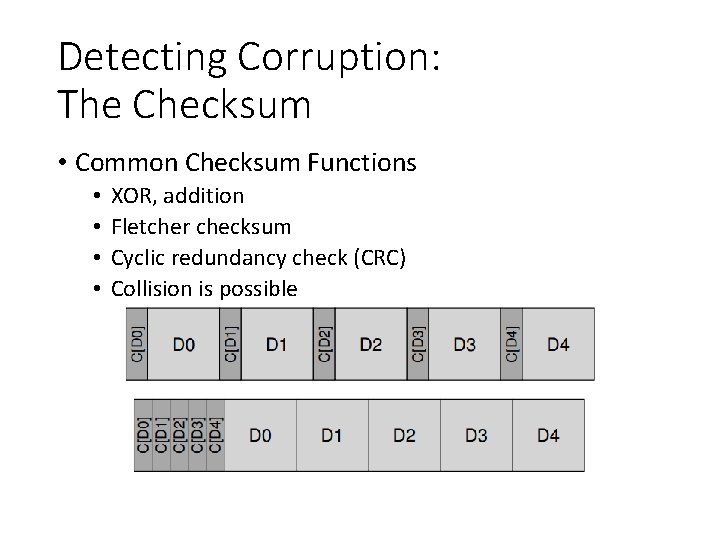
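The "how to fix" step can be sketched for a two-way mirror. This assumes toy in-memory disks where a `bad` flag simulates a sector whose ECC check fails, and that rewriting a sector clears the error (real drives typically remap the sector). All names are hypothetical.

```c
#include <assert.h>
#include <stdbool.h>
#include <string.h>

#define NUM_SECTORS 8
#define SECTOR_SIZE 16

/* Toy disk: bad[s] simulates a latent sector error; the drive reports
 * a read error instead of returning data. */
struct disk {
    char data[NUM_SECTORS][SECTOR_SIZE];
    bool bad[NUM_SECTORS];
};

static bool disk_read(const struct disk *d, int s, char *buf) {
    if (d->bad[s]) return false;              /* disk returns an error */
    memcpy(buf, d->data[s], SECTOR_SIZE);
    return true;
}

static void disk_write(struct disk *d, int s, const char *buf) {
    memcpy(d->data[s], buf, SECTOR_SIZE);
    d->bad[s] = false;                        /* assumed: rewrite/remap clears the LSE */
}

/* RAID-1 style read: on an LSE, read the mirror's copy, return it, and
 * rewrite the damaged sector so both copies exist again. */
bool mirrored_read(struct disk *primary, struct disk *mirror, int s, char *buf) {
    assert(s >= 0 && s < NUM_SECTORS);
    if (disk_read(primary, s, buf)) return true;
    if (!disk_read(mirror, s, buf)) return false;   /* both copies lost */
    disk_write(primary, s, buf);
    return true;
}
```

Repairing the primary immediately matters. Until it is rewritten, the block has no redundancy left, and a second LSE on the mirror would lose it.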

Detecting Corruption: The Checksum • Common checksum functions: • XOR • Addition • Fletcher checksum • Cyclic redundancy check (CRC) • Collisions are possible

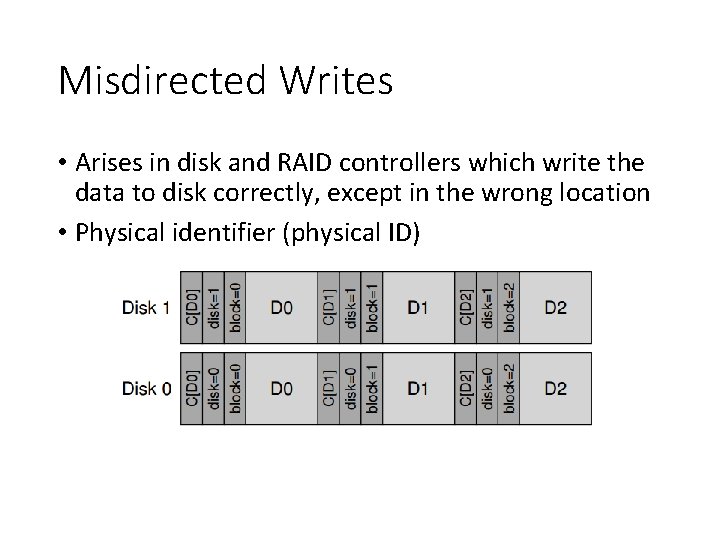
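Here are byte-oriented versions of the four checksum functions on the slide. The XOR and addition variants use 8-bit results. The Fletcher variant is Fletcher-16 (two running sums mod 255). The CRC is the common CRC-32 with the reflected polynomial 0xEDB88320, computed bit by bit for clarity rather than speed. The function names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* XOR: fold all bytes together. Two flips in the same bit position cancel. */
uint8_t xor_checksum(const uint8_t *buf, size_t len) {
    uint8_t c = 0;
    for (size_t i = 0; i < len; i++) c ^= buf[i];
    return c;
}

/* Addition: sum bytes, ignoring overflow. Reordered bytes give the same sum. */
uint8_t add_checksum(const uint8_t *buf, size_t len) {
    uint8_t c = 0;
    for (size_t i = 0; i < len; i++) c = (uint8_t)(c + buf[i]);
    return c;
}

/* Fletcher-16: s1 sums the bytes, s2 sums the running s1 values (both
 * mod 255), which makes the result sensitive to byte position. */
uint16_t fletcher16(const uint8_t *buf, size_t len) {
    uint16_t s1 = 0, s2 = 0;
    for (size_t i = 0; i < len; i++) {
        s1 = (s1 + buf[i]) % 255;
        s2 = (s2 + s1) % 255;
    }
    return (uint16_t)((s2 << 8) | s1);
}

/* CRC-32 (reflected polynomial 0xEDB88320), one bit at a time. */
uint32_t crc32_ieee(const uint8_t *buf, size_t len) {
    assert(buf != NULL || len == 0);
    uint32_t crc = 0xFFFFFFFFu;
    for (size_t i = 0; i < len; i++) {
        crc ^= buf[i];
        for (int k = 0; k < 8; k++)
            crc = (crc >> 1) ^ (0xEDB88320u & (0u - (crc & 1u)));
    }
    return crc ^ 0xFFFFFFFFu;
}
```

The bytes {1, 2} and {2, 1} illustrate a collision. They have the same XOR and addition checksums but different Fletcher sums. Every one of these functions maps many blocks to one value, so collisions remain possible; stronger functions only make them less likely for common error patterns.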

Misdirected Writes • Arise in disk and RAID controllers that write the data to disk correctly, except in the wrong location • Solution: store a physical identifier (physical ID), such as the disk and block number, with each block
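A checksum alone cannot catch a misdirected write, because the misplaced block is internally consistent. Storing the intended location alongside the data lets the reader notice the mismatch. This sketch assumes a hypothetical on-disk layout (`struct stored_block`) and a simple multiplicative checksum standing in for CRC or Fletcher.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>
#include <string.h>

#define BLOCK_DATA 32

/* Hypothetical layout: data plus where the block was meant to live. */
struct stored_block {
    uint32_t disk_id;
    uint32_t block_no;
    uint32_t checksum;
    uint8_t  data[BLOCK_DATA];
};

/* Stand-in checksum; a real system would use CRC or Fletcher. */
static uint32_t sum32(const uint8_t *p, size_t n) {
    uint32_t s = 0;
    for (size_t i = 0; i < n; i++) s = s * 31 + p[i];
    return s;
}

/* Before writing: record the checksum and the intended location. */
void seal_block(struct stored_block *b, uint32_t disk, uint32_t blk,
                const uint8_t *data) {
    assert(b != NULL && data != NULL);
    memcpy(b->data, data, BLOCK_DATA);
    b->disk_id = disk;
    b->block_no = blk;
    b->checksum = sum32(data, BLOCK_DATA);
}

/* On read, the caller knows which disk and block it asked for. */
bool verify_block(const struct stored_block *b, uint32_t disk, uint32_t blk) {
    if (sum32(b->data, BLOCK_DATA) != b->checksum) return false;  /* corrupted   */
    return b->disk_id == disk && b->block_no == blk;               /* misdirected? */
}
```

If the controller writes block 7's contents into block 8, a later read of block 8 finds a valid checksum but `block_no == 7`, so the error is detected.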

Lost Writes • Occur when the device informs the upper layer that a write has completed, but the data was never persisted • Do any of our strategies from above (e.g., basic checksums or physical ID) help to detect lost writes? • Solutions: • Perform a write verify, or read-after-write • Some systems add a checksum elsewhere in the system to detect lost writes • ZFS includes a checksum in each file system inode and indirect block for every block included within a file
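Write verify (read-after-write) can be sketched against a toy device that sometimes acknowledges a write without persisting it. The device model, the `drop_writes` counter, and the retry limit are hypothetical. On real hardware, the read-back must bypass any device cache, or it may return the unpersisted data.

```c
#include <assert.h>
#include <stdbool.h>
#include <string.h>

#define NUM_BLOCKS 4
#define BLOCK_SIZE 16

/* Toy device: while drop_writes > 0, writes are acknowledged but
 * silently discarded, simulating lost writes. */
static char device[NUM_BLOCKS][BLOCK_SIZE];
static int drop_writes;

static void dev_write(int b, const char *buf) {
    if (drop_writes > 0) { drop_writes--; return; }  /* "success", nothing stored */
    memcpy(device[b], buf, BLOCK_SIZE);
}

static void dev_read(int b, char *buf) { memcpy(buf, device[b], BLOCK_SIZE); }

/* Read each write back and compare; retry up to max_tries times.
 * Every write now costs an extra read, doubling the I/O. */
bool write_verify(int b, const char *buf, int max_tries) {
    assert(b >= 0 && b < NUM_BLOCKS);
    char check[BLOCK_SIZE];
    for (int t = 0; t < max_tries; t++) {
        dev_write(b, buf);
        dev_read(b, check);
        if (memcmp(check, buf, BLOCK_SIZE) == 0) return true;
    }
    return false;
}
```

The doubled I/O is the reason the slide also mentions the cheaper alternative. A checksum stored elsewhere, such as ZFS's checksum in the parent inode or indirect block, reveals a stale block at read time without any extra I/O at write time.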

Scrubbing • When do these checksums actually get checked? • Many systems utilize disk scrubbing: • Periodically read through every block of the system • Check whether checksums are still valid • Schedule scans on a nightly or weekly basis
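A scrub pass is a loop that rereads every block and compares a freshly computed checksum with the stored one. This sketch assumes an in-memory array of 8 blocks, Fletcher-16 as the checksum, and hypothetical names (`store_block`, `scrub`).

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define NUM_BLOCKS 8
#define BLOCK_SIZE 64

/* Toy storage: each block's data plus the checksum computed at write time. */
static uint8_t  blocks[NUM_BLOCKS][BLOCK_SIZE];
static uint16_t stored_sum[NUM_BLOCKS];

static uint16_t fletcher16(const uint8_t *p, size_t n) {
    uint16_t s1 = 0, s2 = 0;
    for (size_t i = 0; i < n; i++) { s1 = (s1 + p[i]) % 255; s2 = (s2 + s1) % 255; }
    return (uint16_t)((s2 << 8) | s1);
}

void store_block(int b, const uint8_t *data) {
    assert(b >= 0 && b < NUM_BLOCKS);
    memcpy(blocks[b], data, BLOCK_SIZE);
    stored_sum[b] = fletcher16(data, BLOCK_SIZE);
}

/* One scrub pass: read every block, recompute its checksum, and report
 * mismatches so they can be repaired from redundancy while a good copy
 * still exists. Returns the number of bad blocks found. */
int scrub(int bad_out[NUM_BLOCKS]) {
    int nbad = 0;
    for (int b = 0; b < NUM_BLOCKS; b++)
        if (fletcher16(blocks[b], BLOCK_SIZE) != stored_sum[b])
            bad_out[nbad++] = b;
    return nbad;
}
```

A real system would run this from a nightly or weekly job, as the slide says, and throttle it so scrubbing I/O does not compete with foreground work.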

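Overhead of Checksumming • Space: small (e.g., an 8-byte checksum per 4 KB block is about 0.2%) • Time: noticeable • CPU overhead to compute and verify checksums • I/O overhead, e.g., for background scrubbing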

Distributed Systems

OSTEP Definition • Def: more than 1 machine • Examples: • client/server: web server and web client • cluster: page rank computation • Other courses • Networking • Distributed Systems

Why Go Distributed? • More compute power • More storage capacity • Fault tolerance • Data sharing

New Challenges • System failure: need to worry about partial failure. • Communication failure: links unreliable • Performance • Security

Communication • All communication is inherently unreliable. • Need to worry about: • bit errors • packet loss • node/link failure

Overview • Raw messages • Reliable messages • OS abstractions • virtual memory • global file system • Programming-languages abstractions • remote procedure call

Raw Messages: UDP • API: • reads and writes over socket file descriptors • messages sent from/to ports to target a process on a machine • Provides minimal reliability features: • messages may be lost • messages may be reordered • messages may be duplicated • only protection: checksums

Raw Messages: UDP • Advantages • lightweight • some applications make better reliability decisions themselves (e.g., video conferencing programs) • Disadvantages • more difficult to write applications correctly
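The socket API from the slide can be shown end to end with the standard POSIX calls (`socket`, `bind`, `sendto`, `recvfrom`). To keep the example self-contained, one process sends a datagram to its own loopback port. The helper name `udp_loopback_echo` is hypothetical. Nothing in this code retransmits, orders, or deduplicates; that is the application's job when it uses UDP.

```c
#include <arpa/inet.h>
#include <assert.h>
#include <netinet/in.h>
#include <string.h>
#include <sys/socket.h>
#include <sys/types.h>
#include <unistd.h>

/* Send msg to our own UDP port on 127.0.0.1 and read it back into out.
 * Returns bytes received, or -1 on error. */
ssize_t udp_loopback_echo(const char *msg, char *out, size_t outlen) {
    assert(outlen > 0);
    int fd = socket(AF_INET, SOCK_DGRAM, 0);
    if (fd < 0) return -1;

    struct sockaddr_in addr;
    memset(&addr, 0, sizeof addr);
    addr.sin_family = AF_INET;
    addr.sin_addr.s_addr = htonl(INADDR_LOOPBACK);
    addr.sin_port = htons(0);                 /* let the OS pick a free port */
    socklen_t alen = sizeof addr;
    if (bind(fd, (struct sockaddr *)&addr, sizeof addr) < 0 ||
        getsockname(fd, (struct sockaddr *)&addr, &alen) < 0) {  /* learn the port */
        close(fd);
        return -1;
    }

    /* The destination port identifies the receiving process's socket. */
    if (sendto(fd, msg, strlen(msg), 0, (struct sockaddr *)&addr, sizeof addr) < 0) {
        close(fd);
        return -1;
    }
    ssize_t n = recvfrom(fd, out, outlen - 1, 0, NULL, NULL);
    if (n >= 0) out[n] = '\0';
    close(fd);
    return n;
}
```

In a real client/server pair, the two ends would live in different processes or machines, and the sender would need its own timeout and retry logic, because a lost datagram simply never arrives.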

Reliable Messages Strategy • Using software, build reliable, logical connections over unreliable connections. • Strategies: • acknowledgment

![ACK: sender sends message, receiver sends ack, sender receives ack](http://slidetodoc.com/presentation_image_h2/71bb82b0278f2e78e1e4addfcf2c79e0/image-33.jpg)

ACK • Sender: [send message] → Receiver: [recv message] [send ack] → Sender: [recv ack] • Sender knows the message was received.

![ACK: sender sends message but misses the ack](http://slidetodoc.com/presentation_image_h2/71bb82b0278f2e78e1e4addfcf2c79e0/image-34.jpg)

ACK • Sender: [send message] → Receiver • Sender misses the ACK... What to do?

Reliable Messages Strategy • Using software, build reliable, logical connections over unreliable connections. • Strategies: • acknowledgment • timeout

![ACK with timeout: sender starts a timer and resends when it goes off](http://slidetodoc.com/presentation_image_h2/71bb82b0278f2e78e1e4addfcf2c79e0/image-36.jpg)

ACK • Sender: [send message] [start timer] ... waiting for ack ... [timer goes off] [send message] → Receiver: [recv message] [send ack] → Sender: [recv ack]

Timeout: Issue 1 • How long to wait? • Too long: system feels unresponsive! • Too short: messages needlessly re-sent! • Messages may have been dropped due to overloaded server. Aggressive clients worsen this. • One strategy: be adaptive! • Adjust time based on how long acks usually take. • For each missing ack, wait longer between retries.
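The adaptive strategy can be sketched as a smoothed round-trip estimate plus exponential backoff. This is a simplified illustration, not TCP's actual retransmission-timer algorithm. The 1/8 smoothing weight, the "2 × average" timeout, the bounds, and all names are assumptions.

```c
#include <assert.h>

/* Hypothetical parameters for illustration. */
#define INITIAL_TIMEOUT_MS 1000
#define MIN_TIMEOUT_MS     10
#define MAX_TIMEOUT_MS     60000

struct retry_timer {
    int srtt_ms;      /* smoothed round-trip time; 0 = no sample yet */
    int timeout_ms;   /* how long to wait before resending */
};

void timer_init(struct retry_timer *t) {
    t->srtt_ms = 0;
    t->timeout_ms = INITIAL_TIMEOUT_MS;
}

/* An ack arrived after rtt_ms: blend it into the average (new sample
 * weighted 1/8) and base the timeout on how long acks usually take. */
void timer_on_ack(struct retry_timer *t, int rtt_ms) {
    assert(rtt_ms >= 0);
    t->srtt_ms = t->srtt_ms == 0 ? rtt_ms : (7 * t->srtt_ms + rtt_ms) / 8;
    int to = 2 * t->srtt_ms;
    t->timeout_ms = to < MIN_TIMEOUT_MS ? MIN_TIMEOUT_MS : to;
}

/* The timer fired with no ack: wait twice as long before the next retry,
 * so an overloaded server is not flooded by aggressive clients. */
void timer_on_timeout(struct retry_timer *t) {
    int to = 2 * t->timeout_ms;
    t->timeout_ms = to > MAX_TIMEOUT_MS ? MAX_TIMEOUT_MS : to;
}
```

With acks taking 80 ms and then 160 ms, the average becomes 80 and then 90, giving timeouts of 160 and 180 ms. Two missed acks in a row then push the wait to 720 ms.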

Timeout: Issue 2 • What does a lost ack really mean? • Maybe the receiver did not get the message • Maybe the receiver got the message, but the ack was not delivered successfully • ACK: message received exactly once • No ACK: message received at most once • Proposed solution • Sender could send an ACK of the ACK so the receiver knows whether to resend its ACK • Sound good?

Reliable Messages Strategy • Using software, build reliable, logical connections over unreliable connections. • Strategies: • acknowledgment • timeout • remember sent messages

![Receiver remembers messages: a resent message after a timeout is ignored but acked again](http://slidetodoc.com/presentation_image_h2/71bb82b0278f2e78e1e4addfcf2c79e0/image-40.jpg)

Receiver Remembers Messages • Sender: [send message] → Receiver: [recv message] [send ack] • Sender: [timeout] [send message] → Receiver: [ignore message] [send ack] → Sender: [recv ack]

Solutions • Solution 1: remember every message ever sent. • Solution 2: sequence numbers • give each message a sequence number • receiver knows all messages before N have been seen • receiver remembers messages sent after N

TCP • Most popular protocol based on seq nums. • Also buffers messages so they arrive in order • Timeouts are adaptive.

Overview • Raw messages • Reliable messages • OS abstractions • virtual memory • global file system • Programming-languages abstractions • remote procedure call

Virtual Memory • Inspiration: threads share memory • Idea: processes on different machines share mem • Strategy: • a bit like swapping we saw before • instead of swap to disk, swap to other machine • sometimes multiple copies may be in memory on different machines

Virtual Memory Problems • What if a machine crashes? • mapping disappears in other machines • how to handle? • Performance? • when to prefetch? • loads/stores expected to be fast • DSM (distributed shared memory) not used today.

Global File System • Advantages • file access is already expected to be slow • use common API • no need to modify applications (sorta true) • Disadvantages • doesn’t always make sense, e.g., for a video app

RPC: Remote Procedure Call • What could be easier than calling a function? • Strategy: create wrappers so calling a function on another machine feels just like calling a local function. • This abstraction is very common in industry.

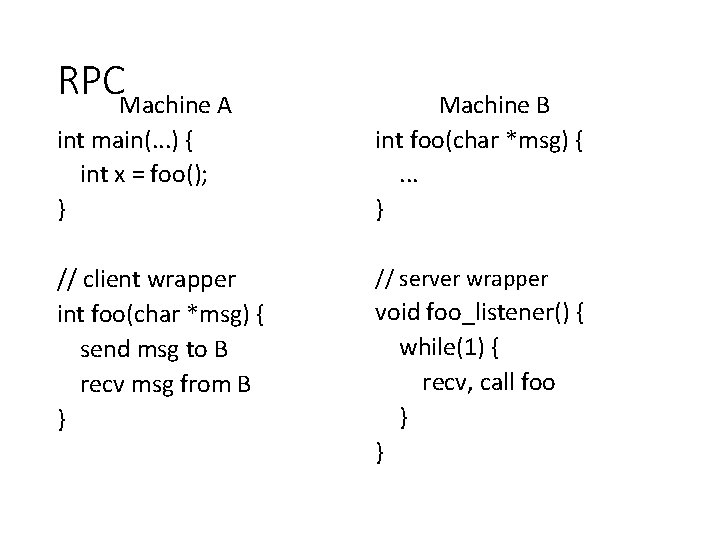

RPC

Machine A:

    int main(...) {
        int x = foo();
    }

    // client wrapper
    int foo(char *msg) {
        send msg to B
        recv msg from B
    }

Machine B:

    int foo(char *msg) { ... }

    // server wrapper
    void foo_listener() {
        while (1) {
            recv, call foo
        }
    }

RPC Tools • RPC packages help with this through two components. • (1) Stub generation • create wrappers automatically • (2) Runtime library • thread pool • socket listeners call functions on server

Client Stub Steps • Create a message buffer • Pack the needed information into the message buffer • Send the message to the destination RPC server • Wait for the reply • Unpack return code and other arguments • Return to the caller

Server Stub Steps • Unpack the message • Call into the actual function • Package the results • Send the reply
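The client and server stub steps can be traced through a hand-written pair of stubs for a remote `add`. The procedure number, message layout (three 32-bit fields in network byte order), and function names are all hypothetical. The network is replaced by a direct call (`transport_call`) so the sketch runs in one process; a real runtime would `sendto()` the request and block in `recvfrom()` for the reply.

```c
#include <arpa/inet.h>   /* htonl, ntohl */
#include <assert.h>
#include <stdint.h>
#include <string.h>

#define PROC_ADD 1       /* hypothetical procedure number */
#define MSG_MAX  64

/* The real function, living on the server. */
static int32_t add(int32_t a, int32_t b) { return a + b; }

/* Marshal/unmarshal a 32-bit value in network (big-endian) byte order. */
static size_t put_u32(uint8_t *buf, size_t off, uint32_t v) {
    v = htonl(v); memcpy(buf + off, &v, 4); return off + 4;
}
static uint32_t get_u32(const uint8_t *buf, size_t off) {
    uint32_t v; memcpy(&v, buf + off, 4); return ntohl(v);
}

/* Server stub: unpack, call the function, package results, reply. */
static size_t server_dispatch(const uint8_t *req, uint8_t *reply) {
    int32_t status = -1, result = 0;
    if (get_u32(req, 0) == PROC_ADD) {
        int32_t a = (int32_t)get_u32(req, 4), b = (int32_t)get_u32(req, 8);
        result = add(a, b);
        status = 0;
    }
    size_t n = put_u32(reply, 0, (uint32_t)status);
    return put_u32(reply, n, (uint32_t)result);
}

/* Stand-in for the network (assumption): hand the request to the server. */
static size_t transport_call(const uint8_t *req, size_t len, uint8_t *reply) {
    assert(len <= MSG_MAX);
    return server_dispatch(req, reply);
}

/* Client stub: to the caller, this looks like a local function. */
int rpc_add(int32_t a, int32_t b, int32_t *result) {
    uint8_t req[MSG_MAX], reply[MSG_MAX];          /* 1. create message buffer */
    size_t n = put_u32(req, 0, PROC_ADD);          /* 2. pack procedure + args */
    n = put_u32(req, n, (uint32_t)a);
    n = put_u32(req, n, (uint32_t)b);
    transport_call(req, n, reply);                 /* 3-4. send, wait for reply */
    int status = (int32_t)get_u32(reply, 0);       /* 5. unpack return code */
    *result = (int32_t)get_u32(reply, 4);
    return status;                                 /* 6. return to the caller */
}
```

The byte-order conversion in `put_u32` and `get_u32` is the uniform-endianness step that stub generators emit automatically. Writing it by hand for every procedure is exactly what tools like rpcgen spare the programmer.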

Wrapper Generation • Wrappers must do conversions: • client arguments → message → server arguments • server return → message → client return • Need uniform endianness (wrappers do this). • Conversion is called marshaling/unmarshaling, or serializing/deserializing.

Stub Generation • Many tools will automatically generate wrappers: • rpcgen • thrift • protobufs • Programmer fills in generated stubs.

Wrapper Generation: Pointers • Why are pointers problematic? • The addr passed from the client will not be valid on the server. • Solutions? • smart RPC package: follow pointers • distribute generic data structs with RPC package
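The "follow pointers" option means sending the pointed-to values, never the addresses, and rebuilding the structure with local pointers on the other side. This sketch assumes a singly linked list of 32-bit integers and a hypothetical wire format (a count followed by the values in network byte order). It does not handle cycles or shared nodes, which is one reason real RPC packages restrict the data structures they accept.

```c
#include <arpa/inet.h>
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

struct node { int32_t value; struct node *next; };

/* Client side: walk the list and write a count, then each value.
 * Returns bytes written, or 0 if cap is too small. */
size_t marshal_list(const struct node *head, uint8_t *buf, size_t cap) {
    uint32_t count = 0;
    for (const struct node *n = head; n; n = n->next) count++;
    if (4 + 4 * (size_t)count > cap) return 0;
    uint32_t v = htonl(count);
    memcpy(buf, &v, 4);
    size_t off = 4;
    for (const struct node *n = head; n; n = n->next, off += 4) {
        v = htonl((uint32_t)n->value);       /* values only, no addresses */
        memcpy(buf + off, &v, 4);
    }
    return off;
}

/* Server side: rebuild the list with pointers valid in this address space. */
struct node *unmarshal_list(const uint8_t *buf) {
    uint32_t count;
    memcpy(&count, buf, 4);
    count = ntohl(count);
    struct node *head = NULL, **tail = &head;
    for (uint32_t i = 0; i < count; i++) {
        struct node *n = malloc(sizeof *n);
        assert(n != NULL);
        uint32_t v;
        memcpy(&v, buf + 4 + 4 * (size_t)i, 4);
        n->value = (int32_t)ntohl(v);
        n->next = NULL;
        *tail = n;
        tail = &n->next;
    }
    return head;
}
```

The rebuilt list is a copy. If the server modifies it, the client's list is unchanged unless the stubs also marshal the result back.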

Runtime Library • Naming: how to locate a remote service • How to serve calls? • usually with a thread pool • What underlying protocol to use? • usually UDP • Some RPC packages support asynchronous RPC

RPC over UDP • Strategy: use function return as implicit ACK. • Piggybacking technique. • What if function takes a long time? • then send a separate ACK

Conclusion • Many communication abstractions are possible: • Raw messages (UDP) • Reliable messages (TCP) • Virtual memory (OS) • Global file system (OS) • Function calls (RPC)

Next • NFS • AFS
