LEARNING MIXTURES OF LINEAR REGRESSIONS IN SUBEXPONENTIAL TIME VIA FOURIER MOMENTS

Sitan Chen (MIT), Jerry Li (MSR), Zhao Song (Princeton, IAS)

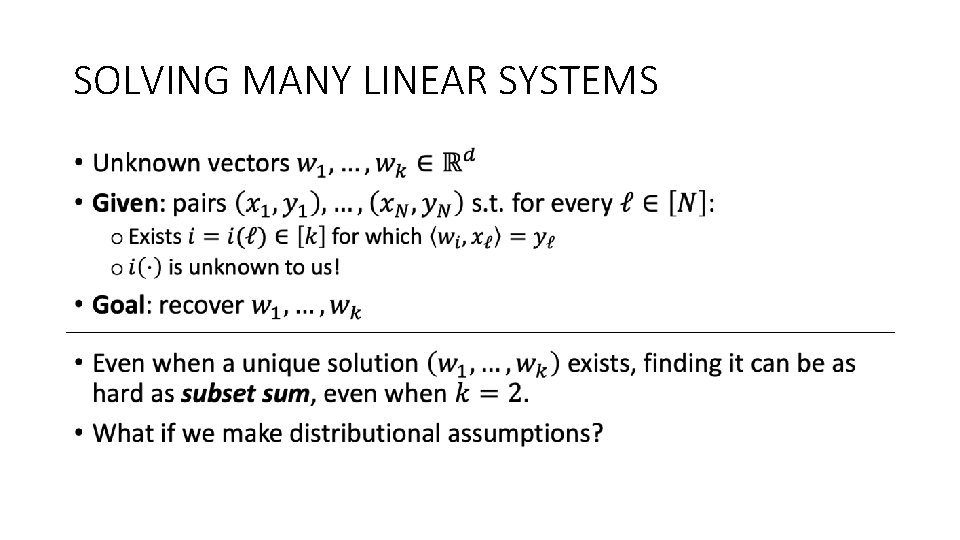

SOLVING MANY LINEAR SYSTEMS

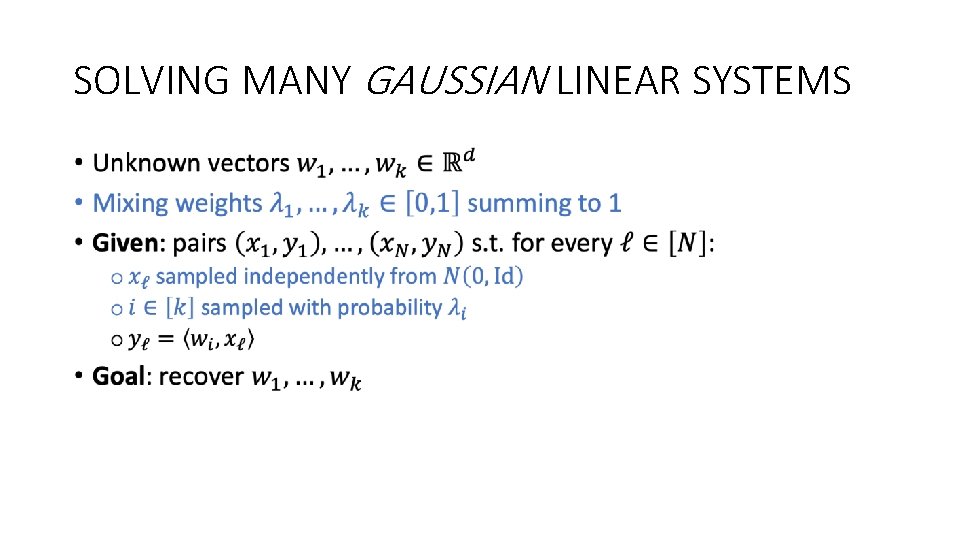

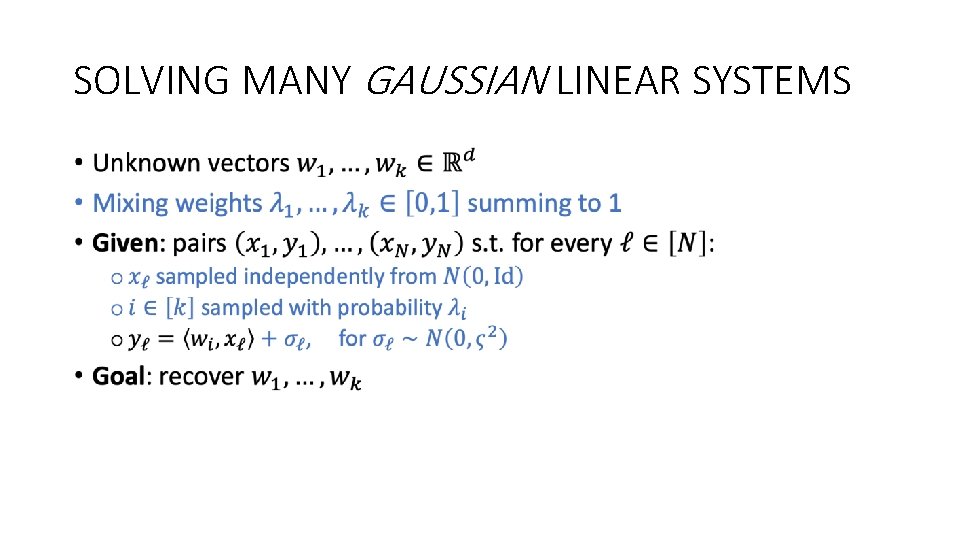

SOLVING MANY GAUSSIAN LINEAR SYSTEMS

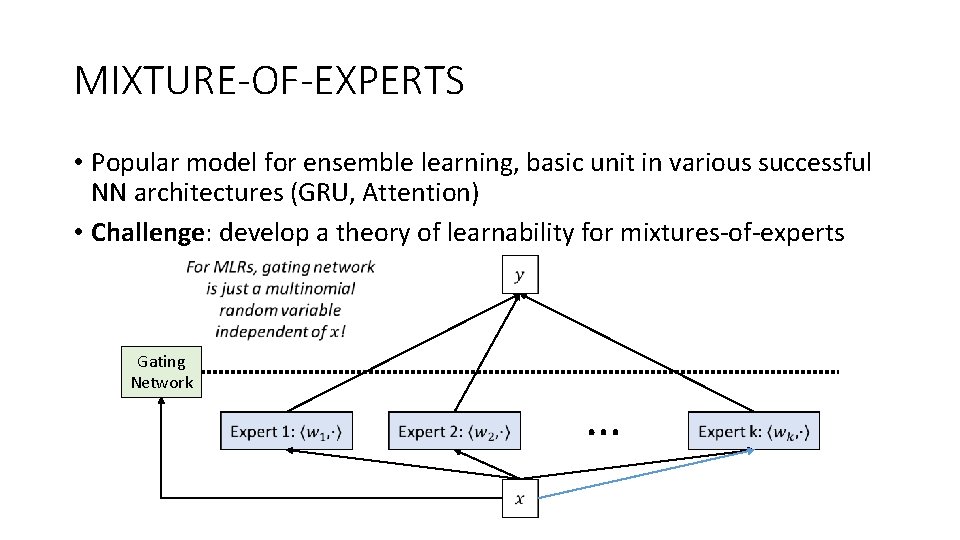

MIXTURE-OF-EXPERTS
• Popular model for ensemble learning; basic unit in various successful NN architectures (GRU, Attention)
• Challenge: develop a theory of learnability for mixtures-of-experts

[Figure label: Gating Network …]

MIXTURE MODELS
• A mixture model is a convex combination of structured distributions
  • e.g. Gaussians, exponentials, rankings, HMMs, topic models, product measures
• Popular class of latent variable models for representing data coming from heterogeneous sources
• Powerful testbed for understanding:
  • clustering
  • distribution learning, robust statistics
  • alternating minimization
  • information-computation gaps
• Goal: given iid samples from the mixture, recover the parameters of the components
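To make the goal concrete, here is a minimal sketch of drawing iid samples from a mixture of linear regressions, the mixture model named in the talk title. The generative model is an assumption, since the slide text does not spell it out: covariates x are standard Gaussian, a hidden label z picks a component, and y = <w_z, x> plus optional Gaussian noise. The function name `sample_mlr` and its parameters are hypothetical. A learner sees only the (x, y) pairs and must recover the regressors.

```python
import random

def sample_mlr(weights, mix_probs, n, noise_std=0.0, seed=0):
    """Draw n iid samples (x, y, z) from a mixture of linear regressions.

    Assumed model (not stated in the slide text):
      z ~ mix_probs, x ~ N(0, I_d), y = <weights[z], x> + N(0, noise_std^2).
    The latent label z is returned only so the sketch can be checked.
    """
    rng = random.Random(seed)
    d = len(weights[0])
    samples = []
    for _ in range(n):
        # Pick the hidden component according to the mixing weights.
        z = rng.choices(range(len(weights)), weights=mix_probs)[0]
        # Standard Gaussian covariates.
        x = [rng.gauss(0.0, 1.0) for _ in range(d)]
        noise = rng.gauss(0.0, noise_std) if noise_std > 0 else 0.0
        y = sum(wj * xj for wj, xj in zip(weights[z], x)) + noise
        samples.append((x, y, z))
    return samples

# Two hypothetical regressors in dimension 2, equal mixing weights, no noise.
true_weights = [[1.0, 0.0], [0.0, -2.0]]
samples = sample_mlr(true_weights, [0.5, 0.5], n=200)
```

With `noise_std=0.0` every response is exactly explained by one of the regressors, which corresponds to the noiseless setting.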

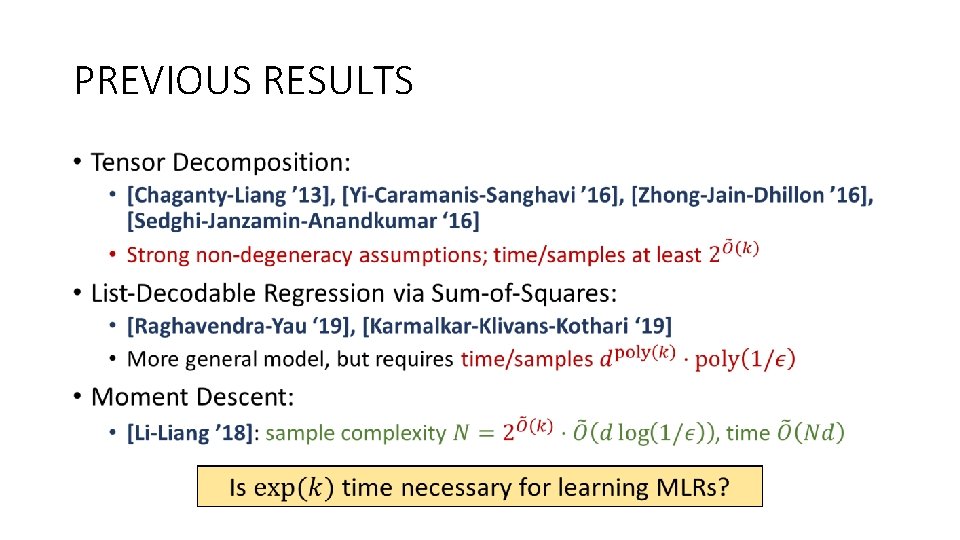

PREVIOUS RESULTS

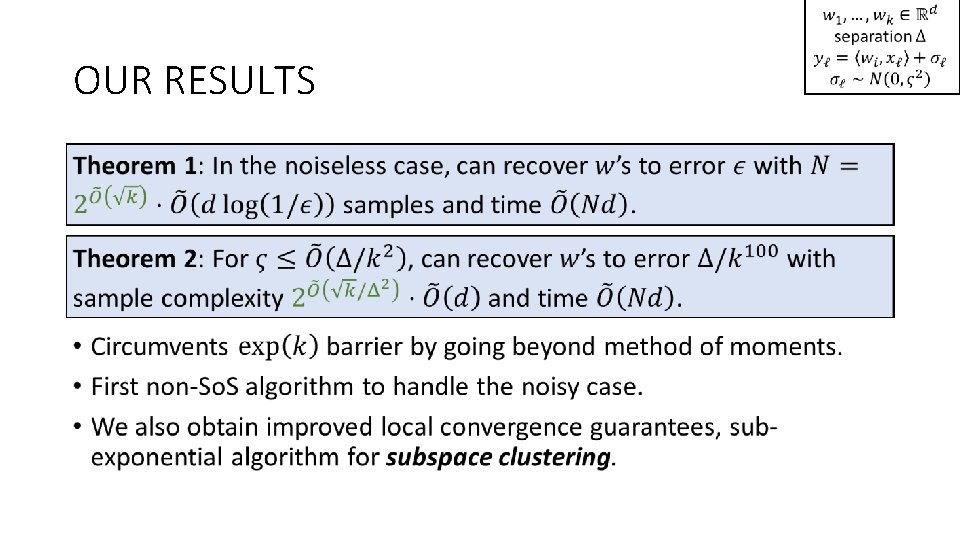

OUR RESULTS

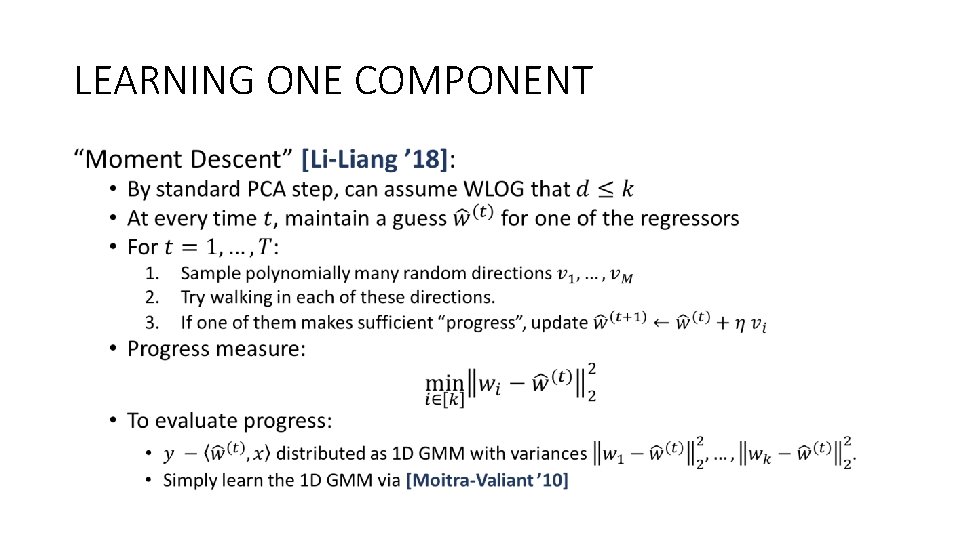

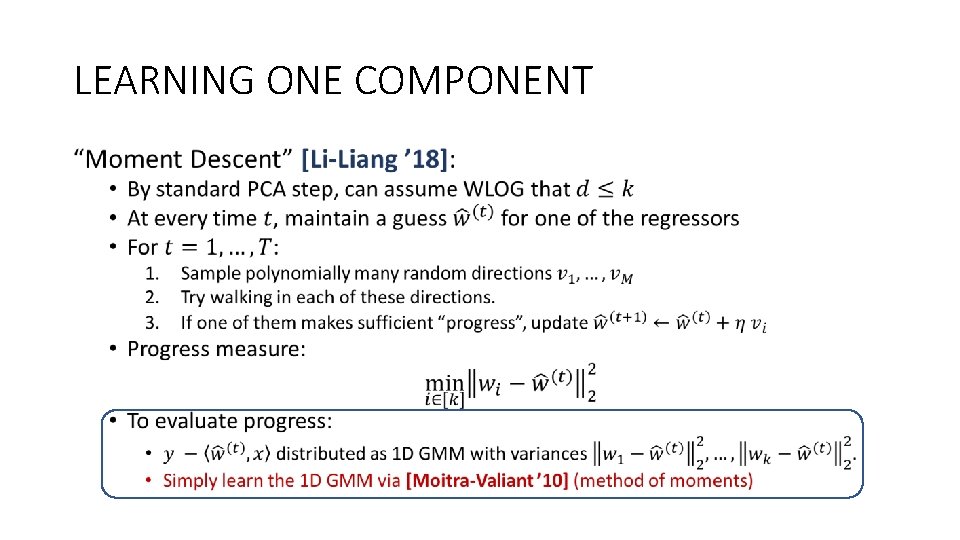

LEARNING ONE COMPONENT

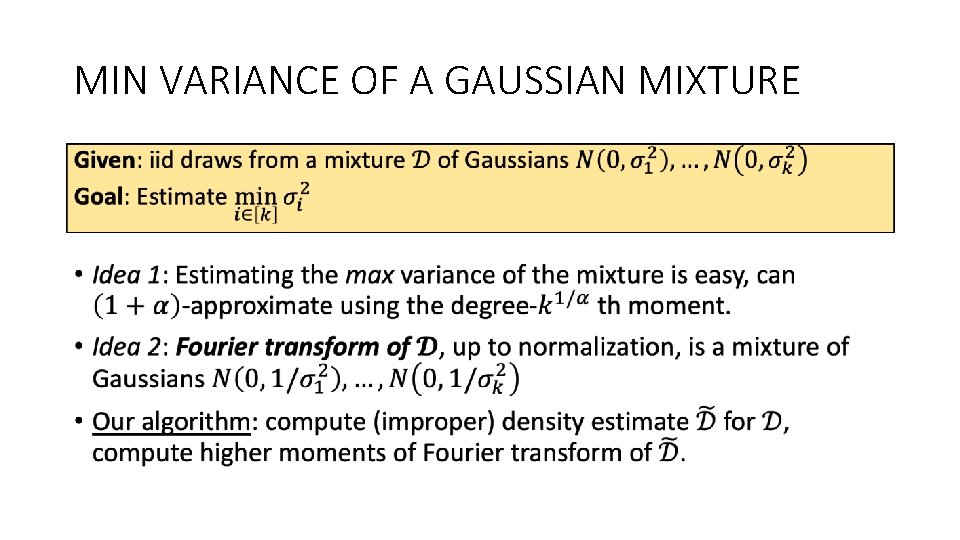

MIN VARIANCE OF A GAUSSIAN MIXTURE

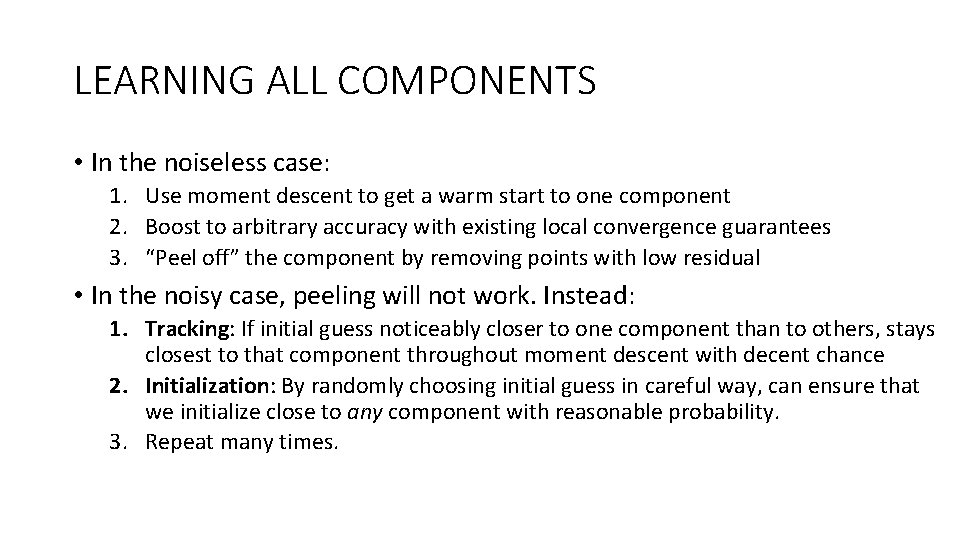

LEARNING ALL COMPONENTS
• In the noiseless case:
  1. Use moment descent to get a warm start to one component.
  2. Boost to arbitrary accuracy with existing local convergence guarantees.
  3. "Peel off" the component by removing points with low residual.
• In the noisy case, peeling will not work. Instead:
  1. Tracking: if the initial guess is noticeably closer to one component than to the others, it stays closest to that component throughout moment descent with decent probability.
  2. Initialization: by choosing the initial guess randomly in a careful way, we can ensure that we initialize close to any given component with reasonable probability.
  3. Repeat many times.
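The peeling step of the noiseless case can be sketched as follows. This is only an illustration under stated assumptions: the estimate `w_hat` stands in for the output of moment descent plus boosting (neither is implemented here), the data are a toy two-component noiseless mixture with Gaussian covariates, and the names `peel` and `tol` are hypothetical.

```python
import random

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def peel(points, w_hat, tol=1e-8):
    """Keep only the points NOT explained by w_hat.

    A point (x, y) is treated as belonging to the learned component when
    its residual |y - <w_hat, x>| is at most tol, and is then removed.
    """
    return [(x, y) for x, y in points if abs(y - dot(w_hat, x)) > tol]

# Toy noiseless mixture of two hypothetical regressors in dimension 3.
rng = random.Random(1)
w1, w2 = [1.0, 2.0, -1.0], [-3.0, 0.5, 4.0]
points, labels = [], []
for _ in range(500):
    z = rng.randrange(2)                         # hidden component label
    x = [rng.gauss(0.0, 1.0) for _ in range(3)]  # Gaussian covariates
    points.append((x, dot((w1, w2)[z], x)))
    labels.append(z)

# Assume the first component has already been learned to high accuracy.
remaining = peel(points, w_hat=w1)
```

After peeling, `remaining` should contain only points generated by the second regressor, so the same procedure can be repeated on a mixture with one fewer component. With noise, residuals of the learned component are no longer near zero, which is consistent with the slide's remark that peeling does not work in the noisy case.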

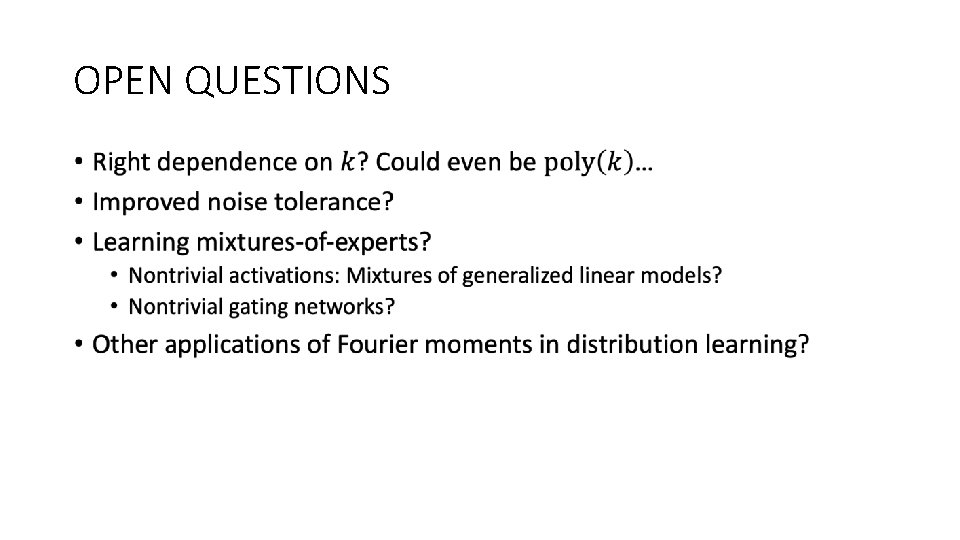

OPEN QUESTIONS

Thanks!
