Kernels and Margins Maria Florina Balcan 03/04/2010

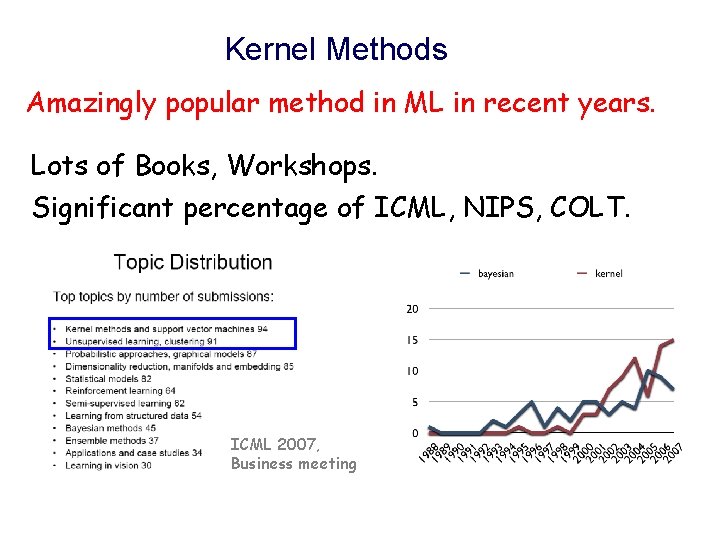

Kernel Methods
Amazingly popular method in ML in recent years.
• Lots of books and workshops.
• Significant percentage of ICML, NIPS, COLT.
(Figure: ICML 2007, business meeting.)

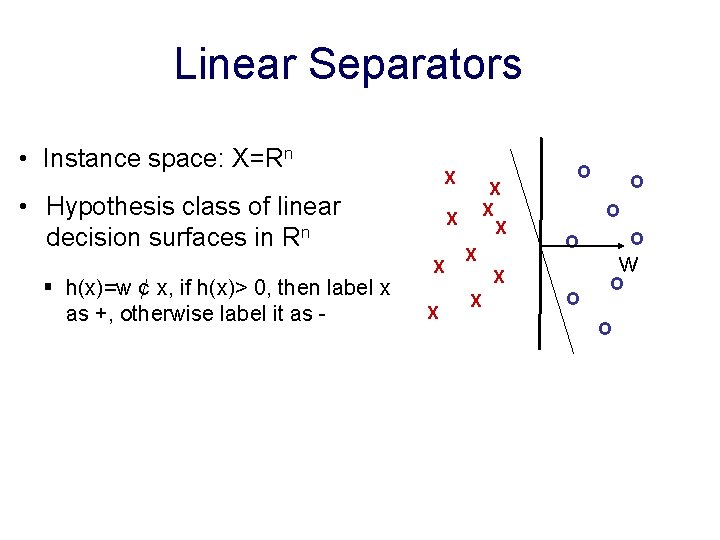

Linear Separators
• Instance space: $X = \mathbb{R}^n$.
• Hypothesis class of linear decision surfaces in $\mathbb{R}^n$.
  § $h(x) = w \cdot x$; if $h(x) > 0$, then label $x$ as +, otherwise label it as -.

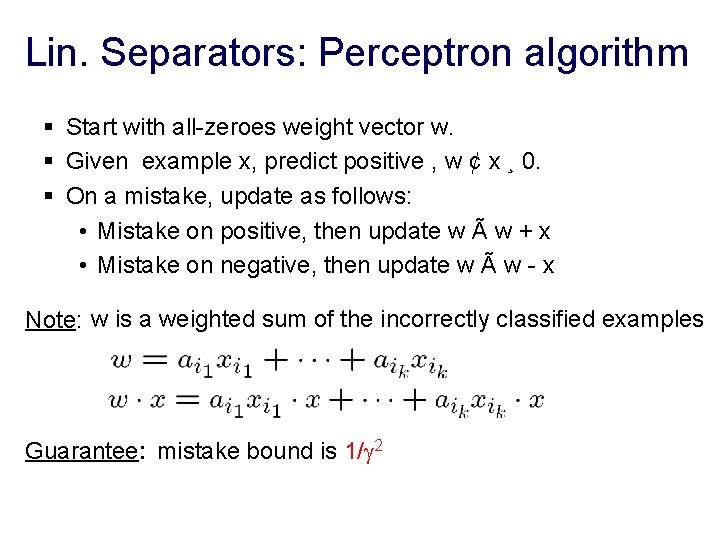

Lin. Separators: Perceptron algorithm
§ Start with the all-zeroes weight vector $w$.
§ Given example $x$, predict positive iff $w \cdot x \ge 0$.
§ On a mistake, update as follows:
  • Mistake on positive: update $w \leftarrow w + x$.
  • Mistake on negative: update $w \leftarrow w - x$.
Note: $w$ is a weighted sum of the incorrectly classified examples.
Guarantee: the mistake bound is $1/\gamma^2$, where $\gamma$ is the margin.
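The update rule above can be sketched in a few lines of plain Python. This is a minimal illustration, not the lecture's code: the function name `perceptron`, the `max_passes` cap, and the toy data are assumptions made for the example, and labels are assumed to be encoded as +1 / -1.

```python
def dot(u, v):
    """Inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def perceptron(examples, max_passes=100):
    """Run the Perceptron over (x, label) pairs with label in {+1, -1}.

    Returns the final weight vector and the number of mistakes made.
    """
    n = len(examples[0][0])
    w = [0.0] * n                      # start with the all-zeroes vector
    mistakes = 0
    for _ in range(max_passes):        # hypothetical cap, not part of the slide
        clean_pass = True
        for x, label in examples:
            predicted = 1 if dot(w, x) >= 0 else -1
            if predicted != label:
                # mistake on positive: w <- w + x; on negative: w <- w - x
                w = [wi + label * xi for wi, xi in zip(w, x)]
                mistakes += 1
                clean_pass = False
        if clean_pass:
            break
    return w, mistakes


toy = [((1.0, 0.2), 1), ((0.8, -0.1), 1), ((-1.0, 0.1), -1), ((-0.7, -0.3), -1)]
w, mistakes = perceptron(toy)
```

On this toy set the only mistake is on the first negative example, after which every point is classified correctly.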

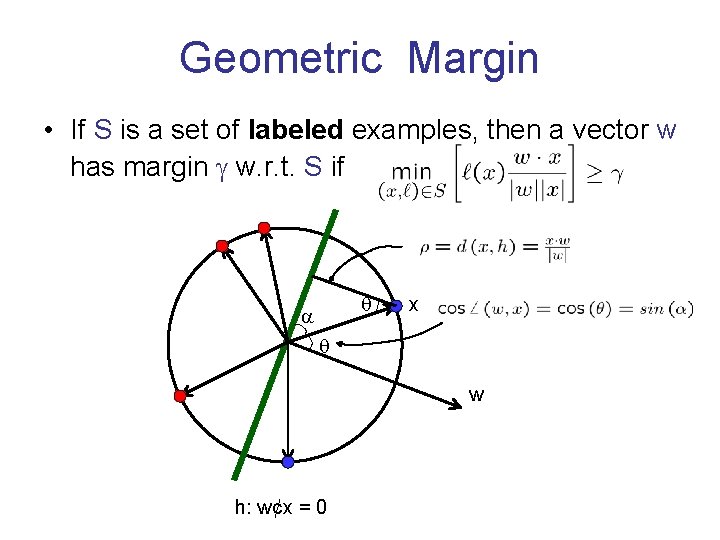

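Geometric Margin
• If $S$ is a set of labeled examples, then a vector $w$ has margin $\gamma$ w.r.t. $S$ if
  $$\min_{(x, \ell) \in S} \frac{\ell\,(w \cdot x)}{|w|\,|x|} \ge \gamma.$$
(Figure: an example $x$, the vector $w$, and the separating hyperplane $h: w \cdot x = 0$.)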
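As a small worked example, the margin of a vector with respect to a labeled set can be computed directly. The sketch assumes the normalized definition, i.e. the smallest value of label times $(w \cdot x)/(|w|\,|x|)$ over the set, with labels encoded as +1 / -1; the function name `margin` and the sample points are illustrative only.

```python
import math


def margin(w, examples):
    """Smallest normalized signed projection label * (w.x) / (|w||x|) over the set.

    A negative value means w misclassifies at least one example.
    """
    norm_w = math.sqrt(sum(a * a for a in w))
    values = []
    for x, label in examples:
        norm_x = math.sqrt(sum(b * b for b in x))
        w_dot_x = sum(a * b for a, b in zip(w, x))
        values.append(label * w_dot_x / (norm_w * norm_x))
    return min(values)


sample = [((1.0, 1.0), 1), ((-1.0, 0.0), -1)]
gamma = margin((1.0, 0.0), sample)   # limited by the point at 45 degrees to w
```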

What if Not Linearly Separable
Problem: data not linearly separable in the most natural feature representation.
Example: two classes of images, with no good linear separator in the pixel representation.
Solutions:
• Classic: “Learn a more complex class of functions”.
• Modern: “Use a Kernel” (prominent method today).

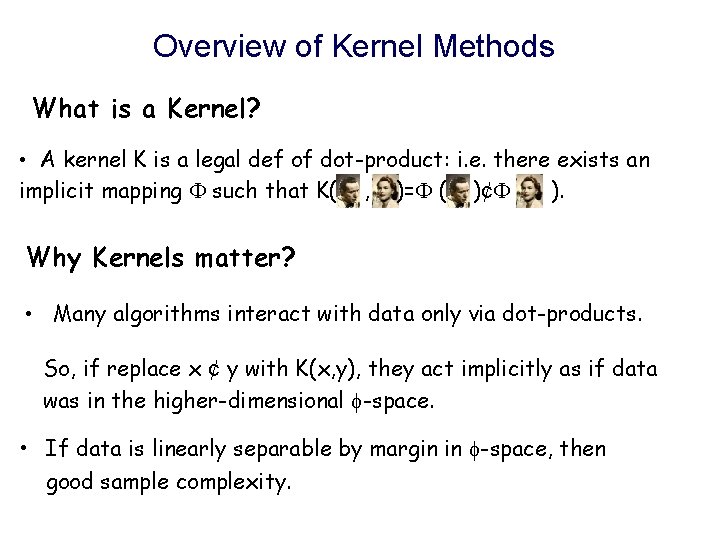

Overview of Kernel Methods
What is a Kernel?
• A kernel $K$ is a legal definition of a dot product: i.e., there exists an implicit mapping $\phi$ such that $K(x, y) = \phi(x) \cdot \phi(y)$.
Why do Kernels matter?
• Many algorithms interact with data only via dot products. So, if we replace $x \cdot y$ with $K(x, y)$, they act implicitly as if the data were in the higher-dimensional $\phi$-space.
• If the data is linearly separable by margin $\gamma$ in the $\phi$-space, then good sample complexity.

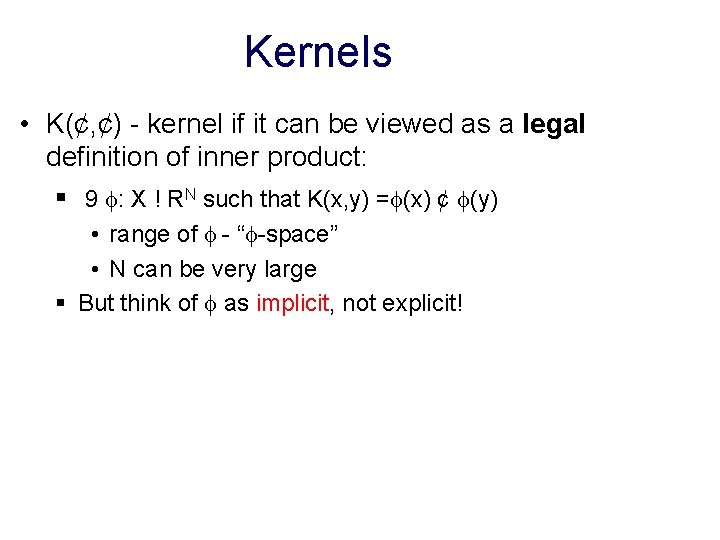

Kernels
• $K(\cdot, \cdot)$ is a kernel if it can be viewed as a legal definition of an inner product:
  § $\exists\, \phi: X \to \mathbb{R}^N$ such that $K(x, y) = \phi(x) \cdot \phi(y)$.
• The range of $\phi$ is the “$\phi$-space”.
• $N$ can be very large.
  § But think of $\phi$ as implicit, not explicit!

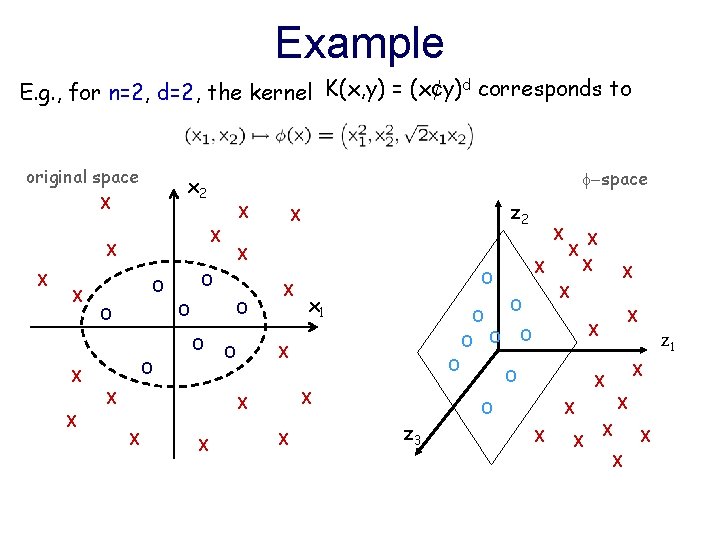

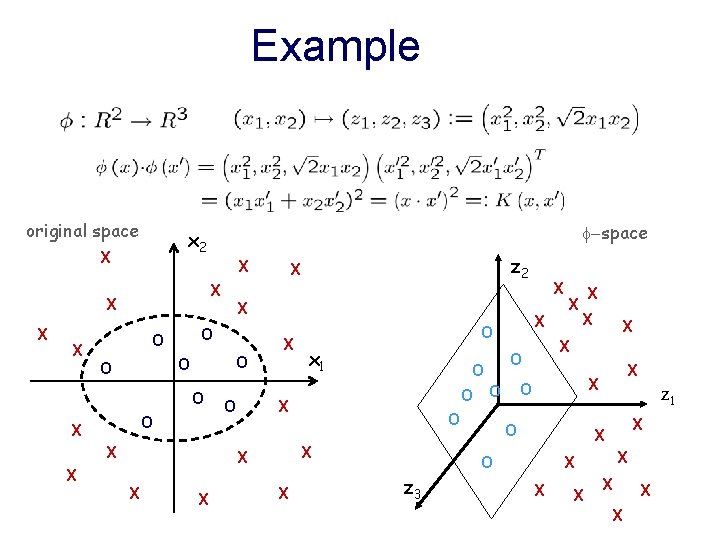

Example
E.g., for $n = 2$, $d = 2$, the kernel $K(x, y) = (x \cdot y)^d$ corresponds to the mapping $\phi(x_1, x_2) = (x_1^2,\; x_2^2,\; \sqrt{2}\, x_1 x_2)$.
(Figure: the labeled points in the original space, with axes $x_1, x_2$, and their images in the $\phi$-space, with axes $z_1, z_2, z_3$.)
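The correspondence between the degree-2 kernel and an explicit feature map can be checked numerically. The sketch below assumes the standard map $\phi(x_1, x_2) = (x_1^2, x_2^2, \sqrt{2}\,x_1 x_2)$ for $K(x, y) = (x \cdot y)^2$; the sample points are arbitrary.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def poly_kernel(x, y, d=2):
    """K(x, y) = (x . y)^d, computed in the original space."""
    return dot(x, y) ** d


def phi(x):
    """Explicit feature map for n = 2, d = 2 (assumed standard form)."""
    x1, x2 = x
    return (x1 * x1, x2 * x2, math.sqrt(2) * x1 * x2)


x, y = (1.0, 2.0), (3.0, -1.0)
implicit = poly_kernel(x, y)        # (1*3 + 2*(-1))^2
explicit = dot(phi(x), phi(y))      # same value, computed in the phi-space
```

Both routes give the same number, but the kernel never builds the three-dimensional vectors.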

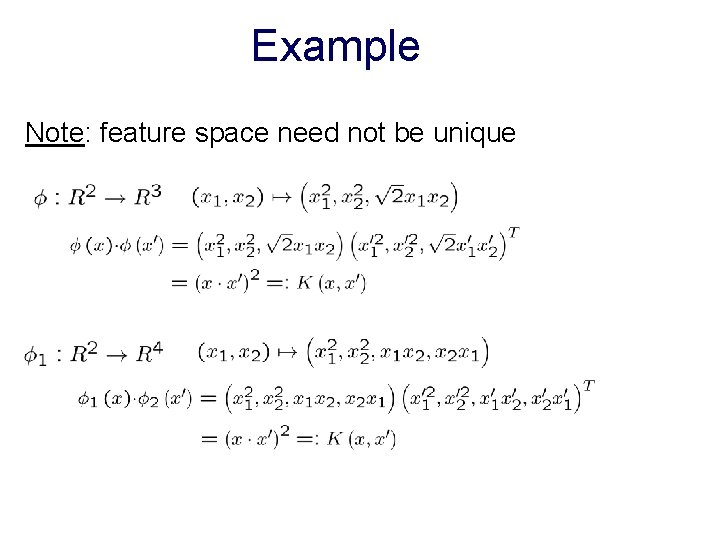

Example
Note: the feature space need not be unique.
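To illustrate non-uniqueness, here are two different feature maps that both realize $K(x, y) = (x \cdot y)^2$ for $n = 2$: a 3-dimensional one and a 4-dimensional one. Both maps are standard textbook choices picked for this sketch, not taken from the slide figure.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def phi_3d(x):
    """Three features: squares plus one scaled cross term."""
    x1, x2 = x
    return (x1 * x1, x2 * x2, math.sqrt(2) * x1 * x2)


def phi_4d(x):
    """Four features: all ordered products x_i * x_j."""
    x1, x2 = x
    return (x1 * x1, x2 * x2, x1 * x2, x2 * x1)


x, y = (0.5, -2.0), (1.5, 1.0)
k_direct = dot(x, y) ** 2
k_3d = dot(phi_3d(x), phi_3d(y))
k_4d = dot(phi_4d(x), phi_4d(y))
```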

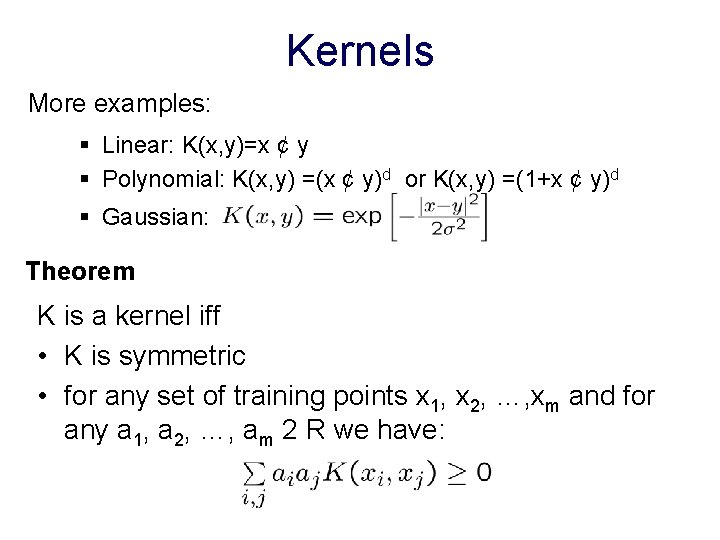

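Kernels
More examples:
§ Linear: $K(x, y) = x \cdot y$
§ Polynomial: $K(x, y) = (x \cdot y)^d$ or $K(x, y) = (1 + x \cdot y)^d$
§ Gaussian: $K(x, y) = \exp\!\left(-\frac{|x - y|^2}{2\sigma^2}\right)$
Theorem: $K$ is a kernel iff
• $K$ is symmetric, and
• for any set of training points $x_1, x_2, \ldots, x_m$ and for any $a_1, a_2, \ldots, a_m \in \mathbb{R}$ we have
  $$\sum_{i,j} a_i a_j K(x_i, x_j) \ge 0.$$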
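The two conditions in the theorem (symmetry, and a non-negative quadratic form $\sum_{i,j} a_i a_j K(x_i, x_j)$) can be spot-checked on random data. This is only a sanity check on a finite sample, not a proof; the Gaussian kernel is written in the common form $\exp(-|x-y|^2 / (2\sigma^2))$, which is an assumption about the slide's missing formula, and all function names are illustrative.

```python
import math
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def linear_kernel(x, y):
    return dot(x, y)


def polynomial_kernel(x, y, d=2):
    return (1.0 + dot(x, y)) ** d


def gaussian_kernel(x, y, sigma=1.0):
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))


def quadratic_form(kernel, points, coeffs):
    """sum_{i,j} a_i a_j K(x_i, x_j); non-negative for every legal kernel."""
    return sum(ai * aj * kernel(xi, xj)
               for ai, xi in zip(coeffs, points)
               for aj, xj in zip(coeffs, points))


rng = random.Random(0)
points = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(5)]
forms = []
for kernel in (linear_kernel, polynomial_kernel, gaussian_kernel):
    for _ in range(20):
        coeffs = [rng.uniform(-1, 1) for _ in points]
        forms.append(quadratic_form(kernel, points, coeffs))
smallest = min(forms)   # should not be meaningfully below zero
```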

Kernelizing a learning algorithm
• If all computations involving instances are in terms of inner products, then:
  § Conceptually, work in a very high-dimensional space, and the algorithm's performance depends only on linear separability in that extended space.
  § Computationally, only need to modify the algorithm by replacing each $x \cdot y$ with $K(x, y)$.
• Examples of kernelizable algorithms: Perceptron, SVM.

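Lin. Separators: Perceptron algorithm
§ Start with the all-zeroes weight vector $w$.
§ Given example $x$, predict positive iff $w \cdot x \ge 0$.
§ On a mistake, update as follows:
  • Mistake on positive: update $w \leftarrow w + x$.
  • Mistake on negative: update $w \leftarrow w - x$.
Easy to kernelize since $w$ is a weighted sum of examples: $w = a_{i_1} x_{i_1} + \ldots + a_{i_k} x_{i_k}$.
Replace $w \cdot x = a_{i_1}\, x_{i_1} \cdot x + \ldots + a_{i_k}\, x_{i_k} \cdot x$ with $a_{i_1} K(x_{i_1}, x) + \ldots + a_{i_k} K(x_{i_k}, x)$.
Note: need to store all the mistakes so far.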
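A kernelized Perceptron never forms $w$; it stores the mistakes and scores a new point by a signed sum of kernel values against them. The sketch below is one way to write this, under the assumptions that labels are +1 / -1 and that the kernel is $(1 + x \cdot y)^2$; the names `kernel_perceptron` and `max_passes` are hypothetical. The XOR-style data is not linearly separable in the original space but is handled by this kernel.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def poly_kernel(x, y, d=2):
    return (1.0 + dot(x, y)) ** d


def kernel_perceptron(examples, kernel, max_passes=100):
    """Perceptron where w is kept implicitly as the list of past mistakes."""
    mistakes = []                       # stored (x_i, label_i) pairs

    def score(x):
        # plays the role of w . x, with each x_i . x replaced by K(x_i, x)
        return sum(label_i * kernel(x_i, x) for x_i, label_i in mistakes)

    for _ in range(max_passes):         # hypothetical cap on passes
        clean_pass = True
        for x, label in examples:
            predicted = 1 if score(x) >= 0 else -1
            if predicted != label:
                mistakes.append((x, label))
                clean_pass = False
        if clean_pass:
            break
    return mistakes, score


xor_data = [((1.0, 1.0), 1), ((-1.0, -1.0), 1),
            ((1.0, -1.0), -1), ((-1.0, 1.0), -1)]
mistakes, score = kernel_perceptron(xor_data, poly_kernel)
predictions = [1 if score(x) >= 0 else -1 for x, _ in xor_data]
```

The memory cost grows with the number of mistakes, which is the price noted on the slide for storing them all.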

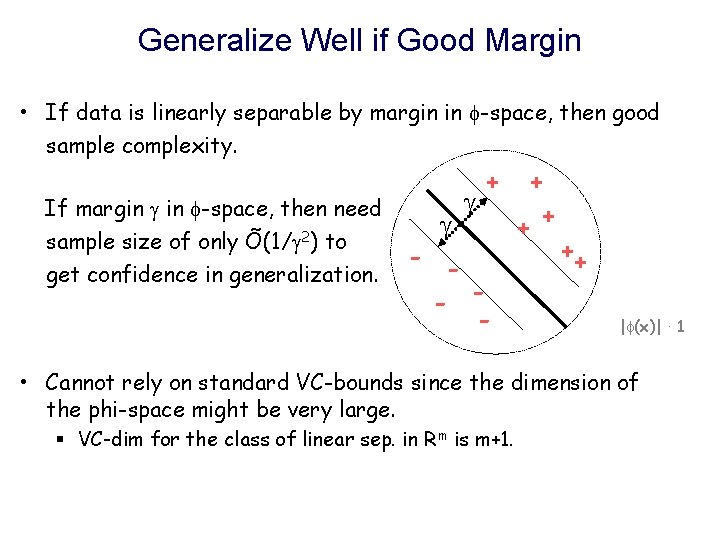

Generalize Well if Good Margin
• If data is linearly separable by margin $\gamma$ in the $\phi$-space, then good sample complexity.
  § If margin $\gamma$ in the $\phi$-space (with $|\phi(x)| \le 1$), then need sample size of only $\tilde{O}(1/\gamma^2)$ to get confidence in generalization.
• Cannot rely on standard VC-bounds since the dimension of the $\phi$-space might be very large.
  § VC-dim for the class of linear separators in $\mathbb{R}^m$ is $m + 1$.

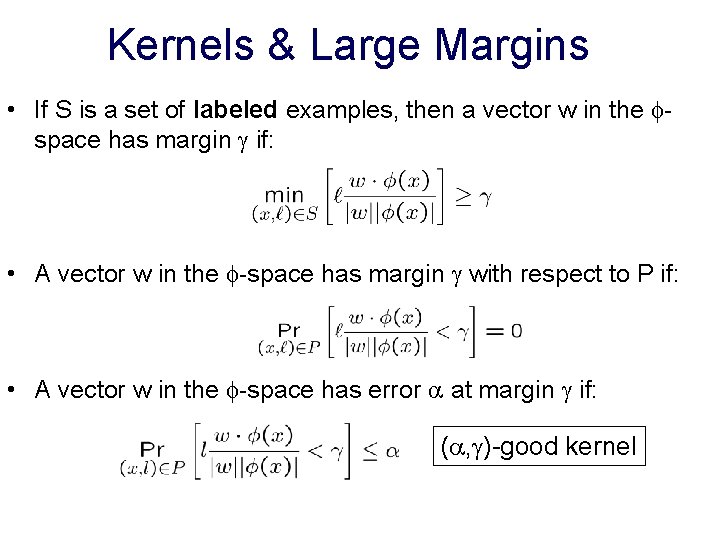

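Kernels & Large Margins
• If $S$ is a set of labeled examples, then a vector $w$ in the $\phi$-space has margin $\gamma$ if:
  $$\min_{(x, \ell) \in S} \frac{\ell\,(w \cdot \phi(x))}{|w|\,|\phi(x)|} \ge \gamma$$
• A vector $w$ in the $\phi$-space has margin $\gamma$ with respect to $P$ if:
  $$\Pr_{(x, \ell) \in P}\left[\frac{\ell\,(w \cdot \phi(x))}{|w|\,|\phi(x)|} < \gamma\right] = 0$$
• A vector $w$ in the $\phi$-space has error $\epsilon$ at margin $\gamma$ if:
  $$\Pr_{(x, \ell) \in P}\left[\frac{\ell\,(w \cdot \phi(x))}{|w|\,|\phi(x)|} < \gamma\right] \le \epsilon$$
  $K$ is then an $(\epsilon, \gamma)$-good kernel.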

Large Margin Classifiers
• If large margin, then the amount of data we need depends only on $1/\gamma$ and is independent of the dimension of the space!
  § If large margin and if our algorithm produces a large margin classifier, then the amount of data we need depends only on $1/\gamma$ [Bartlett & Shawe-Taylor ’99].
  § If large margin, then Perceptron also behaves well.
  § Another nice justification based on Random Projection [Arriaga & Vempala ’99].

Kernels & Large Margins
• Powerful combination in ML in recent years!
  § A kernel implicitly allows mapping data into a high-dimensional space and performing certain operations there without paying a high price computationally.
  § If the data indeed has a large margin linear separator in that space, then one can avoid paying a high price in terms of sample size as well.

Kernel Methods
Offer great modularity.
• No need to change the underlying learning algorithm to accommodate a particular choice of kernel function.
• Also, we can substitute a different algorithm while maintaining the same kernel.

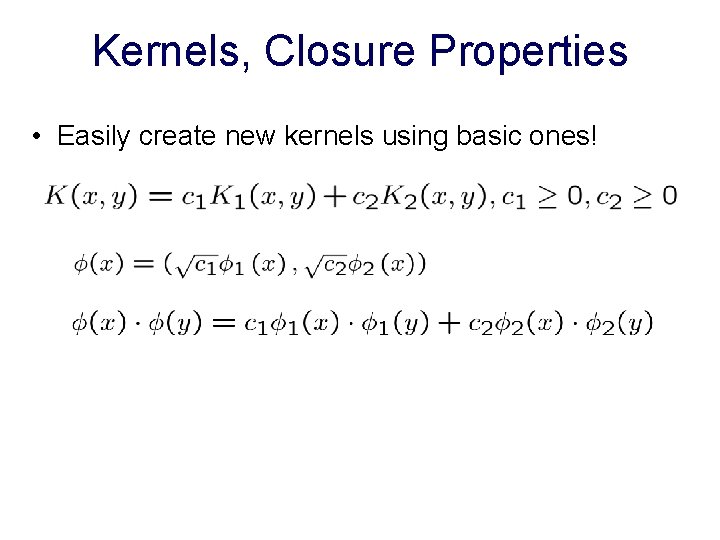

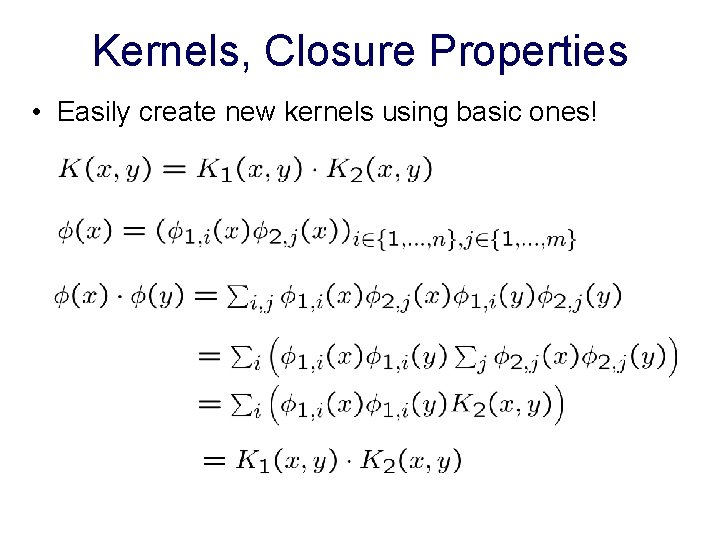

Kernels, Closure Properties
• Easily create new kernels using basic ones!
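Three well-known closure rules are that a positive multiple of a kernel, the sum of two kernels, and the product of two kernels are again kernels. The slide's own list is not recoverable from the text, so the rules below are the standard ones, chosen as an assumption; the helper names are hypothetical. The sketch builds a combined kernel and spot-checks the non-negativity of its quadratic form on random data.

```python
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def linear(x, y):
    return dot(x, y)


def poly(x, y):
    return (1.0 + dot(x, y)) ** 2


def kernel_scale(c, k):
    """c * K is a kernel for any constant c > 0."""
    return lambda x, y: c * k(x, y)


def kernel_sum(k1, k2):
    """K1 + K2 is a kernel."""
    return lambda x, y: k1(x, y) + k2(x, y)


def kernel_product(k1, k2):
    """K1 * K2 is a kernel."""
    return lambda x, y: k1(x, y) * k2(x, y)


def quadratic_form(kernel, points, coeffs):
    return sum(ai * aj * kernel(xi, xj)
               for ai, xi in zip(coeffs, points)
               for aj, xj in zip(coeffs, points))


combined = kernel_sum(kernel_scale(2.0, linear), kernel_product(linear, poly))

rng = random.Random(1)
points = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(6)]
worst = min(
    quadratic_form(combined, points, [rng.uniform(-1, 1) for _ in points])
    for _ in range(50)
)
```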

What we really care about are good kernels not only legal kernels!

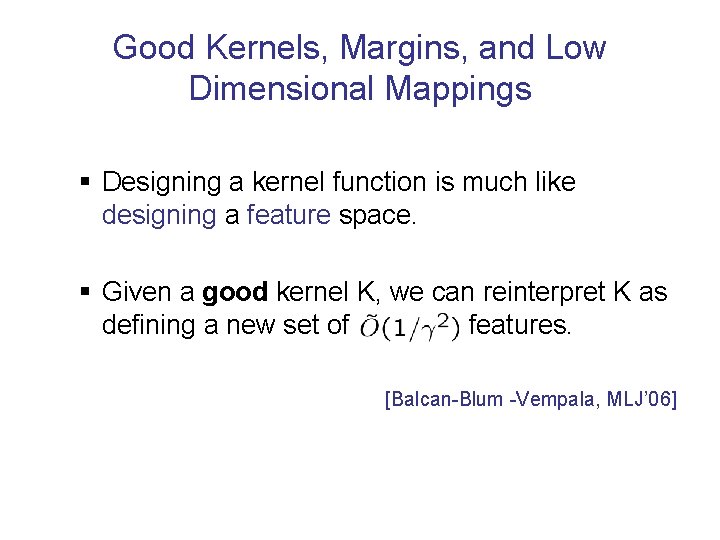

Good Kernels, Margins, and Low Dimensional Mappings
§ Designing a kernel function is much like designing a feature space.
§ Given a good kernel $K$, we can reinterpret $K$ as defining a new set of features. [Balcan-Blum-Vempala, MLJ ’06]

![Kernels as Features [BBV 06]](http://slidetodoc.com/presentation_image/482502bac5e958298df36bffaf6eb06c/image-24.jpg)

Kernels as Features [BBV 06]
• If indeed large margin under $K$, then a random linear projection of the $\phi$-space down to a low-dimensional space approximately preserves linear separability.
• This follows from the Johnson-Lindenstrauss lemma!

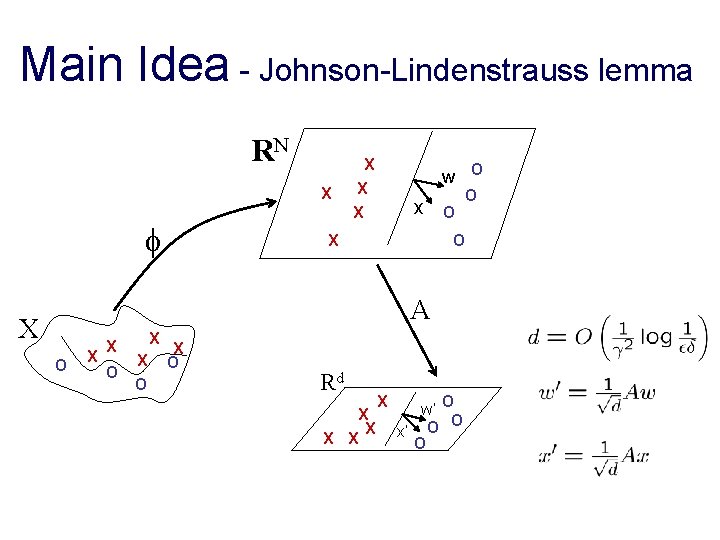

Main Idea - Johnson-Lindenstrauss lemma
(Figure: a random linear map $A$ projects the labeled points $x$ and the separator $w$ in $\mathbb{R}^N$ to points $x'$ and a separator $w'$ in $\mathbb{R}^d$.)

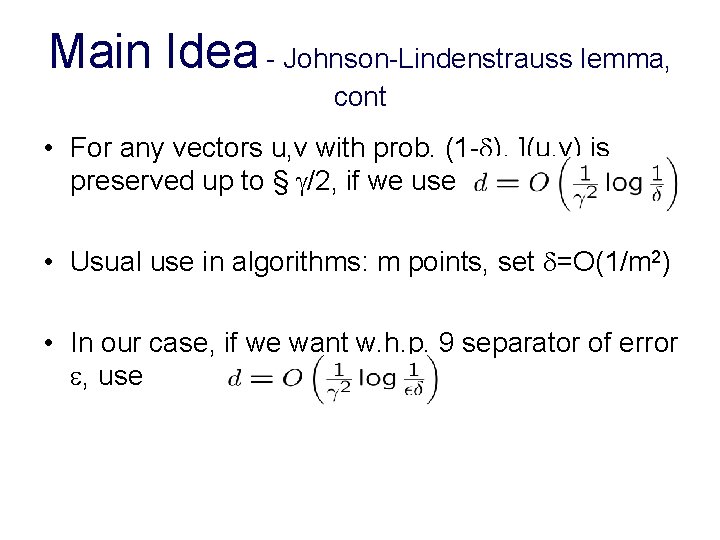

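Main Idea - Johnson-Lindenstrauss lemma, cont
• For any vectors $u, v$, with prob. $\ge 1 - \delta$, the angle $\angle(u, v)$ is preserved up to $\pm \gamma/2$ if we use $d = O\!\left(\frac{1}{\gamma^2} \log \frac{1}{\delta}\right)$.
• Usual use in algorithms: $m$ points, set $\delta = O(1/m^2)$.
• In our case, if we want w.h.p. $\exists$ a separator of error $\epsilon$, use $d = O\!\left(\frac{1}{\gamma^2} \log \frac{1}{\epsilon\delta}\right)$.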
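The angle-preservation property can be observed with a small experiment: project two correlated vectors with a random Gaussian matrix and compare the cosine of their angle before and after. This is a sketch under assumptions: the projection uses i.i.d. Gaussian entries with variance $1/d$ (one common JL construction, not necessarily the one on the slide), and the dimensions `N = 500`, `d = 300` are arbitrary choices for the demo.

```python
import math
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def cosine(u, v):
    return dot(u, v) / math.sqrt(dot(u, u) * dot(v, v))


def random_projection(N, d, rng):
    """d x N matrix with i.i.d. Gaussian entries of variance 1/d."""
    scale = 1.0 / math.sqrt(d)
    return [[rng.gauss(0.0, scale) for _ in range(N)] for _ in range(d)]


def project(A, x):
    return [dot(row, x) for row in A]


rng = random.Random(0)
N, d = 500, 300
u = [rng.gauss(0.0, 1.0) for _ in range(N)]
v = [ui + rng.gauss(0.0, 1.0) for ui in u]     # correlated with u

A = random_projection(N, d, rng)
cos_before = cosine(u, v)
cos_after = cosine(project(A, u), project(A, v))
```

The two cosines should be close, with a gap that shrinks roughly like $1/\sqrt{d}$ as the target dimension grows.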

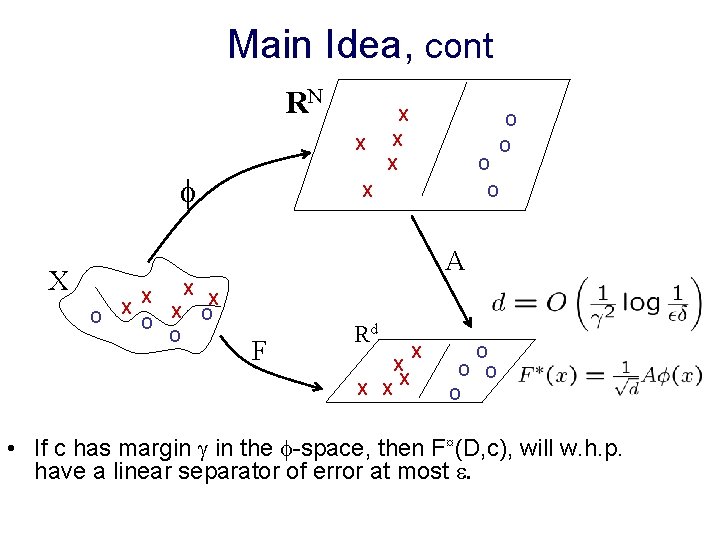

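Main Idea, cont
(Figure: the random projection $A$ takes $\mathbb{R}^N$ to $\mathbb{R}^d$; $F$ denotes the resulting map into $\mathbb{R}^d$.)
• If $c$ has margin $\gamma$ in the $\phi$-space, then $F(D, c)$ will w.h.p. have a linear separator of error at most $\epsilon$.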

Problem Statement
• For a given kernel $K$, the dimensionality and description of $\phi(x)$ might be large, or even unknown.
  § Do not want to explicitly compute $\phi(x)$.
• Given kernel $K$, produce such a mapping $F$ efficiently:
  § running time that depends polynomially on $1/\gamma$ and the time to compute $K$.
  § no dependence on the dimension of the “$\phi$-space”.

![Main Result [BBV 06]](http://slidetodoc.com/presentation_image/482502bac5e958298df36bffaf6eb06c/image-29.jpg)

Main Result [BBV 06]
• Positive answer, if our procedure for computing the mapping $F$ is also given black-box access to the distribution $D$ (unlabeled data).
Formally...
• Given black-box access to $K(\cdot, \cdot)$, given access to $D$ and $\epsilon, \gamma, \delta$, construct, in poly time, $F: X \to \mathbb{R}^d$, where $d = O\!\left(\frac{1}{\gamma^2} \log \frac{1}{\epsilon\delta}\right)$, s.t. if $c$ has margin $\gamma$ in the $\phi$-space, then with prob. $1 - \delta$, the induced distribution in $\mathbb{R}^d$ is separable at margin $\gamma/2$ with error $\le \epsilon$.

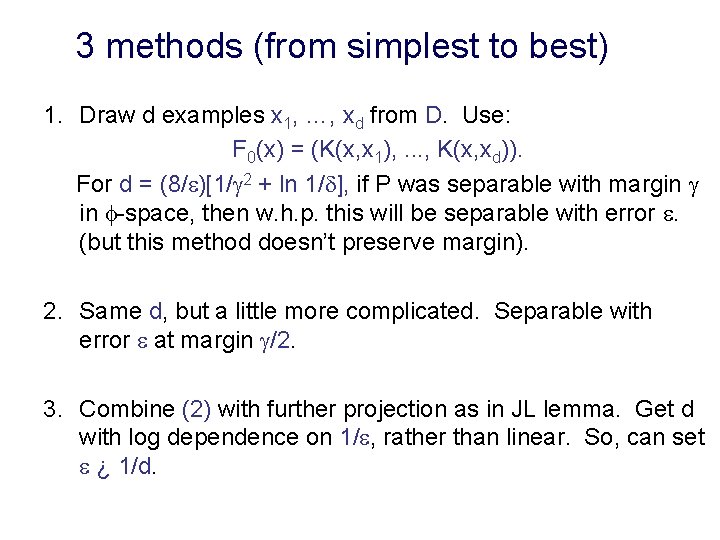

3 methods (from simplest to best)
1. Draw $d$ examples $x_1, \ldots, x_d$ from $D$. Use: $F_0(x) = (K(x, x_1), \ldots, K(x, x_d))$. For $d = (8/\epsilon)[1/\gamma^2 + \ln(1/\delta)]$, if $P$ was separable with margin $\gamma$ in the $\phi$-space, then w.h.p. this will be separable with error $\epsilon$ (but this method doesn’t preserve the margin).
2. Same $d$, but a little more complicated. Separable with error $\epsilon$ at margin $\gamma/2$.
3. Combine (2) with a further projection as in the JL lemma. Get $d$ with log dependence on $1/\epsilon$, rather than linear. So, can set $\epsilon \ll 1/d$.
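To get a feel for the sample size in methods 1 and 2, the expression $(8/\epsilon)[1/\gamma^2 + \ln(1/\delta)]$ can be evaluated for concrete values. The function name `num_landmarks` and the parameter values are illustrative assumptions; rounding up to an integer is added because $d$ counts examples.

```python
import math


def num_landmarks(epsilon, gamma, delta):
    """Evaluate d = (8 / epsilon) * (1 / gamma^2 + ln(1 / delta)), rounded up."""
    return math.ceil((8.0 / epsilon) * (1.0 / gamma ** 2 + math.log(1.0 / delta)))


d_example = num_landmarks(epsilon=0.1, gamma=0.5, delta=0.05)
# 80 * (4 + ln 20) is about 559.66, so 560 landmarks
```

Note the linear dependence on $1/\epsilon$: halving the target error doubles the number of landmarks, which is what method 3 improves on.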

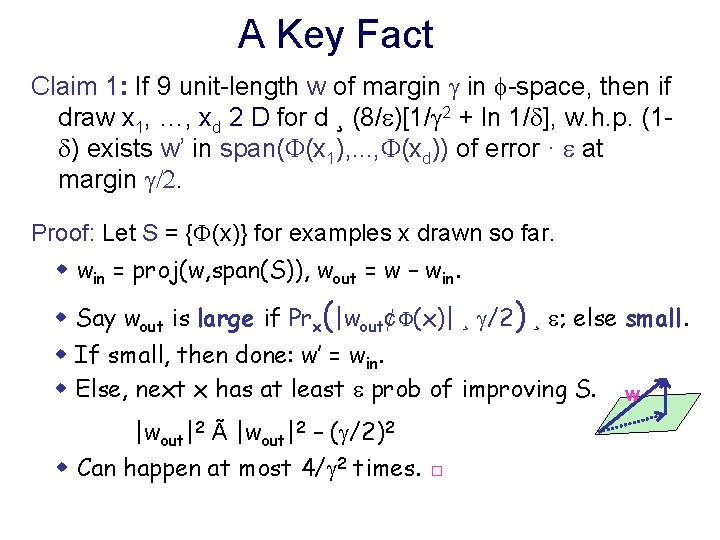

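A Key Fact
Claim 1: If $\exists$ unit-length $w$ of margin $\gamma$ in the $\phi$-space, then if we draw $x_1, \ldots, x_d \in D$ for $d \ge (8/\epsilon)[1/\gamma^2 + \ln(1/\delta)]$, w.h.p. ($1 - \delta$) there exists $w'$ in $\mathrm{span}(\phi(x_1), \ldots, \phi(x_d))$ of error $\le \epsilon$ at margin $\gamma/2$.
Proof: Let $S = \{\phi(x)\}$ for examples $x$ drawn so far.
§ $w_{in} = \mathrm{proj}(w, \mathrm{span}(S))$, $w_{out} = w - w_{in}$.
§ Say $w_{out}$ is large if $\Pr_x(|w_{out} \cdot \phi(x)| \ge \gamma/2) \ge \epsilon$; else small.
§ If small, then done: $w' = w_{in}$.
§ Else, the next $x$ has at least $\epsilon$ prob. of improving $S$: $|w_{out}|^2 \leftarrow |w_{out}|^2 - (\gamma/2)^2$.
§ Can happen at most $4/\gamma^2$ times. □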

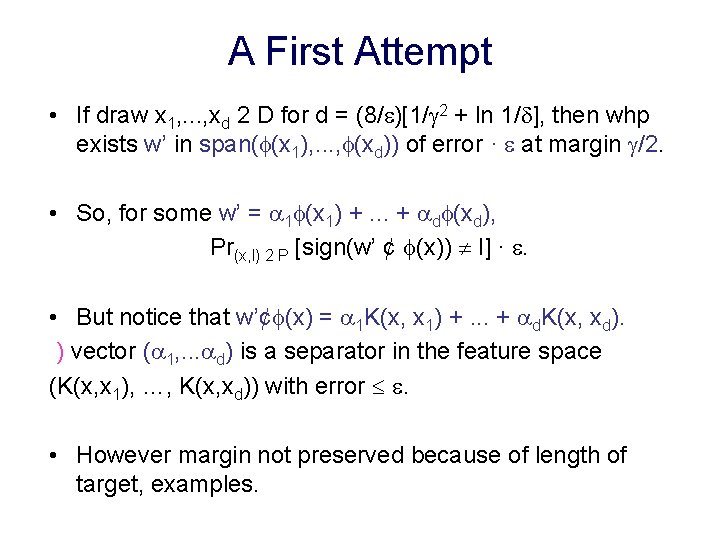

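A First Attempt
• If we draw $x_1, \ldots, x_d \in D$ for $d = (8/\epsilon)[1/\gamma^2 + \ln(1/\delta)]$, then w.h.p. there exists $w'$ in $\mathrm{span}(\phi(x_1), \ldots, \phi(x_d))$ of error $\le \epsilon$ at margin $\gamma/2$.
• So, for some $w' = \alpha_1 \phi(x_1) + \ldots + \alpha_d \phi(x_d)$, $\Pr_{(x, \ell) \in P}[\mathrm{sign}(w' \cdot \phi(x)) \ne \ell] \le \epsilon$.
• But notice that $w' \cdot \phi(x) = \alpha_1 K(x, x_1) + \ldots + \alpha_d K(x, x_d)$.
  $\Rightarrow$ the vector $(\alpha_1, \ldots, \alpha_d)$ is a separator in the feature space $(K(x, x_1), \ldots, K(x, x_d))$ with error $\le \epsilon$.
• However, the margin is not preserved because of the length of the target and of the examples.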
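The identity behind this first attempt, $w' \cdot \phi(x) = \sum_i \alpha_i K(x, x_i)$ for $w' = \sum_i \alpha_i \phi(x_i)$, can be verified on a kernel whose feature map is known explicitly. The sketch assumes the quadratic kernel $(x \cdot y)^2$ with its standard 3-dimensional map; the landmark points and coefficients are arbitrary, and the explicit $\phi$ is built only to check the identity (the mapping `F0` itself needs nothing but kernel evaluations).

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def kernel(x, y):
    return dot(x, y) ** 2


def phi(x):
    """Explicit map for the quadratic kernel; used here for verification only."""
    x1, x2 = x
    return (x1 * x1, x2 * x2, math.sqrt(2) * x1 * x2)


def F0(x, landmarks):
    """F0(x) = (K(x, x_1), ..., K(x, x_d))."""
    return [kernel(x, xi) for xi in landmarks]


landmarks = [(1.0, 0.0), (0.5, -1.0), (-1.0, 2.0)]
alphas = [0.3, -1.2, 0.7]

# w' = sum_i alpha_i * phi(x_i), formed explicitly in the phi-space
w_prime = [sum(a * phi(xi)[k] for a, xi in zip(alphas, landmarks))
           for k in range(3)]

x = (0.4, -0.9)
explicit = dot(w_prime, phi(x))            # w' . phi(x)
implicit = dot(alphas, F0(x, landmarks))   # (alpha_1..alpha_d) . F0(x)
```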

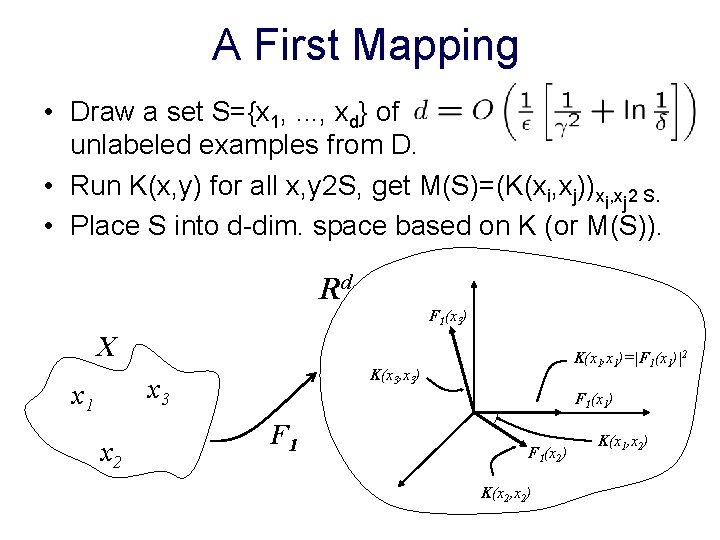

A First Mapping
• Draw a set $S = \{x_1, \ldots, x_d\}$ of unlabeled examples from $D$.
• Run $K(x, y)$ for all $x, y \in S$; get $M(S) = (K(x_i, x_j))_{x_i, x_j \in S}$.
• Place $S$ into $d$-dim. space based on $K$ (or $M(S)$).
(Figure: the points $x_1, x_2, x_3$ placed in $\mathbb{R}^d$ as $F_1(x_1), F_1(x_2), F_1(x_3)$, with $|F_1(x_i)|^2 = K(x_i, x_i)$ and $F_1(x_1) \cdot F_1(x_2) = K(x_1, x_2)$.)

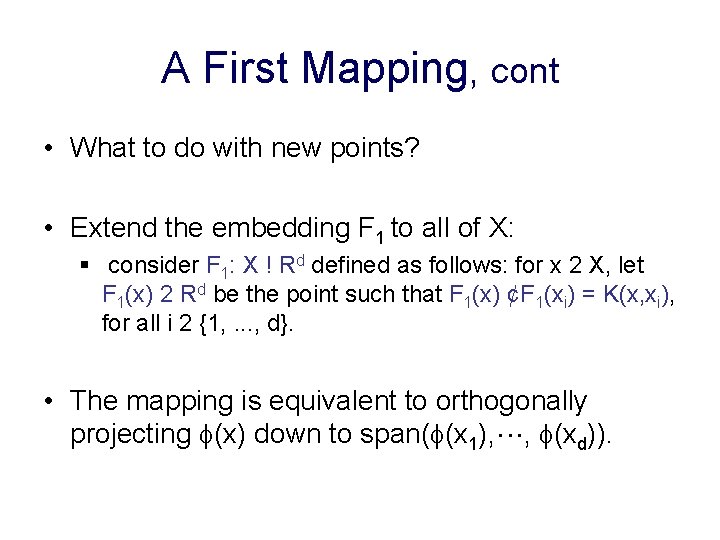

A First Mapping, cont
• What to do with new points?
• Extend the embedding $F_1$ to all of $X$:
  § consider $F_1: X \to \mathbb{R}^d$ defined as follows: for $x \in X$, let $F_1(x) \in \mathbb{R}^d$ be the point such that $F_1(x) \cdot F_1(x_i) = K(x, x_i)$, for all $i \in \{1, \ldots, d\}$.
• The mapping is equivalent to orthogonally projecting $\phi(x)$ down to $\mathrm{span}(\phi(x_1), \ldots, \phi(x_d))$.
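One concrete way to build such an embedding is through a Cholesky factorization of the kernel matrix $M(S) = L L^\top$: place each sampled point $x_i$ at row $i$ of $L$, and map a new point $x$ to the solution $z$ of $L z = (K(x, x_1), \ldots, K(x, x_d))$. This is a sketch of one possible construction, not necessarily the one used in [BBV 06], and it assumes $M(S)$ is strictly positive definite (true for a Gaussian kernel on distinct points). The names `cholesky` and `make_F1` are hypothetical.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def gaussian_kernel(x, y, sigma=1.0):
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))


def cholesky(M):
    """Lower-triangular L with M = L L^T; assumes M is positive definite."""
    d = len(M)
    L = [[0.0] * d for _ in range(d)]
    for i in range(d):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(M[i][i] - s)
            else:
                L[i][j] = (M[i][j] - s) / L[j][j]
    return L


def make_F1(kernel, landmarks):
    """Return F1 with F1(x) . F1(x_i) = K(x, x_i) for every landmark x_i."""
    M = [[kernel(a, b) for b in landmarks] for a in landmarks]
    L = cholesky(M)

    def F1(x):
        k = [kernel(x, xi) for xi in landmarks]
        z = []
        for i in range(len(landmarks)):      # forward substitution: L z = k
            s = sum(L[i][j] * z[j] for j in range(i))
            z.append((k[i] - s) / L[i][i])
        return z

    return F1


landmarks = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
F1 = make_F1(gaussian_kernel, landmarks)
x_new = (0.5, 0.5)
gaps = [abs(dot(F1(x_new), F1(xi)) - gaussian_kernel(x_new, xi))
        for xi in landmarks]
```

Because the map acts like an orthogonal projection of $\phi(x)$ onto the span of the landmarks, $|F_1(x)|^2$ cannot exceed $K(x, x)$.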

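A First Mapping, cont
• From Claim 1, we have that with prob. $1 - \delta$, for some $w' = \alpha_1 \phi(x_1) + \ldots + \alpha_d \phi(x_d)$ we have:
  $$\Pr_{(x, \ell) \in P}\left[\frac{\ell\,(w' \cdot \phi(x))}{|w'|\,|\phi(x)|} < \frac{\gamma}{2}\right] \le \epsilon$$
• Consider $w'' = \alpha_1 F_1(x_1) + \ldots + \alpha_d F_1(x_d)$. We have $|w''| = |w'|$, $w' \cdot \phi(x) = w'' \cdot F_1(x)$, and $|F_1(x)| \le |\phi(x)|$.
• So:
  $$\Pr_{(x, \ell) \in P}\left[\frac{\ell\,(w'' \cdot F_1(x))}{|w''|\,|F_1(x)|} < \frac{\gamma}{2}\right] \le \epsilon$$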

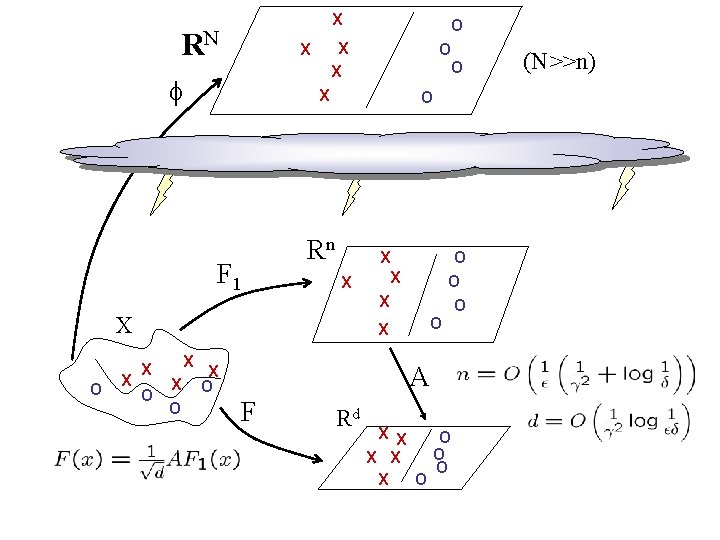

An improved mapping
• A two-stage process: compose the first mapping, $F_1$, with a random linear projection.
• Combine two types of random projection:
  § a projection based on points chosen at random from $D$.
  § a projection based on choosing points uniformly at random in the intermediate space.

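(Figure: $F_1$ maps $X$, implicitly embedded in $\mathbb{R}^N$, into $\mathbb{R}^n$; the random projection $A$ maps $\mathbb{R}^n$ to $\mathbb{R}^d$; $F$ is the composed map from $X$ to $\mathbb{R}^d$; $N \gg n$.)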

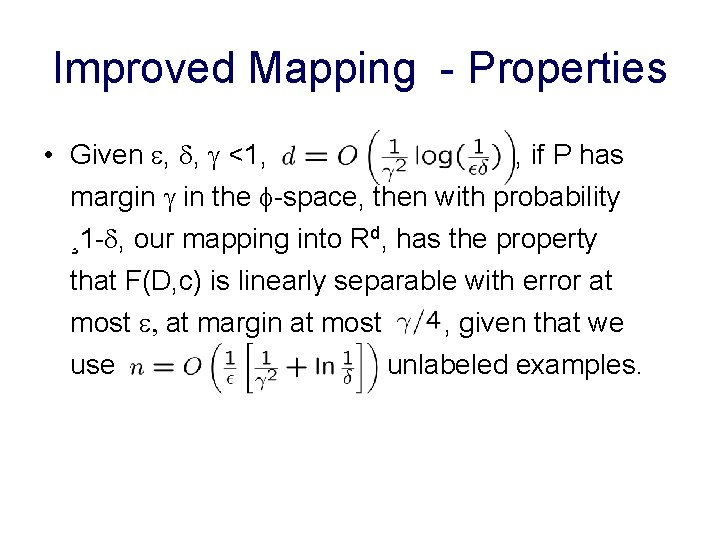

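Improved Mapping - Properties
• Given $\epsilon, \delta, \gamma < 1$ and $d = O\!\left(\frac{1}{\gamma^2} \log \frac{1}{\epsilon\delta}\right)$, if $P$ has margin $\gamma$ in the $\phi$-space, then with probability $\ge 1 - \delta$, our mapping into $\mathbb{R}^d$ has the property that $F(D, c)$ is linearly separable with error at most $\epsilon$, at margin $\Omega(\gamma)$, given that we use $n = O\!\left(\frac{1}{\epsilon}\left[\frac{1}{\gamma^2} + \ln \frac{1}{\delta}\right]\right)$ unlabeled examples.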

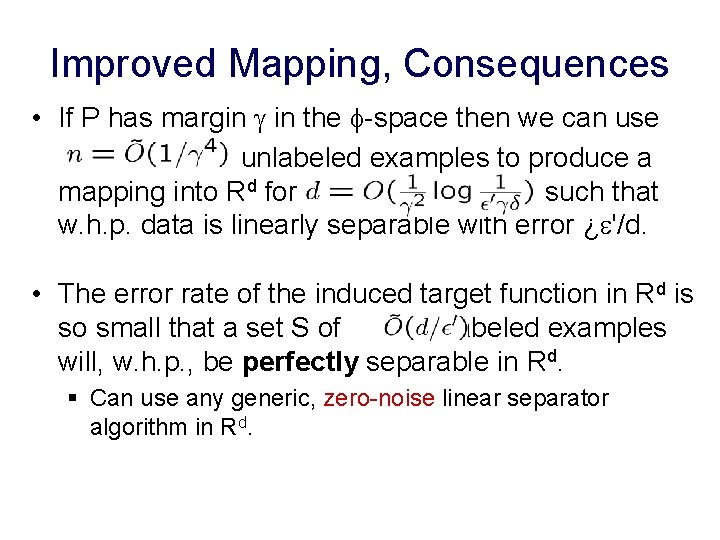

Improved Mapping, Consequences
• If $P$ has margin $\gamma$ in the $\phi$-space, then we can use unlabeled examples to produce a mapping into $\mathbb{R}^d$ such that w.h.p. the data is linearly separable with error $\ll \epsilon'/d$.
• The error rate of the induced target function in $\mathbb{R}^d$ is so small that a set $S$ of labeled examples will, w.h.p., be perfectly separable in $\mathbb{R}^d$.
  § Can use any generic, zero-noise linear separator algorithm in $\mathbb{R}^d$.
