Active Learning of Binary Classifiers Presenters: Nina Balcan and Steve Hanneke Maria-Florina Balcan

Outline
• What is Active Learning?
• Active Learning Linear Separators
• General Theories of Active Learning
• Open Problems

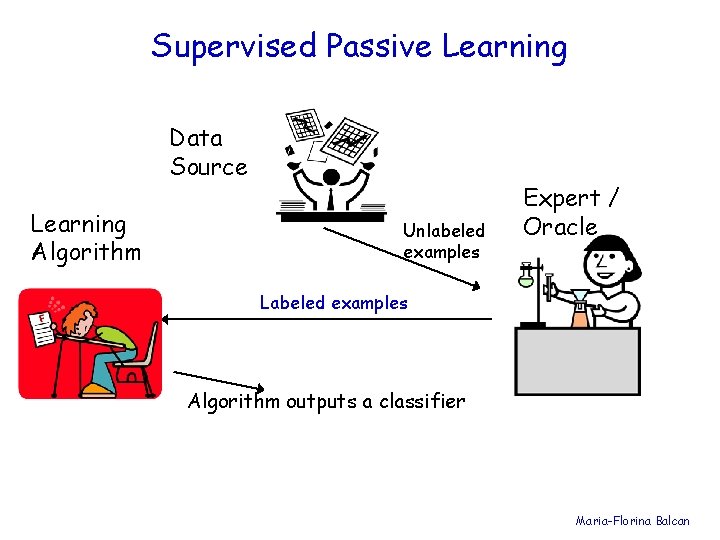

Supervised Passive Learning
[Diagram: Data Source, Expert / Oracle, Learning Algorithm. Unlabeled examples are labeled by the expert; the labeled examples go to the learning algorithm, which outputs a classifier.]

Incorporating Unlabeled Data in the Learning Process
• In many settings, unlabeled data is cheap and easy to obtain, while labeled data is much more expensive.
  - Web page and document classification
  - OCR, image classification

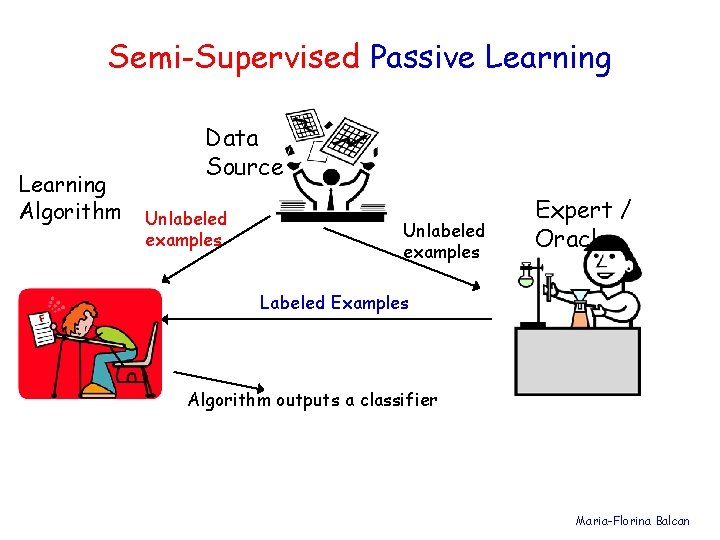

Semi-Supervised Passive Learning
[Diagram: Data Source, Expert / Oracle, Learning Algorithm. The algorithm receives unlabeled examples from the data source as well as labeled examples from the expert, and outputs a classifier.]

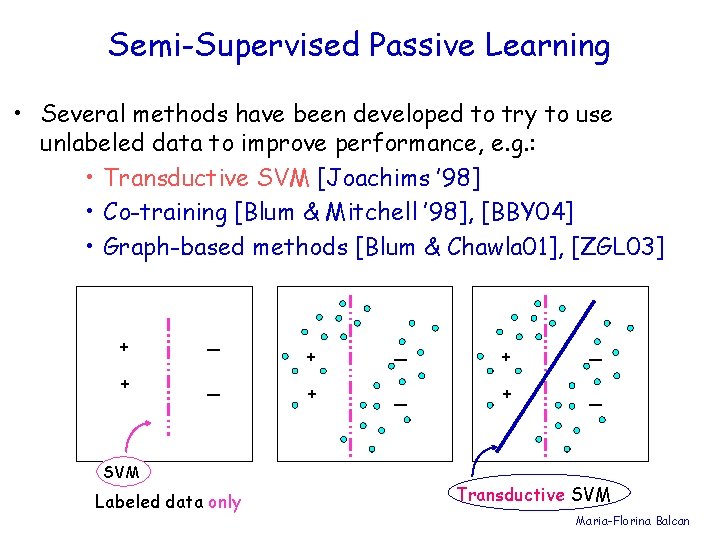

Semi-Supervised Passive Learning
• Several methods have been developed to try to use unlabeled data to improve performance, e.g.:
  - Transductive SVM [Joachims ’98]
  - Co-training [Blum & Mitchell ’98], [BBY 04]
  - Graph-based methods [Blum & Chawla 01], [ZGL 03]
[Figure: two panels of + and − points, "SVM (labeled data only)" and "Transductive SVM".]

Semi-Supervised Passive Learning
• Workshops: [ICML ’03, ICML ’05]
• Books: Semi-Supervised Learning, MIT 2006, O. Chapelle, B. Schölkopf and A. Zien (eds)
• Theoretical models: Balcan-Blum ’05

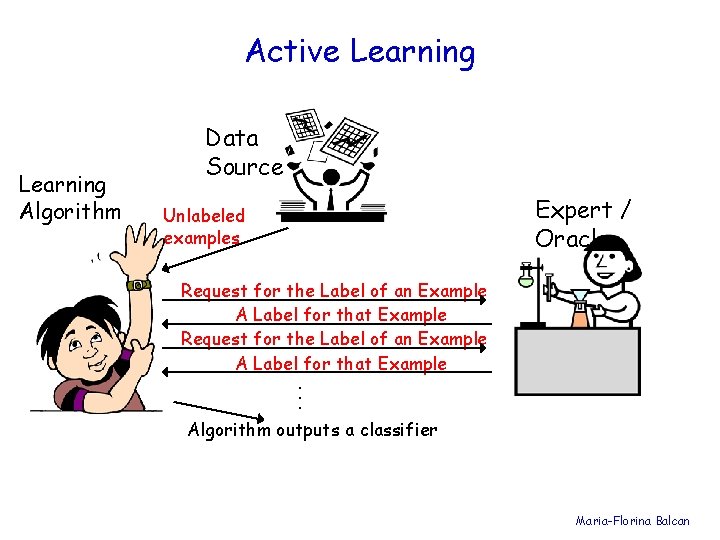

Active Learning
[Diagram: Data Source, Expert / Oracle, Learning Algorithm. The algorithm receives unlabeled examples, repeatedly sends a request for the label of an example and receives a label for that example, and finally outputs a classifier.]

What Makes a Good Algorithm?
• Guaranteed to output a relatively good classifier for most learning problems.
• Doesn’t make too many label requests. Choose the label requests carefully, to get informative labels.

Can It Really Do Better Than Passive?
• YES! (sometimes)
• We often need far fewer labels for active learning than for passive.
• This is predicted by theory and has been observed in practice.

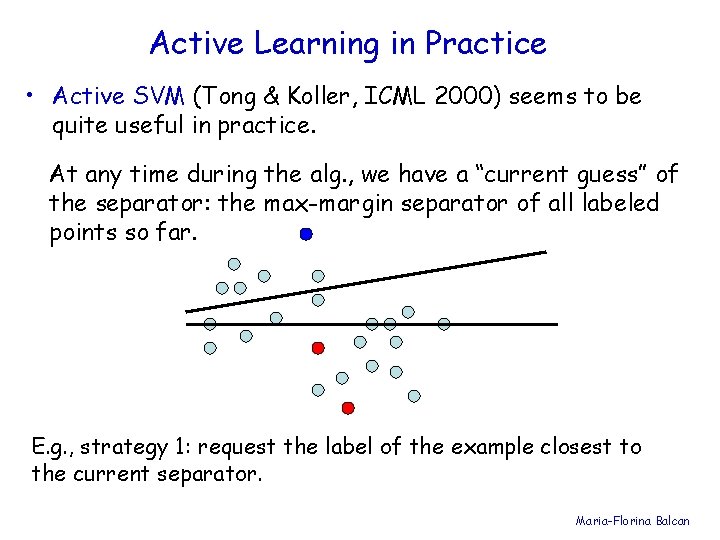

Active Learning in Practice
• Active SVM (Tong & Koller, ICML 2000) seems to be quite useful in practice.
• At any time during the algorithm, we have a “current guess” of the separator: the max-margin separator of all labeled points so far.
• E.g., strategy 1: request the label of the example closest to the current separator.
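Strategy 1 can be illustrated with a minimal sketch. It only covers the query-selection step and assumes the current separator is already available as a weight vector `w` and offset `b` (hypothetical names; no SVM is trained here, and this is not the Tong & Koller implementation).

```python
import math

def closest_to_separator(w, b, pool):
    """Return the index of the unlabeled point nearest the hyperplane w.x + b = 0."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    if norm == 0:
        raise ValueError("w must be non-zero")

    def distance(x):
        # Euclidean distance from x to the hyperplane.
        return abs(sum(wi * xi for wi, xi in zip(w, x)) + b) / norm

    return min(range(len(pool)), key=lambda i: distance(pool[i]))

# Toy pool of unlabeled points; the separator is the vertical axis x1 = 0.
pool = [(2.0, 1.0), (0.1, 0.3), (-1.5, 0.5), (0.6, -2.0)]
query_index = closest_to_separator(w=(1.0, 0.0), b=0.0, pool=pool)
```

In a full loop, the label of `pool[query_index]` would be requested, the separator refit on all labeled points, and the selection repeated.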

When Does it Work? And Why?
• The algorithms currently used in practice are not well understood theoretically.
• We don’t know if/when they output a good classifier, nor can we say how many labels they will need.
• So we seek algorithms that we can understand and state formal guarantees for.
Rest of this talk: a survey of recent theoretical results.

Standard Supervised Learning Setting
• $S = \{(x, l)\}$: a set of labeled examples, drawn i.i.d. from some distribution $D$ over $X$ and labeled by some target concept $c^* \in C$.
• We want to do optimization over $S$ to find some hypothesis $h$, but we want $h$ to have small error over $D$.
  - $\mathrm{err}(h) = \Pr_{x \in D}(h(x) \neq c^*(x))$
Sample Complexity, Finite Hypothesis Space, Realizable Case
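The bound for the finite, realizable case did not survive in the slide text. As a reminder, the standard textbook form is given below; the failure probability $\delta$ is an assumed symbol that does not appear in the surviving text, and the slide's exact statement may differ.

```latex
\mathrm{err}(h) = \Pr_{x \in D}\bigl(h(x) \neq c^*(x)\bigr),
\qquad
m \;\ge\; \frac{1}{\epsilon}\Bigl(\ln |C| + \ln \frac{1}{\delta}\Bigr)
```

With this many labeled examples, with probability at least $1 - \delta$ every $h \in C$ consistent with $S$ has $\mathrm{err}(h) \le \epsilon$.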

Sample Complexity: Uniform Convergence Bounds
• Infinite hypothesis case. E.g., if $C$ is the class of linear separators in $\mathbb{R}^d$, then we need roughly $O(d/\epsilon)$ examples to achieve generalization error $\epsilon$.
• Non-realizable case: replace $\epsilon$ with $\epsilon^2$.

Active Learning
• We get to see unlabeled data first, and there is a charge for every label.
• The learner has the ability to choose specific examples to be labeled:
  - The learner works harder, in order to use fewer labeled examples.
• How many labels can we save by querying adaptively?

Can adaptive querying help? [CAL 92, Dasgupta 04]
• Consider threshold functions on the real line: $h_w(x) = 1(x \geq w)$, $C = \{h_w : w \in \mathbb{R}\}$.
[Figure: points on a line, labeled − to the left of the threshold $w$ and + to the right.]
• Sample $1/\epsilon$ unlabeled examples.
• Binary search: need just $O(\log 1/\epsilon)$ labels.
Active setting: $O(\log 1/\epsilon)$ labels to find an $\epsilon$-accurate threshold.
Supervised learning needs $O(1/\epsilon)$ labels.
Exponential improvement in sample complexity.
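The binary-search argument can be made concrete with a small sketch. It assumes a noiseless labeling oracle (the hypothetical callable `oracle`) and a pool of unlabeled points drawn in advance; the function name and the toy threshold 0.37 are illustrative only.

```python
import random

def learn_threshold(points, oracle):
    """Binary-search a pool of points; return (threshold_estimate, labels_used)."""
    xs = sorted(points)
    lo, hi = 0, len(xs)      # invariant: xs[:lo] are negative, xs[hi:] are positive
    queries = 0
    while lo < hi:
        mid = (lo + hi) // 2
        queries += 1         # one label request per iteration
        if oracle(xs[mid]):  # label 1 means x >= w
            hi = mid
        else:
            lo = mid + 1
    # Smallest positive point, or +inf if every pooled point is negative.
    estimate = xs[lo] if lo < len(xs) else float("inf")
    return estimate, queries

rng = random.Random(0)
true_w = 0.37
pool = [rng.random() for _ in range(1000)]
estimate, queries = learn_threshold(pool, lambda x: x >= true_w)
```

With a pool of 1000 points the loop requests at most 10 labels, whereas labeling the whole pool would cost 1000. The returned threshold classifies every pooled point correctly.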

Active Learning might not help [Dasgupta 04]
In general, the number of queries needed depends on $C$ and also on $D$.
• $C$ = {linear separators in $\mathbb{R}^1$}: active learning reduces sample complexity substantially.
• $C$ = {linear separators in $\mathbb{R}^2$}: there are some target hypotheses for which no improvement can be achieved, no matter how benign the input distribution.
[Figure: hypotheses $h_0$, $h_1$, $h_2$, $h_3$.]
In this case, learning to accuracy $\epsilon$ requires $1/\epsilon$ labels.

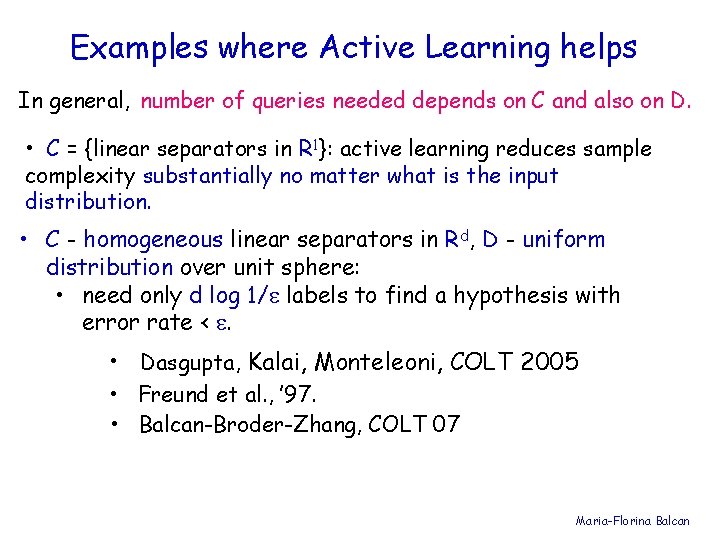

Examples where Active Learning helps
In general, the number of queries needed depends on $C$ and also on $D$.
• $C$ = {linear separators in $\mathbb{R}^1$}: active learning reduces sample complexity substantially, no matter what the input distribution is.
• $C$ = homogeneous linear separators in $\mathbb{R}^d$, $D$ = uniform distribution over the unit sphere: need only $d \log 1/\epsilon$ labels to find a hypothesis with error rate $< \epsilon$.
  - Dasgupta, Kalai, Monteleoni, COLT 2005
  - Freund et al., ’97
  - Balcan-Broder-Zhang, COLT 07

Region of uncertainty [CAL 92]
• Current version space: the part of $C$ consistent with the labels so far.
• “Region of uncertainty”: the part of data space about which there is still some uncertainty (i.e., disagreement within the version space).
• Example: data lies on a circle in $\mathbb{R}^2$ and hypotheses are homogeneous linear separators.
[Figure: current version space; region of uncertainty in data space.]

Region of uncertainty [CAL 92]
Algorithm: pick a few points at random from the current region of uncertainty and query their labels.
[Figure: current version space; region of uncertainty.]
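A minimal sketch of this loop, specialized to threshold functions $h_w(x) = 1(x \geq w)$ on the line so that the version space is just an interval. The finite pool, the noiseless `oracle`, and the `batch` parameter are assumptions made for illustration; this is not the general CAL procedure for arbitrary concept classes.

```python
import random

def cal_threshold(pool, oracle, rng, batch=1):
    """Query random points from the region of uncertainty until it is empty."""
    lo, hi = float("-inf"), float("inf")  # version space: thresholds w with lo < w <= hi
    queries = 0
    while True:
        # Region of uncertainty: pooled points on which consistent thresholds disagree.
        uncertain = [x for x in pool if lo < x < hi]
        if not uncertain:
            return lo, hi, queries
        for x in rng.sample(uncertain, min(batch, len(uncertain))):
            queries += 1
            if oracle(x):        # x is positive, so w <= x
                hi = min(hi, x)
            else:                # x is negative, so w > x
                lo = max(lo, x)

rng = random.Random(1)
true_w = 0.6
pool = [rng.random() for _ in range(500)]
lo, hi, queries = cal_threshold(pool, lambda x: x >= true_w, rng)
```

Points outside the interval are never queried, because every threshold still in the version space already agrees on their label.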

Region of uncertainty [CAL 92]
[Figure: new version space; new region of uncertainty in data space.]

Region of uncertainty [CAL 92], Guarantees
Algorithm: pick a few points at random from the current region of uncertainty and query their labels.
[Balcan, Beygelzimer, Langford, ICML ’06] analyze a version of this algorithm which is robust to noise.
• $C$ = linear separators on the line, low noise: exponential improvement.
• $C$ = homogeneous linear separators in $\mathbb{R}^d$, $D$ = uniform distribution over the unit sphere:
  - low noise: need only $d^2 \log 1/\epsilon$ labels to find a hypothesis with error rate $< \epsilon$;
  - realizable case: $d^{3/2} \log 1/\epsilon$ labels;
  - supervised: $d/\epsilon$ labels.
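To get a feel for these rates, the sketch below evaluates them for one choice of $d$ and $\epsilon$. It assumes every hidden constant equals 1 and uses the natural logarithm, so the numbers indicate relative growth only, not actual label counts.

```python
import math

def label_counts(d, eps):
    """Evaluate the rates above with every hidden constant set to 1."""
    log_term = math.log(1 / eps)
    return {
        "supervised": d / eps,
        "low_noise_active": d ** 2 * log_term,
        "realizable_active": d ** 1.5 * log_term,
    }

counts = label_counts(d=10, eps=0.01)
```

For $d = 10$ and $\epsilon = 0.01$ the supervised rate is the largest of the three. The ordering depends on both parameters: for large $d$ and moderate $\epsilon$, the $d^2 \log 1/\epsilon$ term can exceed $d/\epsilon$.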

Margin Based Active-Learning Algorithm [Balcan-Broder-Zhang, COLT 07]
Use $O(d)$ examples to find $w_1$ of error $1/8$.
iterate $k = 2, \ldots, \log(1/\epsilon)$
• rejection sample $m_k$ samples $x$ from $D$ satisfying $|w_{k-1}^T \cdot x| \leq \gamma_k$;
• label them;
• find $w_k \in B(w_{k-1}, 1/2^k)$ consistent with all these examples.
end iterate
[Figure: $w_k$, $w_{k+1}$, $w^*$, $\gamma_k$.]
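The rejection-sampling step of one round can be sketched as follows, assuming $D$ is uniform on the unit sphere. The function names are hypothetical, and the sketch stops before the "find a consistent $w_k$" step, which needs a separate solver.

```python
import math
import random

def uniform_on_sphere(d, rng):
    """Draw a point uniformly from the unit sphere in R^d (normalized Gaussian)."""
    while True:
        v = [rng.gauss(0.0, 1.0) for _ in range(d)]
        norm = math.sqrt(sum(c * c for c in v))
        if norm > 0:
            return [c / norm for c in v]

def sample_in_band(w_prev, gamma, m, rng):
    """Rejection-sample m points x with |w_prev . x| <= gamma."""
    accepted = []
    while len(accepted) < m:
        x = uniform_on_sphere(len(w_prev), rng)
        if abs(sum(wi * xi for wi, xi in zip(w_prev, x))) <= gamma:
            accepted.append(x)  # only these points are sent to the labeler
    return accepted

rng = random.Random(0)
w_prev = [1.0, 0.0, 0.0]
band = sample_in_band(w_prev, gamma=0.2, m=50, rng=rng)
```

Points outside the band are discarded without a label request, so labels are spent only near the current separator.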

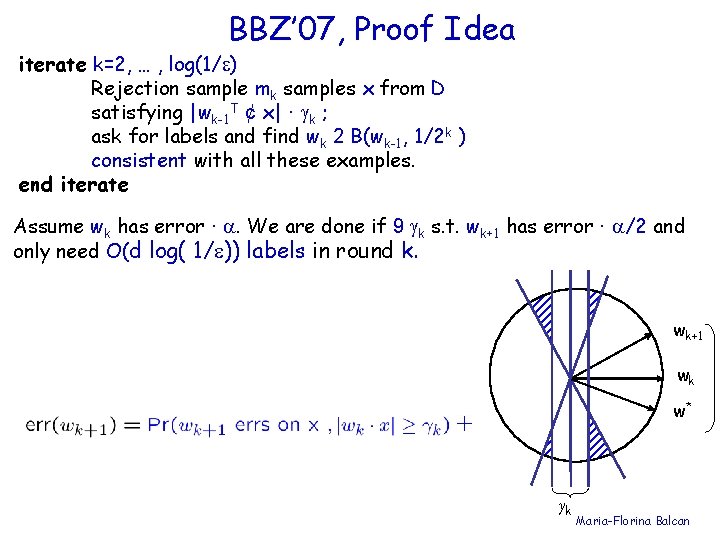

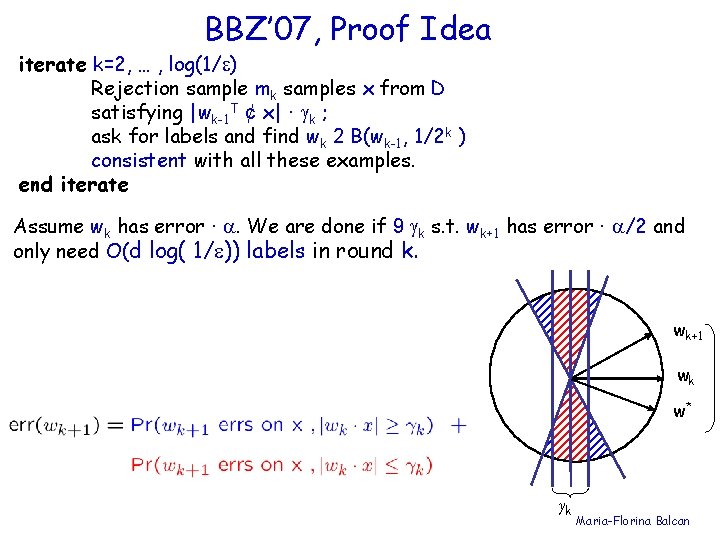

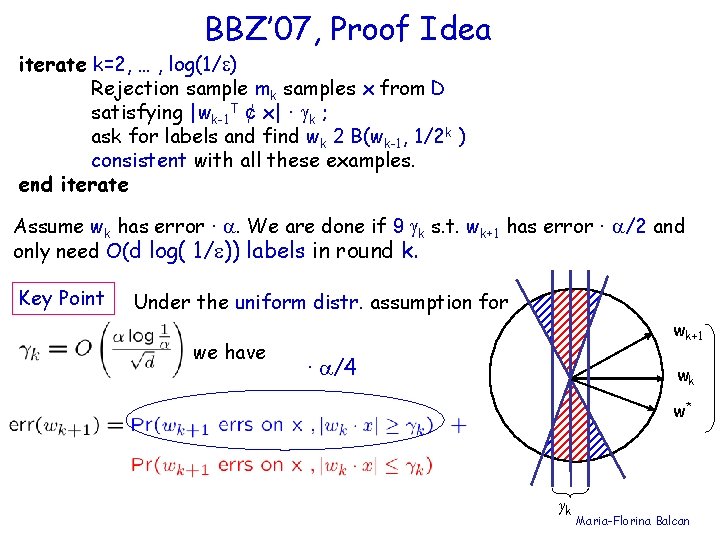

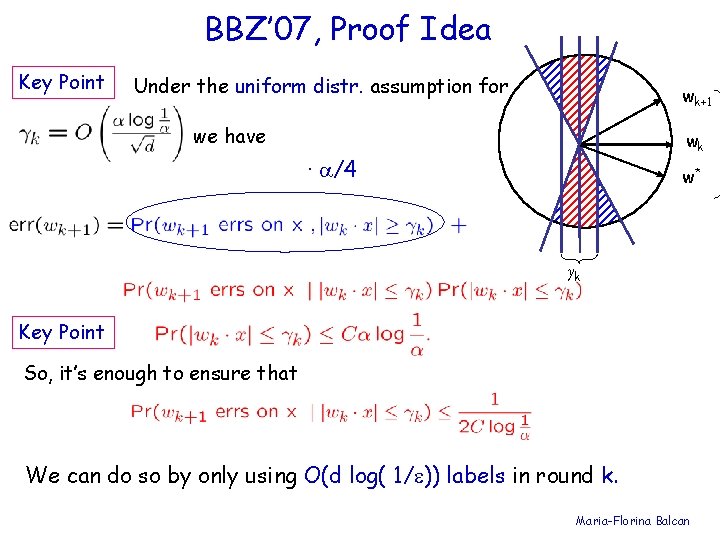

BBZ ’07, Proof Idea
iterate $k = 2, \ldots, \log(1/\epsilon)$
  Rejection sample $m_k$ samples $x$ from $D$ satisfying $|w_{k-1}^T \cdot x| \leq \gamma_k$;
  ask for labels and find $w_k \in B(w_{k-1}, 1/2^k)$ consistent with all these examples.
end iterate
Assume $w_k$ has error $\leq \alpha$. We are done if $\exists\, \gamma_k$ s.t. $w_{k+1}$ has error $\leq \alpha/2$ and we only need $O(d \log(1/\epsilon))$ labels in round $k$.
[Figure: $w_k$, $w_{k+1}$, $w^*$, $\gamma_k$.]

BBZ ’07, Proof Idea
Key Point: under the uniform distribution assumption, for [condition missing] we have [quantity missing] $\leq \alpha/4$.
[Figure: $w_k$, $w_{k+1}$, $w^*$, $\gamma_k$.]
Key Point: so it’s enough to ensure that [condition missing]. We can do so by only using $O(d \log(1/\epsilon))$ labels in round $k$.

General Theories of Active Learning

General Concept Spaces
• In the general learning problem, there is a concept space $C$, and we want to find an $\epsilon$-optimal classifier $h \in C$ with high probability $1 - \delta$.

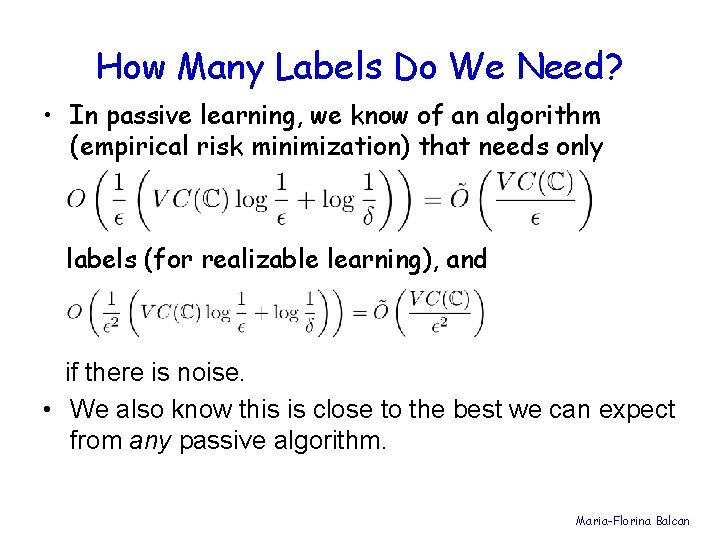

How Many Labels Do We Need?
• In passive learning, we know of an algorithm (empirical risk minimization) that needs only [bound missing] labels (for realizable learning), and [bound missing] if there is noise.
• We also know this is close to the best we can expect from any passive algorithm.
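The two bounds did not survive in the slide text. For orientation, the standard textbook forms for empirical risk minimization over a class of VC dimension $d$ are given below; $d$ and $\delta$ are assumed symbols here, and the slide's own expressions may have been stated differently.

```latex
\text{realizable:}\quad
m = O\!\left(\frac{1}{\epsilon}\Bigl(d \log\frac{1}{\epsilon} + \log\frac{1}{\delta}\Bigr)\right),
\qquad
\text{with noise:}\quad
m = O\!\left(\frac{1}{\epsilon^{2}}\Bigl(d + \log\frac{1}{\delta}\Bigr)\right)
```

These agree with the earlier rule of thumb: roughly $d/\epsilon$ in the realizable case, with $\epsilon$ replaced by $\epsilon^2$ in the non-realizable case.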

How Many Labels Do We Need?
• As before, we want to explore the analogous idea for Active Learning (but now for a general concept space $C$).
• How many label requests are necessary and sufficient for Active Learning?
• What are the relevant complexity measures? (i.e., the Active Learning analogue of VC dimension)

What ARE the Interesting Quantities?
• Generally speaking, we want examples whose labels are highly controversial among the set of remaining concepts.
• The likelihood of drawing such an informative example is an important quantity to consider.
• But there are many ways to define “informative” in general.

What Do You Mean By “Informative”?
• Want examples that reduce the version space.
• But how do we measure progress?
  - A problem-specific measure $P$ on $C$?
  - The diameter?
  - Measure of the region of disagreement?
  - Cover size? (see e.g. Hanneke, COLT 2007)
All of these seem to have interesting theories associated with them. As an example, let’s take a look at Diameter in detail.

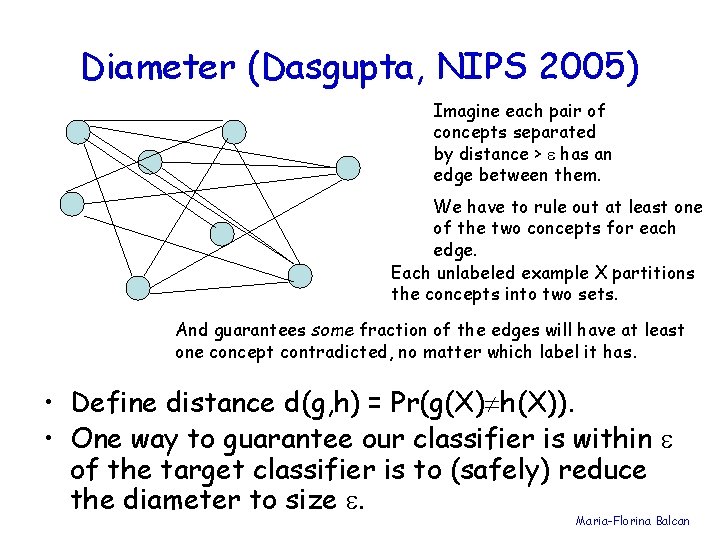

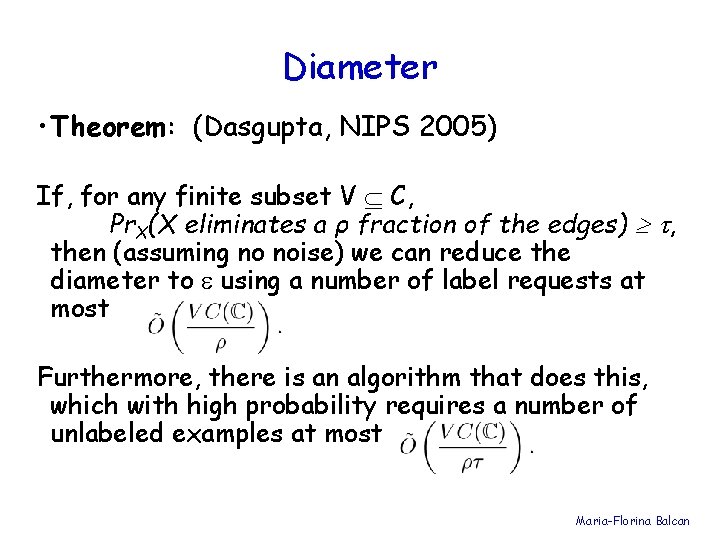

Diameter (Dasgupta, NIPS 2005)
• Define distance $d(g, h) = \Pr(g(X) \neq h(X))$.
• One way to guarantee our classifier is within $\epsilon$ of the target classifier is to (safely) reduce the diameter to size $\epsilon$.
Imagine each pair of concepts separated by distance $> \epsilon$ has an edge between them. We have to rule out at least one of the two concepts for each edge. Each unlabeled example $X$ partitions the concepts into two sets, and guarantees that some fraction of the edges will have at least one concept contradicted, no matter which label it has.
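The edge picture can be made concrete for a small finite set of concepts. The sketch below is illustrative only: it estimates $d(g, h)$ on a fixed sample rather than under the true distribution, uses threshold functions as the concepts, and all function names are hypothetical.

```python
from itertools import combinations

def disagreement(g, h, sample):
    """Empirical d(g, h): fraction of sample points where g and h differ."""
    return sum(g(x) != h(x) for x in sample) / len(sample)

def far_edges(hyps, sample, eps):
    """Index pairs of hypotheses at empirical distance greater than eps."""
    return [(i, j) for i, j in combinations(range(len(hyps)), 2)
            if disagreement(hyps[i], hyps[j], sample) > eps]

def guaranteed_split(hyps, edges, x):
    """Fraction of edges that lose an endpoint whichever label x receives."""
    if not edges:
        return 1.0
    fractions = []
    for label in (0, 1):
        # An edge survives only if both endpoints agree with the label.
        surviving = sum(hyps[i](x) == label and hyps[j](x) == label
                        for i, j in edges)
        fractions.append(1 - surviving / len(edges))
    return min(fractions)

def threshold(w):
    return lambda x: int(x >= w)

sample = [k / 100 for k in range(100)]
hyps = [threshold(w) for w in (0.1, 0.3, 0.5, 0.7, 0.9)]
edges = far_edges(hyps, sample, eps=0.25)
middle = guaranteed_split(hyps, edges, x=0.5)
extreme = guaranteed_split(hyps, edges, x=0.05)
```

Here a query in the middle of the thresholds is guaranteed to eliminate most edges under either label, while a query below every threshold guarantees nothing, since the label 0 is consistent with all five concepts.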

Diameter
• Theorem (Dasgupta, NIPS 2005): If, for any finite subset $V \subseteq C$, $\Pr_X(X \text{ eliminates a } \rho \text{ fraction of the edges}) \geq \tau$, then (assuming no noise) we can reduce the diameter to $\epsilon$ using a number of label requests at most [bound missing]. Furthermore, there is an algorithm that does this, which with high probability requires a number of unlabeled examples at most [bound missing].

Open Problems in Active Learning
• Efficient (correct) learning algorithms for linear separators provably achieving significant improvements on many distributions.
• What about binary feature spaces?
• Tight general-purpose sample complexity bounds, for both realizable and agnostic.
• An optimal active learning algorithm?