Introduction to Probabilistic Image Processing and Bayesian Networks

Kazuyuki Tanaka
Graduate School of Information Sciences, Tohoku University, Sendai, Japan
http://www.smapip.is.tohoku.ac.jp/~kazu/

Collaborators:
Prof. Mike Titterington (University of Glasgow, UK)
Dr. Koji Tsuda (MPI for Biological Cybernetics, Germany)
Dr. Muneki Yasuda (Tohoku University, Japan)

29 December, 2008, National Tsing Hua University, Taiwan

Contents
1. Introduction
2. Probabilistic Image Processing
3. Gaussian Graphical Model
4. Belief Propagation
5. Other Application
6. Concluding Remarks

1. Introduction

More is different
Probabilistic information processing can give unexpected capabilities to a system constructed from many cooperating elements with randomness. The situation is similar to the natural world, where everything is made up of many molecules with interactions and fluctuations. A collection of molecules can give rise to unpredictable phenomena. In physics this is often called "More is different".

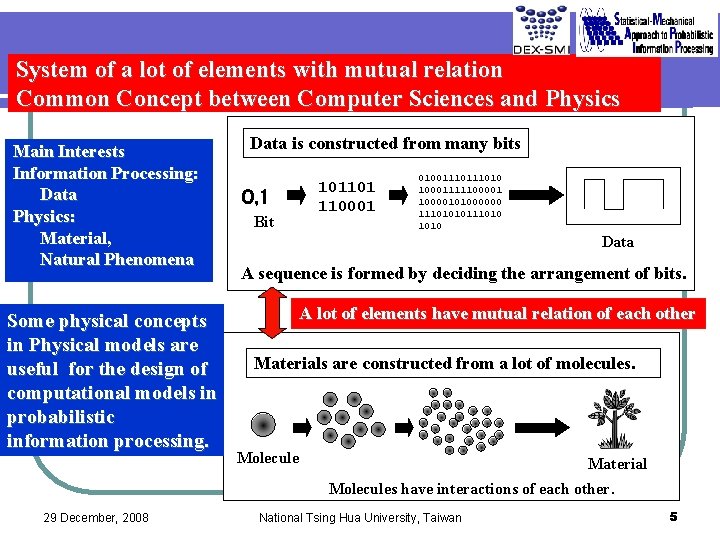

Common Concept between Computer Sciences and Physics
Both fields deal with systems of many elements that have mutual relations with each other.
Main interests:
- Information processing: data
- Physics: materials, natural phenomena
Data are constructed from many bits (0, 1). A sequence is formed by deciding the arrangement of bits.
Materials are constructed from many molecules. Molecules interact with each other.
Some physical concepts in physical models are useful for the design of computational models in probabilistic information processing.

More is different in informatics as well.
Our goal is to establish theoretical paradigms for probabilistic information processing by means of statistical science and statistical physics. Probabilistic information processing is based on both the modeling of problems and the design of algorithms, and is often realized with graphical models, including Bayesian networks.

Purpose of My Talk
- Review of the formulation of probabilistic models for image processing by means of conventional statistical schemes.
- Review of probabilistic image processing using the Gaussian graphical model (Gauss Markov random fields) as the most basic example.
- Review of how to construct a belief propagation algorithm for image processing.

2. Probabilistic Image Processing

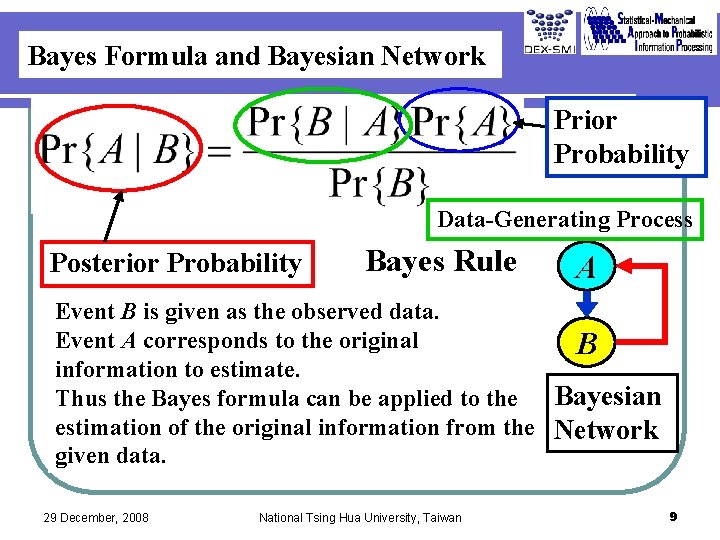

Bayes Formula and Bayesian Network
Bayes rule: $\Pr\{A \mid B\} = \Pr\{B \mid A\}\,\Pr\{A\} / \Pr\{B\}$, where $\Pr\{A\}$ is the prior probability, $\Pr\{B \mid A\}$ is the data-generating process and $\Pr\{A \mid B\}$ is the posterior probability.
Event B is given as the observed data. Event A corresponds to the original information to be estimated. Thus the Bayes formula can be applied to the estimation of the original information from the given data.
(Figure: Bayesian network with nodes A and B.)
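
As a small numerical illustration of the Bayes formula, the sketch below computes a posterior for a single binary pixel. The prior value 0.7 and the flip probability 0.2 are hypothetical numbers chosen for this example; they do not come from the talk.

```python
def posterior(prior, likelihood, observed):
    """Return P(A=a | B=observed) for every a via Bayes' rule.

    prior[a]         = P(A=a)
    likelihood[a][b] = P(B=b | A=a)
    """
    joint = {a: prior[a] * likelihood[a][observed] for a in prior}
    evidence = sum(joint.values())  # P(B=observed)
    return {a: joint[a] / evidence for a in joint}


# Hypothetical numbers: the original pixel (event A) is white with prior 0.7,
# and the observation (event B) shows the wrong colour with probability 0.2.
prior = {"white": 0.7, "black": 0.3}
likelihood = {
    "white": {"white": 0.8, "black": 0.2},
    "black": {"white": 0.2, "black": 0.8},
}
post = posterior(prior, likelihood, observed="black")
print(post)
```

Even though white is more likely a priori, observing black shifts the posterior towards black because the assumed observation is fairly reliable.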

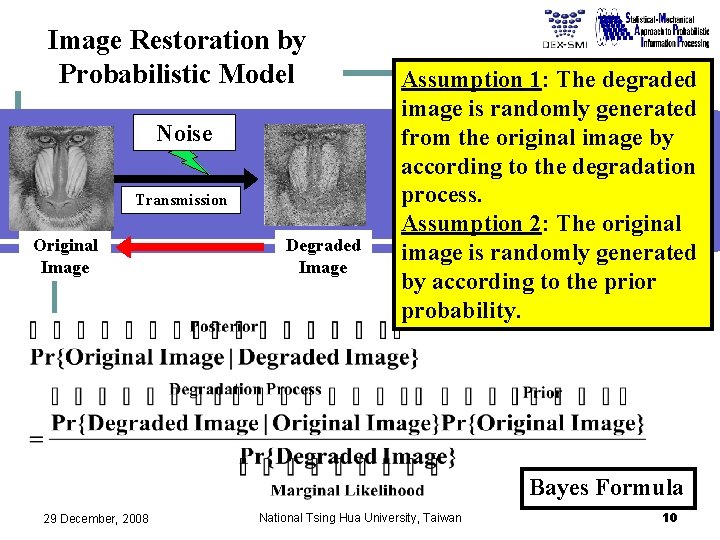

Image Restoration by Probabilistic Model
(Figure: the original image is transmitted through a noisy channel and becomes the degraded image.)
Assumption 1: The degraded image is randomly generated from the original image according to the degradation process.
Assumption 2: The original image is randomly generated according to the prior probability.
The Bayes formula combines the two assumptions to estimate the original image from the degraded image.

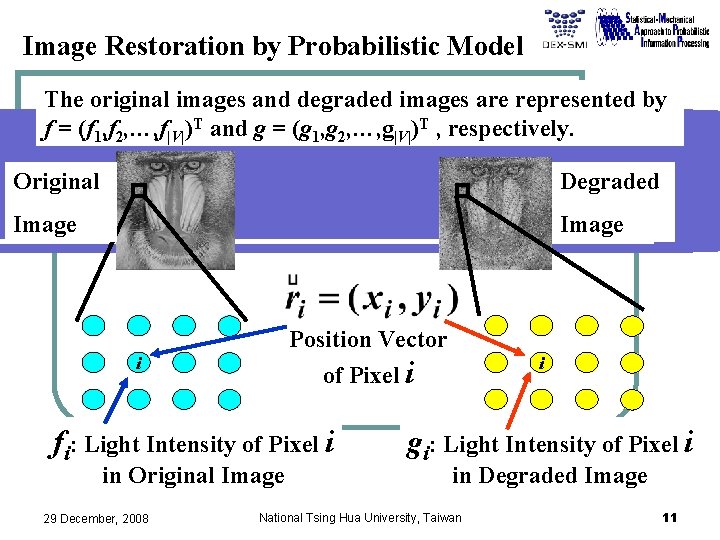

Image Restoration by Probabilistic Model
The original image and the degraded image are represented by $f = (f_1, f_2, \ldots, f_{|V|})^{\mathrm{T}}$ and $g = (g_1, g_2, \ldots, g_{|V|})^{\mathrm{T}}$, respectively.
i: position vector of pixel i
$f_i$: light intensity of pixel i in the original image
$g_i$: light intensity of pixel i in the degraded image

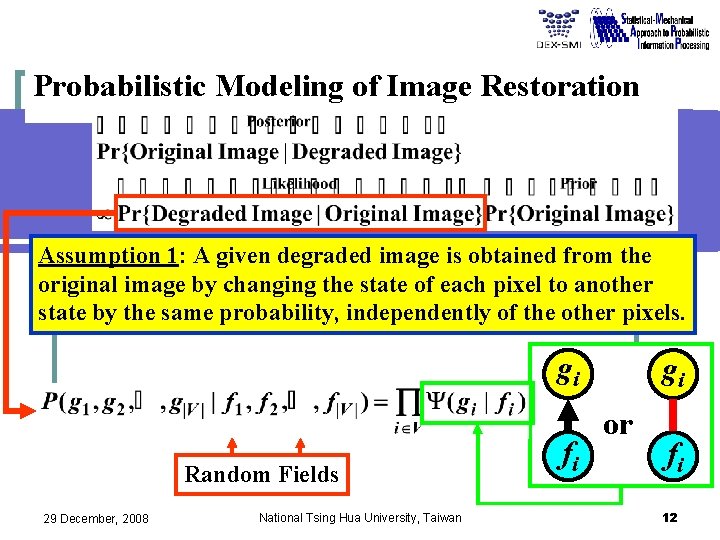

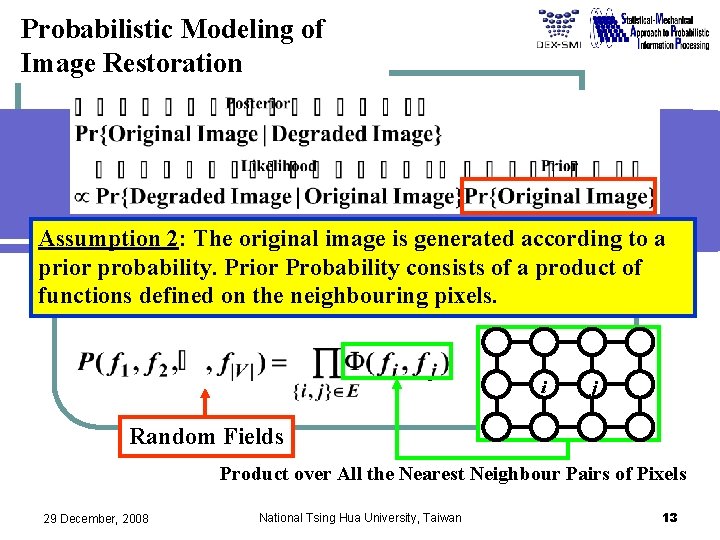

Probabilistic Modeling of Image Restoration
Assumption 1: A given degraded image is obtained from the original image by changing the state of each pixel to another state with the same probability, independently of the other pixels.
(Figure: random fields; each degraded pixel $g_i$ either differs from or equals the original pixel $f_i$.)
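
Assumption 1 can be sketched for a binary image as follows. This is a minimal illustration that assumes pixels take the values 0 and 1; the function name `degrade_binary` and the flip probability `p` are hypothetical choices for this example.

```python
import random


def degrade_binary(image, p, rng):
    """Flip each 0/1 pixel independently with the same probability p."""
    return [[1 - f if rng.random() < p else f for f in row] for row in image]


rng = random.Random(0)
original = [[0, 0, 1, 1] for _ in range(4)]
degraded = degrade_binary(original, p=0.2, rng=rng)
flipped = sum(f != g
              for f_row, g_row in zip(original, degraded)
              for f, g in zip(f_row, g_row))
print(degraded, flipped)
```

Because every pixel is treated independently, the probability of the whole degraded image given the original factorizes into a product over pixels.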

Probabilistic Modeling of Image Restoration
Assumption 2: The original image is generated according to a prior probability. The prior probability consists of a product of functions defined on neighbouring pixels i and j, where the product is taken over all the nearest-neighbour pairs of pixels.

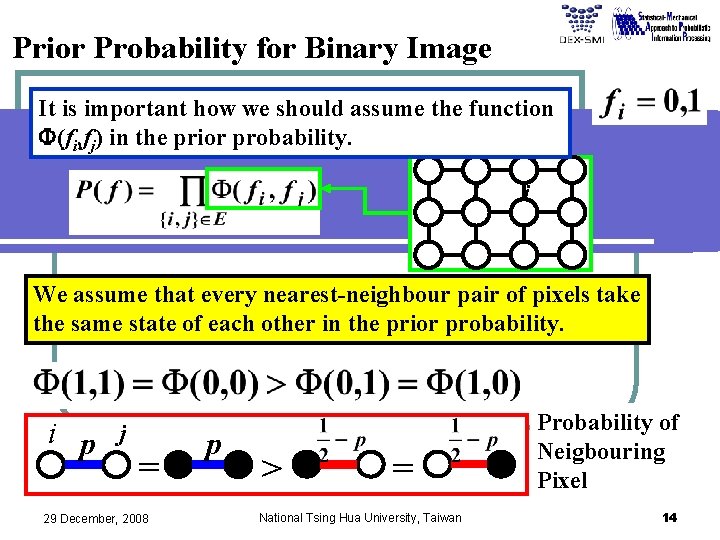

Prior Probability for Binary Image
The choice of the function F(f_i, f_j) in the prior probability is important.
We assume that every nearest-neighbour pair of pixels i and j tends to take the same state in the prior probability.
(Figure: probability of a neighbouring pair of pixels; a pair in the same state has a larger probability than a pair in different states.)

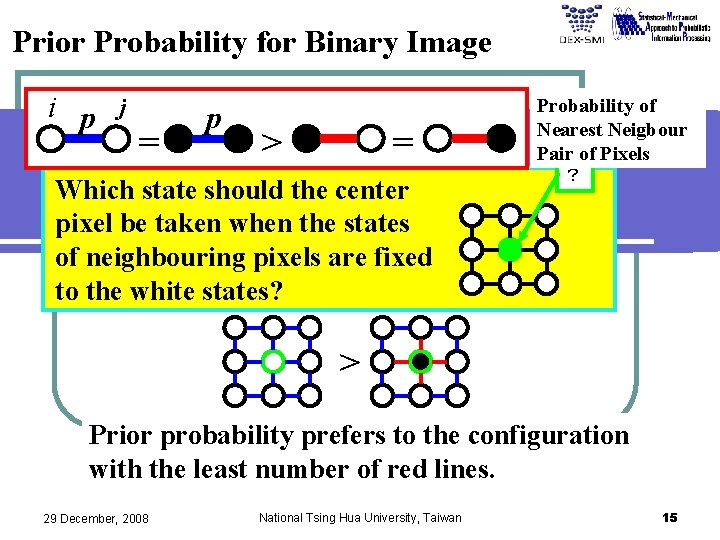

Prior Probability for Binary Image
Which state should the centre pixel take when the states of the neighbouring pixels are all fixed to white?
The prior probability prefers the configuration with the fewest red lines in the figure.
(Figure: probability of a nearest-neighbour pair of pixels.)
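
The preference for few red lines can be checked numerically. The sketch below assumes that a red line marks a nearest-neighbour pair in different states and that each such pair multiplies the unnormalized prior by exp(-alpha); both the weight form and the parameter `alpha` are assumptions made for illustration.

```python
import math


def disagreeing_pairs(image):
    """Count nearest-neighbour pairs (the set E) whose states differ."""
    rows, cols = len(image), len(image[0])
    count = 0
    for r in range(rows):
        for c in range(cols):
            if c + 1 < cols and image[r][c] != image[r][c + 1]:
                count += 1
            if r + 1 < rows and image[r][c] != image[r + 1][c]:
                count += 1
    return count


def unnormalized_prior(image, alpha):
    """Assumed prior weight: exp(-alpha) for every disagreeing pair."""
    return math.exp(-alpha * disagreeing_pairs(image))


# 1 = white, 0 = black; all neighbours of the centre pixel are white.
white_centre = [[1, 1, 1], [1, 1, 1], [1, 1, 1]]
black_centre = [[1, 1, 1], [1, 0, 1], [1, 1, 1]]
print(disagreeing_pairs(white_centre), disagreeing_pairs(black_centre))
print(unnormalized_prior(white_centre, 1.0) > unnormalized_prior(black_centre, 1.0))
```

With a white centre there are no disagreeing pairs, whereas a black centre creates four, so the assumed prior favours the white centre for any positive `alpha`.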

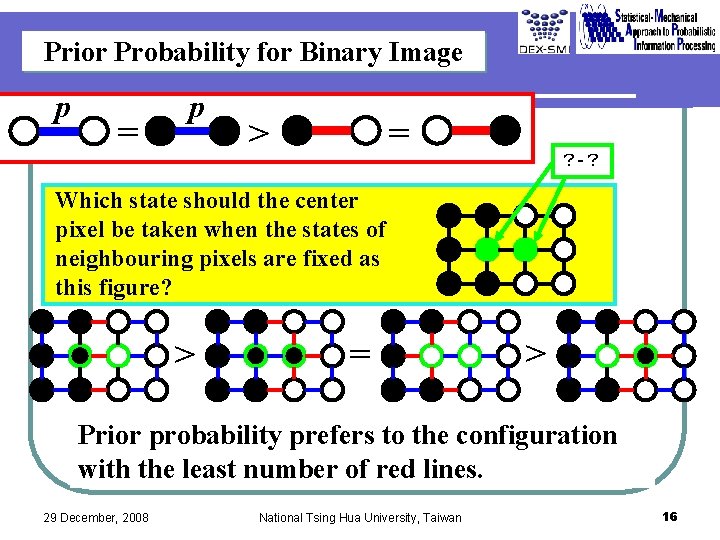

Prior Probability for Binary Image
Which state should the centre pixel take when the states of the neighbouring pixels are fixed as in this figure?
The prior probability prefers the configuration with the fewest red lines in the figure.

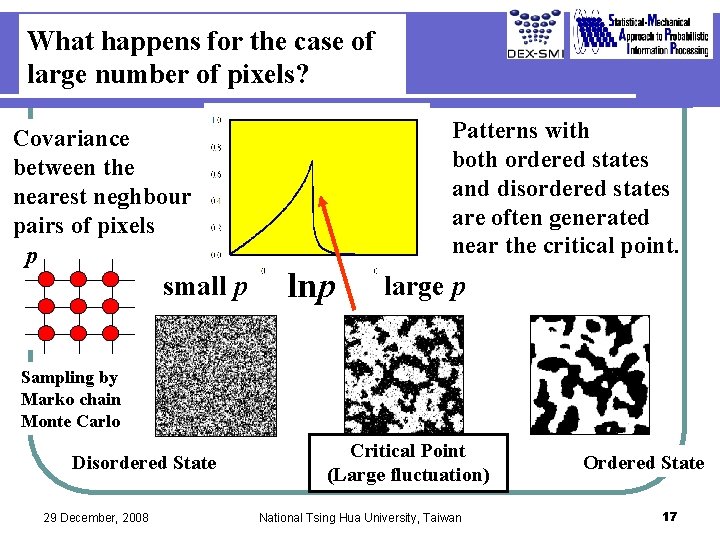

What happens when the number of pixels is large?
Patterns are sampled by Markov chain Monte Carlo.
(Figure: covariance between nearest-neighbour pairs of pixels plotted against ln p, from the disordered state at small p, through the critical point with large fluctuations, to the ordered state at large p.)
Patterns with both ordered and disordered regions are often generated near the critical point.
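
One common way to realize such sampling is a Gibbs sampler, a particular Markov chain Monte Carlo method. The sketch below assumes an Ising-type prior proportional to exp(alpha * sum of f_i * f_j over nearest-neighbour pairs) with pixel values +1 and -1 and free boundaries. The coupling `alpha` is a hypothetical parametrization that plays the role of the parameter on the slide; the talk does not state which sampler was used.

```python
import math
import random


def gibbs_sample(size, alpha, sweeps, rng):
    """Sample a size x size pattern of +1/-1 pixels by Gibbs sampling.

    Assumed prior: P(f) proportional to exp(alpha * sum over pairs f_i * f_j).
    """
    f = [[rng.choice((-1, 1)) for _ in range(size)] for _ in range(size)]
    for _ in range(sweeps):
        for r in range(size):
            for c in range(size):
                # Sum of the nearest neighbours (free boundary conditions).
                s = 0
                if r > 0:
                    s += f[r - 1][c]
                if r < size - 1:
                    s += f[r + 1][c]
                if c > 0:
                    s += f[r][c - 1]
                if c < size - 1:
                    s += f[r][c + 1]
                # Conditional probability of +1 given the neighbours.
                p_up = 1.0 / (1.0 + math.exp(-2.0 * alpha * s))
                f[r][c] = 1 if rng.random() < p_up else -1
    return f


def neighbour_agreement(f):
    """Fraction of nearest-neighbour pairs that share the same state."""
    size = len(f)
    same = total = 0
    for r in range(size):
        for c in range(size):
            if c + 1 < size:
                same += f[r][c] == f[r][c + 1]
                total += 1
            if r + 1 < size:
                same += f[r][c] == f[r + 1][c]
                total += 1
    return same / total


rng = random.Random(0)
disordered = gibbs_sample(16, alpha=0.0, sweeps=20, rng=rng)
ordered = gibbs_sample(16, alpha=2.0, sweeps=20, rng=rng)
print(neighbour_agreement(disordered), neighbour_agreement(ordered))
```

A weak coupling gives patterns in which neighbours agree about half of the time, while a strong coupling gives large uniform regions; intermediate values produce the mixed patterns described for the critical point.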

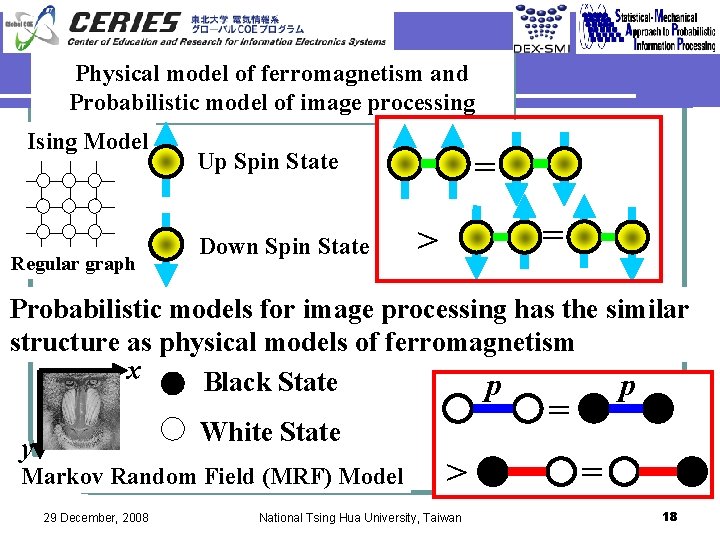

Physical model of ferromagnetism and probabilistic model of image processing
Ising model (regular graph): each site is in the up spin state or the down spin state.
Markov random field (MRF) model: each pixel is in the black state or the white state.
Probabilistic models for image processing have a structure similar to that of physical models of ferromagnetism.

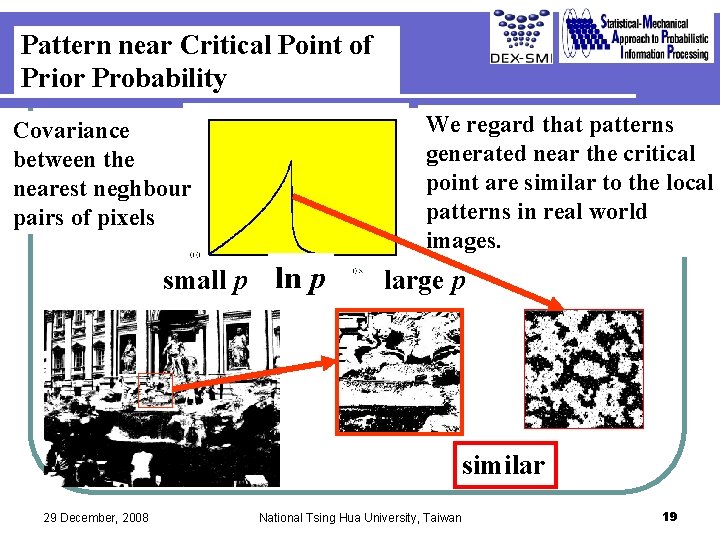

Pattern near Critical Point of Prior Probability
We regard the patterns generated near the critical point as similar to the local patterns in real-world images.
(Figure: covariance between nearest-neighbour pairs of pixels plotted against ln p, from small p to large p.)

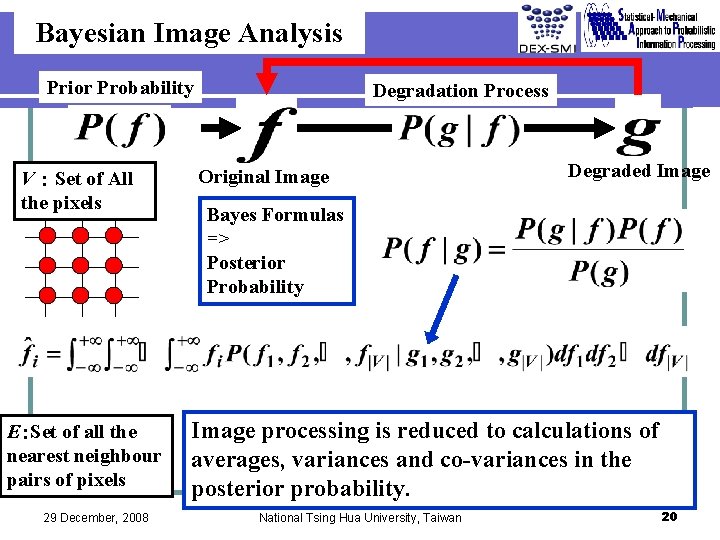

Bayesian Image Analysis
V: set of all the pixels
E: set of all the nearest-neighbour pairs of pixels
The prior probability (original image) and the degradation process (degraded image) are combined by the Bayes formula to give the posterior probability.
Image processing is reduced to calculations of averages, variances and covariances in the posterior probability.

3. Gaussian Graphical Model

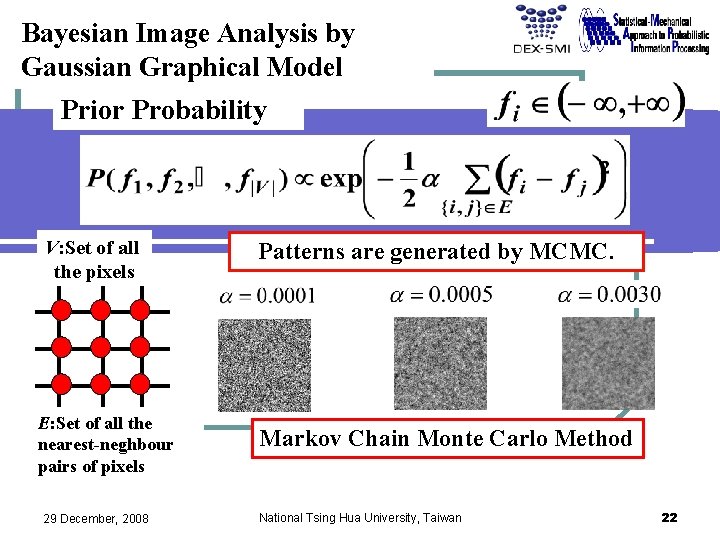

Bayesian Image Analysis by Gaussian Graphical Model
Prior probability
V: set of all the pixels
E: set of all the nearest-neighbour pairs of pixels
Patterns are generated by MCMC (Markov chain Monte Carlo method).

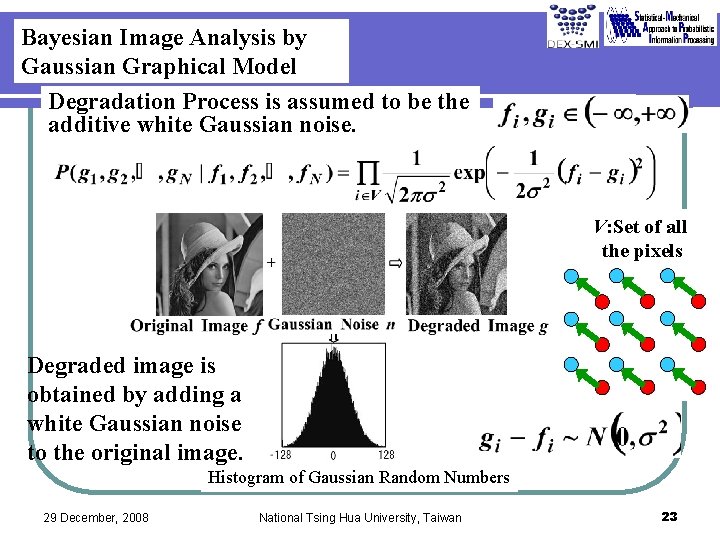

Bayesian Image Analysis by Gaussian Graphical Model
The degradation process is assumed to be additive white Gaussian noise: the degraded image is obtained by adding white Gaussian noise to the original image.
V: set of all the pixels
(Figure: histogram of Gaussian random numbers.)
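
The additive white Gaussian noise degradation can be sketched as below. The noise level `sigma = 40` and the flat test image are hypothetical values used only for this example.

```python
import random


def add_white_gaussian_noise(image, sigma, rng):
    """Return g with g_i = f_i + n_i, where n_i ~ N(0, sigma^2) independently."""
    return [[f + rng.gauss(0.0, sigma) for f in row] for row in image]


rng = random.Random(0)
original = [[100.0] * 64 for _ in range(64)]
degraded = add_white_gaussian_noise(original, sigma=40.0, rng=rng)

# The sample variance of the noise should be close to sigma^2 = 1600.
noise = [g - 100.0 for row in degraded for g in row]
print(sum(n * n for n in noise) / len(noise))
```

"White" here means that the noise values at different pixels are independent and share the same variance.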

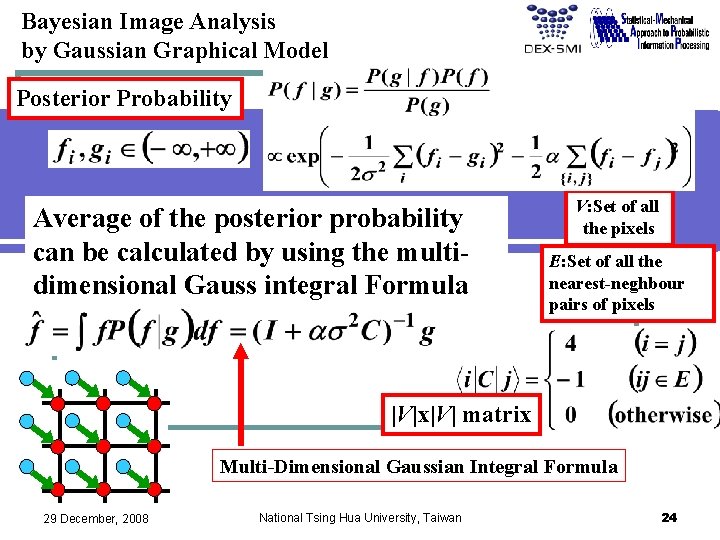

Bayesian Image Analysis by Gaussian Graphical Model
Posterior probability
The average of the posterior probability can be calculated by using the multi-dimensional Gaussian integral formula, which involves a |V| x |V| matrix.
V: set of all the pixels
E: set of all the nearest-neighbour pairs of pixels
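
The formulas on this slide were not preserved, so the following is only a sketch under an assumed model: a prior proportional to exp(-(alpha/2) * sum over pairs (f_i - f_j)^2) and noise of variance sigma^2. Under that assumption the posterior mean m solves the linear system (I + lam * C) m = g with lam = alpha * sigma^2, where C is the |V| x |V| graph Laplacian of the square lattice. The code solves the system by Gauss-Seidel sweeps rather than by an explicit matrix inverse; the names `posterior_mean` and `lam` are hypothetical.

```python
def posterior_mean(g, lam, iterations=200):
    """Solve (I + lam * C) m = g by Gauss-Seidel sweeps.

    Row i of the system reads
    (1 + lam * deg_i) * m_i - lam * (sum of neighbouring m_j) = g_i,
    where deg_i is the number of nearest neighbours of pixel i.
    """
    rows, cols = len(g), len(g[0])
    m = [row[:] for row in g]  # start from the degraded image
    for _ in range(iterations):
        for r in range(rows):
            for c in range(cols):
                nb = [m[rr][cc]
                      for rr, cc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                      if 0 <= rr < rows and 0 <= cc < cols]
                m[r][c] = (g[r][c] + lam * sum(nb)) / (1.0 + lam * len(nb))
    return m


g = [[0.0, 255.0, 0.0],
     [255.0, 0.0, 255.0],
     [0.0, 255.0, 0.0]]
for row in posterior_mean(g, lam=0.5):
    print([round(v, 1) for v in row])
```

A larger `lam` (stronger prior or noisier data) smooths more, while `lam = 0` returns the degraded image unchanged.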

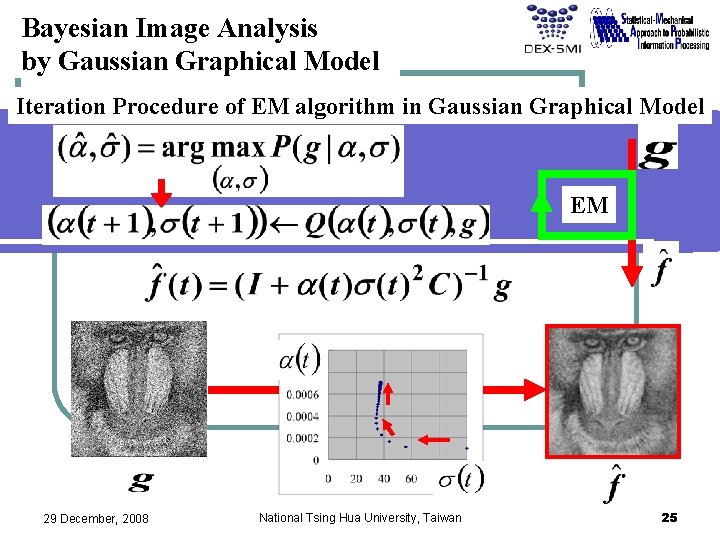

Bayesian Image Analysis by Gaussian Graphical Model
Iteration procedure of the EM algorithm in the Gaussian graphical model

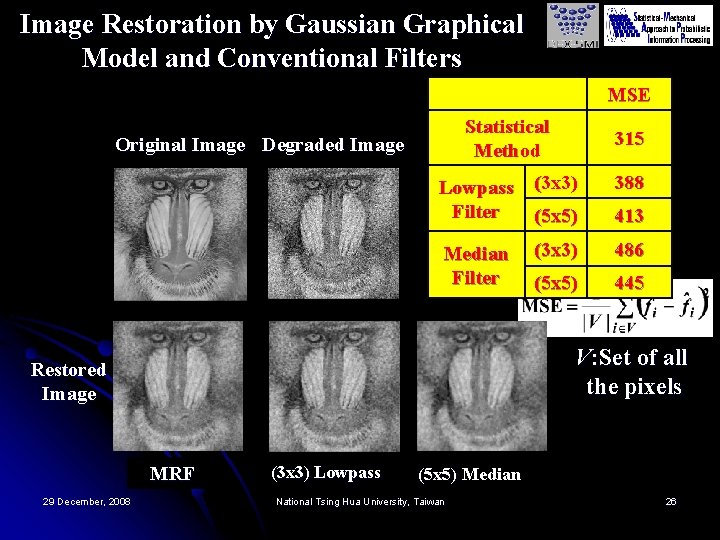

Image Restoration by Gaussian Graphical Model and Conventional Filters
(Figure: original image, degraded image, and images restored by the MRF-based statistical method, lowpass filters and median filters.)
V: set of all the pixels
MSE of the restored images:
- Statistical method (MRF): 315
- Lowpass filter (3 x 3): 388
- Lowpass filter (5 x 5): 413
- Median filter (3 x 3): 486
- Median filter (5 x 5): 445
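
For readers who want to reproduce this kind of comparison, the sketch below shows one way to compute the mean squared error (MSE) and to apply simple lowpass (mean) and median filters. The border handling (windows clipped at the image edge) and the tiny test image are assumptions of this example, not details taken from the talk.

```python
from statistics import mean, median


def mse(a, b):
    """Mean squared error between two equally sized images."""
    n = len(a) * len(a[0])
    return sum((x - y) ** 2
               for row_a, row_b in zip(a, b)
               for x, y in zip(row_a, row_b)) / n


def window_filter(image, reducer, radius=1):
    """Apply reducer (mean or median) over a (2*radius+1)^2 window.

    Windows are clipped at the image border.
    """
    rows, cols = len(image), len(image[0])
    out = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            window = [image[rr][cc]
                      for rr in range(max(0, r - radius), min(rows, r + radius + 1))
                      for cc in range(max(0, c - radius), min(cols, c + radius + 1))]
            out_row.append(reducer(window))
        out.append(out_row)
    return out


clean = [[10] * 3 for _ in range(3)]
noisy = [[10, 10, 10], [10, 200, 10], [10, 10, 10]]
print(mse(clean, noisy))
print(mse(clean, window_filter(noisy, mean)))
print(mse(clean, window_filter(noisy, median)))
```

Passing `radius=2` gives the 5 x 5 variants of both filters.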

4. Belief Propagation

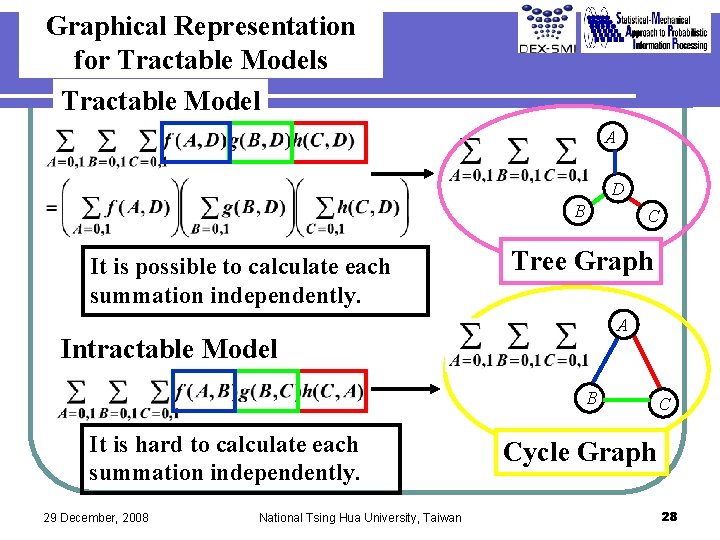

Graphical Representation for Tractable Models
Tractable model (tree graph with nodes A, B, C and D): it is possible to calculate each summation independently.
Intractable model (cycle graph with nodes A, B and C): it is hard to calculate each summation independently.
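
The tractable case can be illustrated with a small calculation. The sketch assumes a star-shaped tree in which A is connected to B, C and D, and a hypothetical pairwise weight `w`; the exact tree drawn on the slide is not recoverable, so this layout is an assumption.

```python
from itertools import product

STATES = (0, 1)


def w(x, y):
    """Hypothetical pairwise weight that favours equal states."""
    return 2.0 if x == y else 1.0


def z_brute_force():
    """Sum over all 2^4 joint states of (A, B, C, D)."""
    return sum(w(a, b) * w(a, c) * w(a, d)
               for a, b, c, d in product(STATES, repeat=4))


def z_factorized():
    """Sum out each leaf independently, then sum over A."""
    total = 0.0
    for a in STATES:
        leaf_sum = sum(w(a, x) for x in STATES)  # identical for B, C and D
        total += leaf_sum ** 3
    return total


print(z_brute_force(), z_factorized())
```

On a tree the joint sum splits into a product of small sums, so the cost grows linearly with the number of nodes instead of exponentially. On a cycle no variable can be summed out without coupling two others, which is why the same trick does not apply directly.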

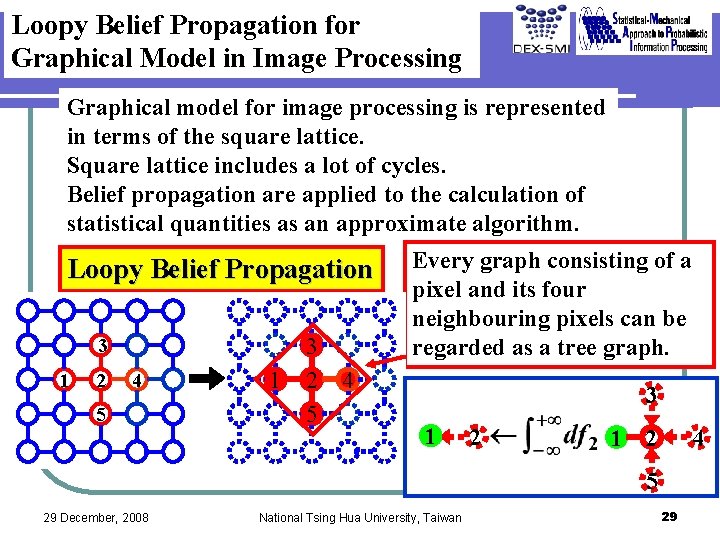

Loopy Belief Propagation for Graphical Model in Image Processing
The graphical model for image processing is represented in terms of the square lattice, which includes many cycles. Belief propagation is applied to the calculation of statistical quantities as an approximate algorithm (loopy belief propagation).
Every graph consisting of a pixel and its four neighbouring pixels can be regarded as a tree graph.
(Figure: a pixel and its four neighbouring pixels, numbered 1 to 5.)

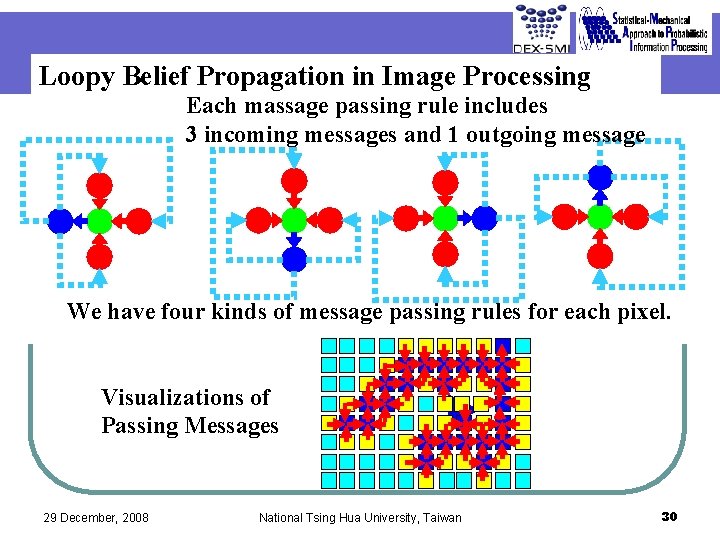

Loopy Belief Propagation in Image Processing
Each message passing rule includes 3 incoming messages and 1 outgoing message. We have four kinds of message passing rules for each pixel.
(Figure: visualizations of passing messages.)
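
A minimal sketch of such message passing is given below for a binary pairwise model on a square lattice, using the standard sum-product update. The local evidence `phi`, the coupling `alpha` and the pairwise weight exp(alpha) for equal states are assumptions of this example; the talk applies belief propagation to its own models, whose formulas are not preserved here.

```python
import math


def neighbours(i, rows, cols):
    """Indices of the nearest neighbours of pixel i on a rows x cols lattice."""
    r, c = divmod(i, cols)
    out = []
    if r > 0:
        out.append(i - cols)
    if r < rows - 1:
        out.append(i + cols)
    if c > 0:
        out.append(i - 1)
    if c < cols - 1:
        out.append(i + 1)
    return out


def loopy_bp(phi, rows, cols, alpha, iterations=50):
    """Sum-product beliefs; phi[i][x] is the local evidence of pixel i in state x."""
    psi = [[math.exp(alpha), 1.0], [1.0, math.exp(alpha)]]
    nbrs = [neighbours(i, rows, cols) for i in range(rows * cols)]
    msg = {(i, j): [0.5, 0.5] for i in range(rows * cols) for j in nbrs[i]}
    for _ in range(iterations):
        new = {}
        for (i, j) in msg:
            out = [0.0, 0.0]
            for xi in (0, 1):
                # Evidence times all incoming messages except the one from j
                # (3 incoming messages for an interior pixel).
                prod = phi[i][xi]
                for k in nbrs[i]:
                    if k != j:
                        prod *= msg[(k, i)][xi]
                for xj in (0, 1):
                    out[xj] += prod * psi[xi][xj]
            total = out[0] + out[1]
            new[(i, j)] = [out[0] / total, out[1] / total]
        msg = new
    beliefs = []
    for i in range(rows * cols):
        b = [phi[i][x] * math.prod(msg[(k, i)][x] for k in nbrs[i]) for x in (0, 1)]
        beliefs.append([b[0] / (b[0] + b[1]), b[1] / (b[0] + b[1])])
    return beliefs


phi = [[0.9, 0.1], [0.5, 0.5], [0.5, 0.5], [0.3, 0.7]]
print(loopy_bp(phi, rows=2, cols=2, alpha=0.8))
```

On a tree these beliefs are exact marginals; on the square lattice, which contains cycles, they are approximations.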

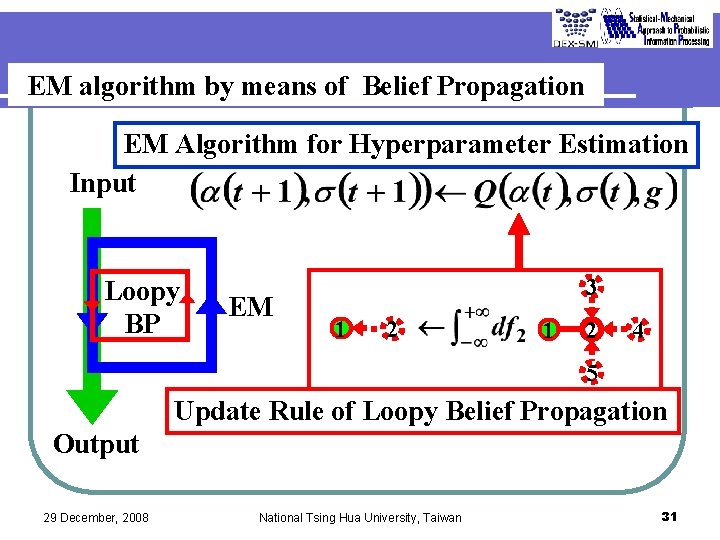

EM algorithm by means of Belief Propagation
EM algorithm for hyperparameter estimation
(Figure: the input image is processed by loopy BP combined with the EM algorithm to give the output image; the update rule of loopy belief propagation is shown for a pixel and its four neighbouring pixels.)

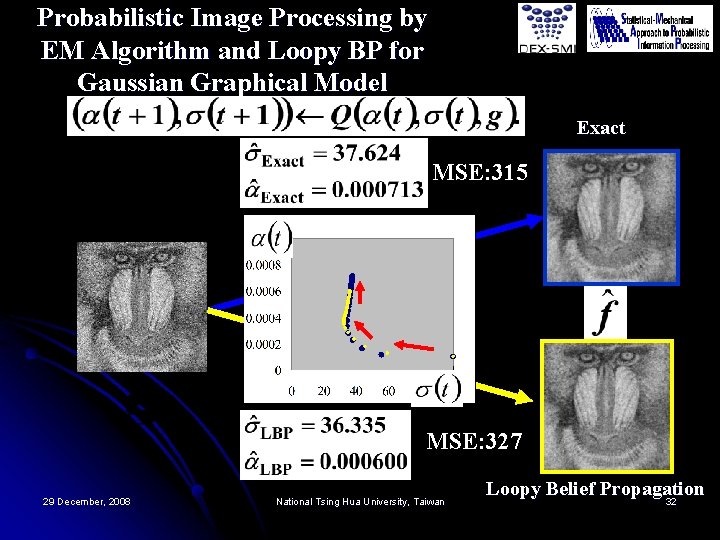

Probabilistic Image Processing by EM Algorithm and Loopy BP for Gaussian Graphical Model
Exact: MSE 315
Loopy belief propagation: MSE 327

5. Other Application

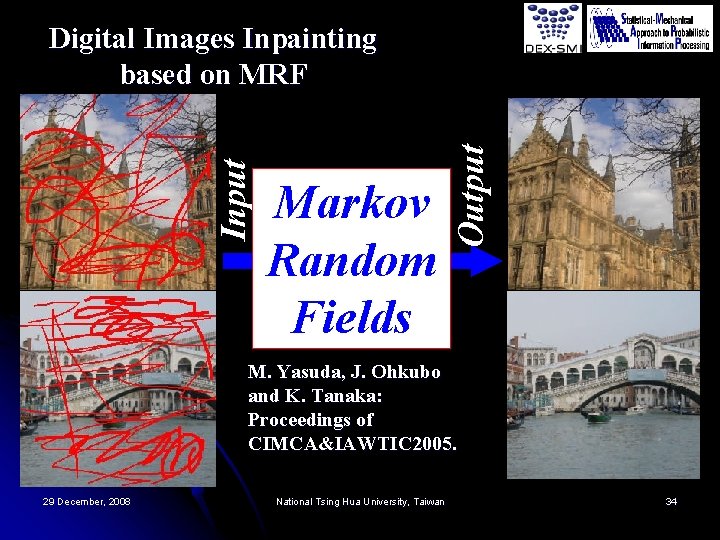

Digital Image Inpainting based on MRF
(Figure: an input image is inpainted by using Markov random fields to give the output image.)
M. Yasuda, J. Ohkubo and K. Tanaka: Proceedings of CIMCA&IAWTIC 2005.

6. Concluding Remarks

Summary
- The formulation of probabilistic models for image processing by means of conventional statistical schemes has been summarized.
- Probabilistic image processing using the Gaussian graphical model has been shown as the most basic example.
- It has been explained how to construct a belief propagation algorithm for image processing.


SMAPIP Project: Statistical Mechanical Approach to Probabilistic Information Processing
MEXT Grant-in-Aid for Scientific Research on Priority Areas
Period: 2002 – 2005
Head Investigator: Kazuyuki Tanaka
Members: K. Tanaka, Y. Kabashima, H. Nishimori, T. Tanaka, M. Okada, O. Watanabe, N. Murata, ...

DEX-SMI Project: Deepening and Expansion of Statistical Mechanical Informatics
MEXT Grant-in-Aid for Scientific Research on Priority Areas
Period: 2006 – 2009
Head Investigator: Prof. Yoshiyuki Kabashima (Tokyo Institute of Technology)
Members: Y. Kabashima, K. Tanaka, H. Nishimori, T. Tanaka, M. Okada, S. Ishii, M. Hayashi, …
http://dex-smi.sp.dis.titech.ac.jp/DEX-SMI/

CERIES Project: Center of Education and Research for Information Electronics Systems
GCOE Program
Period: 2007 – 2011
Head Investigator: Prof. Fumiyuki Adachi (Tohoku University)
http://www.ecei.tohoku.ac.jp/gcoe/
