
inst.eecs.berkeley.edu/~cs61c/su05
CS 61C: Machine Structures
Lecture #18: Pipelining I
2005-07-20
Andy Carle
CS 61C L18 Pipelining I (1) A Carle, Summer 2005 © UCB

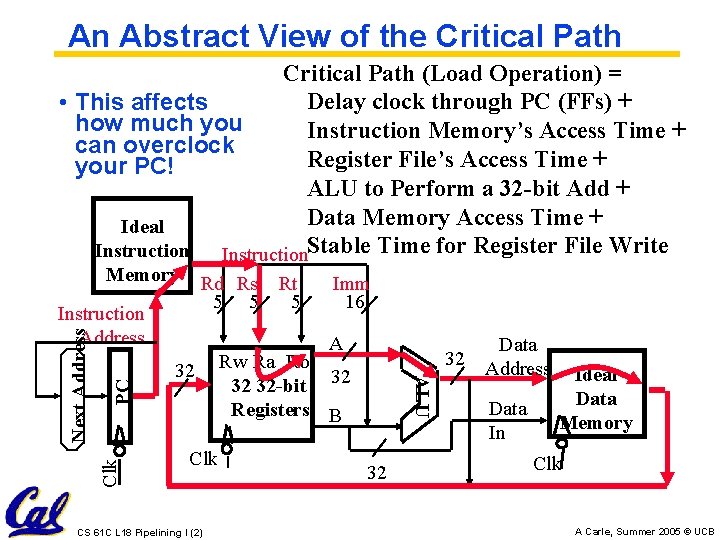

An Abstract View of the Critical Path
• Critical Path (Load Operation) =
    Delay clock through PC (FFs) +
    Instruction Memory’s Access Time +
    Register File’s Access Time +
    ALU to Perform a 32-bit Add +
    Data Memory Access Time +
    Stable Time for Register File Write
• This affects how much you can overclock your PC!
[Figure: single-cycle datapath with PC, Next Address logic, ideal instruction memory, 32 32-bit registers (Rw, Ra, Rb), ALU, and ideal data memory, all clocked by Clk]

Improve Critical Path
• “Critical path” (longest path through logic) determines length of clock period
• To reduce clock period, decrease path through CL (combinational logic) by inserting State

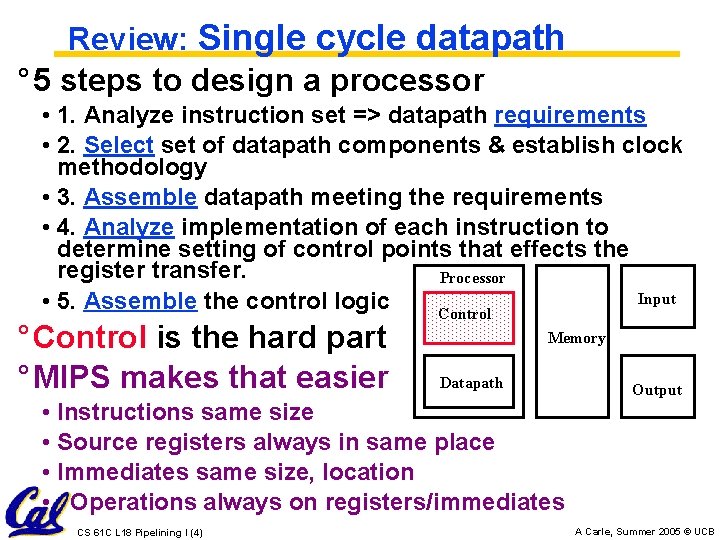

Review: Single cycle datapath
° 5 steps to design a processor
  • 1. Analyze instruction set => datapath requirements
  • 2. Select set of datapath components & establish clock methodology
  • 3. Assemble datapath meeting the requirements
  • 4. Analyze implementation of each instruction to determine setting of control points that effects the register transfer
  • 5. Assemble the control logic
° Control is the hard part
° MIPS makes that easier
  • Instructions same size
  • Source registers always in same place
  • Immediates same size, location
  • Operations always on registers/immediates
[Figure: Processor (Control, Datapath), Memory, Input, Output]

Review Datapath (1/3)
• Datapath is the hardware that performs operations necessary to execute programs.
• Control instructs datapath on what to do next.
• Datapath needs:
  • access to storage (general purpose registers and memory)
  • computational ability (ALU)
  • helper hardware (local registers and PC)

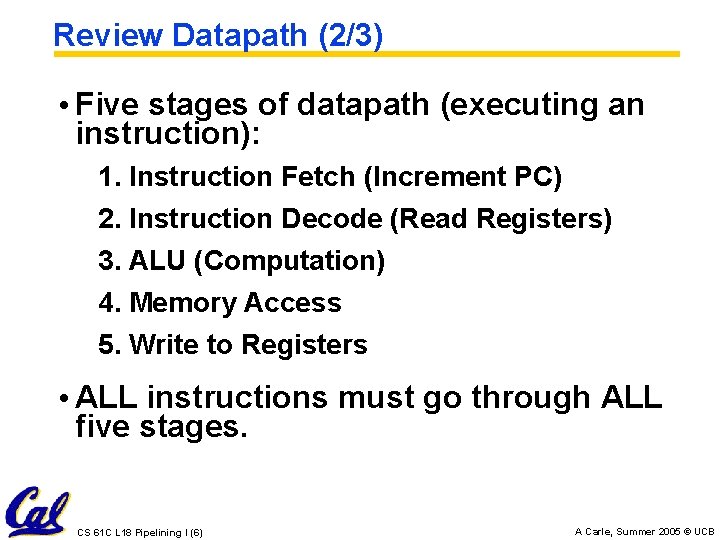

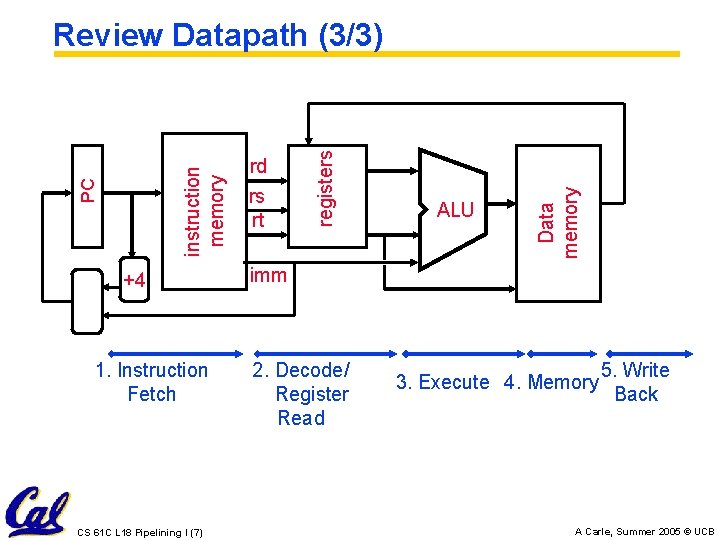

Review Datapath (2/3)
• Five stages of datapath (executing an instruction):
  1. Instruction Fetch (Increment PC)
  2. Instruction Decode (Read Registers)
  3. ALU (Computation)
  4. Memory Access
  5. Write to Registers
• ALL instructions must go through ALL five stages.

Review Datapath (3/3)
[Figure: datapath with PC, +4 adder, instruction memory, registers (rd, rs, rt), imm, ALU, and data memory, divided into stages: 1. Instruction Fetch, 2. Decode/Register Read, 3. Execute, 4. Memory, 5. Write Back]

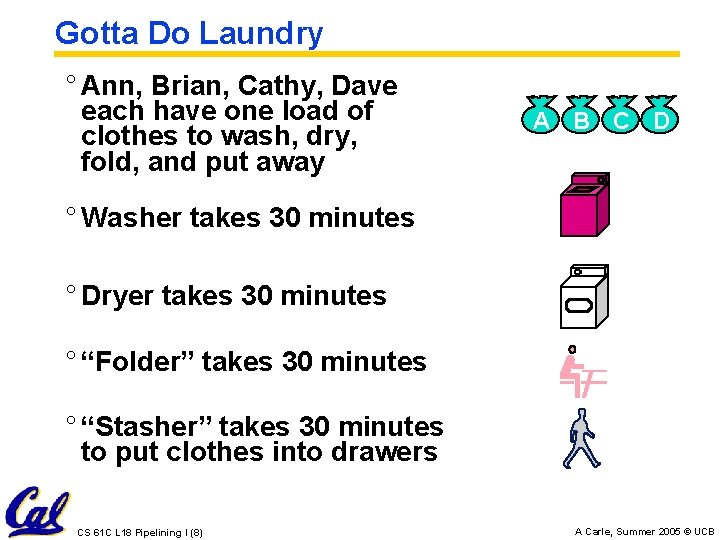

Gotta Do Laundry
° Ann, Brian, Cathy, Dave (A, B, C, D) each have one load of clothes to wash, dry, fold, and put away
° Washer takes 30 minutes
° Dryer takes 30 minutes
° “Folder” takes 30 minutes
° “Stasher” takes 30 minutes to put clothes into drawers

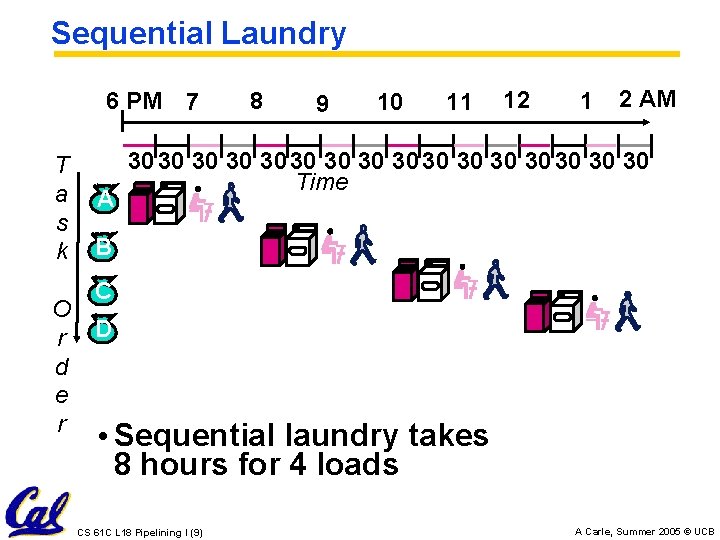

Sequential Laundry
[Figure: timeline from 6 PM to 2 AM; loads A, B, C, D in task order, each running four 30-minute steps before the next load starts]
• Sequential laundry takes 8 hours for 4 loads

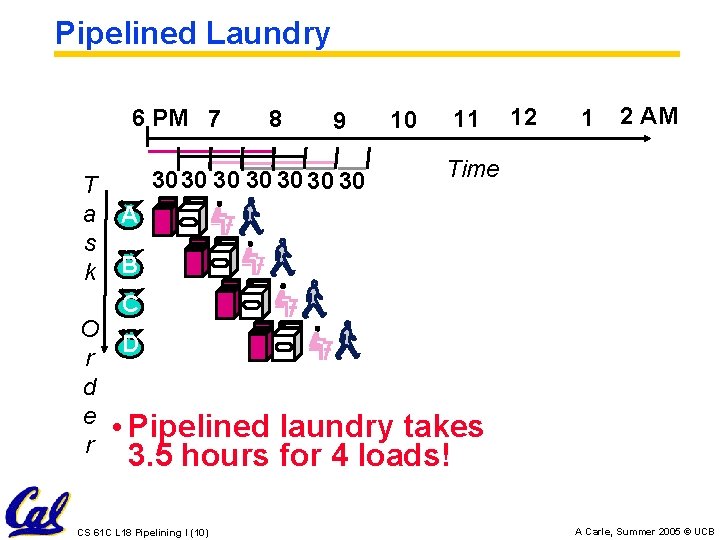

Pipelined Laundry
[Figure: timeline starting at 6 PM; loads A, B, C, D in task order, each starting 30 minutes after the previous one]
• Pipelined laundry takes 3.5 hours for 4 loads!
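The 8-hour and 3.5-hour totals follow from simple arithmetic. A minimal sketch, assuming four equal 30-minute stages as on the laundry slides (the function names are illustrative, not from the lecture):

```python
STAGE_MINUTES = 30   # washer, dryer, folder, stasher each take 30 minutes
NUM_STAGES = 4

def sequential_minutes(loads):
    # Each load runs all four stages before the next load starts.
    return loads * NUM_STAGES * STAGE_MINUTES

def pipelined_minutes(loads):
    # The first load takes all four stages; each later load finishes
    # one stage time after the previous one.
    return (NUM_STAGES + loads - 1) * STAGE_MINUTES

print(sequential_minutes(4) / 60)  # 8.0 hours
print(pipelined_minutes(4) / 60)   # 3.5 hours
```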

General Definitions
• Latency: time to completely execute a certain task
  • for example, time to read a sector from disk is disk access time or disk latency
  • Instruction latency is the time from when an instruction starts to when it finishes.
• Throughput: amount of work that can be done over a period of time

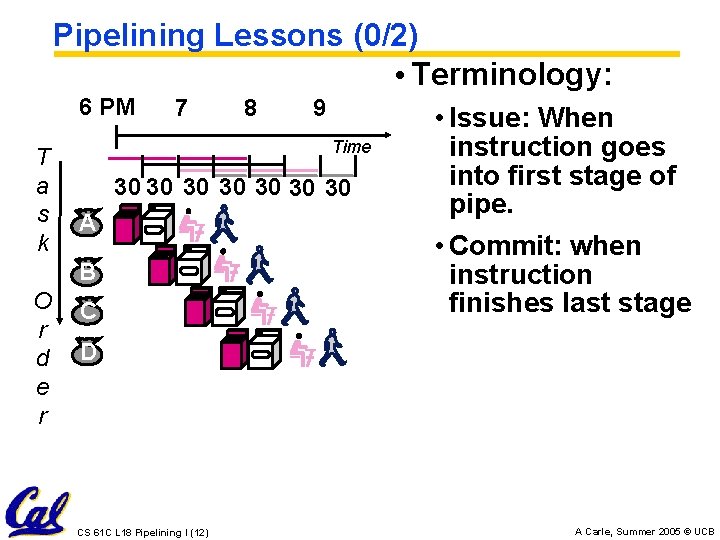

Pipelining Lessons (0/2)
• Terminology:
  • Issue: when instruction goes into first stage of pipe
  • Commit: when instruction finishes last stage
[Figure: pipelined laundry timeline for loads A, B, C, D, 6 PM to 9 PM]

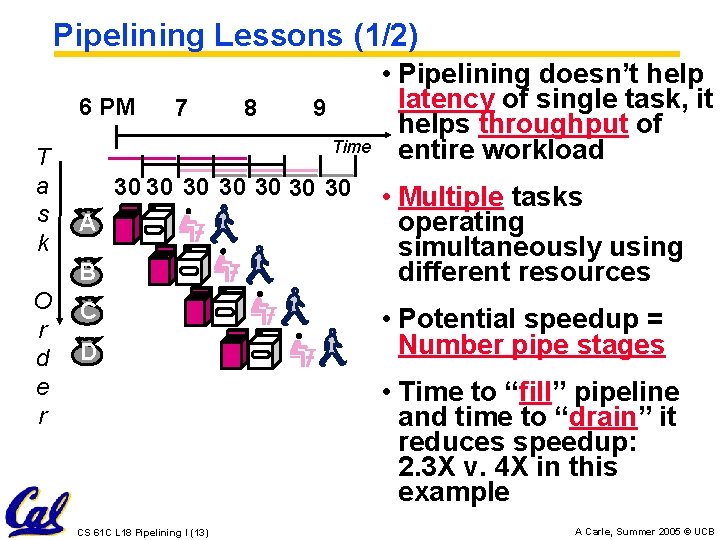

Pipelining Lessons (1/2)
[Figure: pipelined laundry timeline for loads A, B, C, D, 6 PM to 9 PM]
• Pipelining doesn’t help latency of single task, it helps throughput of entire workload
• Multiple tasks operating simultaneously using different resources
• Potential speedup = Number of pipe stages
• Time to “fill” pipeline and time to “drain” it reduces speedup: 2.3X vs. 4X in this example
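The 2.3X figure is 8 hours divided by 3.5 hours. A short sketch of how fill and drain time matter less as the workload grows, assuming equal-length stages and no stalls (the `speedup` helper is hypothetical):

```python
def speedup(tasks, stages=4):
    # Time is measured in stage-times; all stages are assumed equal.
    sequential = tasks * stages
    pipelined = stages + tasks - 1   # fill the pipe once, then one task per step
    return sequential / pipelined

for n in (4, 100, 10_000):
    print(n, round(speedup(n), 2))
```

With 4 tasks the ratio rounds to 2.3; with many tasks it approaches, but never reaches, the number of stages.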

Pipelining Lessons (2/2)
[Figure: pipelined laundry timeline for loads A, B, C, D, 6 PM to 9 PM]
• Suppose new Washer takes 20 minutes, new Stasher takes 20 minutes. How much faster is pipeline?
• Pipeline rate limited by slowest pipeline stage
• Unbalanced lengths of pipe stages also reduce speedup
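One way to answer the question is to track when each machine becomes free. This is a sketch under an added assumption that a finished load can wait between machines without blocking the one it just left; `pipelined_finish` is an illustrative name:

```python
def pipelined_finish(stage_minutes, loads):
    # done[s] = time at which stage s finished its most recent load
    done = [0] * len(stage_minutes)
    for _ in range(loads):
        ready = 0  # time this load leaves the previous stage
        for s, t in enumerate(stage_minutes):
            start = max(ready, done[s])  # wait for the load and for the machine
            done[s] = start + t
            ready = done[s]
    return done[-1]

old = pipelined_finish([30, 30, 30, 30], 4)   # original machines
new = pipelined_finish([20, 30, 30, 20], 4)   # faster washer and stasher
print(old, new, round(old / new, 2))          # 210 190 1.11
```

Under these assumptions the faster washer and stasher save only 20 minutes, because loads still leave the pipeline once per 30-minute dryer or folder step.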

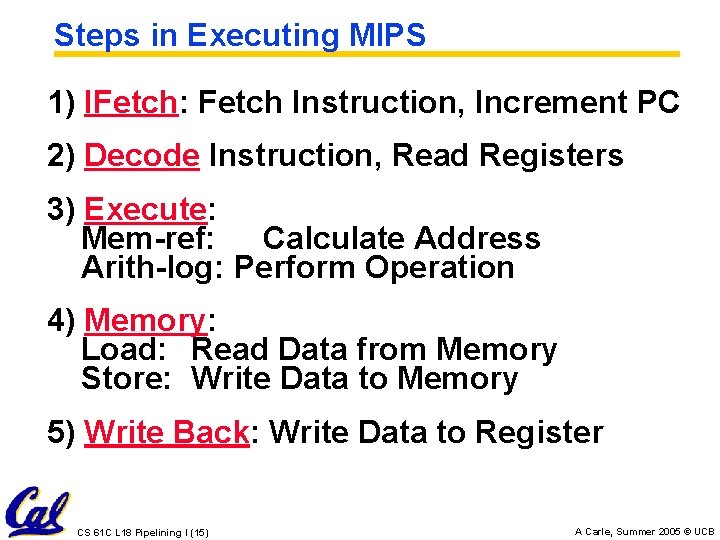

Steps in Executing MIPS
1) IFetch: Fetch Instruction, Increment PC
2) Decode Instruction, Read Registers
3) Execute:
   Mem-ref: Calculate Address
   Arith-log: Perform Operation
4) Memory:
   Load: Read Data from Memory
   Store: Write Data to Memory
5) Write Back: Write Data to Register

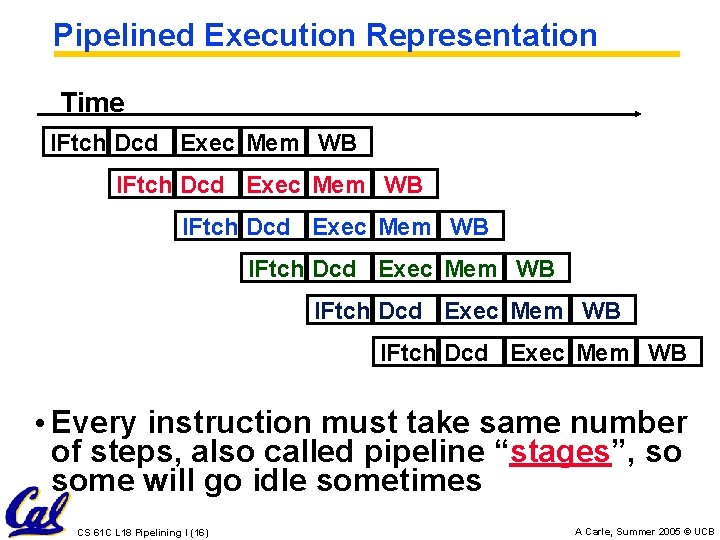

Pipelined Execution Representation
[Figure: instructions staggered over time, each passing through IFtch, Dcd, Exec, Mem, WB]
• Every instruction must take same number of steps, also called pipeline “stages”, so some will go idle sometimes

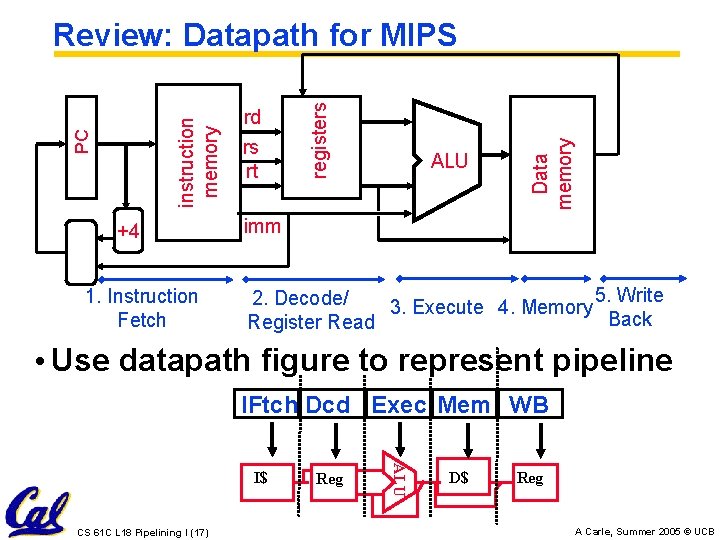

Review: Datapath for MIPS
[Figure: datapath with PC, +4 adder, instruction memory, registers (rd, rs, rt), imm, ALU, and data memory, divided into stages: 1. Instruction Fetch, 2. Decode/Register Read, 3. Execute, 4. Memory, 5. Write Back]
• Use datapath figure to represent pipeline
[Figure: IFtch, Dcd, Exec, Mem, WB drawn as I$, Reg, ALU, D$, Reg]

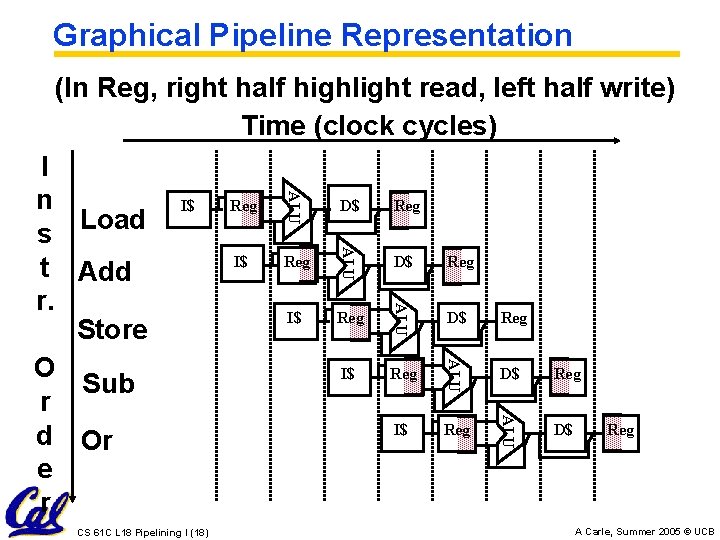

Graphical Pipeline Representation
(In Reg, right half highlight read, left half write)
[Figure: time (clock cycles) across, instruction order down; Load, Add, Store, Sub, Or each pass through I$, Reg, ALU, D$, Reg, offset by one cycle]

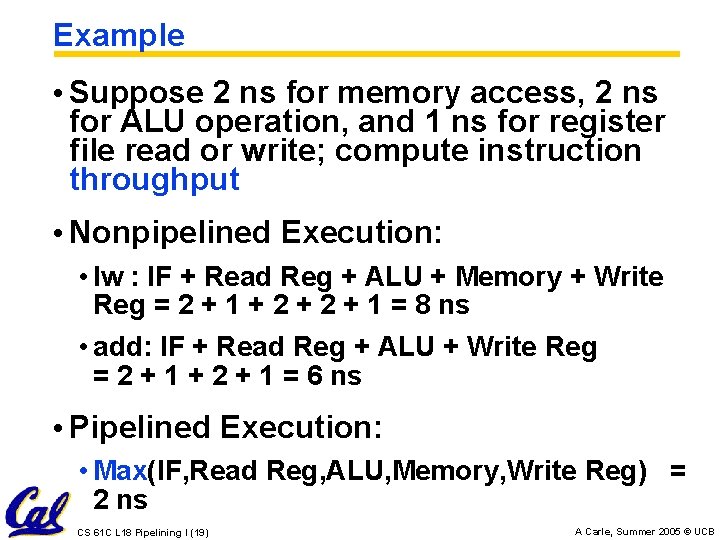

Example
• Suppose 2 ns for memory access, 2 ns for ALU operation, and 1 ns for register file read or write; compute instruction throughput
• Nonpipelined Execution:
  • lw: IF + Read Reg + ALU + Memory + Write Reg = 2 + 1 + 2 + 2 + 1 = 8 ns
  • add: IF + Read Reg + ALU + Write Reg = 2 + 1 + 2 + 1 = 6 ns
• Pipelined Execution:
  • Max(IF, Read Reg, ALU, Memory, Write Reg) = 2 ns
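To see what the 2 ns clock means for throughput, the sketch below compares total time for a run of `lw` instructions. It assumes an ideal pipeline with no hazards or stalls; the helper names are made up for illustration:

```python
CLOCK_NS = 2   # pipelined clock period = slowest stage
STAGES = 5
LW_NS = 8      # nonpipelined lw time from the example

def nonpipelined_ns(n):
    # Each instruction runs start to finish before the next begins.
    return n * LW_NS

def pipelined_ns(n):
    # 5 cycles for the first instruction, then one more cycle per instruction.
    return (STAGES + n - 1) * CLOCK_NS

n = 1000
print(nonpipelined_ns(n), pipelined_ns(n))   # 8000 2008
```

For long instruction streams the pipelined machine completes close to one instruction every 2 ns, versus one every 8 ns without pipelining.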

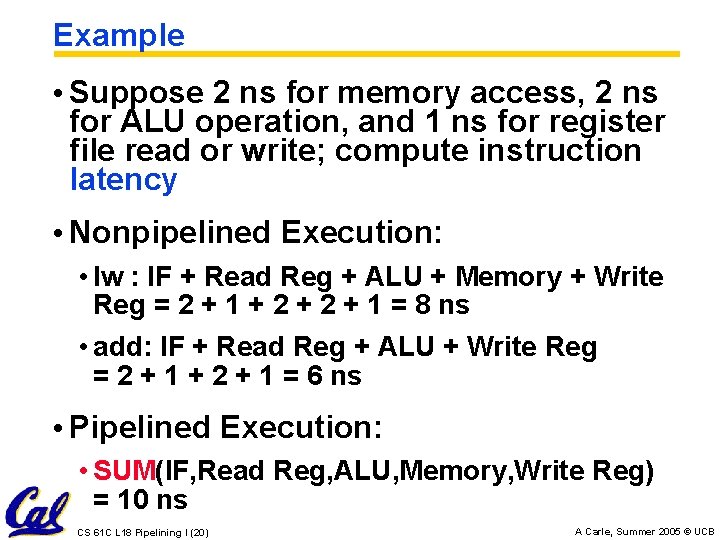

Example
• Suppose 2 ns for memory access, 2 ns for ALU operation, and 1 ns for register file read or write; compute instruction latency
• Nonpipelined Execution:
  • lw: IF + Read Reg + ALU + Memory + Write Reg = 2 + 1 + 2 + 2 + 1 = 8 ns
  • add: IF + Read Reg + ALU + Write Reg = 2 + 1 + 2 + 1 = 6 ns
• Pipelined Execution:
  • SUM(IF, Read Reg, ALU, Memory, Write Reg) = 10 ns, since each of the 5 stages takes one full 2 ns clock cycle
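The latency numbers can be checked directly from the stage delays. A minimal sketch using the delays given in the example (variable names are illustrative):

```python
# Stage delays in ns, taken from the example above.
IF, REG_READ, ALU, MEM, REG_WRITE = 2, 1, 2, 2, 1

lw_nonpipelined = IF + REG_READ + ALU + MEM + REG_WRITE   # uses all five steps
add_nonpipelined = IF + REG_READ + ALU + REG_WRITE        # skips data memory

# The pipelined clock must fit the slowest stage.
clock = max(IF, REG_READ, ALU, MEM, REG_WRITE)
pipelined_latency = 5 * clock   # every instruction passes through 5 stages

print(lw_nonpipelined, add_nonpipelined)   # 8 6
print(clock, pipelined_latency)            # 2 10
```

Pipelining raises the latency of a single instruction (10 ns instead of 8 ns or 6 ns) even though it improves throughput.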

Things to Remember
• Optimal Pipeline
  • Each stage is executing part of an instruction each clock cycle.
  • One instruction finishes during each clock cycle.
  • On average, executes far more quickly.
• What makes this work?
  • Similarities between instructions allow us to use same stages for all instructions (generally).
  • Each stage takes about the same amount of time as all others: little wasted time.

Pipeline Summary
• Pipelining is a BIG IDEA
  • widely used concept
• What makes it less than perfect? …

Administrivia
• Project 2 – Sunday
• HW 6 out now
  • Due next Tuesday

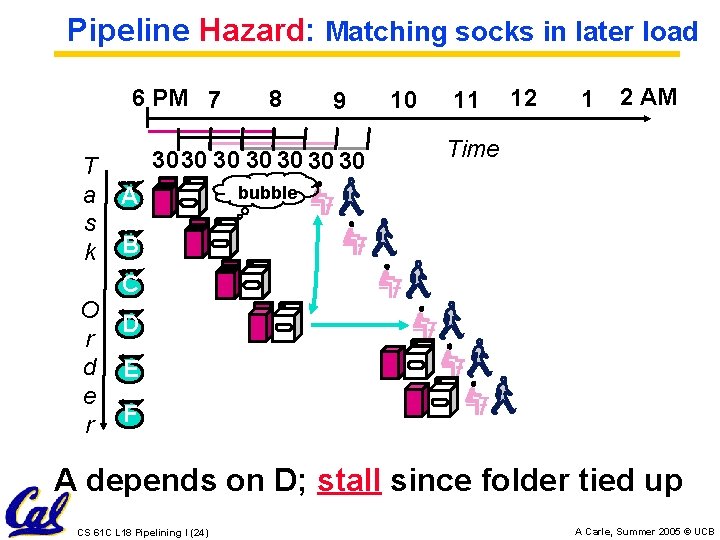

Pipeline Hazard: Matching socks in later load
[Figure: pipelined laundry timeline from 6 PM to 2 AM for loads A through F, with a bubble inserted in the pipeline]
• A depends on D; stall since folder tied up

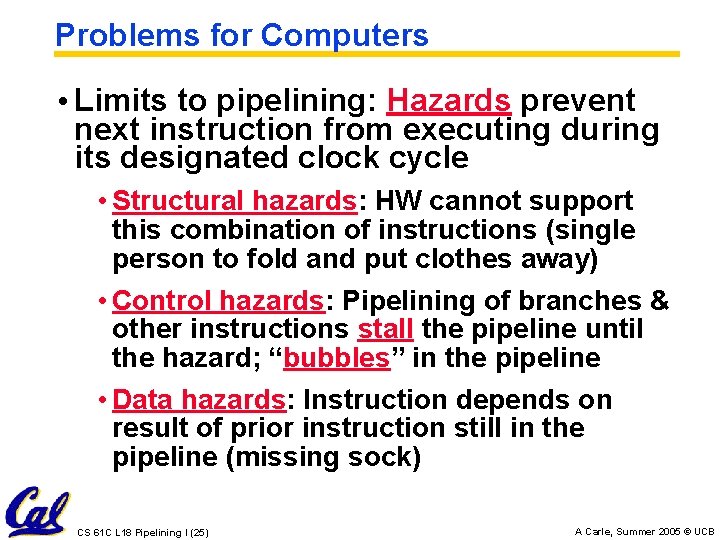

Problems for Computers
• Limits to pipelining: Hazards prevent next instruction from executing during its designated clock cycle
  • Structural hazards: HW cannot support this combination of instructions (single person to fold and put clothes away)
  • Control hazards: Pipelining of branches & other instructions stalls the pipeline until the hazard is resolved; “bubbles” in the pipeline
  • Data hazards: Instruction depends on result of prior instruction still in the pipeline (missing sock)

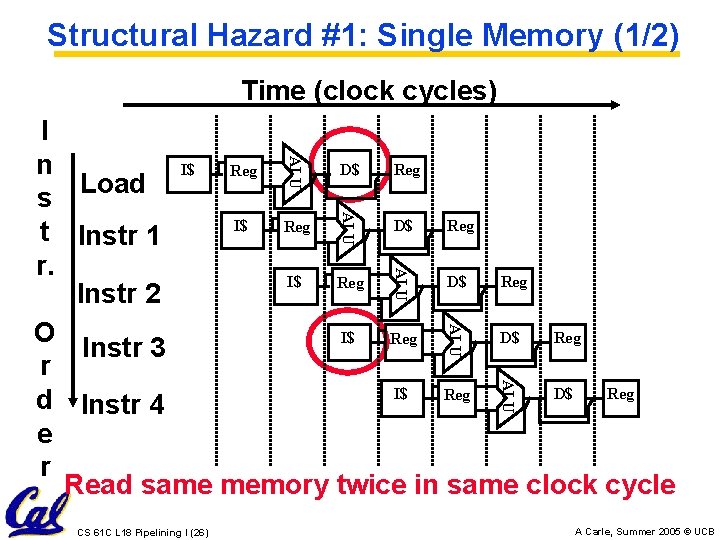

Structural Hazard #1: Single Memory (1/2)
[Figure: time (clock cycles) across, instruction order down; Load, Instr 1, Instr 2, Instr 3, Instr 4 each pass through I$, Reg, ALU, D$, Reg]
• Read same memory twice in same clock cycle

Structural Hazard #1: Single Memory (2/2)
• Solution:
  • infeasible and inefficient to create second memory
    • (We’ll learn about this more next week)
  • so simulate this by having two Level 1 Caches (a cache is a temporary, smaller copy of memory, usually holding the most recently used data)
  • have both an L1 Instruction Cache and an L1 Data Cache
  • requires complex hardware to control when both caches miss!

Structural Hazard #2: Registers (1/2)
[Figure: time (clock cycles) across, instruction order down; sw, Instr 1, Instr 2, Instr 3, Instr 4 each pass through I$, Reg, ALU, D$, Reg]
• Can’t read and write to registers simultaneously

Structural Hazard #2: Registers (2/2)
• Fact: Register access is VERY fast: takes less than half the time of ALU stage
• Solution: introduce convention
  • always Write to Registers during first half of each clock cycle
  • always Read from Registers during second half of each clock cycle (easy when async)
  • Result: can perform Read and Write during same clock cycle

Control Hazard: Branching (1/7)
[Figure: time (clock cycles) across, instruction order down; beq, Instr 1, Instr 2, Instr 3, Instr 4 each pass through I$, Reg, ALU, D$, Reg]
• Where do we do the compare for the branch?

Control Hazard: Branching (2/7)
• We put branch decision-making hardware in ALU stage
  • therefore two more instructions after the branch will always be fetched, whether or not the branch is taken
• Desired functionality of a branch
  • if we do not take the branch, don’t waste any time and continue executing normally
  • if we take the branch, don’t execute any instructions after the branch, just go to the desired label

Control Hazard: Branching (3/7)
• Initial Solution: Stall until decision is made
  • insert “no-op” instructions: those that accomplish nothing, just take time
  • Drawback: branches take 3 clock cycles each (assuming comparator is put in ALU stage)
  • Drawback: Will still fetch inst at branch+4. Must either decode branch in IF or squash fetched branch+4.

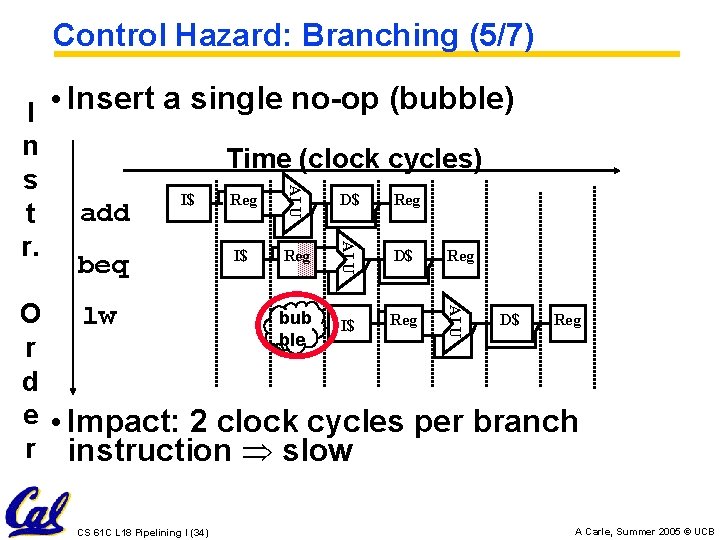

Control Hazard: Branching (4/7)
• Optimization #1:
  • move asynchronous comparator up to Stage 2
  • as soon as instruction is decoded (Opcode identifies it as a branch), immediately make a decision and set the value of the PC (if necessary)
  • Benefit: since branch is complete in Stage 2, only one unnecessary instruction is fetched, so only one no-op is needed
  • Side Note: This means that branches are idle in Stages 3, 4 and 5.

Control Hazard: Branching (5/7)
• Insert a single no-op (bubble)
[Figure: time (clock cycles) across, instruction order down; add, beq, then a bubble before lw]
• Impact: 2 clock cycles per branch instruction => slow
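To get a feel for the cost, the sketch below compares average cycles per instruction when branches take 3 cycles (comparator in the ALU stage) versus 2 cycles (comparator in Stage 2). The 20% branch fraction is a hypothetical workload chosen only for illustration, and every other instruction is assumed to take one cycle:

```python
def average_cpi(branch_fraction, cycles_per_branch):
    # Non-branch instructions are assumed to take 1 cycle each (no other stalls).
    return (1 - branch_fraction) * 1 + branch_fraction * cycles_per_branch

BRANCH_FRACTION = 0.2   # hypothetical workload, not from the lecture

cpi_alu_stage = average_cpi(BRANCH_FRACTION, 3)   # compare in ALU stage
cpi_stage_2 = average_cpi(BRANCH_FRACTION, 2)     # compare in Stage 2
print(round(cpi_alu_stage, 2), round(cpi_stage_2, 2))
```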

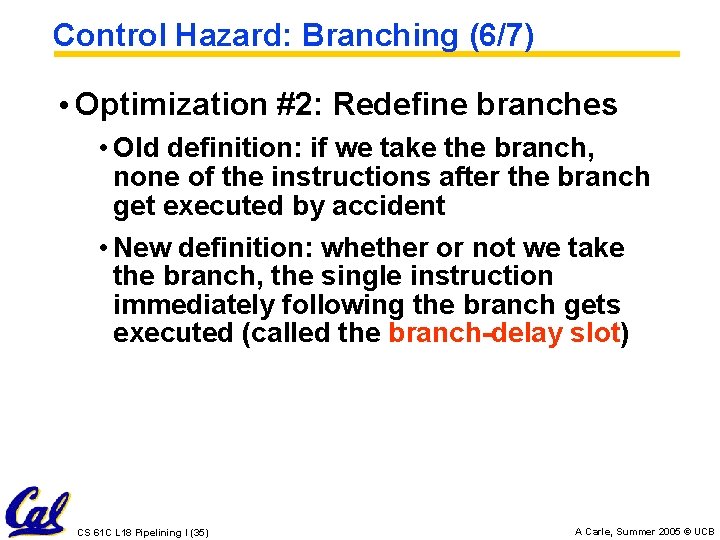

Control Hazard: Branching (6/7)
• Optimization #2: Redefine branches
  • Old definition: if we take the branch, none of the instructions after the branch get executed by accident
  • New definition: whether or not we take the branch, the single instruction immediately following the branch gets executed (called the branch-delay slot)

Control Hazard: Branching (7/7)
• Notes on Branch-Delay Slot
  • Worst-Case Scenario: can always put a no-op in the branch-delay slot
  • Better Case: can find an instruction preceding the branch which can be placed in the branch-delay slot without affecting flow of the program
    - re-ordering instructions is a common method of speeding up programs
    - compiler must be very smart in order to find instructions to do this
    - usually can find such an instruction at least 50% of the time
    - Jumps also have a delay slot…

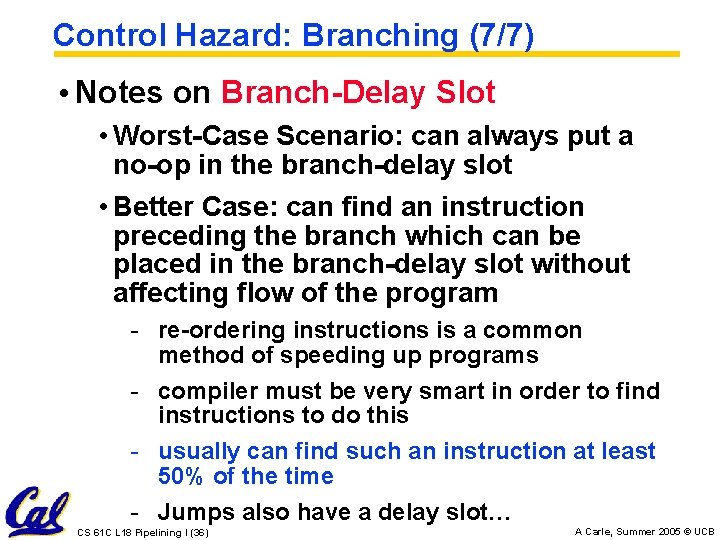

Example: Nondelayed vs. Delayed Branch

Nondelayed Branch:
    or   $8, $9, $10
    add  $1, $2, $3
    sub  $4, $5, $6
    beq  $1, $4, Exit
    xor  $10, $1, $11
Exit:

Delayed Branch:
    add  $1, $2, $3
    sub  $4, $5, $6
    beq  $1, $4, Exit
    or   $8, $9, $10
    xor  $10, $1, $11
Exit:

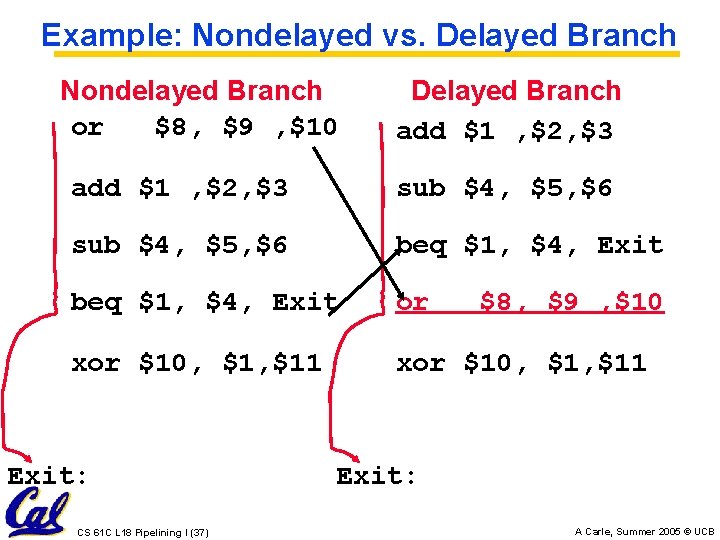

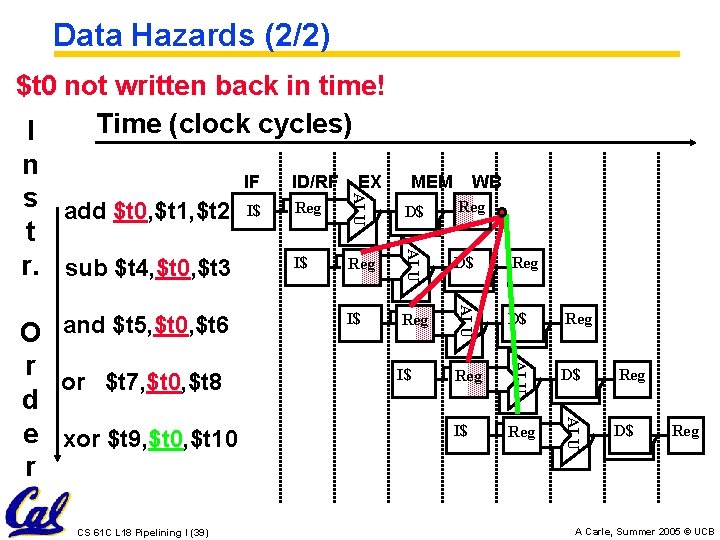

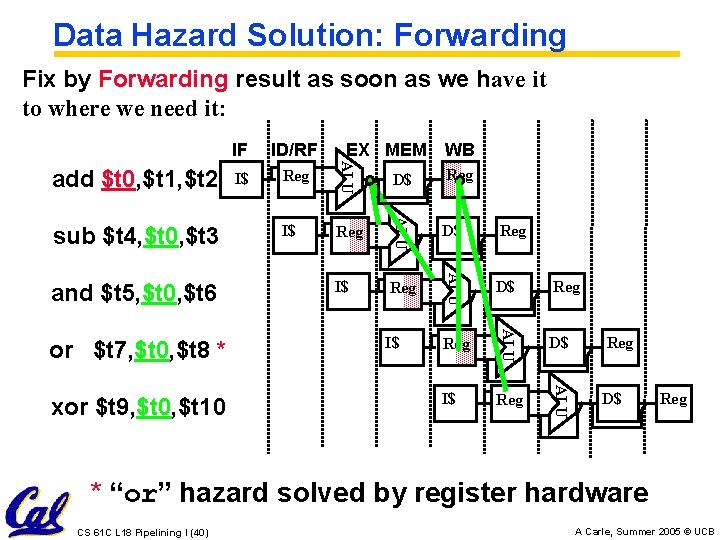

Data Hazards (1/2)
• Consider the following sequence of instructions
    add $t0, $t1, $t2
    sub $t4, $t0, $t3
    and $t5, $t0, $t6
    or  $t7, $t0, $t8
    xor $t9, $t0, $t10

Data Hazards (2/2)
• $t0 not written back in time!
[Figure: time (clock cycles) across, instruction order down; stages IF, ID/RF, EX, MEM, WB drawn as I$, Reg, ALU, D$, Reg for:]
    add $t0, $t1, $t2
    sub $t4, $t0, $t3
    and $t5, $t0, $t6
    or  $t7, $t0, $t8
    xor $t9, $t0, $t10
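The stale reads can be found mechanically by comparing the cycle in which a later instruction reads a register (ID) with the cycle in which the producer writes it (WB). This is a sketch assuming no forwarding, no stalls, and the write-first-half, read-second-half register convention from the structural hazard slides; `stale_reads` is a hypothetical helper:

```python
# (destination, sources) for each instruction in program order
program = [
    ("$t0", ["$t1", "$t2"]),   # add $t0, $t1, $t2
    ("$t4", ["$t0", "$t3"]),   # sub $t4, $t0, $t3
    ("$t5", ["$t0", "$t6"]),   # and $t5, $t0, $t6
    ("$t7", ["$t0", "$t8"]),   # or  $t7, $t0, $t8
    ("$t9", ["$t0", "$t10"]),  # xor $t9, $t0, $t10
]

def stale_reads(program):
    """Return (producer, consumer) index pairs where the consumer's register
    read (ID) happens in an earlier cycle than the producer's write (WB)."""
    hazards = []
    for i, (dest, _) in enumerate(program):
        wb_cycle = i + 5            # instruction i is fetched in cycle i + 1
        for j in range(i + 1, len(program)):
            id_cycle = j + 2
            if dest in program[j][1] and id_cycle < wb_cycle:
                hazards.append((i, j))
    return hazards

print(stale_reads(program))  # [(0, 1), (0, 2)]
```

Only `sub` and `and` read `$t0` too early. The `or` reads it in the same cycle as the write, which the register convention handles, and `xor` reads it a cycle later.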

Data Hazard Solution: Forwarding
• Fix by Forwarding result as soon as we have it to where we need it:
[Figure: stages IF, ID/RF, EX, MEM, WB drawn as I$, Reg, ALU, D$, Reg, with forwarding paths from add to the later instructions:]
    add $t0, $t1, $t2
    sub $t4, $t0, $t3
    and $t5, $t0, $t6
    or  $t7, $t0, $t8 *
    xor $t9, $t0, $t10
* “or” hazard solved by register hardware

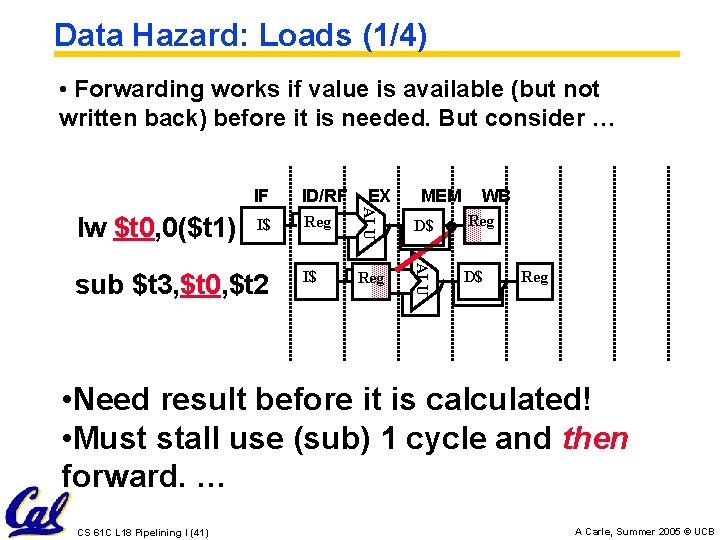

Data Hazard: Loads (1/4)
• Forwarding works if value is available (but not written back) before it is needed. But consider …
    lw  $t0, 0($t1)
    sub $t3, $t0, $t2
[Figure: stages IF, ID/RF, EX, MEM, WB drawn as I$, Reg, ALU, D$, Reg for both instructions]
• Need result before it is calculated!
• Must stall use (sub) 1 cycle and then forward. …

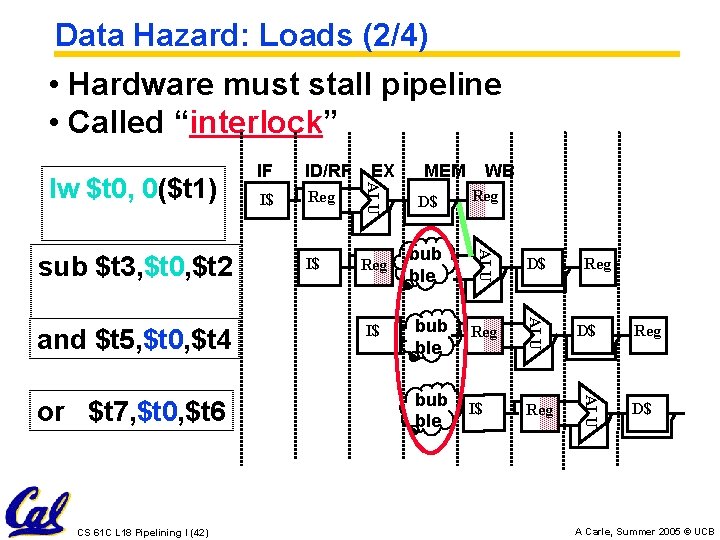

Data Hazard: Loads (2/4)
• Hardware must stall pipeline
• Called “interlock”
[Figure: stages IF, ID/RF, EX, MEM, WB; a bubble delays sub, and, and or by one cycle each:]
    lw  $t0, 0($t1)
    sub $t3, $t0, $t2
    and $t5, $t0, $t4
    or  $t7, $t0, $t6

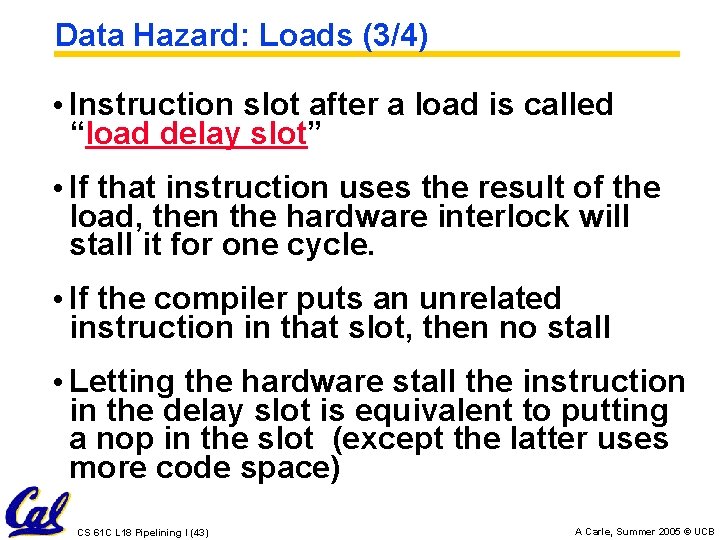

Data Hazard: Loads (3/4)
• Instruction slot after a load is called “load delay slot”
• If that instruction uses the result of the load, then the hardware interlock will stall it for one cycle.
• If the compiler puts an unrelated instruction in that slot, then no stall
• Letting the hardware stall the instruction in the delay slot is equivalent to putting a nop in the slot (except the latter uses more code space)

Data Hazard: Loads (4/4)
• Stall is equivalent to nop
[Figure: pipeline diagram with bubbles for:]
    lw  $t0, 0($t1)
    nop
    sub $t3, $t0, $t2
    and $t5, $t0, $t4
    or  $t7, $t0, $t6

C.f. Branch Delay vs. Load Delay
• Load Delay occurs only if necessary (dependent instructions).
• Branch Delay always happens (part of the ISA).
• Why not have Branch Delay interlocked?
  • Answer: Interlocks only work if you can detect hazard ahead of time. By the time we detect a branch, we already need its value … hence no interlock is possible!

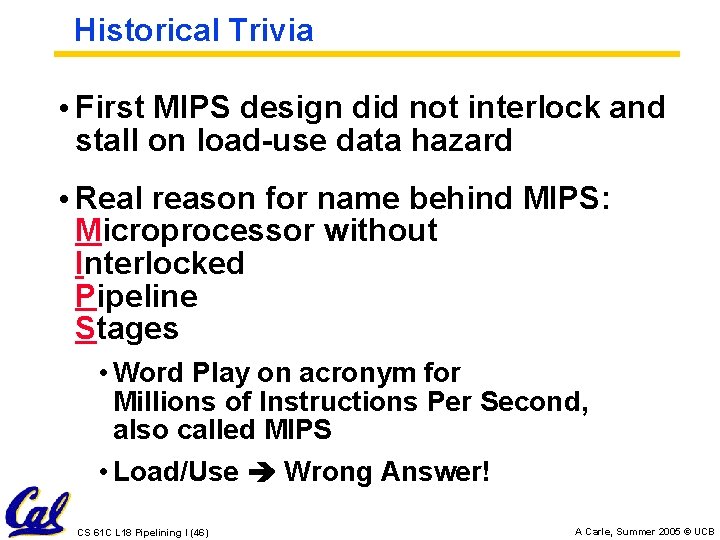

Historical Trivia
• First MIPS design did not interlock and stall on load-use data hazard
• Real reason for name behind MIPS: Microprocessor without Interlocked Pipeline Stages
  • Word Play on acronym for Millions of Instructions Per Second, also called MIPS
• Load/Use => Wrong Answer!

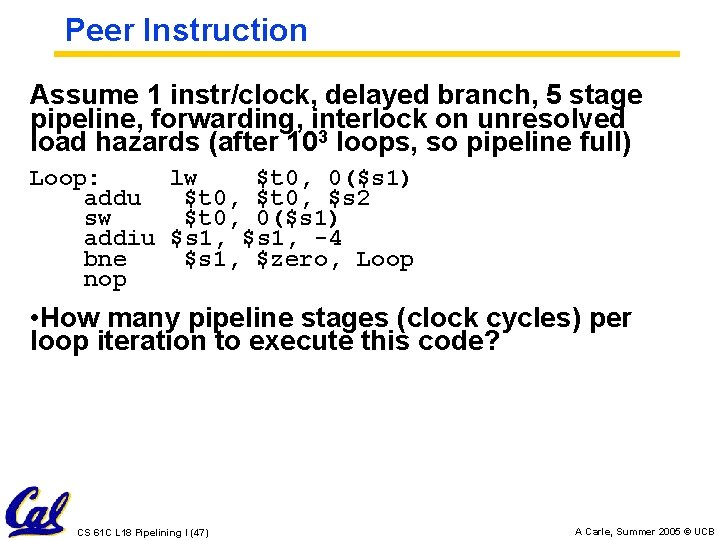

Peer Instruction
Assume 1 instr/clock, delayed branch, 5 stage pipeline, forwarding, interlock on unresolved load hazards (after 10^3 loops, so pipeline full)

Loop: lw    $t0, 0($s1)
      addu  $t0, $s2
      sw    $t0, 0($s1)
      addiu $s1, -4
      bne   $s1, $zero, Loop
      nop

• How many pipeline stages (clock cycles) per loop iteration to execute this code?

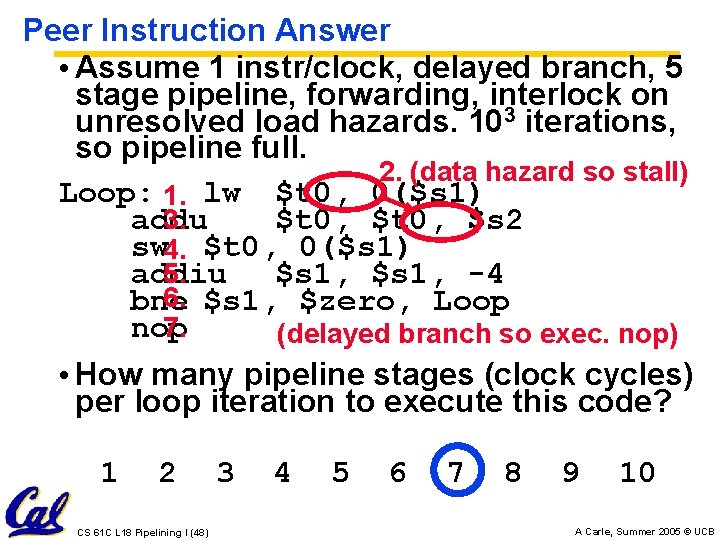

Peer Instruction Answer
• Assume 1 instr/clock, delayed branch, 5 stage pipeline, forwarding, interlock on unresolved load hazards. 10^3 iterations, so pipeline full.

Loop: 1. lw    $t0, 0($s1)
      2. (data hazard so stall)
      3. addu  $t0, $s2
      4. sw    $t0, 0($s1)
      5. addiu $s1, -4
      6. bne   $s1, $zero, Loop
      7. nop   (delayed branch so exec. nop)

• How many pipeline stages (clock cycles) per loop iteration to execute this code?
  1 2 3 4 5 6 7 8 9 10 (answer: 7)
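The count of 7 can be reproduced by charging one cycle per instruction plus one bubble whenever an instruction uses the result of the load immediately before it. This sketch follows the slide's assumptions (forwarding covers every other dependence); the tuple layout and `cycles_per_iteration` are illustrative:

```python
# (opcode, destination, sources) for one loop iteration, in program order
loop = [
    ("lw",    "$t0", ["$s1"]),
    ("addu",  "$t0", ["$t0", "$s2"]),
    ("sw",    None,  ["$t0", "$s1"]),
    ("addiu", "$s1", ["$s1"]),
    ("bne",   None,  ["$s1", "$zero"]),
    ("nop",   None,  []),             # fills the branch-delay slot
]

def cycles_per_iteration(instrs):
    cycles = 0
    prev_op, prev_dest = None, None
    for op, dest, srcs in instrs:
        if prev_op == "lw" and prev_dest in srcs:
            cycles += 1               # load-use interlock: one bubble
        cycles += 1                   # one issue slot per instruction
        prev_op, prev_dest = op, dest
    return cycles

print(cycles_per_iteration(loop))  # 7
```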

“And in Conclusion…”
• Pipeline challenge is hazards
  • Forwarding helps w/many data hazards
  • Delayed branch helps with control hazard in 5 stage pipeline
