Inferring Accountability from Trust Perceptions Koen Decroix, Denis Butin, Joachim Jansen, Vincent Naessens ICISS 2014, Hyderabad

Outline
• Introducing Accountability
• Goal
• Modeling Approach
• Evaluation
• Conclusions

Introducing Accountability

Username Password Email Date of birth Sex Name Credit card information

Privacy policy
Alice agrees with the terms and policies of Spotify and gives her explicit consent for the specified data handling practices.
Often vague about:
• Purpose for which personal data is used
• The collaborating third parties they forward data to
• Obligations in terms of third-party forwarding
• Retention of personal data
• …

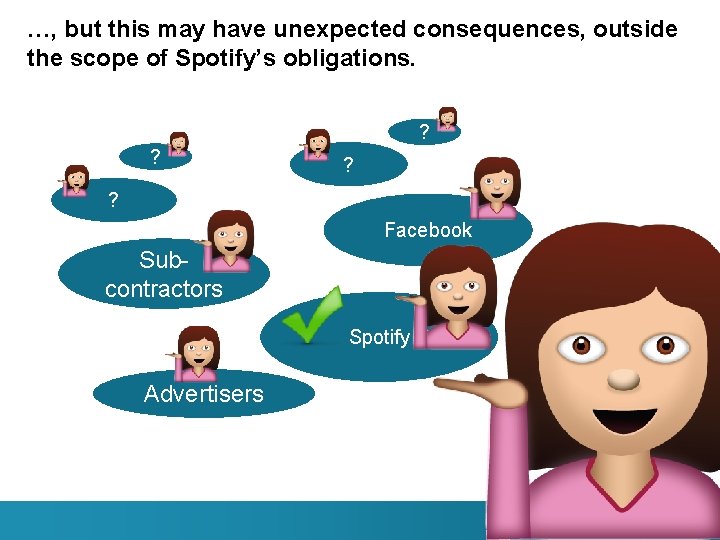

…, but this may have unexpected consequences, outside the scope of Spotify’s obligations.
[Figure labels: Spotify, Facebook, Subcontractors, Advertisers, with question marks]

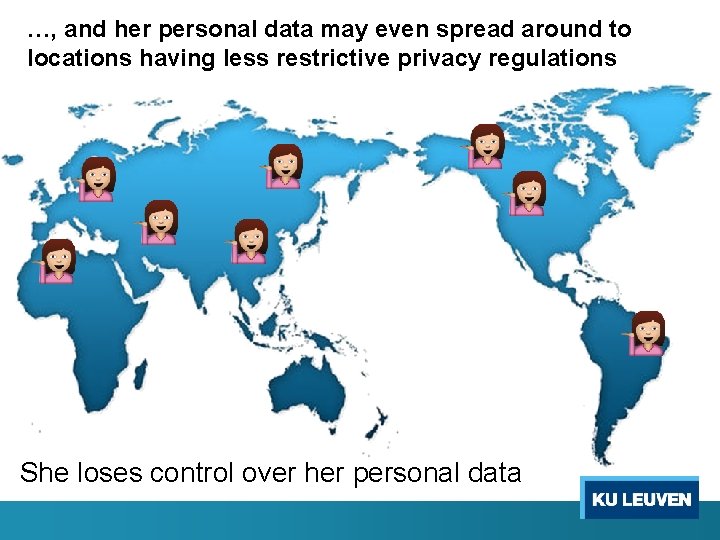

…, and her personal data may even spread to locations with less restrictive privacy regulations.
She loses control over her personal data.

Accountability: a key component for protecting an individual’s privacy.
Necessity to demonstrate compliance as a burden for data controllers.

Accountability explicitly cited as an obligation of data processors for their data handling practices in the upcoming EU Data Protection Regulation

Proposal for the upcoming EU Data Protection Regulation
Article 22 takes account of the debate on a "principle of accountability" and describes in detail the obligation of responsibility of the controller to comply with this Regulation and to demonstrate this compliance, including by way of adoption of internal policies and mechanisms for ensuring such compliance.

Goal

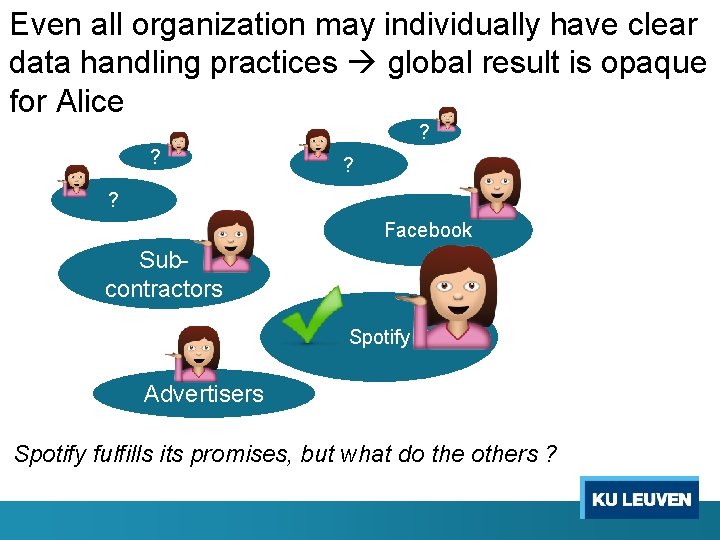

Even if all organizations individually have clear data handling practices, the global result is opaque for Alice.
[Figure labels: Spotify, Facebook, Subcontractors, Advertisers, with question marks]
Spotify fulfills its promises, but what about the others?

What would she like …
To understand the system-wide (global) guarantees of data controllers that apply to her personal data.

Modeling Approach

Inferring Global Accountability Guarantees = a panoramic overview from the viewpoint of a trusted auditor who operates on behalf of the user. This overview also takes the user’s privacy preferences into account.

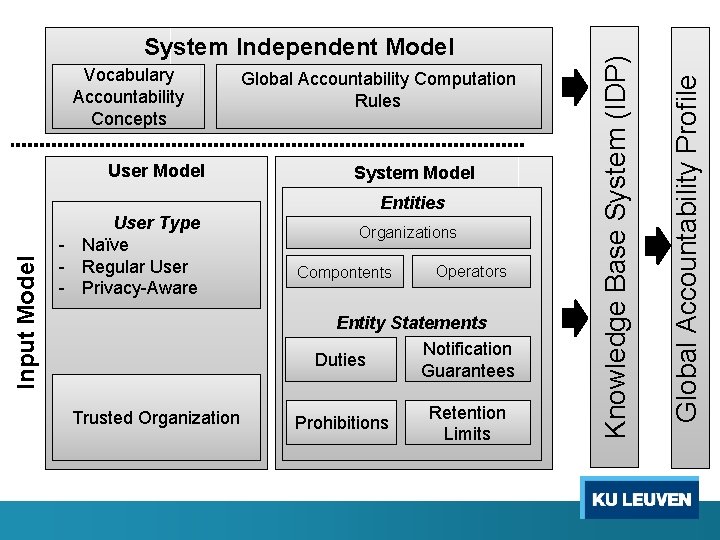

[Figure: framework overview]
Input Model:
• User Model: User Type (Naïve, Regular User, Privacy-Aware), Trusted Organization
• System Model: Entities (Organizations, Components, Operators), Entity Statements
System Independent Model:
• Vocabulary
• Accountability Concepts
• Global Accountability Computation Rules
Knowledge Base System (IDP)
Global Accountability Profile: Duties, Prohibitions, Retention Limits, Notification Guarantees

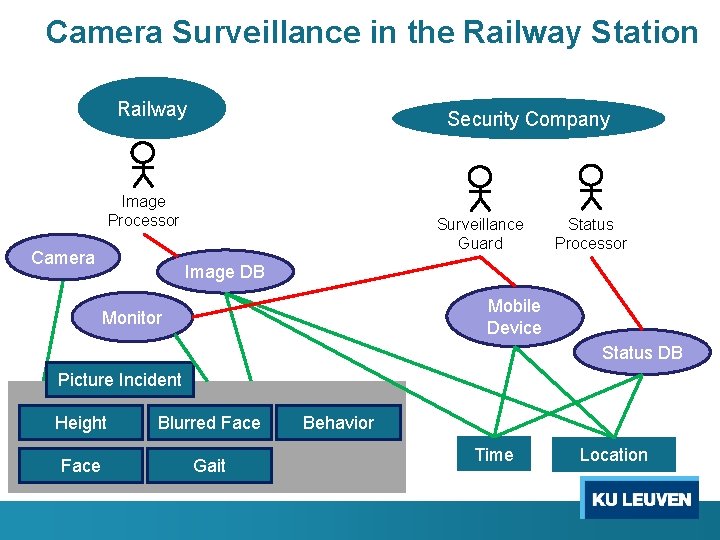

Camera Surveillance in the Railway Station
[Figure labels: Railway, Security Company, Image Processor, Camera, Surveillance Guard, Status Processor, Image DB, Mobile Device, Monitor, Status DB; data: Picture Incident, Height, Blurred Face, Gait, Behavior, Time, Location]

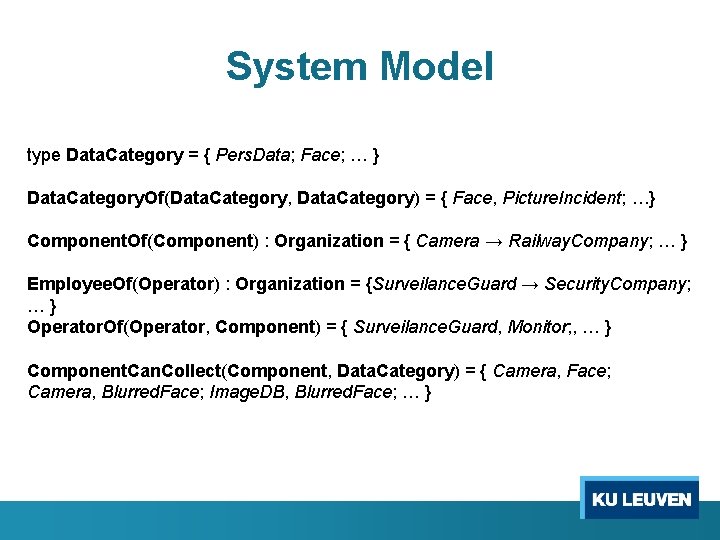

System Model
type DataCategory = { PersData; Face; … }
DataCategoryOf(DataCategory, DataCategory) = { Face, PictureIncident; … }
ComponentOf(Component) : Organization = { Camera → RailwayCompany; … }
EmployeeOf(Operator) : Organization = { SurveilanceGuard → SecurityCompany; … }
OperatorOf(Operator, Component) = { SurveilanceGuard, Monitor; … }
ComponentCanCollect(Component, DataCategory) = { Camera, Face; Camera, BlurredFace; ImageDB, BlurredFace; … }
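The relations on this slide can be mirrored in ordinary data structures to see what they imply. The following Python sketch is an illustration only, not the IDP model: it copies the listed tuples (entries elided on the slide stay out) and derives which organizations can collect a data category through their components. The helper name `organizations_collecting` and the `"unknown"` placeholder are assumptions introduced here.

```python
# Tuples copied from the System Model slide; entries elided there are omitted.
component_of = {"Camera": "RailwayCompany"}
component_can_collect = {
    ("Camera", "Face"),
    ("Camera", "BlurredFace"),
    ("ImageDB", "BlurredFace"),
}


def organizations_collecting(category):
    """Return the organizations owning a component that can collect `category`.

    Components whose owner is not listed in `component_of` are reported
    under the placeholder "unknown" rather than guessed.
    """
    owners = set()
    for component, collected in component_can_collect:
        if collected == category:
            owners.add(component_of.get(component, "unknown"))
    return owners


print(sorted(organizations_collecting("Face")))
print(sorted(organizations_collecting("BlurredFace")))
```

The owner of the image database is not given in the slide text, which is why it appears as `"unknown"` in the second result.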

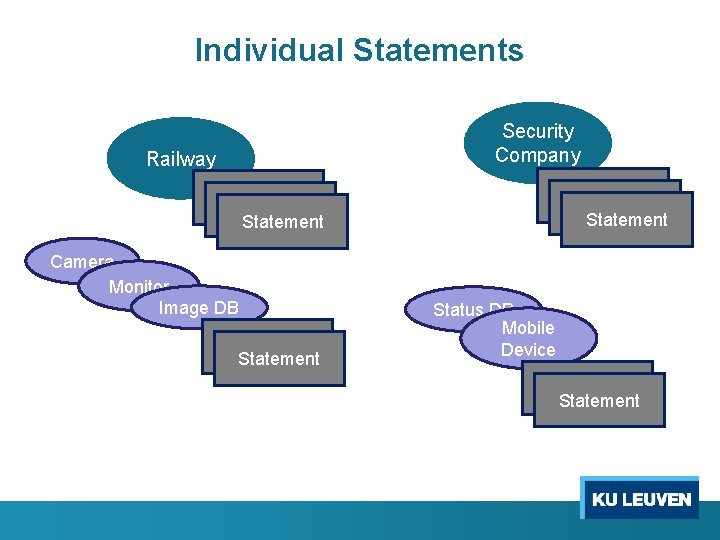

Individual Statements
[Figure labels: Security Company, Railway, Camera, Monitor, Image DB, Status DB, Mobile Device, with attached Statements]

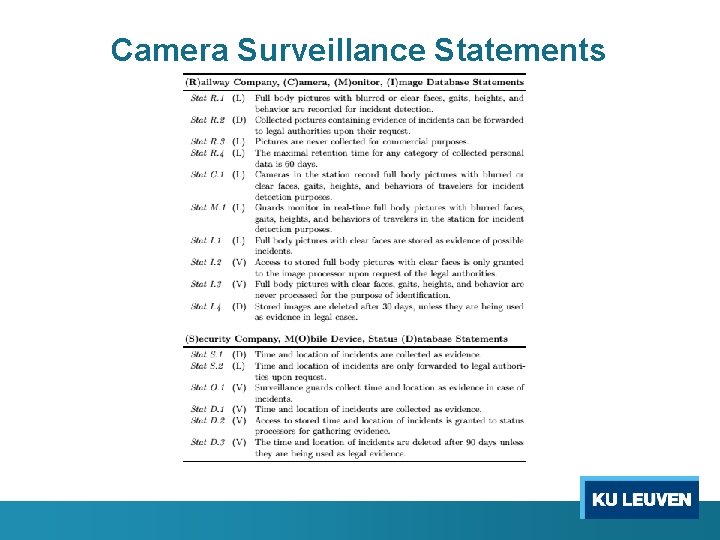

Camera Surveillance Statements

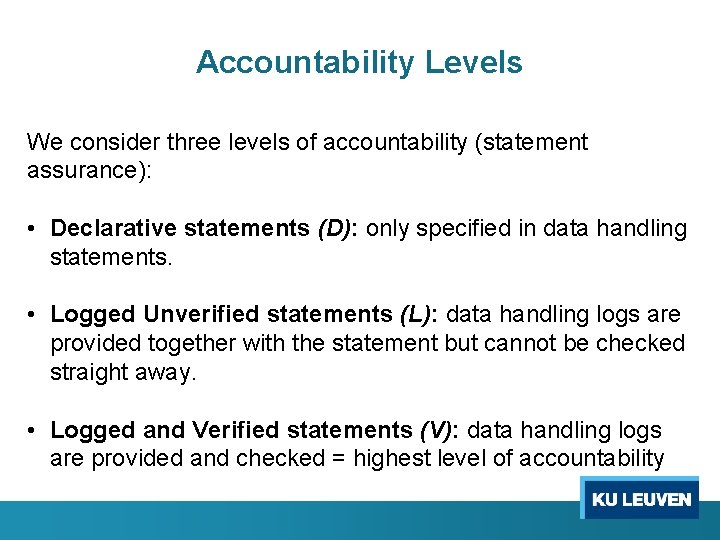

Accountability Levels
We consider three levels of accountability (statement assurance):
• Declarative statements (D): only specified in data handling statements.
• Logged Unverified statements (L): data handling logs are provided together with the statement but cannot be checked straight away.
• Logged and Verified statements (V): data handling logs are provided and checked. This is the highest level of accountability.
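Because the three levels are ordered from weakest to strongest, they map naturally onto an ordered enumeration. The Python sketch below is one possible encoding; the class name `Proof`, the numeric values, and the helper `weakest` are assumptions made for illustration.

```python
from enum import IntEnum


class Proof(IntEnum):
    """Statement assurance levels, ordered from weakest to strongest."""
    DECLARATIVE = 1          # D: only specified in the statement
    LOGGED_UNVERIFIED = 2    # L: logs provided, not checked straight away
    LOGGED_AND_VERIFIED = 3  # V: logs provided and checked


def weakest(levels):
    """Return the lowest assurance level in a non-empty collection."""
    return min(levels)


print(weakest([Proof.LOGGED_AND_VERIFIED, Proof.LOGGED_UNVERIFIED]).name)
```

Using `IntEnum` makes comparisons such as `Proof.LOGGED_AND_VERIFIED > Proof.DECLARATIVE` work directly, which is convenient for worst-case reasoning.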

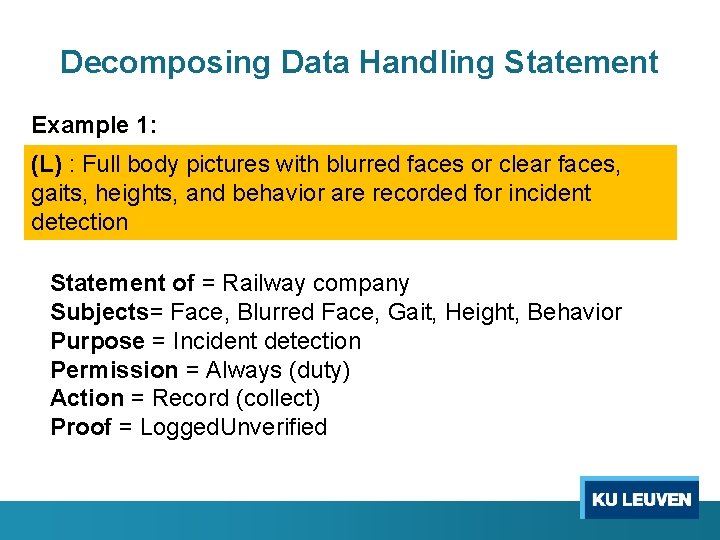

Decomposing Data Handling Statement
Example 1 (L): Full-body pictures with blurred faces or clear faces, gaits, heights, and behavior are recorded for incident detection.
Statement of = Railway company
Subjects = Face, Blurred Face, Gait, Height, Behavior
Purpose = Incident detection
Permission = Always (duty)
Action = Record (collect)
Proof = LoggedUnverified

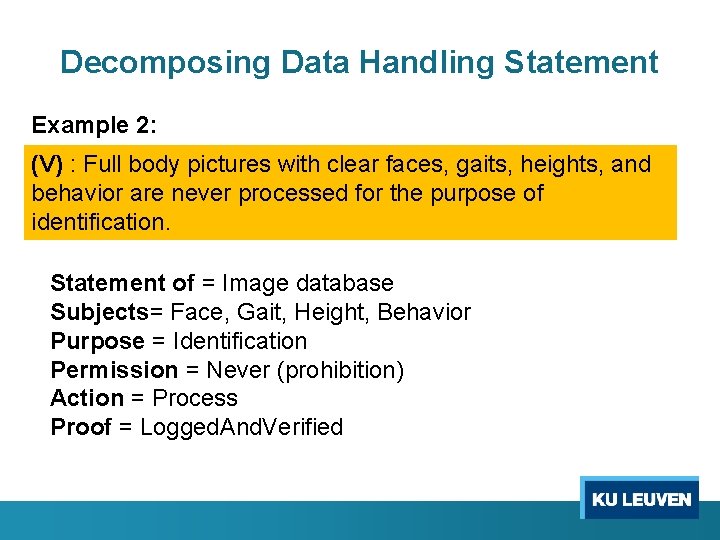

Decomposing Data Handling Statement
Example 2 (V): Full-body pictures with clear faces, gaits, heights, and behavior are never processed for the purpose of identification.
Statement of = Image database
Subjects = Face, Gait, Height, Behavior
Purpose = Identification
Permission = Never (prohibition)
Action = Process
Proof = LoggedAndVerified

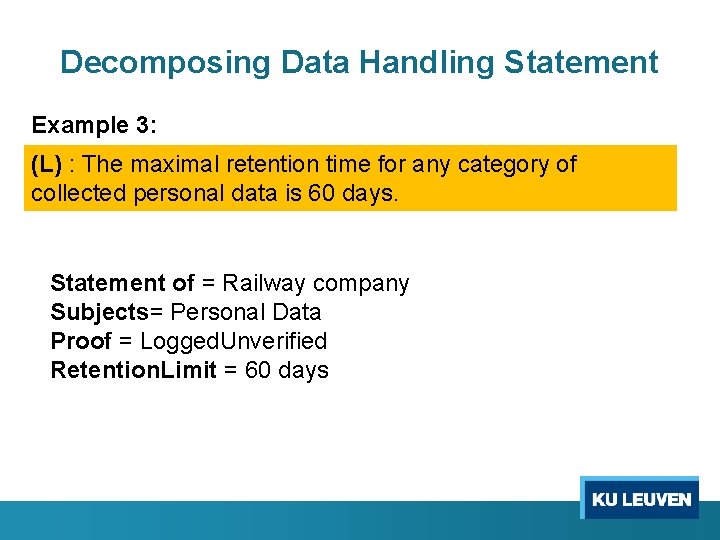

Decomposing Data Handling Statement
Example 3 (L): The maximal retention time for any category of collected personal data is 60 days.
Statement of = Railway company
Subjects = Personal Data
Proof = LoggedUnverified
Retention Limit = 60 days

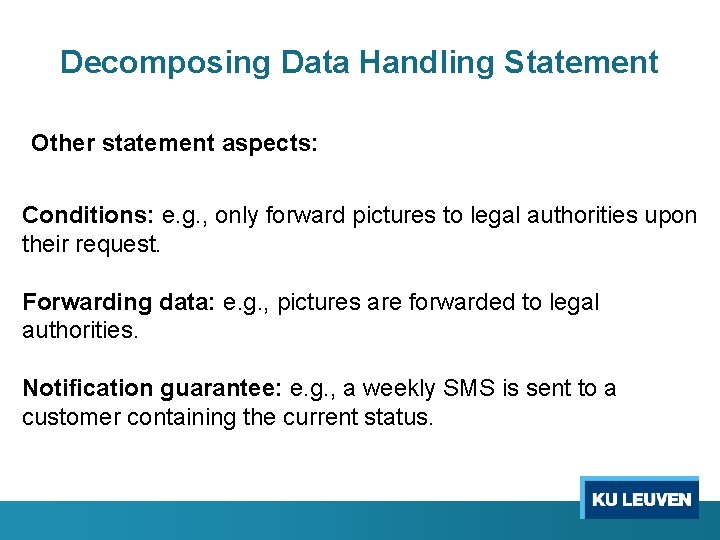

Decomposing Data Handling Statement
Other statement aspects:
• Conditions: e.g., only forward pictures to legal authorities upon their request.
• Forwarding data: e.g., pictures are forwarded to legal authorities.
• Notification guarantee: e.g., a weekly SMS is sent to a customer containing the current status.
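The decomposition in Examples 1 to 3 can be pictured as a record with optional fields. The Python sketch below is a hypothetical encoding, not the IDP vocabulary: the class `Statement`, its field names, and the helper `kind` are assumptions, while the field values are copied from the three examples.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class Statement:
    statement_of: str
    subjects: FrozenSet[str]
    proof: str
    purpose: Optional[str] = None
    permission: Optional[str] = None   # "Always" (duty) or "Never" (prohibition)
    action: Optional[str] = None
    retention_limit_days: Optional[int] = None


example1 = Statement(
    statement_of="Railway company",
    subjects=frozenset({"Face", "Blurred Face", "Gait", "Height", "Behavior"}),
    proof="LoggedUnverified",
    purpose="Incident detection",
    permission="Always",
    action="Record",
)
example2 = Statement(
    statement_of="Image database",
    subjects=frozenset({"Face", "Gait", "Height", "Behavior"}),
    proof="LoggedAndVerified",
    purpose="Identification",
    permission="Never",
    action="Process",
)
example3 = Statement(
    statement_of="Railway company",
    subjects=frozenset({"Personal Data"}),
    proof="LoggedUnverified",
    retention_limit_days=60,
)


def kind(statement):
    """Classify a statement by which fields are filled in (illustrative only)."""
    if statement.retention_limit_days is not None:
        return "retention limit"
    if statement.permission == "Always":
        return "duty"
    if statement.permission == "Never":
        return "prohibition"
    return "other"


for s in (example1, example2, example3):
    print(s.statement_of, "->", kind(s))
```

Conditions, forwarding destinations, and notification guarantees would be further optional fields in the same style; they are left out to keep the sketch short.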

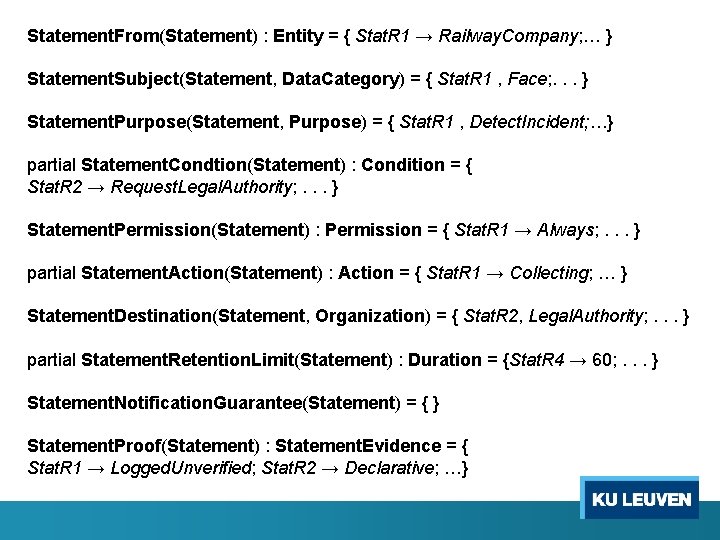

StatementFrom(Statement) : Entity = { StatR1 → RailwayCompany; … }
StatementSubject(Statement, DataCategory) = { StatR1, Face; … }
StatementPurpose(Statement, Purpose) = { StatR1, DetectIncident; … }
partial StatementCondtion(Statement) : Condition = { StatR2 → RequestLegalAuthority; … }
StatementPermission(Statement) : Permission = { StatR1 → Always; … }
partial StatementAction(Statement) : Action = { StatR1 → Collecting; … }
StatementDestination(Statement, Organization) = { StatR2, LegalAuthority; … }
partial StatementRetentionLimit(Statement) : Duration = { StatR4 → 60; … }
StatementNotificationGuarantee(Statement) = { }
StatementProof(Statement) : StatementEvidence = { StatR1 → LoggedUnverified; StatR2 → Declarative; … }

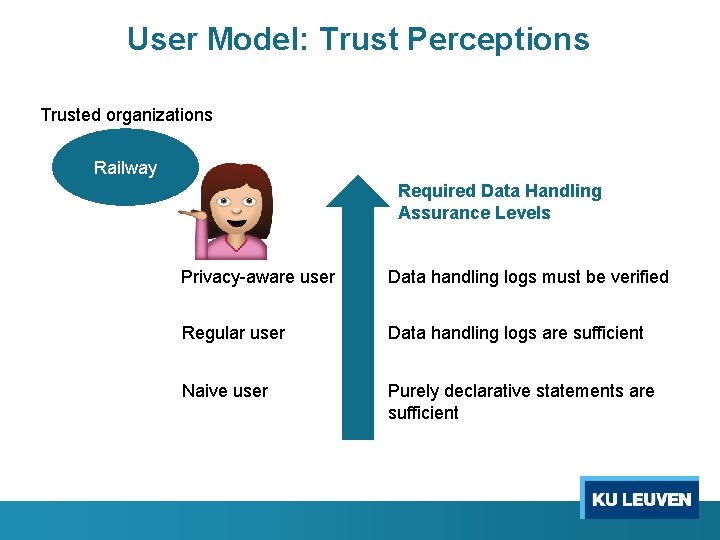

User Model: Trust Perceptions
Trusted organizations: Railway
Required Data Handling Assurance Levels:
• Privacy-aware user: data handling logs must be verified
• Regular user: data handling logs are sufficient
• Naive user: purely declarative statements are sufficient
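The user types above amount to a minimum assurance level per user. The Python sketch below shows one way to check whether a statement counts as guaranteed for a given user. It is not the IDP encoding; the names `Proof`, `REQUIRED_LEVEL`, and `is_guaranteed` are hypothetical, and the treatment of trusted organizations (their statements are accepted at any assurance level) is an assumption not spelled out on the slide.

```python
from enum import IntEnum


class Proof(IntEnum):
    DECLARATIVE = 1
    LOGGED_UNVERIFIED = 2
    LOGGED_AND_VERIFIED = 3


# Minimum assurance level each user type requires, taken from the slide.
REQUIRED_LEVEL = {
    "naive": Proof.DECLARATIVE,
    "regular": Proof.LOGGED_UNVERIFIED,
    "privacy_aware": Proof.LOGGED_AND_VERIFIED,
}


def is_guaranteed(user_type, proof, source=None, trusted=frozenset()):
    """Return True if a statement counts as guaranteed for this user type."""
    # ASSUMPTION: a statement from a trusted organization is accepted
    # regardless of its assurance level.
    if source in trusted:
        return True
    return proof >= REQUIRED_LEVEL[user_type]


print(is_guaranteed("regular", Proof.LOGGED_UNVERIFIED))
print(is_guaranteed("privacy_aware", Proof.LOGGED_UNVERIFIED))
print(is_guaranteed("privacy_aware", Proof.LOGGED_UNVERIFIED,
                    source="Railway", trusted=frozenset({"Railway"})))
```

A statement that fails this check would be treated as uncertain rather than guaranteed for that user.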

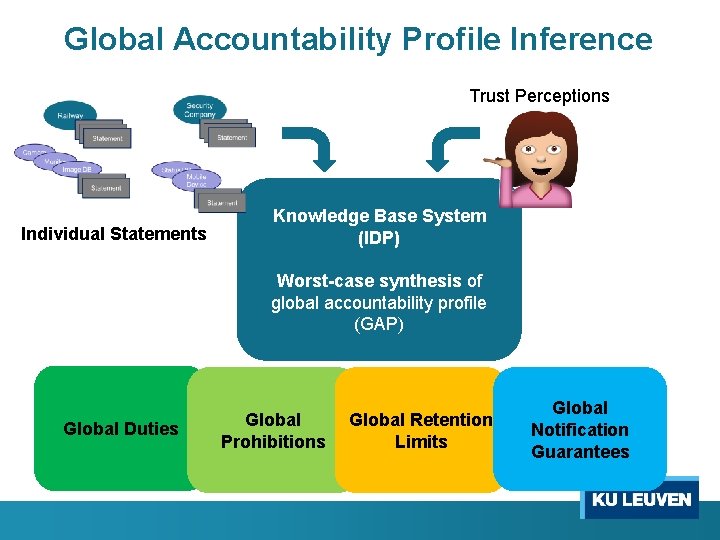

Global Accountability Profile Inference
Inputs: Trust Perceptions, Individual Statements
Knowledge Base System (IDP): worst-case synthesis of the global accountability profile (GAP)
Outputs: Global Duties, Global Prohibitions, Global Retention Limits, Global Notification Guarantees

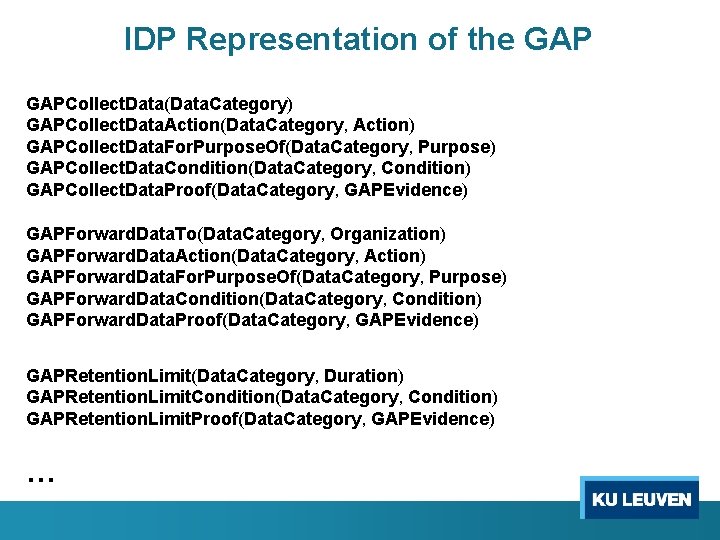

IDP Representation of the GAP
GAPCollectData(DataCategory)
GAPCollectDataAction(DataCategory, Action)
GAPCollectDataForPurposeOf(DataCategory, Purpose)
GAPCollectDataCondition(DataCategory, Condition)
GAPCollectDataProof(DataCategory, GAPEvidence)
GAPForwardDataTo(DataCategory, Organization)
GAPForwardDataAction(DataCategory, Action)
GAPForwardDataForPurposeOf(DataCategory, Purpose)
GAPForwardDataCondition(DataCategory, Condition)
GAPForwardDataProof(DataCategory, GAPEvidence)
GAPRetentionLimit(DataCategory, Duration)
GAPRetentionLimitCondition(DataCategory, Condition)
GAPRetentionLimitProof(DataCategory, GAPEvidence)
…

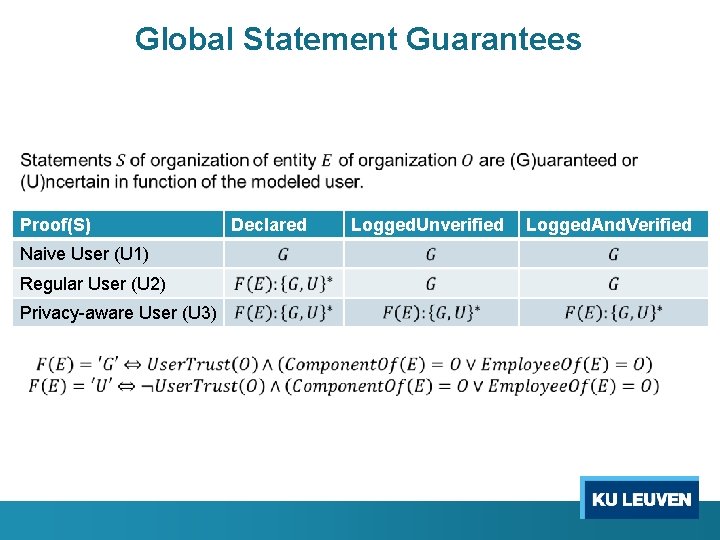

Global Statement Guarantees
[Table: Proof(S) (Declared, LoggedUnverified, LoggedAndVerified) against user type: Naive User (U1), Regular User (U2), Privacy-aware User (U3)]

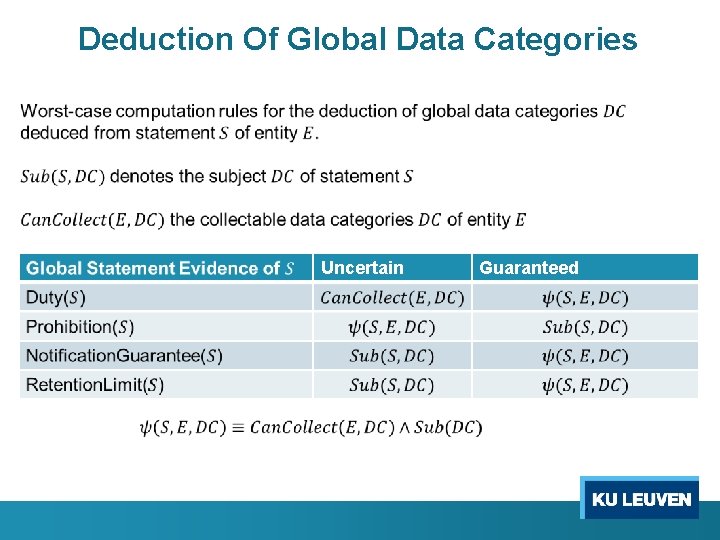

Deduction Of Global Data Categories
[Figure legend: Uncertain, Guaranteed]

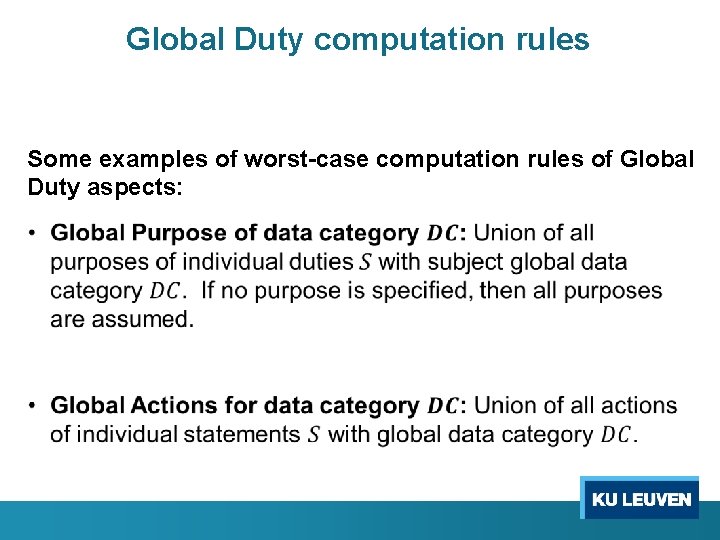

Global Duty computation rules
Some examples of worst-case computation rules of Global Duty aspects:

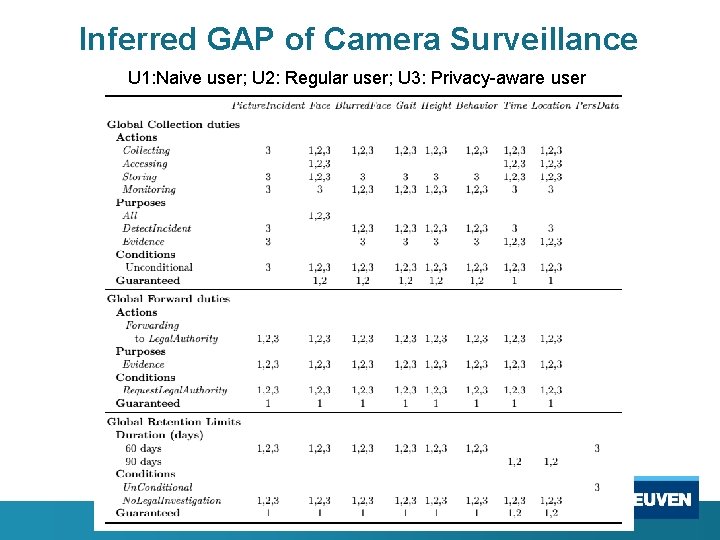
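The rules themselves appear only in the slide figure. As a rough illustration of what "worst case" can mean for one duty aspect, the Python sketch below takes the weakest assurance level among all collection duties that cover a data category. This is one plausible reading, not the authors' rule set; `StatX` and `StatY` are hypothetical statements added for the example, and `global_duty_proof` is a hypothetical helper.

```python
from enum import IntEnum


class Proof(IntEnum):
    DECLARATIVE = 1
    LOGGED_UNVERIFIED = 2
    LOGGED_AND_VERIFIED = 3


# (statement id, data category, proof) for collection duties.
duties = [
    ("StatR1", "Face", Proof.LOGGED_UNVERIFIED),   # from the slides
    ("StatX", "Face", Proof.DECLARATIVE),          # hypothetical
    ("StatY", "Gait", Proof.LOGGED_AND_VERIFIED),  # hypothetical
]


def global_duty_proof(duties):
    """Map each data category to the weakest proof among its duties."""
    worst = {}
    for _statement_id, category, proof in duties:
        worst[category] = min(worst.get(category, proof), proof)
    return worst


for category, proof in sorted(global_duty_proof(duties).items()):
    print(category, proof.name)
```

Under this reading, a single weakly supported statement lowers the global assurance for every data category it covers.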

Inferred GAP of Camera Surveillance
U1: Naive user; U2: Regular user; U3: Privacy-aware user

Evaluation

Modeling Concepts
• Modeling concepts are defined for statements containing single declarations.
• Statements containing multiple declarations must be split. E.g., "The image database stores the blurred faces and gait for a maximum of 30 days and for the purpose of statistics and marketing."
  o Must be split into two statements:
    • a duty that blurred face and gait are stored
    • a retention limit that it stores personal data for a maximum of 30 days
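The split described above can be sketched as a small helper that turns one composite declaration into a duty and a retention limit. The Python below is illustrative only: the function `split_statement`, the dictionary keys, and the `"Declarative"` proof value in the usage are assumptions, since the slide does not give an assurance level for this example.

```python
def split_statement(entity, subjects, purposes, action, max_days, proof):
    """Split a composite declaration into a duty and a retention limit."""
    duty = {
        "statement_of": entity,
        "subjects": list(subjects),
        "purposes": list(purposes),
        "permission": "Always",
        "action": action,
        "proof": proof,
    }
    retention = {
        "statement_of": entity,
        "subjects": ["Personal Data"],  # the slide phrases the limit over personal data
        "retention_limit_days": max_days,
        "proof": proof,
    }
    return [duty, retention]


duty, retention = split_statement(
    entity="Image database",
    subjects=["Blurred Face", "Gait"],
    purposes=["Statistics", "Marketing"],
    action="Store",
    max_days=30,
    proof="Declarative",  # ASSUMPTION: not stated on the slide
)
print(duty)
print(retention)
```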

Framework Components

Modeling Extensions
• Detecting Conflicts
  o Models can be extended with user privacy preferences. Conflicts can be detected between these and the data handling statements in the system.
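As a rough idea of what conflict detection could look like for one kind of preference, the Python sketch below compares a user's maximum acceptable retention time with the retention limits in the statements. The preference format, the function `retention_conflicts`, and the 30-day preference are hypothetical; the 60-day limit for `StatR4` comes from the slides, and attaching it to the subject "Personal Data" is an assumption based on Example 3.

```python
def retention_conflicts(preferences, statements):
    """Return (statement id, category, stated limit, preferred limit) tuples
    for every retention limit that exceeds the user's preference."""
    conflicts = []
    for statement in statements:
        limit = statement.get("retention_limit_days")
        if limit is None:
            continue  # not a retention statement
        for category in statement["subjects"]:
            preferred = preferences.get(category)
            if preferred is not None and limit > preferred:
                conflicts.append((statement["id"], category, limit, preferred))
    return conflicts


statements = [
    {"id": "StatR4", "subjects": ["Personal Data"], "retention_limit_days": 60},
    {"id": "StatR1", "subjects": ["Face"]},  # a duty, no retention limit
]
preferences = {"Personal Data": 30}  # hypothetical user preference, in days

print(retention_conflicts(preferences, statements))
```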

Conclusions

Conclusions
• A modeling approach for inferring accountability is realized in IDP (a knowledge base system). Results can be found at code.google.com/p/inferring-accountability
• A panoramic view is inferred from individual data handling practices using worst-case computation rules.
• Different types of users can easily be modeled.
• We modeled coarse-grained implicit data handling evidence. A more refined approach would model the semantics of log compliance explicitly. This is difficult to implement using first-order logic (FO).

Questions
