ID3 & Fuzzy ID3 by Moeini Aghtaie

ID3 & Fuzzy ID3
By: Moeini Aghtaie, Mohammad Javad Ghorbani
Supervisor: Dr. Bagheri Shouraki
ID3 & Fuzzy ID3, spring 2009, 11/6/2020

Overview
- Introduction
- Decision Tree
- ID3
- Fuzzy Finite State Machine

Introduction
- The decision tree approach is most useful in classification problems.
- With this technique, a tree is constructed to model the classification process.
- Once the tree is built, it is applied to each tuple in the database and results in a classification for that tuple.
- There are two basic steps in the technique:
  - building the tree from the database
  - applying the tree to the database
- Most research has focused on how to build effective trees.

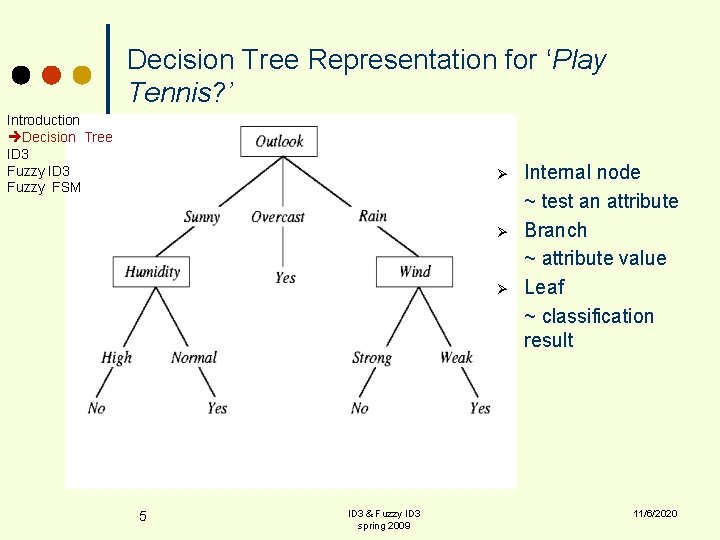

What is a Decision Tree?
- An inductive learning task: use particular facts to make more generalized conclusions.
- A predictive model based on a branching series of Boolean tests: these smaller Boolean tests are less complex than a one-stage classifier.
- Let's look at a sample decision tree…

Decision Tree Representation for ‘Play Tennis?’
- Internal node: tests an attribute
- Branch: an attribute value
- Leaf: the classification result
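To make the representation concrete, here is a minimal Python sketch of a decision tree stored as nested dictionaries. The tree is the common textbook Play Tennis tree (Outlook, then Humidity or Wind), assumed rather than read from the slide's figure; `PLAY_TENNIS_TREE` and `classify` are illustrative names.

```python
# Textbook "Play Tennis" tree (assumed): an internal node names the attribute
# it tests, each key under "branches" is an attribute value, a str is a leaf.
PLAY_TENNIS_TREE = {
    "attribute": "Outlook",
    "branches": {
        "Sunny": {"attribute": "Humidity",
                  "branches": {"High": "No", "Normal": "Yes"}},
        "Overcast": "Yes",
        "Rain": {"attribute": "Wind",
                 "branches": {"Strong": "No", "Weak": "Yes"}},
    },
}


def classify(tree, example):
    """Test the node's attribute, follow the matching branch, stop at a leaf."""
    node = tree
    while not isinstance(node, str):
        value = example[node["attribute"]]  # internal node: test an attribute
        node = node["branches"][value]      # branch: the attribute's value
    return node                             # leaf: the classification result


print(classify(PLAY_TENNIS_TREE, {"Outlook": "Sunny", "Humidity": "Normal", "Wind": "Weak"}))  # → Yes
```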

The Advantages of DT Algorithm
- Easy to understand
- Easy to generate rules
- Easy to represent using a database access language like SQL

The Disadvantages of DT Algorithm
- Do not easily handle continuous data: when building the tree from the database, not every classification problem maps cleanly onto a division of the domain space.
- Difficult to handle missing data: the correct branches in the tree may not be taken.
- Overfitting to the training data may occur: when too many branches are generated, they may include noise or outliers.
- Correlations among attributes in the database are ignored by the DT process.

What is ID3 (Iterative Dichotomiser 3)?
- A mathematical algorithm for building the decision tree.
- Invented by J. Ross Quinlan in 1979.
- Uses information theory, introduced by Shannon in 1948.
- Builds the tree from the top down, with no backtracking.
- Information gain is used to select the most useful attribute for classification.

Which Attribute is Best to Test?
- The one that is most informative for the classification we want to get, i.e., the attribute that best reduces the uncertainty or disorder.
- How can we measure something like that?
  - We need a quantity to measure the disorder in a set of examples.
  - Then we need a quantity to measure how much the disorder is reduced once we know the value of a particular attribute.

Entropy
- Entropy is minimized when all values of the target attribute are the same.
- Entropy is maximized when all values of the target attribute are equally likely (i.e., the result is random).

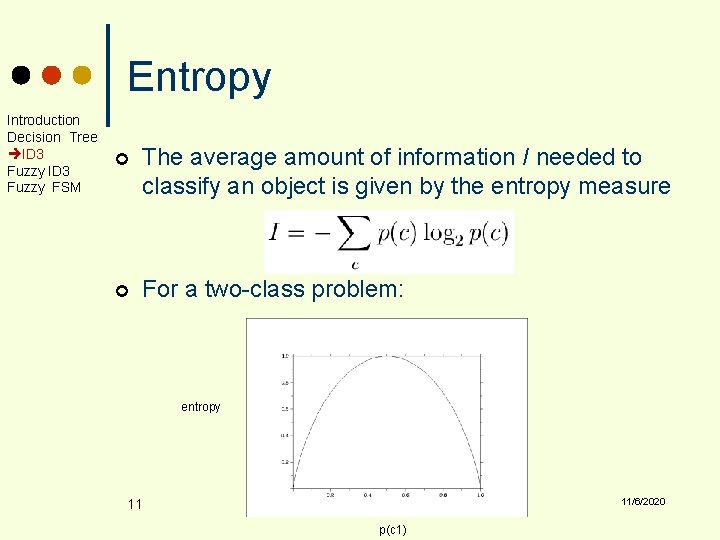

Entropy
- The average amount of information $I$ needed to classify an object is given by the entropy measure: $I = -\sum_{c} p(c)\log_2 p(c)$.
- For a two-class problem, entropy plotted against $p(c_1)$ is 0 when $p(c_1)$ is 0 or 1 and reaches its maximum of 1 bit at $p(c_1) = 0.5$.
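A minimal Python sketch of the entropy measure, assuming class labels arrive as a plain list; `entropy` and `binary_entropy` are illustrative names. The checks reproduce the limiting cases above and the 4F/5M value used later in the Simpsons example.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy in bits: I = -sum_c p(c) * log2 p(c).
    Classes that never occur are absent from the Counter, so 0 * log2(0) never arises."""
    total = len(labels)
    return sum(-(n / total) * math.log2(n / total) for n in Counter(labels).values())


def binary_entropy(p):
    """Two-class entropy as a function of p = p(c1), taking 0 * log2(0) as 0."""
    return sum(-q * math.log2(q) for q in (p, 1.0 - p) if q > 0)


print(round(entropy(["F"] * 4 + ["M"] * 5), 4))  # → 0.9911
print(binary_entropy(0.5))                       # → 1.0 (maximum: result is random)
```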

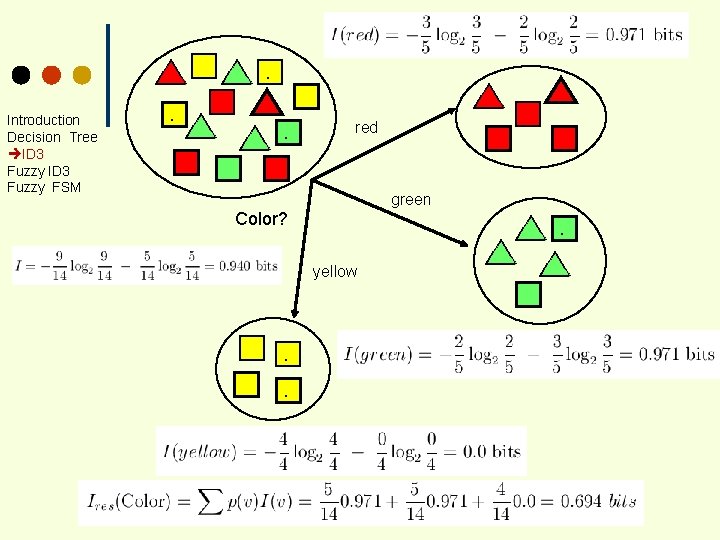

Figure: splitting the examples on Color? (branches: red, green, yellow).

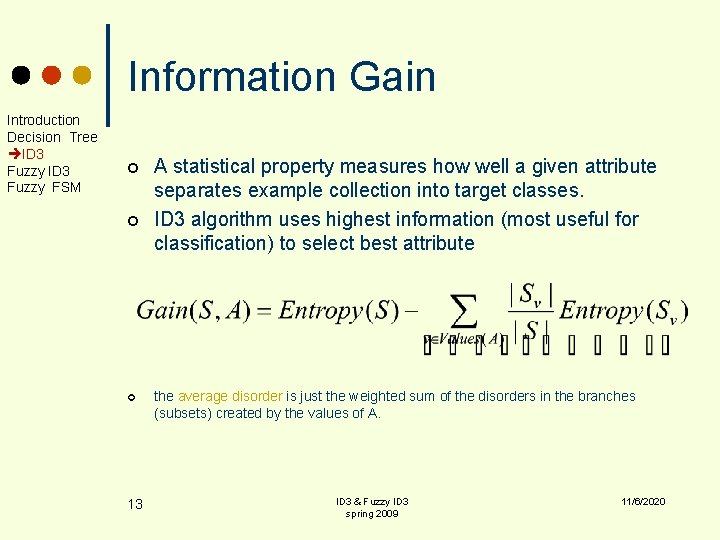

Information Gain
- A statistical property that measures how well a given attribute separates the example collection into target classes.
- ID3 uses the highest information gain (most useful for classification) to select the best attribute.
- The average disorder after splitting on attribute $A$ is the weighted sum of the disorders in the branches (subsets) created by the values of $A$: $\text{Gain}(S, A) = \text{Entropy}(S) - \sum_{v} \frac{|S_v|}{|S|}\,\text{Entropy}(S_v)$.
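The weighted-sum definition can be sketched as follows, assuming each example is a dict from attribute name to a categorical value; `information_gain` and the toy Color/Dot data are hypothetical.

```python
import math
from collections import Counter, defaultdict


def entropy(labels):
    total = len(labels)
    return sum(-(n / total) * math.log2(n / total) for n in Counter(labels).values())


def information_gain(examples, labels, attribute):
    """Gain(S, A) = Entropy(S) - sum over values v of |S_v|/|S| * Entropy(S_v)."""
    branches = defaultdict(list)              # value of A -> labels in that subset
    for example, label in zip(examples, labels):
        branches[example[attribute]].append(label)
    average_disorder = sum(len(s) / len(labels) * entropy(s) for s in branches.values())
    return entropy(labels) - average_disorder


# Hypothetical toy data: Color separates the classes perfectly, Dot not at all.
examples = [{"Color": "red", "Dot": "yes"}, {"Color": "red", "Dot": "no"},
            {"Color": "green", "Dot": "yes"}, {"Color": "green", "Dot": "no"}]
labels = ["triangle", "triangle", "square", "square"]
print(information_gain(examples, labels, "Color"))  # → 1.0
print(information_gain(examples, labels, "Dot"))    # → 0.0
```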

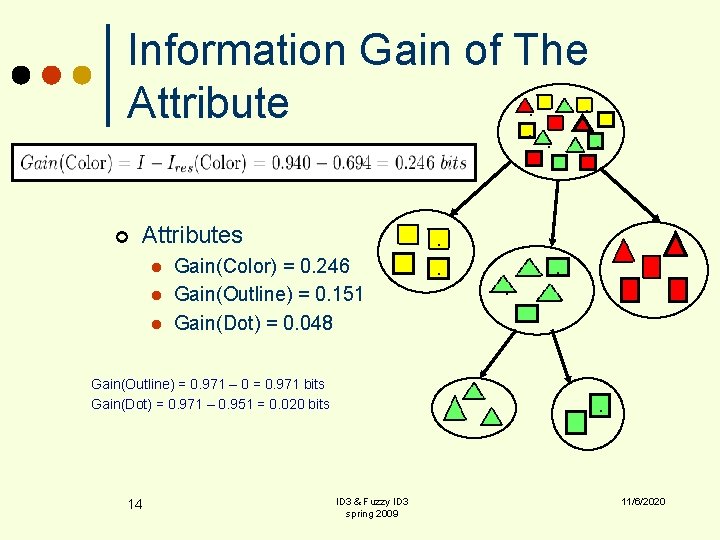

Information Gain of the Attributes
- At the root: Gain(Color) = 0.246, Gain(Outline) = 0.151, Gain(Dot) = 0.048, so Color is chosen.
- Within one Color branch (entropy 0.971 bits): Gain(Outline) = 0.971 - 0 = 0.971 bits and Gain(Dot) = 0.971 - 0.951 = 0.020 bits, so Outline is chosen.

Overfitting
- Where do we stop growing the tree?
- What if there are noisy (mislabelled) data in the data set as well?

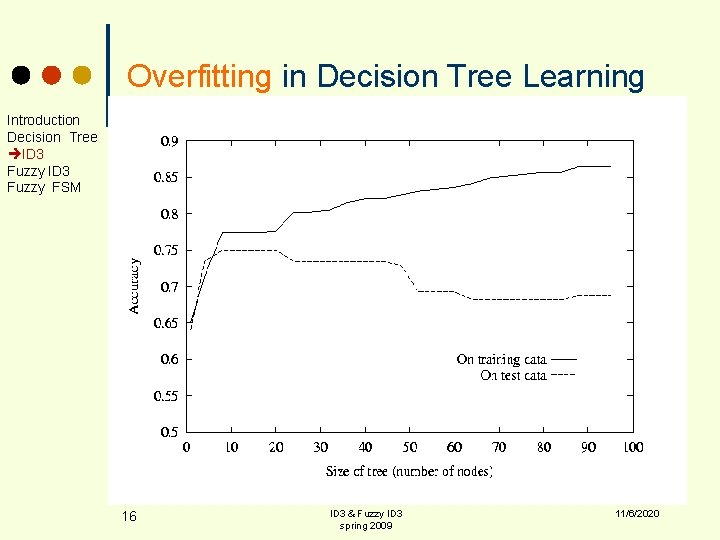

Overfitting in Decision Tree Learning

How Can We Avoid Overfitting?
Pruning trees: a technique for reducing the number of attributes used in a tree.
- Two types of pruning:
  - Pre-pruning (forward pruning)
  - Post-pruning (backward pruning)

Prepruning
- In prepruning, we decide during the building process when to stop adding attributes (possibly based on their information gain).
- However, this may be problematic. Why?
  - Sometimes attributes individually contribute little to a decision, but combined they may have a significant impact.
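A small hypothetical illustration of why prepruning can stop too early: on XOR-style data, each attribute alone has zero information gain, yet splitting on one and then the other classifies perfectly. The data and the `gain` helper are invented for illustration.

```python
import math
from collections import Counter


def entropy(labels):
    total = len(labels)
    return sum(-(n / total) * math.log2(n / total) for n in Counter(labels).values())


def gain(rows, attr):
    """Information gain of splitting rows (dicts with a 'label' key) on attr."""
    labels = [r["label"] for r in rows]
    remainder = 0.0
    for value in {r[attr] for r in rows}:
        subset = [r["label"] for r in rows if r[attr] == value]
        remainder += len(subset) / len(rows) * entropy(subset)
    return entropy(labels) - remainder


# Hypothetical XOR data: the label is 1 exactly when a != b.
xor_rows = [{"a": a, "b": b, "label": a ^ b} for a in (0, 1) for b in (0, 1)]

print(gain(xor_rows, "a"), gain(xor_rows, "b"))  # → 0.0 0.0 (a gain-based stop halts here)
a0 = [r for r in xor_rows if r["a"] == 0]
print(gain(a0, "b"))                             # → 1.0 (b is decisive once a is known)
```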

Postpruning
- Postpruning waits until the full decision tree has been built and then prunes the attributes.
- Two techniques:
  - Subtree replacement
  - Subtree raising
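The slides name subtree replacement without giving a criterion. One common choice, sketched below under that assumption, is the reduced-error criterion: working bottom-up, replace a subtree with a leaf for its majority training class whenever that leaf makes no more errors on a held-out validation set. The node format, function names, and data are hypothetical, and subtree raising is not shown.

```python
# Hypothetical node format: a leaf is a class label (str); an internal node is
# {"attribute": name, "branches": {value: subtree}, "majority": label}, where
# "majority" is the most common training class that reached the node.


def predict(tree, example):
    """Walk down the tree; unseen values fall back to the node's majority class."""
    while isinstance(tree, dict):
        tree = tree["branches"].get(example[tree["attribute"]], tree["majority"])
    return tree


def errors(tree, data):
    return sum(predict(tree, x) != y for x, y in data)


def replace_subtrees(tree, validation):
    """Bottom-up subtree replacement (reduced-error criterion, assumed): a subtree
    becomes a leaf with its majority class if that leaf makes no more validation
    errors. Modifies the tree in place; nodes reached by no validation data are pruned."""
    if not isinstance(tree, dict):
        return tree
    for value, child in list(tree["branches"].items()):
        reaching = [(x, y) for x, y in validation if x[tree["attribute"]] == value]
        tree["branches"][value] = replace_subtrees(child, reaching)
    if errors(tree["majority"], validation) <= errors(tree, validation):
        return tree["majority"]
    return tree


tree = {"attribute": "Outlook", "majority": "Yes", "branches": {
    "Sunny": {"attribute": "Wind", "majority": "No",
              "branches": {"Strong": "No", "Weak": "Yes"}},
    "Overcast": "Yes"}}
validation = [({"Outlook": "Sunny", "Wind": "Weak"}, "No"),
              ({"Outlook": "Sunny", "Wind": "Strong"}, "No"),
              ({"Outlook": "Overcast", "Wind": "Weak"}, "Yes")]
pruned = replace_subtrees(tree, validation)
print(pruned["branches"])  # → {'Sunny': 'No', 'Overcast': 'Yes'}
```

Here the Wind split under Sunny is replaced by the leaf "No", while the root split on Outlook survives because removing it would add validation errors.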

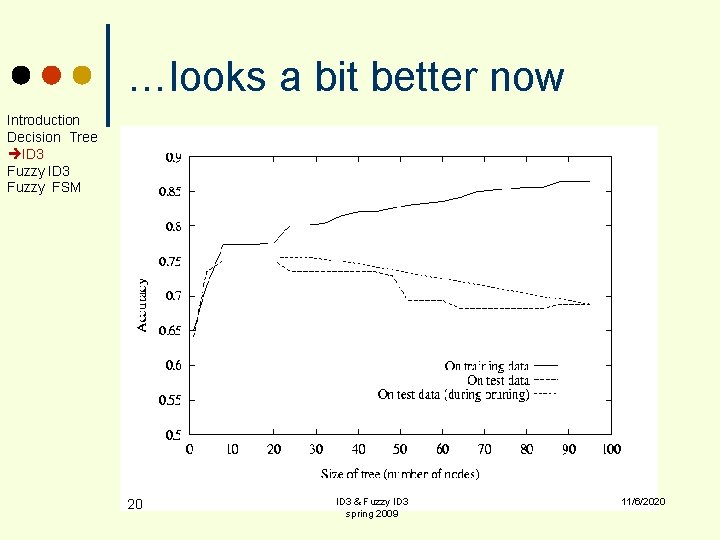

…looks a bit better now

Example: The Simpsons

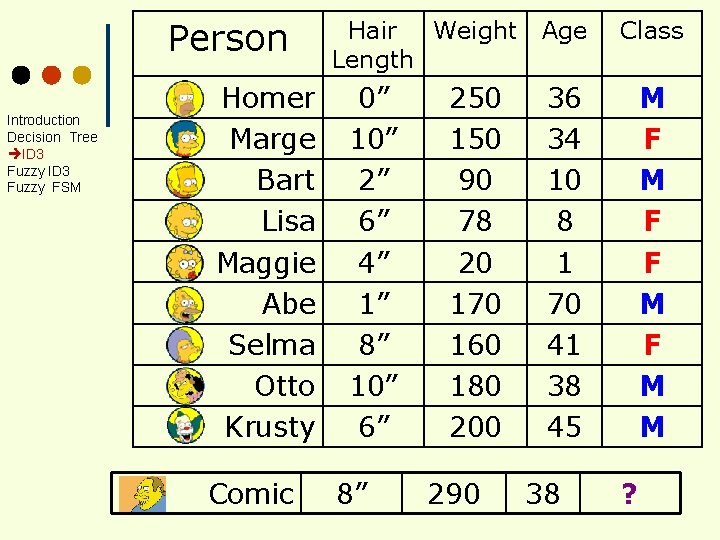

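| Person | Hair Length | Weight | Age | Class |
|--------|-------------|--------|-----|-------|
| Homer  | 0”          | 250    | 36  | M     |
| Marge  | 10”         | 150    | 34  | F     |
| Bart   | 2”          | 90     | 10  | M     |
| Lisa   | 6”          | 78     | 8   | F     |
| Maggie | 4”          | 20     | 1   | F     |
| Abe    | 1”          | 170    | 70  | M     |
| Selma  | 8”          | 160    | 41  | F     |
| Otto   | 10”         | 180    | 38  | M     |
| Krusty | 6”          | 200    | 45  | M     |
| Comic  | 8”          | 290    | 38  | ?     |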

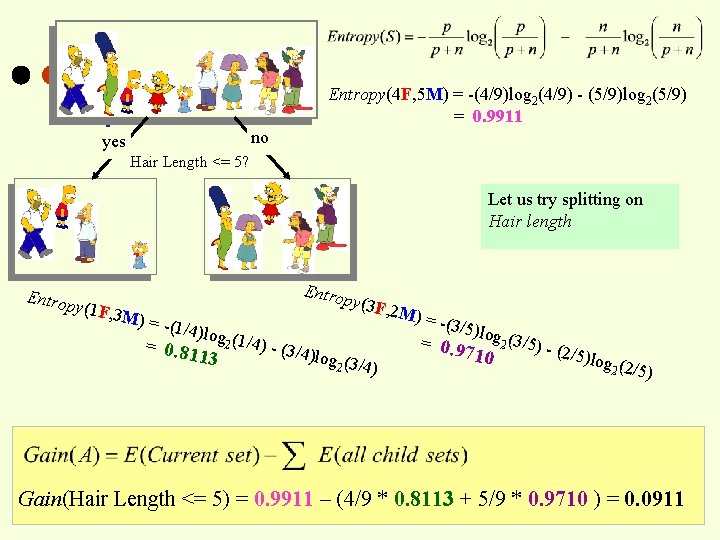

Let us try splitting on Hair Length, with the test Hair Length <= 5? (yes/no).
- All people: $\text{Entropy}(4F, 5M) = -\frac{4}{9}\log_2\frac{4}{9} - \frac{5}{9}\log_2\frac{5}{9} = 0.9911$
- yes: $\text{Entropy}(1F, 3M) = -\frac{1}{4}\log_2\frac{1}{4} - \frac{3}{4}\log_2\frac{3}{4} = 0.8113$
- no: $\text{Entropy}(3F, 2M) = -\frac{3}{5}\log_2\frac{3}{5} - \frac{2}{5}\log_2\frac{2}{5} = 0.9710$
- $\text{Gain}(\text{Hair Length} \le 5) = 0.9911 - \left(\frac{4}{9}\cdot 0.8113 + \frac{5}{9}\cdot 0.9710\right) = 0.0911$

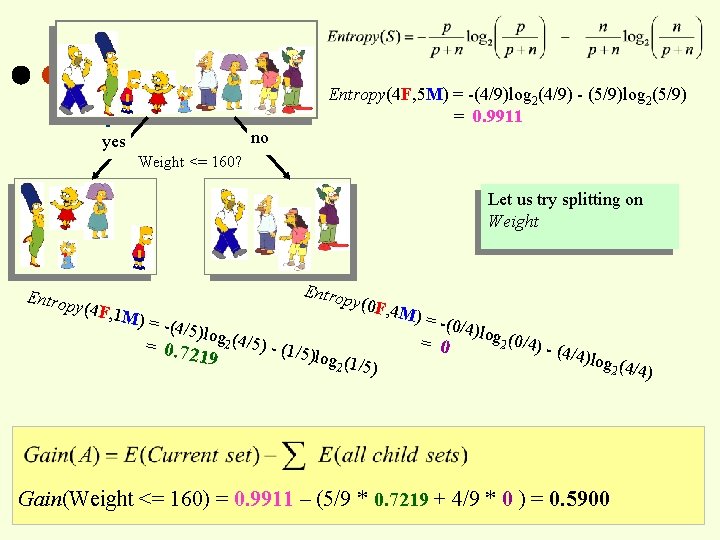

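Let us try splitting on Weight, with the test Weight <= 160? (yes/no).
- All people: $\text{Entropy}(4F, 5M) = -\frac{4}{9}\log_2\frac{4}{9} - \frac{5}{9}\log_2\frac{5}{9} = 0.9911$
- yes: $\text{Entropy}(4F, 1M) = -\frac{4}{5}\log_2\frac{4}{5} - \frac{1}{5}\log_2\frac{1}{5} = 0.7219$
- no: $\text{Entropy}(0F, 4M) = -\frac{0}{4}\log_2\frac{0}{4} - \frac{4}{4}\log_2\frac{4}{4} = 0$ (taking $0 \log_2 0 = 0$)
- $\text{Gain}(\text{Weight} \le 160) = 0.9911 - \left(\frac{5}{9}\cdot 0.7219 + \frac{4}{9}\cdot 0\right) = 0.5900$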

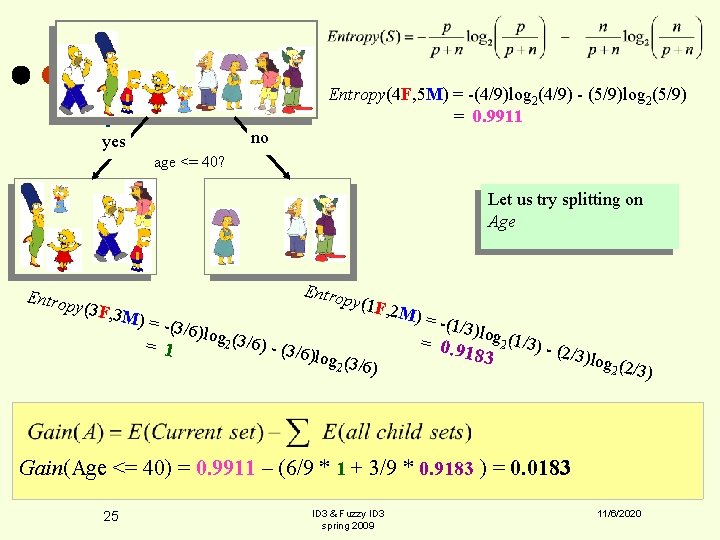

Let us try splitting on Age, with the test Age <= 40? (yes/no).
- All people: $\text{Entropy}(4F, 5M) = -\frac{4}{9}\log_2\frac{4}{9} - \frac{5}{9}\log_2\frac{5}{9} = 0.9911$
- yes: $\text{Entropy}(3F, 3M) = -\frac{3}{6}\log_2\frac{3}{6} - \frac{3}{6}\log_2\frac{3}{6} = 1$
- no: $\text{Entropy}(1F, 2M) = -\frac{1}{3}\log_2\frac{1}{3} - \frac{2}{3}\log_2\frac{2}{3} = 0.9183$
- $\text{Gain}(\text{Age} \le 40) = 0.9911 - \left(\frac{6}{9}\cdot 1 + \frac{3}{9}\cdot 0.9183\right) = 0.0183$
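The three gains above can be checked with a few lines of Python. The tuple layout (hair length, weight, age, class) and the name `split_gain` are illustrative choices; the data are the nine labelled rows of the table.

```python
import math

# The nine labelled people: (hair length, weight, age, class).
PEOPLE = {
    "Homer": (0, 250, 36, "M"), "Marge": (10, 150, 34, "F"),
    "Bart": (2, 90, 10, "M"), "Lisa": (6, 78, 8, "F"),
    "Maggie": (4, 20, 1, "F"), "Abe": (1, 170, 70, "M"),
    "Selma": (8, 160, 41, "F"), "Otto": (10, 180, 38, "M"),
    "Krusty": (6, 200, 45, "M"),
}


def entropy(labels):
    n = len(labels)
    return sum(-(labels.count(c) / n) * math.log2(labels.count(c) / n)
               for c in set(labels))


def split_gain(test):
    """Entropy of all people minus the weighted entropy of the yes/no subsets."""
    rows = list(PEOPLE.values())
    yes = [r[3] for r in rows if test(r)]
    no = [r[3] for r in rows if not test(r)]
    weighted = sum(len(s) / len(rows) * entropy(s) for s in (yes, no) if s)
    return entropy([r[3] for r in rows]) - weighted


print(round(split_gain(lambda r: r[0] <= 5), 4))    # Hair Length <= 5 → 0.0911
print(round(split_gain(lambda r: r[1] <= 160), 4))  # Weight <= 160    → 0.59
print(round(split_gain(lambda r: r[2] <= 40), 4))   # Age <= 40        → 0.0183
```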

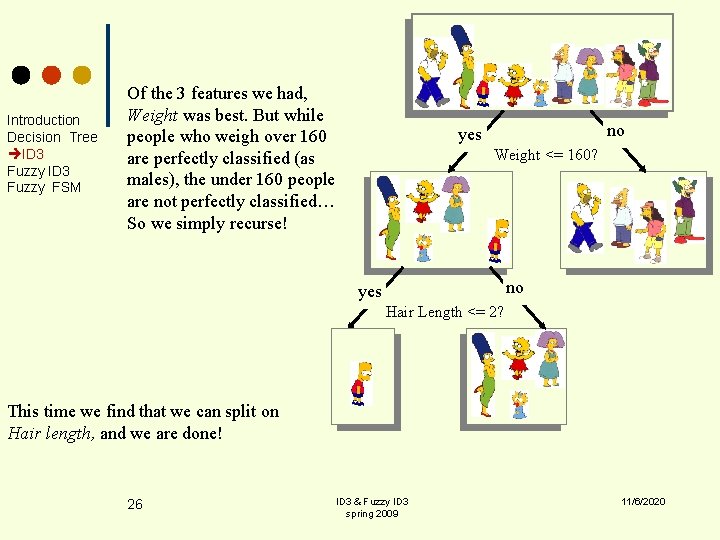

Of the three features we had, Weight was best. But while people who weigh over 160 are perfectly classified (as males), those weighing 160 or less are not perfectly classified… So we simply recurse!
Figure: Weight <= 160?, with the yes branch split again on Hair Length <= 2?
This time we find that we can split on Hair Length, and we are done!
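The recursion can be sketched as a small ID3-style builder over Boolean threshold tests. As a simplifying assumption, the candidate tests are only the four thresholds that appear on these slides; a fuller implementation would search thresholds for each continuous attribute. `TESTS` and `id3` are hypothetical names.

```python
import math

# The nine labelled people: (hair length, weight, age, class).
PEOPLE = [(0, 250, 36, "M"), (10, 150, 34, "F"), (2, 90, 10, "M"),
          (6, 78, 8, "F"), (4, 20, 1, "F"), (1, 170, 70, "M"),
          (8, 160, 41, "F"), (10, 180, 38, "M"), (6, 200, 45, "M")]

# Hypothetical fixed list of candidate tests, taken from the slides.
TESTS = {
    "Hair Length <= 5": lambda r: r[0] <= 5,
    "Hair Length <= 2": lambda r: r[0] <= 2,
    "Weight <= 160": lambda r: r[1] <= 160,
    "Age <= 40": lambda r: r[2] <= 40,
}


def entropy(rows):
    labels = [r[3] for r in rows]
    n = len(labels)
    return sum(-(labels.count(c) / n) * math.log2(labels.count(c) / n) for c in set(labels))


def gain(rows, test):
    yes = [r for r in rows if test(r)]
    no = [r for r in rows if not test(r)]
    return entropy(rows) - sum(len(s) / len(rows) * entropy(s) for s in (yes, no) if s)


def id3(rows, tests):
    labels = [r[3] for r in rows]
    # Keep only tests that split this node into two non-empty parts.
    usable = {k: t for k, t in tests.items() if 0 < sum(map(t, rows)) < len(rows)}
    if len(set(labels)) == 1 or not usable:
        return max(set(labels), key=labels.count)   # leaf: (majority) class
    name = max(usable, key=lambda k: gain(rows, usable[k]))  # highest gain wins
    test = usable.pop(name)                          # do not reuse the chosen test
    yes = [r for r in rows if test(r)]
    no = [r for r in rows if not test(r)]
    return {name: {"yes": id3(yes, usable), "no": id3(no, usable)}}


print(id3(PEOPLE, TESTS))
# → {'Weight <= 160': {'yes': {'Hair Length <= 2': {'yes': 'M', 'no': 'F'}}, 'no': 'M'}}
```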

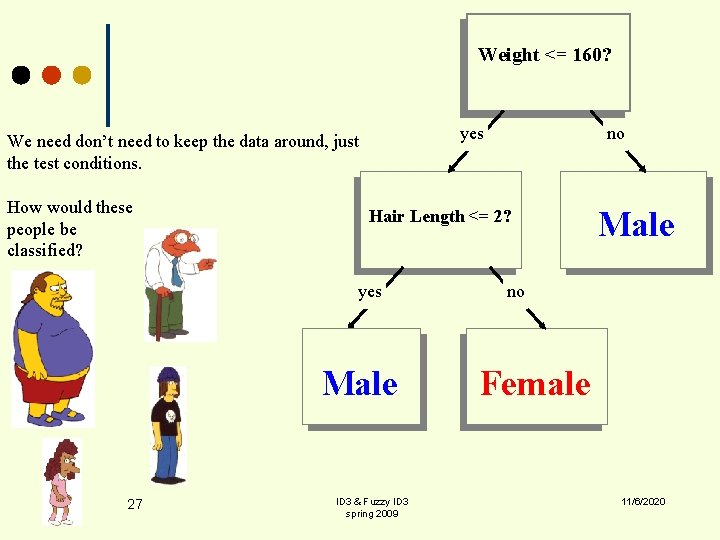

We don't need to keep the data around, just the test conditions. How would these people be classified?
Figure: Weight <= 160? no → Male; yes → Hair Length <= 2? (yes → Male, no → Female)

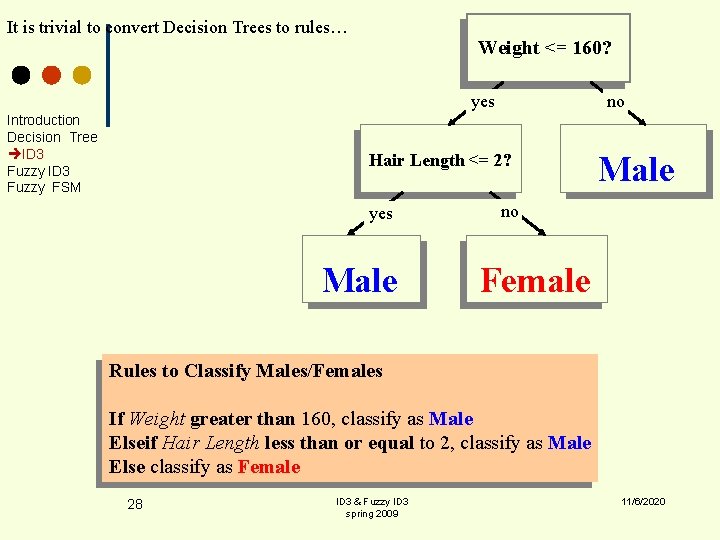

It is trivial to convert decision trees to rules…
Figure: Weight <= 160? no → Male; yes → Hair Length <= 2? (yes → Male, no → Female)
Rules to classify males/females:
- If Weight is greater than 160, classify as Male.
- Else if Hair Length is less than or equal to 2, classify as Male.
- Else classify as Female.
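Converting the tree to rules amounts to emitting one IF-THEN rule per root-to-leaf path. A minimal sketch, assuming the tree is stored as nested dicts of the form `{test: {"yes": ..., "no": ...}}` (a hypothetical format):

```python
def tree_to_rules(tree, conditions=()):
    """Emit one IF-THEN rule per root-to-leaf path; a leaf is a class label (str)."""
    if not isinstance(tree, dict):
        return [f"IF {' AND '.join(conditions) or 'TRUE'} THEN {tree}"]
    (test, branches), = tree.items()          # each internal node holds one test
    return (tree_to_rules(branches["yes"], conditions + (test,)) +
            tree_to_rules(branches["no"], conditions + (f"NOT ({test})",)))


TREE = {"Weight <= 160": {"yes": {"Hair Length <= 2": {"yes": "Male", "no": "Female"}},
                          "no": "Male"}}
for rule in tree_to_rules(TREE):
    print(rule)
```

Applied to Comic (weight 290), only the last rule fires, so Comic is classified as Male.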

ID3 & the Need for Fuzzification
- Learning from examples (concept acquisition) is one of the most important branches of machine learning.
- The induction of decision trees is an efficient way of learning from examples.
- Fuzzy decision trees incorporate cognitive uncertainties, such as vagueness and ambiguity, into the knowledge induction process.

ID3 & the Need for Fuzzification
- ID3 cannot provide any information in the intersection region when classes overlap.
- Decision trees are recognized as highly unstable classifiers with respect to minor perturbations in the training data.

Fuzzy ID3
- Construction:
  - handles imprecise attribute values (membership > 0 for more than one linguistic value)
  - handles imprecise class values
- Classification:
  - produces class membership values
  - fuzzy decision trees (FDTs) allow greater flexibility

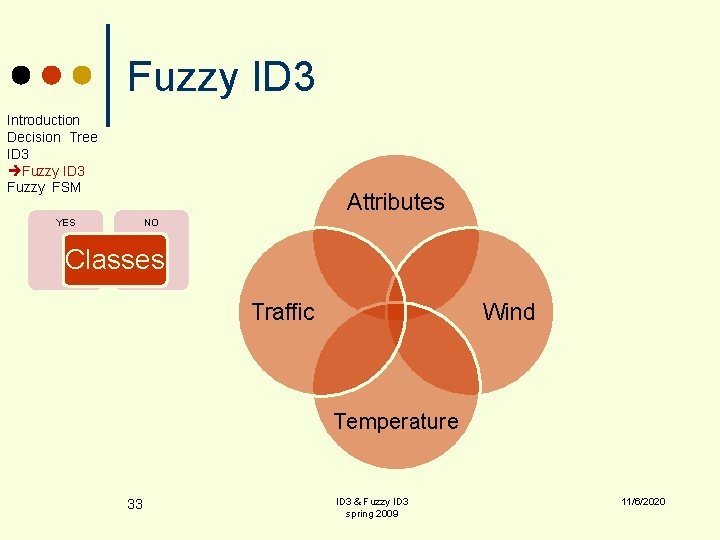

Fuzzy ID3: Example (Traffic Jam)
- When should I use a car, and when public transportation?

Fuzzy ID3
- Attributes: Traffic, Wind, Temperature
- Classes: YES, NO

Fuzzy ID3
- “Temperature”: Hot, Cool, Mild
- “Wind”: Strong, Weak
- “Traffic”: Long, Short

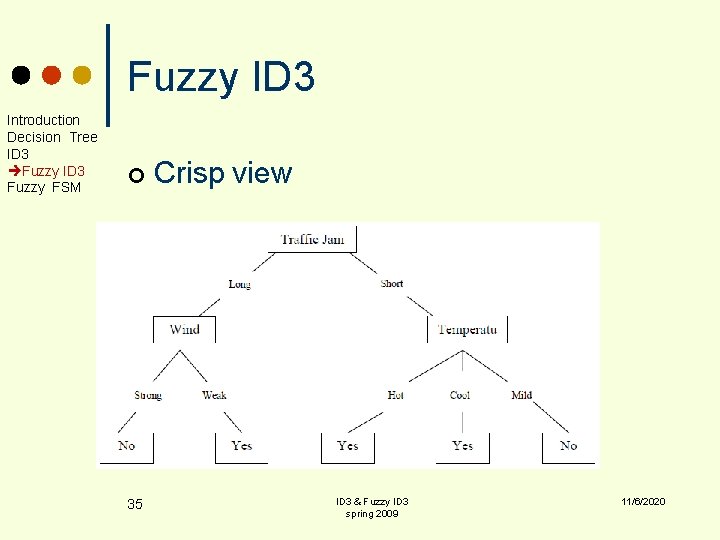

Fuzzy ID3
- Crisp view

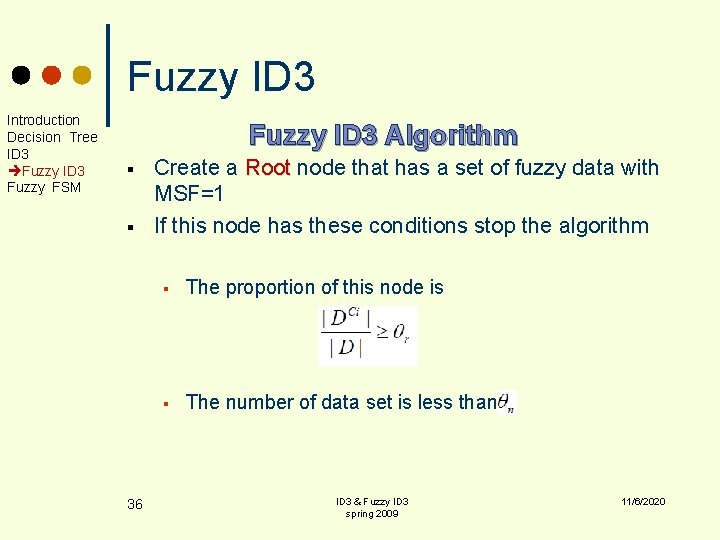

Fuzzy ID3 Algorithm
- Create a root node that has a set of fuzzy data with MSF = 1.
- If this node satisfies either of the following conditions, stop the algorithm:
  - the proportion of one class in this node is greater than the fuzziness control threshold;
  - the number of data in this node's set is less than the leaf decision threshold.

Fuzzy ID3
- Fuzziness control threshold: if the proportion of one class in a node's data set is greater than this threshold, stop expanding the tree.
- Leaf decision threshold: if the number of data in a node's data set is less than this threshold, stop expanding the tree.

Fuzzy ID3
- If a node does not satisfy these conditions, expand the tree using entropy or information gain (IG) over the attributes.
- Use the attribute with the best IG for the first subtree.
- Divide the samples among the different values of this (best) attribute, placing a sample under a value only if its MSF for that value is non-zero.
- Now, if the samples at every leaf of the tree belong to the same class, or the termination conditions are satisfied, stop; otherwise, repeat recursively for the other attributes.
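The construction steps above can be sketched in Python for a single node. This rests on assumptions the slides do not state: a sample's membership in a child node is the product of its membership in the parent and its MSF for the branch's linguistic value (some variants use the minimum), class proportions are sums of memberships, and the threshold values `theta_r` and `theta_n` are arbitrary. The samples are hypothetical, not the slides' traffic-jam table.

```python
import math

# Hypothetical fuzzy samples: MSF values for each linguistic value, plus a class.
SAMPLES = [
    {"Wind": {"Strong": 0.7, "Weak": 0.3}, "Traffic": {"Long": 1.0, "Short": 0.0}, "class": "Yes"},
    {"Wind": {"Strong": 0.2, "Weak": 0.8}, "Traffic": {"Long": 0.9, "Short": 0.1}, "class": "Yes"},
    {"Wind": {"Strong": 0.6, "Weak": 0.4}, "Traffic": {"Long": 0.0, "Short": 1.0}, "class": "No"},
    {"Wind": {"Strong": 0.3, "Weak": 0.7}, "Traffic": {"Long": 0.2, "Short": 0.8}, "class": "No"},
]


def class_proportions(weights, labels):
    """Share of the node's total membership that belongs to each class."""
    total = sum(weights)
    props = {}
    for w, y in zip(weights, labels):
        props[y] = props.get(y, 0.0) + w / total
    return props


def fuzzy_entropy(weights, labels):
    return -sum(p * math.log2(p) for p in class_proportions(weights, labels).values() if p > 0)


def fuzzy_gain(samples, weights, attribute):
    """Node entropy minus the size-weighted entropy of its fuzzy children."""
    labels = [s["class"] for s in samples]
    children = {term: [w * s[attribute][term] for w, s in zip(weights, samples)]
                for term in samples[0][attribute]}   # assumed product combination
    sizes = {t: sum(ws) for t, ws in children.items()}
    total = sum(sizes.values())
    remainder = sum(sizes[t] / total * fuzzy_entropy(children[t], labels)
                    for t in children if sizes[t] > 0)
    return fuzzy_entropy(weights, labels) - remainder


def should_stop(weights, labels, theta_r=0.8, theta_n=1.0):
    """Stop if one class's proportion exceeds theta_r (fuzziness control threshold)
    or the node's total membership is below theta_n (leaf decision threshold)."""
    return max(class_proportions(weights, labels).values()) > theta_r or sum(weights) < theta_n


root = [1.0] * len(SAMPLES)                    # root node: every MSF = 1
labels = [s["class"] for s in SAMPLES]
gains = {a: fuzzy_gain(SAMPLES, root, a) for a in ("Wind", "Traffic")}
best = max(gains, key=gains.get)               # attribute for the first subtree
print(gains, best)
```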

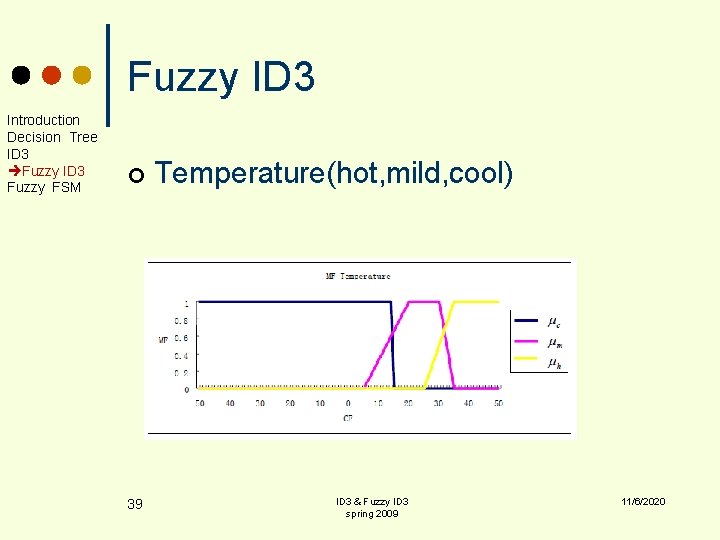

Fuzzy ID3
- Temperature (hot, mild, cool)

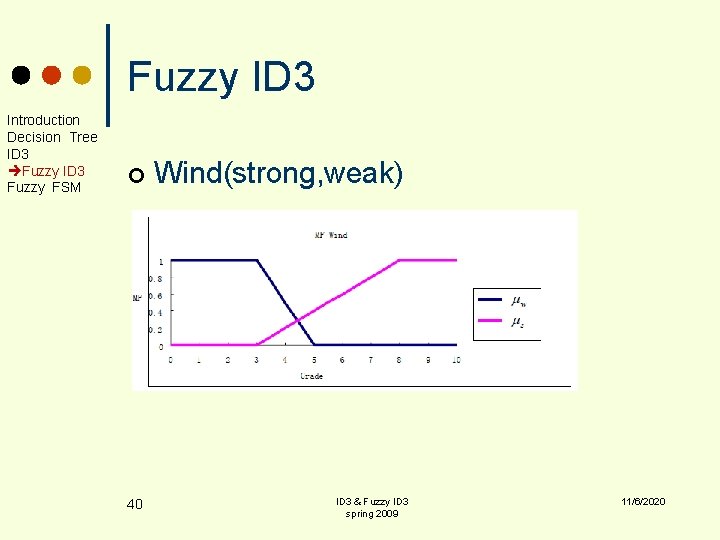

Fuzzy ID3
- Wind (strong, weak)

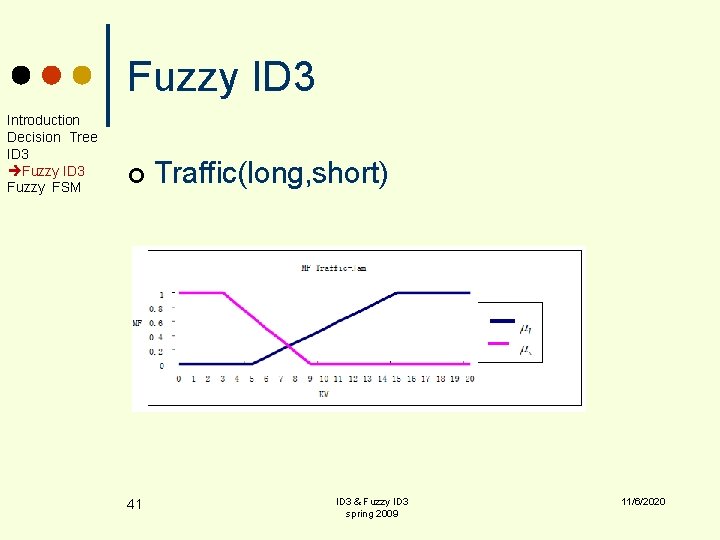

Fuzzy ID3
- Traffic (long, short)

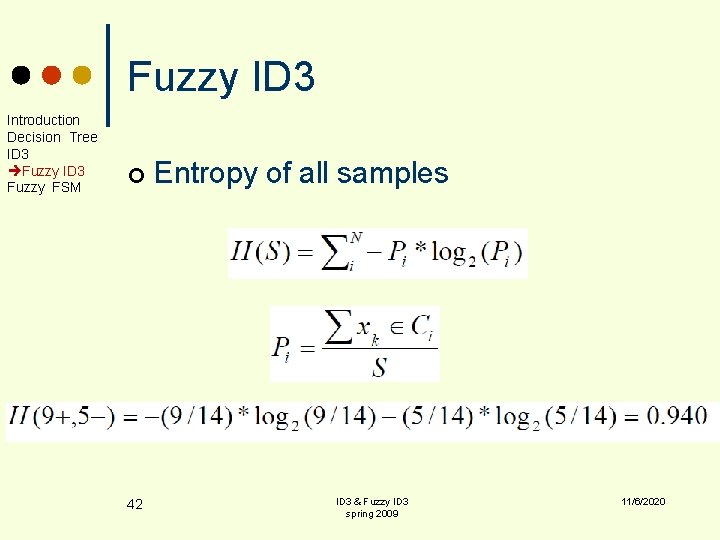

Fuzzy ID3
- Entropy of all samples

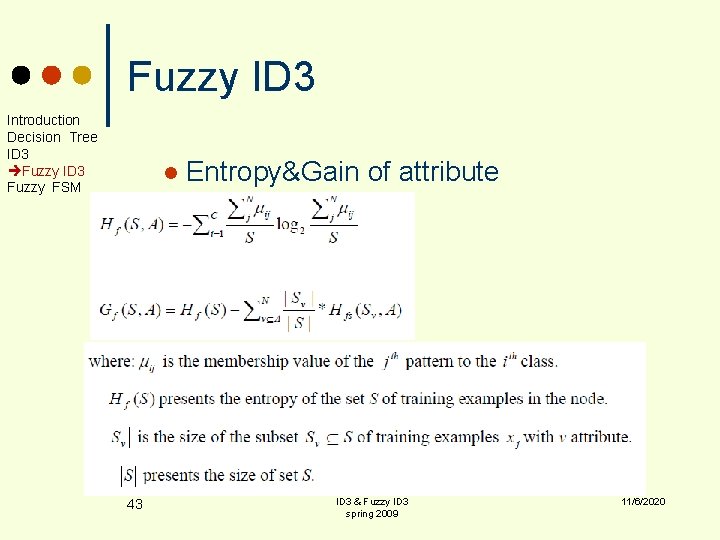

Fuzzy ID3
- Entropy & gain of an attribute

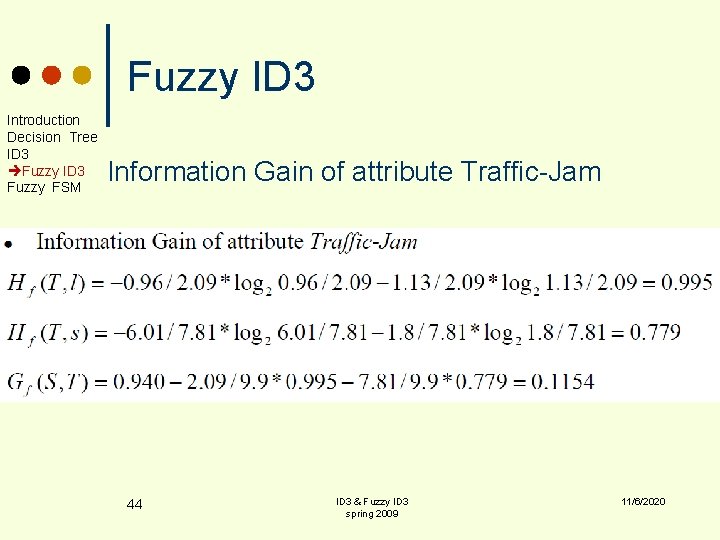

Fuzzy ID3
Information gain of attribute Traffic-Jam

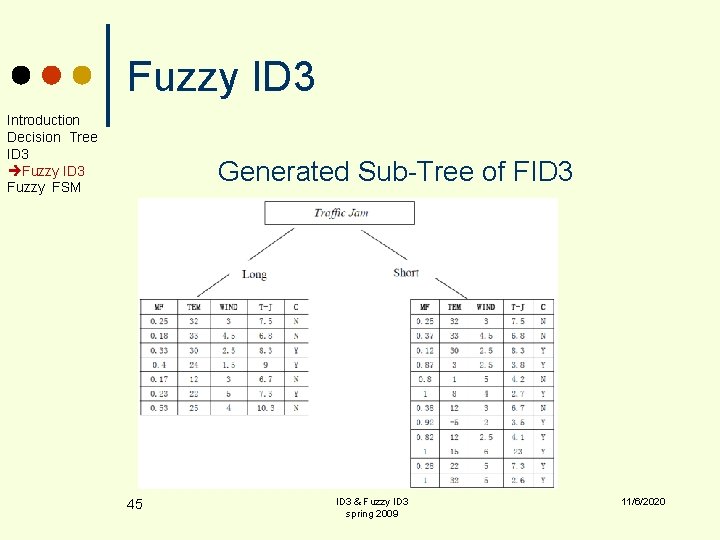

Fuzzy ID3
Generated sub-tree of FID3

Fuzzy ID3
Reasoning with FID3
- We start reasoning from the top node (root) of the FID3 tree, repeatedly testing the attribute at the node, branching along an edge according to its MSF value, and multiplying these values until the last node is reached.
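The reasoning step can be sketched as a recursive walk that follows every edge with a non-zero MSF and multiplies the degrees along each path; as an assumed aggregation rule, it sums degree × class proportion over all reached leaves. The tree, leaf proportions, and input memberships are hypothetical.

```python
def fuzzy_classify(node, msf, degree=1.0, scores=None):
    """Follow every edge whose MSF is non-zero, multiplying MSF values along the
    path; at each leaf add degree * class proportion to that class's score."""
    if scores is None:
        scores = {}
    if "attribute" not in node:                         # leaf: class proportions
        for cls, share in node.items():
            scores[cls] = scores.get(cls, 0.0) + degree * share
        return scores
    for term, child in node["branches"].items():
        mu = msf[node["attribute"]].get(term, 0.0)
        if mu > 0:
            fuzzy_classify(child, msf, degree * mu, scores)
    return scores


# Hypothetical FID3 tree: leaves hold class proportions.
TREE = {"attribute": "Traffic", "branches": {
    "Long": {"Yes": 0.9, "No": 0.1},
    "Short": {"attribute": "Wind", "branches": {
        "Strong": {"Yes": 0.7, "No": 0.3},
        "Weak": {"Yes": 0.2, "No": 0.8}}}}}

scores = fuzzy_classify(TREE, {"Traffic": {"Long": 0.4, "Short": 0.6},
                               "Wind": {"Strong": 0.5, "Weak": 0.5}})
print(scores)  # Yes ≈ 0.63, No ≈ 0.37
```

Unlike crisp ID3, which would follow only the single edge matching each value, every leaf here contributes in proportion to the product of memberships on its path.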

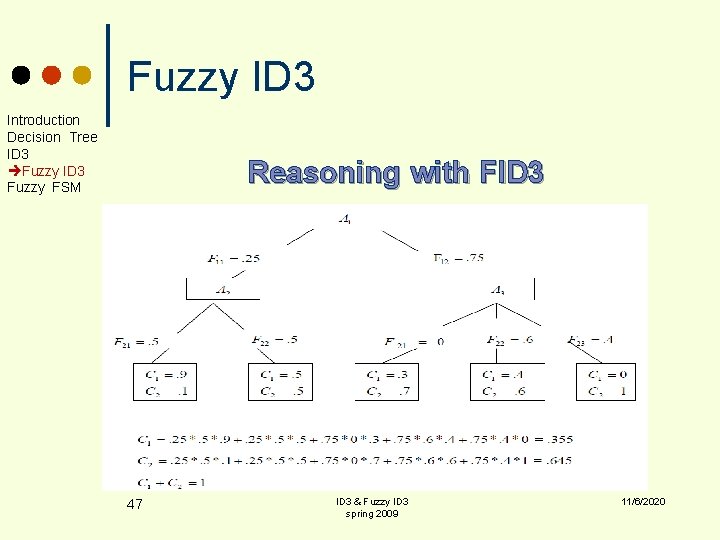

Fuzzy ID3
Reasoning with FID3

Fuzzy ID3
- Comparing the algorithms ID3 & FID3
  - Data representation: the data representation of ID3 is crisp, while for FID3 it is fuzzy, with continuous attributes.
  - Reasoning: ID3 reasoning begins from the root node of the tree and branches along one edge to test the tree; FID3 does not branch along one edge, but along all of the edges.

Fuzzy Finite State Machine
Finite State Machine
- A finite state machine is a device, or a model of a device, that has a finite number of states it can be in at any given time and can operate on input either to make transitions from one state to another or to cause an output or action to take place. A finite state machine can be in only one state at a time.
- (Informally) A finite state machine is a routing of AI building blocks that lets you control an agent.

Fuzzy Finite State Machine
- All finite state machines are composed of three things:
  - all possible states of the machine,
  - numerous input conditions, and
  - a transition function that connects states.
- The classic FSM is one in which each state has no knowledge of any other state in the system.
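A classic FSM needs only the three parts listed above. A minimal sketch with a hypothetical guard bot, where the transition function is a lookup table from (state, input) to the next state:

```python
# Hypothetical guard-bot FSM: states, input conditions, and a transition table.
TRANSITIONS = {
    ("Patrol", "see_enemy"): "Attack",
    ("Attack", "low_health"): "Flee",
    ("Attack", "enemy_lost"): "Patrol",
    ("Flee", "healed"): "Patrol",
}


def step(state, event):
    """Classic FSM: exactly one current state; unlisted inputs leave it unchanged."""
    return TRANSITIONS.get((state, event), state)


state = "Patrol"
for event in ["see_enemy", "low_health", "healed"]:
    state = step(state, event)
print(state)  # → Patrol
```

Because the table lives outside the states, no state needs to know about any other, matching the classic FSM described above.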

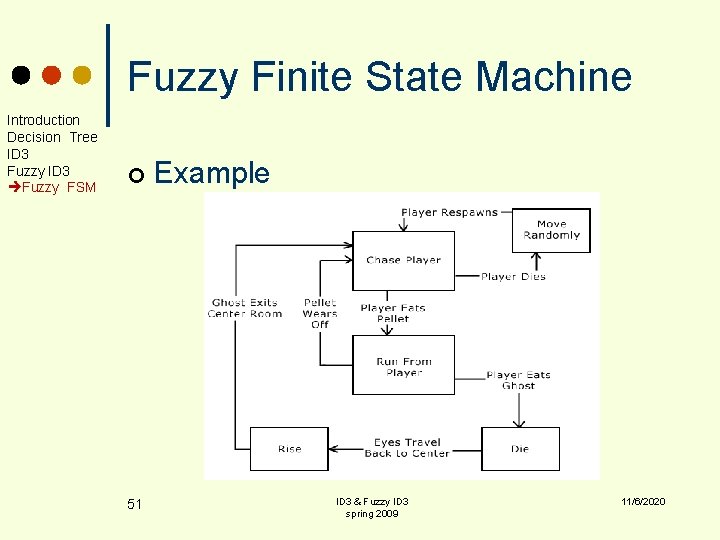

Fuzzy Finite State Machine
- Example

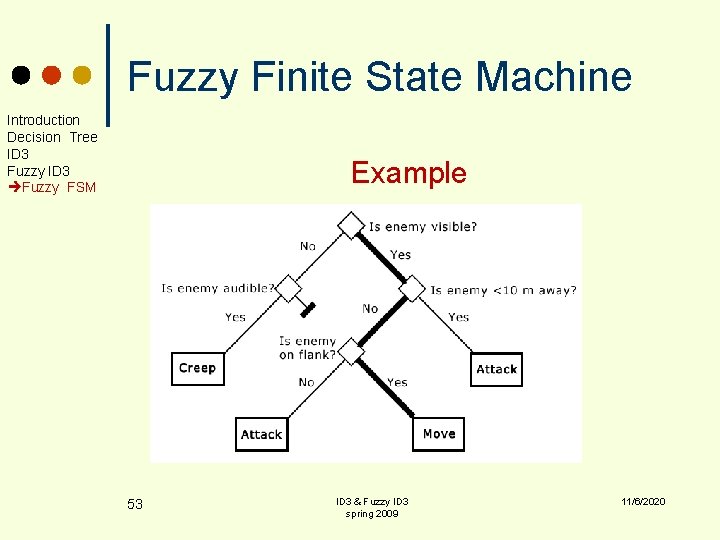

Fuzzy Finite State Machine
Decision Tree & FSM
- Decision trees are fast, easy to implement, and easy to maintain. They are ideal for testing the execution of actions with a finite set of conditionals.
- Decision trees can be used for knowledge sets in which an action may depend on a series of conditions being satisfied before it can be executed, and may even be executable under different series of conditions.

Fuzzy Finite State Machine
Example

Fuzzy Finite State Machine
- Finite state machines can be extended in the following ways:
  1. The ability to exist in more than one state simultaneously (fuzzy automata)
  2. Fuzzy transitions among states (using fuzzy rules)

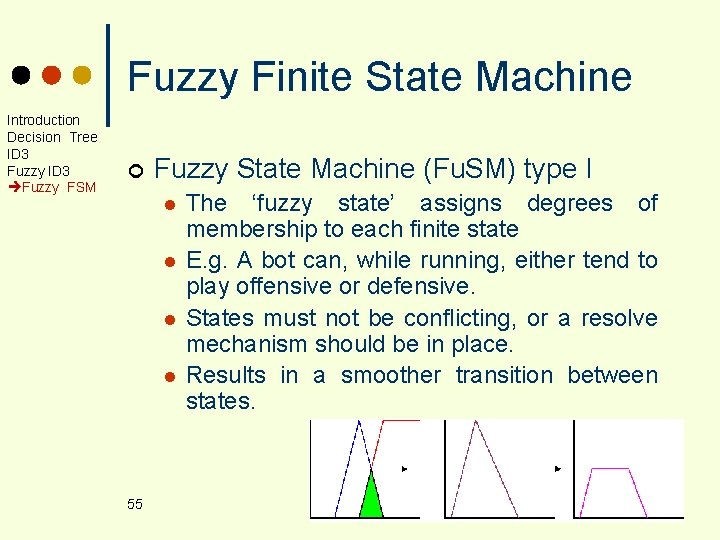

Fuzzy Finite State Machine
- Fuzzy State Machine (FuSM) type I
  - The ‘fuzzy state’ assigns degrees of membership to each finite state. E.g., a bot can, while running, tend to play either offensively or defensively.
  - States must not conflict, or a resolution mechanism should be in place.
  - Results in smoother transitions between states.
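A type I fuzzy state can be sketched as a dict of membership degrees, with the bot's output blended by a weighted average, which also serves as one possible resolution mechanism. The health-based memberships and the per-state speeds are hypothetical.

```python
def fuzzy_state(health):
    """Hypothetical type I fuzzy state: health in [0, 1] gives a degree of
    membership in each state instead of a single active state."""
    return {"Offensive": health, "Defensive": 1.0 - health}


# Hypothetical per-state outputs: how fast the bot closes in on the player.
APPROACH_SPEED = {"Offensive": 8.0, "Defensive": -4.0}


def blended_action(state):
    """Weighted average of each state's output by its membership degree."""
    total = sum(state.values())
    return sum(mu * APPROACH_SPEED[s] for s, mu in state.items()) / total


for h in (1.0, 0.75, 0.5, 0.25, 0.0):
    print(h, blended_action(fuzzy_state(h)))  # 8.0, 5.0, 2.0, -1.0, -4.0
```

As health falls, the output slides gradually from attacking to retreating instead of jumping between two behaviours.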

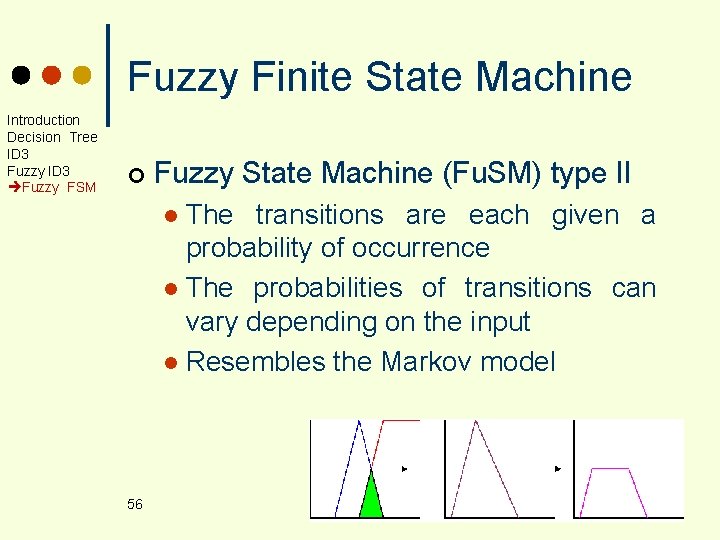

Fuzzy Finite State Machine
- Fuzzy State Machine (FuSM) type II
  - The transitions are each given a probability of occurrence.
  - The probabilities of transitions can vary depending on the input.
  - Resembles the Markov model.
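Type II can be sketched as input-dependent transition probabilities sampled at each step, much like a Markov chain whose transition matrix changes with the input. The states, the 50-unit range, and the "sticky" chase rule are hypothetical.

```python
import random


def transition_probs(state, distance):
    """Hypothetical input-dependent transition probabilities; each row sums to 1."""
    closeness = max(0.0, min(1.0, 1.0 - distance / 50.0))  # 1 when touching, 0 beyond 50
    if state == "Wander":
        p_leave = closeness                 # start chasing more often when close
        return {"Wander": 1.0 - p_leave, "Chase": p_leave}
    p_leave = 0.5 * (1.0 - closeness)       # "Chase" is sticky: leave more reluctantly
    return {"Chase": 1.0 - p_leave, "Wander": p_leave}


def step(state, distance, rng):
    """Sample the next state from the current row of probabilities."""
    probs = transition_probs(state, distance)
    return rng.choices(list(probs), weights=list(probs.values()))[0]


rng = random.Random(42)
state = "Wander"
for d in (80, 40, 10, 5, 60):
    state = step(state, d, rng)
    print(d, state)
```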

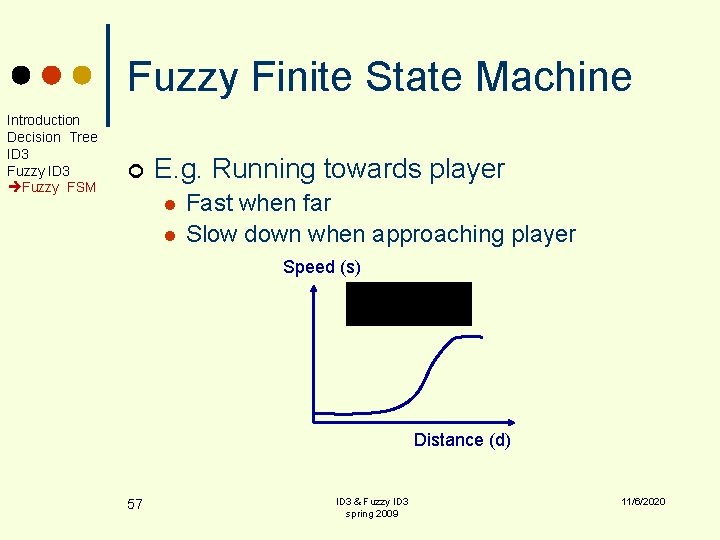

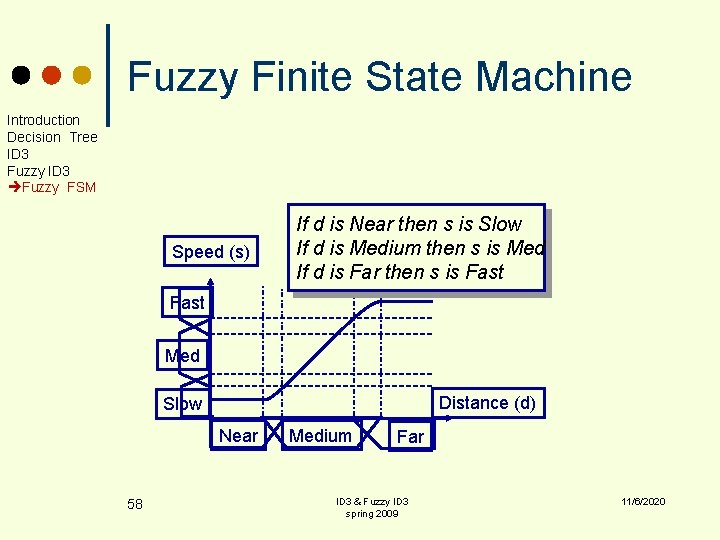

Fuzzy Finite State Machine
- E.g., running towards the player:
  - fast when far
  - slow down when approaching the player
Figure: Speed (s) versus Distance (d).

Fuzzy Finite State Machine
- If d is Near then s is Slow
- If d is Medium then s is Med
- If d is Far then s is Fast
Figure: fuzzy sets for Distance (d): Near, Medium, Far; and for Speed (s).
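A minimal sketch of these three rules, assuming triangular fuzzy sets over a distance range of 0 to 100 and a weighted average of representative output speeds (a zero-order Sugeno-style shortcut rather than full Mamdani defuzzification). All breakpoints and speed values are hypothetical.

```python
def tri(x, a, b, c):
    """Triangular membership: 1 at the peak b, falling linearly to 0 at a and c.
    With a == b (or b == c) the set becomes a left (or right) shoulder."""
    if x == b:
        return 1.0
    if x <= a or x >= c:
        return 0.0
    return (x - a) / (b - a) if x < b else (c - x) / (c - b)


# Hypothetical fuzzy sets over distance and a representative speed per output set.
RULES = [
    ((0, 0, 50), 1.0),      # If d is Near   then s is Slow
    ((0, 50, 100), 3.0),    # If d is Medium then s is Med
    ((50, 100, 100), 6.0),  # If d is Far    then s is Fast
]


def speed(distance):
    """Fire every rule to its degree and take the weighted average of outputs."""
    d = min(max(distance, 0.0), 100.0)            # clamp to the modelled range
    fired = [(tri(d, *abc), s) for abc, s in RULES]
    total = sum(mu for mu, _ in fired)
    return sum(mu * s for mu, s in fired) / total


for d in (0, 25, 50, 75, 100, 150):
    print(d, speed(d))  # speed rises smoothly from 1.0 (near) to 6.0 (far)
```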

Any questions? Thanks for your attention.
