Finding a Needle in Haystack: Facebook's Photo Storage

Finding a Needle in Haystack: Facebook’s Photo Storage
Doug Beaver, Sanjeev Kumar, Harry C. Li, Jason Sobel, Peter Vajgel
Presented by: Pallavi Kasula

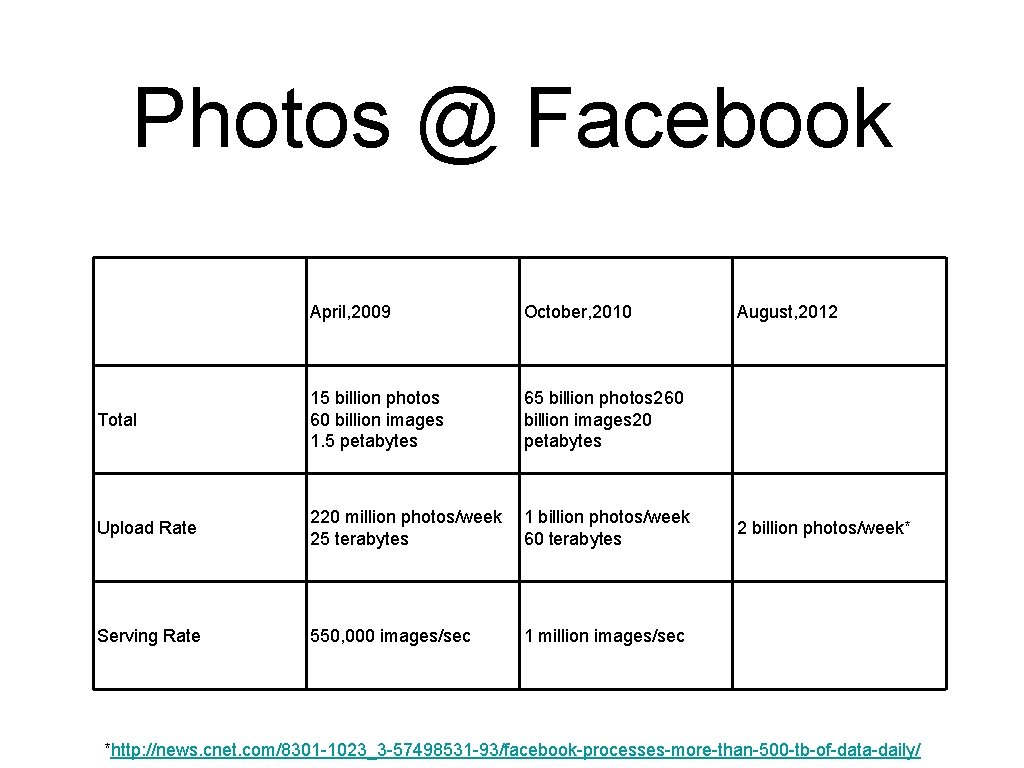

Photos @ Facebook

| | April 2009 | October 2010 |
|---|---|---|
| Total | 15 billion photos, 60 billion images, 1.5 petabytes | 65 billion photos, 260 billion images, 20 petabytes |
| Upload rate | 220 million photos/week, 25 terabytes | 1 billion photos/week, 60 terabytes |
| Serving rate | 550,000 images/sec | 1 million images/sec |

August 2012: 2 billion photos/week*

*Source: http://news.cnet.com/8301-1023_3-57498531-93/facebook-processes-more-than-500-tb-of-data-daily/

Goals
• High throughput and low latency
• Fault-tolerant
• Cost-effective
• Simple

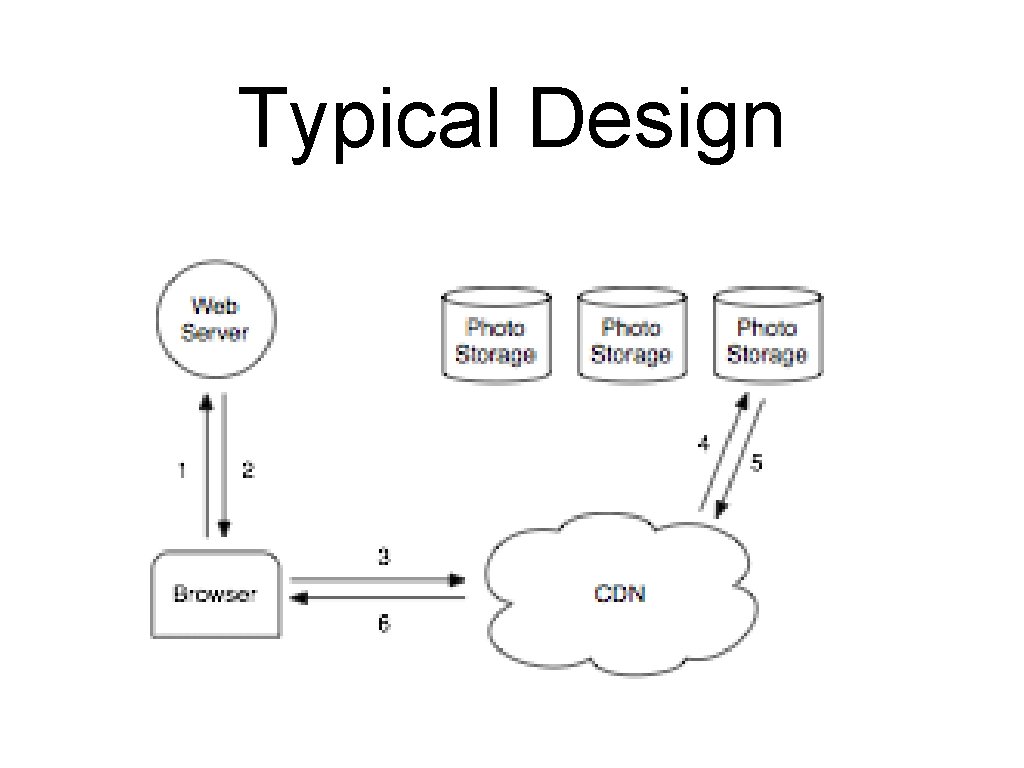

Typical Design

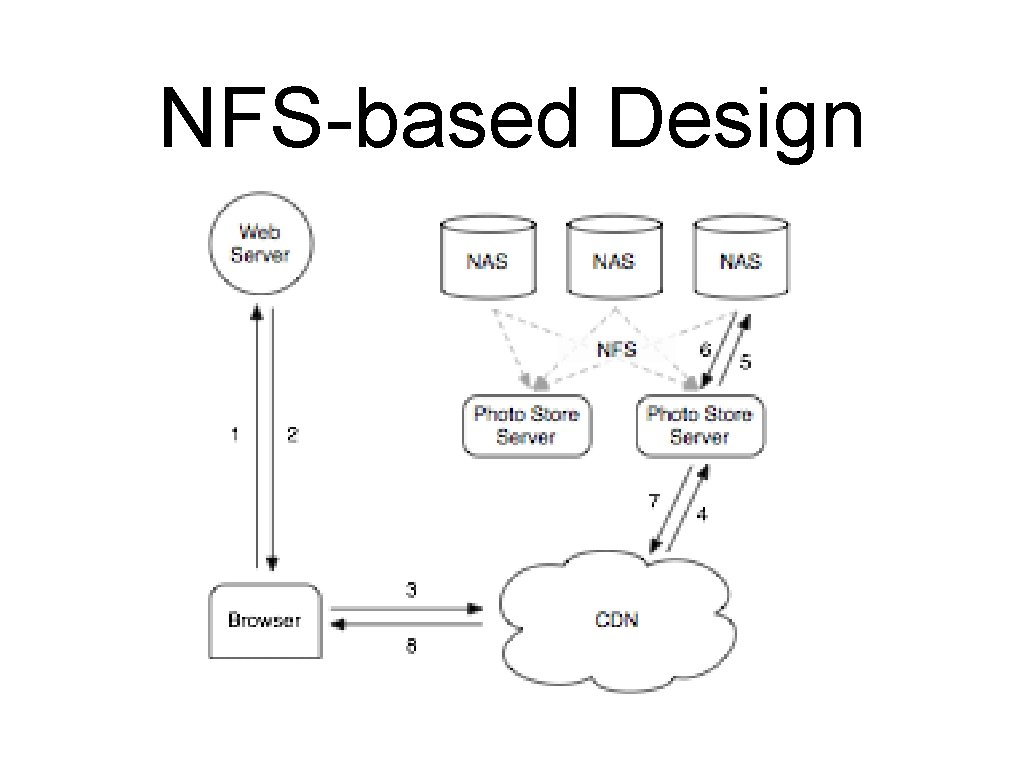

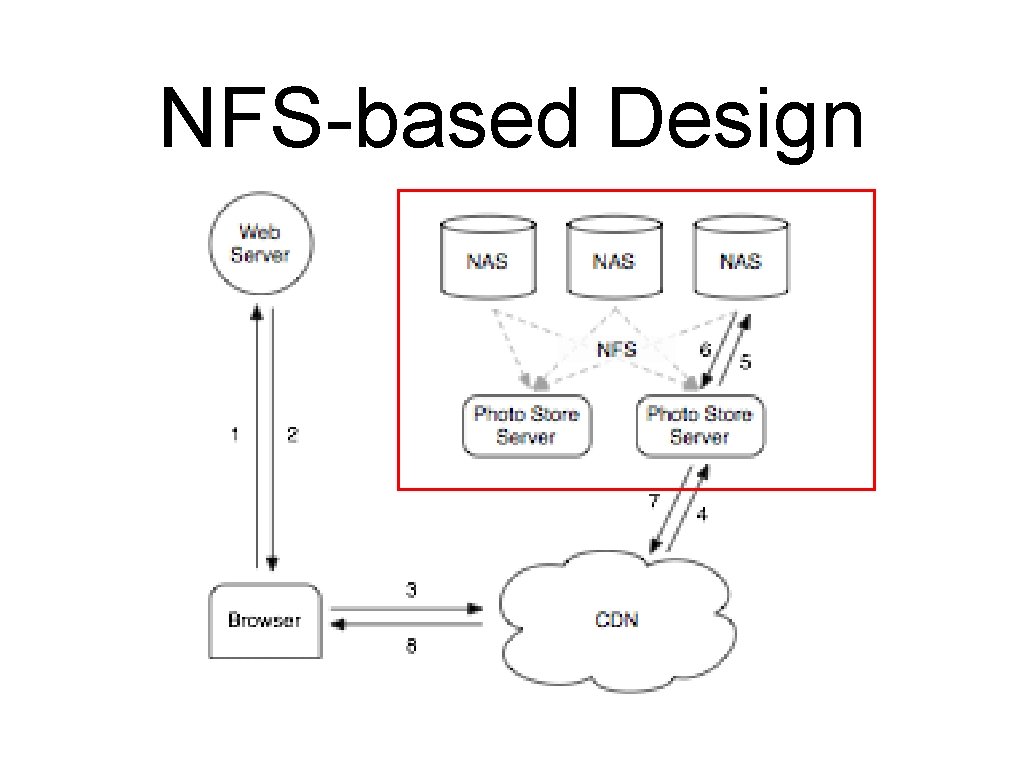

NFS-based Design

NFS-based Design
• Typical website
  • Small working set
  • Infrequent access to old content
  • ~99% CDN hit rate
• Facebook
  • Large working set
  • Frequent access to old content
  • ~80% CDN hit rate

NFS-based Design
• Metadata bottleneck
  • Each image stored as a file
  • Large metadata size severely limits the metadata hit ratio
• Image read performance
  • ~10 iops / image read (large directories, thousands of files)
  • 3 iops / image read (smaller directories, hundreds of files)
  • 2.5 iops / image read (file handle cache)

NFS-based Design

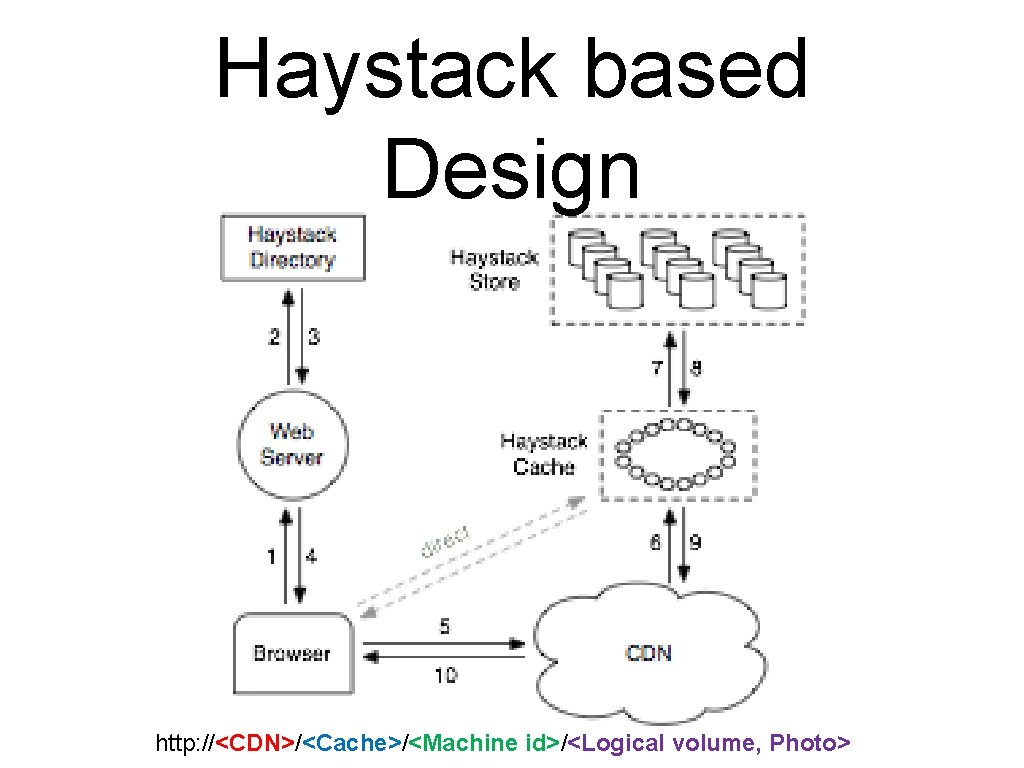

Haystack-based Design
http://<CDN>/<Cache>/<Machine id>/<Logical volume, Photo>
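The URL layout above can be split into its routing components with a few lines of Python. This is only a sketch: the class and function names, the example host names, and the use of a comma to separate the logical volume from the photo id are assumptions for illustration, not details confirmed by the slides.

```python
from typing import NamedTuple
from urllib.parse import urlsplit


class PhotoLocation(NamedTuple):
    """Hypothetical container for the parts of a Haystack-style photo URL."""
    cdn: str
    cache: str
    machine_id: str
    logical_volume: str
    photo: str


def parse_photo_url(url: str) -> PhotoLocation:
    """Split http://<CDN>/<Cache>/<Machine id>/<Logical volume, Photo>."""
    parts = urlsplit(url)
    # The path holds three segments: cache, machine id, and "volume,photo".
    cache, machine_id, last = parts.path.strip("/").split("/")
    # Assumption: a comma separates the logical volume from the photo id.
    logical_volume, photo = last.split(",", 1)
    return PhotoLocation(parts.netloc, cache, machine_id, logical_volume, photo)


# Example with made-up host and id values.
loc = parse_photo_url("http://cdn.example.com/cache7/store42/1234,98765")
print(loc)
```

Each layer only needs its own segment of the URL to decide where to forward the request, which is why the components are kept separate here.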

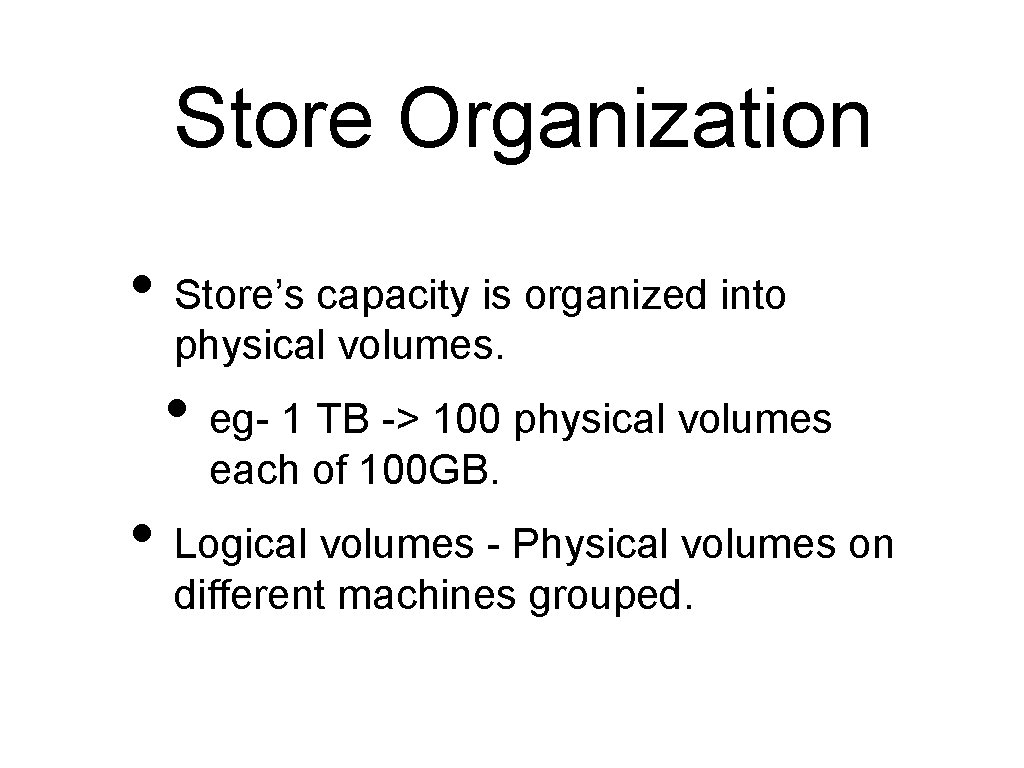

Store Organization
• A Store machine's capacity is organized into physical volumes.
• E.g., 10 TB -> 100 physical volumes of 100 GB each.
• Logical volumes: physical volumes on different machines grouped together.

Photo upload

Haystack Directory

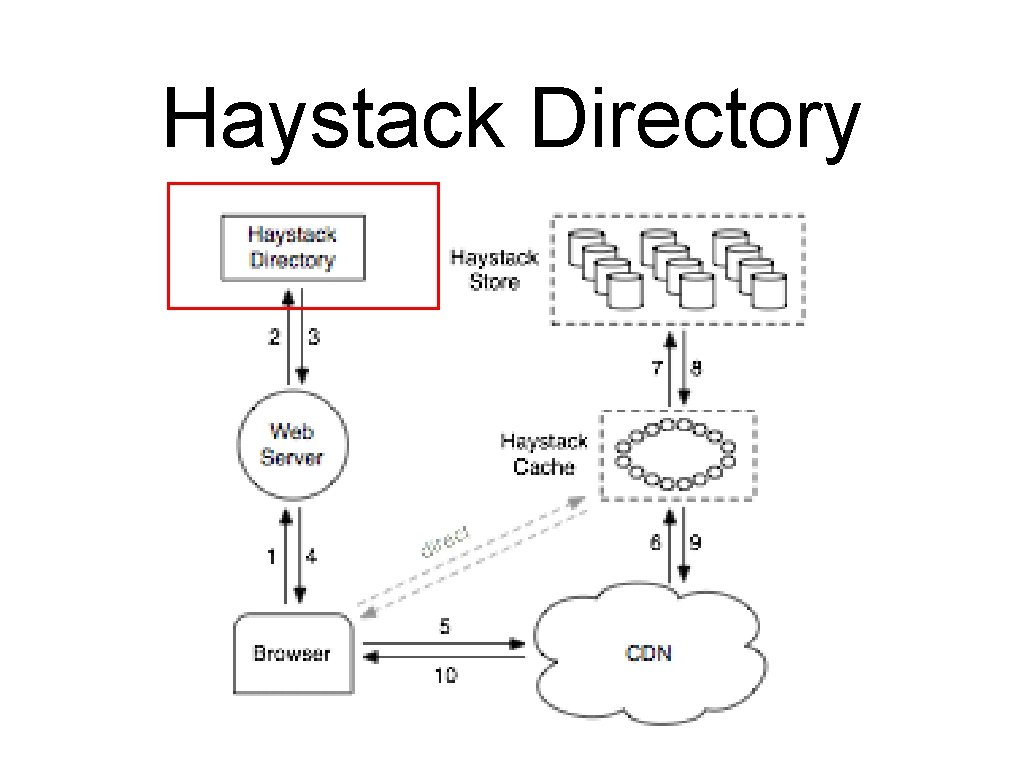

Haystack Directory
• Maps logical volumes to physical volumes
• Load balancing
  • Writes across logical volumes
  • Reads across physical volumes
• Determines whether a request is handled by the CDN or by the Cache
• Identifies and marks volumes read-only
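The Directory's responsibilities can be illustrated with a small in-memory model. This is a sketch under assumptions: the class names, the random selection policy, and the store names are hypothetical, and the real Directory is a replicated service rather than a single Python object.

```python
import random
from dataclasses import dataclass, field


@dataclass
class LogicalVolume:
    volume_id: int
    replicas: list[str]        # Store machines holding a physical volume
    read_only: bool = False


@dataclass
class Directory:
    volumes: dict[int, LogicalVolume] = field(default_factory=dict)

    def add_volume(self, volume_id: int, replicas: list[str]) -> None:
        self.volumes[volume_id] = LogicalVolume(volume_id, list(replicas))

    def mark_read_only(self, volume_id: int) -> None:
        self.volumes[volume_id].read_only = True

    def pick_for_write(self, rng: random.Random) -> LogicalVolume:
        """Balance writes across write-enabled logical volumes."""
        writable = [v for v in self.volumes.values() if not v.read_only]
        if not writable:
            raise RuntimeError("no write-enabled logical volume available")
        return rng.choice(writable)

    def pick_for_read(self, volume_id: int, rng: random.Random) -> str:
        """Balance reads across the physical replicas of one logical volume."""
        return rng.choice(self.volumes[volume_id].replicas)


directory = Directory()
directory.add_volume(1, ["store-a", "store-b"])
directory.add_volume(2, ["store-c", "store-d"])
directory.mark_read_only(1)          # e.g. the volume is full
rng = random.Random(0)
print(directory.pick_for_write(rng).volume_id, directory.pick_for_read(1, rng))
```

A read-only volume still serves reads from any of its replicas; it is only excluded from the pool of write targets.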

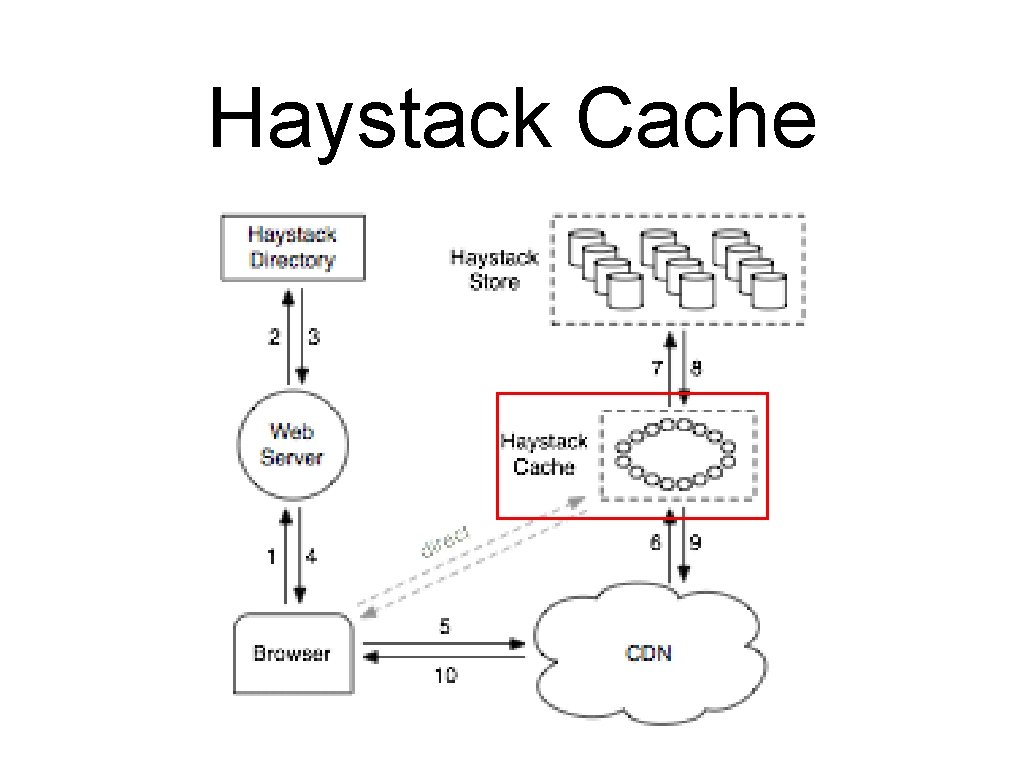

Haystack Cache

Haystack Cache
• Distributed hash table with photo id as key
• Receives HTTP requests from CDNs and browsers
• Caches a photo only if both of the following conditions are met:
  • The request comes directly from a browser, not the CDN
  • The photo is fetched from a write-enabled Store machine
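The two caching conditions above can be written as a short admission check. In this sketch a plain dictionary stands in for the distributed hash table, and the `PhotoCache` class and its `fetch` callback (returning the photo bytes plus a write-enabled flag) are hypothetical names chosen for illustration.

```python
from typing import Callable


class PhotoCache:
    """Single-process stand-in for the distributed hash table."""

    def __init__(self) -> None:
        self._items: dict[int, bytes] = {}

    def __contains__(self, photo_id: int) -> bool:
        return photo_id in self._items

    def get(self, photo_id: int, from_cdn: bool,
            fetch: Callable[[int], tuple[bytes, bool]]) -> bytes:
        if photo_id in self._items:
            return self._items[photo_id]
        # fetch() is assumed to return (data, store_is_write_enabled).
        data, write_enabled = fetch(photo_id)
        # Cache only browser requests served from a write-enabled Store.
        if not from_cdn and write_enabled:
            self._items[photo_id] = data
        return data


cache = PhotoCache()
cache.get(1, from_cdn=False, fetch=lambda pid: (b"new photo", True))
cache.get(2, from_cdn=True, fetch=lambda pid: (b"via cdn", True))
cache.get(3, from_cdn=False, fetch=lambda pid: (b"old photo", False))
print(1 in cache, 2 in cache, 3 in cache)
```

Only photo 1 is kept: a request that arrives through the CDN will be cached there anyway, and a photo on a read-only Store machine does not meet the second condition.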

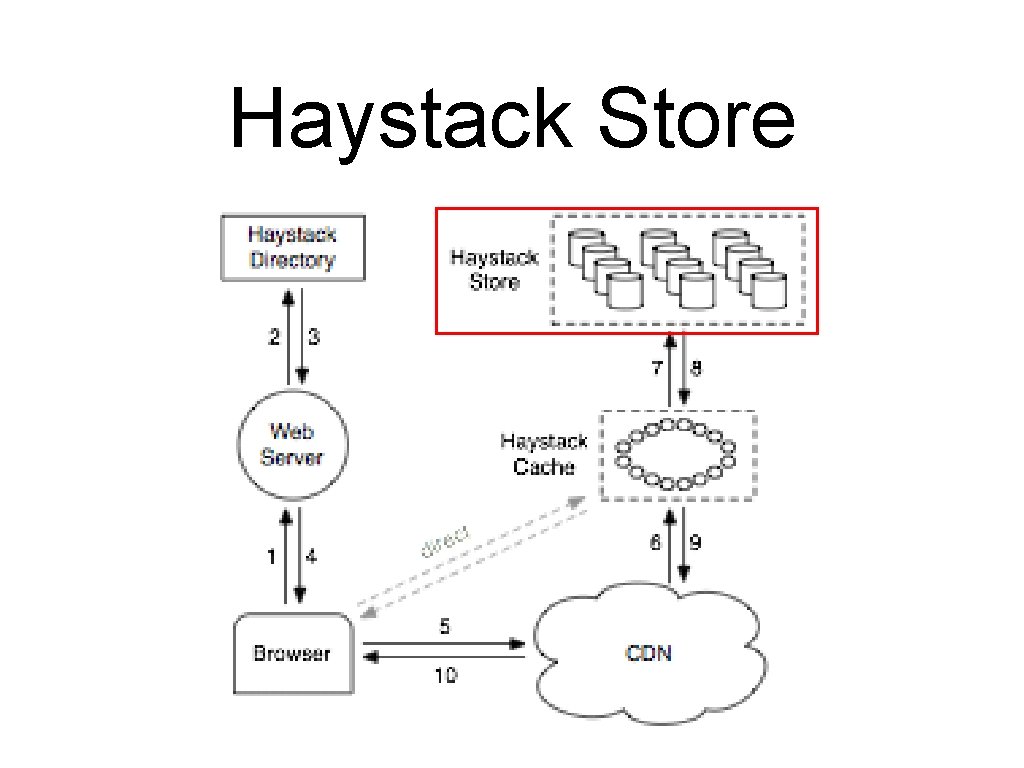

Haystack Store

Haystack Store
• Each Store machine manages multiple physical volumes
• Each physical volume is represented as one large file, saved as “/haystack_<logical volume id>”
• Keeps in-memory mappings of photo ids to filesystem metadata (file, offset, size, etc.)

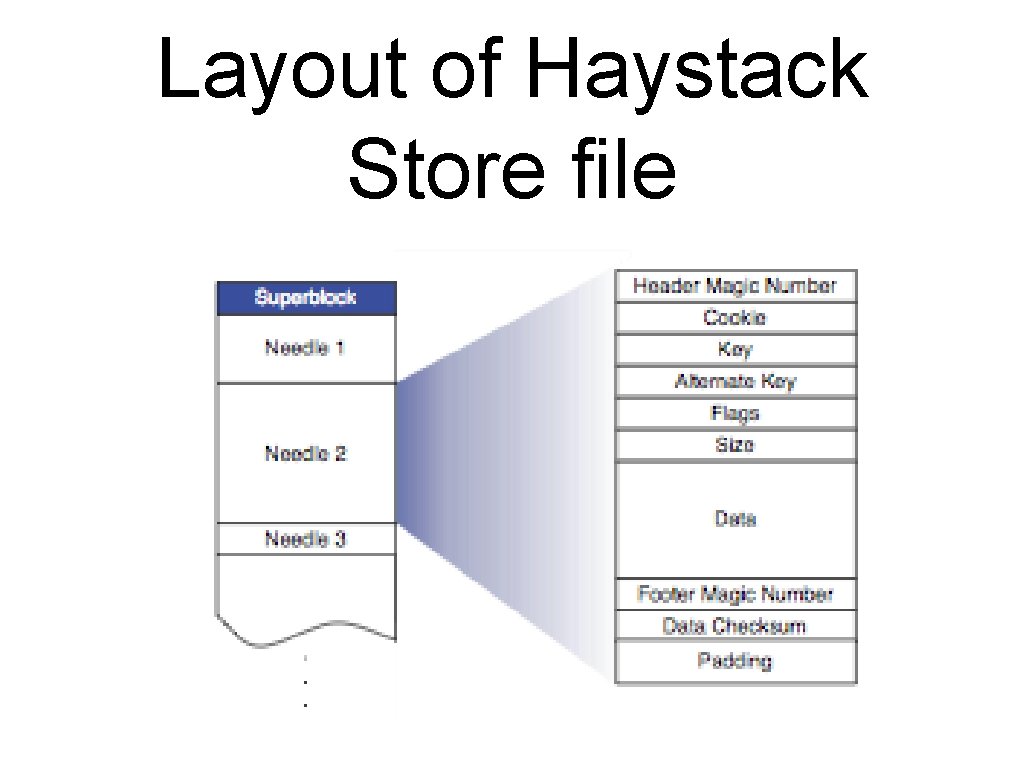

Layout of Haystack Store file

Haystack Store Read
• The Cache machine supplies the logical volume id, key, alternate key, and cookie to the Store machine
• The Store machine looks up the relevant metadata in its in-memory mappings
• Seeks to the appropriate offset in the volume file and reads the entire needle
• Verifies the cookie and the integrity of the data
• Returns the data to the Cache

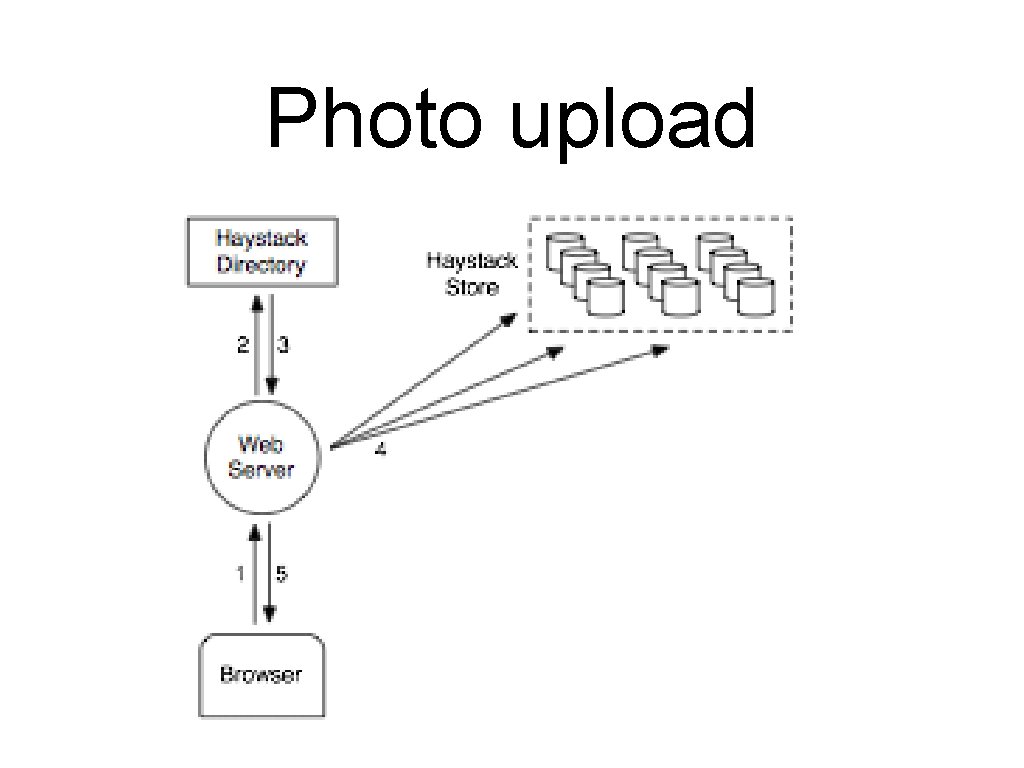

Haystack Store Write
• The web server provides the logical volume id, key, alternate key, cookie, and data to the Store machines
• Store machines synchronously append needle images to their physical volume files
• In-memory mappings are updated as needed
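The read and write paths can be sketched with an append-only buffer and an in-memory map. The needle layout used here (a fixed header of cookie, key, alternate key, flags, and size, followed by the data and a CRC32 footer) is a simplified assumption rather than the exact on-disk format, and `io.BytesIO` stands in for the large volume file.

```python
import io
import struct
import zlib

# Assumed, simplified needle layout (not the exact production format).
HEADER = struct.Struct("<QQIBI")   # cookie, key, alternate key, flags, data size
FOOTER = struct.Struct("<I")       # CRC32 checksum of the data


class Volume:
    def __init__(self) -> None:
        self.file = io.BytesIO()   # stands in for the physical volume file
        # (key, alternate key) -> (offset, data size)
        self.mapping: dict[tuple[int, int], tuple[int, int]] = {}

    def write(self, key: int, alt_key: int, cookie: int, data: bytes) -> int:
        """Append a needle at the end of the file and update the mapping."""
        offset = self.file.seek(0, io.SEEK_END)
        self.file.write(HEADER.pack(cookie, key, alt_key, 0, len(data)))
        self.file.write(data)
        self.file.write(FOOTER.pack(zlib.crc32(data)))
        self.mapping[(key, alt_key)] = (offset, len(data))
        return offset

    def read(self, key: int, alt_key: int, cookie: int) -> bytes:
        """Look up the offset in memory, then read the whole needle once."""
        offset, size = self.mapping[(key, alt_key)]
        self.file.seek(offset)
        needle = self.file.read(HEADER.size + size + FOOTER.size)
        stored_cookie = HEADER.unpack_from(needle, 0)[0]
        data = needle[HEADER.size:HEADER.size + size]
        (checksum,) = FOOTER.unpack_from(needle, HEADER.size + size)
        if stored_cookie != cookie:
            raise PermissionError("cookie mismatch")
        if zlib.crc32(data) != checksum:
            raise IOError("needle failed its integrity check")
        return data


volume = Volume()
volume.write(key=1, alt_key=0, cookie=99, data=b"abc")
volume.write(key=2, alt_key=0, cookie=7, data=b"hello")
print(volume.read(1, 0, 99), volume.read(2, 0, 7))
```

Because the offset and size come from memory, a read touches the volume file only once, which is the point of keeping the mapping out of the filesystem's own metadata.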

Haystack Store Delete
• The Store machine sets the delete flag in both the in-memory mapping and the volume file
• Space occupied by deleted needles is lost!
• How to reclaim it? Compaction!
• Important because 25% of photos get deleted in a given year.
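Deletion and compaction can be modelled on a list of needles. This is a sketch only: the `Needle` class and both function names are hypothetical, a Python list stands in for the volume file, and "keep the latest needle per key" is the assumed rule for handling duplicates.

```python
from dataclasses import dataclass


@dataclass
class Needle:
    key: int
    data: bytes
    deleted: bool = False


def delete(volume: list[Needle], key: int) -> None:
    """Flag every needle with this key as deleted; no space is freed."""
    for needle in volume:
        if needle.key == key:
            needle.deleted = True


def compact(volume: list[Needle]) -> list[Needle]:
    """Copy live needles to a new volume, skipping deleted and duplicate ones."""
    latest: dict[int, Needle] = {}
    for needle in volume:              # later appends replace earlier ones
        latest[needle.key] = needle
    return [n for n in volume if not n.deleted and latest[n.key] is n]


volume = [Needle(1, b"v1"), Needle(2, b"b"), Needle(1, b"v2"), Needle(3, b"c")]
delete(volume, 2)
compacted = compact(volume)
print([(n.key, n.data) for n in compacted])
```

The original list still holds four needles after `delete`; only the copy produced by `compact` is smaller, which mirrors how space is reclaimed by writing a new file rather than editing the old one in place.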

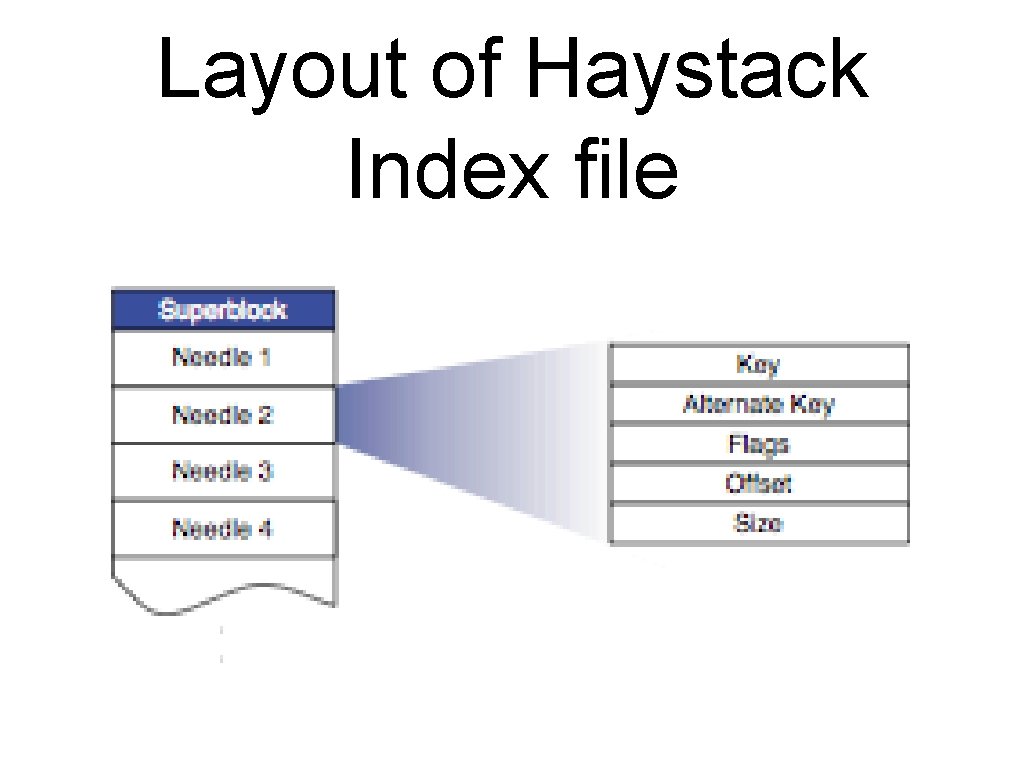

Layout of Haystack Index file

Haystack Index File
• The index file provides the minimal metadata required to locate a particular needle in the store
• Main purpose: allow quick loading of the needle metadata into memory without traversing the larger Haystack store file
• The index is usually less than 1% of the size of the store file
• Problem: the index is updated asynchronously, so checkpoints can be stale: some needles have no index record (orphans), and index records are not updated when a needle is deleted
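One way to cope with a stale index on restart is to load the index records first and then scan only the part of the store file that lies beyond the last indexed needle. The sketch below assumes records are simple `(key, offset, size)` tuples in append order; the function name and the record shape are hypothetical.

```python
Record = tuple[int, int, int]   # (key, offset, size), an assumed shape


def rebuild_mapping(
    index_records: list[Record],
    store_needles: list[Record],
) -> tuple[dict[int, tuple[int, int]], list[Record]]:
    """Build the in-memory mapping from the index, then add orphan needles."""
    mapping = {key: (offset, size) for key, offset, size in index_records}
    # Needles appended after the last indexed offset have no index record yet.
    last_indexed = max((offset for _, offset, _ in index_records), default=-1)
    orphans = [n for n in store_needles if n[1] > last_indexed]
    for key, offset, size in orphans:
        mapping[key] = (offset, size)
    return mapping, orphans


index = [(1, 0, 10), (2, 40, 20)]
store = [(1, 0, 10), (2, 40, 20), (3, 90, 5)]
mapping, orphans = rebuild_mapping(index, store)
print(mapping, orphans)
```

Stale delete information can be handled lazily in a similar spirit: the delete flag is checked when the full needle is read, and the in-memory mapping is corrected at that point.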

File System
• Uses XFS, an extent-based file system
• Advantages:
  • Block maps for several contiguous large files are small enough to be stored in main memory
  • Efficient file preallocation, which mitigates fragmentation

Failure Recovery
• Pitchfork: a background task that periodically checks the health of each Store machine.
• If a health check fails, it marks all logical volumes on that Store machine as read-only.
• Failures are then addressed manually, with operations such as bulk sync.
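The health-check step can be sketched as a function that turns per-machine check results into a set of logical volumes to mark read-only. The function name, the dictionary shapes, and the choice to treat a missing result as a failure are assumptions for illustration; the actual checks are not shown.

```python
def volumes_to_mark_read_only(
    store_volumes: dict[str, list[int]],
    health: dict[str, bool],
) -> set[int]:
    """Return the logical volumes hosted on any Store machine that failed."""
    read_only: set[int] = set()
    for store, volume_ids in store_volumes.items():
        # Assumption: a machine with no reported result counts as unhealthy.
        if not health.get(store, False):
            read_only.update(volume_ids)
    return read_only


store_volumes = {"store-a": [1, 2], "store-b": [2, 3], "store-c": [4]}
health = {"store-a": True, "store-b": False}
print(sorted(volumes_to_mark_read_only(store_volumes, health)))
```

Logical volume 2 is marked even though one of its replicas is healthy, because a write has to succeed on every physical volume in the group.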

Optimizations
• Compaction
  • Removes deleted and duplicate needles
• Saving more memory
  • Delete flag replaced with offset 0
  • Cookie value not stored
• Batch upload

Evaluation
• Characterize photo requests seen by Facebook
• Effectiveness of Directory and Cache
• Store performance

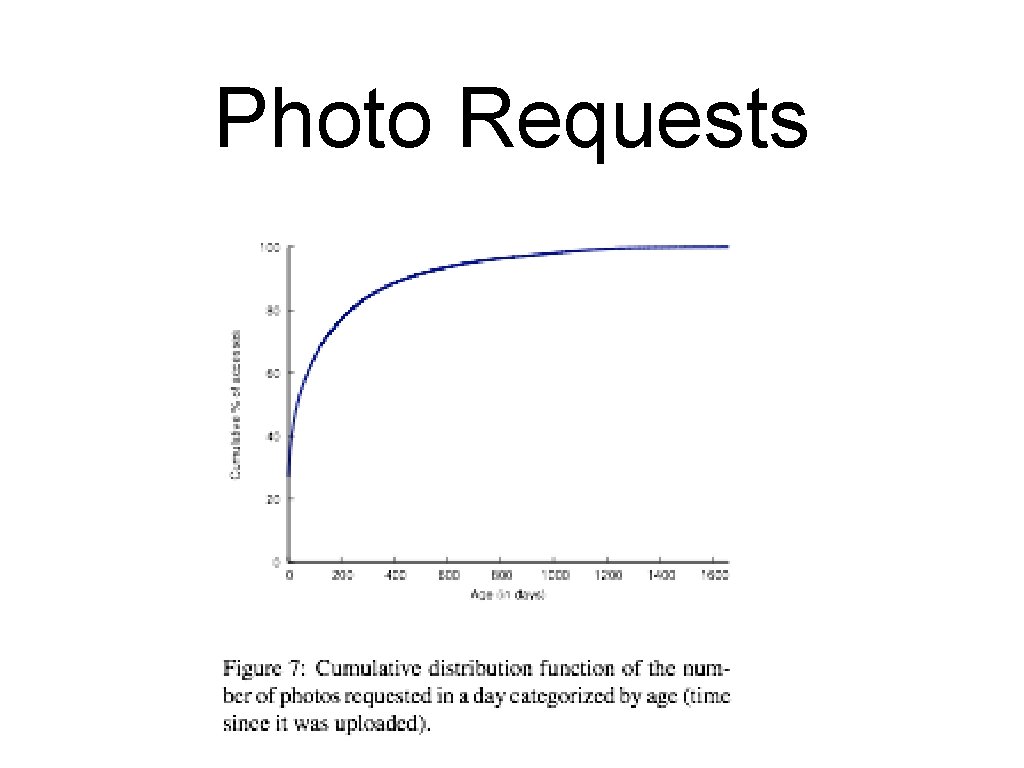

Photo Requests

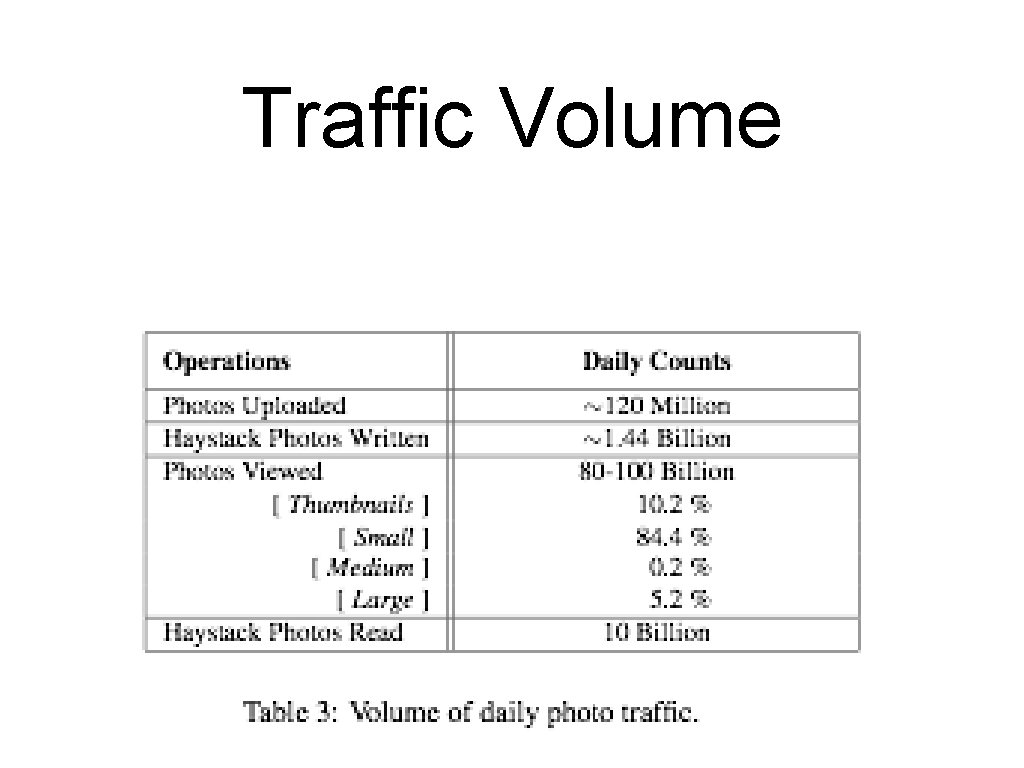

Traffic Volume

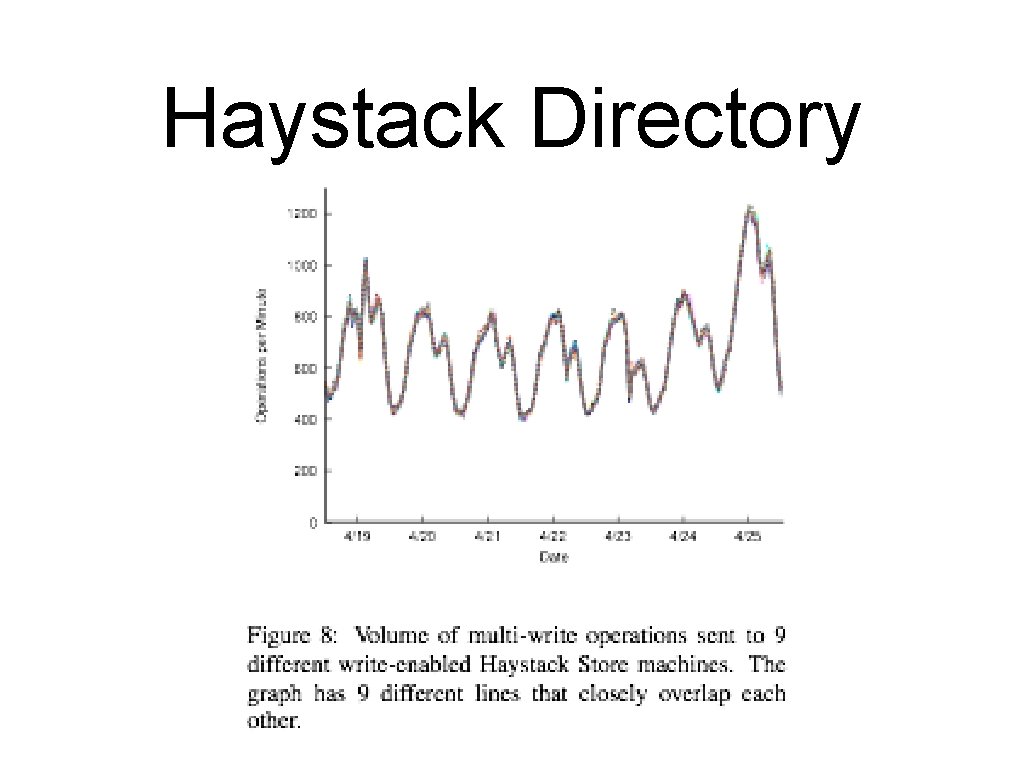

Haystack Directory

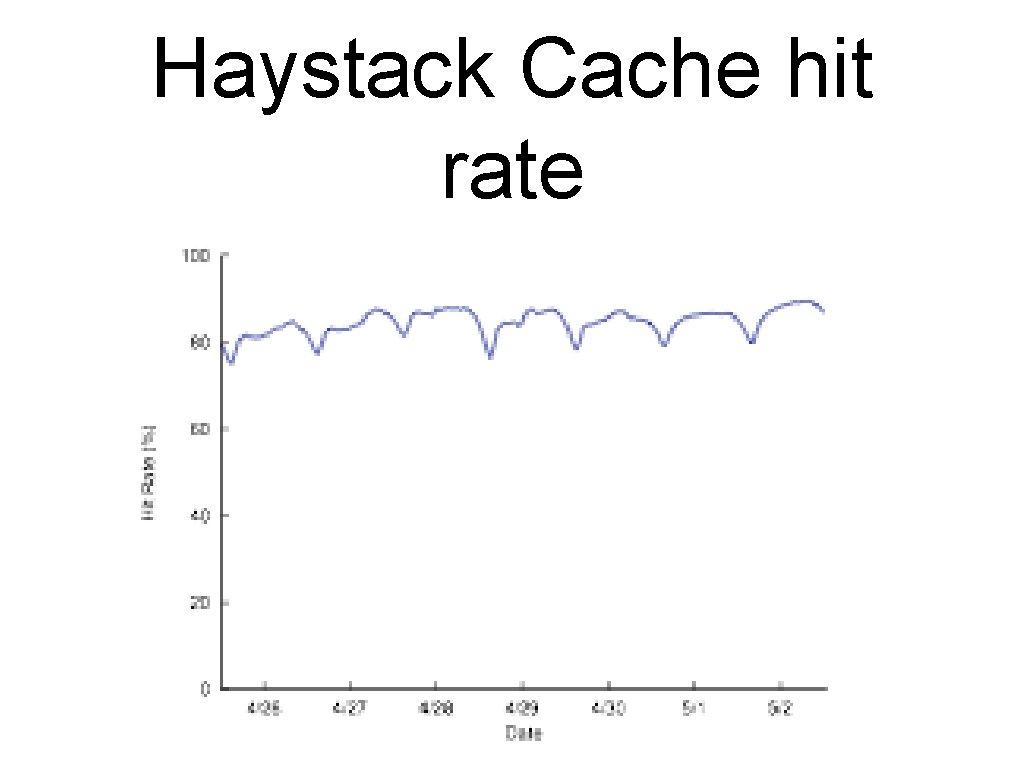

Haystack Cache hit rate

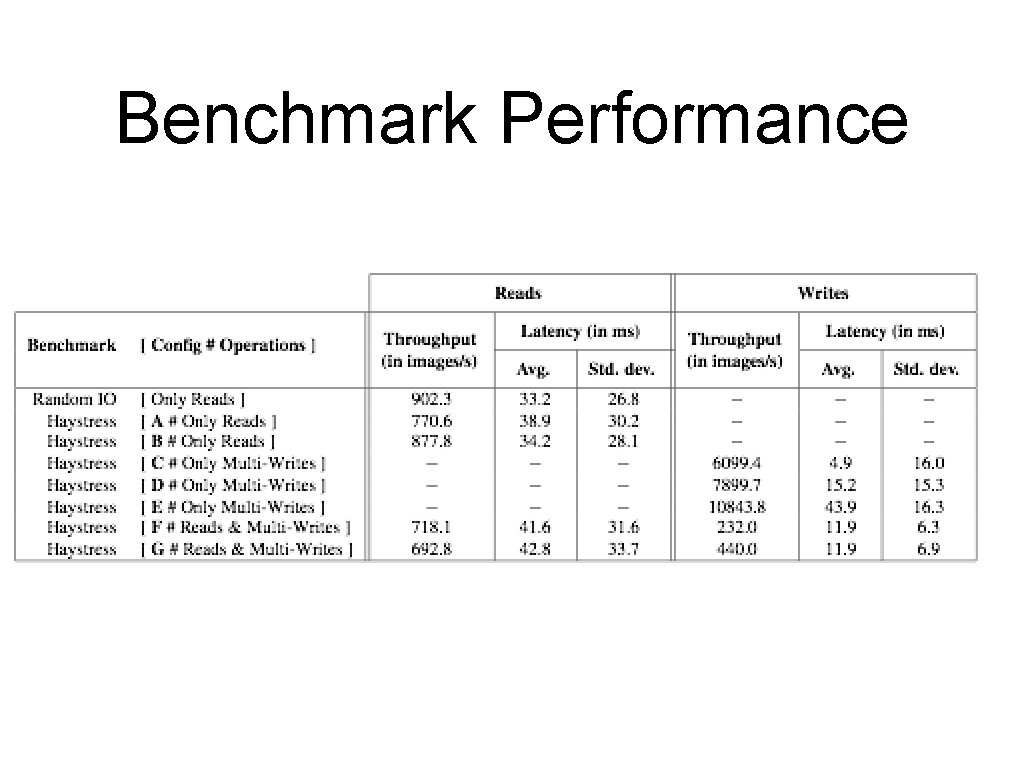

Benchmark Performance

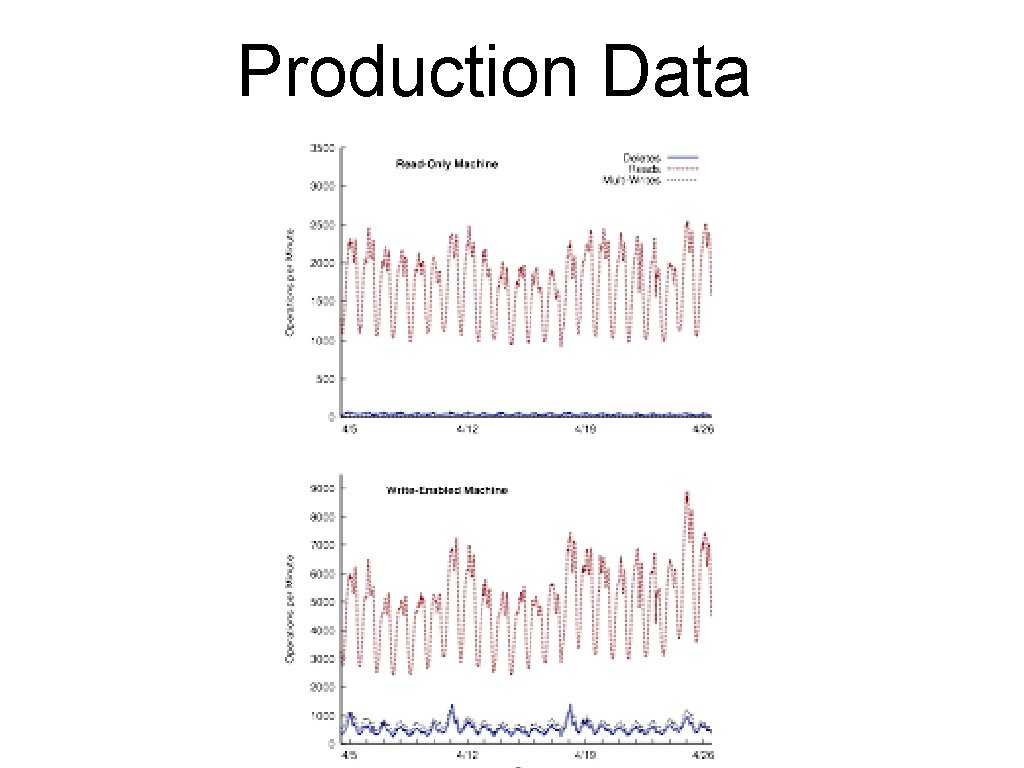

Production Data

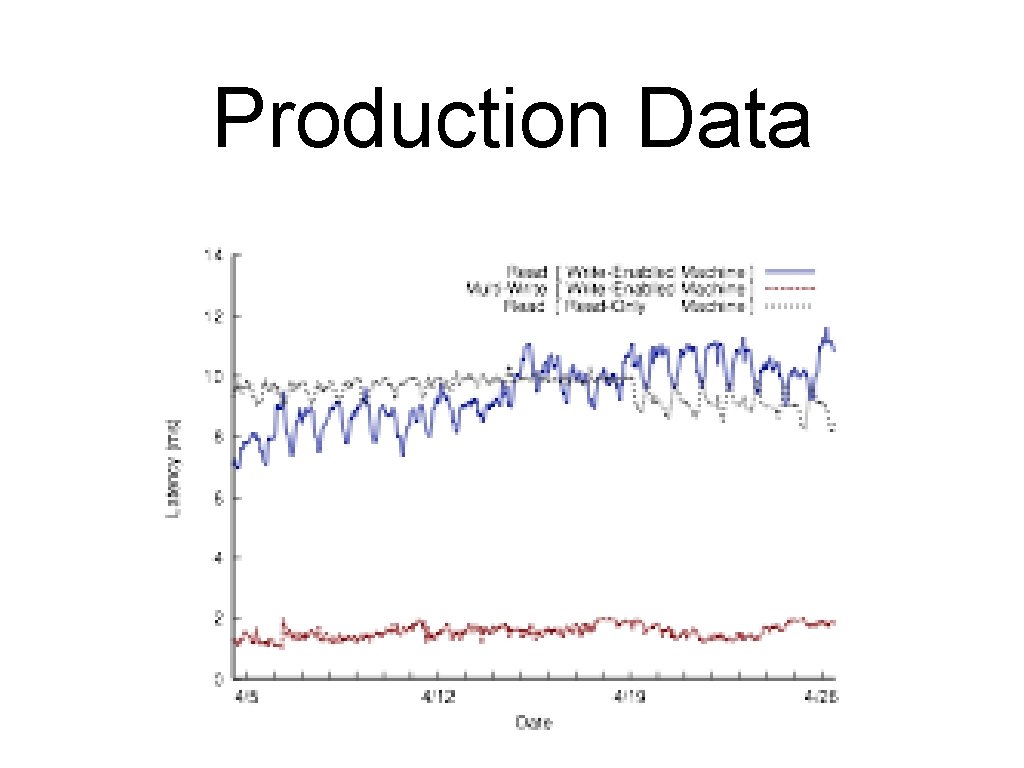

Production Data

Conclusion
• Haystack is a simple and effective storage system
• Optimized for random reads
• Cheap commodity storage
• Fault-tolerant
• Incrementally scalable

Q&A • Thanks
