Finding a needle in Haystack: Facebook's photo storage
By: Neha Allangh, Seattle University

Contents
• Problem Description
• Typical Design description
• Need for new design (HAYSTACK)
• Haystack’s goal
• Haystack’s Design
• Optimization techniques
• Results
• Evaluation

Problem Description
• Facebook stores over 260 billion images, i.e. about 20 PB of data
• Users upload 1 billion new photos each week, i.e. about 60 TB of data
• Facebook serves over 1 million images per second at peak
• Two types of workload for image serving:
1. Profile pictures and recently updated pictures
2. Photo albums and older photos
• How can this much data be handled?

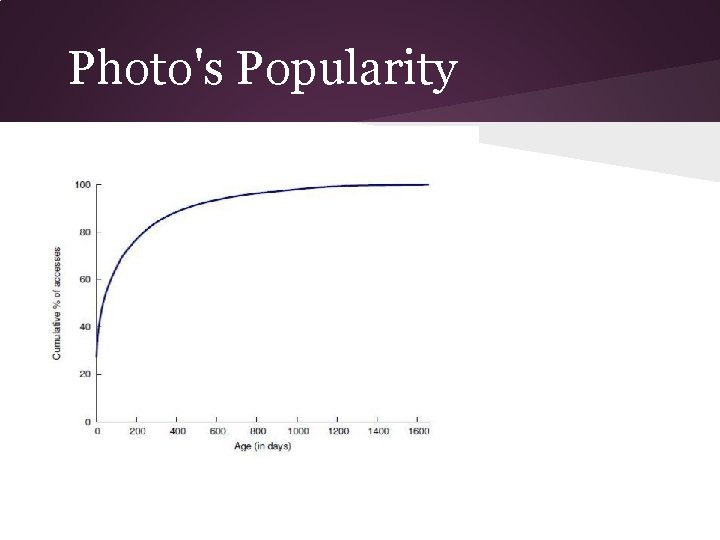

Photo's Popularity

Requirements
• High throughput and low latency
• Fault tolerance
• Cost effective
• Simple design

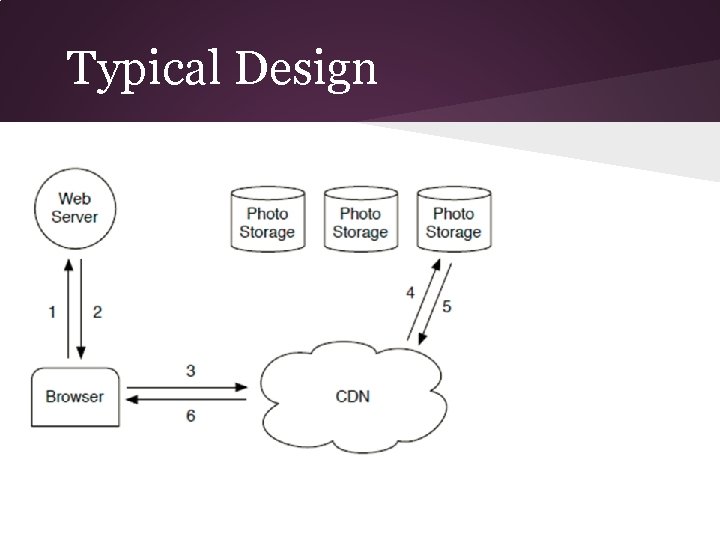

Typical Design

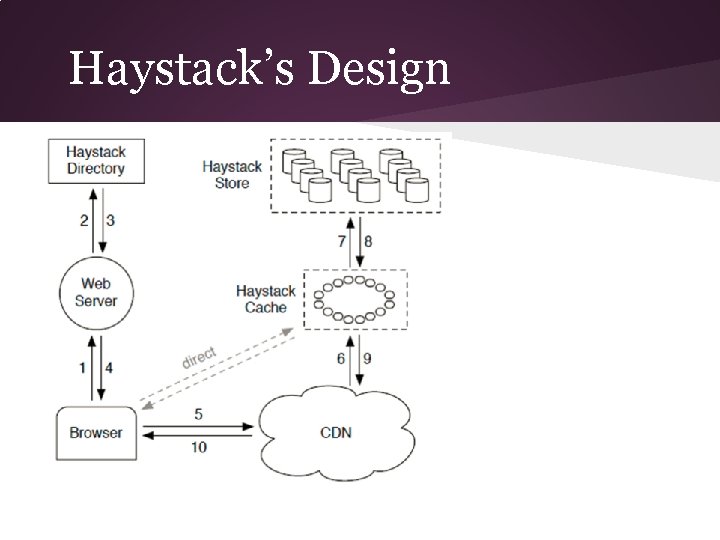

1. Browser sends an HTTP request to the web server
2. Web server is responsible for generating the markup for the browser to render
3. For each image there is a URL directing the browser to a location from which to download the data; for popular sites this URL often points to a CDN (Content Delivery Network)
a. If the CDN has the data -> respond immediately
b. Else it examines the URL -> gets the data from the photo storage system -> updates its cached data
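Steps 3a and 3b above can be sketched as a read-through cache. This is only an illustration: the function name `serve_from_cdn`, the dictionary used as the CDN cache, and the `fetch_from_storage` callback are all hypothetical stand-ins, not part of any real CDN API.

```python
from typing import Callable, Dict

def serve_from_cdn(url: str,
                   cdn_cache: Dict[str, bytes],
                   fetch_from_storage: Callable[[str], bytes]) -> bytes:
    """Return the image bytes for url, filling the CDN cache on a miss."""
    if url in cdn_cache:              # step 3a: the CDN has the data
        return cdn_cache[url]
    data = fetch_from_storage(url)    # step 3b: go to the photo storage system
    cdn_cache[url] = data             # update the cached data for later requests
    return data

# Usage: the second request for the same URL never reaches storage.
storage_calls = []

def fake_storage(url: str) -> bytes:  # hypothetical backing store
    storage_calls.append(url)
    return b"jpeg-bytes"

cache: Dict[str, bytes] = {}
first = serve_from_cdn("http://cdn.example/p/1.jpg", cache, fake_storage)
second = serve_from_cdn("http://cdn.example/p/1.jpg", cache, fake_storage)
```

Only cache misses cost a trip to storage, which is why the long tail of rarely requested photos matters so much in the slides that follow.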

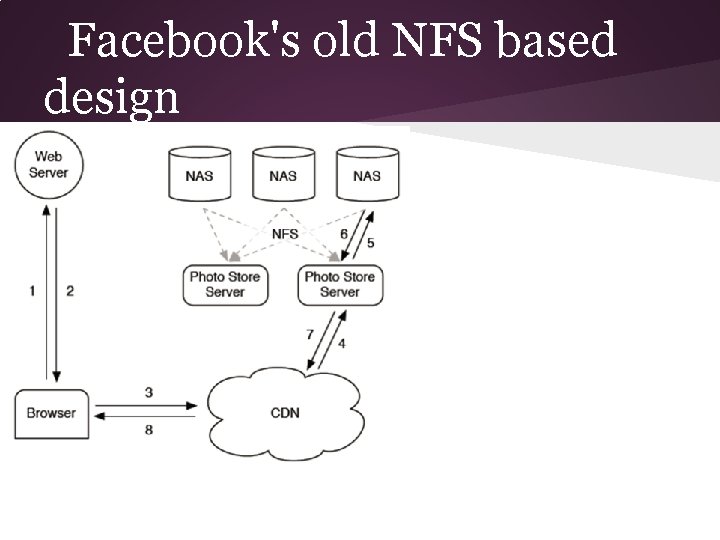

Facebook's old NFS based design

NFS Design description
• Each photo is stored in its own file on a set of commercial NAS appliances
• Photo Store servers mount all the volumes exported by the NAS appliances over NFS
• Photo Store servers process HTTP requests for images:
a. Extract the volume and full path to the file from the URL of the image
b. Read the data over NFS
c. Return the result to the CDN

Problems with NFS Design
• A large number of disk operations is needed to fetch less popular/older photos
• Up to 10 disk operations needed to fetch a single photo
• Several I/O operations may be needed just to find the correct inode
• Too much reliance on CDNs, which is an expensive approach
• Key problem is DISK OPERATIONS

Solution
1. Reduce the directory sizes to hundreds of images per directory
2. Reduction from 10 operations per image to 3 operations per image
a. Read the directory metadata into memory
b. Load the inode into memory
c. Read the file contents
3. Photo Store servers explicitly cache the file handles returned by the NAS appliances

Moving towards Haystack
• The existing systems lack the right RAM-to-disk ratio, i.e. they do not have enough main memory to hold all of the file system's metadata
• Each photo corresponds to one file, and each file requires at least one inode, which is on the order of 100 bytes long; some inodes, such as xfs_inode_t, are 536 bytes long
• Is keeping all of these heavy inodes in main memory feasible?
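A rough back-of-the-envelope check of the feasibility question, as a sketch only. It assumes one 536-byte xfs_inode_t per stored image, uses the 260 billion image count from the problem description, and counts in decimal terabytes; the variable names are illustrative.

```python
PHOTOS = 260 * 10**9        # images stored, from the problem description
XFS_INODE_BYTES = 536       # size of xfs_inode_t quoted above

# Assumption: exactly one in-memory inode per stored image.
inode_memory_bytes = PHOTOS * XFS_INODE_BYTES
inode_memory_tb = inode_memory_bytes / 10**12   # decimal terabytes

print(f"{inode_memory_tb:.1f} TB of RAM just for inodes")  # prints 139.4 TB ...
```

Under these assumptions the inodes alone would need on the order of a hundred terabytes of main memory, which is the motivation for shrinking per-photo metadata.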

Facebook decided to develop its own storage system: HAYSTACK

Haystack Goals
• High throughput and low latency
->Photos should be served quickly to facilitate a good user experience
• Fault tolerant
->Users should not experience errors despite inevitable server crashes and hard drive failures

• Cost effective
->Haystack is less expensive than the old NFS design
->Cost per terabyte of usable storage
->Read rate normalized for each terabyte of storage
• Simple
->Simple design, easy to develop, less time to build

Haystack Design Overview
• Use CDN to serve popular images
• Store multiple photos in a single file, arranged one after another
• Three core components:
->Haystack Directory
->Haystack Cache
->Haystack Store

Haystack’s Design

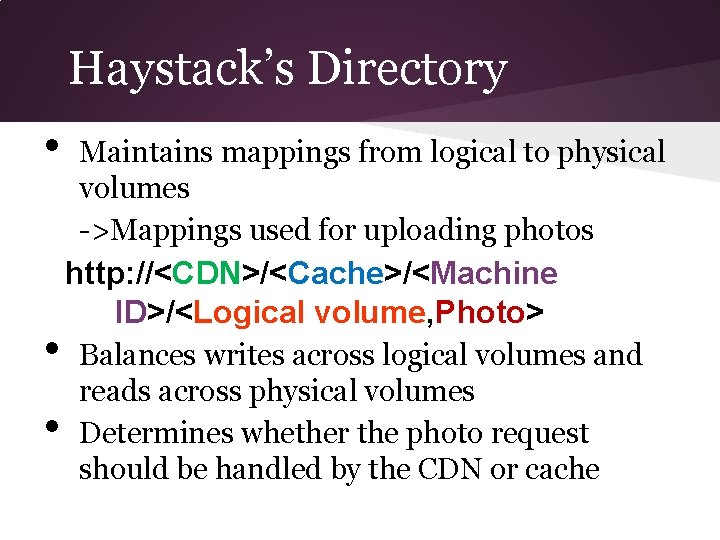

Haystack’s Directory
• Maintains mappings from logical to physical volumes
->Mappings used for uploading photos
->http://<CDN>/<Cache>/<Machine ID>/<Logical volume, Photo>
• Balances writes across logical volumes and reads across physical volumes
• Determines whether a photo request should be handled by the CDN or by the Cache

• Identifies the logical volumes that are read-only
->A machine's logical volumes become read-only when they reach their storage capacity
->or because of operational reasons
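The URL shape shown for the Directory can be illustrated with a small build/parse pair. This is a sketch under assumptions: the `PhotoLocation` type, the integer ids, and the comma separating logical volume from photo id are guesses made for illustration, not a confirmed wire format.

```python
from typing import NamedTuple

class PhotoLocation(NamedTuple):
    cdn: str
    cache: str
    machine_id: str
    logical_volume: int
    photo: int

def build_url(loc: PhotoLocation) -> str:
    """Assemble http://<CDN>/<Cache>/<Machine ID>/<Logical volume, Photo>."""
    return (f"http://{loc.cdn}/{loc.cache}/{loc.machine_id}/"
            f"{loc.logical_volume},{loc.photo}")

def parse_url(url: str) -> PhotoLocation:
    """Split a URL built by build_url back into its four parts."""
    prefix = "http://"
    if not url.startswith(prefix):
        raise ValueError("unexpected scheme")
    cdn, cache, machine_id, last = url[len(prefix):].split("/")
    volume, photo = last.split(",")   # assumed separator
    return PhotoLocation(cdn, cache, machine_id, int(volume), int(photo))

# Usage with made-up host names and ids.
loc = PhotoLocation("cdn.example.com", "cache1", "m17", 12, 3456)
url = build_url(loc)
```

Because every hop is encoded in the URL, each layer can tell where to forward a request it cannot serve itself.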

Haystack’s Cache
• It functions as an internal CDN
->Receives HTTP requests for photos from the CDN and also directly from users' browsers
• Caches a photo only when both conditions hold:
->The request comes directly from the user and not the CDN
->The photo is fetched from a write-enabled Store machine
a. The Cache shelters write-enabled Store machines from reads, as photos are most heavily accessed soon after they are uploaded
b. Haystack performs better when doing either reads or writes, but not both at once
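The caching rule above fits in a single predicate. The sketch below reads the two listed conditions as both being required; the function and parameter names are illustrative only.

```python
def should_cache(request_from_cdn: bool, store_is_write_enabled: bool) -> bool:
    """Cache only photos requested directly by a user AND served by a
    write-enabled Store machine."""
    return (not request_from_cdn) and store_is_write_enabled

# Usage: only the direct request to a write-enabled machine is cached.
decisions = {
    (from_cdn, writable): should_cache(from_cdn, writable)
    for from_cdn in (False, True)
    for writable in (False, True)
}
```

A request that arrives via the CDN is skipped since the CDN will hold the photo itself once it is served.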

Haystack’s Store
• Each Store machine manages multiple physical volumes (each holds millions of photos)
• Each physical volume is assigned to a logical one (redundancy for fault tolerance)
• Each physical volume is a large file (100 GB) that contains many photos
• Basic operations:
->Read
->Write
->Delete

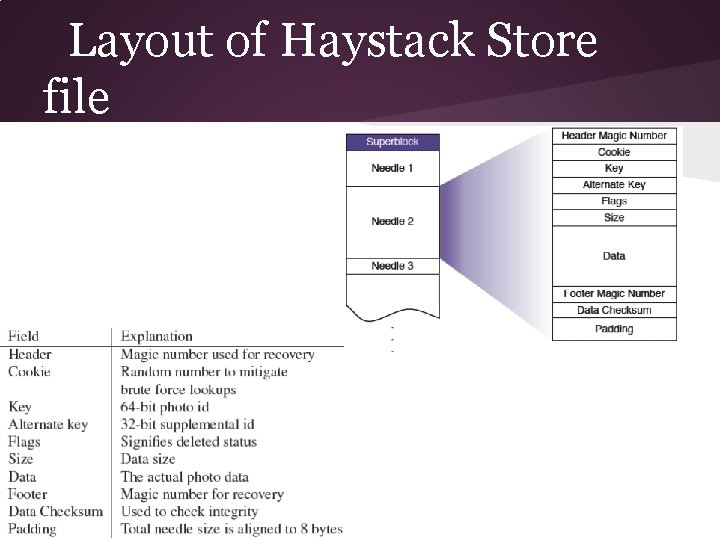

Physical Volume Layout
• A Store machine represents a physical volume as a large file consisting of a superblock followed by a sequence of needles
->Think of a physical volume as a very large file (100 GB) saved as ‘/haystack <logical volume id>’
• Each needle represents a photo stored in Haystack
->Uniquely identified by <Offset, Key, Alternate Key, Cookie>
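To make the idea of needles stored back to back more concrete, here is a minimal sketch of packing and unpacking one needle. The header layout (field order and widths), the flags byte, and the CRC32 checksum are simplifying assumptions for illustration and are not the actual on-disk format; `pack_needle` and `unpack_needle` are hypothetical names.

```python
import struct
import zlib

# Hypothetical simplified header: cookie (8 bytes), key (8), alternate key (4),
# flags (1), data size (4); big-endian with no padding.
HEADER = struct.Struct(">QQIBI")

def pack_needle(cookie: int, key: int, alt_key: int, data: bytes,
                deleted: bool = False) -> bytes:
    """Serialize one needle as header + data + CRC32 of the data."""
    header = HEADER.pack(cookie, key, alt_key, 1 if deleted else 0, len(data))
    return header + data + struct.pack(">I", zlib.crc32(data))

def unpack_needle(buf: bytes, offset: int = 0):
    """Read the needle starting at offset and verify its checksum."""
    cookie, key, alt_key, flags, size = HEADER.unpack_from(buf, offset)
    start = offset + HEADER.size
    data = buf[start:start + size]
    (checksum,) = struct.unpack_from(">I", buf, start + size)
    if zlib.crc32(data) != checksum:
        raise ValueError("needle data failed its checksum")
    return cookie, key, alt_key, bool(flags), data

# Usage: two needles laid out one after another, as in a volume file.
first = pack_needle(7, 1, 2, b"abc")
volume = first + pack_needle(9, 1, 3, b"defg")
second_needle = unpack_needle(volume, len(first))
```

Knowing the offset is enough to reach a needle directly, which is why the offset is kept in memory alongside the keys.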

Layout of Haystack Store file

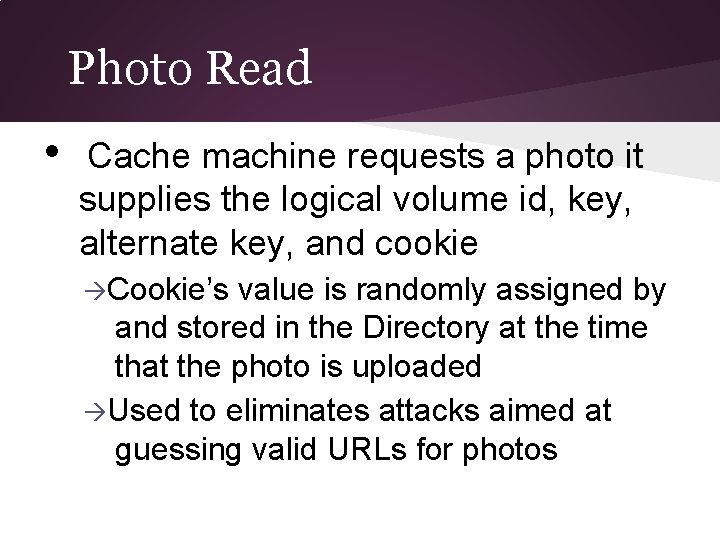

Photo Read
• When a Cache machine requests a photo, it supplies the logical volume id, key, alternate key, and cookie
• The cookie's value is randomly assigned by, and stored in, the Directory at the time the photo is uploaded
->Used to eliminate attacks aimed at guessing valid URLs for photos

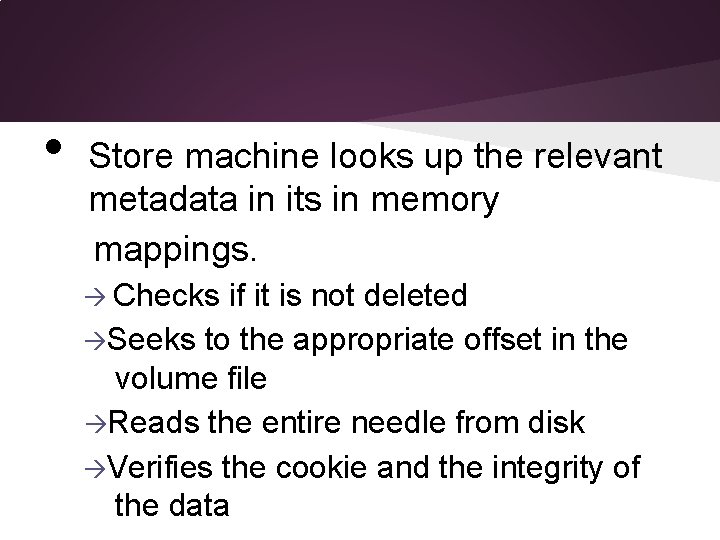

• Store machine looks up the relevant metadata in its in-memory mappings
->Checks that the photo is not deleted
->Seeks to the appropriate offset in the volume file
->Reads the entire needle from disk
->Verifies the cookie and the integrity of the data
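The read steps above can be sketched as one function. Everything concrete here is an assumption made for the example: the `NeedleMeta` record, a needle stored as an 8-byte cookie followed by the data and a 4-byte CRC32, and the exception types chosen for each failure.

```python
import io
import struct
import zlib
from typing import BinaryIO, Dict, NamedTuple, Tuple

class NeedleMeta(NamedTuple):
    offset: int     # where the needle starts in the volume file
    size: int       # length of the photo data in bytes
    deleted: bool

def read_photo(volume: BinaryIO,
               mapping: Dict[Tuple[int, int], NeedleMeta],
               key: int, alt_key: int, cookie: int) -> bytes:
    meta = mapping.get((key, alt_key))          # in-memory lookup
    if meta is None or meta.deleted:            # check it is not deleted
        raise KeyError("photo not found or deleted")
    volume.seek(meta.offset)                    # seek to the needle
    needle = volume.read(8 + meta.size + 4)     # read the entire needle
    (stored_cookie,) = struct.unpack_from(">Q", needle, 0)
    data = needle[8:8 + meta.size]
    (checksum,) = struct.unpack_from(">I", needle, 8 + meta.size)
    if stored_cookie != cookie:                 # verify the cookie
        raise PermissionError("cookie mismatch")
    if zlib.crc32(data) != checksum:            # verify data integrity
        raise ValueError("checksum mismatch")
    return data

# Usage: an in-memory "volume file" holding a single needle.
photo = b"jpeg-bytes"
volume = io.BytesIO(struct.pack(">Q", 1234) + photo
                    + struct.pack(">I", zlib.crc32(photo)))
mapping = {(42, 1): NeedleMeta(offset=0, size=len(photo), deleted=False)}
result = read_photo(volume, mapping, key=42, alt_key=1, cookie=1234)
```

The only disk access is the single seek and read; the lookup and the deleted check happen entirely in memory.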

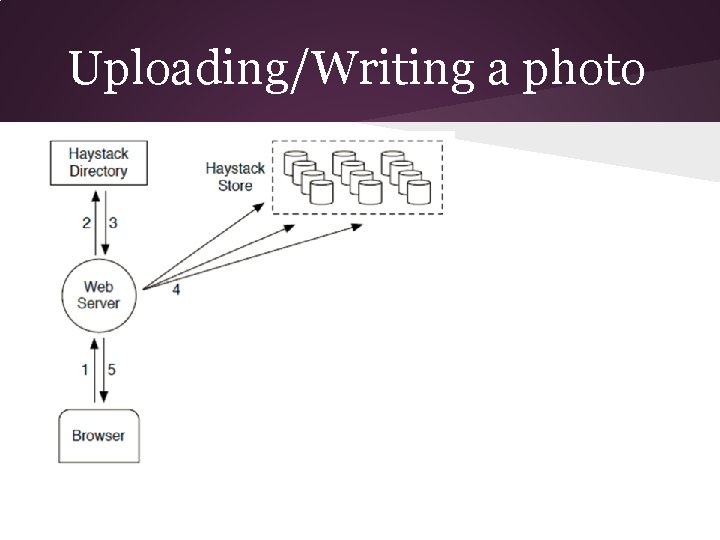

Photo Write
• When uploading a photo into Haystack, web servers provide the logical volume id, key, alternate key, cookie, and data to the Store machines
• Each machine synchronously appends needle images to its physical volume files and updates its in-memory mappings as needed
• Volumes are append-only, so photos can only be modified by adding an updated needle with the same key and alternate key

• If the new needle is written to a different logical volume, the Directory updates its application metadata and future requests will never fetch the older version
• If it is written to the same logical volume, the needle is appended to the same physical volume
• Duplicates are distinguished by their offsets: the needle at the highest offset is the latest version
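The rule that the highest offset is the latest version falls out naturally from an append-only structure. The toy `Volume` class below is a sketch, not the real Store: a Python list stands in for the volume file, and the list index stands in for the byte offset.

```python
from typing import Dict, List, Tuple

class Volume:
    """Toy append-only volume: needles are only ever added at the end."""

    def __init__(self) -> None:
        self.needles: List[Tuple[int, int, bytes]] = []   # (key, alt_key, data)
        self.mapping: Dict[Tuple[int, int], int] = {}     # (key, alt_key) -> offset

    def write(self, key: int, alt_key: int, data: bytes) -> int:
        offset = len(self.needles)              # next free position
        self.needles.append((key, alt_key, data))
        self.mapping[(key, alt_key)] = offset   # the newest offset replaces the old one
        return offset

    def read(self, key: int, alt_key: int) -> bytes:
        return self.needles[self.mapping[(key, alt_key)]][2]

# Usage: "modifying" a photo appends a second needle with the same keys.
vol = Volume()
old_offset = vol.write(1, 1, b"old version")
new_offset = vol.write(1, 1, b"new version")
```

The old needle is still physically present after the second write; it is simply unreachable through the mapping until compaction removes it.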

Uploading/Writing a photo

Photo Delete
• Deleting a photo is straightforward
• The Store machine sets the delete flag both in the in-memory mapping and, synchronously, in the volume file
• The space occupied by deleted photos is lost for the moment and reclaimed later via compaction

Optimization techniques by Haystack Store
• Compaction
->Online operation that reclaims the space used by deleted and duplicate needles
->Needles are copied into a new file, and the new file replaces the current file
->The delete pattern is similar to photo views
• Batch Upload
->Batch upload of multiple photos
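Compaction as described above can be sketched in two passes over the old volume. This is an illustration under assumptions: a list of `Needle` tuples stands in for the volume file, the delete flag is carried on the needle itself, and the online aspect (serving requests while copying) is left out.

```python
from typing import Dict, List, NamedTuple, Tuple

class Needle(NamedTuple):
    key: int
    alt_key: int
    deleted: bool
    data: bytes

def compact(old_volume: List[Needle]) -> List[Needle]:
    """Copy only live, latest-version needles into a new volume."""
    # Pass 1: remember the position of the last needle for each (key, alt_key).
    latest: Dict[Tuple[int, int], int] = {}
    for index, needle in enumerate(old_volume):
        latest[(needle.key, needle.alt_key)] = index
    # Pass 2: skip superseded duplicates and needles flagged as deleted.
    return [needle for index, needle in enumerate(old_volume)
            if latest[(needle.key, needle.alt_key)] == index
            and not needle.deleted]

# Usage: one duplicate and one deleted needle are dropped.
old = [
    Needle(1, 1, False, b"a-v1"),
    Needle(2, 1, True, b"b"),       # delete flag was set in place
    Needle(1, 1, False, b"a-v2"),   # newer duplicate of the first needle
    Needle(3, 1, False, b"c"),
]
new = compact(old)
```

After the copy, the new list plays the role of the new file that replaces the current one.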

• Index File
->Store machines maintain an index file for each of their volumes
->Index files allow a Store machine to build its in-memory mappings quickly, shortening restart time
->The index file is a checkpoint of the in-memory data structures used to locate needles efficiently on disk
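A sketch of why an index file shortens restart time: the in-memory mapping can be rebuilt from small fixed-size records instead of scanning every needle in a 100 GB volume. The record fields and widths below are assumptions for illustration, as are the function names.

```python
import struct
from typing import Dict, Tuple

# Hypothetical fixed-size index record: key (8 bytes), alternate key (4),
# flags (1), offset (8), size (4); big-endian with no padding.
RECORD = struct.Struct(">QIBQI")

def append_index_record(index: bytearray, key: int, alt_key: int,
                        flags: int, offset: int, size: int) -> None:
    """Append one record to the in-memory image of the index file."""
    index.extend(RECORD.pack(key, alt_key, flags, offset, size))

def load_mapping(index: bytes) -> Dict[Tuple[int, int], Tuple[int, int, int]]:
    """Rebuild (key, alt_key) -> (flags, offset, size) from index records."""
    mapping: Dict[Tuple[int, int], Tuple[int, int, int]] = {}
    for key, alt_key, flags, offset, size in RECORD.iter_unpack(index):
        mapping[(key, alt_key)] = (flags, offset, size)   # later records win
    return mapping

# Usage: three records, two of them for the same photo.
index = bytearray()
append_index_record(index, 1, 1, 0, 0, 100)
append_index_record(index, 1, 1, 0, 200, 120)   # newer version, higher offset
append_index_record(index, 2, 1, 1, 400, 50)    # flagged as deleted
mapping = load_mapping(bytes(index))
```

Reading the records in order means a later record for the same keys overrides an earlier one, matching the highest-offset rule used for duplicates.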

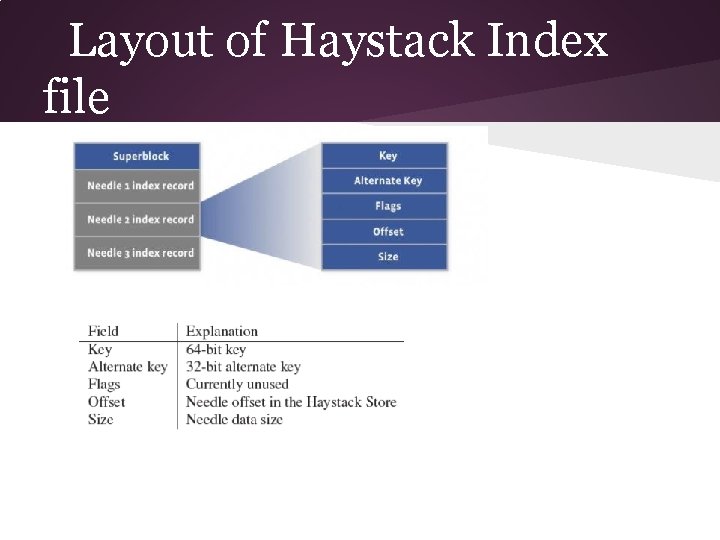

Layout of Haystack Index file

Results
• The main aim was to keep all metadata in memory, and Haystack achieves that goal
• It is less expensive than the previous designs
• Haystack overhead
->On average, each photo needs 10 bytes of memory
->Each uploaded image is scaled to four photos with the same key (64 bits), different alternate keys (32 bits), and different data sizes (assume 16 bits)
->2 bytes per image of overhead due to the hash table
->Total for the four scaled photos of the same image is 40 bytes, compared to the 536-byte xfs_inode_t in Linux
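The 40-byte and 10-byte figures can be checked with a few lines of arithmetic. The sketch assumes the key is stored once per uploaded image, while the alternate key, data size, and hash table overhead are counted once per scaled photo; the constant names are illustrative.

```python
KEY_BYTES = 64 // 8          # one key shared by all four scaled photos
ALT_KEY_BYTES = 32 // 8      # one alternate key per scaled photo
SIZE_BYTES = 16 // 8         # one data size per scaled photo
HASH_OVERHEAD_BYTES = 2      # hash table overhead per scaled photo
SCALED_PHOTOS = 4

metadata_bytes = KEY_BYTES + SCALED_PHOTOS * (ALT_KEY_BYTES + SIZE_BYTES)
total_bytes = metadata_bytes + SCALED_PHOTOS * HASH_OVERHEAD_BYTES
bytes_per_photo = total_bytes / SCALED_PHOTOS

print(metadata_bytes, total_bytes, bytes_per_photo)   # prints 32 40 10.0
```

Against a 536-byte xfs_inode_t per file, 10 bytes per photo is roughly a 50-fold reduction in per-photo metadata.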

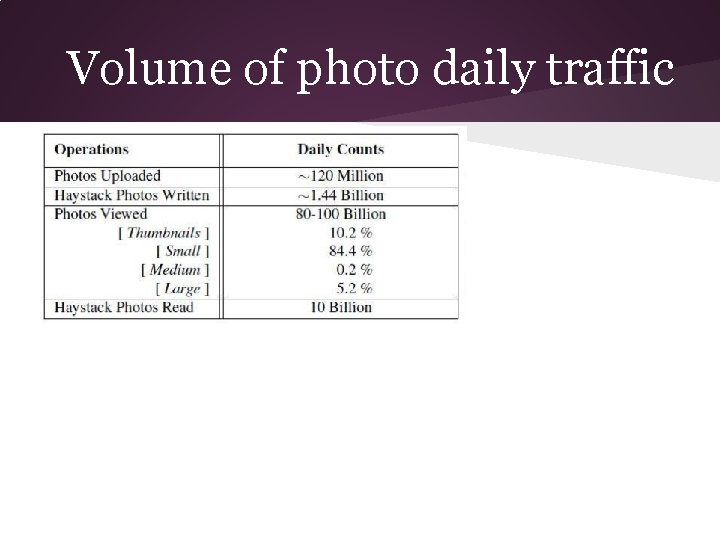

Volume of photo daily traffic

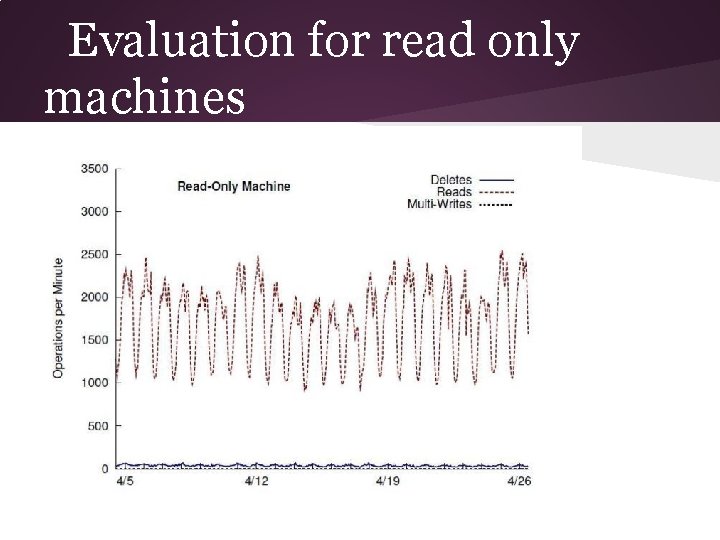

Evaluation for read only machines

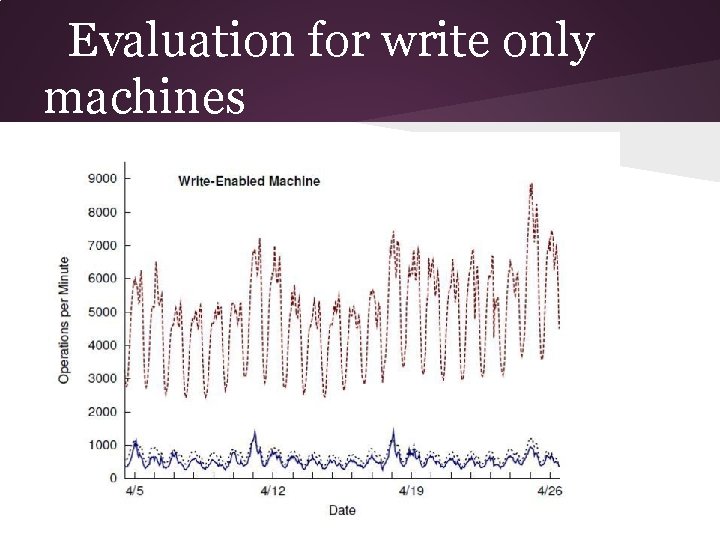

Evaluation for write only machines

Comparing the graphs, Haystack sustains on average about 4 times more reads per second than the standard approach.

THANK YOU!
