Fall 2017: Data Center Bridging (DCB)

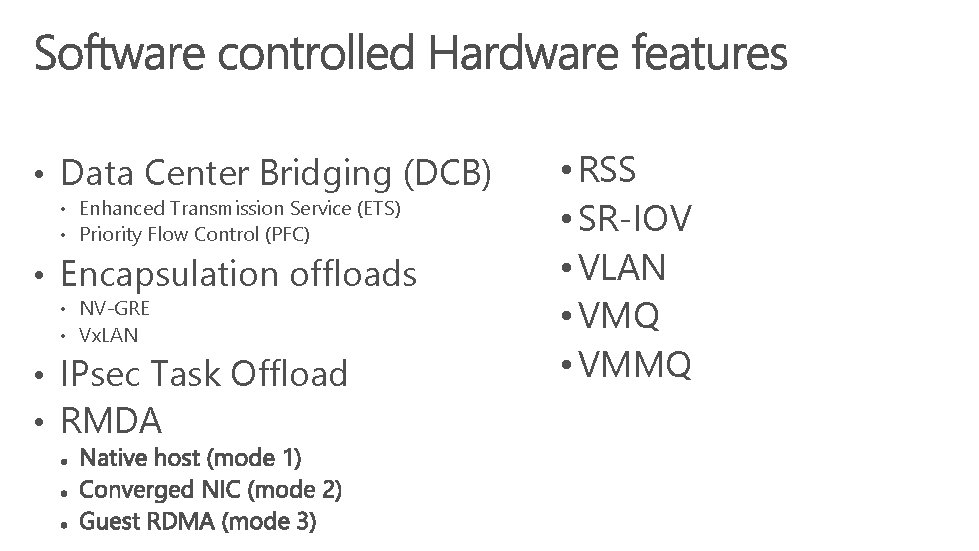

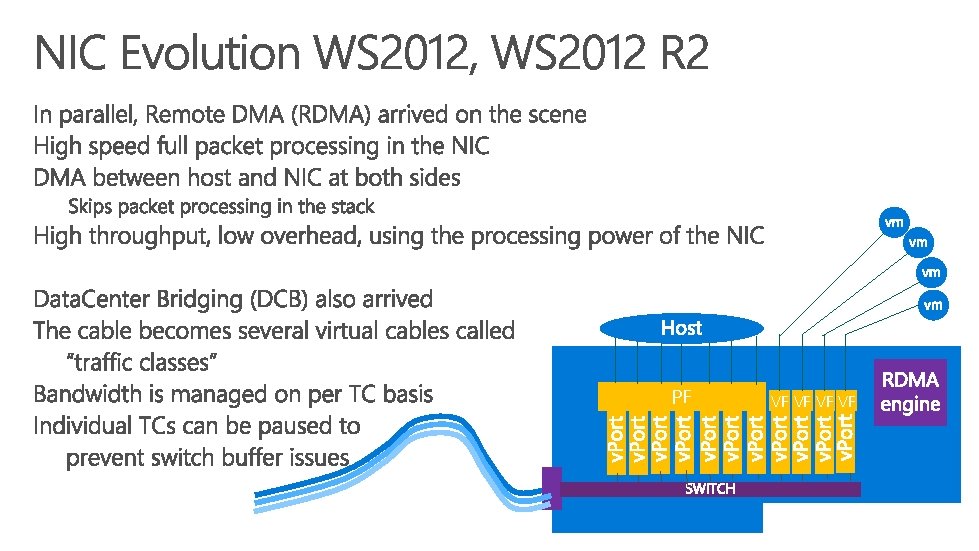

• Data Center Bridging (DCB)
  • Enhanced Transmission Selection (ETS)
  • Priority Flow Control (PFC)
• Encapsulation offloads
  • NV-GRE
  • VXLAN
• IPsec Task Offload
• RDMA
• RSS
• SR-IOV
• VLAN
• VMQ
• VMMQ

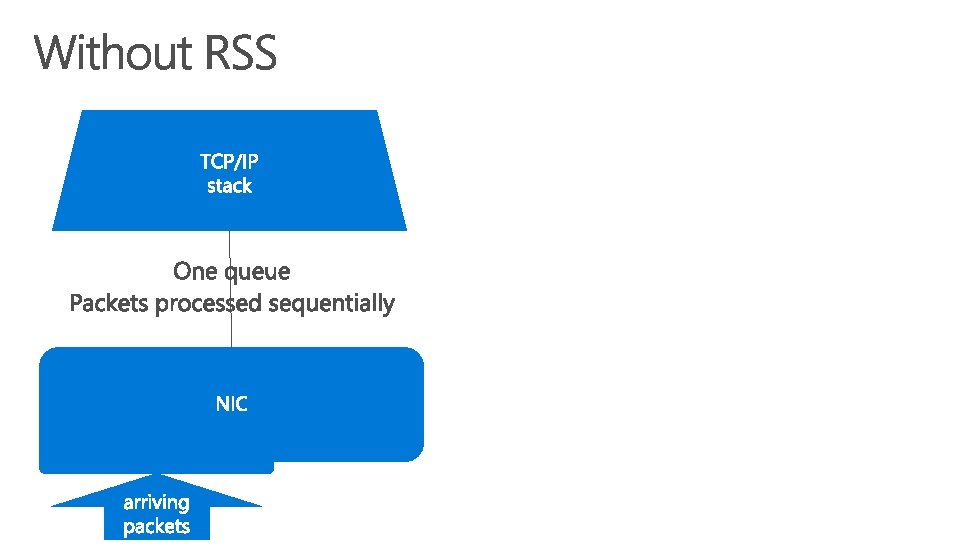

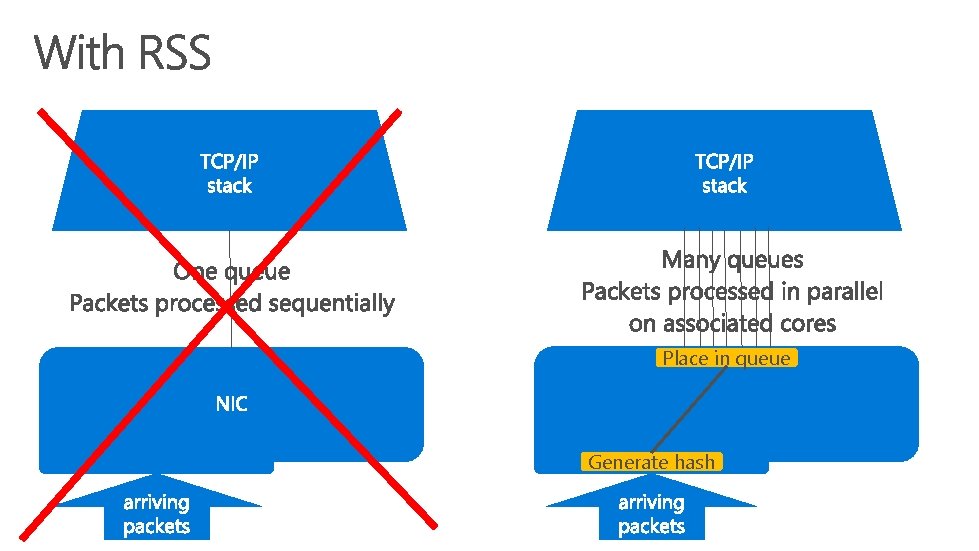

Figure: RSS (generate hash, place in queue)
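The RSS receive path (generate a hash over the packet's flow fields, then use it to pick a queue) can be sketched in Python. This is a minimal illustration, not a NIC or Windows implementation: it assumes the Toeplitz hash function, the widely published 40-byte sample RSS key, an IPv4/TCP 4-tuple as hash input, and a power-of-two indirection table indexed by the hash's low-order bits. The function names are hypothetical.

```python
import socket
import struct

# Widely published 40-byte sample RSS key (assumption: any 40-byte key works the same way).
RSS_KEY = bytes.fromhex(
    "6d5a56da255b0ec24167253d43a38fb0d0ca2bcb"
    "ae7b30b477cb2da38030f20c6a42b73bbeac01fa"
)


def toeplitz_hash(key: bytes, data: bytes) -> int:
    """Toeplitz hash: for every 1 bit of the input (most significant bit first),
    XOR in the 32-bit window of the key that starts at that bit position."""
    key_bits = len(key) * 8
    if len(data) * 8 + 32 > key_bits:
        raise ValueError("key too short for input")
    key_int = int.from_bytes(key, "big")
    result = 0
    for i in range(len(data) * 8):
        if (data[i // 8] >> (7 - i % 8)) & 1:
            result ^= (key_int >> (key_bits - 32 - i)) & 0xFFFFFFFF
    return result


def ipv4_tcp_input(src_ip: str, dst_ip: str, src_port: int, dst_port: int) -> bytes:
    """Hash input for IPv4/TCP: source address, destination address,
    source port, destination port, all in network byte order."""
    return (socket.inet_aton(src_ip) + socket.inet_aton(dst_ip)
            + struct.pack("!HH", src_port, dst_port))


def rss_queue(hash_value: int, indirection_table: list[int]) -> int:
    """Index the indirection table (entries are queue/CPU numbers)
    with the low-order bits of the hash."""
    size = len(indirection_table)
    if size == 0 or size & (size - 1):
        raise ValueError("indirection table size must be a power of two")
    return indirection_table[hash_value & (size - 1)]
```

For example, `rss_queue(toeplitz_hash(RSS_KEY, ipv4_tcp_input("66.9.149.187", "161.142.100.80", 2794, 1766)), [0, 1, 2, 3] * 32)` maps that TCP connection to one of four queues. Every packet of the connection produces the same hash, so the whole flow stays on one queue and CPU.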

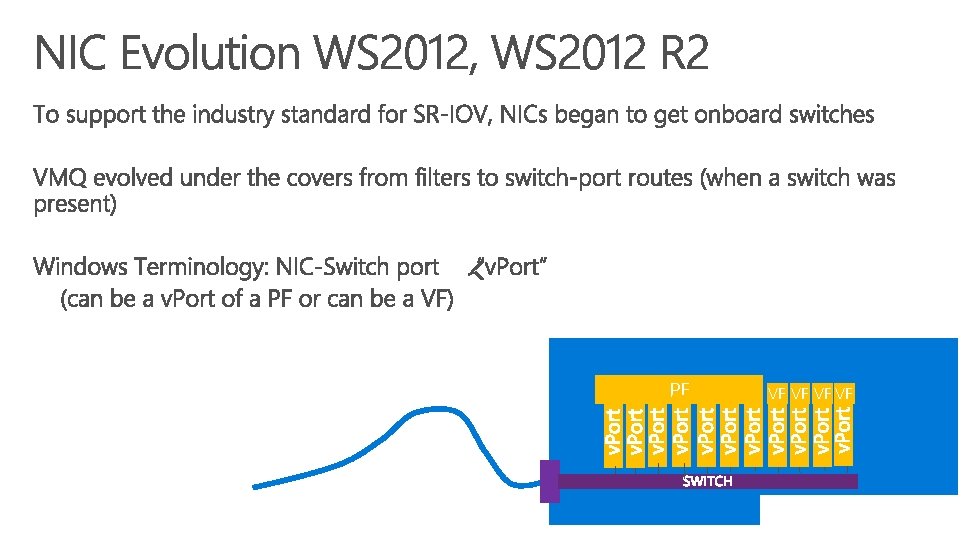

Figure: SR-IOV (a physical function, PF, exposing virtual functions, VF)

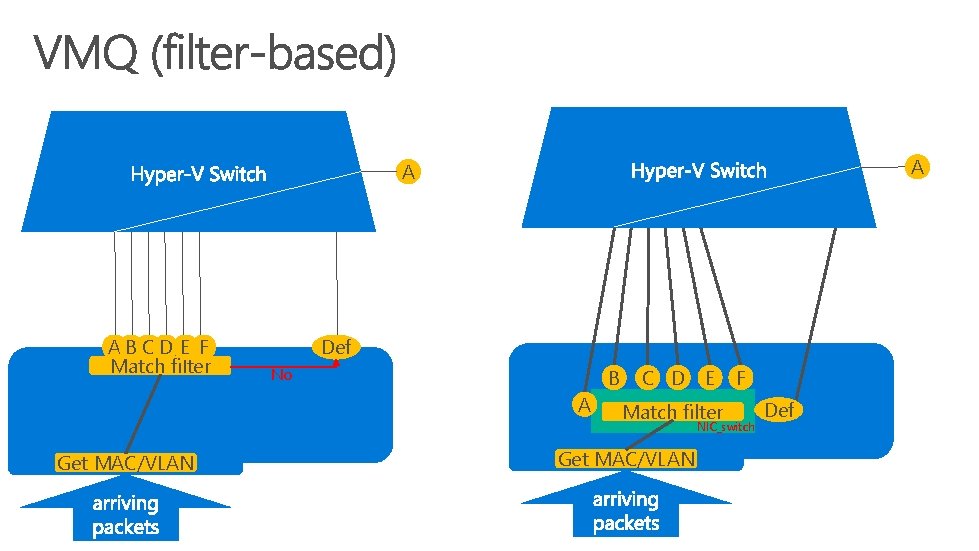

Figure: VMQ in the NIC_switch (get MAC/VLAN, match filter; frames with no matching filter go to the default queue)
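The VMQ receive decision (get the destination MAC/VLAN, match a filter, otherwise fall back to the default queue) reduces to an exact-match lookup. A minimal sketch, assuming one queue per VM and queue 0 standing in for the default queue; the class and method names are hypothetical, and a real NIC performs this classification in hardware.

```python
from typing import NamedTuple

DEFAULT_QUEUE = 0  # hypothetical: queue 0 stands in for the default queue


class MacVlan(NamedTuple):
    mac: str
    vlan: int


class VmqNicSwitch:
    """Toy model of VMQ receive classification: one queue per VM, selected
    by an exact match on destination MAC address and VLAN ID."""

    def __init__(self) -> None:
        self._filters: dict[MacVlan, int] = {}

    def add_filter(self, mac: str, vlan: int, queue: int) -> None:
        # Normalize MAC case so lookups are case-insensitive.
        self._filters[MacVlan(mac.lower(), vlan)] = queue

    def classify(self, dst_mac: str, vlan: int) -> int:
        # Get MAC/VLAN from the frame, match a filter, else use the default queue.
        return self._filters.get(MacVlan(dst_mac.lower(), vlan), DEFAULT_QUEUE)
```

Because the filter key includes the VLAN ID, the same MAC address on a different VLAN does not match and lands in the default queue.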

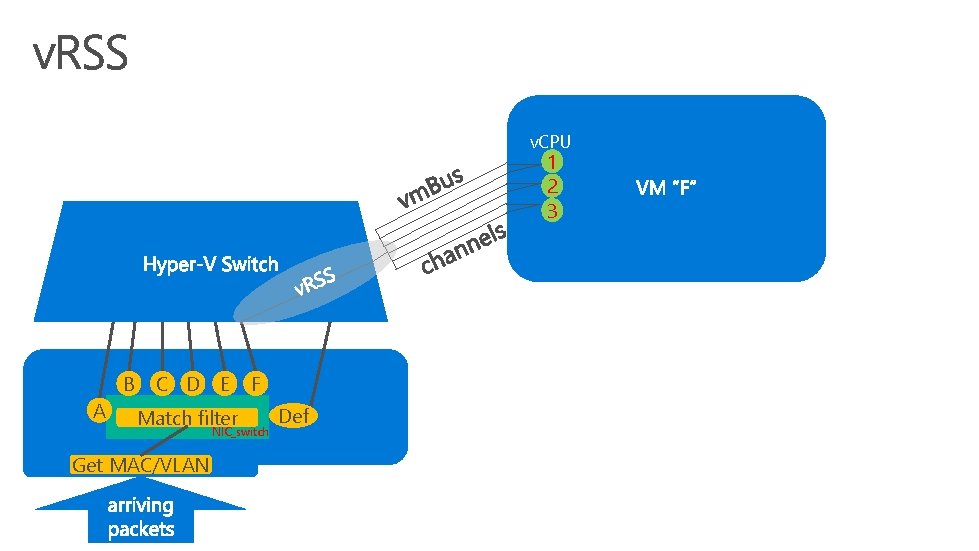

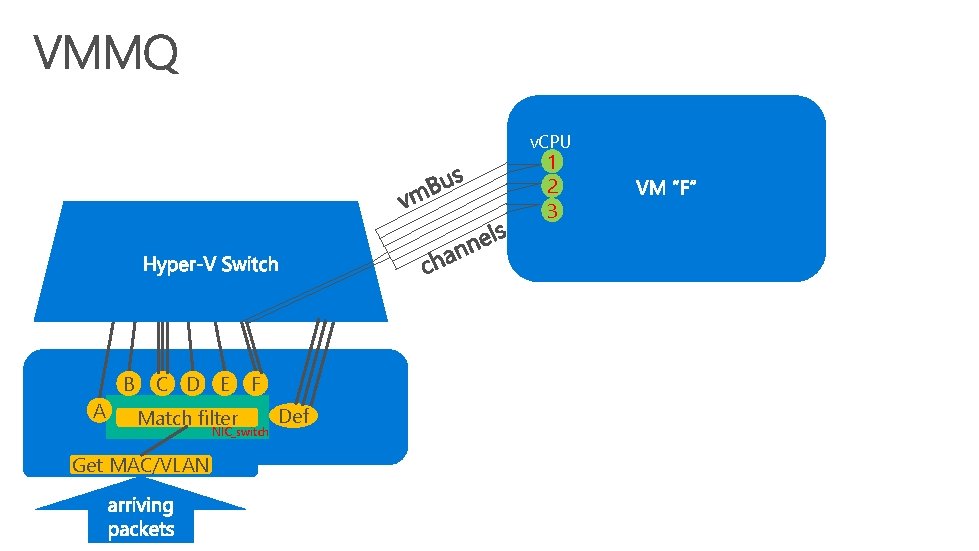

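Figure: VMMQ in the NIC_switch (get MAC/VLAN, match filter, spread a VM's matched traffic across queues for vCPUs 1 to 3; unmatched traffic goes to the default queue)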
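VMMQ keeps the MAC/VLAN filter but gives each VM several queues, so vCPUs 1 to 3 can share one VM's receive traffic. The sketch below assumes queue IDs 1 to 3 correspond to those vCPUs and uses `zlib.crc32` only as a stand-in for the NIC's RSS hash; all names are hypothetical.

```python
import zlib

DEFAULT_QUEUE = 0  # hypothetical: queue 0 stands in for the default queue


class VmmqNicSwitch:
    """Toy model of VMMQ: the MAC/VLAN filter selects a VM's vPort, and a
    per-flow hash spreads that vPort's traffic over several queues,
    each associated with a different vCPU."""

    def __init__(self) -> None:
        self._vports: dict[tuple[str, int], list[int]] = {}

    def add_vport(self, mac: str, vlan: int, queues: list[int]) -> None:
        if not queues:
            raise ValueError("a vPort needs at least one queue")
        self._vports[(mac.lower(), vlan)] = list(queues)

    def classify(self, dst_mac: str, vlan: int, flow: bytes) -> int:
        queues = self._vports.get((dst_mac.lower(), vlan))
        if queues is None:
            return DEFAULT_QUEUE  # no filter matched
        # CRC32 is a stand-in here; real NICs use the RSS (Toeplitz) hash.
        return queues[zlib.crc32(flow) % len(queues)]
```

The hash keeps each flow on one queue (preserving packet order within a flow) while different flows of the same VM spread across its vCPUs.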

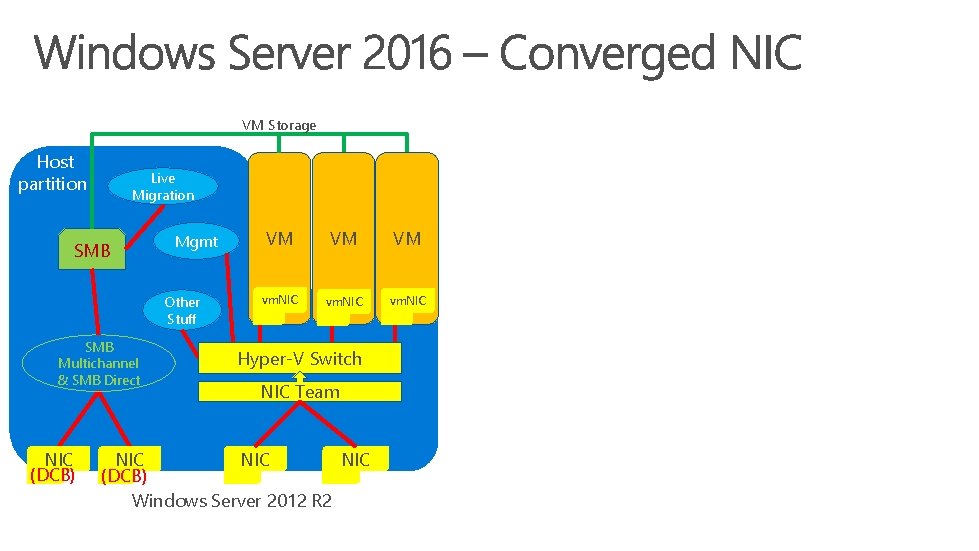

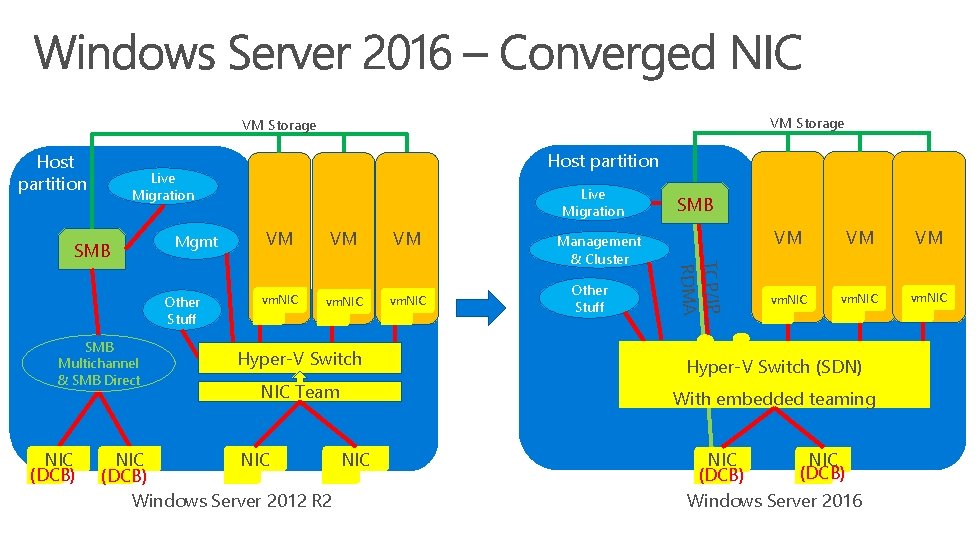

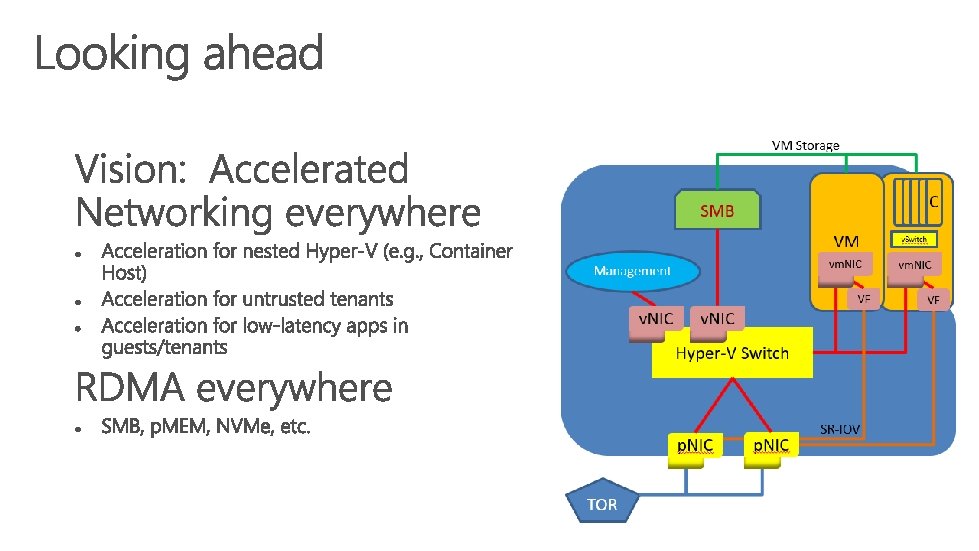

Figure: Windows Server 2012 R2 converged networking. Host partition traffic (storage, live migration, SMB, management, other) uses SMB Multichannel and SMB Direct over DCB NICs; VM vmNICs connect through the Hyper-V Switch to a NIC Team.

Figure: Windows Server 2012 R2 compared with Windows Server 2016. In Windows Server 2016, host partition traffic (SMB over RDMA, TCP/IP, live migration, management and cluster) and VM vmNICs share DCB NICs through a Hyper-V Switch (SDN) with embedded teaming.

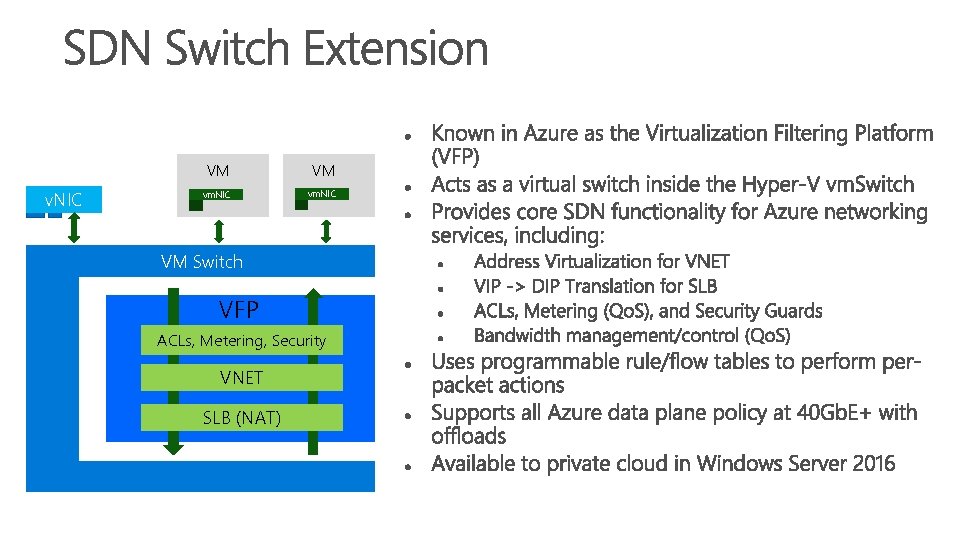

Figure: Virtual Filtering Platform (VFP) in the VM Switch, applying VNET, SLB (NAT), ACLs, metering, and security policy to host vNICs and VM vmNICs

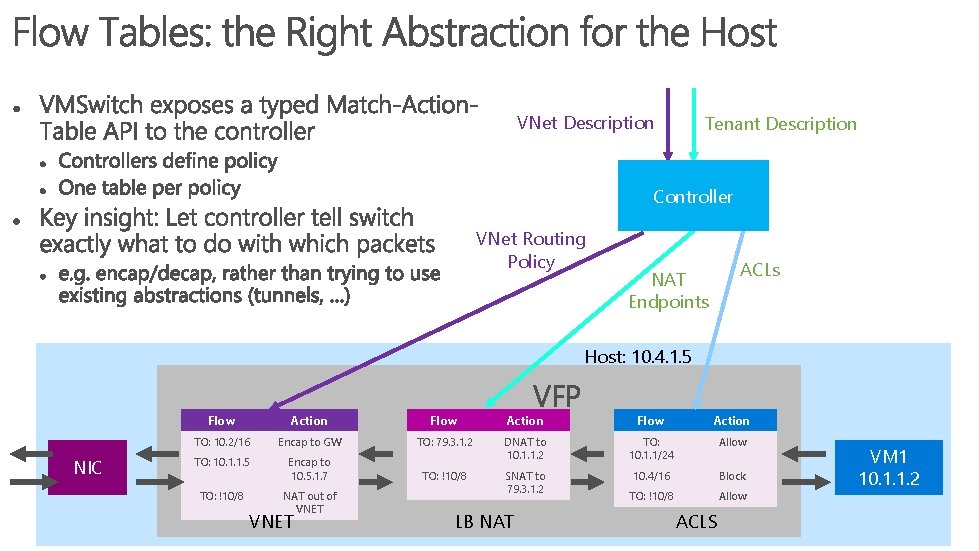

Figure: VFP policy on host 10.4.1.5 for VM 1 (10.1.1.2). The controller takes a VNet description and a tenant description and programs VNet routing policy, ACLs, and NAT endpoints into VFP layers:
• VNET: TO: 10.2/16 → Encap to GW; TO: 10.1.1.5 → Encap to 10.5.1.7; TO: !10/8 → NAT out of VNET
• LB NAT: TO: 79.3.1.2 → DNAT to 10.1.1.2; TO: !10/8 → SNAT to 79.3.1.2
• ACLs: TO: 10.1.1/24 → Allow; TO: 10.4/16 → Block; TO: !10/8 → Allow
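The VFP layer tables can be read as ordered first-match rule lists. The sketch below evaluates a destination address against each layer and collects the resulting actions. These are assumptions for illustration, not VFP behavior confirmed by the slides: rules match only on destination address, layers are evaluated in the order listed (VNET, LB NAT, ACLs), a layer with no matching rule contributes no action, and `Block` stops processing. `!10/8` is modeled as "not in 10.0.0.0/8"; all names are hypothetical.

```python
import ipaddress
from typing import NamedTuple, Optional


class Rule(NamedTuple):
    net: ipaddress.IPv4Network
    negate: bool  # True means "destination NOT in net" (the slide's "!" prefix)
    action: str


def rule(prefix: str, action: str, negate: bool = False) -> Rule:
    return Rule(ipaddress.ip_network(prefix), negate, action)


TEN = "10.0.0.0/8"
LAYERS = [
    ("VNET", [rule("10.2.0.0/16", "Encap to GW"),
              rule("10.1.1.5/32", "Encap to 10.5.1.7"),
              rule(TEN, "NAT out of VNET", negate=True)]),
    ("LB NAT", [rule("79.3.1.2/32", "DNAT to 10.1.1.2"),
                rule(TEN, "SNAT to 79.3.1.2", negate=True)]),
    ("ACLs", [rule("10.1.1.0/24", "Allow"),
              rule("10.4.0.0/16", "Block"),
              rule(TEN, "Allow", negate=True)]),
]


def first_match(rules: list[Rule], dst: ipaddress.IPv4Address) -> Optional[str]:
    for r in rules:
        if (dst in r.net) != r.negate:
            return r.action
    return None


def evaluate(dst_ip: str) -> list[str]:
    """Run one destination through every layer; return the actions collected."""
    dst = ipaddress.ip_address(dst_ip)
    actions: list[str] = []
    for _name, rules in LAYERS:
        action = first_match(rules, dst)
        if action is None:
            continue  # assumption: no match in a layer means no action from it
        actions.append(action)
        if action == "Block":
            break
    return actions
```

For instance, an Internet destination such as 64.3.2.5 picks up "NAT out of VNET", "SNAT to 79.3.1.2", and "Allow", while 10.4.9.9 is stopped by the ACL layer's Block rule.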

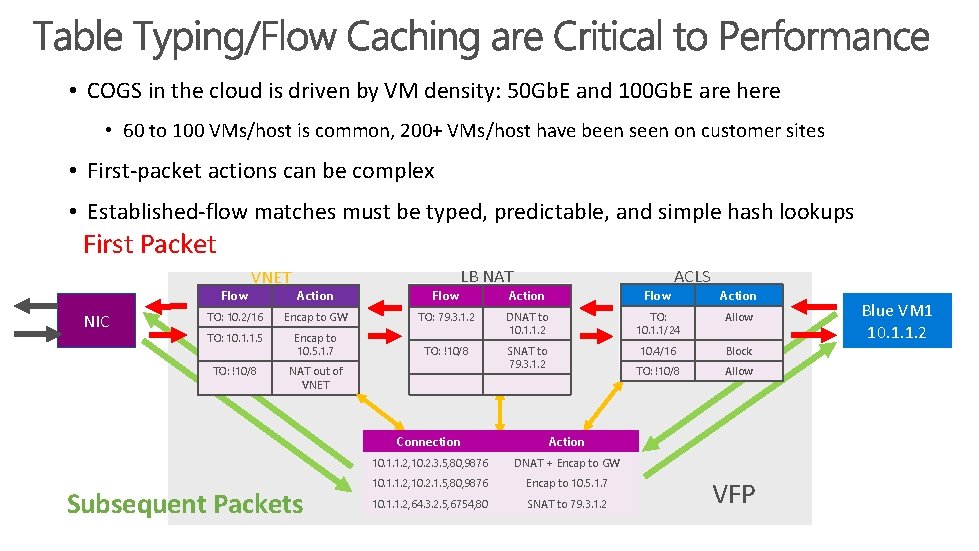

• COGS in the cloud is driven by VM density: 50 GbE and 100 GbE are here
• 60 to 100 VMs/host is common; 200+ VMs/host have been seen on customer sites
• First-packet actions can be complex
• Established-flow matches must be typed, predictable, and simple hash lookups

Figure: VFP flow processing for Blue VM 1 (10.1.1.2). The first packet of a connection traverses the VNET, LB NAT, and ACLs layers; subsequent packets match the connection's entry in the flow table:
• 10.1.1.2, 10.2.3.5, 80, 9876: DNAT + Encap to GW
• 10.1.1.2, 10.2.1.5, 80, 9876: Encap to 10.5.1.7
• 10.1.1.2, 64.3.2.5, 6754, 80: SNAT to 79.3.1.2
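The first-packet versus subsequent-packet split is a flow cache: run the expensive layered evaluation once per connection, then serve later packets with one exact-match hash lookup. A minimal sketch, assuming the four fields in the flow table are source IP, destination IP, source port, and destination port; `FlowTable` and the `slow_path` callback are hypothetical names, and the sketch omits details such as entry expiry and eviction.

```python
from typing import Callable

# Hypothetical flow key: (source IP, destination IP, source port, destination port).
FlowKey = tuple[str, str, int, int]


class FlowTable:
    """Exact-match flow cache: the first packet of a connection runs the
    (possibly complex) slow path; later packets are a single dict lookup."""

    def __init__(self, slow_path: Callable[[FlowKey], str]) -> None:
        self._slow_path = slow_path
        self._flows: dict[FlowKey, str] = {}
        self.slow_path_calls = 0

    def process(self, key: FlowKey) -> str:
        action = self._flows.get(key)
        if action is None:
            # First packet: evaluate all layers once and cache the combined action.
            self.slow_path_calls += 1
            action = self._slow_path(key)
            self._flows[key] = action
        return action
```

The cache key is a fixed, typed tuple, so the established-flow path costs one hash lookup no matter how many rules the layers contain.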

HNVv2: VXLAN, NV-GRE
• VXLAN is the default, but we still do NV-GRE for those who like that option
• Either SCVMM or NRP programs the Network Controller (NC)
• A semi-hidden feature automatically adjusts the MTU on the wire to accommodate the encapsulation overhead
• This performs better than fragmenting packets that become too long once the encapsulation is added
Microsoft Confidential
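The MTU adjustment comes down to header arithmetic: the wire MTU must exceed the guest MTU by the encapsulation overhead. A sketch, assuming an IPv4 underlay without outer VLAN tags or IP options, and counting the inner Ethernet header that both encapsulations carry (VXLAN adds UDP plus an 8-byte VXLAN header; NVGRE uses an 8-byte GRE header with the Key field). It only computes sizes and does not show how Windows applies the setting; the function names are hypothetical.

```python
# Header sizes in bytes (assumption: IPv4 outer header, no options, no outer VLAN tag).
OUTER_IPV4 = 20
UDP = 8
VXLAN_HDR = 8
GRE_WITH_KEY = 8      # NVGRE: GRE header with the Key field present
INNER_ETHERNET = 14   # the encapsulated inner Ethernet header

OVERHEAD = {
    "vxlan": OUTER_IPV4 + UDP + VXLAN_HDR + INNER_ETHERNET,  # 50 bytes
    "nvgre": OUTER_IPV4 + GRE_WITH_KEY + INNER_ETHERNET,     # 42 bytes
}


def required_wire_mtu(guest_mtu: int, encap: str) -> int:
    """Smallest underlay IP MTU that carries a full guest packet unfragmented."""
    return guest_mtu + OVERHEAD[encap]


def max_guest_mtu(wire_mtu: int, encap: str) -> int:
    """Largest guest IP MTU that fits a given underlay MTU."""
    return wire_mtu - OVERHEAD[encap]
```

Raising the wire MTU to `required_wire_mtu(1500, "vxlan")` lets guests keep a standard 1500-byte MTU; leaving the wire at 1500 would force the guest down to `max_guest_mtu(1500, "vxlan")` or require fragmentation.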

SDN QoS
• Compatible with RDMA workloads
• Compatible with DCB
• Supports egress reservations (minimum guaranteed bandwidth)
• Supports egress limits (maximum permitted bandwidth)
• Works well even with very different policies for different VMs
• Works on all vmSwitch ports (host or guest)
• Managed by Network Controller
• Implemented in the VFP
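Egress reservations and limits can be illustrated with a simple allocator. The sketch assumes a single link of known capacity, gives every port its reservation first (capped by what it wants to send), then shares the remaining capacity equally among ports still below their limit. This is a generic min/max water-filling example with hypothetical names, not the algorithm VFP uses.

```python
from dataclasses import dataclass

EPS = 1e-9  # tolerance for floating-point comparisons


@dataclass
class PortPolicy:
    reservation: float  # minimum guaranteed egress bandwidth, Gbps
    limit: float        # maximum permitted egress bandwidth, Gbps
    demand: float       # bandwidth the port is currently trying to send, Gbps


def allocate(capacity: float, ports: dict[str, PortPolicy]) -> dict[str, float]:
    """Split link capacity: reservations first, then spare capacity shared
    equally among ports still below min(demand, limit)."""
    cap = {n: min(p.demand, p.limit) for n, p in ports.items()}
    # Step 1: every port gets its reservation, but never more than it can use.
    alloc = {n: min(p.reservation, cap[n]) for n, p in ports.items()}
    spare = capacity - sum(alloc.values())
    if spare < -EPS:
        raise ValueError("reservations exceed link capacity")
    # Step 2: water-fill the spare capacity, respecting each port's cap.
    while spare > EPS:
        hungry = [n for n in ports if alloc[n] < cap[n] - EPS]
        if not hungry:
            break
        share = spare / len(hungry)
        for n in hungry:
            give = min(share, cap[n] - alloc[n])
            alloc[n] += give
            spare -= give
    return alloc
```

With a 10 Gbps link and ports a (2 reserved, limit 10, wants 10), b (4 reserved, limit 5, wants 10), and c (1 reserved, wants 1.5), the result is a = 3.5, b = 5, c = 1.5: b is held at its limit, and the capacity it cannot use goes to a. Reserved bandwidth a port does not need is also redistributed, so the allocation stays work-conserving.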

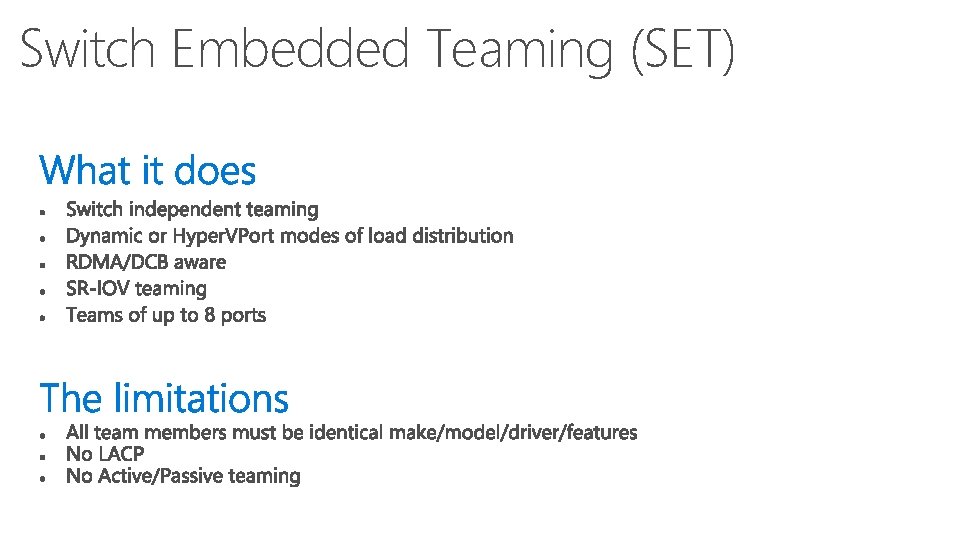

Switch Embedded Teaming (SET)

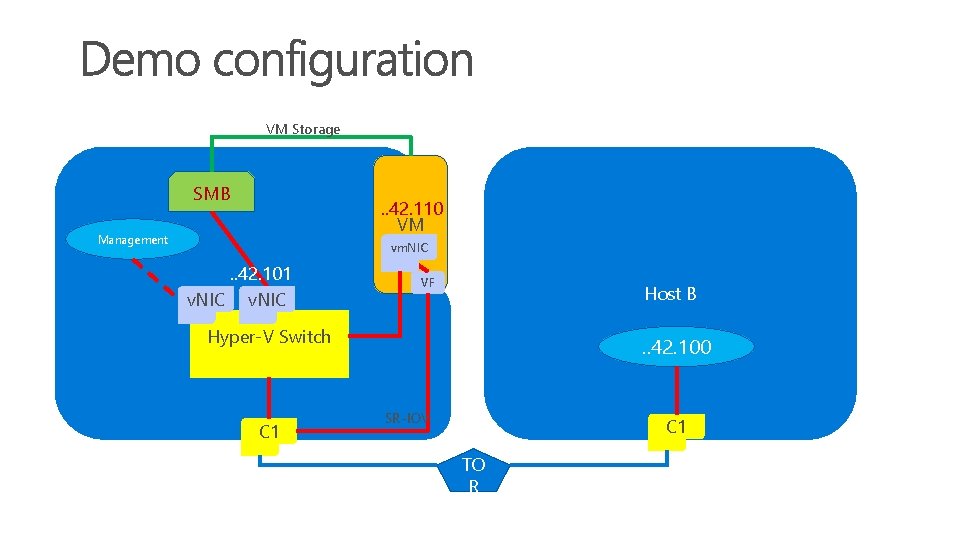

Figure: Host B, with the host partition (storage over SMB), a VM management vmNIC, and a VM vNIC backed by an SR-IOV VF, connected through the Hyper-V Switch to the ToR (C1); addresses ..42.100, ..42.101, ..42.110

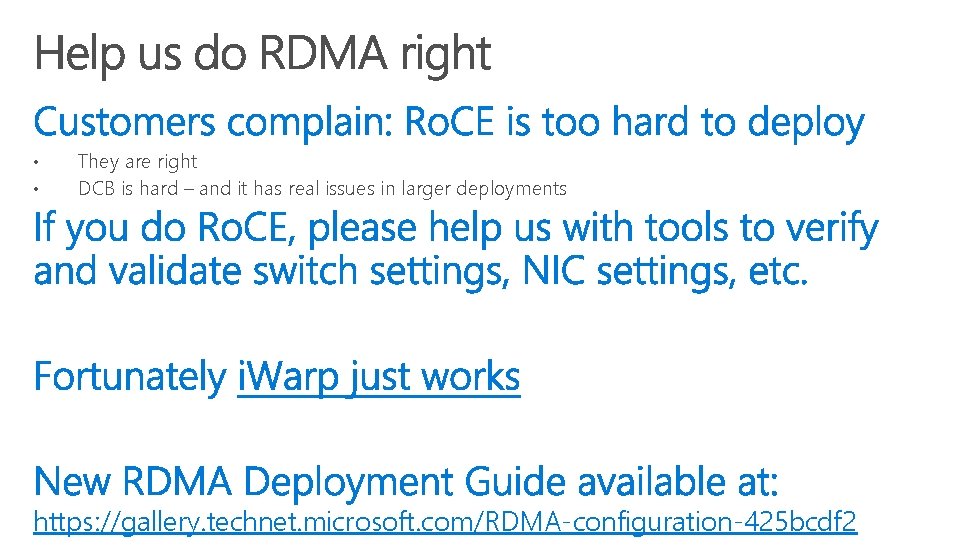

• They are right: DCB is hard, and it has real issues in larger deployments
• https://gallery.technet.microsoft.com/RDMA-configuration-425bcdf2

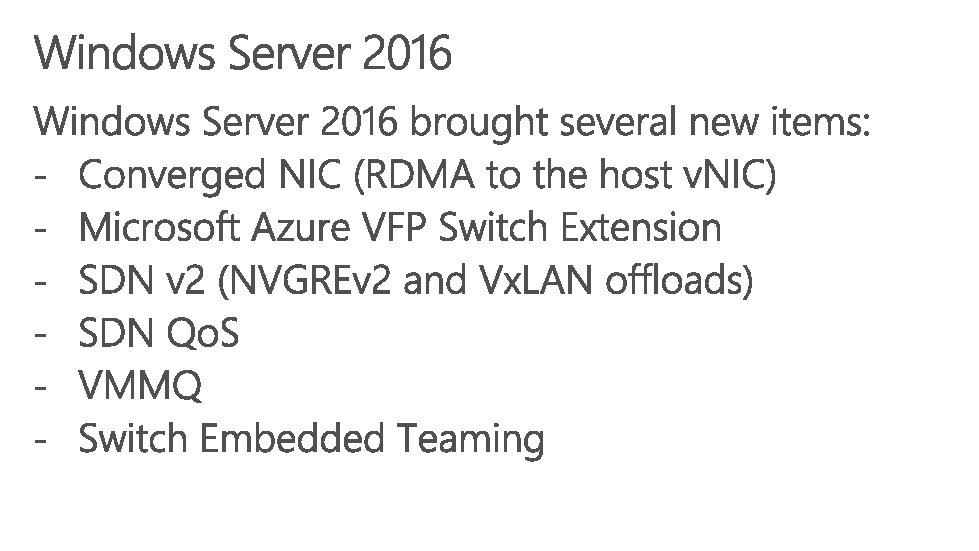

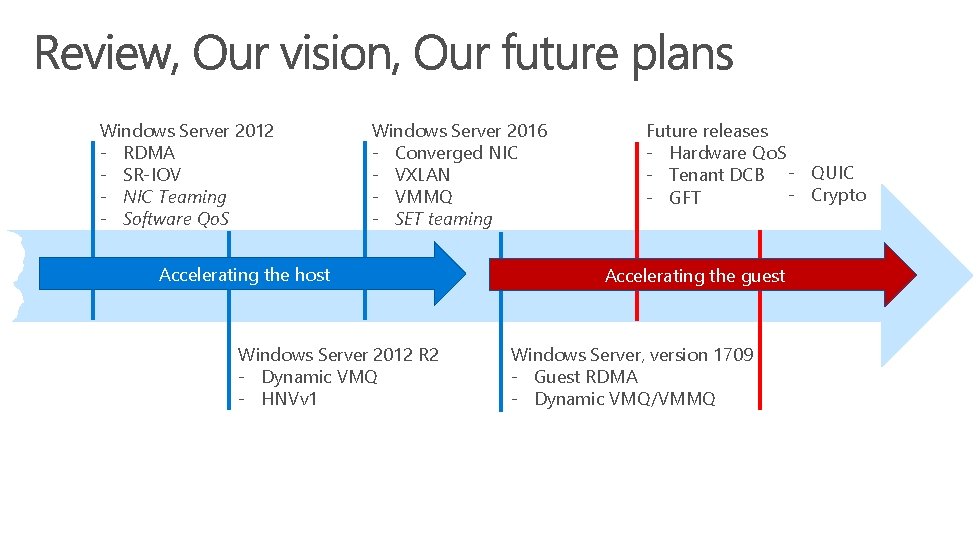

Accelerating the host and accelerating the guest:
• Windows Server 2012: RDMA, SR-IOV, NIC Teaming, Software QoS
• Windows Server 2012 R2: Dynamic VMQ, HNVv1
• Windows Server 2016: Converged NIC, VXLAN, VMMQ, SET teaming
• Windows Server, version 1709: Guest RDMA, Dynamic VMQ/VMMQ
• Future releases: Hardware QoS, Tenant DCB, QUIC, Crypto, GFT
