Distributed Detection of Weak Network Anomalies
Denver Dash
Intel Research, Pittsburgh
www.intel.com/research

• Distributed Detection & Inference •

The DDI Research Group

Intel Research, Santa Clara:
- John Mark Agosta
- Jaideep Chandrashekar
- Eve Schooler

Past interns:
- Abraham Bachrach, UC Berkeley
- Branislav Kveton, University of Pittsburgh
- Alex Newman, Rensselaer Polytechnic Institute

Other collaborators:
- Simon Crosby, XenSource
- Karl Levitt, UC Davis
- Nina Taft, Intel Research Lab, Berkeley

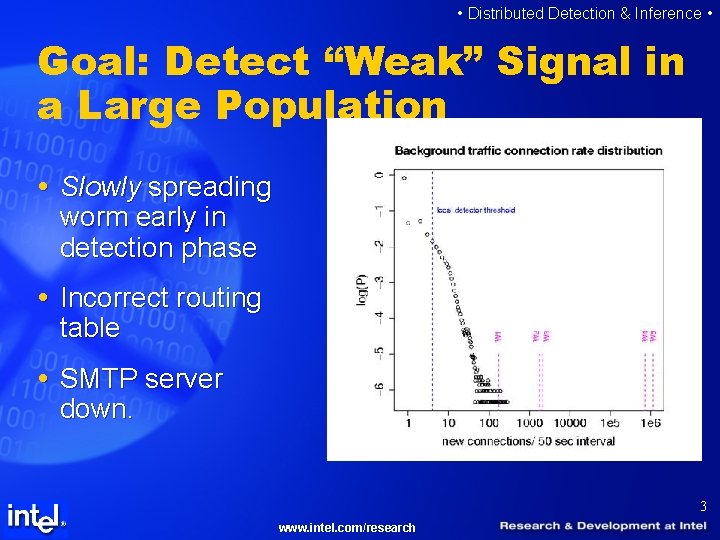

Goal: Detect a “Weak” Signal in a Large Population
- A slowly spreading worm early in the detection phase.
- An incorrect routing table.
- An SMTP server that is down.

Detecting Weak Events: Key Challenges
- Weak signal in a large population.
- Trying to detect “day-zero” attacks, for which there is no data.
- The system may be gamed by an adversary.
- High traffic means extremely low false-positive (FP) rates are required.
- Computationally intensive.
- Not much background knowledge.

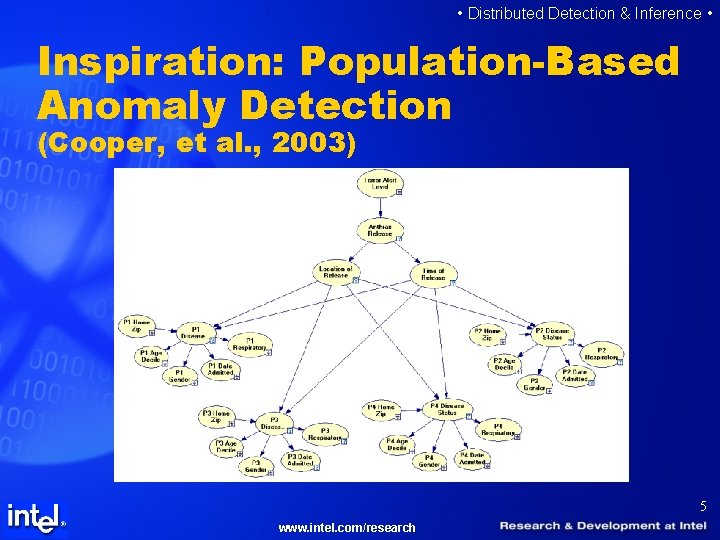

Inspiration: Population-Based Anomaly Detection (Cooper et al., 2003)

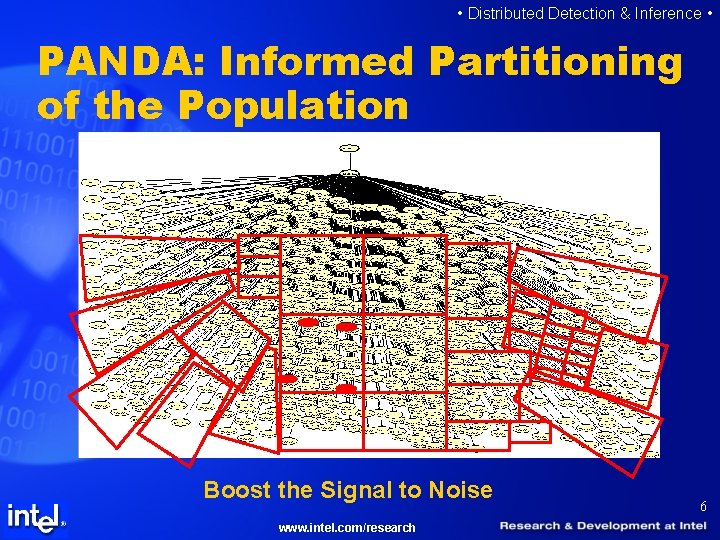

PANDA: Informed Partitioning of the Population
- Boost the signal-to-noise ratio.

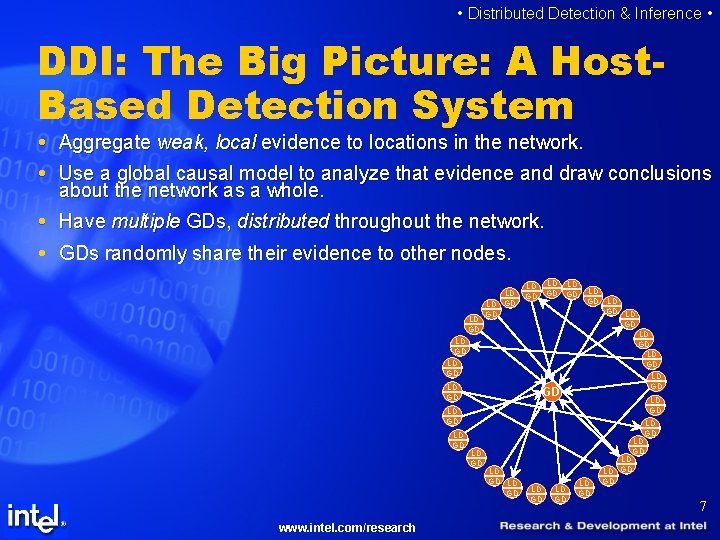

DDI, the Big Picture: A Host-Based Detection System
- Aggregate weak, local evidence from local detectors (LDs) to locations in the network.
- Use a global causal model to analyze that evidence and draw conclusions about the network as a whole.
- Have multiple global detectors (GDs), distributed throughout the network.
- GDs randomly share their evidence with other nodes.

Why…

…distribute?
- Partition the data in the one way we know how.
- Parallelize the computation.
- Add robustness by eliminating the single (or few) point(s) of failure.
- Add diversity to the global detector, possibly improving performance via ensemble techniques (e.g., boosting).

…aggregate?
- Randomly partition the data to probabilistically boost the signal-to-noise ratio (S2N).
- Corroboration implies greater sensitivity and fewer false positives.

…use a causal model?
- We can incorporate our background knowledge in a principled way.
- We can incorporate temporal processes in a principled way.
- Keeping a PDF around allows us to be Bayesian.

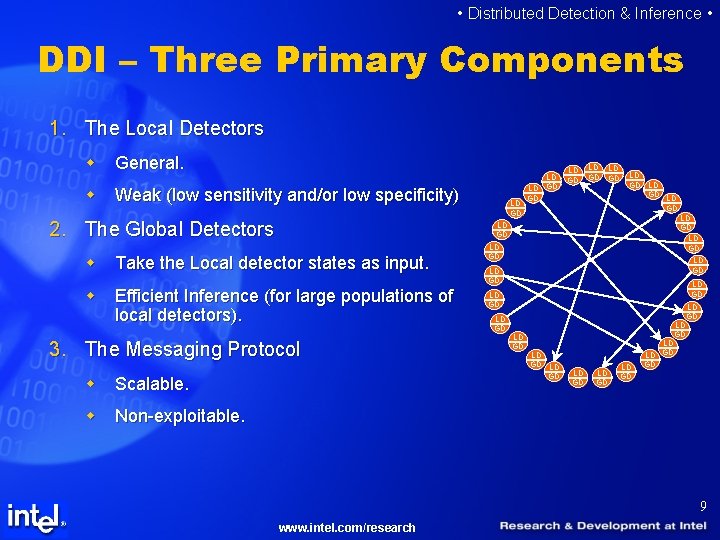

DDI: Three Primary Components
1. The Local Detectors
   - General.
   - Weak (low sensitivity and/or low specificity).
2. The Global Detectors
   - Take the local detector states as input.
   - Efficient inference (for large populations of local detectors).
3. The Messaging Protocol
   - Scalable.
   - Non-exploitable.

Local Detectors

Two general types: External and Internal.
- External: monitor traffic and other sources of information from outside the host to determine whether suspicious or anomalous behavior is occurring. Role: detect probing attacks and pre-attack surveillance.
- Internal: monitor the host’s internal state to determine whether it has been infected by an attack. Role: detect an infected host.

Reaction and Dynamics Depend Strongly on Local Detector Type
- How does one respond to a probing/surveillance attack? Most likely the offending IP address is spoofed.
- External LDs provide information prior to an attack.
- The growth dynamics of local detections depend strongly on the type of detector (more on that later).
- We focus our research on Internal local detectors, although External dynamics are easier to model and more informative.

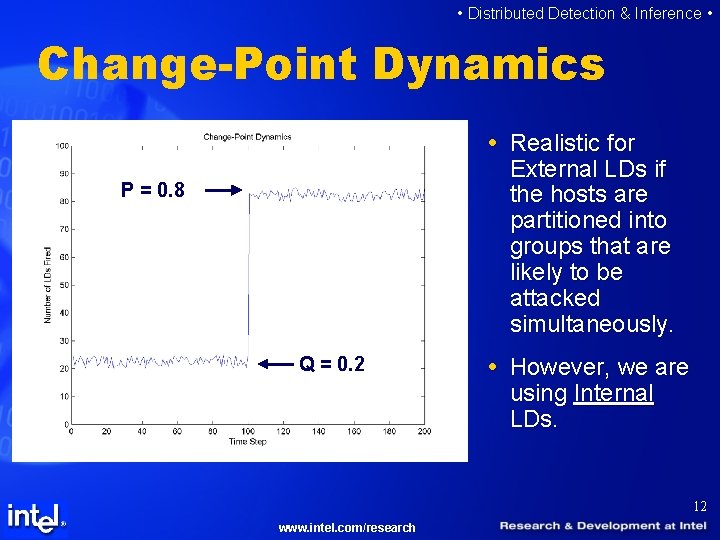

Change-Point Dynamics
- Realistic for External LDs if the hosts are partitioned into groups that are likely to be attacked simultaneously.
- Figure parameters: P = 0.8, Q = 0.2.
- However, we are using Internal LDs.
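
The change-point behavior described on this slide can be illustrated with a small simulation. This is only a sketch under stated assumptions: every detector is taken to fire independently with the false-positive rate Q before the attack and with the true-positive rate P from the attack onward, and the function name, the number of detectors, and the change step are all hypothetical choices, not values from the talk.

```python
import random

def simulate_change_point(n_detectors, n_steps, change_step, p, q, rng):
    """Count detector firings per time step under change-point dynamics.

    Assumption: before `change_step` each detector fires independently with
    probability q (false positives); from `change_step` on, with probability p.
    """
    counts = []
    for t in range(n_steps):
        rate = p if t >= change_step else q
        fired = sum(1 for _ in range(n_detectors) if rng.random() < rate)
        counts.append(fired)
    return counts

rng = random.Random(0)  # fixed seed so the run is repeatable
counts = simulate_change_point(n_detectors=100, n_steps=10, change_step=5,
                               p=0.8, q=0.2, rng=rng)
print(counts)  # firings jump from roughly 20 to roughly 80 at step 5
```

The abrupt jump in the firing count is what distinguishes this regime from epidemic growth, where the count rises gradually.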

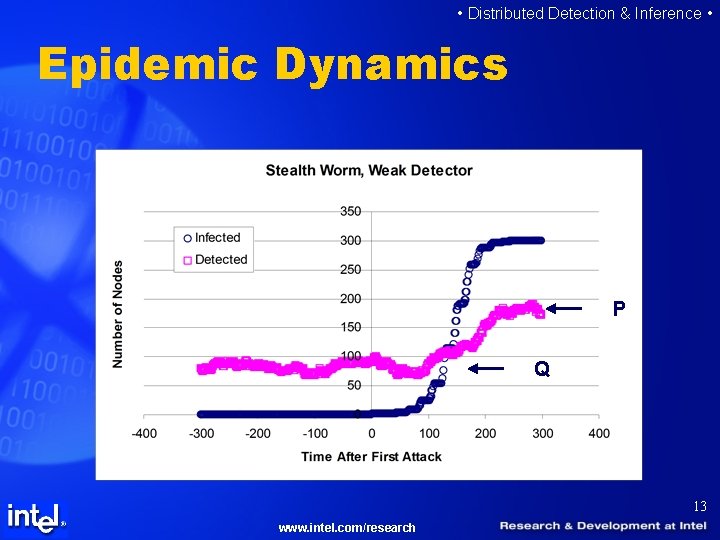

Epidemic Dynamics
[Figure labels: P, Q]

Global Models
- Use causal graphical models to capture background information.
- Based on population-based modeling techniques (Cooper et al., 2003, 2004; Neill, Moore & Cooper, 2005).

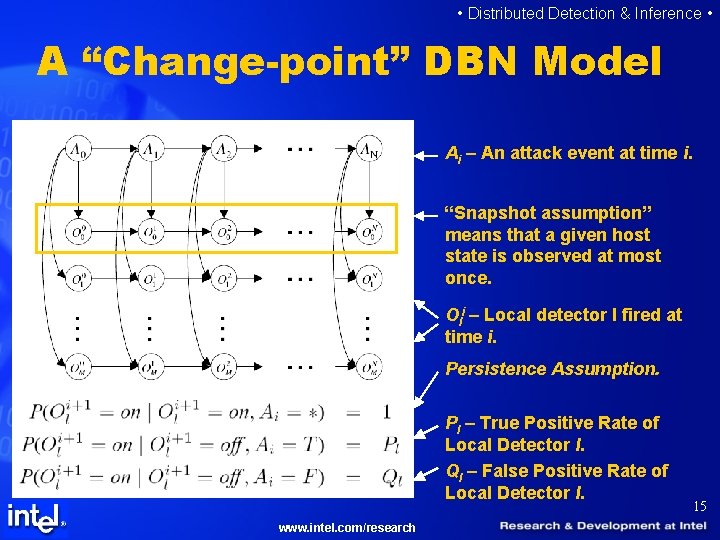

A “Change-point” DBN Model
- $A_i$: an attack event at time $i$.
- $O_i^l$: local detector $l$ fired at time $i$.
- $P_l$: true positive rate of local detector $l$.
- $Q_l$: false positive rate of local detector $l$.
- “Snapshot assumption”: a given host state is observed at most once.
- Persistence assumption.
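
To make the roles of the true and false positive rates concrete, here is a minimal sketch of scoring one snapshot of detector firings by log-posterior odds. It is not the DBN inference from the talk: it assumes detectors fire independently given the attack state, treats the whole snapshot as either "attack" or "no attack", and uses a hypothetical prior `prior_attack`.

```python
import math

def log_posterior_odds(observations, tpr, fpr, prior_attack=1e-4):
    """Log odds of 'attack' versus 'no attack' for one snapshot.

    observations: 0/1 firings, one per local detector
    tpr, fpr:     per-detector true and false positive rates (P_l and Q_l)
    prior_attack: assumed prior probability of an attack (hypothetical value)
    """
    log_odds = math.log(prior_attack) - math.log(1.0 - prior_attack)
    for fired, p, q in zip(observations, tpr, fpr):
        if fired:
            log_odds += math.log(p) - math.log(q)              # evidence for attack
        else:
            log_odds += math.log(1.0 - p) - math.log(1.0 - q)  # evidence against
    return log_odds

n = 10
score = log_posterior_odds([1] * n, [0.8] * n, [0.2] * n)
print(score > 0)  # ten corroborating weak detectors outweigh a 1e-4 prior
```

Each individual firing contributes only log(0.8/0.2), which is weak evidence on its own; the score becomes positive only through corroboration across detectors.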

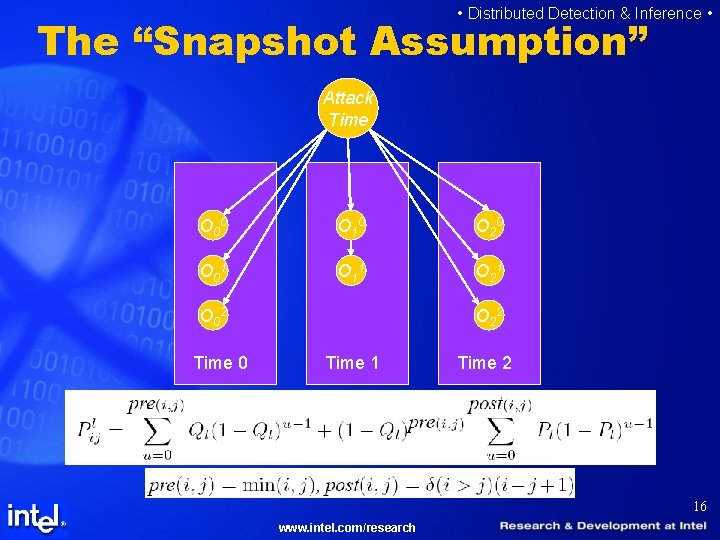

The “Snapshot Assumption”
[Figure: observation nodes O for detectors 0 to 2 at Time 0, Time 1, and Time 2, with the attack time marked.]

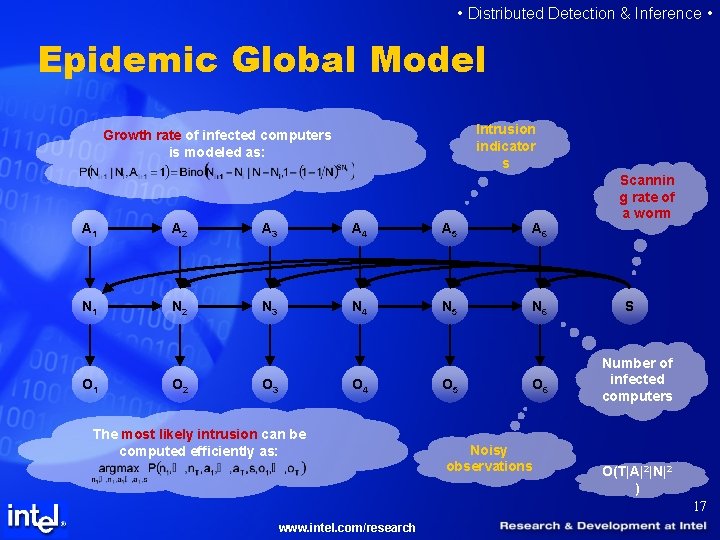

Epidemic Global Model
- Variables in the model diagram: intrusion indicators A1…A6, numbers of infected computers N1…N6, noisy observations O1…O6, and the scanning rate of a worm S.
- Growth rate of infected computers is modeled as: [equation image on slide]
- The most likely intrusion can be computed efficiently, in $O(T\,|A|^2\,|N|^2)$.
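
The growth equation itself is not recoverable from the slide text. As a stand-in for intuition only, the sketch below uses the textbook simple-epidemic (SI, logistic) growth rule, which may differ from the model in the talk. The names `scan_rate`, `density`, and `n_hosts` are assumptions: scans per infected host per step, the fraction of scanned addresses that are vulnerable, and the number of vulnerable hosts.

```python
def simulate_si_epidemic(n_hosts, scan_rate, density, steps, n0=1.0):
    """Discrete-time simple-epidemic (SI) growth of the infected count.

    Assumed rule (not taken from the slides):
        N[t+1] = N[t] + scan_rate * density * N[t] * (1 - N[t] / n_hosts)
    """
    infected = [float(n0)]
    for _ in range(steps):
        n = infected[-1]
        still_susceptible = 1.0 - n / n_hosts   # fraction not yet infected
        new_infections = scan_rate * density * n * still_susceptible
        infected.append(min(float(n_hosts), n + new_infections))
    return infected

trajectory = simulate_si_epidemic(n_hosts=500, scan_rate=3, density=1 / 1000,
                                  steps=5000)
print(round(trajectory[100], 2), round(trajectory[-1], 2))
```

Early in the outbreak the growth is close to exponential with a very small rate, which is the regime where the signal is weakest and detection is hardest.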

Global Model Comparisons
- The change-point (CP) model allows for much more tractable inference.
- The epidemic model is more realistic for our types of detectors.
- We primarily use the CP model, but we run a few experiments with the epidemic model.

Experimental Setup
- Used a simple Internal local detector that counts the number of new connections to unique destination ports.
- Included background data from 37 hosts in the Intel enterprise, collected over 5 weeks.
- Sometimes we sampled the data to scale the simulation up to 500 hosts.
- Hosts share information via 1-step evidence beaconing to m hosts chosen uniformly at random.
- Focus on slow worms.
- Use log-posterior odds to determine an outbreak.
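
The local detector and the beaconing step from this setup might look roughly like the sketch below. The threshold, the windowing of connections, and both function names are hypothetical; the slides say only that the detector counts new connections to unique destination ports and that each host beacons to m hosts uniformly at random.

```python
import random

def local_detector_fires(dest_ports, threshold):
    """Fire when a window holds more than `threshold` distinct destination ports.

    dest_ports: destination ports of new connections seen in one time window
    threshold:  assumed firing threshold (not given in the talk)
    """
    return len(set(dest_ports)) > threshold

def beacon_targets(host, all_hosts, m, rng):
    """Pick m distinct hosts other than `host`, uniformly at random."""
    others = [h for h in all_hosts if h != host]
    return rng.sample(others, min(m, len(others)))

rng = random.Random(0)
hosts = list(range(37))
if local_detector_fires([22, 80, 443, 22], threshold=2):
    print(beacon_targets(host=5, all_hosts=hosts, m=3, rng=rng))
```

Excluding the sender and sampling without replacement keeps each beacon round to exactly m distinct recipients when enough hosts exist.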

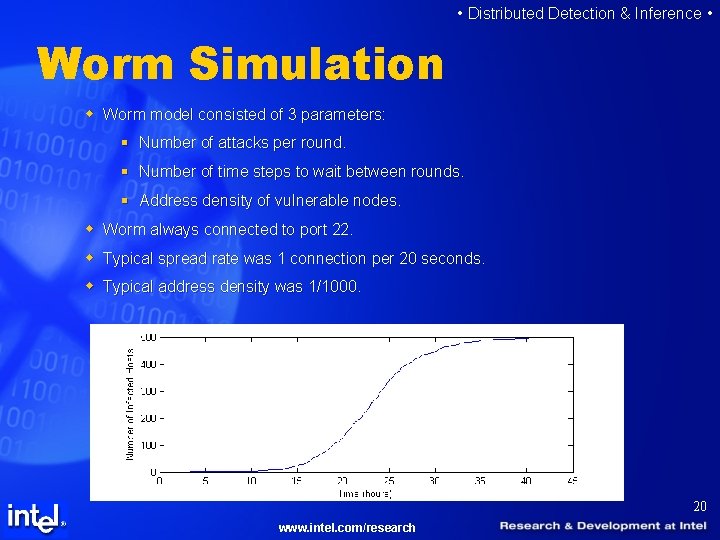

Worm Simulation
- The worm model consisted of 3 parameters:
  - Number of attacks per round.
  - Number of time steps to wait between rounds.
  - Address density of vulnerable nodes.
- The worm always connected to port 22.
- Typical spread rate was 1 connection per 20 seconds.
- Typical address density was 1/1000.
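
A worm driven by these three parameters can be sketched as follows. This is an illustrative simplification, not the simulator used in the experiments: it ignores the finite population and repeated hits on already-infected hosts, does not model the port-22 connections themselves, and all names are hypothetical.

```python
import random

def simulate_worm(n_rounds, attacks_per_round, wait_steps, density, rng):
    """Return [(time_step, infected_count), ...] for a simple scanning worm.

    attacks_per_round: connection attempts per infected host per round
    wait_steps:        time steps between consecutive rounds
    density:           probability that a scanned address is vulnerable
    """
    infected = 1
    time_step = 0
    history = [(time_step, infected)]
    for _ in range(n_rounds):
        time_step += wait_steps
        attempts = infected * attacks_per_round
        hits = sum(1 for _ in range(attempts) if rng.random() < density)
        infected += hits
        history.append((time_step, infected))
    return history

rng = random.Random(0)
# One attempt per round at the slide's typical address density of 1/1000.
history = simulate_worm(n_rounds=2000, attacks_per_round=1, wait_steps=1,
                        density=1 / 1000, rng=rng)
print(history[-1])
```

With one attempt per round and a density of 1/1000, most rounds produce no new infection at all, which is what makes the worm "slow".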

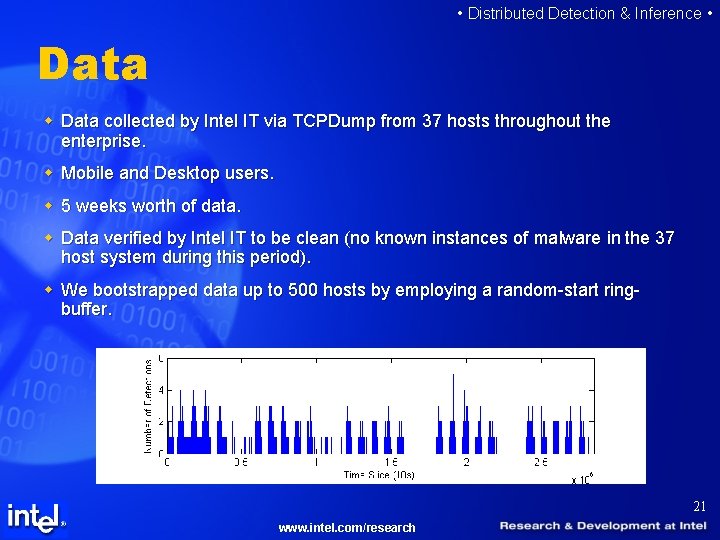

Data
- Data collected by Intel IT via TCPDump from 37 hosts throughout the enterprise.
- Mobile and desktop users.
- 5 weeks’ worth of data.
- Data verified by Intel IT to be clean (no known instances of malware in the 37-host system during this period).
- We bootstrapped the data up to 500 hosts by employing a random-start ring buffer.
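
One plausible reading of "random-start ring buffer" is sketched below: each synthetic host replays a randomly chosen real trace, starting at a random offset and wrapping around at the end. This interpretation and the function names are assumptions; the talk does not spell out the procedure.

```python
import random

def ring_buffer_trace(trace, start, length):
    """Read `length` items from `trace` circularly, beginning at index `start`."""
    n = len(trace)
    return [trace[(start + i) % n] for i in range(length)]

def bootstrap_hosts(traces, n_synthetic, length, rng):
    """Build synthetic host traces from real ones via random-start replay."""
    synthetic = []
    for _ in range(n_synthetic):
        trace = rng.choice(traces)           # pick one real host's trace
        start = rng.randrange(len(trace))    # random starting offset
        synthetic.append(ring_buffer_trace(trace, start, length))
    return synthetic

print(ring_buffer_trace([1, 2, 3], start=2, length=5))  # [3, 1, 2, 3, 1]

rng = random.Random(0)
real_traces = [[h * 10 + t for t in range(8)] for h in range(37)]  # toy data
synthetic = bootstrap_hosts(real_traces, n_synthetic=500, length=8, rng=rng)
print(len(synthetic))  # 500
```

Random offsets keep synthetic hosts that share a source trace from being synchronized, which would otherwise create artificial correlated firings.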

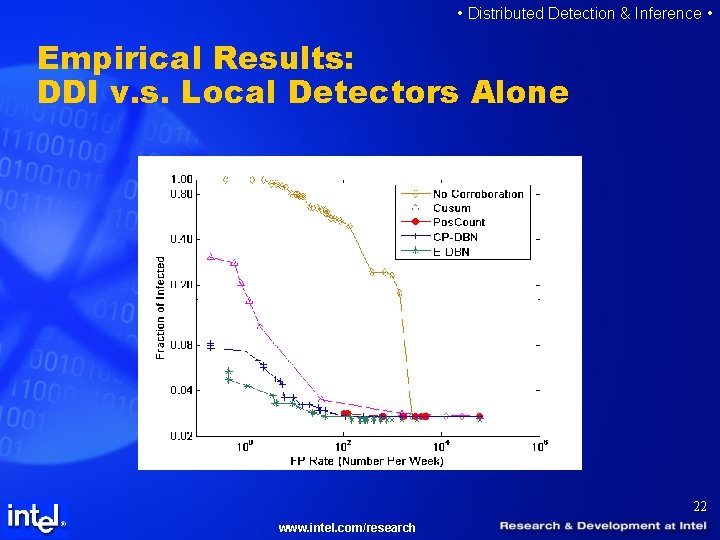

Empirical Results: DDI vs. Local Detectors Alone

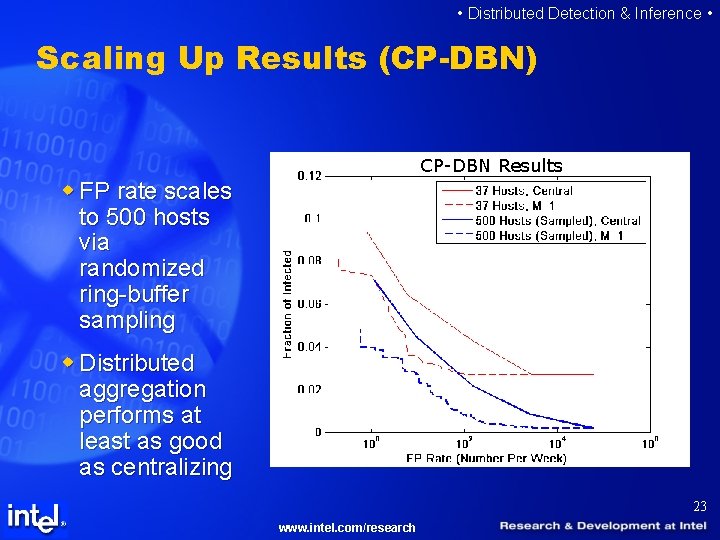

Scaling Up Results (CP-DBN)
- The FP rate scales to 500 hosts via randomized ring-buffer sampling.
- Distributed aggregation performs at least as well as centralized aggregation.

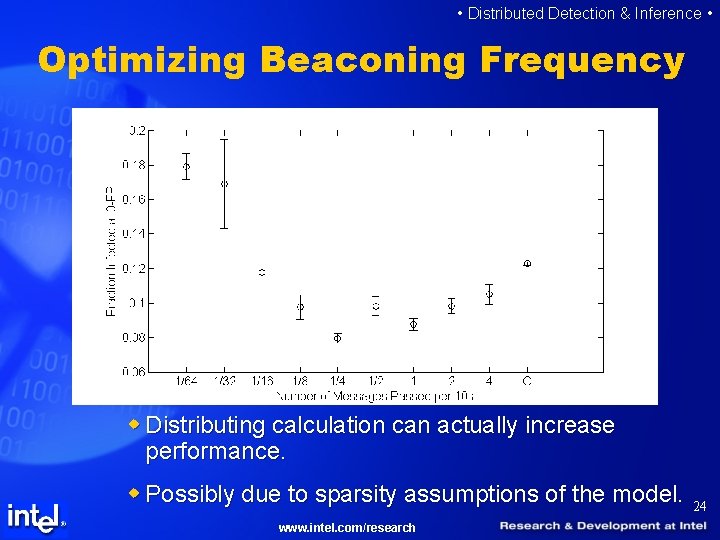

Optimizing Beaconing Frequency
- Distributing the calculation can actually increase performance.
- Possibly due to sparsity assumptions of the model.

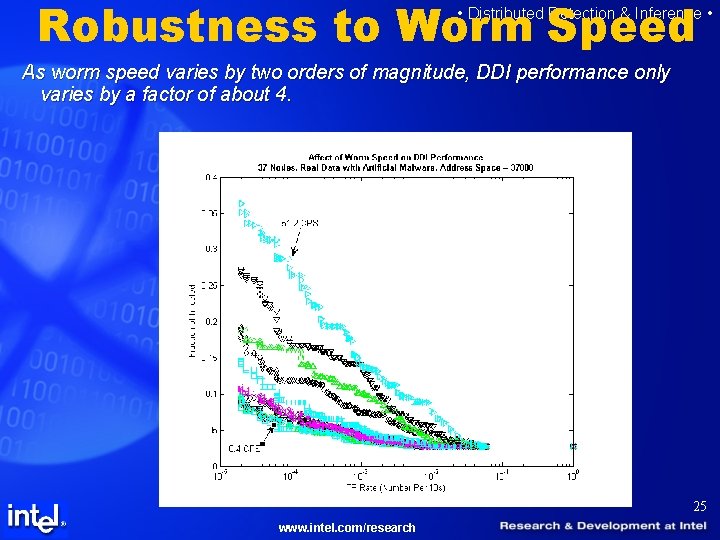

Robustness to Worm Speed
- As worm speed varies by two orders of magnitude, DDI performance varies only by a factor of about 4.

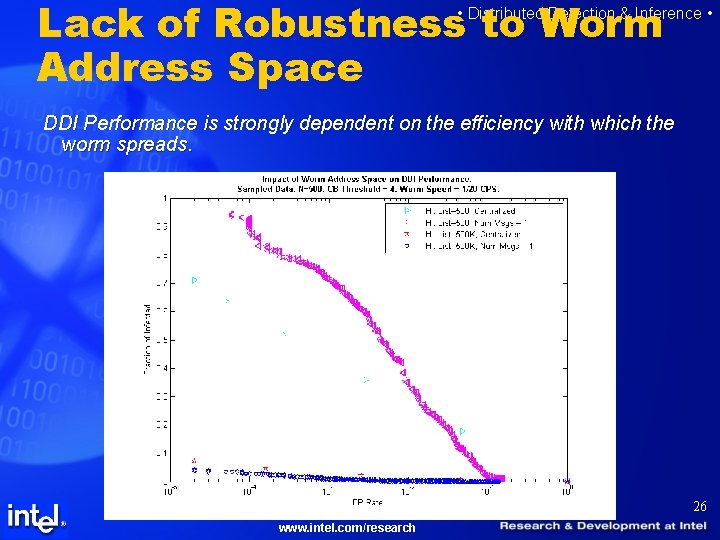

Lack of Robustness to Worm Address Space
- DDI performance is strongly dependent on the efficiency with which the worm spreads.

Some Notes on Efficient Message Passing

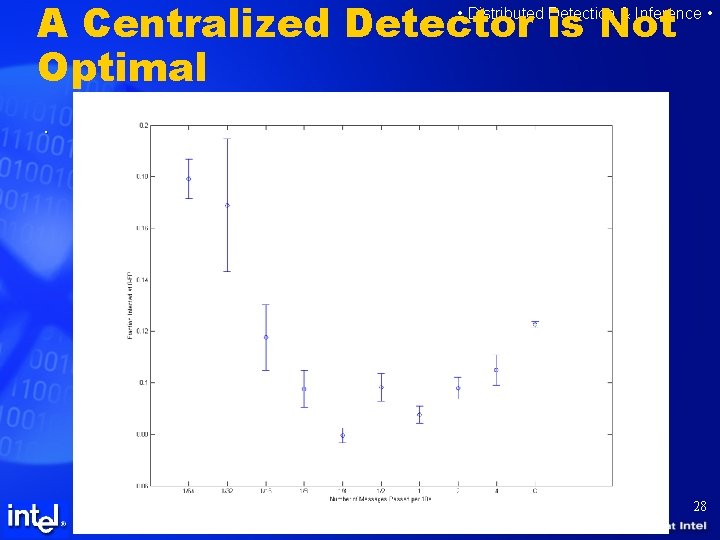

A Centralized Detector is Not Optimal

Importance Sampling for Optimal Bandwidth Usage
- Most observations in the early stages of an attack are going to be negative.
- We want to increase sampling efficiency.
- Importance sampling provides the solution.
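
The slide does not say how importance sampling is applied, so the following is only one possible reading: a host transmits the rare positive observations with high probability and the abundant negatives with low probability, attaching an inverse-probability weight to each so the receiver can still form unbiased counts. The function names and both send probabilities are hypothetical.

```python
import random

def sample_for_beacon(observations, send_prob_pos, send_prob_neg, rng):
    """Choose which observations to transmit; return (observation, weight) pairs.

    Each observation is sent with a probability that depends on its value and
    carries weight 1 / send probability, which keeps weighted counts unbiased.
    """
    sent = []
    for obs in observations:
        prob = send_prob_pos if obs else send_prob_neg
        if rng.random() < prob:
            sent.append((obs, 1.0 / prob))
    return sent

def estimate_counts(sent):
    """Weighted estimates of the (positive, negative) observation counts."""
    positives = sum(w for obs, w in sent if obs)
    negatives = sum(w for obs, w in sent if not obs)
    return positives, negatives

rng = random.Random(42)
observations = [1] * 5 + [0] * 10000          # early attack: almost all negative
sent = sample_for_beacon(observations, send_prob_pos=1.0, send_prob_neg=0.1,
                         rng=rng)
print(len(sent), estimate_counts(sent))
```

In this toy run about a tenth of the messages are sent, every positive is preserved, and the negative count is still estimated without bias.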

Future Research Challenges

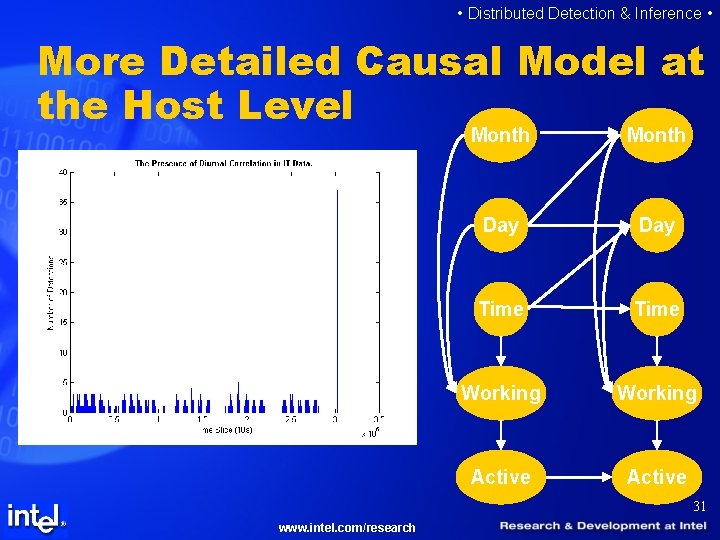

More Detailed Causal Model at the Host Level
[Figure: causal model with nodes Month, Day, Time, Working, Active]

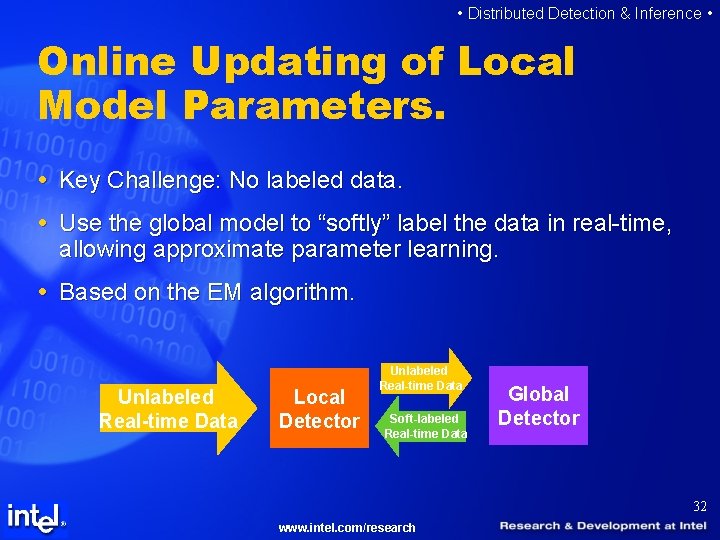

Online Updating of Local Model Parameters
- Key challenge: no labeled data.
- Use the global model to “softly” label the data in real time, allowing approximate parameter learning.
- Based on the EM algorithm.
- [Diagram labels: Unlabeled Real-time Data, Local Detector, Soft-labeled Real-time Data, Global Detector]
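
As a rough illustration of soft labeling, the sketch below shows an EM-style M-step for one local detector. It assumes the global detector supplies a posterior probability of attack for each time step (the E-step), and re-estimates the detector's true and false positive rates as posterior-weighted firing frequencies. The function name and the fallback behavior are assumptions, not details from the talk.

```python
def m_step(observations, attack_posteriors, prev_tpr, prev_fpr):
    """Re-estimate one detector's (TPR, FPR) from softly labeled data.

    observations:      0/1 firings of the detector, one per time step
    attack_posteriors: global detector's P(attack) per time step (soft labels)
    prev_tpr/prev_fpr: values kept when a class has zero total weight
    """
    pairs = list(zip(observations, attack_posteriors))
    weight_attack = sum(r for _, r in pairs)
    weight_clean = sum(1.0 - r for _, r in pairs)
    fired_attack = sum(r * o for o, r in pairs)
    fired_clean = sum((1.0 - r) * o for o, r in pairs)
    tpr = fired_attack / weight_attack if weight_attack > 0 else prev_tpr
    fpr = fired_clean / weight_clean if weight_clean > 0 else prev_fpr
    return tpr, fpr

print(m_step([1, 1, 0, 0], [1.0, 1.0, 0.0, 0.0], 0.5, 0.5))  # (1.0, 0.0)
```

With hard 0/1 posteriors this reduces to ordinary supervised frequency counting; fractional posteriors split each observation's weight between the two classes.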

More Advanced Ensembles of Global Detectors
- In the present method, each host has its own global detector.
- Taking the max over these detectors yields an ensemble classifier.
- If intentional diversity can be added to the global detectors, more advanced ensemble methods can in principle be used, such as Bayesian model averaging (BMA), boosting, or bagging.
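
The max-based ensemble can be written in a few lines. This sketch assumes each host's global detector outputs a scalar score such as log-posterior odds and that a shared threshold is used; both the function name and the threshold are hypothetical.

```python
def ensemble_alarm(scores, threshold):
    """Raise a network-wide alarm if any host's global detector exceeds threshold.

    scores: one score per host's global detector (for example, log-posterior odds)
    """
    if not scores:
        return False
    return max(scores) > threshold

print(ensemble_alarm([-3.0, 0.5, -1.0], threshold=0.0))  # True
```

Taking the max is equivalent to a logical OR over the per-host alarms, so the ensemble is at least as sensitive as any single detector, at the cost of accumulating their false positives.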

Questions?
