Crash Course on Machine Learning, Part IV

- Slides: 54

Several slides from Derek Hoiem and Ben Taskar

What you need to know

- Dual SVM formulation
  - How it’s derived
- The kernel trick
- Derive polynomial kernel
- Common kernels
- Kernelized logistic regression
- SVMs vs kernel regression
- SVMs vs logistic regression
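As a small illustration of the kernel trick for a polynomial kernel, the sketch below checks numerically that the homogeneous degree-2 kernel (u·v)² equals a dot product in an explicit quadratic feature space. The helper names `poly2_features` and `poly2_kernel` are hypothetical and chosen only for this example.

```python
from itertools import product

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def poly2_features(x):
    """Explicit degree-2 feature map: all ordered products x_i * x_j."""
    return [a * b for a, b in product(x, repeat=2)]

def poly2_kernel(u, v):
    """Homogeneous degree-2 polynomial kernel, computed without the feature map."""
    return dot(u, v) ** 2

u = [1.0, 2.0, 3.0]
v = [0.5, -1.0, 2.0]
explicit = dot(poly2_features(u), poly2_features(v))  # 9-dimensional dot product
implicit = poly2_kernel(u, v)                         # 3-dimensional dot product, squared
```

The explicit map has d² coordinates while the kernel only needs a d-dimensional dot product, which is the saving the kernel trick relies on.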

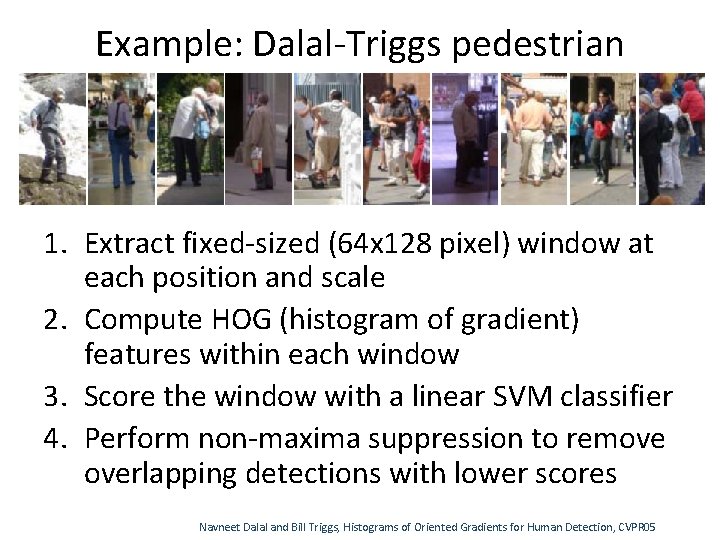

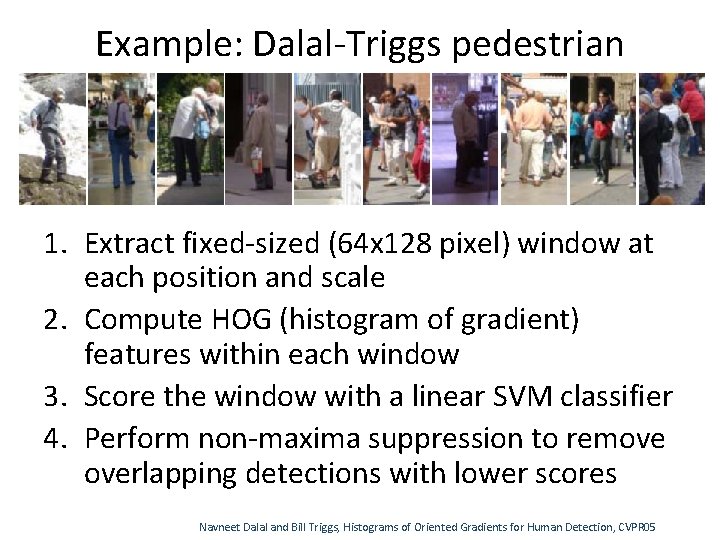

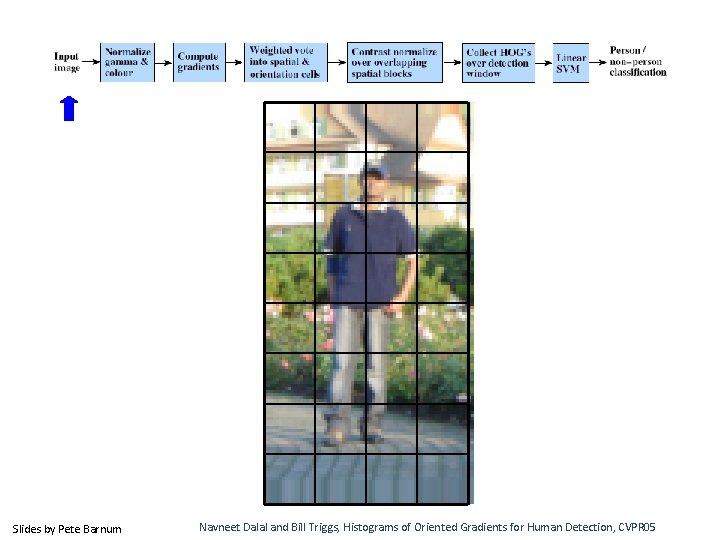

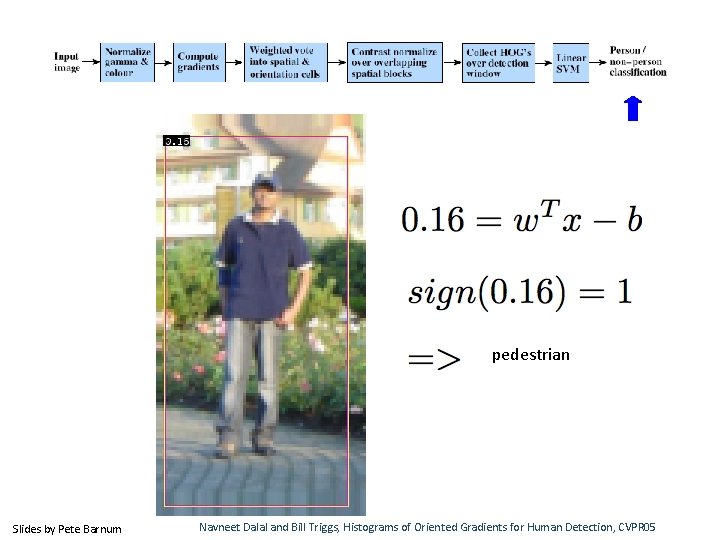

Example: Dalal-Triggs pedestrian detector

1. Extract fixed-sized (64 x 128 pixel) window at each position and scale
2. Compute HOG (histogram of gradient) features within each window
3. Score the window with a linear SVM classifier
4. Perform non-maxima suppression to remove overlapping detections with lower scores

Navneet Dalal and Bill Triggs, Histograms of Oriented Gradients for Human Detection, CVPR 05
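The last step of the pipeline can be sketched as a greedy, overlap-based non-maxima suppression. This is one common variant and an assumption here: the slide does not say which suppression procedure is used, and the 0.5 overlap threshold and the box format `(x1, y1, x2, y2)` are illustrative choices.

```python
def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def non_max_suppression(boxes, scores, overlap_thresh=0.5):
    """Greedy NMS: visit boxes by decreasing score, drop those overlapping a kept box."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= overlap_thresh for j in keep):
            keep.append(i)
    return keep

# Two heavily overlapping 64 x 128 windows and one separate window.
boxes = [(10, 10, 74, 138), (12, 14, 76, 142), (200, 50, 264, 178)]
scores = [0.9, 0.6, 0.8]
kept = non_max_suppression(boxes, scores)
```

Here the second window overlaps the first by far more than the threshold and has a lower score, so only the first and third windows are kept.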

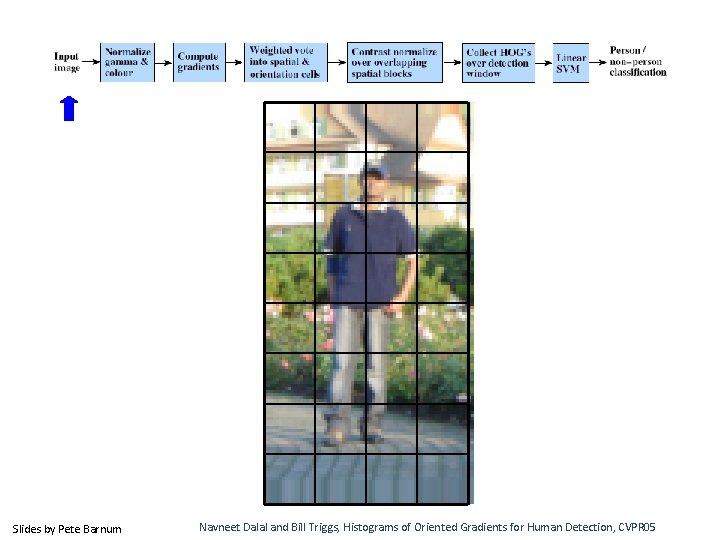

Slides by Pete Barnum

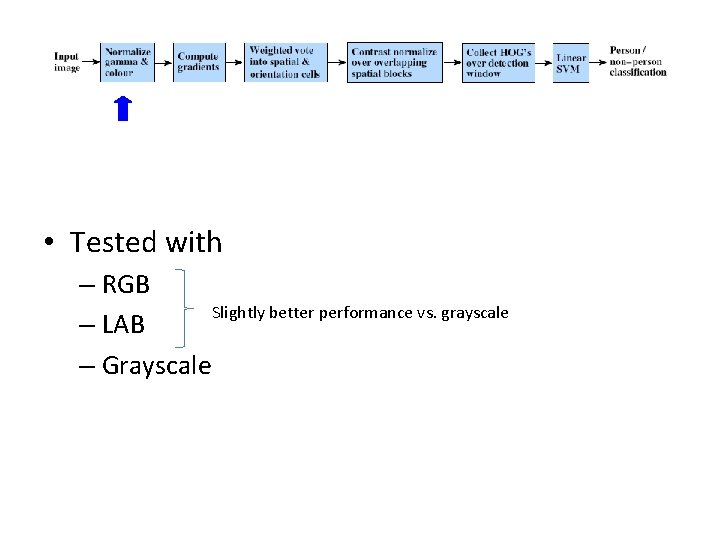

- Tested with
  - RGB
  - LAB
  - Grayscale
- RGB and LAB: slightly better performance vs. grayscale

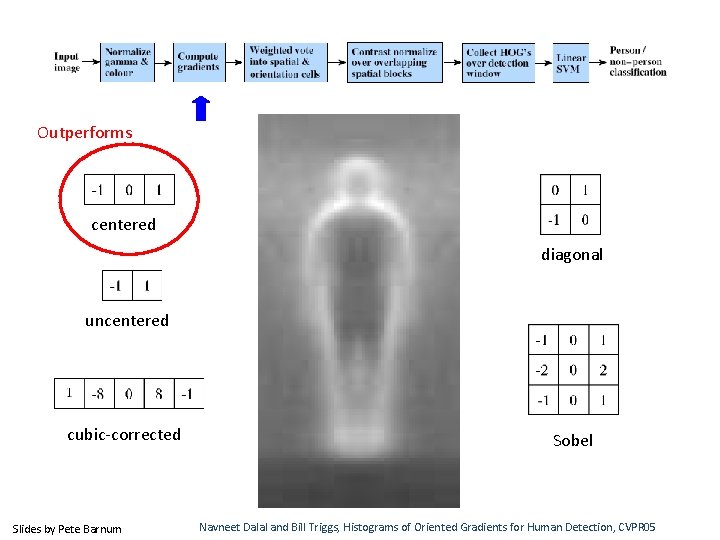

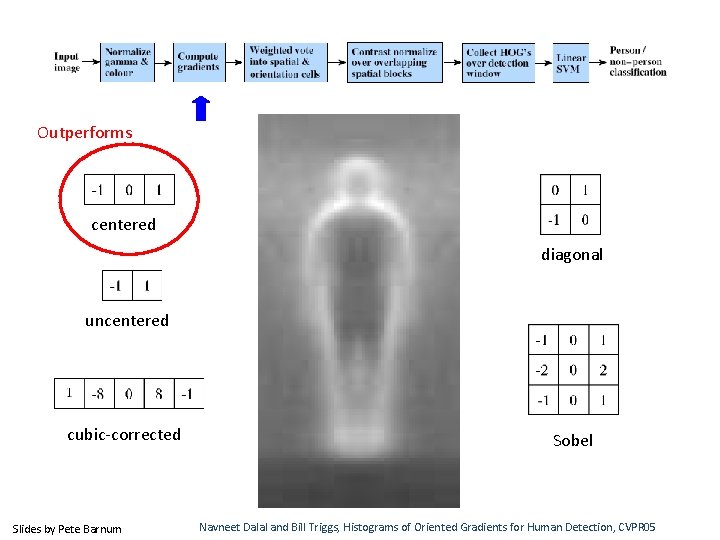

The centered gradient mask outperforms the uncentered, cubic-corrected, diagonal, and Sobel masks.

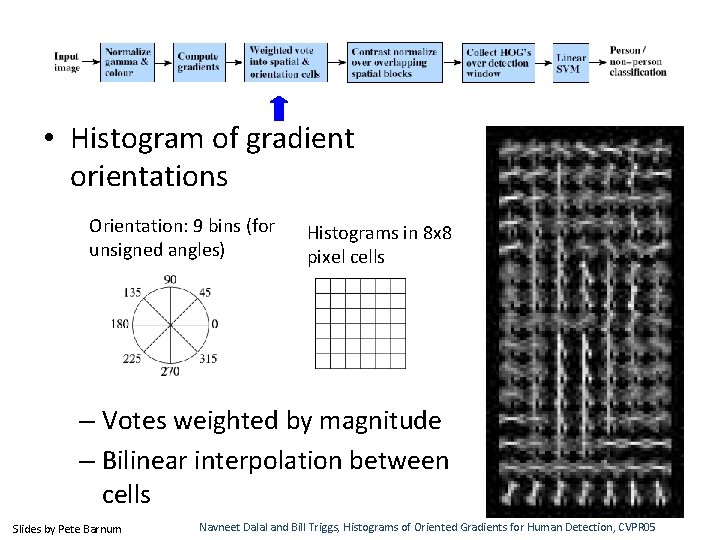

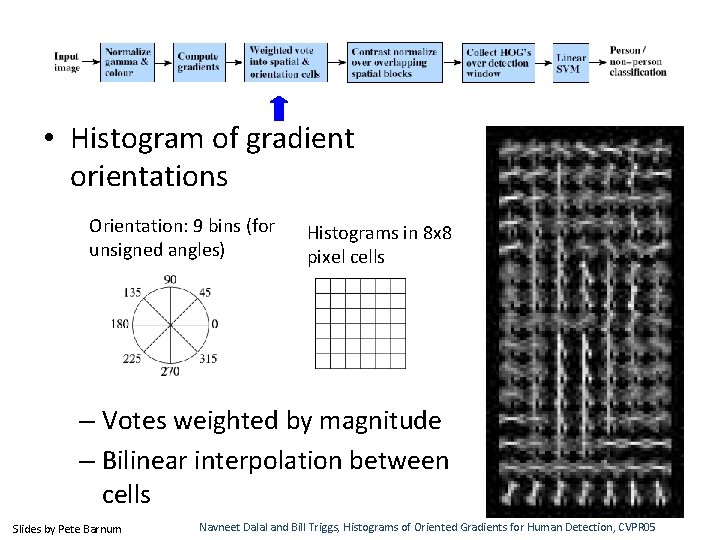

- Histogram of gradient orientations
  - Orientation: 9 bins (for unsigned angles)
  - Histograms in 8 x 8 pixel cells
  - Votes weighted by magnitude
  - Bilinear interpolation between cells
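A minimal sketch of the per-cell histogram, under simplifying assumptions: gradients come from a centered [-1, 0, 1] mask, border pixels of the cell are skipped, and each pixel votes into a single bin (the bilinear interpolation between cells is left out). The function name `cell_histogram` is hypothetical.

```python
import math

def cell_histogram(cell, n_bins=9):
    """Magnitude-weighted histogram of unsigned gradient orientations for one cell.

    `cell` is a list of rows of grayscale values. Border pixels are skipped
    so that the centered mask never reads outside the cell.
    """
    hist = [0.0] * n_bins
    bin_width = 180.0 / n_bins
    rows, cols = len(cell), len(cell[0])
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            gx = cell[r][c + 1] - cell[r][c - 1]
            gy = cell[r + 1][c] - cell[r - 1][c]
            magnitude = math.hypot(gx, gy)
            angle = math.degrees(math.atan2(gy, gx)) % 180.0  # unsigned angle in [0, 180)
            hist[int(angle // bin_width) % n_bins] += magnitude
    return hist

# An 8 x 8 cell with a vertical edge: intensity jumps from 0 to 1 at column 4.
cell = [[0.0] * 4 + [1.0] * 4 for _ in range(8)]
hist = cell_histogram(cell)
```

For this cell every nonzero gradient is horizontal, so all of the vote mass lands in the first orientation bin.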

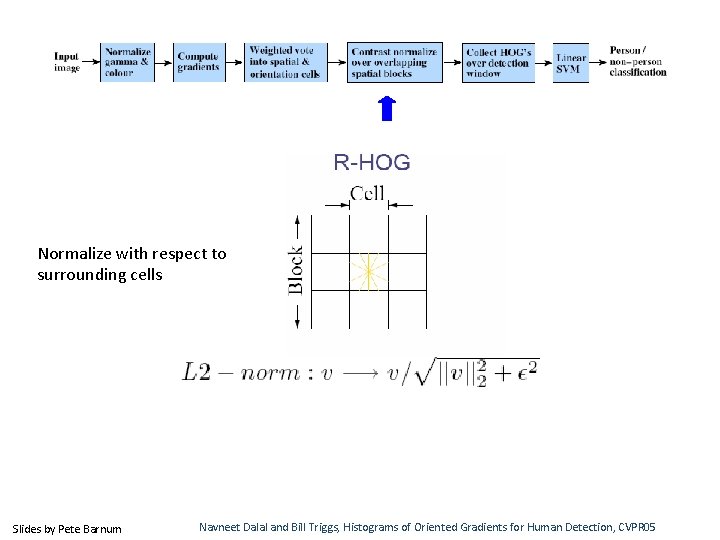

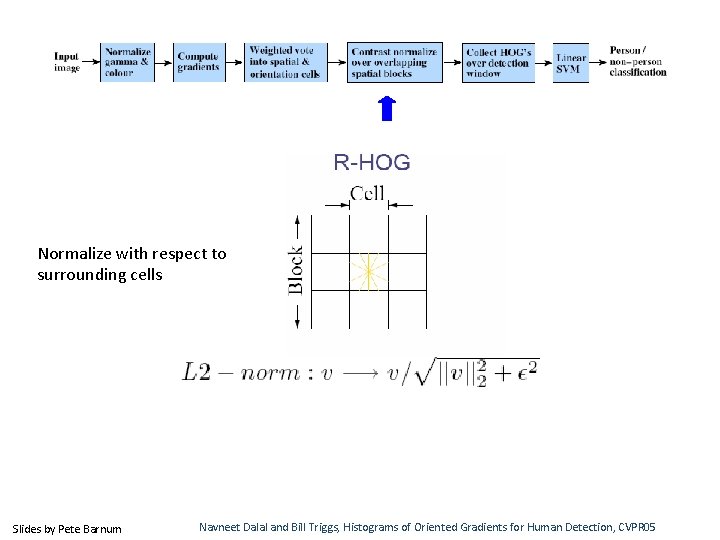

Normalize with respect to surrounding cells

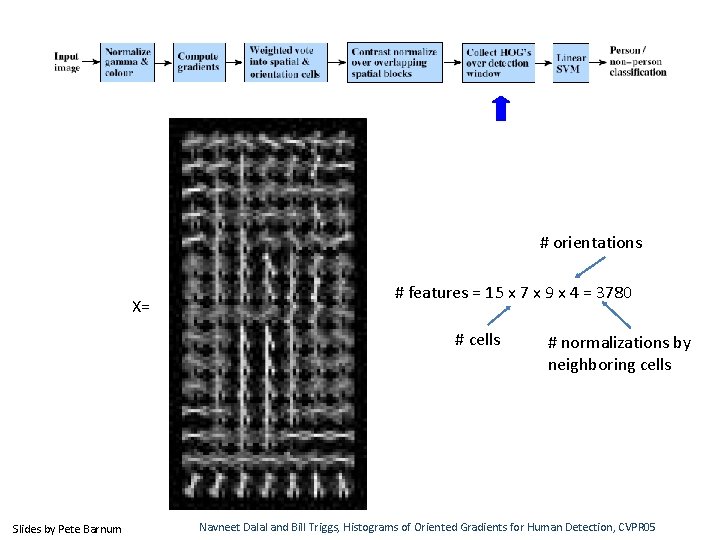

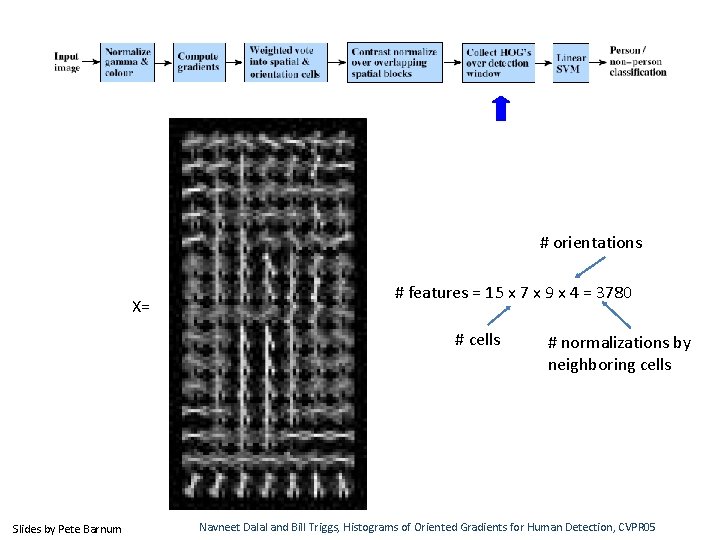

Feature vector X: # features = 15 x 7 x 9 x 4 = 3780, where 15 x 7 = # cells, 9 = # orientations, and 4 = # normalizations by neighboring cells.
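The count can be reproduced with a few lines of arithmetic. The sketch assumes 2 x 2-cell normalization blocks that advance one cell at a time, which is where the 15 x 7 grid of positions and the factor of 4 come from in a 64 x 128 window; the block size and stride are not stated on the slide, and `hog_feature_length` is a hypothetical helper.

```python
def hog_feature_length(window_w=64, window_h=128, cell=8, block=2, bins=9):
    """Descriptor length for overlapping blocks that advance one cell at a time."""
    cells_x, cells_y = window_w // cell, window_h // cell          # 8 x 16 cells
    blocks_x, blocks_y = cells_x - block + 1, cells_y - block + 1  # 7 x 15 block positions
    return blocks_x * blocks_y * bins * block * block

n_features = hog_feature_length()
```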

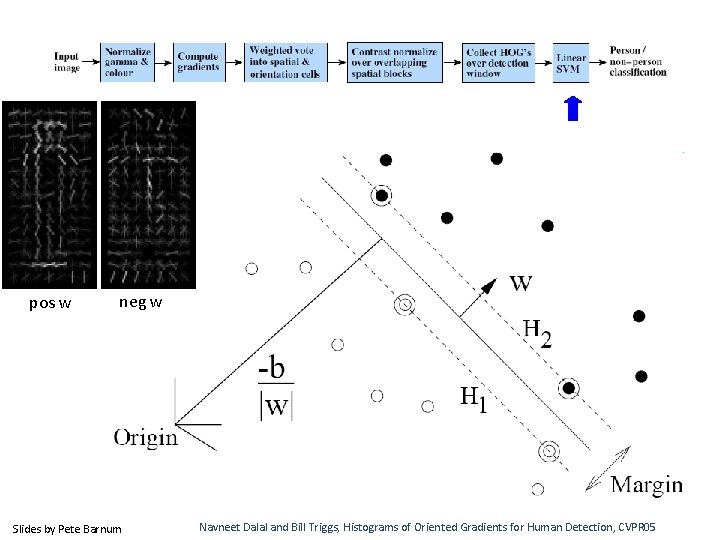

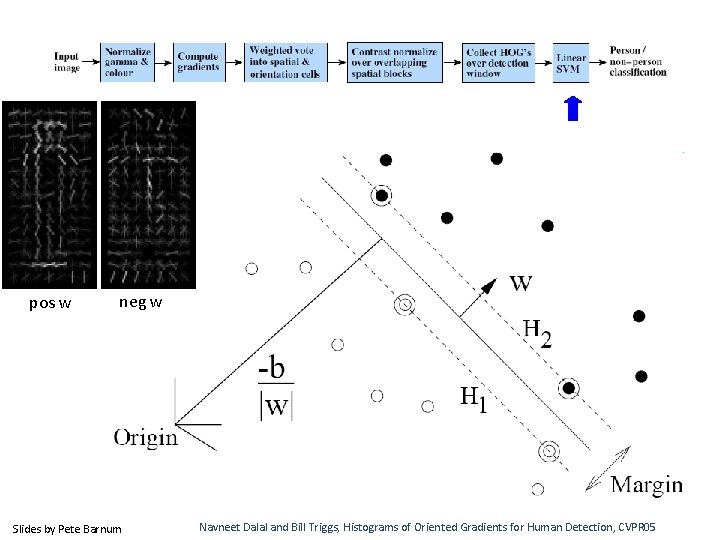

Learned SVM weights: positive weights (pos w) and negative weights (neg w)

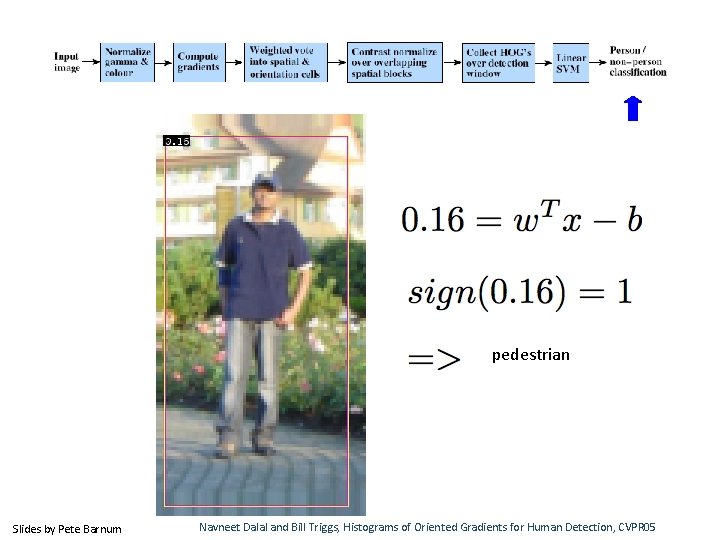

pedestrian

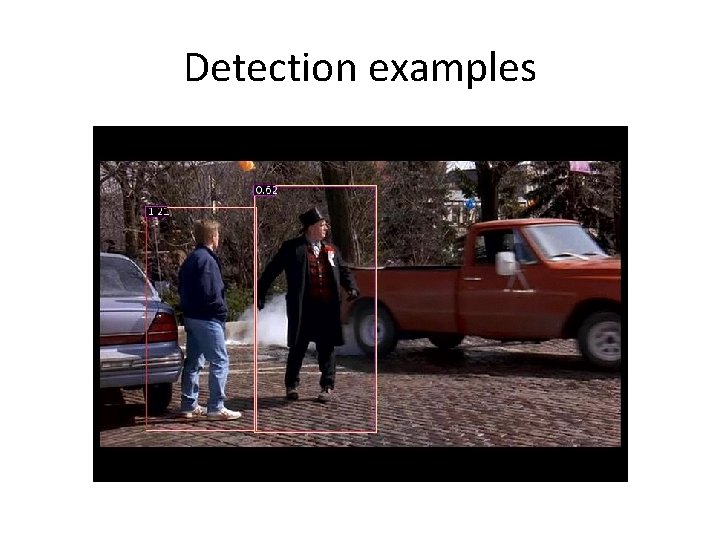

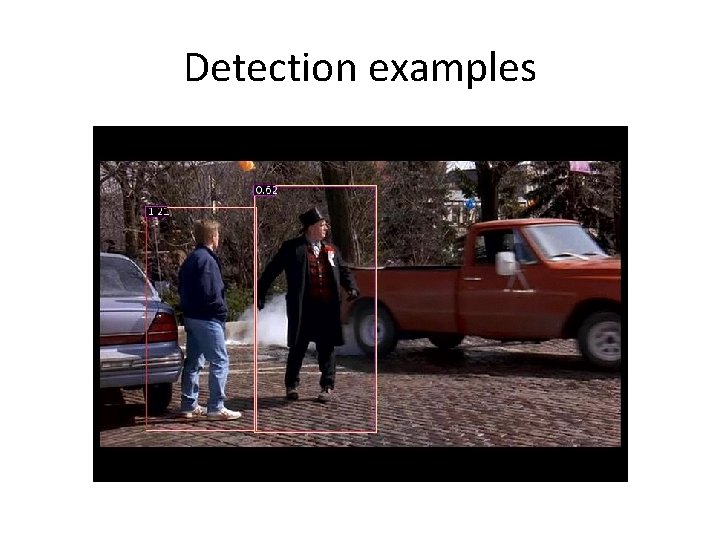

Detection examples

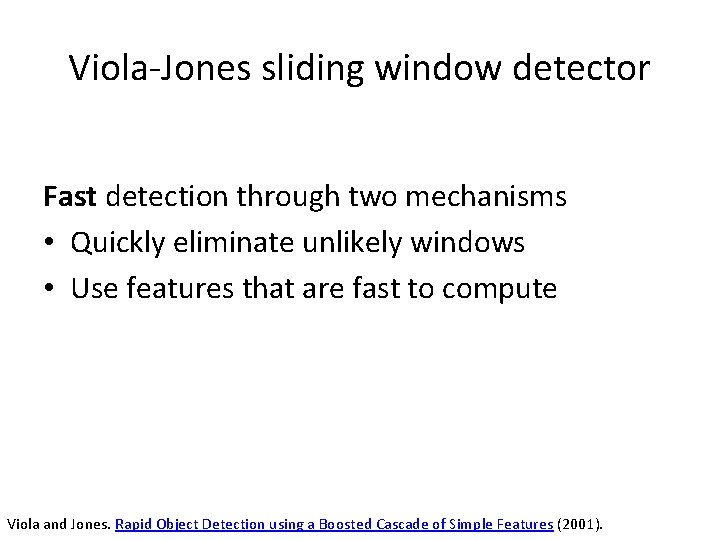

Viola-Jones sliding window detector

Fast detection through two mechanisms

- Quickly eliminate unlikely windows
- Use features that are fast to compute

Viola and Jones. Rapid Object Detection using a Boosted Cascade of Simple Features (2001).

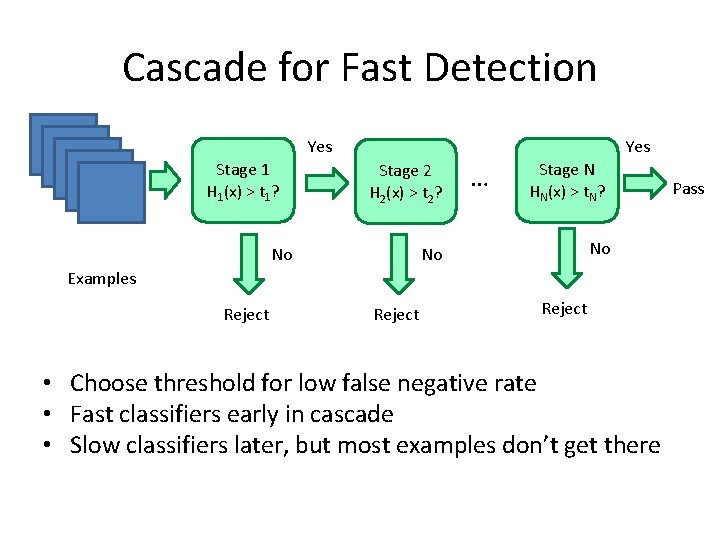

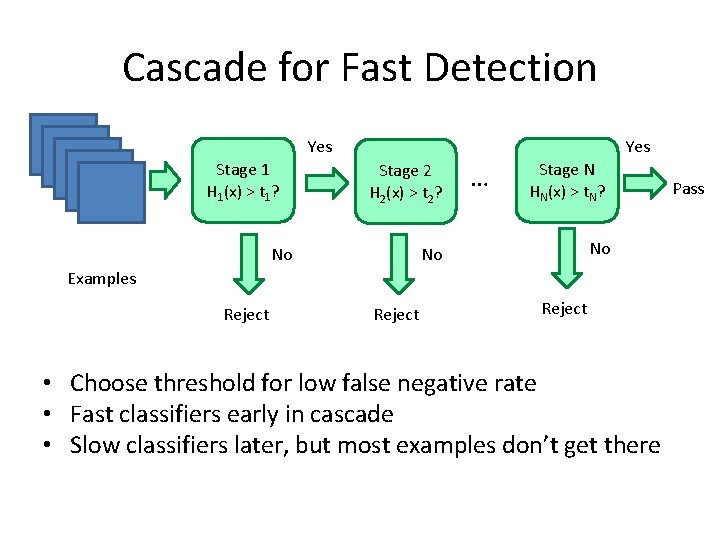

Cascade for Fast Detection

Examples → Stage 1: H1(x) > t1? → (Yes) Stage 2: H2(x) > t2? → (Yes) … → Stage N: HN(x) > tN? → (Yes) Pass. A "No" at any stage → Reject.

- Choose threshold for low false negative rate
- Fast classifiers early in cascade
- Slow classifiers later, but most examples don’t get there
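The control flow of the cascade can be sketched as an early-exit loop. The stage classifiers and thresholds below are toy stand-ins, not the detector's actual stages, and `cascade_classify` is a hypothetical name.

```python
def cascade_classify(x, stages):
    """Run `x` through (score_fn, threshold) stages; reject at the first failure."""
    for stage_index, (score_fn, threshold) in enumerate(stages, start=1):
        if score_fn(x) <= threshold:
            return False, stage_index  # rejected at this stage
    return True, len(stages)           # passed every stage

# Toy stages over a 2-element feature vector: cheap test first, costlier test later.
stages = [
    (lambda x: x[0], 0.2),
    (lambda x: x[0] + x[1], 1.0),
]
result_easy_negative = cascade_classify([0.1, 5.0], stages)
result_positive = cascade_classify([0.6, 0.7], stages)
```

The easy negative is rejected by the first stage, so the second stage is never evaluated for it; only windows that survive every stage are reported as detections.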

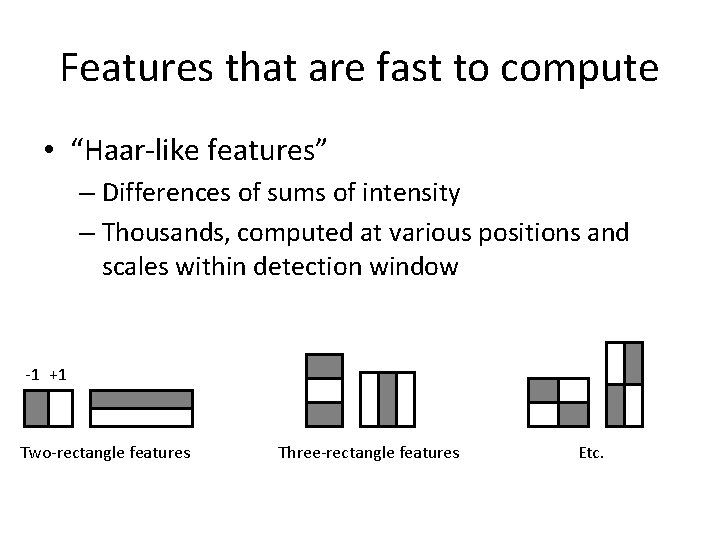

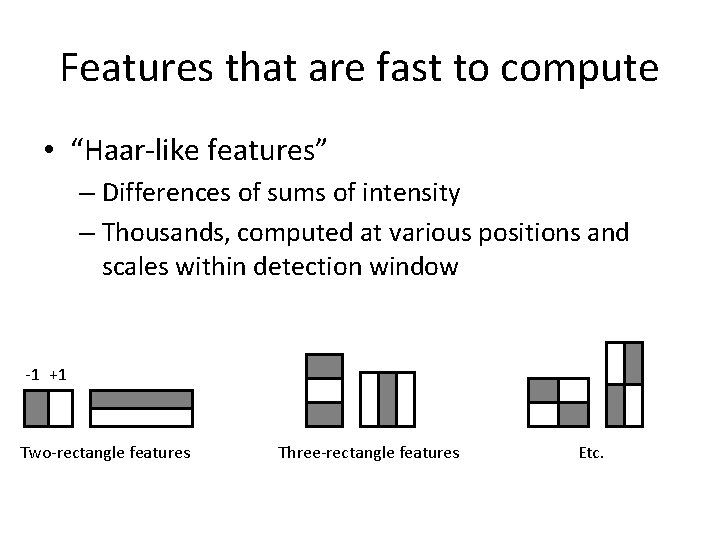

Features that are fast to compute

- “Haar-like features”
  - Differences of sums of intensity
  - Thousands, computed at various positions and scales within detection window

(Figure: two-rectangle features, three-rectangle features, etc., with regions weighted -1 and +1)
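These rectangle sums are fast because they can be read off an integral image, the technique used in the cited Viola-Jones paper (it is not spelled out on this slide). The sketch below builds an integral image and evaluates one two-rectangle feature; the function names and the left/right layout of the feature are illustrative assumptions.

```python
def integral_image(img):
    """ii[r][c] = sum of img over rows < r and columns < c (zero-padded)."""
    rows, cols = len(img), len(img[0])
    ii = [[0] * (cols + 1) for _ in range(rows + 1)]
    for r in range(rows):
        for c in range(cols):
            ii[r + 1][c + 1] = img[r][c] + ii[r][c + 1] + ii[r + 1][c] - ii[r][c]
    return ii

def rect_sum(ii, top, left, height, width):
    """Sum of pixels in a rectangle using four table lookups."""
    bottom, right = top + height, left + width
    return ii[bottom][right] - ii[top][right] - ii[bottom][left] + ii[top][left]

def two_rect_feature(ii, top, left, height, width):
    """Right half (+1) minus left half (-1) of a rectangle with even width."""
    half = width // 2
    return (rect_sum(ii, top, left + half, height, half)
            - rect_sum(ii, top, left, height, half))

img = [[1, 1, 5, 5],
       [1, 1, 5, 5],
       [1, 1, 5, 5],
       [1, 1, 5, 5]]
ii = integral_image(img)
feature = two_rect_feature(ii, 0, 0, 4, 4)
```

Once the table is built, each rectangle sum costs four lookups regardless of the rectangle's size, which is what makes evaluating thousands of features per window practical.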

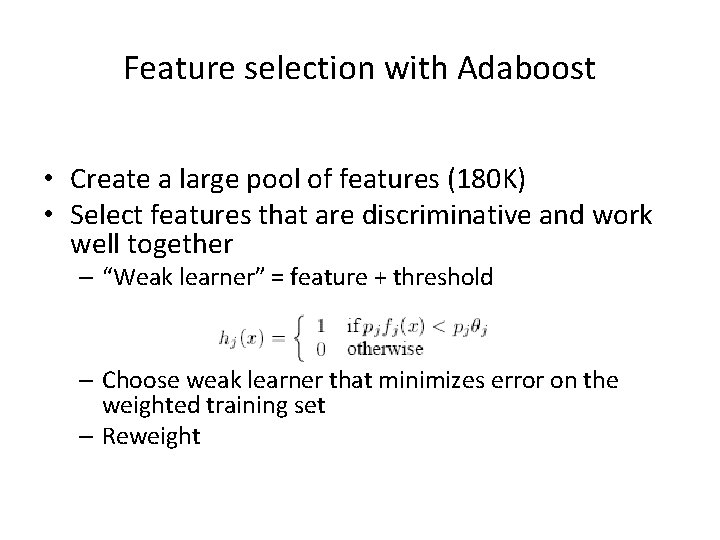

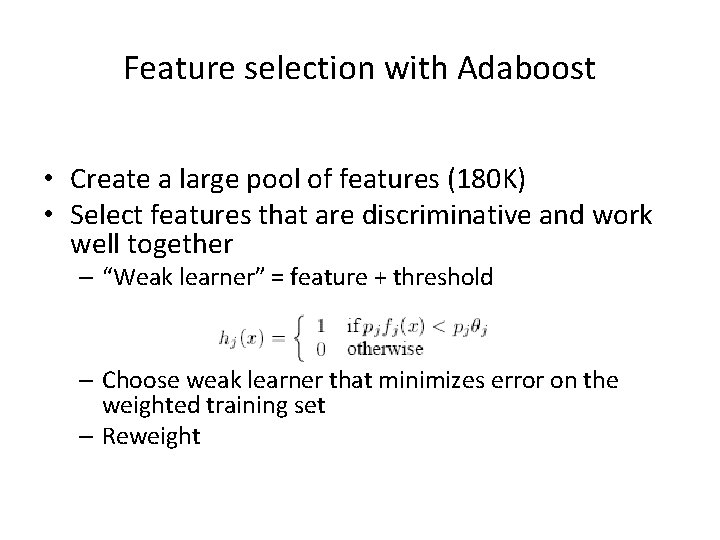

Feature selection with AdaBoost

- Create a large pool of features (180K)
- Select features that are discriminative and work well together
  - “Weak learner” = feature + threshold
  - Choose weak learner that minimizes error on the weighted training set
  - Reweight the training examples
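One round of this selection loop can be sketched as follows. The sketch uses the standard discrete AdaBoost update with labels in {-1, +1}, which may differ in detail from the variant in the cited paper; the data, the exhaustive threshold search, and the function names are illustrative assumptions.

```python
import math

def best_stump(feature_values, labels, weights):
    """Return (error, feature index, threshold, polarity) with lowest weighted error."""
    best = None
    n_features = len(feature_values[0])
    for f in range(n_features):
        for threshold in sorted({row[f] for row in feature_values}):
            for polarity in (1, -1):
                preds = [polarity if row[f] >= threshold else -polarity
                         for row in feature_values]
                err = sum(w for w, p, y in zip(weights, preds, labels) if p != y)
                if best is None or err < best[0]:
                    best = (err, f, threshold, polarity)
    return best

def adaboost_round(feature_values, labels, weights):
    """One round: select a weak learner, then reweight the training examples."""
    err, f, threshold, polarity = best_stump(feature_values, labels, weights)
    err = min(max(err, 1e-10), 1 - 1e-10)  # avoid log(0) on separable data
    alpha = 0.5 * math.log((1 - err) / err)
    preds = [polarity if row[f] >= threshold else -polarity for row in feature_values]
    new_w = [w * math.exp(-alpha * y * p) for w, y, p in zip(weights, labels, preds)]
    total = sum(new_w)
    return (f, threshold, polarity, alpha), [w / total for w in new_w]

# Five examples, two candidate features; labels are +1 (face) / -1 (non-face).
X = [[0.1, 0.1], [0.3, 0.5], [0.5, 0.3], [0.7, 0.9], [0.9, 0.7]]
y = [-1, -1, 1, -1, 1]
w0 = [0.2] * 5
learner, w1 = adaboost_round(X, y, w0)
```

In this toy run the first feature with threshold 0.5 is selected, and the single example it misclassifies ends up carrying half of the total weight, so the next round is pushed toward features that handle that example.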

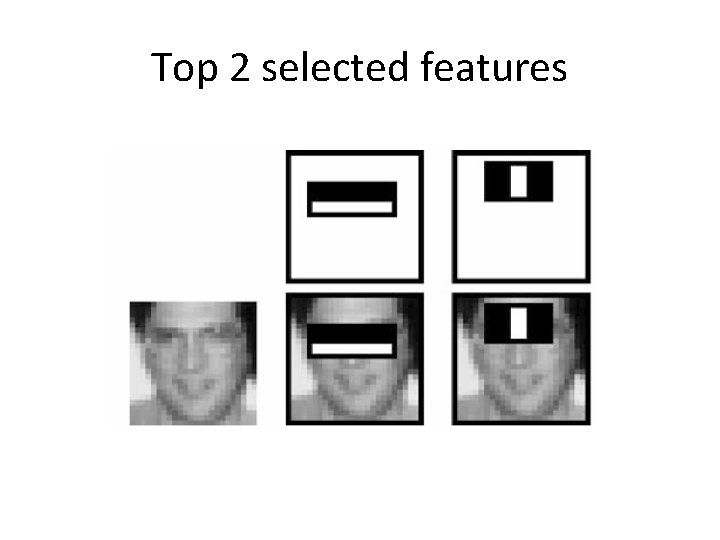

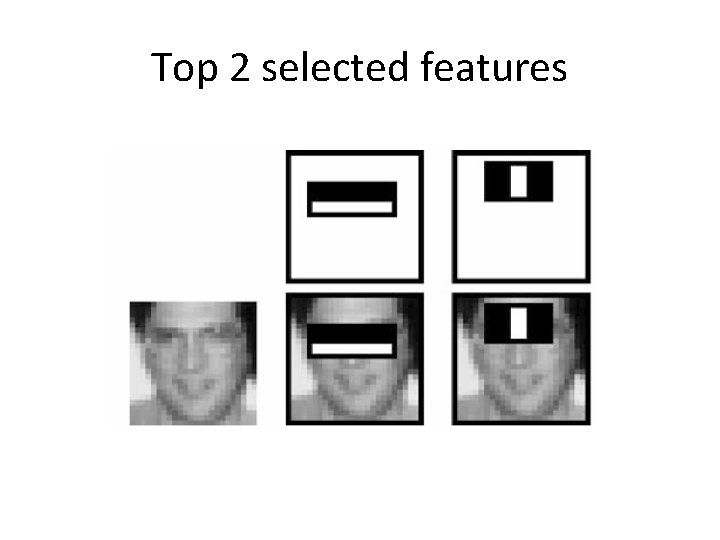

Top 2 selected features

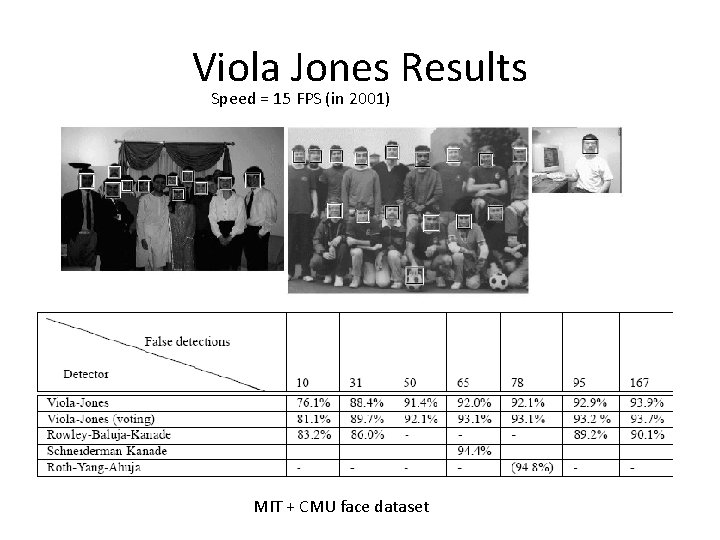

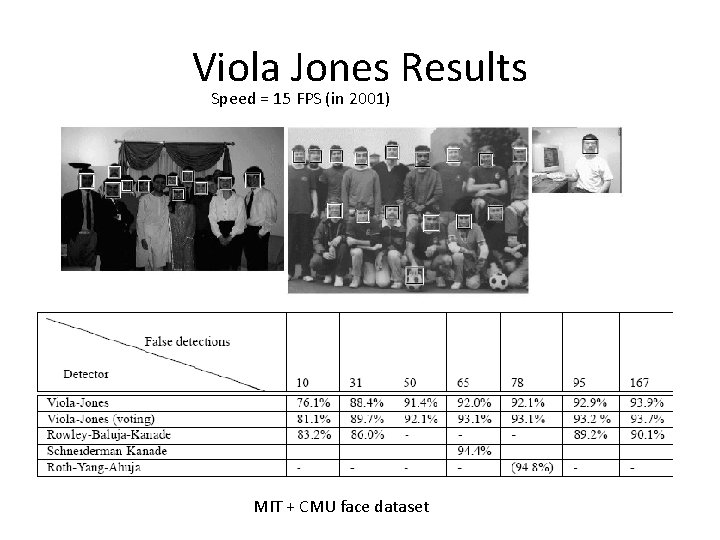

Viola-Jones Results

- Speed = 15 FPS (in 2001)
- MIT + CMU face dataset

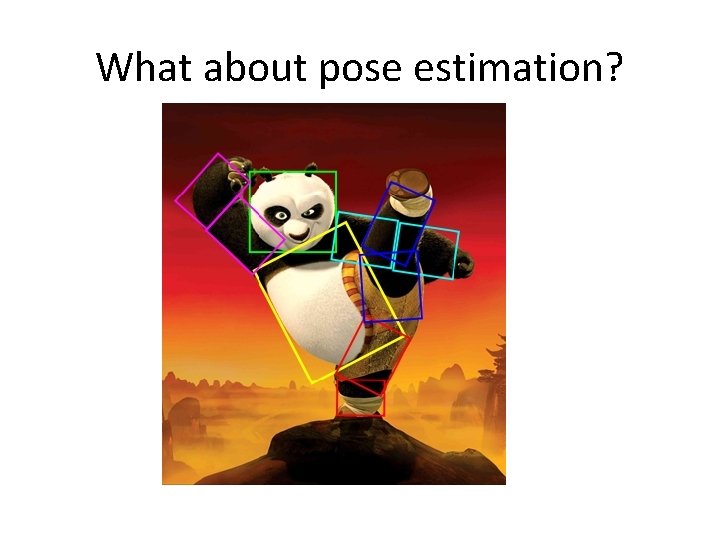

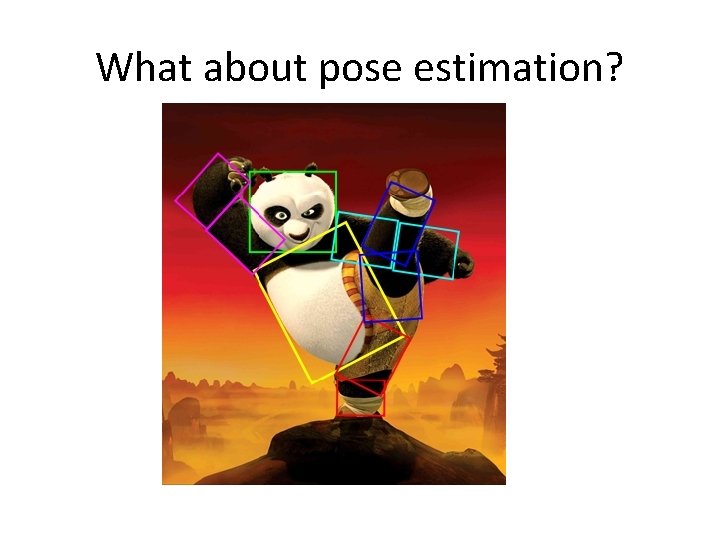

What about pose estimation?

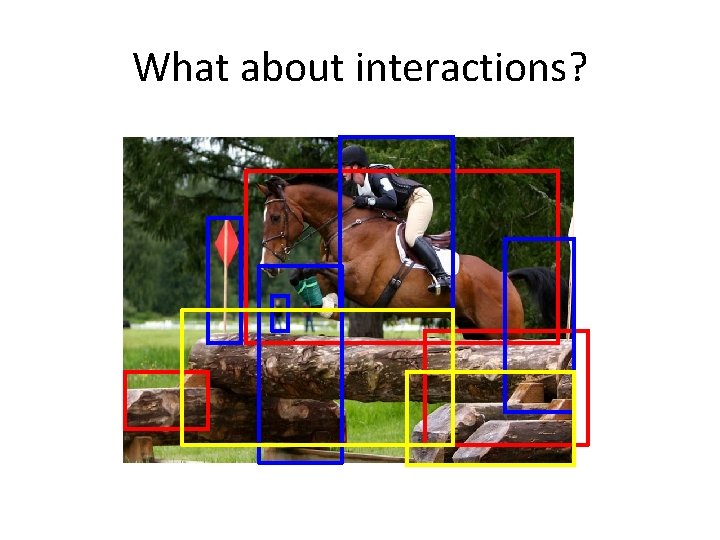

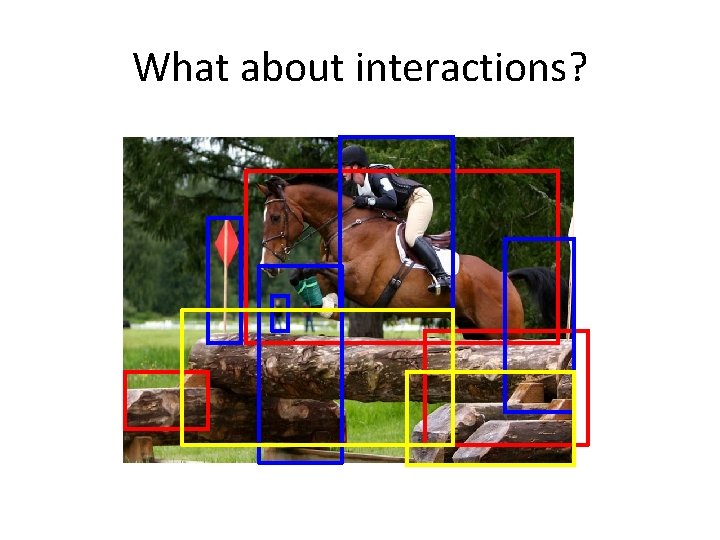

What about interactions?

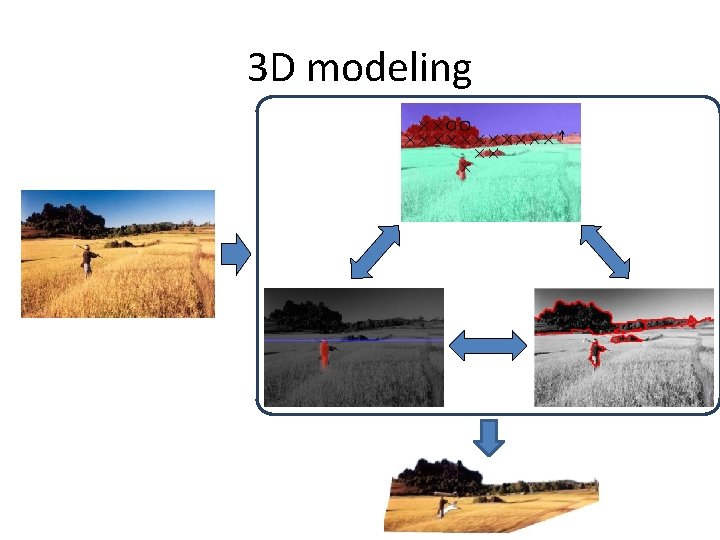

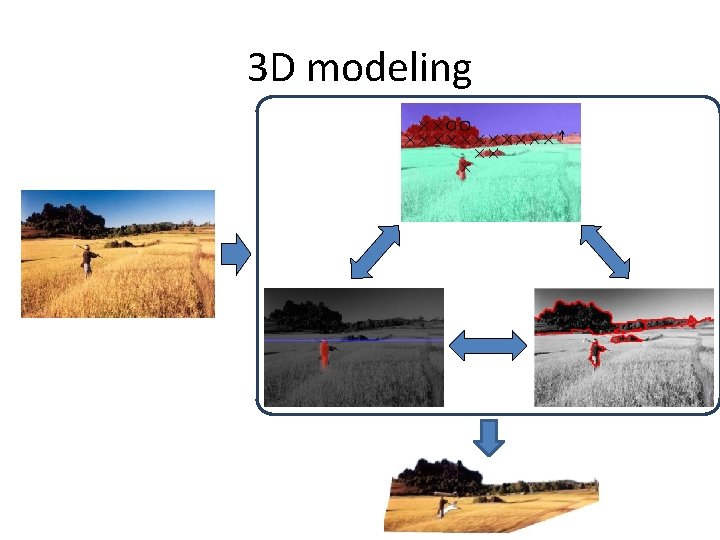

3D modeling

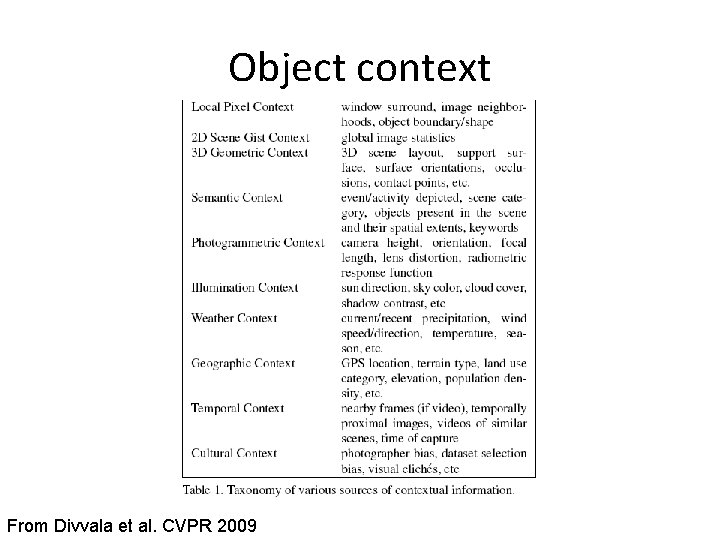

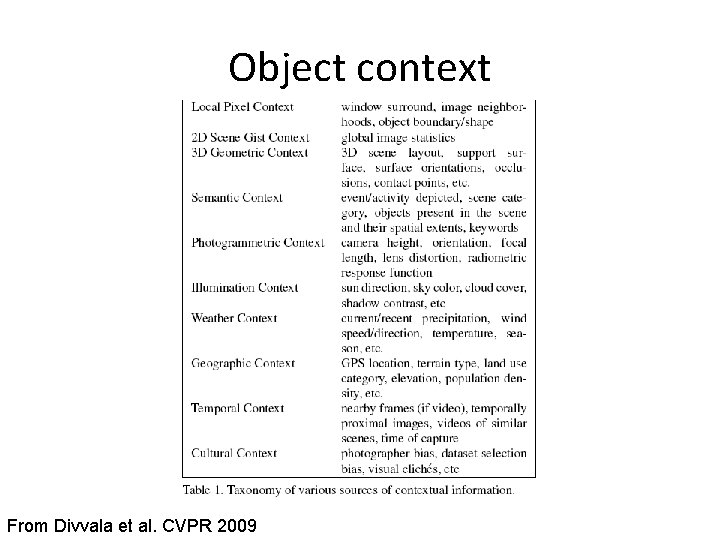

Object context

From Divvala et al., CVPR 2009

Integration

- Feature level
- Margin Based
  - Max margin Structure Learning
- Probabilistic
  - Graphical Models

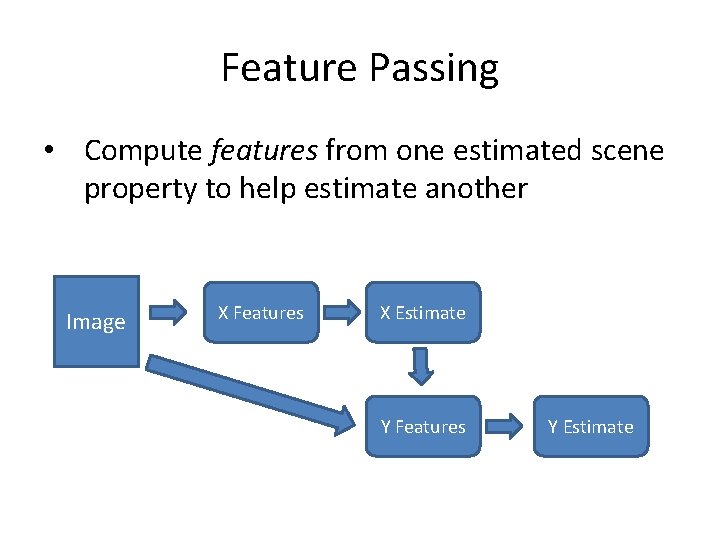

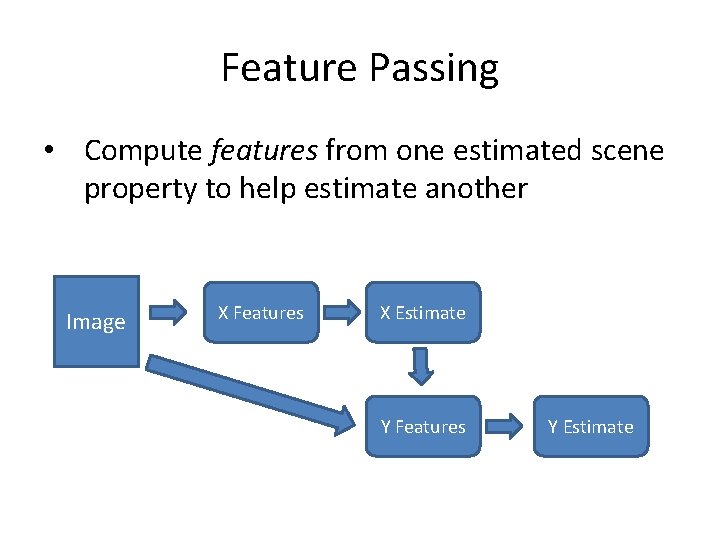

Feature Passing

- Compute features from one estimated scene property to help estimate another

Image → X Features → X Estimate → Y Features → Y Estimate

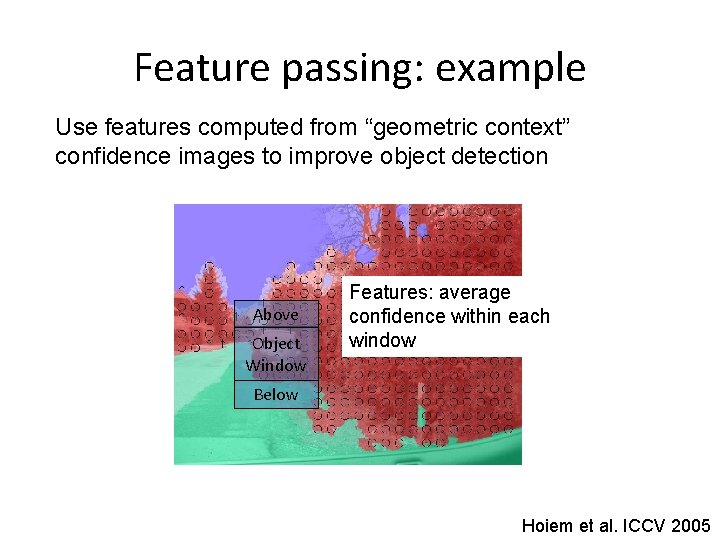

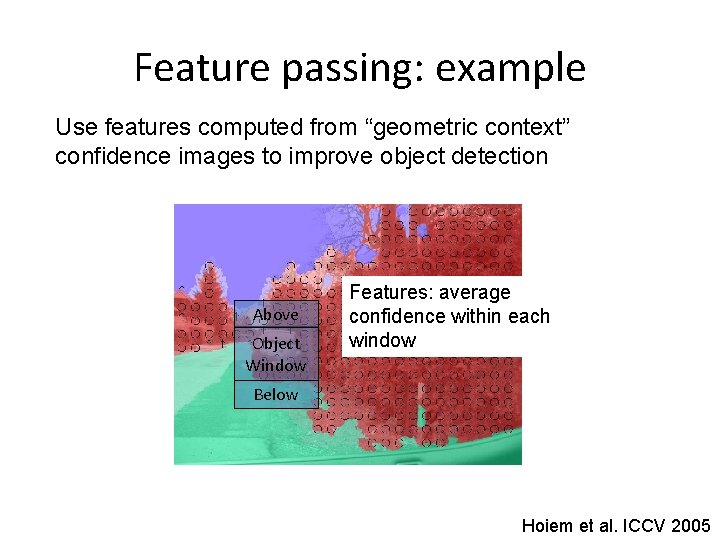

Feature passing: example

Use features computed from “geometric context” confidence images to improve object detection.

Features: average confidence within each window (above the object, the object window, and below the object).

Hoiem et al. ICCV 2005
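A minimal sketch of such features, assuming the "above" and "below" regions have the same size as the object window and that regions are clipped at the image border; both choices, and the function names, are hypothetical rather than taken from the cited work.

```python
def mean_in_region(conf, top, bottom, left, right):
    """Average of conf over rows [top, bottom) and columns [left, right), clipped."""
    rows, cols = len(conf), len(conf[0])
    top, bottom = max(0, top), min(rows, bottom)
    left, right = max(0, left), min(cols, right)
    values = [conf[r][c] for r in range(top, bottom) for c in range(left, right)]
    return sum(values) / len(values) if values else 0.0

def context_features(conf, top, left, height, width):
    """Average confidence above, inside, and below an object window."""
    right = left + width
    return [
        mean_in_region(conf, top - height, top, left, right),               # above
        mean_in_region(conf, top, top + height, left, right),               # object window
        mean_in_region(conf, top + height, top + 2 * height, left, right),  # below
    ]

# Toy 6 x 4 "ground" confidence map: low at the top of the image, high at the bottom.
conf = [[0.0] * 4, [0.0] * 4, [0.5] * 4, [0.5] * 4, [1.0] * 4, [1.0] * 4]
features = context_features(conf, top=2, left=1, height=2, width=2)
```

The resulting three numbers would be appended to the detector's own features for that window, one set per geometric class.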

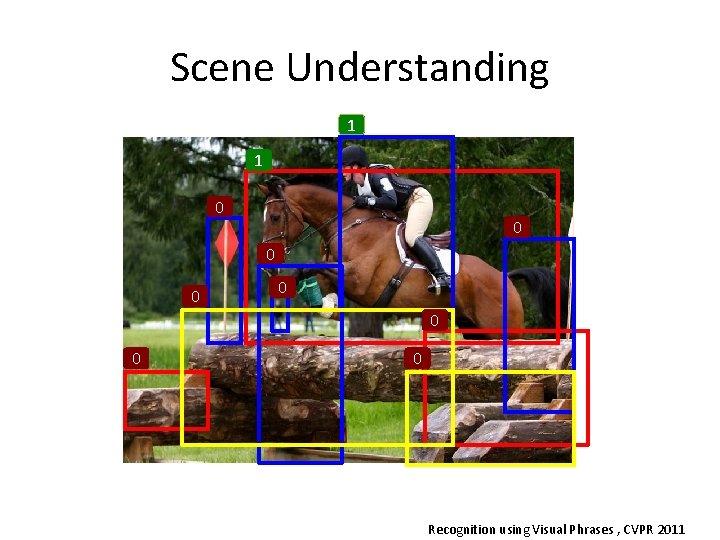

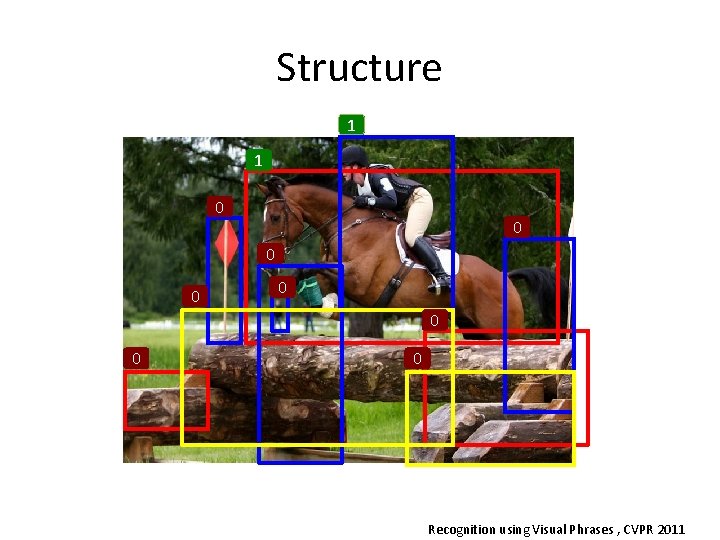

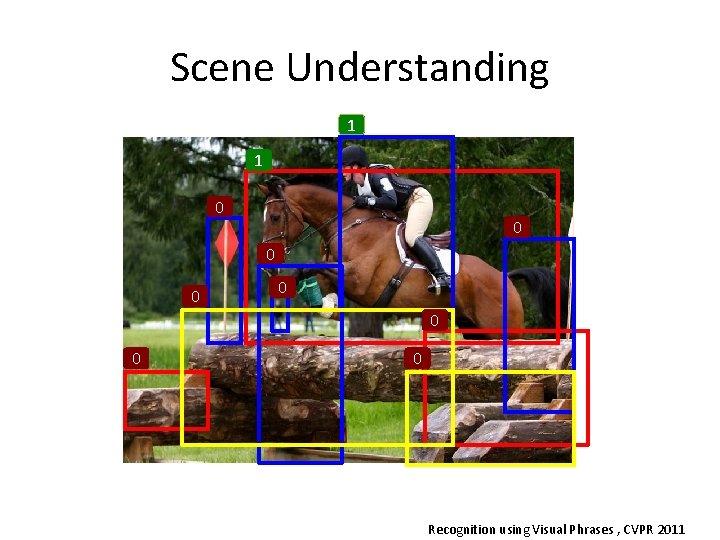

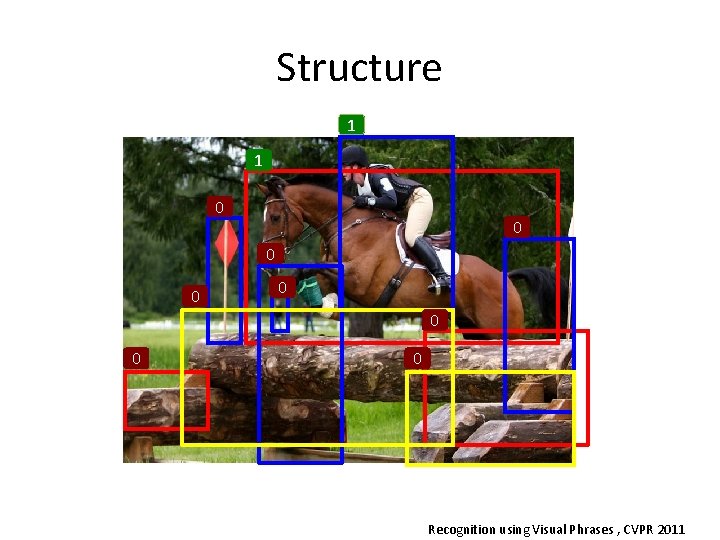

Scene Understanding

(Figure labels: 1 1 0 0 0 0)

Recognition using Visual Phrases, CVPR 2011

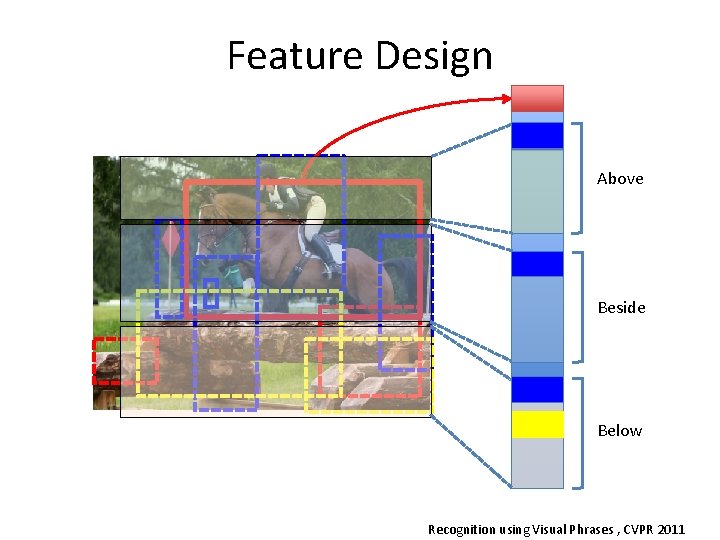

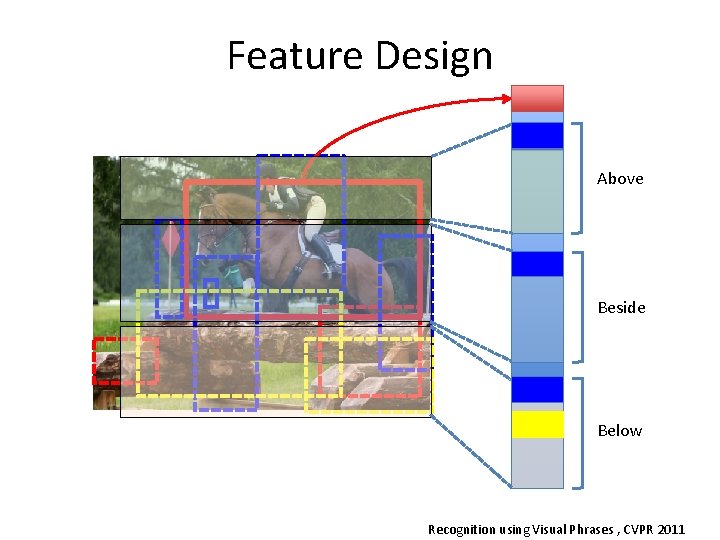

Feature Design

(Figure labels: Above, Beside, Below)

Feature Passing

- Pros and cons
  - Simple training and inference
  - Very flexible in modeling interactions
  - Not modular: if we get a new method for first estimates, we may need to retrain

Integration

- Feature Passing
- Margin Based
  - Max margin Structure Learning
- Probabilistic
  - Graphical Models

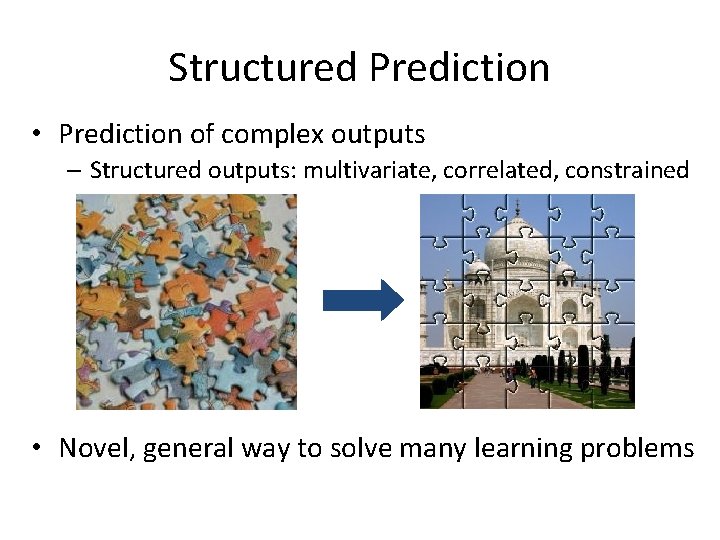

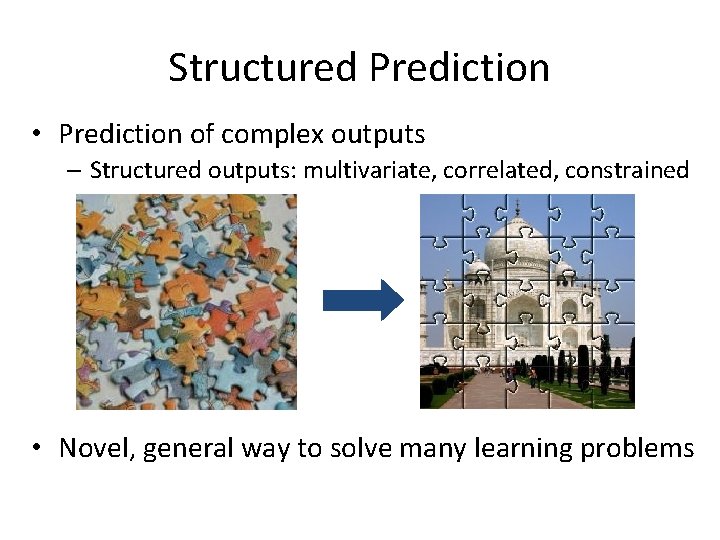

Structured Prediction

- Prediction of complex outputs
  - Structured outputs: multivariate, correlated, constrained
- Novel, general way to solve many learning problems

Structure

(Figure labels: 1 1 0 0 0 0)

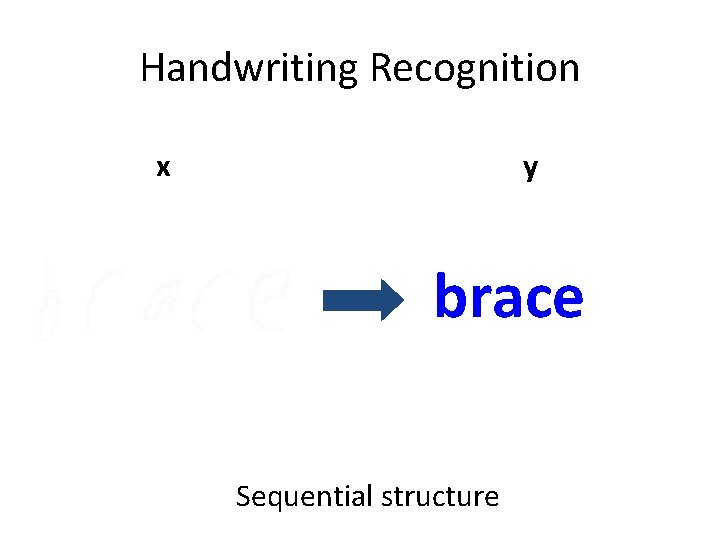

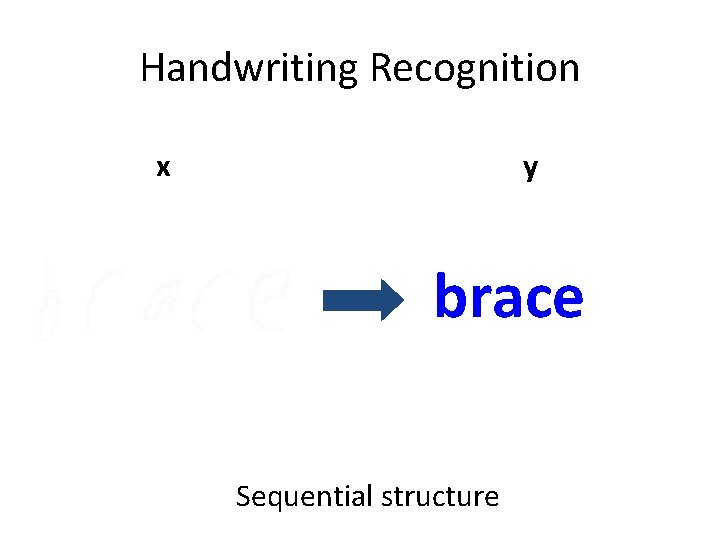

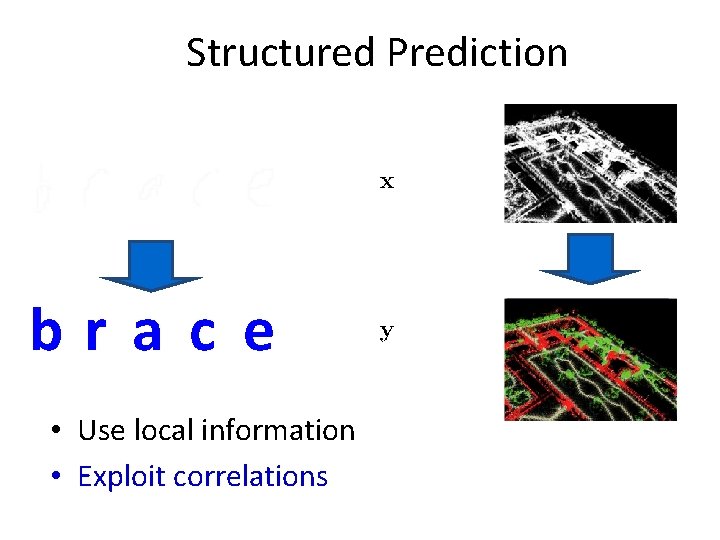

Handwriting Recognition

(Figure: input x, output y = "brace")

Sequential structure

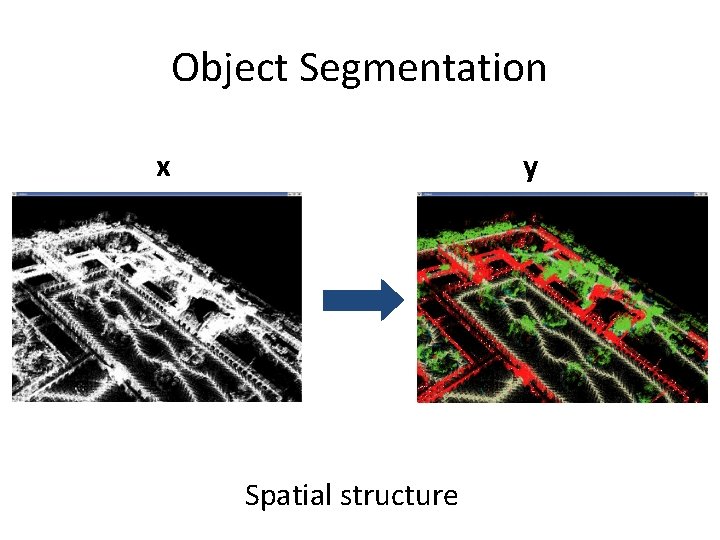

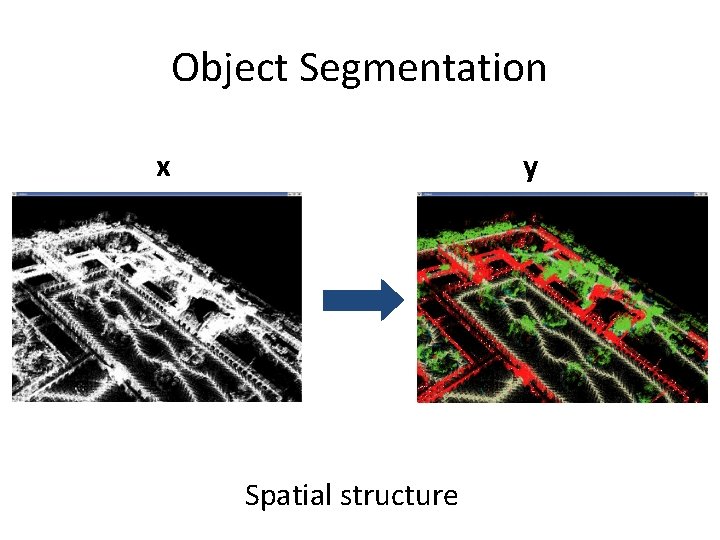

Object Segmentation

(Figure: input x, output y)

Spatial structure

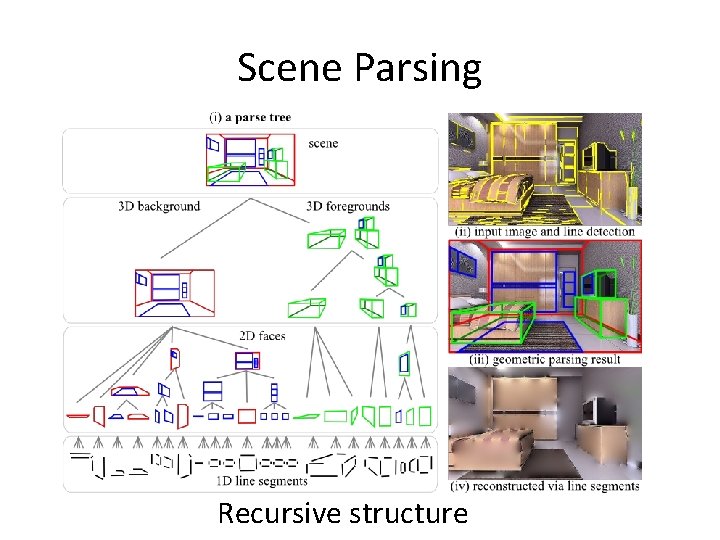

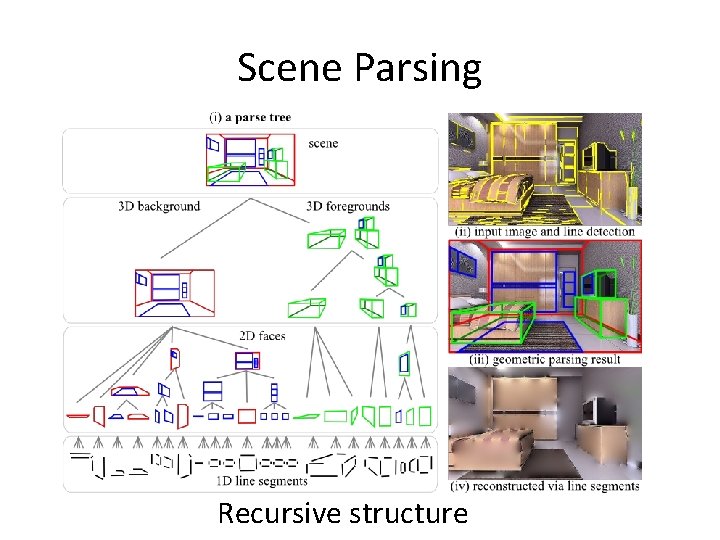

Scene Parsing

Recursive structure

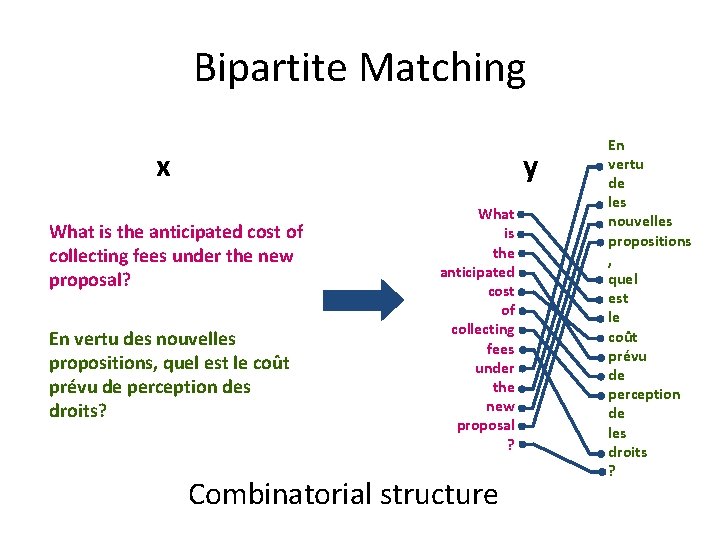

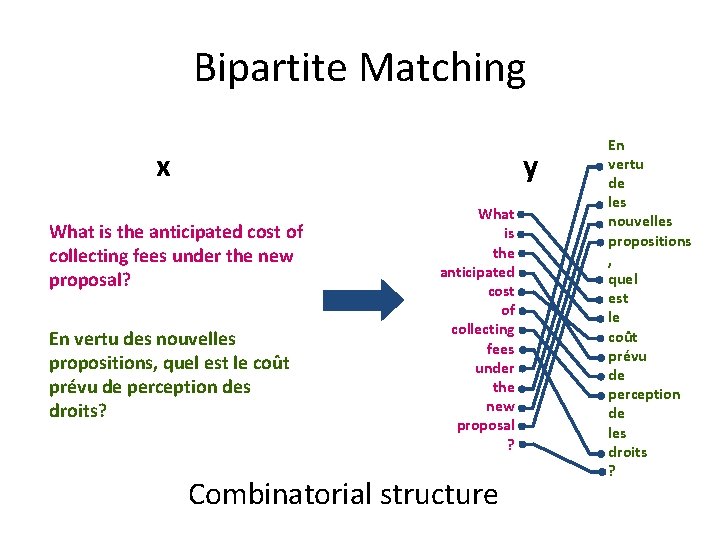

Bipartite Matching

x (sentence pair):

- What is the anticipated cost of collecting fees under the new proposal?
- En vertu des nouvelles propositions, quel est le coût prévu de perception des droits?

y (matching between the tokenized words):

- What is the anticipated cost of collecting fees under the new proposal ?
- En vertu de les nouvelles propositions , quel est le coût prévu de perception de les droits ?

Combinatorial structure

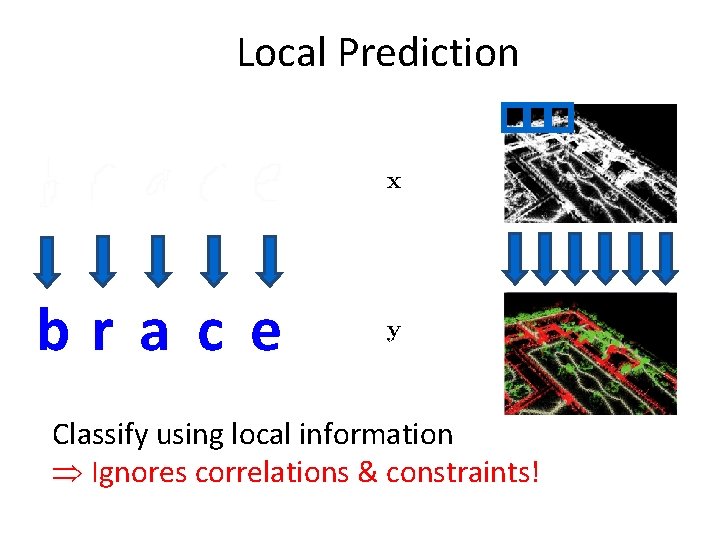

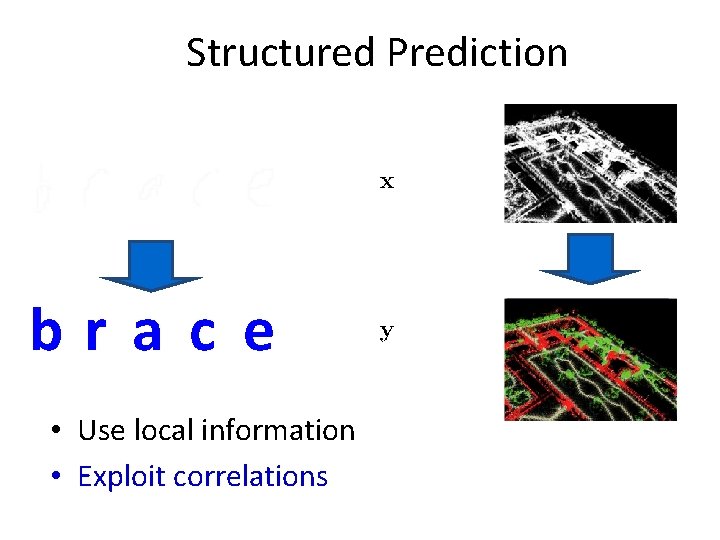

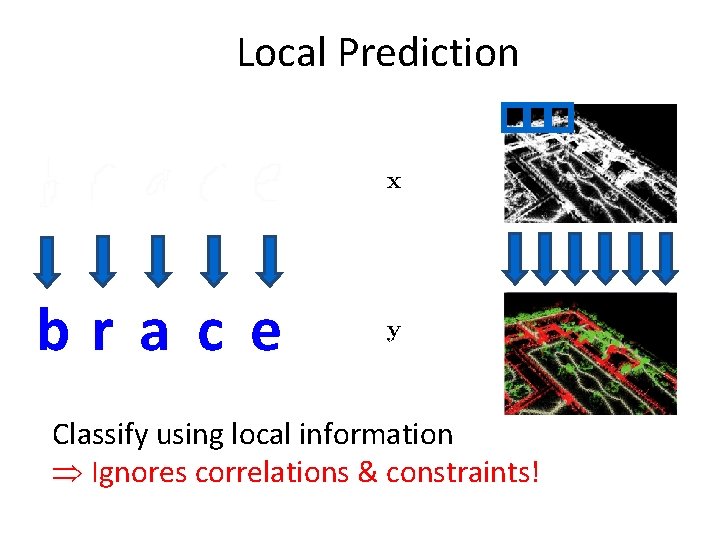

Local Prediction

(Figure labels: b r a c e)

- Classify using local information
- Ignores correlations & constraints!

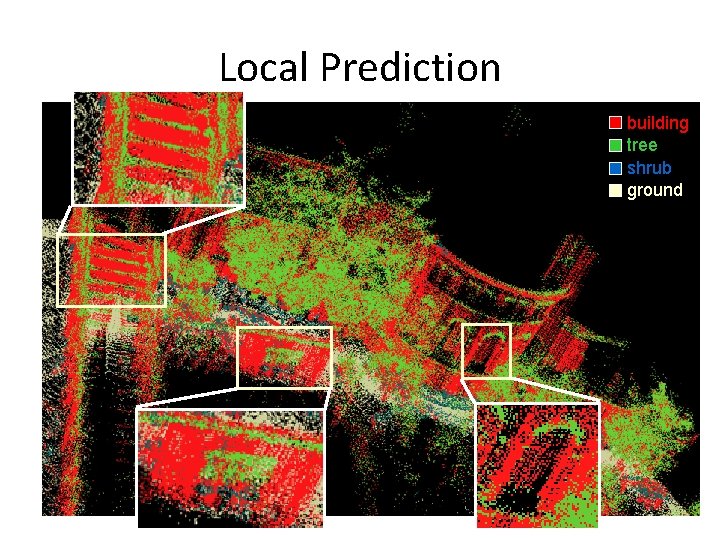

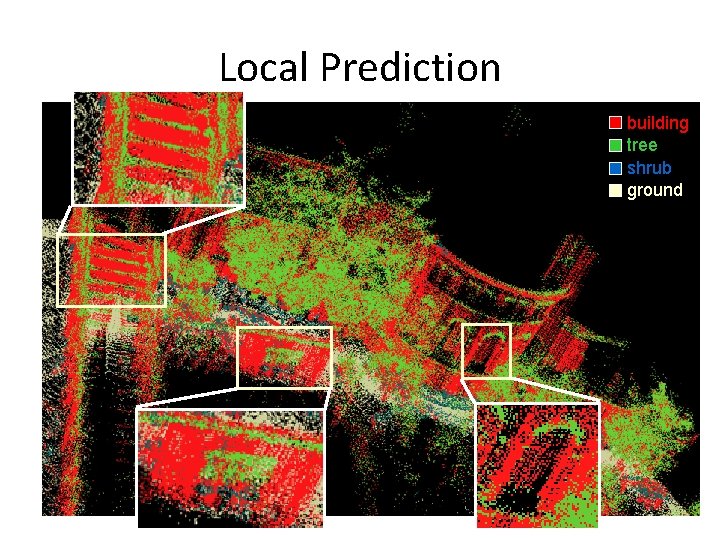

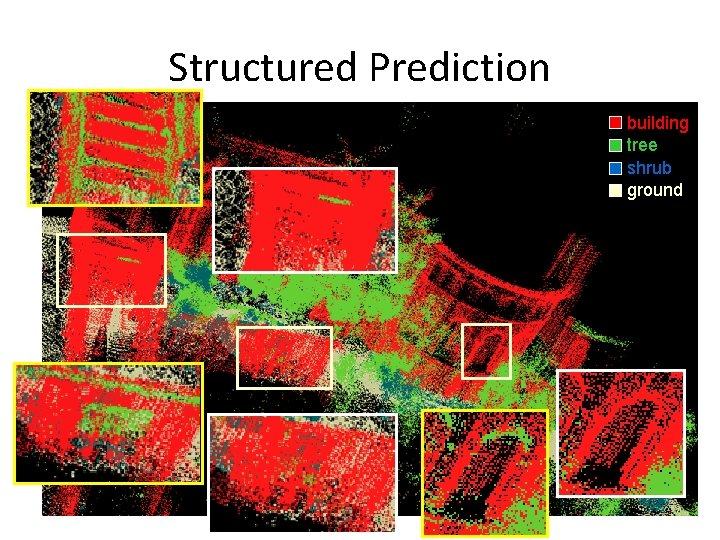

Local Prediction

(Figure labels: building, tree, shrub, ground)

Structured Prediction

(Figure labels: b r a c e)

- Use local information
- Exploit correlations
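The difference between the two approaches can be sketched on a toy three-letter sequence. All scores below are made up for illustration, the pairwise bonus stands in for "correlations", and the exhaustive search is only practical for tiny examples; none of this is taken from the slides' actual model.

```python
from itertools import product

def local_predict(node_scores):
    """Pick the best label at each position independently."""
    return tuple(max(s, key=s.get) for s in node_scores)

def structured_predict(node_scores, pair_score):
    """Pick the label sequence maximizing node scores plus adjacent-pair scores."""
    labels = sorted(node_scores[0])

    def total(seq):
        nodes = sum(s[label] for s, label in zip(node_scores, seq))
        pairs = sum(pair_score(a, b) for a, b in zip(seq, seq[1:]))
        return nodes + pairs

    return max(product(labels, repeat=len(node_scores)), key=total)

# Hypothetical per-position scores: the first letter looks slightly more like "o".
node_scores = [
    {"a": 0.40, "c": 0.05, "e": 0.05, "o": 0.50},
    {"a": 0.03, "c": 0.90, "e": 0.04, "o": 0.03},
    {"a": 0.02, "c": 0.03, "e": 0.90, "o": 0.05},
]
# Hypothetical correlation: "a" followed by "c" is a likely letter pair.
pair_score = lambda a, b: 0.3 if (a, b) == ("a", "c") else 0.0

local = local_predict(node_scores)
structured = structured_predict(node_scores, pair_score)
```

The local classifier reads the word as "oce", while the joint score lets the strong evidence for "c" in the second position pull the first letter to "a".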

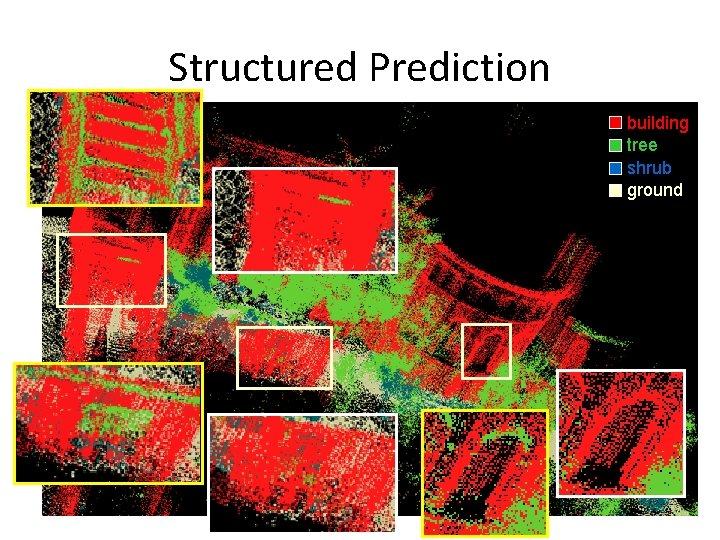

Structured Prediction

(Figure labels: building, tree, shrub, ground)

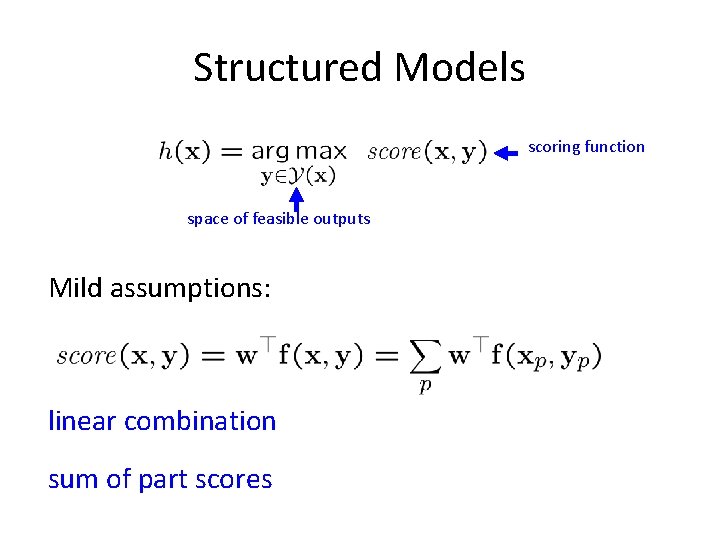

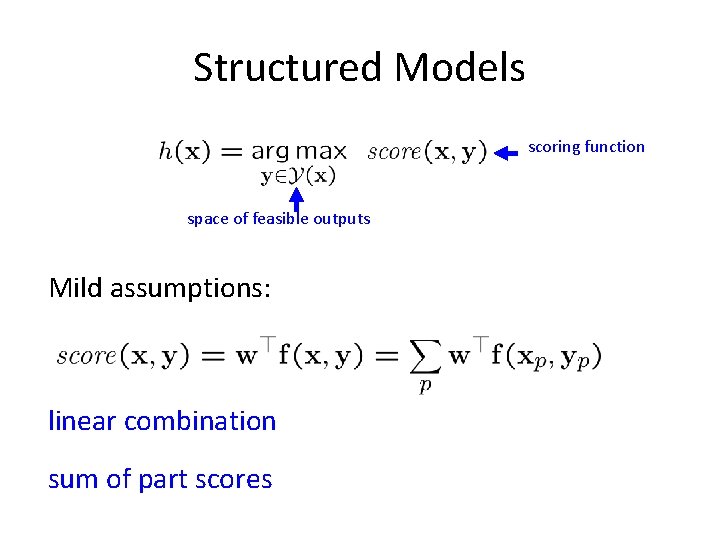

Structured Models

(Equation labels: scoring function, space of feasible outputs)

Mild assumptions:

- Linear combination
- Sum of part scores
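A common way to write a model with these ingredients is shown below. The notation is an assumption made for this sketch: $\mathbf{w}$ is the weight vector, $\mathbf{f}$ the feature map, $\mathcal{Y}(x)$ the space of feasible outputs for input $x$, and $p$ ranges over the parts of the output.

```latex
\hat{y} \;=\; \arg\max_{y \in \mathcal{Y}(x)} \; \mathbf{w}^\top \mathbf{f}(x, y),
\qquad
\mathbf{w}^\top \mathbf{f}(x, y) \;=\; \sum_{p} \mathbf{w}^\top \mathbf{f}(x_p, y_p)
```

The first expression is the scoring function maximized over feasible outputs (linear in $\mathbf{w}$); the second expresses the score as a sum of part scores.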

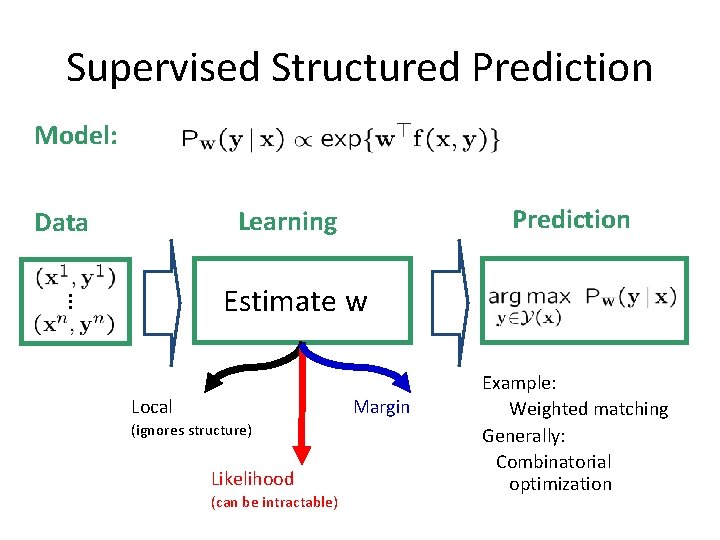

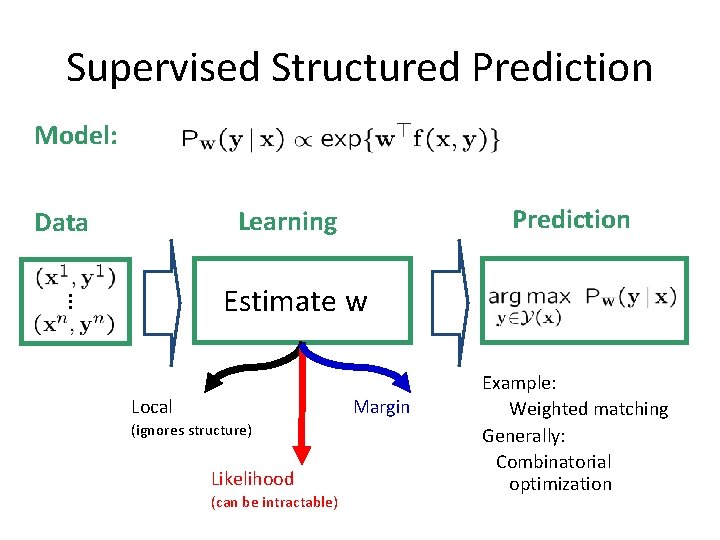

Supervised Structured Prediction

- Model
- Learning: estimate w from data
  - Local (ignores structure)
  - Margin
  - Likelihood (can be intractable)
- Prediction
  - Example: weighted matching
  - Generally: combinatorial optimization

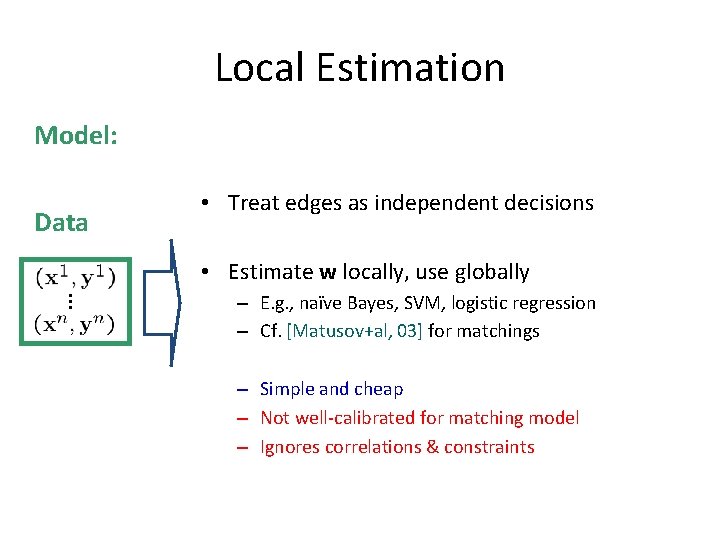

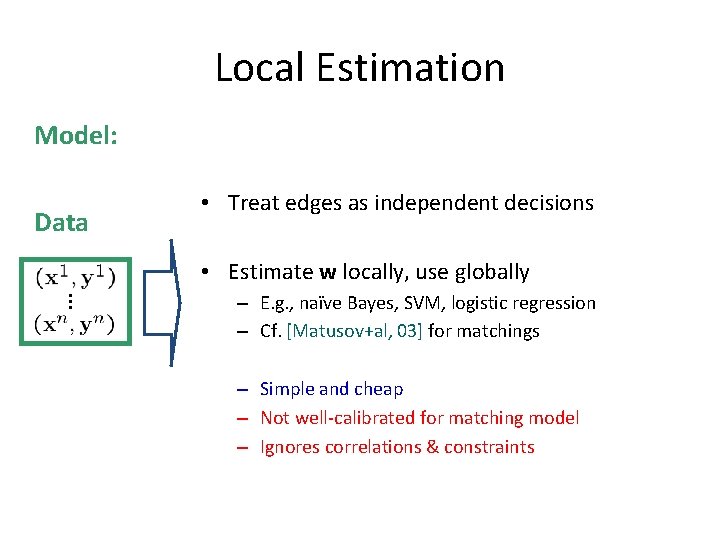

Local Estimation

(Figure labels: Model, Data)

- Treat edges as independent decisions
- Estimate w locally, use globally
  - E.g., naïve Bayes, SVM, logistic regression
  - Cf. [Matusov+al, 03] for matchings
  - Simple and cheap
  - Not well-calibrated for matching model
  - Ignores correlations & constraints

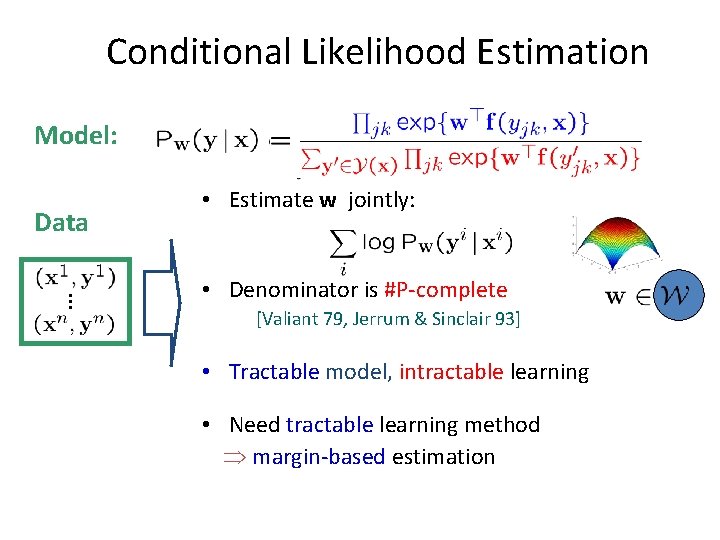

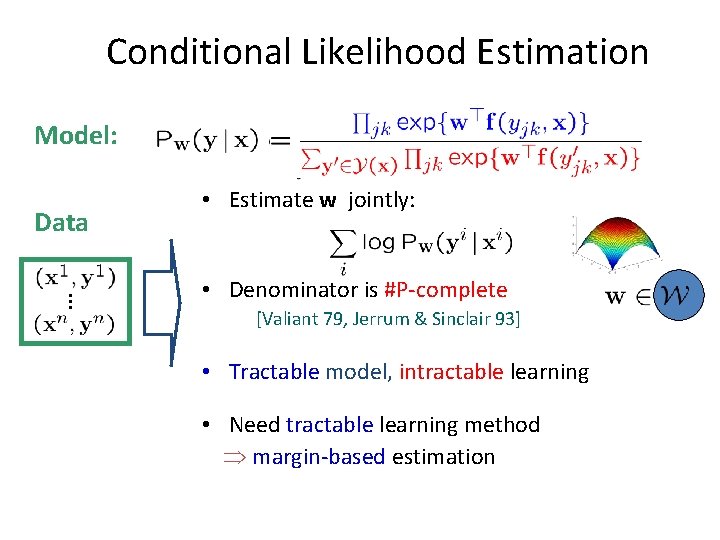

Conditional Likelihood Estimation

(Figure labels: Model, Data)

- Estimate w jointly
- Denominator is #P-complete [Valiant 79, Jerrum & Sinclair 93]
- Tractable model, intractable learning
- Need tractable learning method → margin-based estimation

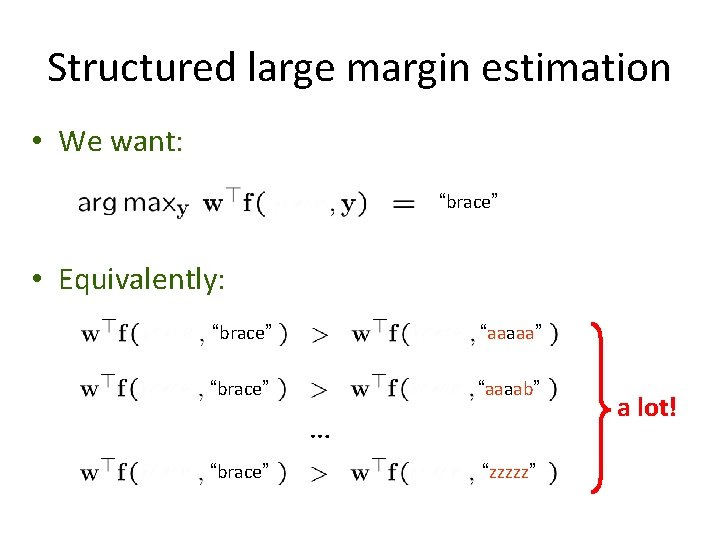

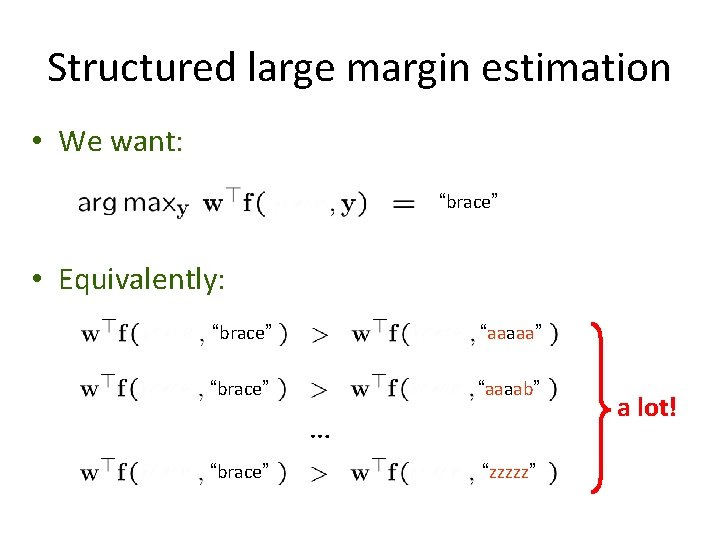

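Structured large margin estimation

- We want: the highest-scoring output to be “brace”
- Equivalently, “brace” must outscore every other output:
  - “brace” vs. “aaaaa”
  - “brace” vs. “aaaab”
  - …
  - “brace” vs. “zzzzz”
- That is a lot of constraints!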
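To get a feel for how many constraints "a lot" is, the count can be worked out directly, assuming outputs are five-letter strings over the 26 lowercase letters (an assumption read off the "aaaaa" to "zzzzz" range).

```python
alphabet_size = 26  # assumption: lowercase letters a-z
word_length = 5     # length of "brace"

n_outputs = alphabet_size ** word_length  # every possible five-letter string
n_constraints = n_outputs - 1             # one constraint per incorrect output
```

That is roughly 11.9 million constraints for a single five-letter training example, and the count grows exponentially with word length.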

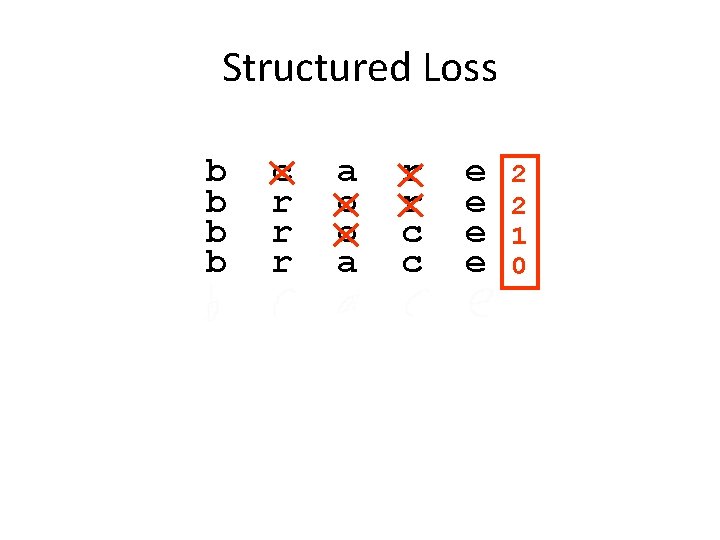

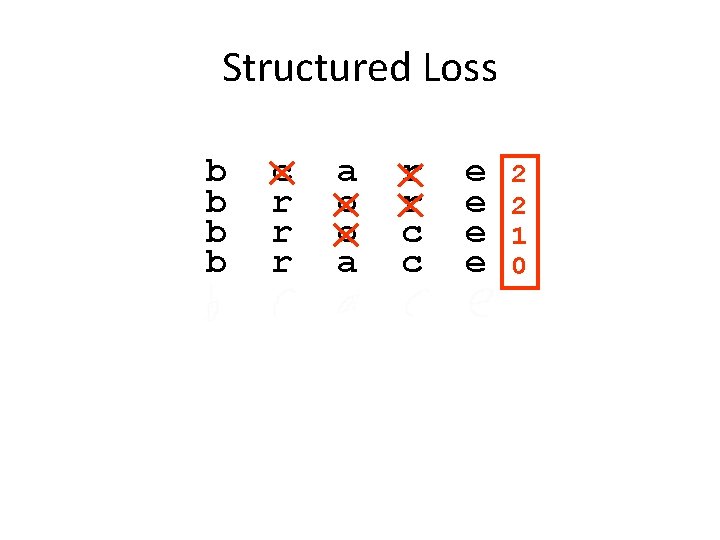

Structured Loss

Number of mistakes relative to the true output “brace”:

- b c a r e: 2
- b r o r e: 2
- b r o c e: 1
- b r a c e: 0
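The loss here counts the positions where a candidate labeling differs from the true one (a Hamming loss). A minimal sketch, with the candidate strings taken as illustrative readings of the slide's example:

```python
def hamming_loss(y_true, y_pred):
    """Number of positions where the predicted labeling differs from the truth."""
    if len(y_true) != len(y_pred):
        raise ValueError("labelings must have the same length")
    return sum(t != p for t, p in zip(y_true, y_pred))

losses = {cand: hamming_loss("brace", cand)
          for cand in ("bcare", "brore", "broce", "brace")}
```

Because the loss is a sum over positions, it decomposes over the same parts as the score, which is what later allows it to be folded into inference.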

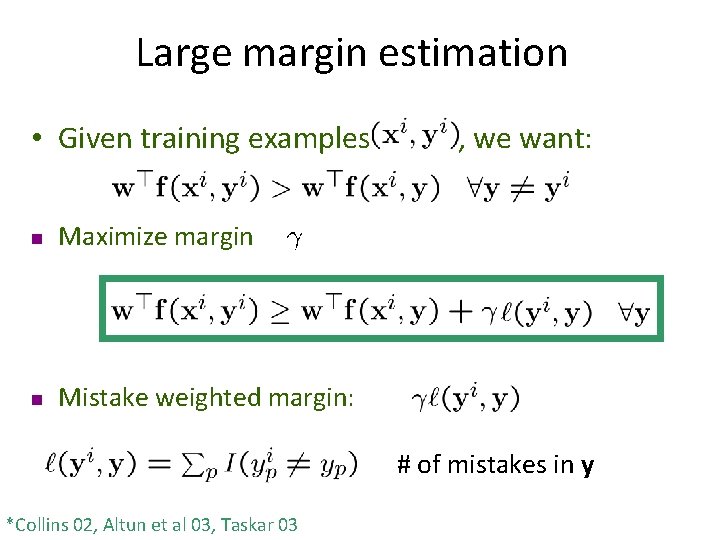

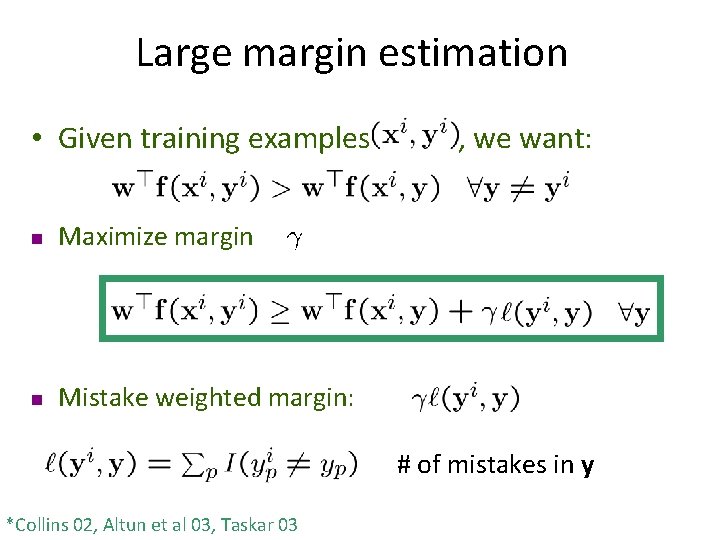

Large margin estimation

- Given training examples, we want:
  - Maximize margin
  - Mistake weighted margin: # of mistakes in y

*Collins 02, Altun et al 03, Taskar 03

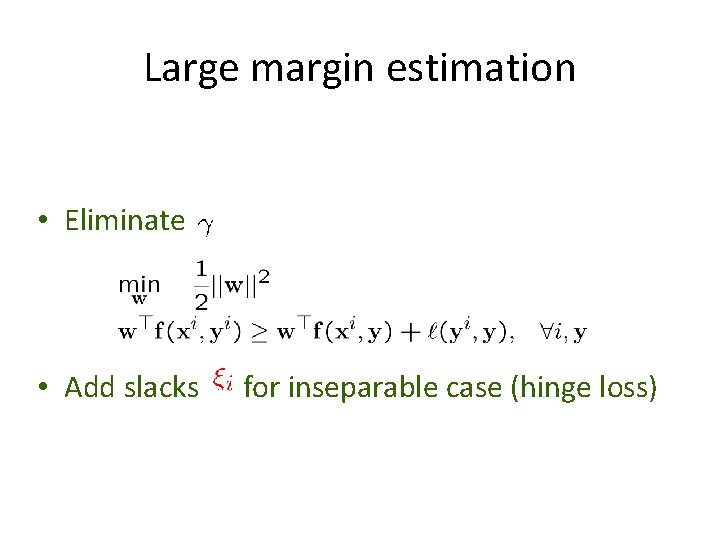

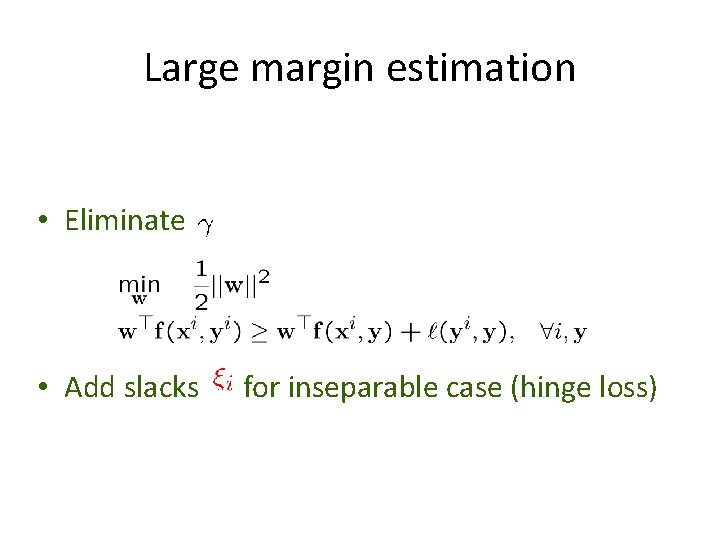

Large margin estimation

- Eliminate the margin variable
- Add slacks for inseparable case (hinge loss)
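One standard way to write the resulting problem is the margin-rescaled structured SVM below. The notation is assumed for this sketch: $\mathbf{f}$ is the feature map, $\ell(y_i, y)$ the number of mistakes in $y$ relative to the true output $y_i$, $\xi_i$ the slack for example $i$, and $C$ a regularization constant.

```latex
\min_{\mathbf{w},\, \xi \ge 0} \;\; \tfrac{1}{2}\,\|\mathbf{w}\|^2 + C \sum_i \xi_i
\quad \text{s.t.} \quad
\mathbf{w}^\top \mathbf{f}(x_i, y_i) \;\ge\; \mathbf{w}^\top \mathbf{f}(x_i, y) + \ell(y_i, y) - \xi_i
\qquad \forall i,\; \forall y
```

Fixing the scale of the margin turns margin maximization into norm minimization, and the slacks give the hinge-loss form for data that cannot be separated.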

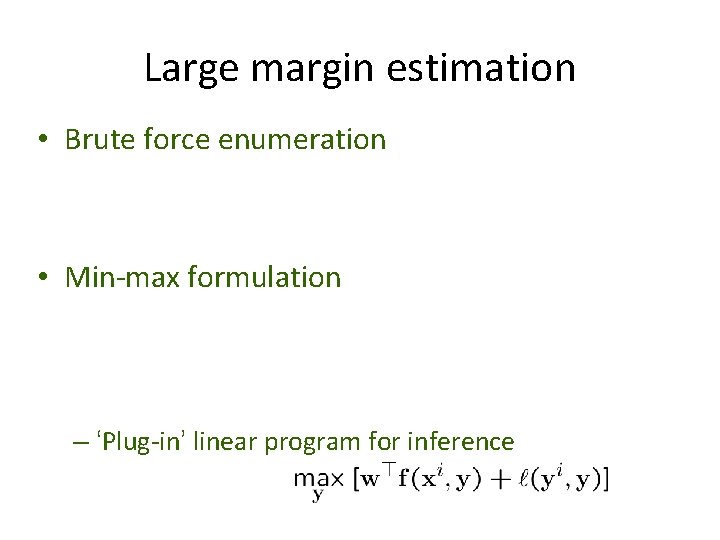

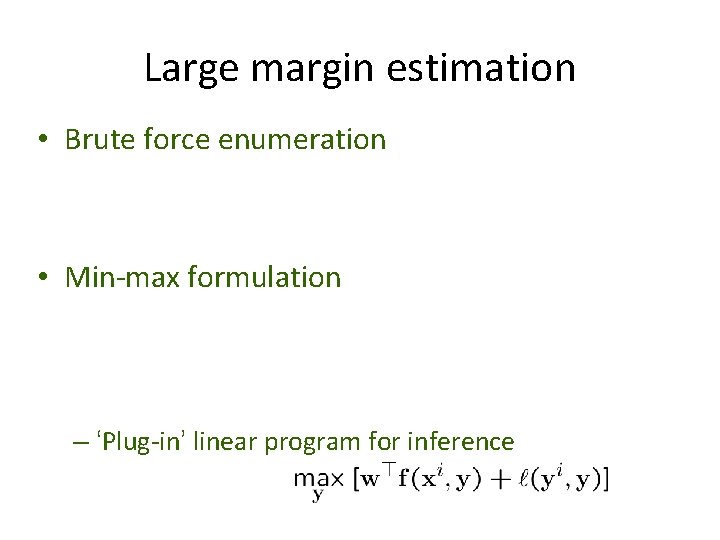

Large margin estimation

- Brute force enumeration
- Min-max formulation
  - ‘Plug-in’ linear program for inference

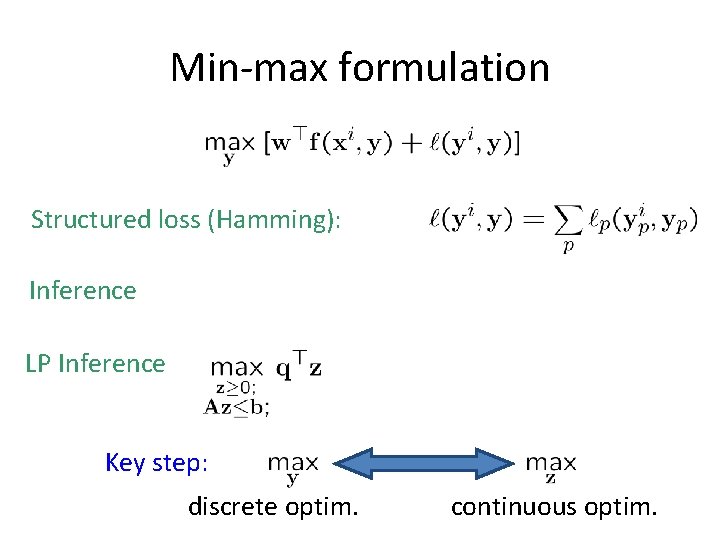

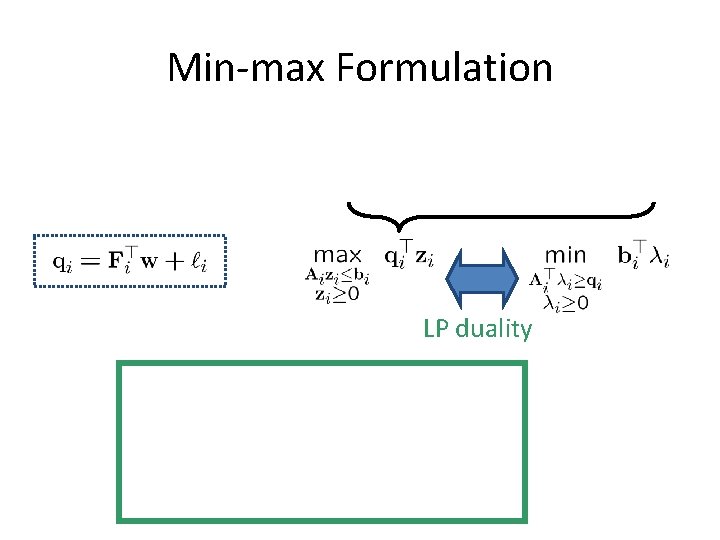

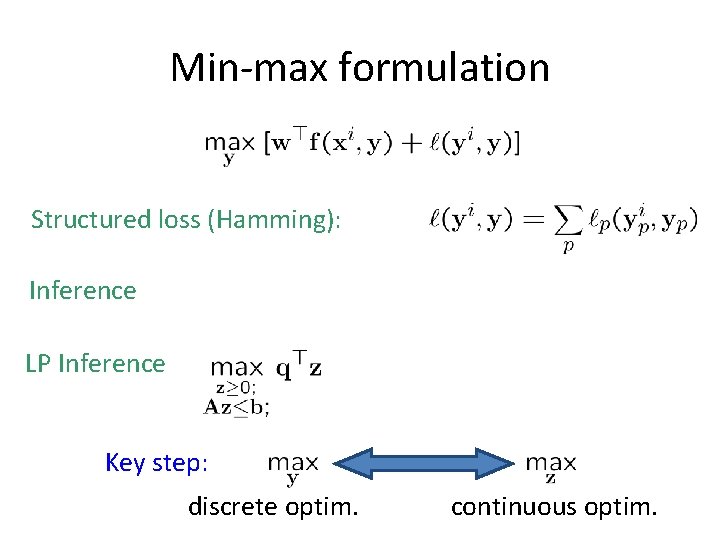

Min-max formulation

- Structured loss (Hamming)
- Inference
- LP inference
- Key step: discrete optim. → continuous optim.
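In a commonly used form, the exponentially many margin constraints collapse into a single maximization per example. The notation is assumed for this sketch: $\mathbf{f}$ is the feature map, $\ell$ the Hamming loss, and $C$ a regularization constant.

```latex
\min_{\mathbf{w}} \;\; \tfrac{1}{2}\,\|\mathbf{w}\|^2
+ C \sum_i \Big[ \max_{y} \big( \mathbf{w}^\top \mathbf{f}(x_i, y) + \ell(y_i, y) \big)
- \mathbf{w}^\top \mathbf{f}(x_i, y_i) \Big]
```

The inner maximization is loss-augmented inference. When the loss decomposes over parts as the Hamming loss does, and inference can be written as a linear program, the discrete maximization over $y$ can be replaced by a continuous one over the LP variables.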

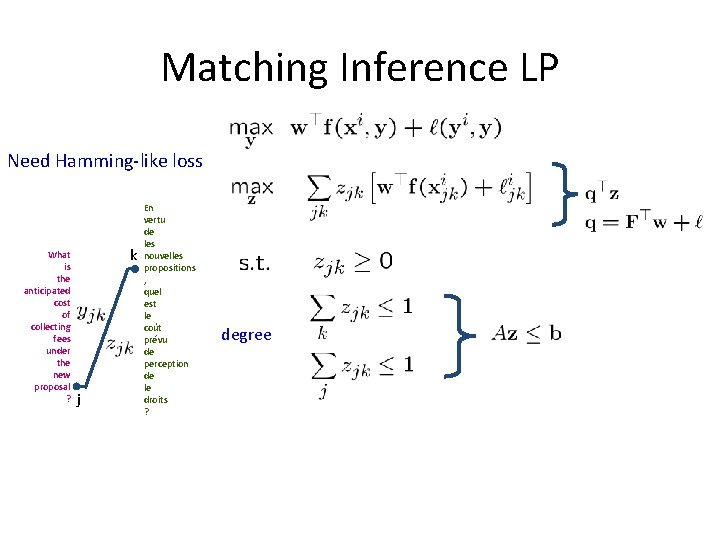

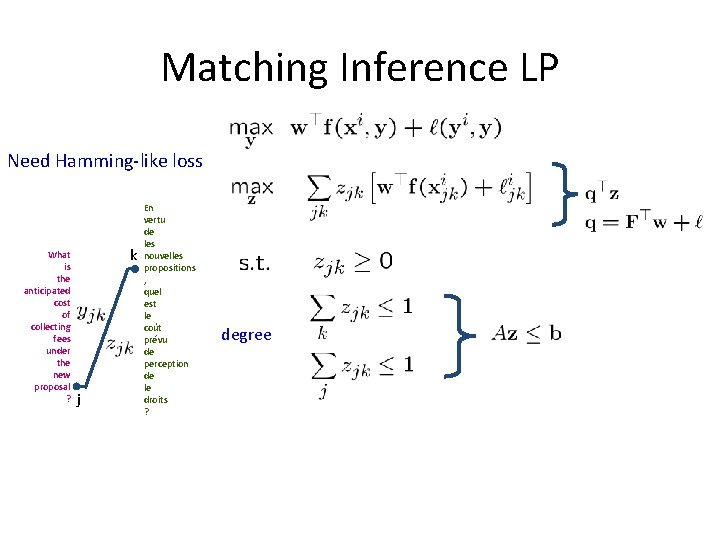

Matching Inference LP

Need Hamming-like loss

- What is the anticipated cost of collecting fees under the new proposal ?
- En vertu de les nouvelles propositions , quel est le coût prévu de perception de les droits ?

(Figure labels: word indices j and k, degree)
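For intuition, inference in a small matching model can be done by brute force. The sketch assumes a square score matrix and a perfect one-to-one matching, which is a simplification of the degree constraints on the slide; the edge scores are hypothetical. For bipartite matching the LP relaxation is known to have integral optimal vertices, so an LP solver would return the same matching without enumeration.

```python
from itertools import permutations

def best_matching(scores):
    """Max-weight one-to-one matching for a square score matrix, by enumeration."""
    n = len(scores)
    best_perm, best_total = None, float("-inf")
    for perm in permutations(range(n)):
        total = sum(scores[j][perm[j]] for j in range(n))
        if total > best_total:
            best_perm, best_total = perm, total
    return best_perm, best_total

# Hypothetical edge scores s[j][k] between three words of one sentence (rows j)
# and three words of the other (columns k).
scores = [[2.0, 0.5, 0.1],
          [0.3, 0.2, 1.5],
          [0.4, 1.8, 0.6]]
matching, total = best_matching(scores)
```

Enumeration costs n! evaluations, which is why the slides turn to a linear program for sentences of realistic length.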

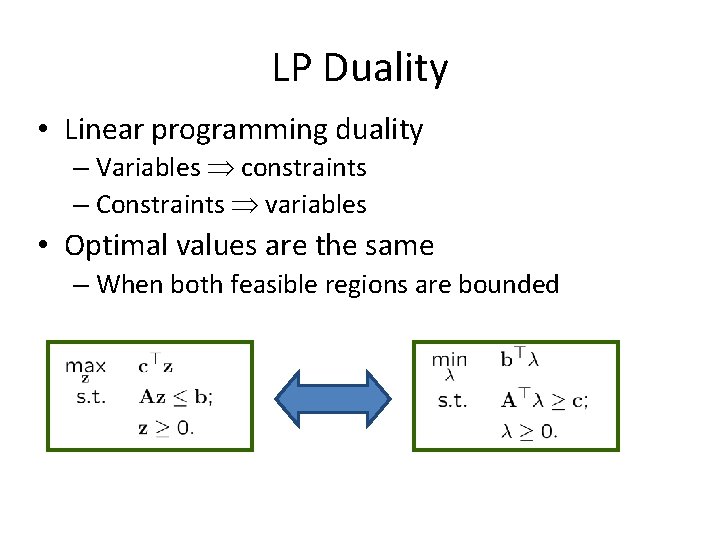

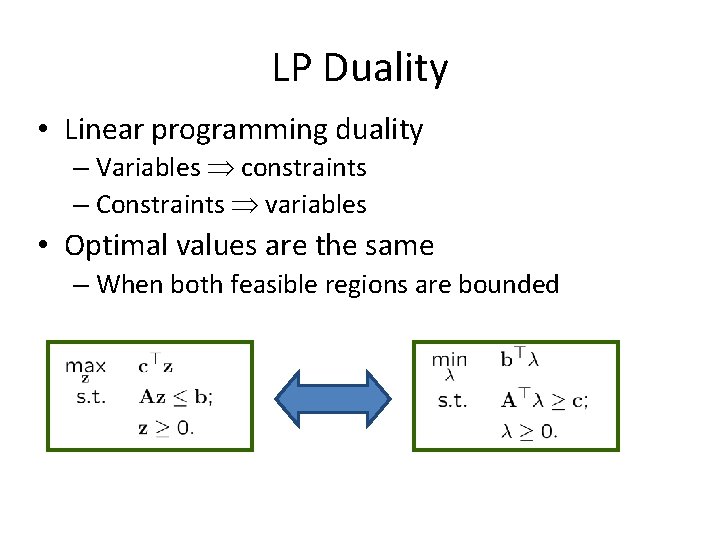

LP Duality

- Linear programming duality
  - Variables ↔ constraints
  - Constraints ↔ variables
- Optimal values are the same
  - When both problems are feasible (so both optima are finite)
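A standard primal-dual pair makes the correspondence concrete. The symbols $A$, $\mathbf{b}$, $\mathbf{c}$, $\mathbf{z}$, and $\boldsymbol{\lambda}$ are generic notation assumed for this sketch.

```latex
\text{Primal:}\quad \max_{\mathbf{z} \ge 0} \; \mathbf{c}^\top \mathbf{z}
\quad \text{s.t.} \quad A\mathbf{z} \le \mathbf{b}
\qquad\qquad
\text{Dual:}\quad \min_{\boldsymbol{\lambda} \ge 0} \; \mathbf{b}^\top \boldsymbol{\lambda}
\quad \text{s.t.} \quad A^\top \boldsymbol{\lambda} \ge \mathbf{c}
```

Each primal constraint (a row of $A$) becomes a dual variable $\lambda$, and each primal variable (a column of $A$) becomes a dual constraint. Replacing an inner maximization by its dual minimization is what lets a min-max problem be merged into a single minimization.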

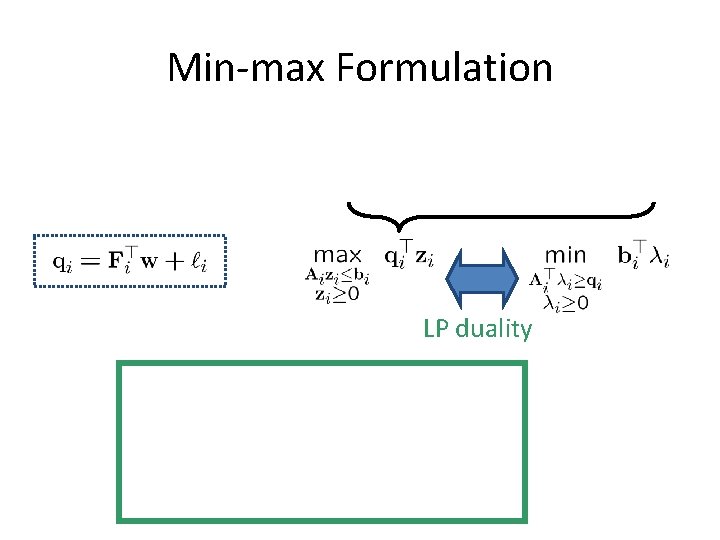

Min-max Formulation LP duality

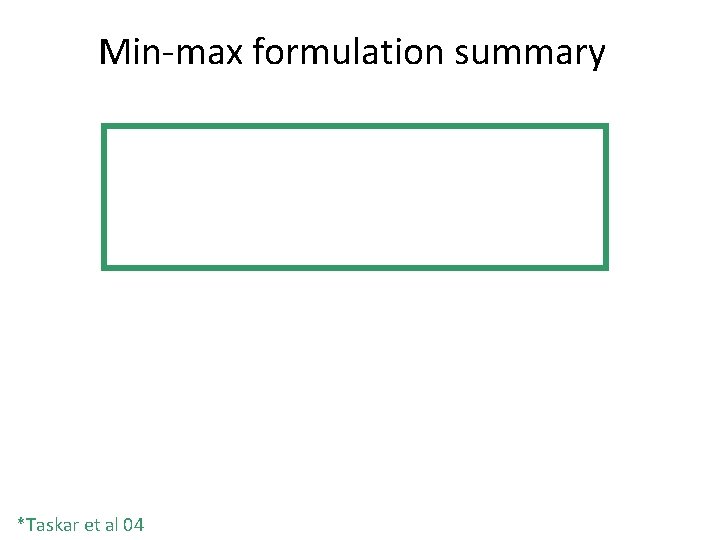

Min-max formulation summary

*Taskar et al 04