CoolCAMs: Power-Efficient TCAMs for Forwarding Engines


CoolCAMs: Power-Efficient TCAMs for Forwarding Engines
- Paper by Francis Zane, Girija Narlikar, Anindya Basu, Bell Laboratories, Lucent Technologies
- Presented by Edward Spitznagel

Outline
- Introduction
- TCAMs for Address Lookup
- Bit Selection Architecture
- Trie-based Table Partitioning
- Route Table Updates
- Summary and Discussion

Introduction
- Ternary Content-Addressable Memories (TCAMs) are becoming very popular for designing high-throughput forwarding engines; they are
  - fast
  - cost-effective
  - simple to manage
- Major drawback: high power consumption
- This paper presents architectures and algorithms for making TCAM-based routing tables more power-efficient

TCAMs for Address Lookup
- Fully associative memory, searchable in a single cycle
- Hardware compares the query word (destination address) to all stored words (routing prefixes) in parallel
  - each bit of a stored word can be 0, 1, or X (don't care)
  - if multiple entries match, the entry with the lowest address is typically returned
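
A toy Python model makes the "lowest address wins" rule concrete. It is a sketch under stated assumptions: 8-bit keys stand in for 32-bit addresses, prefixes are written as bit strings, and `prefix_entry` and `tcam_search` are hypothetical helper names, not a vendor API. Loading longer prefixes at lower addresses makes the first match the longest matching prefix.

```python
WIDTH = 8  # toy key width (assumption); IPv4 lookups would use 32 bits

def prefix_entry(prefix: str, width: int = WIDTH):
    """Encode a bit-string prefix such as '101' as a (value, care_mask) pair.

    Mask bits set to 1 are compared; mask bits set to 0 act as X (don't care).
    """
    n = len(prefix)
    value = int(prefix, 2) << (width - n) if n else 0
    mask = ((1 << n) - 1) << (width - n)
    return value, mask

def tcam_search(entries, key):
    """Return the address (list index) of the first matching entry, or None.

    Hardware compares all entries in parallel; this loop only models the
    priority rule that the lowest-address match is reported.
    """
    for addr, (value, mask) in enumerate(entries):
        if (key & mask) == (value & mask):
            return addr
    return None

# Longer prefixes at lower addresses, so first match = longest match.
prefixes = sorted(["10", "1011", "0", ""], key=len, reverse=True)  # "" = default route
table = [prefix_entry(p) for p in prefixes]

print(prefixes[tcam_search(table, 0b10110000)])  # 1011
print(prefixes[tcam_search(table, 0b10010000)])  # 10
```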

TCAMs for Address Lookup
- TCAM vendors now provide a mechanism that can reduce power consumption by selectively addressing smaller portions of the TCAM
- The TCAM is divided into a set of blocks; each block is a contiguous, fixed-size chunk of TCAM entries
  - e.g., a 512K-entry TCAM could be divided into 64 blocks of 8K entries each
- When a search command is issued, it is possible to specify which block(s) to search
- This can save power, since the main component of TCAM search power is proportional to the number of entries searched
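
Under the proportional-power assumption above, a rough power proxy is the number of entries in the enabled blocks. This sketch uses the slide's 512K-entry, 64-block example; `entries_searched` and `relative_power` are hypothetical names, and the model ignores the non-proportional components of search power.

```python
TOTAL_ENTRIES = 512 * 1024
NUM_BLOCKS = 64
BLOCK_SIZE = TOTAL_ENTRIES // NUM_BLOCKS  # 8192 entries (8K) per block

def entries_searched(enabled_blocks):
    """Entries compared when only the given block ids are enabled for the search."""
    for blk in enabled_blocks:
        if not 0 <= blk < NUM_BLOCKS:
            raise ValueError(f"no such block: {blk}")
    return len(set(enabled_blocks)) * BLOCK_SIZE

def relative_power(enabled_blocks):
    """Fraction of full-TCAM search power, assuming power scales with entries searched."""
    return entries_searched(enabled_blocks) / TOTAL_ENTRIES

print(BLOCK_SIZE)                    # 8192
print(relative_power({0, 1, 2, 3}))  # 0.0625
```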

Bit Selection Architecture
- Based on the observation that most prefixes in core routing tables are between 16 and 24 bits long
  - over 98% in the authors' datasets
- Put the very short (<16-bit) and very long (>24-bit) prefixes in a set of TCAM blocks that is searched on every lookup
- The remaining prefixes are partitioned into "buckets," one of which is selected by hashing for each lookup
  - each bucket is laid out over one or more TCAM blocks
- In this paper, the hashing function is restricted to using a selected set of input bits as an index

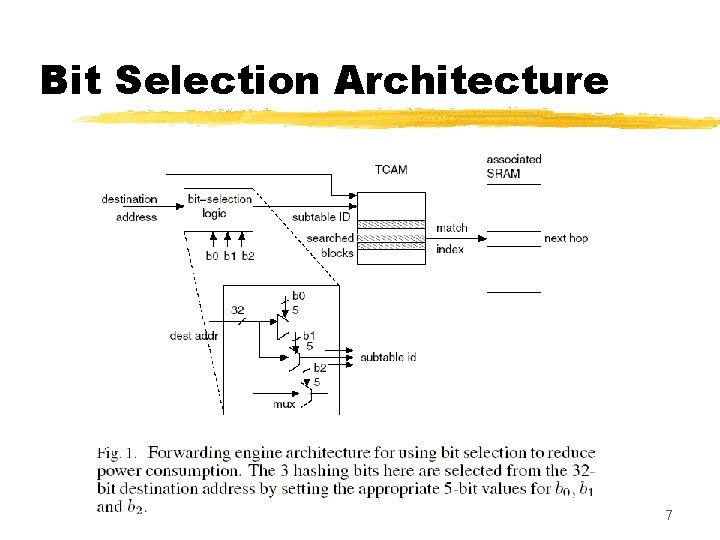

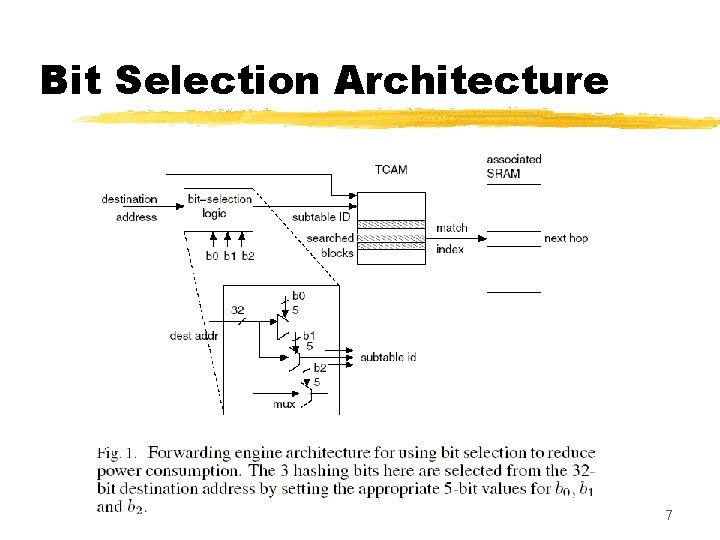

Bit Selection Architecture

Bit Selection Architecture
- A route lookup, then, involves the following:
  - the hashing function (really, bit-selection logic) selects k hashing bits from the destination address; these bits identify the bucket to be searched
  - the blocks holding the very long and very short prefixes are also searched
- The main issues now are:
  - how to select the k hashing bits
    - restrict ourselves to choosing hashing bits from the first 16 bits of the address, to avoid replicating prefixes (every bucketed prefix is at least 16 bits long, so these bits are never X and each prefix falls into exactly one bucket)
  - how to allocate the different buckets among the various TCAM blocks (since bucket size may not be an integral multiple of the TCAM block size)
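
The lookup path can be sketched in Python. Assumptions: addresses and prefixes are 32-bit integers with the prefix bits in the high-order positions, bit positions are numbered 0..31 from the most significant bit, and all function names are hypothetical. The `ValueError` check enforces the first-16-bits restriction.

```python
def bit_at(addr: int, pos: int) -> int:
    """Bit `pos` of a 32-bit address, counting from the most significant bit."""
    return (addr >> (31 - pos)) & 1

def bucket_index(addr: int, hash_bits) -> int:
    """Concatenate the selected bits of `addr` into a bucket number in [0, 2**k)."""
    idx = 0
    for pos in hash_bits:
        idx = (idx << 1) | bit_at(addr, pos)
    return idx

def build_buckets(prefixes, hash_bits):
    """Split (value, length) prefixes into an always-searched set and 2**k buckets."""
    if any(pos >= 16 for pos in hash_bits):
        raise ValueError("hash bits must come from the first 16 bits")
    always = []  # very short (<16) and very long (>24) prefixes
    buckets = [[] for _ in range(1 << len(hash_bits))]
    for value, length in prefixes:
        if 16 <= length <= 24:
            buckets[bucket_index(value, hash_bits)].append((value, length))
        else:
            always.append((value, length))
    return always, buckets

def candidate_prefixes(addr, always, buckets, hash_bits):
    """Prefixes compared in one lookup: the selected bucket plus the always-searched set."""
    return buckets[bucket_index(addr, hash_bits)] + always

def ip(a, b, c, d):
    return (a << 24) | (b << 16) | (c << 8) | d

table = [(ip(10, 1, 0, 0), 16), (ip(10, 2, 3, 0), 24), (0, 0), (ip(10, 2, 3, 128), 25)]
hash_bits = [14, 15]  # the last two bits of the second octet
always, buckets = build_buckets(table, hash_bits)
```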

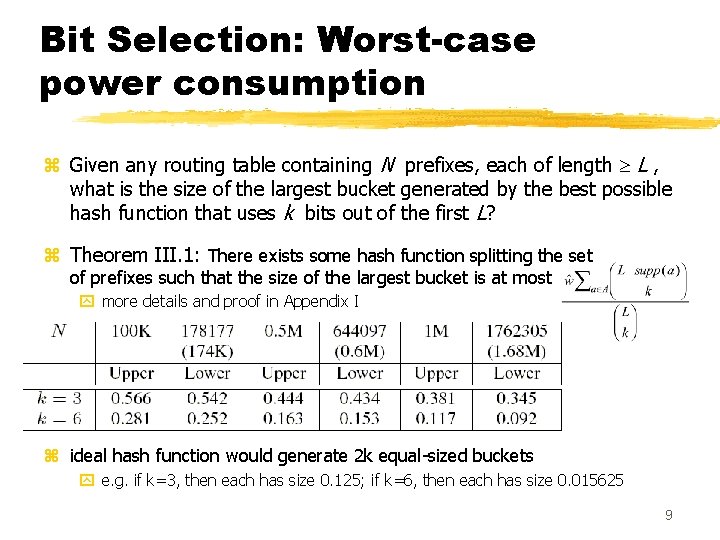

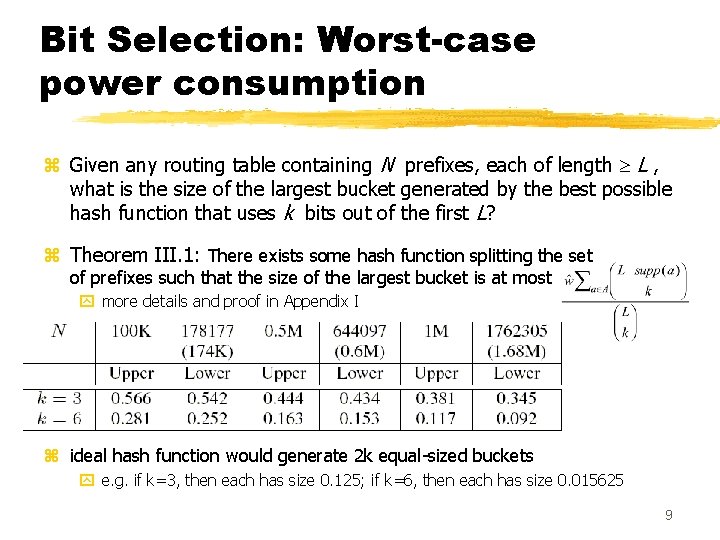

Bit Selection: Worst-case power consumption
- Given any routing table containing N prefixes, each of length L, what is the size of the largest bucket generated by the best possible hash function that uses k bits out of the first L?
- Theorem III.1: There exists some hash function splitting the set of prefixes such that the size of the largest bucket is at most …
  - more details and proof in Appendix I
- An ideal hash function would generate 2^k equal-sized buckets
  - e.g., if k = 3, each bucket holds a fraction 0.125 of the N prefixes; if k = 6, each holds a fraction 0.015625
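
The ideal-split fractions follow from dividing the N prefixes evenly among the 2^k buckets:

$$
c_{\text{ideal}} = \frac{N}{2^{k}}, \qquad \frac{c_{\text{ideal}}}{N} = 2^{-k}
\quad\Longrightarrow\quad
k = 3:\ 2^{-3} = 0.125, \qquad k = 6:\ 2^{-6} = 0.015625 .
$$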

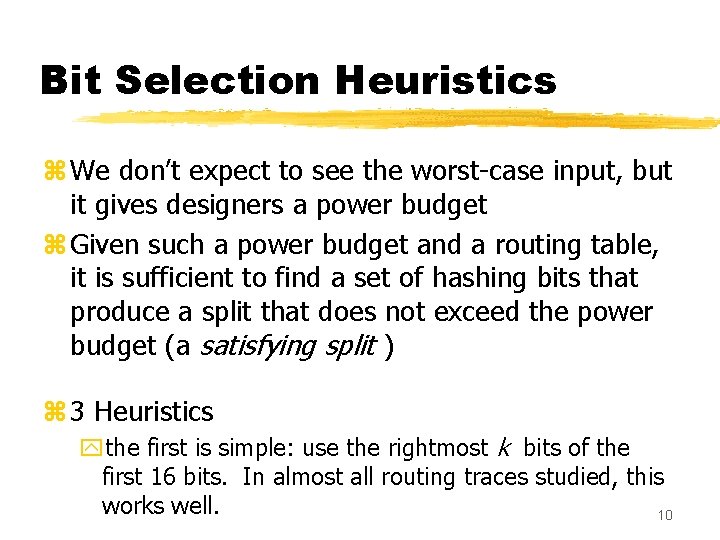

Bit Selection Heuristics
- We don't expect to see the worst-case input, but the worst-case bound gives designers a power budget
- Given such a power budget and a routing table, it suffices to find a set of hashing bits that produces a split that does not exceed the power budget (a satisfying split)
- Three heuristics
  - the first is simple: use the rightmost k bits of the first 16 bits. In almost all routing traces studied, this works well.
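
A sketch of the simple heuristic, assuming the inputs are the 32-bit values of the 16-24-bit prefixes only, and reading "rightmost k of the first 16 bits" as bit positions 16-k through 15, counted from the most significant bit. Helper names are hypothetical.

```python
from collections import Counter

def bucket_index(value: int, hash_bits) -> int:
    """Concatenate the chosen bits (positions counted from the MSB) of `value`."""
    idx = 0
    for pos in hash_bits:
        idx = (idx << 1) | ((value >> (31 - pos)) & 1)
    return idx

def bucket_sizes(values, hash_bits):
    """Sizes of all 2**k buckets produced by `hash_bits` (empty buckets count as 0)."""
    counts = Counter(bucket_index(v, hash_bits) for v in values)
    return [counts[i] for i in range(1 << len(hash_bits))]

def simple_heuristic(values, k, budget):
    """Heuristic 1: the rightmost k of the first 16 bits, if that split meets the budget."""
    bits = list(range(16 - k, 16))
    return bits if max(bucket_sizes(values, bits)) <= budget else None

second_octets = [(10 << 24) | (o << 16) for o in range(4)]  # 10.0/16 .. 10.3/16
print(simple_heuristic(second_octets, 2, budget=1))         # [14, 15]
```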

Bit Selection Heuristics
- Second heuristic: brute-force search over all possible subsets of k bits from the first 16
- Guaranteed to find a satisfying split
- Since it examines all C(16, k) subsets of k bits, its running time is largest for k = 8
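
The brute-force heuristic maps directly onto `itertools.combinations`. In this sketch (hypothetical helper names; 32-bit prefix values, bit positions counted from the most significant bit) it returns the first satisfying subset in lexicographic order. The `math.comb` line shows why k = 8 is the worst case: C(16, k) peaks there.

```python
from collections import Counter
from itertools import combinations
from math import comb

def bucket_index(value: int, hash_bits) -> int:
    idx = 0
    for pos in hash_bits:
        idx = (idx << 1) | ((value >> (31 - pos)) & 1)
    return idx

def bucket_sizes(values, hash_bits):
    counts = Counter(bucket_index(v, hash_bits) for v in values)
    return [counts[i] for i in range(1 << len(hash_bits))]

def brute_force(values, k, budget):
    """Heuristic 2: try every k-subset of the first 16 bit positions."""
    for bits in combinations(range(16), k):
        if max(bucket_sizes(values, bits)) <= budget:
            return list(bits)
    return None  # no k bits from the first 16 meet the budget

# Size of the search space for several k; it is largest at k = 8.
print([comb(16, k) for k in (4, 6, 8, 10)])  # [1820, 8008, 12870, 8008]

# Four values that differ only in bit positions 3 and 7.
vals = [0, 1 << 28, 1 << 24, (1 << 28) | (1 << 24)]
print(brute_force(vals, 2, budget=1))        # [3, 7]
```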

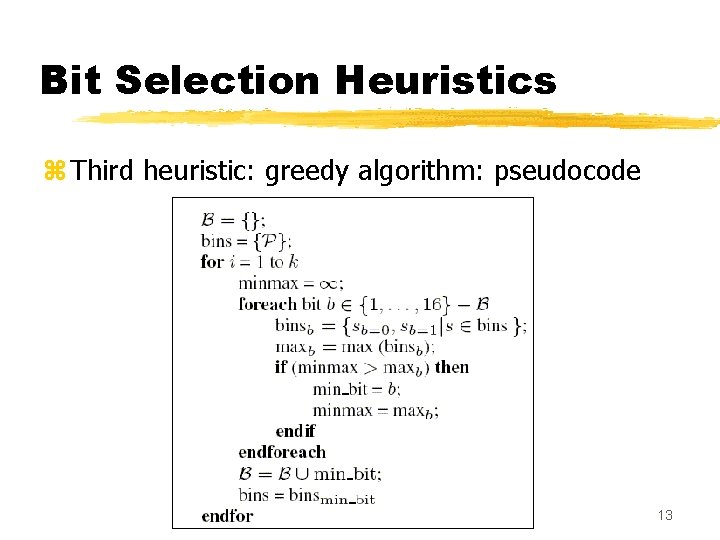

Bit Selection Heuristics
- Third heuristic: a greedy algorithm
  - falls between the simple heuristic and the brute-force one in terms of complexity and accuracy
- To select k hashing bits, the algorithm performs k iterations, selecting one bit per iteration
  - the number of buckets doubles with each iteration
- The goal in each iteration is to select the bit that minimizes the size of the largest bucket produced in that iteration

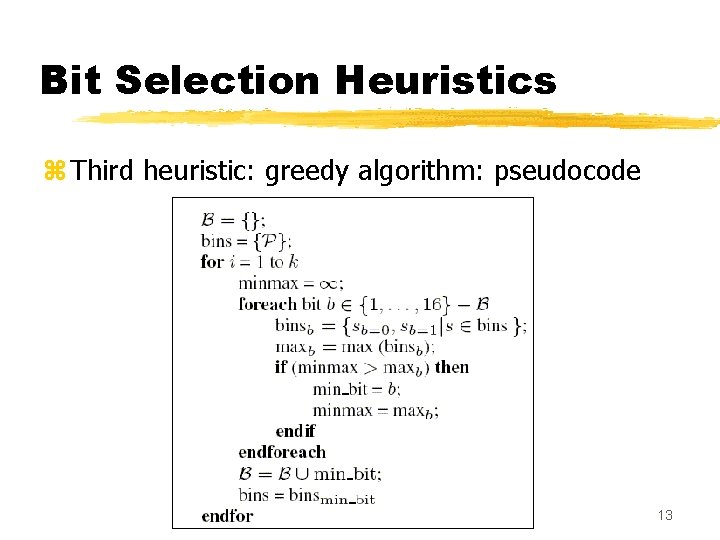

Bit Selection Heuristics
- Third heuristic: greedy algorithm pseudocode
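
A minimal Python sketch of the greedy selection described above, assuming 32-bit prefix values with bit positions counted from the most significant bit. Ties are broken toward the lower bit position; the paper's pseudocode may differ in such details, and all names are hypothetical.

```python
from collections import Counter

def bucket_index(value: int, hash_bits) -> int:
    idx = 0
    for pos in hash_bits:
        idx = (idx << 1) | ((value >> (31 - pos)) & 1)
    return idx

def bucket_sizes(values, hash_bits):
    counts = Counter(bucket_index(v, hash_bits) for v in values)
    return [counts[i] for i in range(1 << len(hash_bits))]

def greedy(values, k, budget):
    """Heuristic 3: add one bit per iteration, each time minimizing the largest bucket."""
    chosen = []
    for _ in range(k):  # the number of buckets doubles each iteration
        candidates = [p for p in range(16) if p not in chosen]
        best = min(candidates, key=lambda p: max(bucket_sizes(values, chosen + [p])))
        chosen.append(best)
    return chosen if max(bucket_sizes(values, chosen)) <= budget else None

vals = [0, 1 << 28, 1 << 24, (1 << 28) | (1 << 24)]  # differ only in bits 3 and 7
print(greedy(vals, 2, budget=1))                     # [3, 7]
```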

Bit Selection Heuristics
- Combining the heuristics to reduce running time (in typical cases):
  - First, try the simple heuristic (use the k rightmost bits), and stop if it succeeds.
  - Otherwise, apply the third heuristic (greedy algorithm), and stop if it succeeds.
  - Otherwise, apply the brute-force heuristic.
- Apply the algorithm again whenever route updates cause any bucket to become too large.
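
The combined strategy is a simple fallback chain. This sketch takes the three heuristics as callables with an assumed signature `(values, k, budget) -> bits or None`; the stand-in heuristics in the usage lines are hypothetical and only record the order in which they are tried.

```python
from typing import Callable, List, Optional, Sequence, Tuple

Heuristic = Callable[[Sequence[int], int, int], Optional[List[int]]]

def choose_hash_bits(values, k, budget, simple: Heuristic, greedy: Heuristic,
                     brute: Heuristic) -> Tuple[Optional[str], Optional[List[int]]]:
    """Try the cheap heuristics first; fall back to costlier ones only on failure."""
    for name, heuristic in (("simple", simple), ("greedy", greedy), ("brute-force", brute)):
        bits = heuristic(values, k, budget)
        if bits is not None:
            return name, bits  # stop at the first satisfying split
    return None, None

calls = []

def fake(name, result):
    """Stand-in heuristic that logs its call and returns a fixed answer."""
    def h(values, k, budget):
        calls.append(name)
        return result
    return h

print(choose_hash_bits([], 2, 10, fake("simple", None), fake("greedy", [3, 7]), fake("brute", [0, 1])))
# ('greedy', [3, 7]); brute force is never called
```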

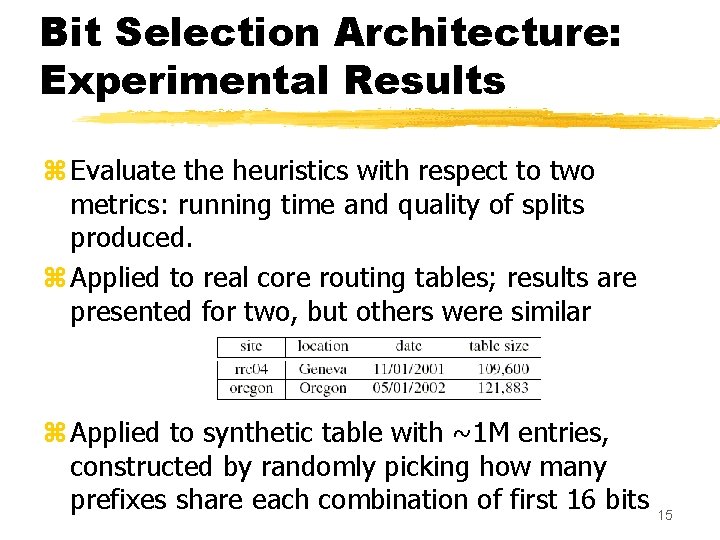

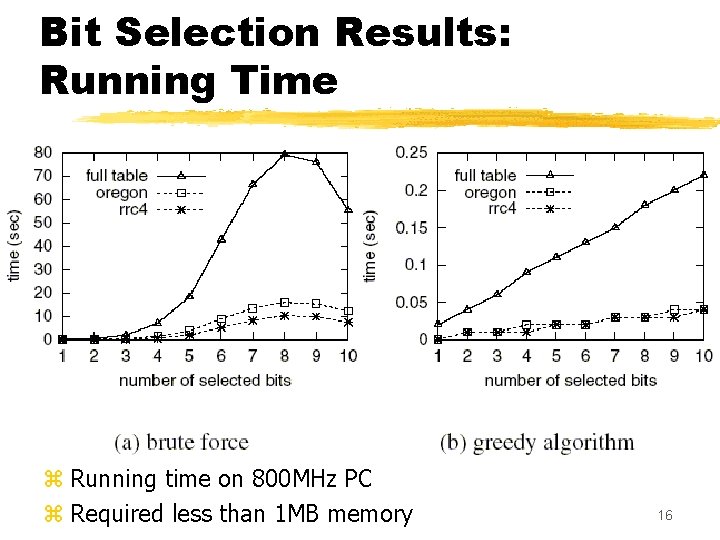

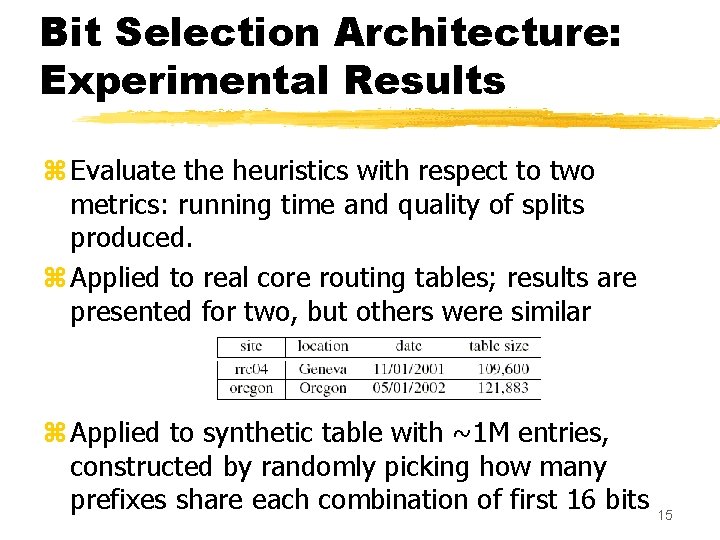

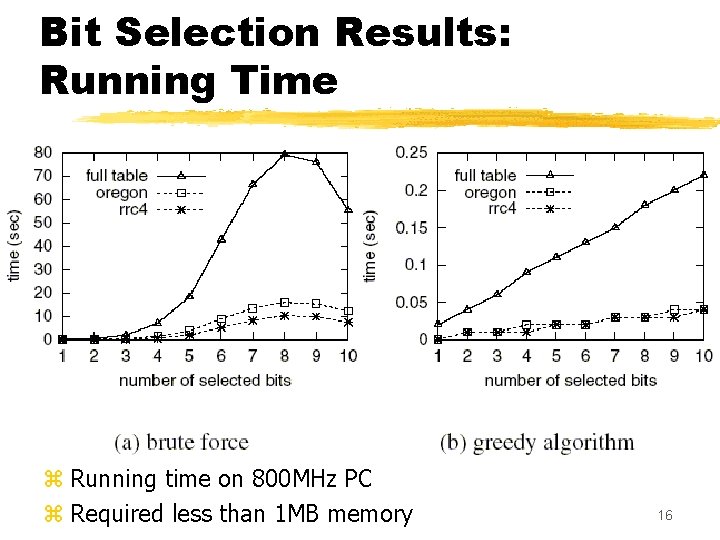

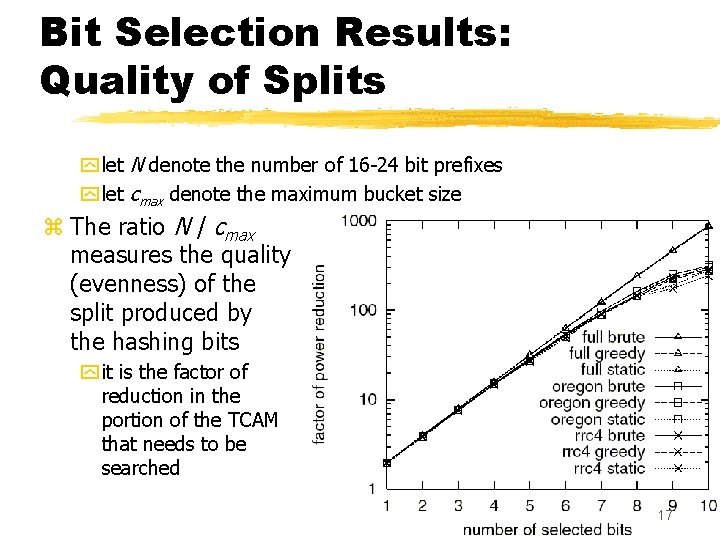

Bit Selection Architecture: Experimental Results
- The heuristics are evaluated on two metrics: running time and quality of the splits produced
- Applied to real core routing tables; results are presented for two, but others were similar
- Applied to a synthetic table with ~1M entries, constructed by randomly picking how many prefixes share each combination of the first 16 bits

Bit Selection Results: Running Time
- Running time on an 800 MHz PC
- Required less than 1 MB of memory

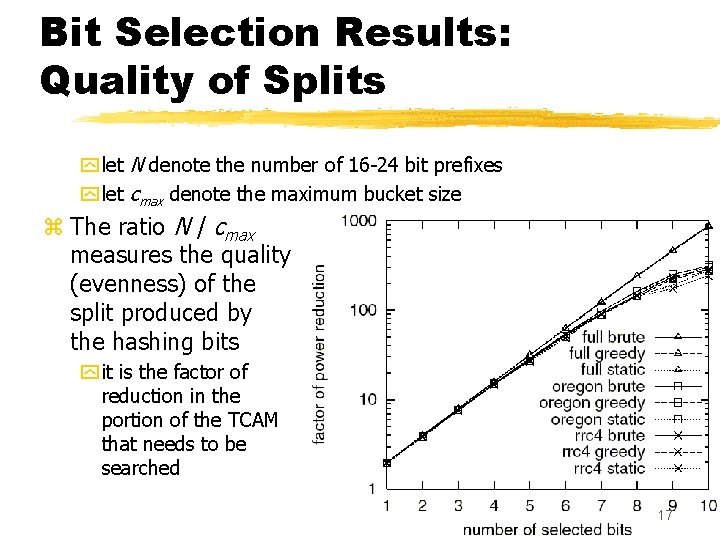

Bit Selection Results: Quality of Splits
- Let N denote the number of 16-24-bit prefixes
- Let cmax denote the maximum bucket size
- The ratio N / cmax measures the quality (evenness) of the split produced by the hashing bits
  - it is the factor by which the portion of the TCAM that must be searched is reduced

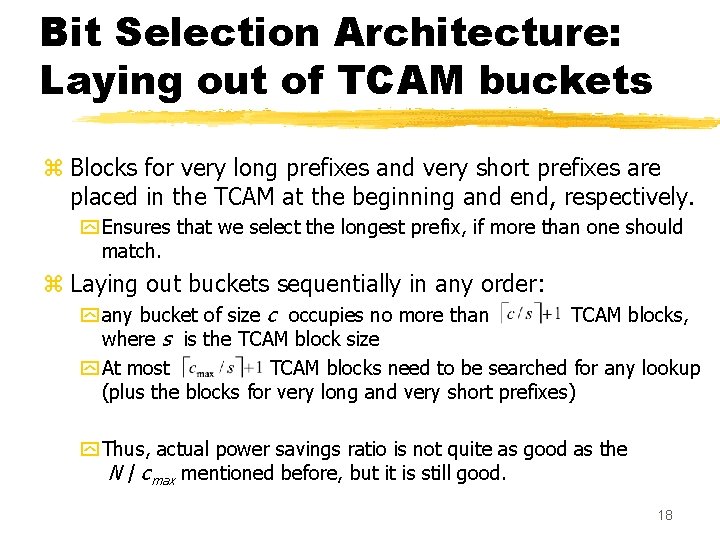

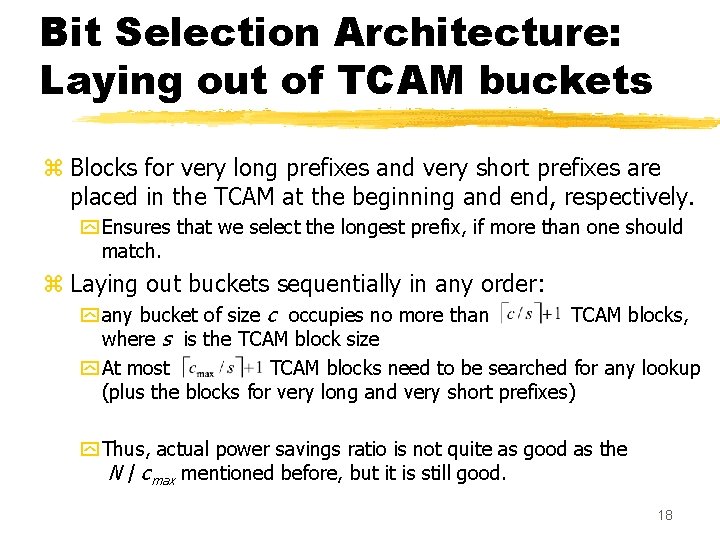

Bit Selection Architecture: Laying out TCAM buckets
- Blocks for very long prefixes and very short prefixes are placed at the beginning and end of the TCAM, respectively
  - since the lowest-address match is returned, this ensures that the longest prefix is selected if more than one matches
- Laying out buckets sequentially, in any order:
  - any bucket of size c occupies no more than ⌈c/s⌉ + 1 TCAM blocks, where s is the TCAM block size
  - at most ⌈cmax/s⌉ + 1 TCAM blocks need to be searched for any lookup (plus the blocks for very long and very short prefixes)
  - thus, the actual power savings ratio is not quite as good as the N / cmax mentioned before, but it is still good
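
A small Python sketch of the sequential layout shows why a bucket can straddle one extra block and how block granularity erodes the ideal N / cmax ratio. Assumptions: buckets are placed back to back from entry `start`, only the bucket region is modeled (the always-searched blocks are left out), and the names and sizes are hypothetical.

```python
import math

def sequential_layout(bucket_sizes, s, start=0):
    """Place buckets back to back from TCAM entry `start` with block size s.

    Returns, per bucket, the (first_block, last_block) it touches, or None if empty.
    """
    spans, offset = [], start
    for c in bucket_sizes:
        if c == 0:
            spans.append(None)  # an empty bucket occupies no blocks
            continue
        spans.append((offset // s, (offset + c - 1) // s))
        offset += c
    return spans

def blocks_searched(bucket_sizes, s, start=0):
    """Largest number of bucket blocks enabled for any single lookup."""
    spans = sequential_layout(bucket_sizes, s, start)
    return max(last - first + 1 for first, last in filter(None, spans))

sizes, s = [5, 6, 3], 4
for c, (first, last) in zip(sizes, sequential_layout(sizes, s)):
    assert last - first + 1 <= math.ceil(c / s) + 1  # the per-bucket bound
N, cmax = sum(sizes), max(sizes)
print(N / cmax)                             # ideal reduction, about 2.33
print(N / (blocks_searched(sizes, s) * s))  # block-granular reduction: 1.75
```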

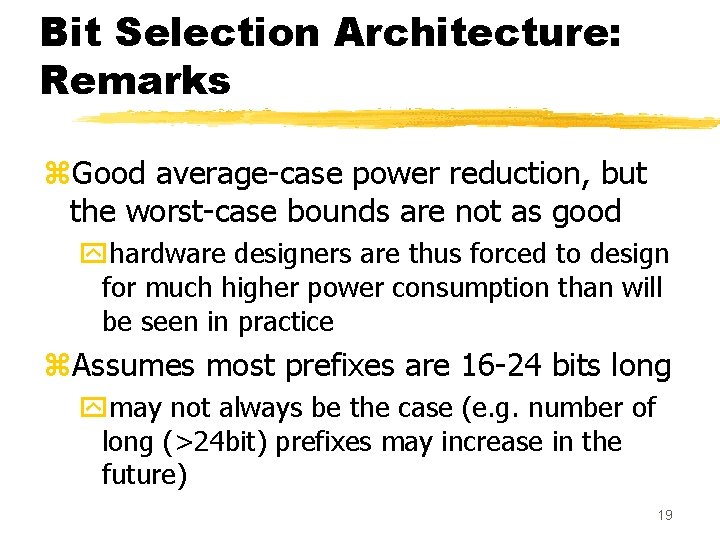

Bit Selection Architecture: Remarks
- Good average-case power reduction, but the worst-case bounds are not as good
  - hardware designers are thus forced to design for much higher power consumption than will be seen in practice
- Assumes most prefixes are 16-24 bits long
  - may not always be the case (e.g., the number of long (>24-bit) prefixes may increase in the future)

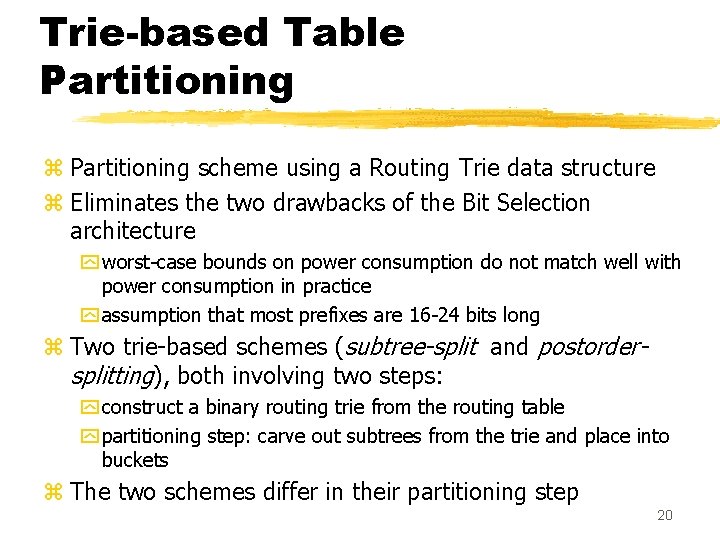

Trie-based Table Partitioning
- A partitioning scheme using a routing trie data structure
- Eliminates the two drawbacks of the Bit Selection architecture:
  - worst-case bounds on power consumption that do not match well with power consumption in practice
  - the assumption that most prefixes are 16-24 bits long
- Two trie-based schemes (subtree-split and postorder-splitting), both involving two steps:
  - construct a binary routing trie from the routing table
  - partitioning step: carve out subtrees from the trie and place them into buckets
- The two schemes differ in their partitioning step

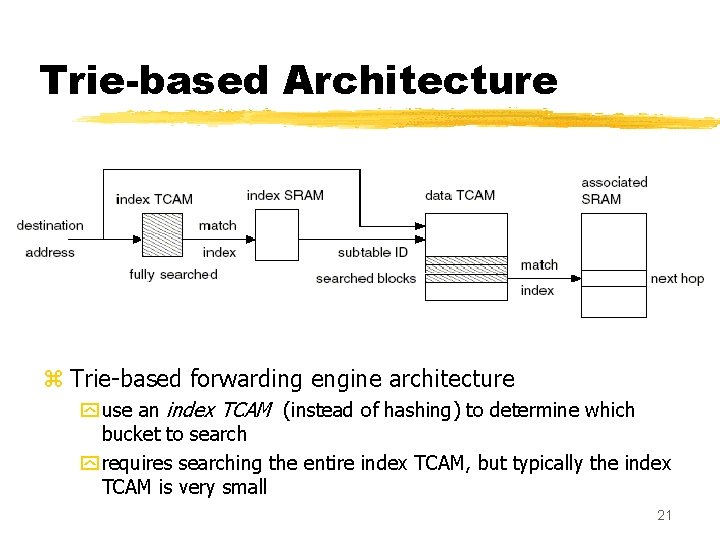

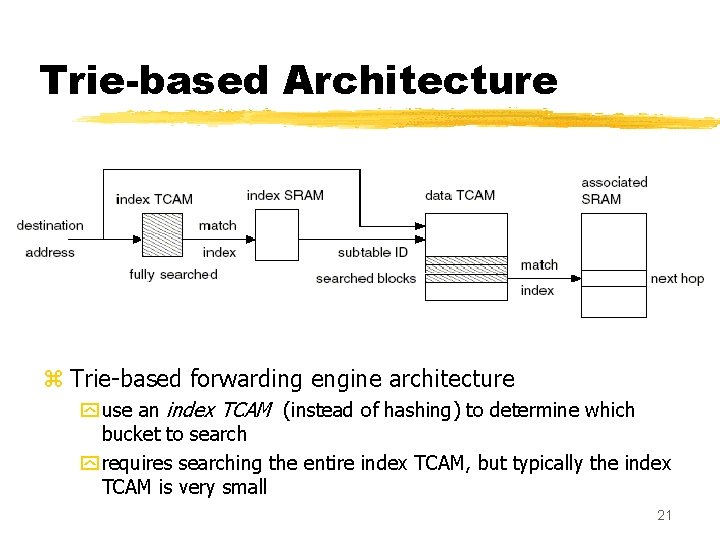

Trie-based Architecture
- Trie-based forwarding engine architecture
  - uses an index TCAM (instead of hashing) to determine which bucket to search
  - requires searching the entire index TCAM, but the index TCAM is typically very small
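
The two-stage lookup can be modeled with bit-string prefixes and first-match search. The routing table, the carving into two buckets, and the names `first_match` and `two_stage_lookup` are hypothetical; the point is that only the small index plus one bucket are searched.

```python
def first_match(entries, addr):
    """TCAM-style search over (prefix, payload) pairs: payload of the first match."""
    for prefix, payload in entries:
        if addr.startswith(prefix):
            return payload
    return None

def two_stage_lookup(index_tcam, buckets, addr):
    """Search the whole (small) index TCAM, then only the one bucket it selects."""
    bucket_id = first_match(index_tcam, addr)
    if bucket_id is None:
        return None
    return first_match(buckets[bucket_id], addr)

# Routes * -> P0, 0 -> P1, 01 -> P2, 1 -> P3, 11 -> P4; the subtree under "0"
# is carved into bucket 0 and everything else stays in bucket 1.
buckets = [
    [("01", "P2"), ("0", "P1")],              # longest prefixes first
    [("11", "P4"), ("1", "P3"), ("", "P0")],
]
index_tcam = [("0", 0), ("", 1)]              # more specific index entry first
print(two_stage_lookup(index_tcam, buckets, "0110"))  # P2
```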

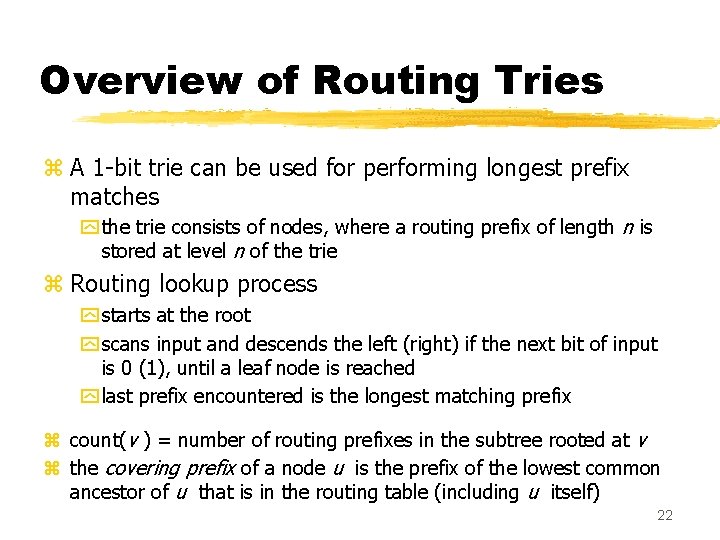

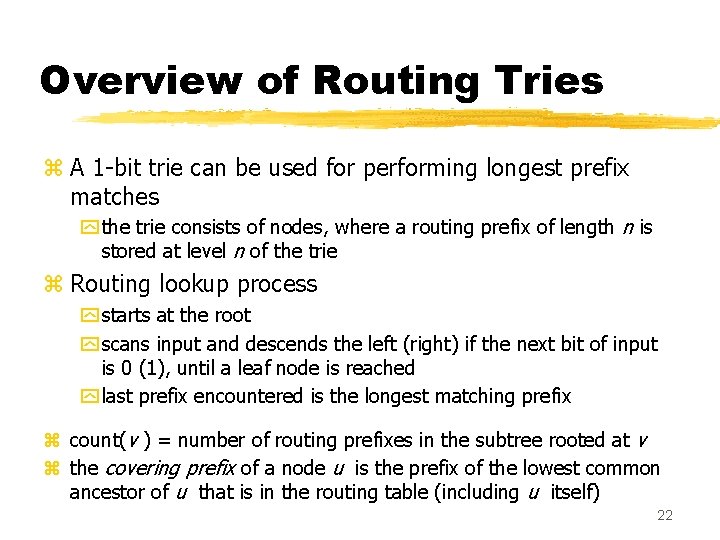

Overview of Routing Tries
- A 1-bit trie can be used for performing longest prefix matches
  - the trie consists of nodes, where a routing prefix of length n is stored at level n of the trie
- Routing lookup process
  - starts at the root
  - scans the input and descends to the left (right) child if the next bit of input is 0 (1), until a leaf node is reached or the needed child does not exist
  - the last prefix encountered is the longest matching prefix
- count(v) = number of routing prefixes in the subtree rooted at v
- the covering prefix of a node u is the prefix of the deepest ancestor of u (including u itself) that is in the routing table
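
A minimal 1-bit trie in Python, assuming routes are given as bit strings; the class and function names are hypothetical. Note that the covering prefix of a node is just the longest match of that node's own prefix, since it is the deepest route on the root-to-node path.

```python
class TrieNode:
    def __init__(self, prefix=""):
        self.prefix = prefix          # bit string spelling the path from the root
        self.in_table = False         # True if this prefix is a route
        self.children = [None, None]  # [0-child, 1-child]

def insert(root, route):
    node = root
    for depth, bit in enumerate(route):
        b = int(bit)
        if node.children[b] is None:
            node.children[b] = TrieNode(route[: depth + 1])
        node = node.children[b]
    node.in_table = True

def longest_match(root, addr):
    """Walk down from the root; the last route seen is the longest matching prefix."""
    node, best, i = root, None, 0
    while node is not None:
        if node.in_table:
            best = node.prefix
        if i == len(addr):
            break
        node = node.children[int(addr[i])]
        i += 1
    return best

def count(node):
    """count(v): number of routes in the subtree rooted at v."""
    if node is None:
        return 0
    return int(node.in_table) + count(node.children[0]) + count(node.children[1])

def covering_prefix(root, node):
    """Deepest route on the root-to-node path, node included (None if there is none)."""
    return longest_match(root, node.prefix)

root = TrieNode()
for r in ["0", "01", "011", "0101", "1", "10"]:
    insert(root, r)
print(longest_match(root, "0110"))  # 011
```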

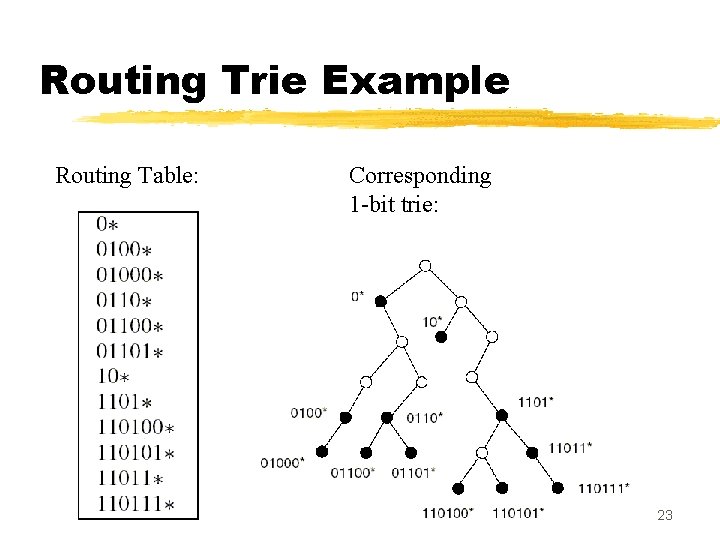

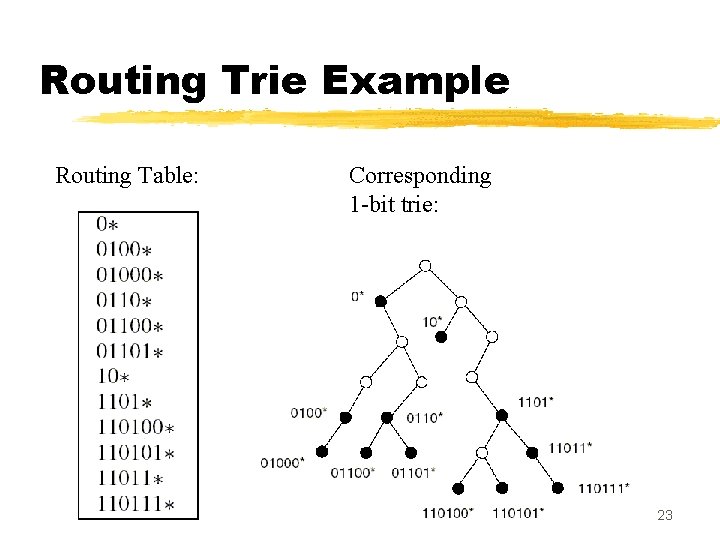

Routing Trie Example
- Routing table:
- Corresponding 1-bit trie:

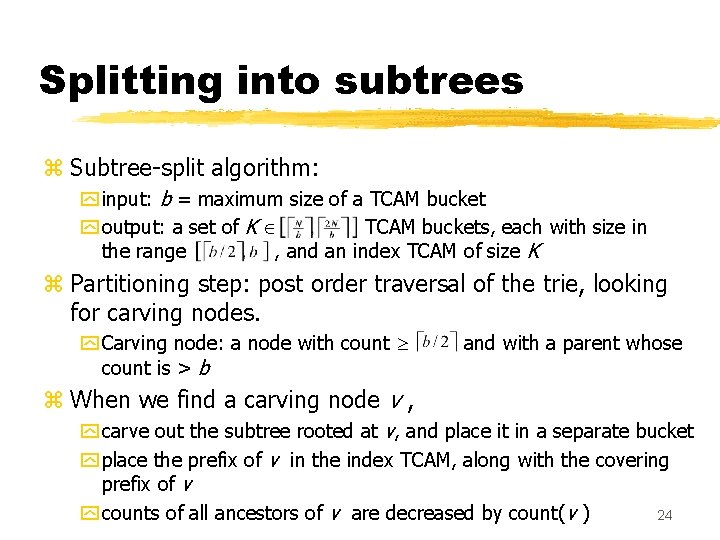

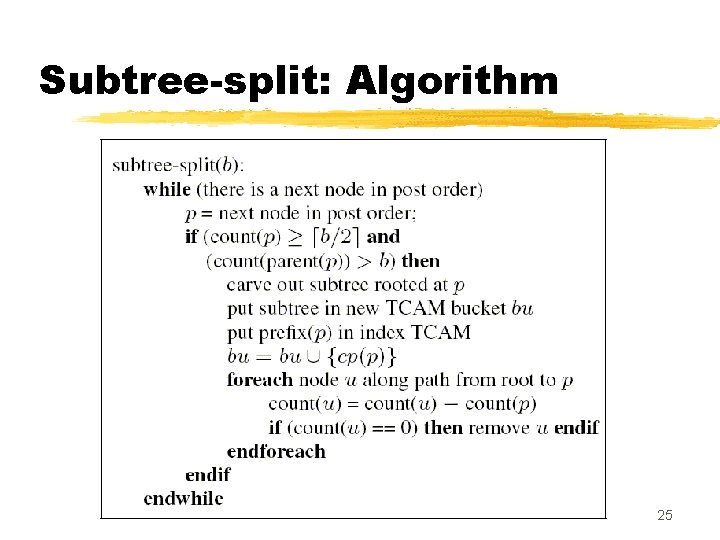

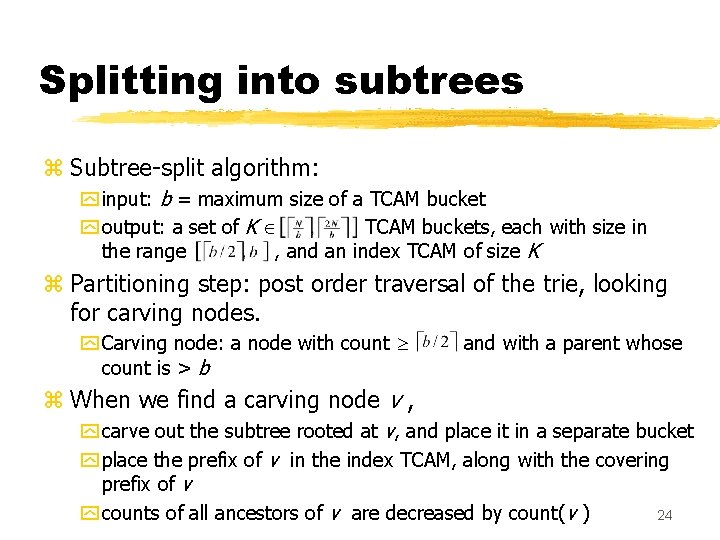

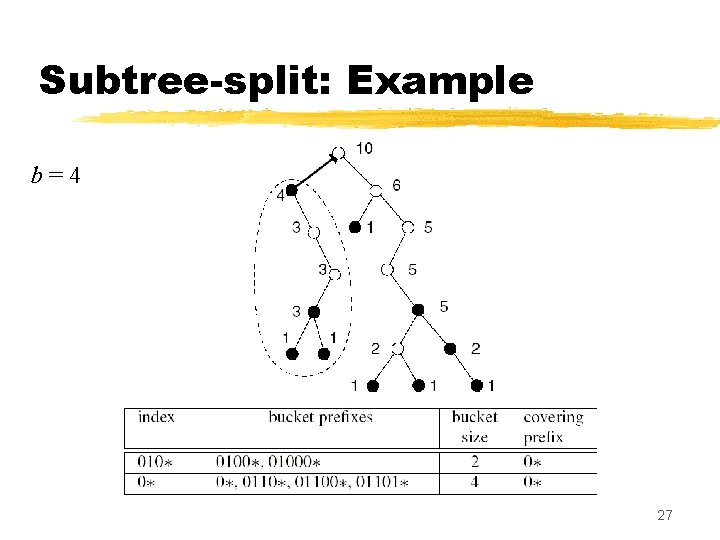

Splitting into subtrees
- Subtree-split algorithm:
  - input: b = maximum size of a TCAM bucket
  - output: a set of K TCAM buckets, each with size in the range [⌈b/2⌉, b], and an index TCAM of size K
- Partitioning step: post-order traversal of the trie, looking for carving nodes
  - carving node: a node v with count(v) ≥ ⌈b/2⌉ whose parent has count > b
- When we find a carving node v:
  - carve out the subtree rooted at v and place it in a separate bucket
  - place the prefix of v in the index TCAM, and add the covering prefix of v to the bucket (so that a lookup matching no prefix inside the subtree still returns the correct longest match)
  - decrease the counts of all ancestors of v by count(v)
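
The following Python sketch follows the subtree-split description above on a small trie of bit-string routes. Assumptions: index entries are emitted in carving (post-order) order, so deeper carving nodes are matched first, and whatever remains at the root after the traversal forms the last bucket. It is an illustration, not the authors' code; all names are hypothetical.

```python
import math

class Node:
    def __init__(self, prefix, parent=None):
        self.prefix, self.parent = prefix, parent
        self.children = [None, None]
        self.in_table = False  # is this node's prefix a route?
        self.carved = False    # has this subtree been moved into a bucket?
        self.count = 0         # routes in this subtree not yet carved out

def build_trie(routes):
    root = Node("")
    for r in routes:
        node = root
        for i, bit in enumerate(r):
            b = int(bit)
            if node.children[b] is None:
                node.children[b] = Node(r[: i + 1], node)
            node = node.children[b]
        node.in_table = True

    def init_counts(n):
        n.count = int(n.in_table) + sum(init_counts(c) for c in n.children if c is not None)
        return n.count

    init_counts(root)
    return root

def subtree_split(routes, b):
    """Return (index_tcam, buckets); index entries are (prefix, bucket_id) in TCAM order."""
    root = build_trie(routes)
    index, buckets = [], []

    def collect(n, out):  # routes under n, skipping already-carved subtrees
        if n.in_table:
            out.append(n.prefix)
        for c in n.children:
            if c is not None and not c.carved:
                collect(c, out)

    def carve(v):
        bucket = []
        collect(v, bucket)
        cover = v
        while cover is not None and not cover.in_table:  # covering prefix of v
            cover = cover.parent
        if cover is not None and cover.prefix not in bucket:
            bucket.append(cover.prefix)
        bucket.sort(key=len, reverse=True)      # longest first, for first-match search
        index.append((v.prefix, len(buckets)))  # post-order: deeper entries come first
        buckets.append(bucket)
        v.carved = True
        a = v.parent
        while a is not None:  # every ancestor loses count(v) routes
            a.count -= v.count
            a = a.parent
        v.count = 0

    def visit(v):  # post-order traversal
        for c in v.children:
            if c is not None:
                visit(c)
        if v.parent is not None and v.count >= math.ceil(b / 2) and v.parent.count > b:
            carve(v)

    visit(root)
    if root.count > 0:  # leftovers form the last bucket
        carve(root)
    return index, buckets

def lookup(index, buckets, addr):
    """First matching index entry selects the bucket; first match in it is the answer."""
    for prefix, bucket_id in index:
        if addr.startswith(prefix):
            return next((r for r in buckets[bucket_id] if addr.startswith(r)), None)
    return None

routes = ["", "0", "00", "000", "001", "01", "010", "1", "10", "100", "101", "11", "110", "1101"]
index, buckets = subtree_split(routes, b=4)
print(lookup(index, buckets, "01011"))  # 010
```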

Subtree-split: Algorithm

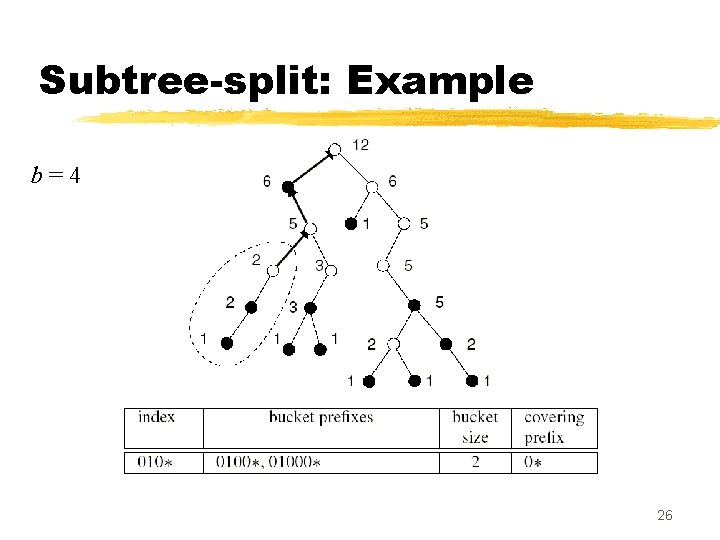

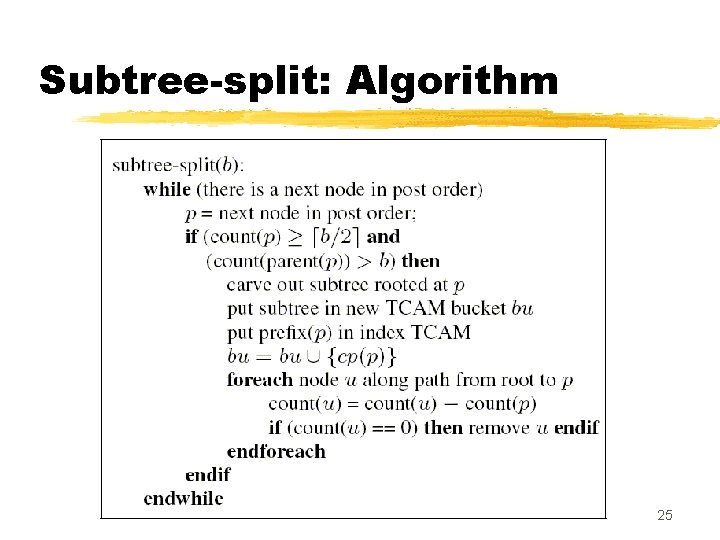

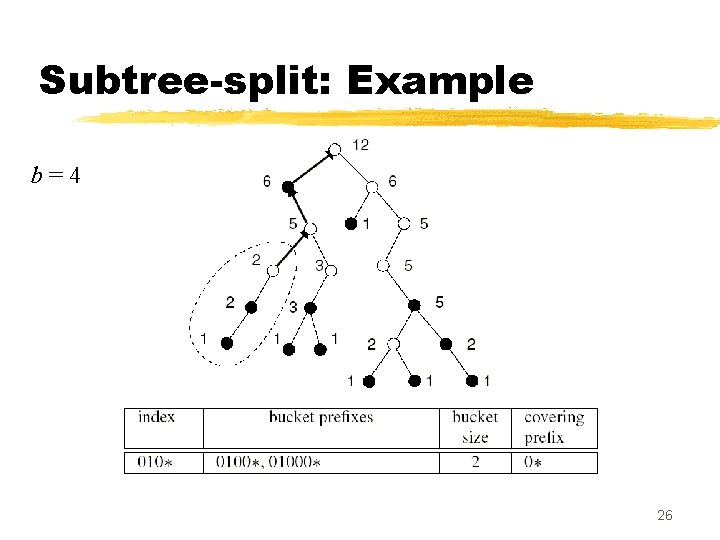

Subtree-split: Example (b = 4)

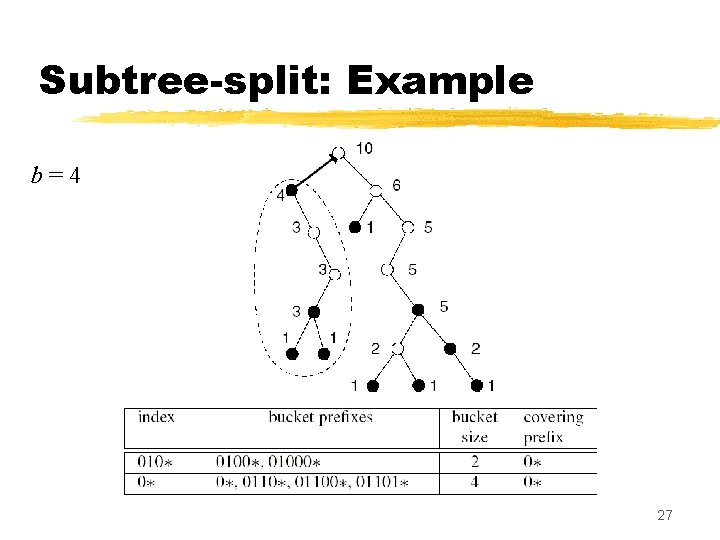

Subtree-split: Example (b = 4)

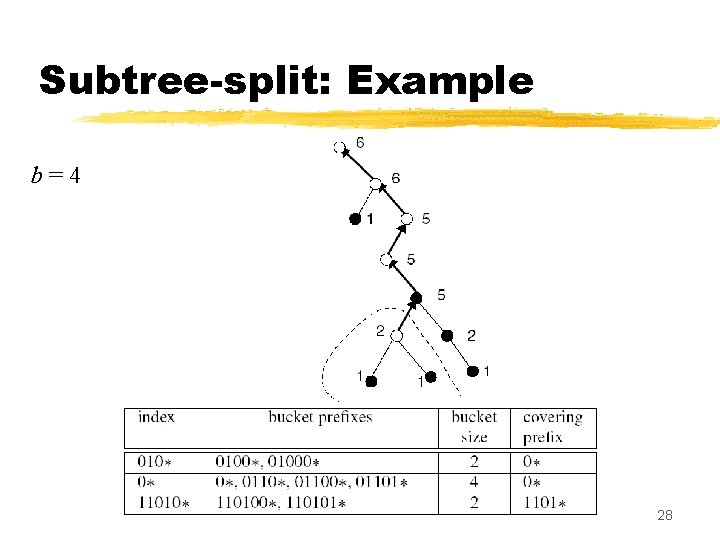

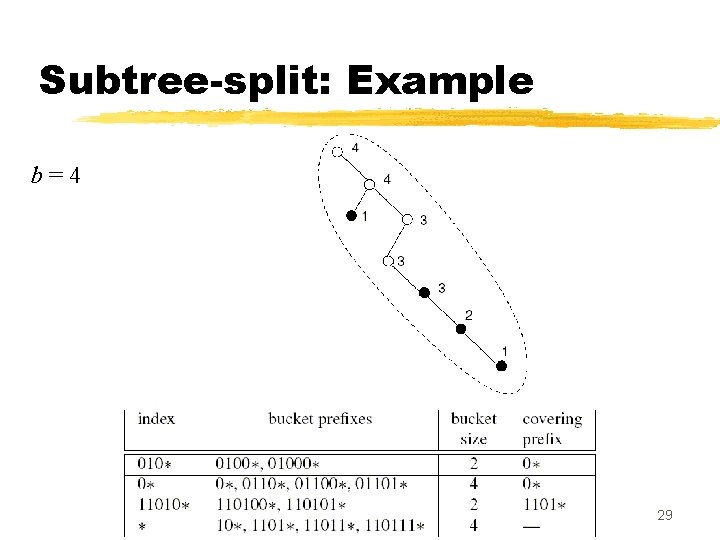

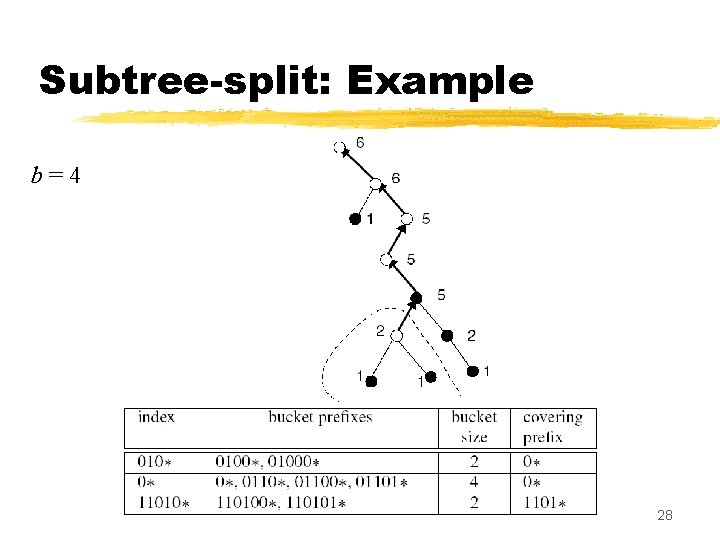

Subtree-split: Example (b = 4)

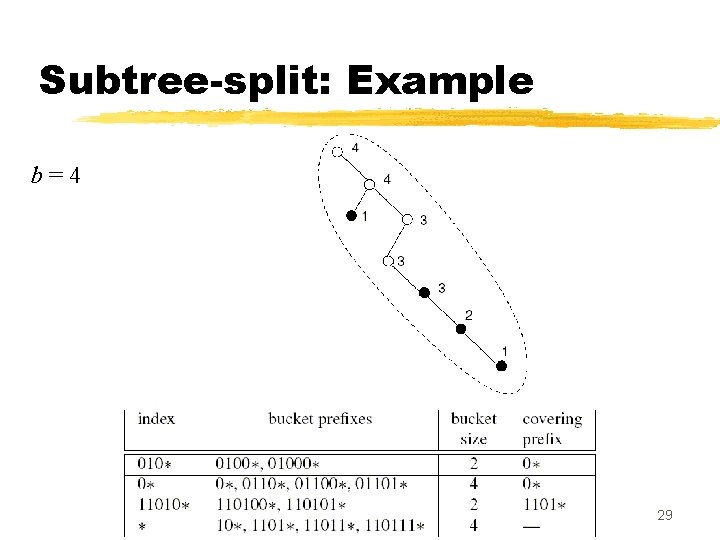

Subtree-split: Example (b = 4)

Subtree-split: Remarks
- Subtree-split creates buckets whose sizes range from ⌈b/2⌉ to b (except the last, which ranges from 1 to b)
  - at most one covering prefix is added to each bucket
- The total number of buckets created ranges from N/b to 2N/b; each bucket results in one entry in the index TCAM
- Using subtree-split in a TCAM with K buckets (taking b = 2N/K, so that at most K buckets are created), at most K + 2N/K prefixes are searched from the index and data TCAMs during any lookup
- Total complexity of the subtree-split algorithm is O(N + NW/b), where W is the length of the longest prefix
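
Treating K as a continuous variable, the lookup bound above can be minimized directly. This is a back-of-the-envelope illustration derived from the stated bound, not a figure reported in the paper:

$$
S(K) = K + \frac{2N}{K}, \qquad S'(K) = 1 - \frac{2N}{K^{2}} = 0
\;\Longrightarrow\; K^{*} = \sqrt{2N}, \qquad S(K^{*}) = 2\sqrt{2N} .
$$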

Post-order splitting
- Partitions the table into buckets of exactly b prefixes
  - an improvement over subtree-split, where the smallest and largest bucket sizes can differ by a factor of 2
  - this comes at the cost of more entries in the index TCAM
- Partitioning step: post-order traversal of the trie, looking for subtrees to carve out; unlike subtree-split, however,
- buckets are made from collections of subtrees, rather than from a single subtree
  - this is because the trie may not contain N/b subtrees of exactly b prefixes each

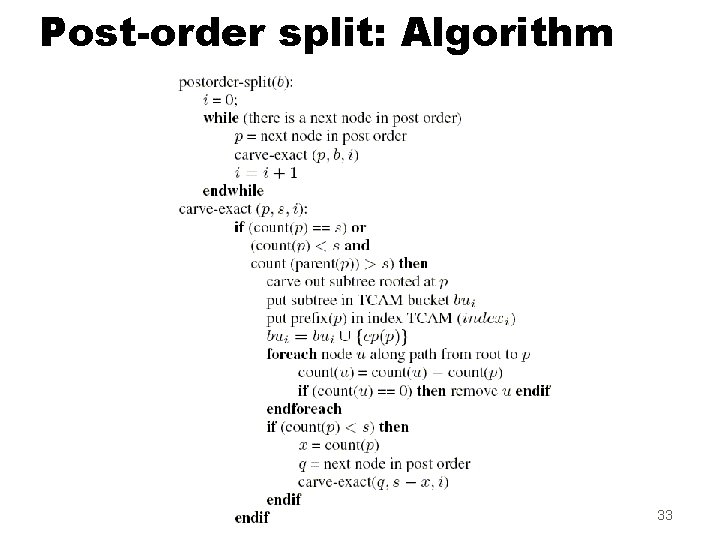

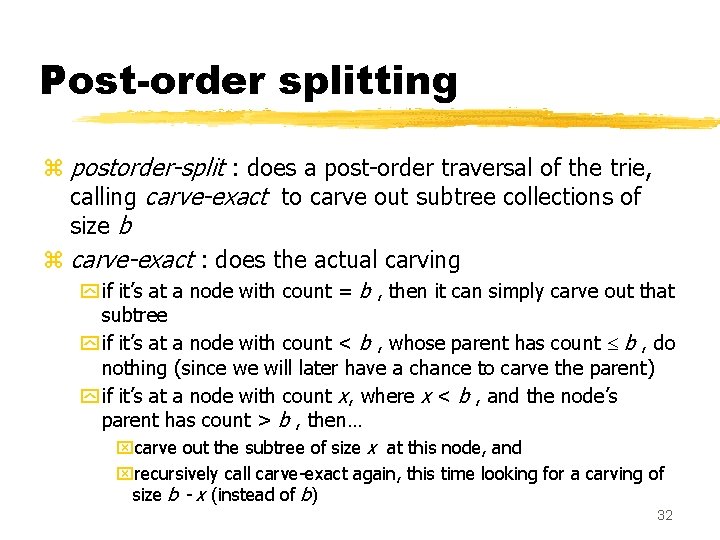

Post-order splitting
- postorder-split: performs a post-order traversal of the trie, calling carve-exact to carve out subtree collections of size b
- carve-exact: does the actual carving
  - at a node with count = b: simply carve out that subtree
  - at a node with count < b whose parent has count ≤ b: do nothing (we will later have a chance to carve the parent)
  - at a node with count x < b whose parent has count > b:
    - carve out the subtree of size x at this node, and
    - recursively call carve-exact again, this time looking for a carving of size b - x (instead of b)
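
One way to see why every bucket can hold exactly b prefixes is to list the routes in trie post-order and cut that sequence into consecutive runs of b. The sketch below assumes that postorder-split's buckets correspond to such runs; it is a simplification, not the carve-exact procedure, and it omits the index-TCAM entries and covering prefixes that carve-exact also produces. Names are hypothetical.

```python
def postorder_routes(routes):
    """Routes in trie post-order: the 0-branch, then the 1-branch, then the node itself."""
    nodes = {r[:i] for r in routes for i in range(len(r) + 1)}  # every trie node
    table, order = set(routes), []

    def visit(p):
        for bit in "01":
            if p + bit in nodes:
                visit(p + bit)
        if p in table:
            order.append(p)

    visit("")
    return order

def postorder_buckets(routes, b):
    """Cut the post-order route sequence into runs of b (the last run may be shorter)."""
    order = postorder_routes(routes)
    return [order[i:i + b] for i in range(0, len(order), b)]

routes = ["", "0", "00", "000", "001", "01", "010",
          "1", "10", "100", "101", "11", "110", "1101"]
for bucket in postorder_buckets(routes, 4):
    print(bucket)
```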

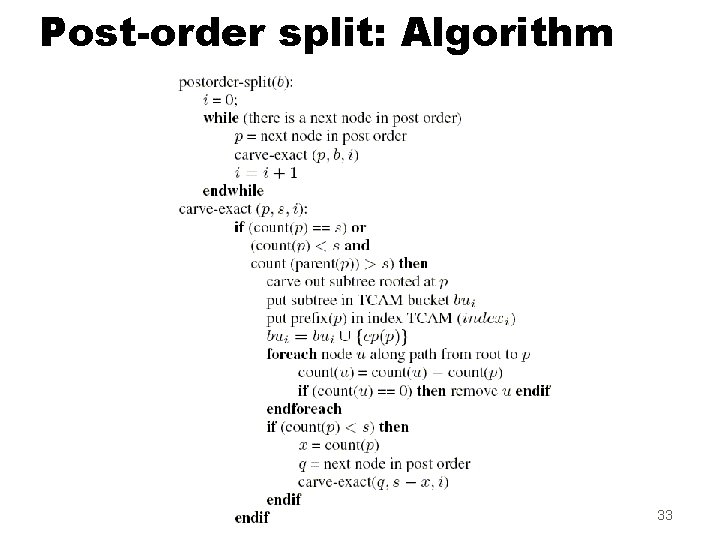

Post-order split: Algorithm

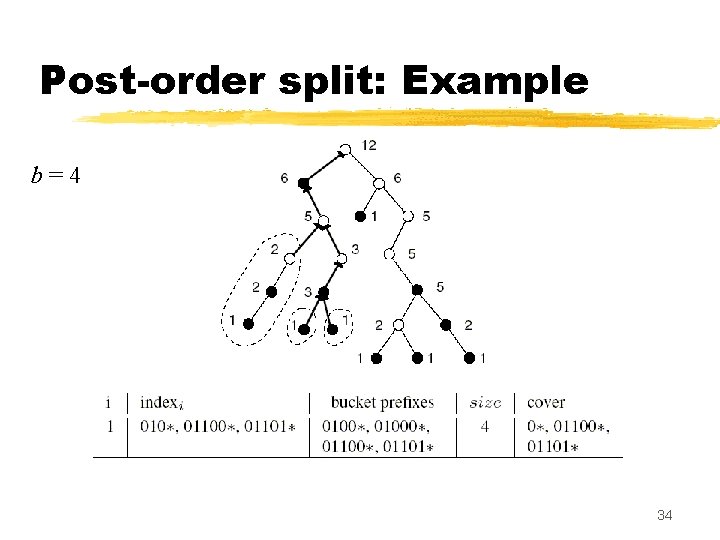

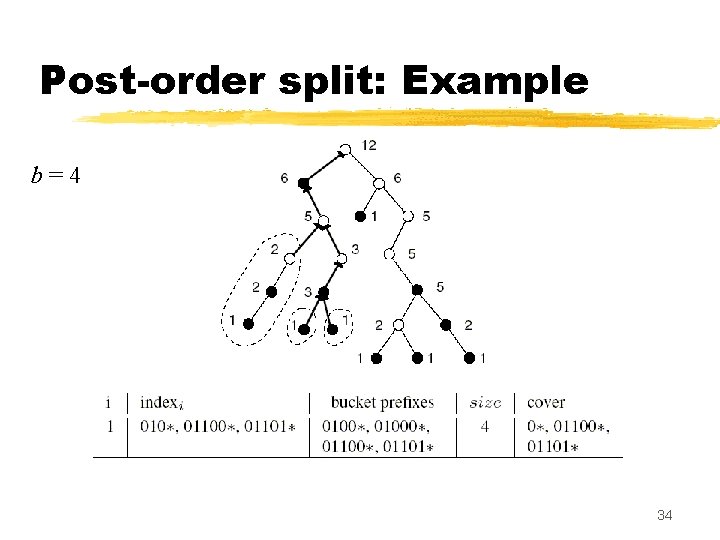

Post-order split: Example (b = 4)

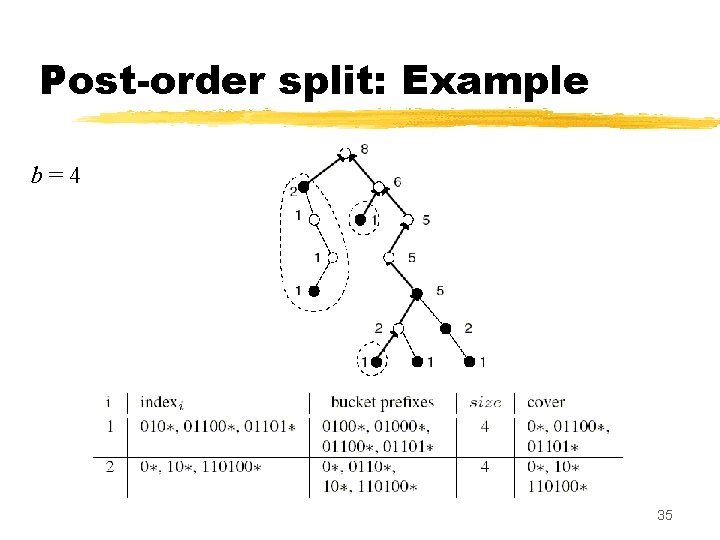

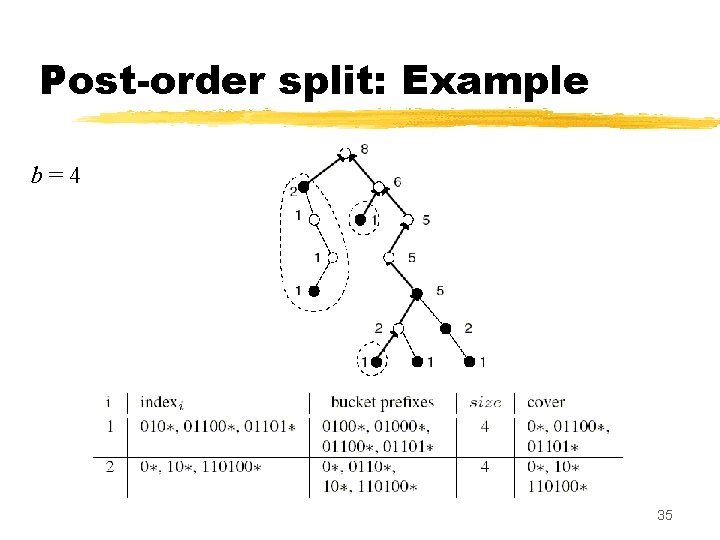

Post-order split: Example (b = 4)

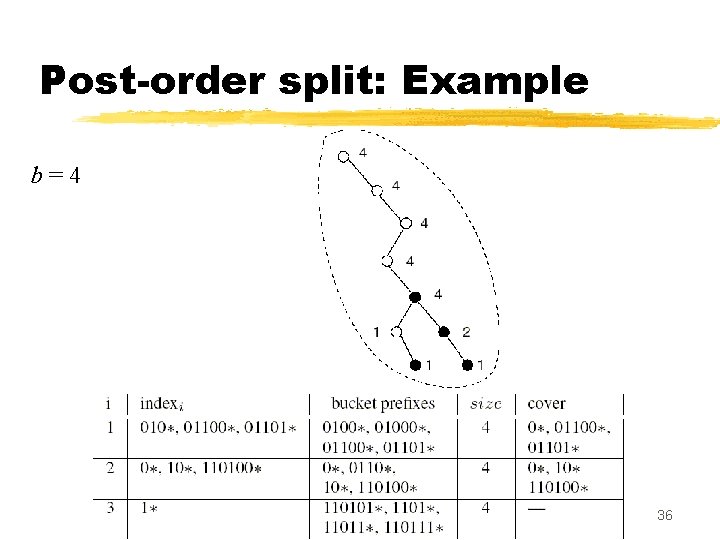

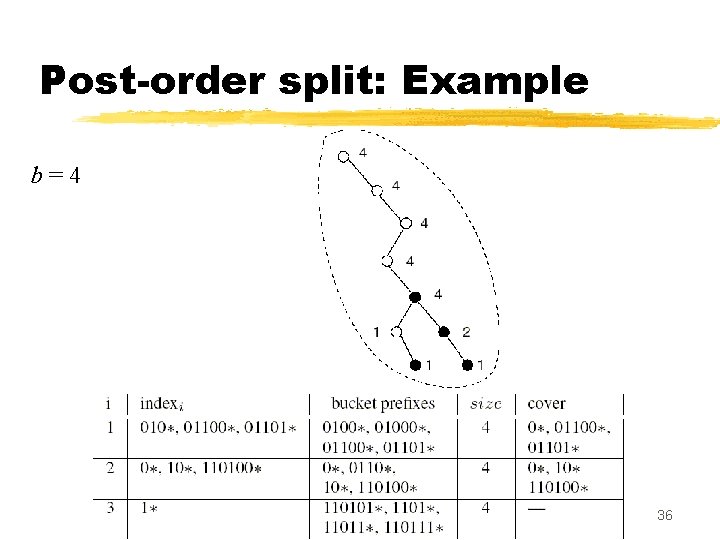

Post-order split: Example (b = 4)

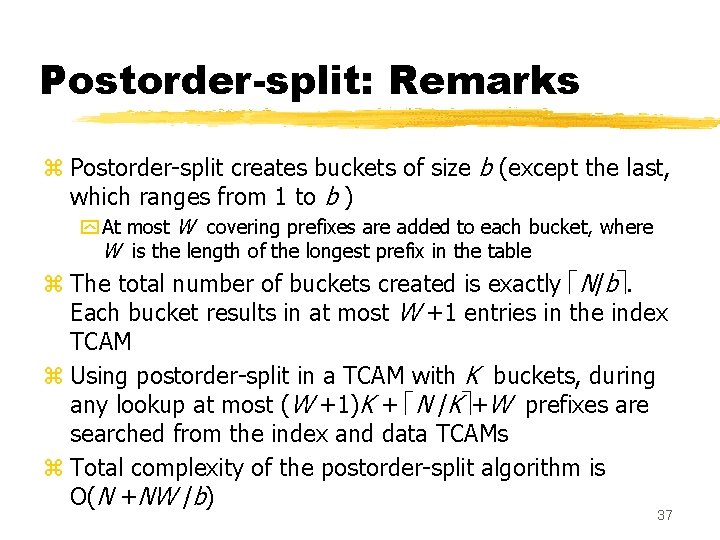

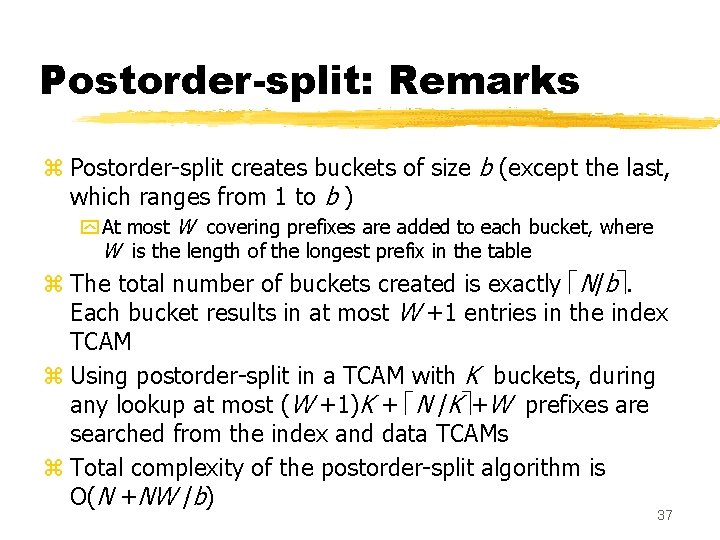

Postorder-split: Remarks
- Postorder-split creates buckets of size b (except the last, which ranges from 1 to b)
  - at most W covering prefixes are added to each bucket, where W is the length of the longest prefix in the table
- The total number of buckets created is exactly ⌈N/b⌉; each bucket results in at most W + 1 entries in the index TCAM
- Using postorder-split in a TCAM with K buckets, at most (W + 1)K + N/K + W prefixes are searched from the index and data TCAMs during any lookup
- Total complexity of the postorder-split algorithm is O(N + NW/b)
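
The same continuous minimization applied to the postorder-split bound (again an illustration derived from the stated bound, not a result from the paper):

$$
S(K) = (W+1)K + \frac{N}{K} + W, \qquad S'(K) = (W+1) - \frac{N}{K^{2}} = 0
\;\Longrightarrow\; K^{*} = \sqrt{\frac{N}{W+1}}, \qquad S(K^{*}) = 2\sqrt{(W+1)N} + W .
$$

Compared with subtree-split's 2√(2N), this worst-case bound is larger by roughly a factor of √((W+1)/2), which reflects the extra index entries per bucket; it says nothing about typical tables.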

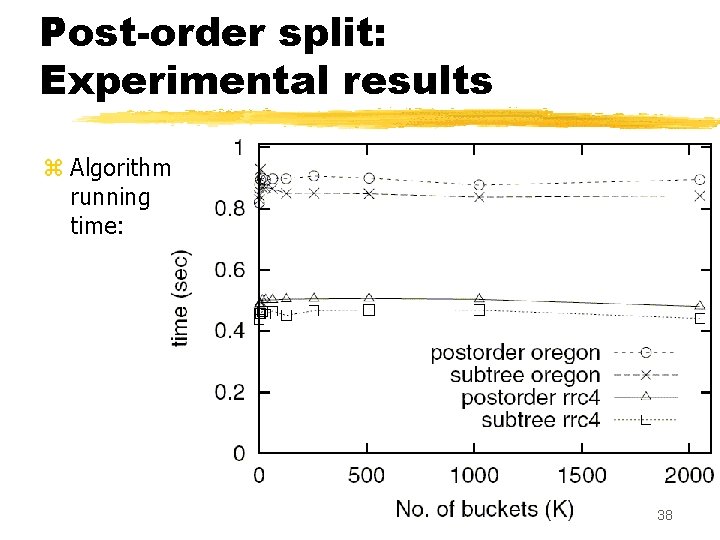

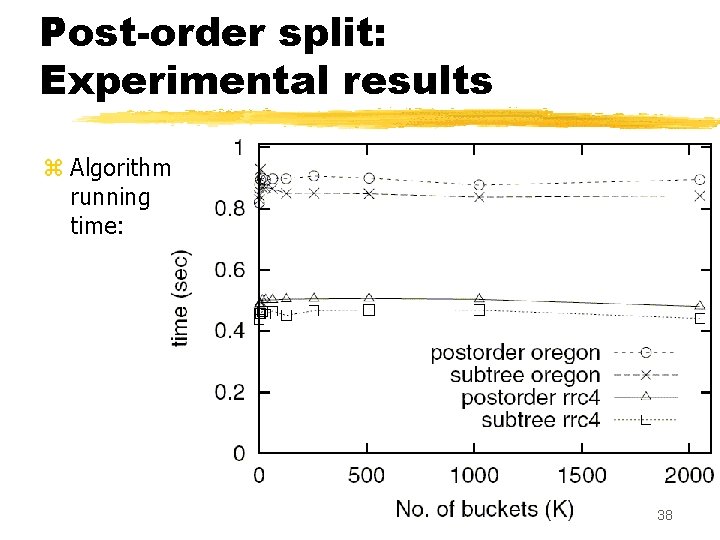

Post-order split: Experimental results
- Algorithm running time:

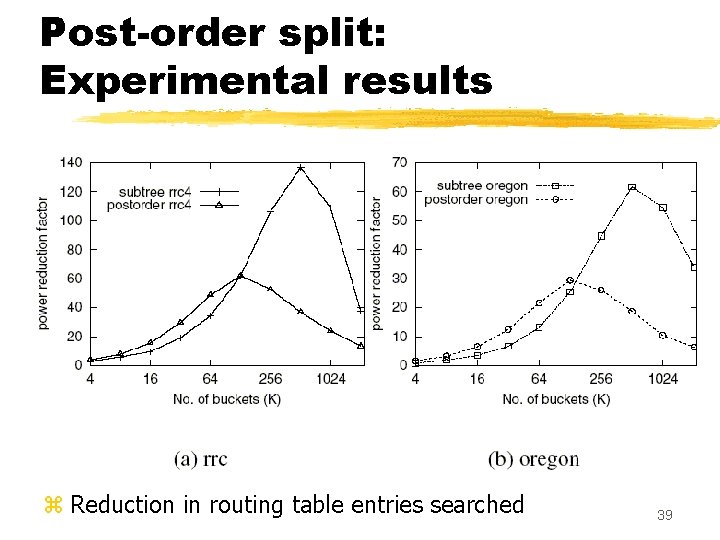

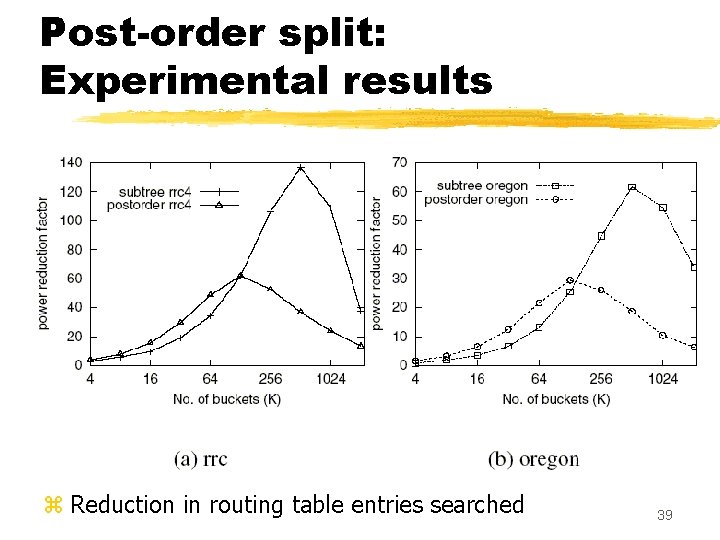

Post-order split: Experimental results
- Reduction in routing table entries searched:

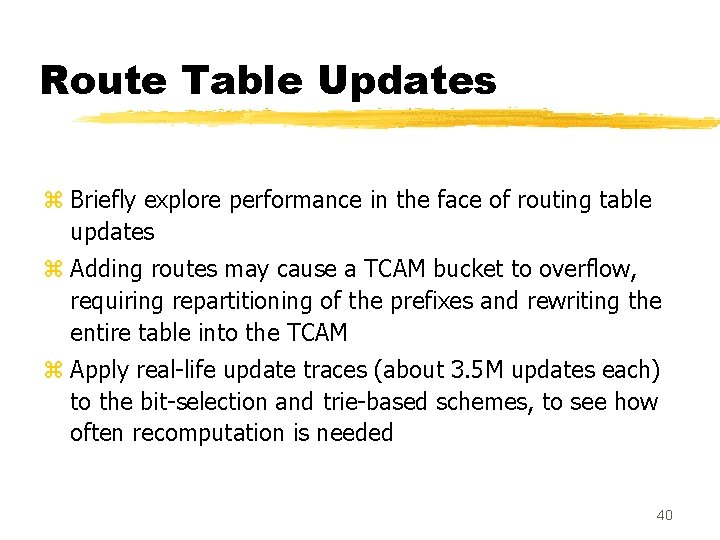

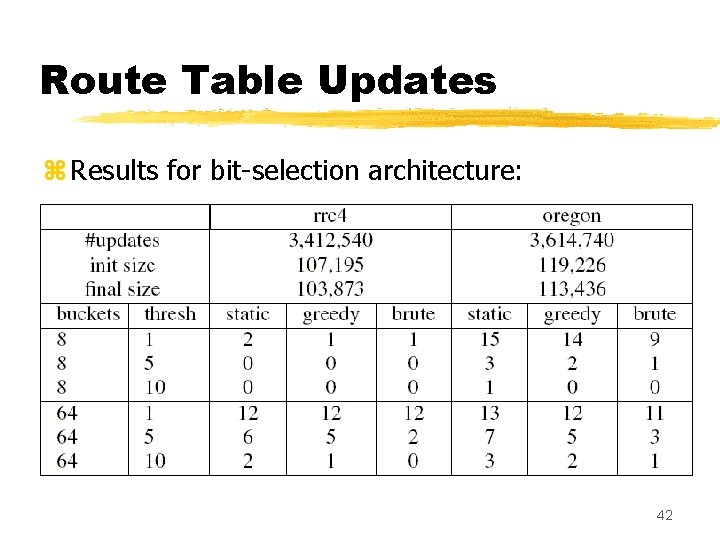

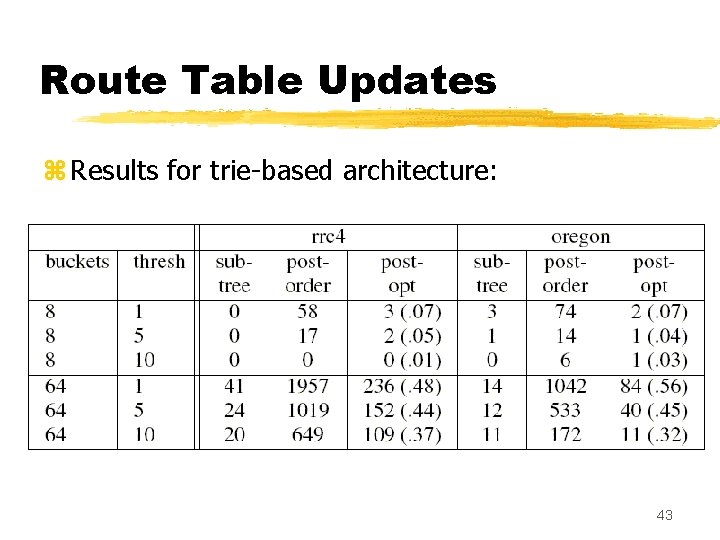

Route Table Updates
- Briefly explore performance in the face of routing table updates
- Adding routes may cause a TCAM bucket to overflow, requiring repartitioning of the prefixes and rewriting the entire table into the TCAM
- Apply real-life update traces (about 3.5M updates each) to the bit-selection and trie-based schemes, to see how often recomputation is needed

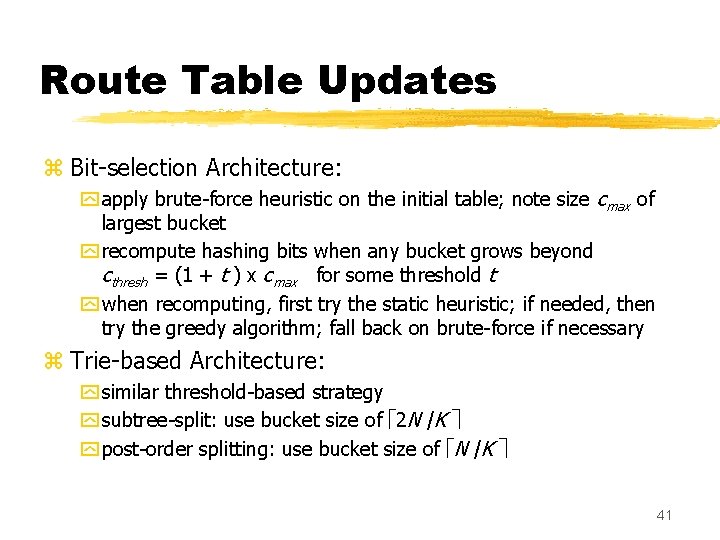

Route Table Updates
- Bit-selection architecture:
  - apply the brute-force heuristic to the initial table; note the size cmax of the largest bucket
  - recompute the hashing bits when any bucket grows beyond cthresh = (1 + t) × cmax, for some threshold t
  - when recomputing, first try the static (simple) heuristic; if needed, try the greedy algorithm; fall back on brute force if necessary
- Trie-based architecture:
  - similar threshold-based strategy
  - subtree-split: use a bucket size of 2N/K
  - post-order splitting: use a bucket size of N/K
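
The threshold rule can be sketched as a small monitor over bucket sizes. `BucketMonitor` is a hypothetical helper; it assumes that after each recomputation the caller supplies the new bucket sizes and that cmax (and hence cthresh) is re-derived from them, a detail the slides do not spell out.

```python
class BucketMonitor:
    """Flags when an insertion pushes a bucket past cthresh = (1 + t) * cmax."""

    def __init__(self, sizes, t):
        self.t = t
        self.recomputations = 0
        self.reset(sizes)

    def reset(self, sizes):
        # Assumption: after a recomputation, cmax and cthresh come from the new split.
        self.sizes = list(sizes)
        self.c_thresh = (1 + self.t) * max(self.sizes)

    def add_route(self, bucket):
        """Return True if this insertion means the partition must be recomputed."""
        self.sizes[bucket] += 1
        if self.sizes[bucket] > self.c_thresh:
            self.recomputations += 1
            return True
        return False

    def remove_route(self, bucket):
        self.sizes[bucket] -= 1

m = BucketMonitor([10, 6], t=0.5)           # cthresh = 15.0
flags = [m.add_route(0) for _ in range(6)]  # bucket 0 grows to 16
print(flags)  # [False, False, False, False, False, True]
```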

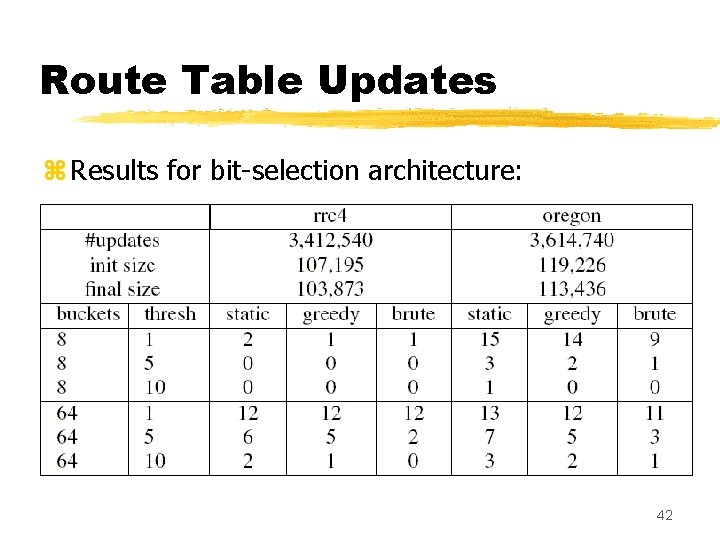

Route Table Updates
- Results for the bit-selection architecture:

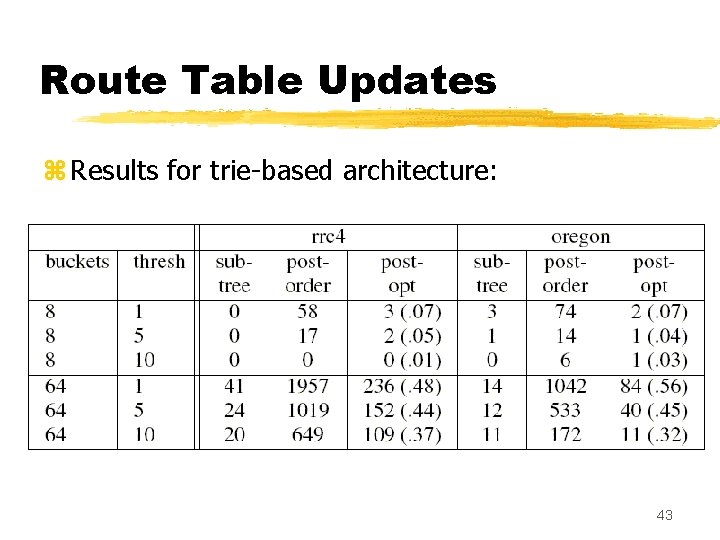

Route Table Updates
- Results for the trie-based architecture:

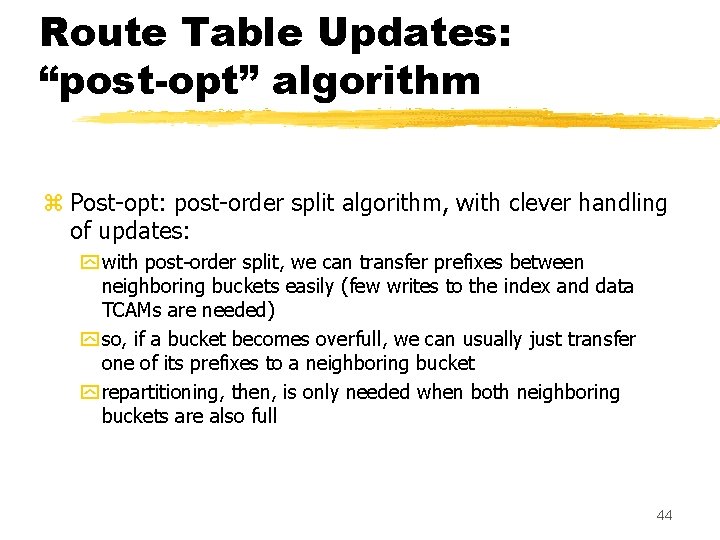

Route Table Updates: "post-opt" algorithm
- Post-opt: the post-order split algorithm with clever handling of updates:
  - with post-order split, prefixes can easily be transferred between neighboring buckets (only a few writes to the index and data TCAMs are needed)
  - so if a bucket becomes overfull, we can usually just transfer one of its prefixes to a neighboring bucket
  - repartitioning is then needed only when both neighboring buckets are also full
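
The post-opt decision can be modeled on bucket sizes alone. This sketch assumes buckets are kept in post-order (so list neighbors are the neighboring buckets), a capacity with some slack above N/K, and that any one boundary prefix can move; it ignores which prefix moves and the index-TCAM rewrites. `insert_with_transfer` is a hypothetical name.

```python
def insert_with_transfer(sizes, i, capacity):
    """Add one route to bucket i, spilling one route into a neighbor if i is full.

    Mutates `sizes`; returns 'inserted', 'transferred', or 'repartition'.
    """
    if sizes[i] < capacity:
        sizes[i] += 1
        return "inserted"
    for j in (i - 1, i + 1):  # left neighbor first, then right
        if 0 <= j < len(sizes) and sizes[j] < capacity:
            sizes[j] += 1     # bucket i takes the new route and gives one away
            return "transferred"
    return "repartition"      # both neighbors full: recompute the partition

sizes = [4, 4, 3]
print(insert_with_transfer(sizes, 1, capacity=4), sizes)  # transferred [4, 4, 4]
print(insert_with_transfer(sizes, 1, capacity=4), sizes)  # repartition [4, 4, 4]
```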

Summary
- TCAMs would be great for routing lookup, if they didn't use so much power
- CoolCAMs: two architectures that use partitioned TCAMs to reduce power consumption in routing lookup
  - Bit-selection architecture
  - Trie-based table partitioning (subtree-split and postorder-splitting)
  - each scheme has its own subtle advantages and disadvantages, but overall they seem to work well

Discussion