Common recognition tasks Slide from L. Lazebnik. Adapted from Fei-Fei Li

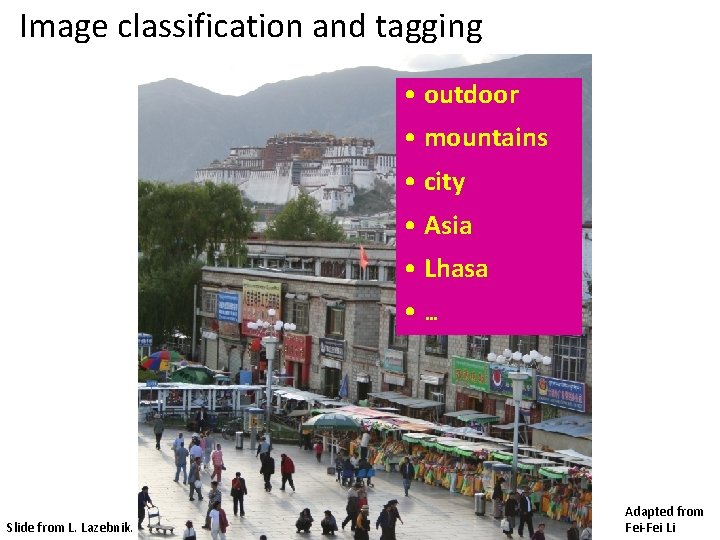

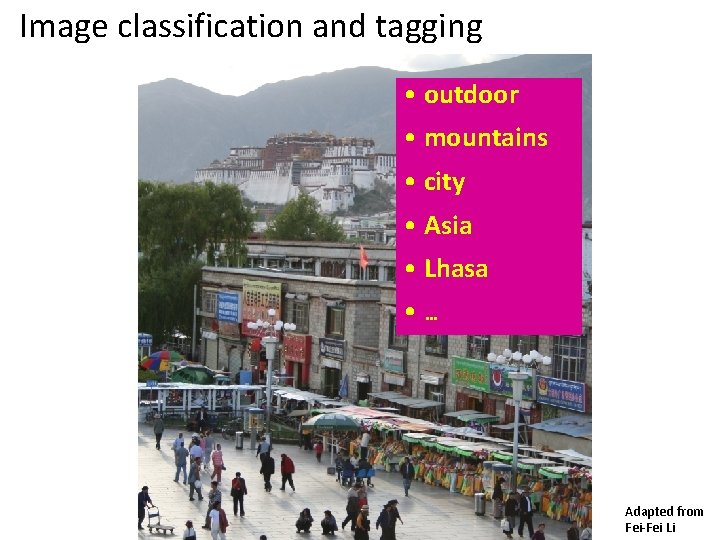

Image classification and tagging
• outdoor
• mountains
• city
• Asia
• Lhasa
• …
Slide from L. Lazebnik. Adapted from Fei-Fei Li

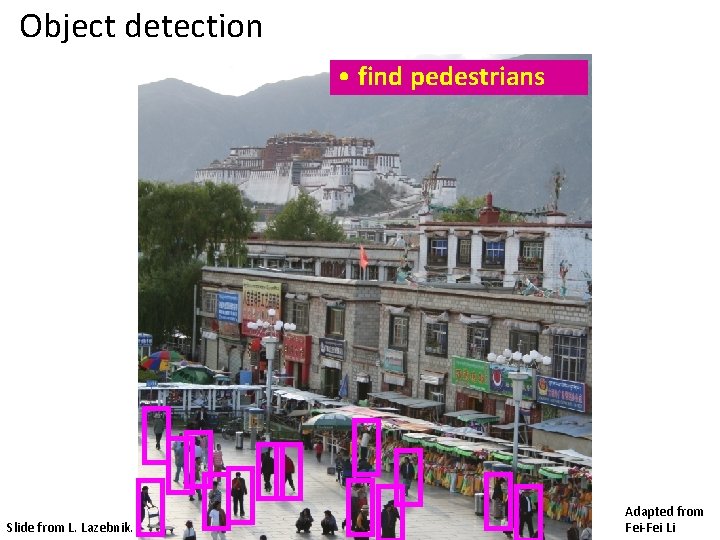

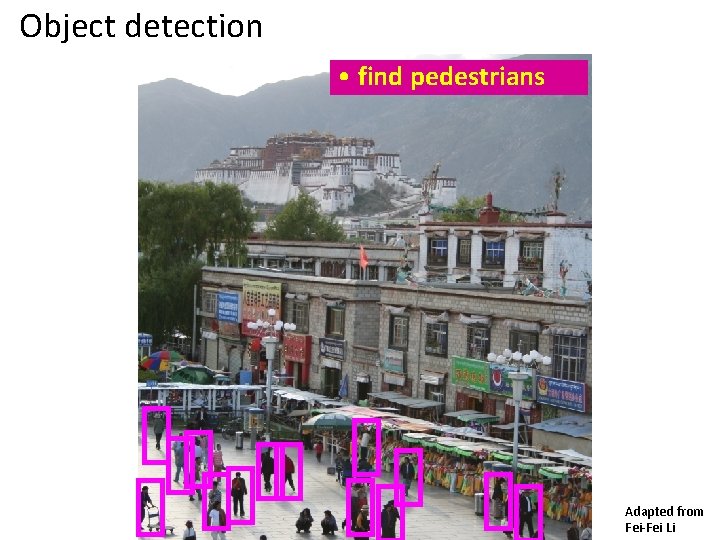

Object detection • find pedestrians Slide from L. Lazebnik. Adapted from Fei-Fei Li

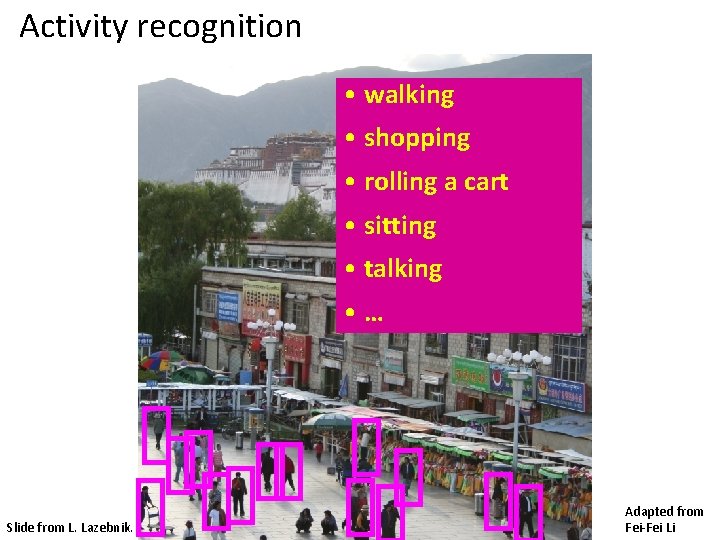

Activity recognition
• walking
• shopping
• rolling a cart
• sitting
• talking
• …
Slide from L. Lazebnik. Adapted from Fei-Fei Li

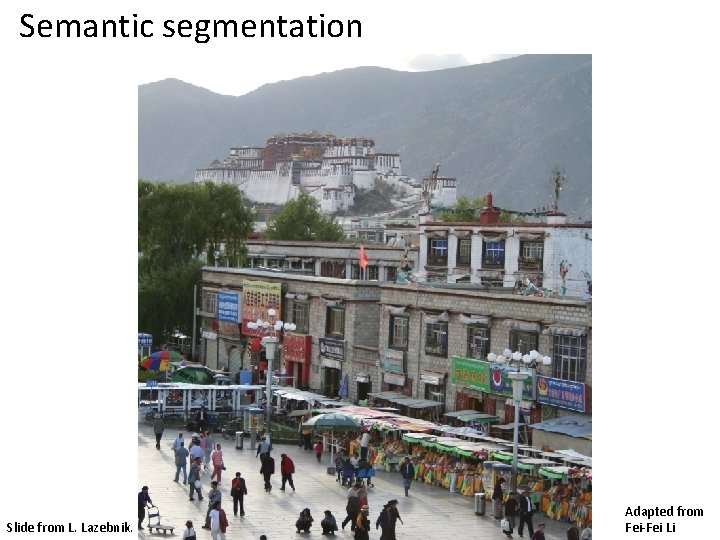

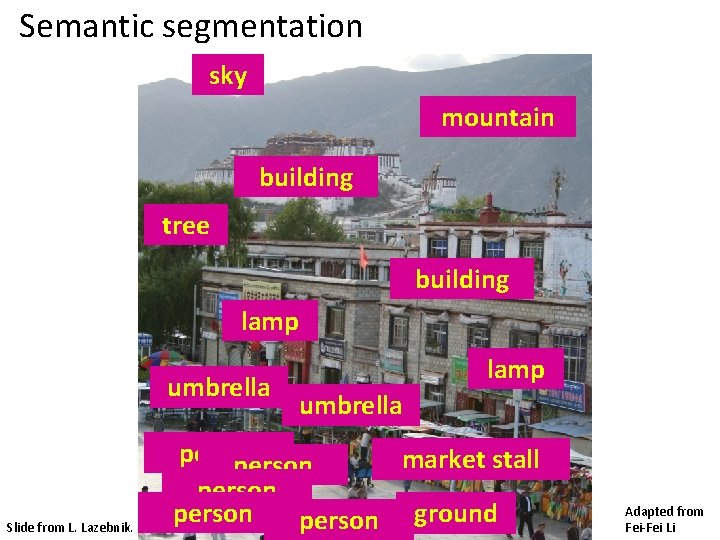

Semantic segmentation Slide from L. Lazebnik. Adapted from Fei-Fei Li

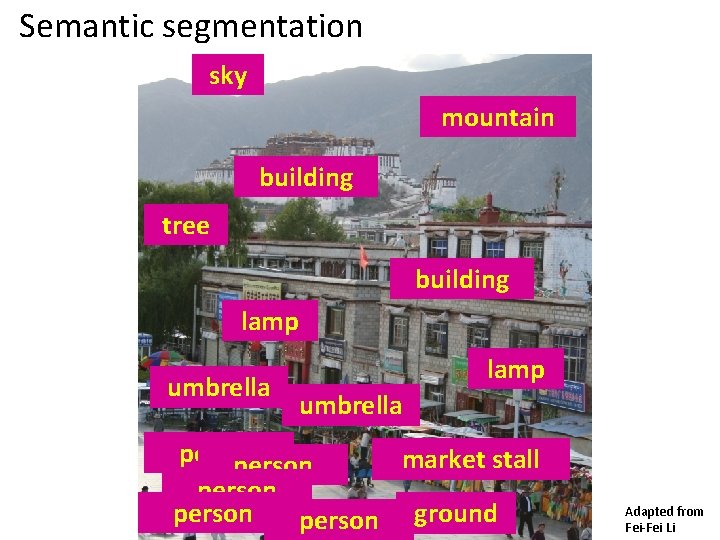

Semantic segmentation (region labels: sky, mountain, building, tree, building, lamp, umbrella, person, market stall, person, ground). Slide from L. Lazebnik. Adapted from Fei-Fei Li

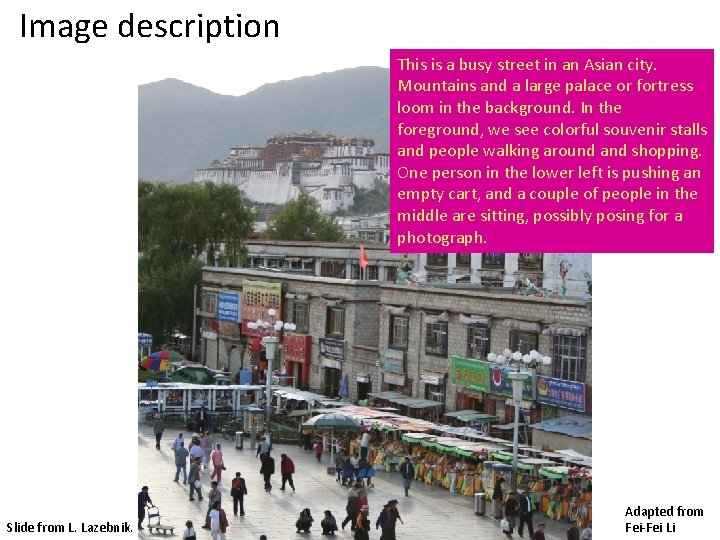

Image description This is a busy street in an Asian city. Mountains and a large palace or fortress loom in the background. In the foreground, we see colorful souvenir stalls and people walking around and shopping. One person in the lower left is pushing an empty cart, and a couple of people in the middle are sitting, possibly posing for a photograph. Slide from L. Lazebnik. Adapted from Fei-Fei Li

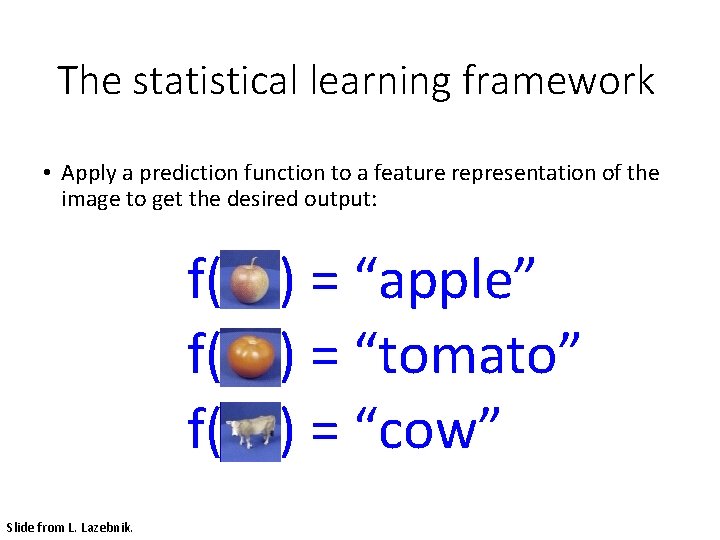

The statistical learning framework
• Apply a prediction function to a feature representation of the image to get the desired output:
f([image]) = “apple”, f([image]) = “tomato”, f([image]) = “cow”
Slide from L. Lazebnik.

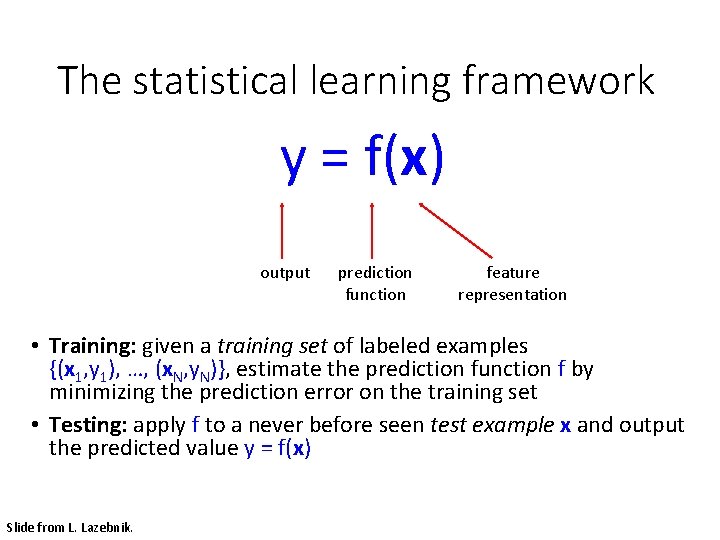

The statistical learning framework
$y = f(x)$, where $y$ is the output, $f$ is the prediction function, and $x$ is the feature representation.
• Training: given a training set of labeled examples $\{(x_1, y_1), \ldots, (x_N, y_N)\}$, estimate the prediction function $f$ by minimizing the prediction error on the training set
• Testing: apply $f$ to a never-before-seen test example $x$ and output the predicted value $y = f(x)$
Slide from L. Lazebnik.
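To make the training and testing steps concrete, here is a minimal sketch rather than anything taken from the slides. It assumes a single scalar feature per example and a hypothetical one-parameter prediction function `f(x) = 1 if x > t else 0`; training picks the threshold `t` with the fewest errors on the training set.

```python
def training_errors(t, xs, ys):
    """Number of training examples misclassified by f(x) = 1 if x > t else 0."""
    return sum((x > t) != bool(y) for x, y in zip(xs, ys))

def train_threshold(xs, ys):
    """Training: choose the threshold that minimizes the error on the training set."""
    s = sorted(xs)
    # Candidate thresholds: below all points, between neighbors, above all points.
    candidates = [s[0] - 1.0] + [(a + b) / 2 for a, b in zip(s, s[1:])] + [s[-1] + 1.0]
    best_t = min(candidates, key=lambda t: training_errors(t, xs, ys))
    return lambda x: int(x > best_t)

# Hypothetical labeled training set {(x_1, y_1), ..., (x_N, y_N)}.
train_x = [0.1, 0.4, 0.5, 1.2, 1.5, 1.9]
train_y = [0, 0, 0, 1, 1, 1]
f = train_threshold(train_x, train_y)

# Testing: apply f to examples that were not in the training set.
predictions = [f(0.3), f(1.6)]
```

Real recognition systems use high-dimensional features and richer function families, but the split between fitting `f` on labeled data and applying it to unseen data is the same.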

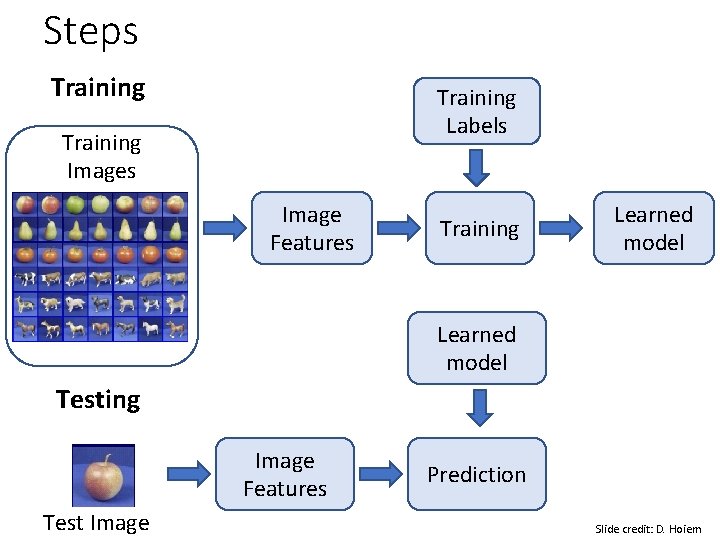

Steps
• Training: training images → image features; image features + training labels → training → learned model
• Testing: test image → image features → learned model → prediction
Slide credit: D. Hoiem

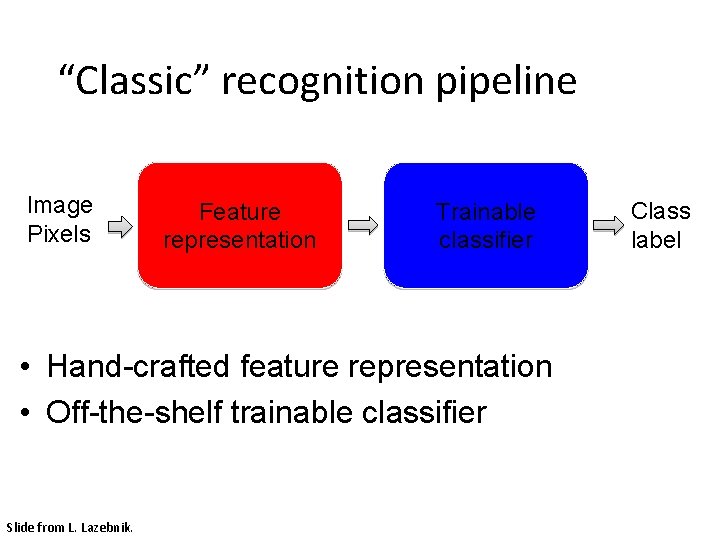

“Classic” recognition pipeline
Image pixels → feature representation → trainable classifier → class label
• Hand-crafted feature representation
• Off-the-shelf trainable classifier
Slide from L. Lazebnik.

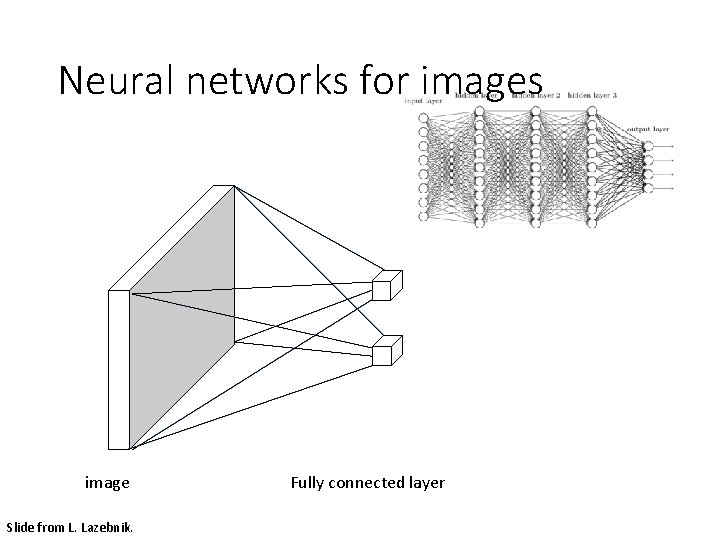

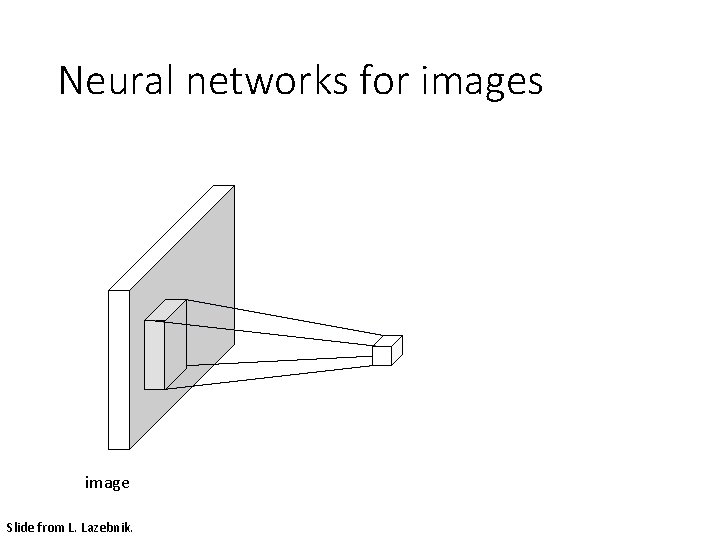

Neural networks for images: image → fully connected layer. Slide from L. Lazebnik.

Neural networks for images image Slide from L. Lazebnik.

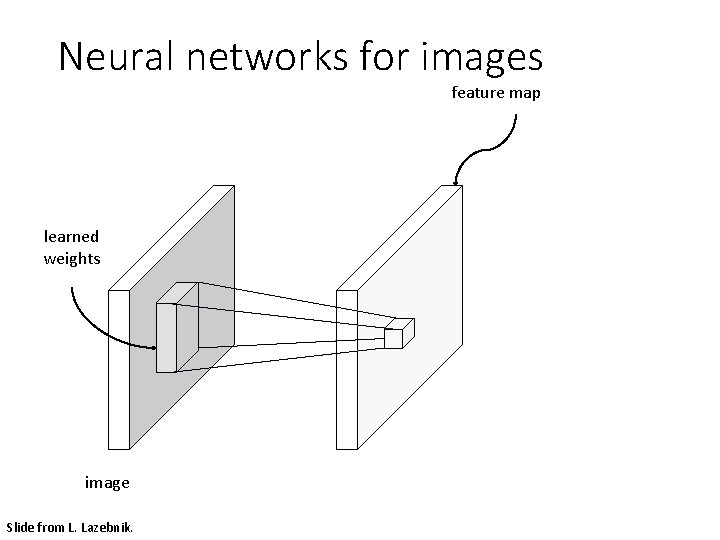

Neural networks for images: image → learned weights → feature map. Slide from L. Lazebnik.

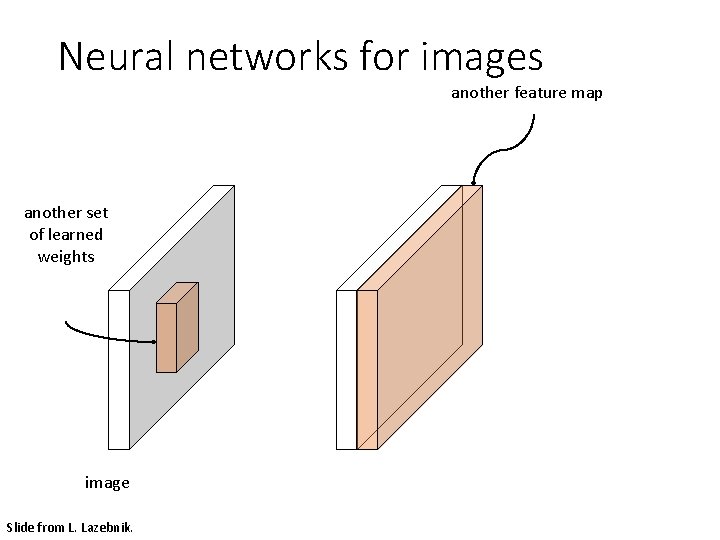

Neural networks for images: image → another set of learned weights → another feature map. Slide from L. Lazebnik.

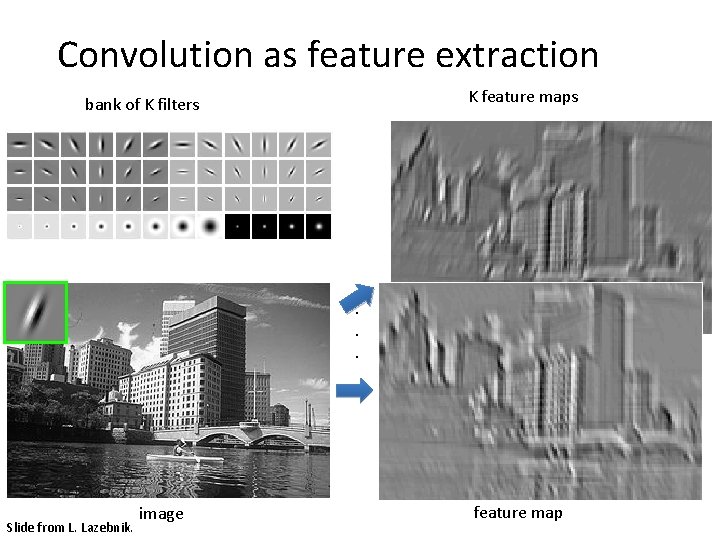

Convolution as feature extraction: image → bank of K filters → K feature maps. Slide from L. Lazebnik.
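The idea of a filter bank can be sketched in a few lines of plain Python. This is an illustrative sketch, not code from the lecture: the function names are hypothetical, the image is a single-channel nested list, and, as is conventional in CNNs, the "convolution" is computed as a cross-correlation (the kernel is not flipped) with no padding.

```python
def correlate2d_valid(image, kernel):
    """Slide an F x F kernel over a 2-D image (no padding, stride 1)."""
    H, W, F = len(image), len(image[0]), len(kernel)
    return [[sum(image[i + u][j + v] * kernel[u][v]
                 for u in range(F) for v in range(F))
             for j in range(W - F + 1)]
            for i in range(H - F + 1)]

def apply_filter_bank(image, filters):
    """A bank of K filters yields K feature maps, one per filter."""
    return [correlate2d_valid(image, k) for k in filters]

# A 4 x 4 image with a vertical edge between columns 1 and 2.
image = [[0, 0, 1, 1]] * 4
filters = [
    [[-1, 1], [-1, 1]],   # responds to vertical edges
    [[-1, -1], [1, 1]],   # responds to horizontal edges
]
feature_maps = apply_filter_bank(image, filters)
# feature_maps[0] is [[0, 2, 0], [0, 2, 0], [0, 2, 0]]; feature_maps[1] is all zeros.
```

In a trained network the kernel values are learned rather than hand-written as they are here.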

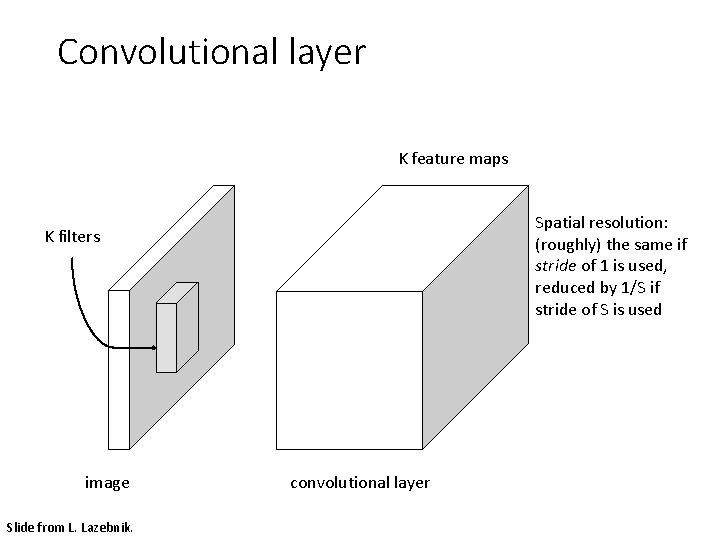

Convolutional layer: image → convolutional layer (K filters) → K feature maps
Spatial resolution: (roughly) the same if a stride of 1 is used; reduced by a factor of S if a stride of S is used
Slide from L. Lazebnik.
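The effect of stride on spatial resolution can be checked with the standard output-size formula. The helper below is a small sketch; the function name and the `pad` parameter (zero padding added on each side) are assumptions for illustration and do not appear on the slide.

```python
def conv_output_size(n, f, stride=1, pad=0):
    """Output width (or height) for input size n, filter size f, given stride and padding."""
    return (n + 2 * pad - f) // stride + 1

same = conv_output_size(32, 3, stride=1, pad=1)   # 32: resolution preserved
half = conv_output_size(32, 3, stride=2, pad=1)   # 16: reduced by a factor of 2
```

Without padding, a stride of 1 shrinks the map by F - 1 pixels, which is why the slide says the resolution is only roughly the same.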

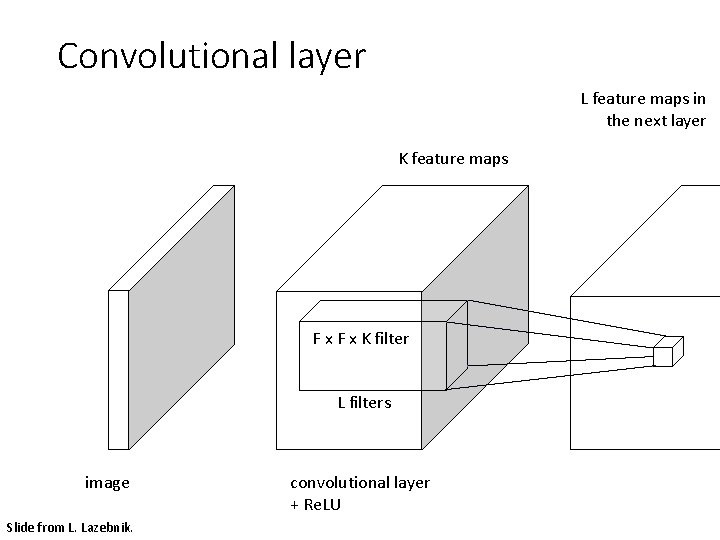

Convolutional layer + ReLU: K feature maps → L filters (each F x F x K) → L feature maps in the next layer. Slide from L. Lazebnik.

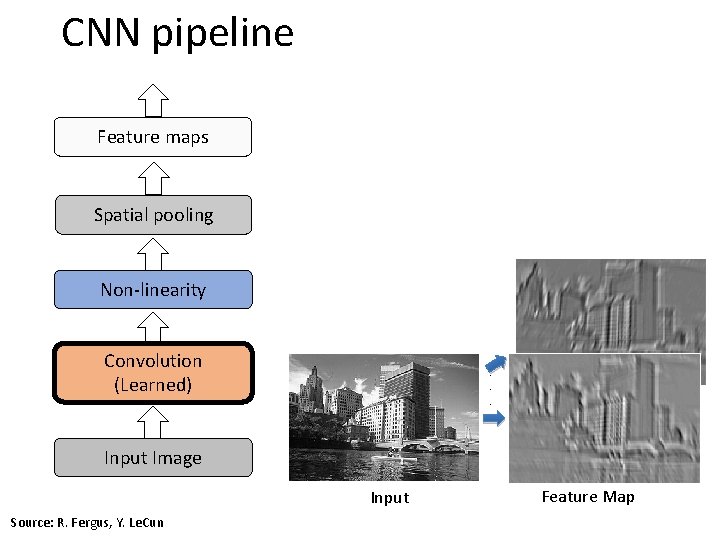

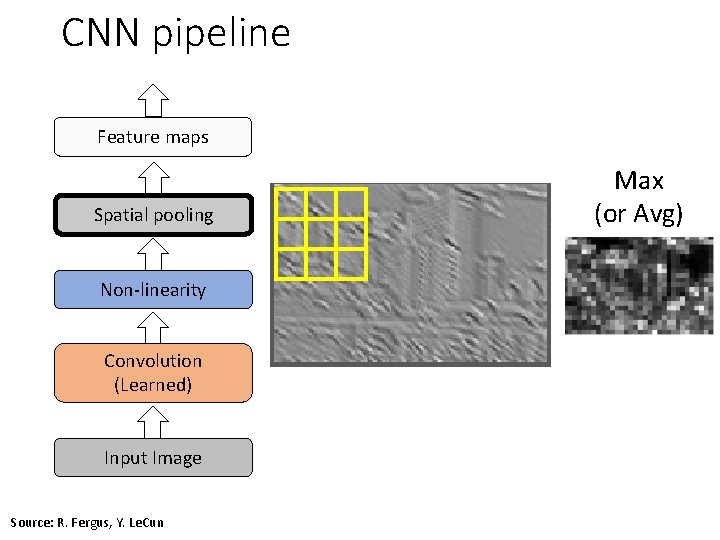

CNN pipeline: input image → convolution (learned) → non-linearity → spatial pooling → feature maps → . . . (figure labels: input, feature map). Source: R. Fergus, Y. LeCun

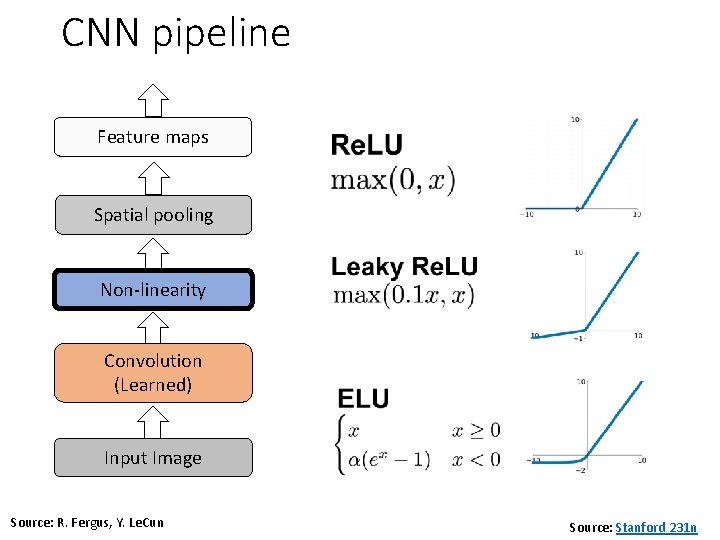

CNN pipeline: input image → convolution (learned) → non-linearity → spatial pooling → feature maps. Source: R. Fergus, Y. LeCun. Source: Stanford CS231n

CNN pipeline: input image → convolution (learned) → non-linearity → spatial pooling, max (or avg) → feature maps. Source: R. Fergus, Y. LeCun

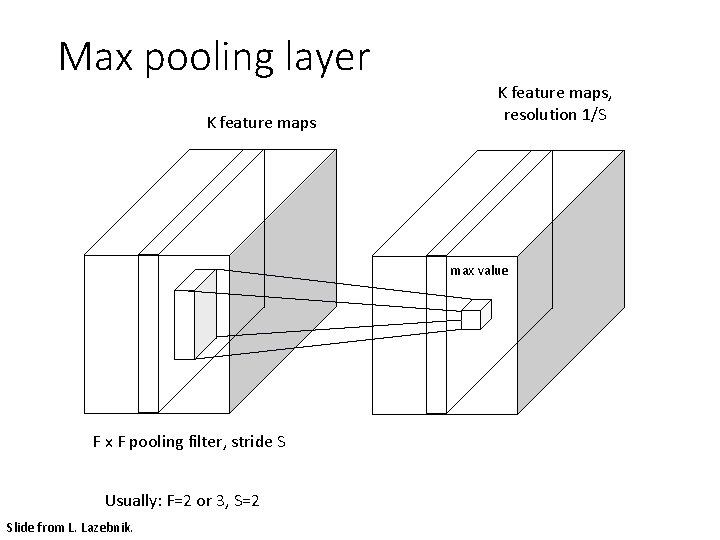

Max pooling layer
• K feature maps in, K feature maps out at resolution 1/S
• F x F pooling filter, stride S; each output is the max value in its window
• Usually: F = 2 or 3, S = 2
Slide from L. Lazebnik.
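A max pooling layer is simple enough to write out directly. The sketch below works on one feature map stored as a nested list; the function name is hypothetical, and in a real layer the same operation is applied independently to each of the K maps.

```python
def max_pool2d(fmap, F=2, S=2):
    """Take the max over each F x F window, moving the window S pixels at a time."""
    H, W = len(fmap), len(fmap[0])
    return [[max(fmap[i + u][j + v] for u in range(F) for v in range(F))
             for j in range(0, W - F + 1, S)]
            for i in range(0, H - F + 1, S)]

fmap = [[1, 2, 3, 4],
        [5, 6, 7, 8],
        [9, 10, 11, 12],
        [13, 14, 15, 16]]
pooled = max_pool2d(fmap)   # [[6, 8], [14, 16]]: a 4 x 4 map becomes 2 x 2
```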

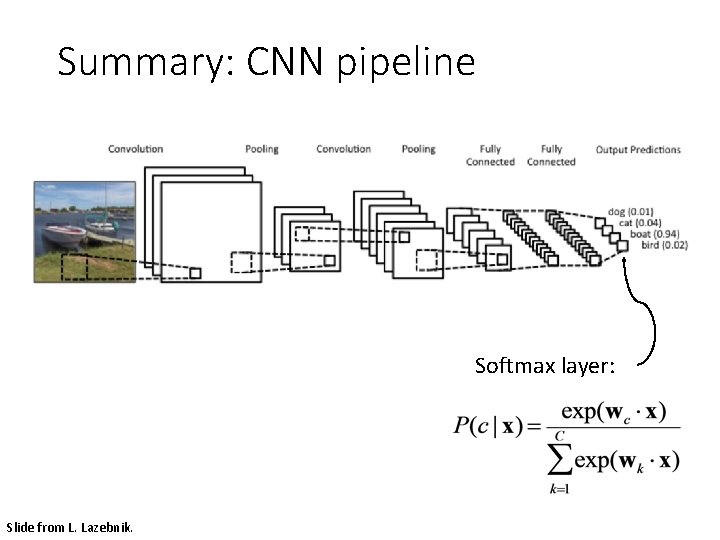

Summary: CNN pipeline Softmax layer: Slide from L. Lazebnik.
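The softmax formula on this slide did not survive extraction. The sketch below assumes the standard definition, in which each class score is exponentiated and the results are normalized to sum to 1; subtracting the maximum score first is a common numerical-stability trick and does not change the result.

```python
import math

def softmax(scores):
    """Turn a list of real-valued class scores into probabilities."""
    m = max(scores)                           # shift for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

probs = softmax([2.0, 1.0, 0.1])   # largest score gets the largest probability
```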

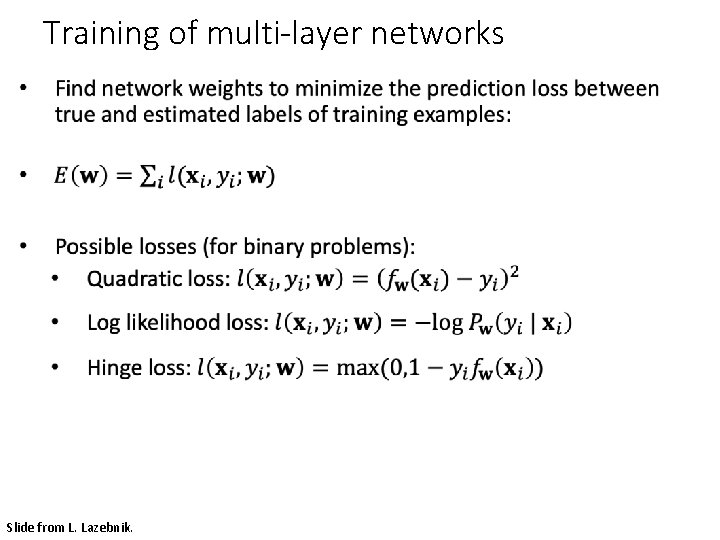

Training of multi-layer networks. Slide from L. Lazebnik.

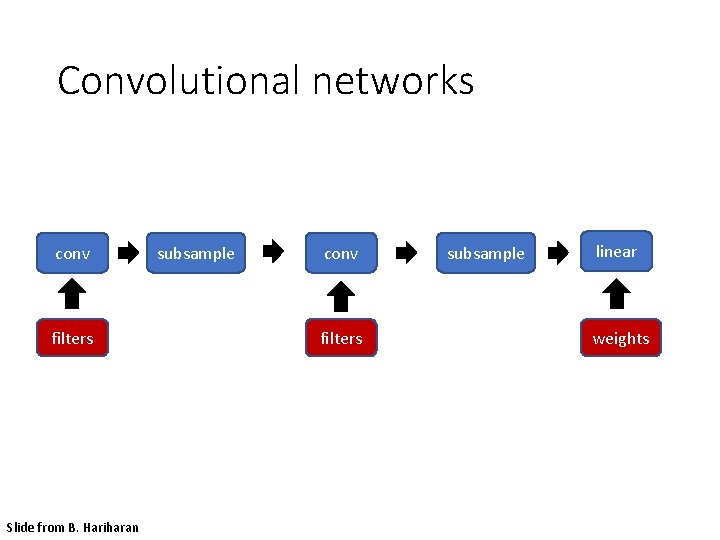

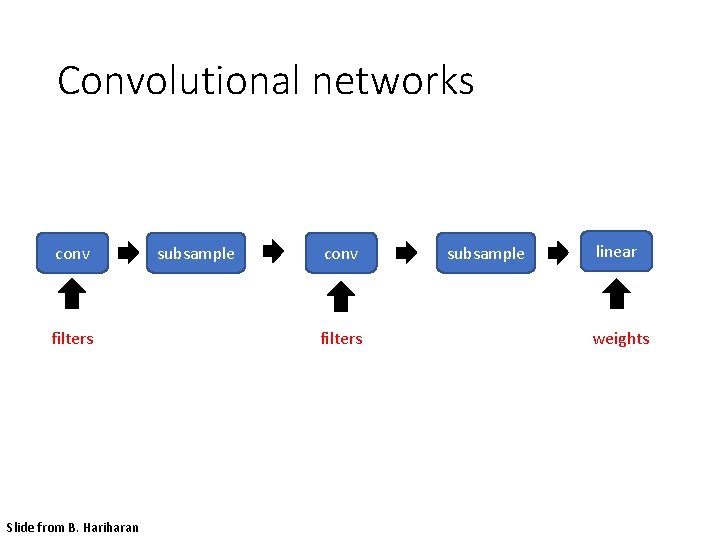

Convolutional networks: conv (filters) → subsample → conv (filters) → subsample → linear (weights). Slide from B. Hariharan

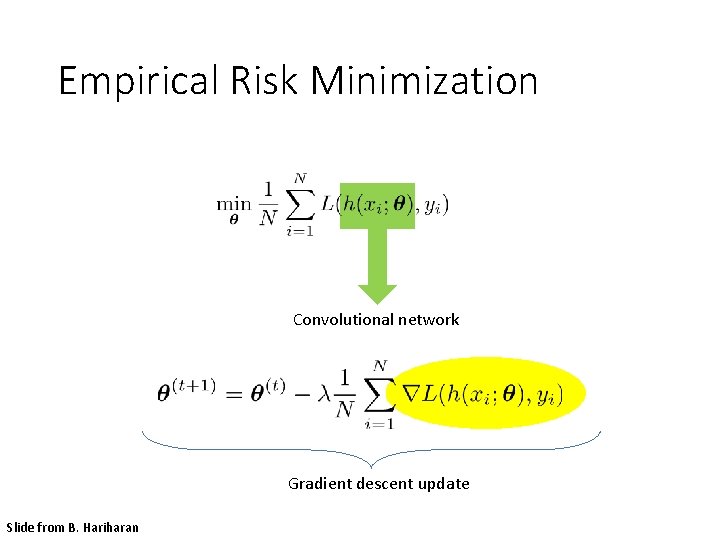

Empirical Risk Minimization Convolutional network Gradient descent update Slide from B. Hariharan
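The equations on this slide were lost in extraction. As a stand-in, here is a minimal sketch of empirical risk minimization by gradient descent. It assumes a one-parameter linear model `w * x` with squared loss instead of a convolutional network, and the learning rate and step count are arbitrary illustrative choices.

```python
def empirical_risk(w, xs, ys):
    """Average squared loss of the model w * x over the training set."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def risk_gradient(w, xs, ys):
    """Derivative of the empirical risk with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)

def gradient_descent(xs, ys, w=0.0, lr=0.05, steps=200):
    """Repeat the update w <- w - lr * gradient."""
    for _ in range(steps):
        w -= lr * risk_gradient(w, xs, ys)
    return w

xs, ys = [1.0, 2.0, 3.0], [2.0, 4.0, 6.0]   # data generated by y = 2x
w = gradient_descent(xs, ys)                 # converges toward w = 2
```

For a convolutional network the update rule is the same; only the parameter vector is larger and the gradient is computed by backpropagation.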

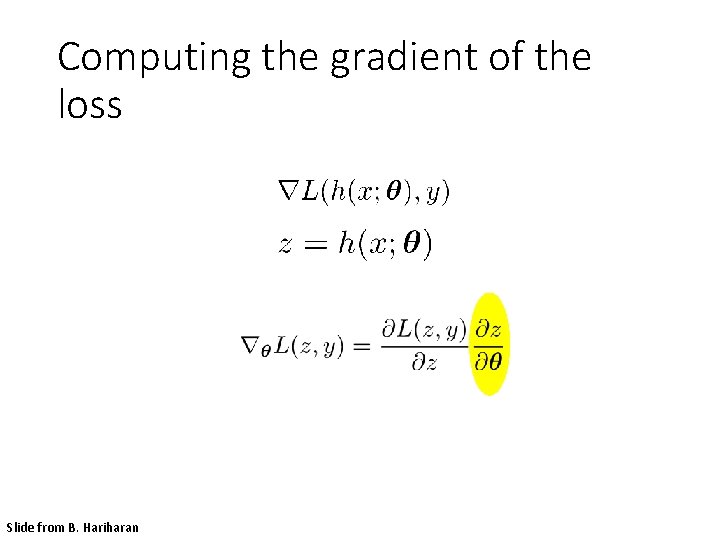

Computing the gradient of the loss Slide from B. Hariharan

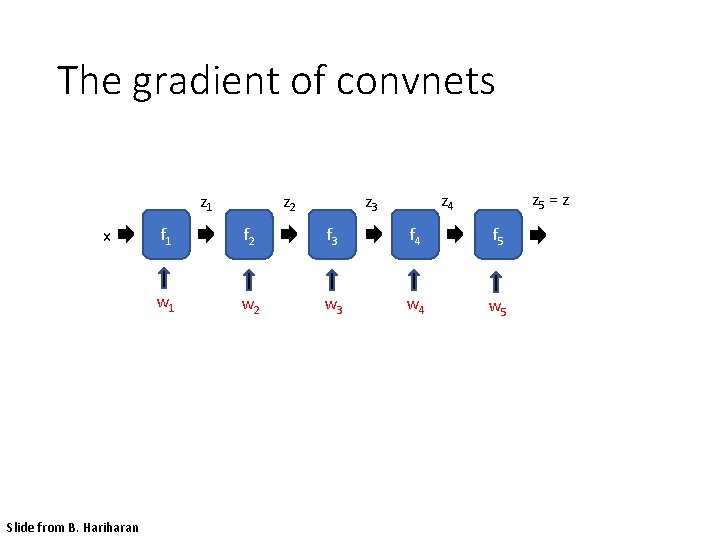

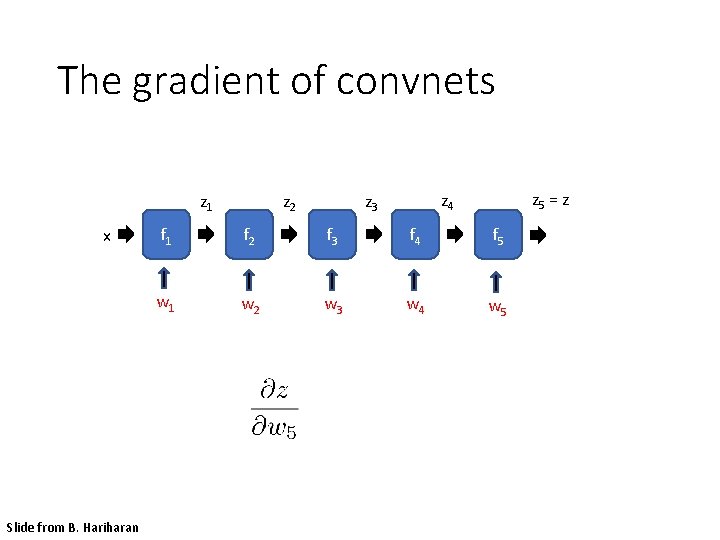

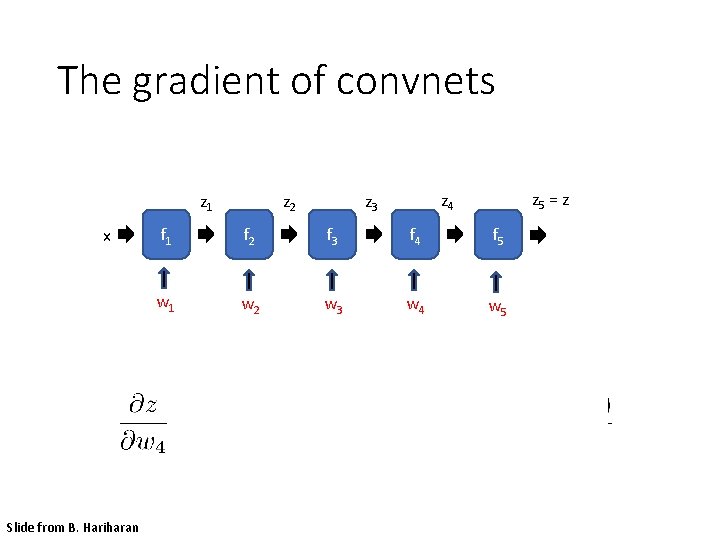

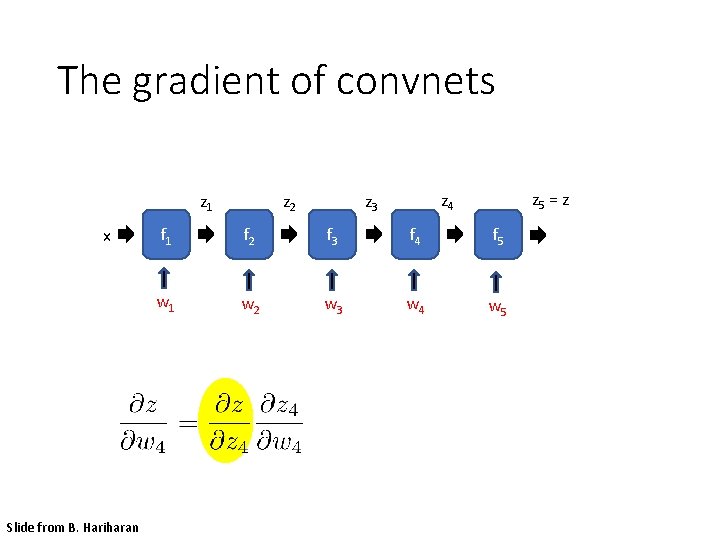

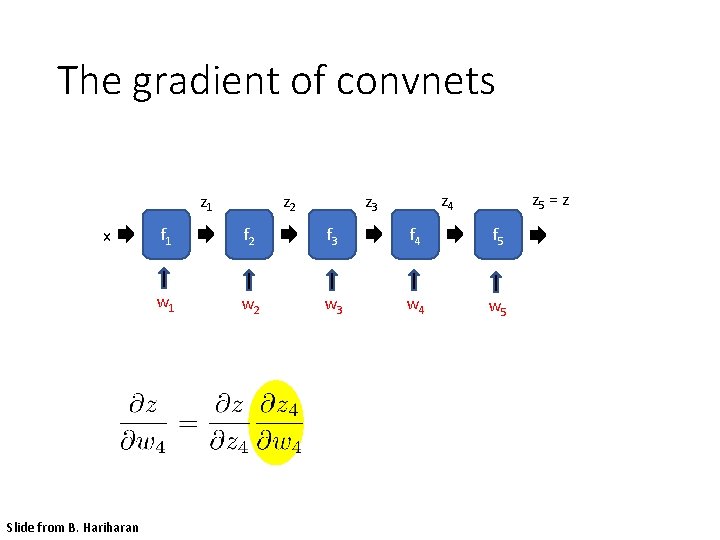

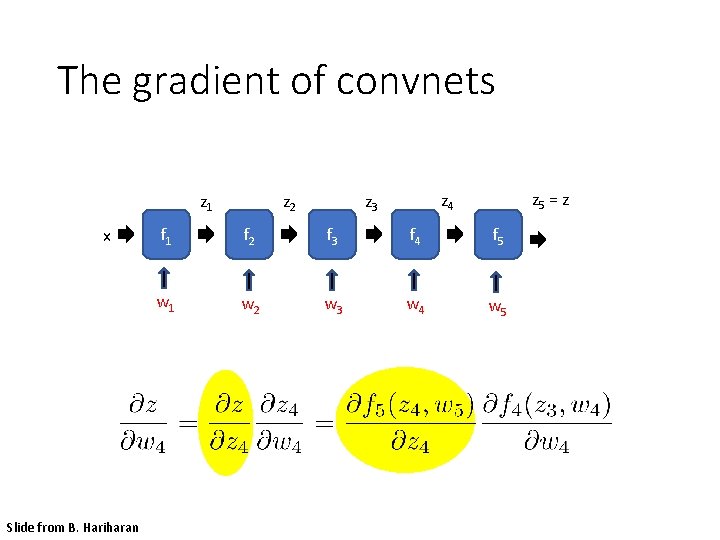

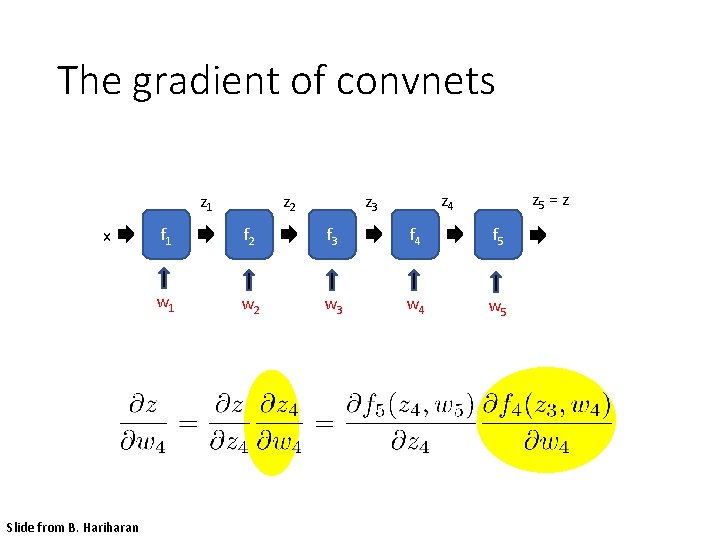

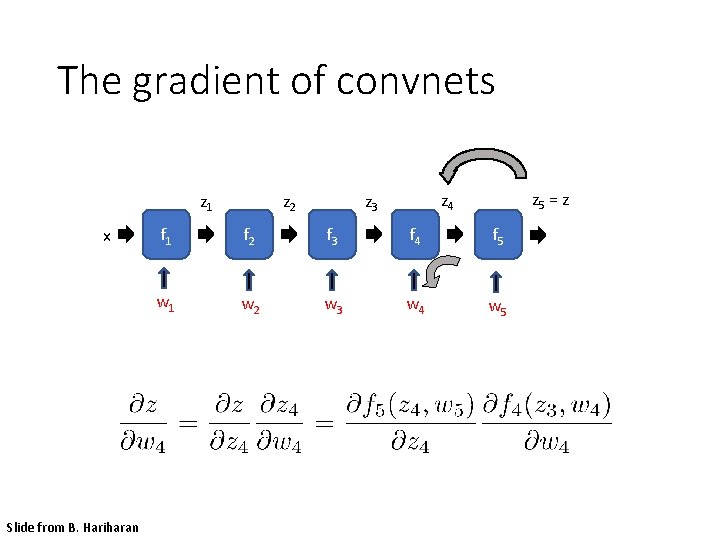

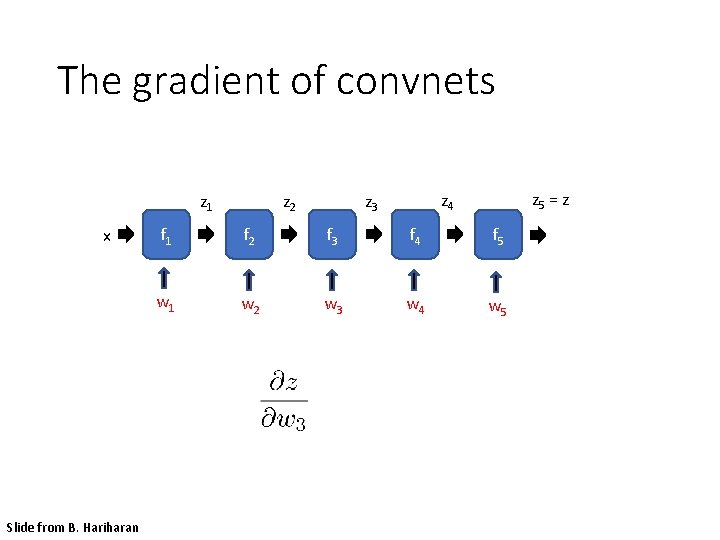

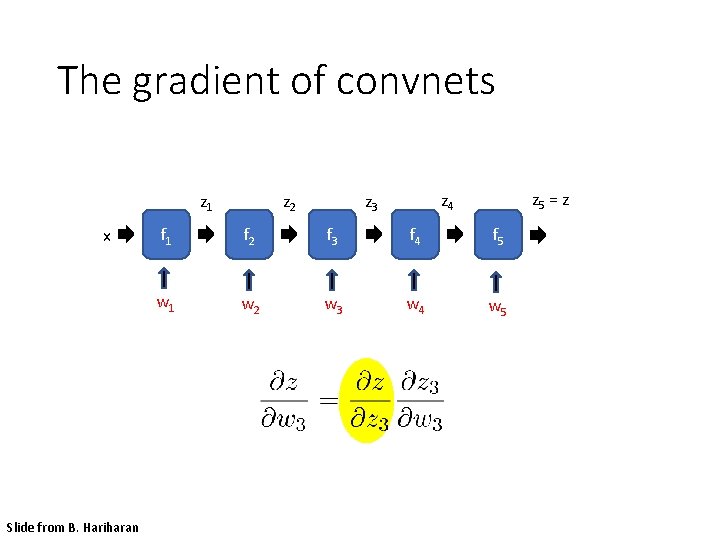

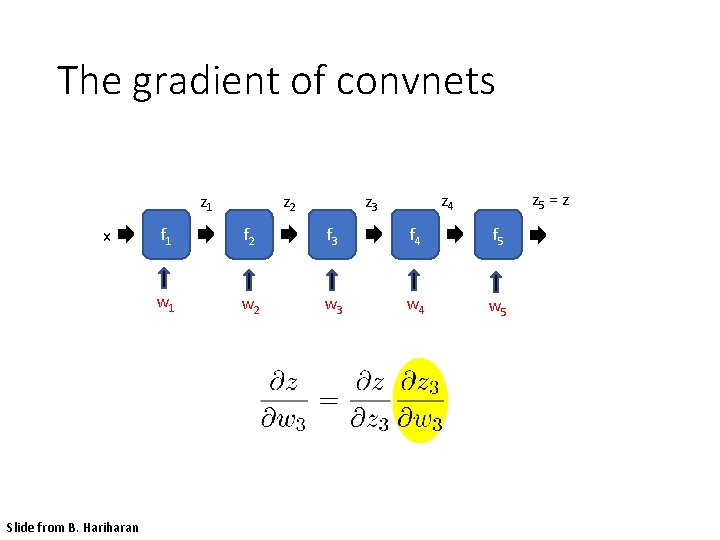

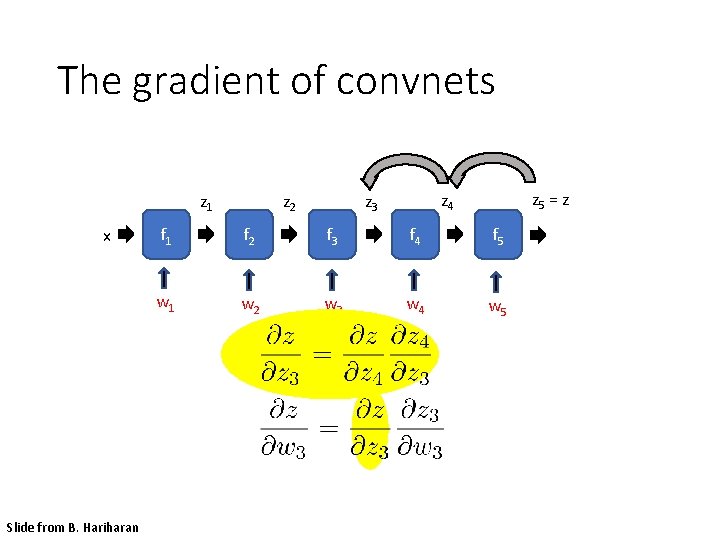

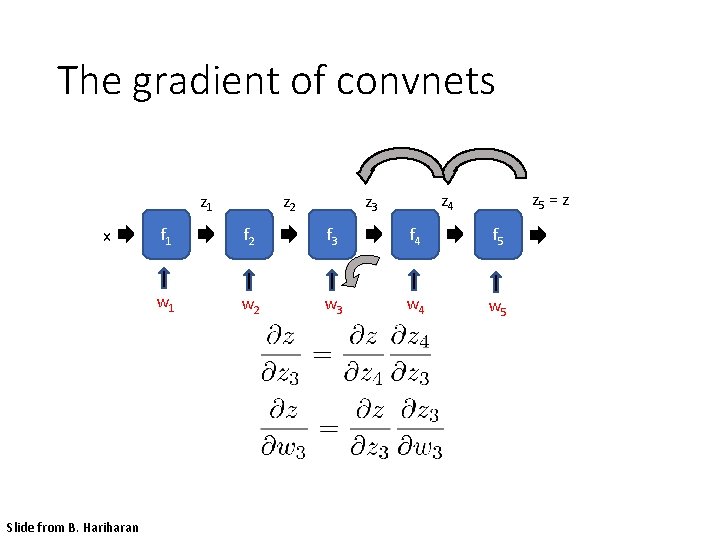

The gradient of convnets: $x \to f_1 \to z_1 \to f_2 \to z_2 \to f_3 \to z_3 \to f_4 \to z_4 \to f_5 \to z_5 = z$, where each $f_i$ has weights $w_i$. Slide from B. Hariharan


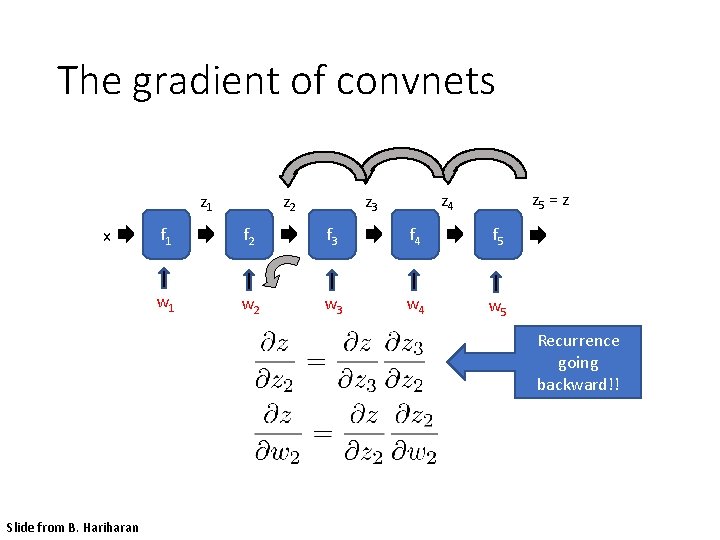

The gradient of convnets: $x \to f_1 \to z_1 \to f_2 \to z_2 \to f_3 \to z_3 \to f_4 \to z_4 \to f_5 \to z_5 = z$, where each $f_i$ has weights $w_i$. Recurrence going backward!! Slide from B. Hariharan
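The recurrence itself was on a slide image that did not survive extraction. Under the assumption that the chain is $z_i = f_i(z_{i-1}, w_i)$ with $z_0 = x$ (the $z_0$ notation is introduced here for convenience), the chain rule gives the following backward recurrence. For vector-valued $z_i$ the partial derivatives are Jacobians and the products are matrix products.

```latex
z_0 = x, \qquad z_i = f_i(z_{i-1}, w_i), \quad i = 1, \ldots, 5, \qquad z = z_5

\frac{\partial z}{\partial z_5} = 1, \qquad
\frac{\partial z}{\partial z_{i-1}} = \frac{\partial z}{\partial z_i} \, \frac{\partial z_i}{\partial z_{i-1}}, \qquad
\frac{\partial z}{\partial w_i} = \frac{\partial z}{\partial z_i} \, \frac{\partial z_i}{\partial w_i}
```

Starting from $i = 5$ and moving toward $i = 1$, each step reuses the quantity computed in the step before it.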

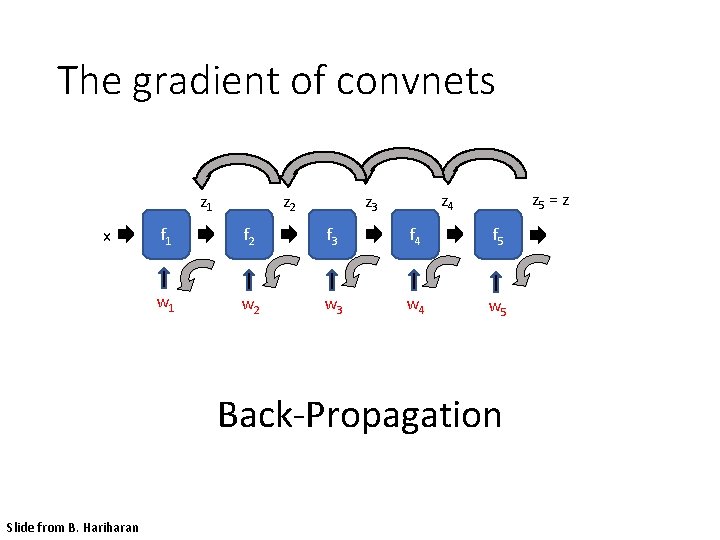

The gradient of convnets: $x \to f_1 \to z_1 \to f_2 \to z_2 \to f_3 \to z_3 \to f_4 \to z_4 \to f_5 \to z_5 = z$, where each $f_i$ has weights $w_i$. Back-Propagation. Slide from B. Hariharan

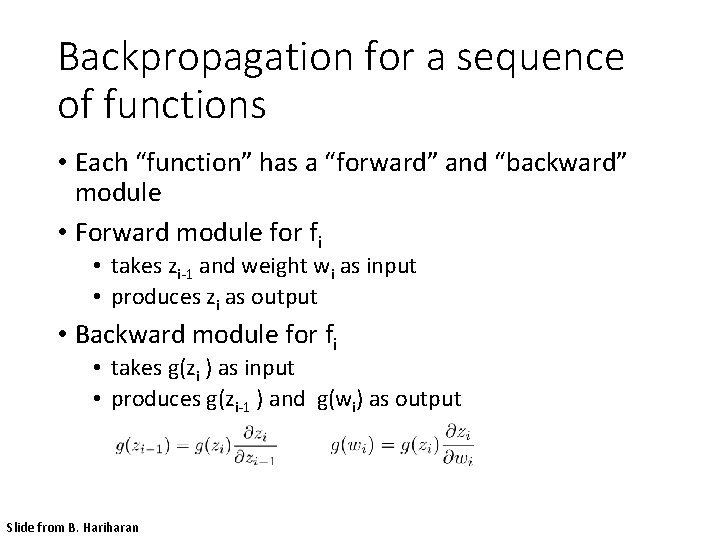

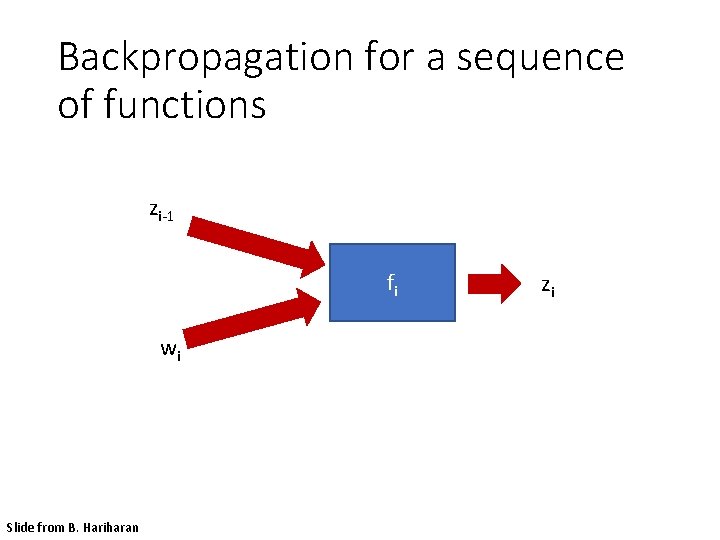

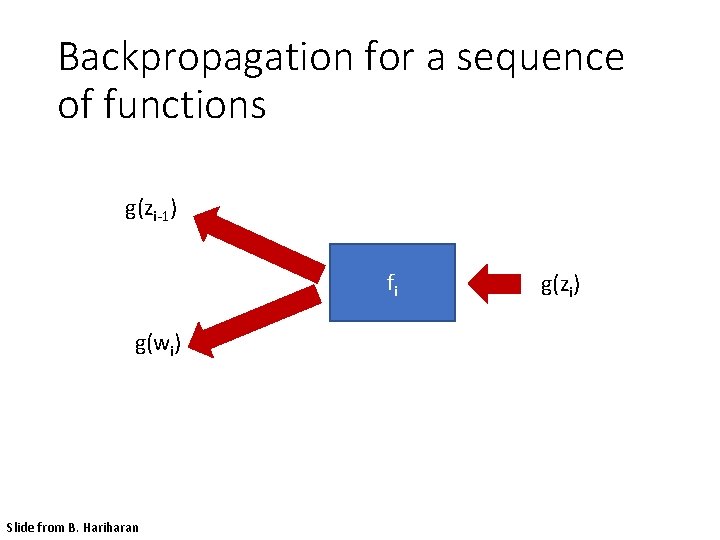

Backpropagation for a sequence of functions
• Each “function” has a “forward” and a “backward” module
• Forward module for $f_i$
  • takes $z_{i-1}$ and weight $w_i$ as input
  • produces $z_i$ as output
• Backward module for $f_i$
  • takes $g(z_i)$ as input
  • produces $g(z_{i-1})$ and $g(w_i)$ as output
Slide from B. Hariharan

Backpropagation for a sequence of functions (forward): $z_{i-1}$, $w_i$ → $f_i$ → $z_i$. Slide from B. Hariharan

Backpropagation for a sequence of functions (backward): $g(z_i)$ → $f_i$ → $g(z_{i-1})$, $g(w_i)$. Slide from B. Hariharan
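The forward and backward modules can be sketched as a small Python class. This is an illustration under stated assumptions, not the lecture's code: every function is the hypothetical scalar module `f(z_prev, w) = w * z_prev`, and `g(.)` is read as the derivative of the final output `z` with respect to its argument.

```python
class Scale:
    """Module for f(z_prev, w) = w * z_prev on scalars."""

    def forward(self, z_prev, w):
        self.z_prev, self.w = z_prev, w   # cache the inputs for the backward pass
        return w * z_prev

    def backward(self, g_z):
        # Chain rule: returns g(z_prev) and g(w), given g(z).
        return g_z * self.w, g_z * self.z_prev

def backprop(modules, weights, x):
    """Run all forward modules, then all backward modules in reverse order."""
    z = x
    for m, w in zip(modules, weights):
        z = m.forward(z, w)
    g = 1.0                               # derivative of z with respect to itself
    weight_grads = [None] * len(modules)
    for i in reversed(range(len(modules))):
        g, weight_grads[i] = modules[i].backward(g)
    return z, weight_grads, g             # output, g(w_i) for each i, g(x)

z, weight_grads, g_x = backprop([Scale(), Scale(), Scale()], [3.0, 4.0, 5.0], 2.0)
# z = 2 * 3 * 4 * 5 = 120; weight_grads = [40, 30, 24]; g_x = 60
```

Each module only needs its own cached inputs and the gradient arriving from the module after it, which is what makes the scheme composable.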

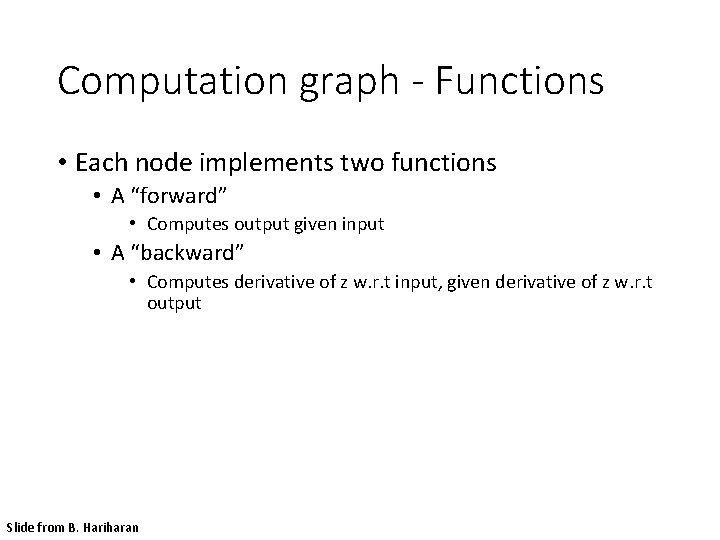

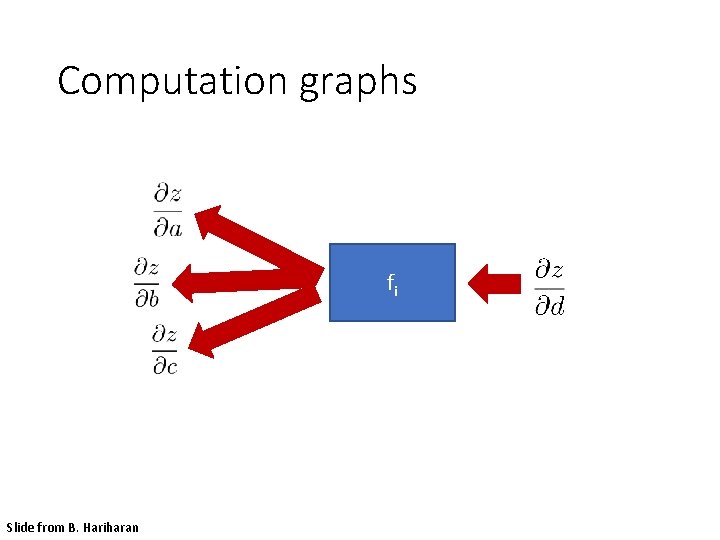

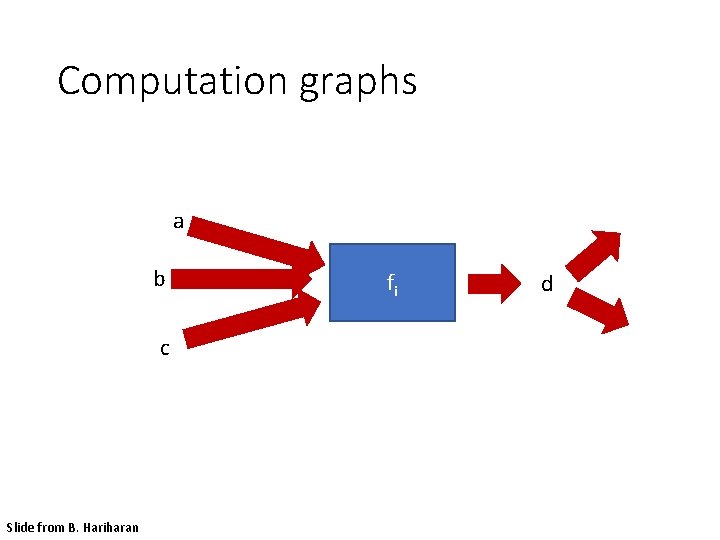

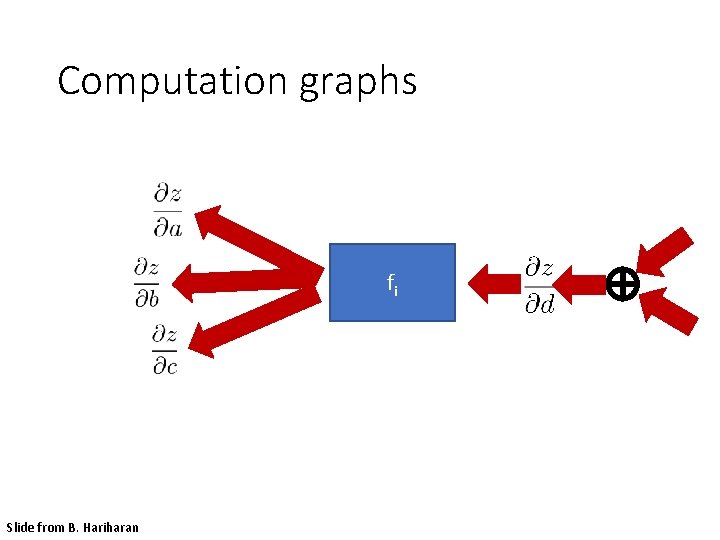

Computation graph - Functions
• Each node implements two functions
  • A “forward”: computes output given input
  • A “backward”: computes derivative of z w.r.t. input, given derivative of z w.r.t. output
Slide from B. Hariharan

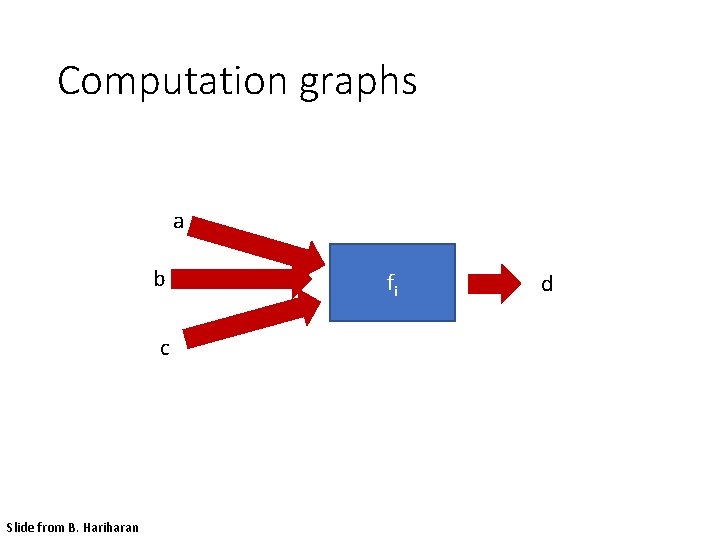

Computation graphs: inputs a, b, c → $f_i$ → output d. Slide from B. Hariharan
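A node with several inputs follows the same forward and backward contract. The sketch below assumes a hypothetical node computing `d = a * b + c`; the slide does not say what the node's function is, so this choice is purely illustrative.

```python
class MulAdd:
    """Graph node with inputs a, b, c and output d = a * b + c."""

    def forward(self, a, b, c):
        self.a, self.b = a, b             # cache what the backward pass needs
        return a * b + c

    def backward(self, g_d):
        # Given dz/dd, return dz/da, dz/db, dz/dc via the chain rule.
        return g_d * self.b, g_d * self.a, g_d

node = MulAdd()
d = node.forward(2.0, 3.0, 4.0)           # 10.0
g_a, g_b, g_c = node.backward(1.0)        # (3.0, 2.0, 1.0)
```

When an input feeds more than one node, the gradients arriving from each consumer are summed.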

Computation graphs fi Slide from B. Hariharan


Neural network frameworks Slide from B. Hariharan

Image classification and tagging
• outdoor
• mountains
• city
• Asia
• Lhasa
• …
Adapted from Fei-Fei Li

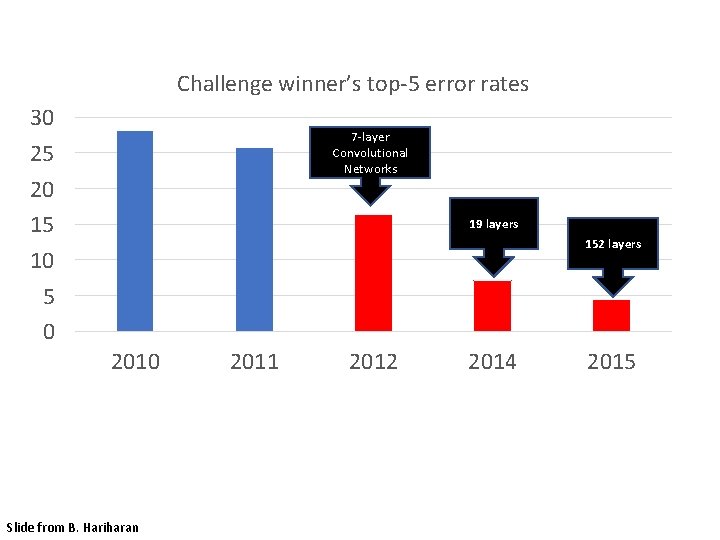

Challenge winner’s top-5 error rates (bar chart; vertical axis: error rate from 0 to 30; horizontal axis: 2010, 2011, 2012, 2014, 2015; annotations: convolutional networks with 7 layers, 19 layers, and 152 layers). Slide from B. Hariharan

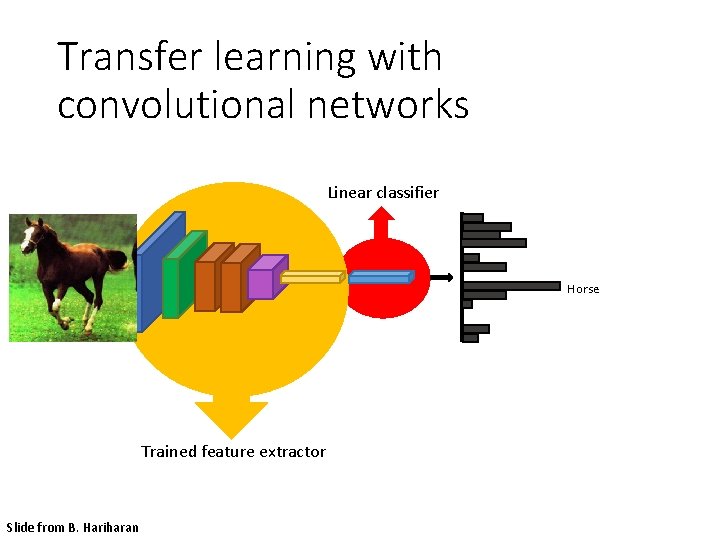

Transfer learning with convolutional networks: image → trained feature extractor → linear classifier → “Horse”. Slide from B. Hariharan

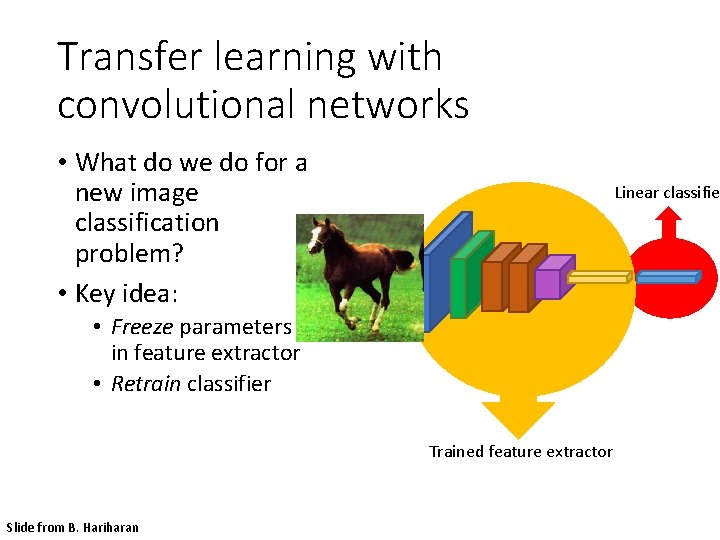

Transfer learning with convolutional networks
• What do we do for a new image classification problem?
• Key idea:
  • Freeze parameters in feature extractor
  • Retrain classifier
(Figure: trained feature extractor → linear classifier)
Slide from B. Hariharan
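The freeze-and-retrain recipe can be sketched without any deep learning library. Everything below is an assumption for illustration: `frozen_features` is a hypothetical stand-in for a pretrained CNN feature extractor whose parameters are never updated, and the linear classifier on top is trained with the perceptron rule.

```python
def frozen_features(x):
    """Stand-in for a pretrained feature extractor; it is never modified."""
    return [x[0] + x[1], x[0] - x[1], 1.0]   # last entry acts as a bias feature

def score(w, x):
    return sum(wi * pi for wi, pi in zip(w, frozen_features(x)))

def train_linear_classifier(data, epochs=20):
    """Retrain only the linear classifier weights w; labels y are -1 or +1."""
    w = [0.0, 0.0, 0.0]
    for _ in range(epochs):
        for x, y in data:
            if y * score(w, x) <= 0:         # update on mistakes only
                w = [wi + y * pi for wi, pi in zip(w, frozen_features(x))]
    return w

def predict(w, x):
    return 1 if score(w, x) > 0 else -1

# Hypothetical new task with a handful of labeled examples.
data = [([2.0, 2.0], 1), ([3.0, 1.0], 1), ([0.0, 0.0], -1), ([1.0, 0.0], -1)]
w = train_linear_classifier(data)
```

Because only the small classifier is trained, this works with far less labeled data than training the whole network would need.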

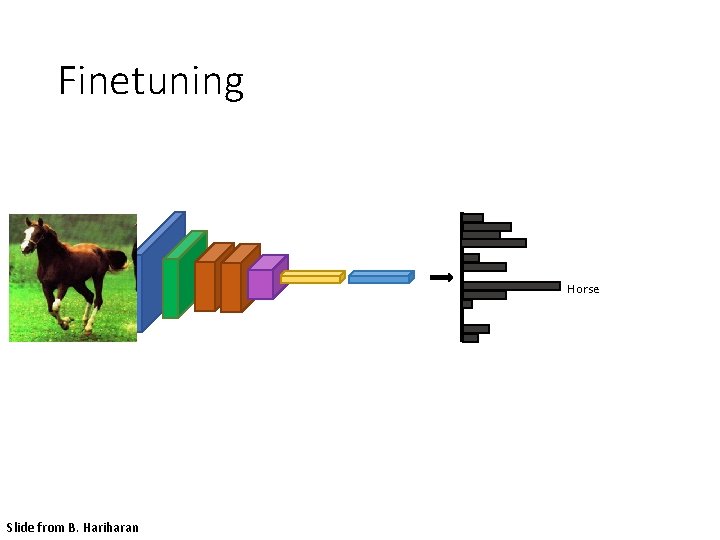

Finetuning Horse Slide from B. Hariharan

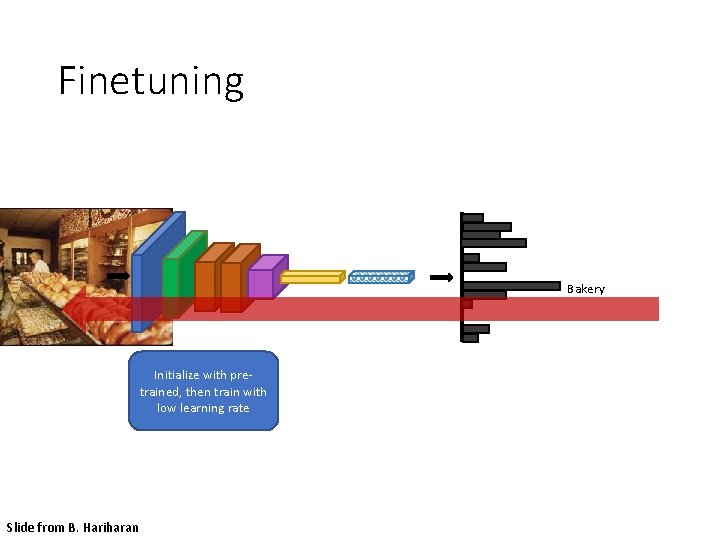

Finetuning: initialize with the pretrained weights, then train with a low learning rate (example label: “Bakery”). Slide from B. Hariharan

Object detection • find pedestrians Adapted from Fei-Fei Li

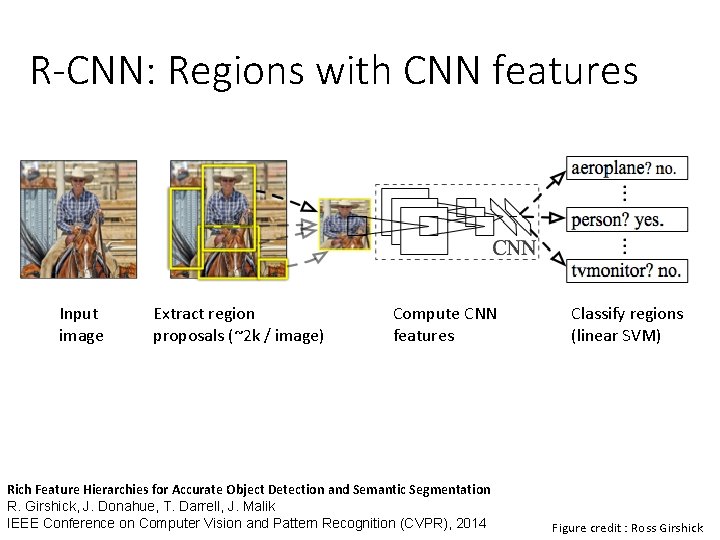

R-CNN: Regions with CNN features
Input image → extract region proposals (~2k / image) → compute CNN features → classify regions (linear SVM)
R. Girshick, J. Donahue, T. Darrell, J. Malik. Rich Feature Hierarchies for Accurate Object Detection and Semantic Segmentation. IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2014.
Figure credit: Ross Girshick

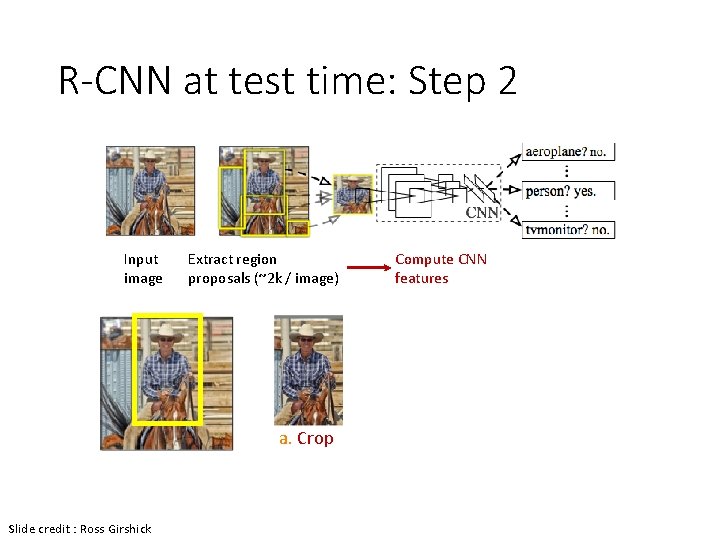

R-CNN at test time: Step 2
Input image → extract region proposals (~2k / image) → compute CNN features
a. Crop
Slide credit: Ross Girshick

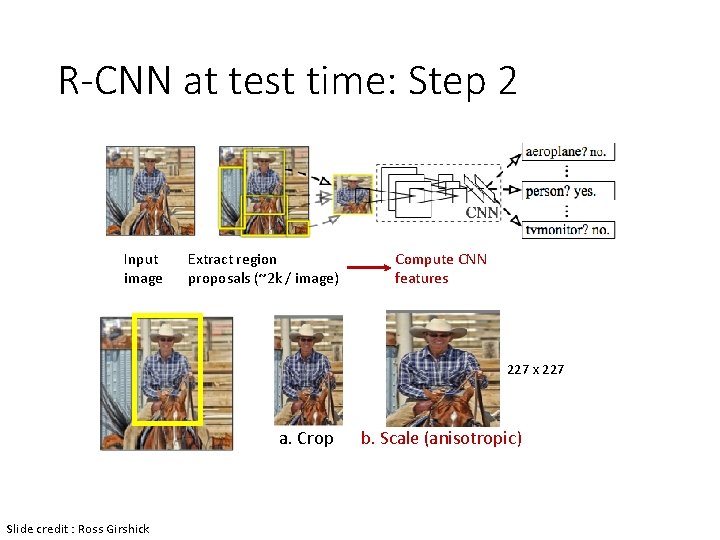

R-CNN at test time: Step 2
Input image → extract region proposals (~2k / image) → compute CNN features
a. Crop
b. Scale (anisotropic) to 227 x 227
Slide credit: Ross Girshick
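Cropping a proposal and scaling it anisotropically means the height and width are resized by independent factors, so the aspect ratio is not preserved. The sketch below assumes nearest-neighbor sampling and a box given as `(x0, y0, x1, y1)` with exclusive upper bounds; both are illustrative choices, and the slides do not say which interpolation R-CNN uses.

```python
def crop_and_warp(image, box, out_h, out_w):
    """Crop box = (x0, y0, x1, y1) from a 2-D image and resize it to out_h x out_w."""
    x0, y0, x1, y1 = box
    crop = [row[x0:x1] for row in image[y0:y1]]
    h, w = len(crop), len(crop[0])
    # Height and width use separate scale factors (anisotropic scaling).
    return [[crop[i * h // out_h][j * w // out_w] for j in range(out_w)]
            for i in range(out_h)]

# Toy 4 x 6 image whose pixel value encodes its position: 10 * row + column.
image = [[10 * r + c for c in range(6)] for r in range(4)]
small = crop_and_warp(image, (1, 0, 3, 4), 2, 2)      # [[1, 2], [21, 22]]
warped = crop_and_warp(image, (0, 0, 6, 4), 227, 227)  # fixed CNN input size
```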

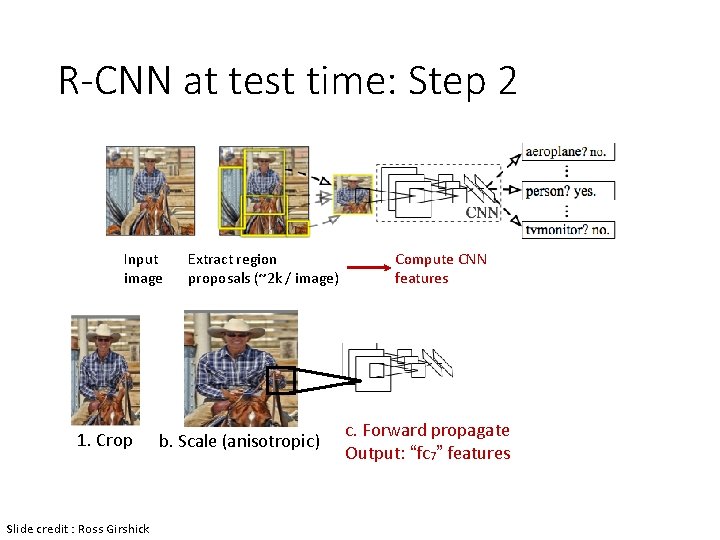

R-CNN at test time: Step 2
Input image → extract region proposals (~2k / image) → compute CNN features
a. Crop
b. Scale (anisotropic)
c. Forward propagate. Output: “fc7” features
Slide credit: Ross Girshick

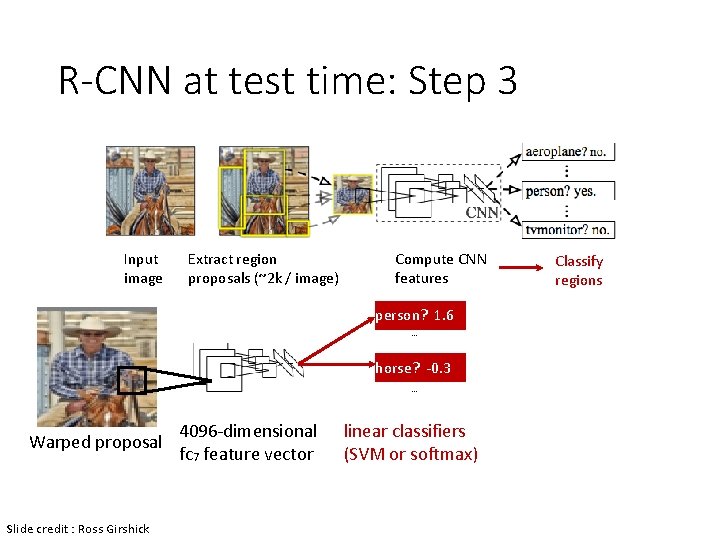

R-CNN at test time: Step 3
Input image → extract region proposals (~2k / image) → compute CNN features → classify regions
Warped proposal → 4096-dimensional fc7 feature vector → linear classifiers (SVM or softmax) → person? 1.6 . . . horse? -0.3 . . .
Slide credit: Ross Girshick

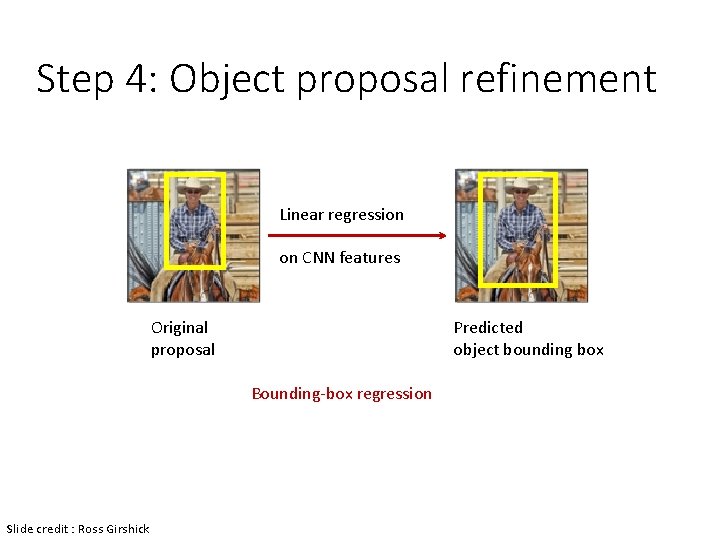

Step 4: Object proposal refinement
Bounding-box regression: linear regression on CNN features maps the original proposal to the predicted object bounding box
Slide credit: Ross Girshick
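The slide does not spell out what the regressor predicts. The sketch below assumes the parametrization described in the R-CNN paper: center offsets scaled by the proposal size, and log-space width and height ratios. The function names are hypothetical, and the linear regressor that would predict these targets from CNN features is omitted.

```python
import math

def to_center(box):
    """Convert (x0, y0, x1, y1) to (center x, center y, width, height)."""
    x0, y0, x1, y1 = box
    return (x0 + x1) / 2, (y0 + y1) / 2, x1 - x0, y1 - y0

def regression_targets(proposal, ground_truth):
    """Targets (tx, ty, tw, th) that map the proposal onto the ground-truth box."""
    px, py, pw, ph = to_center(proposal)
    gx, gy, gw, gh = to_center(ground_truth)
    return (gx - px) / pw, (gy - py) / ph, math.log(gw / pw), math.log(gh / ph)

def apply_deltas(proposal, deltas):
    """Apply predicted (tx, ty, tw, th) to a proposal to get the refined box."""
    px, py, pw, ph = to_center(proposal)
    tx, ty, tw, th = deltas
    cx, cy = px + pw * tx, py + ph * ty
    w, h = pw * math.exp(tw), ph * math.exp(th)
    return cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2

proposal, truth = (10, 10, 50, 90), (20, 15, 70, 95)
refined = apply_deltas(proposal, regression_targets(proposal, truth))
# refined recovers the ground-truth box up to floating-point error
```

Scaling the offsets by the proposal size makes the targets comparable across large and small boxes.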

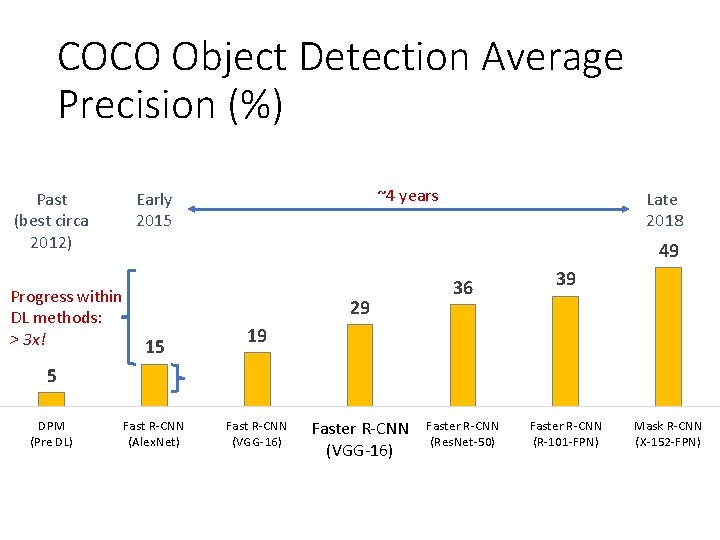

COCO Object Detection Average Precision (%) (bar chart)
• Bars, left to right: DPM (Pre DL), Fast R-CNN (AlexNet), Fast R-CNN (VGG-16), Faster R-CNN (ResNet-50), Faster R-CNN (R-101-FPN), Mask R-CNN (X-152-FPN)
• Values shown on the chart: 5, 15, 19, 29, 36, 39, 49
• Annotations: Past (best circa 2012); Early 2015; Late 2018; Progress within DL methods: > 3x in ~4 years

![Steady Progress on Boxes and Masks](http://slidetodoc.com/presentation_image_h2/7568680482485a568e5fcbf2b2532cb1/image-67.jpg)

Steady Progress on Boxes and Masks
• R-CNN [Girshick et al. 2014]
• SPP-net [He et al. 2014]
• Fast R-CNN [Girshick 2015]
• Faster R-CNN [Ren et al. 2015]
• R-FCN [Dai et al. 2016]
• Feature Pyramid Networks + Faster R-CNN [Lin et al. 2017]
• Mask R-CNN [He et al. 2017]
• Training with Large Minibatches (MegDet) [Peng, Xiao, Li, et al. 2017]
• Cascade R-CNN [Cai & Vasconcelos 2018]
• …
(Background bar chart of COCO detection AP; bars: DPM (Pre DL), Fast R-CNN (AlexNet), Fast R-CNN (VGG-16), Faster R-CNN (ResNet-50), Faster R-CNN (R-101-FPN), Mask R-CNN (X-152-FPN); values shown: 5, 15, 19, 29, 36, 39, 46)

Semantic segmentation (region labels: sky, mountain, building, tree, building, lamp, umbrella, person, market stall, person, ground). Adapted from Fei-Fei Li

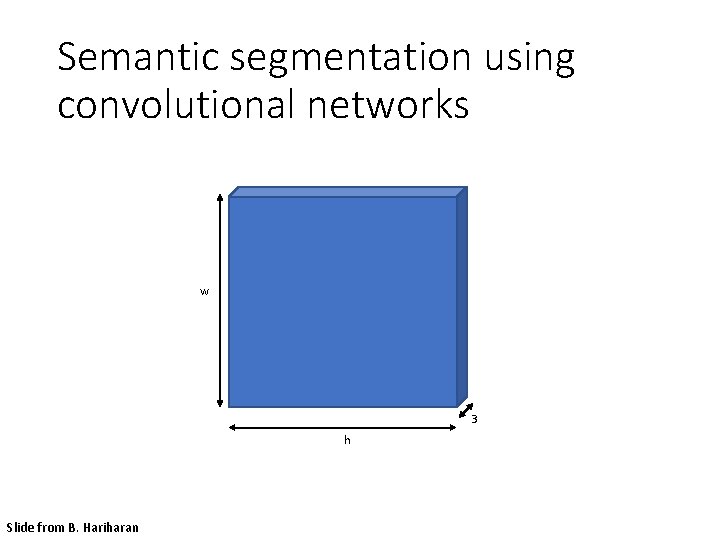

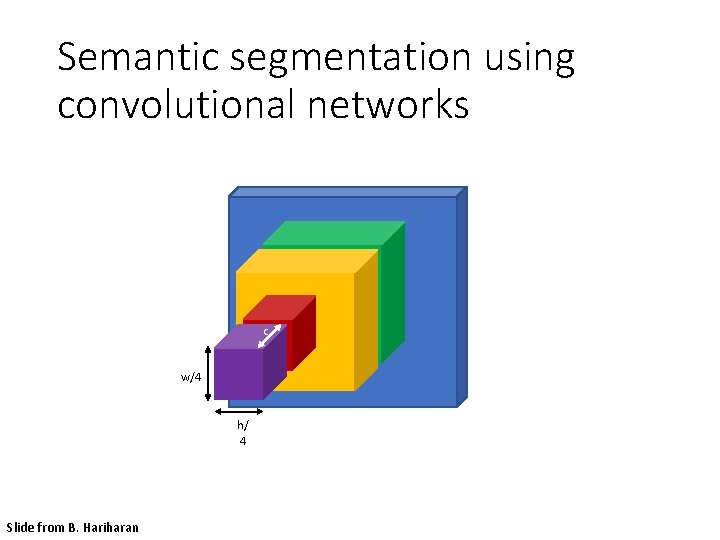

Semantic segmentation using convolutional networks: input image of size h x w x 3. Slide from B. Hariharan

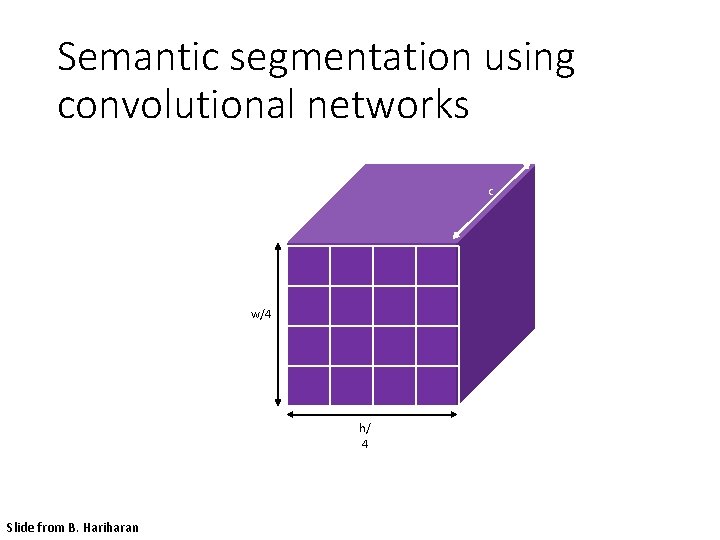

Semantic segmentation using convolutional networks: feature map of size h/4 x w/4 x c. Slide from B. Hariharan

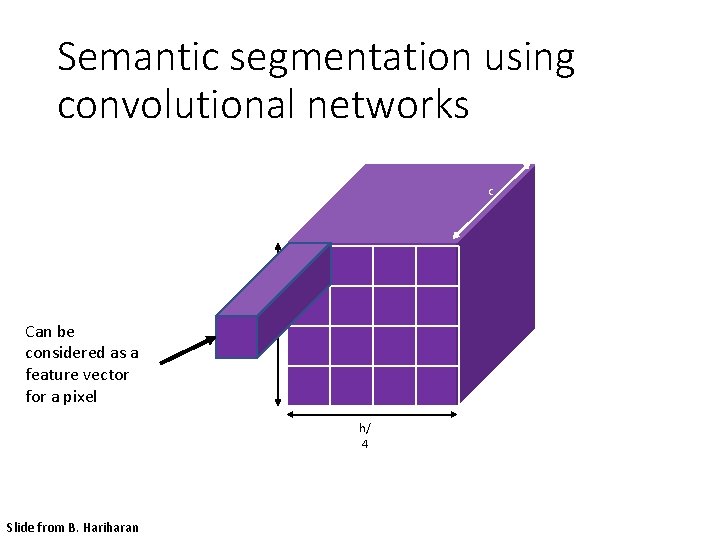

Semantic segmentation using convolutional networks: feature map of size h/4 x w/4 x c. Each c-dimensional column can be considered as a feature vector for a pixel. Slide from B. Hariharan

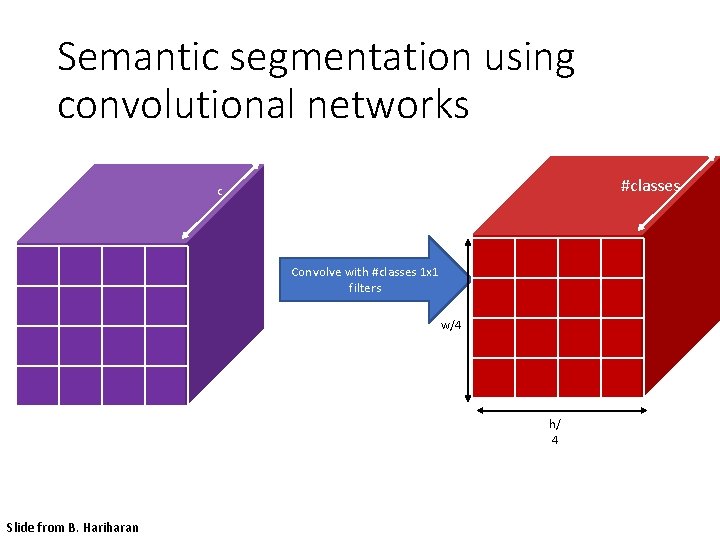

Semantic segmentation using convolutional networks: convolve the h/4 x w/4 x c feature map with #classes 1 x 1 filters to get an h/4 x w/4 x #classes output. Slide from B. Hariharan

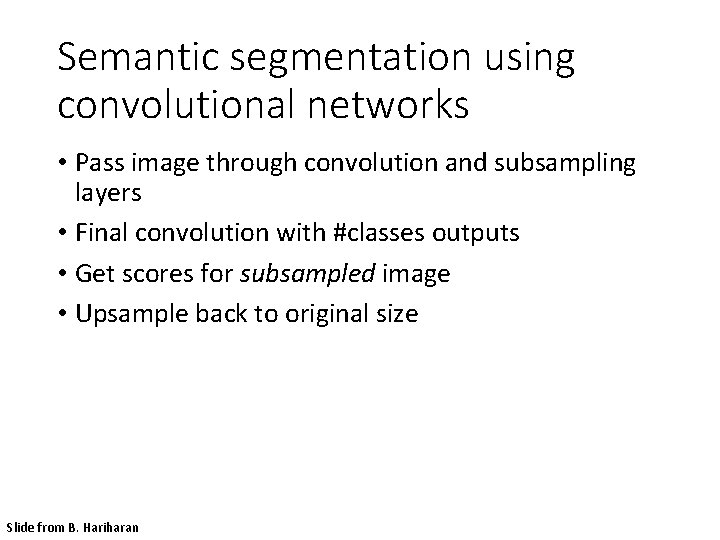

Semantic segmentation using convolutional networks
• Pass image through convolution and subsampling layers
• Final convolution with #classes outputs
• Get scores for subsampled image
• Upsample back to original size
Slide from B. Hariharan
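The last three steps above can be sketched on nested lists. This is a sketch under assumptions: the feature map is taken as given (the convolution and subsampling layers are not shown), upsampling is nearest-neighbor (the slides do not specify a method), and all function names are hypothetical.

```python
def conv1x1(features, filters):
    """features: H x W x C; filters: num_classes x C. Returns H x W x num_classes scores."""
    return [[[sum(f * w for f, w in zip(pixel, filt)) for filt in filters]
             for pixel in row]
            for row in features]

def upsample_nearest(scores, factor):
    """Repeat each score vector factor times along both spatial axes."""
    H, W = len(scores), len(scores[0])
    return [[scores[i // factor][j // factor] for j in range(W * factor)]
            for i in range(H * factor)]

def label_map(scores):
    """Pick the highest-scoring class index at every pixel."""
    return [[max(range(len(p)), key=p.__getitem__) for p in row] for row in scores]

# Toy 1 x 2 feature map with c = 2 channels, standing in for an h/4 x w/4 x c map.
features = [[[1.0, 0.0], [0.0, 1.0]]]
filters = [[1.0, 0.0], [0.0, 1.0]]        # one 1 x 1 filter per class
labels = label_map(upsample_nearest(conv1x1(features, filters), 4))
# labels is 4 x 8: the left half is class 0, the right half is class 1
```

A 1 x 1 filter is just a linear classifier applied to each pixel's feature vector, which is why the output has one channel per class.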

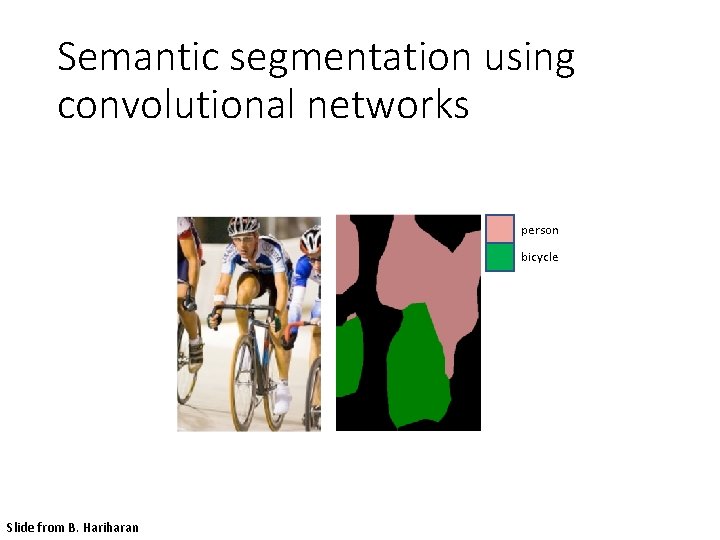

Semantic segmentation using convolutional networks person bicycle Slide from B. Hariharan

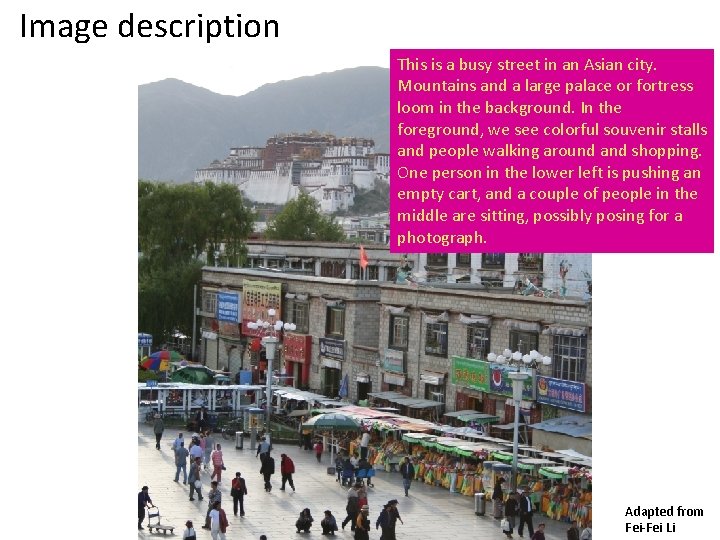

Image description This is a busy street in an Asian city. Mountains and a large palace or fortress loom in the background. In the foreground, we see colorful souvenir stalls and people walking around and shopping. One person in the lower left is pushing an empty cart, and a couple of people in the middle are sitting, possibly posing for a photograph. Adapted from Fei-Fei Li

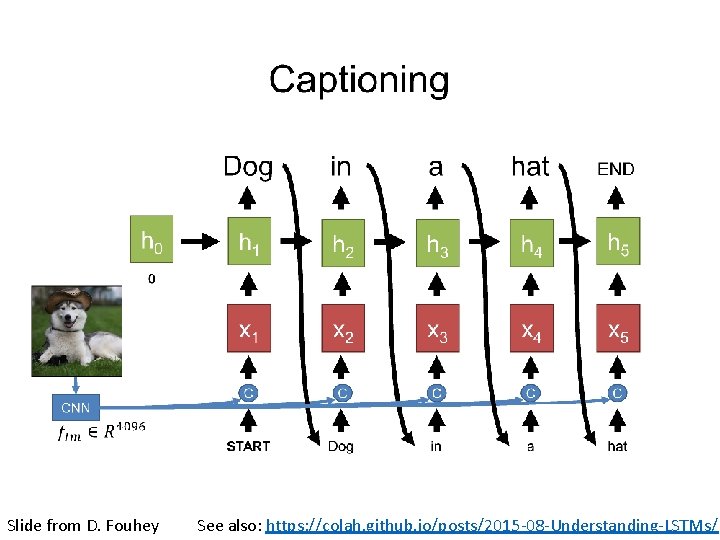

Slide from D. Fouhey. See also: https://colah.github.io/posts/2015-08-Understanding-LSTMs/

Vision Review
• The vision problem
• Why is vision hard?
• 3D Reconstruction (basis from SLAM)
• Recognition
Additional Resources:
• See CS 543 / ECE 549 at UIUC: http://saurabhg.web.illinois.edu/teaching/ece549/sp2020/
• See CS 231n at Stanford: http://cs231n.stanford.edu/
• See A. Davison’s Robotics course: https://www.doc.ic.ac.uk/~ajd/Robotics/
