CHAPTER 7 Touch Gestures

Chapter objectives:
• To code the detection of, and response to, touch gestures
• To learn the patterns of common touches that create touch gestures an Android device can interpret
• To use MotionEvents
• To understand the differences between touch events and motion events
• To build applications using multi-touch gestures

7.1 Touchscreens
• Most Android devices are equipped with a capacitive touchscreen
• Capacitive touchscreens rely on the electrical properties of the human body to detect when and where on a display the user is touching
• Capacitive technology provides numerous design opportunities for Android developers

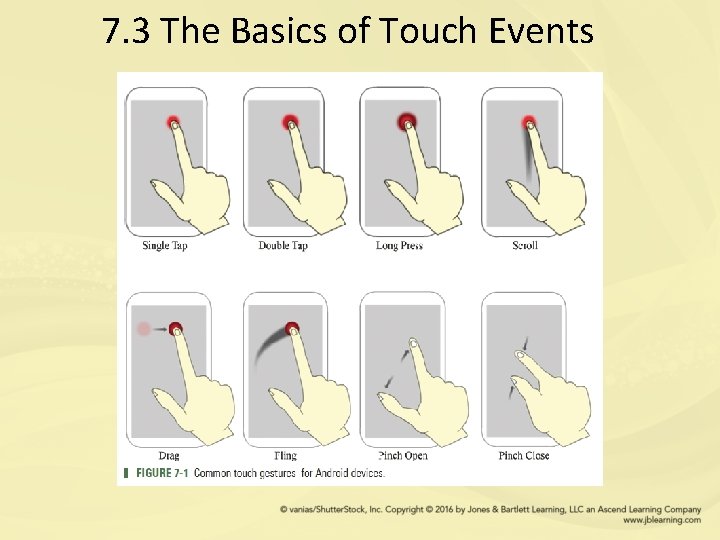

7.2 Touch Gestures
• Gestures are the primary way in which users interact with most Android devices
• Touch gestures represent a fundamental form of communication with an Android device
• A touch gesture is an action, typically a movement of a user’s finger on a touchscreen

7.3 The Basics of Touch Events

• The MotionEvent class provides a collection of methods to report on the properties of a given touch gesture
• Motion events describe movements in terms of an action code and a set of axis values
• Each action code specifies a state change produced by a touch occurrence, such as a pointer going down or up
• The axis values describe the position and movement properties

• Three basic MotionEvent actions can be combined to create touch gestures:
• ACTION_DOWN
• ACTION_MOVE
• ACTION_UP
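As a rough illustration of how these three action codes combine into gestures, the following plain-Java sketch classifies a sequence of codes as a tap or a drag. The class `ActionSequenceClassifier` and its `classify` method are hypothetical names invented for this example. They are not part of the Android SDK, although the integer values mirror the `MotionEvent` constants. A real app would also apply a movement threshold before treating small finger jitter as a drag.

```java
import java.util.Arrays;
import java.util.List;

// Hypothetical helper for illustration only; not an Android class.
final class ActionSequenceClassifier {
    // Same integer values as the constants in android.view.MotionEvent.
    static final int ACTION_DOWN = 0;
    static final int ACTION_UP = 1;
    static final int ACTION_MOVE = 2;

    /** Returns "tap", "drag", or "incomplete" for a stream of action codes. */
    static String classify(List<Integer> actions) {
        int last = actions.size() - 1;
        // A complete gesture starts with a pointer going down and ends with it going up.
        if (last < 1 || actions.get(0) != ACTION_DOWN || actions.get(last) != ACTION_UP) {
            return "incomplete";
        }
        boolean moved = false;
        for (int i = 1; i < last; i++) {
            // Only move events are expected between the down and the up.
            if (actions.get(i) != ACTION_MOVE) {
                return "incomplete";
            }
            moved = true;
        }
        return moved ? "drag" : "tap";
    }

    public static void main(String[] args) {
        System.out.println(classify(Arrays.asList(ACTION_DOWN, ACTION_UP)));
        System.out.println(classify(Arrays.asList(ACTION_DOWN, ACTION_MOVE, ACTION_MOVE, ACTION_UP)));
    }
}
```

A down immediately followed by an up is reported as a tap, while any move events in between turn the same sequence into a drag.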

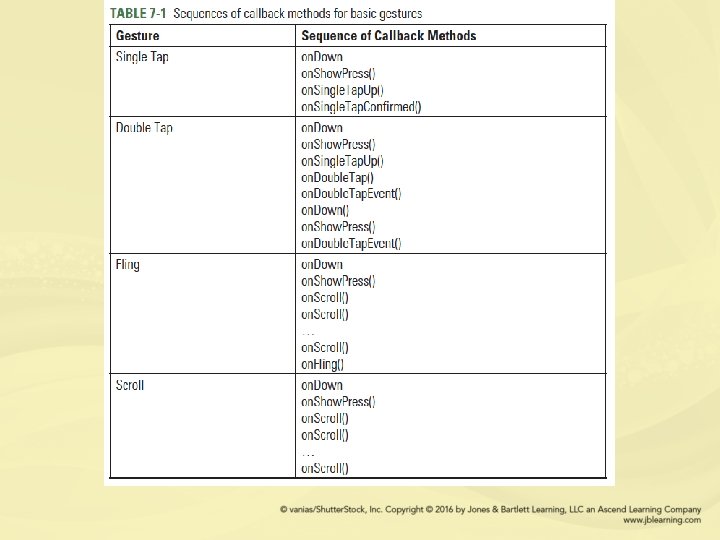

7.4 Gesture Detector
• The easiest approach to detecting specific touch gestures is to use the GestureDetector class
• The application passes each motion event to the GestureDetector’s onTouchEvent() method, and the detector uses the OnGestureListener callbacks to signal when a gesture occurs
• The onTouchEvent() method determines exactly what action patterns are occurring on the screen
• The scroll events produced by a fling gesture provide information about the velocity of a moving finger and the distance it has traveled on the screen
• A completed fling gesture triggers the following callback methods: onDown(), onScroll(), and onFling()

• GestureDetector has limited use in Android applications because it cannot handle all types of gestures
• onDown() is called automatically when a tap occurs and receives the down MotionEvent that triggered it
• onLongPress() is called when a long press occurs and receives the initial down MotionEvent that triggered it
• onSingleTapUp() is called when a tap gesture takes place and receives the up MotionEvent that triggered it

• Similar to the onSingleTapUp() callback, onSingleTapConfirmed() is called when a detected tap gesture is confirmed by the system as a single tap and not part of a double-tap gesture

7.5 The MotionEvent Class
• The GestureDetector class allows basic detection of common gestures
• This type of gesture detection is suitable for applications that require simple gestures
• The GestureDetector class is not designed for handling complicated gestures
• A more sophisticated form of gesture detection is to register an OnTouchListener event handler on a specific View, such as a graphic object on stage that can be dragged
• To provide touch event notification, the onTouchEvent() method can be overridden for an Activity or touchable View

• The MotionEvent class provides a collection of methods to query the position and other properties of fingers used in a gesture
• getX(): Returns the x-axis coordinate value at the finger’s location on the screen
• getY(): Returns the y-axis coordinate value at the finger’s location on the screen
• getDownTime(): Returns the time when the user initially pressed down to begin a series of events
• getXPrecision(): Returns the precision of the X coordinate
• getAction(): Returns the type of action being performed by the user, such as ACTION_DOWN

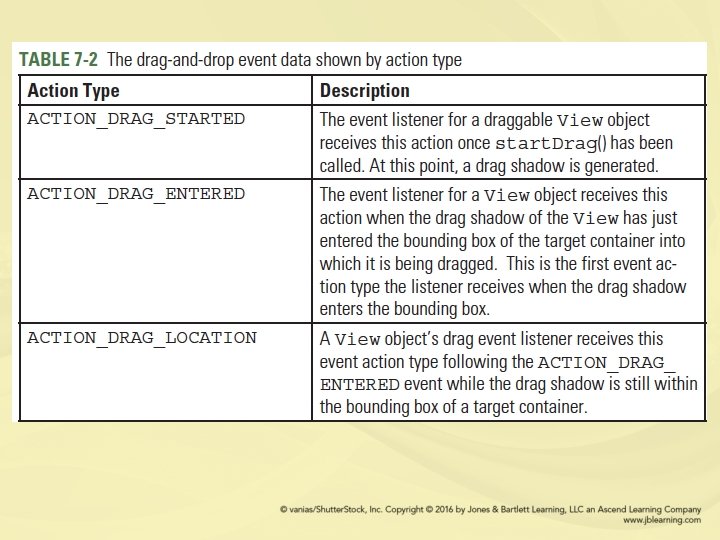

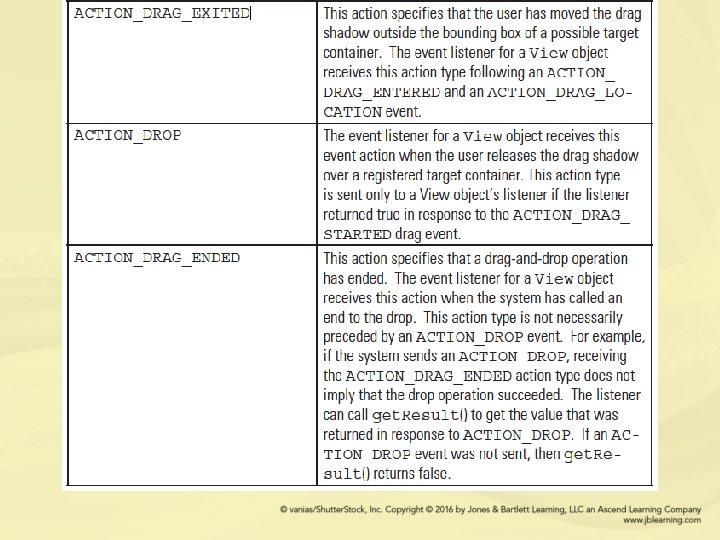

7.6 The Drag and Drop Gesture
• Drag-and-drop is the action of tapping on a virtual object and dragging it to a different location
• Drag-and-drop is a gesture that is most often associated with methods of data transfer
• This gesture assumes that a drag source and a drop target exist

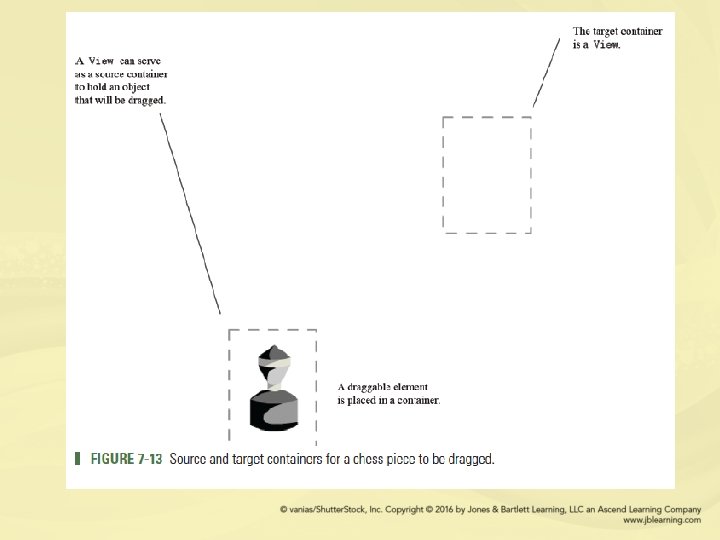

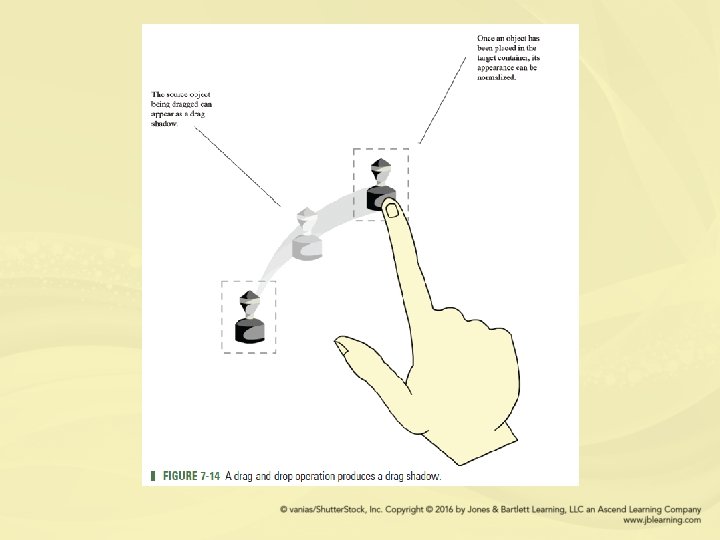

• The Android framework for drag-and-drop includes a drag event class, drag listeners, and helper methods and classes
• Source and target containers must be created to hold the View elements that will be dragged and eventually dropped
• As Figure 7-13 shows, a basic drag-and-drop design relies on at least two Views

• All elements intended to be moveable objects in a drag-and-drop process must be registered with an appropriate listener
• A touch listener must be attached to each draggable View by calling setOnTouchListener()
• This registers a callback to be invoked when a touch event is sent to the draggable View object
• A view container that functions as a source or target container must be registered with an explicit “on drag” listener

• During a drag-and-drop operation, the system provides a separate image that the user drags
• For data movement, this image represents a copy of the object being dragged
• This mechanism makes it clear to the user that an object is in the process of being dragged and has not yet been placed in its final target location
• This dragged image is called a drag shadow because it is a shadow version of the object being dragged

7.7 Fling Gesture
• A fling is a core touchscreen gesture that is also known as a swipe
• A fling is a quick swiping movement of a finger across a touchscreen
• As with other gestures, the MotionEvent object can be used to report a fling event
• The motion events describe the fling movement in terms of an action code and a set of axis values describing movement properties

• For common gestures, such as a fling, Android provides the GestureDetector class, which can be used in tandem with the onTouchEvent() callback method
• An application Activity can implement the GestureDetector.OnGestureListener interface to identify when a specific touch event has occurred
• Touch events received in the overridden onTouchEvent() callback are handed off to the GestureDetector, which invokes the listener callbacks when it recognizes a gesture

7.8 Fling Velocity
• Tracking the movement in a fling gesture requires the start and end positions of the finger, and the velocity of the movement across the touchscreen
• The direction of a fling can be determined by the x and y coordinates captured by the ACTION_DOWN and ACTION_UP MotionEvents and the resulting velocities

• VelocityTracker is an Android helper class for tracking the velocity of touch events, including the implementation of a fling
• In applications such as games, a fling is a movement-based gesture that produces behavior based on the distance and direction a finger travels
• The VelocityTracker class is designed to simplify velocity calculations in movement-based gestures
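The arithmetic behind fling direction and velocity can be sketched in plain Java. This is only an illustration of the calculation, not how VelocityTracker is implemented. The class `FlingMath` and its methods are hypothetical names, and the coordinates and timestamps are assumed to come from the ACTION_DOWN and ACTION_UP events. Screen coordinates are assumed to grow to the right and downward.

```java
// Hypothetical helper for illustration only; not an Android class.
final class FlingMath {
    /** Average velocity along one axis, in pixels per second. */
    static double velocity(float start, float end, long startMillis, long endMillis) {
        long elapsed = endMillis - startMillis;
        if (elapsed <= 0) {
            return 0.0; // No measurable time passed, so no velocity can be computed.
        }
        return (end - start) * 1000.0 / elapsed;
    }

    /** Dominant direction of travel between the down and up positions. */
    static String direction(float downX, float downY, float upX, float upY) {
        float dx = upX - downX;
        float dy = upY - downY;
        if (Math.abs(dx) >= Math.abs(dy)) {
            return dx >= 0 ? "RIGHT" : "LEFT";
        }
        // The y axis grows downward on the screen.
        return dy >= 0 ? "DOWN" : "UP";
    }

    public static void main(String[] args) {
        // Finger went down at (100, 500) at t = 0 ms and lifted at (400, 520) at t = 150 ms.
        System.out.println(direction(100f, 500f, 400f, 520f));
        System.out.println(velocity(100f, 400f, 0L, 150L));
    }
}
```

In this example the finger travels 300 pixels horizontally in 150 ms, which is 2000 pixels per second to the right. An app would typically compare such a value against a minimum velocity before treating the movement as a fling.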

7.9 Multi-Touch Gestures
• A pinch gesture involves two fingers placed on the screen
• The finger positions, and the distance between them, are recorded
• When the fingers are lifted from the screen, the distance separating them is recorded again
• If the second recorded distance is less than the first distance, the gesture is recognized as a pinch

• A spread gesture is similar to a pinch gesture, in that it also records the start and end distance between the fingers on the touchscreen
• If the second recorded distance is greater than the first, the gesture is recognized as a spread
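The pinch and spread comparison described above can be sketched in plain Java. The class `TwoFingerGesture` and its methods are hypothetical names used for illustration. The finger coordinates are assumed to have been captured when the fingers touched down and again when they lifted.

```java
// Hypothetical helper for illustration only; not an Android class.
final class TwoFingerGesture {
    /** Straight-line distance between two finger positions, in pixels. */
    static double distance(float x1, float y1, float x2, float y2) {
        return Math.hypot(x2 - x1, y2 - y1);
    }

    /** Compares the start and end distances between the two fingers. */
    static String classify(double startDistance, double endDistance) {
        if (endDistance < startDistance) {
            return "pinch";  // Fingers moved closer together.
        }
        if (endDistance > startDistance) {
            return "spread"; // Fingers moved farther apart.
        }
        return "none";       // Distance unchanged.
    }

    public static void main(String[] args) {
        double start = distance(100f, 100f, 400f, 500f); // fingers 500 px apart
        double end = distance(200f, 250f, 260f, 330f);   // fingers 100 px apart
        System.out.println(classify(start, end));
    }
}
```

Here the fingers start 500 pixels apart and end 100 pixels apart, so the gesture is classified as a pinch. Swapping the two distances gives a spread.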
