Budgeted Learning of Naïve Bayes Classifiers

Daniel Lizotte

22 July 2003

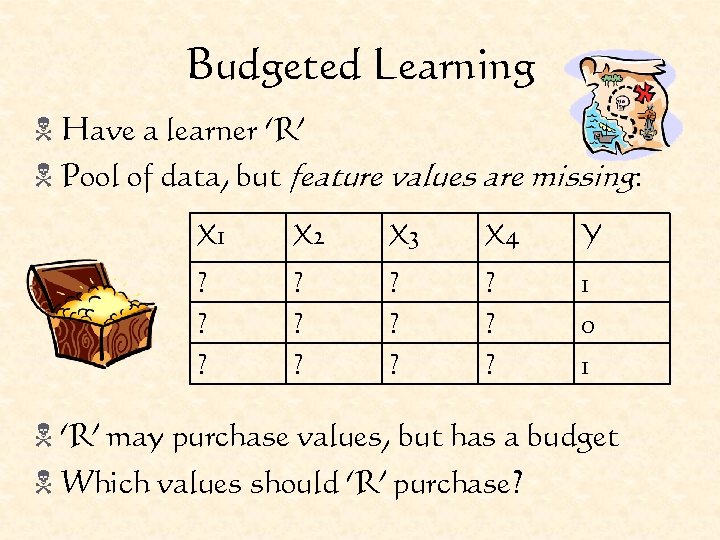

Budgeted Learning

- Have a learner ‘R’
- Pool of data, but feature values are missing: a table with columns $X_1$, $X_2$, $X_3$, $X_4$, $Y$ in which missing feature values are shown as "?"
- ‘R’ may purchase values, but has a budget
- Which values should ‘R’ purchase?

Motivation

- Medical domain: Cancer subtypes
- Different subtypes respond differently to different treatments
- We have: a pool of patients whose subtype is known from trying different treatments
- We want: a classifier that takes as input the results of various tests and outputs a subtype
- These tests cost money.

Related Work

- Bandit Problems
  - Play slot machines to maximize $$
  - Discounted reward structure
- Hoeffding Races
  - Choose the best model (classifier)
  - Eliminate models that are likely bad
- Active Learning
  - Make queries to minimize error
  - Most approaches are greedy

Problem Characteristics

- Need to make decisions: Which feature to purchase, from which class value?
- Precisely: Which distribution do we sample from?
- Uncertain about the outcome (and therefore usefulness) of any purchase (action).
- Budgeted learning is Decision Making under Uncertainty
- Papadimitriou would call it a ‘Game Against Nature’

The Coins Problem

- Have a bunch of coins:
  - Each has a probability of turning up heads when flipped. Call this $q_i$.
- Problem: You are allowed $b$ flips. Which coin has the highest value $q_i$?
- Your loss for reporting coin $r$ is: $\ell = q_{\max} - q_r$

Using Posteriors

- We define distributions over $q_i$ that are influenced by incoming data
- Using these posterior distributions, we can calculate things like:
  - What is $P(\text{coin } r \text{ is really the best})$?
  - What is $E(q_{\max} - q_r)$ (i.e., $E(\ell)$) if we pick coin $r$?
- Theme of the day: Minimize Expected Loss
  - $\arg\min_r E(\ell) = \arg\max_r E(q_r)$, because $E(q_{\max})$ does not depend on $r$
  - Always report the coin with maximum $E(q_r)$
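
A minimal sketch of these posterior calculations, assuming each coin starts from a uniform Beta(1, 1) prior (the prior is an assumption, not stated on the slide) and that a state is a list of `(a, b)` Beta parameters. The function names are hypothetical, and the two expectations are estimated by Monte Carlo sampling rather than computed exactly.

```python
import random

def update(a, b, heads):
    """Beta(a, b) posterior after observing one more flip."""
    return (a + 1, b) if heads else (a, b + 1)

def expected_q(a, b):
    """Posterior mean E(q) under Beta(a, b)."""
    return a / (a + b)

def sample_all(state, rng):
    """Draw one plausible value of q_i for every coin."""
    return [rng.betavariate(a, b) for a, b in state]

def prob_best(state, r, samples=20000, seed=0):
    """Monte Carlo estimate of P(coin r is really the best)."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(samples):
        qs = sample_all(state, rng)
        if qs[r] == max(qs):
            wins += 1
    return wins / samples

def expected_loss(state, r, samples=20000, seed=0):
    """Monte Carlo estimate of E(q_max - q_r) if coin r is reported."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(samples):
        qs = sample_all(state, rng)
        total += max(qs) - qs[r]
    return total / samples

def coin_to_report(state):
    """Report the coin with maximum posterior mean E(q_r)."""
    return max(range(len(state)), key=lambda i: expected_q(*state[i]))
```

For example, with `state = [(1, 1), (3, 1), (1, 3)]`, `coin_to_report(state)` picks the second coin (index 1), which also has the smallest estimated expected loss.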

States, Actions, and Policies

- $s = \{(a_1, b_1), (a_2, b_2), \ldots, (a_n, b_n)\}$
- $\mathrm{act}_i$ = flip coin $i$ (move to new $s$)
- $\pi: S \to A$
- If $s$ is an end state (i.e., no more budget), get a loss of $\ell(s)$ [reward of $-\ell(s)$]
- $\ell(s)$: loss of reporting the best coin in state $s$
- This is a Markov Decision Process (MDP)
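
The transition structure of this MDP can be sketched as follows. The sketch assumes a state is a tuple of `(a, b)` Beta parameters and that the probability of heads is taken to be the posterior mean $a_i / (a_i + b_i)$; the function name `successors` is hypothetical.

```python
def successors(state, i):
    """Return [(probability, next_state), ...] for the action 'flip coin i'.

    `state` is a tuple of (a, b) pairs; heads increments a_i, tails increments b_i.
    """
    a, b = state[i]
    p_heads = a / (a + b)  # posterior predictive probability of heads
    heads = state[:i] + ((a + 1, b),) + state[i + 1:]
    tails = state[:i] + ((a, b + 1),) + state[i + 1:]
    return [(p_heads, heads), (1 - p_heads, tails)]
```

Each action has exactly two outcomes, so a budget of $b$ flips over $n$ coins gives a tree with branching factor $2n$ and depth $b$.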

Evaluating Policies

- We can calculate the expected loss of a policy, $E(\ell(\pi))$
- There exists a policy $\pi^*$ that minimizes $E(\ell(\pi))$
- We can compute this by evaluating $\ell$ at all possible end states (‘leaves’) and ‘backing up’ the values. There are exponentially many end states.
- The probability of reaching an end state can be computed from the current posteriors
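
A sketch of the backing-up computation for very small problems, under stated assumptions: states are tuples of Beta `(a, b)` parameters, and the backed-up quantity is the expected value of $\max_r E(q_r)$ at the end state. Maximizing that quantity is equivalent to minimizing expected loss, because by the law of total expectation $E(q_{\max})$ is the same for every policy. The function name is hypothetical, and the recursion is only practical for tiny budgets.

```python
from functools import lru_cache

def optimal_value(state, budget):
    """Return (value, first_action) of the optimal policy by exhaustive backup.

    value is the expected max posterior mean at the end state;
    first_action is None when no budget remains.
    """
    @lru_cache(maxsize=None)
    def value(s, b):
        if b == 0:
            # End state: report the coin with the highest posterior mean.
            return max(a / (a + c) for a, c in s), None
        best_v, best_i = -1.0, None
        for i, (a, c) in enumerate(s):
            p = a / (a + c)  # probability of heads under the posterior
            heads = s[:i] + ((a + 1, c),) + s[i + 1:]
            tails = s[:i] + ((a, c + 1),) + s[i + 1:]
            v = p * value(heads, b - 1)[0] + (1 - p) * value(tails, b - 1)[0]
            if v > best_v:
                best_v, best_i = v, i
        return best_v, best_i

    return value(tuple(tuple(coin) for coin in state), budget)
```

For two coins with uniform priors and a budget of one flip, `optimal_value(((1, 1), (1, 1)), 1)` gives a value of 7/12, up from 1/2 with no flips.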

Simpler Policies

- Goal: Develop simpler, tractable policies that perform well
- Ideas:
  - Round Robin
  - Biased Robin
  - Greedy
  - Single Coin Lookahead

The Robins

- Round Robin
  - Flip coin $i$, $i+1$, $i+2$, … until the budget runs out.
  - Results in a uniform allocation of the budget
  - Simplest policy
  - Theoretical behaviour discussed in Section 3.4.3
- Biased Robin
  - Flip coin $i$.
  - If ‘HEADS’, flip it again
  - If ‘TAILS’, flip coin $i+1$
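
Both Robins can be sketched as short simulations. The sketch assumes Beta(1, 1) starting counts, that coin indices wrap around after the last coin (the slide does not say what happens after coin $n$), and that `true_q` holds the hidden head probabilities used only to simulate flips. All names are hypothetical.

```python
import random

def flip(true_q, i, rng):
    """Simulate one flip of coin i; True means heads."""
    return rng.random() < true_q[i]

def round_robin(true_q, budget, rng):
    """Flip coins 0, 1, 2, ... in turn until the budget runs out."""
    n = len(true_q)
    state = [[1, 1] for _ in range(n)]  # [a, b] counts per coin
    for t in range(budget):
        i = t % n
        state[i][0 if flip(true_q, i, rng) else 1] += 1
    return state

def biased_robin(true_q, budget, rng):
    """Stay on the current coin after heads; move to the next coin after tails."""
    n = len(true_q)
    state = [[1, 1] for _ in range(n)]
    i = 0
    for _ in range(budget):
        heads = flip(true_q, i, rng)
        state[i][0 if heads else 1] += 1
        if not heads:
            i = (i + 1) % n  # wrap-around is an assumption
    return state
```

Round Robin ignores the outcomes entirely, while Biased Robin concentrates flips on coins that keep coming up heads.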

Greedy Policy

- For each coin $i$, compute the expected loss immediately after flipping it.
- $E(\ell(\mathrm{act}_i)) = q_i \cdot \ell(s \mid a_i{+}{+}) + (1 - q_i) \cdot \ell(s \mid b_i{+}{+})$
- Flip the coin with lowest expected loss
- Equivalent to optimal policy for $b = 1$
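
A sketch of the greedy choice, assuming $q_i$ is estimated by the posterior mean $a_i / (a_i + b_i)$ and that states are sequences of Beta `(a, b)` pairs. Instead of the loss itself, the code scores each flip by the expected best posterior mean afterwards; this ranks the coins identically, because $E(q_{\max})$ is the same whichever coin is flipped. The function names are hypothetical.

```python
def best_mean(state):
    """Highest posterior mean among all coins."""
    return max(a / (a + b) for a, b in state)

def value_after_flip(state, i):
    """Expected best posterior mean immediately after flipping coin i once."""
    state = list(state)
    a, b = state[i]
    q = a / (a + b)  # posterior-mean estimate of q_i
    heads = state[:i] + [(a + 1, b)] + state[i + 1:]
    tails = state[:i] + [(a, b + 1)] + state[i + 1:]
    return q * best_mean(heads) + (1 - q) * best_mean(tails)

def greedy_choice(state):
    """Return (coin index, value) for the flip with the lowest expected loss."""
    values = [value_after_flip(state, i) for i in range(len(state))]
    i = max(range(len(values)), key=values.__getitem__)
    return i, values[i]
```

With `state = [(1, 1), (2, 2)]`, both coins have posterior mean 1/2, but flipping the less-explored first coin has the higher one-step value (7/12 versus 0.55), so greedy picks it.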

Single Coin Lookahead

- Imagine spending the entire remaining budget on one coin
- This policy has $b + 1$ possible outcomes
- Calculate the expected loss of this policy for each coin $i$
- Flip the coin with the lowest single coin allocation loss ONCE
- Repeat
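
A sketch of one Single Coin Lookahead decision, under stated assumptions: the number of heads in the remaining flips follows the Beta-binomial distribution implied by the coin's Beta `(a, b)` posterior, and coins are ranked by the expected best posterior mean after the allocation (equivalent to ranking by expected loss, since $E(q_{\max})$ does not depend on the allocation). The function names are hypothetical.

```python
from math import comb, exp, lgamma

def log_beta(x, y):
    """Logarithm of the Beta function B(x, y)."""
    return lgamma(x) + lgamma(y) - lgamma(x + y)

def heads_pmf(a, b, n, k):
    """Beta-binomial P(k heads in n flips) under a Beta(a, b) posterior."""
    return comb(n, k) * exp(log_beta(a + k, b + n - k) - log_beta(a, b))

def scl_value(state, i, budget):
    """Expected best posterior mean if the whole budget is spent on coin i."""
    a, b = state[i]
    best_other = max(
        (x / (x + y) for j, (x, y) in enumerate(state) if j != i), default=0.0
    )
    total = 0.0
    for k in range(budget + 1):  # the budget + 1 possible outcomes
        mean_i = (a + k) / (a + b + budget)
        total += heads_pmf(a, b, budget, k) * max(mean_i, best_other)
    return total

def scl_choice(state, budget):
    """Coin to flip once now; the caller then repeats with budget - 1."""
    return max(range(len(state)), key=lambda i: scl_value(state, i, budget))
```

Only one flip is actually taken per decision; the full-budget allocation is imagined purely to score the coins.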

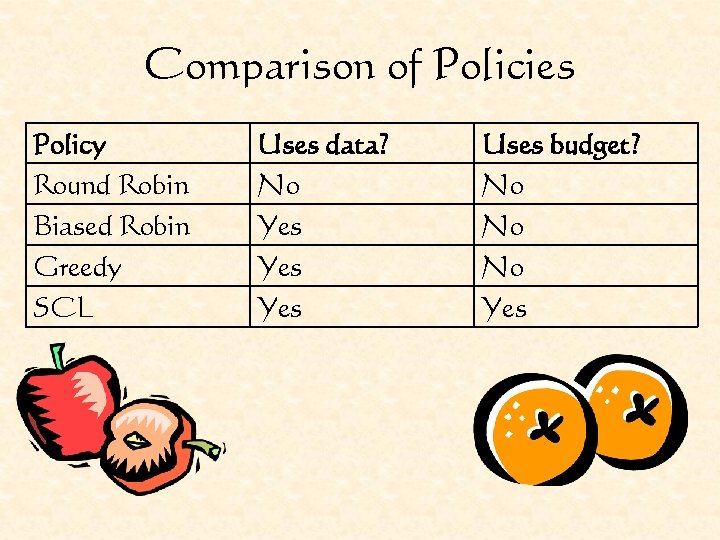

Comparison of Policies

| Policy | Round Robin | Biased Robin | Greedy | SCL |
| --- | --- | --- | --- | --- |
| Uses data? | No | Yes | Yes | Yes |
| Uses budget? | No | No | No | Yes |

Empirical Evaluation

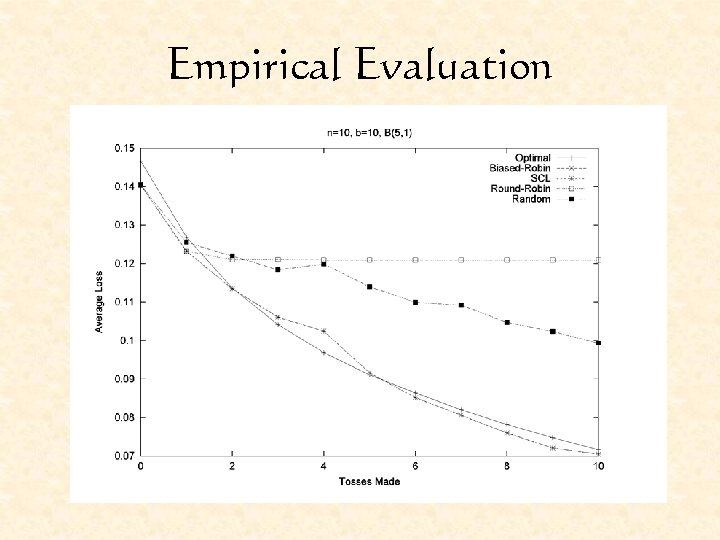

Coins to NB

- Flipping a coin becomes querying a feature
- Twice as many choices: for each query, we decide which feature, and what the class label should be. $\mathrm{act}_{ij}$ means query from $P(X_i \mid Y_j)$.
- Two beta distributions for each $X_i$, one for $Y=1$, one for $Y=0$
- Distributions are updated from counts of $X_i = 1$ or $0$, exactly as for the coins problem.
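
The bookkeeping for the naïve Bayes case can be sketched as one pair of Beta counts per (feature, class) combination. The sketch assumes binary features and labels and Beta(1, 1) starting counts; the dictionary layout and function names are hypothetical.

```python
def new_state(n_features):
    """One [a, b] count pair for every (feature i, class label j)."""
    return {(i, j): [1, 1] for i in range(n_features) for j in (0, 1)}

def purchase(state, i, j, value):
    """Record the purchased value of feature i on an instance with label j."""
    state[(i, j)][0 if value == 1 else 1] += 1

def p_feature_given_class(state, i, j):
    """Posterior-mean estimate of P(X_i = 1 | Y = j)."""
    a, b = state[(i, j)]
    return a / (a + b)
```

For instance, after `purchase(state, 0, 1, 1)` on a fresh state, the estimate of $P(X_0 = 1 \mid Y = 1)$ moves from 1/2 to 2/3 while every other estimate stays at 1/2.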

Coins to NB

- Loss is based not on a single feature, but on the entire classifier as a performance measure
- Loss is dependent on all parameters
- Choice of loss functions:
  - 0/1 loss
  - Entropy
  - Gini index
- We have chosen the Gini index
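
One plausible reading of a Gini-index loss for a binary naïve Bayes classifier is the Gini impurity $2p(1-p)$ of the predicted $P(Y=1 \mid x)$, averaged over all feature vectors $x$ weighted by $P(x)$. The slides do not give the exact definition, so the sketch below is an assumption, as are the names `theta` (where `theta[j][i]` stands for $P(X_i = 1 \mid Y = j)$) and `prior_y1`.

```python
from itertools import product

def nb_joint(theta, prior_y1, x):
    """Return [P(x, Y=0), P(x, Y=1)] under the naive Bayes factorization."""
    joint = []
    for j, p_y in ((0, 1 - prior_y1), (1, prior_y1)):
        p = p_y
        for i, xi in enumerate(x):
            p *= theta[j][i] if xi == 1 else 1 - theta[j][i]
        joint.append(p)
    return joint

def expected_gini(theta, prior_y1):
    """Average Gini impurity of P(Y | x), weighted by P(x), over all binary x."""
    n = len(theta[0])
    total = 0.0
    for x in product((0, 1), repeat=n):
        joint = nb_joint(theta, prior_y1, x)
        p_x = joint[0] + joint[1]
        if p_x == 0:
            continue  # impossible feature vector contributes nothing
        p1 = joint[1] / p_x
        total += p_x * 2 * p1 * (1 - p1)
    return total
```

Under this reading an uninformative feature set gives the maximum loss of 0.5, and a feature that determines the label exactly gives a loss of 0. The enumeration over all $2^n$ feature vectors is only practical for a handful of features.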

Again, The Robins

- Round Robin
  - Query feature $i$, $i+1$, $i+2$, … until the budget runs out.
  - Draw the label from $P(Y)$
  - Results in a uniform allocation of the budget
- Biased Robin
  - Query feature $i$.
  - If loss decreases, query it again
  - If loss increases, query feature $i+1$

Greedy Policy

- For each feature $i$ and class label $j$, compute the expected loss immediately after querying $P(X_i \mid Y_j)$
- $E(\ell(\mathrm{act}_{ij})) = P(X_i \mid Y_j) \cdot \ell(s \mid a_{ij}{+}{+}) + (1 - P(X_i \mid Y_j)) \cdot \ell(s \mid b_{ij}{+}{+})$
- Do action with lowest expected loss
- Equivalent to optimal policy for $b = 1$
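
A sketch of the greedy query choice for naïve Bayes, under several assumptions that the slides do not confirm: the state maps `(i, j)` to Beta counts `(a, b)`, $P(X_i \mid Y_j)$ is estimated by the posterior mean, the class prior is fixed at $P(Y=1) = 0.5$, and the loss is the $P(x)$-weighted Gini impurity of $P(Y \mid x)$ evaluated at the posterior-mean parameters. All names are hypothetical.

```python
from itertools import product

def gini_loss(state, n, prior_y1=0.5):
    """Expected Gini impurity of the classifier built from posterior means."""
    theta = [[state[(i, j)][0] / sum(state[(i, j)]) for i in range(n)]
             for j in (0, 1)]
    total = 0.0
    for x in product((0, 1), repeat=n):
        joint = []
        for j, p_y in ((0, 1 - prior_y1), (1, prior_y1)):
            p = p_y
            for i, xi in enumerate(x):
                p *= theta[j][i] if xi else 1 - theta[j][i]
            joint.append(p)
        p_x = joint[0] + joint[1]  # positive because every count is >= 1
        p1 = joint[1] / p_x
        total += p_x * 2 * p1 * (1 - p1)
    return total

def greedy_query(state, n):
    """Return (expected loss, (i, j)) for the best single query."""
    best = None
    for (i, j), (a, b) in state.items():
        p = a / (a + b)  # estimate of P(X_i = 1 | Y_j)
        up = dict(state)
        up[(i, j)] = (a + 1, b)
        down = dict(state)
        down[(i, j)] = (a, b + 1)
        exp_loss = p * gini_loss(up, n) + (1 - p) * gini_loss(down, n)
        if best is None or exp_loss < best[0]:
            best = (exp_loss, (i, j))
    return best
```

In a state where feature 0 has only prior counts and feature 1 already has many observations, this sketch prefers to query feature 0, since one more observation there shifts the classifier the most.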

Single Feature Lookahead

- Imagine spending the entire remaining budget on one feature and class label
- This policy has $b + 1$ possible outcomes
- Calculate the expected loss of this policy for each feature $i$
- Query the feature with the lowest single feature allocation loss ONCE
- Repeat

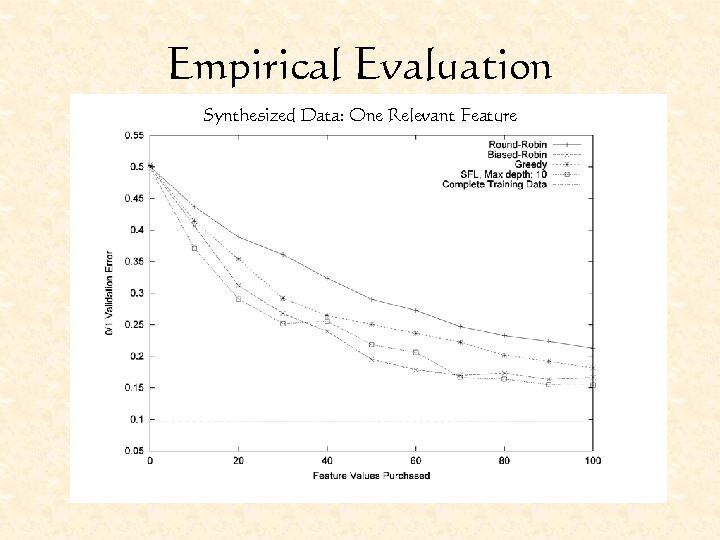

Empirical Evaluation (Synthesized Data: One Relevant Feature)

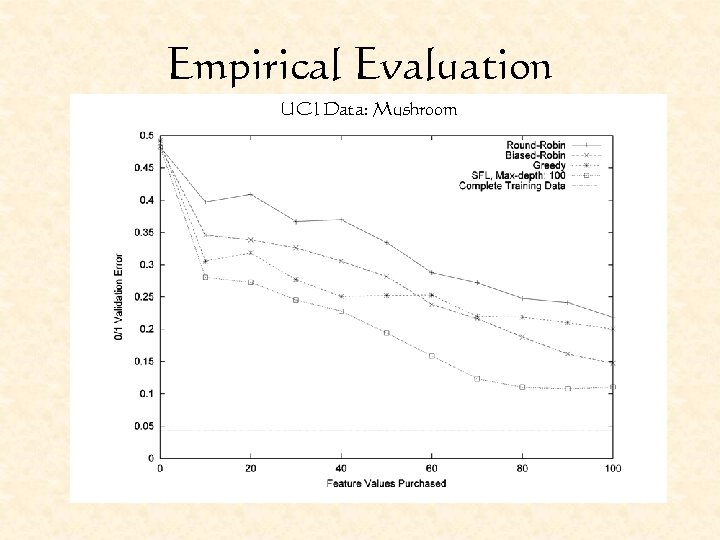

Empirical Evaluation (UCI Data: Mushroom)

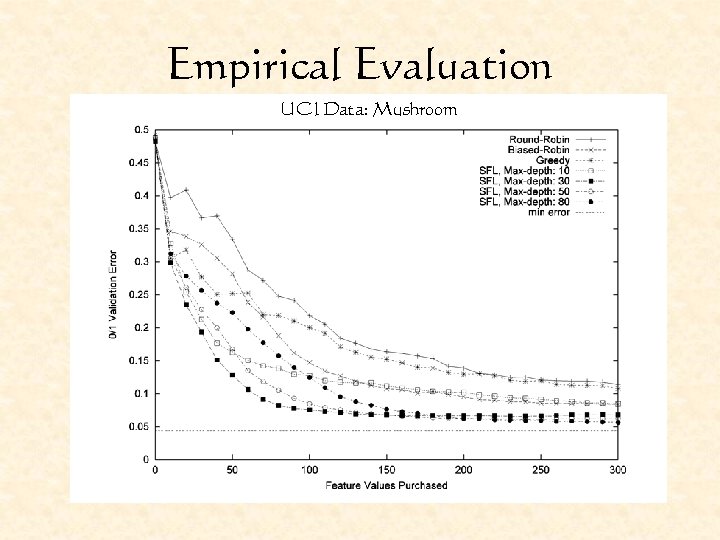

Empirical Evaluation (UCI Data: Mushroom)

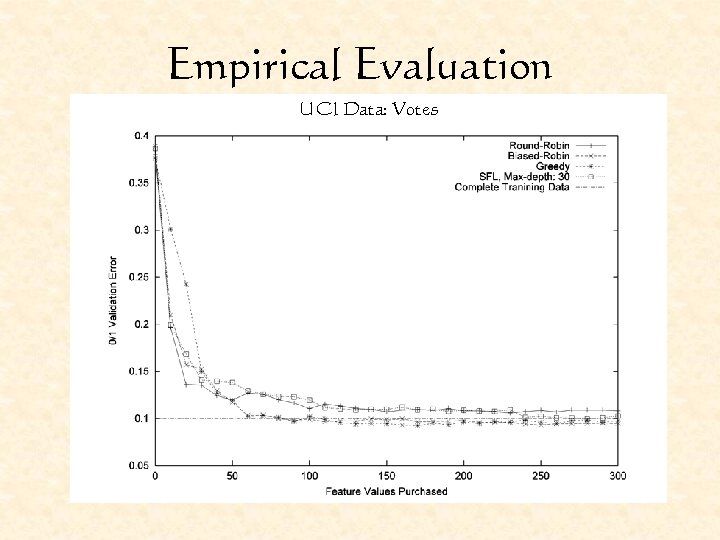

Empirical Evaluation (UCI Data: Votes)

Conclusions

- Budgeted Learning
  - Is decision making under uncertainty
  - Appears to be hard in our current formulation
  - Can be accomplished fairly well with tractable (but sub-optimal) policies
- Policies for budgeted learning should incorporate incoming data and knowledge of the budget for best performance

Future Work

- Can we learn more complex models? (With dependencies?)
- More complex cost structures are possible.
- Gains to be made from MDP literature?
- Costs at classification time are currently ignored. Is it possible to learn a good active classifier under a budget?

Contributions

- Expressed a budgeted learning framework
- Examined the optimal policy in this framework
- Developed and empirically evaluated several tractable policies
- Shown that contingent policies perform best

The End

Any questions?
