Naïve Bayes Classifiers CS 171/271

Definition

- A classifier is a system that categorizes instances.
- Inputs to a classifier: feature/attribute values of a given instance.
- Output of a classifier: predicted category for that instance.

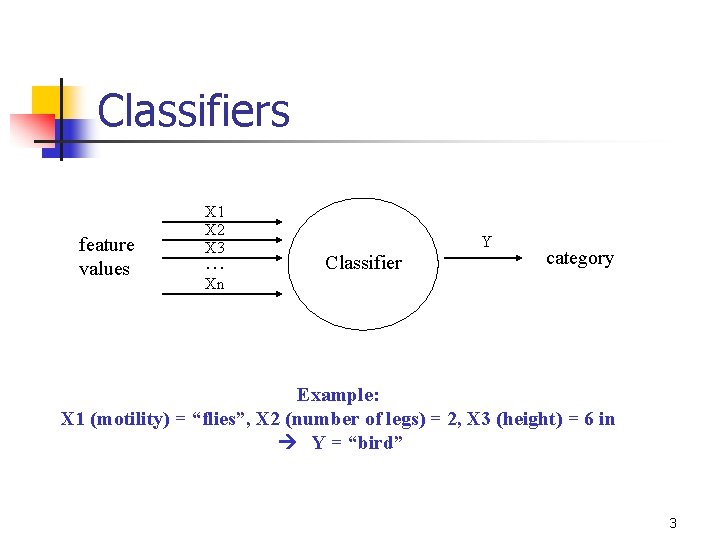

Classifiers

A classifier takes the feature values $X_1, X_2, X_3, \ldots, X_n$ as input and outputs a category $Y$.

Example: $X_1$ (motility) = “flies”, $X_2$ (number of legs) = 2, $X_3$ (height) = 6 in; $Y$ = “bird”.

Learning from datasets

- In the context of a learning agent, a classifier’s intelligence is based on a dataset consisting of instances with known categories.
- Typical goal of a classifier: predict a category for a new instance that is rationally consistent with the dataset.

Classifier algorithm (approach 1)

- Select all instances in the dataset that match the input tuple $(X_1, X_2, \ldots, X_n)$ of feature values.
- Determine the distribution of Y-values over all the matches.
- Output the Y-value that accounts for the most instances.
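
The three steps of approach 1 can be sketched in a few lines of Python. This is only an illustration: the function name `classify_by_scan`, the `(features, category)` pair layout, and the toy dataset are assumptions, not something the slides specify.

```python
from collections import Counter

def classify_by_scan(dataset, query):
    """Approach 1: scan every instance, keep exact matches, return the majority category.

    dataset: list of (features_tuple, category) pairs (assumed layout)
    query:   tuple of feature values
    Returns None when no instance matches the query exactly.
    """
    # Distribution of Y-values over all instances whose features equal the query.
    matches = Counter(category for features, category in dataset if features == query)
    if not matches:
        return None
    # The Y-value that accounts for the most matching instances.
    return matches.most_common(1)[0][0]

# Hypothetical toy dataset: (motility, number of legs) -> category
toy_dataset = [
    (("flies", 2), "bird"),
    (("flies", 2), "bird"),
    (("flies", 6), "insect"),
    (("walks", 4), "mammal"),
    (("flies", 2), "insect"),
]

print(classify_by_scan(toy_dataset, ("flies", 2)))
```

Every query walks the whole dataset, and a query that matches no stored instance gets no answer at all.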

Problems with this approach

- Classification time is proportional to dataset size.
- Time complexity: $O(m)$, where $m$ is the dataset size.
- Not practical if the dataset is huge.

Pre-computing distributions (approach 2)

- What if we pre-compute all distributions for all possible tuples?
  - The classification process is then a simple matter of looking up the pre-computed distribution.
  - The time complexity burden falls on the precomputation stage, which is done only once.
  - Still not practical if the number of features is not small.
- Suppose there are only two possible values per feature and there are $n$ features: that gives $2^n$ possible combinations!
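
A rough sketch of approach 2, assuming the same hypothetical `(features, category)` pair layout: the table is built once, and each query becomes a dictionary lookup. For brevity the sketch only stores tuples that occur in the dataset, whereas the approach described above covers every possible tuple. The helper `num_combinations` (also a made-up name) shows how quickly that full table grows.

```python
import math
from collections import Counter, defaultdict

def precompute_lookup(dataset):
    """Map each feature tuple seen in the dataset to its most frequent category."""
    counts = defaultdict(Counter)
    for features, category in dataset:
        counts[features][category] += 1
    return {features: c.most_common(1)[0][0] for features, c in counts.items()}

def num_combinations(values_per_feature):
    """Number of distinct feature tuples a full table would have to cover."""
    return math.prod(values_per_feature)

# Hypothetical toy dataset: (motility, number of legs) -> category
toy_dataset = [
    (("flies", 2), "bird"),
    (("flies", 2), "bird"),
    (("flies", 2), "insect"),
    (("walks", 4), "mammal"),
]

lookup = precompute_lookup(toy_dataset)   # done once
print(lookup[("flies", 2)])               # each query is a single lookup

# Two values per feature: 10 features vs. 100 features
print(num_combinations([2] * 10))
print(num_combinations([2] * 100))
```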

What we need

- Typically, $n$ (number of features) will be in the hundreds and $m$ (number of instances in the dataset) will be in the tens of thousands.
- We want a classifier that pre-computes enough that it does not need to scan through the instances during a query, but we do not want to pre-compute too many values.

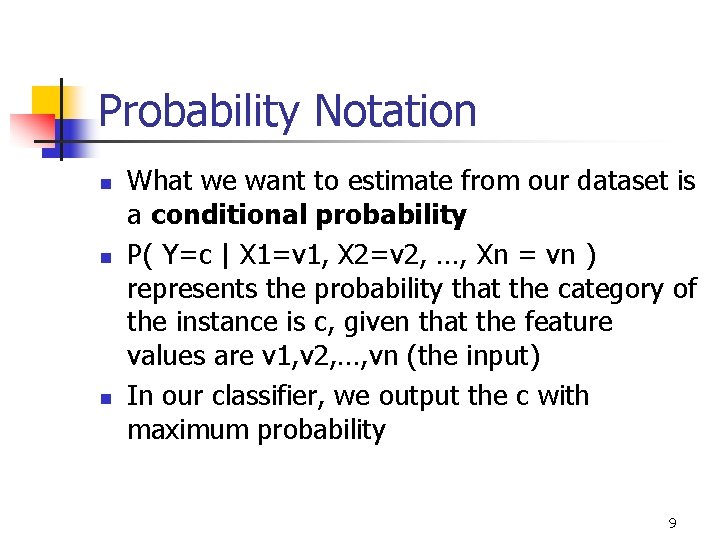

Probability Notation

- What we want to estimate from our dataset is a conditional probability.
- $P(Y=c \mid X_1=v_1, X_2=v_2, \ldots, X_n=v_n)$ represents the probability that the category of the instance is $c$, given that the feature values are $v_1, v_2, \ldots, v_n$ (the input).
- In our classifier, we output the $c$ with maximum probability.

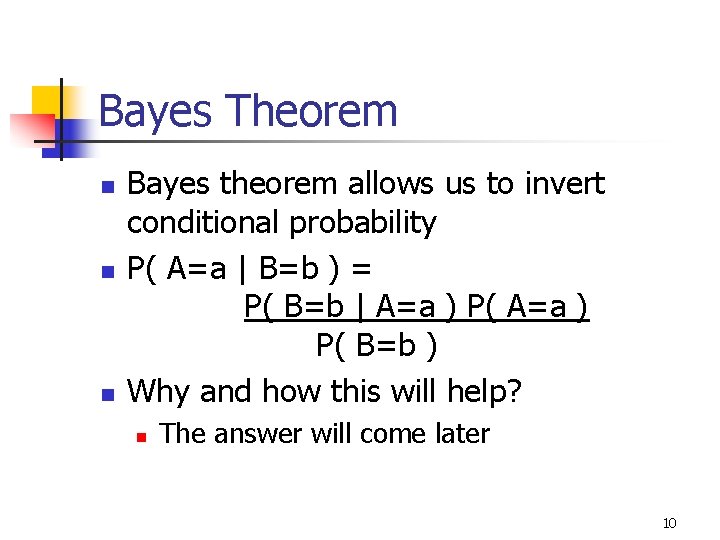

Bayes Theorem

- Bayes theorem allows us to invert a conditional probability:

  $$P(A=a \mid B=b) = \frac{P(B=b \mid A=a)\, P(A=a)}{P(B=b)}$$

- Why and how will this help?
  - The answer will come later.

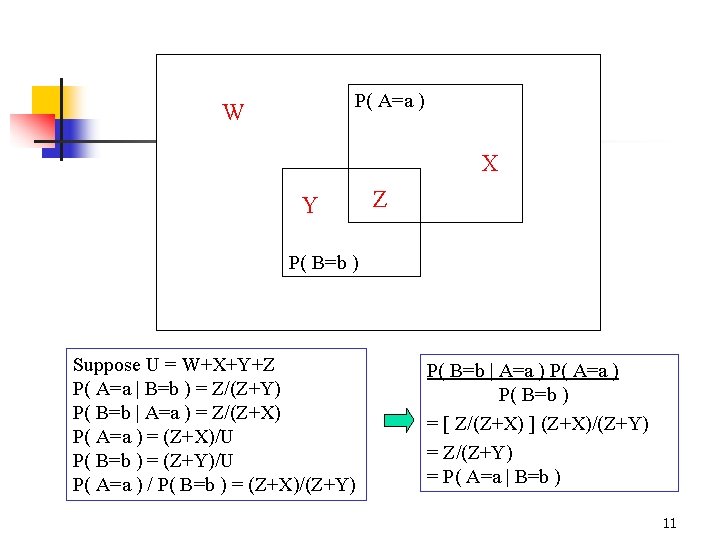

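Consider a Venn diagram of the events $A=a$ and $B=b$ with four regions: $X$ (only $A=a$ holds), $Y$ (only $B=b$ holds), $Z$ (both hold), and $W$ (neither holds). Suppose $U = W+X+Y+Z$. Then:

$$
\begin{aligned}
P(A=a \mid B=b) &= \frac{Z}{Z+Y} \\
P(B=b \mid A=a) &= \frac{Z}{Z+X} \\
P(A=a) &= \frac{Z+X}{U} \\
P(B=b) &= \frac{Z+Y}{U} \\
\frac{P(A=a)}{P(B=b)} &= \frac{Z+X}{Z+Y} \\
\frac{P(B=b \mid A=a)\, P(A=a)}{P(B=b)} &= \frac{Z}{Z+X} \cdot \frac{Z+X}{Z+Y} = \frac{Z}{Z+Y} = P(A=a \mid B=b)
\end{aligned}
$$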
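
The identity can be checked numerically. The sketch below plugs made-up region counts (the values of `W`, `X`, `Y`, `Z` are assumptions chosen only for illustration) into both sides of Bayes theorem, using exact fractions so that no rounding is involved.

```python
from fractions import Fraction

# Hypothetical region counts: X = only A=a, Y = only B=b, Z = both, W = neither
W, X, Y, Z = 50, 20, 10, 20
U = W + X + Y + Z

p_a = Fraction(Z + X, U)              # P(A=a)
p_b = Fraction(Z + Y, U)              # P(B=b)
p_a_given_b = Fraction(Z, Z + Y)      # P(A=a | B=b)
p_b_given_a = Fraction(Z, Z + X)      # P(B=b | A=a)

# Right-hand side of Bayes theorem
bayes_rhs = p_b_given_a * p_a / p_b

print(p_a_given_b, bayes_rhs)
```

Both printed values agree, and they will for any counts with $Z+X > 0$ and $Z+Y > 0$, since the $(Z+X)$ factors cancel.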

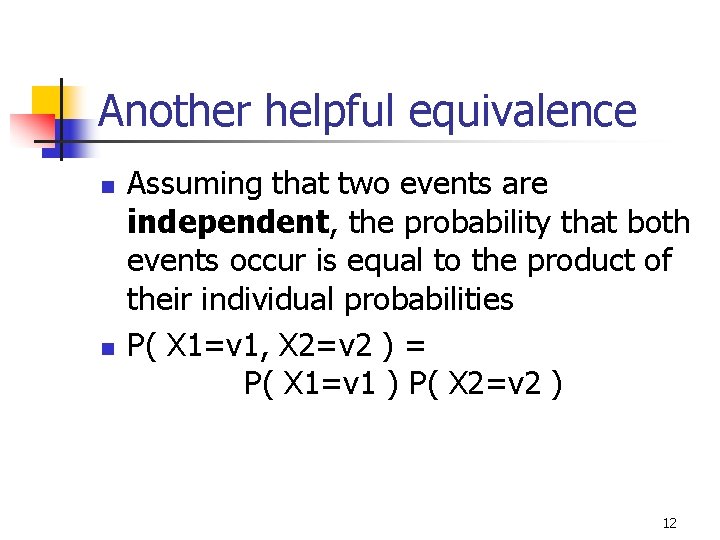

Another helpful equivalence

- Assuming that two events are independent, the probability that both events occur is equal to the product of their individual probabilities:

  $$P(X_1=v_1, X_2=v_2) = P(X_1=v_1)\, P(X_2=v_2)$$

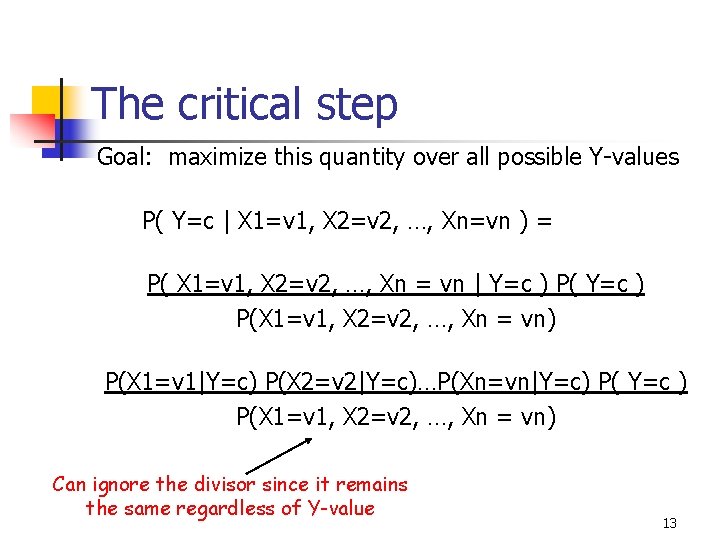

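The critical step

Goal: maximize this quantity over all possible Y-values:

$$
\begin{aligned}
P(Y=c \mid X_1=v_1, X_2=v_2, \ldots, X_n=v_n)
&= \frac{P(X_1=v_1, X_2=v_2, \ldots, X_n=v_n \mid Y=c)\, P(Y=c)}{P(X_1=v_1, X_2=v_2, \ldots, X_n=v_n)} \\
&= \frac{P(X_1=v_1 \mid Y=c)\, P(X_2=v_2 \mid Y=c) \cdots P(X_n=v_n \mid Y=c)\, P(Y=c)}{P(X_1=v_1, X_2=v_2, \ldots, X_n=v_n)}
\end{aligned}
$$

The first equality is Bayes theorem. The second assumes that the features are independent of one another given the category, so the joint conditional probability factors into a product. We can ignore the divisor since it remains the same regardless of the Y-value.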

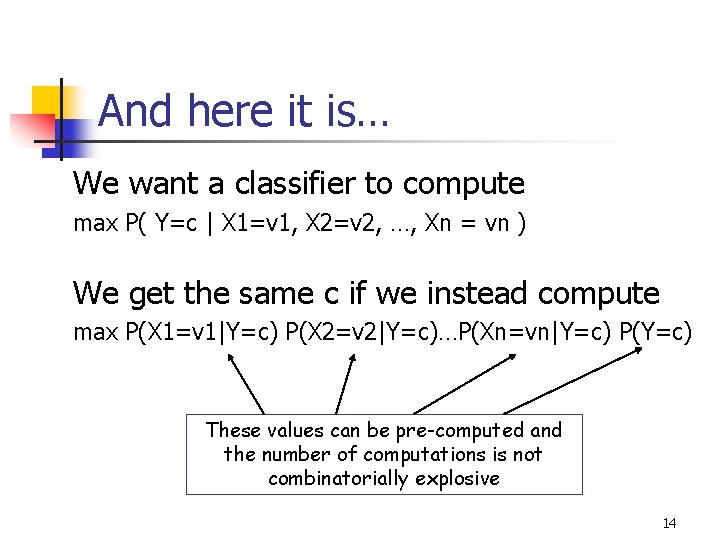

And here it is…

We want a classifier to compute

$$\max_c \; P(Y=c \mid X_1=v_1, X_2=v_2, \ldots, X_n=v_n)$$

We get the same $c$ if we instead compute

$$\max_c \; P(X_1=v_1 \mid Y=c)\, P(X_2=v_2 \mid Y=c) \cdots P(X_n=v_n \mid Y=c)\, P(Y=c)$$

These values can be pre-computed, and the number of computations is not combinatorially explosive.

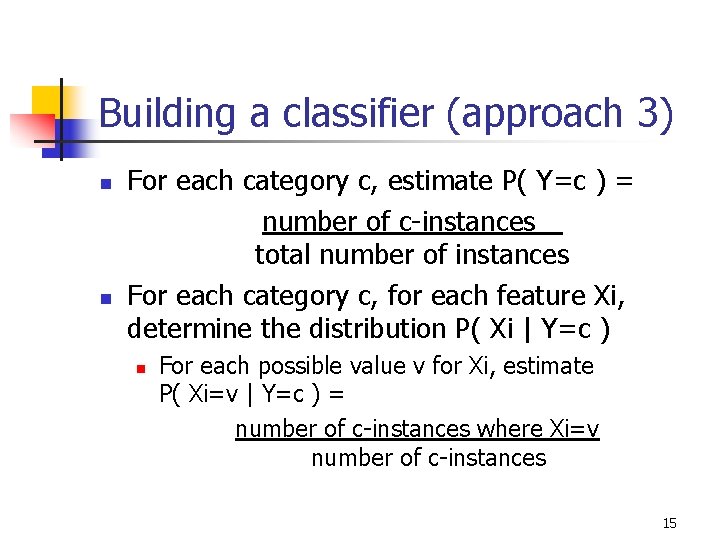

Building a classifier (approach 3)

- For each category $c$, estimate

  $$P(Y=c) = \frac{\text{number of } c\text{-instances}}{\text{total number of instances}}$$

- For each category $c$ and each feature $X_i$, determine the distribution $P(X_i \mid Y=c)$.
  - For each possible value $v$ of $X_i$, estimate

    $$P(X_i=v \mid Y=c) = \frac{\text{number of } c\text{-instances where } X_i=v}{\text{number of } c\text{-instances}}$$
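
The two counting steps can be sketched as follows. The function name `train_naive_bayes`, the nested-dictionary layout, and the toy dataset are assumptions made for this illustration. The estimates are the plain frequency ratios given above, with no smoothing, so a value never seen with a category simply has no entry.

```python
from collections import Counter, defaultdict

def train_naive_bayes(dataset):
    """Estimate the tables of approach 3 from (features_tuple, category) pairs.

    Returns (priors, conditionals) where
      priors[c]             estimates P(Y=c)
      conditionals[c][i][v] estimates P(Xi=v | Y=c), with i a 0-based feature index
    """
    category_counts = Counter(category for _, category in dataset)
    total = len(dataset)
    # P(Y=c) = number of c-instances / total number of instances
    priors = {c: count / total for c, count in category_counts.items()}

    # value_counts[c][i][v] = number of c-instances where feature i has value v
    value_counts = defaultdict(lambda: defaultdict(Counter))
    for features, category in dataset:
        for i, value in enumerate(features):
            value_counts[category][i][value] += 1

    # P(Xi=v | Y=c) = number of c-instances where Xi=v / number of c-instances
    conditionals = {
        c: {
            i: {v: n / category_counts[c] for v, n in counter.items()}
            for i, counter in per_feature.items()
        }
        for c, per_feature in value_counts.items()
    }
    return priors, conditionals

# Hypothetical toy dataset: (motility, number of legs) -> category
toy_dataset = [
    (("flies", 2), "bird"),
    (("flies", 2), "bird"),
    (("walks", 2), "bird"),
    (("flies", 6), "insect"),
]

priors, conditionals = train_naive_bayes(toy_dataset)
print(priors)
print(conditionals["bird"][0])
```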

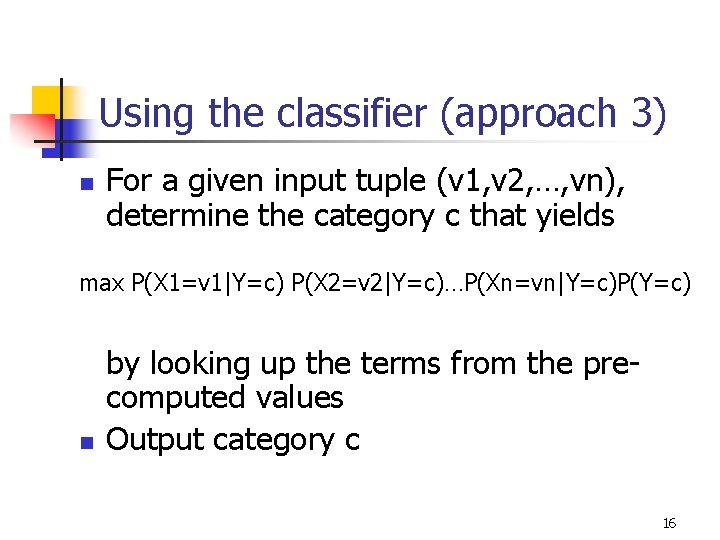

Using the classifier (approach 3)

- For a given input tuple $(v_1, v_2, \ldots, v_n)$, determine the category $c$ that yields

  $$\max_c \; P(X_1=v_1 \mid Y=c)\, P(X_2=v_2 \mid Y=c) \cdots P(X_n=v_n \mid Y=c)\, P(Y=c)$$

  by looking up the terms from the precomputed values.
- Output category $c$.
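
A minimal sketch of the query step, assuming the precomputed values are stored as nested dictionaries. The layout, the function name `predict`, and the numbers in the tables are all hypothetical. A value that was never observed with a category is treated as having probability 0, which is a choice made for this sketch.

```python
def predict(priors, conditionals, query):
    """Return the category c that maximizes P(X1=v1|Y=c) ... P(Xn=vn|Y=c) P(Y=c).

    priors[c]             holds P(Y=c)
    conditionals[c][i][v] holds P(Xi=v | Y=c), with i a 0-based feature index
    """
    best_category, best_score = None, -1.0
    for c, prior in priors.items():
        score = prior
        for i, value in enumerate(query):
            # Look up the precomputed term; unseen values count as probability 0.
            score *= conditionals[c][i].get(value, 0.0)
        if score > best_score:
            best_category, best_score = c, score
    return best_category

# Hypothetical precomputed tables for features (motility, number of legs)
priors = {"bird": 0.75, "insect": 0.25}
conditionals = {
    "bird":   {0: {"flies": 2 / 3, "walks": 1 / 3}, 1: {2: 1.0}},
    "insect": {0: {"flies": 1.0},                   1: {6: 1.0}},
}

print(predict(priors, conditionals, ("flies", 2)))
print(predict(priors, conditionals, ("flies", 6)))
```

The query cost depends on the number of categories and features, not on the number of instances in the dataset.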

Example

- Suppose we want a classifier that categorizes organisms according to certain characteristics.
  - Organism categories ($Y$): mammal, bird, fish, insect, spider.
  - Characteristics ($X_1, X_2, X_3, X_4$): motility (walks, flies, swims), number of legs (2, 4, 6, 8), size (small, large), body covering (fur, scales, feathers).
- The dataset contains 1000 organism samples.
- $m = 1000$, $n = 4$, number of categories = 5.

Comparing approaches

- Approach 1: requires scanning all tuples for matching feature values.
  - Entails 1000 × 4 = 4000 comparisons per query, plus counting the occurrences of each category.
- Approach 2: pre-compute probabilities.
  - Preparation: for each of the 3 × 4 × 2 × 3 = 72 combinations, determine the probability for each category (72 × 5 = 360 computations).
  - Query: straightforward lookup of the answer.

Comparing approaches

- Approach 3: Naïve Bayes classifier.
  - Preparation: compute the $P(Y=c)$ probabilities, 5 of them; compute the $P(X_i=v \mid Y=c)$ probabilities, 5 × (3 + 4 + 2 + 3) = 60 of them.
  - Query: straightforward computation of 5 probabilities; determine the maximum and return the category that yields it.
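
The counts for the organism example can be reproduced with a few lines of arithmetic. The variable names are made up for this sketch. The per-feature value counts (3, 4, 2, 3) come from the example's motility, legs, size, and body-covering features, and their product evaluates to 72.

```python
import math

m = 1000                           # instances in the dataset
values_per_feature = [3, 4, 2, 3]  # motility, legs, size, body covering
n = len(values_per_feature)        # number of features
num_categories = 5

# Approach 1: compare each of the n feature values of each of the m instances
approach1_comparisons_per_query = m * n

# Approach 2: one distribution per feature-value combination, one entry per category
approach2_combinations = math.prod(values_per_feature)
approach2_precomputed = approach2_combinations * num_categories

# Approach 3: one prior per category, plus one conditional per (category, feature value)
approach3_priors = num_categories
approach3_conditionals = num_categories * sum(values_per_feature)

print(approach1_comparisons_per_query)
print(approach2_combinations, approach2_precomputed)
print(approach3_priors, approach3_conditionals)
```

With only four features the gap between approaches 2 and 3 is small, but approach 2 grows with the product of the value counts while approach 3 grows with their sum.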

About the Naïve Bayes Classifier

- Computations and resources required are reasonable, both for the preparatory stage and for the actual query stage.
  - Even if the number $n$ of features is in the thousands!
- The classifier is naïve because it assumes independence of features (this is likely not the case).
- It turns out that the classifier works well in practice even with this limitation.
- Logarithms of probabilities are often used instead of the actual probabilities to avoid underflow when computing the probability products.
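
The underflow problem is easy to reproduce. The sketch below uses made-up likelihood values (200 features, each term 0.01 for one category and 0.02 for another) to show that the direct products both collapse to zero in double-precision floating point, while the sums of logarithms still rank the two categories. Because the logarithm is increasing, maximizing the sum of logs picks the same category as maximizing the product.

```python
import math

def product(values):
    """Multiply the values together directly."""
    result = 1.0
    for v in values:
        result *= v
    return result

def log_score(values):
    """Sum of logarithms: the log of the product, computed without underflow."""
    return sum(math.log(v) for v in values)

# Hypothetical per-feature likelihoods for two categories, 200 features each
terms_a = [0.01] * 200
terms_b = [0.02] * 200

# Both direct products are too small for a double and underflow to 0.0
print(product(terms_a), product(terms_b))

# The log scores remain finite and comparable
print(log_score(terms_a), log_score(terms_b))
```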

Related areas of study

- Density estimators: alternate methods of computing the probabilities.
- Feature selection: eliminate unnecessary or redundant features (those that don’t help as much with classification) in order to reduce the value of $n$.
