
CLASSIFICATION: Bayesian Classifiers. Bayesian classifiers use Bayes’ theorem (Thomas Bayes, 1701-1781) to build probabilistic models of the relationships between attributes and classes. Bayes’ theorem is a statistical principle for combining prior knowledge of the classes with new evidence from the data. Multiple implementations exist: naïve Bayes and Bayesian networks.

CLASSIFICATION: Bayesian Classifiers. These classifiers require the concept of conditional probability, which measures the probability of an event given that another event has occurred (known by evidence or information). Notation: $P(A \mid B)$ is the probability of $A$ given that $B$ has occurred, defined as $P(A \mid B) = P(A \cap B)/P(B)$ when $P(B) \neq 0$; equivalently, $P(A \cap B) = P(A \mid B)\,P(B)$.
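As a quick check of the definition, the following Python sketch (an illustration, not from the slides) enumerates the 36 outcomes of two fair dice using exact fractions. The events A and B are hypothetical choices made for the example.

```python
from fractions import Fraction
from itertools import product

# Sample space: all 36 equally likely outcomes of rolling two fair dice
omega = list(product(range(1, 7), repeat=2))

def prob(event):
    """Probability of an event (a predicate on outcomes) under equal likelihood."""
    return Fraction(sum(1 for w in omega if event(w)), len(omega))

A = lambda w: w[0] + w[1] == 8   # hypothetical event A: the dice sum to 8
B = lambda w: w[0] == 3          # hypothetical event B: the first die shows 3

p_B = prob(B)
p_A_and_B = prob(lambda w: A(w) and B(w))
p_A_given_B = p_A_and_B / p_B    # P(A|B) = P(A∩B)/P(B), valid because P(B) != 0

print(p_A_given_B)                     # 1/6
print(p_A_given_B * p_B == p_A_and_B)  # True: P(A∩B) = P(A|B)P(B)
```

Knowing that the first die shows 3 leaves six equally likely outcomes, only one of which, (3, 5), sums to 8.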

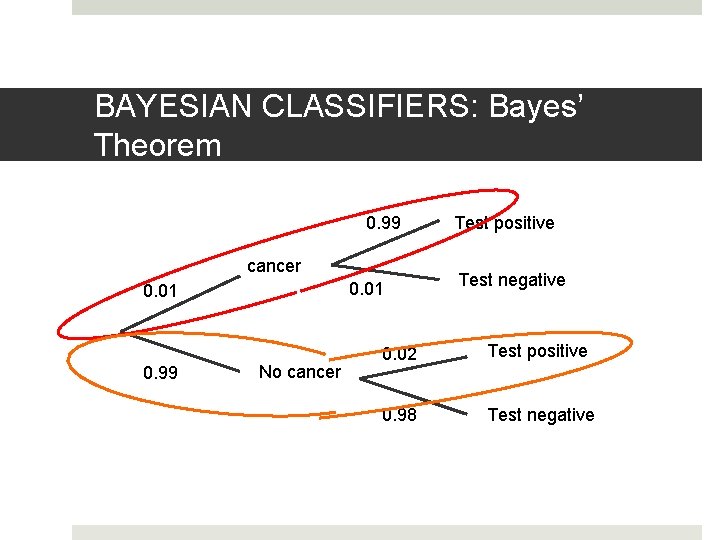

BAYESIAN CLASSIFIERS: Conditional Probability Example. Suppose 1% of a specific population has a form of cancer. A new diagnostic test gives a correct positive result for 99% of the people who have the cancer and a correct negative result for 98% of the people who do not. Thus P(cancer) = 0.01, P(positive test | cancer) = 0.99, and P(negative test | no cancer) = 0.98.

BAYESIAN CLASSIFIERS: Conditional Probability Example. But what if you tested positive? What is the probability that you actually have cancer? Bayes’ theorem “reverses” the conditioning, turning P(positive test | cancer) into P(cancer | positive test), to provide us with an answer.

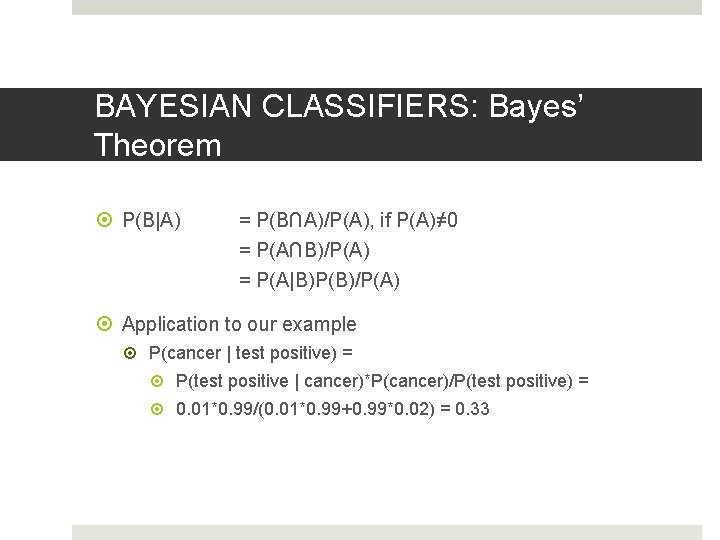

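BAYESIAN CLASSIFIERS: Bayes’ Theorem. $P(B \mid A) = \frac{P(B \cap A)}{P(A)} = \frac{P(A \cap B)}{P(A)} = \frac{P(A \mid B)\,P(B)}{P(A)}$, provided $P(A) \neq 0$. Application to our example: $P(\text{cancer} \mid \text{test positive}) = \frac{P(\text{test positive} \mid \text{cancer})\,P(\text{cancer})}{P(\text{test positive})} = \frac{0.99 \times 0.01}{0.99 \times 0.01 + 0.02 \times 0.99} \approx 0.33$, where the denominator expands $P(\text{test positive})$ over people with cancer (true positives) and people without cancer (false positives, at rate $1 - 0.98 = 0.02$).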
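The arithmetic above can be reproduced in a few lines of Python. This is a sketch using only the numbers stated in the example; the variable names are illustrative.

```python
# Values from the diagnostic-test example
p_cancer = 0.01                 # prior P(cancer)
p_pos_given_cancer = 0.99       # P(test positive | cancer)
p_neg_given_no_cancer = 0.98    # P(test negative | no cancer)

p_no_cancer = 1 - p_cancer                          # 0.99
p_pos_given_no_cancer = 1 - p_neg_given_no_cancer   # 0.02, the false-positive rate

# Law of total probability: P(test positive)
p_pos = p_pos_given_cancer * p_cancer + p_pos_given_no_cancer * p_no_cancer

# Bayes' theorem: P(cancer | test positive)
p_cancer_given_pos = p_pos_given_cancer * p_cancer / p_pos
print(round(p_cancer_given_pos, 2))  # 0.33
```

Even with an accurate test, only about one positive result in three is a true case, because the healthy group is 99 times larger than the group with cancer.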

BAYESIAN CLASSIFIERS: Bayes’ Theorem (probability tree). Cancer (0.01): test positive 0.99, test negative 0.01. No cancer (0.99): test positive 0.02, test negative 0.98.

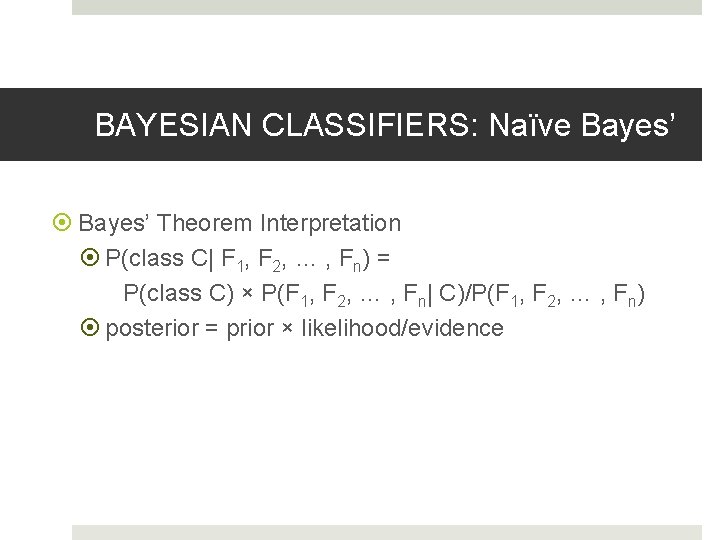

BAYESIAN CLASSIFIERS: Naïve Bayes’ Theorem Interpretation. $P(C \mid F_1, F_2, \ldots, F_n) = \frac{P(C)\,P(F_1, F_2, \ldots, F_n \mid C)}{P(F_1, F_2, \ldots, F_n)}$, where $C$ is the class and $F_1, \ldots, F_n$ are the features; that is, posterior = prior × likelihood / evidence.

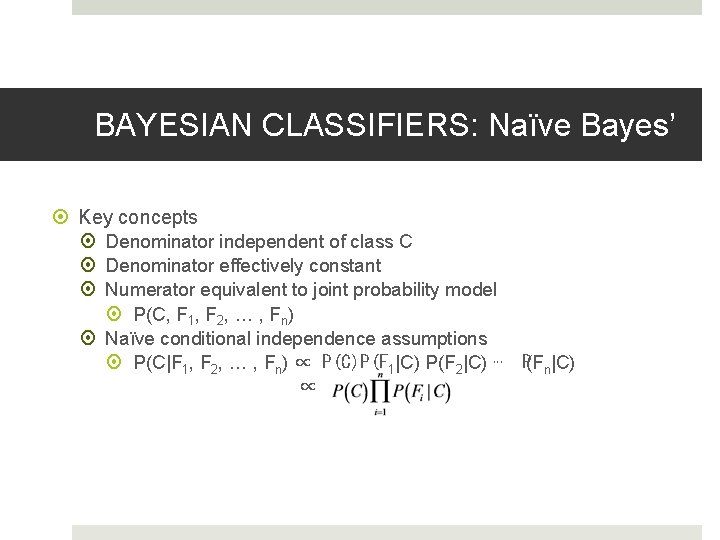

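BAYESIAN CLASSIFIERS: Naïve Bayes’ Key concepts. The denominator does not depend on the class $C$, so it is effectively constant. The numerator equals the joint probability model $P(C, F_1, F_2, \ldots, F_n)$. The naïve assumption is that the features are conditionally independent given the class, which gives $P(C \mid F_1, F_2, \ldots, F_n) \propto P(C)\,P(F_1 \mid C)\,P(F_2 \mid C) \cdots P(F_n \mid C) = P(C) \prod_{i=1}^{n} P(F_i \mid C)$.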
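The decision rule above can be sketched in Python as follows. The function name and the two-class numbers are hypothetical. One design choice is labeled here as an assumption: the product is computed as a sum of logarithms, a common way to avoid floating-point underflow when there are many features; it does not change which class wins.

```python
import math

def naive_bayes_argmax(priors, likelihoods):
    """Return the class maximizing P(C) * prod_i P(F_i|C).

    priors:      {class: P(C)}
    likelihoods: {class: [P(F_1|C), ..., P(F_n|C)]} for the observed features
    The evidence P(F_1, ..., F_n) is omitted because it is the same for every class.
    All probabilities must be positive; a zero would need smoothing before math.log.
    """
    def log_score(c):
        # log of the posterior numerator: log P(C) + sum_i log P(F_i|C)
        return math.log(priors[c]) + sum(math.log(p) for p in likelihoods[c])
    return max(priors, key=log_score)

# Hypothetical two-class, three-feature example
priors = {"spam": 0.4, "ham": 0.6}
likelihoods = {"spam": [0.8, 0.3, 0.5], "ham": [0.1, 0.4, 0.5]}
print(naive_bayes_argmax(priors, likelihoods))  # spam
```

Here the numerators are 0.4 × 0.8 × 0.3 × 0.5 = 0.048 for spam and 0.6 × 0.1 × 0.4 × 0.5 = 0.012 for ham, so spam is chosen despite its lower prior.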

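BAYESIAN CLASSIFIERS: Naïve Bayes’. Multiple distributional assumptions for $P(F_i \mid C)$ are possible: Gaussian (continuous features), multinomial (count features, such as word counts), and Bernoulli (binary features).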
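A minimal sketch of the per-class likelihood each assumption implies, written as plain Python functions (the function names are hypothetical, not from any library). Any of them can supply the $P(F_i \mid C)$ terms in the product above.

```python
import math

def gaussian_likelihood(x, mean, var):
    """Gaussian NB: density of a continuous feature x given the class mean and variance."""
    return math.exp(-(x - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

def bernoulli_likelihood(xs, ps):
    """Bernoulli NB: binary features xs[i] in {0, 1}, with ps[i] = P(x_i = 1 | C)."""
    return math.prod(p if x else 1 - p for x, p in zip(xs, ps))

def multinomial_likelihood(counts, ps):
    """Multinomial NB: counts[i] = occurrences of term i, ps[i] = P(term i | C).

    Includes the multinomial coefficient; it does not depend on C, so
    classifiers usually drop it and compare prod ps[i] ** counts[i].
    """
    coef = math.factorial(sum(counts))
    for k in counts:
        coef //= math.factorial(k)  # exact integer division at every step
    return coef * math.prod(p ** k for k, p in zip(counts, ps))

print(round(gaussian_likelihood(0, 0, 1), 4))       # 0.3989
print(round(bernoulli_likelihood([1, 0], [0.7, 0.2]), 2))  # 0.56
print(multinomial_likelihood([2, 1], [0.5, 0.5]))   # 0.375
```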

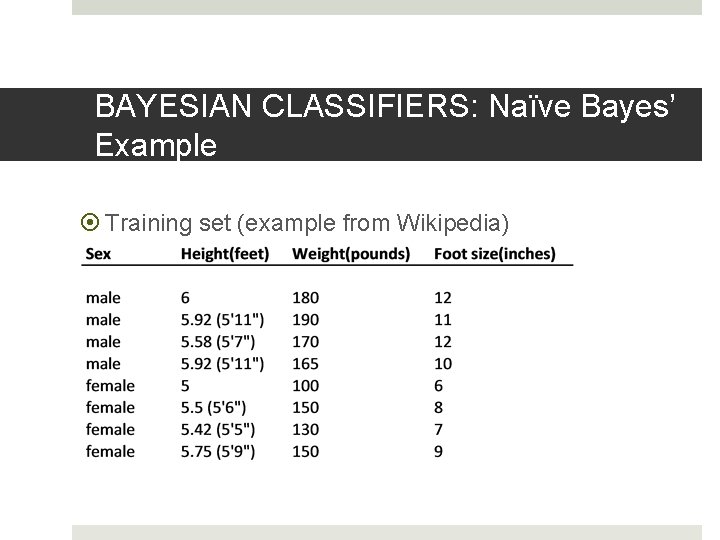

BAYESIAN CLASSIFIERS: Naïve Bayes’ Example Training set (example from Wikipedia)

BAYESIAN CLASSIFIERS: Naïve Bayes’ Example. Assumptions: the data are continuous, each feature follows a Gaussian (normal) distribution within each class, and P(male) = P(female) = 0.5.

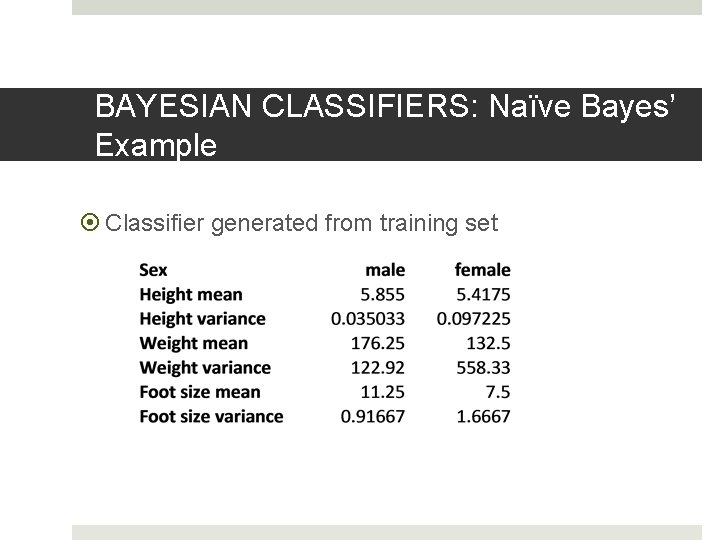

BAYESIAN CLASSIFIERS: Naïve Bayes’ Example Classifier generated from training set
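Building the Gaussian classifier amounts to estimating a mean and a variance for every (class, feature) pair. The Python sketch below does this with the standard `statistics` module. The training values are assumed to match the Wikipedia example the slides cite (height in feet, weight in pounds, foot size in inches) and should be checked against the training-set table; using the sample variance (n - 1 denominator) is likewise an assumption.

```python
from statistics import mean, variance

# Assumed training data (Wikipedia example): (height ft, weight lb, foot size in)
training = {
    "male":   [(6.00, 180, 12), (5.92, 190, 11), (5.58, 170, 12), (5.92, 165, 10)],
    "female": [(5.00, 100, 6), (5.50, 150, 8), (5.42, 130, 7), (5.75, 150, 9)],
}
features = ("height", "weight", "foot size")

def fit_gaussian_nb(data):
    """For each class and feature, store (mean, sample variance)."""
    model = {}
    for cls, rows in data.items():
        columns = list(zip(*rows))  # transpose rows into per-feature columns
        model[cls] = {f: (mean(col), variance(col)) for f, col in zip(features, columns)}
    return model

model = fit_gaussian_nb(training)
for cls, params in model.items():
    for f, (mu, var) in params.items():
        print(f"{cls:6s} {f:9s} mean={mu:.4g} variance={var:.4g}")
```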

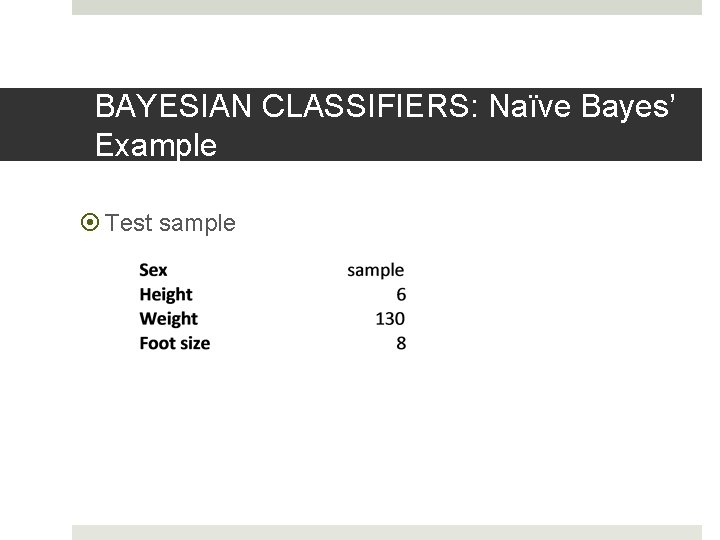

BAYESIAN CLASSIFIERS: Naïve Bayes’ Example Test sample

BAYESIAN CLASSIFIERS: Naïve Bayes’ Example. Calculate the posterior probabilities for both genders: Posterior(male) = P(male)P(height|male)P(weight|male)P(foot size|male)/evidence and Posterior(female) = P(female)P(height|female)P(weight|female)P(foot size|female)/evidence. The evidence is a constant that is the same for both classes, so we can ignore the denominators and compare only the numerators.

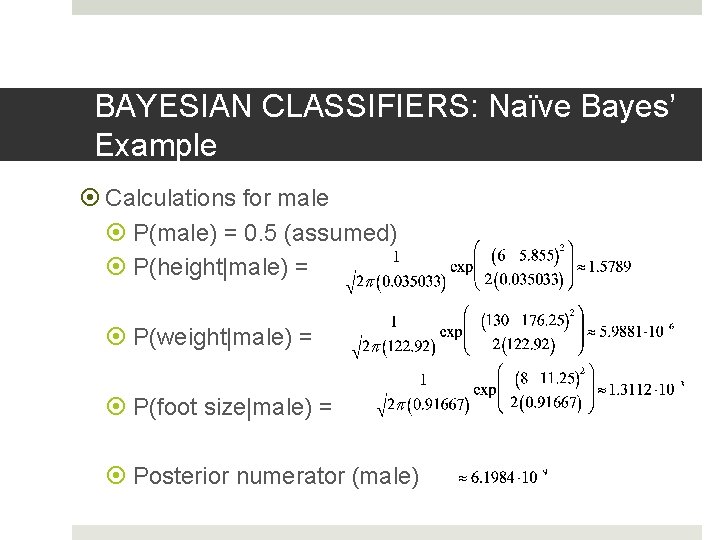

BAYESIAN CLASSIFIERS: Naïve Bayes’ Example. Calculations for male: P(male) = 0.5 (assumed); P(height|male) = …; P(weight|male) = …; P(foot size|male) = …; posterior numerator (male) = …

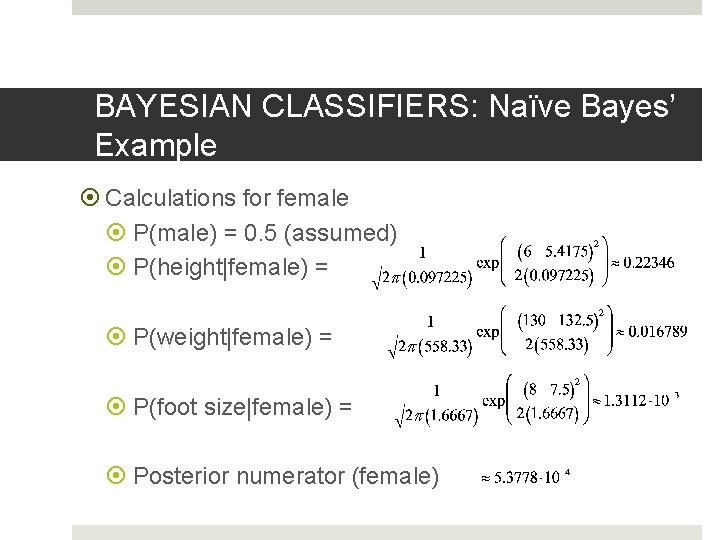

BAYESIAN CLASSIFIERS: Naïve Bayes’ Example. Calculations for female: P(female) = 0.5 (assumed); P(height|female) = …; P(weight|female) = …; P(foot size|female) = …; posterior numerator (female) = …

BAYESIAN CLASSIFIERS: Naïve Bayes’ Example. Conclusion: the posterior numerator is significantly greater for female than for male, so classify the sample as female.
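The whole calculation, from fitting to comparing posterior numerators, can be sketched in Python. As with the fitting step, the training data and the test sample (height 6 ft, weight 130 lb, foot size 8 in) are assumed to match the Wikipedia example the slides cite; check them against the slide images. Equal priors follow the stated assumption, and the evidence is omitted as in the slides.

```python
import math
from statistics import mean, variance

# Assumed training data (Wikipedia example): (height ft, weight lb, foot size in)
training = {
    "male":   [(6.00, 180, 12), (5.92, 190, 11), (5.58, 170, 12), (5.92, 165, 10)],
    "female": [(5.00, 100, 6), (5.50, 150, 8), (5.42, 130, 7), (5.75, 150, 9)],
}
priors = {"male": 0.5, "female": 0.5}   # equal priors, as assumed on the slides
sample = (6, 130, 8)                    # assumed test sample

def gaussian_pdf(x, mu, var):
    return math.exp(-(x - mu) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

def posterior_numerator(cls):
    """P(class) times the product of per-feature Gaussian likelihoods."""
    score = priors[cls]
    for x, col in zip(sample, zip(*training[cls])):  # one column per feature
        score *= gaussian_pdf(x, mean(col), variance(col))
    return score

numerators = {cls: posterior_numerator(cls) for cls in training}
for cls, s in numerators.items():
    print(f"{cls}: {s:.2e}")                  # male: 6.20e-09, female: 5.38e-04
print(max(numerators, key=numerators.get))    # female
```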

![image 18](http://slidetodoc.com/presentation_image_h/94e500a4038873a842a56666dc5c1ddb/image-18.jpg)

BAYESIAN CLASSIFIERS: Naïve Bayes’ Example. Note: we did not calculate P(evidence) (the normalizing constant) because it is not needed, but we could: P(evidence) = P(male)P(height|male)P(weight|male)P(foot size|male) + P(female)P(height|female)P(weight|female)P(foot size|female).
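Computing P(evidence) and turning the numerators into true posteriors is a single normalization step. A minimal Python sketch, with a hypothetical function name and hypothetical input numbers:

```python
def normalize(numerators):
    """Divide each class's posterior numerator by the evidence (their sum)."""
    evidence = sum(numerators.values())   # P(evidence) = sum over all classes
    return {cls: s / evidence for cls, s in numerators.items()}

# Hypothetical numerators for two classes
print(normalize({"male": 1.0, "female": 3.0}))  # {'male': 0.25, 'female': 0.75}
```

Because every numerator is divided by the same positive constant, normalizing never changes which class has the largest posterior; it only makes the values sum to 1.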

BAYESIAN CLASSIFIERS: Bayesian Networks. Judea Pearl (UCLA Computer Science, Cognitive Systems Lab) is one of the pioneers of Bayesian networks and the author of Probabilistic Reasoning in Intelligent Systems (1988). He is the father of journalist Daniel Pearl, who was kidnapped and murdered in Pakistan in 2002 by al-Qaeda.

BAYESIAN CLASSIFIERS: Bayesian Networks. A Bayesian network is a probabilistic graphical model that represents random variables and their conditional dependencies using a directed acyclic graph (DAG). The nodes of the graph represent random variables.

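BAYESIAN CLASSIFIERS: Bayesian Networks. The edges of the graph represent conditional dependencies. Missing edges encode conditional independence: each node is conditionally independent of its non-descendants given its parents. Unlike naïve Bayes, a Bayesian network does not require all attributes to be conditionally independent.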
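One simple way to hold the graph is a map from each node to its list of parents. The Python sketch below (node names are hypothetical, borrowed from the rain/sprinkler/grass example) checks the "acyclic" requirement with Kahn's topological sort. A topological order also lists every parent before its children, which is the order in which the network's probability tables are multiplied together.

```python
from collections import deque

# Structure as {node: [parents]}; each edge runs parent -> child.
parents = {
    "Rain": [],
    "Sprinkler": ["Rain"],
    "GrassWet": ["Sprinkler", "Rain"],
}

def topological_order(parents):
    """Kahn's algorithm: return the nodes with every parent before its children,
    or raise ValueError if the graph has a cycle (i.e. is not a DAG)."""
    children = {n: [] for n in parents}
    indegree = {n: len(ps) for n, ps in parents.items()}
    for n, ps in parents.items():
        for p in ps:
            children[p].append(n)
    queue = deque(n for n, d in indegree.items() if d == 0)  # nodes without parents
    order = []
    while queue:
        n = queue.popleft()
        order.append(n)
        for c in children[n]:
            indegree[c] -= 1
            if indegree[c] == 0:   # all of c's parents are already placed
                queue.append(c)
    if len(order) != len(parents):
        raise ValueError("graph contains a cycle; not a valid Bayesian network")
    return order

print(topological_order(parents))  # ['Rain', 'Sprinkler', 'GrassWet']
```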

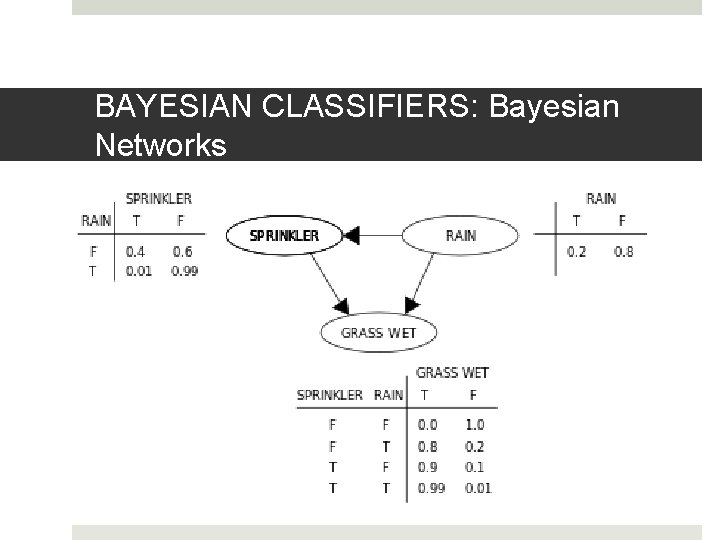

BAYESIAN CLASSIFIERS: Bayesian Networks. Each node has a probability table relating it to its immediate parent nodes. If node $X$ has no immediate parents, the table contains only the prior probability $P(X)$; if it has one parent $Y$, the table contains $P(X \mid Y)$; if it has multiple parents $\{Y_1, Y_2, \ldots, Y_n\}$, the table contains $P(X \mid Y_1, Y_2, \ldots, Y_n)$.
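For binary variables, each table can be stored as a dictionary keyed by the tuple of parent values. The Python sketch below shows all three cases (no parent, one parent, several parents). The probability values are assumptions taken from the widely used Wikipedia sprinkler example and may differ from the tables in the slide images. `conditional_prob` is a hypothetical helper.

```python
# Assumed CPT values; each entry is P(X = True | parent values).
cpt = {
    "Rain": {(): 0.2},                              # no parents: prior P(Rain)
    "Sprinkler": {(True,): 0.01, (False,): 0.4},    # one parent: P(Sprinkler | Rain)
    "GrassWet": {                                   # two parents: P(GrassWet | Sprinkler, Rain)
        (True, True): 0.99, (True, False): 0.9,
        (False, True): 0.8, (False, False): 0.0,
    },
}
parents = {"Rain": [], "Sprinkler": ["Rain"], "GrassWet": ["Sprinkler", "Rain"]}

def conditional_prob(node, value, assignment):
    """P(node = value | its parents' values in `assignment`), read from the CPT."""
    key = tuple(assignment[p] for p in parents[node])
    p_true = cpt[node][key]
    return p_true if value else 1 - p_true  # P(False | ...) = 1 - P(True | ...)

print(conditional_prob("Sprinkler", True, {"Rain": False}))  # 0.4
```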

BAYESIAN CLASSIFIERS: Bayesian Networks

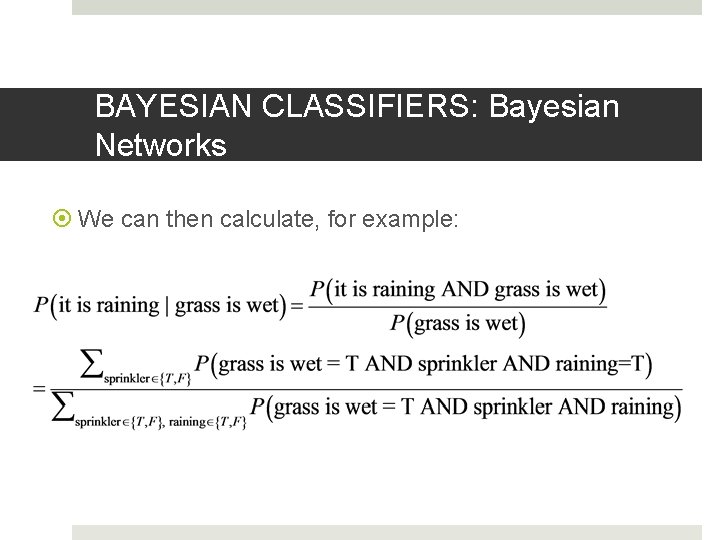

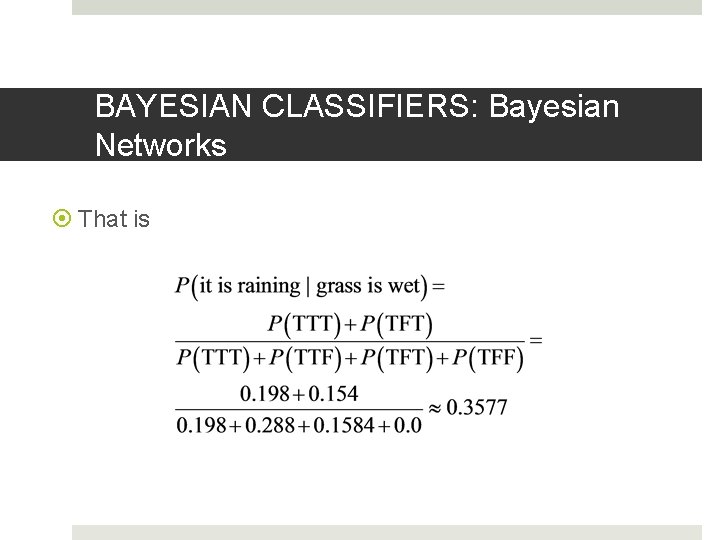

BAYESIAN CLASSIFIERS: Bayesian Networks. The model encodes the relevant probabilities, from which probabilistic inferences can then be calculated. Joint probability: $P(G, S, R) = P(R)\,P(S \mid R)\,P(G \mid S, R)$, where G = “Grass wet”, S = “Sprinkler on”, and R = “Raining”.
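With the joint factorization in hand, any query can be answered by enumeration: sum the joint over the unobserved variables and divide. The Python sketch below computes $P(R = T \mid G = T)$ as an example query. The CPT values are assumptions taken from the widely used Wikipedia sprinkler example, so check them against the tables in the slide images. The query shown here may not be the one computed on the slides.

```python
from itertools import product

# Assumed CPT values (Wikipedia sprinkler example); True/False = yes/no
P_R = 0.2                                        # P(R = T)
P_S_given_R = {True: 0.01, False: 0.4}           # P(S = T | R)
P_G_given_SR = {(True, True): 0.99, (True, False): 0.9,
                (False, True): 0.8, (False, False): 0.0}  # P(G = T | S, R)

def bern(p_true, value):
    """Probability that a binary variable equals `value` when P(True) = p_true."""
    return p_true if value else 1 - p_true

def joint(g, s, r):
    """P(G, S, R) = P(R) P(S|R) P(G|S, R)."""
    return bern(P_R, r) * bern(P_S_given_R[r], s) * bern(P_G_given_SR[(s, r)], g)

# Inference by enumeration: P(R = T | G = T), summing out the sprinkler S
num = sum(joint(True, s, True) for s in (True, False))
den = sum(joint(True, s, r) for s, r in product((True, False), repeat=2))
print(round(num / den, 4))  # 0.3577
```

Enumeration is exact but its cost grows exponentially with the number of hidden variables, which is why larger networks use more specialized inference algorithms.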

BAYESIAN CLASSIFIERS: Bayesian Networks We can then calculate, for example:

BAYESIAN CLASSIFIERS: Bayesian Networks That is

BAYESIAN CLASSIFIERS: Bayesian Networks. Building the model: create the network structure (graph) and determine the probability values of the tables. In the simplest case, the network is defined by the user. In most real-world cases, defining the network by hand is too complex, so machine learning is used instead; many algorithms exist.
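Once a structure is fixed, the table values can be estimated from fully observed data by counting how often a node is true for each combination of its parents' values. The Python sketch below covers only this parameter-estimation step, not the structure search. `learn_cpt`, the Laplace pseudo-count `alpha`, and the data are all hypothetical.

```python
from collections import Counter

def learn_cpt(records, node, parent_nodes, alpha=1.0):
    """Estimate P(node = True | parents) for a binary node by counting.

    records: list of dicts mapping variable name -> bool (fully observed data).
    alpha:   Laplace smoothing pseudo-count (assumed); alpha=0 gives the plain
             maximum-likelihood frequency.
    Returns {parent-value tuple: P(node = True | those values)} for every
    parent combination that appears in the data.
    """
    totals, trues = Counter(), Counter()
    for rec in records:
        key = tuple(rec[p] for p in parent_nodes)
        totals[key] += 1
        trues[key] += rec[node]  # True counts as 1, False as 0
    return {k: (trues[k] + alpha) / (totals[k] + 2 * alpha) for k in totals}

# Hypothetical observations of (Rain, Sprinkler)
data = [
    {"Rain": True, "Sprinkler": False},
    {"Rain": False, "Sprinkler": True},
    {"Rain": False, "Sprinkler": False},
    {"Rain": False, "Sprinkler": True},
]
print(learn_cpt(data, "Sprinkler", ["Rain"], alpha=0))  # {(True,): 0.0, (False,): 0.6666666666666666}
```

Smoothing with `alpha > 0` keeps unseen outcomes from receiving probability exactly zero, which matters when the data are small.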

BAYESIAN CLASSIFIERS: Bayesian Networks. Algorithms built into Weka: user-defined network, conditional independence tests, genetic search, hill climber, K2, simulated annealing, maximum weight spanning tree, and tabu search.

BAYESIAN CLASSIFIERS: Bayesian Networks. Many other implementations are available online, for example BNT (Bayes Net Toolbox) for Matlab, by Kevin Murphy, University of British Columbia: http://www.cs.ubc.ca/~murphyk/Software/
