
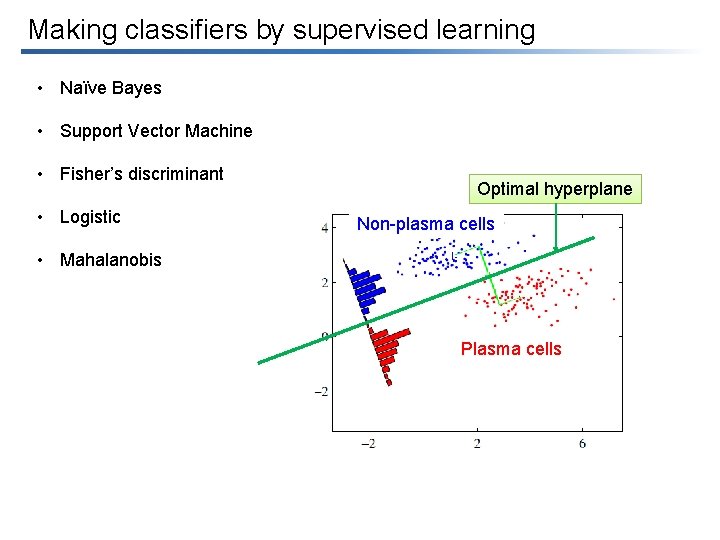

Making classifiers by supervised learning

- Naïve Bayes
- Support Vector Machine
- Fisher's discriminant
- Logistic regression
- Mahalanobis distance

(Figure: optimal hyperplane separating plasma cells from non-plasma cells)

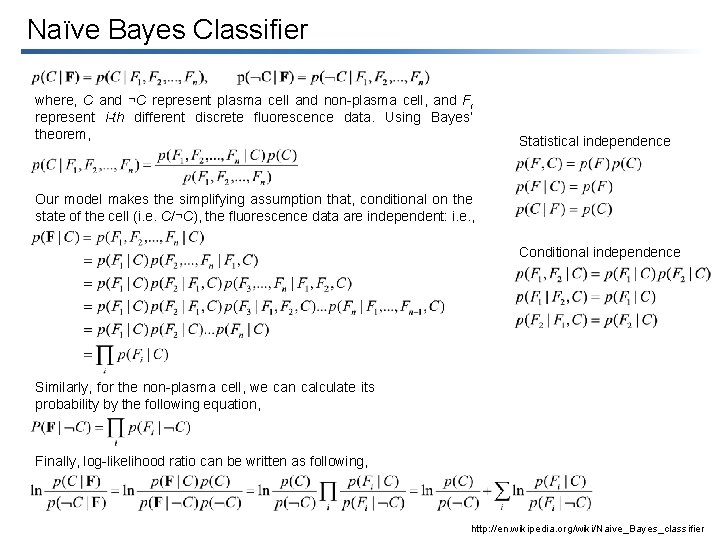

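Naïve Bayes Classifier

$$p(C \mid F_1, \dots, F_n)$$

where C and ¬C represent plasma cells and non-plasma cells, and $F_i$ represents the i-th discrete fluorescence measurement. Using Bayes' theorem,

$$p(C \mid F_1, \dots, F_n) = \frac{p(C)\, p(F_1, \dots, F_n \mid C)}{p(F_1, \dots, F_n)}$$

Our model makes the simplifying assumption that, conditional on the state of the cell (i.e. C or ¬C), the fluorescence data are statistically independent (conditional independence):

$$p(F_1, \dots, F_n \mid C) = \prod_{i=1}^{n} p(F_i \mid C), \qquad p(C \mid F_1, \dots, F_n) = \frac{p(C)}{p(F_1, \dots, F_n)} \prod_{i=1}^{n} p(F_i \mid C)$$

Similarly, for a non-plasma cell, the probability is

$$p(\neg C \mid F_1, \dots, F_n) = \frac{p(\neg C)}{p(F_1, \dots, F_n)} \prod_{i=1}^{n} p(F_i \mid \neg C)$$

Finally, the log-likelihood ratio can be written as

$$\ln \frac{p(C \mid F_1, \dots, F_n)}{p(\neg C \mid F_1, \dots, F_n)} = \ln \frac{p(C)}{p(\neg C)} + \sum_{i=1}^{n} \ln \frac{p(F_i \mid C)}{p(F_i \mid \neg C)}$$

http://en.wikipedia.org/wiki/Naive_Bayes_classifier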
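The log-likelihood ratio above can be sketched in a few lines of Python. This is an illustrative implementation, not the code behind the reported results: the function names, the Laplace smoothing parameter `alpha` (added so that unseen value/class pairs do not produce log(0)), the zero decision threshold (i.e. picking the more probable class), and the toy data are all assumptions.

```python
import math
from collections import Counter

def fit_naive_bayes(X, y, alpha=1.0):
    """Estimate p(C), p(not C) and per-feature tables p(F_i = v | class).

    X: list of equal-length tuples of discrete fluorescence values.
    y: list of booleans, True for plasma cells (C), False otherwise (not C).
    alpha: Laplace smoothing (assumption; avoids log(0) for unseen values).
    """
    n_features = len(X[0])
    values = [sorted({row[i] for row in X}) for i in range(n_features)]
    prior, cond = {}, {}
    for c in (True, False):
        rows = [row for row, label in zip(X, y) if label == c]
        prior[c] = len(rows) / len(X)
        cond[c] = []
        for i in range(n_features):
            counts = Counter(row[i] for row in rows)
            denom = len(rows) + alpha * len(values[i])
            cond[c].append({v: (counts[v] + alpha) / denom for v in values[i]})
    return prior, cond

def log_likelihood_ratio(x, prior, cond):
    """ln p(C)/p(not C) + sum_i ln p(F_i|C)/p(F_i|not C).

    Every value in x must have appeared somewhere in the training data.
    """
    llr = math.log(prior[True] / prior[False])
    for i, v in enumerate(x):
        llr += math.log(cond[True][i][v] / cond[False][i][v])
    return llr

def predict_plasma(x, prior, cond):
    """Hypothetical decision rule: plasma cell when the log ratio is positive."""
    return log_likelihood_ratio(x, prior, cond) > 0

# Hypothetical toy data: two discretized fluorescence channels
X = [(1, 0), (1, 1), (1, 1), (0, 0), (0, 0), (0, 1)]
y = [True, True, True, False, False, False]
prior, cond = fit_naive_bayes(X, y)
print(log_likelihood_ratio((1, 1), prior, cond))   # ln 6, about 1.79
print(predict_plasma((0, 0), prior, cond))         # False
```

Working in log space turns the product of per-channel probabilities into a sum, which avoids numerical underflow when many fluorescence channels are combined.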

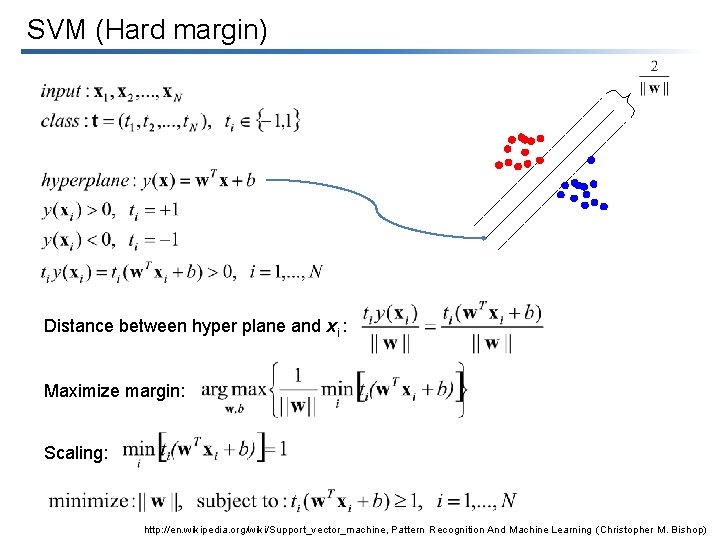

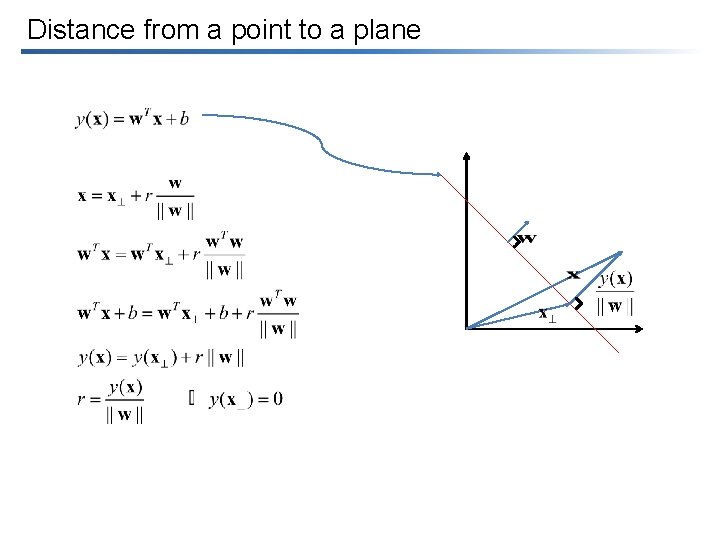

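SVM (Hard margin)

With labels $t_i \in \{-1, +1\}$ and $y(x) = w^T x + b$, the distance between the hyperplane $y(x) = 0$ and a correctly classified point $x_i$ is

$$\frac{t_i\, y(x_i)}{\lVert w \rVert} = \frac{t_i (w^T x_i + b)}{\lVert w \rVert}$$

Maximize the margin:

$$\arg\max_{w, b} \left\{ \frac{1}{\lVert w \rVert} \min_i \left[ t_i (w^T x_i + b) \right] \right\}$$

Scaling: rescaling $w \to \kappa w$, $b \to \kappa b$ does not change this distance, so we can set $t_i (w^T x_i + b) = 1$ for the point closest to the hyperplane. All points then satisfy $t_i (w^T x_i + b) \ge 1$, and the problem becomes

$$\arg\min_{w, b} \frac{1}{2} \lVert w \rVert^2 \quad \text{subject to} \quad t_i (w^T x_i + b) \ge 1, \quad i = 1, \dots, N$$

http://en.wikipedia.org/wiki/Support_vector_machine, Pattern Recognition and Machine Learning (Christopher M. Bishop)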
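A small NumPy sketch of the distance, the margin, and the scaling argument. The helper names and the toy points are hypothetical; the hyperplane $w = (0.5, 0.5)$, $b = 0$ was chosen by hand so that the closest points satisfy $t_i (w^T x_i + b) = 1$.

```python
import numpy as np

def signed_distance(X, w, b):
    """Signed distance (w^T x + b) / ||w|| of each row of X to the hyperplane."""
    return (X @ w + b) / np.linalg.norm(w)

def geometric_margin(X, t, w, b):
    """min_i t_i (w^T x_i + b) / ||w||; positive only if every point is on its correct side."""
    return float(np.min(t * signed_distance(X, w, b)))

# Hypothetical toy data: two points per class in 2-D
X = np.array([[1.0, 1.0], [2.0, 2.0], [-1.0, -1.0], [-2.0, -2.0]])
t = np.array([1.0, 1.0, -1.0, -1.0])
w, b = np.array([0.5, 0.5]), 0.0

print(geometric_margin(X, t, w, b))             # about 1.4142 (sqrt 2)
print(geometric_margin(X, t, 10 * w, 10 * b))   # same margin: rescaling (w, b) does not move the plane
print(np.min(t * (X @ w + b)))                  # 1.0: this (w, b) satisfies the canonical scaling
```

Because the margin is invariant to $\kappa$, fixing the closest point at $t_i y(x_i) = 1$ loses nothing, and maximizing $1/\lVert w \rVert$ becomes minimizing $\frac{1}{2}\lVert w \rVert^2$.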

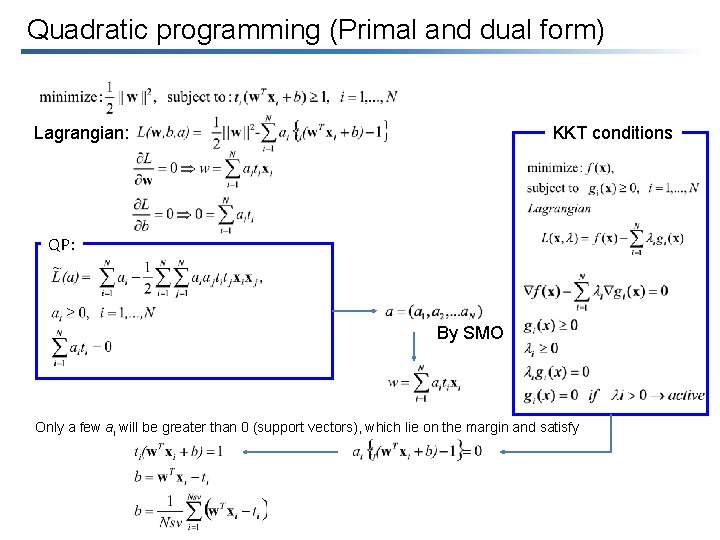

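Quadratic programming (Primal and dual form)

Lagrangian, with multipliers $a_i \ge 0$ and labels $t_i \in \{-1, +1\}$:

$$L(w, b, a) = \frac{1}{2} \lVert w \rVert^2 - \sum_{i=1}^{N} a_i \left\{ t_i (w^T x_i + b) - 1 \right\}$$

Setting the derivatives with respect to $w$ and $b$ to zero gives $w = \sum_{i} a_i t_i x_i$ and $\sum_{i} a_i t_i = 0$.

KKT conditions:

$$a_i \ge 0, \qquad t_i (w^T x_i + b) - 1 \ge 0, \qquad a_i \left\{ t_i (w^T x_i + b) - 1 \right\} = 0$$

QP (dual form):

$$\max_{a} \; \sum_{i=1}^{N} a_i - \frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} a_i a_j t_i t_j\, x_i^T x_j \quad \text{subject to} \quad a_i \ge 0, \quad \sum_{i=1}^{N} a_i t_i = 0$$

The QP is solved by SMO (sequential minimal optimization). Only a few $a_i$ will be greater than 0 (the support vectors); these lie on the margin and satisfy $t_i (w^T x_i + b) = 1$.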
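To make the dual concrete, here is a minimal sketch of the *simplified* SMO variant with a linear kernel. It is not Platt's full SMO (pairs are chosen by a deterministic sweep instead of his heuristics) and not necessarily the solver used for the results in this deck; the function name, the tolerances, and the large `C` used to approximate the hard margin are assumptions.

```python
import numpy as np

def smo_train(X, t, C=1e6, tol=1e-6, max_passes=10, max_iter=10_000):
    """Simplified SMO for the SVM dual with a linear kernel.

    Returns the multipliers a, the weight vector w = sum_i a_i t_i x_i and the bias b.
    A very large C approximates the hard-margin problem (0 <= a_i <= C).
    """
    n = len(t)
    K = X @ X.T                          # Gram matrix of inner products x_i^T x_j
    a, b = np.zeros(n), 0.0
    passes = it = 0
    while passes < max_passes and it < max_iter:
        it += 1
        changed = 0
        for i in range(n):
            E_i = (a * t) @ K[:, i] + b - t[i]          # prediction error on x_i
            # Only optimize points that violate the KKT conditions (within tol)
            if not ((t[i] * E_i < -tol and a[i] < C) or (t[i] * E_i > tol and a[i] > 0)):
                continue
            for j in range(n):
                if j == i:
                    continue
                E_j = (a * t) @ K[:, j] + b - t[j]
                a_i_old, a_j_old = a[i], a[j]
                # Bounds [L, H] keep 0 <= a <= C and sum_i a_i t_i = 0
                if t[i] != t[j]:
                    L, H = max(0.0, a_j_old - a_i_old), min(C, C + a_j_old - a_i_old)
                else:
                    L, H = max(0.0, a_i_old + a_j_old - C), min(C, a_i_old + a_j_old)
                eta = 2 * K[i, j] - K[i, i] - K[j, j]
                if L >= H or eta >= 0:
                    continue
                a[j] = np.clip(a_j_old - t[j] * (E_i - E_j) / eta, L, H)
                if abs(a[j] - a_j_old) < 1e-12:
                    a[j] = a_j_old
                    continue
                a[i] = a_i_old + t[i] * t[j] * (a_j_old - a[j])
                # Re-estimate b from whichever updated multiplier is strictly inside (0, C)
                b1 = b - E_i - t[i] * (a[i] - a_i_old) * K[i, i] - t[j] * (a[j] - a_j_old) * K[i, j]
                b2 = b - E_j - t[i] * (a[i] - a_i_old) * K[i, j] - t[j] * (a[j] - a_j_old) * K[j, j]
                b = b1 if 0 < a[i] < C else b2 if 0 < a[j] < C else (b1 + b2) / 2
                changed += 1
                break
        passes = passes + 1 if changed == 0 else 0
    w = (a * t) @ X                      # w = sum_i a_i t_i x_i
    return a, w, b

# Hypothetical two-point example: both points become support vectors
X = np.array([[1.0, 1.0], [-1.0, -1.0]])
t = np.array([1.0, -1.0])
a, w, b = smo_train(X, t)
print(a, w, b)   # a = [0.25, 0.25], w = [0.5, 0.5], b = 0
```

Each step updates only two multipliers at once, the smallest set that can move while keeping $\sum_i a_i t_i = 0$, which is why the pairwise update has a closed form.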

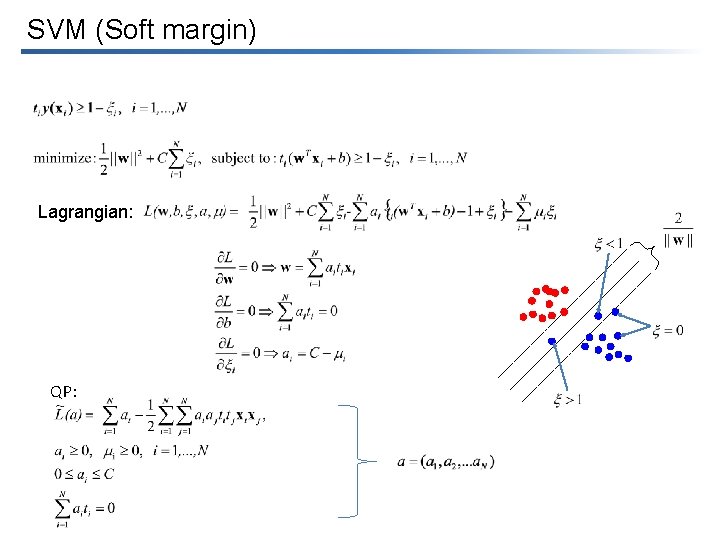

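SVM (Soft margin)

Slack variables $\xi_i \ge 0$ relax the constraints to $t_i (w^T x_i + b) \ge 1 - \xi_i$, and the objective becomes $\frac{1}{2} \lVert w \rVert^2 + C \sum_i \xi_i$ with $C > 0$.

Lagrangian, with multipliers $a_i \ge 0$ and $\mu_i \ge 0$:

$$L(w, b, \xi, a, \mu) = \frac{1}{2} \lVert w \rVert^2 + C \sum_{i=1}^{N} \xi_i - \sum_{i=1}^{N} a_i \left\{ t_i (w^T x_i + b) - 1 + \xi_i \right\} - \sum_{i=1}^{N} \mu_i \xi_i$$

QP:

$$\max_{a} \; \sum_{i=1}^{N} a_i - \frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} a_i a_j t_i t_j\, x_i^T x_j \quad \text{subject to} \quad 0 \le a_i \le C, \quad \sum_{i=1}^{N} a_i t_i = 0$$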
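A short sketch of the soft-margin primal objective: for a fixed $(w, b)$ the smallest feasible slack is $\xi_i = \max(0, 1 - t_i (w^T x_i + b))$, i.e. the hinge loss. The function name, the toy points, and $C = 1$ are illustrative assumptions.

```python
import numpy as np

def soft_margin_objective(X, t, w, b, C=1.0):
    """Return 0.5 ||w||^2 + C * sum(xi) and the slacks xi.

    xi_i = 0: on or outside the margin; 0 < xi_i <= 1: inside the margin but
    correctly classified; xi_i > 1: misclassified.
    """
    xi = np.maximum(0.0, 1.0 - t * (X @ w + b))
    return 0.5 * float(w @ w) + C * float(xi.sum()), xi

# Hypothetical data: the third point lies on the wrong side of the hyperplane
X = np.array([[1.0, 1.0], [-1.0, -1.0], [0.5, 0.5]])
t = np.array([1.0, -1.0, -1.0])
obj, xi = soft_margin_objective(X, t, np.array([0.5, 0.5]), 0.0, C=1.0)
print(xi)    # slacks 0, 0 and 1.5
print(obj)   # 1.75
```

Larger $C$ penalizes slack more heavily and pushes the solution toward the hard margin; in the dual this appears only as the upper bound $a_i \le C$.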

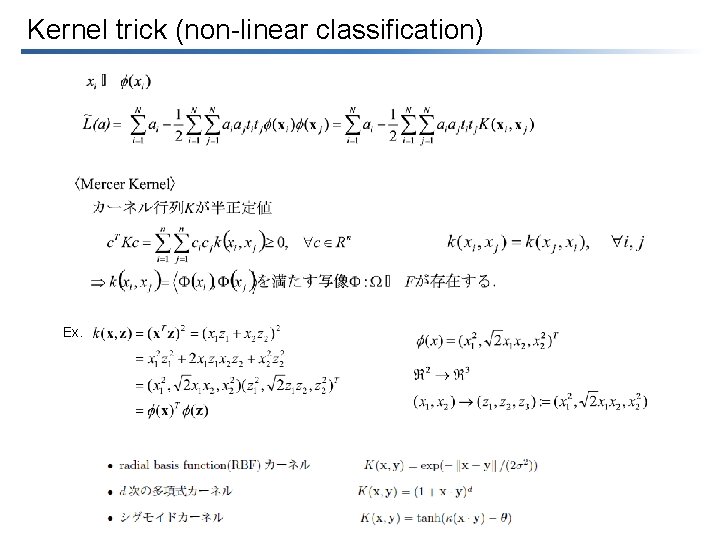

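Kernel trick (non-linear classification): each inner product $x_i^T x_j$ in the dual is replaced by a kernel $k(x_i, x_j) = \phi(x_i)^T \phi(x_j)$, so a linear SVM is trained in the feature space defined by $\phi$ without computing $\phi$ explicitly. Ex.: see figure.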
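A standard illustration of the kernel trick (not necessarily the example on the slide): for 2-D inputs the kernel $(x^T z)^2$ equals an inner product of explicit 3-D features. The radial (Gaussian) kernel, used for the SVM results below, is included for comparison; its `gamma` value and all function names here are assumptions.

```python
import numpy as np

def phi(x):
    """Explicit feature map for k(x, z) = (x^T z)^2 with 2-D inputs."""
    x1, x2 = x
    return np.array([x1 ** 2, np.sqrt(2.0) * x1 * x2, x2 ** 2])

def poly2_kernel(x, z):
    """Homogeneous degree-2 polynomial kernel, computed in the input space."""
    return float(np.dot(x, z)) ** 2

def rbf_kernel(x, z, gamma=1.0):
    """Radial (Gaussian) kernel exp(-gamma * ||x - z||^2); gamma is illustrative."""
    diff = np.asarray(x, dtype=float) - np.asarray(z, dtype=float)
    return float(np.exp(-gamma * (diff @ diff)))

x, z = np.array([1.0, 2.0]), np.array([3.0, 4.0])
print(poly2_kernel(x, z))         # 121.0
print(float(phi(x) @ phi(z)))     # 121 up to rounding, via the explicit features
print(rbf_kernel(x, x))           # 1.0 for identical points
```

The radial kernel corresponds to an infinite-dimensional $\phi$, so it can only be used through $k(x_i, x_j)$; this is what makes the kernel trick necessary rather than merely convenient.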

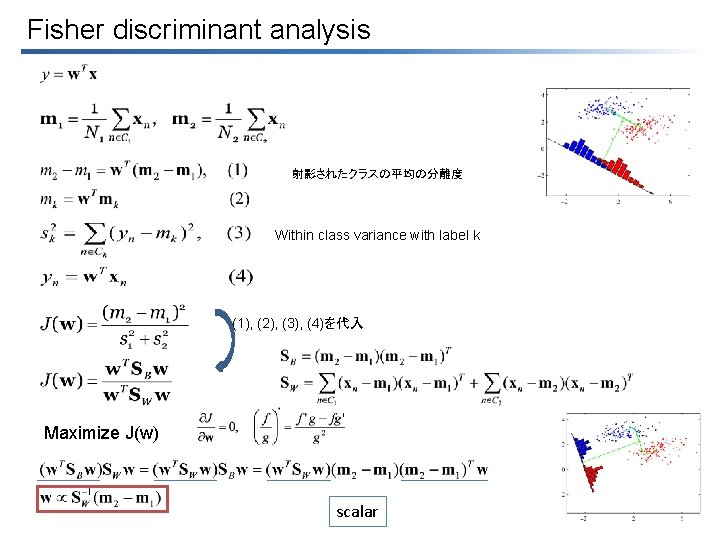

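Fisher discriminant analysis

Project each point onto a direction $w$, $y_n = w^T x_n$. Class mean with label k, and its projection:

$$m_k = \frac{1}{N_k} \sum_{n \in \mathcal{C}_k} x_n \quad (1), \qquad \tilde{m}_k = w^T m_k \quad (2)$$

Separation of the projected class means:

$$\tilde{m}_2 - \tilde{m}_1 = w^T (m_2 - m_1) \quad (3)$$

Within-class variance with label k:

$$s_k^2 = \sum_{n \in \mathcal{C}_k} (w^T x_n - \tilde{m}_k)^2 \quad (4)$$

Substituting (1), (2), (3) and (4) into the Fisher criterion:

$$J(w) = \frac{(\tilde{m}_2 - \tilde{m}_1)^2}{s_1^2 + s_2^2} = \frac{w^T S_B w}{w^T S_W w}, \quad S_B = (m_2 - m_1)(m_2 - m_1)^T, \quad S_W = \sum_{k=1}^{2} \sum_{n \in \mathcal{C}_k} (x_n - m_k)(x_n - m_k)^T$$

Maximize J(w): setting its derivative to zero gives $(w^T S_B w)\, S_W w = (w^T S_W w)\, S_B w$. Since $w^T S_B w$ and $w^T S_W w$ are scalars and $S_B w$ always points along $m_2 - m_1$,

$$w \propto S_W^{-1} (m_2 - m_1)$$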
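A NumPy sketch of the Fisher direction $w \propto S_W^{-1}(m_2 - m_1)$ and of the criterion $J(w)$. The function names and the toy classes (a square of points and the same square shifted along the first axis) are hypothetical.

```python
import numpy as np

def fisher_direction(X1, X2):
    """Unit vector along S_W^{-1} (m2 - m1) for class samples X1, X2 (rows = cells)."""
    m1, m2 = X1.mean(axis=0), X2.mean(axis=0)
    # Within-class scatter: sum over both classes of (x - m_k)(x - m_k)^T
    S_W = (X1 - m1).T @ (X1 - m1) + (X2 - m2).T @ (X2 - m2)
    w = np.linalg.solve(S_W, m2 - m1)   # solves S_W w = m2 - m1 without forming the inverse
    return w / np.linalg.norm(w)

def fisher_criterion(w, X1, X2):
    """J(w) = (separation of projected means)^2 / (s_1^2 + s_2^2)."""
    y1, y2 = X1 @ w, X2 @ w
    s1 = np.sum((y1 - y1.mean()) ** 2)
    s2 = np.sum((y2 - y2.mean()) ** 2)
    return (y2.mean() - y1.mean()) ** 2 / (s1 + s2)

X1 = np.array([[0.0, 0.0], [0.0, 2.0], [2.0, 0.0], [2.0, 2.0]])
X2 = X1 + np.array([5.0, 0.0])   # same spread, shifted along the first axis
w = fisher_direction(X1, X2)
print(w)                                               # approximately [1, 0]
print(fisher_criterion(w, X1, X2))                     # about 3.125
print(fisher_criterion(np.array([1.0, 1.0]) / np.sqrt(2), X1, X2))   # smaller, about 1.5625
```

Only the direction of $w$ matters, since $J(w)$ is unchanged when $w$ is rescaled; that is why the proportionality $w \propto S_W^{-1}(m_2 - m_1)$ is enough.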

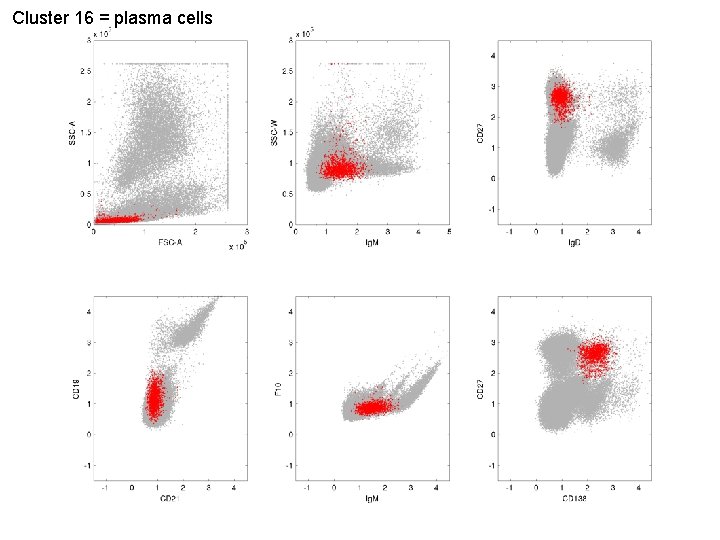

Cluster 16 = plasma cells

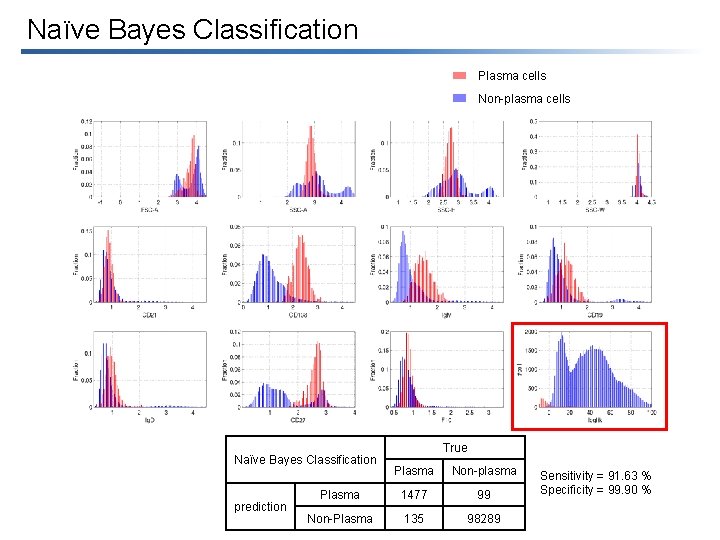

Naïve Bayes Classification

(Figure legend: plasma cells, non-plasma cells)

| Prediction / True | Plasma | Non-plasma |
|---|---|---|
| Plasma | 1477 | 99 |
| Non-plasma | 135 | 98289 |

Sensitivity = 91.63%
Specificity = 99.90%

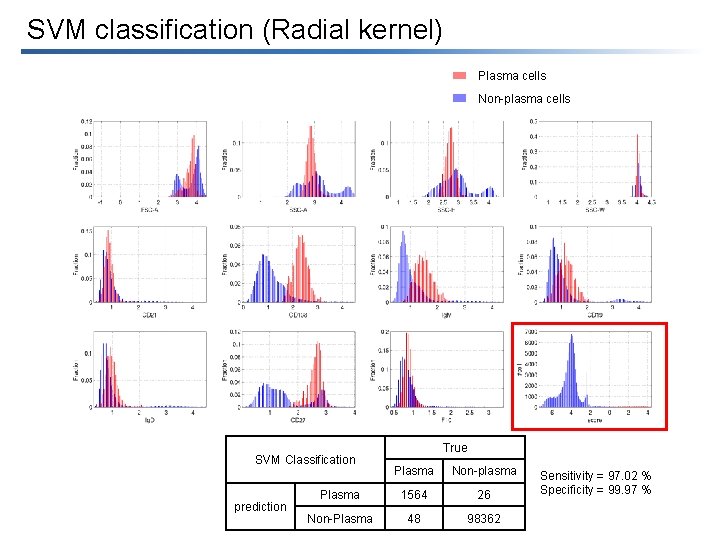

SVM classification (Radial kernel)

(Figure legend: plasma cells, non-plasma cells)

| Prediction / True | Plasma | Non-plasma |
|---|---|---|
| Plasma | 1564 | 26 |
| Non-plasma | 48 | 98362 |

Sensitivity = 97.02%
Specificity = 99.97%
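The sensitivity and specificity figures for both classifiers can be recomputed from the confusion matrices, reading rows as the predicted class and columns as the true class. The function and dictionary names are illustrative; the counts are copied from the two tables.

```python
def sensitivity_specificity(tp, fn, fp, tn):
    """Sensitivity = TP / (TP + FN), specificity = TN / (TN + FP)."""
    return tp / (tp + fn), tn / (tn + fp)

# Counts from the confusion matrices (rows = prediction, columns = true class)
results = {
    "Naive Bayes": dict(tp=1477, fp=99, fn=135, tn=98289),
    "SVM (radial kernel)": dict(tp=1564, fp=26, fn=48, tn=98362),
}

for name, m in results.items():
    sens, spec = sensitivity_specificity(m["tp"], m["fn"], m["fp"], m["tn"])
    print(f"{name}: sensitivity = {100 * sens:.2f}%, specificity = {100 * spec:.2f}%")
# Naive Bayes: sensitivity = 91.63%, specificity = 99.90%
# SVM (radial kernel): sensitivity = 97.02%, specificity = 99.97%
```

Both matrices cover the same 100,000 cells, of which 1,612 are true plasma cells, so the SVM's gain in sensitivity corresponds to 87 additional plasma cells detected.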

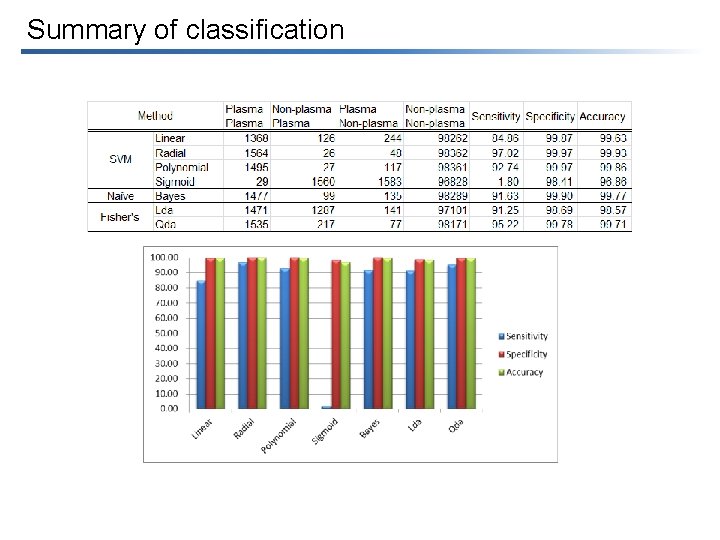

Summary of classification

Distance from a point to a plane
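A short derivation of the point-to-plane distance used on the hard-margin SVM slide; it follows the standard argument in Bishop's textbook and is a sketch rather than a copy of the slide's own figure. Write any point $x$ as its orthogonal projection $x_\perp$ onto the plane $w^T x + b = 0$ plus a signed multiple $r$ of the unit normal:

$$x = x_\perp + r \frac{w}{\lVert w \rVert}$$

Applying $w^T(\cdot) + b$ to both sides and using $w^T x_\perp + b = 0$:

$$w^T x + b = r \frac{w^T w}{\lVert w \rVert} = r \lVert w \rVert \quad \Longrightarrow \quad r = \frac{w^T x + b}{\lVert w \rVert}$$

The sign of $r$ indicates which side of the plane $x$ lies on, and $|r|$ is the distance; multiplying by the label $t_i$ gives the positive distance $t_i (w^T x_i + b) / \lVert w \rVert$ for correctly classified points.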
