Bayes Net Classifiers: The Naïve Bayes Model

Oliver Schulte
Machine Learning 726

Classification

- Suppose we have a target node V such that all queries of interest are of the form P(V=v | values for all other variables).
- Example: predict whether a patient has bronchitis given the values of all other nodes.
  - Because we know the form of the query, we can optimize the Bayes net.
- V is called the class variable.
- v is called the class label.
- The other variables are called features.

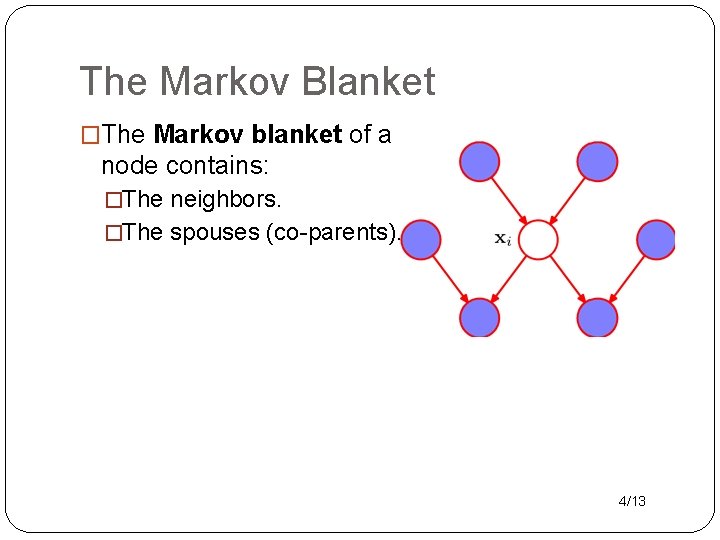

Optimizing the Structure

- Some nodes are irrelevant to a target node, given the others.
- Examples.
- Can you guess the pattern?

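The Markov Blanket

- The Markov blanket of a node contains:
  - The neighbors (its parents and its children).
  - The spouses (co-parents of its children).
- Given its Markov blanket, a node is conditionally independent of all other nodes in the network.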
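The definition can be sketched in a few lines of Python. This is only an illustration: the function name `markov_blanket`, the `{child: set_of_parents}` representation, and the toy network are assumptions made here and do not come from the slides.

```python
def markov_blanket(parents, node):
    """Markov blanket of `node` in a DAG given as {child: set_of_parents}."""
    # Children: every node that lists `node` among its parents.
    children = {c for c, ps in parents.items() if node in ps}
    # Spouses: the other parents of those children.
    spouses = {p for c in children for p in parents[c]} - {node}
    return set(parents.get(node, set())) | children | spouses


# Hypothetical toy network: A -> C <- B, C -> D <- E
toy = {"A": set(), "B": set(), "C": {"A", "B"}, "D": {"C", "E"}, "E": set()}

print(sorted(markov_blanket(toy, "C")))  # parents A, B; child D; spouse E
print(sorted(markov_blanket(toy, "A")))  # child C; spouse B
```

In this toy network, D and E lie outside the blanket of A, so they could be dropped when the only query is about A given all other nodes.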

How to Build a Bayes Net Classifier

- Eliminate nodes not in the Markov blanket.
  - Feature selection.
- Learn parameters.
  - Fewer dimensions!

The Naïve Bayes Model

Classification Models

- A Bayes net is a very general probability model.
- Sometimes we want to use more specific models:
  1. They are more intelligible for some users.
  2. Models make assumptions: if the assumptions are correct, learning is better.
- A widely used Bayes net-type classifier: Naïve Bayes.

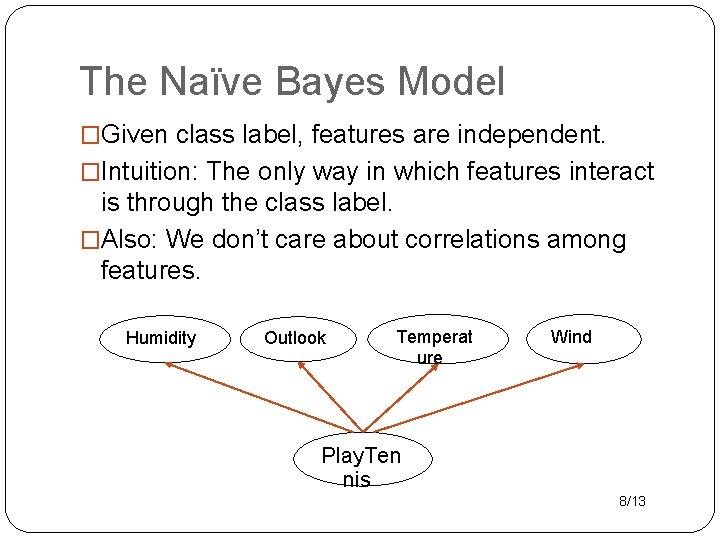

The Naïve Bayes Model

- Given the class label, the features are independent.
- Intuition: the only way in which features interact is through the class label.
- Also: we don’t care about correlations among features.

Network nodes: PlayTennis (class); Outlook, Temperature, Humidity, Wind (features).

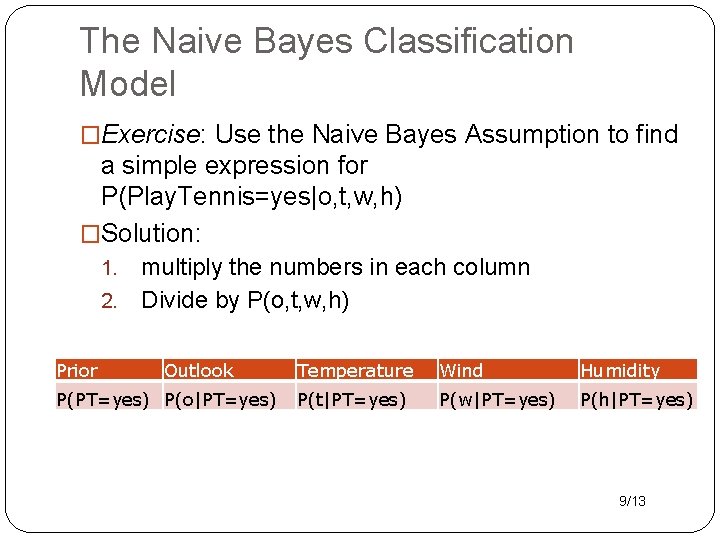

The Naive Bayes Classification Model

- Exercise: use the Naive Bayes assumption to find a simple expression for P(PlayTennis=yes | o, t, w, h).
- Solution:
  1. Multiply the entries of all columns in the table.
  2. Divide by P(o, t, w, h).

| Prior | Outlook | Temperature | Wind | Humidity |
|---|---|---|---|---|
| P(PT=yes) | P(o\|PT=yes) | P(t\|PT=yes) | P(w\|PT=yes) | P(h\|PT=yes) |
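The two solution steps follow from Bayes' rule plus the Naive Bayes assumption. The derivation here is a sketch in the slide's notation; the expansion of the denominator as a sum over the class labels c ∈ {yes, no} is the standard one and is not spelled out on the slide.

$$
\begin{aligned}
P(PT{=}\mathit{yes} \mid o,t,w,h)
 &= \frac{P(PT{=}\mathit{yes})\,P(o,t,w,h \mid PT{=}\mathit{yes})}{P(o,t,w,h)} \\
 &= \frac{P(PT{=}\mathit{yes})\,P(o \mid PT{=}\mathit{yes})\,P(t \mid PT{=}\mathit{yes})\,P(w \mid PT{=}\mathit{yes})\,P(h \mid PT{=}\mathit{yes})}{P(o,t,w,h)}, \\
P(o,t,w,h) &= \sum_{c \in \{\mathit{yes},\,\mathit{no}\}} P(PT{=}c)\,P(o \mid PT{=}c)\,P(t \mid PT{=}c)\,P(w \mid PT{=}c)\,P(h \mid PT{=}c).
\end{aligned}
$$

The first line is Bayes' rule; the second uses the independence of the features given the class label.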

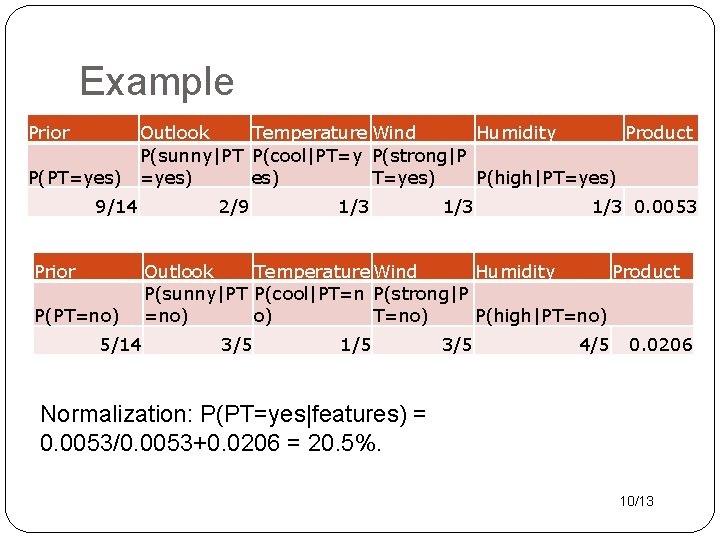

Example

| Class | Prior | Outlook | Temperature | Wind | Humidity | Product |
|---|---|---|---|---|---|---|
| PT=yes | P(PT=yes) = 9/14 | P(sunny\|PT=yes) = 2/9 | P(cool\|PT=yes) = 1/3 | P(strong\|PT=yes) = 1/3 | P(high\|PT=yes) = 1/3 | 0.0053 |
| PT=no | P(PT=no) = 5/14 | P(sunny\|PT=no) = 3/5 | P(cool\|PT=no) = 1/5 | P(strong\|PT=no) = 3/5 | P(high\|PT=no) = 4/5 | 0.0206 |

Normalization: P(PT=yes | features) = 0.0053 / (0.0053 + 0.0206) = 20.5%.
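The arithmetic of this example can be checked with exact fractions. The sketch assumes that all three of P(cool|PT=yes), P(strong|PT=yes) and P(high|PT=yes) equal 1/3, which is the reading consistent with the stated product 0.0053; the variable and function names are illustrative only.

```python
from fractions import Fraction as F

# Prior followed by P(sunny|c), P(cool|c), P(strong|c), P(high|c) for each class c.
factors_yes = [F(9, 14), F(2, 9), F(1, 3), F(1, 3), F(1, 3)]
factors_no = [F(5, 14), F(3, 5), F(1, 5), F(3, 5), F(4, 5)]


def product(factors):
    """Multiply a list of fractions together."""
    result = F(1)
    for f in factors:
        result *= f
    return result


score_yes = product(factors_yes)  # unnormalized score for PT=yes
score_no = product(factors_no)    # unnormalized score for PT=no
posterior_yes = score_yes / (score_yes + score_no)  # normalization step

print(round(float(score_yes), 4), round(float(score_no), 4))
print(round(float(posterior_yes), 3))
```

Note that the normalizing denominator is the sum of both products, so the division applies to the whole sum.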

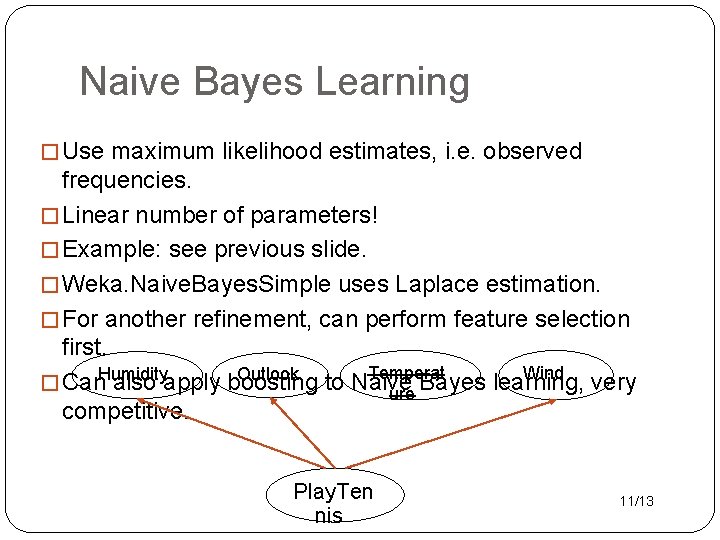

Naive Bayes Learning

- Use maximum likelihood estimates, i.e. observed frequencies.
- The number of parameters is linear in the number of features!
- Example: see previous slide.
- Weka.NaiveBayesSimple uses Laplace estimation.
- For another refinement, can perform feature selection first.
- Can also apply boosting to Naive Bayes learning, very competitive.
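A minimal sketch of the two estimators, assuming the common add-one form of Laplace estimation (this is not necessarily how Weka implements it). Only the sunny count, 2 of the 9 PT=yes days, comes from the example; the three-value Outlook domain and the overcast/rain split are hypothetical, as is the function name `estimate`.

```python
from collections import Counter
from fractions import Fraction


def estimate(values, domain, alpha=0):
    """Estimate P(value) from observations.

    alpha=0 gives the maximum likelihood estimate (observed frequency);
    alpha=1 gives add-one (Laplace) smoothing.
    """
    counts = Counter(values)
    total = len(values) + alpha * len(domain)
    return {v: Fraction(counts[v] + alpha, total) for v in domain}


# Outlook on the 9 PT=yes days: sunny count from the example, rest hypothetical.
outlook_given_yes = ["sunny"] * 2 + ["overcast"] * 4 + ["rain"] * 3
domain = ["sunny", "overcast", "rain"]

mle = estimate(outlook_given_yes, domain)              # sunny -> 2/9
laplace = estimate(outlook_given_yes, domain, alpha=1)  # sunny -> 3/12
print(mle["sunny"], laplace["sunny"])
```

Smoothing matters most when a value never occurs with a class: the frequency estimate would be 0 and would zero out the whole product, whereas the smoothed estimate stays positive.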

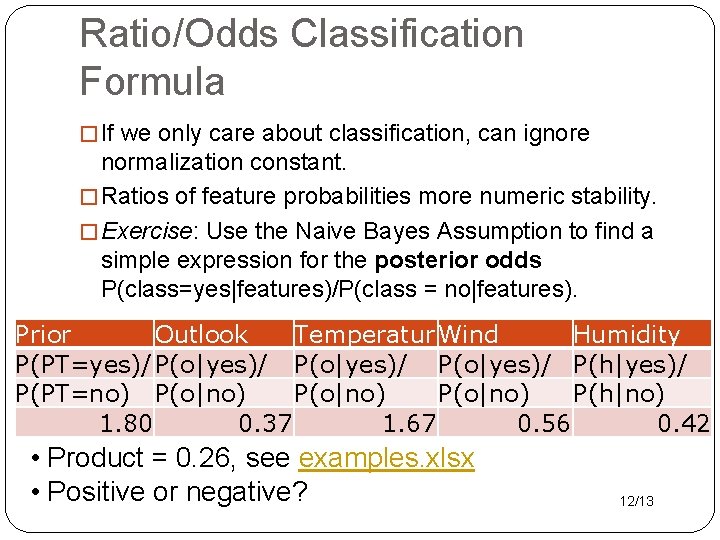

Ratio/Odds Classification Formula

- If we only care about classification, we can ignore the normalization constant.
- Ratios of feature probabilities give more numeric stability.
- Exercise: use the Naive Bayes assumption to find a simple expression for the posterior odds P(class=yes | features) / P(class=no | features).

| Prior | Outlook | Temperature | Wind | Humidity |
|---|---|---|---|---|
| P(PT=yes)/P(PT=no) | P(o\|yes)/P(o\|no) | P(t\|yes)/P(t\|no) | P(w\|yes)/P(w\|no) | P(h\|yes)/P(h\|no) |
| 1.80 | 0.37 | 1.67 | 0.56 | 0.42 |

- Product = 0.26, see examples.xlsx
- Positive or negative?
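The odds computation can be sketched as follows, reusing the conditional probabilities of the PlayTennis example (with P(high|PT=yes) taken as 1/3, consistent with the ratio 0.42). The dictionary keys and the threshold rule "predict yes when the odds exceed 1" are spelled out here as assumptions; the slide leaves the decision as a question.

```python
from fractions import Fraction as F
from functools import reduce
from operator import mul

# (probability under PT=yes, probability under PT=no) for the prior and each feature value.
pairs = {
    "prior": (F(9, 14), F(5, 14)),
    "outlook=sunny": (F(2, 9), F(3, 5)),
    "temperature=cool": (F(1, 3), F(1, 5)),
    "wind=strong": (F(1, 3), F(3, 5)),
    "humidity=high": (F(1, 3), F(4, 5)),
}

# One ratio per column; the normalization constant P(o, t, w, h) cancels out.
ratios = {name: p_yes / p_no for name, (p_yes, p_no) in pairs.items()}
posterior_odds = reduce(mul, ratios.values(), F(1))
predicted = "yes" if posterior_odds > 1 else "no"

print({name: round(float(r), 2) for name, r in ratios.items()})
print(round(float(posterior_odds), 2), predicted)
```

If a probability is needed after all, it can be recovered from the odds as odds / (1 + odds).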

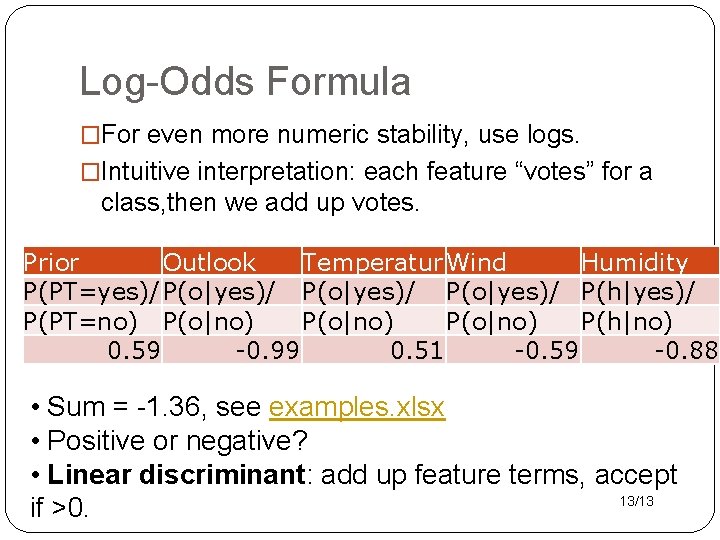

Log-Odds Formula

- For even more numeric stability, use logs.
- Intuitive interpretation: each feature “votes” for a class, then we add up the votes.

| Prior | Outlook | Temperature | Wind | Humidity |
|---|---|---|---|---|
| log[P(PT=yes)/P(PT=no)] | log[P(o\|yes)/P(o\|no)] | log[P(t\|yes)/P(t\|no)] | log[P(w\|yes)/P(w\|no)] | log[P(h\|yes)/P(h\|no)] |
| 0.59 | -0.99 | 0.51 | -0.59 | -0.88 |

- Sum = -1.36, see examples.xlsx
- Positive or negative?
- Linear discriminant: add up the feature terms, accept if > 0.
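A short sketch of the log-odds rule on the same example. It assumes natural logarithms, which is what reproduces the tabulated values, and again takes P(high|PT=yes) as 1/3; the variable names are illustrative.

```python
import math

# (probability under PT=yes, probability under PT=no):
# prior, outlook=sunny, temperature=cool, wind=strong, humidity=high.
probs = [(9 / 14, 5 / 14), (2 / 9, 3 / 5), (1 / 3, 1 / 5), (1 / 3, 3 / 5), (1 / 3, 4 / 5)]

# Each term is one "vote": positive favours PT=yes, negative favours PT=no.
votes = [math.log(p_yes) - math.log(p_no) for p_yes, p_no in probs]
log_odds = sum(votes)
accept = log_odds > 0  # linear discriminant: accept PT=yes only if the sum is positive

# The logistic function turns the log-odds back into a posterior probability.
posterior_yes_from_logit = 1 / (1 + math.exp(-log_odds))

print([round(v, 2) for v in votes], round(log_odds, 2), accept)
```

Subtracting logs instead of dividing probabilities keeps the computation stable when many small feature probabilities are involved.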

- Slides: 13