Bayesian ANOVA and Decision Making Greg Francis PSY

Bayesian ANOVA and Decision Making Greg Francis PSY 626: Bayesian Statistics for Psychological Science Fall 2020 Purdue University

Search of memory l How is memory searched? l Explore by varying the number of items in memory set l measure reaction time w Sternberg (1969) NO 5329 8

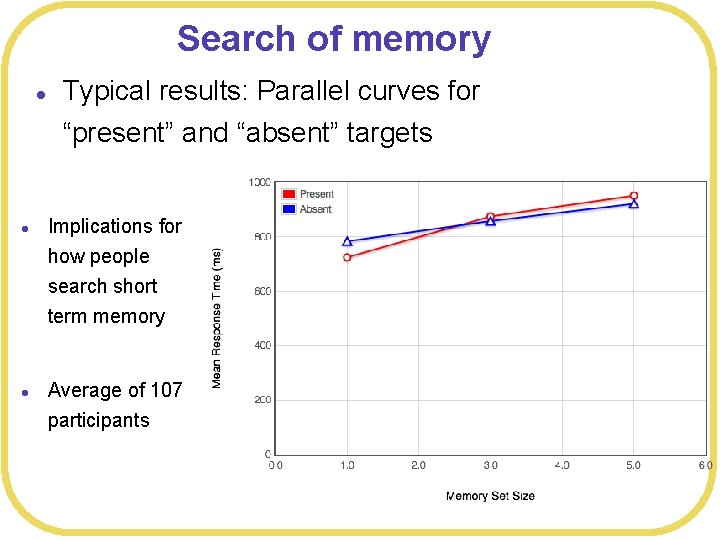

Search of memory l Typical results: Parallel curves for “present” and “absent” targets l l Implications for how people search short term memory Average of 107 participants

Mixed design ANOVA l Sternberg search experiment l 113 participants

![> bf 2 Bayes factor analysis -------[1] Memory. Set. Size + Participant : 5. > bf 2 Bayes factor analysis -------[1] Memory. Set. Size + Participant : 5.](http://slidetodoc.com/presentation_image_h2/aa0436239cb0c423daa322795278b714/image-5.jpg)

> bf 2 Bayes factor analysis -------[1] Memory. Set. Size + Participant : 5. 519399 e+126 ± 0. 59% [2] Condition + Participant : 0. 02776611 ± 1. 88% [3] Memory. Set. Size: Condition + Participant : 70932. 28 ± 1. 18% [4] Memory. Set. Size + Condition + Participant : 1. 518957 e+125 ± 2. 36% [5] Memory. Set. Size + Memory. Set. Size: Condition + Participant : 1. 910749 e+132 ± 1. 23% [6] Condition + Memory. Set. Size: Condition + Participant : 2186. 301 ± 14. 32% [7] Memory. Set. Size + Condition + Memory. Set. Size: Condition + Participant : 5. 138857 e+130 ± 1. 94% Model comparison l compare(SSmodel. Interaction. Condition, SSmodel. Interaction. MMS, SSmodel. Interaction. Only, SSmodel. Interaction, SSmodel. Additive , Against denominator: SSmodel. Condition, SSmodel. MSS, SSmodel. Null) RT ~ Participant --Bayes factor type: BFlinear. Model, JZS WAIC p. WAIC d. WAIC weight SE d. SE WAIC p. W SSmodel. Interaction 93808. 8 23 93929. 4 365. 5 28. 6 0 176. 99 17. 76 SSmodel. Additive 93841. 9 22 93955. 0 325. 4 54. 1 0 176. 49 19. 92 SSmodel. Interaction. MMS 93864. 5 93974. 8 220. 8 74. 0 0 174. 86 25. 94 SSmodel. MSS 93919. 9 16 94161. 9 278. 8 261. 1 0 172. 10 45. 21 SSmodel. Interaction. Condition 94022. 94403. 1 224. 6 502. 2 0 168. 76 55. 18 SSmodel. Interaction. Only 94341. 3 94500. 0 190. 9 599. 1 0 165. 76 56. 60 SSmodel. Condition 94493. 2 16 SSmodel. Null 94556. 6 114. 5 655. 8 0 164. 83 61. 12 94554. 9 112. l SSmodel. Additive 93900. 8 302. 5 0. 0 1 176. 90 l SSmodel. Interaction. MMS l SSmodel. MSS l SSmodel. Interaction. Condition l SSmodel. Interaction. Only l SSmodel. Condition l SSmodel. Null l Strongly favors additive model (with no interaction) NA

Correlations between parameters l l l Additive effects with interaction SSdata. Interaction <- data. frame(RT= SSdata$RT, Condition=SSdata$Target. Present, Memory. Set. Size=SSdata$Memory. Set. Size, Participant=SSdata$Participant) SSmodel. Interaction <- map 2 stan( l alist( RT ~ dnorm(mu, sigma), l mu <- a[Participant] + b[Participant]*Memory. Set. Size + c[Participant]*Condition+ d[Participant]*Memory. Set. Size*Condition, l c(a, b, c, d)[Participant] ~ dmvnorm 2(c(grand_mua, grand_mub, grand_muc, grand_mud), grand_s, Rho), l grand_mua ~ dnorm(1000, 1000), l grand_s ~ dunif(0, 2000), l grand_mub ~ dnorm(0, 100), l grand_muc ~ dnorm(0, 100), l grand_mud ~ dnorm(0, 100), l Rho ~ dlkjcorr(2), l sigma ~ dunif(0, 1000) l ), data= SSdata. Interaction )

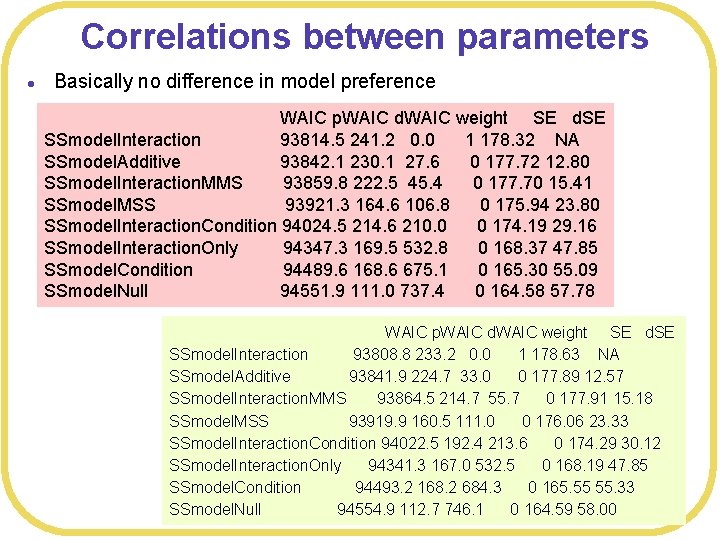

Correlations between parameters l Basically no difference in model preference WAIC p. WAIC d. WAIC weight SE d. SE SSmodel. Interaction 93814. 5 241. 2 0. 0 1 178. 32 NA SSmodel. Additive 93842. 1 230. 1 27. 6 0 177. 72 12. 80 SSmodel. Interaction. MMS 93859. 8 222. 5 45. 4 0 177. 70 15. 41 SSmodel. MSS 93921. 3 164. 6 106. 8 0 175. 94 23. 80 SSmodel. Interaction. Condition 94024. 5 214. 6 210. 0 0 174. 19 29. 16 SSmodel. Interaction. Only 94347. 3 169. 5 532. 8 0 168. 37 47. 85 SSmodel. Condition 94489. 6 168. 6 675. 1 0 165. 30 55. 09 SSmodel. Null 94551. 9 111. 0 737. 4 0 164. 58 57. 78 WAIC p. WAIC d. WAIC weight SE d. SE SSmodel. Interaction 93808. 8 233. 2 0. 0 1 178. 63 NA SSmodel. Additive 93841. 9 224. 7 33. 0 0 177. 89 12. 57 SSmodel. Interaction. MMS 93864. 5 214. 7 55. 7 0 177. 91 15. 18 SSmodel. MSS 93919. 9 160. 5 111. 0 0 176. 06 23. 33 SSmodel. Interaction. Condition 94022. 5 192. 4 213. 6 0 174. 29 30. 12 SSmodel. Interaction. Only 94341. 3 167. 0 532. 5 0 168. 19 47. 85 SSmodel. Condition 94493. 2 168. 2 684. 3 0 165. 55 55. 33 SSmodel. Null 94554. 9 112. 7 746. 1 0 164. 59 58. 00

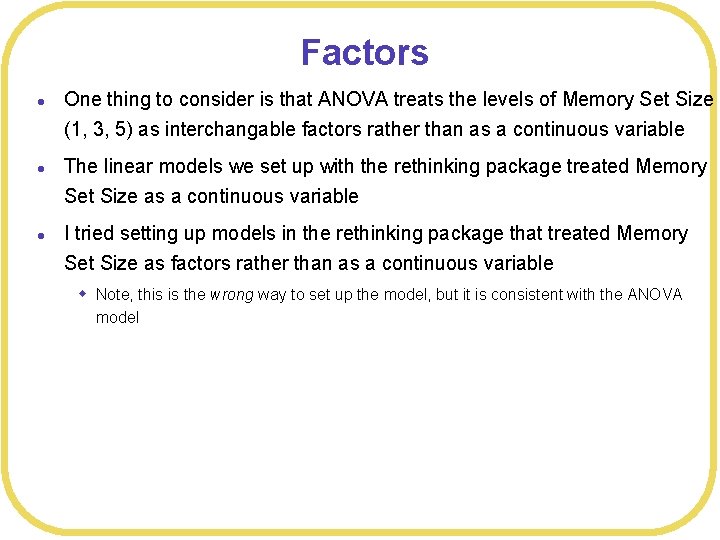

Factors l l l One thing to consider is that ANOVA treats the levels of Memory Set Size (1, 3, 5) as interchangable factors rather than as a continuous variable The linear models we set up with the rethinking package treated Memory Set Size as a continuous variable I tried setting up models in the rethinking package that treated Memory Set Size as factors rather than as a continuous variable w Note, this is the wrong way to set up the model, but it is consistent with the ANOVA model

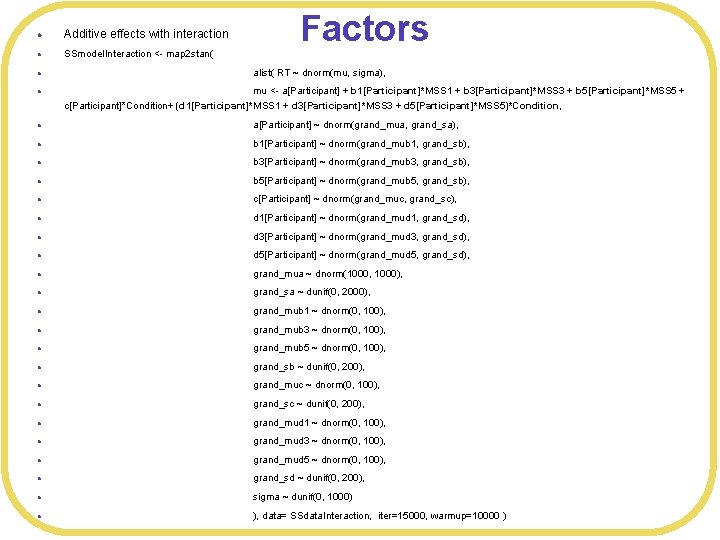

l Additive effects with interaction l SSmodel. Interaction <- map 2 stan( Factors l alist( RT ~ dnorm(mu, sigma), l mu <- a[Participant] + b 1[Participant]*MSS 1 + b 3[Participant]*MSS 3 + b 5[Participant]*MSS 5 + c[Participant]*Condition+ (d 1[Participant]*MSS 1 + d 3[Participant]*MSS 3 + d 5[Participant]*MSS 5)*Condition, l a[Participant] ~ dnorm(grand_mua, grand_sa), l b 1[Participant] ~ dnorm(grand_mub 1, grand_sb), l b 3[Participant] ~ dnorm(grand_mub 3, grand_sb), l b 5[Participant] ~ dnorm(grand_mub 5, grand_sb), l c[Participant] ~ dnorm(grand_muc, grand_sc), l d 1[Participant] ~ dnorm(grand_mud 1, grand_sd), l d 3[Participant] ~ dnorm(grand_mud 3, grand_sd), l d 5[Participant] ~ dnorm(grand_mud 5, grand_sd), l grand_mua ~ dnorm(1000, 1000), l grand_sa ~ dunif(0, 2000), l grand_mub 1 ~ dnorm(0, 100), l grand_mub 3 ~ dnorm(0, 100), l grand_mub 5 ~ dnorm(0, 100), l grand_sb ~ dunif(0, 200), l grand_muc ~ dnorm(0, 100), l grand_sc ~ dunif(0, 200), l grand_mud 1 ~ dnorm(0, 100), l grand_mud 3 ~ dnorm(0, 100), l grand_mud 5 ~ dnorm(0, 100), l grand_sd ~ dunif(0, 200), l sigma ~ dunif(0, 1000) l ), data= SSdata. Interaction, iter=15000, warmup=10000 )

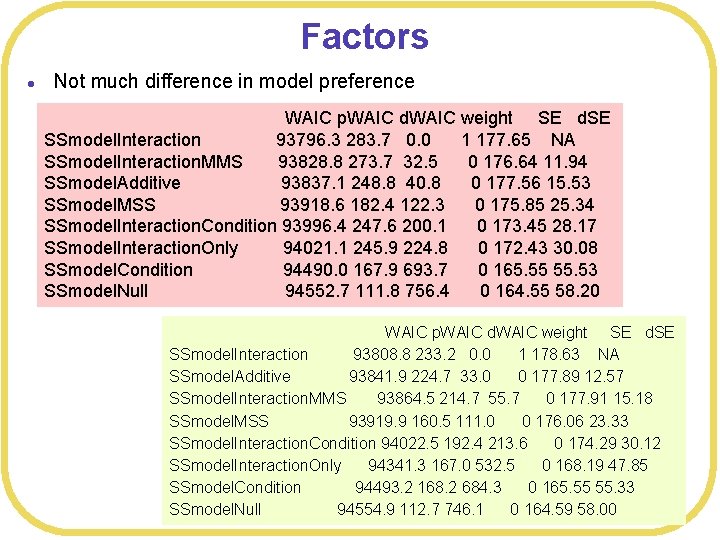

Factors l Not much difference in model preference WAIC p. WAIC d. WAIC weight SE d. SE SSmodel. Interaction 93796. 3 283. 7 0. 0 1 177. 65 NA SSmodel. Interaction. MMS 93828. 8 273. 7 32. 5 0 176. 64 11. 94 SSmodel. Additive 93837. 1 248. 8 40. 8 0 177. 56 15. 53 SSmodel. MSS 93918. 6 182. 4 122. 3 0 175. 85 25. 34 SSmodel. Interaction. Condition 93996. 4 247. 6 200. 1 0 173. 45 28. 17 SSmodel. Interaction. Only 94021. 1 245. 9 224. 8 0 172. 43 30. 08 SSmodel. Condition 94490. 0 167. 9 693. 7 0 165. 55 55. 53 SSmodel. Null 94552. 7 111. 8 756. 4 0 164. 55 58. 20 WAIC p. WAIC d. WAIC weight SE d. SE SSmodel. Interaction 93808. 8 233. 2 0. 0 1 178. 63 NA SSmodel. Additive 93841. 9 224. 7 33. 0 0 177. 89 12. 57 SSmodel. Interaction. MMS 93864. 5 214. 7 55. 7 0 177. 91 15. 18 SSmodel. MSS 93919. 9 160. 5 111. 0 0 176. 06 23. 33 SSmodel. Interaction. Condition 94022. 5 192. 4 213. 6 0 174. 29 30. 12 SSmodel. Interaction. Only 94341. 3 167. 0 532. 5 0 168. 19 47. 85 SSmodel. Condition 94493. 2 168. 2 684. 3 0 165. 55 55. 33 SSmodel. Null 94554. 9 112. 7 746. 1 0 164. 59 58. 00

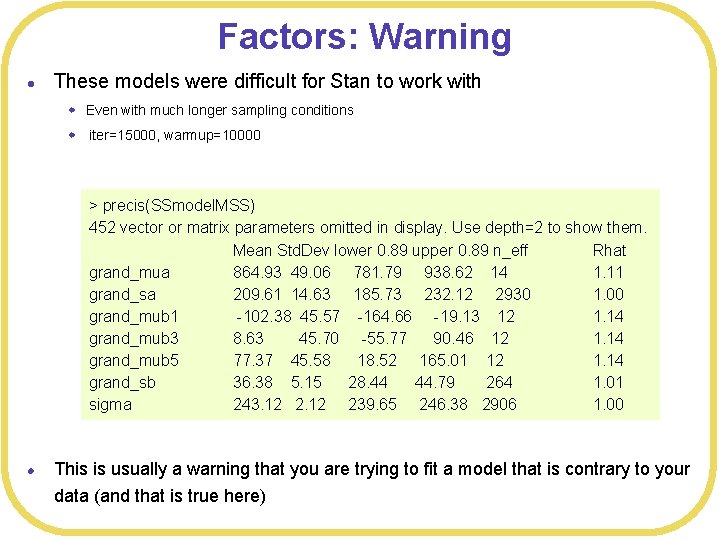

Factors: Warning l These models were difficult for Stan to work with w Even with much longer sampling conditions w iter=15000, warmup=10000 > precis(SSmodel. MSS) 452 vector or matrix parameters omitted in display. Use depth=2 to show them. Mean Std. Dev lower 0. 89 upper 0. 89 n_eff Rhat grand_mua 864. 93 49. 06 781. 79 938. 62 14 1. 11 grand_sa 209. 61 14. 63 185. 73 232. 12 2930 1. 00 grand_mub 1 -102. 38 45. 57 -164. 66 -19. 13 12 1. 14 grand_mub 3 8. 63 45. 70 -55. 77 90. 46 12 1. 14 grand_mub 5 77. 37 45. 58 18. 52 165. 01 12 1. 14 grand_sb 36. 38 5. 15 28. 44 44. 79 264 1. 01 sigma 243. 12 239. 65 246. 38 2906 1. 00 l This is usually a warning that you are trying to fit a model that is contrary to your data (and that is true here)

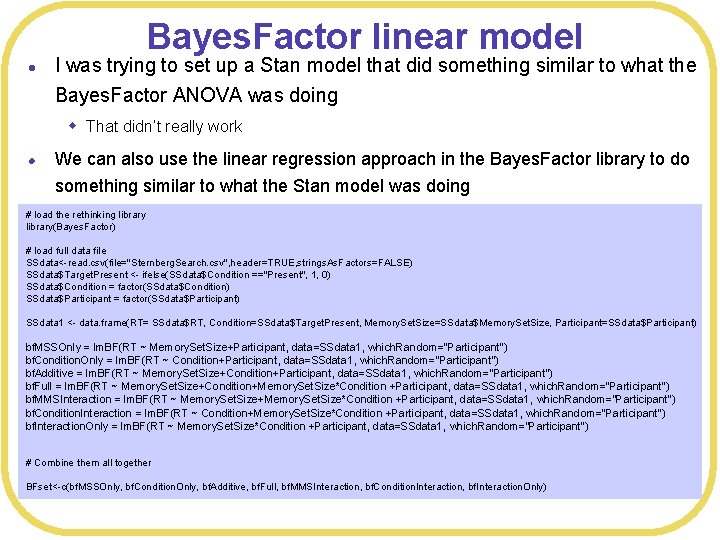

Bayes. Factor linear model l I was trying to set up a Stan model that did something similar to what the Bayes. Factor ANOVA was doing w That didn’t really work l We can also use the linear regression approach in the Bayes. Factor library to do something similar to what the Stan model was doing # load the rethinking library(Bayes. Factor) # load full data file SSdata<-read. csv(file="Sternberg. Search. csv", header=TRUE, strings. As. Factors=FALSE) SSdata$Target. Present <- ifelse(SSdata$Condition =="Present", 1, 0) SSdata$Condition = factor(SSdata$Condition) SSdata$Participant = factor(SSdata$Participant) SSdata 1 <- data. frame(RT= SSdata$RT, Condition=SSdata$Target. Present, Memory. Set. Size=SSdata$Memory. Set. Size, Participant=SSdata$Participant) bf. MSSOnly = lm. BF(RT ~ Memory. Set. Size+Participant, data=SSdata 1, which. Random="Participant”) bf. Condition. Only = lm. BF(RT ~ Condition+Participant, data=SSdata 1, which. Random="Participant”) bf. Additive = lm. BF(RT ~ Memory. Set. Size+Condition+Participant, data=SSdata 1, which. Random="Participant”) bf. Full = lm. BF(RT ~ Memory. Set. Size+Condition+Memory. Set. Size*Condition +Participant, data=SSdata 1, which. Random="Participant”) bf. MMSInteraction = lm. BF(RT ~ Memory. Set. Size+Memory. Set. Size*Condition +Participant, data=SSdata 1, which. Random="Participant”) bf. Condition. Interaction = lm. BF(RT ~ Condition+Memory. Set. Size*Condition +Participant, data=SSdata 1, which. Random="Participant”) bf. Interaction. Only = lm. BF(RT ~ Memory. Set. Size*Condition +Participant, data=SSdata 1, which. Random="Participant") # Combine them all together BFset<-c(bf. MSSOnly, bf. Condition. Only, bf. Additive, bf. Full, bf. MMSInteraction, bf. Condition. Interaction, bf. Interaction. Only)

![Bayes. Factor l > bf 2 Bayes factor analysis -------[1] Memory. Set. Size + Bayes. Factor l > bf 2 Bayes factor analysis -------[1] Memory. Set. Size +](http://slidetodoc.com/presentation_image_h2/aa0436239cb0c423daa322795278b714/image-13.jpg)

Bayes. Factor l > bf 2 Bayes factor analysis -------[1] Memory. Set. Size + Participant : 5. 519399 e+126 ± 0. 59% [2] Condition + Participant : 0. 02776611 ± 1. 88% [3] Memory. Set. Size: Condition + Participant : 70932. 28 ± 1. 18% [4] Memory. Set. Size + Condition + Participant : 1. 518957 e+125 ± 2. 36% [5] Memory. Set. Size + Memory. Set. Size: Condition + Participant : 1. 910749 e+132 ± 1. 23% [6] Condition + Memory. Set. Size: Condition + Participant : 2186. 301 ± 14. 32% [7] Memory. Set. Size + Condition + Memory. Set. Size: Condition + Participant : 5. 138857 e+130 ± 1. 94% Comparison favors Interaction. Only model (compared to the null) Against denominator: RT ~ Participant --Bayes factor type: BFlinear. Model, JZS > BFset Bayes factor analysis -------[1] Memory. Set. Size + Participant : 6. 318224 e+774 ± 3. 18% [2] Condition + Participant : 3. 284865 e+647 ± 1. 05% [3] Memory. Set. Size + Condition + Participant : 2. 186589 e+773 ± 1. 83% [4] Memory. Set. Size + Condition + Memory. Set. Size * Condition + Participant : 1. 346092 e+779 ± 0. 48% [5] Memory. Set. Size + Memory. Set. Size * Condition + Participant : 1. 357101 e+779 ± 0. 47% [6] Condition + Memory. Set. Size * Condition + Participant : 1. 364203 e+779 ± 0. 62% [7] Memory. Set. Size * Condition + Participant : 1. 379715 e+779 ± 1. 83% Against denominator: Intercept only --Bayes factor type: BFlinear. Model, JZS

![Bayes. Factor l Models with the interaction term are indistinguishable > BFset/BFset[7] Bayes factor Bayes. Factor l Models with the interaction term are indistinguishable > BFset/BFset[7] Bayes factor](http://slidetodoc.com/presentation_image_h2/aa0436239cb0c423daa322795278b714/image-14.jpg)

Bayes. Factor l Models with the interaction term are indistinguishable > BFset/BFset[7] Bayes factor analysis -------[1] Memory. Set. Size + Participant : 4. 579368 e-05 ± 3. 67% [2] Condition + Participant : 2. 380828 e-132 ± 2. 11% [3] Memory. Set. Size + Condition + Participant : 1. 584812 e-06 ± 2. 59% [4] Memory. Set. Size + Condition + Memory. Set. Size * Condition + Participant : 0. 9756306 ± 1. 89% [5] Memory. Set. Size + Memory. Set. Size * Condition + Participant : 0. 9836098 ± 1. 89% [6] Condition + Memory. Set. Size * Condition + Participant : 0. 9887569 ± 1. 93% [7] Memory. Set. Size * Condition + Participant : 1 ± 0% Against denominator: RT ~ Memory. Set. Size * Condition + Participant --Bayes factor type: BFlinear. Model, JZS

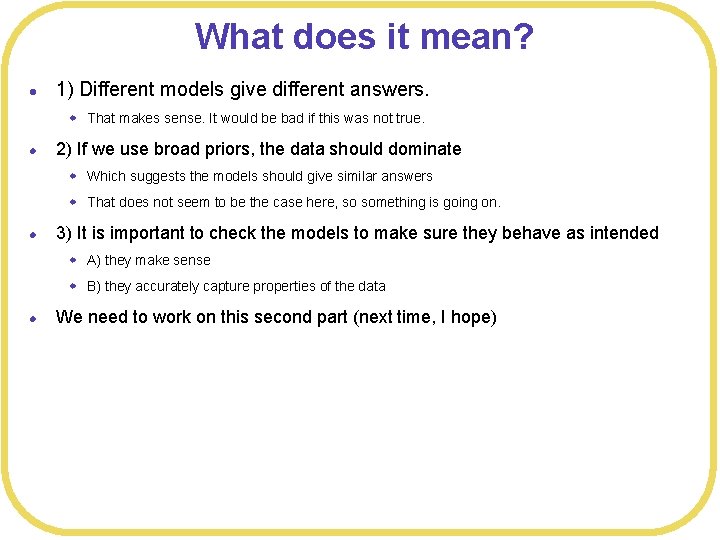

What does it mean? l 1) Different models give different answers. w That makes sense. It would be bad if this was not true. l 2) If we use broad priors, the data should dominate w Which suggests the models should give similar answers w That does not seem to be the case here, so something is going on. l 3) It is important to check the models to make sure they behave as intended w A) they make sense w B) they accurately capture properties of the data l We need to work on this second part (next time, I hope)

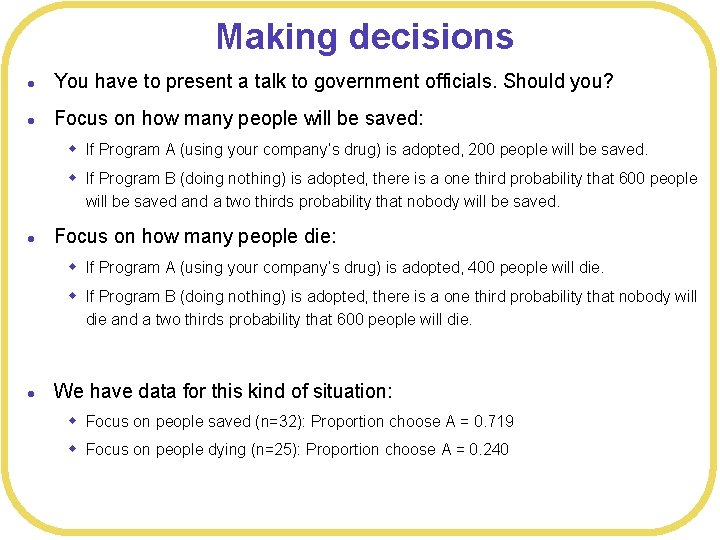

Making decisions l In hypothesis testing, we tend to make a decision based on whether a result is “statistically significant” w E. g. , an effect is “real” or “reliable” l There is a tendency to transfer this approach to a Bayesian analysis w Comparing BF or WAIC l These kinds of approaches to decision making operate in a “vacuum” w They are bound to fail for some situations l A real strength of the Bayesian approach is that the posterior distributions promote decision making that can take into account the context of the situation (not a vacuum)

Making decisions l l Suppose you work for a company that has a drug able to mitigate the effects of a disease but that also has severe side effects You know that how you frame choices can impact decision making, so you want to advise the marketing department on how to present the impact of the drug relative to doing nothing. The situation is: The country is preparing for the outbreak of an unusual disease, which is expected to kill 600 people. Two alternative programs to combat the disease have been proposed.

Making decisions l You have to present a talk to government officials. Should you? l Focus on how many people will be saved: w If Program A (using your company’s drug) is adopted, 200 people will be saved. w If Program B (doing nothing) is adopted, there is a one third probability that 600 people will be saved and a two thirds probability that nobody will be saved. l Focus on how many people die: w If Program A (using your company’s drug) is adopted, 400 people will die. w If Program B (doing nothing) is adopted, there is a one third probability that nobody will die and a two thirds probability that 600 people will die. l We have data for this kind of situation: w Focus on people saved (n=32): Proportion choose A = 0. 719 w Focus on people dying (n=25): Proportion choose A = 0. 240

Bayesian model l We analyzed this data in multiple ways. l Stan l l l > a. Values<-c() > for(p in unique(clean. Alt. Data$Statement. Type)){ Score=DMdata$Problem 1 + code<-sprintf("a[%d]", p) DMalt. Model 1 <- map 2 stan( + a. Values <- c(a. Values, coef(DMalt. Model 1)[code]) +} alist( Score ~ dbinom(1, p), > logit(p) <- a[Statement. Type], Ø cat("Alt probability of respond A: ", logistic(a. Values), "n") a[Statement. Type] ~ dnorm(0, 10) ), data=ofclean. Alt. Data, iter=1 e 4, warmup=2000 ) Alt probability respond A: 0. 736105 0. 2297372 > precis(DMalt. Model 1, depth=2) Mean Std. Dev lower 0. 89 upper 0. 89 n_eff Rhat a[1] 1. 03 0. 40 0. 41 1. 65 4086 1 a[2] -1. 21 0. 48 -1. 94 -0. 42 4061 1

![Posteriors l > post<-extract. samples(DMalt. Model 1) l > mean(logistic(post$a[, 1])) l [1] 0. Posteriors l > post<-extract. samples(DMalt. Model 1) l > mean(logistic(post$a[, 1])) l [1] 0.](http://slidetodoc.com/presentation_image_h2/aa0436239cb0c423daa322795278b714/image-20.jpg)

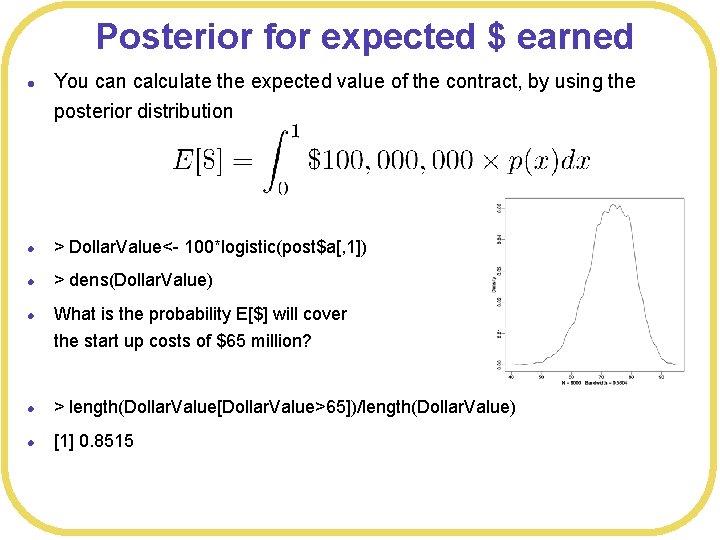

Posteriors l > post<-extract. samples(DMalt. Model 1) l > mean(logistic(post$a[, 1])) l [1] 0. 7292397 l > sd(logistic(post$a[, 1])) l [1] 0. 07514997 l > mean(logistic(post$a[, 2])) l [1] 0. 2402673 l > sd(logistic(post$a[, 2])) l [1] 0. 08346239 l > dens(logistic(post$a[, 1])) l > dens(logistic(post$a[, 2]))

Expected value l If you frame your presentation about your company’s drug around people being saved, what is the probability your drug will be used? l > mean(logistic(post$a[, 1])) l [1] 0. 7292397 l l If your company’s drug is selected by the government, the contract is worth $100 million However, the company has to start production of the drug before it knows it has been selected w Production will cost $65 million, which is nearly all start up costs (manufacturing costs very little) w Is this a good bet?

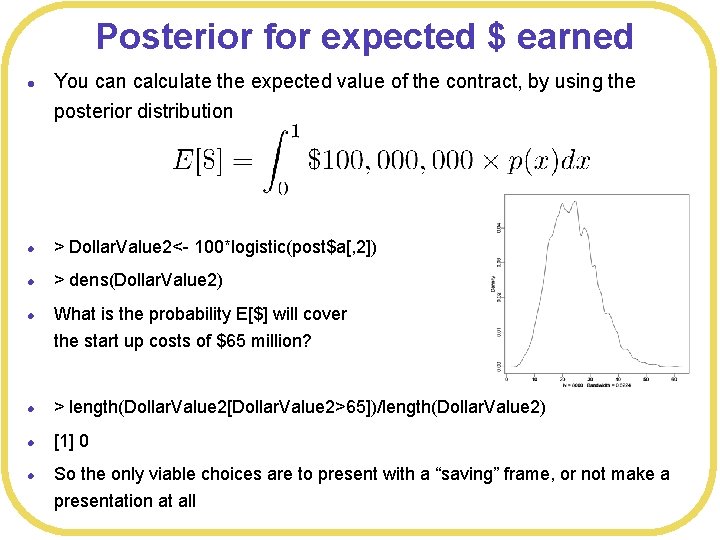

Posterior for expected $ earned l You can calculate the expected value of the contract, by using the posterior distribution l > Dollar. Value<- 100*logistic(post$a[, 1]) l > dens(Dollar. Value) l What is the probability E[$] will cover the start up costs of $65 million? l > length(Dollar. Value[Dollar. Value>65])/length(Dollar. Value) l [1] 0. 8515

Expected value l If you frame your presentation about your company’s drug around people dying, what is the probability your drug will be used? l > mean(logistic(post$a[, 2])) l [1] 0. 2402673 l l If your company’s drug is selected by the government, the contract is worth $100 million However, the company has to start production of the drug before it knows it has been selected w Production will cost $65 million, which is nearly all start up costs (manufacturing costs very little) w Is this a good bet?

Posterior for expected $ earned l You can calculate the expected value of the contract, by using the posterior distribution l > Dollar. Value 2<- 100*logistic(post$a[, 2]) l > dens(Dollar. Value 2) l What is the probability E[$] will cover the start up costs of $65 million? l > length(Dollar. Value 2[Dollar. Value 2>65])/length(Dollar. Value 2) l [1] 0 l So the only viable choices are to present with a “saving” frame, or not make a presentation at all

Other impacts l Your CEO explains that the company can negotiate cheaper start up costs if the probability of getting the contract is higher w Suppliers do not like uncertainty! w Reduces risk of company failure and non-payment l She estimates that every 1% higher probability corresponds to saving $0. 1 million in start up costs l How does this affect the situation? l The expected costs are now (in millions of $’s) l Which has to be compared to the expected gains

Posterior distribution l Posterior distribution of costs is: l > Dollar. Cost<- 65 -0. 1*logistic(post$a[, 1])*100 l > dens(Dollar. Cost)

Posterior distribution of net l Posterior distribution of net earning is: l > Net. Value <-Dollar. Value -Dollar. Cost l > dens(Net. Value) l Probability of making a profit is l > length(Net. Value[Net. Value>0])/length(Net. Value) l [1] 0. 957125 l Probability of making more than $20 million is l > length(Net. Value[Net. Value>20])/length(Net. Value) l [1] 0. 3025 l > mean(Net. Value) l [1] 15. 21637

Decision making under uncertainty l How to make a decision depends on the goal. l For example, I have a small jar that we can imagine is filled with quarters. l Guess how many quarters we think is in the jar. l Give me a “best guess” and a 50% probability interval l Let’s iterate until we get a “class estimate”

Decision making under uncertainty l Given the class estimated probability interval, let’s fit a normal distribution l Mean = 316 l Standard deviation = 137

Decision making under uncertainty l Suppose your goal is to be as accurate as possible in guessing the number of quarters l Obviously your best guess is the mean of the distribution l What is the probability you will be correct?

Decision making under uncertainty l Suppose the situation is that if you happen to guess exactly the number of quarters in the jar, then I will give you the jar l Should this change your guess? l How? l What guess will maximize your expected winnings? l Expected value of guessing x: l Maximized with guessing

Decision making under uncertainty l l A local radio station sometimes runs a ”high-low” promotion Listeners call in and guess an amount in a “pot”. If the guess is correct (to the penny), the listener wins the money. Everyone hears the previous guesses, so listeners gradually zero in on the correct amount Suppose previous guesses have been: w $145. 78, which was deemed “low” w $532. 89, which was deemed “high” w $512. 86, which was deemed “high” w $188. 88, which was deemed “low” l What should you guess?

- Slides: 32