Bayes Factors

Greg Francis

PSY 626: Bayesian Statistics for Psychological Science, Fall 2020, Purdue University

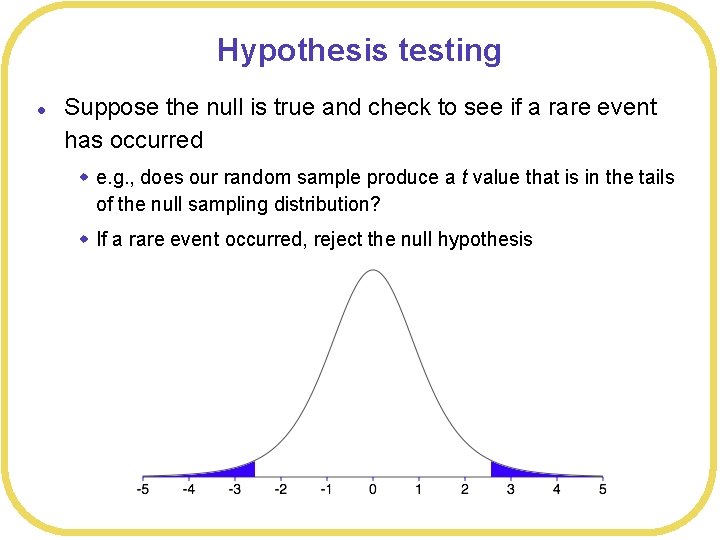

Hypothesis testing

- Suppose the null is true and check to see if a rare event has occurred
  - e.g., does our random sample produce a t value that is in the tails of the null sampling distribution?
  - If a rare event occurred, reject the null hypothesis

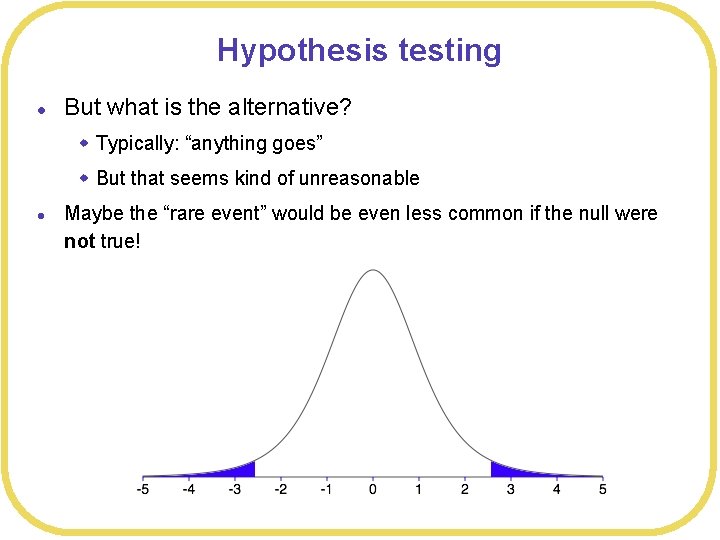

Hypothesis testing

- But what is the alternative?
  - Typically: “anything goes”
  - But that seems kind of unreasonable
- Maybe the “rare event” would be even less common if the null were not true!

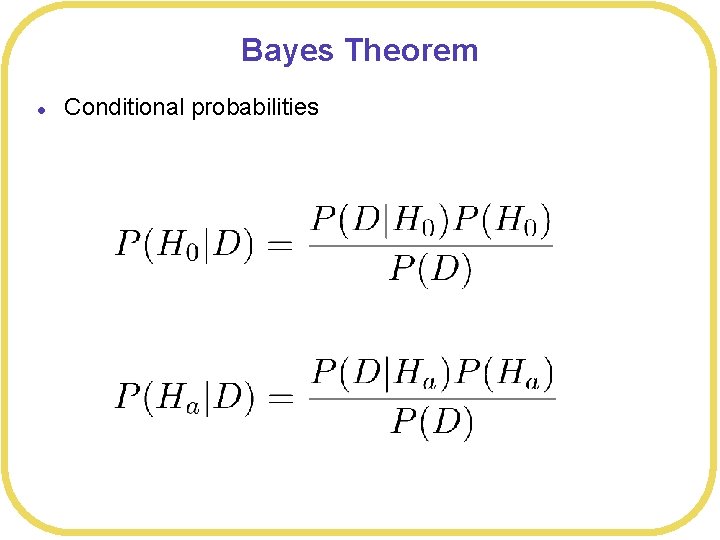

Bayes Theorem

- Conditional probabilities

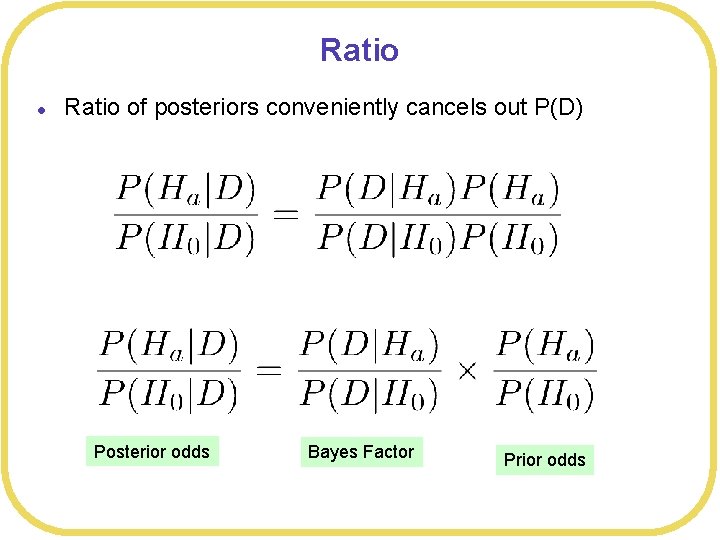

Ratio

- Ratio of posteriors conveniently cancels out P(D)
- Posterior odds = Bayes Factor × Prior odds

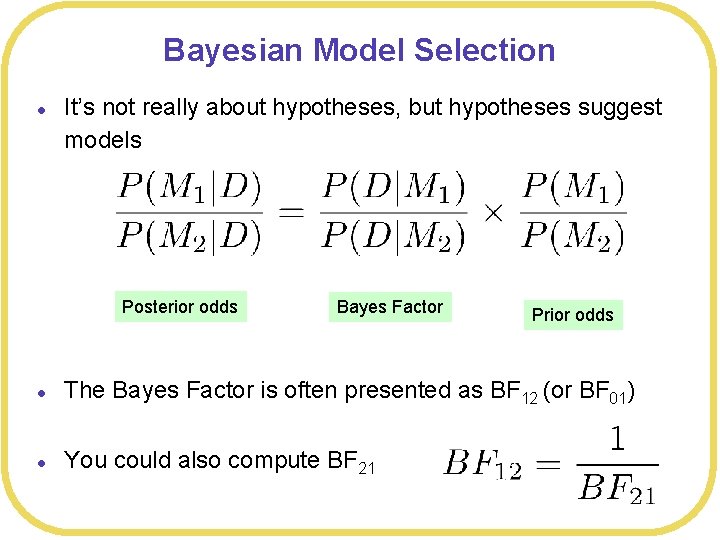
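The equations on these two slides survive only as images. A standard statement of Bayes' theorem and of the ratio, consistent with the slide labels (posterior odds, Bayes Factor, prior odds), is sketched below. The symbols $H_1$, $H_0$, and $D$ are assumed notation for the two hypotheses and the data.

$$
P(H_1 \mid D) = \frac{P(D \mid H_1)\,P(H_1)}{P(D)}, \qquad
P(H_0 \mid D) = \frac{P(D \mid H_0)\,P(H_0)}{P(D)}
$$

$$
\underbrace{\frac{P(H_1 \mid D)}{P(H_0 \mid D)}}_{\text{posterior odds}}
= \underbrace{\frac{P(D \mid H_1)}{P(D \mid H_0)}}_{\text{Bayes Factor } BF_{10}}
\times \underbrace{\frac{P(H_1)}{P(H_0)}}_{\text{prior odds}}
$$

Dividing one posterior by the other is what removes $P(D)$ from the expression.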

Bayesian Model Selection

- It’s not really about hypotheses, but hypotheses suggest models
- Posterior odds = Bayes Factor × Prior odds
- The Bayes Factor is often presented as BF12 (or BF01)
- You could also compute BF21

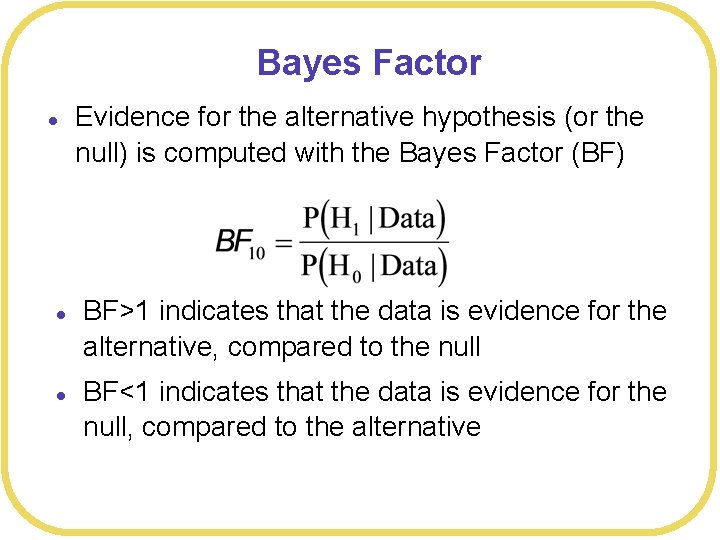

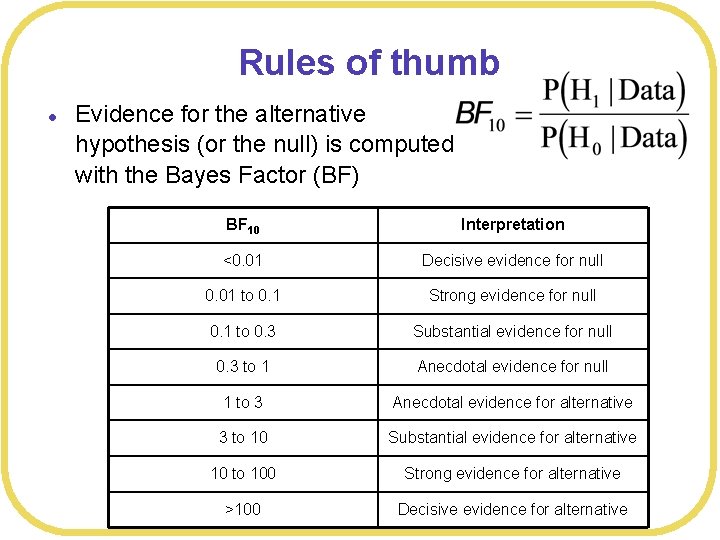

Bayes Factor

- Evidence for the alternative hypothesis (or the null) is computed with the Bayes Factor (BF)
- BF10 > 1 indicates that the data are evidence for the alternative, compared to the null
- BF10 < 1 indicates that the data are evidence for the null, compared to the alternative

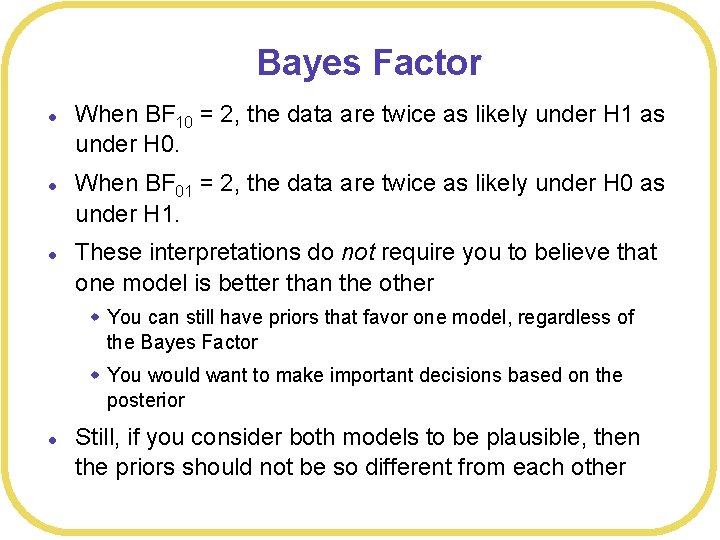

Bayes Factor

- When BF10 = 2, the data are twice as likely under H1 as under H0.
- When BF01 = 2, the data are twice as likely under H0 as under H1.
- These interpretations do not require you to believe that one model is better than the other
  - You can still have priors that favor one model, regardless of the Bayes Factor
  - You would want to make important decisions based on the posterior
- Still, if you consider both models to be plausible, then the priors should not be so different from each other
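A small sketch of how a Bayes Factor combines with prior odds to give posterior odds. The specific numbers (BF10 = 2, even prior odds, and a skeptical 1-to-10 prior) are illustrative assumptions, not values from the slides.

```r
# Posterior odds = Bayes Factor * prior odds (illustrative values only)
bf10 <- 2            # data are twice as likely under H1 as under H0
prior_odds <- 1      # assumption: H1 and H0 equally plausible beforehand

posterior_odds <- bf10 * prior_odds
# Convert odds in favor of H1 into a posterior probability for H1
posterior_prob_h1 <- posterior_odds / (1 + posterior_odds)
print(posterior_prob_h1)

# With a skeptical prior (odds 1 to 10 against H1), the same data
# still leave posterior odds below 1, i.e. favoring H0
skeptical_posterior_odds <- bf10 * (1 / 10)
print(skeptical_posterior_odds)
```

The Bayes Factor is the same in both cases; only the prior odds, and therefore the posterior, change.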

Rules of thumb

- Evidence for the alternative hypothesis (or the null) is computed with the Bayes Factor (BF)

| BF10 | Interpretation |
|---|---|
| < 0.01 | Decisive evidence for null |
| 0.01 to 0.1 | Strong evidence for null |
| 0.1 to 0.3 | Substantial evidence for null |
| 0.3 to 1 | Anecdotal evidence for null |
| 1 to 3 | Anecdotal evidence for alternative |
| 3 to 10 | Substantial evidence for alternative |
| 10 to 100 | Strong evidence for alternative |
| > 100 | Decisive evidence for alternative |

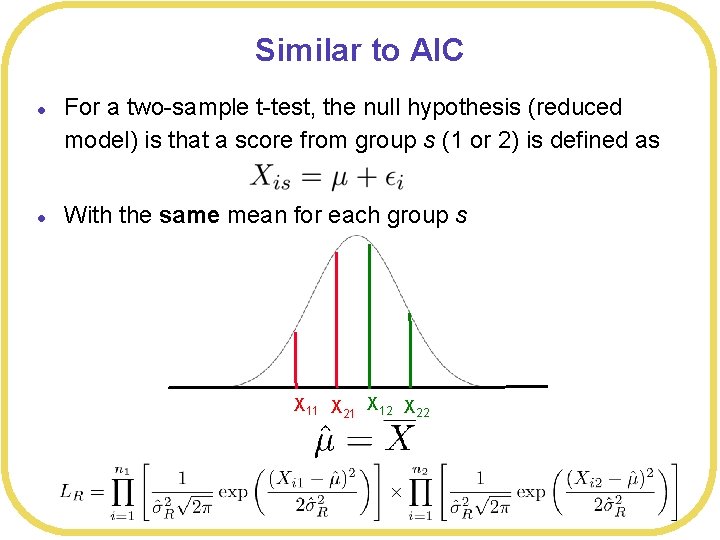
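The rule-of-thumb categories can be applied mechanically, as in the sketch below. The helper name `bf_label` is hypothetical, and assigning a boundary value (for example exactly 3) to the lower category is an arbitrary choice made here, since the slide does not say which side a boundary belongs to.

```r
# Label BF10 values with the rule-of-thumb categories from the slide.
# bf_label is a hypothetical helper; cut() uses intervals of the form (a, b].
bf_label <- function(bf10) {
  breaks <- c(0, 0.01, 0.1, 0.3, 1, 3, 10, 100, Inf)
  labels <- c("Decisive evidence for null",
              "Strong evidence for null",
              "Substantial evidence for null",
              "Anecdotal evidence for null",
              "Anecdotal evidence for alternative",
              "Substantial evidence for alternative",
              "Strong evidence for alternative",
              "Decisive evidence for alternative")
  as.character(cut(bf10, breaks = breaks, labels = labels))
}

example_labels <- bf_label(c(0.05, 1.993006, 25))
print(example_labels)
```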

Similar to AIC

- For a two-sample t-test, the null hypothesis (reduced model) is that a score from group s (1 or 2) is defined as in the equation in the figure below
- With the same mean for each group s

Figure labels: X11, X21, X12, X22

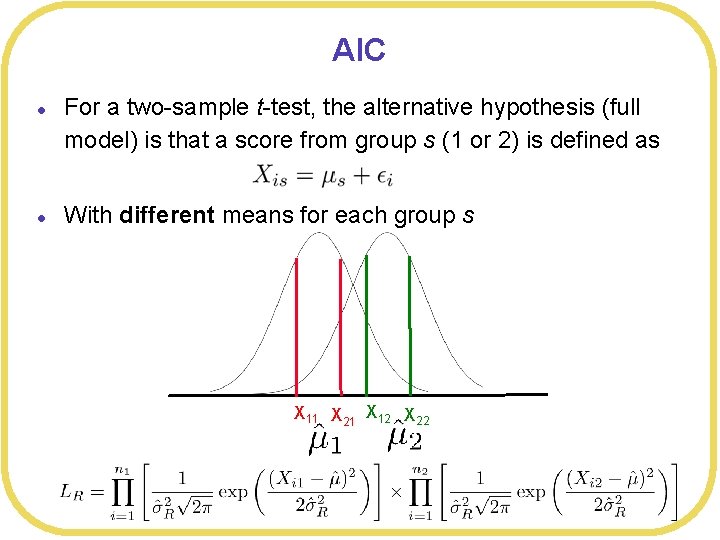

AIC

- For a two-sample t-test, the alternative hypothesis (full model) is that a score from group s (1 or 2) is defined as in the equation in the figure below
- With different means for each group s

Figure labels: X11, X21, X12, X22

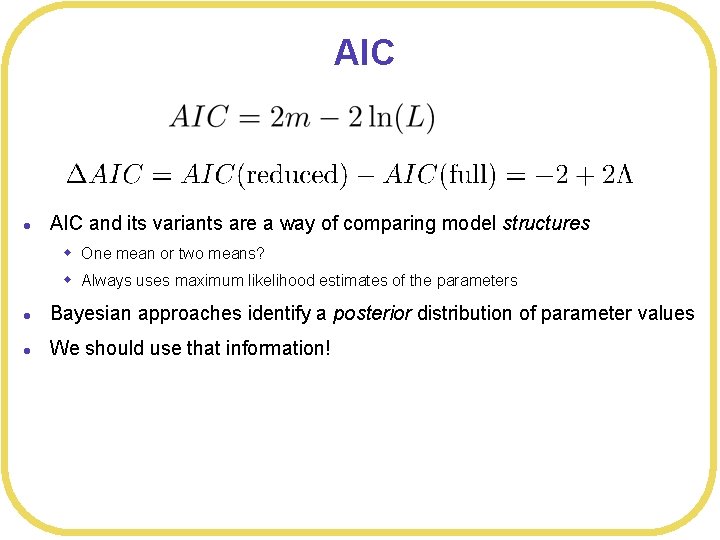

AIC

- AIC and its variants are a way of comparing model structures
  - One mean or two means?
  - Always uses maximum likelihood estimates of the parameters
- Bayesian approaches identify a posterior distribution of parameter values
- We should use that information!

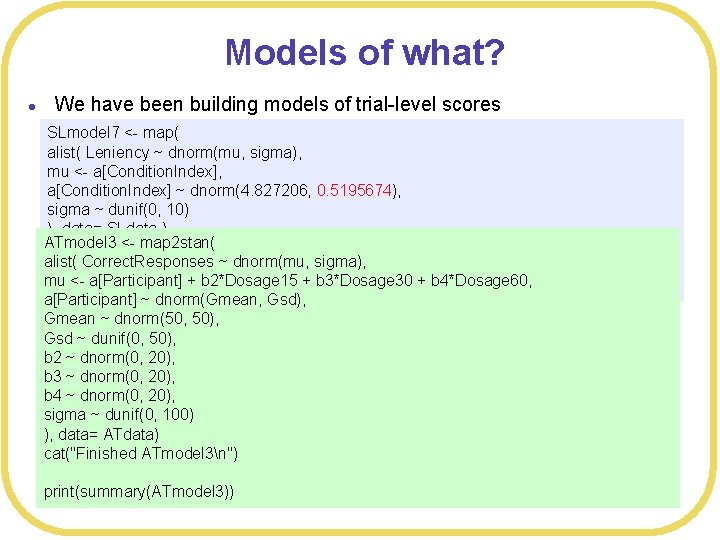
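A minimal sketch of the "one mean or two means?" comparison with AIC. The data are simulated and the seed, sample size, and group means are hypothetical; `lm` and `AIC` are base R functions.

```r
# Simulated two-group data (hypothetical values, not course data)
set.seed(626)
n <- 30
scores <- c(rnorm(n, mean = 0, sd = 1), rnorm(n, mean = 1.5, sd = 1))
group <- factor(rep(c("g1", "g2"), each = n))

reduced <- lm(scores ~ 1)      # reduced model: one mean for both groups
full <- lm(scores ~ group)     # full model: a separate mean for each group

# AIC uses the maximum likelihood fit of each model; smaller is better
aic_values <- AIC(reduced, full)
print(aic_values)
```

Both models are scored at their best-fitting parameter values only, which is the point of contrast with the Bayesian approach on the slide.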

Models of what?

- We have been building models of trial-level scores

```r
SLmodel7 <- map(
  alist(
    Leniency ~ dnorm(mu, sigma),
    mu <- a[ConditionIndex],
    a[ConditionIndex] ~ dnorm(4.827206, 0.5195674),
    sigma ~ dunif(0, 10)
  ), data = SLdata )
cat("Finished SLmodel7\n")
print(SLmodel7)

ATmodel3 <- map2stan(
  alist(
    CorrectResponses ~ dnorm(mu, sigma),
    mu <- a[Participant] + b2*Dosage15 + b3*Dosage30 + b4*Dosage60,
    a[Participant] ~ dnorm(Gmean, Gsd),
    Gmean ~ dnorm(50, 50),
    Gsd ~ dunif(0, 50),
    b2 ~ dnorm(0, 20),
    b3 ~ dnorm(0, 20),
    b4 ~ dnorm(0, 20),
    sigma ~ dunif(0, 100)
  ), data = ATdata )
cat("Finished ATmodel3\n")
print(summary(ATmodel3))
```

Models of what?

- We have been building models of trial-level scores
- That is not the only option
- In traditional hypothesis testing, we care more about effect sizes than about individual scores
  - Signal-to-noise ratio
  - Of course, the effect size is derived from the individual scores
- In many cases, it is enough to just model the (standardized) effect size itself rather than the individual scores
  - Cohen’s d
  - t-statistic
  - p-value
  - Correlation r
  - “Sufficient” statistic

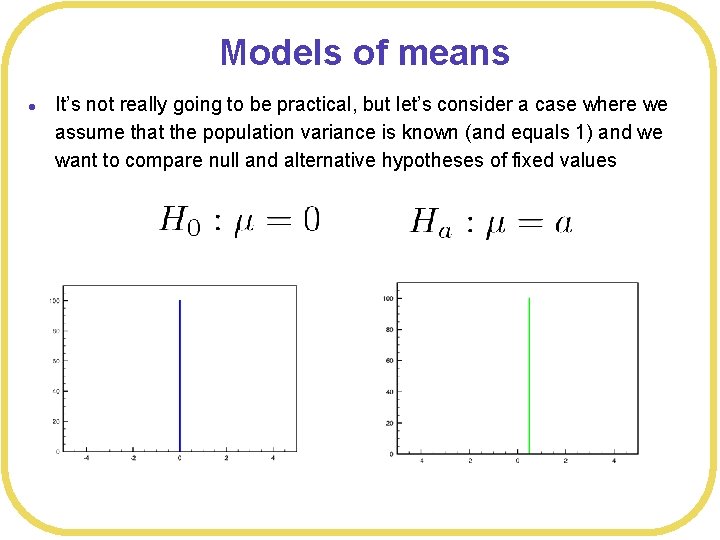

Models of means

- It’s not really going to be practical, but let’s consider a case where we assume that the population variance is known (and equals 1) and we want to compare null and alternative hypotheses of fixed values

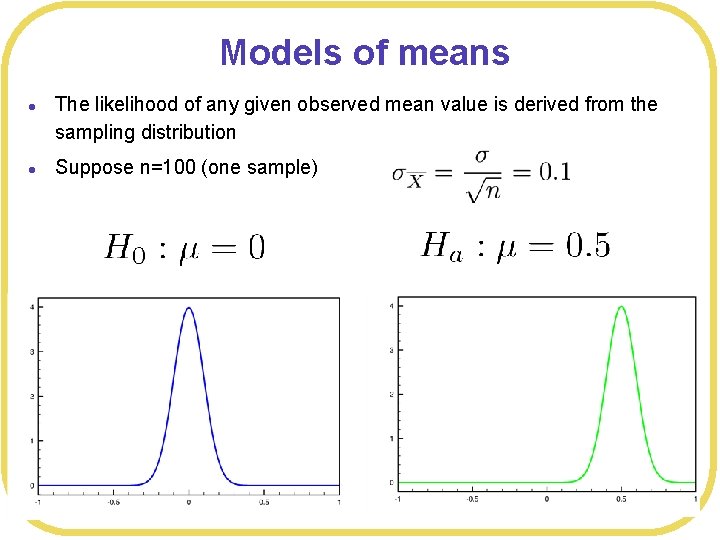

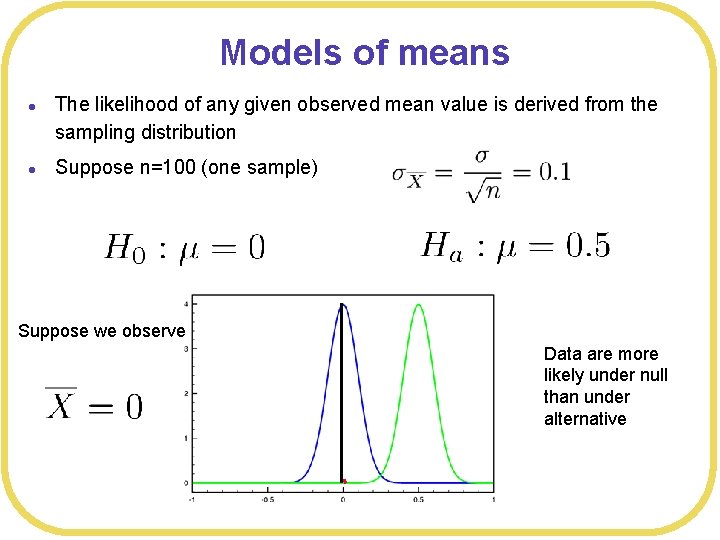

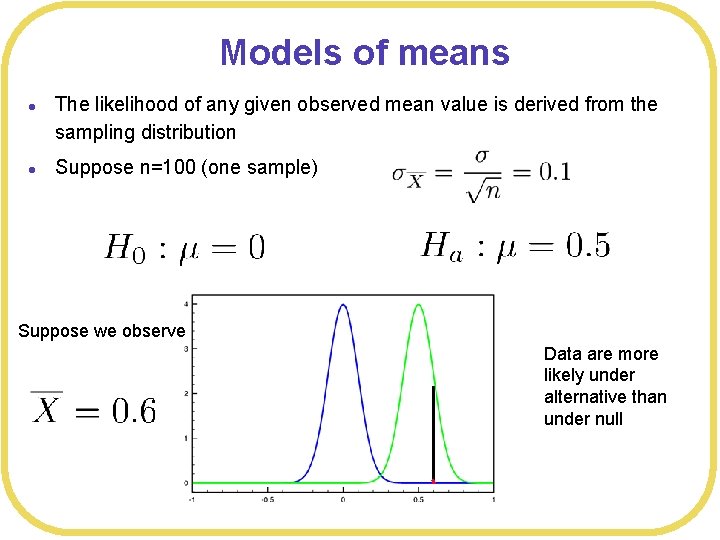

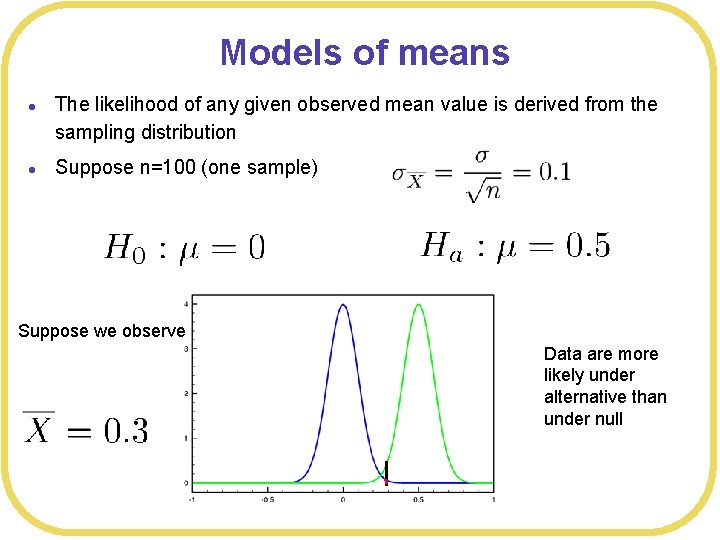

Models of means

- The likelihood of any given observed mean value is derived from the sampling distribution
- Suppose n=100 (one sample)
- Suppose we observe a particular sample mean (the observed values appear only in the slide figures)
  - For one observed value, the data are more likely under the null than under the alternative
  - For another observed value, the data are more likely under the alternative than under the null

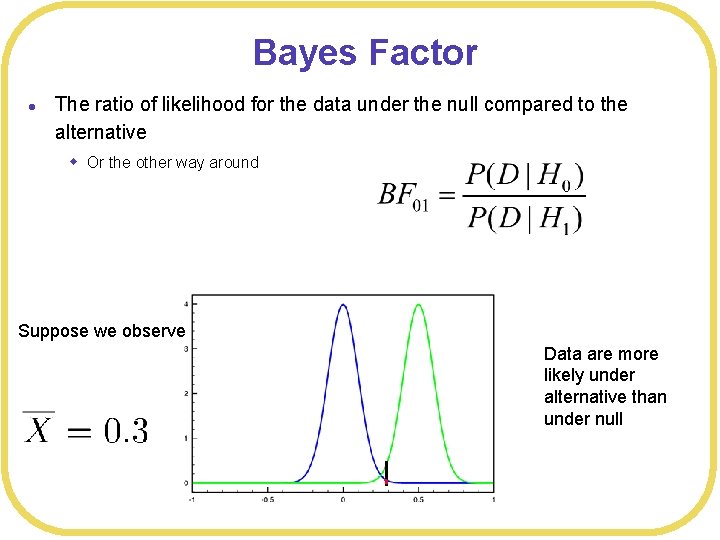

Bayes Factor

- The ratio of likelihood for the data under the null compared to the alternative
  - Or the other way around
- Suppose we observe a particular sample mean: the data are more likely under the alternative than under the null

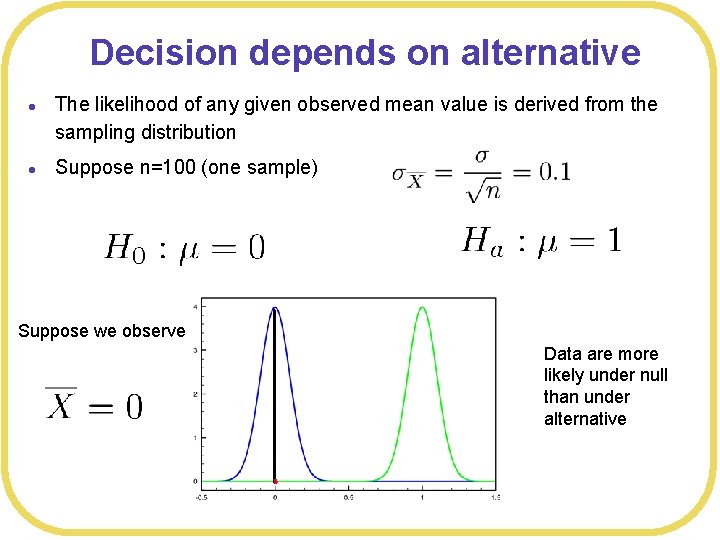
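A sketch of the point-hypothesis Bayes Factor described here, using the known-variance setup from the earlier slide (population standard deviation 1, n=100). The alternative mean `mu_alt` and the observed mean `xbar` are hypothetical values chosen for illustration; the slide's own numbers are in the figures.

```r
# Sampling distribution of the mean: normal with sd = sigma / sqrt(n)
n <- 100
sigma <- 1                 # population sd assumed known, as on the slide
se <- sigma / sqrt(n)      # standard error = 0.1

mu_null <- 0               # null value (assumed to be zero here)
mu_alt <- 0.25             # hypothetical fixed alternative value
xbar <- 0.2                # hypothetical observed sample mean

# Likelihood of the observed mean under each fixed hypothesis
lik_null <- dnorm(xbar, mean = mu_null, sd = se)
lik_alt <- dnorm(xbar, mean = mu_alt, sd = se)

bf10 <- lik_alt / lik_null   # evidence for the alternative over the null
bf01 <- 1 / bf10             # or the other way around
print(c(BF10 = bf10, BF01 = bf01))
```

With these illustrative numbers the observed mean sits closer to the alternative value than to the null value, so BF10 exceeds 1.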

Decision depends on alternative

- The likelihood of any given observed mean value is derived from the sampling distribution
- Suppose n=100 (one sample)
- Suppose we observe a particular sample mean: the data are more likely under the null than under the alternative

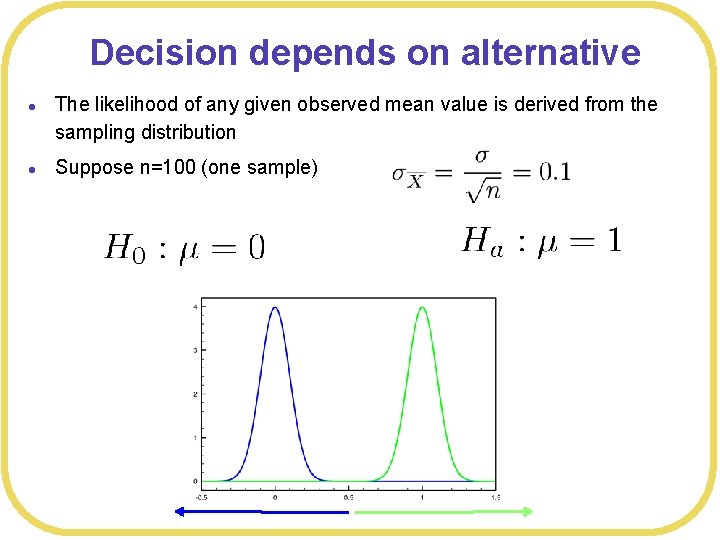

Decision depends on alternative

- The likelihood of any given observed mean value is derived from the sampling distribution
- Suppose n=100 (one sample)

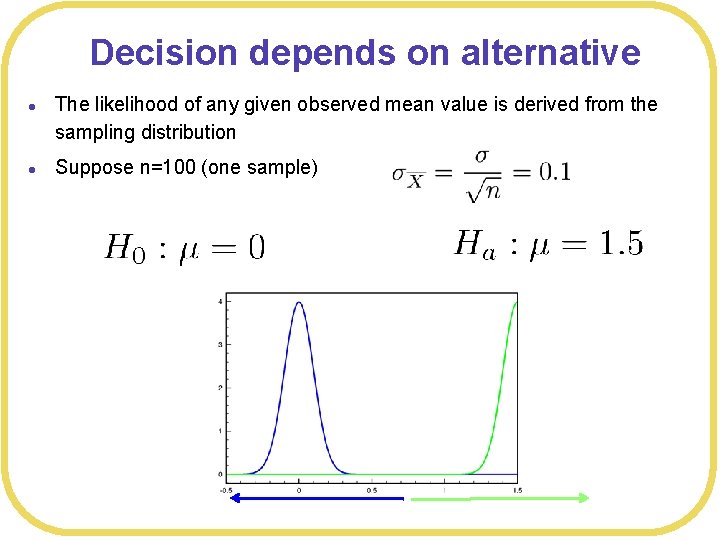


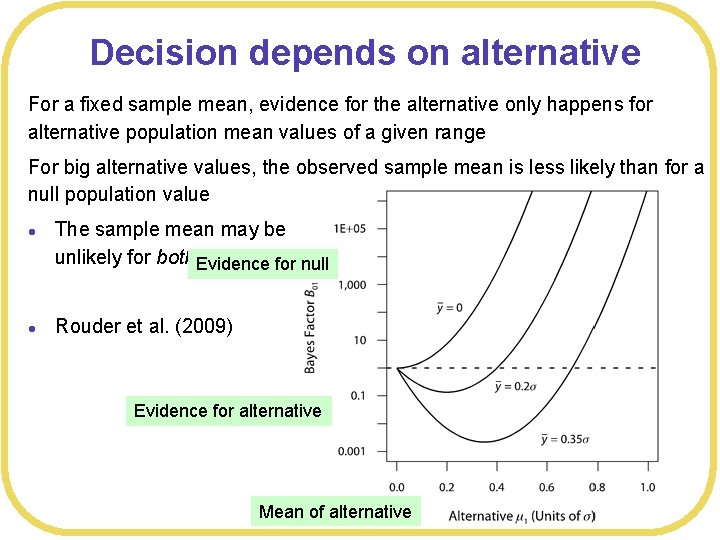

Decision depends on alternative

- For a fixed sample mean, evidence for the alternative only happens for alternative population mean values of a given range
- For big alternative values, the observed sample mean is less likely than for a null population value
  - The sample mean may be unlikely for both models

Figure (Rouder et al., 2009): horizontal axis is the mean of the alternative, with regions labeled "Evidence for null" and "Evidence for alternative".

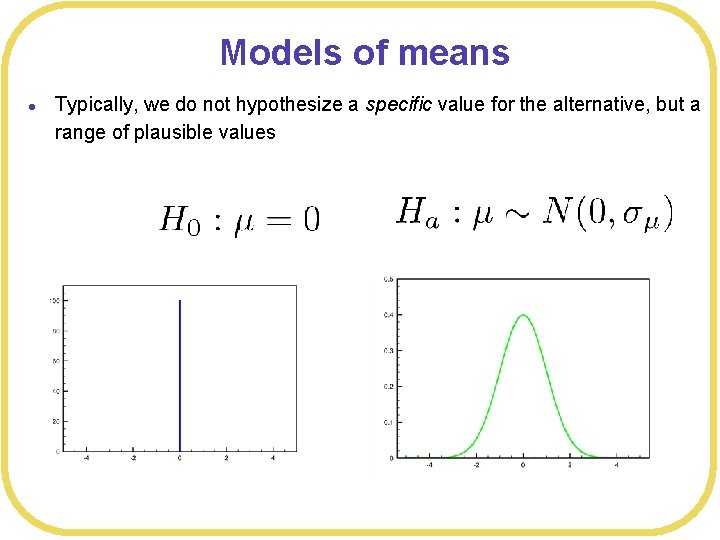
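A sketch of how the Bayes Factor changes with the choice of a fixed alternative mean, for one fixed observed sample mean. The observed mean `xbar` and the grid of alternative values are hypothetical; the setup (known standard deviation 1, n=100, null mean 0) follows the earlier slides.

```r
se <- 1 / sqrt(100)   # standard error with sigma = 1 and n = 100
xbar <- 0.2           # hypothetical observed sample mean

# BF10 for a point alternative at mu_alt versus a point null at 0
bf10_point <- function(mu_alt) {
  dnorm(xbar, mean = mu_alt, sd = se) / dnorm(xbar, mean = 0, sd = se)
}

mu_alt_grid <- seq(0.05, 0.6, by = 0.05)
bf_table <- data.frame(mu_alt = mu_alt_grid,
                       bf10 = round(bf10_point(mu_alt_grid), 3))
print(bf_table)
```

In this setup BF10 is above 1 only when the alternative mean lies between 0 and twice the observed mean. Alternatives beyond that range predict the data worse than the null does.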

Models of means

- Typically, we do not hypothesize a specific value for the alternative, but a range of plausible values

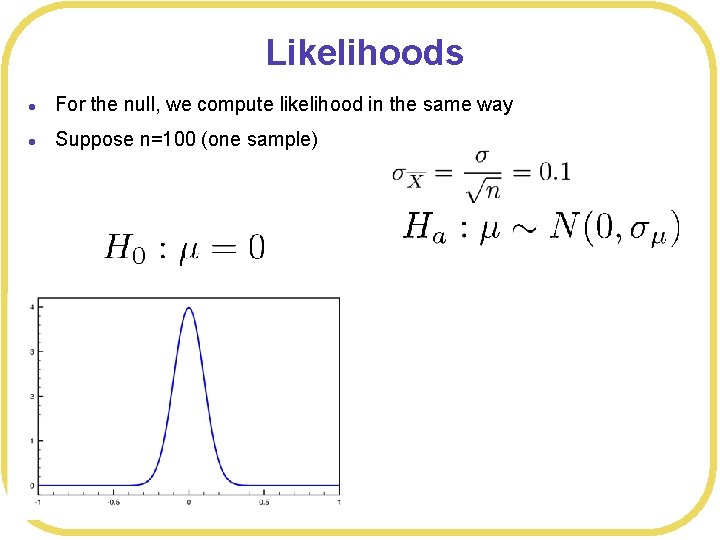

Likelihoods

- For the null, we compute likelihood in the same way
- Suppose n=100 (one sample)

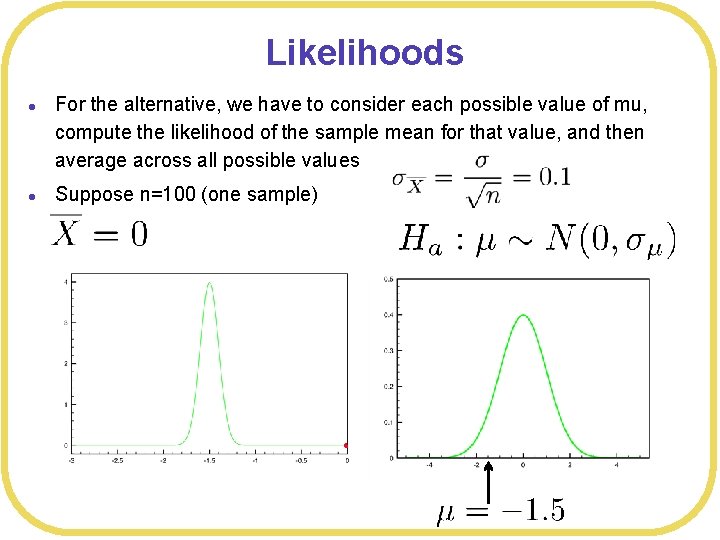

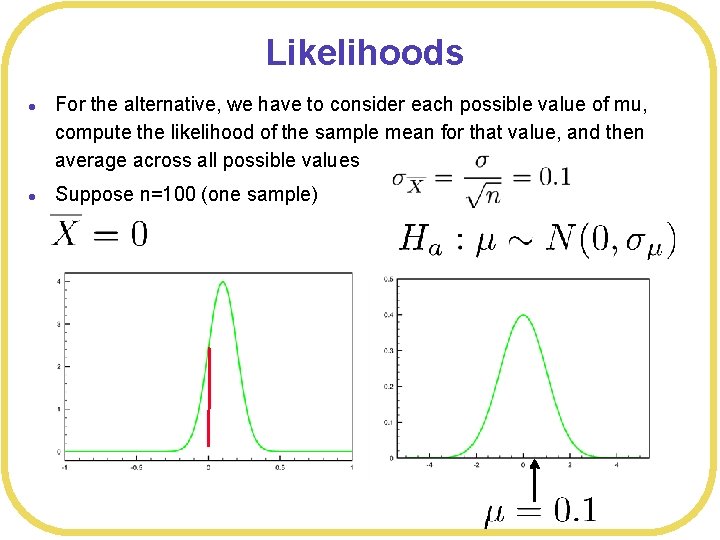

Likelihoods

- For the alternative, we have to consider each possible value of mu, compute the likelihood of the sample mean for that value, and then average across all possible values
- Suppose n=100 (one sample)

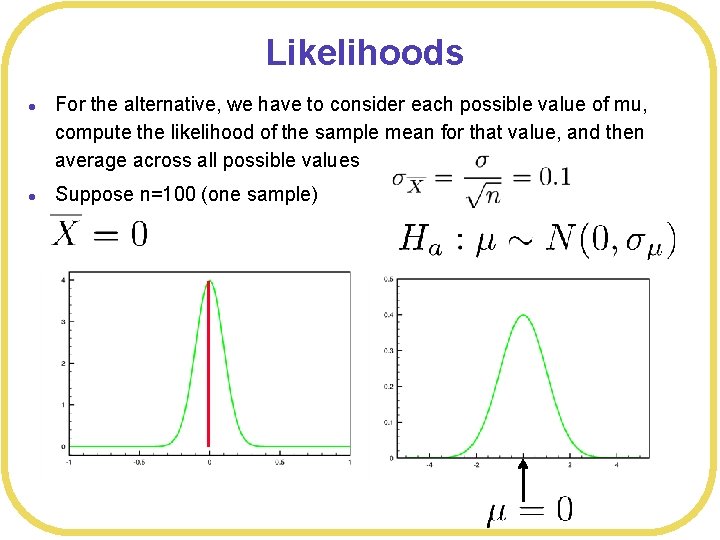


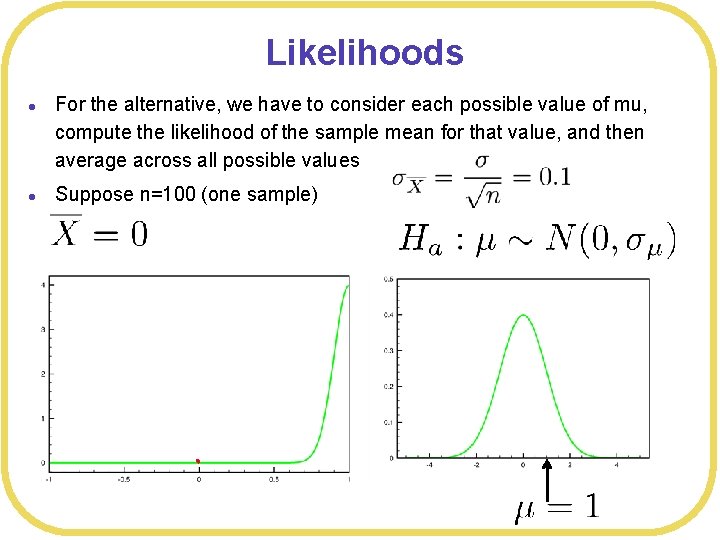


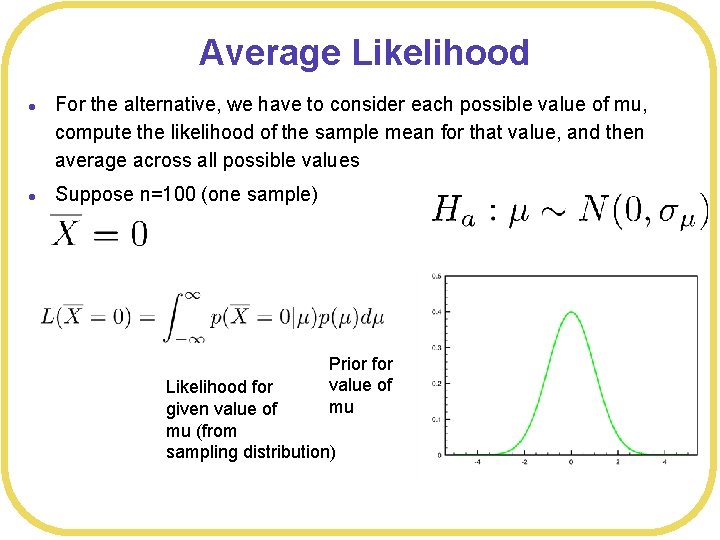

Average Likelihood

- For the alternative, we have to consider each possible value of mu, compute the likelihood of the sample mean for that value, and then average across all possible values
- Suppose n=100 (one sample)

Equation labels: prior for value of mu; likelihood for given value of mu (from sampling distribution)

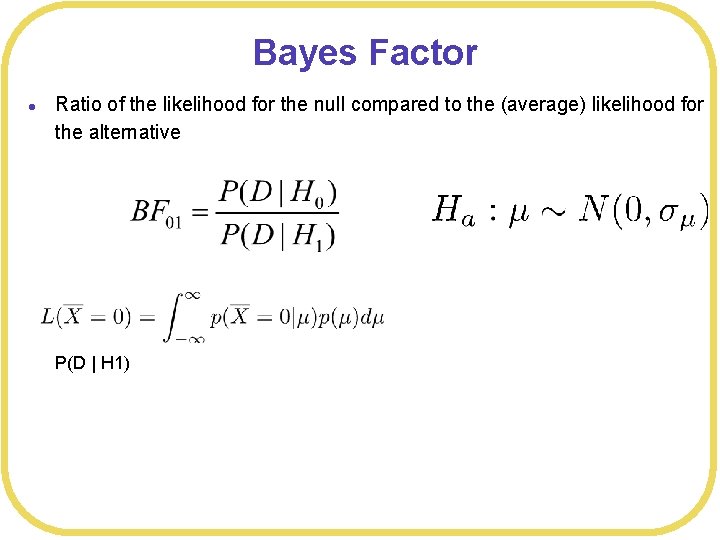

Bayes Factor

- Ratio of the likelihood for the null compared to the (average) likelihood for the alternative, P(D | H1)

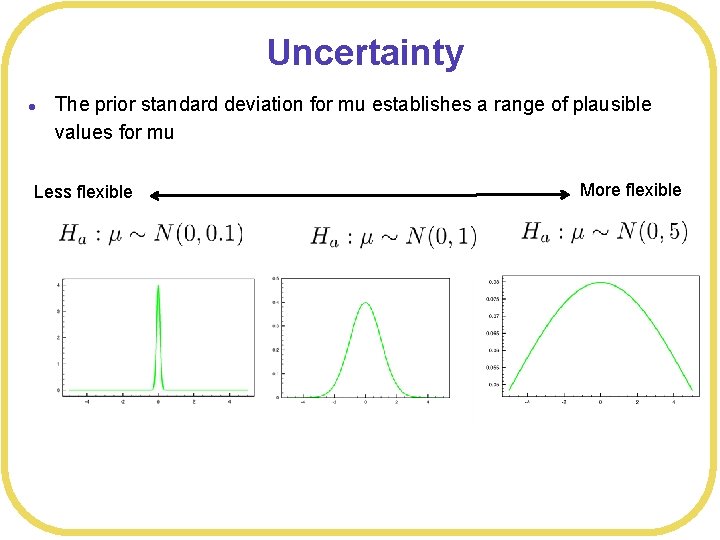
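A sketch of the average likelihood and the resulting Bayes Factor. It assumes a normal prior on mu centered on zero with standard deviation `tau`, and a hypothetical observed mean `xbar`. The slides' actual prior and data values are in the figures and are not reproduced here.

```r
se <- 1 / sqrt(100)   # standard error with sigma = 1 and n = 100
xbar <- 0.2           # hypothetical observed sample mean
tau <- 0.5            # hypothetical prior sd for mu under the alternative

# Null: a single value of mu, so the likelihood is computed directly
lik_null <- dnorm(xbar, mean = 0, sd = se)

# Alternative: likelihood for each mu, weighted by the prior for that mu
integrand <- function(mu) {
  dnorm(xbar, mean = mu, sd = se) * dnorm(mu, mean = 0, sd = tau)
}
lik_alt_avg <- integrate(integrand, lower = -Inf, upper = Inf)$value

bf01 <- lik_null / lik_alt_avg
print(c(lik_null = lik_null, lik_alt_avg = lik_alt_avg, BF01 = bf01))

# For a normal prior the average has a closed form, useful as a check
lik_alt_exact <- dnorm(xbar, mean = 0, sd = sqrt(se^2 + tau^2))
print(lik_alt_exact)
```

The closed form exists only because both the prior and the sampling distribution are normal here; with other priors the numerical integral is the general route.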

Uncertainty

- The prior standard deviation for mu establishes a range of plausible values for mu

Figure labels: less flexible, more flexible

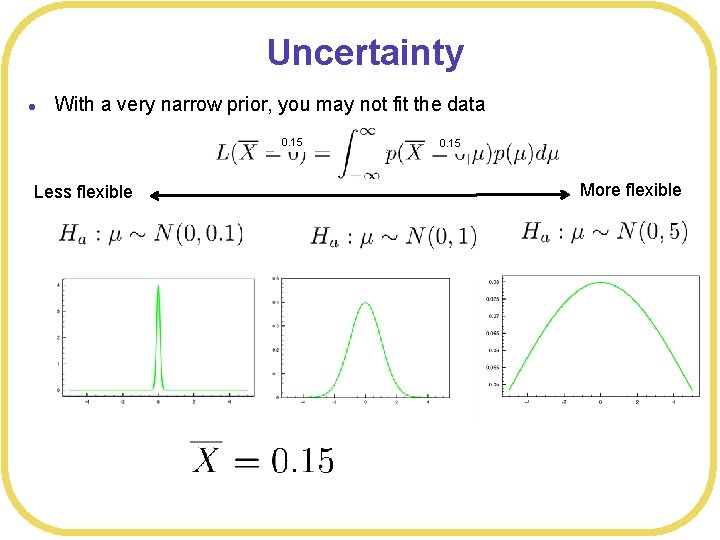

Uncertainty

- With a very narrow prior, you may not fit the data

Figure labels: less flexible, more flexible; the value 0.15 is marked in both panels

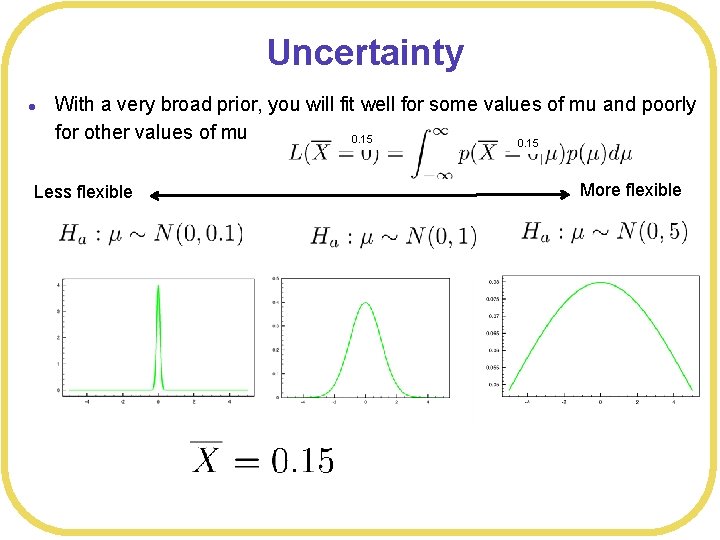

Uncertainty

- With a very broad prior, you will fit well for some values of mu and poorly for other values of mu

Figure labels: less flexible, more flexible; the value 0.15 is marked

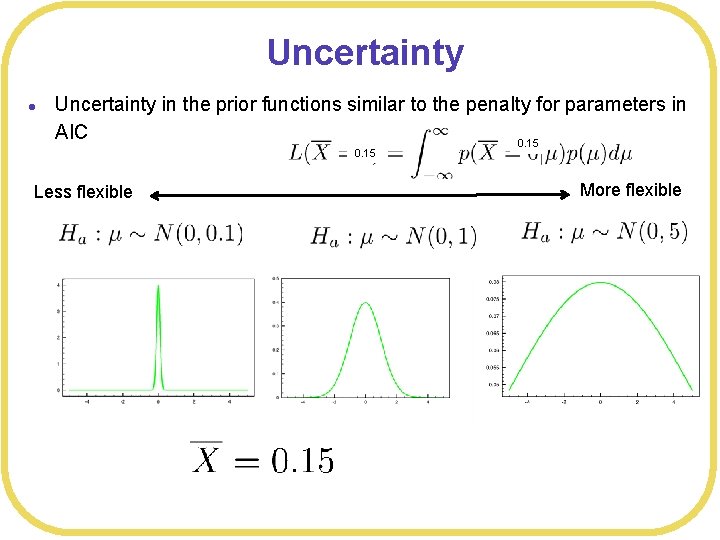

Uncertainty

- Uncertainty in the prior functions similarly to the penalty for parameters in AIC

Figure labels: less flexible, more flexible; the value 0.15 is marked

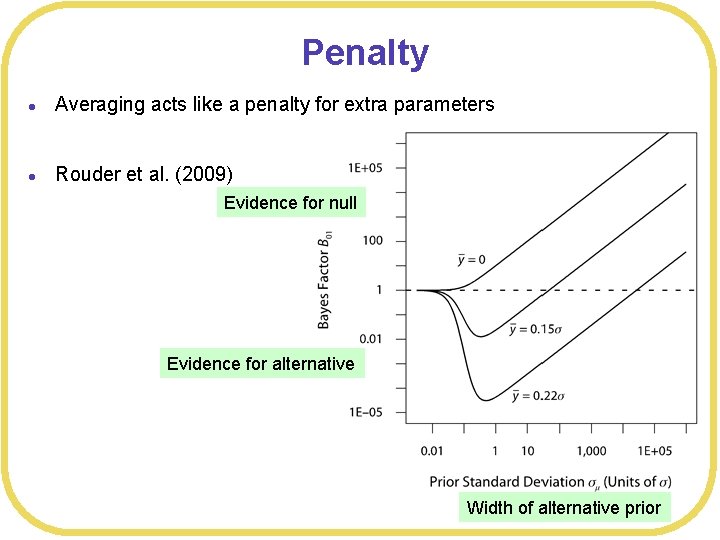

Penalty

- Averaging acts like a penalty for extra parameters

Figure (Rouder et al., 2009): horizontal axis is the width of the alternative prior, with regions labeled "Evidence for null" and "Evidence for alternative".

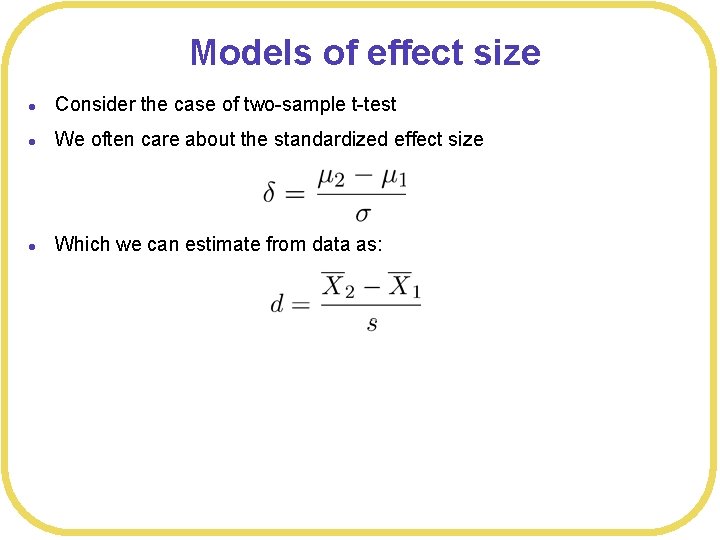
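A sketch of the penalty effect: the same data can favor the alternative under a moderately narrow prior and favor the null under a very broad one. It assumes a zero-centered normal prior on mu (so the average likelihood has a closed form) and a hypothetical observed mean; the helper name `bf01_width` is also hypothetical.

```r
se <- 1 / sqrt(100)   # standard error with sigma = 1 and n = 100
xbar <- 0.2           # hypothetical observed sample mean

# BF01 as a function of the prior sd (tau) of a zero-centered normal prior.
# Averaging a normal likelihood over a normal prior gives a normal
# with variance se^2 + tau^2.
bf01_width <- function(tau) {
  dnorm(xbar, mean = 0, sd = se) / dnorm(xbar, mean = 0, sd = sqrt(se^2 + tau^2))
}

tau_grid <- c(0.1, 0.2, 0.5, 1, 2, 5)
width_table <- data.frame(prior_sd = tau_grid,
                          bf01 = round(bf01_width(tau_grid), 3))
print(width_table)
```

A broad prior spreads its predictions over many values of mu that fit poorly, which lowers the average likelihood of the alternative and pushes BF01 up.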

Models of effect size

- Consider the case of two-sample t-test
- We often care about the standardized effect size
- Which we can estimate from data as:

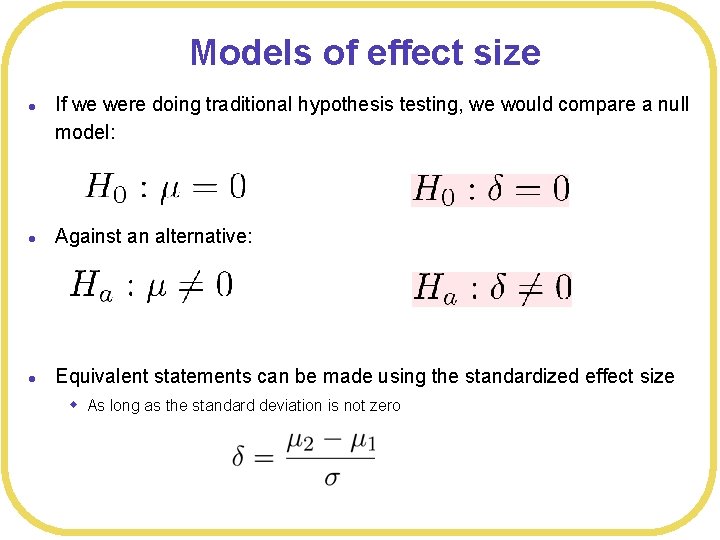

Models of effect size

- If we were doing traditional hypothesis testing, we would compare a null model:
- Against an alternative:
- Equivalent statements can be made using the standardized effect size
  - As long as the standard deviation is not zero

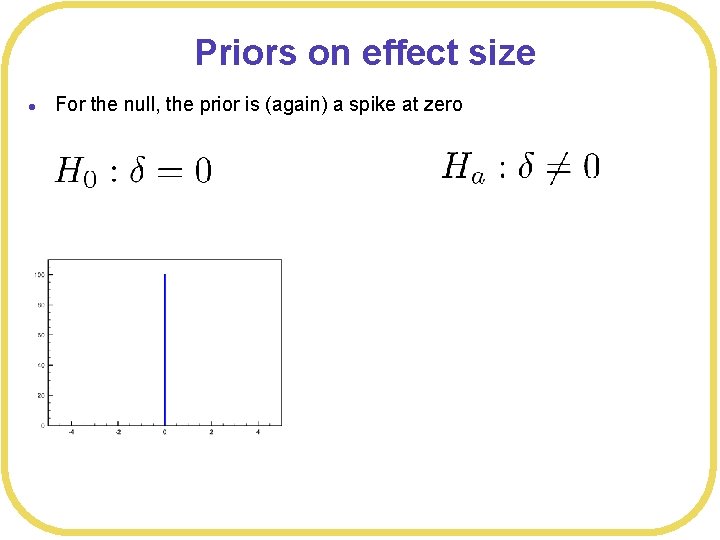
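The equations for these two slides are only in the images. A conventional way to write them for a two-sample t-test is sketched below; the symbols ($\delta$, $d$, $s_p$, $\bar{X}_1$, $\bar{X}_2$) are assumed notation and may differ from the slides.

$$
\delta = \frac{\mu_1 - \mu_2}{\sigma}, \qquad
d = \frac{\bar{X}_1 - \bar{X}_2}{s_p}
$$

$$
H_0: \mu_1 = \mu_2 \;\Longleftrightarrow\; \delta = 0, \qquad
H_1: \mu_1 \neq \mu_2 \;\Longleftrightarrow\; \delta \neq 0
$$

Here $s_p$ is the pooled sample standard deviation, and the equivalence holds only when $\sigma \neq 0$.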

Priors on effect size

- For the null, the prior is (again) a spike at zero

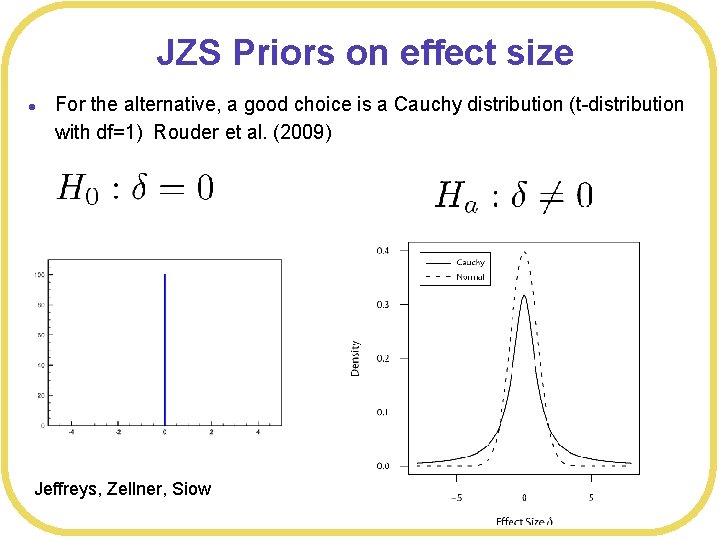

JZS Priors on effect size

- For the alternative, a good choice is a Cauchy distribution (t-distribution with df=1)
- JZS stands for Jeffreys, Zellner, Siow (Rouder et al., 2009)

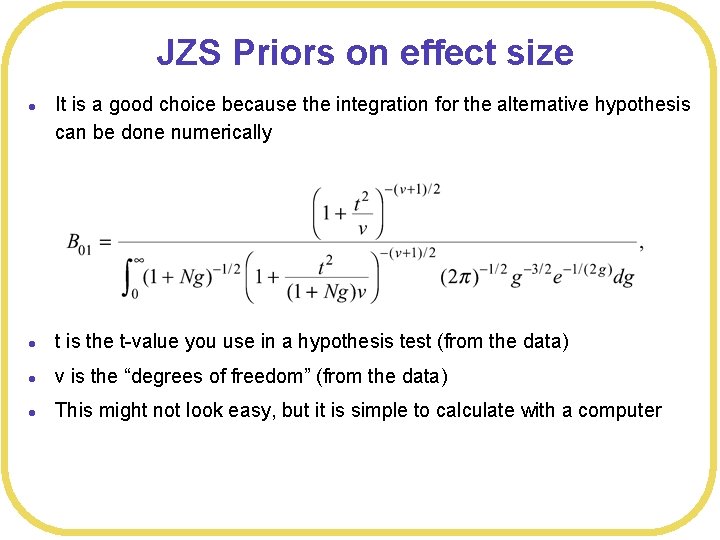
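A quick check that the standard Cauchy distribution is the t-distribution with one degree of freedom, and that its tails are much heavier than a normal distribution's. The grid of effect sizes is arbitrary; `dcauchy`, `dt`, and `dnorm` are base R density functions.

```r
# Grid of standardized effect sizes at which to compare densities
delta <- seq(-5, 5, by = 0.5)

cauchy_density <- dcauchy(delta, location = 0, scale = 1)
t1_density <- dt(delta, df = 1)

# The two densities agree up to floating-point error
densities_match <- isTRUE(all.equal(cauchy_density, t1_density))
print(densities_match)

# Heavy tails: a large effect (delta = 4) is far more plausible under
# the Cauchy prior than under a standard normal prior
tail_ratio <- dcauchy(4) / dnorm(4)
print(tail_ratio)
```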

JZS Priors on effect size

- It is a good choice because the integration for the alternative hypothesis can be done numerically
- t is the t-value you use in a hypothesis test (from the data)
- v is the “degrees of freedom” (from the data)
- This might not look easy, but it is simple to calculate with a computer

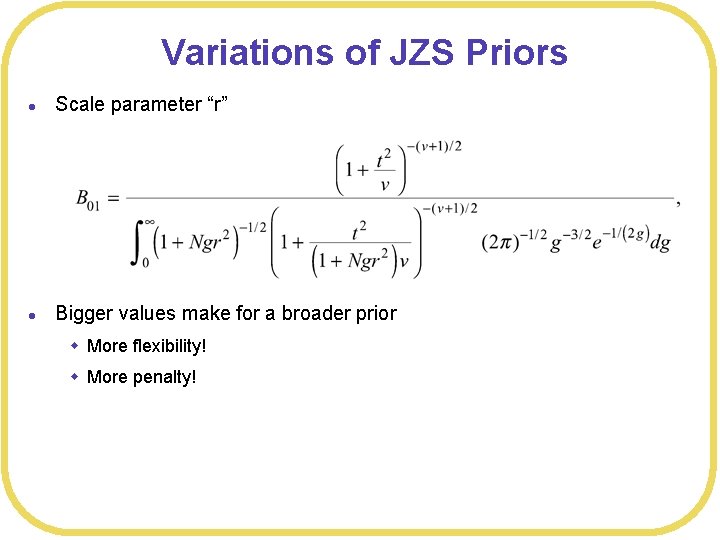
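A sketch of the numerical integration for a two-sample JZS Bayes Factor. The slide's equation is only in the image, so the integrand below is written from the form given in Rouder et al. (2009) as I understand it, with a Cauchy scale `r`. Treat the exact expression, the function name `jzs_bf10_two_sample`, and the default `r = sqrt(2)/2` as assumptions rather than the slide's definition.

```r
# Hypothetical helper: JZS BF10 for a two-sample t-test from t, n1, n2.
# The Cauchy prior on effect size is expressed as a normal prior whose
# variance g has an inverse-gamma(1/2, r^2/2) distribution.
jzs_bf10_two_sample <- function(t, n1, n2, r = sqrt(2) / 2) {
  v <- n1 + n2 - 2                  # degrees of freedom
  n_eff <- n1 * n2 / (n1 + n2)      # effective sample size for two groups

  # Null: effect size fixed at zero
  lik_null <- (1 + t^2 / v)^(-(v + 1) / 2)

  # Alternative: average over g, weighted by its prior density
  integrand <- function(g) {
    # log of the inverse-gamma prior density, kept on the log scale
    # to avoid overflow for very small g
    log_prior_g <- log(r) - 0.5 * log(2 * pi) - 1.5 * log(g) - r^2 / (2 * g)
    (1 + n_eff * g)^(-0.5) *
      (1 + t^2 / ((1 + n_eff * g) * v))^(-(v + 1) / 2) *
      exp(log_prior_g)
  }
  lik_alt <- integrate(integrand, lower = 0, upper = Inf)$value

  lik_alt / lik_null
}

bf10 <- jzs_bf10_two_sample(t = 2.2, n1 = 15, n2 = 15)
print(bf10)
```

The inputs match the `ttest.tstat` call shown later in these slides, for which the slide reports B10 = 1.993006, so the printed value can be compared against that figure.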

Variations of JZS Priors

- Scale parameter “r”
- Bigger values make for a broader prior
  - More flexibility!
  - More penalty!

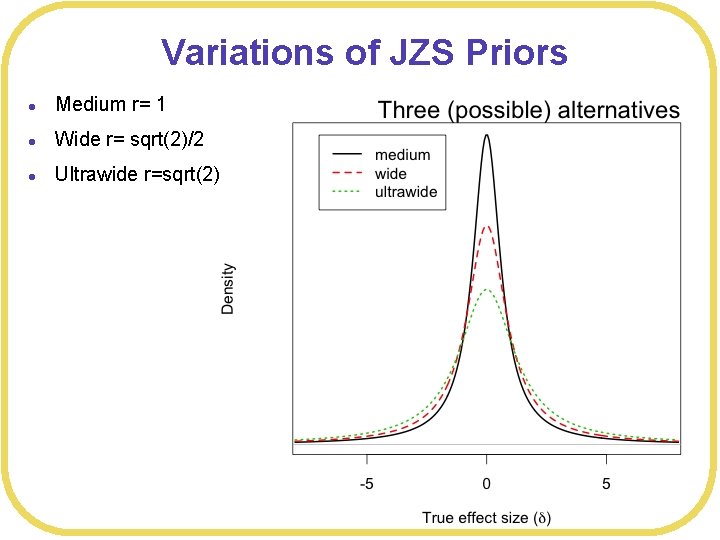

Variations of JZS Priors

- Medium r = sqrt(2)/2
- Wide r = 1
- Ultrawide r = sqrt(2)

How do we use it?

- Super easy
- Rouder’s web site: http://pcl.missouri.edu/bayesfactor
- In R
  - library(BayesFactor)

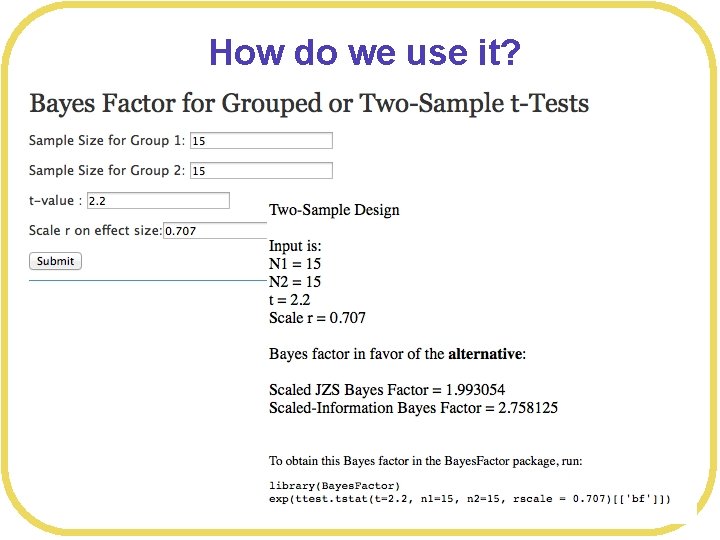


How do we use it?

```r
library(BayesFactor)
ttest.tstat(t=2.2, n1=15, n2=15, simple=TRUE)
```

Output:

```
     B10
1.993006
```

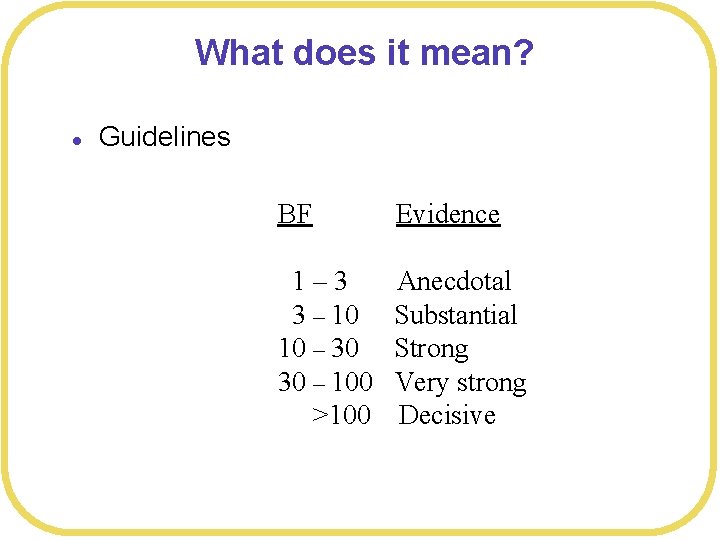

What does it mean?

- Guidelines

| BF | Evidence |
|---|---|
| 1 to 3 | Anecdotal |
| 3 to 10 | Substantial |
| 10 to 30 | Strong |
| 30 to 100 | Very strong |
| > 100 | Decisive |

Conclusions

- JZS Bayes Factors
- Easy to calculate
- Pretty easy to understand results
- A bit arbitrary for setting up
  - Why not other priors?
  - How to pick scale factor?
  - Criteria for interpretation are arbitrary
- Fairly painless introduction to Bayesian methods
