Basic Cilk Programming

Adapted from "Multithreaded Programming in Cilk", Lecture 1, by Charles E. Leiserson, Supercomputing Technologies Research Group, Computer Science and Artificial Intelligence Laboratory, Massachusetts Institute of Technology

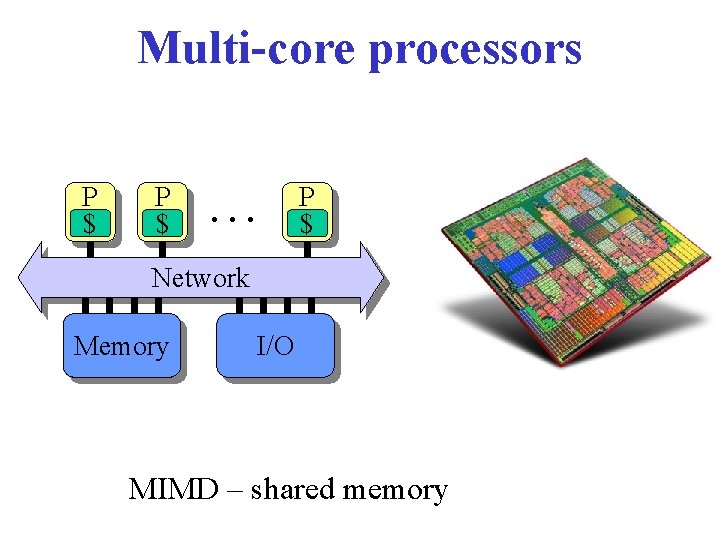

Multi-core processors

[Figure: several processors (P), each with its own cache, connected through a network to shared memory and I/O.]

MIMD – shared memory

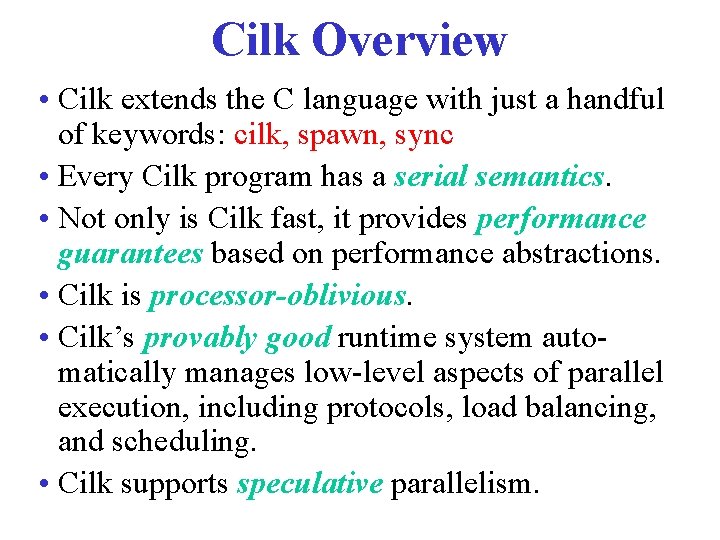

Cilk Overview

- Cilk extends the C language with just a handful of keywords: `cilk`, `spawn`, `sync`.
- Every Cilk program has a serial semantics.
- Not only is Cilk fast, it provides performance guarantees based on performance abstractions.
- Cilk is processor-oblivious.
- Cilk’s provably good runtime system automatically manages low-level aspects of parallel execution, including protocols, load balancing, and scheduling.
- Cilk supports speculative parallelism.

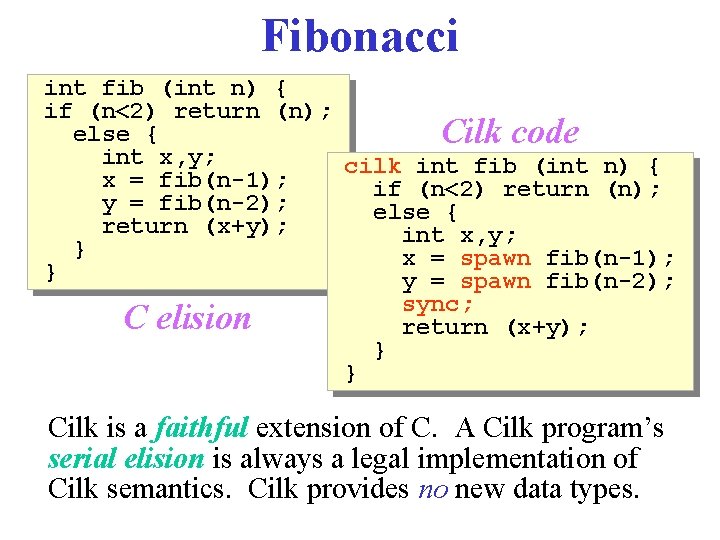

Fibonacci

C elision:

    int fib (int n) {
      if (n<2) return (n);
      else {
        int x, y;
        x = fib(n-1);
        y = fib(n-2);
        return (x+y);
      }
    }

Cilk code:

    cilk int fib (int n) {
      if (n<2) return (n);
      else {
        int x, y;
        x = spawn fib(n-1);
        y = spawn fib(n-2);
        sync;
        return (x+y);
      }
    }

Cilk is a faithful extension of C. A Cilk program’s serial elision is always a legal implementation of Cilk semantics. Cilk provides no new data types.

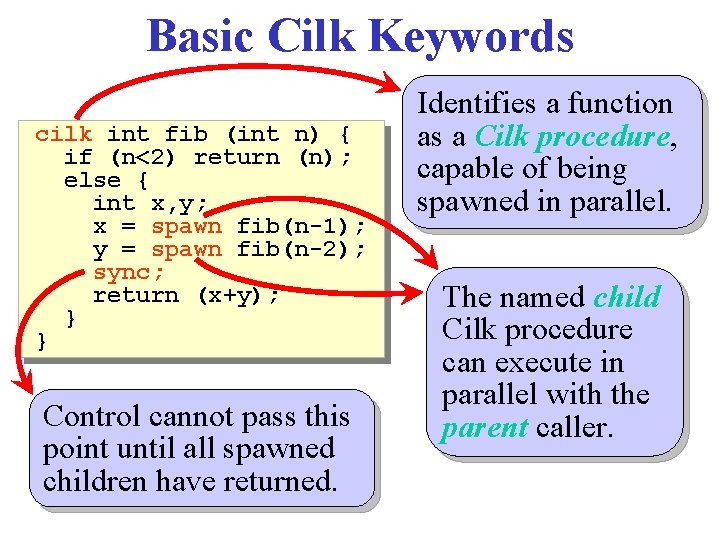

Basic Cilk Keywords

    cilk int fib (int n) {
      if (n<2) return (n);
      else {
        int x, y;
        x = spawn fib(n-1);
        y = spawn fib(n-2);
        sync;
        return (x+y);
      }
    }

- `cilk`: Identifies a function as a Cilk procedure, capable of being spawned in parallel.
- `spawn`: The named child Cilk procedure can execute in parallel with the parent caller.
- `sync`: Control cannot pass this point until all spawned children have returned.

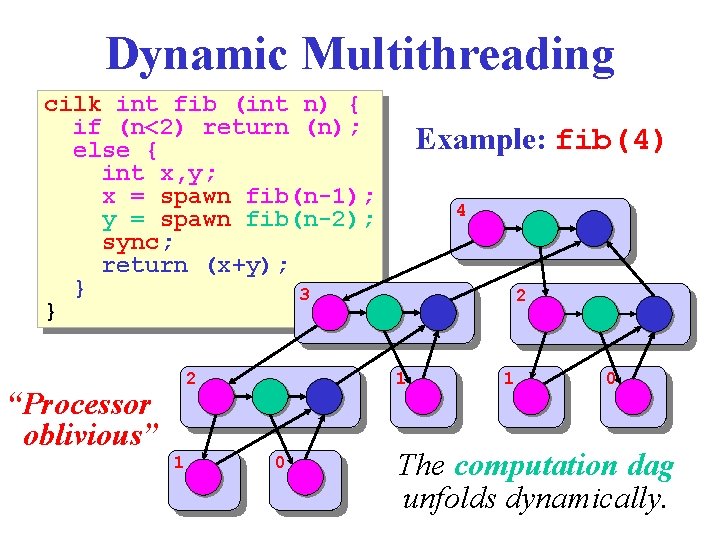

Dynamic Multithreading

Example: fib(4), using the Cilk `fib` procedure from the previous slides.

[Figure: the computation dag for fib(4), with each procedure instance labeled by its argument.]

The computation dag unfolds dynamically.

“Processor oblivious”

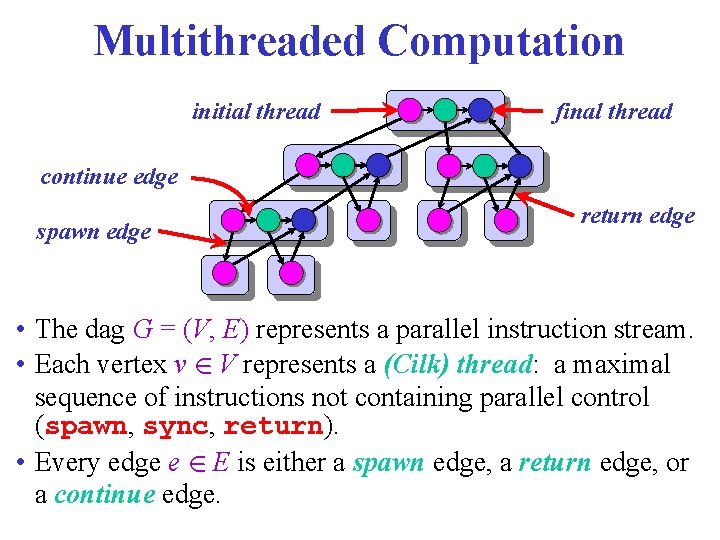

Multithreaded Computation

[Figure: a computation dag marking the initial thread, the final thread, and continue, spawn, and return edges.]

- The dag $G = (V, E)$ represents a parallel instruction stream.
- Each vertex $v \in V$ represents a (Cilk) thread: a maximal sequence of instructions not containing parallel control (spawn, sync, return).
- Every edge $e \in E$ is either a spawn edge, a return edge, or a continue edge.

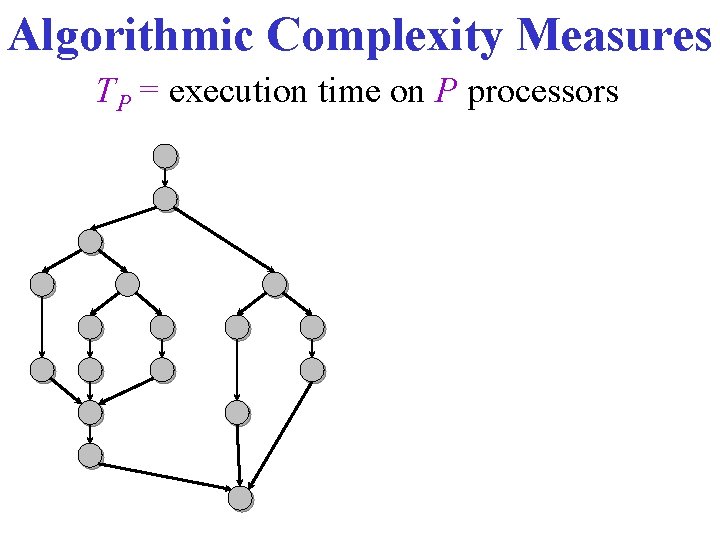

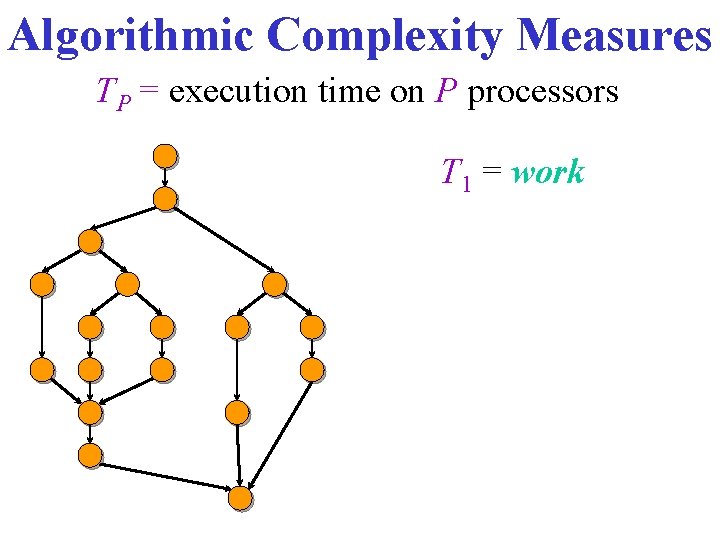


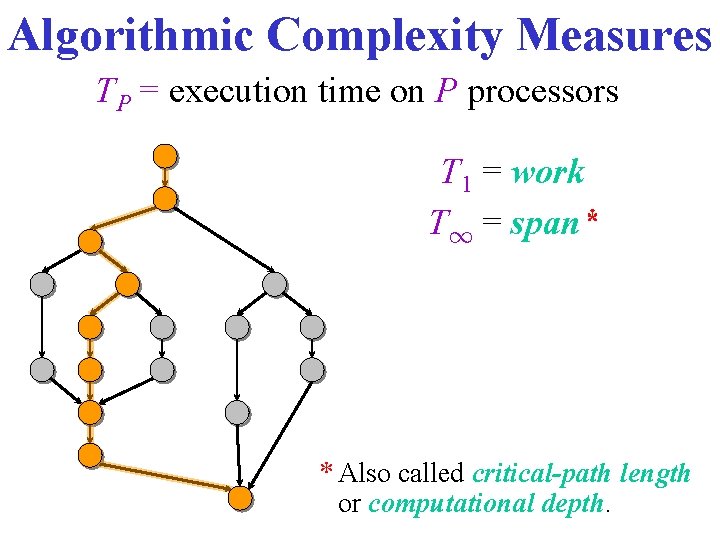


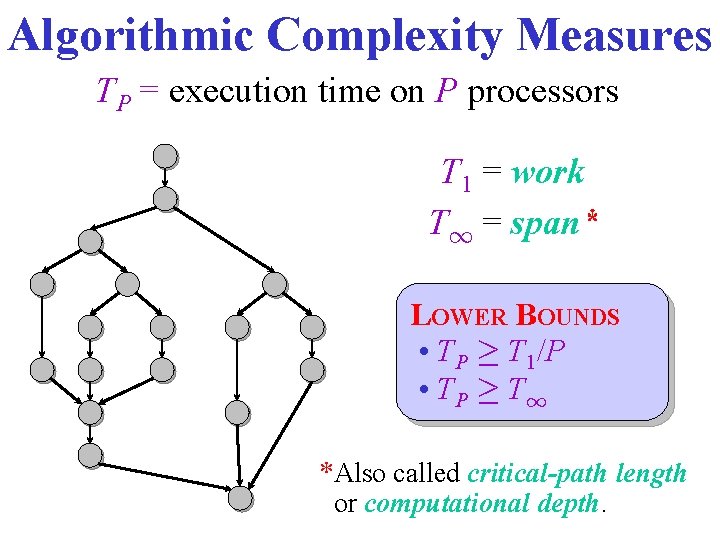

Algorithmic Complexity Measures

- $T_P$ = execution time on $P$ processors
- $T_1$ = work
- $T_\infty$ = span (also called critical-path length or computational depth)

LOWER BOUNDS

- $T_P \ge T_1/P$
- $T_P \ge T_\infty$

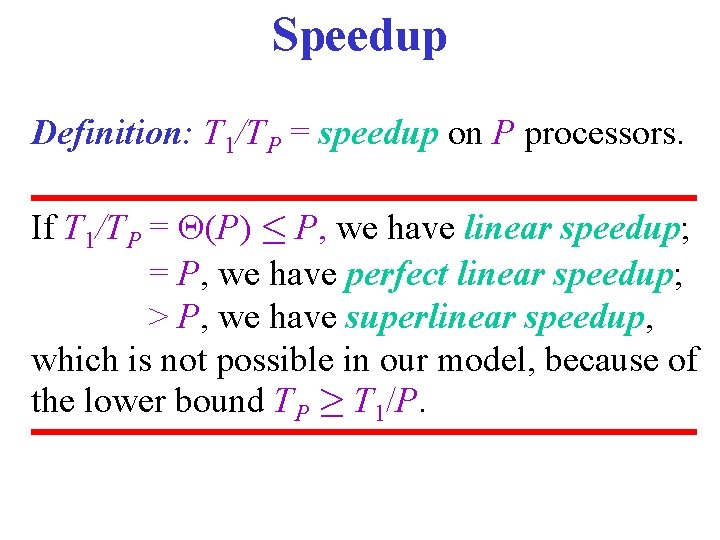

Speedup

Definition: $T_1/T_P$ = speedup on $P$ processors.

- If $T_1/T_P = \Theta(P) \le P$, we have linear speedup;
- if $T_1/T_P = P$, we have perfect linear speedup;
- if $T_1/T_P > P$, we have superlinear speedup, which is not possible in our model, because of the lower bound $T_P \ge T_1/P$.

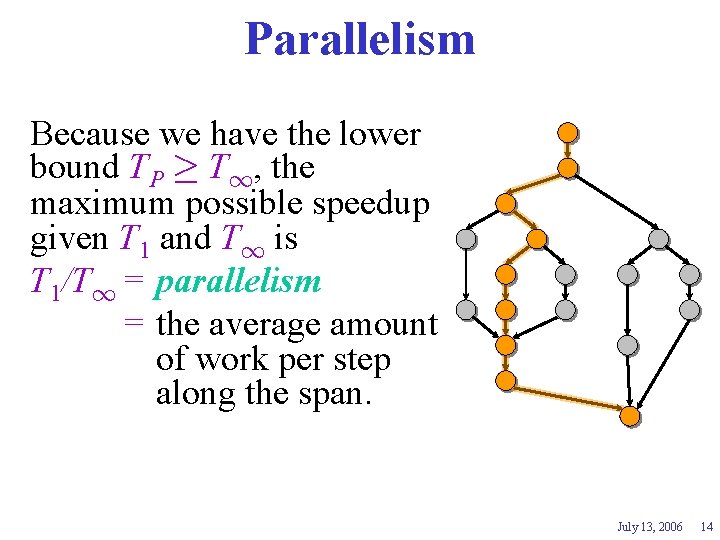

Parallelism

Because we have the lower bound $T_P \ge T_\infty$, the maximum possible speedup given $T_1$ and $T_\infty$ is

$T_1/T_\infty$ = parallelism = the average amount of work per step along the span.

July 13, 2006

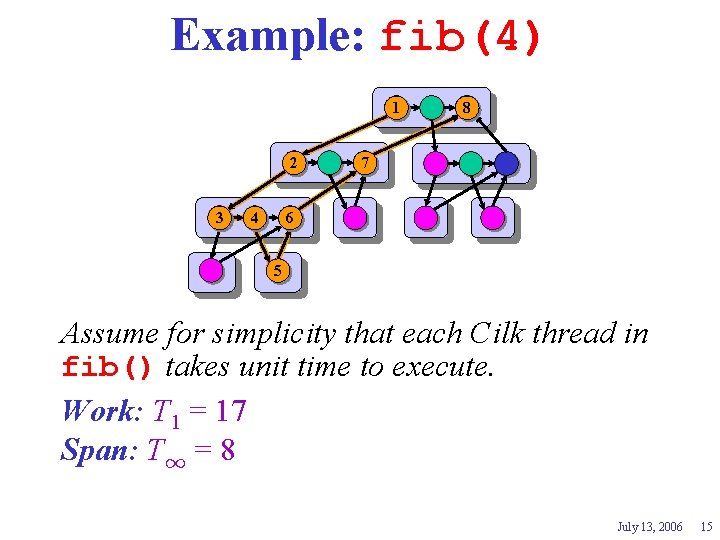

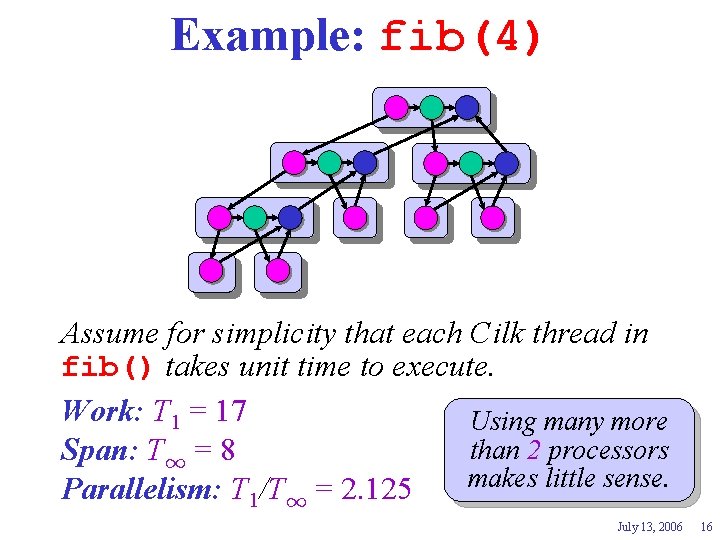

Example: fib(4)

Assume for simplicity that each Cilk thread in fib() takes unit time to execute.

[Figure: the fib(4) dag, with the threads along the critical path numbered 1 to 8.]

- Work: $T_1 = 17$
- Span: $T_\infty = 8$
- Parallelism: $T_1/T_\infty = 2.125$

Using many more than 2 processors makes little sense.

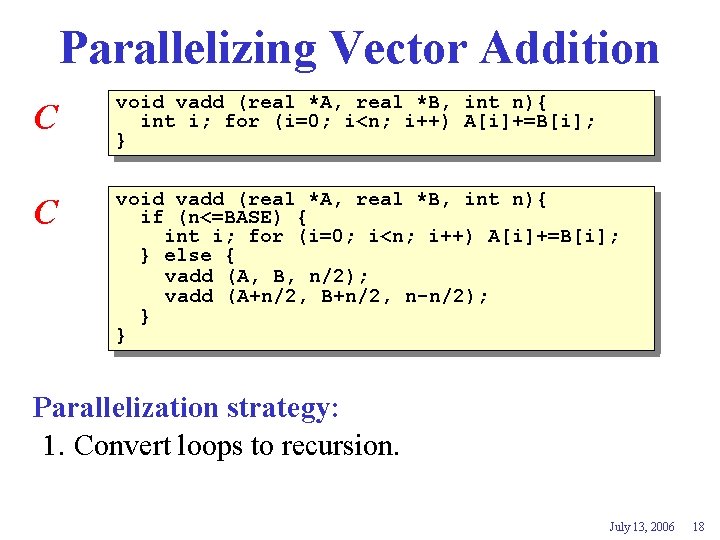

Parallelizing Vector Addition

C:

    void vadd (real *A, real *B, int n){
      int i; for (i=0; i<n; i++) A[i]+=B[i];
    }

Parallelizing Vector Addition

C, with the loop converted to recursion:

    void vadd (real *A, real *B, int n){
      if (n<=BASE) {
        int i; for (i=0; i<n; i++) A[i]+=B[i];
      } else {
        vadd (A, B, n/2);
        vadd (A+n/2, B+n/2, n-n/2);
      }
    }

Parallelization strategy:

1. Convert loops to recursion.

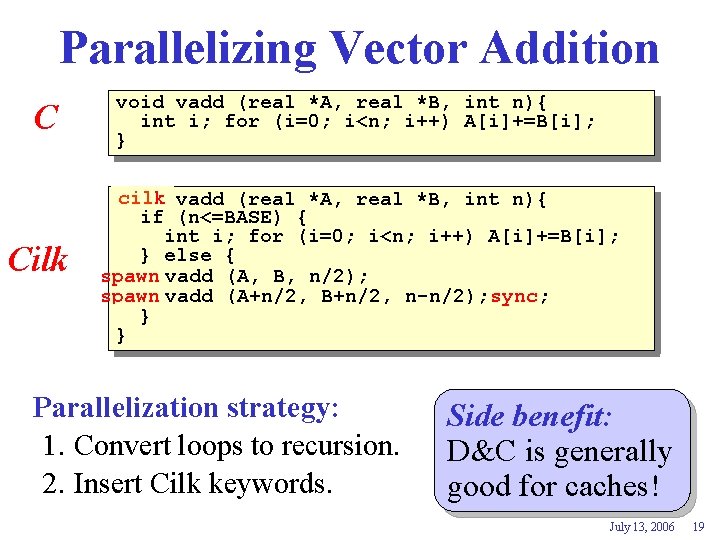

Parallelizing Vector Addition

Cilk:

    cilk void vadd (real *A, real *B, int n){
      if (n<=BASE) {
        int i; for (i=0; i<n; i++) A[i]+=B[i];
      } else {
        spawn vadd (A, B, n/2);
        spawn vadd (A+n/2, B+n/2, n-n/2);
        sync;
      }
    }

Parallelization strategy:

1. Convert loops to recursion.
2. Insert Cilk keywords.

Side benefit: D&C is generally good for caches!

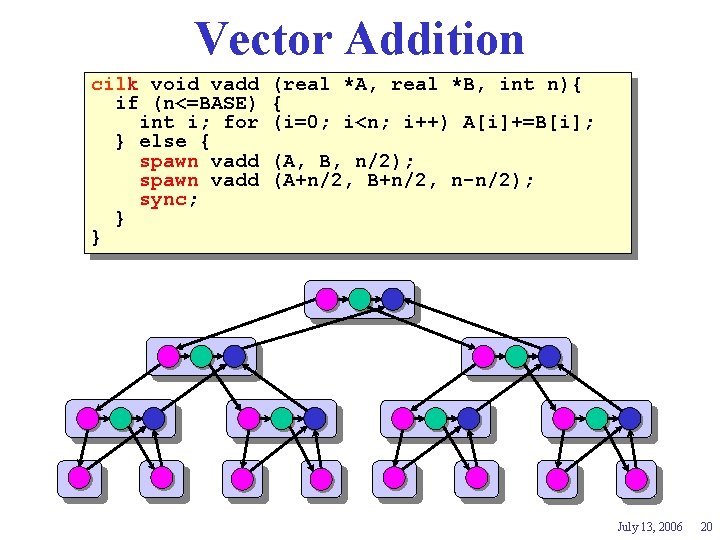


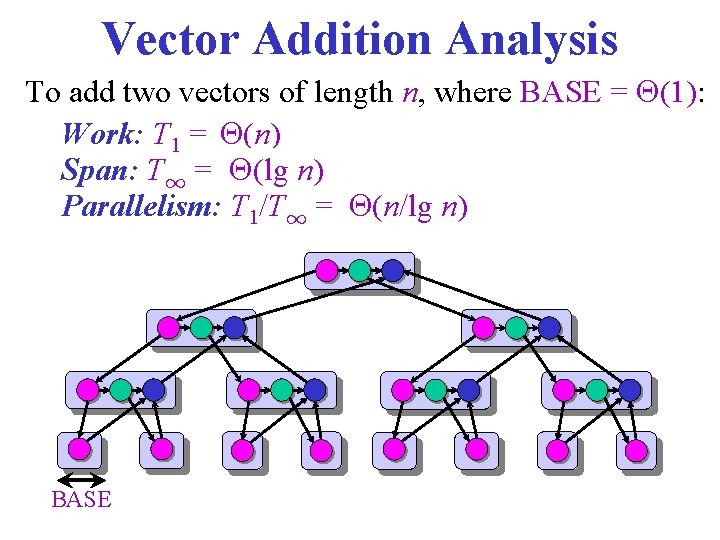

Vector Addition Analysis

To add two vectors of length $n$, where BASE $= \Theta(1)$:

- Work: $T_1 = \Theta(n)$
- Span: $T_\infty = \Theta(\lg n)$
- Parallelism: $T_1/T_\infty = \Theta(n/\lg n)$

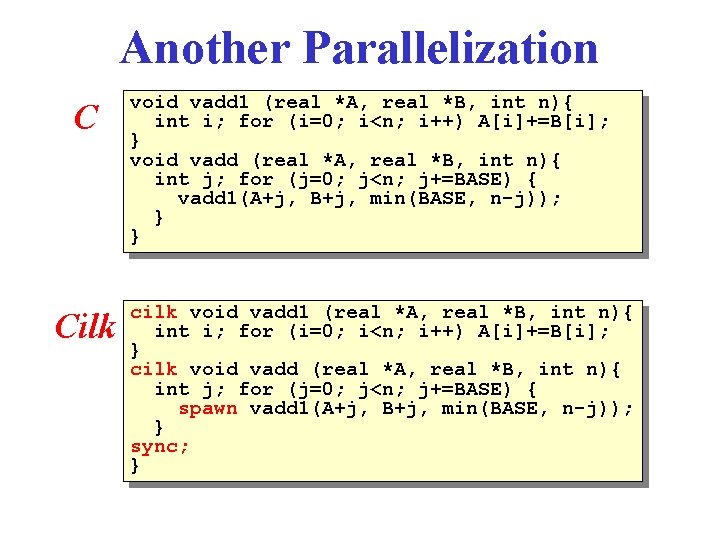

Another Parallelization

C:

    void vadd1 (real *A, real *B, int n){
      int i; for (i=0; i<n; i++) A[i]+=B[i];
    }
    void vadd (real *A, real *B, int n){
      int j; for (j=0; j<n; j+=BASE) {
        vadd1(A+j, B+j, min(BASE, n-j));
      }
    }

Cilk:

    cilk void vadd1 (real *A, real *B, int n){
      int i; for (i=0; i<n; i++) A[i]+=B[i];
    }
    cilk void vadd (real *A, real *B, int n){
      int j; for (j=0; j<n; j+=BASE) {
        spawn vadd1(A+j, B+j, min(BASE, n-j));
      }
      sync;
    }

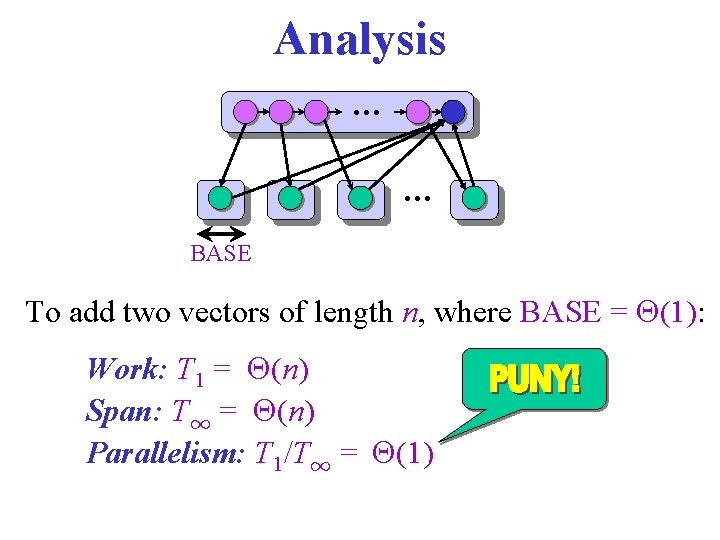

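Analysis

[Figure: the vector split into consecutive chunks of size BASE.]

To add two vectors of length $n$, where BASE $= \Theta(1)$:

- Work: $T_1 = \Theta(n)$
- Span: $T_\infty = \Theta(n)$
- Parallelism: $T_1/T_\infty = \Theta(1)$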

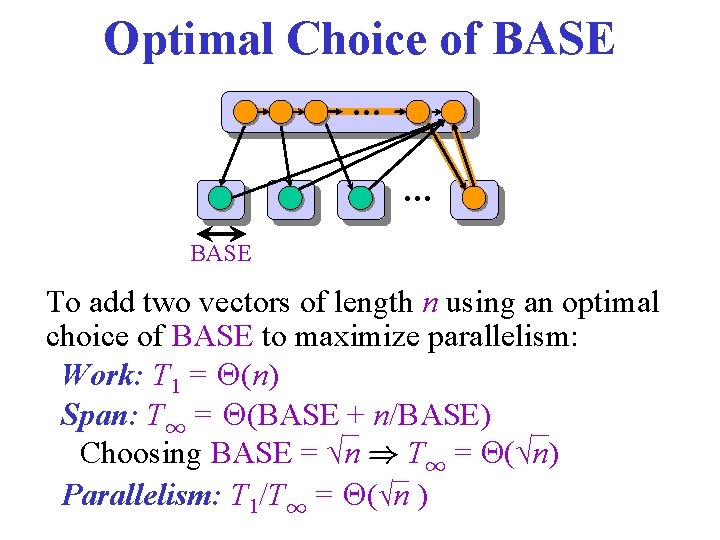

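Optimal Choice of BASE

[Figure: the vector split into consecutive chunks of size BASE.]

To add two vectors of length $n$ using an optimal choice of BASE to maximize parallelism:

- Work: $T_1 = \Theta(n)$
- Span: $T_\infty = \Theta(\text{BASE} + n/\text{BASE})$
- Choosing BASE $= \sqrt{n}$ $\Rightarrow$ $T_\infty = \Theta(\sqrt{n})$
- Parallelism: $T_1/T_\infty = \Theta(\sqrt{n})$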

Weird! Don’t we want to remove recursion?

Parallel Programming = Sequential Program + Decomposition + Mapping + Communication and synchronization

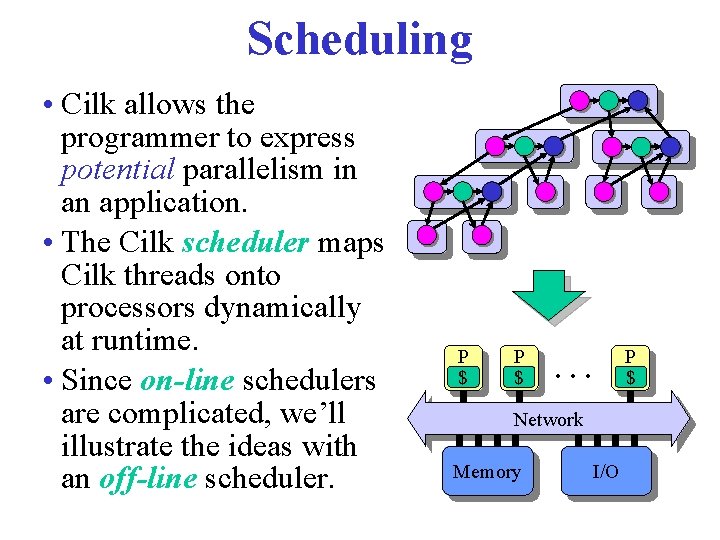

Scheduling

- Cilk allows the programmer to express potential parallelism in an application.
- The Cilk scheduler maps Cilk threads onto processors dynamically at runtime.
- Since on-line schedulers are complicated, we’ll illustrate the ideas with an off-line scheduler.

[Figure: processors (P), each with its own cache, connected through a network to shared memory and I/O.]

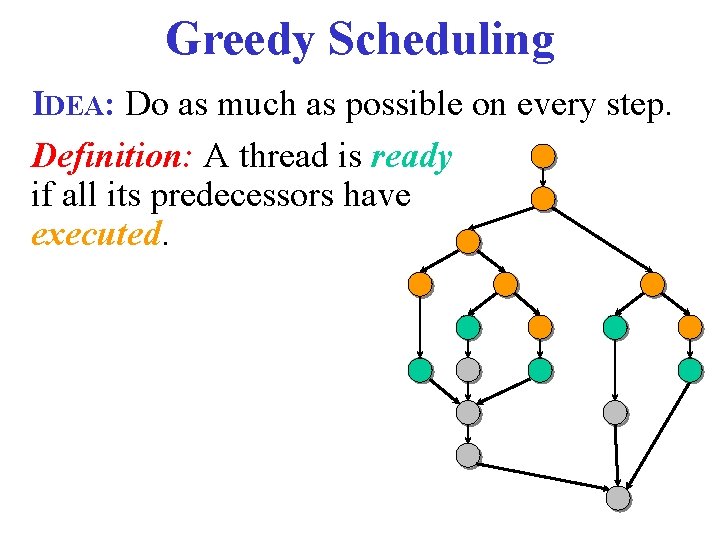

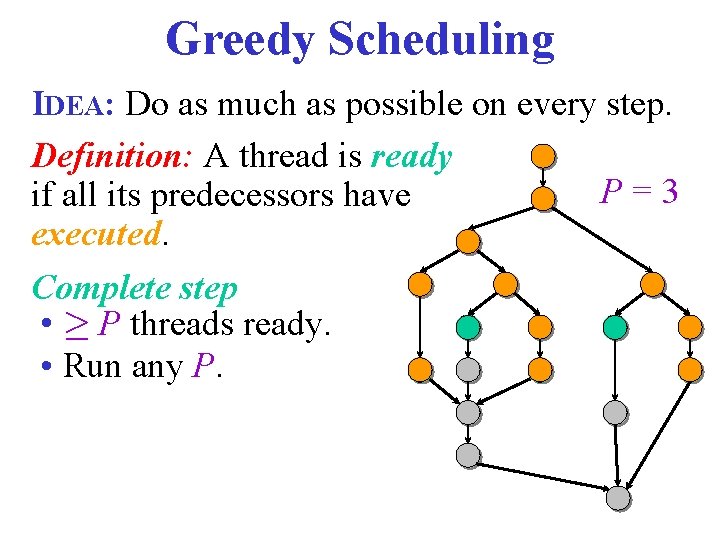

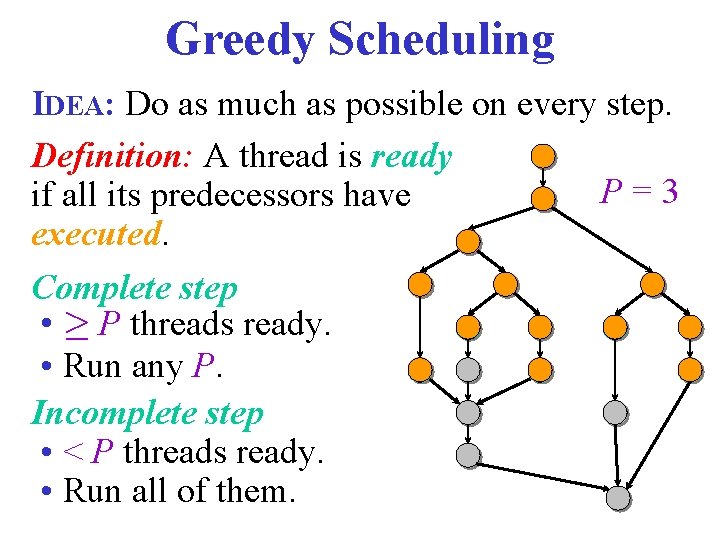


Greedy Scheduling

IDEA: Do as much as possible on every step.

Definition: A thread is ready if all its predecessors have executed.

Complete step

- $\ge P$ threads ready.
- Run any $P$.

Incomplete step

- $< P$ threads ready.
- Run all of them.

(The accompanying figure uses $P = 3$.)

![Greedy-Scheduling Theorem](http://slidetodoc.com/presentation_image_h2/f41a85de41236134bcc5a579d826d5dd/image-30.jpg)

Greedy-Scheduling Theorem

Theorem [Graham ’68 & Brent ’75]. Any greedy scheduler achieves

$T_P \le T_1/P + T_\infty$.

Proof.

- # complete steps $\le T_1/P$, since each complete step performs $P$ work.
- # incomplete steps $\le T_\infty$, since each incomplete step reduces the span of the unexecuted dag by 1. ■

(The accompanying figure uses $P = 3$.)

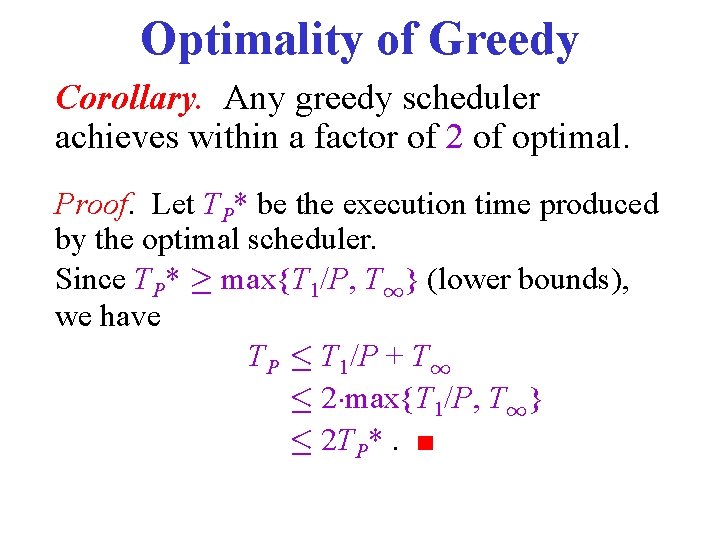

Optimality of Greedy

Corollary. Any greedy scheduler achieves within a factor of 2 of optimal.

Proof. Let $T_P^*$ be the execution time produced by the optimal scheduler. Since $T_P^* \ge \max\{T_1/P, T_\infty\}$ (lower bounds), we have

$T_P \le T_1/P + T_\infty \le 2 \cdot \max\{T_1/P, T_\infty\} \le 2\,T_P^*$. ■

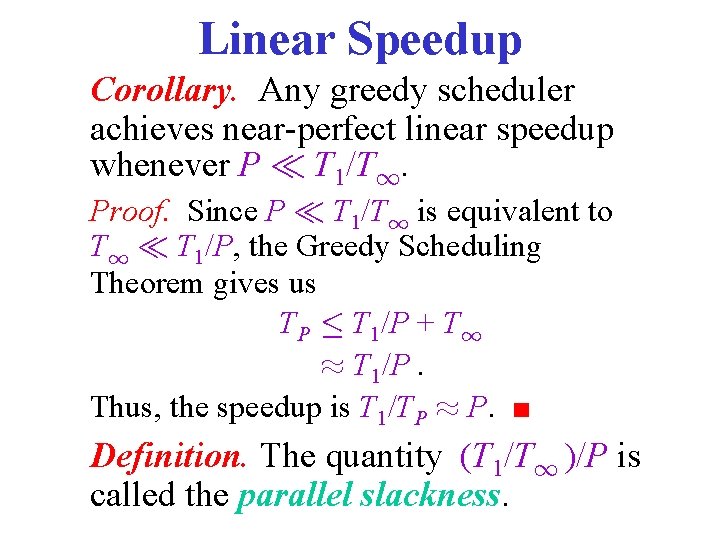

Linear Speedup

Corollary. Any greedy scheduler achieves near-perfect linear speedup whenever $P \ll T_1/T_\infty$.

Proof. Since $P \ll T_1/T_\infty$ is equivalent to $T_\infty \ll T_1/P$, the Greedy Scheduling Theorem gives us

$T_P \le T_1/P + T_\infty \approx T_1/P$.

Thus, the speedup is $T_1/T_P \approx P$. ■

Definition. The quantity $(T_1/T_\infty)/P$ is called the parallel slackness.

Lessons

Work and span can predict performance on large machines better than running times on small machines can.

Focus on improving parallelism (i.e., maximize $T_1/T_\infty$). This will allow you to use larger processor counts effectively.

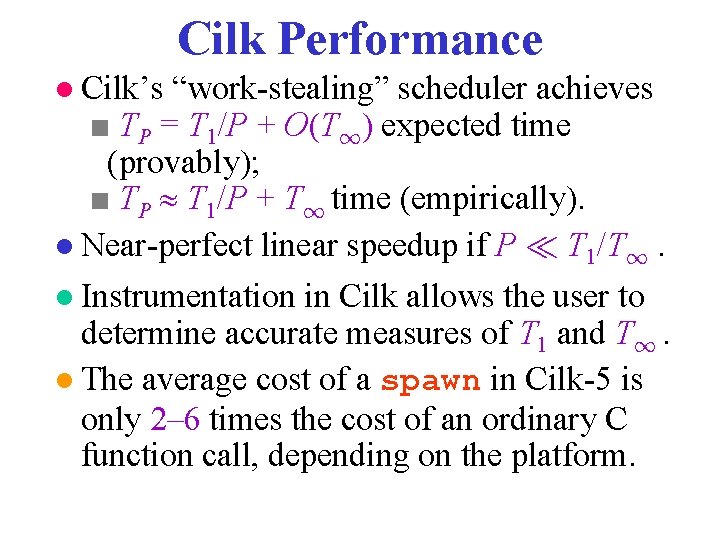

Cilk Performance

- Cilk’s “work-stealing” scheduler achieves
  - $T_P = T_1/P + O(T_\infty)$ expected time (provably);
  - $T_P \approx T_1/P + T_\infty$ time (empirically).
- Near-perfect linear speedup if $P \ll T_1/T_\infty$.
- Instrumentation in Cilk allows the user to determine accurate measures of $T_1$ and $T_\infty$.
- The average cost of a spawn in Cilk-5 is only 2–6 times the cost of an ordinary C function call, depending on the platform.

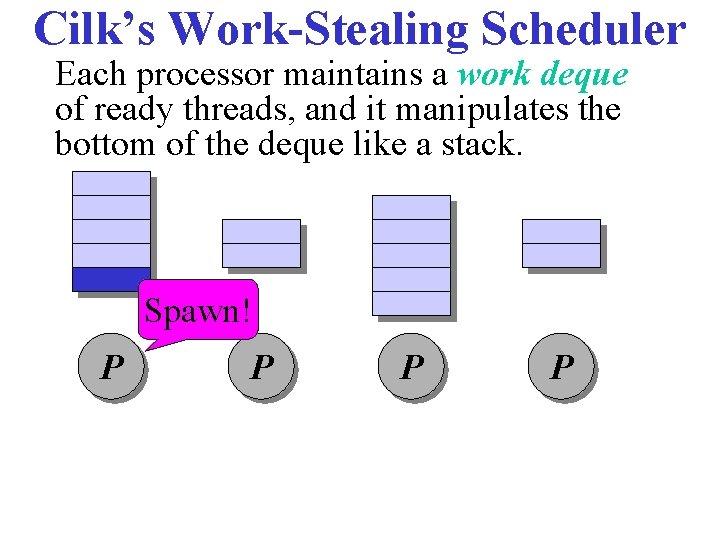

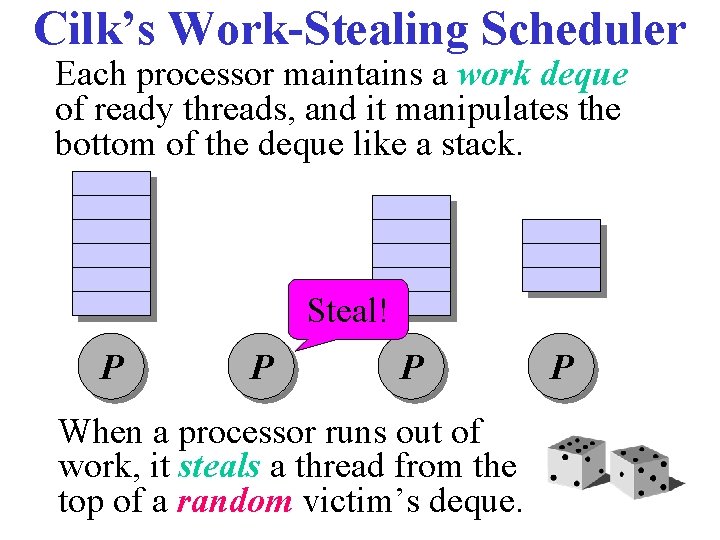

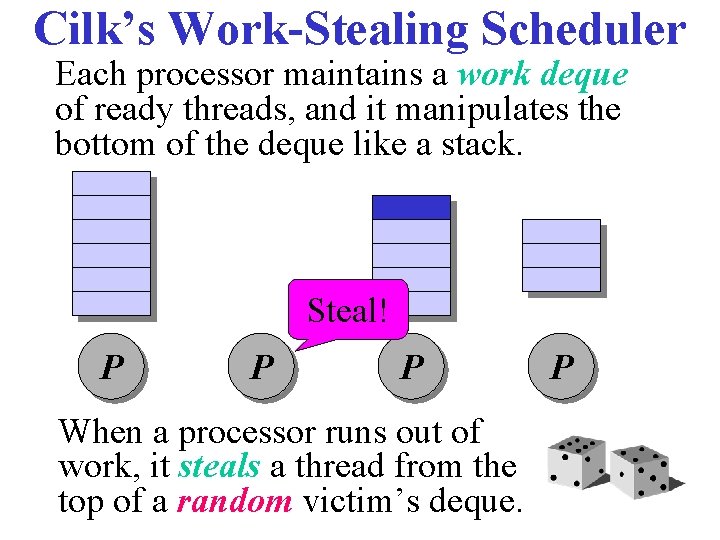

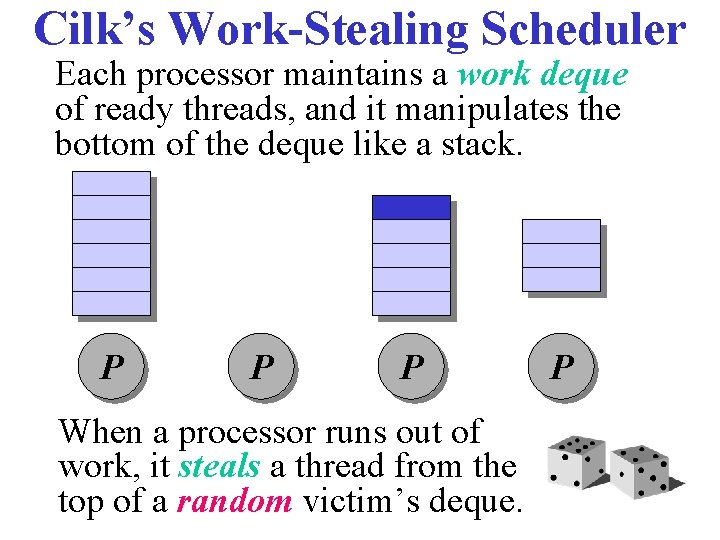

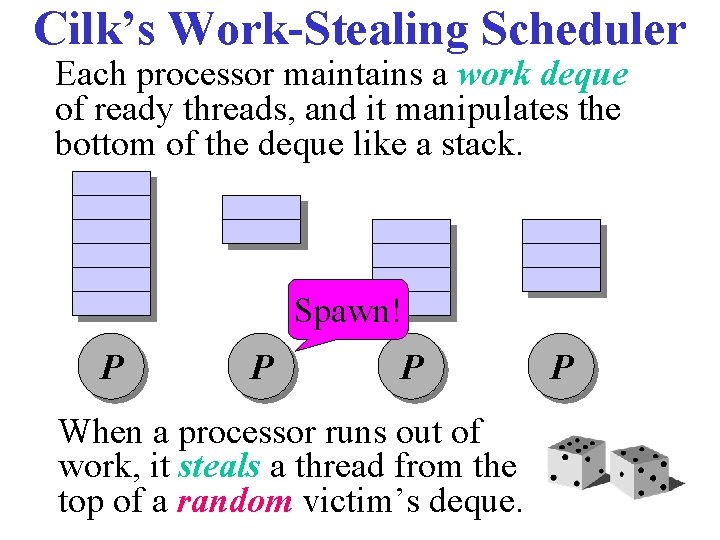

Cilk’s Work-Stealing Scheduler

Each processor maintains a work deque of ready threads, and it manipulates the bottom of the deque like a stack.

[Animation step: Spawn!]

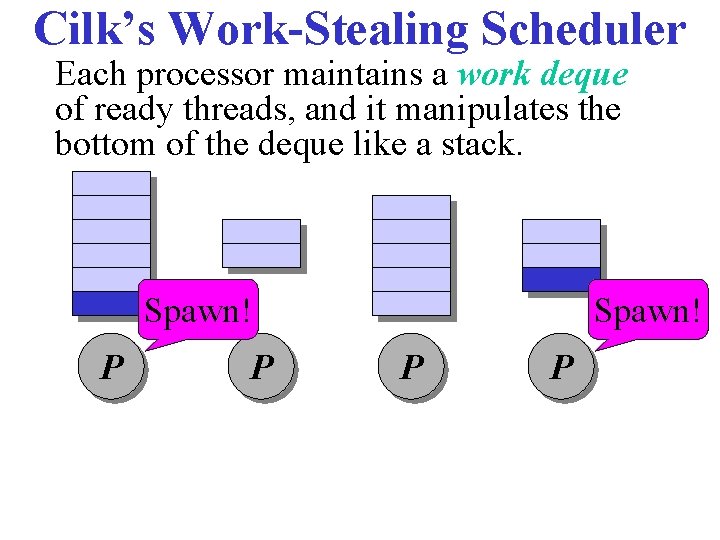


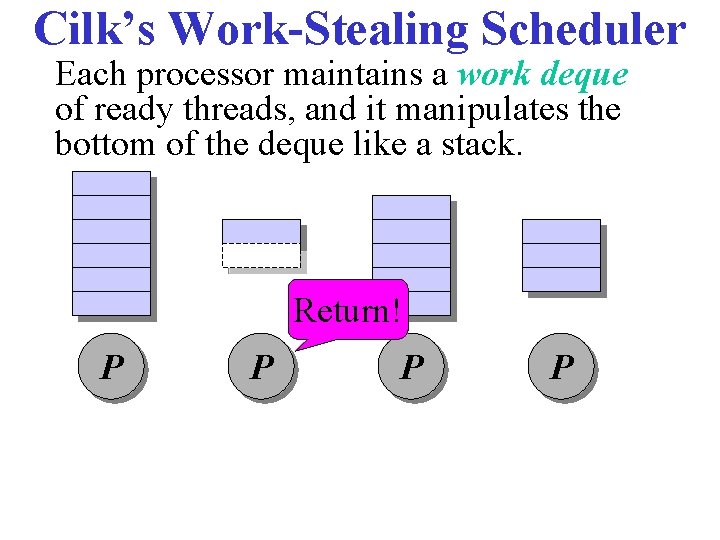

Cilk’s Work-Stealing Scheduler

[Animation step: Return!]

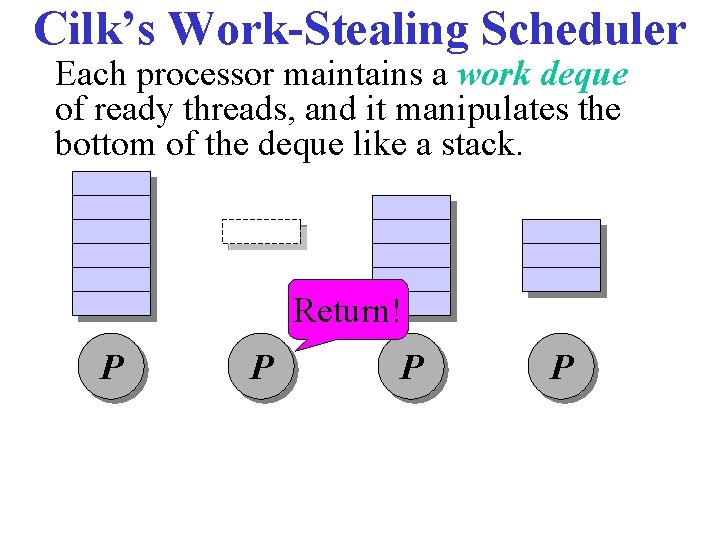


Cilk’s Work-Stealing Scheduler

Each processor maintains a work deque of ready threads, and it manipulates the bottom of the deque like a stack.

When a processor runs out of work, it steals a thread from the top of a random victim’s deque.

[Animation step: Steal!]


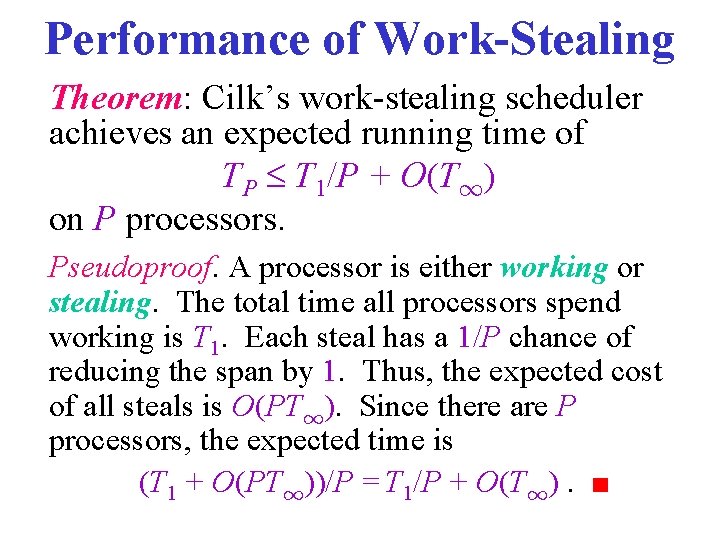

Performance of Work-Stealing

Theorem: Cilk’s work-stealing scheduler achieves an expected running time of

$T_P \le T_1/P + O(T_\infty)$

on $P$ processors.

Pseudoproof. A processor is either working or stealing. The total time all processors spend working is $T_1$. Each steal has a $1/P$ chance of reducing the span by 1. Thus, the expected cost of all steals is $O(P T_\infty)$. Since there are $P$ processors, the expected time is

$(T_1 + O(P T_\infty))/P = T_1/P + O(T_\infty)$. ■

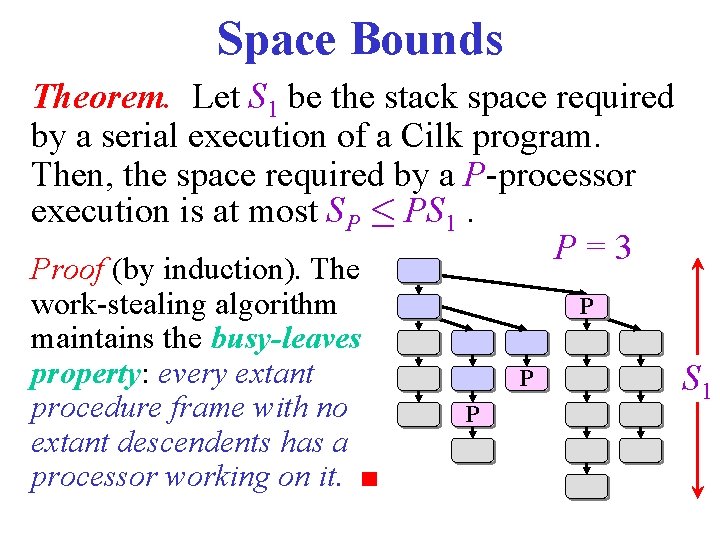

Space Bounds

Theorem. Let $S_1$ be the stack space required by a serial execution of a Cilk program. Then, the space required by a $P$-processor execution is at most $S_P \le P S_1$.

Proof (by induction). The work-stealing algorithm maintains the busy-leaves property: every extant procedure frame with no extant descendents has a processor working on it. ■

[Figure: $P = 3$ processors, with the serial stack depth $S_1$ marked.]

Linguistic Implications

Code like the following executes properly without any risk of blowing out memory:

    for (i=1; i<100000; i++) {
      spawn foo(i);
    }
    sync;

MORAL: Better to steal parents than children!

Summary

- Cilk is simple: `cilk`, `spawn`, `sync`
- Recursion, recursion, …
- Work & span
- Work & span
- Work & span
- Work & span

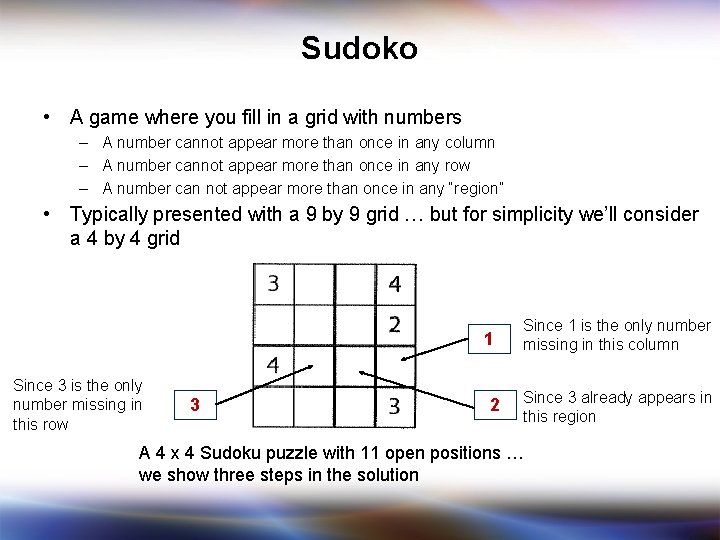

Sudoku

- A game where you fill in a grid with numbers
  - A number cannot appear more than once in any column
  - A number cannot appear more than once in any row
  - A number cannot appear more than once in any “region”
- Typically presented with a 9 by 9 grid, but for simplicity we’ll consider a 4 by 4 grid

[Figure: a 4 x 4 Sudoku puzzle with 11 open positions, showing three steps in the solution. The steps are annotated “Since 3 is the only number missing in this row”, “Since 1 is the only number missing in this column”, and “Since 3 already appears in this region”.]

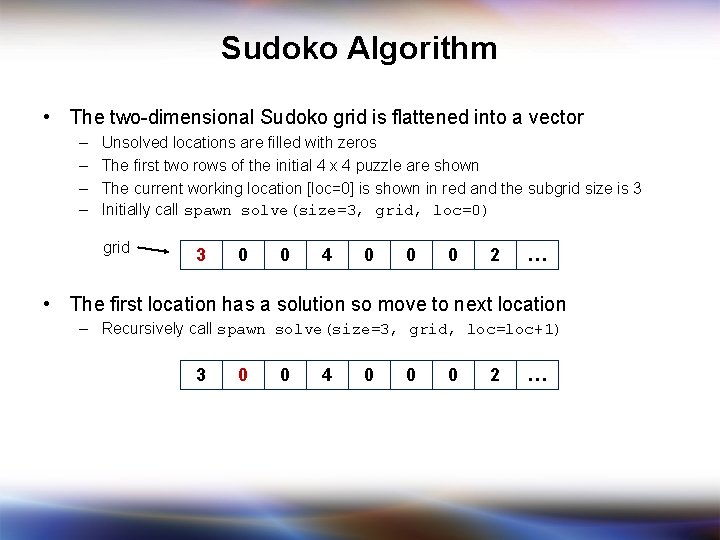

Sudoku Algorithm

- The two-dimensional Sudoku grid is flattened into a vector
  - Unsolved locations are filled with zeros
  - The first two rows of the initial 4 x 4 puzzle are shown
  - The current working location [loc=0] is shown in red and the subgrid size is 3
  - Initially call `spawn solve(size=3, grid, loc=0)`
  - grid: 3 0 0 4 0 0 0 2 …
- The first location has a solution so move to next location
  - Recursively call `spawn solve(size=3, grid, loc=loc+1)`
  - grid: 3 0 0 4 0 0 0 2 …

![Exhaustive Search](http://slidetodoc.com/presentation_image_h2/f41a85de41236134bcc5a579d826d5dd/image-49.jpg)

Exhaustive Search

- The next location [loc=1] has no solution (‘0’ in the current cell) so …
  - Create 4 new grids and try each of the 4 possibilities (1, 2, 3, 4) concurrently
  - Note: the search goes much faster if the guess is first tested to see if it is legal
  - Spawn a new search tree for each guess k
  - Call: `spawn solve(size=3, grid[k], loc=loc+1)`
- New grids:
  - 3 1 0 4 0 0 0 2 …
  - 3 2 0 4 0 0 0 2 …
  - 3 3 0 4 0 0 0 2 … (illegal)
  - 3 4 0 4 0 0 0 2 … (illegal)
- The last two are illegal since 3 and 4 are already in the same row

Source: Mattson and Keutzer, UCB CS 294

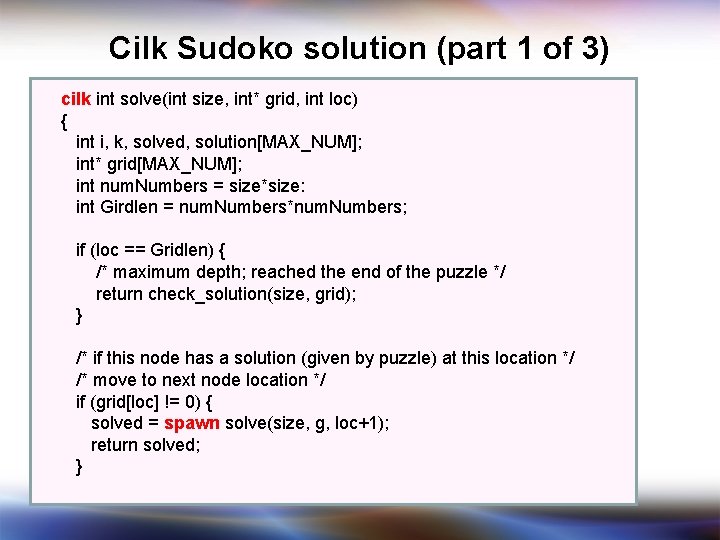

Cilk Sudoku solution (part 1 of 3)

    cilk int solve(int size, int* grid, int loc)
    {
      int i, k, solved, nGrids, solution[MAX_NUM];
      int* myGrid[MAX_NUM];
      int numNumbers = size*size;
      int Gridlen = numNumbers*numNumbers;

      if (loc == Gridlen) {
        /* maximum depth; reached the end of the puzzle */
        return check_solution(size, grid);
      }

      /* if this node has a solution (given by puzzle) at this location */
      /* move to next node location */
      if (grid[loc] != 0) {
        solved = spawn solve(size, grid, loc+1);
        sync;  /* wait for the child before reading solved */
        return solved;
      }

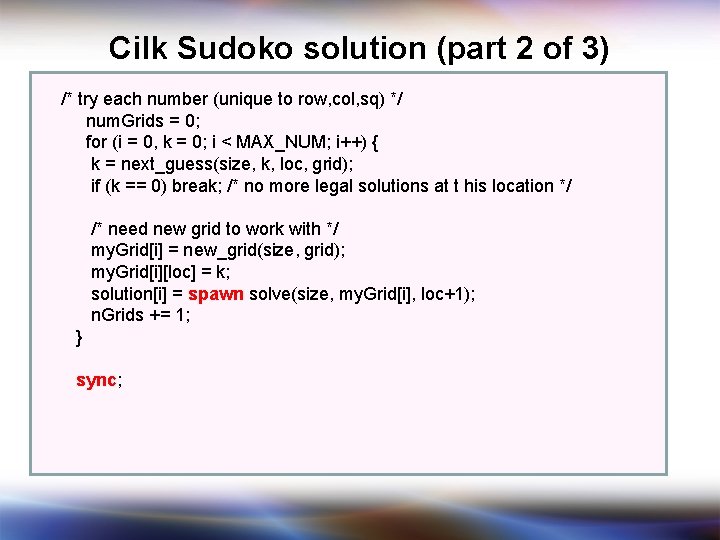

Cilk Sudoku solution (part 2 of 3)

      /* try each number (unique to row, col, sq) */
      nGrids = 0;
      for (i = 0, k = 0; i < MAX_NUM; i++) {
        k = next_guess(size, k, loc, grid);
        if (k == 0) break; /* no more legal solutions at this location */

        /* need new grid to work with */
        myGrid[i] = new_grid(size, grid);
        myGrid[i][loc] = k;
        solution[i] = spawn solve(size, myGrid[i], loc+1);
        nGrids += 1;
      }
      sync;

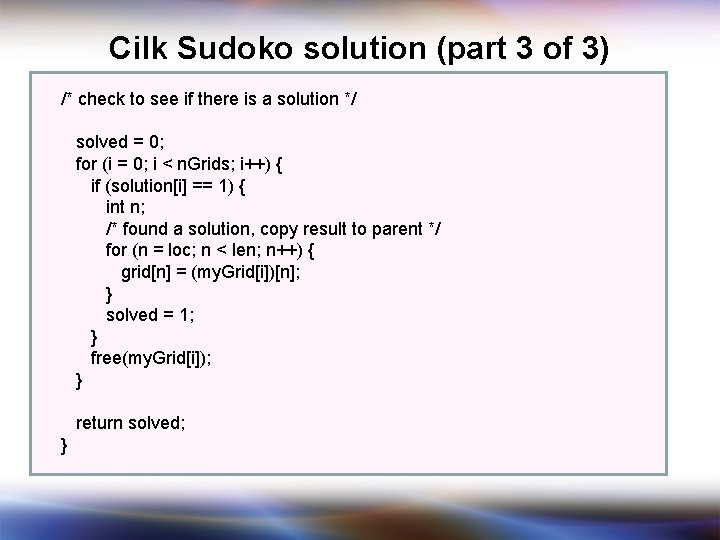

Cilk Sudoku solution (part 3 of 3)

      /* check to see if there is a solution */
      solved = 0;
      for (i = 0; i < nGrids; i++) {
        if (solution[i] == 1) {
          int n;
          /* found a solution, copy result to parent */
          for (n = loc; n < Gridlen; n++) {
            grid[n] = (myGrid[i])[n];
          }
          solved = 1;
        }
        free(myGrid[i]);
      }
      return solved;
    }
