An Abridged Introduction to Differential Privacy
Aaron Roth

Two Anecdotes

But what is “privacy”?

But what is “privacy” not?
• Privacy is not hiding “personally identifiable information” (name, zip code, age, etc.)

But what is “privacy” not?
• Privacy is not releasing only “aggregate” statistics.

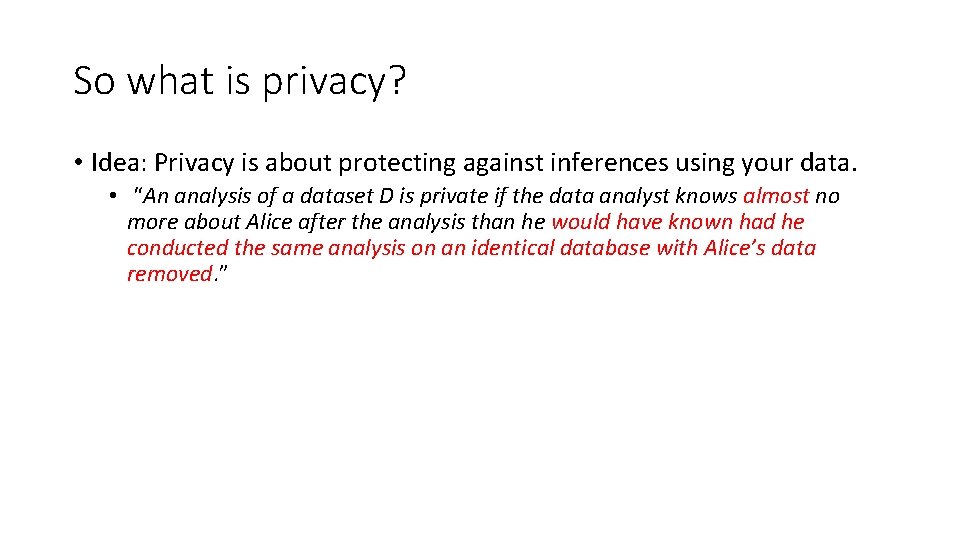

So what is privacy?
• Idea: Privacy is about protecting against inferences using your data.
• Attempt 1: “An analysis of a dataset D is private if the data analyst knows no more about Alice after the analysis than he knew about Alice before the analysis.”

So what is privacy?
• Problem: Impossible to achieve with auxiliary information.
• Suppose an insurance company knows that Alice is a smoker.
• An analysis that reveals that smoking and lung cancer are correlated might cause them to raise her rates!
• Was her privacy violated?
• This is exactly the sort of information we want to be able to learn…
• This is a problem even if Alice was not in the database!

So what is privacy?
• Idea: Privacy is about protecting against inferences using your data.
• “An analysis of a dataset D is private if the data analyst knows almost no more about Alice after the analysis than he would have known had he conducted the same analysis on an identical database with Alice’s data removed.”
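One standard way to make “almost no more” precise is the ε-differential privacy definition given in the Dwork and Roth text listed under Further Reading. A randomized algorithm M is compared on neighboring databases D and D′ that differ only in one individual’s data (for example, D′ is D with Alice’s record removed); ε ≥ 0 is the privacy parameter, and smaller ε means stronger privacy:

```latex
\Pr[M(D) \in S] \;\le\; e^{\varepsilon} \cdot \Pr[M(D') \in S]
\quad \text{for all neighboring } D, D' \text{ and all } S \subseteq \mathrm{Range}(M).
```

Because the condition must also hold with D and D′ swapped, for an algorithm with discrete outputs the ratio Pr[M(D) = r] / Pr[M(D′) = r] lies between e^(−ε) and e^(ε) for every outcome r.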

![Differential Privacy: databases D with records Alice, Bob, Xavier, Chris, Donna, and Ernie are passed to an Algorithm; the ratio of output probabilities Pr[r] is bounded](http://slidetodoc.com/presentation_image_h/d3fe577a7e22d0c123b7ee84176f822d/image-10.jpg)
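To make the bounded-ratio picture concrete, here is a minimal Python sketch using randomized response, a classic ε-differentially private algorithm that these slides do not describe and that is swapped in purely for illustration. Each person reports their true bit with probability e^ε / (1 + e^ε) and the flipped bit otherwise. The helper names (`keep_probability`, `randomized_response`, `output_probability`, `max_log_ratio`) are hypothetical, and neighboring databases are assumed to differ in one person’s bit.

```python
import itertools
import math
import random


def keep_probability(epsilon):
    """Probability that randomized response reports the true bit."""
    return math.exp(epsilon) / (1.0 + math.exp(epsilon))


def randomized_response(bits, epsilon, rng):
    """Each person independently reports their bit, or its flip."""
    p = keep_probability(epsilon)
    return [b if rng.random() < p else 1 - b for b in bits]


def output_probability(bits, report, epsilon):
    """Exact Pr[randomized_response(bits) == report]."""
    p = keep_probability(epsilon)
    prob = 1.0
    for b, r in zip(bits, report):
        prob *= p if b == r else 1.0 - p
    return prob


def max_log_ratio(db1, db2, epsilon):
    """Largest |ln(Pr[r | db1] / Pr[r | db2])| over every possible report r."""
    worst = 0.0
    for report in itertools.product([0, 1], repeat=len(db1)):
        ratio = output_probability(db1, report, epsilon) / output_probability(db2, report, epsilon)
        worst = max(worst, abs(math.log(ratio)))
    return worst


# Alice's bit (position 0) differs between these neighboring databases.
with_alice_1 = [1, 0, 1, 1]
with_alice_0 = [0, 0, 1, 1]
print(max_log_ratio(with_alice_1, with_alice_0, epsilon=0.5))  # approximately 0.5
```

Only the differing record contributes to the ratio; the factors for everyone else cancel, so the worst case over all reports is exactly e^ε.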

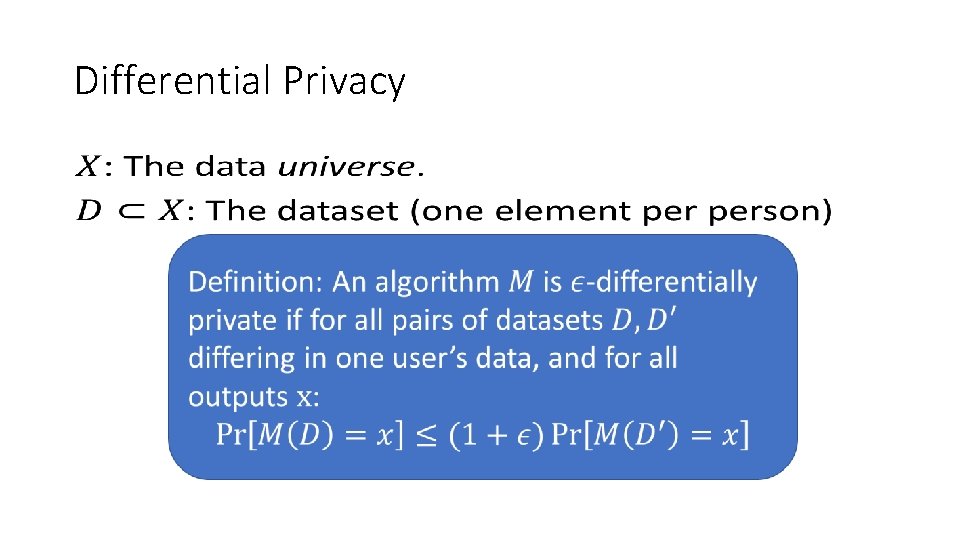

Differential Privacy

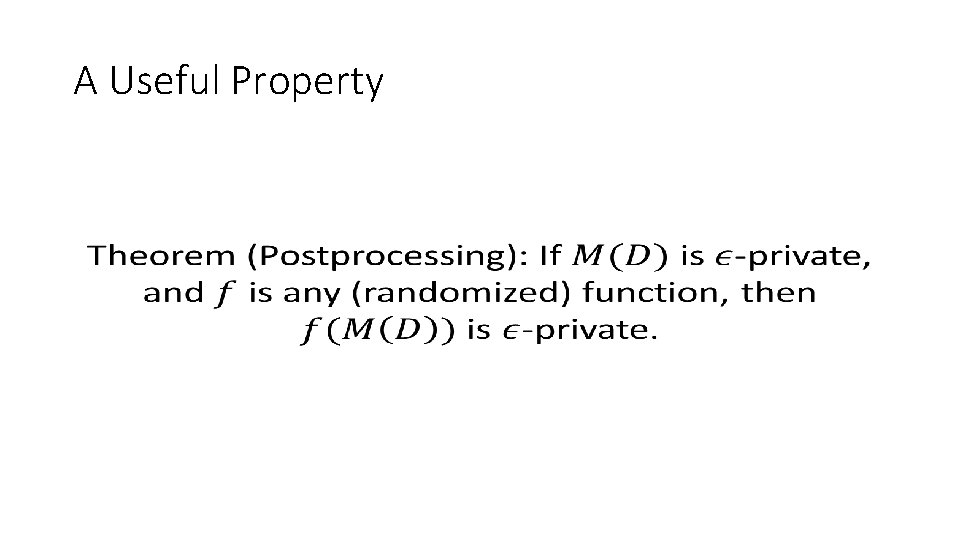

A Useful Property

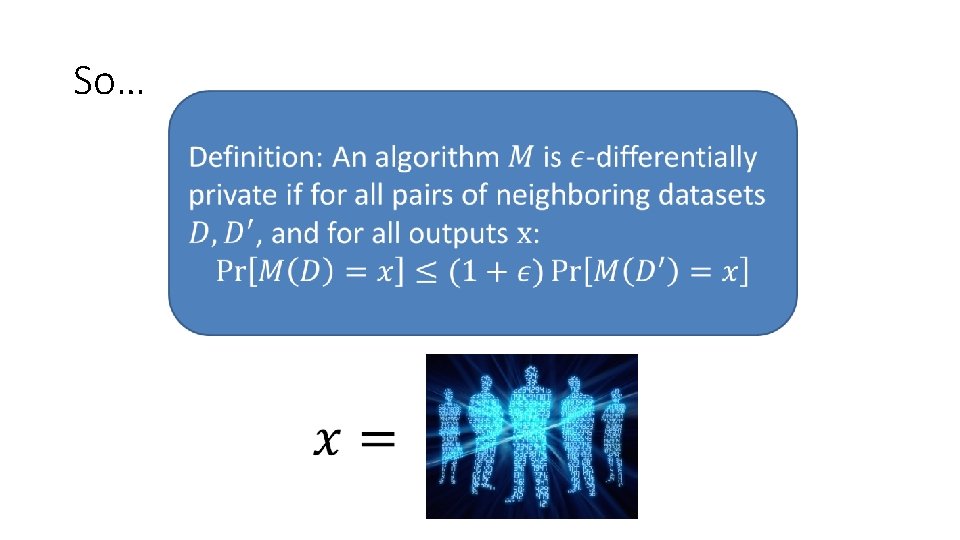

So…


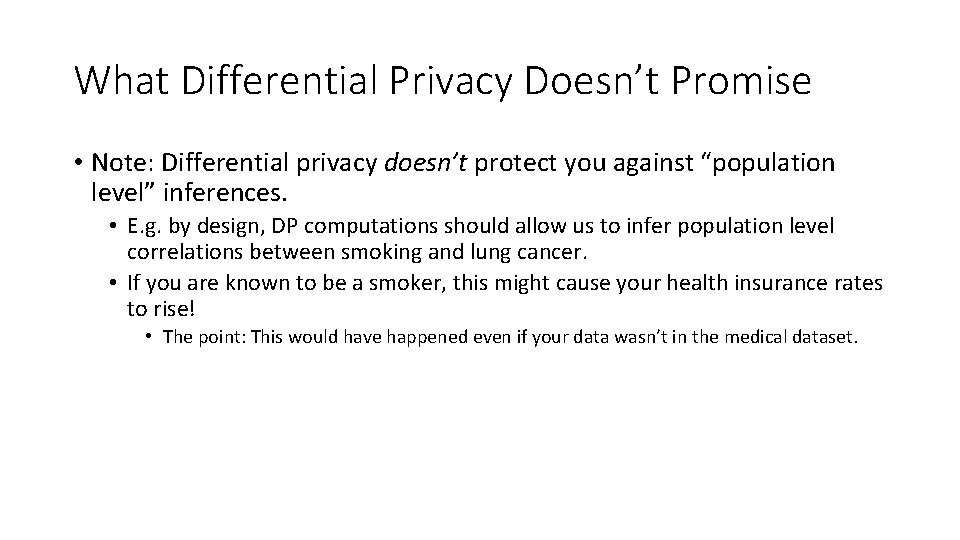

What Differential Privacy Doesn’t Promise
• Note: Differential privacy doesn’t protect you against “population level” inferences.
• E.g., by design, DP computations should allow us to infer population-level correlations between smoking and lung cancer.
• If you are known to be a smoker, this might cause your health insurance rates to rise!
• The point: This would have happened even if your data wasn’t in the medical dataset.

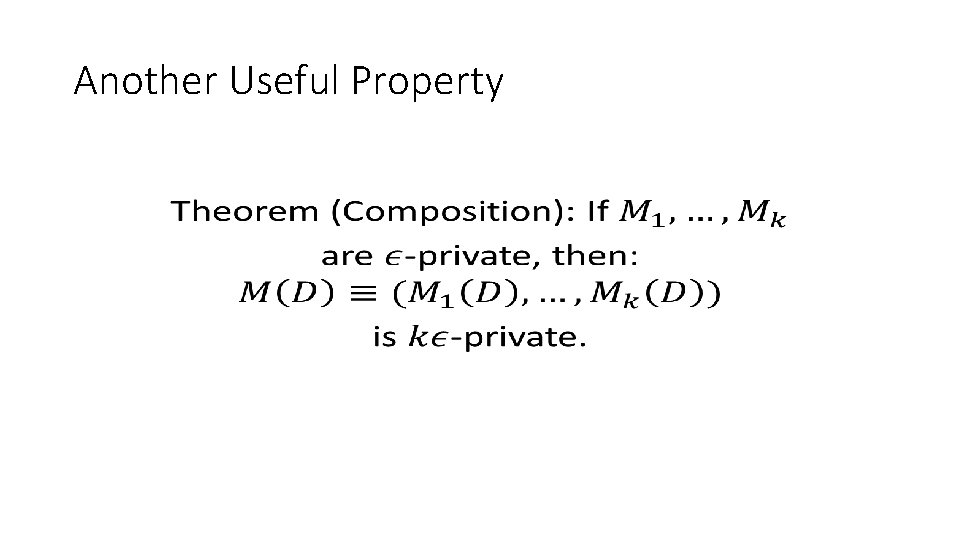

Another Useful Property

So…
You can go about designing algorithms as you normally would. Just access the data using differentially private “subroutines”, and keep track of your “privacy budget” as a resource. Private algorithm design, like regular algorithm design, can be modular.
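The “privacy budget” idea can be sketched in Python as an accountant that charges each differentially private subroutine for its ε. The sketch assumes basic sequential composition: running ε₁-, …, ε_k-DP subroutines on the same data is (ε₁ + … + ε_k)-DP overall. The class `PrivacyAccountant` and the function `noisy_count` are hypothetical names, and the noisy count (Laplace noise of scale 1/ε on a count) is an illustrative subroutine, not one taken from the slides.

```python
import random


class PrivacyAccountant:
    """Tracks epsilon spent under basic sequential composition:
    running eps_1-, ..., eps_k-DP subroutines is (eps_1 + ... + eps_k)-DP overall."""

    def __init__(self, total_epsilon):
        if total_epsilon <= 0:
            raise ValueError("total_epsilon must be positive")
        self.total = total_epsilon
        self.spent = 0.0

    @property
    def remaining(self):
        return self.total - self.spent

    def charge(self, epsilon):
        if epsilon <= 0:
            raise ValueError("epsilon must be positive")
        # Small tolerance so floating-point sums do not spuriously exceed the budget.
        if self.spent + epsilon > self.total + 1e-12:
            raise RuntimeError("privacy budget exhausted")
        self.spent += epsilon


def noisy_count(records, predicate, epsilon, accountant, rng):
    """Hypothetical DP subroutine: a count (sensitivity 1) plus Laplace(1/epsilon) noise."""
    accountant.charge(epsilon)  # pay before touching the data
    true_count = sum(1 for r in records if predicate(r))
    # The difference of two Exp(rate=epsilon) draws is Laplace with scale 1/epsilon.
    return true_count + rng.expovariate(epsilon) - rng.expovariate(epsilon)


rng = random.Random(0)
ages = [23, 35, 47, 52, 61, 29]
budget = PrivacyAccountant(total_epsilon=1.0)
over_40 = noisy_count(ages, lambda a: a > 40, 0.5, budget, rng)
under_30 = noisy_count(ages, lambda a: a < 30, 0.3, budget, rng)
print(round(budget.remaining, 3))  # 0.2
```

A third call with ε = 0.5 would raise `RuntimeError`, because the total spent would exceed 1.0. Charging before reading the data keeps the accounting honest even if a later step fails.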

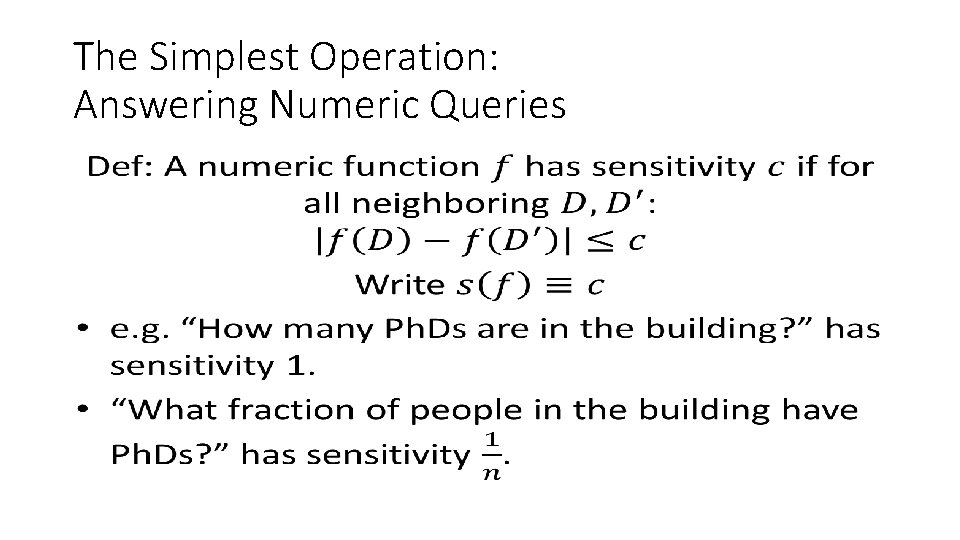

The Simplest Operation: Answering Numeric Queries

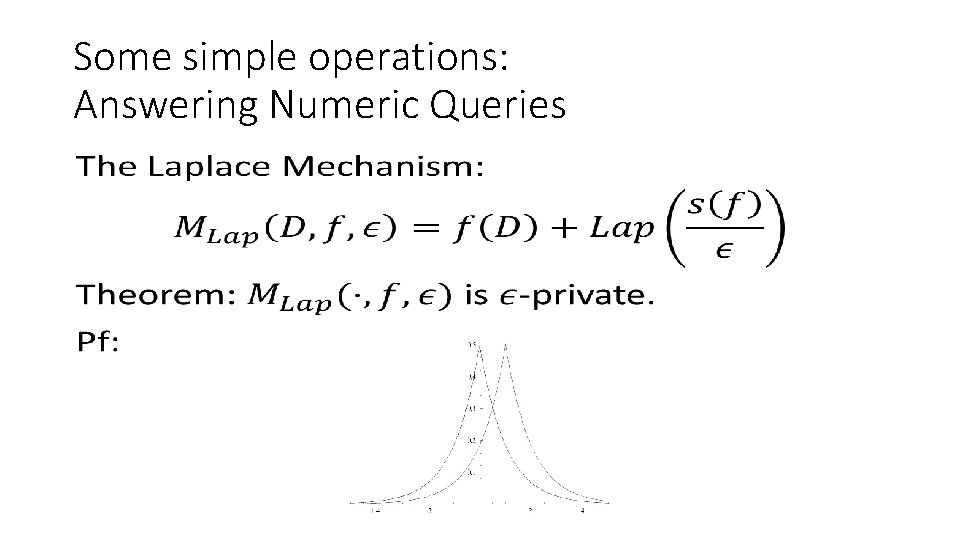

Some simple operations: Answering Numeric Queries

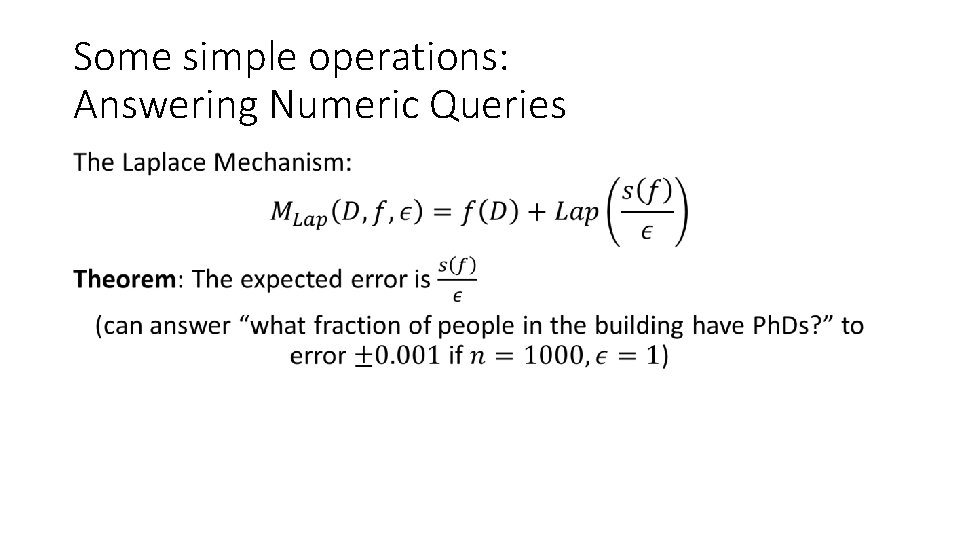

Some simple operations: Answering Numeric Queries
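One standard way to answer a numeric query f with ε-differential privacy, assumed here rather than taken from these slides, is the Laplace mechanism: release f(D) plus noise drawn from a Laplace distribution with scale Δf/ε, where the sensitivity Δf is the most one person’s data can change the answer (1 for a counting query). A minimal Python sketch with hypothetical function names:

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two independent exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def laplace_mechanism(true_answer, sensitivity, epsilon, rng):
    """Release true_answer + Laplace(sensitivity / epsilon) noise (epsilon-DP)."""
    if epsilon <= 0 or sensitivity <= 0:
        raise ValueError("epsilon and sensitivity must be positive")
    return true_answer + laplace_noise(sensitivity / epsilon, rng)


def private_count(records, predicate, epsilon, rng):
    """A counting query has sensitivity 1: one person changes the count by at most 1."""
    true_count = sum(1 for r in records if predicate(r))
    return laplace_mechanism(true_count, 1.0, epsilon, rng)


rng = random.Random(42)
smokers = [True, False, True, True, False, False, True]
print(private_count(smokers, lambda s: s, epsilon=0.5, rng=rng))  # 4 plus noise of scale 2
```

Halving ε doubles the noise scale: stronger privacy costs accuracy, and the noise distribution is public, so its effect on the answer can be quantified.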

From simple building blocks…
• There is a large and growing set of differentially private tools:
  • Hypothesis Testing
  • Machine Learning and Statistical Inference
    • Linear/Logistic Regression
    • Convex empirical risk minimization (support vector machines, etc.)
    • Stochastic Gradient Descent on nonconvex objectives (Deep Learning)
  • Principal Component Analysis/Low Rank Matrix Approximation
  • Combinatorial Optimization/Convex Optimization
  • Synthetic Data Generation (more on this later)
  • …

Wait: Noise??
• A common reaction: My application is important! I can’t tolerate “noise”.
• But:
  • You should already be quantifying uncertainty in your estimates (e.g., from sampling error).
  • “Noise” added by DP algorithms is transparent: it can be easily incorporated into confidence intervals and inference procedures.
  • In contrast to many less principled “privacy” protections, this is a feature.
  • Randomization is unavoidable if you want to protect against inference…
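As an illustration of folding DP noise into a confidence interval, here is a minimal Python sketch under stated assumptions: values lie in [0, 1] and are an i.i.d. sample, the sample size n is public, and neighboring datasets differ in one person’s value, so the mean has sensitivity 1/n. It adds a Hoeffding bound for sampling error to the exact Laplace tail Pr[|Lap(b)| ≥ t] = e^(−t/b), splitting the failure probability β evenly between them by a union bound. The function names are hypothetical.

```python
import math
import random


def private_mean(values, epsilon, rng):
    """epsilon-DP mean of values in [0, 1], treating n = len(values) as public."""
    n = len(values)
    clipped = [min(max(v, 0.0), 1.0) for v in values]  # enforce the [0, 1] assumption
    scale = 1.0 / (n * epsilon)  # sensitivity of the mean is 1/n
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)  # Laplace(scale)
    return sum(clipped) / n + noise


def confidence_halfwidth(n, epsilon, beta):
    """Half-width w with |private_mean - population mean| <= w w.p. >= 1 - beta."""
    # Sampling error (Hoeffding), allotted failure probability beta / 2.
    sampling = math.sqrt(math.log(4.0 / beta) / (2.0 * n))
    # Privacy noise: Pr[|Lap(b)| >= t] = exp(-t / b), b = 1 / (n * epsilon), also beta / 2.
    privacy = math.log(2.0 / beta) / (n * epsilon)
    return sampling + privacy


rng = random.Random(7)
sample = [rng.random() for _ in range(1000)]
estimate = private_mean(sample, epsilon=1.0, rng=rng)
w = confidence_halfwidth(len(sample), epsilon=1.0, beta=0.05)
print(f"95% interval: [{estimate - w:.3f}, {estimate + w:.3f}]")
```

For n = 1000, ε = 1, and β = 0.05, the privacy term is roughly 0.004 and the sampling term roughly 0.047, so in this setting the DP noise is a small part of the total uncertainty.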

Goals of Privacy and Inference are Aligned
• A formal sense in which differentially private algorithms cannot overfit/lead to false discovery.
• “Error” of computed statistics can be decomposed into:
  1) Error on your data sample: Differential privacy increases this.
  2) Degree to which your hypothesis overfits your sample: Differential privacy can decrease this.
• Punchline: In natural settings when data is reused, differential privacy can decrease the error of many analyses.
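The reusable holdout paper listed under Further Reading (Dwork, Feldman, Hardt, Pitassi, Reingold, Roth) turns this idea into an algorithm called Thresholdout. Below is a simplified Python sketch loosely following that description; the noise scales (2σ for the threshold, 4σ for the comparison, σ for released answers) reflect our reading of the paper and should be checked against it. A query φ maps a data point to [0, 1]. If the training and holdout averages agree up to a noisy threshold, the training average is returned; otherwise a noisy holdout average is released and one unit of budget is spent. The class and parameter names are hypothetical.

```python
import random
from statistics import fmean


class Thresholdout:
    """Simplified sketch of a reusable holdout answering queries phi(x) in [0, 1]."""

    def __init__(self, train, holdout, threshold, sigma, budget, seed=None):
        self.train = train
        self.holdout = holdout
        self.threshold = threshold
        self.sigma = sigma
        self.budget = budget  # number of times a holdout answer may be released
        self.rng = random.Random(seed)
        self.noisy_threshold = threshold + self._laplace(2 * sigma)

    def _laplace(self, scale):
        if scale == 0:
            return 0.0  # sigma = 0 disables noise (for testing only; not private)
        return self.rng.expovariate(1 / scale) - self.rng.expovariate(1 / scale)

    def query(self, phi):
        if self.budget < 1:
            return None  # budget exhausted
        train_avg = fmean([phi(x) for x in self.train])
        holdout_avg = fmean([phi(x) for x in self.holdout])
        if abs(holdout_avg - train_avg) > self.noisy_threshold + self._laplace(4 * self.sigma):
            # The analysis appears to overfit the training set: release a noisy holdout answer.
            self.budget -= 1
            self.noisy_threshold = self.threshold + self._laplace(2 * self.sigma)
            return holdout_avg + self._laplace(self.sigma)
        # Training and holdout agree, so the training answer is already accurate.
        return train_avg


data_rng = random.Random(3)
train = [data_rng.random() for _ in range(500)]
holdout = [data_rng.random() for _ in range(500)]
tho = Thresholdout(train, holdout, threshold=0.04, sigma=0.01, budget=50, seed=0)
print(tho.query(lambda x: float(x > 0.5)))
```

The design choice is that the holdout is revealed, noisily, only when the analysis seems to be overfitting, so the budget is spent on overfitting events rather than on every query.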

But privacy does not come for free.
The Fundamental Law of Information Recovery
• “Overly accurate answers to too many questions allow for the exact reconstruction of the underlying dataset.”
• Dinur and Nissim 2003 and subsequent work.
• True for any method of statistical disclosure, not just differential privacy. (In many settings, differential privacy is optimal in this respect.)
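A toy version of the reconstruction attack, in the spirit of Dinur and Nissim 2003, fits in a few lines of Python. Assume a database of n secret bits and that every one of the 2^n subset-sum queries is answered with error at most E. Then any candidate database consistent with all the answers differs from the truth in at most 4E positions: querying exactly the set of positions where the truth is 1 and the candidate is 0 shows that set has size at most 2E, and the same argument bounds the opposite mismatches. The brute force below is exponential and meant only for tiny n; the helper names are hypothetical.

```python
import itertools
import random


def subset_sum(db, subset):
    return sum(db[i] for i in subset)


def answer_all_subsets(db, error, rng):
    """Answer every subset-sum query with integer noise in [-error, error]."""
    answers = {}
    for size in range(len(db) + 1):
        for subset in itertools.combinations(range(len(db)), size):
            answers[subset] = subset_sum(db, subset) + rng.randint(-error, error)
    return answers


def reconstruct(n, answers, error):
    """Return any 0/1 candidate consistent (within error) with every answer."""
    for candidate in itertools.product([0, 1], repeat=n):
        if all(abs(subset_sum(candidate, s) - a) <= error for s, a in answers.items()):
            return list(candidate)
    return None


rng = random.Random(1)
secret = [rng.randint(0, 1) for _ in range(8)]
answers = answer_all_subsets(secret, error=1, rng=rng)
guess = reconstruct(len(secret), answers, error=1)
mismatches = sum(g != s for g, s in zip(guess, secret))
print(mismatches)  # guaranteed to be at most 4 * error = 4
```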

But privacy does not come for free.

The Research Frontier
• There are many problems for which we don’t precisely understand the Pareto frontier between privacy and accuracy…
• And some for which we do, but which we can’t approach with computationally efficient algorithms.

In principle, it is possible to solve:
• Statistical Learning [KLNRS 08, BKN 10, BKS 13, FX 14, …]
• Synthetic Data Generation
  • Consistent with any bounded VC class [BLR 08, DNRRV 09, …]
  • Consistent with exponentially large families of convex ERM problems [Ull 15]
• Adaptive Query Answering
  • For exponentially large sets of statistical queries and arbitrary low-sensitivity queries [RR 10, HR 10, …]

With a catch
• These tasks are all computationally hard subject to cryptographic assumptions: [DNRRV 09, UV 11, Ull 13, …]

The Research Frontier
• Important work needed in the coming years.
• A science of heuristic approaches to the hard problems in differential privacy.
• This is how machine learning has successfully proceeded in the face of computational hardness.
• Harder for privacy: can’t empirically verify privacy, must prove it.

Further Reading: @aaroth
• Differential Privacy:
  • The Algorithmic Foundations of Differential Privacy. Dwork and Roth. NOW Publishers, 2014. http://www.cis.upenn.edu/~aaroth/privacybook.html
• Differential Privacy as a Tool to Reduce Error:
  • The Reusable Holdout: Preserving Validity in Adaptive Data Analysis. Dwork, Feldman, Hardt, Pitassi, Reingold, Roth. Science, 2015.
  • Lecture notes: http://www.adaptivedataanalysis.com
• A Popular Treatment:
  • The Ethical Algorithm. Kearns and Roth. Forthcoming from Oxford University Press. Fall 2019.
