
A Recurrent BERT-based Model for Question Generation

- Source: ACL 2019
- Speaker: Ya-Fang Hsiao
- Advisor: Jia-Ling Koh
- Date: 2020/03/09

CONTENTS

- 01. Introduction
- 02. Related Work
- 03. Model
- 04. Experiment
- 05. Conclusion

01 Introduction

BERT-based model for question generation. BERT is a pre-trained language model; the task is to generate a question corresponding to a given context.

02 Related Work

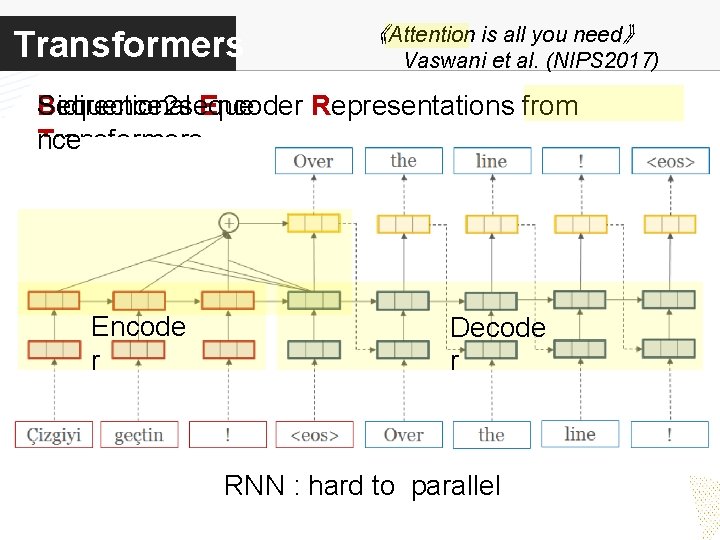

Transformers ("Attention Is All You Need", Vaswani et al., NIPS 2017). BERT: Bidirectional Encoder Representations from Transformers. Sequence-to-sequence: encoder and decoder. RNN: hard to parallelize.

Transformers ("Attention Is All You Need", Vaswani et al., NIPS 2017). Sequence-to-sequence, encoder-decoder architecture.

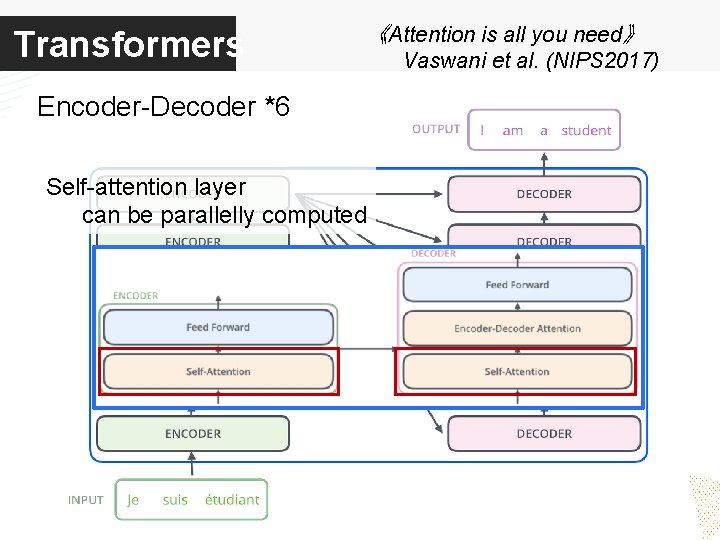

Transformers ("Attention Is All You Need", Vaswani et al., NIPS 2017). The encoder and the decoder are each stacked 6 times (×6). Self-attention layers can be computed in parallel.

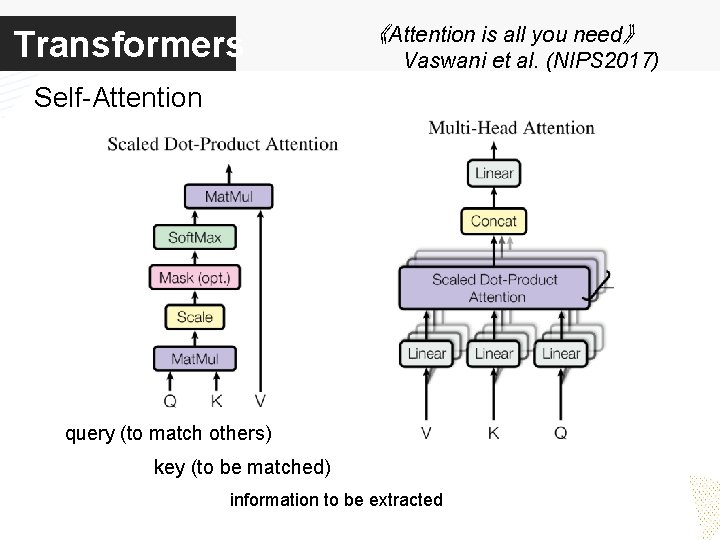

Transformers ("Attention Is All You Need", Vaswani et al., NIPS 2017). Self-attention: query (to match others), key (to be matched), value (information to be extracted).
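The query/key/value roles above can be illustrated with a minimal single-head sketch of scaled dot-product self-attention. The toy sizes, random weights, and names (`self_attention`, `W_q`, `W_k`, `W_v`) are assumptions for illustration, not taken from the slides.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, W_q, W_k, W_v):
    Q = X @ W_q  # queries: used to match against the other positions
    K = X @ W_k  # keys: what each position is matched on
    V = X @ W_v  # values: the information to be extracted
    d_k = K.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)     # one score per (query, key) pair
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ V, weights

# Toy example: 4 tokens with 8-dimensional embeddings (hypothetical sizes).
rng = np.random.default_rng(0)
X = rng.normal(size=(4, 8))
W_q, W_k, W_v = (rng.normal(size=(8, 8)) for _ in range(3))
output, weights = self_attention(X, W_q, W_k, W_v)
```

All rows of `scores` come from one matrix product, which is why the layer can be computed in parallel across positions, unlike an RNN.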

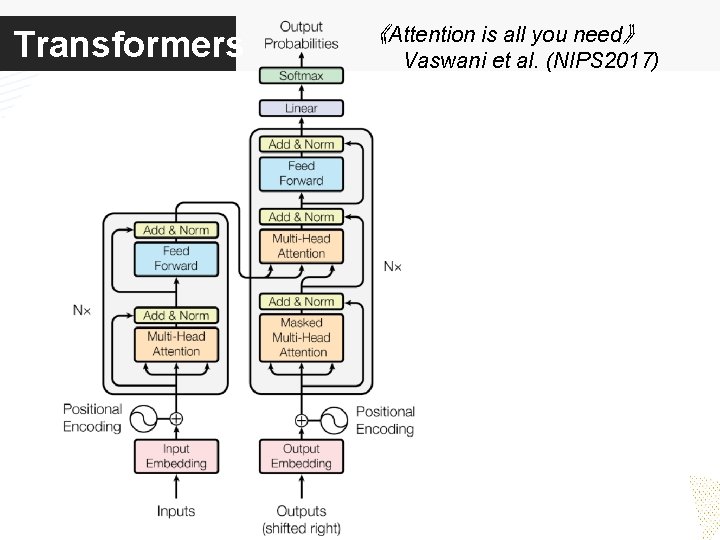

Transformers ("Attention Is All You Need", Vaswani et al., NIPS 2017)

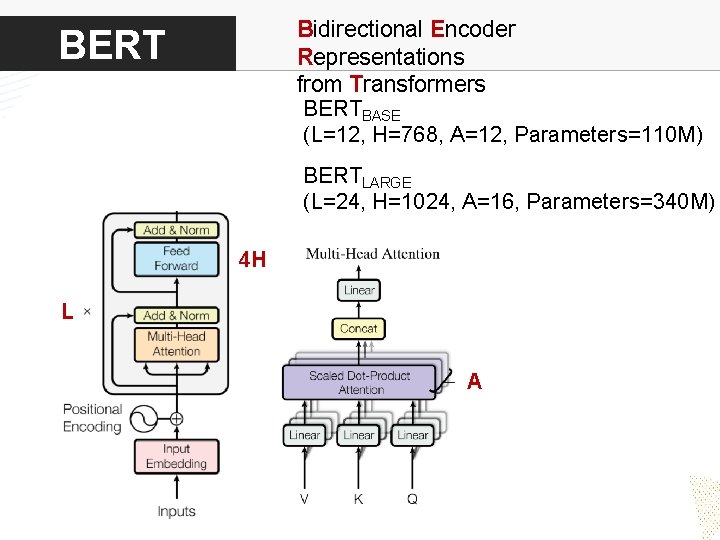

BERT: Bidirectional Encoder Representations from Transformers

- BERT-Base: L=12, H=768, A=12, 110M parameters
- BERT-Large: L=24, H=1024, A=16, 340M parameters

L: number of layers; H: hidden size; A: number of self-attention heads; feed-forward size: 4H.
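As a rough check on the parameter counts, the sketch below tallies the weights of a BERT-style encoder from L and H. The vocabulary size (30522), maximum position count (512), two segment types, and the pooler layer are assumptions based on the commonly released uncased checkpoints, not values given in the slides.

```python
def bert_param_count(L, H, vocab_size=30522, max_positions=512, type_vocab=2):
    # All default sizes are assumptions (common uncased BERT checkpoints).
    layer_norm = 2 * H  # scale and bias
    embeddings = (vocab_size + max_positions + type_vocab) * H + layer_norm
    # Query, key, value, and output projections, each H x H plus bias.
    attention = 4 * (H * H + H) + layer_norm
    # Feed-forward block: H -> 4H -> H, with biases.
    feed_forward = (H * 4 * H + 4 * H) + (4 * H * H + H) + layer_norm
    pooler = H * H + H
    return embeddings + L * (attention + feed_forward) + pooler

base = bert_param_count(L=12, H=768)
large = bert_param_count(L=24, H=1024)
print(f"BERT-Base:  {base / 1e6:.1f}M parameters")
print(f"BERT-Large: {large / 1e6:.1f}M parameters")
```

Under these assumptions the totals come to about 109.5M and 335.1M, close to the rounded 110M and 340M figures. The number of heads A does not appear in the count because the heads split the same H-dimensional projections.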

03 Model

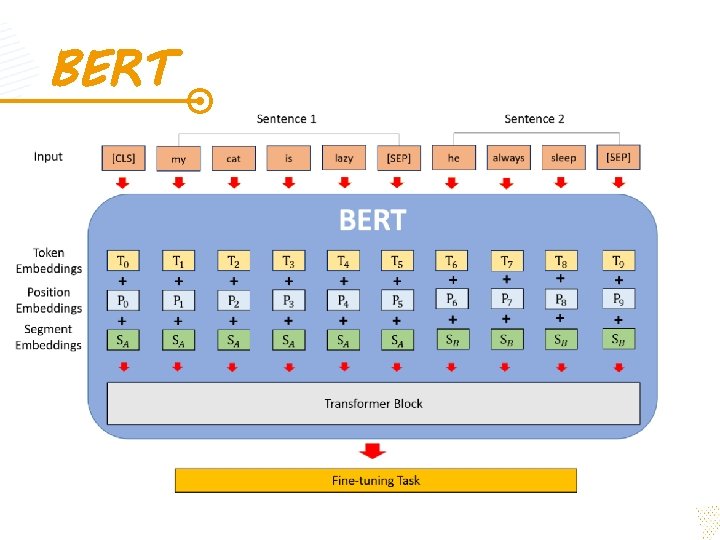

BERT

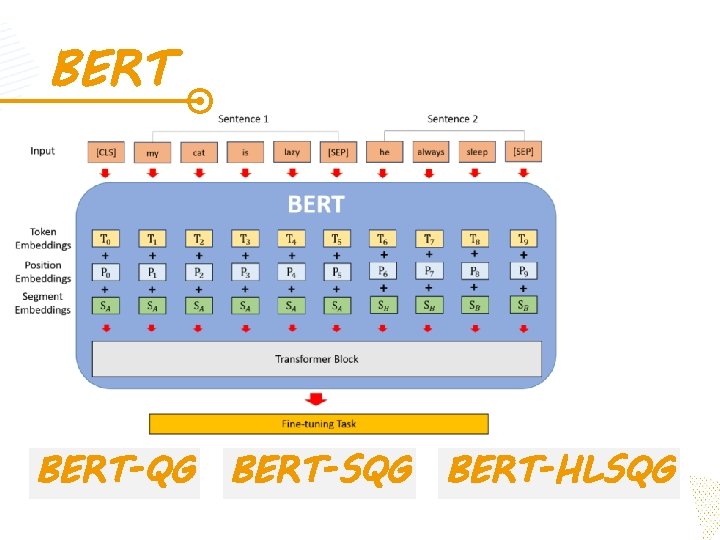

BERT-QG, BERT-SQG, BERT-HLSQG

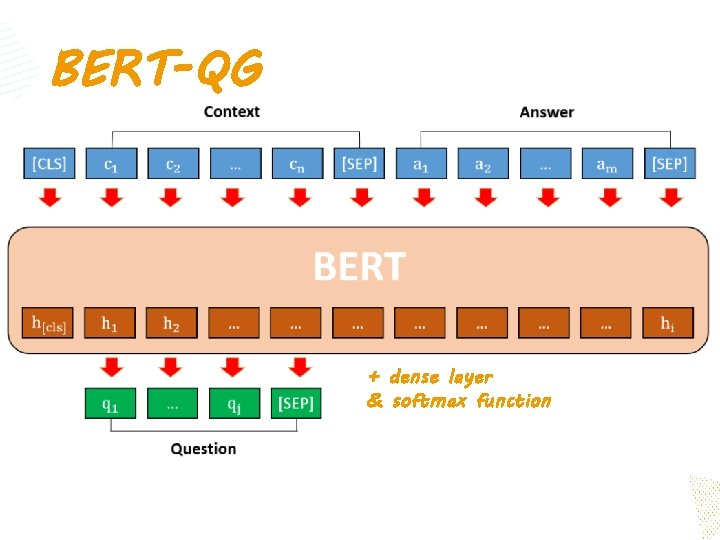

BERT-QG: BERT followed by a dense layer and a softmax function
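A minimal sketch of the dense layer and softmax step, assuming the BERT hidden states are already available. Random vectors stand in for real BERT output here, and the sizes and the name `predict_tokens` are hypothetical.

```python
import numpy as np

def predict_tokens(hidden_states, W, b):
    # Dense layer: project each hidden state onto the vocabulary.
    logits = hidden_states @ W + b
    # Softmax over the vocabulary dimension (stabilised by the row max).
    logits = logits - logits.max(axis=-1, keepdims=True)
    probs = np.exp(logits)
    probs /= probs.sum(axis=-1, keepdims=True)
    # Pick the most probable token id at every position.
    return probs.argmax(axis=-1), probs

rng = np.random.default_rng(0)
hidden_size, vocab_size, seq_len = 16, 50, 5  # toy sizes, not BERT's
hidden_states = rng.normal(size=(seq_len, hidden_size))  # stand-in for BERT output
W = rng.normal(size=(hidden_size, vocab_size))
b = np.zeros(vocab_size)
token_ids, probs = predict_tokens(hidden_states, W, b)
```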

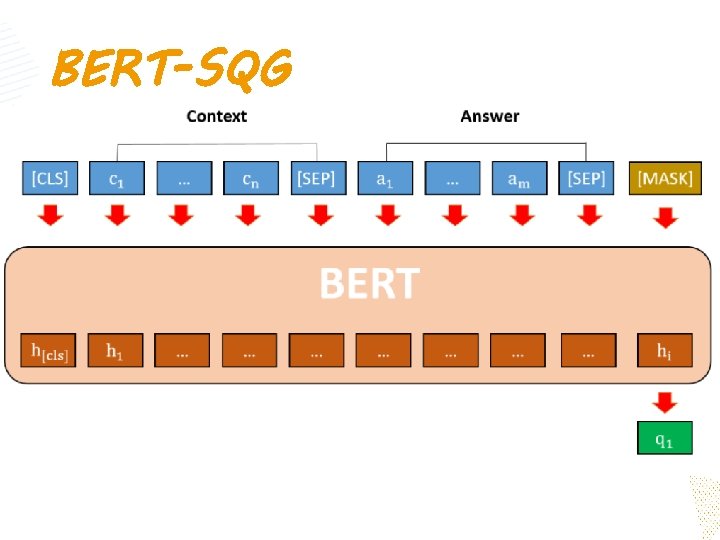

BERT-SQG

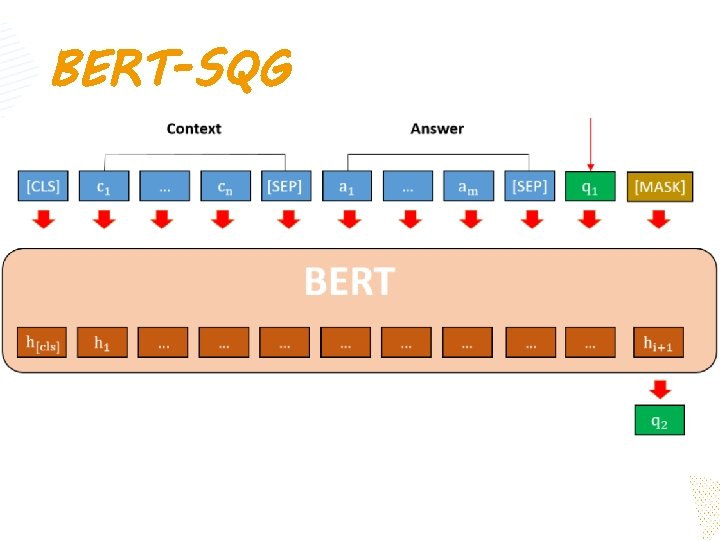

BERT-SQG

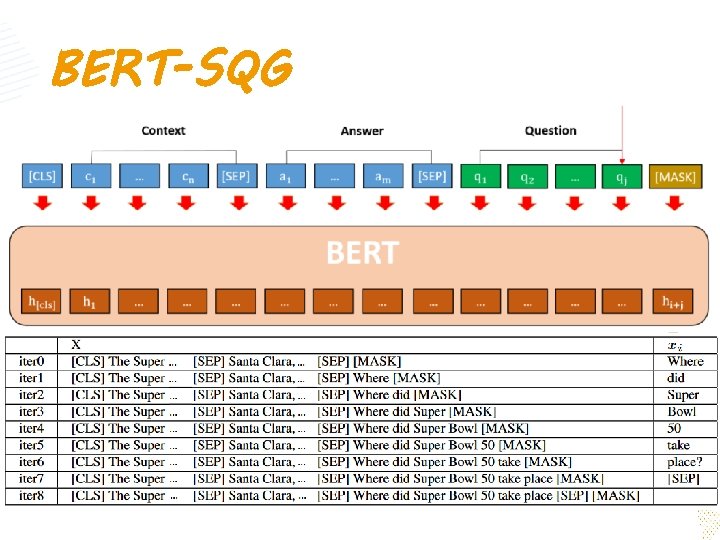

BERT-SQG
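The sequential (recurrent) decoding idea behind BERT-SQG can be sketched as a loop that appends a `[MASK]` token to the question generated so far and predicts one token per step. The input layout below follows my reading of the paper and is an assumption; `predict_next` and `toy_predictor` are hypothetical stand-ins for BERT plus the dense and softmax layers.

```python
def generate_question(context, answer, predict_next, max_len=20):
    question = []
    for _ in range(max_len):
        # Assumed layout: context, answer, question so far, then [MASK].
        tokens = ["[CLS]", *context, "[SEP]", *answer, "[SEP]", *question, "[MASK]"]
        next_token = predict_next(tokens)  # token predicted for the [MASK] slot
        if next_token == "[SEP]":          # [SEP] ends generation
            break
        question.append(next_token)
    return question

def toy_predictor(tokens):
    # Hypothetical stand-in for the model: replays a canned question.
    canned = ["who", "wrote", "hamlet", "?", "[SEP]"]
    last_sep = len(tokens) - 1 - tokens[::-1].index("[SEP]")
    generated_so_far = len(tokens) - last_sep - 2  # tokens between [SEP] and [MASK]
    return canned[generated_so_far]

context = "shakespeare wrote hamlet".split()
answer = ["shakespeare"]
question = generate_question(context, answer, toy_predictor)
print(" ".join(question))
```

Each step re-encodes the whole input, so every new token is conditioned on all previously generated tokens.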

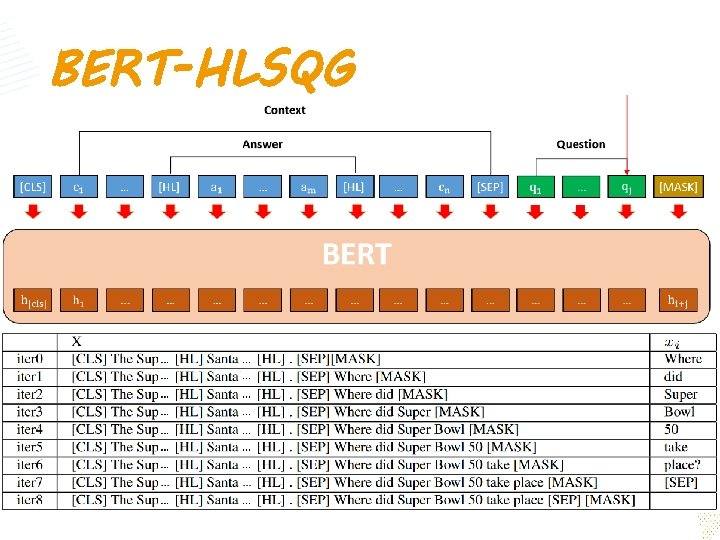

BERT-HLSQG
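A minimal sketch of the highlighting idea in BERT-HLSQG, assuming the answer span is marked inside the context with a special `[HL]` token on each side instead of being passed as a separate segment. The token name, the span indexing, and both helper functions are illustrative assumptions.

```python
def highlight_answer(context, answer_start, answer_end, hl_token="[HL]"):
    """Wrap context[answer_start:answer_end] in highlight tokens."""
    return (
        context[:answer_start]
        + [hl_token]
        + context[answer_start:answer_end]
        + [hl_token]
        + context[answer_end:]
    )

def build_input(context, answer_start, answer_end, question_so_far):
    # Assumed layout: highlighted context, question so far, then [MASK].
    marked = highlight_answer(context, answer_start, answer_end)
    return ["[CLS]", *marked, "[SEP]", *question_so_far, "[MASK]"]

context = "shakespeare wrote hamlet".split()
tokens = build_input(context, answer_start=0, answer_end=1, question_so_far=["who"])
print(tokens)
```

Marking the span in place keeps the answer's position in the context explicit, which matters when the answer text occurs more than once.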

04 Experiment

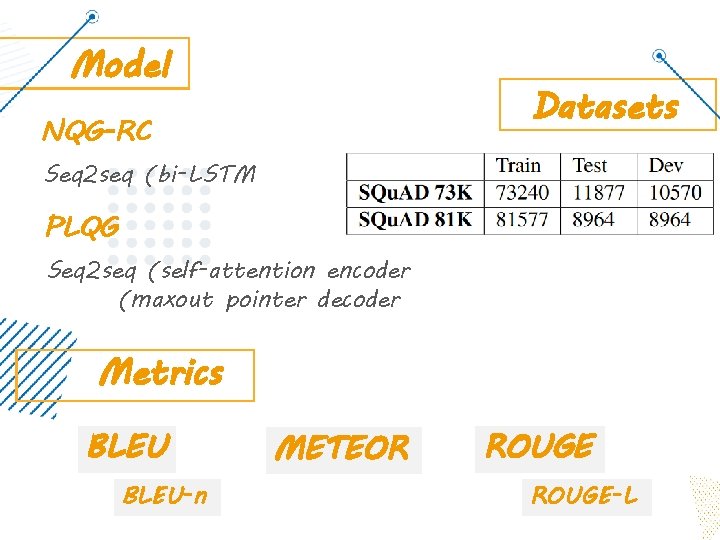

Experimental setup: datasets, baseline models, and metrics.

Baseline models

- NQG-RC: Seq2seq (bi-LSTM)
- PLQG: Seq2seq (self-attention encoder, maxout pointer decoder)

Metrics

- BLEU-n
- METEOR
- ROUGE-L
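To make one of the metrics concrete, here is a minimal sketch of ROUGE-L, which scores a generated question by its longest common subsequence (LCS) with the reference. Whitespace tokenisation and the default `beta=1.0` are assumptions; standard evaluation scripts may tokenise and weight precision and recall differently.

```python
def lcs_length(a, b):
    # Dynamic programming over two token sequences, one row at a time.
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]

def rouge_l(candidate, reference, beta=1.0):
    lcs = lcs_length(candidate, reference)
    if lcs == 0:
        return 0.0
    precision = lcs / len(candidate)
    recall = lcs / len(reference)
    # F-measure; beta > 1 weights recall more heavily.
    return (1 + beta**2) * precision * recall / (recall + beta**2 * precision)

candidate = "what is the capital of france".split()
reference = "what is the capital city of france".split()
score = rouge_l(candidate, reference)
print(f"ROUGE-L: {score:.3f}")
```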

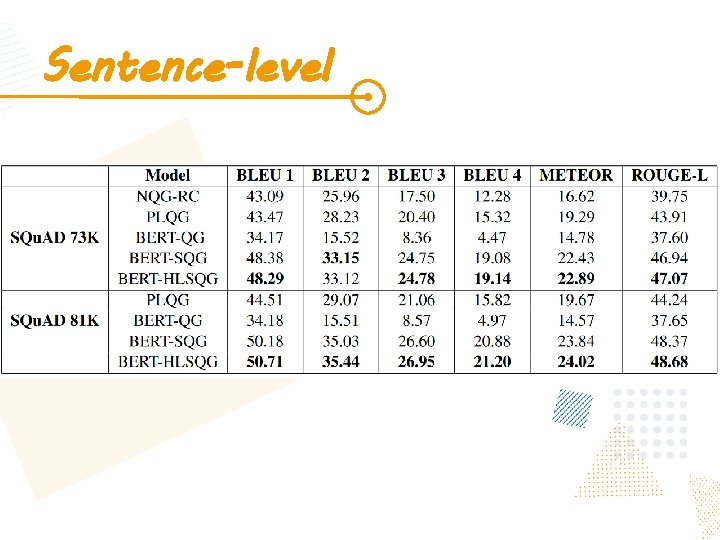

Sentence-level

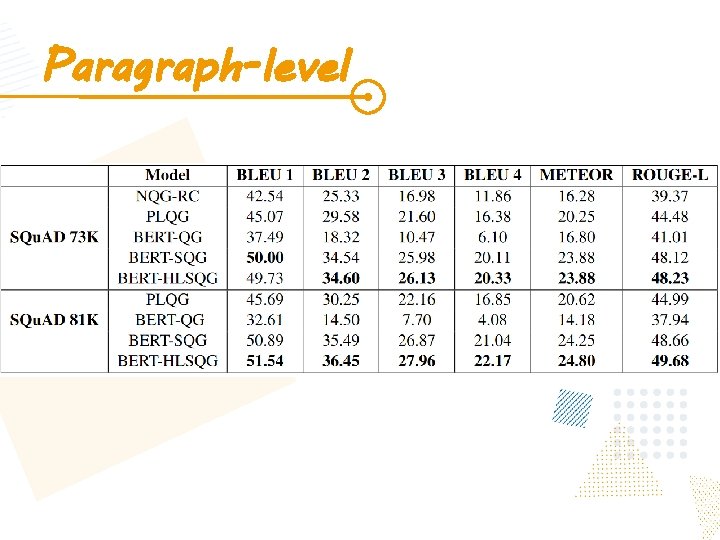

Paragraph-level

05 Conclusion

A Recurrent BERT-based Model for Question Generation

- Slides: 25