
A Phrase-Based, Joint Probability Model for Statistical Machine Translation

Daniel Marcu, William Wong (2002)

Presented by Ping Yu, 01/17/2006

Statistical Machine Translation: a refresher

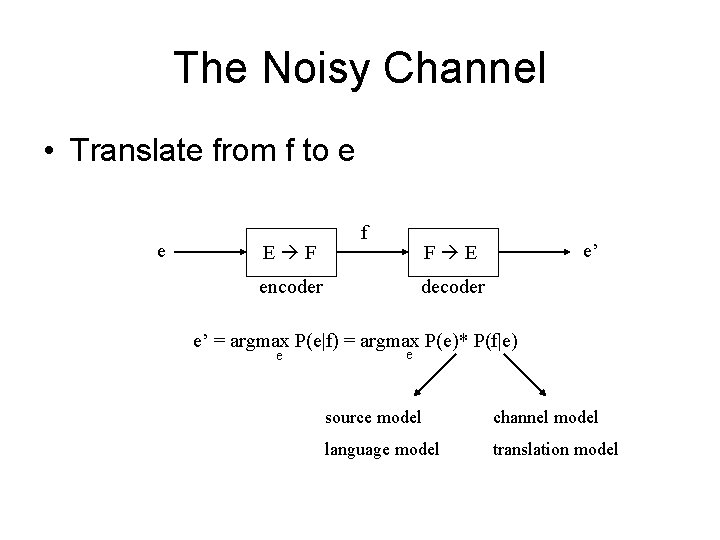

The Noisy Channel

• Translate from f to e
• Source model (language model): P(e)
• Channel model (translation model): P(f|e)
• Encoder (E to F): e => f; decoder (F to E): f => e’
• e’ = argmax_e P(e|f) = argmax_e P(e) * P(f|e)
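As a minimal sketch of the argmax above: the function below scores each candidate English sentence by P(e) * P(f|e) in log space and returns the best one. The function name, the candidate list, and all probability values are made up for illustration; a real decoder searches a far larger space.

```python
import math

def noisy_channel_decode(f, candidates, lm, tm):
    """Return the candidate e that maximizes P(e) * P(f|e).

    lm maps e -> P(e); tm maps (f, e) -> P(f|e).
    Both tables are hypothetical toy inputs.
    """
    def score(e):
        # Sum of logs is equivalent to the product of probabilities.
        return math.log(lm[e]) + math.log(tm[(f, e)])
    return max(candidates, key=score)

# Toy numbers, invented for this example only.
lm = {"the green witch": 0.02, "the witch green": 0.001}
tm = {
    ("la bruja verde", "the green witch"): 0.3,
    ("la bruja verde", "the witch green"): 0.4,
}
best = noisy_channel_decode("la bruja verde", list(lm), lm, tm)
```

Here the translation model slightly prefers the word-for-word order, but the language model outweighs it, which is the division of labor the noisy channel relies on.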

Language Model

• Bag translation: sentence => bag of words
• N-gram language model
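To illustrate bag translation with an n-gram model, here is a small sketch that restores word order from a bag of words by scoring every permutation with bigram probabilities. The bigram table, the `<s>` start symbol, and the `floor` value for unseen bigrams are assumptions chosen for the example; exhaustive permutation is only feasible for very short bags.

```python
from itertools import permutations

def best_order(bag, bigram_prob, floor=1e-6):
    """Return the ordering of `bag` with the highest bigram probability."""
    def score(order):
        p = 1.0
        # Pair each word with its predecessor, starting from "<s>".
        for prev, word in zip(("<s>",) + order, order):
            p *= bigram_prob.get((prev, word), floor)
        return p
    return max(permutations(bag), key=score)

# Hypothetical bigram probabilities.
bigram_prob = {
    ("<s>", "the"): 0.5,
    ("the", "green"): 0.2,
    ("green", "witch"): 0.3,
}
ordered = best_order(["witch", "the", "green"], bigram_prob)
```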

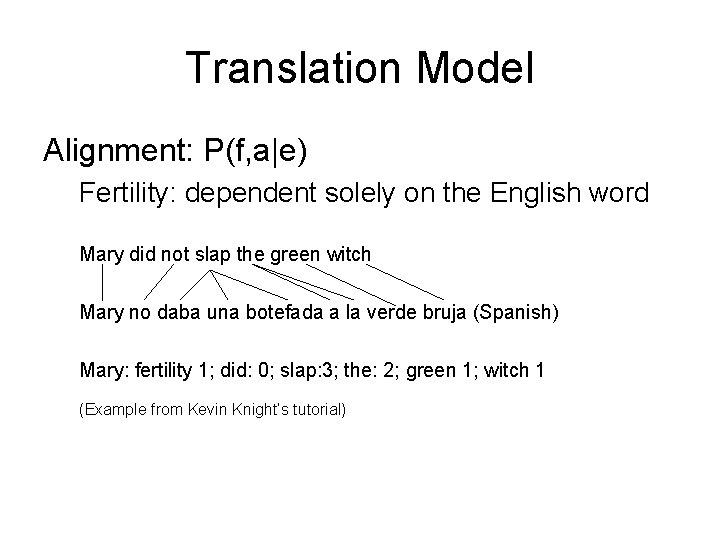

Translation Model

• Alignment: P(f, a|e)
• Fertility: dependent solely on the English word

English: Mary did not slap the green witch

Spanish: Mary no daba una bofetada a la verde bruja

Fertilities: Mary: 1; did: 0; slap: 3; the: 2; green: 1; witch: 1

(Example from Kevin Knight’s tutorial)

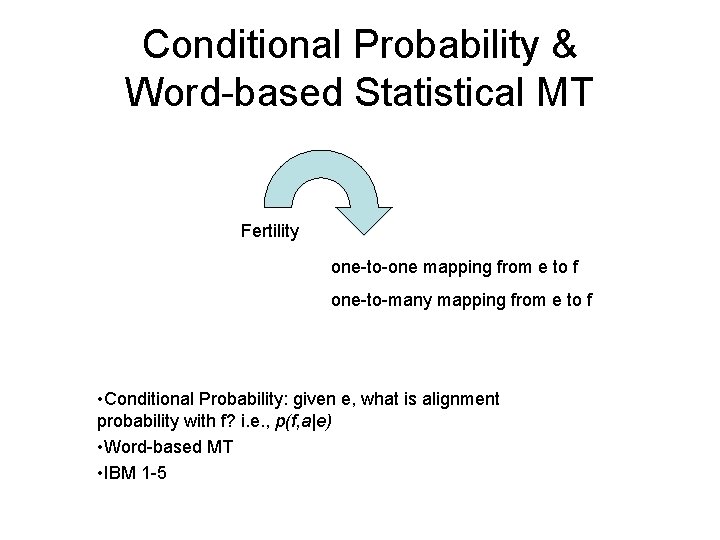

Conditional Probability & Word-based Statistical MT

• Fertility: one-to-one mapping from e to f; one-to-many mapping from e to f
• Conditional probability: given e, what is the probability of f together with its alignment, i.e., p(f, a|e)?
• Word-based MT
• IBM Models 1-5

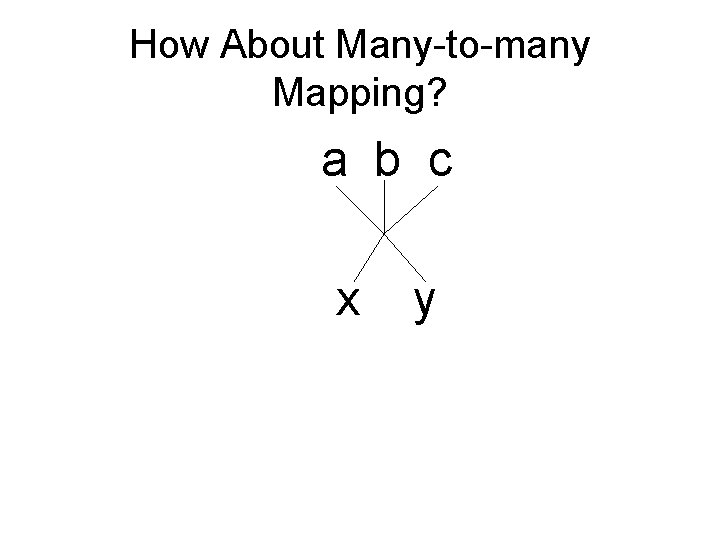

How About Many-to-many Mapping?

(Diagram: words a b c aligned with words x y)

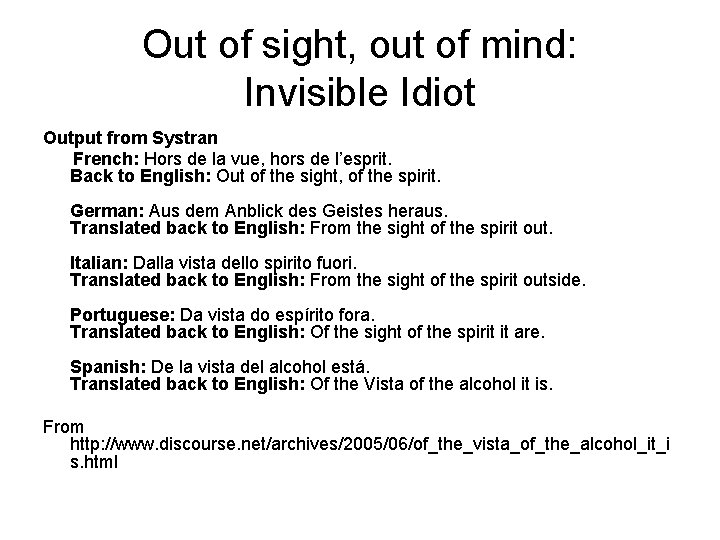

Out of sight, out of mind: Invisible Idiot

Output from Systran:

• French: Hors de la vue, hors de l’esprit. Back to English: Out of the sight, of the spirit.
• German: Aus dem Anblick des Geistes heraus. Translated back to English: From the sight of the spirit out.
• Italian: Dalla vista dello spirito fuori. Translated back to English: From the sight of the spirit outside.
• Portuguese: Da vista do espírito fora. Translated back to English: Of the sight of the spirit it are.
• Spanish: De la vista del alcohol está. Translated back to English: Of the Vista of the alcohol it is.

From http://www.discourse.net/archives/2005/06/of_the_vista_of_the_alcohol_it_is.html

Lost in Translation

Solution

• Many-to-many mapping
• How? Move from word-based to phrase-based models

Alignment between Multiple Phrases

• Phrases are not really phrases in the linguistic sense
• Phrases are defined differently in different models
• Most extracted phrases are based on word-based alignment
  – Och and Ney (1999): alignment template model
  – Melamed (2001): non-compositional compounds model

Marcu and Wong (2002)

Promising Features

• Looks for phrases and alignments simultaneously for both source and target sentences
• Directly models phrase-based probabilities
• Does not depend on word-based probabilities

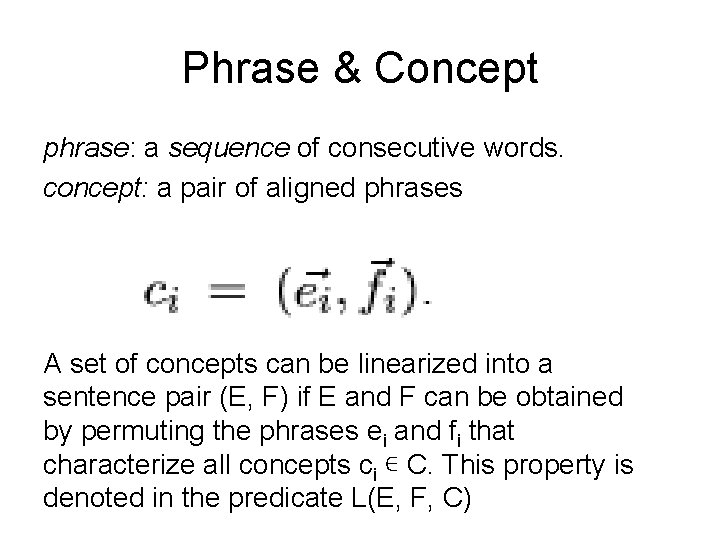

Phrase & Concept

• Phrase: a sequence of consecutive words
• Concept: a pair of aligned phrases

A set of concepts C can be linearized into a sentence pair (E, F) if E and F can be obtained by permuting the phrases e_i and f_i that characterize all concepts c_i ∈ C. This property is denoted by the predicate L(E, F, C).

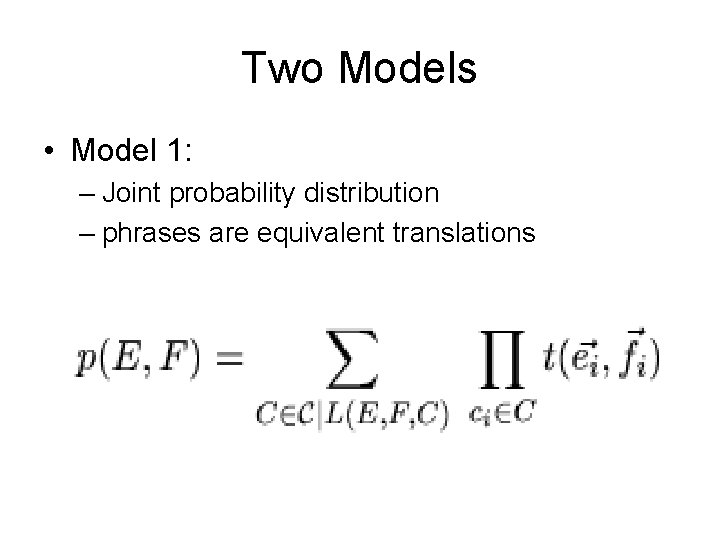

Two Models

• Model 1:
  – Joint probability distribution
  – Phrases are equivalent translations

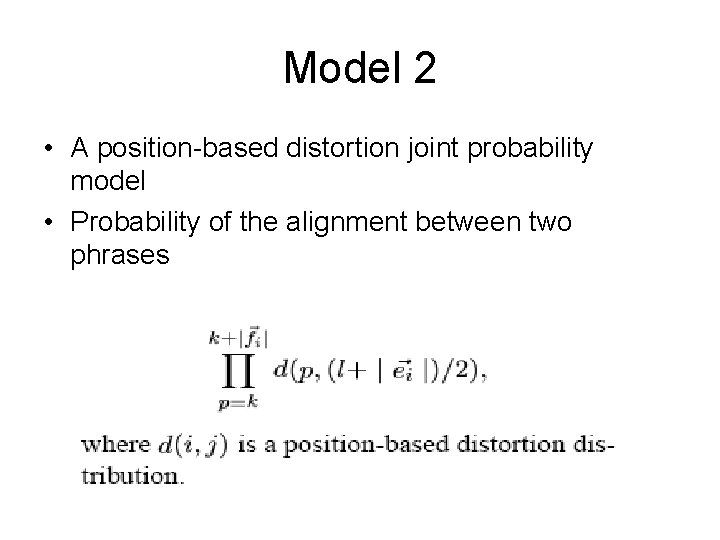

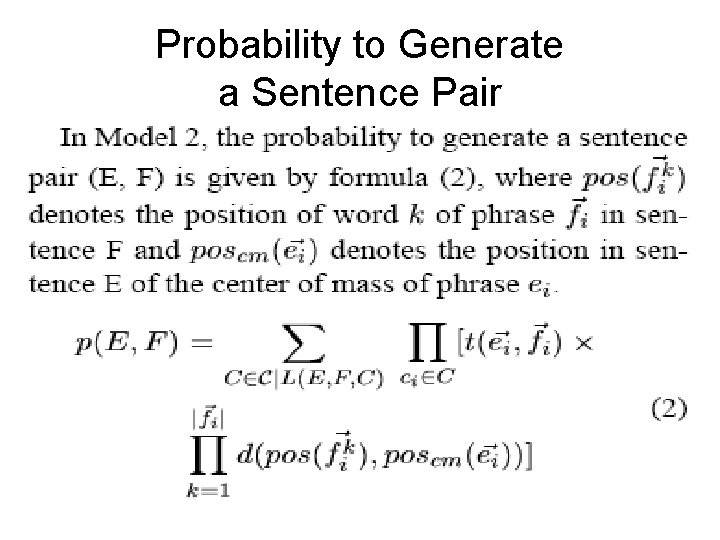
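For reference, a sketch of how the Model 1 joint probability can be written using the notation of the Phrase & Concept slide, assuming t(e_i, f_i) denotes the joint probability of a concept generating the phrase pair (e_i, f_i): the probability of a sentence pair sums, over every concept set C that can be linearized into (E, F), the product of the concept probabilities.

$$
p(E, F) \;=\; \sum_{C \,:\, L(E, F, C)} \;\; \prod_{c_i \in C} t(e_i, f_i)
$$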

Model 2

• A position-based distortion joint probability model
• Probability of the alignment between two phrases

Probability to Generate a Sentence Pair

How? Sentences Phrases Concepts

Four Steps

• Phrase and concept determination
• Initialize the joint probability of concepts, i.e., the t-distribution table
• EM training on Viterbi alignments
  – Calculate the t-distribution table
  – Full iteration, then approximation of EM
  – Viterbi alignment
  – Smoothing
• Generate the conditional probability from the joint probability, as needed in the decoder

Step 1: Phrase Determination

• All unigrams
• n-grams with frequency >= 5

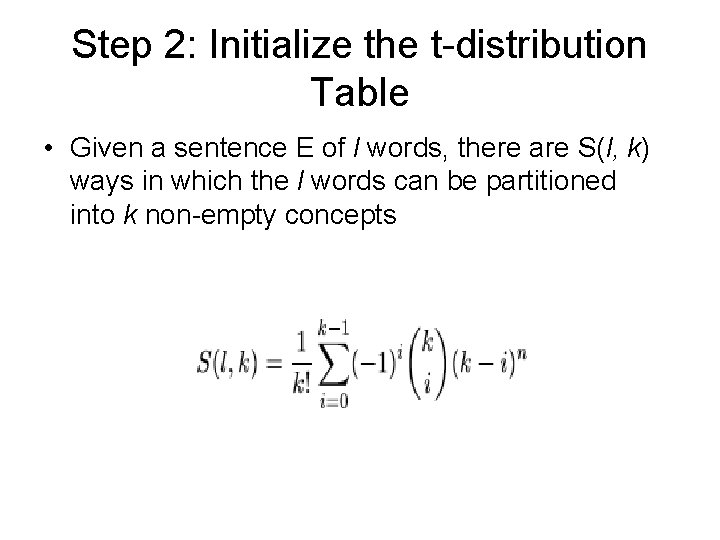
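The selection rule in Step 1 can be sketched as follows: keep every unigram, and keep longer n-grams only if they occur at least five times. The function name and whitespace tokenization are illustrative assumptions; the default cap of 6 words follows the phrase-length limit mentioned later in these slides.

```python
from collections import Counter

def candidate_phrases(sentences, max_len=6, min_count=5):
    """Collect candidate phrases from one side of a corpus.

    All unigrams are kept; an n-gram with n > 1 is kept only if it
    occurs at least `min_count` times.
    """
    counts = Counter()
    for sentence in sentences:
        words = sentence.split()  # naive tokenization, for illustration
        for n in range(1, max_len + 1):
            for i in range(len(words) - n + 1):
                counts[tuple(words[i:i + n])] += 1
    return {p for p, c in counts.items() if len(p) == 1 or c >= min_count}

# Tiny made-up corpus: one sentence repeated five times, one seen once.
corpus = ["the green witch"] * 5 + ["a red house"]
phrases = candidate_phrases(corpus)
```

In this toy corpus, "the green witch" and its sub-phrases pass the threshold, while only the unigrams of "a red house" survive.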

Step 2: Initialize the t-distribution Table

• Given a sentence E of l words, there are S(l, k) ways in which the l words can be partitioned into k non-empty concepts, where S(l, k) is the Stirling number of the second kind

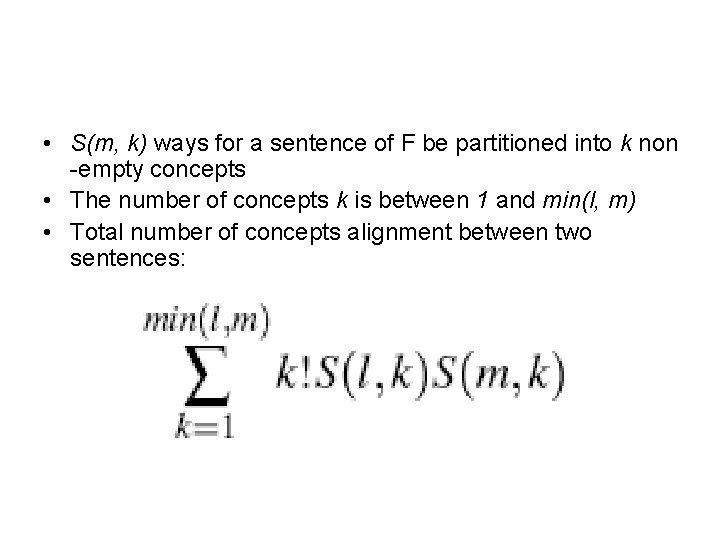

• Likewise, there are S(m, k) ways for a sentence F of m words to be partitioned into k non-empty concepts
• The number of concepts k is between 1 and min(l, m)
• Total number of concept alignments between the two sentences:

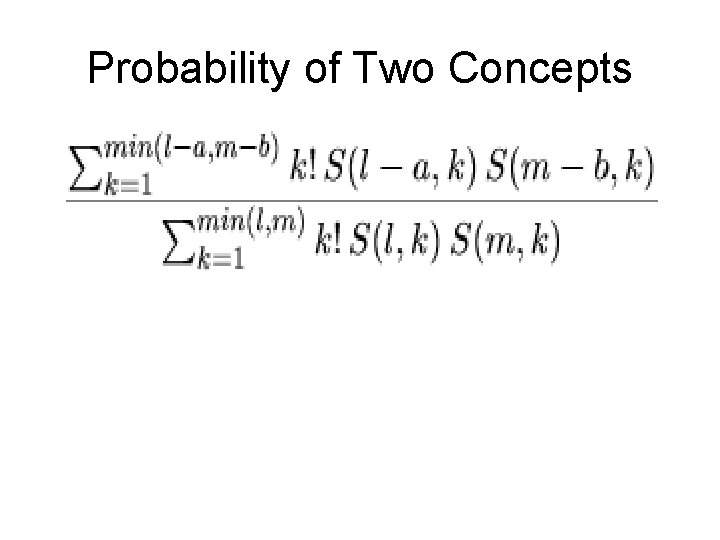
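A small sketch of the counting argument in Step 2, assuming the total is the sum over k of k! * S(l, k) * S(m, k): each of the S(l, k) partitions of E can be paired with each of the S(m, k) partitions of F, and the k parts can be matched in k! ways. The function names are illustrative. The count treats words as a set, so it ignores word order and contiguity.

```python
from math import comb, factorial

def stirling2(n, k):
    """Stirling number of the second kind: the number of ways to
    partition n items into k non-empty sets."""
    total = sum((-1) ** i * comb(k, i) * (k - i) ** n for i in range(k + 1))
    return total // factorial(k)  # the division is always exact

def num_alignments(l, m):
    """Number of concept alignments between sentences of l and m words."""
    return sum(
        factorial(k) * stirling2(l, k) * stirling2(m, k)
        for k in range(1, min(l, m) + 1)
    )
```

For example, `num_alignments(2, 2)` counts one alignment with a single concept plus two alignments with two concepts. The count grows very quickly with sentence length, which is why exhaustive enumeration is avoided during training.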

Probability of Two Concepts
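A sketch of the quantity this slide refers to, under the assumption that it follows the fractional-count initialization of Marcu and Wong (2002): if a concept pairs a phrase of a words from E with a phrase of b words from F, then l − a and m − b words remain to be partitioned and aligned, so the share of all alignments containing that concept is approximated by

$$
\frac{\displaystyle\sum_{k=1}^{\min(l-a,\;m-b)} k!\; S(l-a, k)\; S(m-b, k)}
     {\displaystyle\sum_{k=1}^{\min(l,\,m)} k!\; S(l, k)\; S(m, k)}
$$

Here S is the Stirling number of the second kind and l, m are the sentence lengths from Step 2; the symbols a and b are introduced only for this illustration.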

How about the Word Order

• The equation doesn’t take word order into consideration, even though phrases must consist of consecutive words
• The formula overestimates the numerator and denominator equally, so the approximation works well in practice

Step 3: EM Training on Viterbi Alignments

• After the initial t-table is built, EM can be used to improve the parameters
• However, it is infeasible to calculate expectations over all possible alignments
• So for the initial alignment, only the concepts with high t probabilities are aligned

Implementation

• Greedy alignment: greedily produce an initial alignment
• Hill-climbing: examine the probability of neighboring alignments to reach a local maximum by performing the following operations:
  – Swap concepts: <e1, f1>, <e2, f2> => <e1, f2>, <e2, f1>
  – Merge concepts: <e1, f1>, <e2, f2> => <e1 + e2, f1 + f2>
  – Break concepts: <e1 + e2, f1 + f2> => <e1, f1>, <e2, f2>
  – Move words across concepts: <e1 + e2, f1>, <e3, f2> => <e1, f1>, <e2 + e3, f2>

  (From www.iccs.informatics.ed.ac.uk/~osborne/mscprojects/oconnor.pdf)
• Viterbi search
• Smoothing
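The four hill-climbing operations listed above can be sketched as pure functions on concepts. This is an illustrative representation, not the authors' implementation: a concept is assumed to be a pair (e_phrase, f_phrase) of word tuples, and the split positions i and j are hypothetical parameters. A real hill-climber would also check that merged phrases are contiguous and would rescore each neighbor with the t-table.

```python
def swap(c1, c2):
    """<e1, f1>, <e2, f2> => <e1, f2>, <e2, f1>"""
    (e1, f1), (e2, f2) = c1, c2
    return (e1, f2), (e2, f1)

def merge(c1, c2):
    """<e1, f1>, <e2, f2> => <e1 + e2, f1 + f2>"""
    (e1, f1), (e2, f2) = c1, c2
    return (e1 + e2, f1 + f2)

def break_concept(c, i, j):
    """Split one concept in two: English side at i, foreign side at j."""
    e, f = c
    return (e[:i], f[:j]), (e[i:], f[j:])

def move_words(c1, c2, i):
    """Move the English words of c1 from position i onward into c2:
    <e1 + e2, f1>, <e3, f2> => <e1, f1>, <e2 + e3, f2>"""
    (e12, f1), (e3, f2) = c1, c2
    return (e12[:i], f1), (e12[i:] + e3, f2)

# Toy concepts using the slide's placeholder symbols.
a = (("a",), ("x",))
b = (("b",), ("y",))
merged = merge(a, b)
```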

Training Iterations

• The first iteration used Model 1
• The remaining iterations used Model 2

Step 4: Derivation of Conditional Probability Model

• p(f|e) = p(e, f) / p(e)
• Used in the decoder
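A minimal sketch of this derivation, assuming p(e) is obtained by marginalizing the joint table over all foreign phrases paired with e. The function name and the probability values are invented for illustration.

```python
from collections import defaultdict

def conditional_from_joint(joint):
    """Convert a joint table {(e, f): p(e, f)} into {(e, f): p(f | e)}."""
    marginal = defaultdict(float)
    for (e, _f), p in joint.items():
        marginal[e] += p  # p(e) = sum over f of p(e, f), by assumption
    return {(e, f): p / marginal[e] for (e, f), p in joint.items()}

# Hypothetical joint probabilities.
joint = {
    ("green witch", "bruja verde"): 0.3,
    ("green witch", "verde bruja"): 0.1,
    ("the", "la"): 0.6,
}
cond = conditional_from_joint(joint)
```

After conversion, the conditional probabilities for each English phrase sum to one, which is the form the noisy-channel decoder expects.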

Decoder

• Given a foreign sentence F, find the E that maximizes the probability p(E, F)
• Hill-climb by modifying E and the alignment between E and F to maximize p(E) * p(F|E)
• p(E) is a trigram-based language model at the word level rather than the phrase level

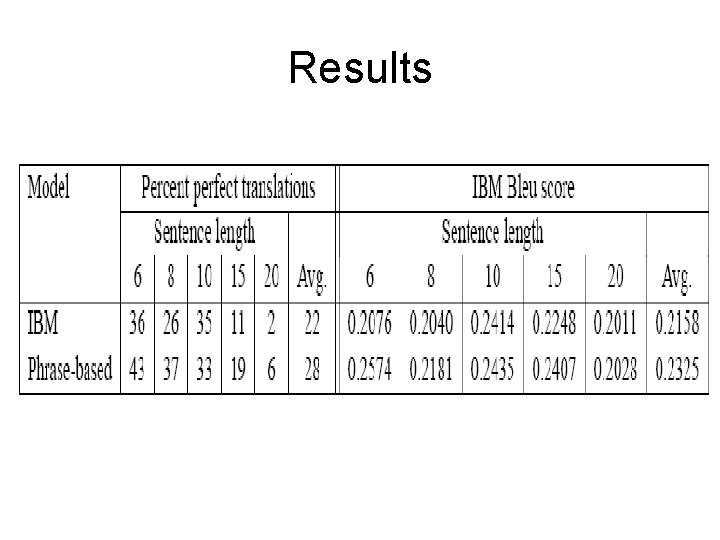

Evaluation

• Data: French-English Hansard data
• Compared with Giza (IBM Model 4)
• Training: 100,000 sentence pairs
• Testing: 500 unseen sentences, uniformly distributed across lengths 6, 8, 10, 15 and 20

Results

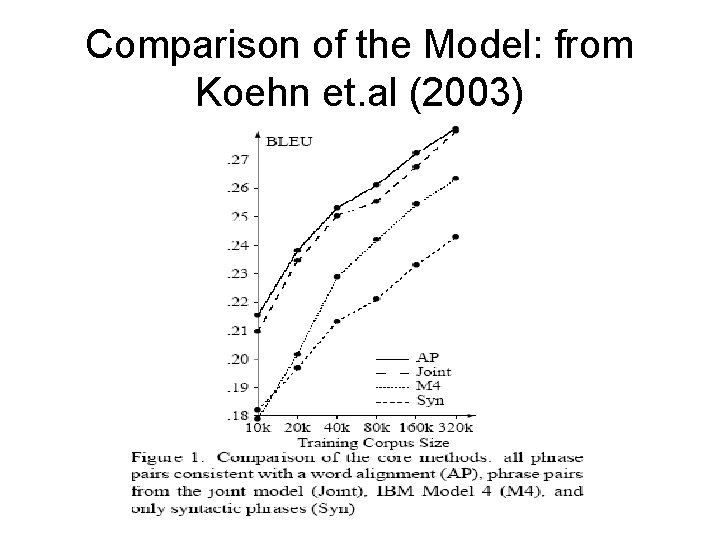

Comparison of the Models: from Koehn et al. (2003)

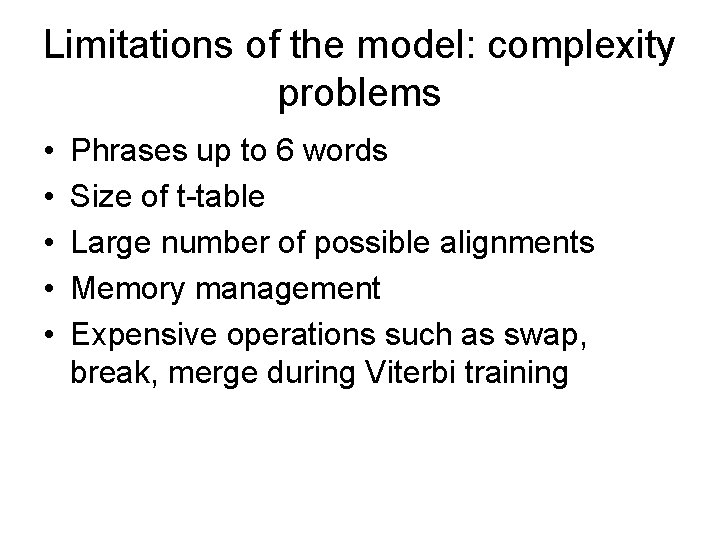

Limitations of the model: complexity problems

• Phrases up to 6 words
• Size of t-table
• Large number of possible alignments
• Memory management
• Expensive operations such as swap, break, merge during Viterbi training

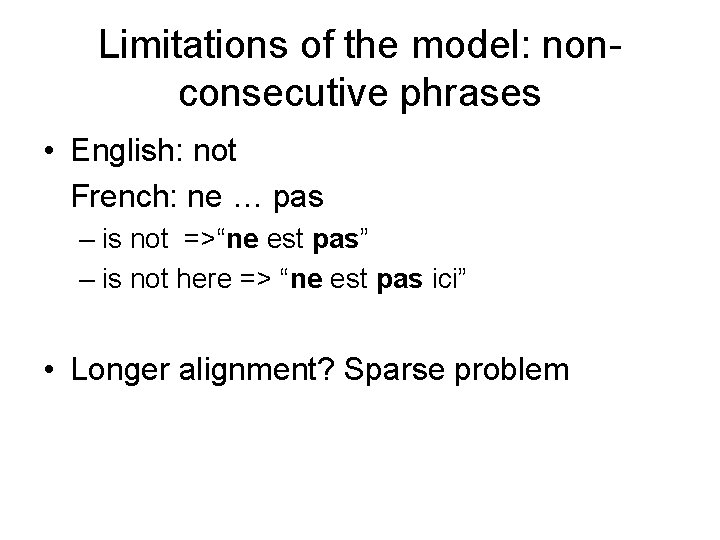

Limitations of the model: nonconsecutive phrases

• English: not; French: ne … pas
  – is not => “ne est pas”
  – is not here => “ne est pas ici”
• Longer alignment? Data sparsity problem

Complexity vs. Performance

• Marcu and Wong: n-gram <= 6
• Koehn et al. (2003)
  – Allowing phrases longer than 3 words
  – Complexity increases substantially, with no significant improvement

Questions?
