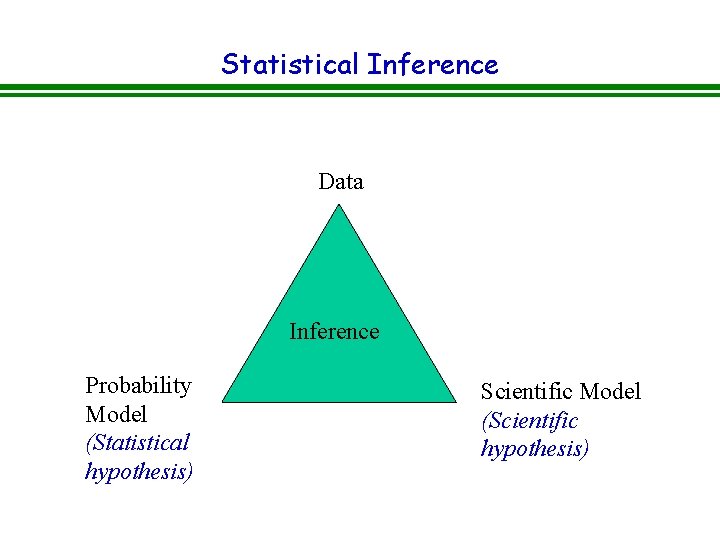

Statistical inference: the triangle linking Data, a Probability Model (statistical hypothesis), and a Scientific Model (scientific hypothesis).

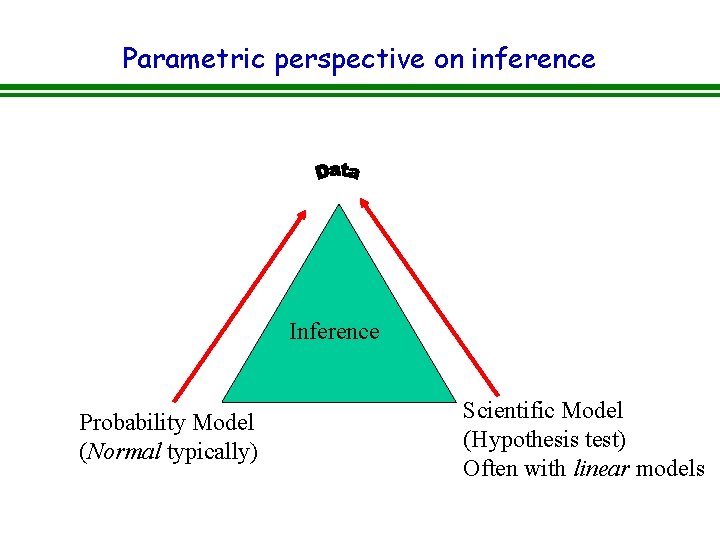

Parametric perspective on inference: inference rests on a Probability Model (typically normal) and a Scientific Model (hypothesis test), often with linear models.

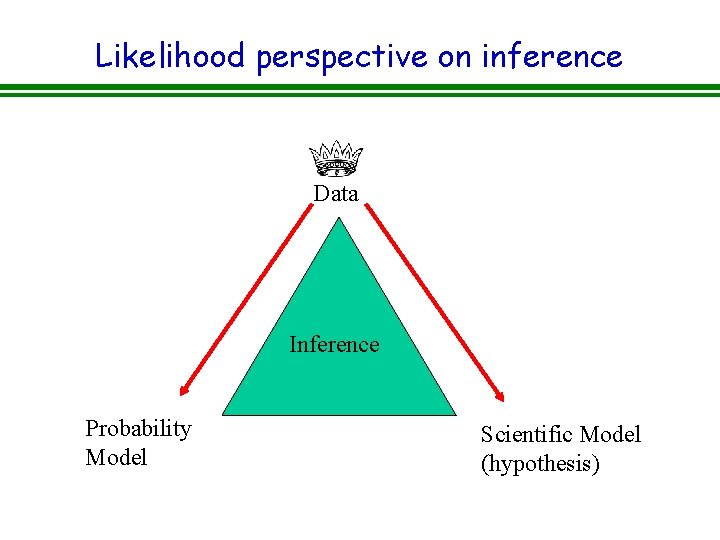

Likelihood perspective on inference: inference links Data, a Probability Model, and a Scientific Model (hypothesis).

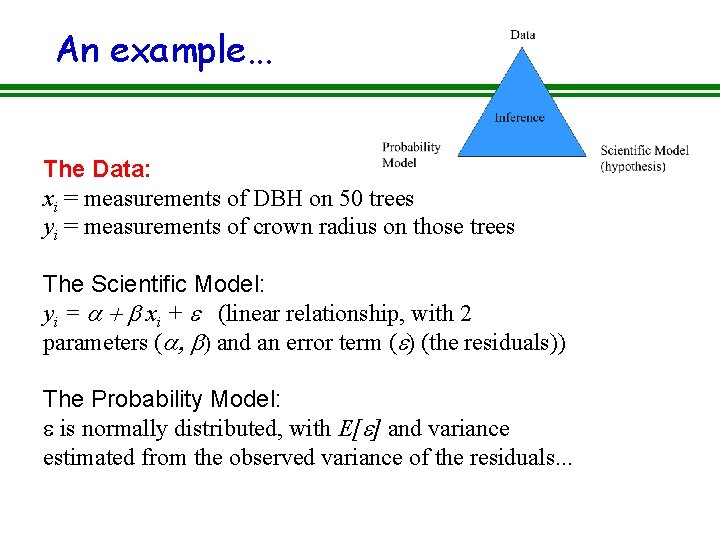

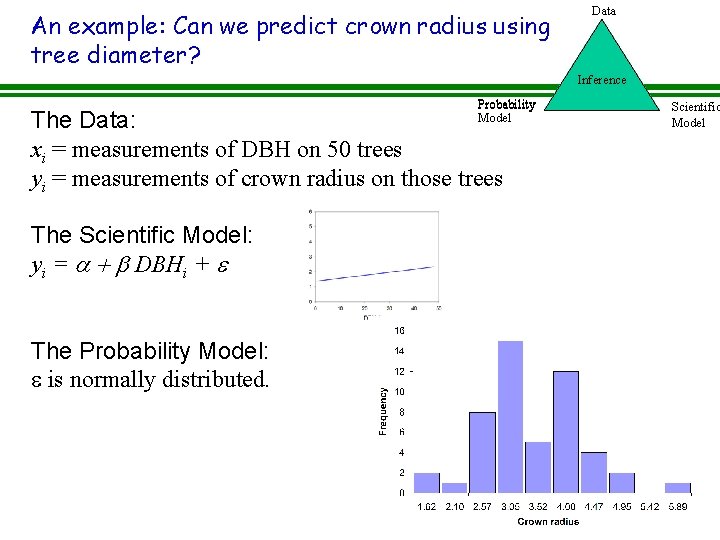

An example:

- The data: $x_i$ = measurements of DBH on 50 trees; $y_i$ = measurements of crown radius on those trees.
- The scientific model: $y_i = a + b x_i + e_i$ (a linear relationship with two parameters, $a$ and $b$, and an error term $e$, the residuals).
- The probability model: $e$ is normally distributed, with $E[e] = 0$ and variance estimated from the observed variance of the residuals.
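A minimal sketch of this example, assuming a handful of hypothetical DBH and crown-radius values (the 50-tree dataset is not reproduced here). Under normal errors, the least-squares estimates of $a$ and $b$ coincide with the maximum likelihood estimates, and the ML estimate of the residual variance divides the sum of squared residuals by $n$. The function names `fit_linear_normal` and `normal_log_likelihood` are illustrative, not from the source.

```python
import math

def fit_linear_normal(x, y):
    """Fit y = a + b*x + e with e ~ Normal(0, sigma^2).

    Returns (a, b, sigma2): a and b are the least-squares (= ML) estimates,
    sigma2 is the ML residual variance (sum of squared residuals / n).
    """
    n = len(x)
    mx = sum(x) / n
    my = sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx
    a = my - b * mx
    residuals = [yi - (a + b * xi) for xi, yi in zip(x, y)]
    sigma2 = sum(r ** 2 for r in residuals) / n
    return a, b, sigma2

def normal_log_likelihood(x, y, a, b, sigma2):
    """Log-likelihood of the data under the scientific + probability model."""
    n = len(x)
    ss = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    return -0.5 * n * math.log(2 * math.pi * sigma2) - ss / (2 * sigma2)

# Hypothetical DBH (cm) and crown radius (m) values, for illustration only
dbh = [12.0, 18.5, 25.1, 31.7, 40.2, 47.9]
crown = [1.9, 2.6, 3.1, 3.9, 4.6, 5.5]
a, b, sigma2 = fit_linear_normal(dbh, crown)
print(a, b, sigma2, normal_log_likelihood(dbh, crown, a, b, sigma2))
```

The scientific model supplies the mean $a + bx$; the probability model supplies the normal log-likelihood used to judge how well that mean fits.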

The triangle of statistical inference: Model

- Models clarify our understanding of nature.
- They help us understand the importance (or unimportance) of individual processes and mechanisms.
- Since they are not hypotheses, they can never be “correct”.
- We don’t “reject” models; we assess their validity.
- We establish what’s “true” by establishing which model the data support.

The triangle of statistical inference: Probability distributions

- Data are never “clean”.
- Most models are deterministic: they describe the average behavior of a system but not the noise or variability. To compare models with data, we need a statistical model that describes the variability.
- We must understand the processes giving rise to variability in order to select the correct probability density function (error structure).

An example: Can we predict crown radius using tree diameter?

- The data: $x_i$ = measurements of DBH on 50 trees; $y_i$ = measurements of crown radius on those trees.
- The scientific model: $y_i = a + b\,\mathrm{DBH}_i + e_i$.
- The probability model: $e$ is normally distributed.

Why do we care about probability?

- Foundation of the theory of statistics.
- Description of uncertainty (error):
  - Measurement error
  - Process error
- Needed to understand likelihood theory, which is required for:
  - Estimating model parameters.
  - Model selection (Which hypothesis do the data support?).

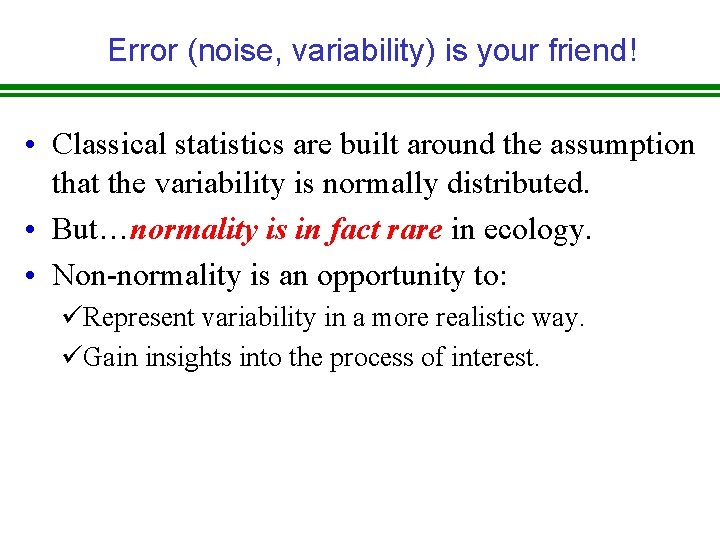

Error (noise, variability) is your friend!

- Classical statistics are built around the assumption that the variability is normally distributed.
- But normality is in fact rare in ecology.
- Non-normality is an opportunity to:
  - Represent variability in a more realistic way.
  - Gain insights into the process of interest.

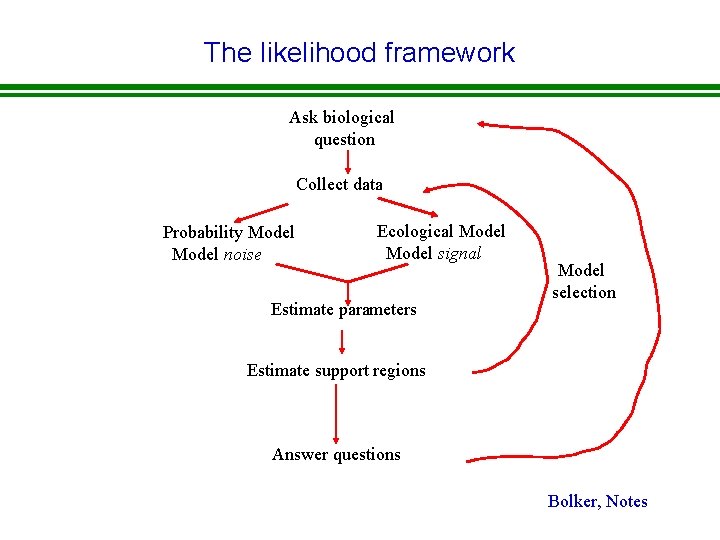

The likelihood framework (Bolker, Notes)

1. Ask a biological question.
2. Collect data.
3. Specify a probability model (noise) and an ecological model (signal).
4. Estimate parameters.
5. Model selection.
6. Estimate support regions.
7. Answer questions.

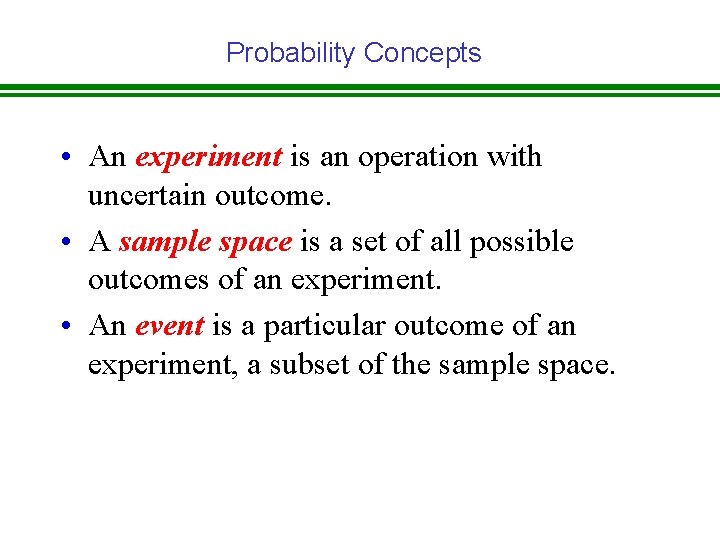

Probability concepts

- An experiment is an operation with an uncertain outcome.
- A sample space is the set of all possible outcomes of an experiment.
- An event is a subset of the sample space, for example a particular outcome of an experiment.

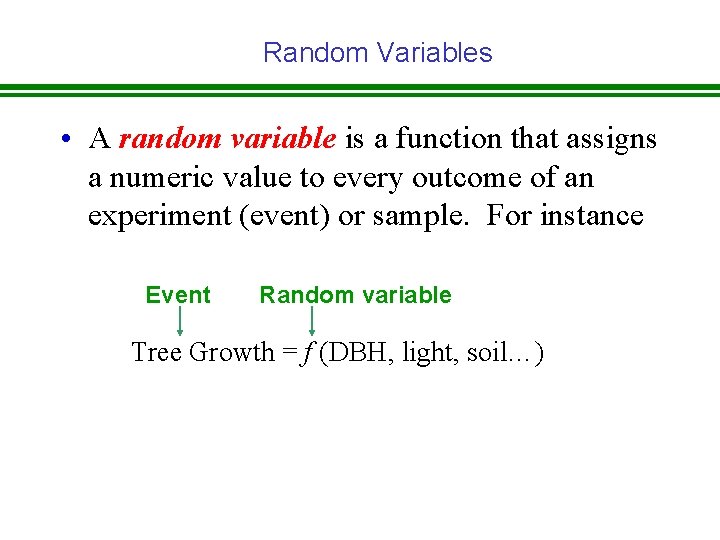

Random variables

- A random variable is a function that assigns a numeric value to every outcome of an experiment (event) or sample. For instance, tree growth (the random variable) = f(DBH, light, soil, …).

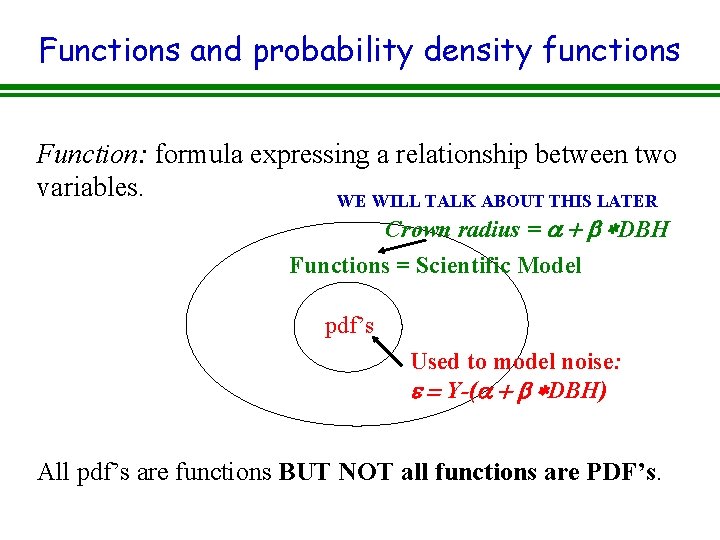

Functions and probability density functions

- Function: a formula expressing a relationship between two variables (we will talk about this later), e.g., Crown radius = a + b × DBH. Functions = scientific model.
- PDFs: used to model noise, e.g., e = Y − (a + b × DBH).
- All PDFs are functions, BUT NOT all functions are PDFs.

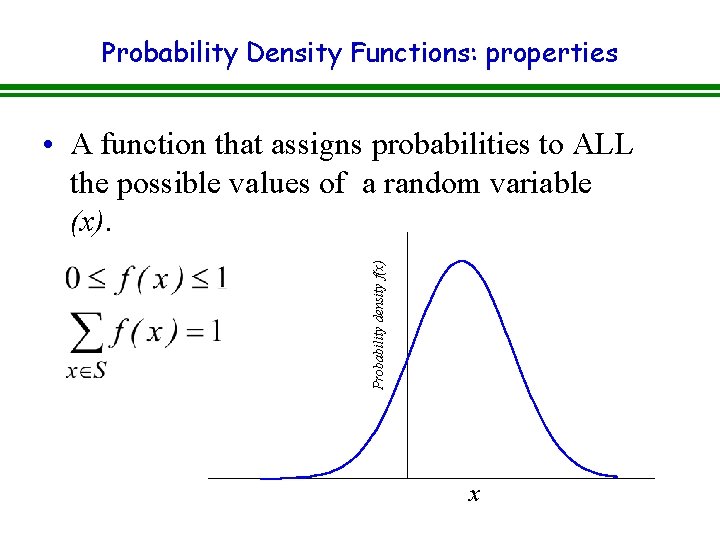

Probability density functions: properties

- A function that assigns probabilities (for continuous variables, probability densities) to ALL the possible values of a random variable x.

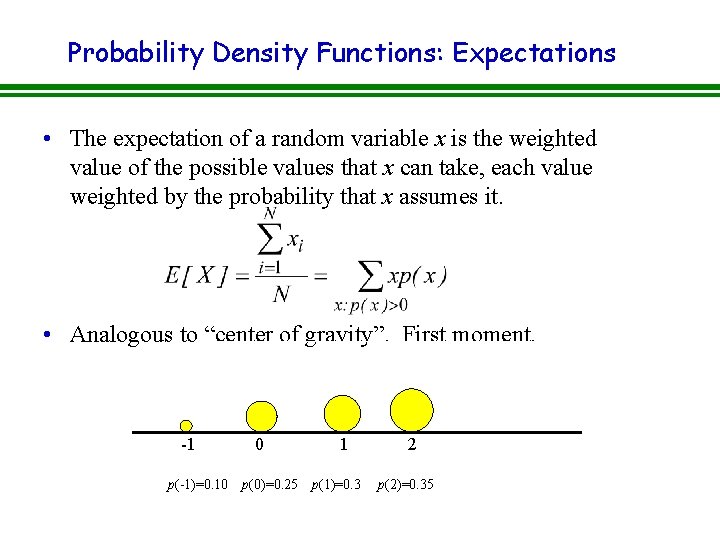

Probability density functions: Expectations

- The expectation of a random variable x is the weighted average of the possible values that x can take, each value weighted by the probability that x assumes it: $E[x] = \sum_x x\,p(x)$.
- Analogous to the “center of gravity”. First moment.
- Example: p(−1) = 0.10, p(0) = 0.25, p(1) = 0.30, p(2) = 0.35.
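The weighted-average definition can be checked directly on the four-value example above. A small sketch (the `expectation` helper is an illustrative name):

```python
import math

# Discrete distribution from the example: value -> probability
pmf = {-1: 0.10, 0: 0.25, 1: 0.30, 2: 0.35}

def expectation(pmf):
    """E[x]: each possible value weighted by its probability."""
    if not math.isclose(sum(pmf.values()), 1.0):
        raise ValueError("probabilities must sum to 1")
    return sum(x * p for x, p in pmf.items())

print(round(expectation(pmf), 10))  # 0.9
```

That is, (−1)(0.10) + 0(0.25) + 1(0.30) + 2(0.35) = 0.9.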

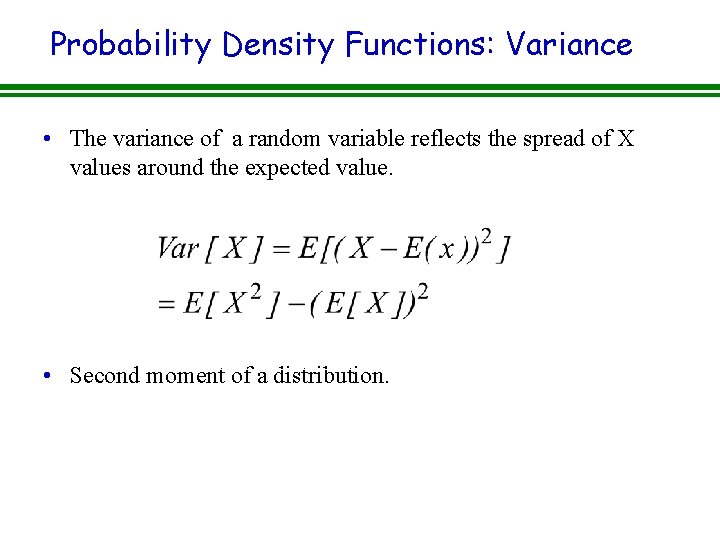

Probability density functions: Variance

- The variance of a random variable reflects the spread of x values around the expected value: $\mathrm{Var}[x] = E\big[(x - E[x])^2\big]$.
- It is the second central moment of a distribution.
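As a sketch, here is the variance of the same four-value example distribution (p(−1) = 0.10, p(0) = 0.25, p(1) = 0.30, p(2) = 0.35), computed both from the definition and from the equivalent shortcut $E[x^2] - (E[x])^2$. The helper names are illustrative.

```python
pmf = {-1: 0.10, 0: 0.25, 1: 0.30, 2: 0.35}

def variance(pmf):
    """Var[x] = E[(x - E[x])^2], the second central moment."""
    mean = sum(x * p for x, p in pmf.items())
    return sum((x - mean) ** 2 * p for x, p in pmf.items())

def variance_shortcut(pmf):
    """Equivalent form: Var[x] = E[x^2] - (E[x])^2."""
    mean = sum(x * p for x, p in pmf.items())
    return sum(x ** 2 * p for x, p in pmf.items()) - mean ** 2

print(round(variance(pmf), 10), round(variance_shortcut(pmf), 10))  # 0.99 0.99
```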

Probability distributions

- A function that assigns probabilities to the possible values of a random variable (X).
- They come in two flavors:
  - DISCRETE: outcomes are a set of discrete possibilities such as integers (e.g., counts).
  - CONTINUOUS: a probability distribution over a continuous range (the real numbers or the non-negative real numbers).

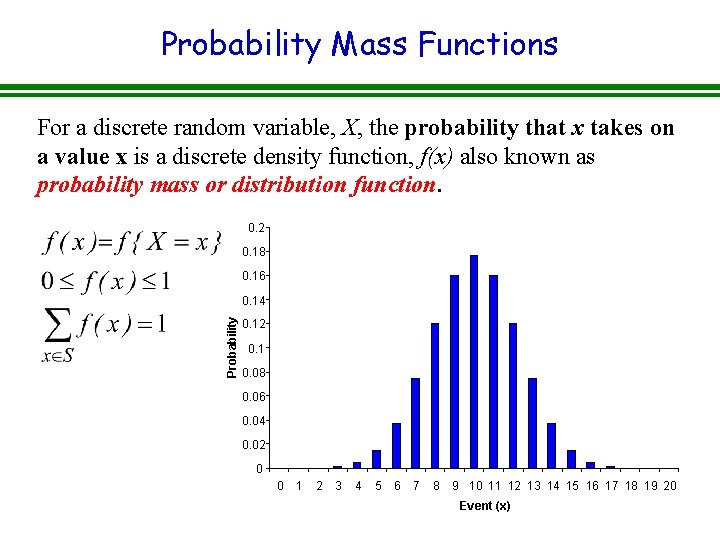

Probability mass functions

For a discrete random variable X, the function giving the probability that X takes on the value x, f(x) = P(X = x), is a discrete density function, also known as the probability mass function or probability distribution function.

[Figure: a probability mass function; probability (0 to 0.2) vs. event (x) from 0 to 20.]

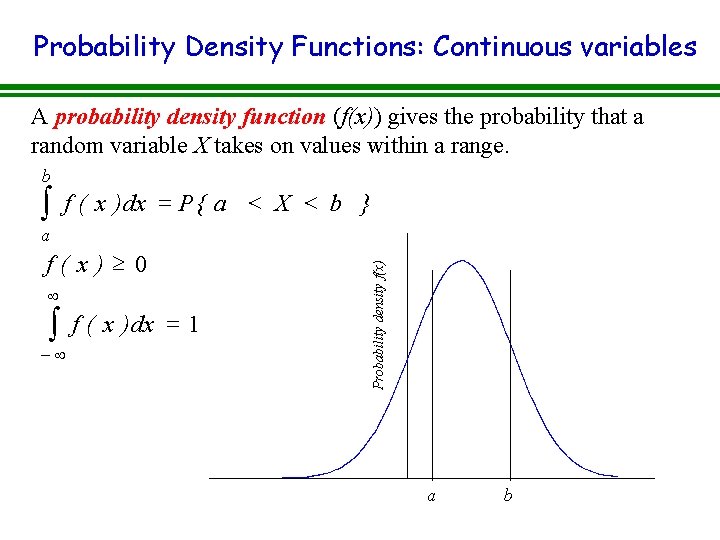

Probability density functions: Continuous variables

A probability density function f(x) describes a continuous random variable X: the area under f(x) between a and b gives the probability that X takes on a value within that range.

$$P\{a < X < b\} = \int_a^b f(x)\,dx, \qquad f(x) \ge 0, \qquad \int_{-\infty}^{\infty} f(x)\,dx = 1$$
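To make "probability = area under the density" concrete, a minimal sketch that integrates a standard normal pdf numerically with composite Simpson's rule and compares it to the exact answer from the normal CDF (via `math.erf`). The choice of the normal density and the helper names are assumptions for illustration.

```python
import math

def normal_pdf(x, mu=0.0, sigma=1.0):
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2 * math.pi))

def normal_cdf(x, mu=0.0, sigma=1.0):
    return 0.5 * (1 + math.erf((x - mu) / (sigma * math.sqrt(2))))

def integrate(f, a, b, n=1000):
    """Composite Simpson's rule on [a, b]; n is bumped to an even number."""
    if n % 2:
        n += 1
    h = (b - a) / n
    total = f(a) + f(b)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(a + i * h)
    return total * h / 3

# P{a < X < b} is the area under f(x) between a and b
p = integrate(normal_pdf, -1.0, 1.0)
print(p, normal_cdf(1.0) - normal_cdf(-1.0))  # both about 0.6827
# The total area under a pdf is 1 (here truncated to [-10, 10])
print(integrate(normal_pdf, -10.0, 10.0))
```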

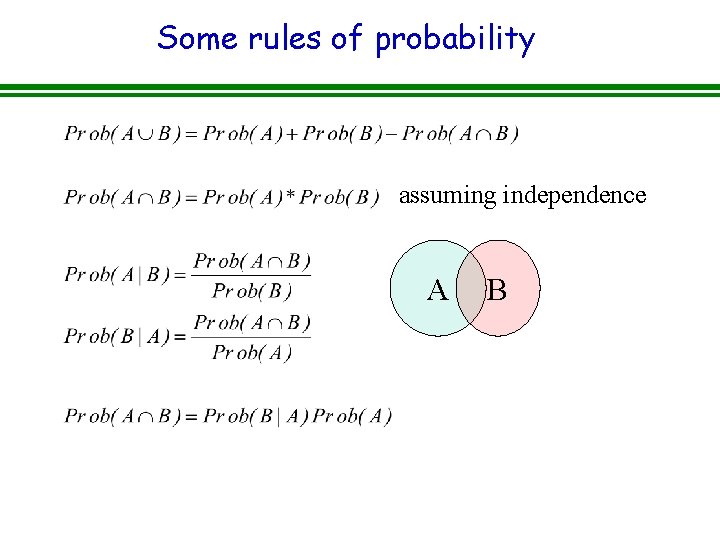

Some rules of probability, assuming independence of events A and B
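The rules themselves appear only in the slide image. Assuming they are the standard ones for independent events (multiplication rule $P(A \cap B) = P(A)P(B)$ and addition rule $P(A \cup B) = P(A) + P(B) - P(A \cap B)$), here is an exact check on a hypothetical two-dice example, using fractions so there is no rounding.

```python
from fractions import Fraction
from itertools import product

# Sample space: all 36 equally likely outcomes of rolling two fair dice
outcomes = list(product(range(1, 7), repeat=2))

def prob(event):
    """Probability of an event (a predicate on outcomes)."""
    hits = sum(1 for o in outcomes if event(o))
    return Fraction(hits, len(outcomes))

def a_event(o):
    return o[0] % 2 == 0   # first die is even

def b_event(o):
    return o[1] > 4        # second die shows 5 or 6

p_a, p_b = prob(a_event), prob(b_event)
p_and = prob(lambda o: a_event(o) and b_event(o))
p_or = prob(lambda o: a_event(o) or b_event(o))

# Independence: P(A and B) = P(A) * P(B)
print(p_and == p_a * p_b)              # True
# Addition rule: P(A or B) = P(A) + P(B) - P(A and B)
print(p_or == p_a + p_b - p_a * p_b)   # True
print(p_a, p_b, p_and, p_or)           # 1/2 1/3 1/6 2/3
```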

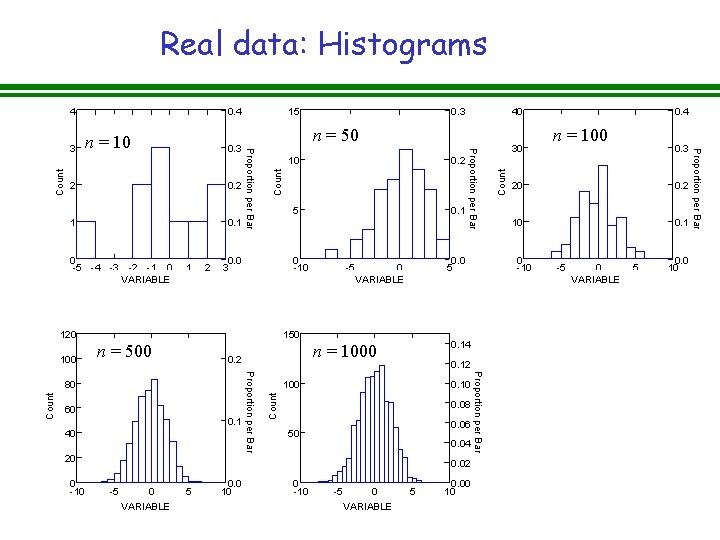

Real data: Histograms

[Figure: histograms of samples of size n = 10, 50, 100, 500, and 1000 (variables TEN, FIFTY, HUNDRED, FIVEHUND, THOUS), with count and proportion per bar on the y-axes.]

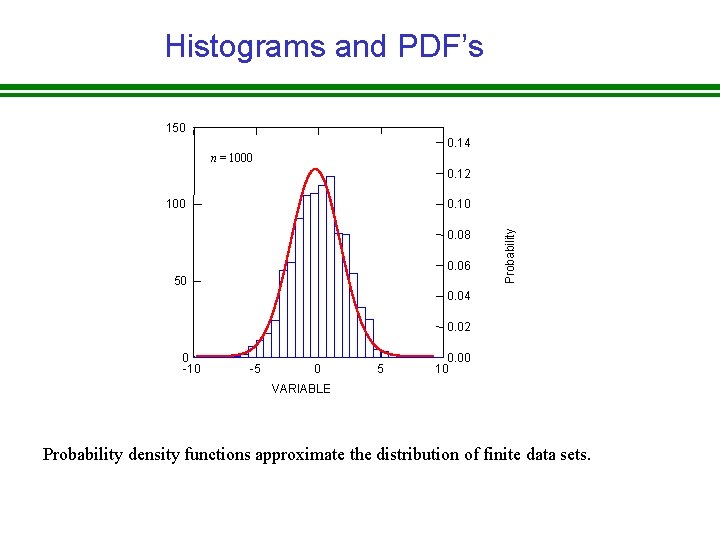

Histograms and PDFs

[Figure: histogram of n = 1000 values of VARIABLE (−10 to 10) with a probability density function; count and probability on the y-axes.]

Probability density functions approximate the distribution of finite data sets.
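A rough sketch of the histogram/PDF relationship, assuming simulated standard-normal data (not the data in the figure): scaling each bar's proportion by the bar width turns the histogram into a density that can be compared with f(x) directly, and the agreement tends to improve as n grows. `histogram_density` is an illustrative helper.

```python
import math
import random

def normal_pdf(x, mu=0.0, sigma=1.0):
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2 * math.pi))

def histogram_density(data, lo, hi, width):
    """Proportion per bar divided by bar width, for bins [lo, lo+width), ..."""
    nbins = round((hi - lo) / width)
    counts = [0] * nbins
    for v in data:
        i = math.floor((v - lo) / width)
        if 0 <= i < nbins:
            counts[i] += 1
    n = len(data)
    return [c / (n * width) for c in counts]

random.seed(1)
width = 0.5
for n in (10, 100, 1000, 10000):
    data = [random.gauss(0.0, 1.0) for _ in range(n)]
    dens = histogram_density(data, -5.0, 5.0, width)
    mids = [-5.0 + (i + 0.5) * width for i in range(len(dens))]
    # largest gap between histogram and true pdf; tends to shrink as n grows
    gap = max(abs(d - normal_pdf(m)) for d, m in zip(dens, mids))
    print(n, round(gap, 3))
```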

Uses of frequency distributions

- Empirical (frequentist):
  - Make predictions about the frequency of a particular event.
  - Judge whether an observation belongs to a population.
- Theoretical:
  - Predictions about the distribution of the data based on some basic assumptions about the nature of the forces acting on a particular biological system.
  - Describe the randomness in the data.

Some useful distributions

1. Discrete
   - Binomial: two possible outcomes.
   - Poisson: counts.
   - Negative binomial: counts.
   - Multinomial: multiple categorical outcomes.
2. Continuous
   - Normal
   - Lognormal
   - Exponential
   - Gamma
   - Beta

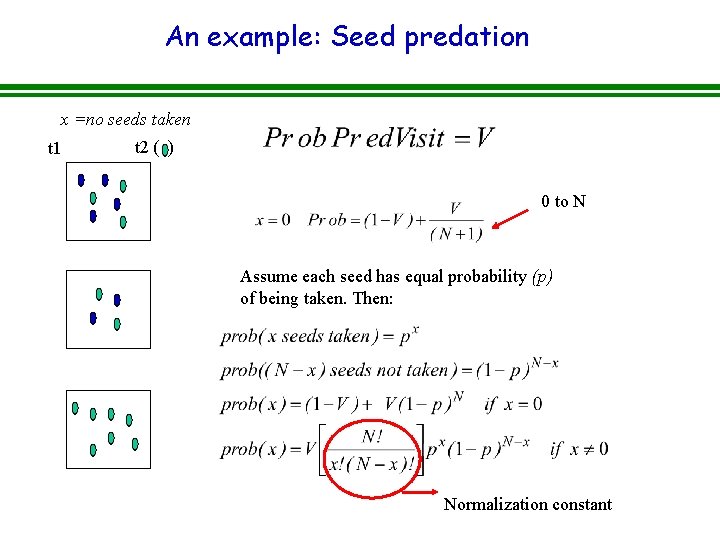

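An example: Seed predation

x = number of seeds taken between t1 and t2 (from 0 to N). Assume each seed has an equal probability (p) of being taken. Then:

$$P(x) = \binom{N}{x} p^x (1-p)^{N-x}$$

where the binomial coefficient $\binom{N}{x}$ is the normalization constant.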
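A minimal sketch of this seed-predation model, with hypothetical values N = 10 seeds and p = 0.3 (not from the source data). `math.comb` supplies the normalization constant.

```python
from math import comb

def binomial_pmf(x, n, p):
    """P(x of n seeds taken) when each seed is taken independently with prob. p.

    comb(n, x) is the normalization constant: the number of ways
    to choose which x seeds are taken.
    """
    return comb(n, x) * p ** x * (1 - p) ** (n - x)

# Hypothetical values: N = 10 seeds at a station, p = 0.3
N, p = 10, 0.3
probs = [binomial_pmf(x, N, p) for x in range(N + 1)]
print(round(sum(probs), 12))            # 1.0: probabilities over 0..N sum to one
print(round(binomial_pmf(0, N, p), 4))  # 0.0282, i.e. 0.7**10: no seeds taken
```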

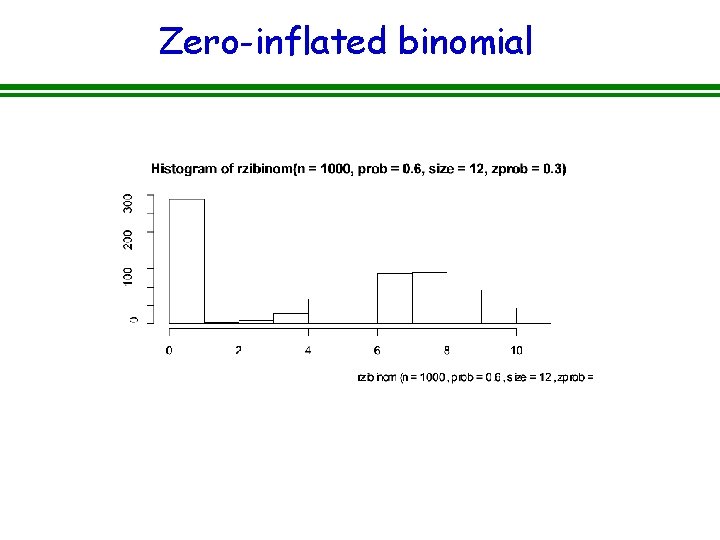

Zero-inflated binomial

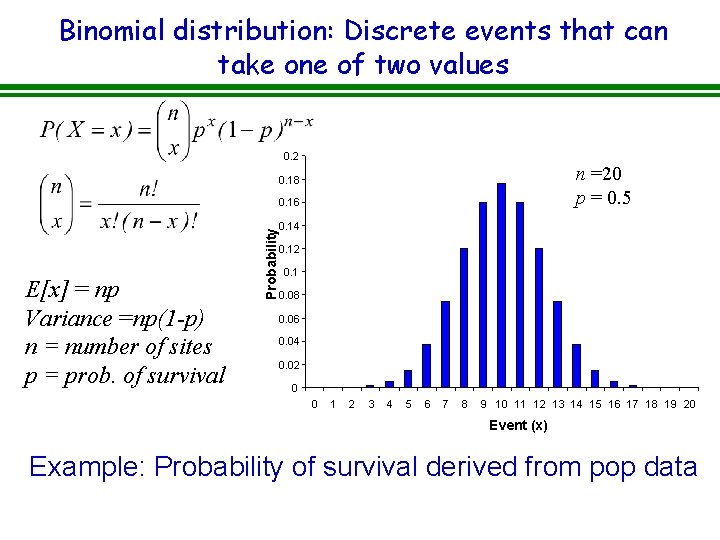

Binomial distribution: Discrete events that can take one of two values

- $E[x] = np$, $\mathrm{Var}[x] = np(1-p)$
- n = number of sites; p = probability of survival.

[Figure: binomial distribution with n = 20, p = 0.5; probability vs. event (x) from 0 to 20.]

Example: Probability of survival derived from population data.
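A quick numerical check of $E[x] = np$ and $\mathrm{Var}[x] = np(1-p)$, summing directly over the pmf for the plotted case n = 20, p = 0.5. The `moments` helper is an illustrative name.

```python
from math import comb

def binomial_pmf(x, n, p):
    return comb(n, x) * p ** x * (1 - p) ** (n - x)

def moments(n, p):
    """Mean and variance computed directly from the pmf."""
    xs = range(n + 1)
    mean = sum(x * binomial_pmf(x, n, p) for x in xs)
    var = sum((x - mean) ** 2 * binomial_pmf(x, n, p) for x in xs)
    return mean, var

mean, var = moments(20, 0.5)
print(round(mean, 10), round(var, 10))  # 10.0 5.0, i.e. np and np(1 - p)
```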

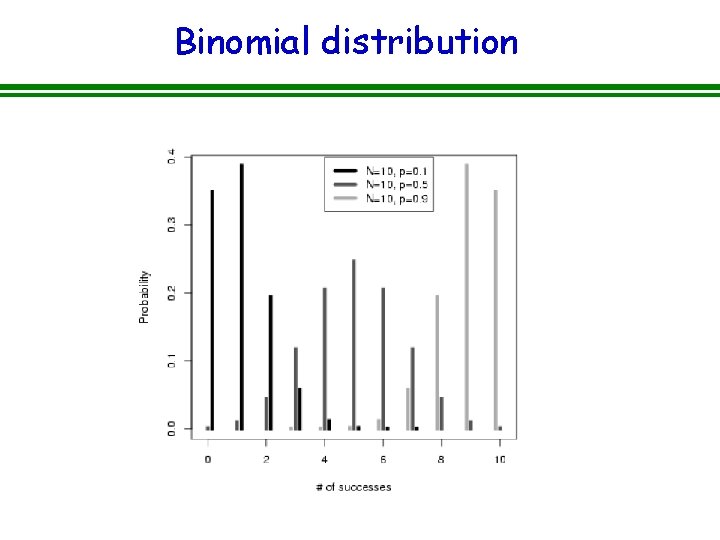

Binomial distribution

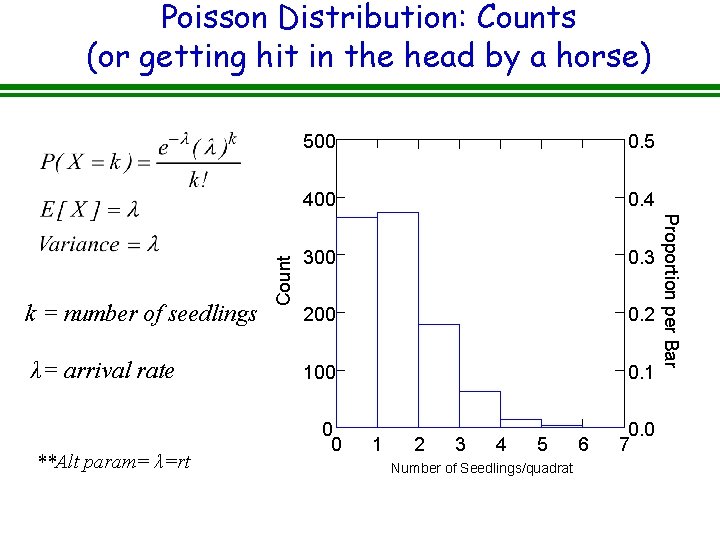

Poisson distribution: Counts (or getting hit in the head by a horse)

- k = number of seedlings; λ = arrival rate (alternative parameterization: λ = rt).

[Figure: histogram of POISSON, number of seedlings per quadrat (0 to 7); count and proportion per bar.]
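A sketch of the Poisson pmf, $P(k) = \lambda^k e^{-\lambda} / k!$, with the alternative rate-times-duration parameterization λ = rt. The value λ = 2.5 is hypothetical; the point is that the mean and the variance both come out equal to λ.

```python
import math

def poisson_pmf(k, lam):
    """P(k events) when events arrive at average rate lam per unit."""
    return lam ** k * math.exp(-lam) / math.factorial(k)

def poisson_pmf_rt(k, r, t):
    """Alternative parameterization: rate r per unit time over duration t."""
    return poisson_pmf(k, r * t)

lam = 2.5       # hypothetical mean seedlings per quadrat
ks = range(60)  # truncate the infinite sum; the tail beyond 60 is negligible here
mean = sum(k * poisson_pmf(k, lam) for k in ks)
var = sum((k - mean) ** 2 * poisson_pmf(k, lam) for k in ks)
print(round(mean, 8), round(var, 8))  # both equal lam: 2.5 2.5
```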

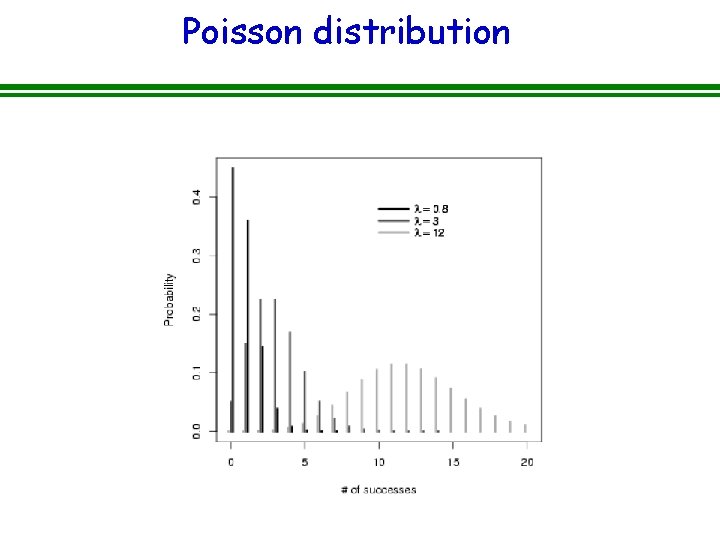

Poisson distribution

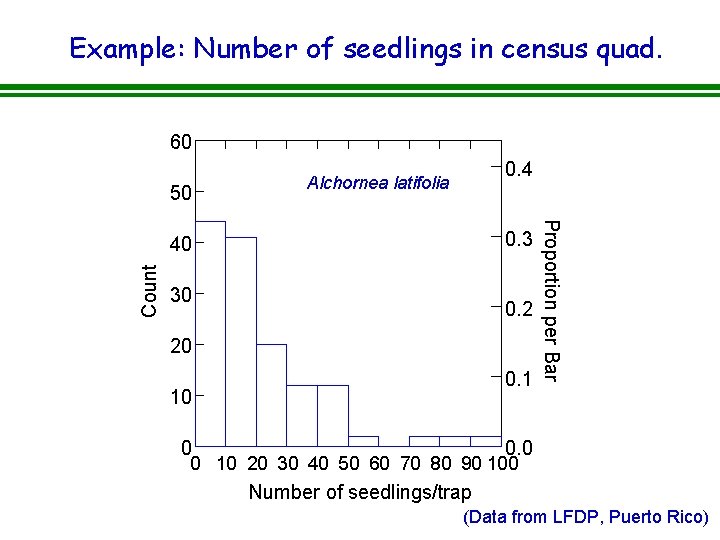

Example: Number of seedlings in census quad.

[Figure: histogram of Alchornea latifolia, number of seedlings/trap (0 to 100); count and proportion per bar.]

(Data from LFDP, Puerto Rico)

![Clustering in space or time](http://slidetodoc.com/presentation_image_h/d778c09d35db151e2487e6e1850e3aef/image-32.jpg)

Clustering in space or time: a Poisson process has E[X] = Var[X]. When E[X] < Var[X], the data are overdispersed (clumped or patchy), which points toward a negative binomial.

![Negative binomial: Table 4.2 & 4.3 in H&M, bycatch data](http://slidetodoc.com/presentation_image_h/d778c09d35db151e2487e6e1850e3aef/image-33.jpg)

Negative binomial: Table 4.2 & 4.3 in H&M. Bycatch data: E[X] = 0.279, Var[X] = 1.56. Suggests temporal or spatial aggregation in the data.
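One common way to quantify the Poisson-vs-overdispersed comparison is the variance-to-mean ratio (index of dispersion), which is about 1 for a Poisson process. A sketch applying it to the bycatch summary (E[X] = 0.279, Var[X] = 1.56) and to hypothetical raw counts; note that `statistics.variance` is the sample (n − 1) variance.

```python
import statistics

def dispersion_index(counts):
    """Variance-to-mean ratio: about 1 for a Poisson process, >1 if overdispersed."""
    return statistics.variance(counts) / statistics.mean(counts)

# Bycatch summary statistics (Table 4.2 & 4.3 in H&M)
mean_bycatch, var_bycatch = 0.279, 1.56
print(round(var_bycatch / mean_bycatch, 2))  # 5.59: far above 1, i.e. clumped

# Hypothetical raw counts: mostly zeros with a few large hauls
counts = [0, 0, 0, 0, 0, 0, 0, 1, 0, 5]
print(round(dispersion_index(counts), 2))
```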

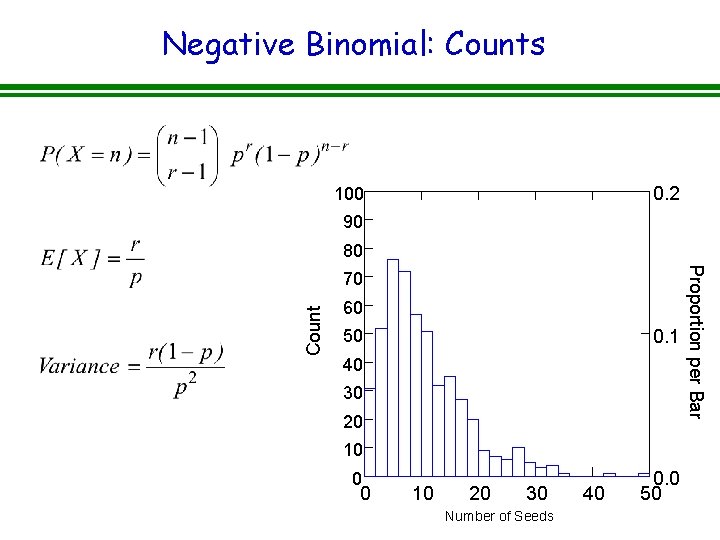

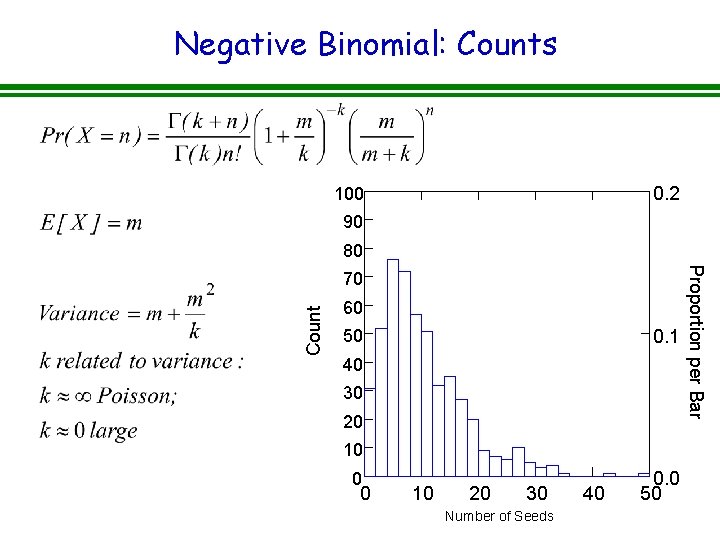

Negative binomial: Counts

[Figure: histogram of NEGBIN, number of seeds (0 to 50); count and proportion per bar.]


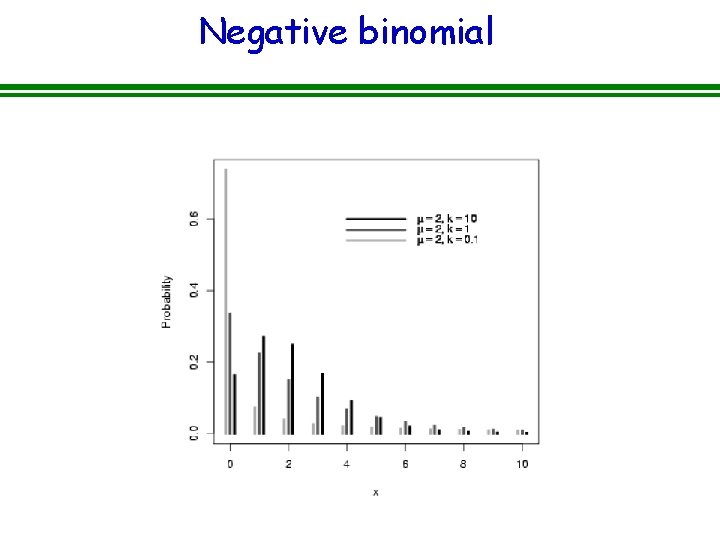

Negative binomial
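The formula on this slide is only in the image. As a hedged sketch, here is the negative binomial in the ecological (mean μ, clumping parameter k) parameterization, which may differ from the one shown; in it $\mathrm{Var}[x] = \mu + \mu^2/k$, so small k means strong clumping and large k approaches the Poisson. The values μ = 2, k = 1.5 are hypothetical.

```python
import math

def negbin_pmf(x, mu, k):
    """Negative binomial with mean mu and clumping parameter k.

    P(x) = Gamma(k + x) / (Gamma(k) x!) * (k / (k + mu))**k * (mu / (k + mu))**x
    Computed on the log scale (lgamma) to avoid overflow.
    """
    log_p = (math.lgamma(k + x) - math.lgamma(k) - math.lgamma(x + 1)
             + k * math.log(k / (k + mu)) + x * math.log(mu / (k + mu)))
    return math.exp(log_p)

mu, k = 2.0, 1.5  # hypothetical values
xs = range(400)   # truncation: the remaining tail mass is negligible here
mean = sum(x * negbin_pmf(x, mu, k) for x in xs)
var = sum((x - mean) ** 2 * negbin_pmf(x, mu, k) for x in xs)
print(round(mean, 6), round(var, 6), round(mu + mu ** 2 / k, 6))
```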

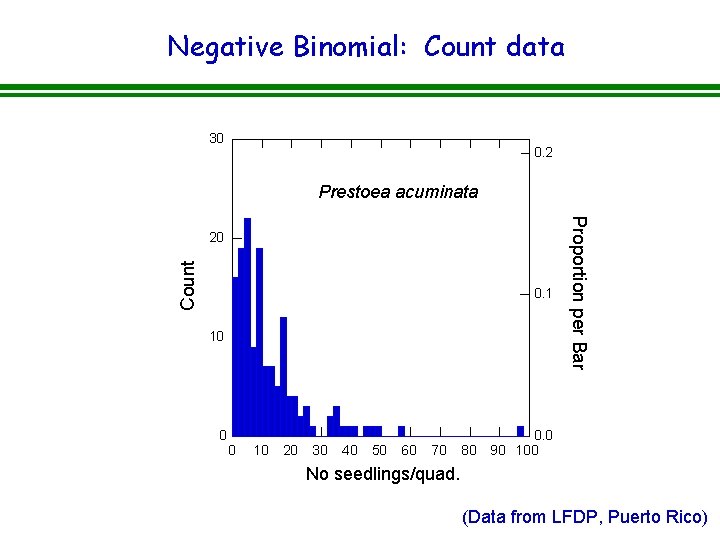

Negative binomial: Count data

[Figure: histogram of Prestoea acuminata, number of seedlings per quadrat (0 to 100); count and proportion per bar.]

(Data from LFDP, Puerto Rico)

![Normal distribution](http://slidetodoc.com/presentation_image_h/d778c09d35db151e2487e6e1850e3aef/image-38.jpg)

Normal distribution: $E[x] = \mu$, $\mathrm{Var}[x] = \sigma^2$

[Figure: normal PDFs with mean = 0 and Var = 0.25, 0.5, 1, 2, 5, 10; Prob(x) vs. X from −5 to 5.]
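A small sketch of the normal density parameterized by its variance, evaluated at the mean for the variances in the figure. The peak height is $1/\sqrt{2\pi\sigma^2}$, so the curve flattens and spreads as the variance increases.

```python
import math

def normal_pdf(x, mu=0.0, var=1.0):
    """Normal density with mean mu and variance var (sigma^2)."""
    return math.exp(-(x - mu) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

# Peak height at the mean for the variances plotted on the slide
for var in (0.25, 0.5, 1, 2, 5, 10):
    print(var, round(normal_pdf(0.0, 0.0, var), 3))
```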

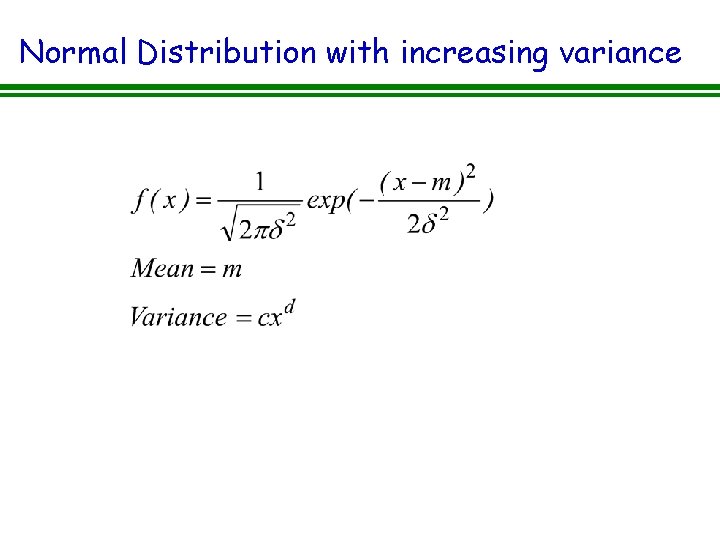

Normal Distribution with increasing variance

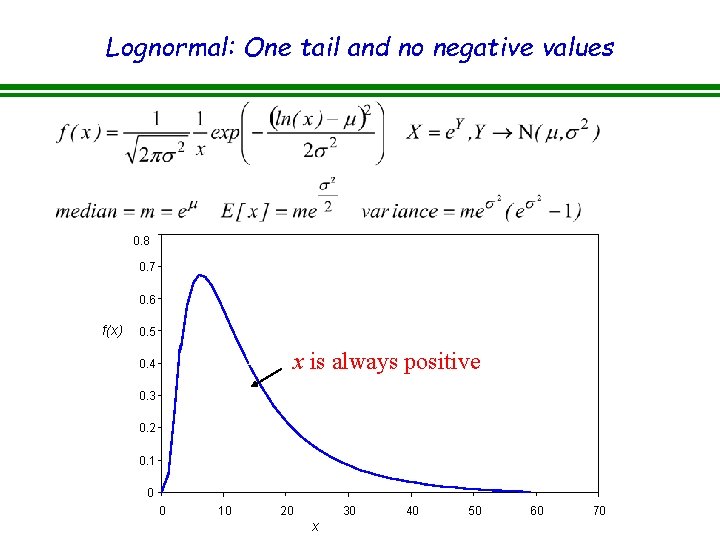

Lognormal: One tail and no negative values (x is always positive)

[Figure: lognormal density f(x) for x from 0 to 70.]
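A sketch of the lognormal: X is lognormal when log(X) is normal with mean μ and standard deviation σ, so the density is zero for x ≤ 0 and the mean on the original scale, $e^{\mu + \sigma^2/2}$, sits to the right of $e^{\mu}$ because of the long right tail. Helper names are illustrative.

```python
import math

def lognormal_pdf(x, mu, sigma):
    """Density of X when log(X) ~ Normal(mu, sigma^2); zero for x <= 0."""
    if x <= 0:
        return 0.0
    z = (math.log(x) - mu) / sigma
    return math.exp(-0.5 * z * z) / (x * sigma * math.sqrt(2 * math.pi))

def lognormal_mean_var(mu, sigma):
    """Mean and variance on the original (untransformed) scale."""
    mean = math.exp(mu + sigma ** 2 / 2)
    var = (math.exp(sigma ** 2) - 1) * math.exp(2 * mu + sigma ** 2)
    return mean, var

print(lognormal_pdf(-1.0, 0.0, 1.0))  # 0.0: no negative values
print(lognormal_mean_var(0.0, 1.0))   # mean exceeds exp(mu) = 1: long right tail
```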

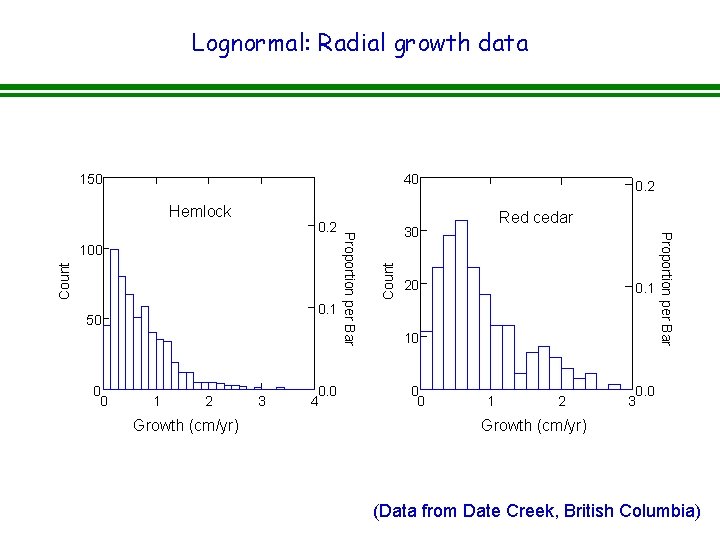

Lognormal: Radial growth data

[Figure: histograms of growth (cm/yr) for hemlock (HEMLOCK) and red cedar (REDCEDAR); count and proportion per bar.]

(Data from Date Creek, British Columbia)

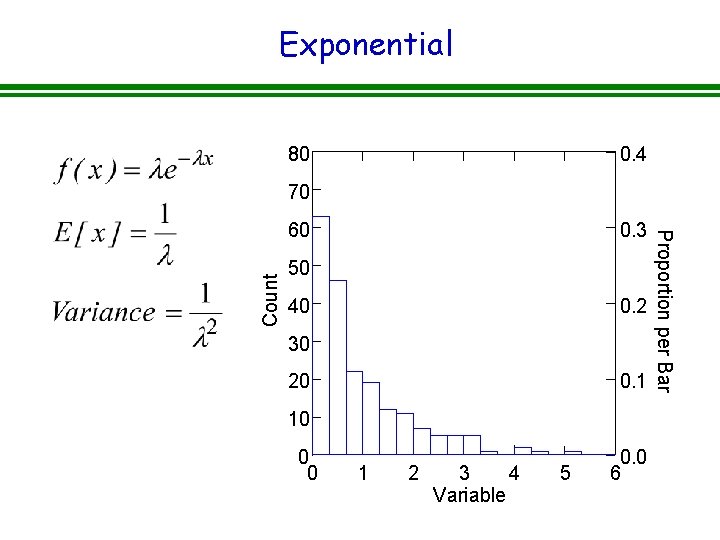

Exponential

[Figure: histogram of an exponentially distributed variable (0 to 6); count and proportion per bar.]

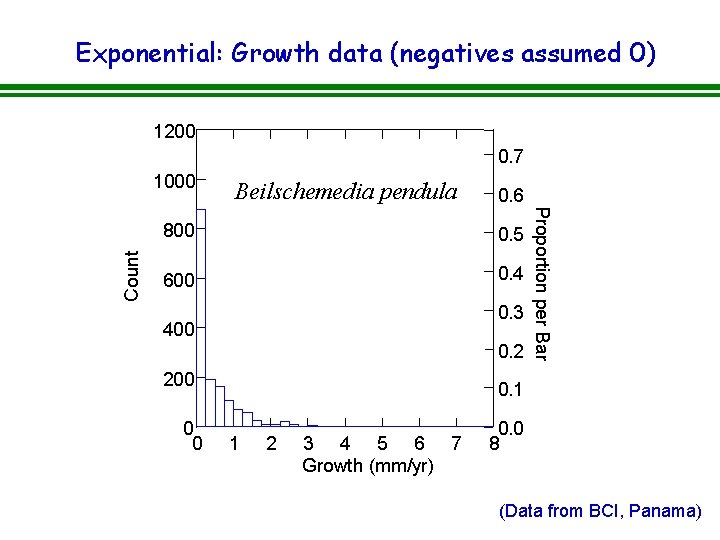

Exponential: Growth data (negatives assumed 0)

[Figure: histogram of growth (mm/yr) for Beilschmiedia pendula; count and proportion per bar.]

(Data from BCI, Panama)
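A sketch of fitting an exponential to growth data like these, using hypothetical values (not the BCI data) with negatives already set to 0. The density is $f(x) = \lambda e^{-\lambda x}$ for x ≥ 0, and the maximum likelihood estimate of the rate is 1 / (sample mean).

```python
import math

def exponential_pdf(x, rate):
    """f(x) = rate * exp(-rate * x) for x >= 0; mean 1/rate, variance 1/rate^2."""
    return rate * math.exp(-rate * x) if x >= 0 else 0.0

def exponential_rate_mle(data):
    """Maximum likelihood estimate of the rate: 1 / sample mean."""
    return len(data) / sum(data)

def exponential_log_likelihood(data, rate):
    return sum(math.log(exponential_pdf(x, rate)) for x in data)

# Hypothetical growth rates (mm/yr), with negative values already set to 0
growth = [0.0, 0.2, 0.5, 0.9, 1.3, 0.1, 2.8, 0.4]
rate = exponential_rate_mle(growth)
print(rate, exponential_log_likelihood(growth, rate))
```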

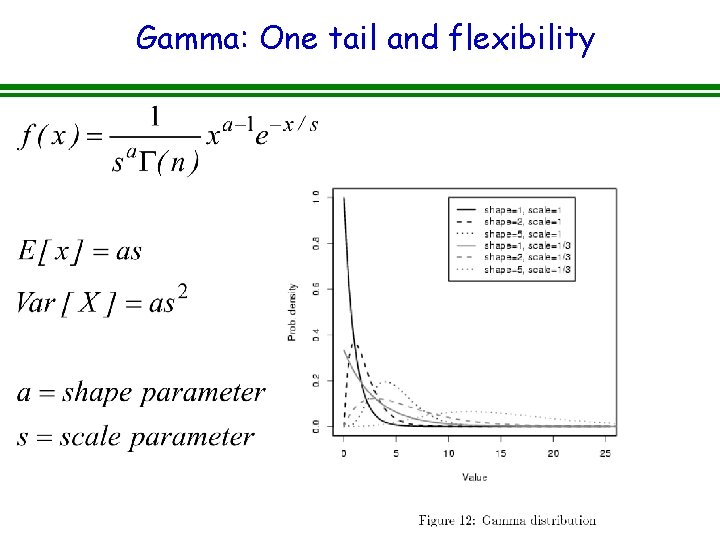

Gamma: One tail and flexibility
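A sketch of the gamma density in the (shape, scale) parameterization, which may differ from the slide's; here mean = shape × scale and variance = shape × scale². The flexibility shows up in the shape parameter: shape < 1 piles mass near zero, shape = 1 is the exponential, and larger shapes give a hump that becomes more symmetric.

```python
import math

def gamma_pdf(x, shape, scale):
    """Gamma density; mean = shape*scale, variance = shape*scale**2."""
    if x < 0:
        return 0.0
    if x == 0:
        # the density at 0 is finite only for shape >= 1
        if shape == 1:
            return 1.0 / scale
        return 0.0 if shape > 1 else math.inf
    log_f = ((shape - 1) * math.log(x) - x / scale
             - math.lgamma(shape) - shape * math.log(scale))
    return math.exp(log_f)

for shape in (0.5, 1.0, 2.0, 5.0):
    print(shape, [round(gamma_pdf(x, shape, 1.0), 3) for x in (0.5, 1.0, 2.0, 4.0)])
```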

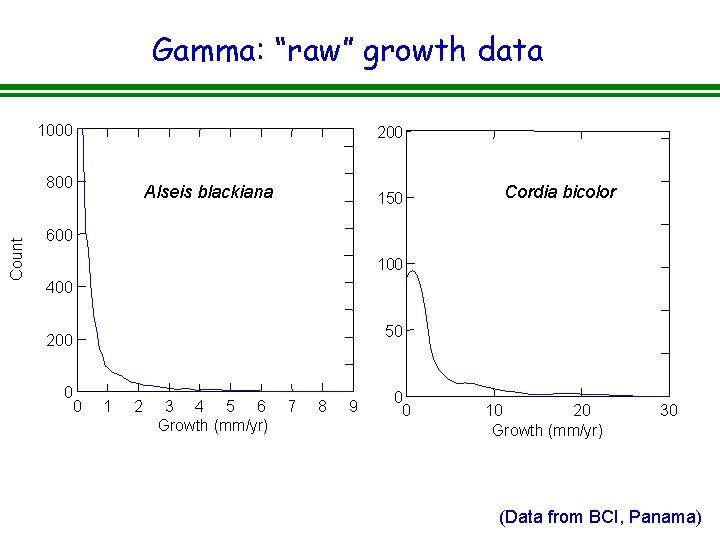

Gamma: “raw” growth data

[Figure: histograms of growth (mm/yr) for Alseis blackiana and Cordia bicolor; counts.]

(Data from BCI, Panama)

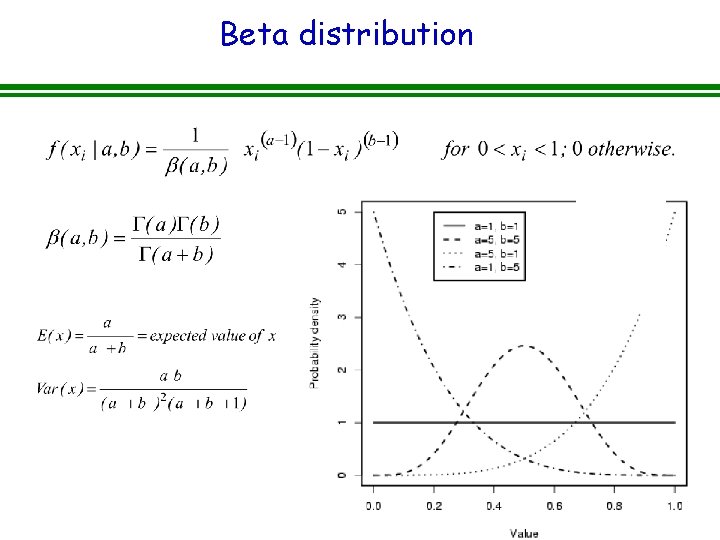

Beta distribution
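The beta distribution lives on (0, 1), which makes it a natural candidate for proportions such as light interception. A sketch of its density and moments; the shape parameters a and b used below are hypothetical.

```python
import math

def beta_pdf(x, a, b):
    """Beta density on (0, 1); mean = a / (a + b)."""
    if not 0 < x < 1:
        return 0.0
    log_beta = math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b)
    return math.exp((a - 1) * math.log(x) + (b - 1) * math.log(1 - x) - log_beta)

def beta_mean_var(a, b):
    mean = a / (a + b)
    var = a * b / ((a + b) ** 2 * (a + b + 1))
    return mean, var

# Hypothetical shape parameters for a proportion such as light interception
print(round(beta_pdf(0.5, 2.0, 2.0), 6))  # 1.5
print(beta_mean_var(2.0, 5.0))
```

With a = b = 1 the beta reduces to the uniform distribution on (0, 1).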

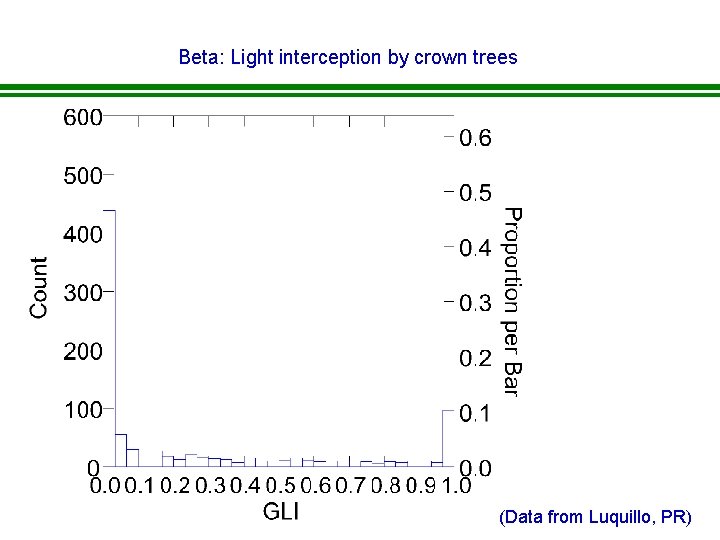

Beta: Light interception by crown trees (Data from Luquillo, PR)

Mixture models

- What do you do when your data don’t fit any known distribution?
  - Add covariates
  - Mixture models
    - Discrete
    - Continuous

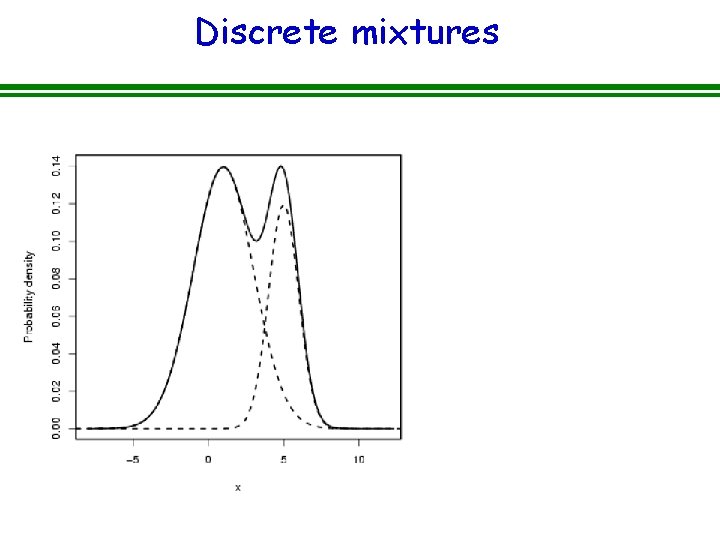

Discrete mixtures

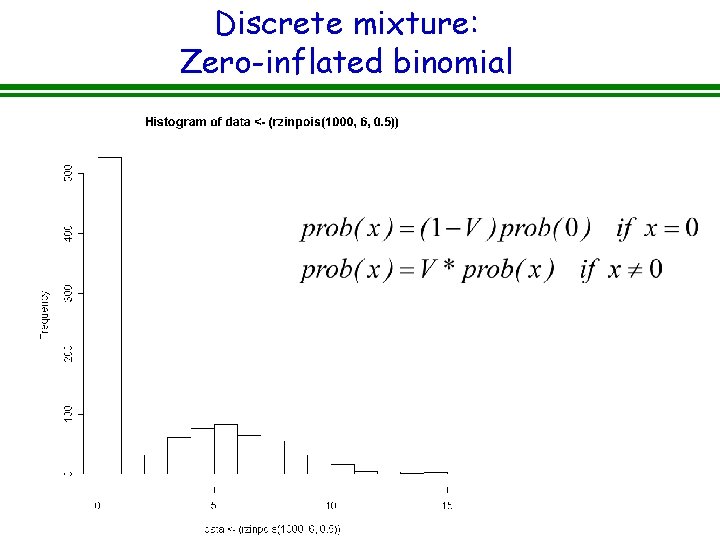

Discrete mixture: Zero-inflated binomial
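A sketch of a zero-inflated binomial as a discrete mixture, assuming the usual form (which may differ in notation from the slide): with probability z an observation is a structural zero; otherwise x follows a binomial. In a seed-predation setting, a structural zero might be a station predators never found (an illustrative interpretation). Parameter values are hypothetical.

```python
from math import comb

def binomial_pmf(x, n, p):
    return comb(n, x) * p ** x * (1 - p) ** (n - x)

def zib_pmf(x, n, p, z):
    """Zero-inflated binomial: mixture of a point mass at 0 and a binomial.

    With probability z the observation is a structural zero;
    otherwise x ~ Binomial(n, p), which can also produce zeros.
    """
    if x == 0:
        return z + (1 - z) * binomial_pmf(0, n, p)
    return (1 - z) * binomial_pmf(x, n, p)

# Hypothetical values: 5 seeds per station, p = 0.6, z = 0.3
n, p, z = 5, 0.6, 0.3
probs = [zib_pmf(x, n, p, z) for x in range(n + 1)]
print([round(q, 3) for q in probs])
print(round(sum(probs), 12))  # 1.0
```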

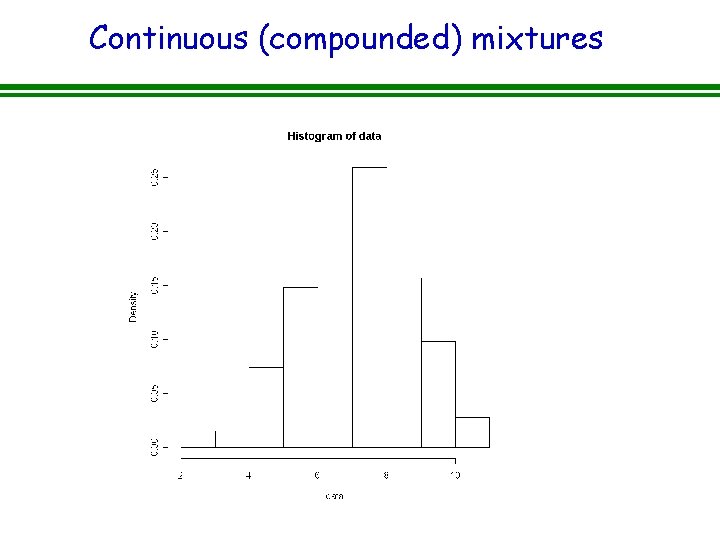

Continuous (compounded) mixtures
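A standard example of a continuous (compounded) mixture is the gamma-Poisson: each unit has its own Poisson rate λ drawn from a gamma distribution, and the resulting counts are negative binomial with mean μ and variance μ + μ²/k. A simulation sketch under that assumption, with hypothetical μ = 2, k = 1.5; Poisson draws use Knuth's multiplication method, since the `random` module has no Poisson sampler.

```python
import math
import random
import statistics

def poisson_draw(lam, rng):
    """Knuth's multiplication method; adequate for moderate lam."""
    limit = math.exp(-lam)
    k, prod = 0, rng.random()
    while prod > limit:
        k += 1
        prod *= rng.random()
    return k

def gamma_poisson_sample(mu, k, n, seed=0):
    """Each unit gets lam ~ Gamma(shape=k, scale=mu/k), so E[lam] = mu;
    its count is then Poisson(lam). The compound is negative binomial."""
    rng = random.Random(seed)
    return [poisson_draw(rng.gammavariate(k, mu / k), rng) for _ in range(n)]

mu, k = 2.0, 1.5  # hypothetical values
counts = gamma_poisson_sample(mu, k, 20000)
print(statistics.mean(counts), statistics.variance(counts))
# Negative binomial predictions: mean mu, variance mu + mu**2 / k
print(mu, mu + mu ** 2 / k)
```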

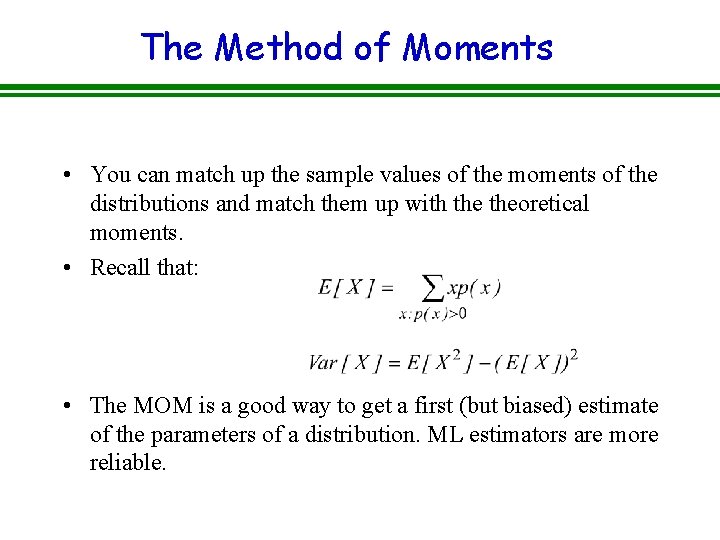

The Method of Moments

- Match the sample moments of the data (mean, variance, …) to the theoretical moments of the distribution, then solve for the parameters.
- Recall that:
- The MOM is a good way to get a first (but biased) estimate of the parameters of a distribution. Maximum likelihood (ML) estimators are more reliable.
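The moment formulas recalled on this slide are only in the image. As a hedged illustration of the idea, here is the method of moments for a gamma distribution (our choice of example, not necessarily the slide's): setting mean = shape × scale and variance = shape × scale² equal to the sample values gives shape = mean²/variance and scale = variance/mean. The growth values are hypothetical.

```python
import statistics

def gamma_mom(data):
    """Method-of-moments estimates (shape, scale) for a gamma distribution.

    Theoretical moments: mean = shape*scale, var = shape*scale**2.
    Solving gives shape = mean**2 / var and scale = var / mean.
    """
    m = statistics.mean(data)
    v = statistics.variance(data)  # sample (n - 1) variance
    return m ** 2 / v, v / m

# Hypothetical growth data (mm/yr)
growth = [0.4, 1.1, 0.7, 2.5, 0.9, 1.6, 3.2, 0.3]
shape, scale = gamma_mom(growth)
print(shape, scale)
```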

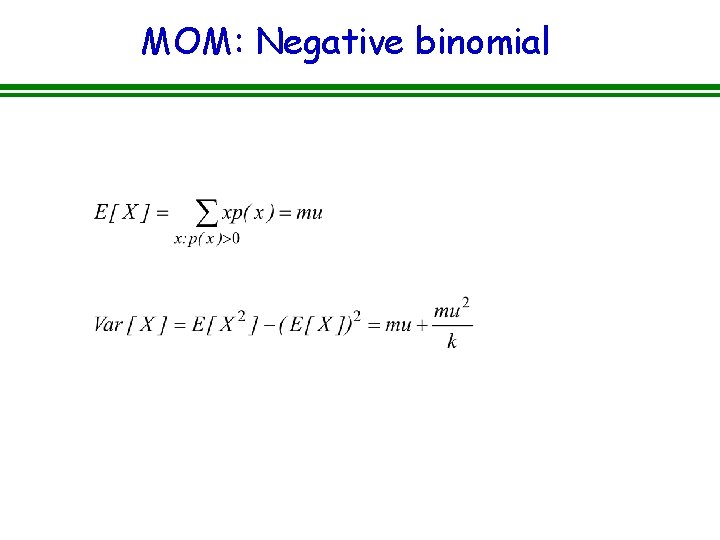

MOM: Negative binomial
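A sketch of MOM for the negative binomial, assuming the (mean μ, clumping k) parameterization with $\mathrm{Var}[X] = \mu + \mu^2/k$: matching moments gives μ = sample mean and k = mean² / (variance − mean). Applied to the bycatch summary from Table 4.2 & 4.3 in H&M (E[X] = 0.279, Var[X] = 1.56):

```python
def negbin_mom(mean, var):
    """Method-of-moments estimates (mu, k) for the negative binomial.

    Matches mean = mu and var = mu + mu**2 / k, so k = mean**2 / (var - mean).
    Only defined when the data are overdispersed (var > mean).
    """
    if var <= mean:
        raise ValueError("var must exceed mean (overdispersion) for a finite k")
    return mean, mean ** 2 / (var - mean)

mu, k = negbin_mom(0.279, 1.56)
print(mu, round(k, 3))  # 0.279 0.061: k far below 1, strongly clumped
```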
