A Dark Web Intelligence Strategy for Law Enforcement

Team 7: Jennifer Chavez, Juanita Maya, Shavvon Cintron, Albert Elezovic, Krishna Bathula, Dr. Charles Tappert

Why the Dark Web and Law Enforcement
• Lack of knowledge and resources
• Increase of crimes on the Dark Web
• Providing an inside view of the Dark Web

Client
● Dr. Charles Tappert
● Krishna Bathula

Summary of User Stories Collected
• On the Dark Web, it is more difficult to collect data and turn it into evidence, so government agencies must be able to conduct flawless investigations both digitally and physically. Law enforcement agrees that the main problem with investigating the Dark Web is a lack of knowledge and training, which leads investigators to miss key information or evidence.

Summary of User Stories Collected
• Illicit items on the Dark Web can be found by building an analytical framework that scrapes Dark Web marketplaces of interest and correlates that data with Maltego. Gathering information from the Dark Web requires a web crawler.
• The Cybersecurity and Infrastructure Security Agency (CISA) website was searched for "dark web, tor, onion, deep web". The search returned few results, none significantly relevant to the Dark Web or how it operates. This shows an absence of public information about the Dark Web, including for local law enforcement outside the three-letter agencies.

Design Decisions
● The decision was made to create a framework for law enforcement investigations on the Dark Web
● The further we researched, the more we saw the need for a guide for smaller law enforcement agencies
  ○ The guide will assist law enforcement agencies with the following:
    ■ How to access the Dark Web
      ● How to navigate Tor
    ■ How to use Kali Linux
    ■ What open-source tools officers can use for Dark Web investigation
      ● What to look for
    ■ Possible trainings for officers/investigators
      ● Free basic coding courses

Trade Offs
• Nodes idea: There was not enough time to create nodes and extract data. In lieu of creating nodes, we still used Wireshark as a tool within our project.
• Crawler to extract data using key terms: With no programmer in the group, the idea was to create a crawler that would extract data using key terms. We saw that building one would exceed our timeframe, so the trade-off was to study what crawlers do as a tool rather than create one.
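The key-term extraction idea can be sketched in a few lines. This is a minimal illustration, not the crawler the team planned: it assumes the page has already been saved as HTML, uses only the Python standard library, and the names `TextExtractor`, `find_key_terms`, and the sample page are hypothetical.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects visible text, skipping <script> and <style> contents."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def find_key_terms(html, key_terms):
    """Return {term: [text chunks containing it]} for one HTML page."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    hits = {}
    for term in key_terms:
        matches = [c for c in parser.chunks if term.lower() in c.lower()]
        if matches:
            hits[term] = matches
    return hits


# Hypothetical page content and key terms, for illustration only.
sample_page = """
<html><body>
  <h1>Scarlett's Secret</h1>
  <p>Exam answers and research papers for sale.</p>
  <script>var tracking = "exam";</script>
</body></html>
"""
print(find_key_terms(sample_page, ["exam", "essay"]))
```

Matching is a case-insensitive substring test, which keeps the sketch short but will also match inside longer words; a real tool would likely need word-boundary or phrase matching.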

Team Meetings
• meetmsk.zoom.us
• Tuesday & Thursday 7 pm (sometimes weekends)
• Daily communication (SMS text group chat, Slack, WhatsApp)
*Sometimes Disney Theme*

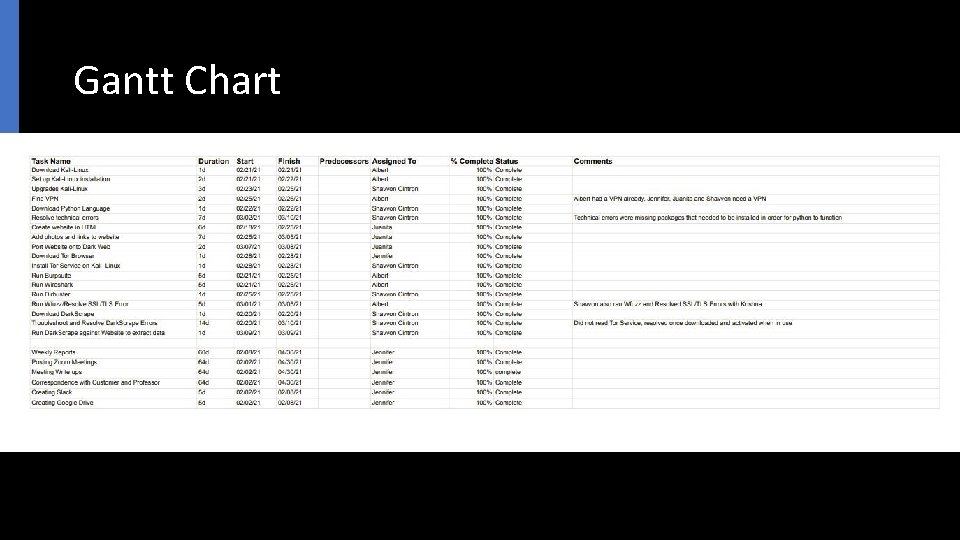

Gantt Chart

Testing Strategy
• The approach in the testing strategy was to build a website on the dark web and penetration-test it. The idea was to construct a guide on how to extract data and explain it to a law enforcement agency in plain terms.
• The tools used were Burp Suite, Wireshark, DirBuster, Wfuzz, and DarkScrape. Most of these tools are accessible through the Kali Linux workspace. DarkScrape is a crawling tool that needs to be installed on Kali Linux.

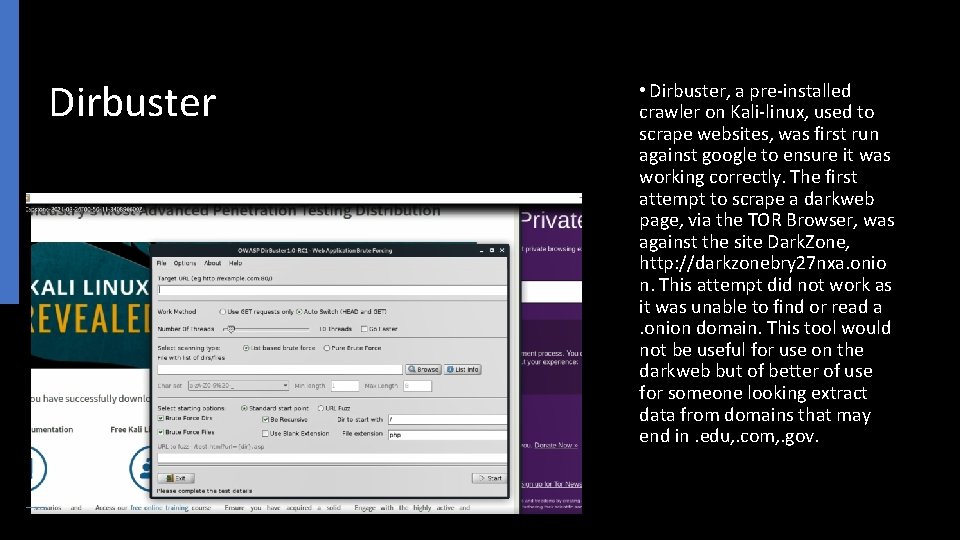

DirBuster
• DirBuster, a crawler pre-installed on Kali Linux and used to scrape websites, was first run against Google to ensure it was working correctly. The first attempt to scrape a dark web page, via the Tor Browser, was against the site DarkZone, http://darkzonebry27nxa.onion. This attempt did not work because DirBuster was unable to find or read a .onion domain. The tool would not be useful on the dark web but would be of better use for someone looking to extract data from domains that end in .edu, .com, or .gov.
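One likely explanation, offered here as an assumption rather than a finding from the tests, is that .onion names do not exist in public DNS and resolve only inside the Tor network, so a tool must send its traffic through Tor's SOCKS proxy. The sketch below shows how a script might choose proxy settings per URL. The function name `proxies_for` is hypothetical, the address `127.0.0.1:9050` assumes a locally running Tor daemon on its default SOCKS port, and the returned dictionary uses the proxy format accepted by HTTP clients such as `requests` when SOCKS support is installed.

```python
from urllib.parse import urlparse

# Assumption: a local Tor daemon listening on its default SOCKS port (9050).
# The Tor bundled with Tor Browser usually listens on 9150 instead.
TOR_SOCKS_PROXY = "socks5h://127.0.0.1:9050"


def proxies_for(url):
    """Return proxy settings for a URL: Tor for .onion hosts, none otherwise.

    The "socks5h" scheme asks the proxy to resolve the hostname, which is
    needed because .onion names cannot be resolved through public DNS.
    """
    host = (urlparse(url).hostname or "").lower()
    if host.endswith(".onion"):
        return {"http": TOR_SOCKS_PROXY, "https": TOR_SOCKS_PROXY}
    return {}


print(proxies_for("http://darkzonebry27nxa.onion"))
print(proxies_for("https://www.google.com"))
```

The helper only selects settings; it makes no network connection, so it can be checked without Tor running.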

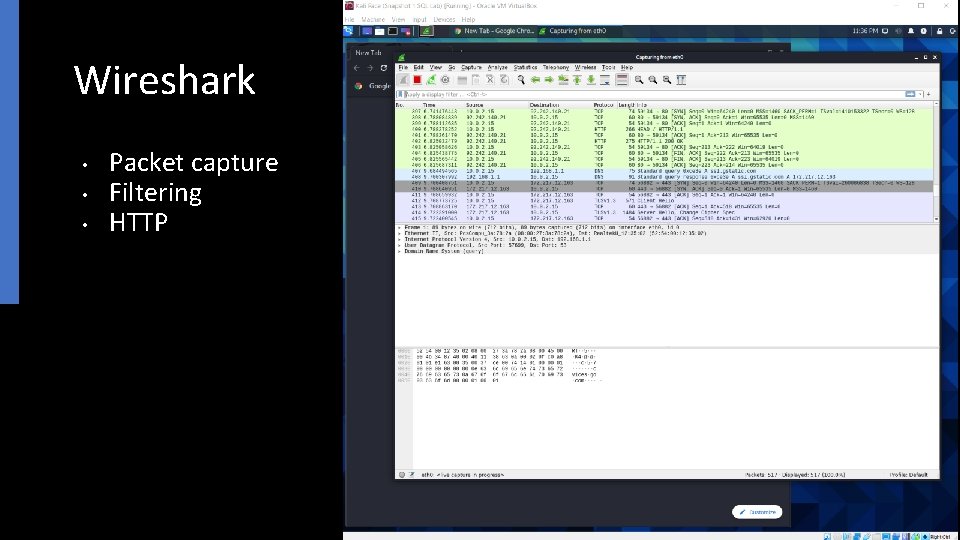

Wireshark
• Packet capture
• Filtering
• HTTP

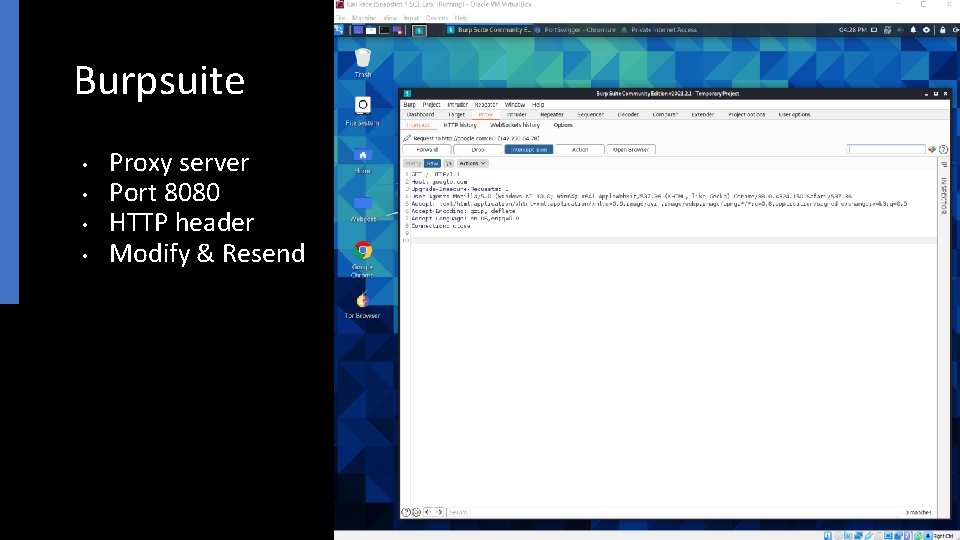

Burp Suite
• Proxy server, port 8080
• HTTP header: modify & resend

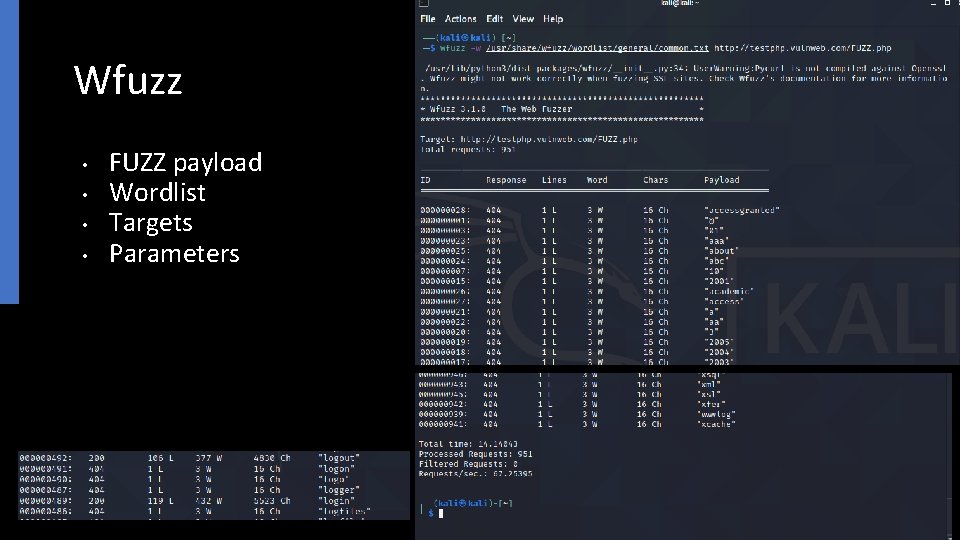

Wfuzz
• FUZZ payload, wordlist
• Targets, parameters
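The FUZZ idea can be illustrated without running Wfuzz itself: each wordlist entry is substituted for the FUZZ keyword in a target URL to produce the requests to try. The sketch below is an approximation of that expansion step only, not Wfuzz's implementation. The function name `expand_fuzz` and the wordlist are hypothetical, and the target reuses the local test address `127.0.0.1:8080` from this project.

```python
def expand_fuzz(template, wordlist, keyword="FUZZ"):
    """Return one URL per wordlist entry, substituting it for the keyword."""
    if keyword not in template:
        raise ValueError(f"template must contain the {keyword!r} placeholder")
    urls = []
    for word in wordlist:
        word = word.strip()
        if word:  # skip blank lines from the wordlist
            urls.append(template.replace(keyword, word))
    return urls


# Hypothetical wordlist; entries may carry blank lines or trailing newlines
# when read from a file.
candidates = expand_fuzz(
    "http://127.0.0.1:8080/FUZZ",
    ["admin", "", "login\n", "images"],
)
for url in candidates:
    print(url)
```

The sketch only builds the candidate URLs; sending them and comparing response codes or sizes is what the real tool adds.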

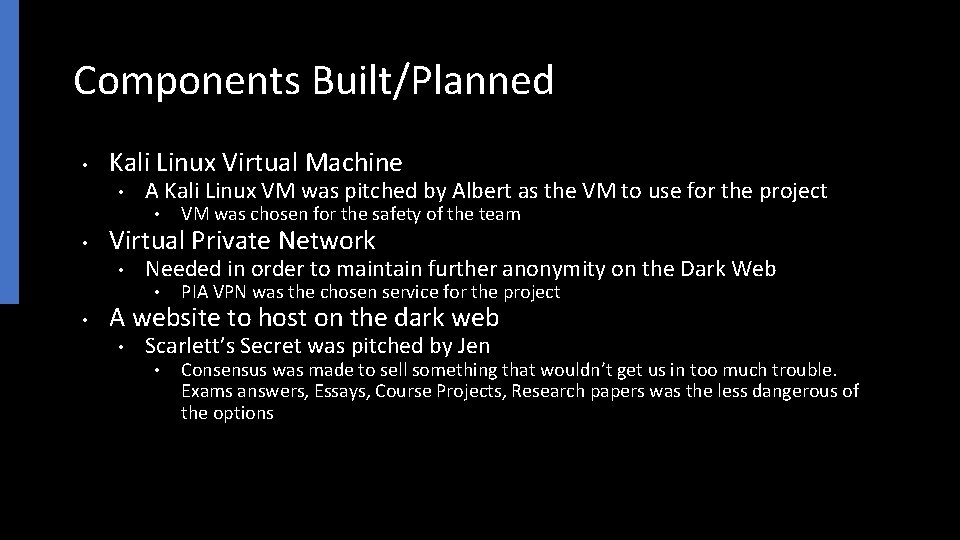

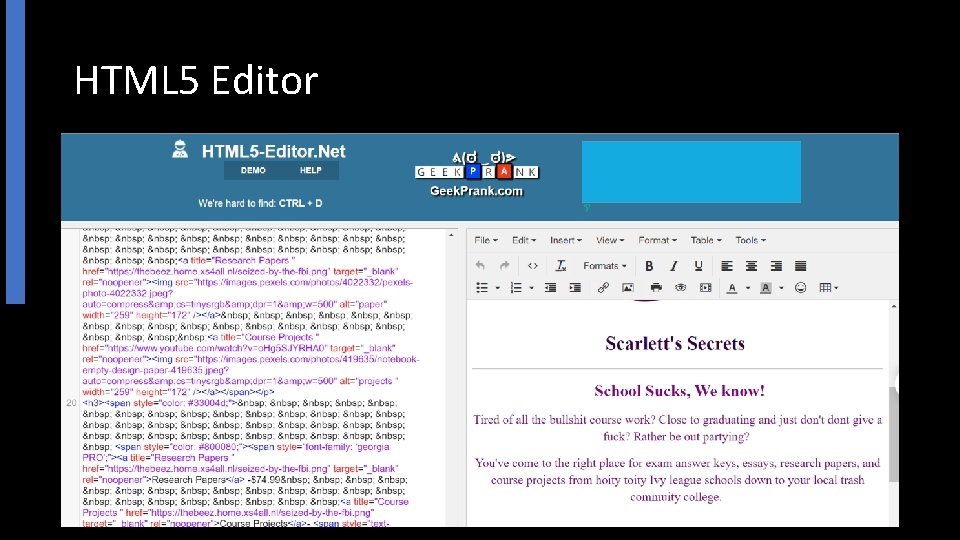

Components Built/Planned
• Kali Linux Virtual Machine
  • A Kali Linux VM was pitched by Albert as the VM to use for the project
  • A VM was chosen for the safety of the team
• Virtual Private Network
  • Needed in order to maintain further anonymity on the Dark Web
  • PIA VPN was the chosen service for the project
• A website to host on the dark web
  • Scarlett’s Secret was pitched by Jen
  • Consensus was reached to sell something that wouldn’t get us in too much trouble. Exam answers, essays, course projects, and research papers were the least dangerous of the options

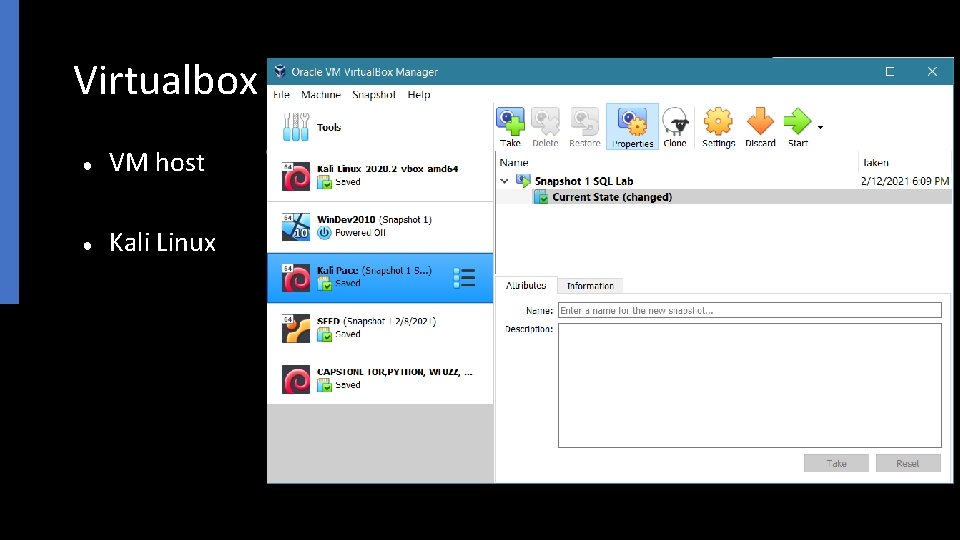

VirtualBox
● VM host
● Kali Linux

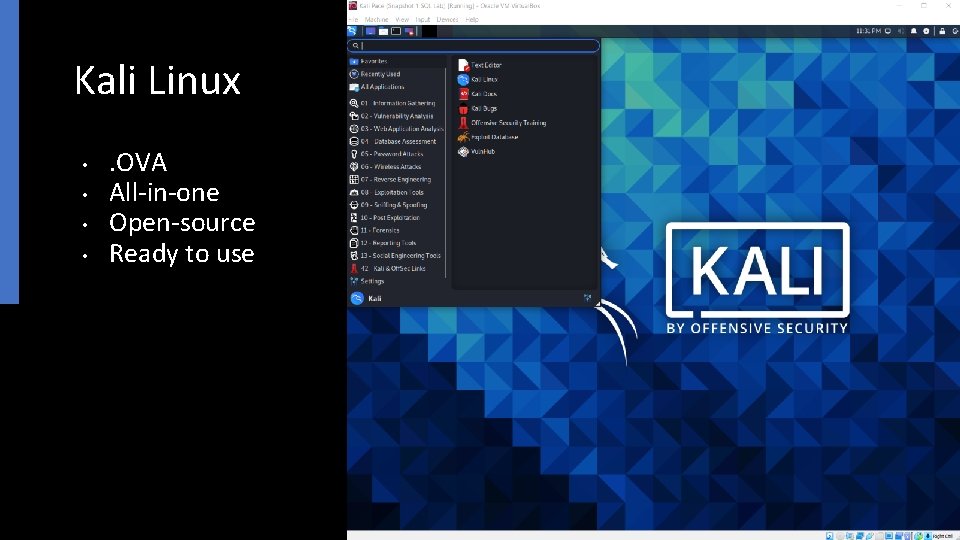

Kali Linux
• .OVA, all-in-one
• Open-source, ready to use

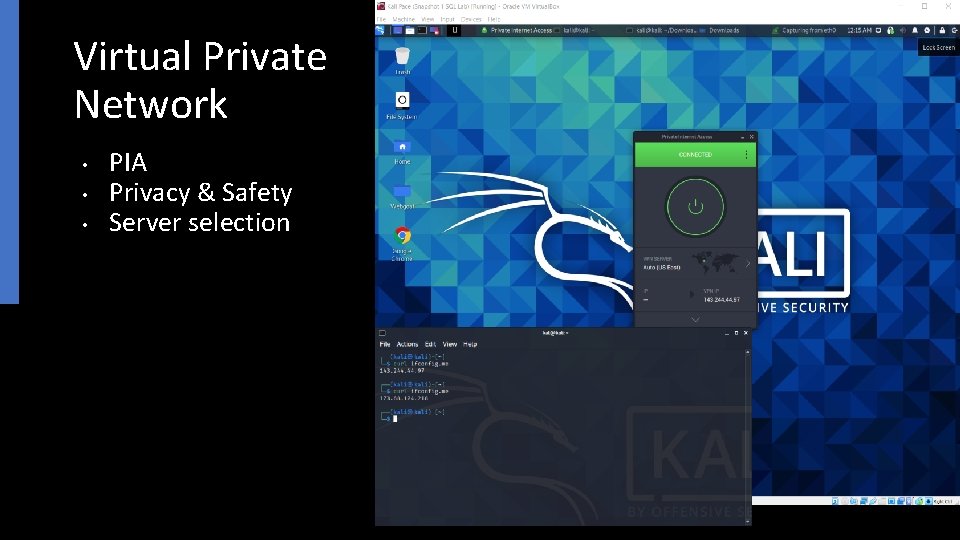

Virtual Private Network
• PIA
• Privacy & Safety
• Server selection

HTML5 Editor

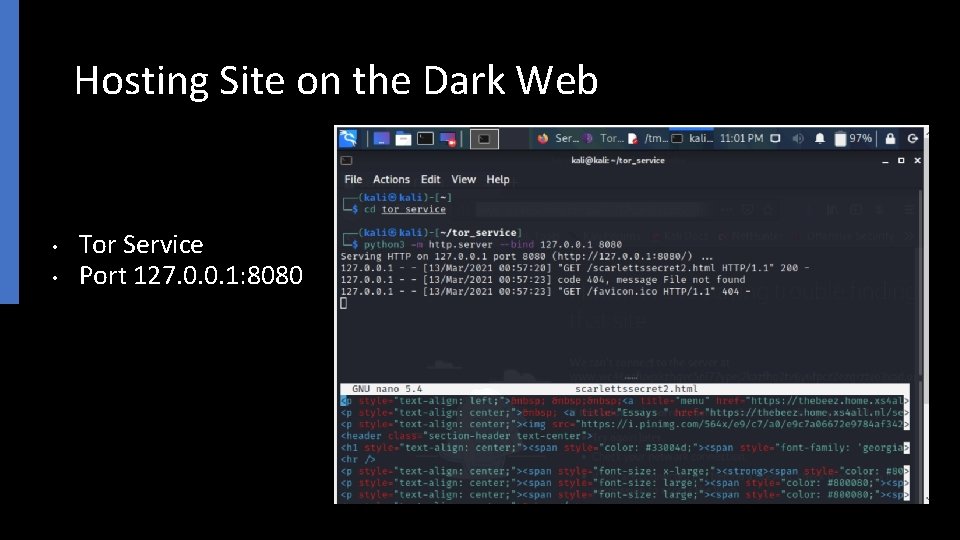

Hosting Site on the Dark Web
• Tor Service
• Port 127.0.0.1:8080
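As a rough sketch of what this setup involves, a Tor onion service is declared in the `torrc` file with the `HiddenServiceDir` and `HiddenServicePort` options, which map a port on the .onion address to a local web server. The helper below just renders those two lines. The function name, the directory `/var/lib/tor/hidden_service/`, and the public-facing port 80 are assumptions for illustration; only the local address `127.0.0.1:8080` comes from the slide.

```python
def hidden_service_config(service_dir, virtual_port, target_host, target_port):
    """Render the two torrc lines that declare an onion service."""
    return "\n".join([
        # Directory where Tor stores the service's keys and hostname file.
        f"HiddenServiceDir {service_dir}",
        # Port exposed on the .onion address, then the local server behind it.
        f"HiddenServicePort {virtual_port} {target_host}:{target_port}",
    ])


# Assumed directory and virtual port; the local target is from the slide.
config = hidden_service_config("/var/lib/tor/hidden_service/", 80, "127.0.0.1", 8080)
print(config)
```

After Tor restarts with lines like these, it writes the generated .onion hostname into a `hostname` file inside the service directory.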

Scarlett’s Secret

Describe what was accomplished to this checkpoint (complete a prototype system, etc.)
The group has accomplished several things:
● Created a test environment that is transferable using a VM
● Created a dark website in HTML with interactive content
● Hosted the dark website on the dark web using a VM
● Created a dark web crawler
● Selected and prepared penetration testing tools for experimental use
All without a programmer. All open source and free. Our prototype is in its final stages and ready to be deployed and tested in a live test.

Describe what has been easy/difficult during this half of the semester
Difficult
● Creating an idea that took a novel and unique approach utilizing all of our skills, and then fine-tuning that idea into something feasible and reasonable
● Setting up the website and creating it from scratch, as we are not programmers
● Finding tools that might work on the Dark Web
● Configuring everything to work exactly. The technical work has been more difficult
Easy
● Working together: we were all on the same page and understood our responsibilities as well as our shortcomings
● Deciding on the idea of a website
● Creative direction and creative thinking have been easier

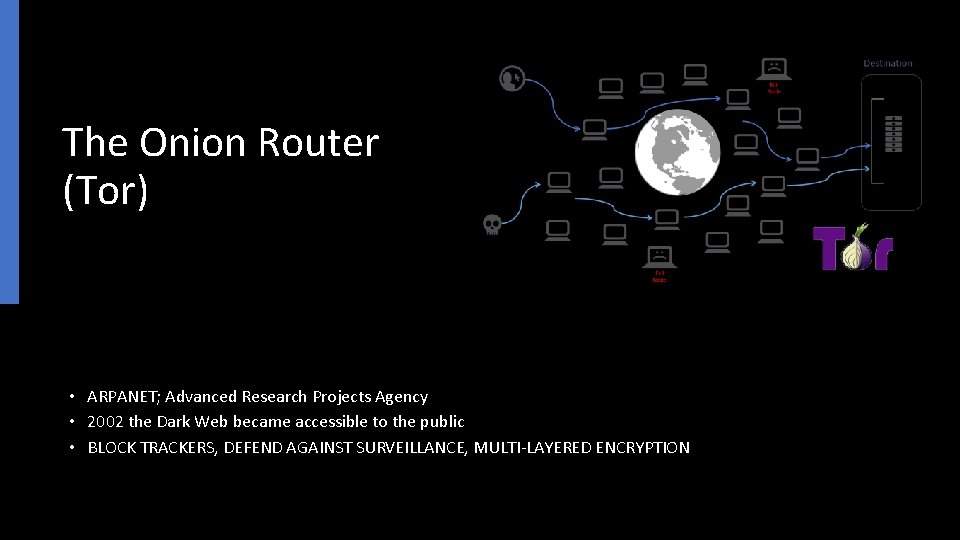

The Onion Router (Tor)
• ARPANET; Advanced Research Projects Agency
• In 2002, the Dark Web became accessible to the public
• Block trackers, defend against surveillance, multi-layered encryption

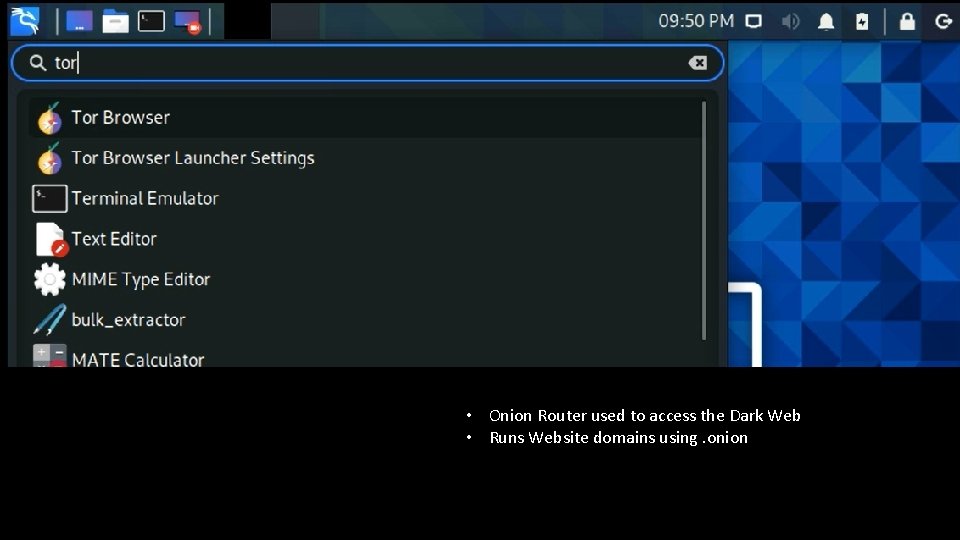

Tor Browser
• Onion Router used to access the Dark Web
• Runs website domains using .onion

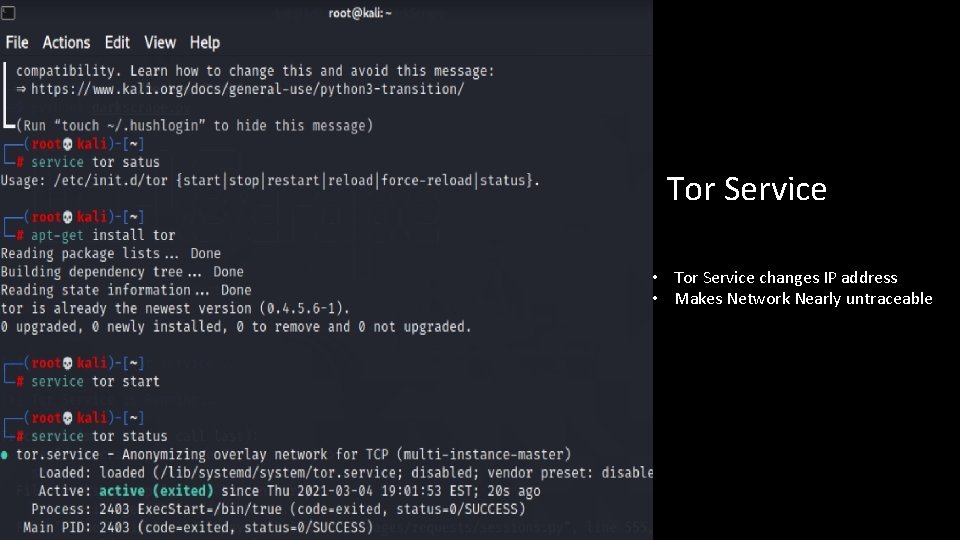

Tor Service
• Tor Service changes IP address
• Makes network nearly untraceable

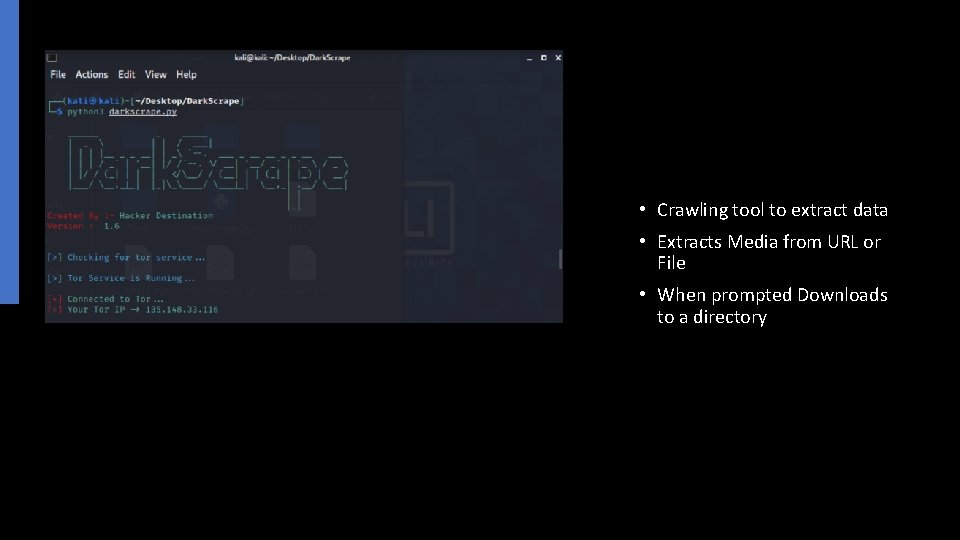

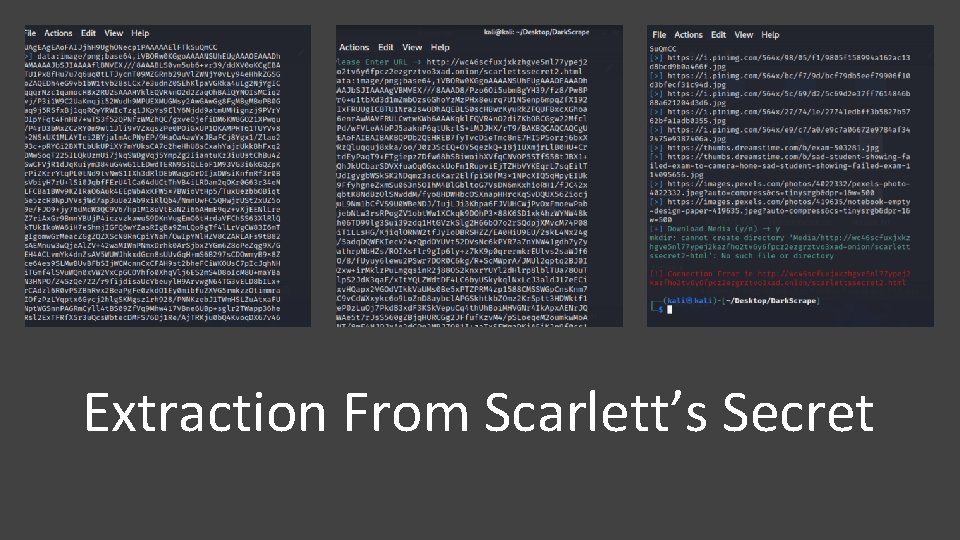

• Crawling tool to extract data
• Extracts media from URL or file
• When prompted, downloads to a directory

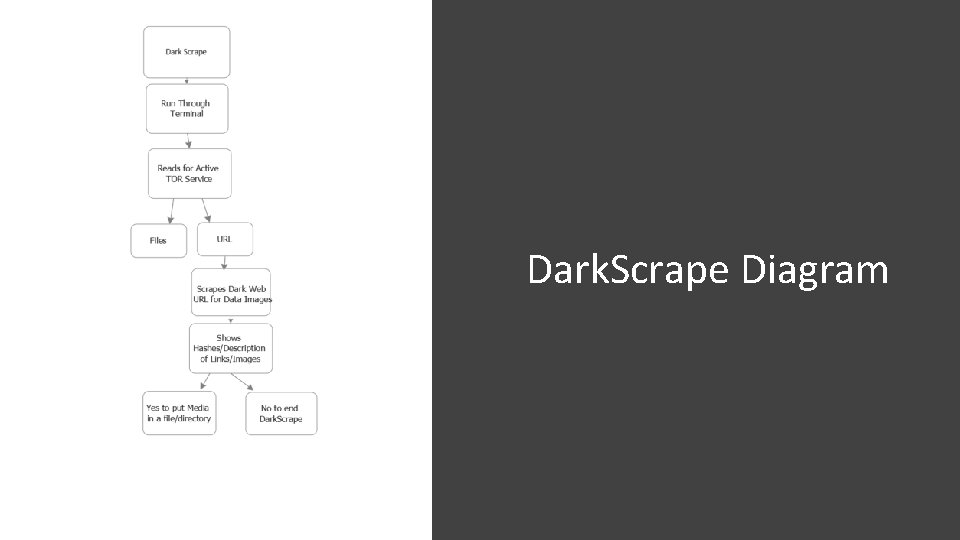

DarkScrape Diagram

Extraction From Scarlett’s Secret

Scarlett’s Secret
Extracted information such as thumbnails and photograph descriptions. Extracted hashes from the website; the hashes usually correspond to photographs. Hashes are beneficial because they can be searched and saved into a database during investigations.
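The way hashes support an investigation can be sketched as a lookup: each extracted file is hashed, and the digest is compared against a set of digests already on record. This is a minimal illustration under stated assumptions, not the method used in the extraction above. SHA-256 is an assumed algorithm, the in-memory set stands in for a real database, and the function names and file contents are hypothetical.

```python
import hashlib


def sha256_of(data):
    """Return the SHA-256 hex digest of a bytes object."""
    return hashlib.sha256(data).hexdigest()


def match_known_hashes(files, known_hashes):
    """Return the names of files whose digest appears in known_hashes.

    files: {name: bytes}; known_hashes: set of hex digest strings.
    """
    return sorted(
        name for name, data in files.items()
        if sha256_of(data) in known_hashes
    )


# Hypothetical extracted files; real input would be bytes read from disk.
extracted = {
    "thumb1.jpg": b"example image bytes",
    "thumb2.jpg": b"other image bytes",
}
# Stand-in for a database of digests recorded in earlier investigations.
known = {sha256_of(b"example image bytes")}

matches = match_known_hashes(extracted, known)
print(matches)
```

A cryptographic hash matches only byte-identical files, so a resized or re-encoded photograph would not match.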

Describe what you plan for the remainder of the semester
● Use the web crawler and penetration tools successfully against the website we created on the Dark Web and gather data as a proof of concept for our topic
● Further improve the website and attempt to add more data and content to allow for better data scraping and improved tool use
● Find and use more tools, if possible, against the website

Conclusion
