Towards a VQA Suite: Architecture Tweaks, Learning Rate Schedules, and Ensembling

- Slides: 22

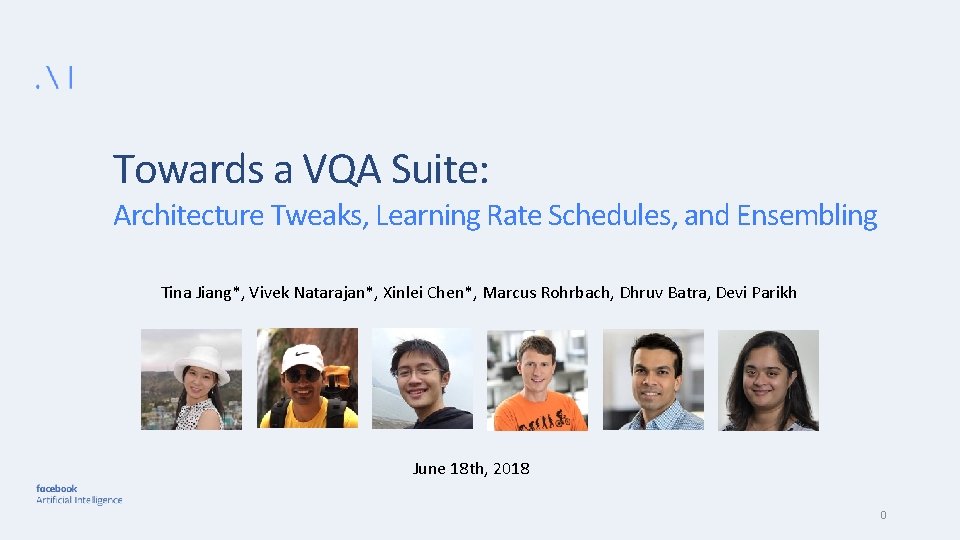

Towards a VQA Suite: Architecture Tweaks, Learning Rate Schedules, and Ensembling

Tina Jiang*, Vivek Natarajan*, Xinlei Chen*, Marcus Rohrbach, Dhruv Batra, Devi Parikh

June 18th, 2018

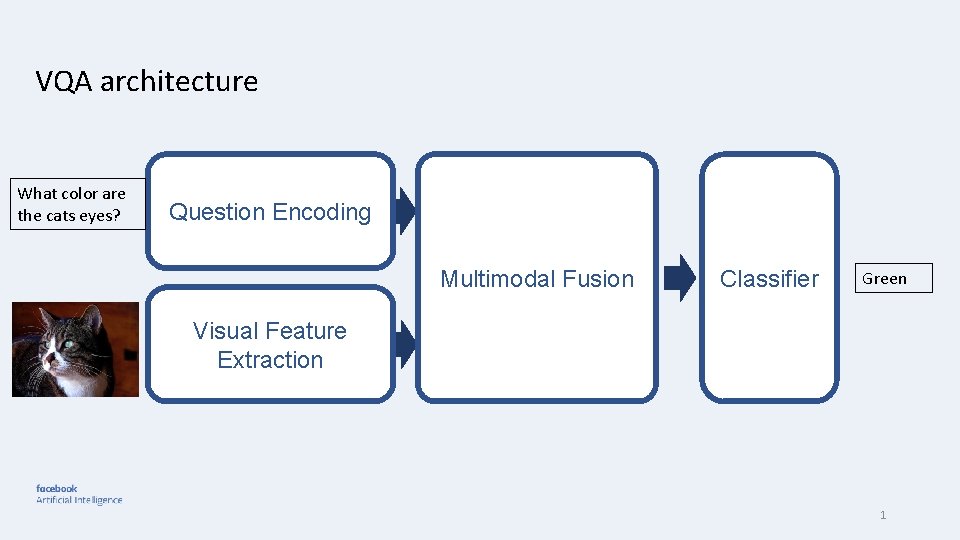

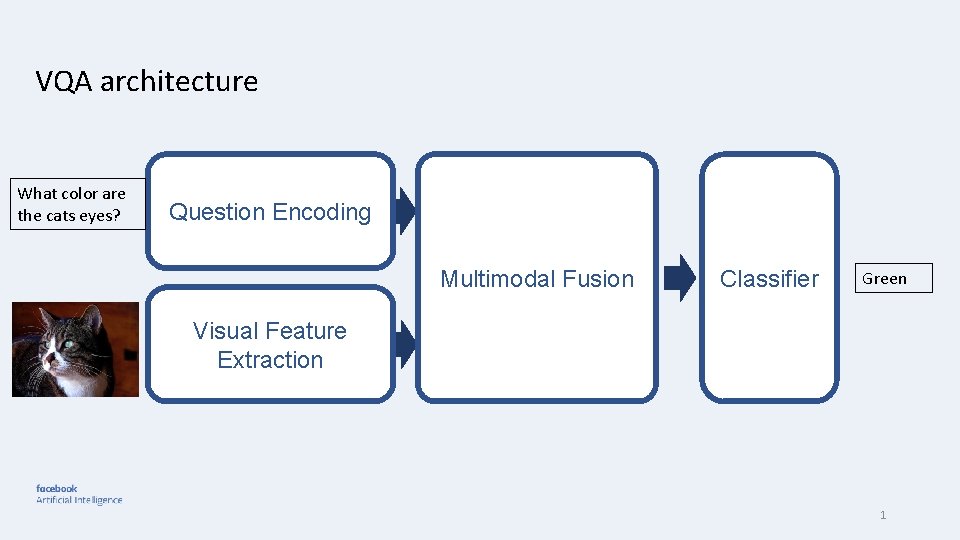

VQA architecture

Pipeline stages: Visual Feature Extraction and Question Encoding, then Multimodal Fusion, then Classifier.

Example: Q: What color are the cat's eyes? A: Green

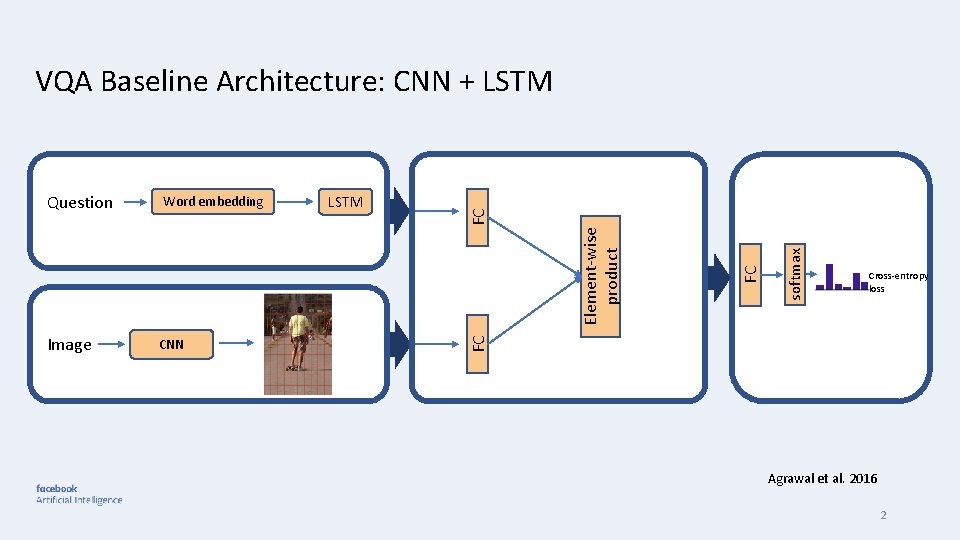

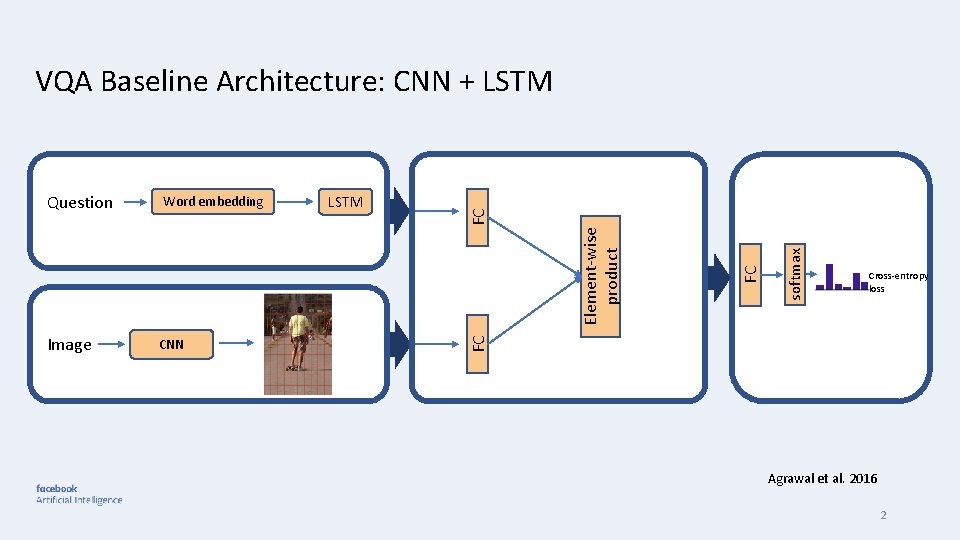

VQA Baseline Architecture: CNN + LSTM (Agrawal et al. 2016)

Diagram labels: Image, CNN, FC; Question, word embedding, LSTM, FC; element-wise product; FC; softmax; cross-entropy loss.
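The fusion step named on this slide can be sketched in a few lines. This is a minimal illustration only, assuming the image and question features have already been projected to the same dimension; the function names, toy numbers, and weights are hypothetical and are not taken from the baseline's code.

```python
import math


def linear(x, weights, bias):
    """Fully connected layer: one output per (weight row, bias) pair."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def softmax(logits):
    """Numerically stable softmax over a list of scores."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_and_classify(image_feat, question_feat, weights, bias):
    """Element-wise product fusion followed by FC + softmax."""
    if len(image_feat) != len(question_feat):
        raise ValueError("features must share one dimension before fusion")
    fused = [i * q for i, q in zip(image_feat, question_feat)]
    return softmax(linear(fused, weights, bias))


# Toy, made-up numbers: 3-dim features and 2 candidate answers.
probs = fuse_and_classify(
    image_feat=[1.0, 0.5, 0.0],
    question_feat=[2.0, 1.0, 3.0],
    weights=[[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]],
    bias=[0.0, 0.0],
)
```

In training, the softmax output would be compared with the ground-truth answer through the cross-entropy loss listed on the slide.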

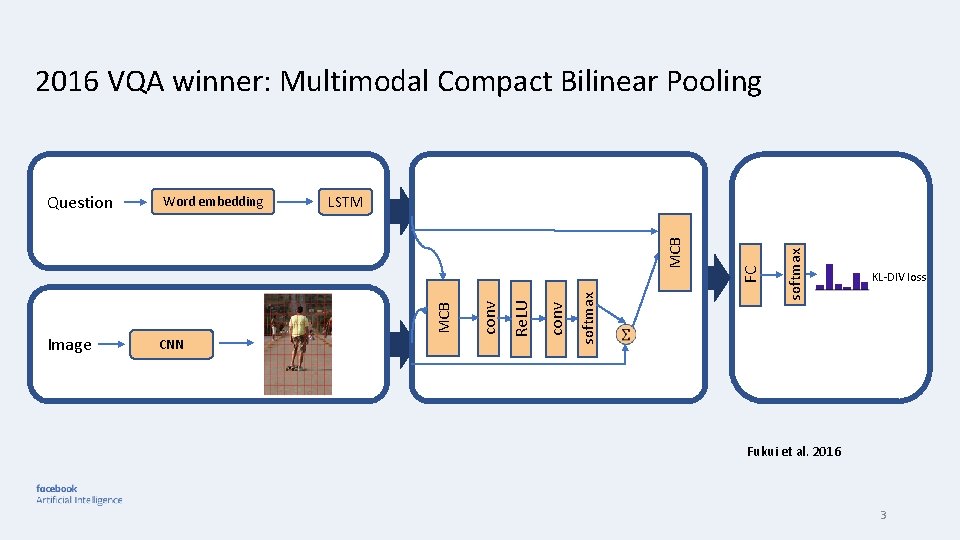

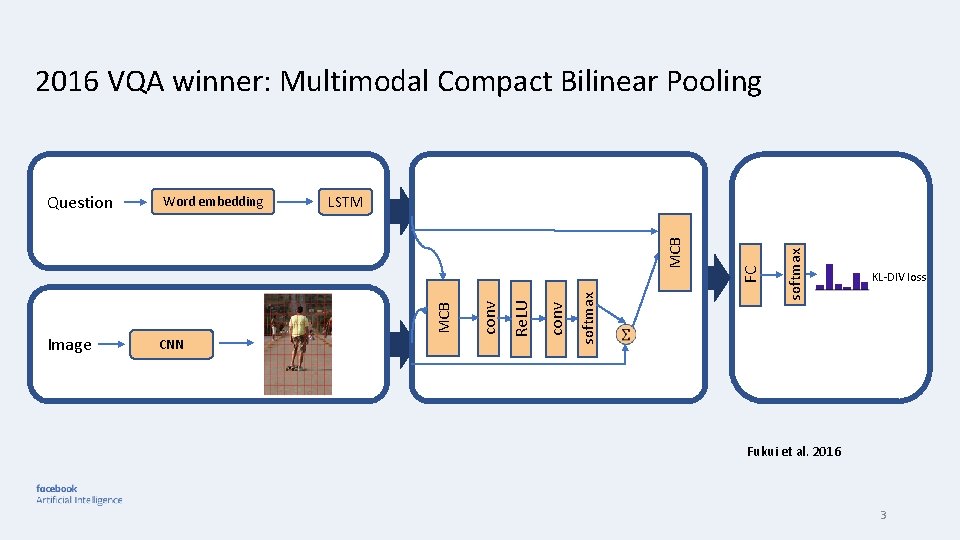

2016 VQA winner: Multimodal Compact Bilinear Pooling (Fukui et al. 2016)

Diagram labels: Image, CNN, conv, ReLU, conv, softmax; Question, word embedding, LSTM; MCB (two blocks); FC; softmax; KL-DIV loss.

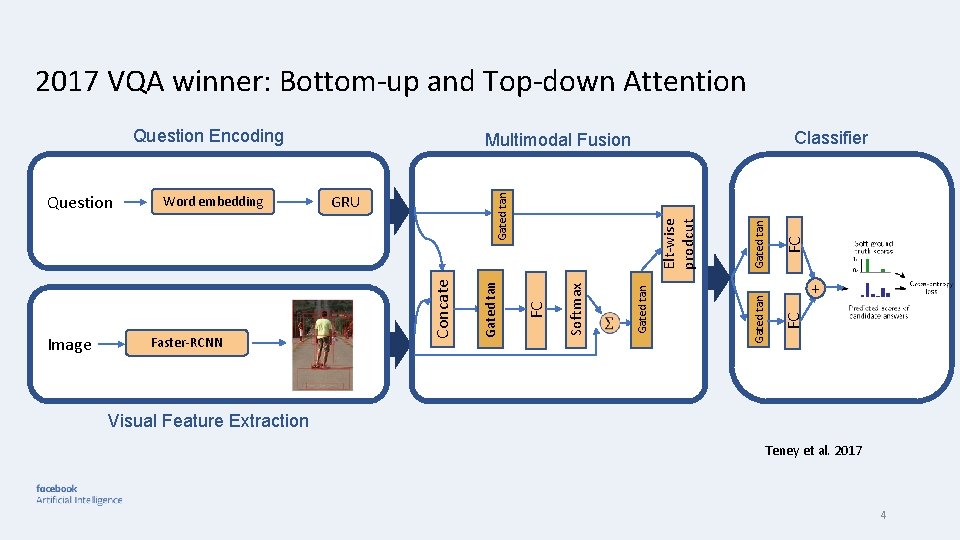

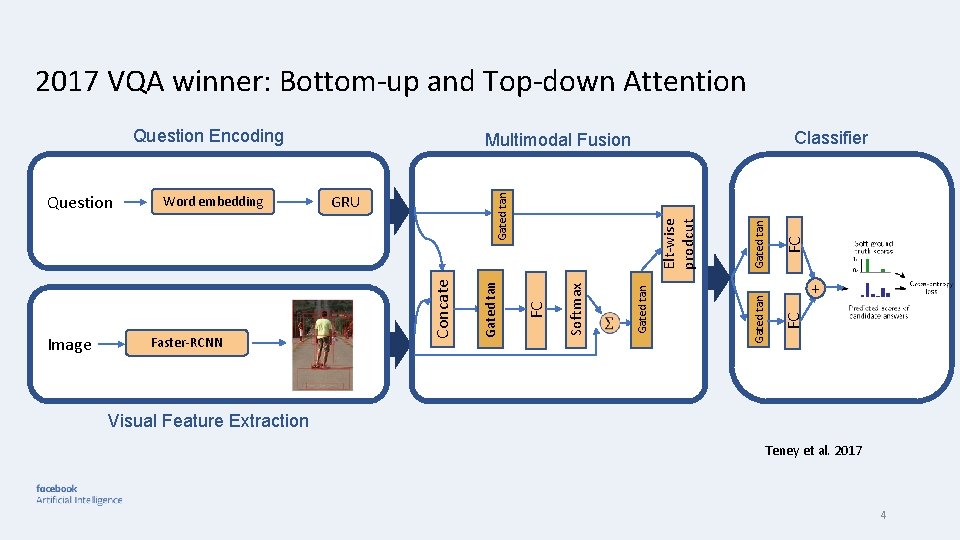

2017 VQA winner: Bottom-up and Top-down Attention (Teney et al. 2017)

Stages: Visual Feature Extraction, Question Encoding, Multimodal Fusion, Classifier.

Diagram labels: Image, Faster-RCNN; Question, word embedding, GRU; concat, gated tanh, FC, softmax; gated tanh (two blocks), element-wise product; FC.
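As a rough illustration of question-guided attention over region features, the sketch below scores each region against the question, normalizes the scores with a softmax, and returns the weighted sum of regions. It is a simplification: the slide's diagram scores regions with concat, gated tanh, and FC layers, whereas this sketch swaps in a plain dot product, and all names and numbers are hypothetical.

```python
import math


def attend(region_feats, question_feat):
    """Return (attention weights, attended feature) for a set of regions.

    Assumption: relevance is a dot product between each region feature and
    the question feature. The model on the slide uses learned layers instead.
    """
    scores = [sum(r * q for r, q in zip(region, question_feat))
              for region in region_feats]
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]

    dim = len(region_feats[0])
    attended = [sum(w * region[d] for w, region in zip(weights, region_feats))
                for d in range(dim)]
    return weights, attended


# Two toy regions with 2-dim features; the question aligns with the first.
weights, attended = attend([[1.0, 0.0], [0.0, 1.0]], [2.0, 0.0])
```

The attended feature has the same dimension as a single region feature, so it can be fused with the question encoding in the next stage.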

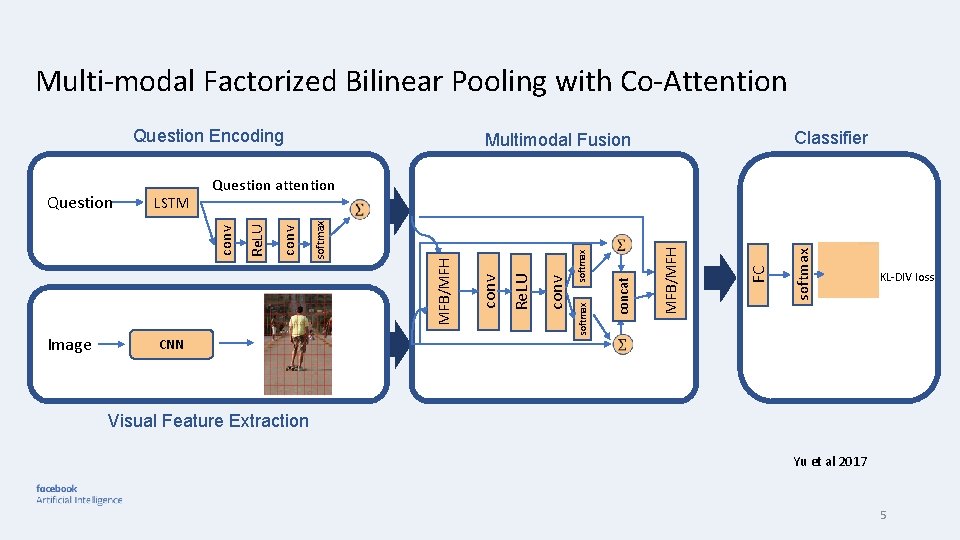

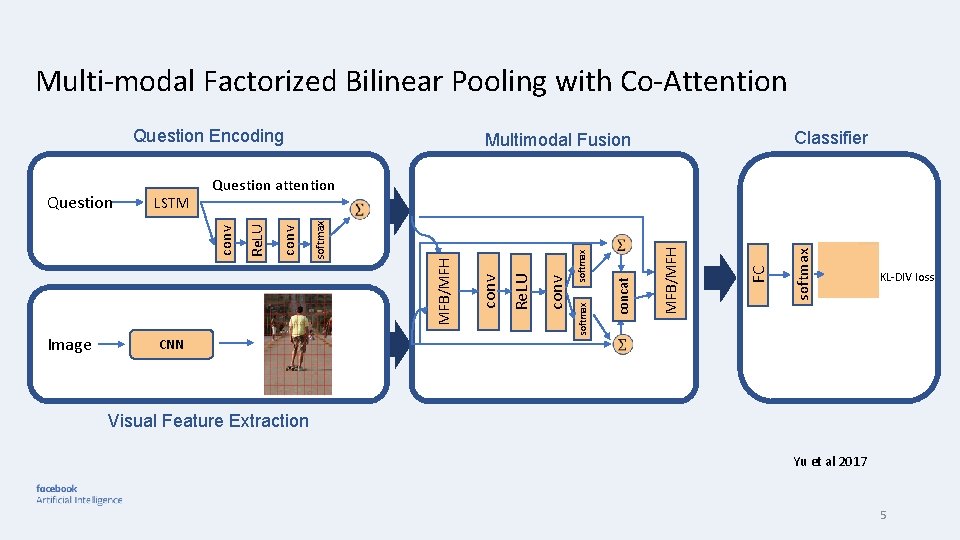

Multi-modal Factorized Bilinear Pooling with Co-Attention (Yu et al. 2017)

Stages: Visual Feature Extraction, Question Encoding, Multimodal Fusion, Classifier.

Diagram labels: Image, CNN; Question, LSTM, question attention; conv, ReLU, conv, softmax; MFB/MFH (two blocks); concat; FC; softmax; KL-DIV loss.

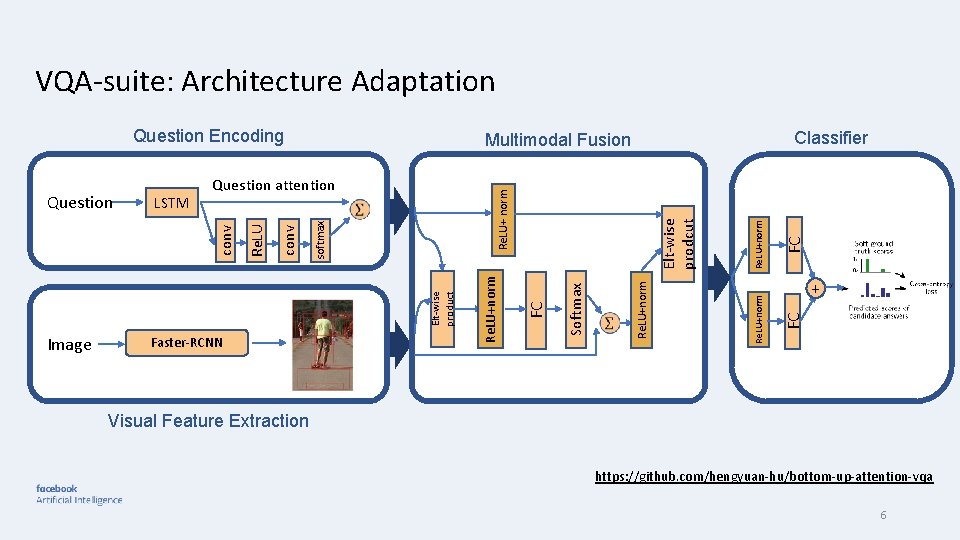

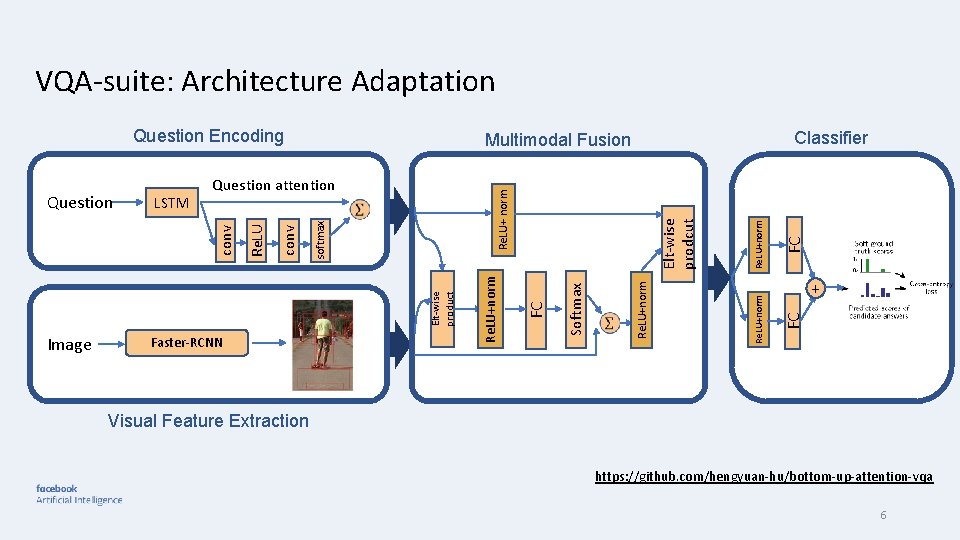

VQA-suite: Architecture Adaptation

Stages: Visual Feature Extraction, Question Encoding, Multimodal Fusion, Classifier.

Diagram labels: Image, Faster-RCNN; Question, LSTM, question attention (conv, ReLU, conv, softmax); element-wise product, ReLU+norm, FC, softmax; ReLU+norm (two blocks), element-wise product; FC.

https://github.com/hengyuan-hu/bottom-up-attention-vqa

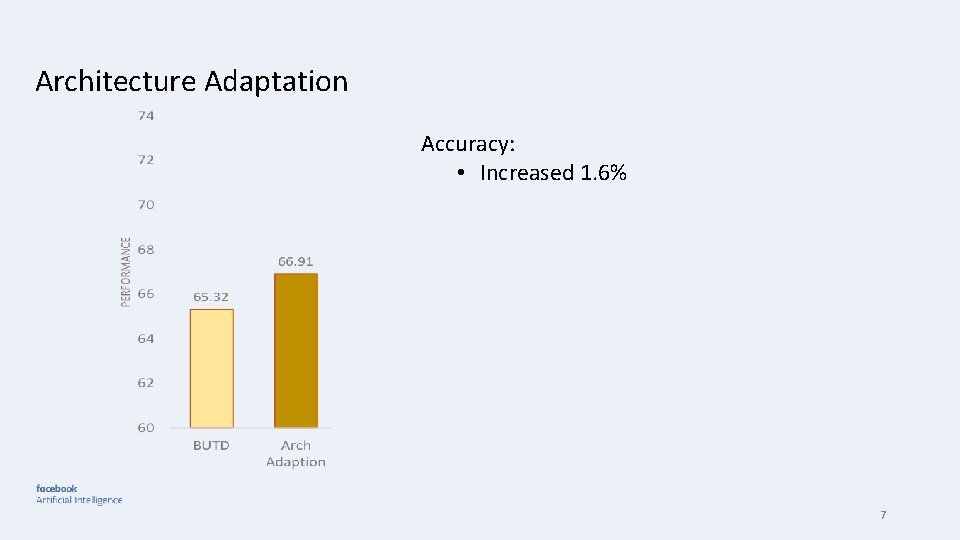

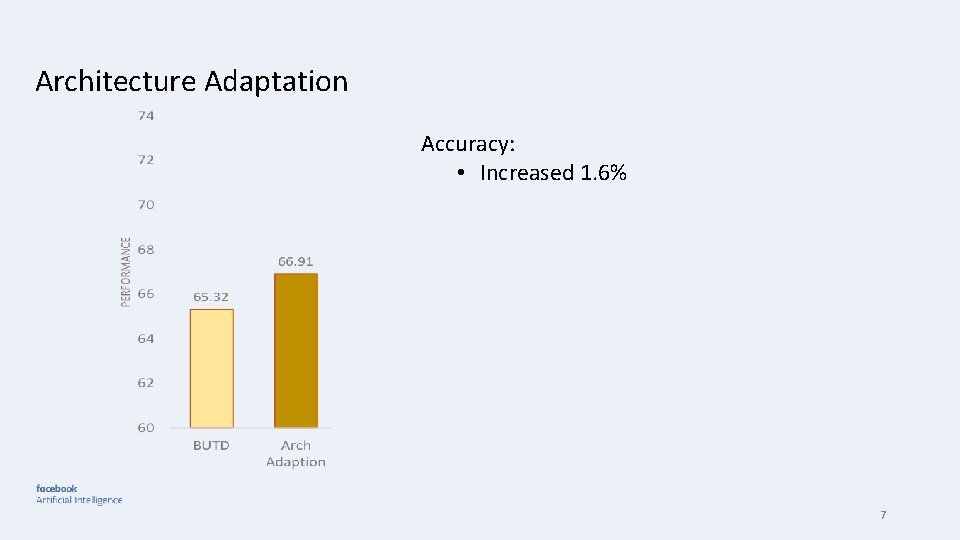

Architecture Adaptation

- Accuracy: increased 1.6%

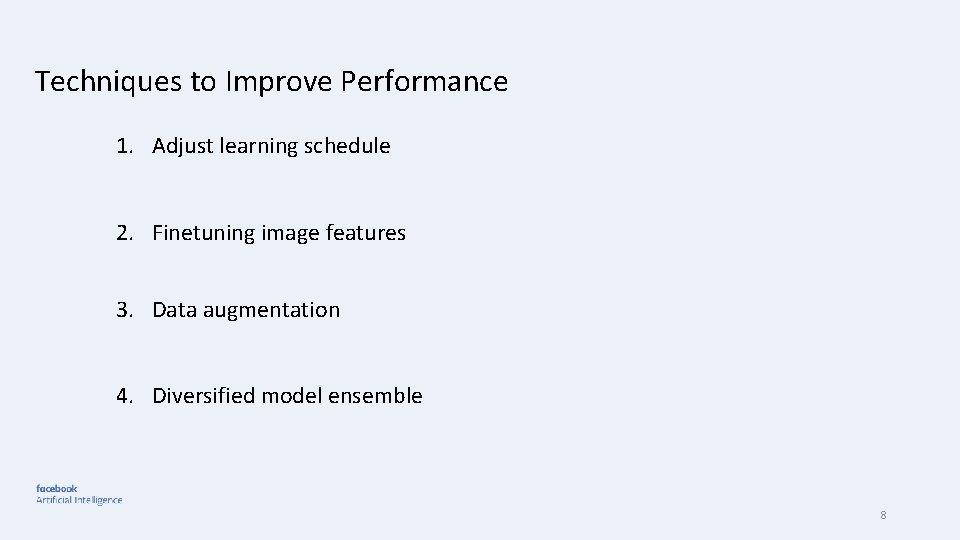

Techniques to Improve Performance

1. Adjust learning schedule
2. Finetuning image features
3. Data augmentation
4. Diversified model ensemble

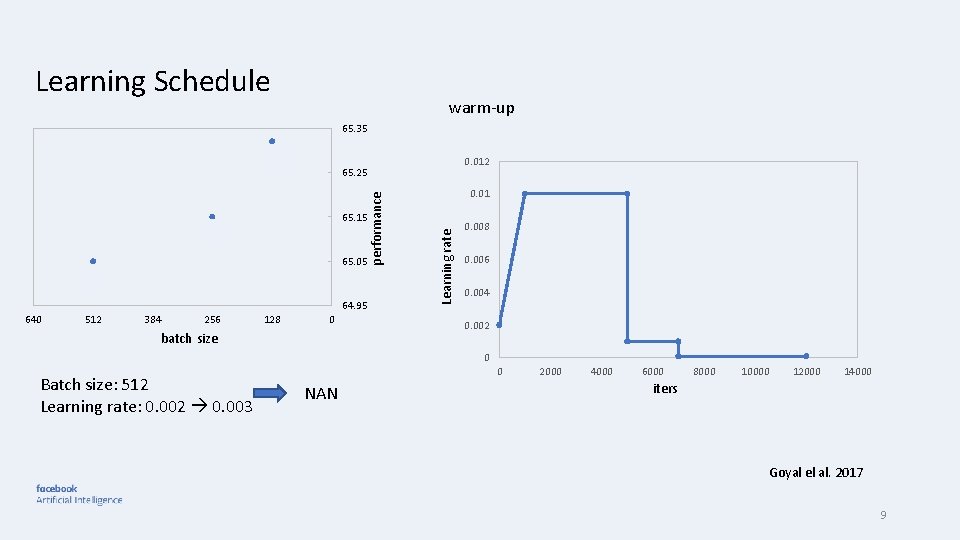

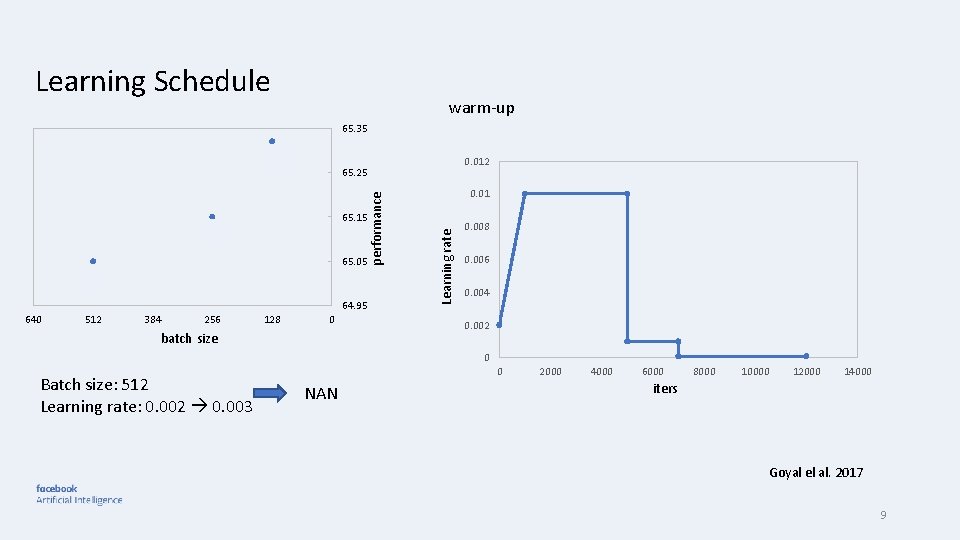

Learning Schedule: warm-up (Goyal et al. 2017)

- Plot 1: performance (axis from 64.95 to 65.35) versus batch size (128, 256, 384, 512, 640).
- Plot 2: learning rate (axis from 0 to 0.012) versus iterations (0 to 14000).
- Annotations: Batch size: 512; Learning rate: 0.002, 0.003; NAN.
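A warm-up schedule of the kind this slide refers to can be sketched as a linear ramp. This is only an illustration: the function name, the `start_factor` parameter, and the example values below are assumptions, and the slide does not state the exact schedule or what follows the warm-up phase.

```python
def warmup_lr(iteration, peak_lr, warmup_iters, start_factor=0.0):
    """Linear warm-up: ramp from start_factor * peak_lr up to peak_lr.

    After warmup_iters iterations the rate is simply held at peak_lr
    (an assumption; any later decay is outside this sketch).
    """
    if warmup_iters <= 0 or iteration >= warmup_iters:
        return peak_lr
    progress = iteration / warmup_iters
    factor = start_factor + (1.0 - start_factor) * progress
    return peak_lr * factor


# Hypothetical settings: ramp to 0.01 over the first 1000 iterations.
schedule = [warmup_lr(it, peak_lr=0.01, warmup_iters=1000)
            for it in (0, 250, 500, 1000, 5000)]
```

Starting from a small rate and ramping up is intended to avoid the divergence (for example, NaN losses) that a large initial rate can cause with large batches.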

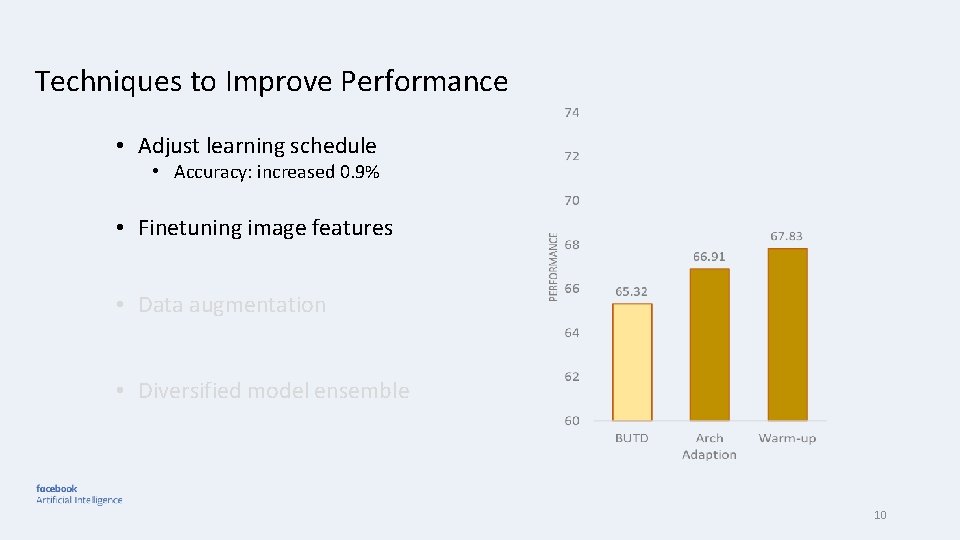

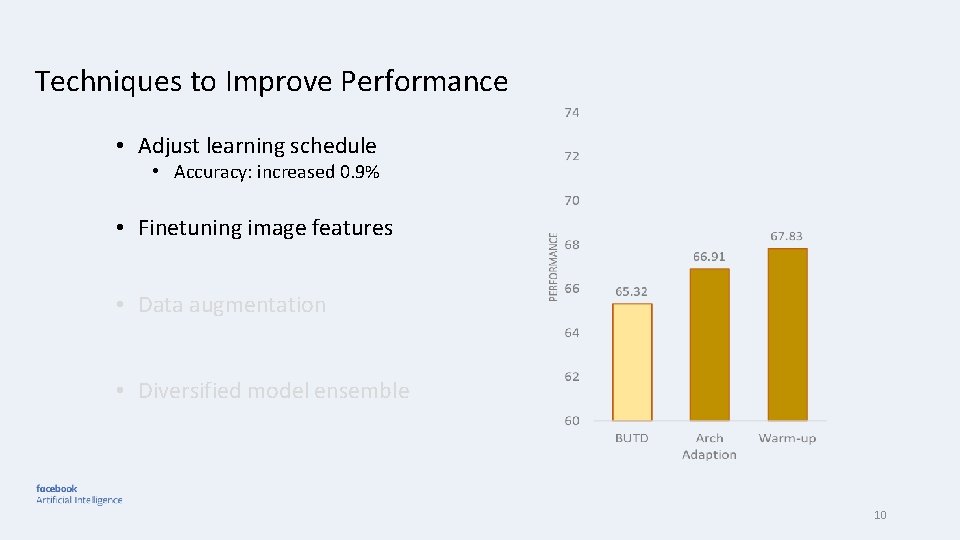

Techniques to Improve Performance

- Adjust learning schedule
  - Accuracy: increased 0.9%
- Finetuning image features
- Data augmentation
- Diversified model ensemble

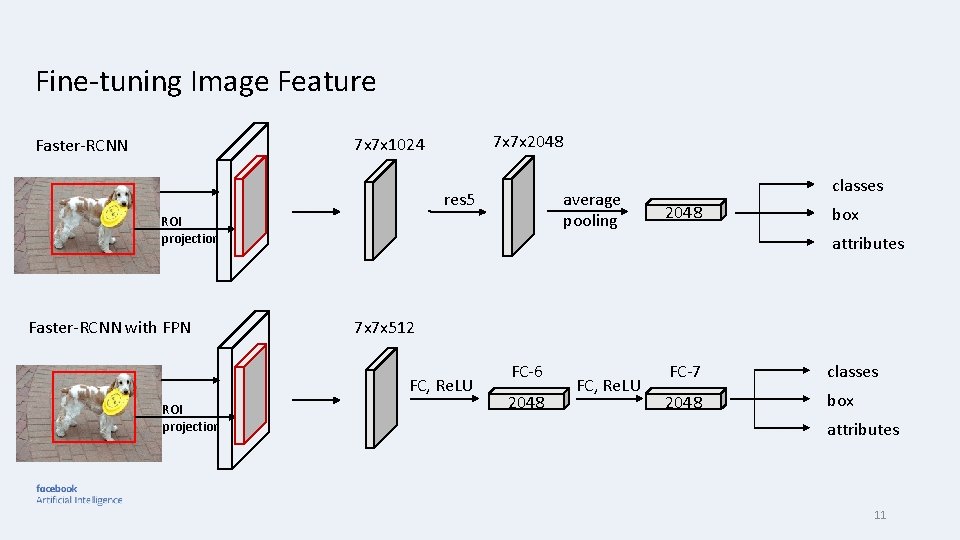

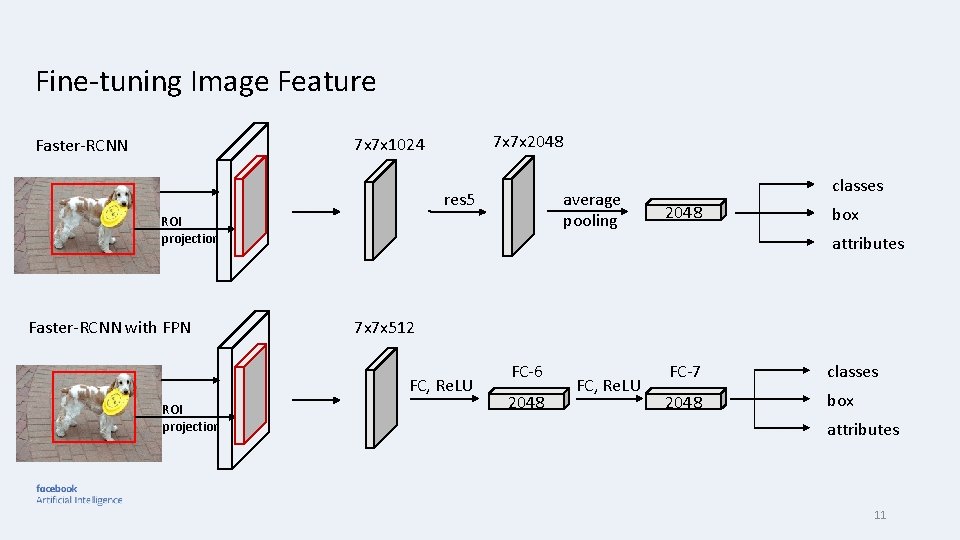

Fine-tuning Image Features

Diagram labels, grouped by detector:

- Faster-RCNN: ROI projection, 7 x 7 x 1024, res5, 7 x 7 x 2048, average pooling, 2048; outputs: classes, box, attributes
- Faster-RCNN with FPN: ROI projection, 7 x 7 x 512, FC-6 (FC, ReLU), 2048, FC-7 (FC, ReLU), 2048; outputs: classes, box, attributes

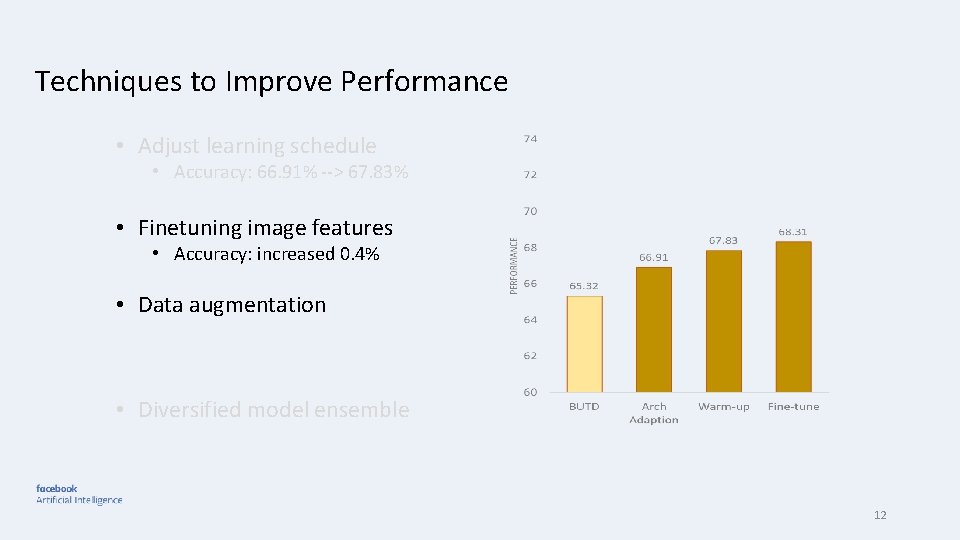

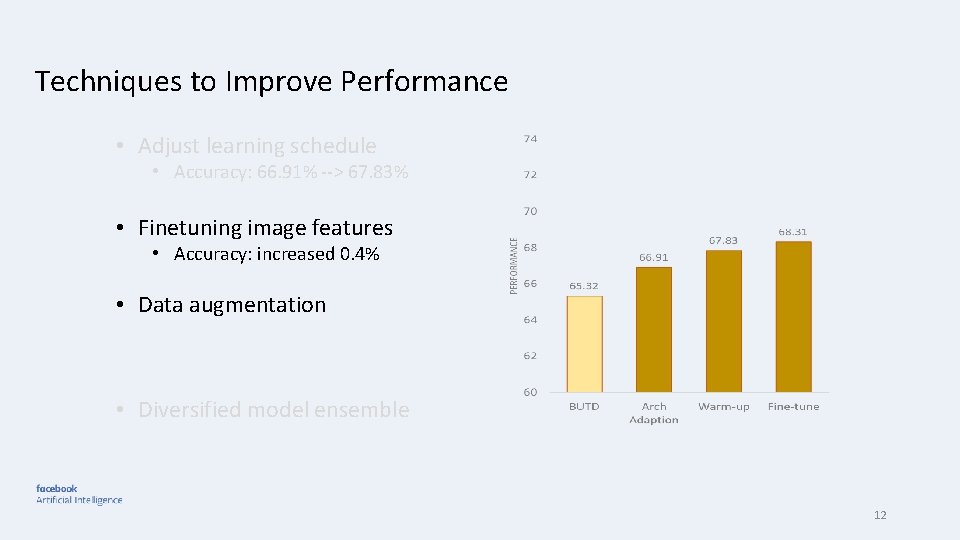

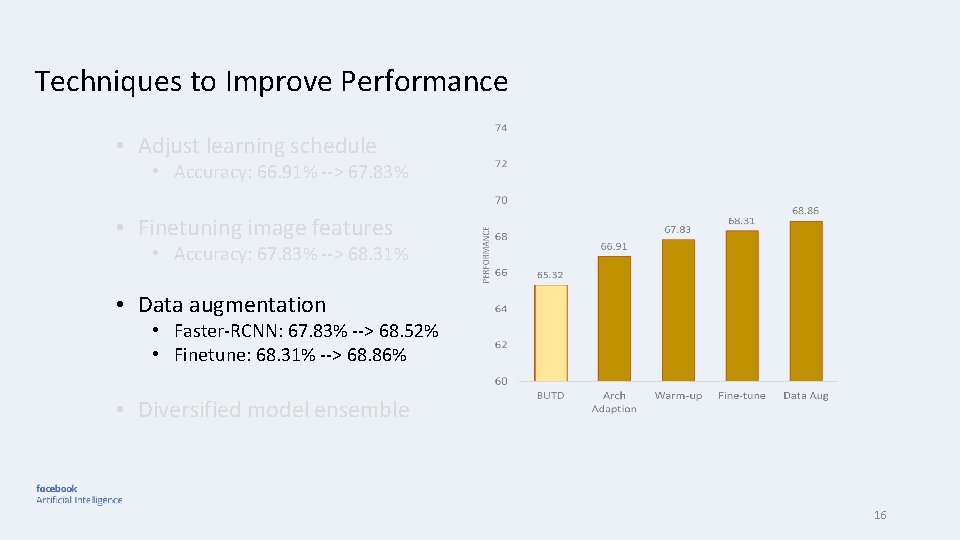

Techniques to Improve Performance

- Adjust learning schedule
  - Accuracy: 66.91% → 67.83%
- Finetuning image features
  - Accuracy: increased 0.4%
- Data augmentation
- Diversified model ensemble

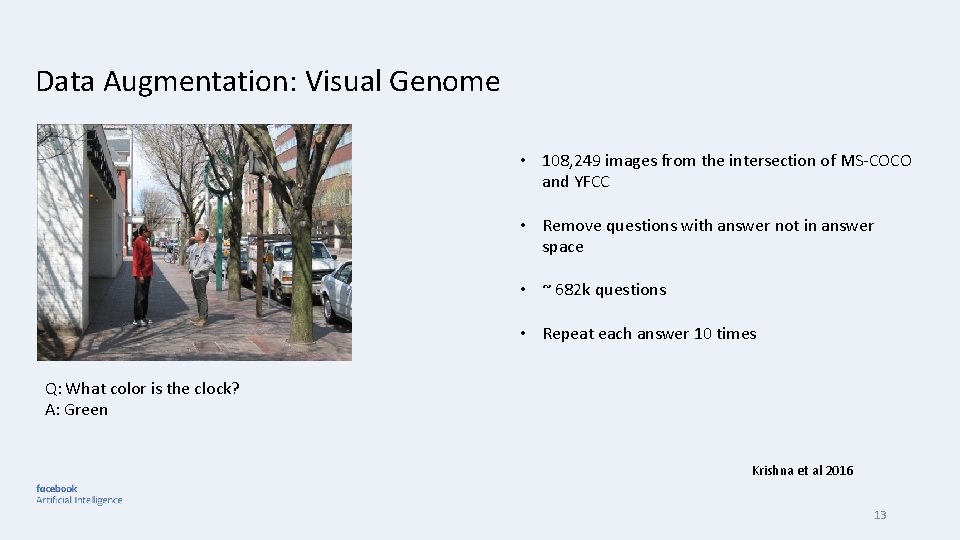

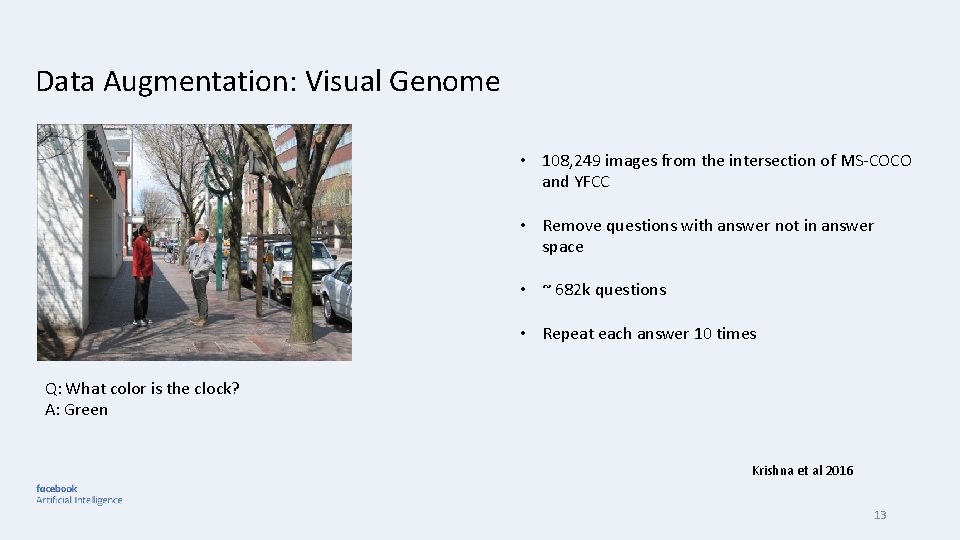

Data Augmentation: Visual Genome (Krishna et al. 2016)

- 108,249 images from the intersection of MS-COCO and YFCC
- Remove questions whose answer is not in the answer space
- ~682k questions
- Repeat each answer 10 times

Example: Q: What color is the clock? A: Green
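The filtering and repetition steps above might look like the following sketch. The record layout, the lowercase normalization, and the function name are assumptions made for illustration; repeating the answer 10 times presumably mirrors the 10 human answers attached to each VQA question.

```python
def to_vqa_samples(qa_pairs, answer_space, repeats=10):
    """Keep QA pairs whose answer is in the answer space; repeat the answer.

    qa_pairs: iterable of (question, answer) strings (hypothetical layout).
    answer_space: set of allowed, already-normalized answer strings.
    """
    samples = []
    for question, answer in qa_pairs:
        normalized = answer.strip().lower()
        if normalized not in answer_space:
            continue  # drop questions the classifier could never answer
        samples.append({"question": question,
                        "answers": [normalized] * repeats})
    return samples


# Toy answer space and two made-up QA pairs; only the first survives.
samples = to_vqa_samples(
    [("What color is the clock?", "Green"),
     ("Who made the clock?", "a local artisan")],
    answer_space={"green", "yes", "no"},
)
```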

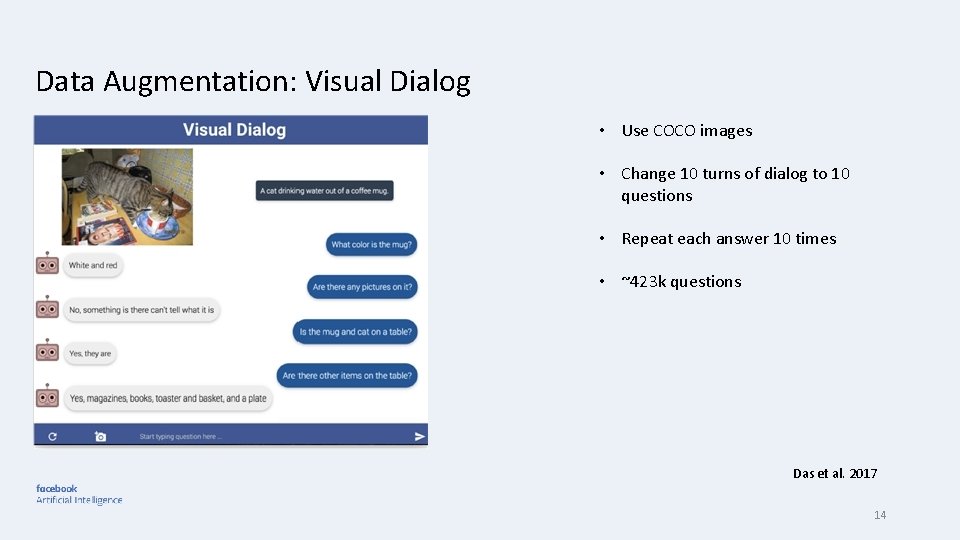

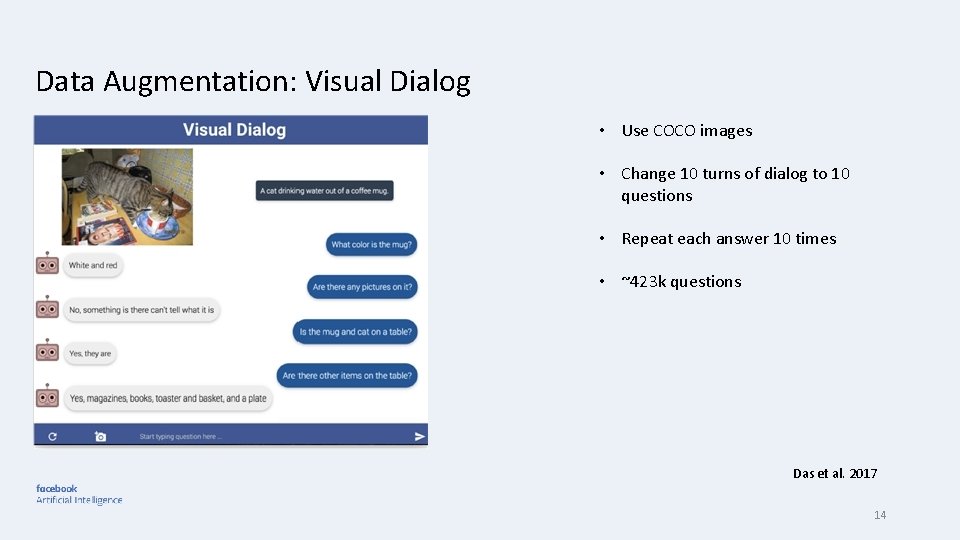

Data Augmentation: Visual Dialog (Das et al. 2017)

- Use COCO images
- Change 10 turns of dialog to 10 questions
- Repeat each answer 10 times
- ~423k questions
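Flattening a dialog into independent questions could be sketched as below. The field names and the example turns are hypothetical, and the sketch ignores a known difficulty: later dialog turns often refer back to earlier ones (for example with "it"), which a standalone question loses.

```python
def dialog_to_questions(image_id, turns, repeats=10):
    """Turn each (question, answer) dialog round into a standalone sample."""
    return [
        {"image_id": image_id,
         "question": question,
         "answers": [answer] * repeats}
        for question, answer in turns
    ]


# Made-up dialog with two of its turns shown.
flat = dialog_to_questions(
    image_id=42,
    turns=[("Is the photo in color?", "yes"),
           ("How many people are there?", "2")],
)
```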

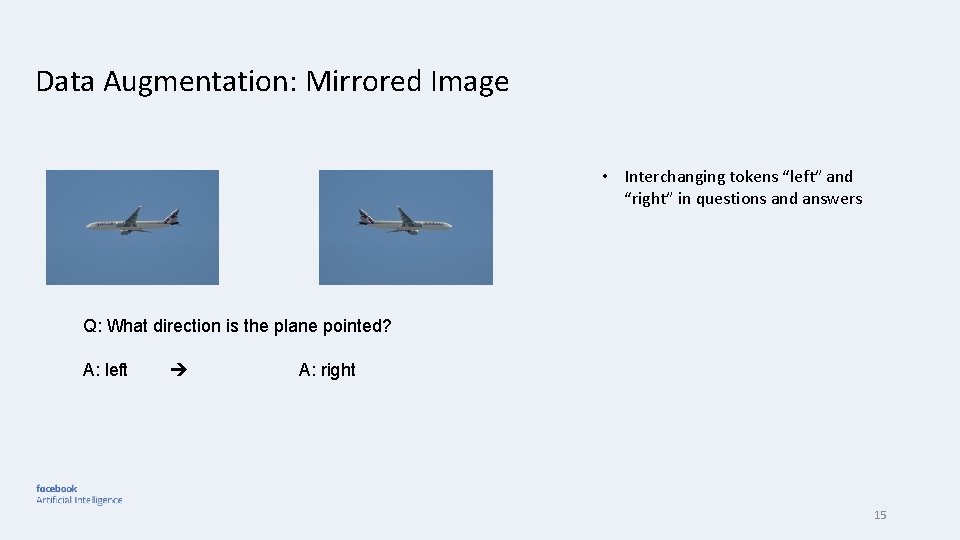

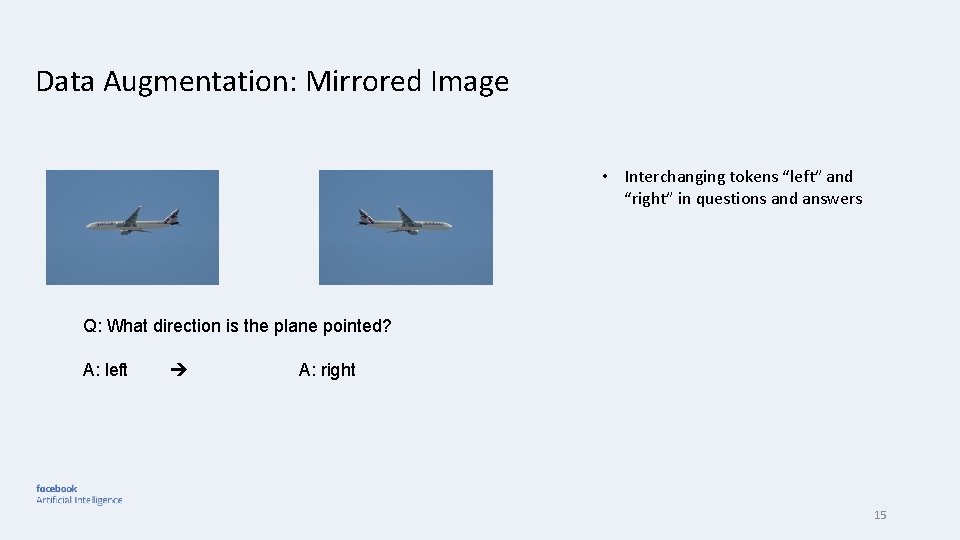

Data Augmentation: Mirrored Image

- Interchange the tokens “left” and “right” in questions and answers

Example: Q: What direction is the plane pointed? A: left / A: right (the answer flips when the image is mirrored)
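The token swap can be sketched with a single regular-expression pass, so that a word already swapped is not swapped back. The function name is hypothetical, and the sketch treats every whole-word "left" or "right" as a direction, which would be wrong for uses such as "right" meaning "correct".

```python
import re

_SWAP = {"left": "right", "right": "left"}
_PATTERN = re.compile(r"\b(left|right)\b", re.IGNORECASE)


def swap_left_right(text):
    """Interchange whole-word 'left' and 'right', preserving simple casing."""
    def replace(match):
        word = match.group(0)
        swapped = _SWAP[word.lower()]
        if word.isupper():
            return swapped.upper()
        if word[0].isupper():
            return swapped.capitalize()
        return swapped
    return _PATTERN.sub(replace, text)


question = swap_left_right("Is the plane left of the tower?")
answer = swap_left_right("left")
```

The image itself would be flipped horizontally alongside this text change.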

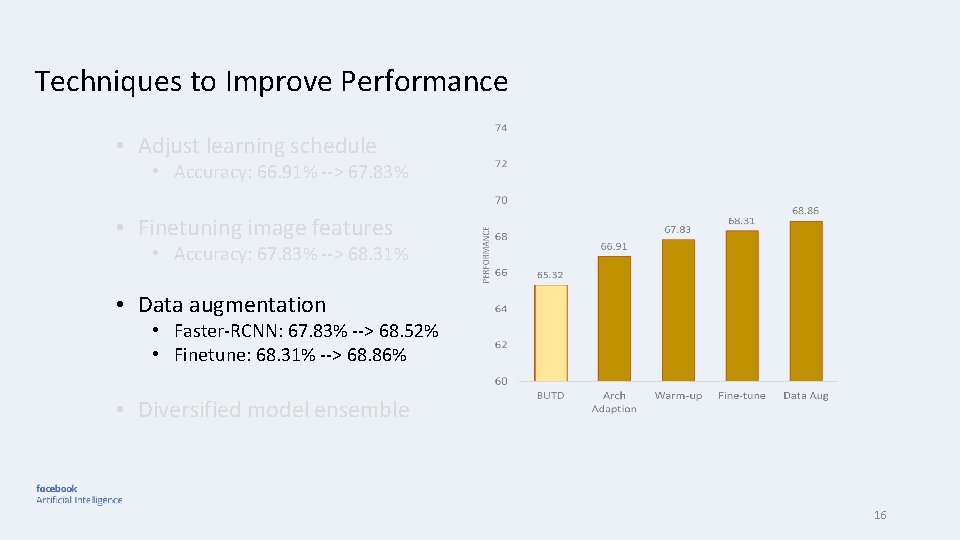

Techniques to Improve Performance

- Adjust learning schedule
  - Accuracy: 66.91% → 67.83%
- Finetuning image features
  - Accuracy: 67.83% → 68.31%
- Data augmentation
  - Faster-RCNN: 67.83% → 68.52%
  - Finetune: 68.31% → 68.86%
- Diversified model ensemble

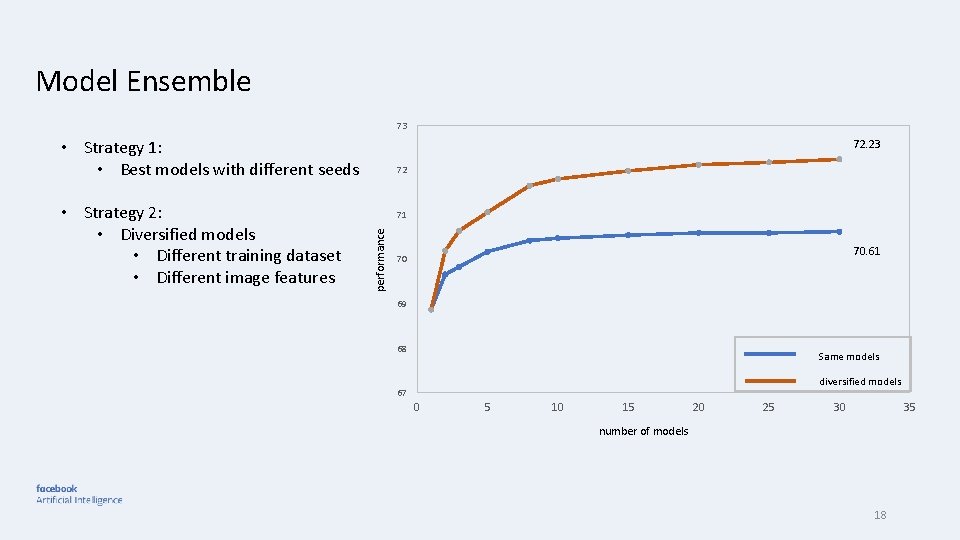

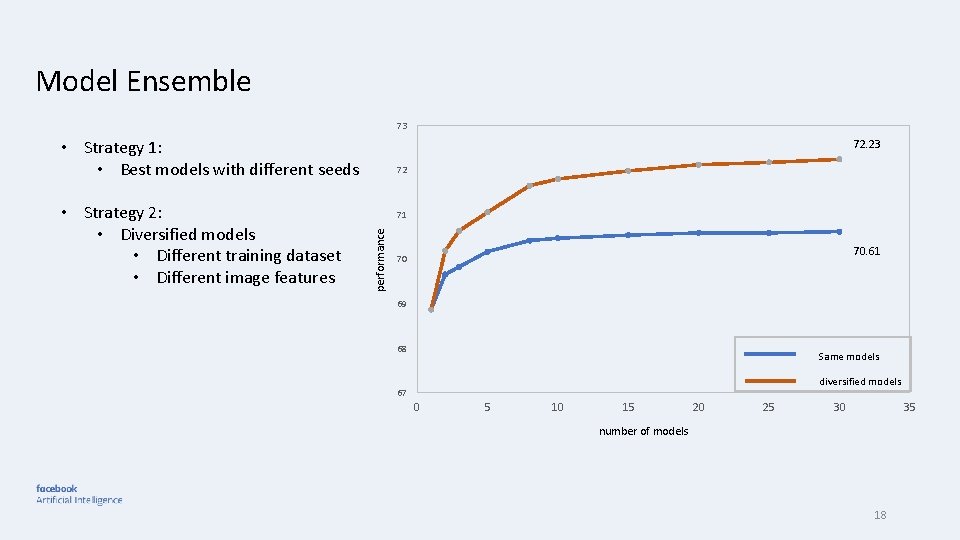

Model Ensemble

- Strategy 1: best models with different seeds
- Strategy 2: diversified models
  - Different training datasets
  - Different image features

Plot: performance (axis from 67 to 73) versus number of models (0 to 35), with one curve for same models and one for diversified models; labeled values are 70.61 (same models) and 72.23 (diversified models).
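One common way to combine models is to average their per-answer scores and pick the highest. The slides do not say how predictions were combined, so the averaging rule, the function name, and the numbers below are all assumptions for illustration.

```python
def ensemble_predict(model_probs, answers):
    """Average per-answer probabilities across models and pick the best.

    model_probs: list of probability lists, one per model, aligned with
    the answers list (hypothetical layout).
    """
    if not model_probs:
        raise ValueError("need at least one model")
    n_models = len(model_probs)
    averaged = [sum(probs[i] for probs in model_probs) / n_models
                for i in range(len(answers))]
    best = max(range(len(answers)), key=averaged.__getitem__)
    return answers[best], averaged


# Three made-up models voting over three candidate answers.
prediction, averaged = ensemble_predict(
    [[0.6, 0.3, 0.1],
     [0.2, 0.5, 0.3],
     [0.5, 0.4, 0.1]],
    answers=["green", "blue", "yellow"],
)
```

Averaging helps most when the models make different mistakes, which is the motivation for diversifying training data and image features rather than only changing seeds.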

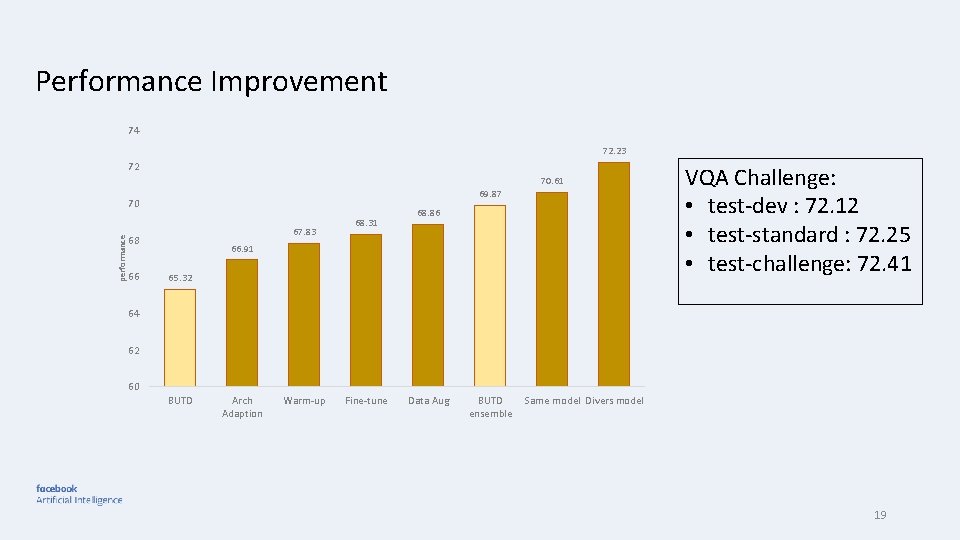

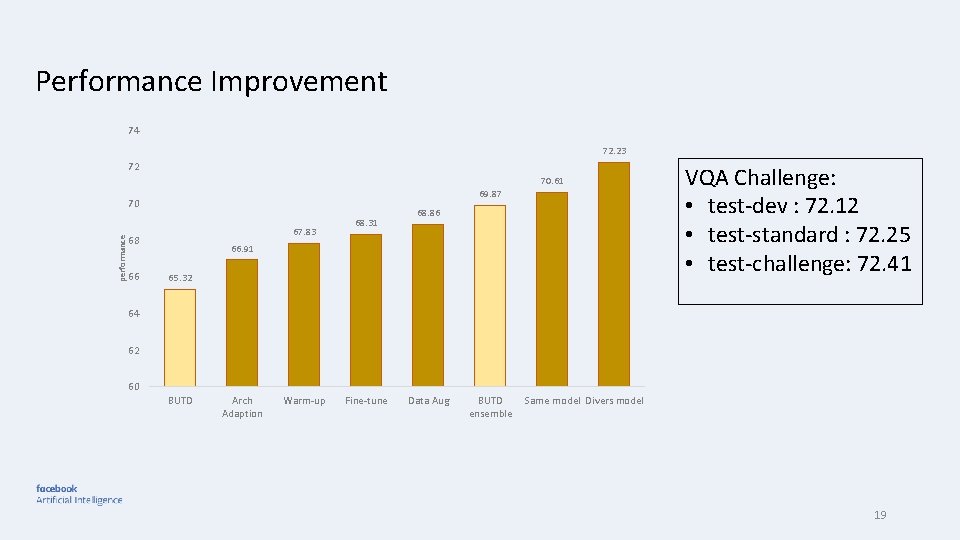

Performance Improvement

| Model | Performance |
|---|---|
| BUTD | 65.32 |
| Arch Adaptation | 66.91 |
| Warm-up | 67.83 |
| Fine-tune | 68.31 |
| Data Aug | 68.86 |
| BUTD ensemble | 69.87 |
| Same model (ensemble) | 70.61 |
| Diversified model (ensemble) | 72.23 |

VQA Challenge:

- test-dev: 72.12
- test-standard: 72.25
- test-challenge: 72.41
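The per-step gains for the single model follow from differencing the reported accuracies. The sketch below does that arithmetic; the stage ordering is read from the chart labels and the earlier slides, and the variable names are hypothetical.

```python
# Single-model accuracies as reported on the slides, in chart order.
stages = [
    ("BUTD", 65.32),
    ("Arch Adaptation", 66.91),
    ("Warm-up", 67.83),
    ("Fine-tune", 68.31),
    ("Data Aug", 68.86),
]

# Gain of each stage over the one before it, in accuracy points.
gains = [
    (name, round(acc - prev_acc, 2))
    for (_, prev_acc), (name, acc) in zip(stages, stages[1:])
]

# Total single-model improvement from the first to the last stage.
total_gain = round(stages[-1][1] - stages[0][1], 2)
```

This gives gains of about 1.59, 0.92, 0.48, and 0.55 points, for a total of about 3.54, in line with the rounded figures quoted on the earlier slides.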

Summary

- Model architecture adaptation, an adjusted learning schedule, image feature fine-tuning, and data augmentation improved single-model performance
- Diversified models can significantly improve ensemble performance
- VQA-suite enabled all of these functionalities
- Open-source our codebase

Acknowledgments

Vivek Natarajan, Xinlei Chen, Marcus Rohrbach, Dhruv Batra, Peter Anderson, Abhishek Das, Stefan Lee, Jiasen Lu, Devi Parikh, Jianwei Yang, Deshraj Yadav

Poster Here