
The Grid: Beyond the Hype
Ian Foster
Argonne National Laboratory, University of Chicago, Globus Alliance
www.mcs.anl.gov/~foster
Seminar, Duke, September 14, 2004

Grid Hype

The Shape of Grids to Come? Internet Hype? Energy Internet

eScience & Grid: 6 Theses
1. Scientific progress depends increasingly on large-scale distributed collaborative work
2. Such distributed collaborative work raises challenging problems of broad importance
3. Any effective attack on those problems must involve close engagement with applications
4. Open software & standards are key to producing & disseminating required solutions
5. Shared software & service infrastructure are essential application enablers
6. A cross-disciplinary community of technology producers & consumers is needed

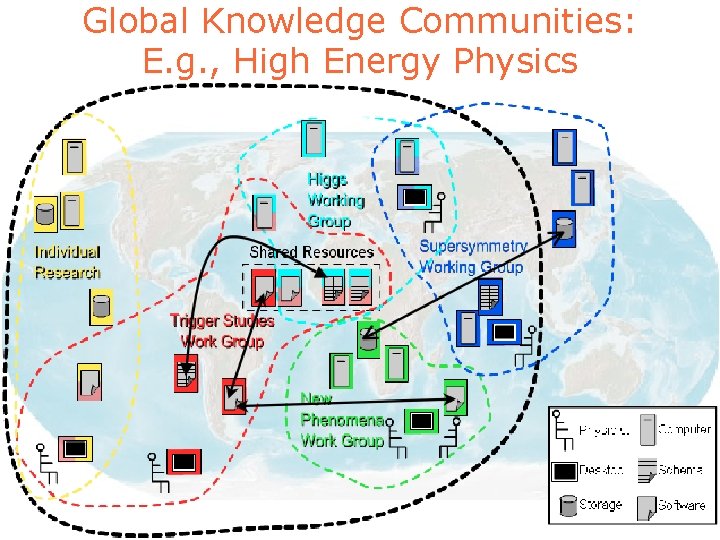

Global Knowledge Communities: e.g., High Energy Physics

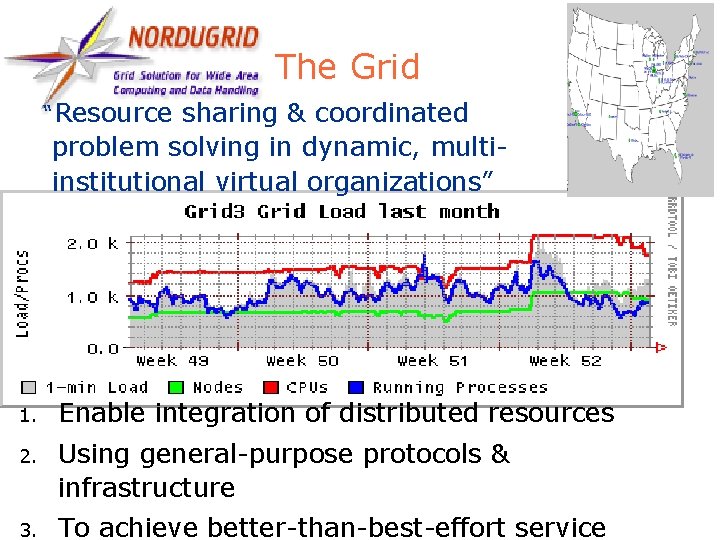

The Grid
“Resource sharing & coordinated problem solving in dynamic, multi-institutional virtual organizations”
1. Enable integration of distributed resources
2. Using general-purpose protocols & infrastructure
3. To achieve better-than-best-effort service

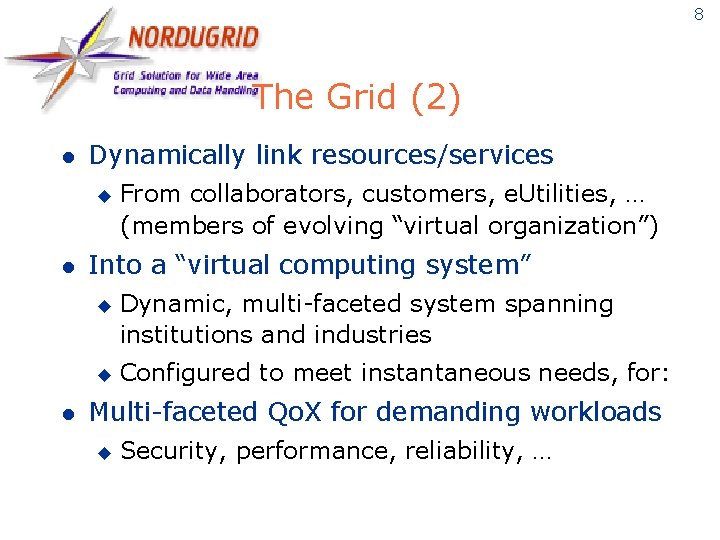

The Grid (2)
- Dynamically link resources/services
  - From collaborators, customers, eUtilities, … (members of evolving “virtual organization”)
- Into a “virtual computing system”
  - Dynamic, multi-faceted system spanning institutions and industries
  - Configured to meet instantaneous needs, for:
- Multi-faceted QoX for demanding workloads
  - Security, performance, reliability, …

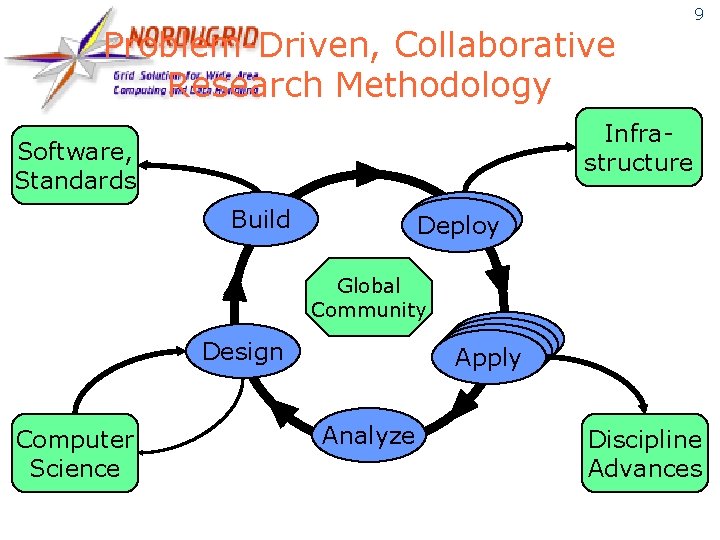

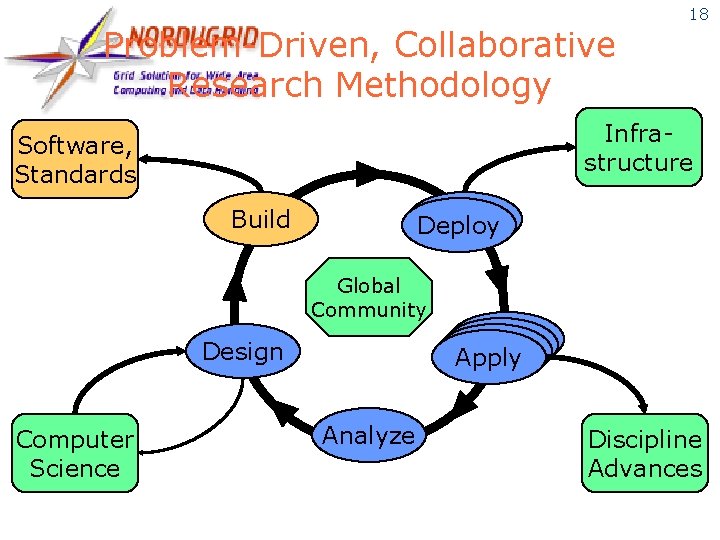

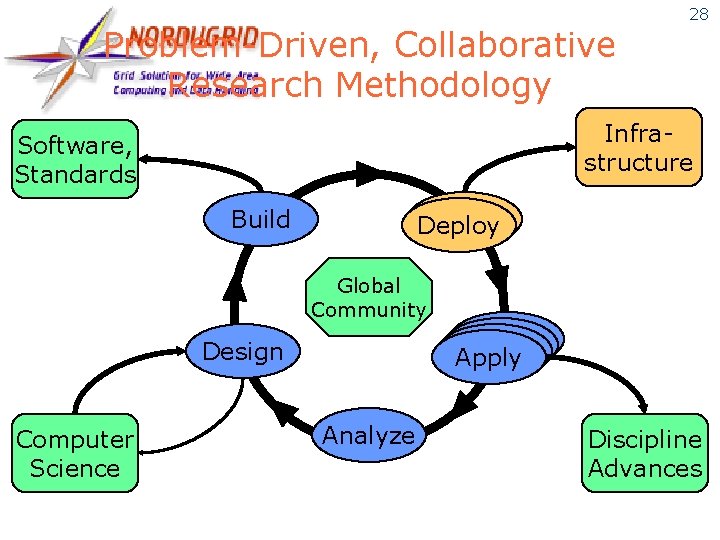

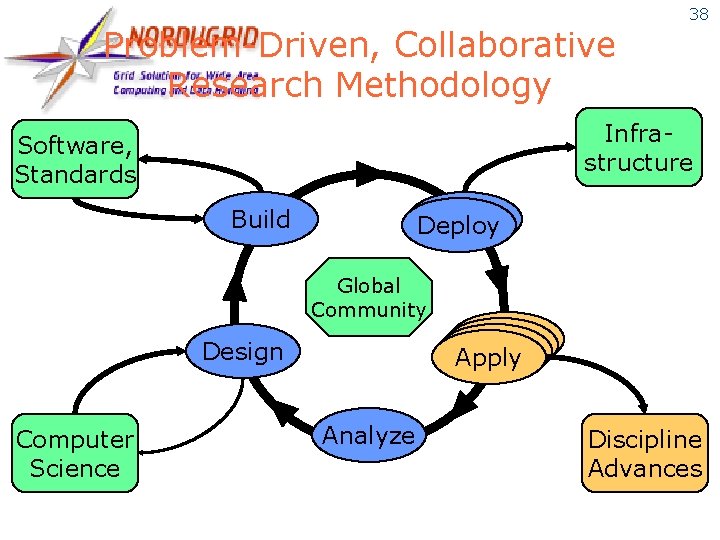

Problem-Driven, Collaborative Research Methodology
[Diagram: a cycle of Design, Build, Deploy, Apply, and Analyze linking Computer Science, Software & Standards, Infrastructure, Global Community, and Discipline Advances]

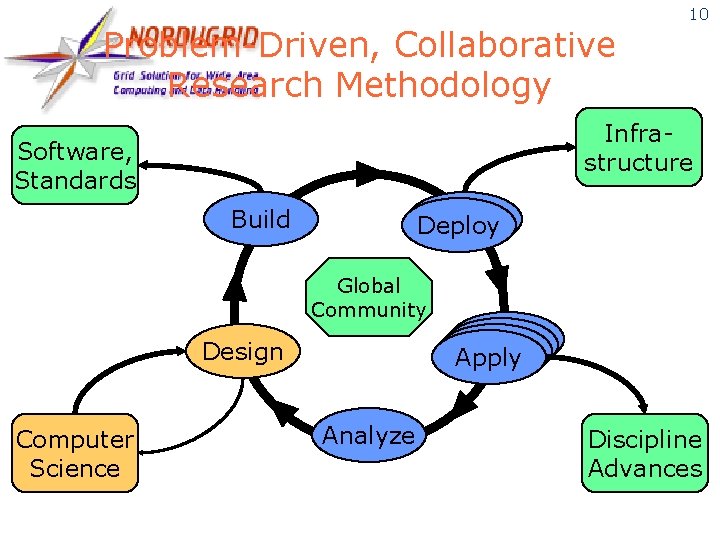


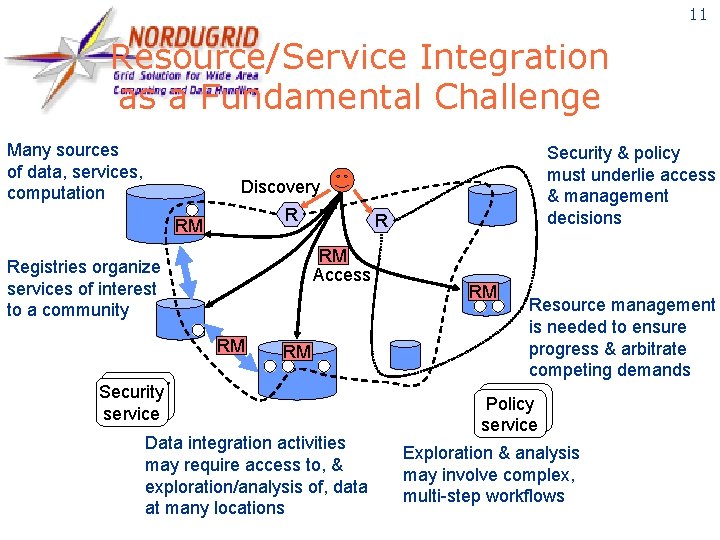

Resource/Service Integration as a Fundamental Challenge
- Many sources of data, services, computation
- Discovery: registries organize services of interest to a community
- Security & policy (security service, policy service) must underlie access & management decisions
- Resource management (RM) is needed to ensure progress & arbitrate competing demands
- Data integration activities may require access to, & exploration/analysis of, data at many locations
- Exploration & analysis may involve complex, multi-step workflows
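The discovery, security, and policy roles in the slide can be illustrated with a toy registry. This is a minimal Python sketch under stated assumptions: `Service`, `Registry`, `POLICY`, and `usable_services` are hypothetical names, the policy is a simple set of permitted (user, site) pairs, and nothing here reflects a real Globus registry or authorization API.

```python
from dataclasses import dataclass, field


@dataclass
class Service:
    name: str
    kind: str   # e.g. "compute" or "data"
    site: str   # institution that operates the service


@dataclass
class Registry:
    """Hypothetical community registry: organizes services of interest to a VO."""
    services: list = field(default_factory=list)

    def register(self, svc: Service) -> None:
        self.services.append(svc)

    def discover(self, kind: str) -> list:
        # Discovery: find every registered service of the requested kind
        return [s for s in self.services if s.kind == kind]


# Hypothetical policy: the set of (user, site) pairs that are permitted
POLICY = {("alice", "siteA"), ("alice", "siteB"), ("bob", "siteB")}


def authorized(user: str, svc: Service, policy=POLICY) -> bool:
    return (user, svc.site) in policy


def usable_services(user: str, kind: str, registry: Registry, policy=POLICY) -> list:
    """Discovery first, then a policy check before any access decision."""
    return [s.name for s in registry.discover(kind) if authorized(user, s, policy)]
```

Separating discovery from authorization mirrors the slide's point that a registry can tell you what exists while policy alone decides what you may use.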

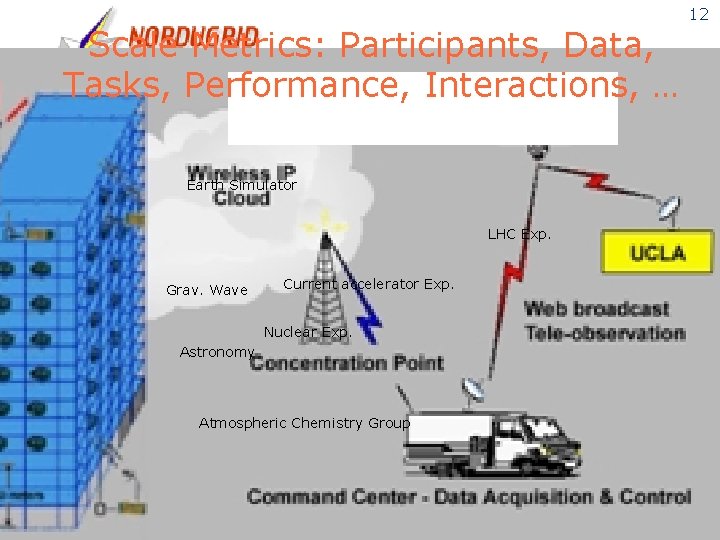

Scale Metrics: Participants, Data, Tasks, Performance, Interactions, …
[Figure: communities compared along these metrics: Earth Simulator, LHC experiment, gravitational-wave experiment, current accelerator experiment, nuclear experiment, astronomy, atmospheric chemistry group]

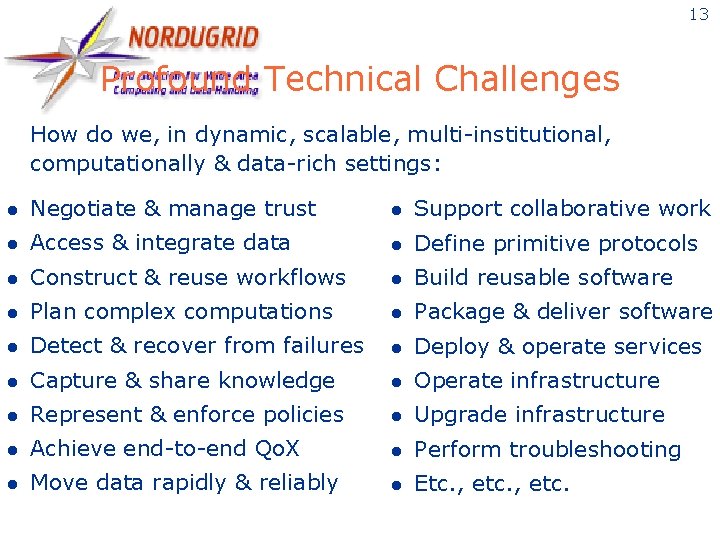

Profound Technical Challenges
How do we, in dynamic, scalable, multi-institutional, computationally & data-rich settings:
- Negotiate & manage trust
- Support collaborative work
- Access & integrate data
- Define primitive protocols
- Construct & reuse workflows
- Build reusable software
- Plan complex computations
- Package & deliver software
- Detect & recover from failures
- Deploy & operate services
- Capture & share knowledge
- Operate infrastructure
- Represent & enforce policies
- Upgrade infrastructure
- Achieve end-to-end QoX
- Perform troubleshooting
- Move data rapidly & reliably
- Etc., etc.

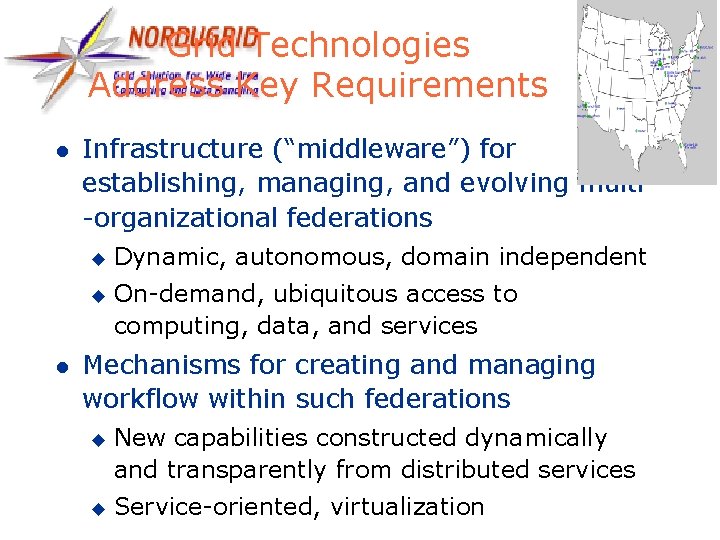

Grid Technologies Address Key Requirements
- Infrastructure (“middleware”) for establishing, managing, and evolving multi-organizational federations
  - Dynamic, autonomous, domain independent
  - On-demand, ubiquitous access to computing, data, and services
- Mechanisms for creating and managing workflow within such federations
  - New capabilities constructed dynamically and transparently from distributed services
  - Service-oriented, virtualization

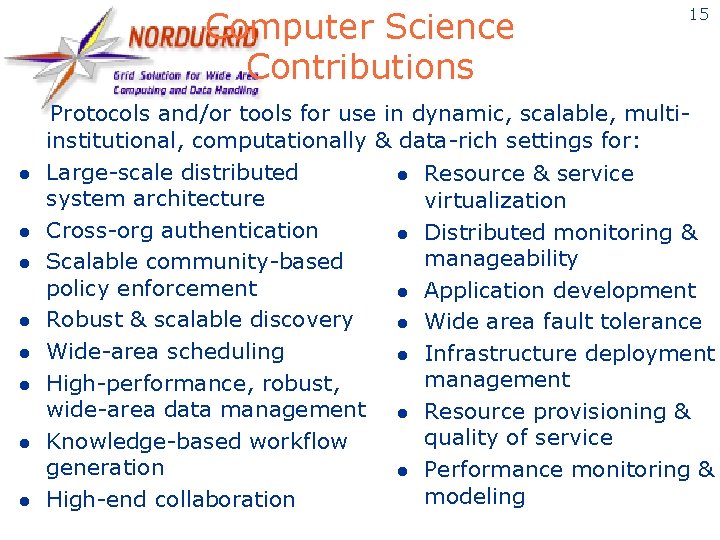

Computer Science Contributions
Protocols and/or tools for use in dynamic, scalable, multi-institutional, computationally & data-rich settings for:
- Large-scale distributed system architecture
- Resource & service virtualization
- Cross-org authentication
- Distributed monitoring & manageability
- Scalable community-based policy enforcement
- Application development
- Robust & scalable discovery
- Wide-area fault tolerance
- Wide-area scheduling
- Infrastructure deployment management
- High-performance, robust, wide-area data management
- Resource provisioning & quality of service
- Knowledge-based workflow generation
- Performance monitoring & modeling
- High-end collaboration

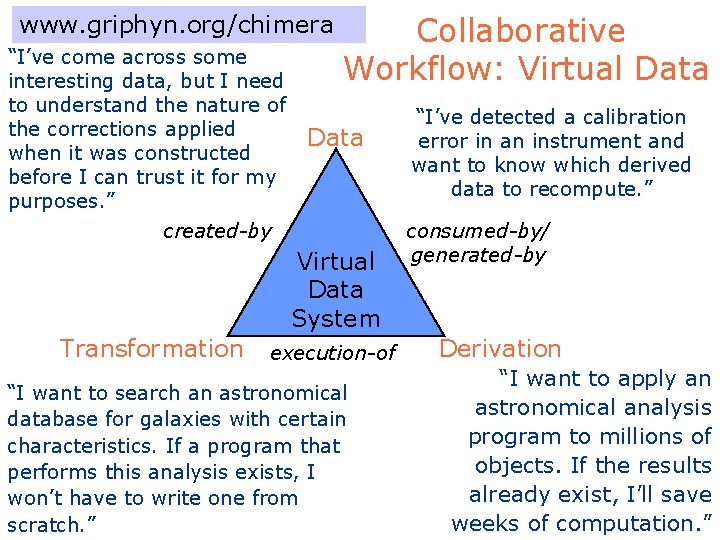

Collaborative Workflow: Virtual Data (www.griphyn.org/chimera)
[Diagram: a Virtual Data System relating data, transformations, and derivations through created-by, consumed-by/generated-by, and execution-of relationships]
- “I’ve come across some interesting data, but I need to understand the nature of the corrections applied when it was constructed before I can trust it for my purposes.”
- “I want to search an astronomical database for galaxies with certain characteristics. If a program that performs this analysis exists, I won’t have to write one from scratch.”
- “I’ve detected a calibration error in an instrument and want to know which derived data to recompute.”
- “I want to apply an astronomical analysis program to millions of objects. If the results already exist, I’ll save weeks of computation.”
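Each of these four user questions amounts to keeping provenance records and querying them in one direction or the other. The sketch below is hypothetical and is not Chimera's VDL or catalog interface. Each `Derivation` records one execution of a transformation. `lineage` answers the "how was this constructed?" question by walking generated-by links upstream. `affected_by` answers the calibration-error question by walking consumed-by links downstream. `existing` checks whether a requested derivation has already been computed.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Derivation:
    """One execution of a transformation: consumes inputs, generates outputs."""
    transformation: str
    inputs: tuple
    outputs: tuple


class VirtualDataCatalog:
    """Hypothetical provenance catalog (illustrative only)."""

    def __init__(self):
        self.derivations = []
        self.consumers = defaultdict(list)  # dataset -> derivations that consume it
        self.producer = {}                  # dataset -> derivation that generated it

    def record(self, d: Derivation) -> None:
        self.derivations.append(d)
        for x in d.inputs:
            self.consumers[x].append(d)
        for y in d.outputs:
            self.producer[y] = d

    def lineage(self, dataset):
        """Transformations applied upstream of `dataset` (generated-by links)."""
        result, seen, queue = [], set(), deque([dataset])
        while queue:
            d = self.producer.get(queue.popleft())
            if d is None or d in seen:
                continue  # raw input, or a derivation already visited
            seen.add(d)
            result.append(d.transformation)
            queue.extend(d.inputs)
        return result

    def affected_by(self, bad):
        """All derived datasets that transitively depend on `bad`."""
        stale, queue = set(), deque([bad])
        while queue:
            for d in self.consumers[queue.popleft()]:
                for y in d.outputs:
                    if y not in stale:
                        stale.add(y)
                        queue.append(y)
        return stale

    def existing(self, transformation, inputs):
        """Reuse check: outputs of an identical recorded derivation, else None."""
        for d in self.derivations:
            if d.transformation == transformation and d.inputs == tuple(inputs):
                return d.outputs
        return None
```

For example, after recording `calibrate: raw -> calibrated`, `find_galaxies: calibrated -> galaxies`, and `cluster: galaxies -> clusters`, a calibration error in `raw` marks all three derived datasets as stale.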

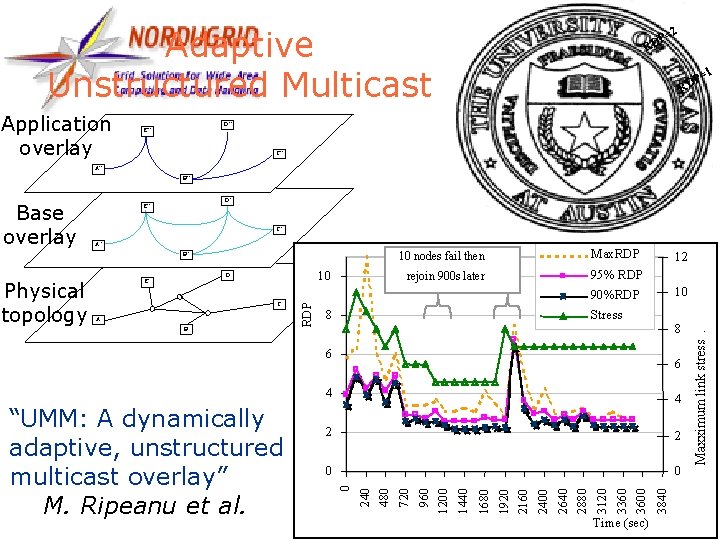

Adaptive Unstructured Multicast
[Figure: a base application overlay and its adapted versions (nodes A-E, with RDP = 1 and RDP = 2 paths) mapped onto the physical topology; plot of maximum RDP, 95% and 90% RDP, and maximum link stress versus time (0-3840 s) as 10 nodes fail and then rejoin 900 s later.]
“UMM: A dynamically adaptive, unstructured multicast overlay,” M. Ripeanu et al.
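The plot reports two standard overlay-quality metrics. Relative delay penalty (RDP) is the ratio of a member's delay along the overlay to its direct unicast delay from the source. Link stress is the number of identical copies of a packet carried by one physical link. A small Python sketch of both computations follows. It assumes a tree-shaped overlay given as child-to-parent links and routes each overlay hop over the physical shortest path. UMM itself maintains an unstructured mesh, so treat this as an illustration of the metrics rather than of the UMM protocol.

```python
import heapq
from collections import Counter


def shortest_paths(graph, src):
    """Dijkstra over an undirected weighted graph {node: {neighbor: delay}}."""
    dist, prev = {src: 0.0}, {}
    heap = [(0.0, src)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist[u]:
            continue  # stale heap entry
        for v, w in graph[u].items():
            nd = d + w
            if nd < dist.get(v, float("inf")):
                dist[v], prev[v] = nd, u
                heapq.heappush(heap, (nd, v))
    return dist, prev


def unicast_path(graph, a, b):
    """Physical route from a to b, assumed to follow the shortest path."""
    _, prev = shortest_paths(graph, a)
    path = [b]
    while path[-1] != a:
        path.append(prev[path[-1]])
    return path[::-1]


def overlay_metrics(graph, tree, source):
    """tree maps each overlay member to its overlay parent (source has none)."""
    direct = shortest_paths(graph, source)[0]
    rdp = {}
    for member in tree:
        delay, node = 0.0, member
        while node != source:  # sum unicast delays of overlay hops back to source
            delay += shortest_paths(graph, tree[node])[0][node]
            node = tree[node]
        rdp[member] = delay / direct[member]
    stress = Counter()
    for child, parent in tree.items():  # each overlay hop loads its physical links
        p = unicast_path(graph, parent, child)
        for a, b in zip(p, p[1:]):
            stress[frozenset((a, b))] += 1
    return rdp, stress
```

With hosts A, B, and C each one hop from router R, an overlay chain A -> B -> C gives C an RDP of 2 and puts two copies of every packet on link R-B. That is the kind of penalty the adaptive overlay tries to keep low.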

Problem-Driven, Collaborative Research Methodology

Open Standards & Software
- Standardized & interoperable mechanisms for secure & reliable:
  - Authentication, authorization, policy, …
  - Representation & management of state
  - Initiation & management of computation
  - Data access & movement
  - Communication & notification
- Good quality open source implementations to accelerate adoption & development
  - E.g., Globus Toolkit

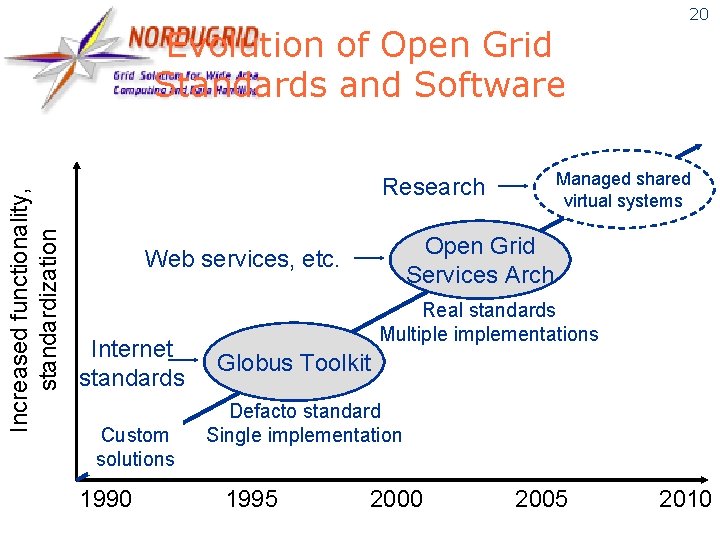

Evolution of Open Grid Standards and Software
[Figure: increasing functionality and standardization over time, 1990-2010: custom solutions; Internet standards; Globus Toolkit (de facto standard, single implementation); Web services, etc.; Open Grid Services Architecture (real standards, multiple implementations); managed shared virtual systems (research).]

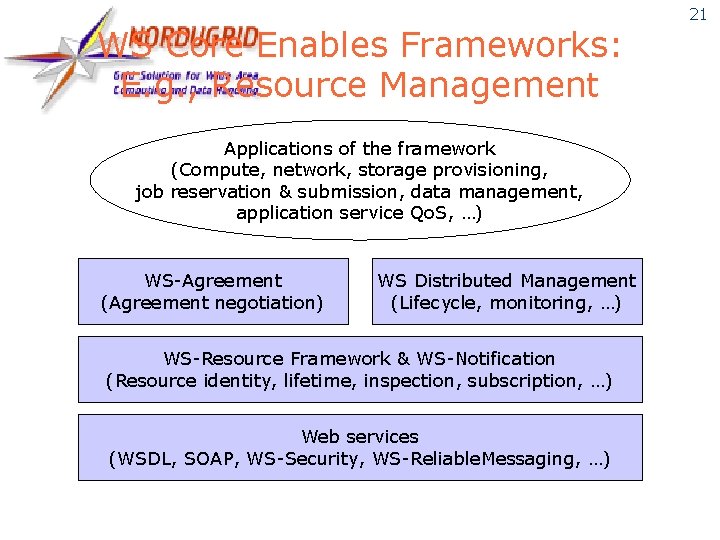

WS Core Enables Frameworks: e.g., Resource Management
Layers, top to bottom:
- Applications of the framework (compute, network, storage provisioning, job reservation & submission, data management, application service QoS, …)
- WS-Agreement (agreement negotiation)
- WS Distributed Management (lifecycle, monitoring, …)
- WS-Resource Framework & WS-Notification (resource identity, lifetime, inspection, subscription, …)
- Web services (WSDL, SOAP, WS-Security, WS-ReliableMessaging, …)

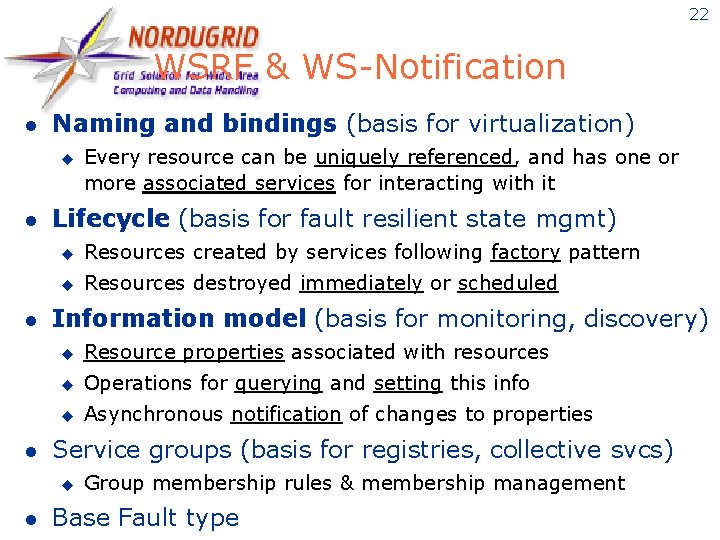

WSRF & WS-Notification
- Naming and bindings (basis for virtualization)
  - Every resource can be uniquely referenced, and has one or more associated services for interacting with it
- Lifecycle (basis for fault-resilient state management)
  - Resources created by services following factory pattern
  - Resources destroyed immediately or scheduled
- Information model (basis for monitoring, discovery)
  - Resource properties associated with resources
  - Operations for querying and setting this info
  - Asynchronous notification of changes to properties
- Service groups (basis for registries, collective services)
  - Group membership rules & membership management
- Base fault type
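The WSRF concepts listed above can be mimicked in-process to show how they fit together: unique references, factory creation, immediate or scheduled destruction, resource properties, and change notification. This is a toy Python sketch, not the WSRF or WS-Notification wire protocol. `WSResource` and `Factory` are hypothetical classes, the returned string ID stands in for an endpoint reference, and notifications are delivered synchronously to local callbacks.

```python
import itertools
import time


class WSResource:
    """Toy stateful resource: identity, properties, lifetime, subscribers."""

    def __init__(self, rid, properties, termination_time=None):
        self.rid = rid
        self._props = dict(properties)
        self.termination_time = termination_time  # None means no scheduled destruction
        self._subscribers = []

    def get_property(self, name):
        return self._props[name]

    def set_property(self, name, value):
        old = self._props.get(name)
        self._props[name] = value
        if old != value:  # notify only on an actual change
            for callback in self._subscribers:
                callback(self.rid, name, value)  # synchronous here; asynchronous in WS-Notification

    def subscribe(self, callback):
        self._subscribers.append(callback)


class Factory:
    """Factory pattern: a service that creates resources and returns references."""

    def __init__(self, clock=time.time):
        self._ids = itertools.count(1)
        self._clock = clock
        self.resources = {}

    def create(self, properties, lifetime=None):
        rid = f"resource-{next(self._ids)}"  # stands in for an endpoint reference
        tt = None if lifetime is None else self._clock() + lifetime
        self.resources[rid] = WSResource(rid, properties, tt)
        return rid

    def destroy(self, rid):
        """Immediate destruction."""
        self.resources.pop(rid, None)

    def sweep(self):
        """Scheduled destruction: remove resources whose termination time has passed."""
        now = self._clock()
        expired = [r for r, res in self.resources.items()
                   if res.termination_time is not None and res.termination_time <= now]
        for r in expired:
            self.destroy(r)
        return expired
```

Passing a fake clock into `Factory` makes the scheduled-destruction path easy to exercise without waiting in real time.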

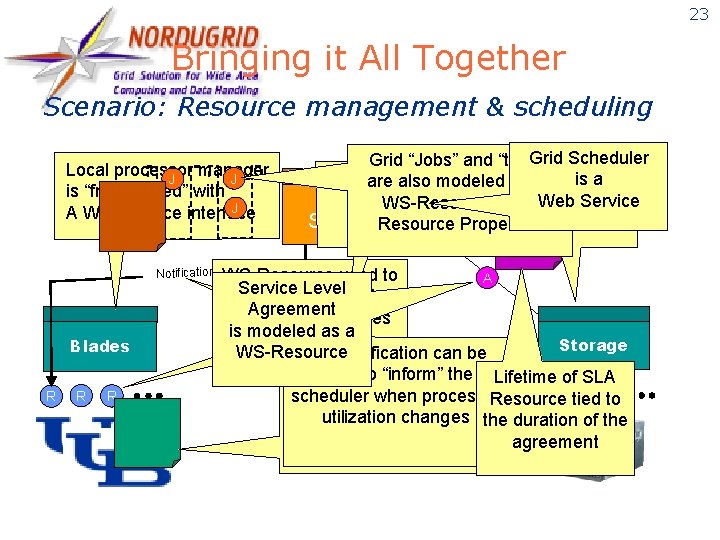

Bringing it All Together
Scenario: resource management & scheduling
- The local processor manager is “front-ended” with a Web service interface
- A WS-Resource is used to “model” physical processor resources; its Resource Properties “project” processor status (like utilization)
- WS-Notification can be used to “inform” the scheduler when processor utilization changes
- Other kinds of resources (blades, storage, network) are also “modeled” as WS-Resources
- The Grid Scheduler is a Web service
- Grid “jobs” and “tasks” are also modeled using WS-Resources and Resource Properties
- A Service Level Agreement is modeled as a WS-Resource; the lifetime of the SLA resource is tied to the duration of the agreement
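The scheduling scenario can be sketched as two pieces. The first is a scheduler that keeps a view of processor utilization refreshed by notifications. The second is an SLA object whose lifetime ends with the agreement. Everything here is hypothetical: `GridScheduler`, `notify`, `place`, and `ServiceLevelAgreement` are illustrative names, and the least-utilized placement rule is an assumed policy that the slide does not specify.

```python
from dataclasses import dataclass


class GridScheduler:
    """Hypothetical scheduler fed by utilization-change notifications."""

    def __init__(self):
        self.utilization = {}  # processor id -> last reported utilization

    def notify(self, processor, utilization):
        # Plays the role of a notification consumer callback
        self.utilization[processor] = utilization

    def place(self, job):
        """Assumed policy: send the job to the least-utilized processor."""
        if not self.utilization:
            raise RuntimeError("no processors have reported status")
        target = min(self.utilization, key=self.utilization.get)
        return job, target


@dataclass
class ServiceLevelAgreement:
    """SLA modeled as a resource whose lifetime equals the agreement's duration."""
    job: str
    processor: str
    start: float
    duration: float

    @property
    def termination_time(self):
        return self.start + self.duration

    def active(self, now):
        return self.start <= now < self.termination_time
```

Because the scheduler only reads its cached view, a processor that stops reporting keeps its last value. A real deployment would also need some way to expire stale entries.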

The Globus Alliance & Toolkit (Argonne, USC/ISI, Edinburgh, PDC)
- An international partnership dedicated to creating & disseminating high-quality open source Grid technology: the Globus Toolkit
  - Design, engineering, support, governance
- Academic Affiliates make major contributions
  - EU: CERN, Imperial, MPI, Poznan
  - AP: AIST, TIT, Monash
  - US: NCSA, SDSC, TACC, UCSB, UW, etc.
- Significant industrial contributions
- 1000s of users worldwide, many contribute

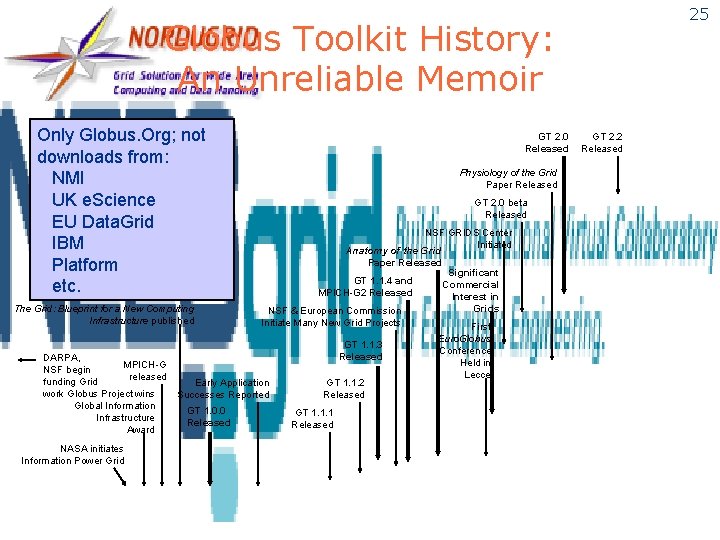

Globus Toolkit History: An Unreliable Memoir
[Timeline figure with download counts (only Globus.org; not downloads from NMI, UK eScience, EU DataGrid, IBM, Platform, etc.). Milestones shown include:]
- DARPA, NSF begin funding Grid work
- Globus Project wins Global Information Infrastructure Award
- MPICH-G released
- NASA initiates Information Power Grid
- The Grid: Blueprint for a New Computing Infrastructure published
- GT 1.0.0 released
- GT 1.1.1, GT 1.1.2, and GT 1.1.3 released
- Early application successes reported
- EuroGlobus conference held in Lecce
- NSF & European Commission initiate many new Grid projects
- Anatomy of the Grid paper released
- GT 1.1.4 and MPICH-G2 released
- Significant commercial interest in Grids
- NSF GRIDS Center initiated
- GT 2.0 beta released
- Physiology of the Grid paper released
- GT 2.0 released
- GT 2.2 released

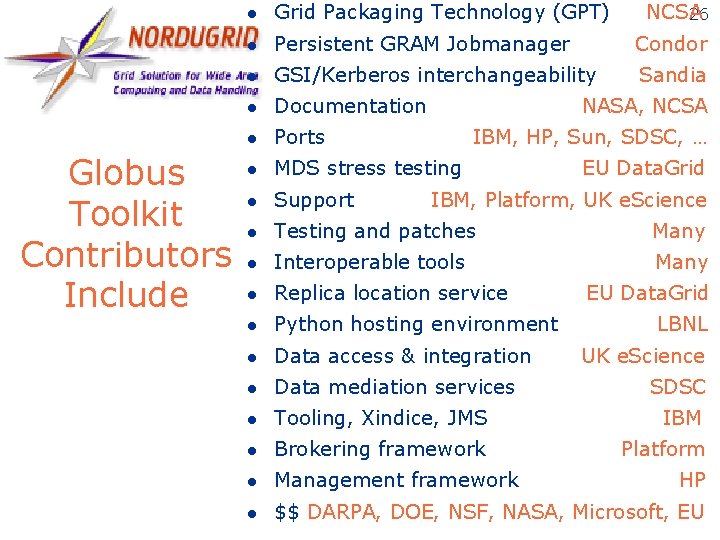

Globus Toolkit Contributors Include

| Contribution | Contributor(s) |
|---|---|
| Grid Packaging Technology (GPT) | NCSA |
| Persistent GRAM Jobmanager | Condor |
| GSI/Kerberos interchangeability | Sandia |
| Documentation | NASA, NCSA |
| Ports | IBM, HP, Sun, SDSC, … |
| MDS stress testing | EU DataGrid |
| Support | IBM, Platform, UK eScience |
| Testing and patches | Many |
| Interoperable tools | Many |
| Replica location service | EU DataGrid |
| Python hosting environment | LBNL |
| Data access & integration | UK eScience |
| Data mediation services | SDSC |
| Tooling, Xindice, JMS | IBM |
| Brokering framework | Platform |
| Management framework | HP |
| $$ | DARPA, DOE, NSF, NASA, Microsoft, EU |

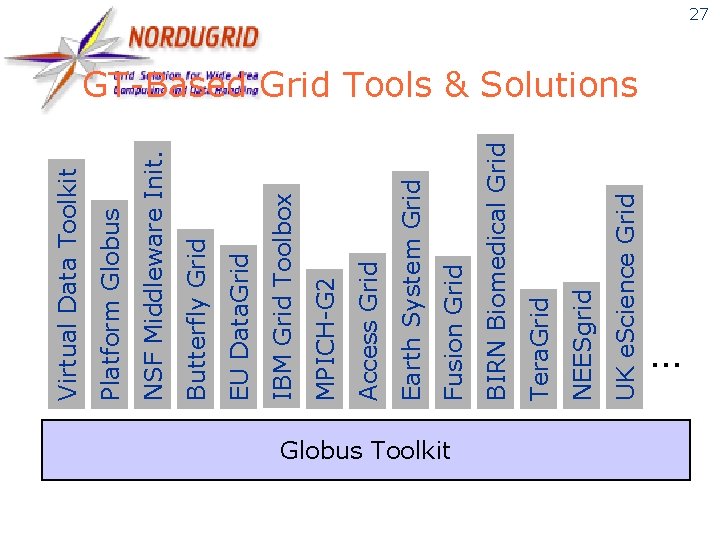

GT-Based Grid Tools & Solutions
Globus Toolkit, UK eScience Grid, NEESgrid, TeraGrid, BIRN Biomedical Grid, Fusion Grid, Earth System Grid, Access Grid, MPICH-G2, IBM Grid Toolbox, EU DataGrid, Butterfly Grid, NSF Middleware Init., Platform Globus, Virtual Data Toolkit, …

Problem-Driven, Collaborative Research Methodology

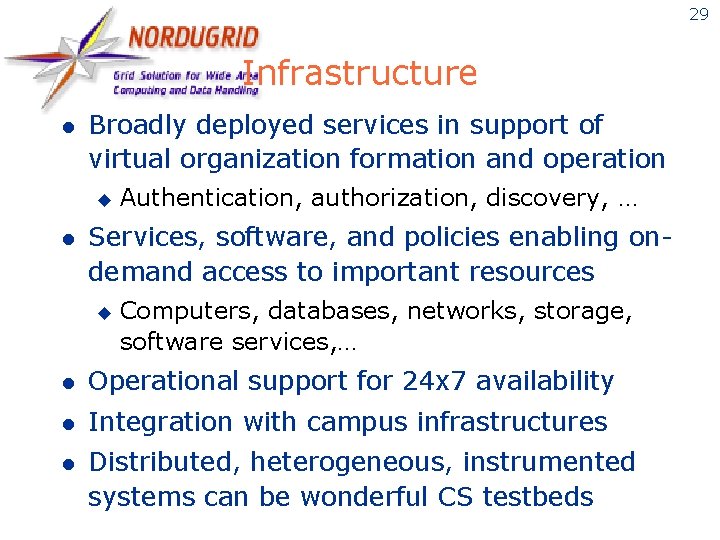

Infrastructure
- Broadly deployed services in support of virtual organization formation and operation
  - Authentication, authorization, discovery, …
- Services, software, and policies enabling on-demand access to important resources
  - Computers, databases, networks, storage, software services, …
- Operational support for 24x7 availability
- Integration with campus infrastructures
- Distributed, heterogeneous, instrumented systems can be wonderful CS testbeds

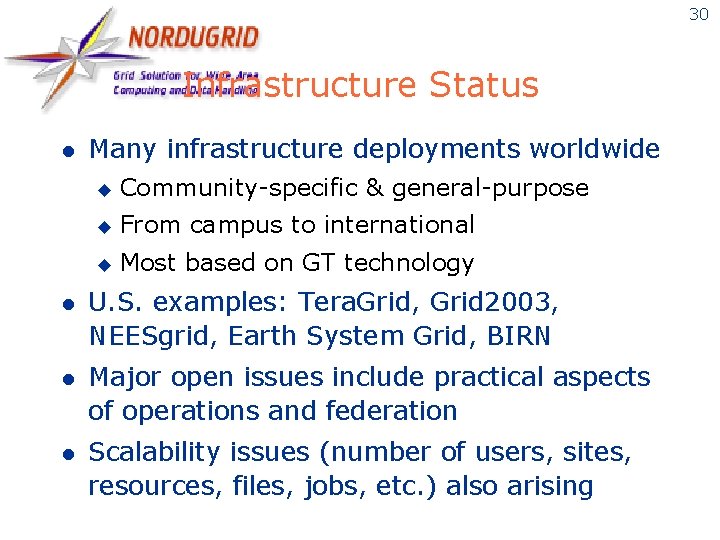

Infrastructure Status
- Many infrastructure deployments worldwide
  - Community-specific & general-purpose
  - From campus to international
  - Most based on GT technology
- U.S. examples: TeraGrid, Grid2003, NEESgrid, Earth System Grid, BIRN
- Major open issues include practical aspects of operations and federation
- Scalability issues (number of users, sites, resources, files, jobs, etc.) also arising

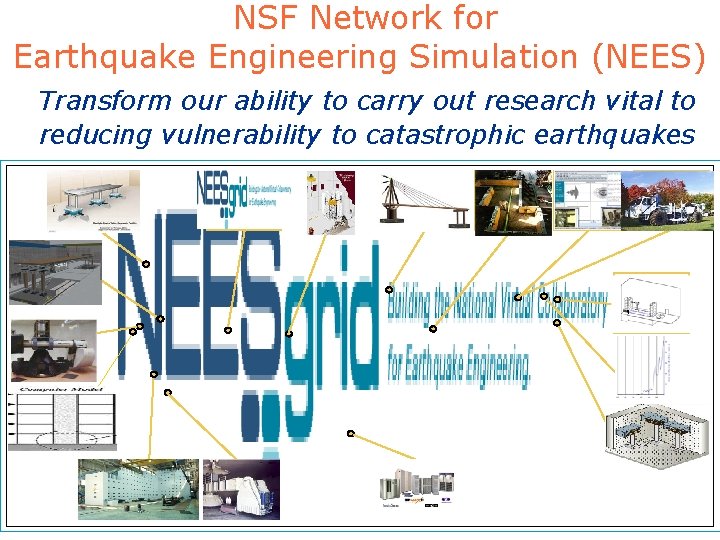

NSF Network for Earthquake Engineering Simulation (NEES)
Transform our ability to carry out research vital to reducing vulnerability to catastrophic earthquakes

NEESgrid User Perspective
Secure, reliable, on-demand access to data, software, people, and other resources (ideally all via a Web browser!)

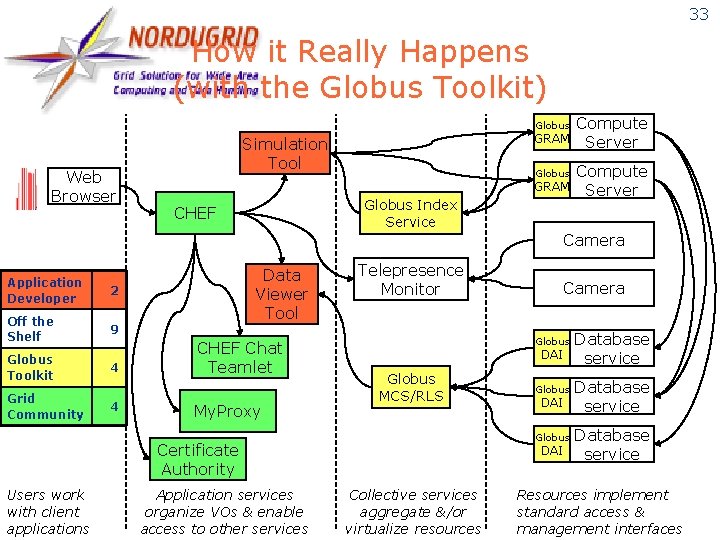

How it Really Happens (with the Globus Toolkit)
Layers, from users down to resources:
- Users work with client applications
- Application services organize VOs & enable access to other services
- Collective services aggregate &/or virtualize resources
- Resources implement standard access & management interfaces
Components shown: Web browser, simulation tool, data viewer tool, telepresence monitor, CHEF, CHEF Chat Teamlet, MyProxy, Globus Certificate Authority, Globus Index Service, Globus MCS/RLS, Globus GRAM, Globus DAI, compute server, camera, and database service. Each is labeled as coming from the application developer, off the shelf, the Globus Toolkit, or the Grid community.

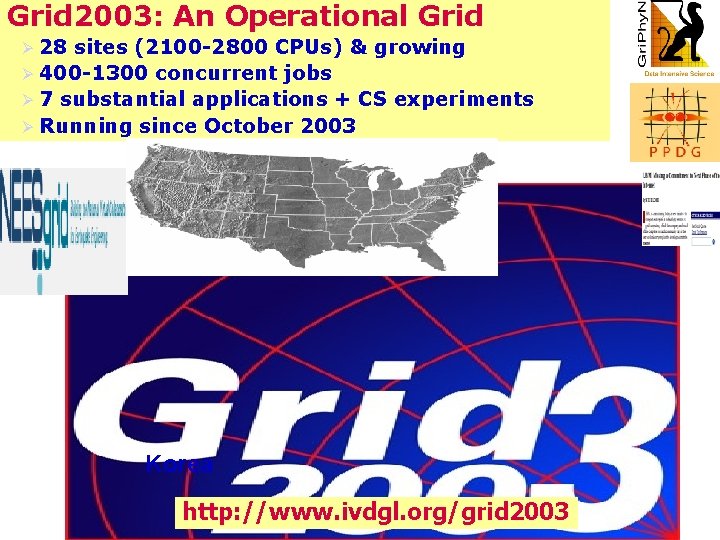

Grid2003: An Operational Grid
- 28 sites (2100-2800 CPUs) & growing
- 400-1300 concurrent jobs
- 7 substantial applications + CS experiments
- Running since October 2003
[Figure: map of participating sites, including Korea]
http://www.ivdgl.org/grid2003

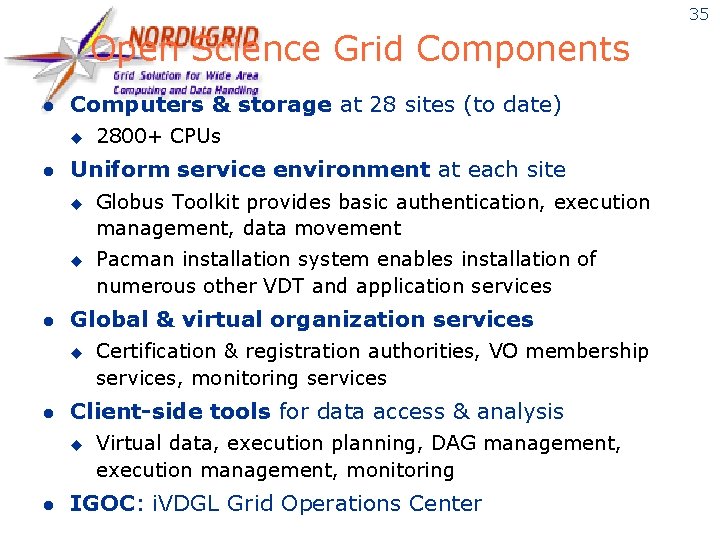

Open Science Grid Components
- Computers & storage at 28 sites (to date)
  - 2800+ CPUs
- Uniform service environment at each site
  - Globus Toolkit provides basic authentication, execution management, data movement
  - Pacman installation system enables installation of numerous other VDT and application services
- Global & virtual organization services
  - Certification & registration authorities, VO membership services, monitoring services
- Client-side tools for data access & analysis
  - Virtual data, execution planning, DAG management, execution management, monitoring
- IGOC: iVDGL Grid Operations Center

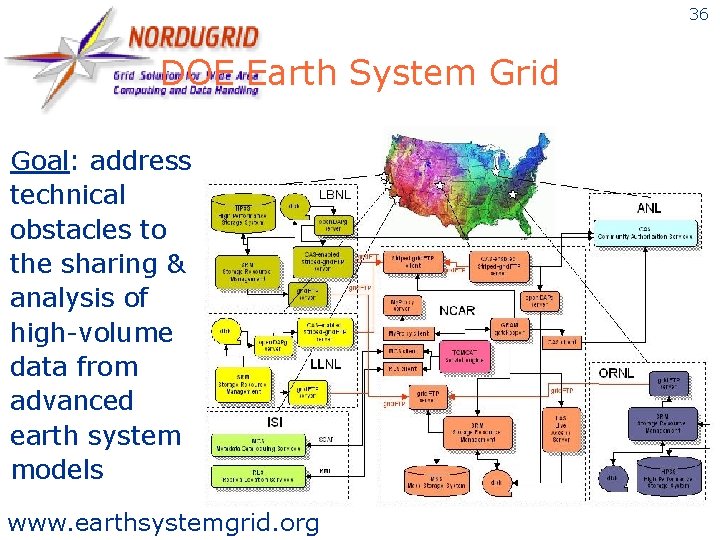

DOE Earth System Grid
Goal: address technical obstacles to the sharing & analysis of high-volume data from advanced earth system models
www.earthsystemgrid.org

Earth System Grid

Problem-Driven, Collaborative Research Methodology

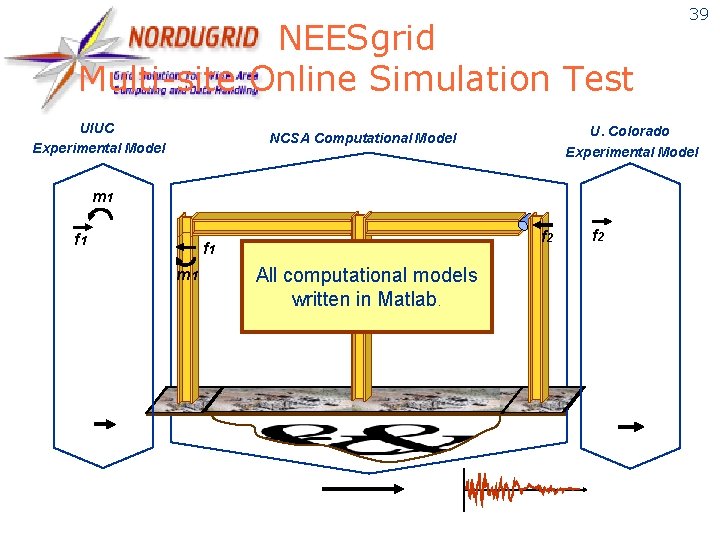

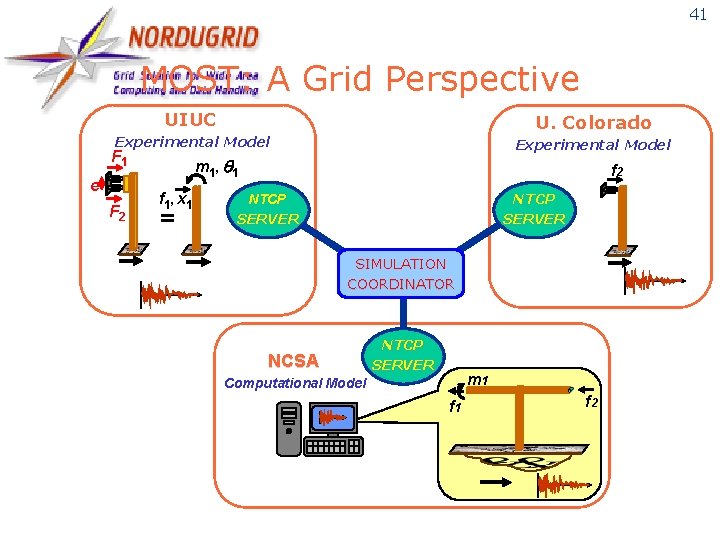

NEESgrid Multi-site Online Simulation Test
[Figure: UIUC experimental model, U. Colorado experimental model, and NCSA computational model, linked through mass m1 and forces f1, f2]
All computational models written in Matlab.

NEESgrid Multisite Online Simulation Test (July 2003)
[Images: Colorado; Illinois; (simulation)]

MOST: A Grid Perspective
[Figure: a Simulation Coordinator linked through NTCP servers to the UIUC and U. Colorado experimental models and the NCSA computational model; labels include mass m1, forces f1 and f2, and displacement x1.]
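The coordinator's role in an online simulation test like this can be illustrated with a pseudo-dynamic time-stepping loop. At each step it proposes a displacement to every site, has the sites execute it, collects the restoring forces, and integrates the equation of motion. The Python sketch below rests on several assumptions:
- a single degree of freedom;
- an explicit central-difference integrator;
- a propose/execute exchange with each site;
- hypothetical `SiteStub` objects that return linear-spring forces in place of real specimens or Matlab models.

It is not the NTCP interface or the MOST code.

```python
class SiteStub:
    """Hypothetical stand-in for one site (experimental or computational).

    A real site would impose the displacement on a specimen (or a model)
    and measure the restoring force; this stub uses a linear spring.
    """

    def __init__(self, name, stiffness, limit=1.0):
        self.name = name
        self.k = stiffness
        self.limit = limit  # arbitrary safety limit on commanded displacement
        self.x = 0.0

    def propose(self, x):
        # A site may reject a command it considers unsafe
        return abs(x) < self.limit

    def execute(self, x):
        self.x = x
        return self.k * x  # "measured" restoring force


def coordinate(sites, mass, load, dt, steps):
    """Central-difference stepping of m*x'' + sum(r_i(x)) = p(t), starting at rest."""
    x_prev, x = 0.0, 0.0
    history = [x]
    for n in range(steps):
        # Every site executes the current displacement and reports its force
        r = sum(site.execute(x) for site in sites)
        x_next = 2 * x - x_prev + dt * dt / mass * (load(n * dt) - r)
        # Every site must accept the next displacement before it is applied
        if not all(site.propose(x_next) for site in sites):
            raise RuntimeError(f"step {n}: displacement {x_next} rejected")
        x_prev, x = x, x_next
        history.append(x)
    return history
```

With two 50-unit springs, unit mass, and a constant unit load, the response oscillates about the static value 0.01 and peaks near 0.02. That is the familiar factor-of-two overshoot for a suddenly applied load.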

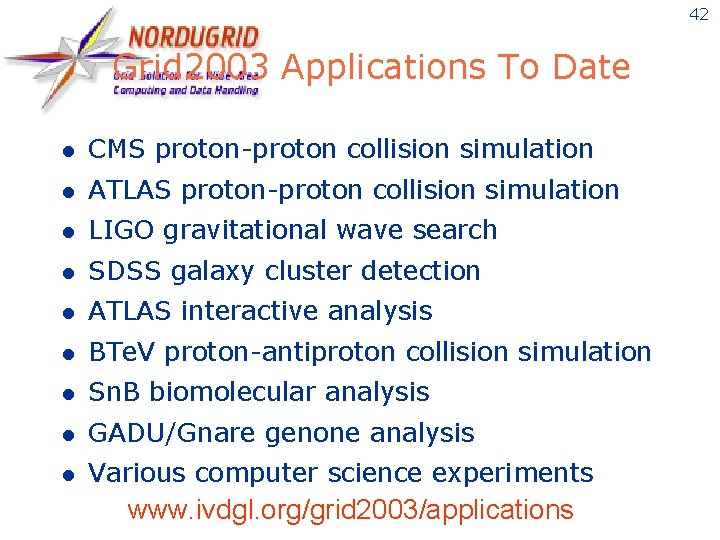

Grid2003 Applications To Date
- CMS proton-proton collision simulation
- ATLAS proton-proton collision simulation
- LIGO gravitational wave search
- SDSS galaxy cluster detection
- ATLAS interactive analysis
- BTeV proton-antiproton collision simulation
- SnB biomolecular analysis
- GADU/Gnare genome analysis
- Various computer science experiments
www.ivdgl.org/grid2003/applications

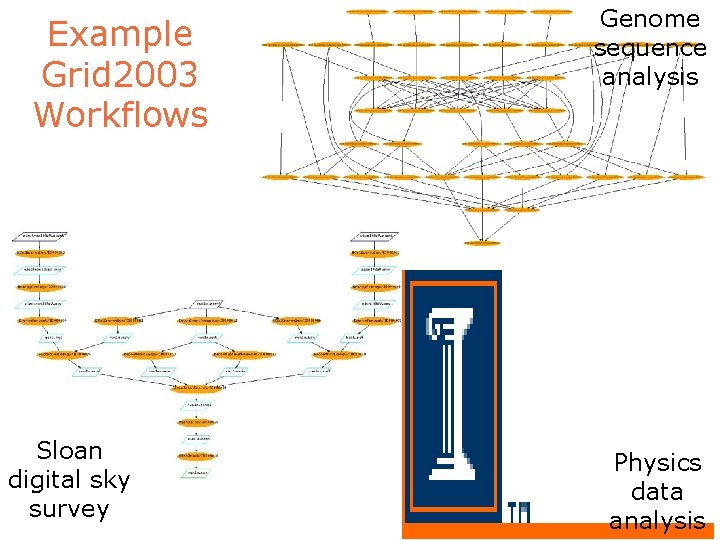

Example Grid2003 Workflows
[Figures: Sloan Digital Sky Survey; genome sequence analysis; physics data analysis]

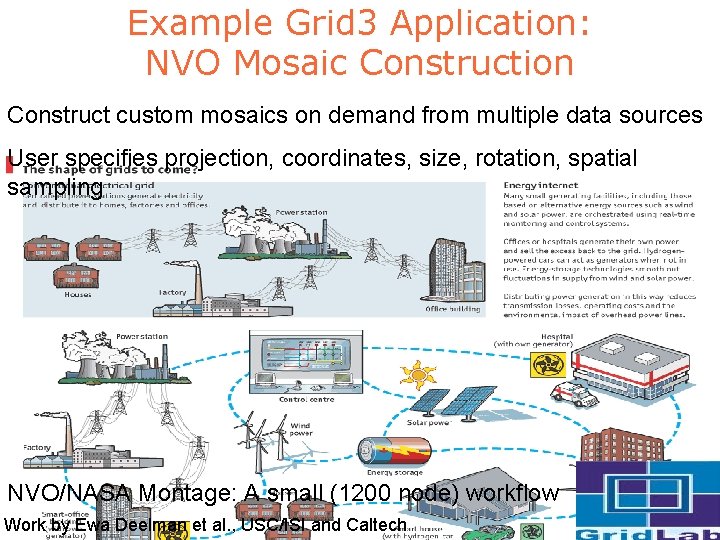

Example Grid3 Application: NVO Mosaic Construction
- Construct custom mosaics on demand from multiple data sources
- User specifies projection, coordinates, size, rotation, spatial sampling
NVO/NASA Montage: a small (1200-node) workflow
Work by Ewa Deelman et al., USC/ISI and Caltech
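Workflows like this mosaic example are commonly expressed as directed acyclic graphs and run by a DAG manager that releases each task once its inputs are available. The Python sketch below needs Python 3.9+ for the standard `graphlib` module. It builds a hypothetical fan-out/fan-in mosaic DAG and then groups the tasks into waves that could run concurrently. The stage names `project`, `diff`, `bg_model`, `background`, and `add` are chosen for illustration, and the sketch assumes overlaps form a simple chain between neighboring images.

```python
from graphlib import TopologicalSorter


def mosaic_workflow(n_images):
    """Hypothetical mosaic DAG: maps each task to the set of tasks it depends on."""
    dag = {}
    projected = [f"project_{i}" for i in range(n_images)]
    for p in projected:
        dag[p] = set()  # reproject each input image independently
    diffs = []
    for i in range(n_images - 1):  # assumed: neighboring images overlap
        d = f"diff_{i}_{i + 1}"
        dag[d] = {projected[i], projected[i + 1]}
        diffs.append(d)
    dag["bg_model"] = set(diffs)  # one global background fit over all overlaps
    corrected = []
    for i in range(n_images):
        c = f"background_{i}"
        dag[c] = {projected[i], "bg_model"}
        corrected.append(c)
    dag["add"] = set(corrected)  # co-add corrected images into the final mosaic
    return dag


def execution_waves(dag):
    """Group tasks into waves whose members have no unmet dependencies."""
    ts = TopologicalSorter(dag)
    ts.prepare()
    waves = []
    while ts.is_active():
        ready = sorted(ts.get_ready())
        waves.append(ready)
        ts.done(*ready)
    return waves
```

This toy graph has 3n + 1 nodes for n images, so about 400 images give a graph of roughly the quoted size. It is not claimed to match Montage's actual task structure.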

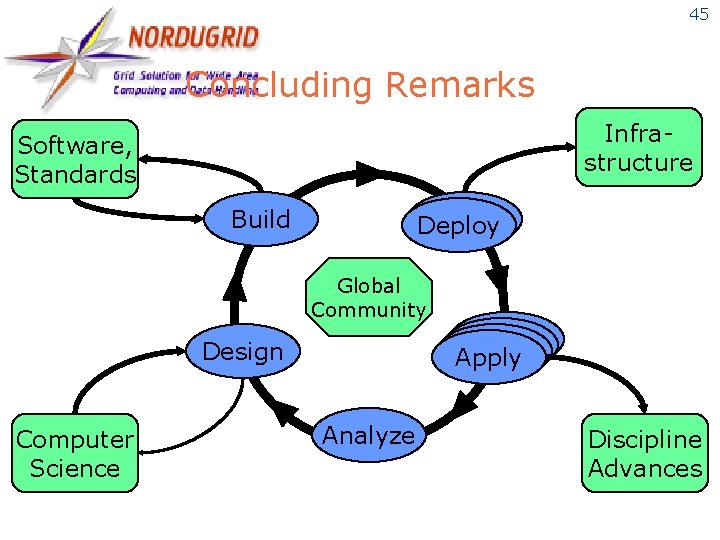

Concluding Remarks
[Diagram: the research methodology cycle of Design, Build, Deploy, Apply, and Analyze linking Computer Science, Software & Standards, Infrastructure, Global Community, and Discipline Advances]

eScience & Grid: 6 Theses
1. Scientific progress depends increasingly on large-scale distributed collaborative work
2. Such distributed collaborative work raises challenging problems of broad importance
3. Any effective attack on those problems must involve close engagement with applications
4. Open software & standards are key to producing & disseminating required solutions
5. Shared software & service infrastructure are essential application enablers
6. A cross-disciplinary community of technology producers & consumers is needed

Global Community

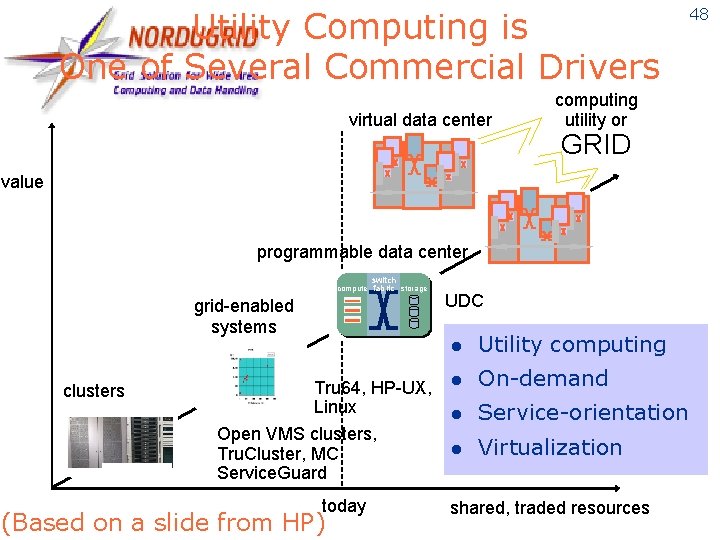

Utility Computing is One of Several Commercial Drivers
[Figure, based on a slide from HP: value increasing from today's clusters (OpenVMS clusters, TruCluster, MC ServiceGuard; Tru64, HP-UX, Linux) through grid-enabled systems and the programmable data center (switch, compute fabric, storage) to the virtual data center, computing utility, or GRID (UDC), with shared, traded resources.]
- Utility computing
- On-demand
- Service-orientation
- Virtualization

Significant Challenges Remain
- Scaling in multiple dimensions
  - Ambition and complexity of applications
  - Number of users, datasets, services, …
  - From technologies to solutions
- The need for persistent infrastructure
  - Software and people as well as hardware
  - Currently no long-term commitment
- Institutionalizing a multidisciplinary approach
  - Understand implications for the practice of computer science research

Thanks, in particular, to:
- Carl Kesselman and Steve Tuecke, my longtime Globus co-conspirators
- Gregor von Laszewski, Kate Keahey, Jennifer Schopf, Mike Wilde, Argonne colleagues
- Globus Alliance members at Argonne, U. Chicago, USC/ISI, Edinburgh, PDC
- Miron Livny, U. Wisconsin Condor project, Rick Stevens, Argonne & U. Chicago
- Other partners in Grid technology, application, & infrastructure projects
- DOE, NSF, NASA, IBM for generous support

For More Information
- Globus Alliance: www.globus.org
- Global Grid Forum: www.ggf.org
- Open Science Grid: www.opensciencegrid.org
- Background information: www.mcs.anl.gov/~foster
- GlobusWORLD 2005: Feb 7-11, Boston
- 2nd Edition: www.mkp.com/grid2
