
Peer-to-Peer Computing: The hype, the hard problems and quest for solutions
Krishna Kant, Ravi Iyer, Vijay Tewari
Intel Corporation
www.intel.com/labs

Outline
- Section I
  - Overview of P2P
  - Framework: overview of distributed computing frameworks, additional P2P framework requirements
  - P2P middleware
- Section II
  - Taxonomy of P2P applications
  - Research issues
- Section III
  - Preliminary performance modeling
- Conclusion

Goals for Section I
- Examine the early beginnings of Peer-to-Peer.
- Look at some possible definitions of Peer-to-Peer.
- Give a general idea of Peer-to-Peer applications and frameworks.
- Identify the requirements of Peer-to-Peer applications.

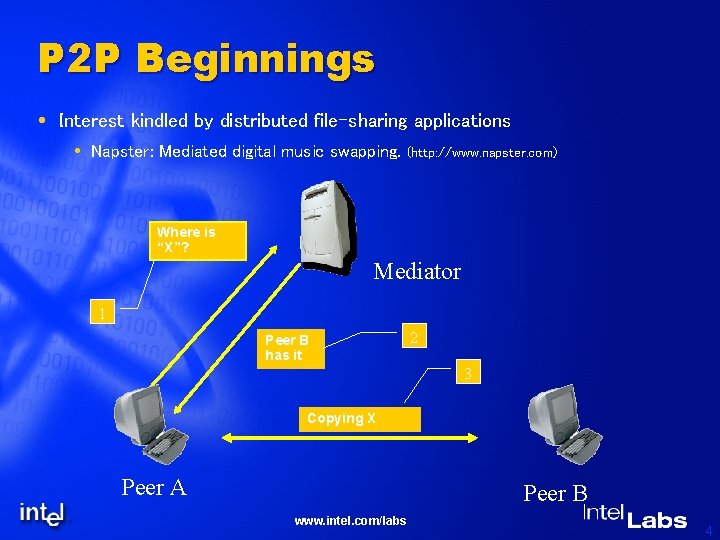

P2P Beginnings
- Interest kindled by distributed file-sharing applications.
- Napster: mediated digital music swapping (http://www.napster.com).

[Figure: (1) Peer A asks the mediator "Where is X?"; (2) the mediator answers "Peer B has it"; (3) Peer A copies X directly from Peer B.]

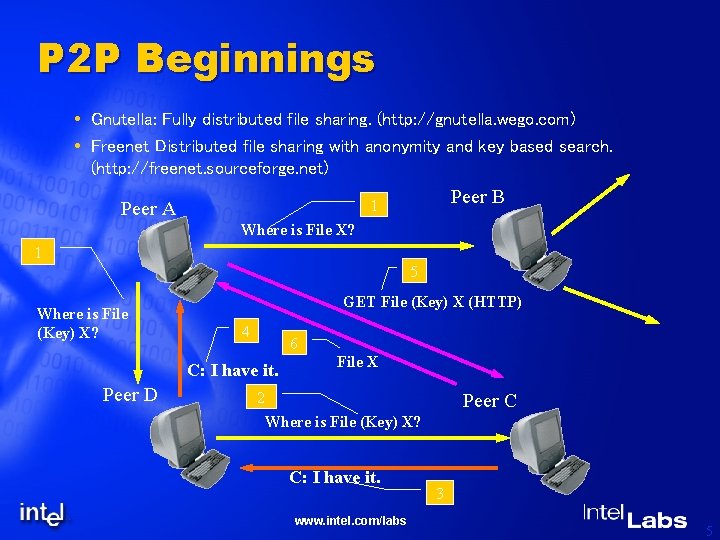

P2P Beginnings
- Gnutella: fully distributed file sharing (http://gnutella.wego.com).
- Freenet: distributed file sharing with anonymity and key-based search (http://freenet.sourceforge.net).

[Figure: query propagation among Peers A, B, C and D. Labels: "Where is File (Key) X?" forwarded from peer to peer, "C: I have it." returned toward Peer A, and "GET File (Key) X (HTTP)" for the final transfer.]
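The fully distributed search pictured on this slide can be sketched as a hop-limited flood. This is a minimal illustration and not the Gnutella wire protocol: the function name `flood_query`, the adjacency-dict representation and the synchronous breadth-first ordering are all assumptions made for the example.

```python
from collections import deque

def flood_query(adj, holders, source, max_hops):
    """Flood a query from `source` for at most `max_hops` hops.

    adj     -- dict: node -> iterable of neighbor nodes (hypothetical overlay)
    holders -- set of nodes that store the requested file
    Returns one reverse path (holder ... source) per hit; responses are
    assumed to travel back along the path the query took.
    """
    parent = {source: None}           # also serves as the "already seen" set
    frontier = deque([(source, 0)])
    hits = []
    while frontier:
        node, hops = frontier.popleft()
        if node != source and node in holders:
            path, cur = [], node
            while cur is not None:    # walk back toward the querying peer
                path.append(cur)
                cur = parent[cur]
            hits.append(path)
        if hops == max_hops:
            continue                  # hop budget exhausted, do not forward
        for neighbor in adj[node]:
            if neighbor not in parent:    # duplicate queries are dropped
                parent[neighbor] = node
                frontier.append((neighbor, hops + 1))
    return hits

# Toy overlay loosely matching the figure: A - B, with B linked to C and D.
overlay = {"A": ["B"], "B": ["A", "C", "D"], "C": ["B"], "D": ["B"]}
```

With Peer C holding the file, `flood_query(overlay, {"C"}, "A", 3)` yields the reverse path C, B, A; after that the requester would fetch the file from C directly.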

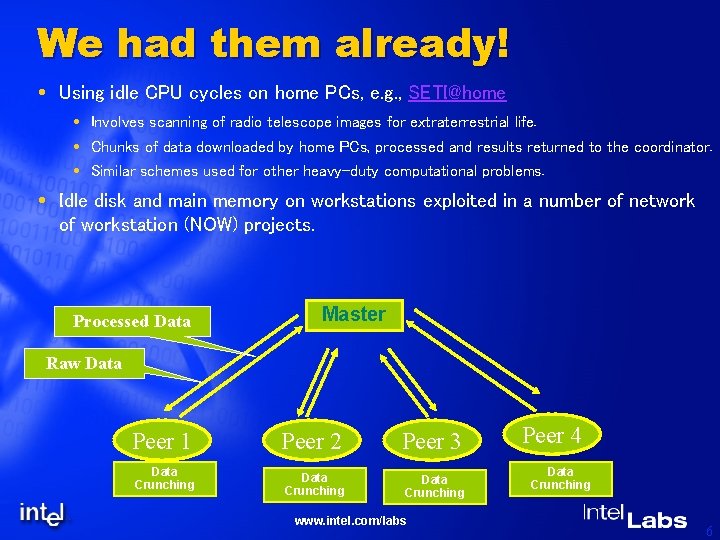

We had them already!
- Using idle CPU cycles on home PCs, e.g., SETI@home.
  - Involves scanning of radio telescope images for extraterrestrial life.
  - Chunks of data are downloaded by home PCs, processed, and the results returned to the coordinator.
  - Similar schemes are used for other heavy-duty computational problems.
- Idle disk and main memory on workstations are exploited in a number of network of workstations (NOW) projects.

[Figure: a master sends raw data to Peers 1 to 4 for data crunching and collects the processed data.]

Newer Applications
- P2P streaming media distribution
  - CenterSpan (C-Star Multisource Peer Streaming), http://www.centerspan.com
    - Mediated, secure P2P platform for distributing digital content.
    - Partition content and encrypt each segment.
    - Distribute segments amongst peers.
    - Redundant distribution for reliability.
    - Download segments from local cache, peers or seed servers.
  - vTrails, http://www.vtrails.com
    - vtCaster: sits at the stream source, creates a network topology tree based on end users (vtPass client software), and dynamically optimizes the tree.
    - Content is distributed in a tiered manner.

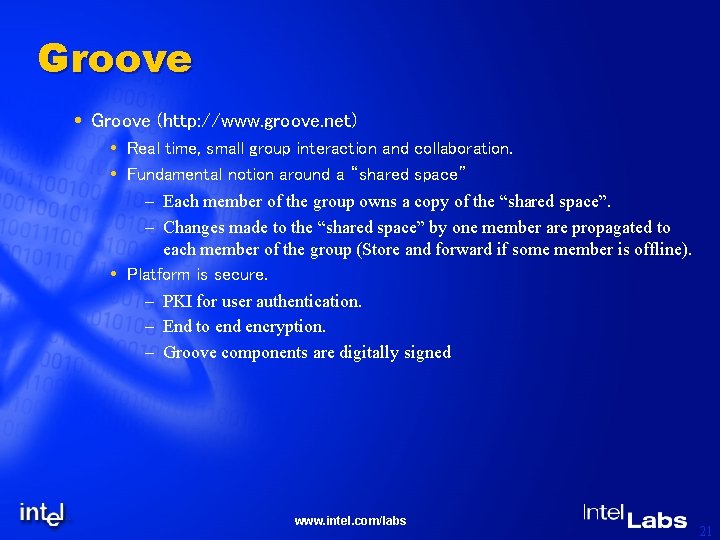

Newer Applications
- P2P collaboration: Groove (http://www.groove.net)
  - Real-time, small-group interaction and collaboration.
  - Fundamental notion of a "shared space":
    - Each member of the group owns a copy of the shared space.
    - Changes made to the shared space by one member are propagated to each member of the group (store and forward if some member is offline).
  - Platform is secure:
    - PKI for user authentication.
    - End-to-end encryption.
    - Groove components are digitally signed.

So, what is P2P?
- Hype: a new paradigm that can
  - unlock the vast idle computing power of the Internet, and
  - provide unlimited performance scaling.
- Skeptic's view: nothing new, just distributed computing "re-discovered" or made fashionable.
- Reality: distributed computing on a large scale.
  - No longer limited to a single LAN or a single domain.
  - Autonomous nodes, no controlling/managing authority.
  - Heterogeneous nodes intermittently connected via links of varying speed and reliability.
- A tentative definition:
  - A dynamic network (peers can come and go as they please).
  - No central controlling or managing authority.
  - A node can act as both a "client" and a "server".

P2P Platforms
- Legion: University of Virginia, now owned by Avaki Corp.
- Globe: Vrije Univ., Netherlands.
- Globus: developed by a consortium including Argonne Natl. Lab and USC's Information Sciences Institute.
- JXTA: open-source P2P effort started by Sun Microsystems.
- .NET by Microsoft Corp.
- WebOS: University of Washington.
- Magi: Endeavors Technology.
- Groove

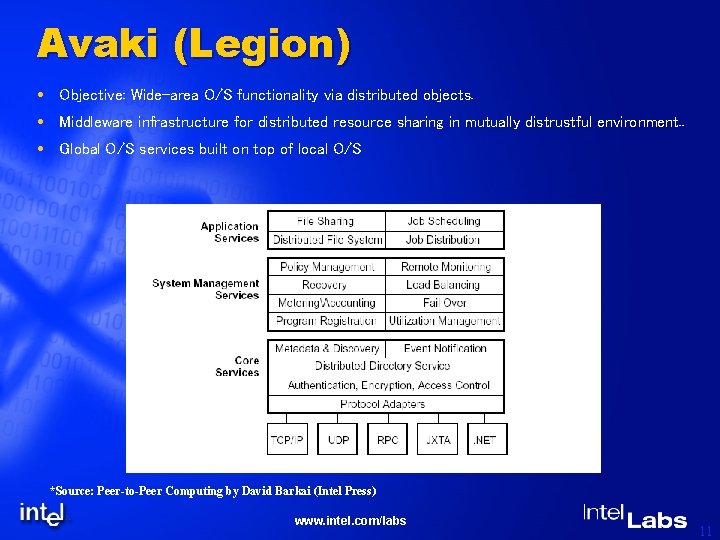

Avaki (Legion)
- Objective: wide-area O/S functionality via distributed objects.
- Middleware infrastructure for distributed resource sharing in a mutually distrustful environment.
- Global O/S services built on top of the local O/S.

*Source: Peer-to-Peer Computing by David Barkai (Intel Press)

Avaki (Legion)
- Naming: LOID (location-independent object ID), current object address and object name.
- Persistent object space: generalization of a file system (manages files, classes, hosts, etc.).
- Communication: RPC-like, except that the results can be forwarded to the real consumer directly.
- Security: RSA keys are a part of LOIDs; encryption, authentication and digesting are provided.
- Local autonomy: objects call local O/S services for all management, protection and scheduling.
- Active objects: objects represent both processes and methods.
- Overall: comprehensive WAN O/S, but not targeted as a general P2P enabler.

Globe
- Objective: another model for a WAN O/S.
- Distributed passive object model. Processes are separate entities that bind to objects.
- Each object consists of 4 subobjects:
  - Semantics subobject for functionality.
  - Communication subobject for inter-object communication.
  - Replication subobject for replica handling, including consistency maintenance.
  - Control subobject for control flow within the object.
- Binding to an object includes two steps:
  - Name and location lookup and contact address creation.
  - Selecting an implementation of the interface.
- Overall: similar to Legion, except that processes and objects are not tightly integrated.

Globus
- Objective: grid computing, integration of existing services.
- Defines a collection of services, e.g.,
  - Service discovery protocol
  - Resource location and availability protocol
  - Resource replication service
  - Performance monitoring service
- Any service can be defined and becomes a part of the "system".
- Higher-level services can be built on top of basic ones.
- Preserves site autonomy. Existing legacy services can be offered unaltered.
- Overall: excellent reusability, but the unconstrained toolbox approach makes it very difficult to join two "islands".

JXTA
- Objective: a low-level framework to support P2P applications.
  - Avoids any reference to specific policies or usage models.
  - Not targeted at any specific language, O/S, runtime environment, or networking model.
  - All exchanges are XML based.
- Base concepts: identifiers, advertisements, peer groups, pipes.
- At the highest abstraction, defines a set of protocols using the base concepts:
  - Peer discovery protocol: discovery of peers, resources, peer groups, etc.
  - Peer resolver protocol
  - Peer information protocol
  - Peer membership protocol
  - Pipe binding protocol
  - Peer endpoint protocol

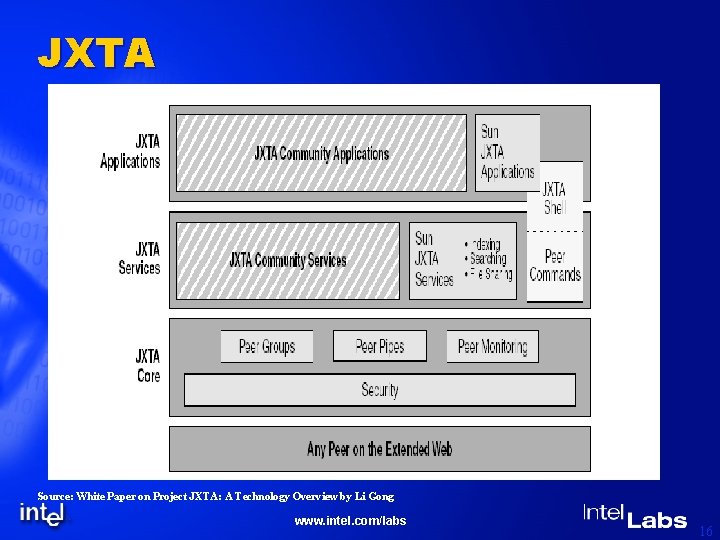

JXTA
Source: White Paper on Project JXTA: A Technology Overview by Li Gong

Microsoft .NET in the context of P2P
- Objective: an enabler of general XML/SOAP-based web services.
- Message transfer via SOAP (Simple Object Access Protocol) over HTTP.
- Kerberos-based user authentication.
- Extensive class library.
- Emphasizes global user authentication via the Passport service (the user is distinct from the device being used).
- Hailstorm supports personal services which can be accessed via SOAP from any entity.

MAGI
- Enabler for collaborative business applications.

*Source: Peer-to-Peer Computing by David Barkai (Intel Press)

Magi
- Magi: Micro-Apache Generic Interface, an extension of the Apache project.
- Superset of HTTP using
  - WebDAV: the Web Distributed Authoring and Versioning protocol, which provides locking services, discovery and assignment services, etc. for web documents.
  - SWAP (Simple Workflow Access Protocol), which supports interaction between running services (e.g., notification, monitoring, remote stop/synchronization, etc.).
- Intended for servers; the client interface is HTTP.

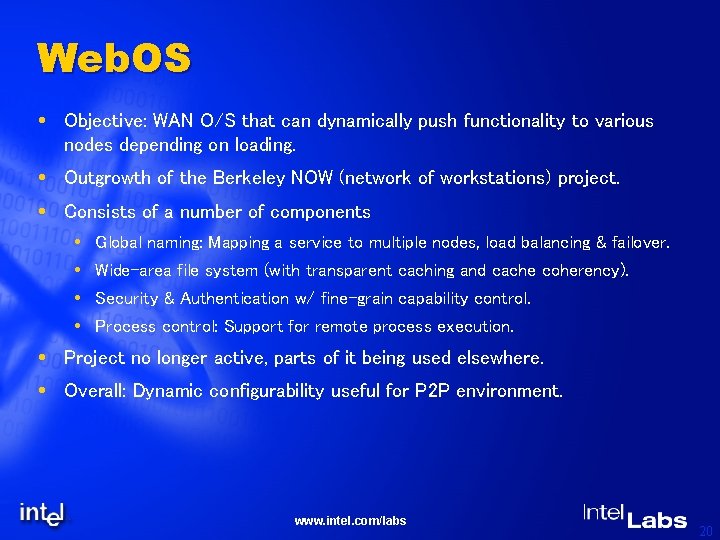

WebOS
- Objective: a WAN O/S that can dynamically push functionality to various nodes depending on loading.
- Outgrowth of the Berkeley NOW (network of workstations) project.
- Consists of a number of components:
  - Global naming: mapping a service to multiple nodes, load balancing and failover.
  - Wide-area file system (with transparent caching and cache coherency).
  - Security and authentication with fine-grain capability control.
  - Process control: support for remote process execution.
- Project no longer active; parts of it are being used elsewhere.
- Overall: dynamic configurability is useful for a P2P environment.

Groove (http://www.groove.net)
- Real-time, small-group interaction and collaboration.
- Fundamental notion of a "shared space":
  - Each member of the group owns a copy of the shared space.
  - Changes made to the shared space by one member are propagated to each member of the group (store and forward if some member is offline).
- Platform is secure:
  - PKI for user authentication.
  - End-to-end encryption.
  - Groove components are digitally signed.

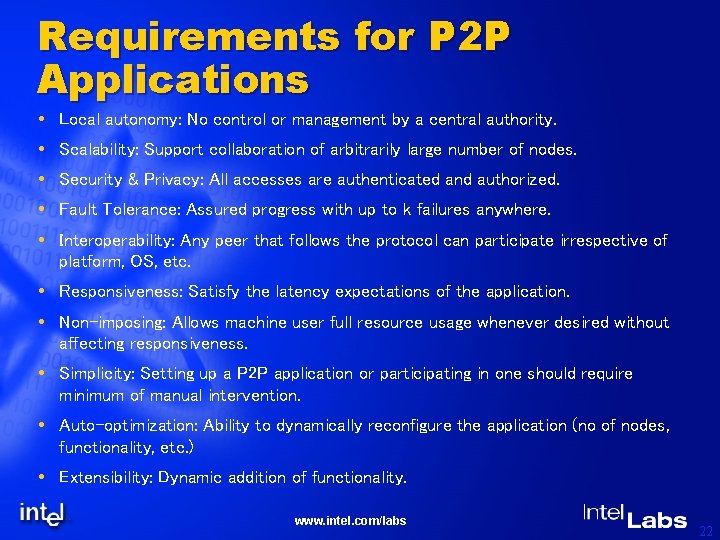

Requirements for P2P Applications
- Local autonomy: no control or management by a central authority.
- Scalability: support collaboration of an arbitrarily large number of nodes.
- Security and privacy: all accesses are authenticated and authorized.
- Fault tolerance: assured progress with up to k failures anywhere.
- Interoperability: any peer that follows the protocol can participate irrespective of platform, OS, etc.
- Responsiveness: satisfy the latency expectations of the application.
- Non-imposing: allows the machine's user full resource usage whenever desired without affecting responsiveness.
- Simplicity: setting up a P2P application or participating in one should require a minimum of manual intervention.
- Auto-optimization: ability to dynamically reconfigure the application (number of nodes, functionality, etc.).
- Extensibility: dynamic addition of functionality.

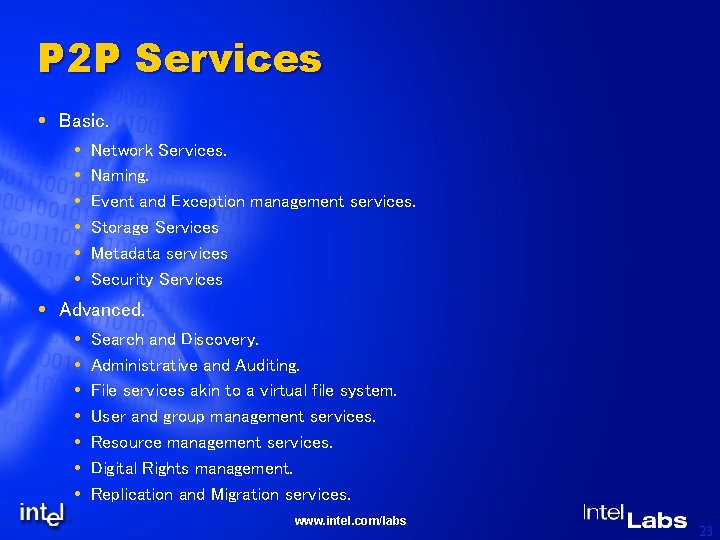

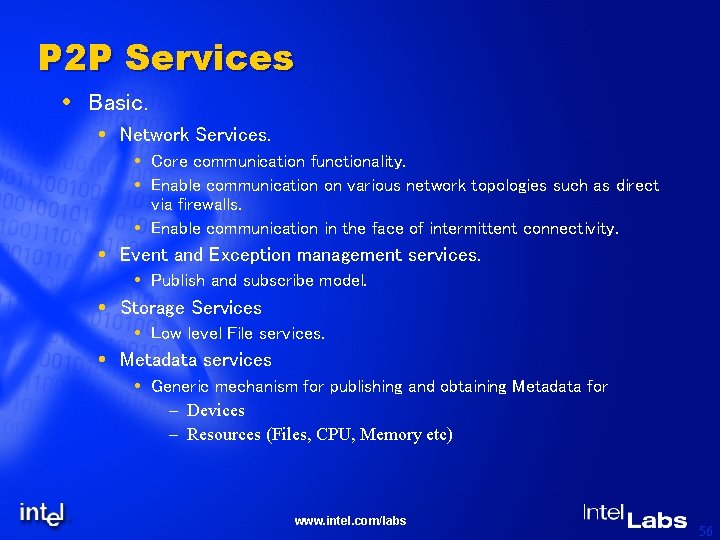

P2P Services
- Basic
  - Network services
  - Naming
  - Event and exception management services
  - Storage services
  - Metadata services
  - Security services
- Advanced
  - Search and discovery
  - Administrative and auditing
  - File services akin to a virtual file system
  - User and group management services
  - Resource management services
  - Digital rights management
  - Replication and migration services

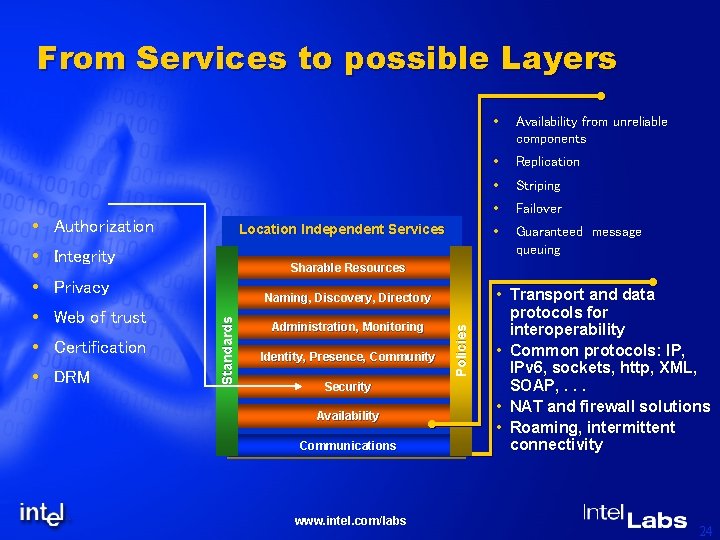

From Services to possible Layers

[Figure: layer stack of Location Independent Services; Administration, Monitoring; Identity, Presence, Community; Security; Availability; Communications. Side labels: Policies; Naming, Discovery, Directory; Standards; Sharable Resources.]

Callouts on this slide:
- Security: authorization, integrity, DRM, certification, privacy, web of trust.
- Availability (from unreliable components): replication, striping, failover, guaranteed message queuing.
- Communications:
  - Transport and data protocols for interoperability.
  - Common protocols: IP, IPv6, sockets, HTTP, XML, SOAP, ...
  - NAT and firewall solutions.
  - Roaming, intermittent connectivity.

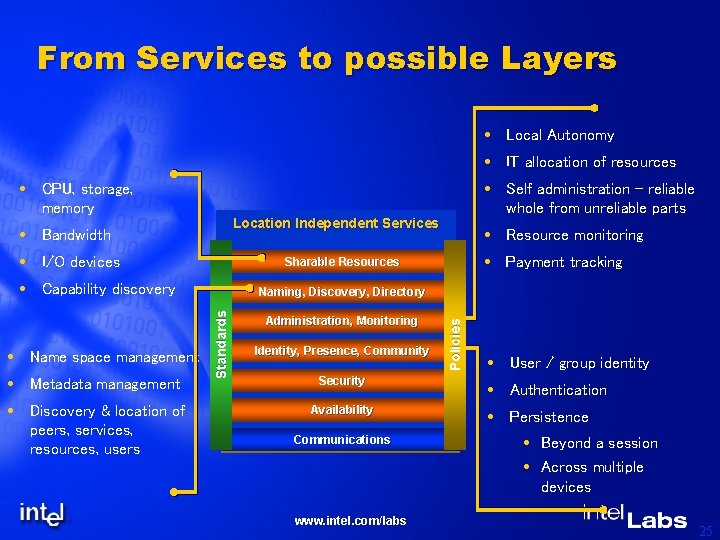

From Services to possible Layers

[Figure: same layer stack of Location Independent Services; Administration, Monitoring; Identity, Presence, Community; Security; Availability; Communications. Side labels: Policies; Naming, Discovery, Directory; Standards; Sharable Resources.]

Callouts on this slide:
- Sharable resources (local autonomy, IT allocation of resources): CPU, storage, memory, bandwidth, I/O devices.
- Location independent services: discovery and location of peers, services, resources, users; payment tracking.
- Administration, monitoring: metadata management, resource monitoring, capability discovery, name space management, self administration (reliable whole from unreliable parts).
- Identity, presence, community: user/group identity, authentication, persistence (beyond a session, across multiple devices).

Questions?

Part 2: Taxonomy & Research Issues
- Goals:
  - To introduce a taxonomy for classifying P2P applications and environments.
  - To elaborate upon some major research issues.

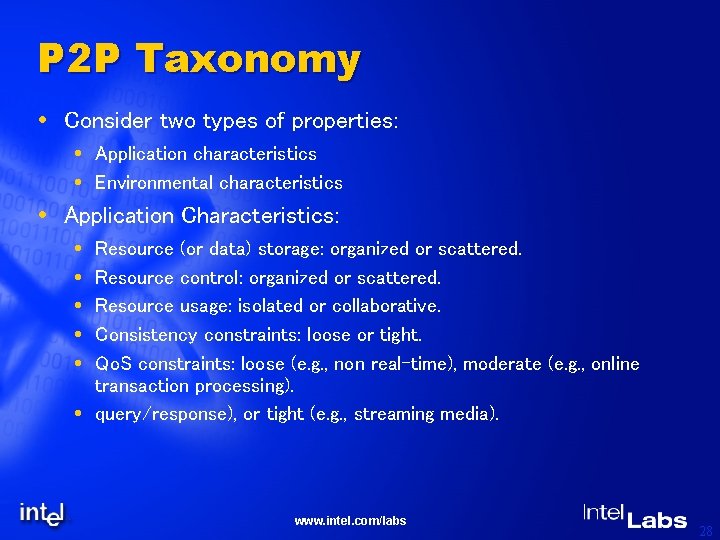

P2P Taxonomy
- Consider two types of properties:
  - Application characteristics
  - Environmental characteristics
- Application characteristics:
  - Resource (or data) storage: organized or scattered.
  - Resource control: organized or scattered.
  - Resource usage: isolated or collaborative.
  - Consistency constraints: loose or tight.
  - QoS constraints: loose (e.g., non real-time), moderate (e.g., online transaction processing, query/response), or tight (e.g., streaming media).

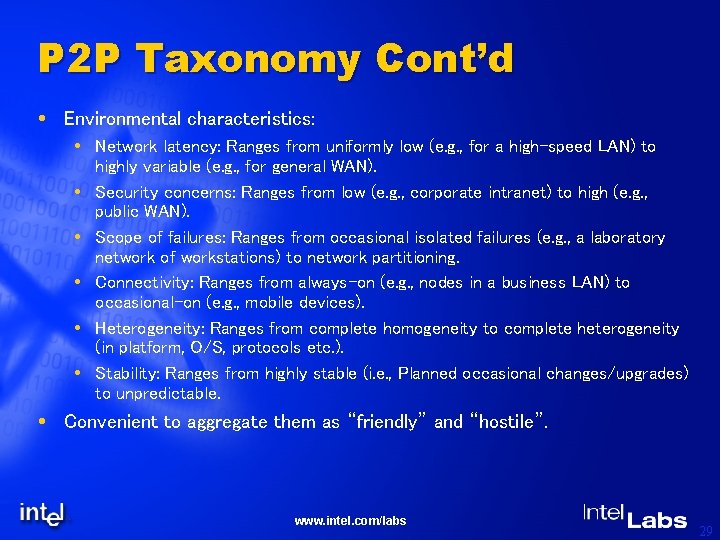

P2P Taxonomy Cont'd
- Environmental characteristics:
  - Network latency: ranges from uniformly low (e.g., for a high-speed LAN) to highly variable (e.g., for a general WAN).
  - Security concerns: range from low (e.g., corporate intranet) to high (e.g., public WAN).
  - Scope of failures: ranges from occasional isolated failures (e.g., a laboratory network of workstations) to network partitioning.
  - Connectivity: ranges from always-on (e.g., nodes in a business LAN) to occasionally on (e.g., mobile devices).
  - Heterogeneity: ranges from complete homogeneity to complete heterogeneity (in platform, O/S, protocols, etc.).
  - Stability: ranges from highly stable (i.e., planned occasional changes/upgrades) to unpredictable.
- Convenient to aggregate them as "friendly" and "hostile".

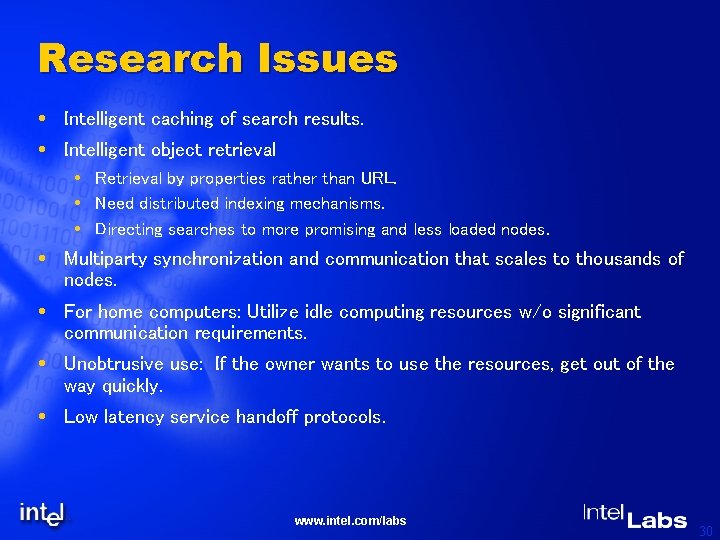

Research Issues
- Intelligent caching of search results.
- Intelligent object retrieval:
  - Retrieval by properties rather than URL.
  - Need distributed indexing mechanisms.
  - Directing searches to more promising and less loaded nodes.
- Multiparty synchronization and communication that scales to thousands of nodes.
- For home computers:
  - Utilize idle computing resources without significant communication requirements.
  - Unobtrusive use: if the owner wants to use the resources, get out of the way quickly.
  - Low-latency service handoff protocols.

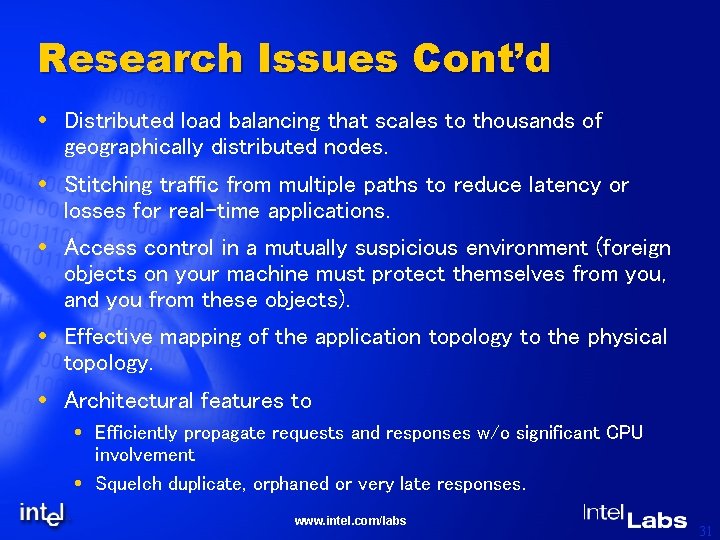

Research Issues Cont'd
- Distributed load balancing that scales to thousands of geographically distributed nodes.
- Stitching traffic from multiple paths to reduce latency or losses for real-time applications.
- Access control in a mutually suspicious environment (foreign objects on your machine must protect themselves from you, and you from these objects).
- Effective mapping of the application topology to the physical topology.
- Architectural features to
  - efficiently propagate requests and responses without significant CPU involvement, and
  - squelch duplicate, orphaned or very late responses.

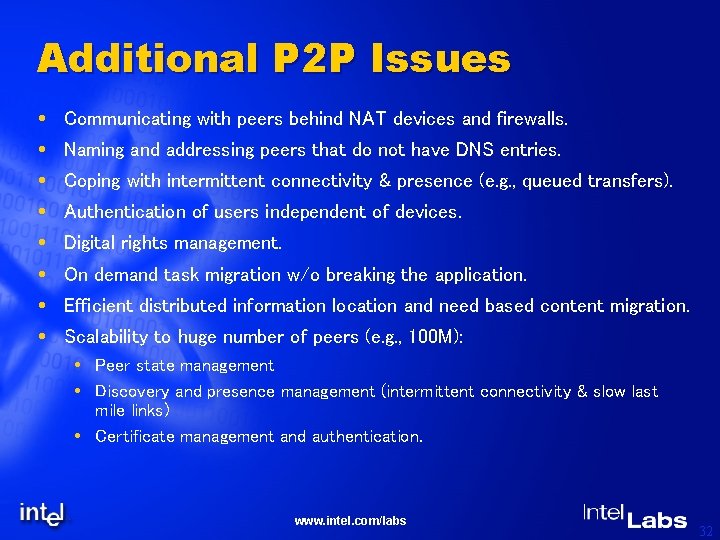

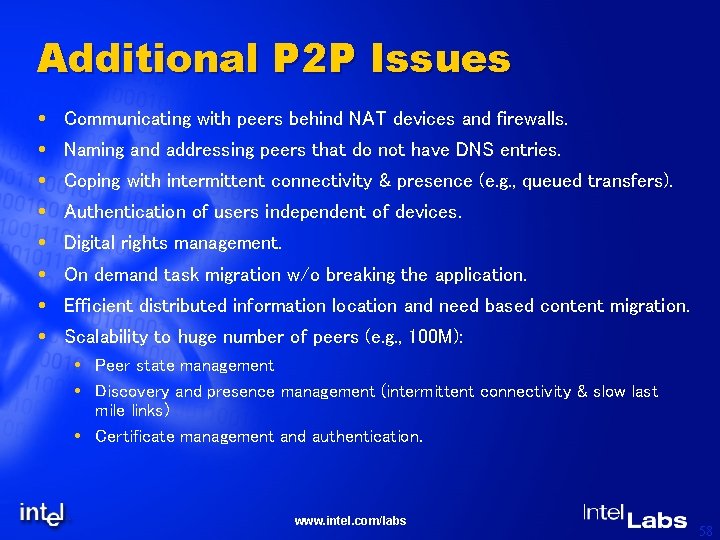

Additional P2P Issues
- Communicating with peers behind NAT devices and firewalls.
- Naming and addressing peers that do not have DNS entries.
- Coping with intermittent connectivity and presence (e.g., queued transfers).
- Authentication of users independent of devices.
- Digital rights management.
- On-demand task migration without breaking the application.
- Efficient distributed information location and need-based content migration.
- Scalability to a huge number of peers (e.g., 100 M):
  - Peer state management.
  - Discovery and presence management (intermittent connectivity and slow last-mile links).
  - Certificate management and authentication.

Part 3: Performance Study
Goals:
1. Define a performance model including
   - Network model
   - File storage and access model
   - File caching and propagation model
2. Discuss sample results
3. Discuss architectural impacts

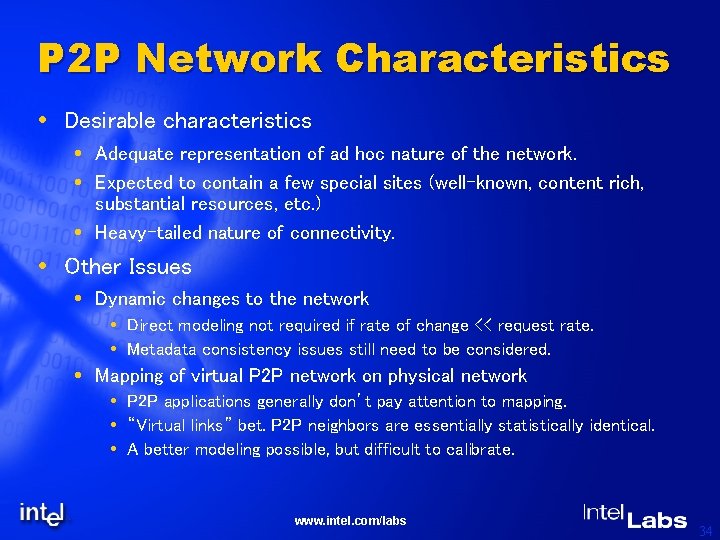

P2P Network Characteristics
- Desirable characteristics:
  - Adequate representation of the ad hoc nature of the network.
  - Expected to contain a few special sites (well-known, content rich, substantial resources, etc.).
  - Heavy-tailed nature of connectivity.
- Other issues:
  - Dynamic changes to the network:
    - Direct modeling is not required if the rate of change << the request rate.
    - Metadata consistency issues still need to be considered.
  - Mapping of the virtual P2P network onto the physical network:
    - P2P applications generally don't pay attention to the mapping.
    - "Virtual links" between P2P neighbors are essentially statistically identical.
    - Better modeling is possible, but difficult to calibrate.

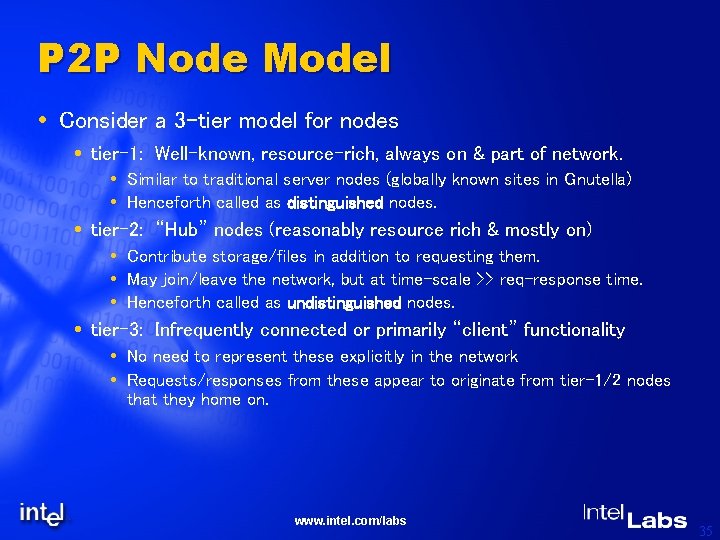

P2P Node Model
- Consider a 3-tier model for nodes.
- Tier 1: well-known, resource-rich, always on and part of the network.
  - Similar to traditional server nodes (globally known sites in Gnutella).
  - Henceforth called distinguished nodes.
- Tier 2: "hub" nodes (reasonably resource rich and mostly on).
  - Contribute storage/files in addition to requesting them.
  - May join/leave the network, but at a time scale >> the request-response time.
  - Henceforth called undistinguished nodes.
- Tier 3: infrequently connected, or primarily "client" functionality.
  - No need to represent these explicitly in the network.
  - Requests/responses from these appear to originate from the tier-1/2 nodes that they home on.

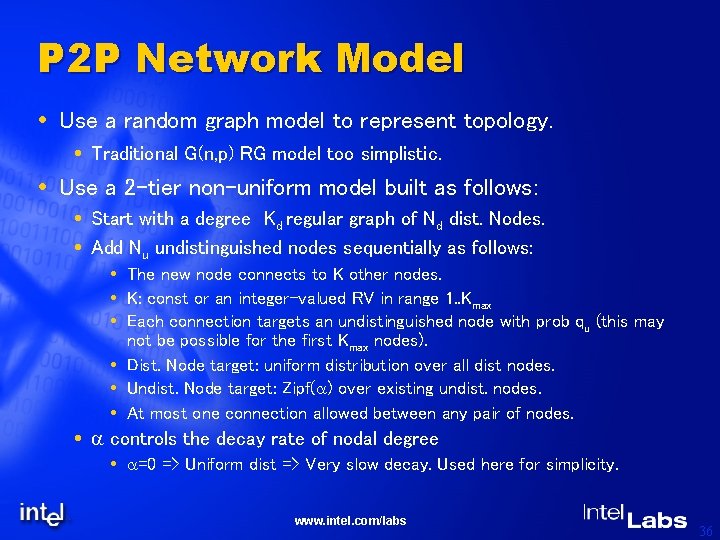

P2P Network Model
- Use a random graph model to represent the topology.
- The traditional G(n, p) random graph model is too simplistic.
- Use a 2-tier non-uniform model built as follows:
  - Start with a degree-Kd regular graph of Nd distinguished nodes.
  - Add Nu undistinguished nodes sequentially as follows:
    - The new node connects to K other nodes.
    - K: a constant or an integer-valued random variable in the range 1..Kmax.
    - Each connection targets an undistinguished node with probability qu (this may not be possible for the first Kmax nodes).
    - Distinguished node target: uniform distribution over all distinguished nodes.
    - Undistinguished node target: Zipf(α) over the existing undistinguished nodes.
    - At most one connection is allowed between any pair of nodes.
- α controls the decay rate of the nodal degree.
  - α = 0 => uniform distribution => very slow decay. Used here for simplicity.
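The construction steps above can be sketched as a small generator. This is one possible reading under stated assumptions, not the authors' simulator: the function name `build_overlay` is hypothetical, the regular graph of distinguished nodes is built as a circulant ring (which needs an even Kd smaller than Nd), K is taken as a constant, the Zipf rank of an undistinguished node is assumed to be its arrival order, and a connection falls back to the other tier when the chosen tier has no unused target left.

```python
import random

def build_overlay(n_dist, k_dist, n_undist, k, q_u, alpha=0.0, seed=0):
    """Return an adjacency dict {node: set(neighbors)} for the 2-tier model."""
    rng = random.Random(seed)
    adj = {i: set() for i in range(n_dist)}
    # Degree-k_dist regular graph on the distinguished nodes (circulant ring).
    for i in range(n_dist):
        for step in range(1, k_dist // 2 + 1):
            j = (i + step) % n_dist
            adj[i].add(j)
            adj[j].add(i)
    dist_nodes = list(range(n_dist))
    undist_nodes = []                       # kept in arrival order
    for new in range(n_dist, n_dist + n_undist):
        adj[new] = set()
        for _ in range(k):
            # Candidates not yet linked to the new node (at most one edge per pair).
            free_u = [(rank, u) for rank, u in enumerate(undist_nodes, start=1)
                      if u not in adj[new]]
            free_d = [d for d in dist_nodes if d not in adj[new]]
            if not free_u and not free_d:
                break
            if free_u and (not free_d or rng.random() < q_u):
                # Zipf(alpha) over existing undistinguished nodes; alpha = 0 is uniform.
                weights = [rank ** -alpha for rank, _ in free_u]
                target = rng.choices([u for _, u in free_u], weights=weights, k=1)[0]
            else:
                target = rng.choice(free_d)  # uniform over distinguished nodes
            adj[new].add(target)
            adj[target].add(new)
        undist_nodes.append(new)
    return adj
```

For example, `build_overlay(10, 4, 90, 3, 0.5)` produces a 100-node overlay with 10 distinguished nodes; the parameter values here are illustrative only.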

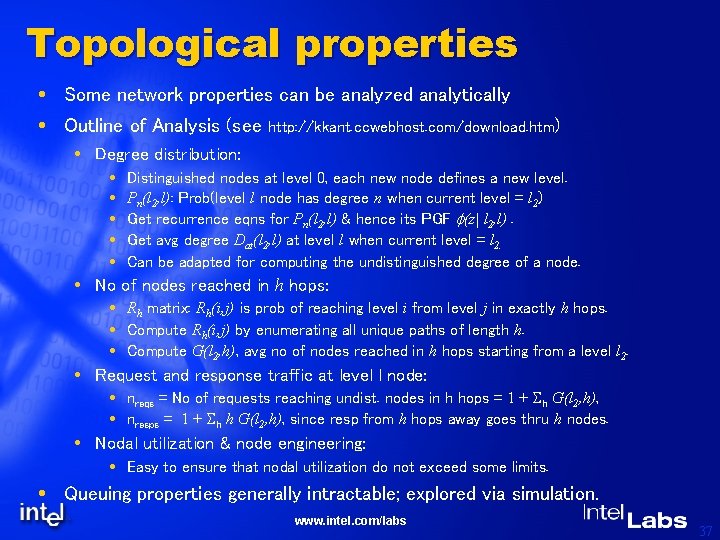

Topological properties
- Some network properties can be derived analytically. Outline of the analysis (see http://kkant.ccwebhost.com/download.htm):
- Degree distribution:
  - Distinguished nodes are at level 0; each new node defines a new level.
  - $P_n(l_2, l)$: Prob(a level-$l$ node has degree $n$ when the current level is $l_2$).
  - Get recurrence equations for $P_n(l_2, l)$ and hence its PGF $f(z \mid l_2, l)$.
  - Get the average degree $\mathrm{Dat}(l_2, l)$ at level $l$ when the current level is $l_2$.
  - Can be adapted for computing the undistinguished degree of a node.
- Number of nodes reached in $h$ hops:
  - $R_h$ matrix: $R_h(i, j)$ is the probability of reaching level $i$ from level $j$ in exactly $h$ hops.
  - Compute $R_h(i, j)$ by enumerating all unique paths of length $h$.
  - Compute $G(l_2, h)$, the average number of nodes reached in $h$ hops starting from a level $l_2$.
- Request and response traffic at a level-$l$ node:
  - $n_{reqs}$ = number of requests reaching undistinguished nodes in $h$ hops $= 1 + \sum_h G(l_2, h)$.
  - $n_{resps} = 1 + \sum_h h\,G(l_2, h)$, since a response from $h$ hops away goes through $h$ nodes.
- Nodal utilization and node engineering:
  - Easy to ensure that nodal utilizations do not exceed some limits.
  - Queuing properties are generally intractable; explored via simulation.
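The two traffic sums above are simple to evaluate once the per-hop reach values are known. The sketch below assumes that the list `reached_per_hop` holds G(l2, h) for h = 1, 2, ... as the average number of nodes first reached at exactly h hops; the function name and the sample numbers are made up for illustration.

```python
def request_response_load(reached_per_hop):
    """Evaluate n_reqs = 1 + sum_h G(h) and n_resps = 1 + sum_h h * G(h).

    reached_per_hop[h - 1] is assumed to be G(l2, h) for hop count h.
    """
    n_reqs = 1 + sum(reached_per_hop)
    # A response from h hops away passes through h nodes on its way back.
    n_resps = 1 + sum(h * g for h, g in enumerate(reached_per_hop, start=1))
    return n_reqs, n_resps
```

With a hypothetical reach of 3 nodes at one hop and 6 more at two hops, the call `request_response_load([3, 6])` gives 10 requests and 16 response transits.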

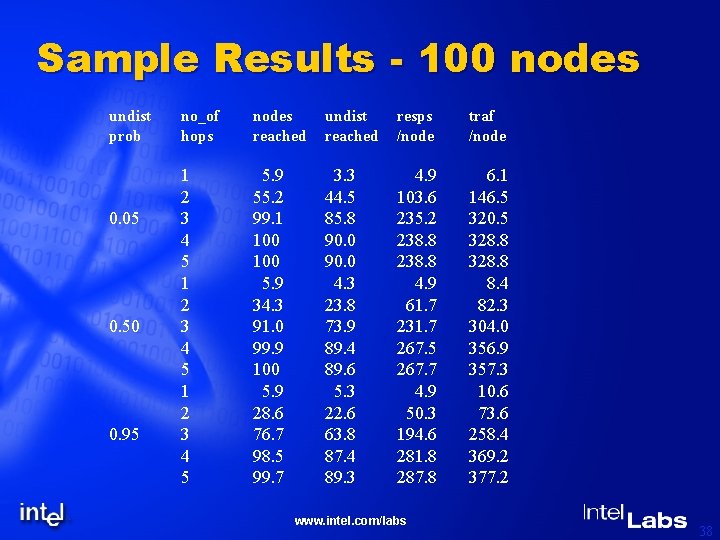

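Sample Results - 100 nodes

| Metric | undist prob | 1 hop | 2 hops | 3 hops | 4 hops | 5 hops |
| --- | --- | --- | --- | --- | --- | --- |
| nodes reached | 0.05 | 5.9 | 55.2 | 99.1 | 100 | |
| nodes reached | 0.50 | 5.9 | 34.3 | 91.0 | 99.9 | 100 |
| nodes reached | 0.95 | 5.9 | 28.6 | 76.7 | 98.5 | 99.7 |
| undist reached | 0.05 | 3.3 | 44.5 | 85.8 | 90.0 | |
| undist reached | 0.50 | 4.3 | 23.8 | 73.9 | 89.4 | 89.6 |
| undist reached | 0.95 | 5.3 | 22.6 | 63.8 | 87.4 | 89.3 |
| resps/node | 0.05 | 4.9 | 103.6 | 235.2 | 238.8 | |
| resps/node | 0.50 | 4.9 | 61.7 | 231.7 | 267.5 | 267.7 |
| resps/node | 0.95 | 4.9 | 50.3 | 194.6 | 281.8 | 287.8 |
| traf/node | 0.05 | 6.1 | 146.5 | 320.5 | 328.8 | |
| traf/node | 0.50 | 8.4 | 82.3 | 304.0 | 356.9 | 357.3 |
| traf/node | 0.95 | 10.6 | 73.6 | 258.4 | 369.2 | 377.2 |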

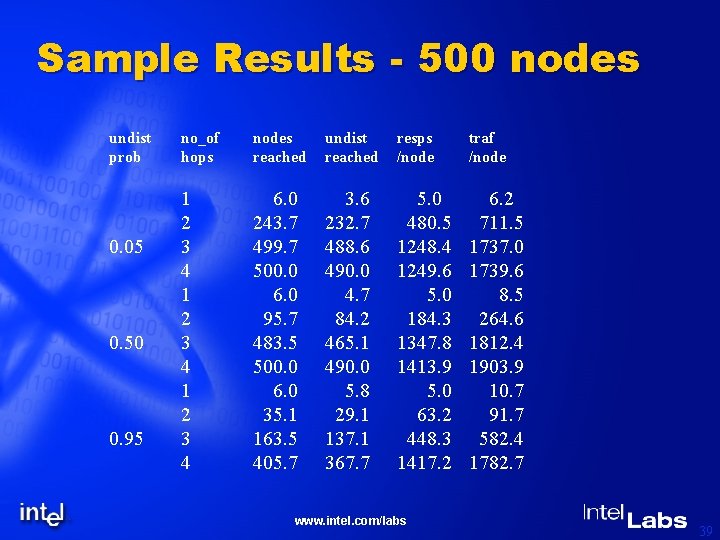

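Sample Results - 500 nodes

| Metric | undist prob | 1 hop | 2 hops | 3 hops | 4 hops |
| --- | --- | --- | --- | --- | --- |
| nodes reached | 0.05 | 6.0 | 243.7 | 499.7 | 500.0 |
| nodes reached | 0.50 | 6.0 | 95.7 | 483.5 | 500.0 |
| nodes reached | 0.95 | 6.0 | 35.1 | 163.5 | 405.7 |
| undist reached | 0.05 | 3.6 | 232.7 | 488.6 | 490.0 |
| undist reached | 0.50 | 4.7 | 84.2 | 465.1 | 490.0 |
| undist reached | 0.95 | 5.8 | 29.1 | 137.1 | 367.7 |
| resps/node | 0.05 | 5.0 | 480.5 | 1248.4 | 1249.6 |
| resps/node | 0.50 | 5.0 | 184.3 | 1347.8 | 1413.9 |
| resps/node | 0.95 | 5.0 | 63.2 | 448.3 | 1417.2 |
| traf/node | 0.05 | 6.2 | 711.5 | 1737.0 | 1739.6 |
| traf/node | 0.50 | 8.5 | 264.6 | 1812.4 | 1903.9 |
| traf/node | 0.95 | 10.7 | 91.7 | 582.4 | 1782.7 |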

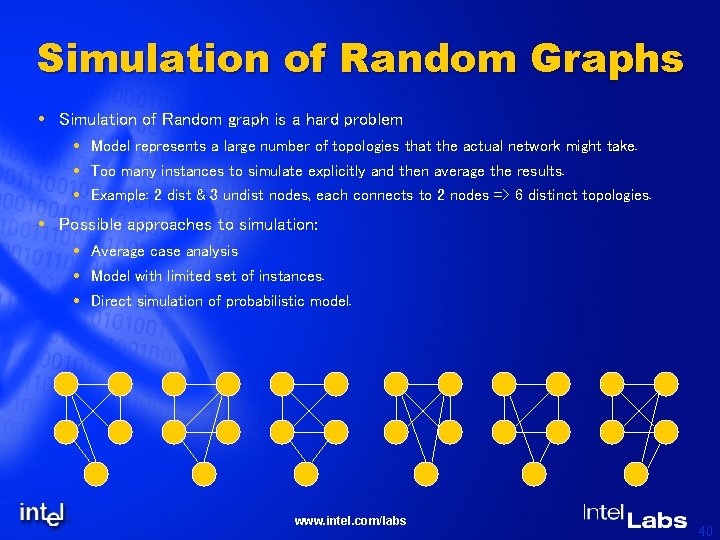

Simulation of Random Graphs
- Simulating a random graph is a hard problem.
  - The model represents a large number of topologies that the actual network might take.
  - There are too many instances to simulate each explicitly and then average the results.
  - Example: 2 distinguished and 3 undistinguished nodes, each connecting to 2 nodes => 6 distinct topologies.
- Possible approaches to simulation:
  - Average case analysis.
  - Model with a limited set of instances.
  - Direct simulation of the probabilistic model.

Average case analysis
- Intended environment:
  - To study the performance of an "average" network defined by the random graph model.
  - No dynamic changes to the topology possible.
- Graph construction:
  - Start with the regular graph of distinguished nodes (as usual).
  - For adding undistinguished nodes, work with only the average connectivities Kd and Ku for an incoming node.
  - Always connect to the existing node with minimum connectivity.
  - ⌊Kd⌋ and ⌈Kd⌉ can be used successively to handle non-integer Kd values (similarly for Ku).
- Characteristics/issues:
  - Simple, only one graph to deal with in simulation.
  - Gives correct average reachability and nodal utilizations.
  - All queuing metrics (including average response time) are underestimated.

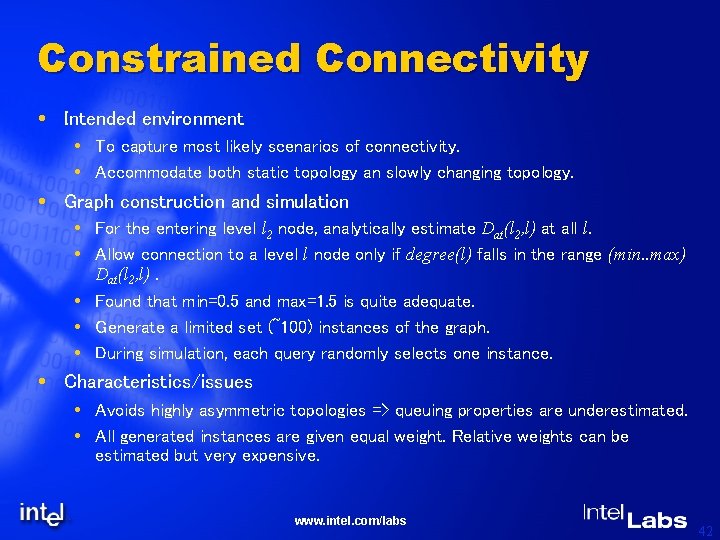

Constrained Connectivity
- Intended environment:
  - To capture the most likely scenarios of connectivity.
  - Accommodates both a static topology and a slowly changing topology.
- Graph construction and simulation:
  - For the entering level-$l_2$ node, analytically estimate $\mathrm{Dat}(l_2, l)$ at all $l$.
  - Allow connection to a level-$l$ node only if degree($l$) falls in the range (min..max) × $\mathrm{Dat}(l_2, l)$.
  - Found that min = 0.5 and max = 1.5 is quite adequate.
  - Generate a limited set (~100) of instances of the graph.
  - During simulation, each query randomly selects one instance.
- Characteristics/issues:
  - Avoids highly asymmetric topologies => queuing properties are underestimated.
  - All generated instances are given equal weight. Relative weights can be estimated, but this is very expensive.

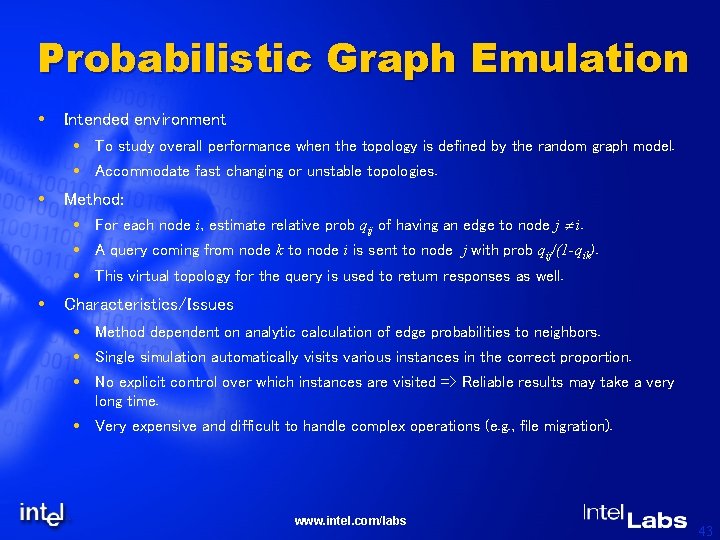

Probabilistic Graph Emulation
- Intended environment:
  - To study overall performance when the topology is defined by the random graph model.
  - Accommodates fast changing or unstable topologies.
- Method:
  - For each node $i$, estimate the relative probability $q_{ij}$ of having an edge to node $j \neq i$.
  - A query coming from node $k$ to node $i$ is sent to node $j$ with probability $q_{ij}/(1 - q_{ik})$.
  - This virtual topology for the query is used to return responses as well.
- Characteristics/issues:
  - The method depends on analytic calculation of edge probabilities to neighbors.
  - A single simulation automatically visits various instances in the correct proportion.
  - No explicit control over which instances are visited => reliable results may take a very long time.
  - Very expensive, and difficult to handle complex operations (e.g., file migration).
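The forwarding rule in the method above is a renormalization that excludes the node the query came from. The sketch below only computes those probabilities; it assumes that the relative edge probabilities of node i sum to 1 over all candidate neighbors, and the name `forwarding_probs` and the sample values are hypothetical.

```python
def forwarding_probs(q_i, came_from):
    """Return {j: q_ij / (1 - q_ik)} for all j != k, where k = came_from.

    q_i -- dict mapping candidate neighbor j to the relative edge
           probability q_ij of node i (assumed to sum to 1).
    """
    q_back = q_i.get(came_from, 0.0)      # q_ik, mass on the reverse edge
    return {j: q / (1.0 - q_back) for j, q in q_i.items() if j != came_from}

# Node i with three candidate neighbors; the query arrived from "k".
example = forwarding_probs({"j1": 0.5, "j2": 0.3, "k": 0.2}, "k")
```

In this example the query goes to j1 with probability 0.625 and to j2 with probability 0.375, so the remaining mass is again a proper distribution.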

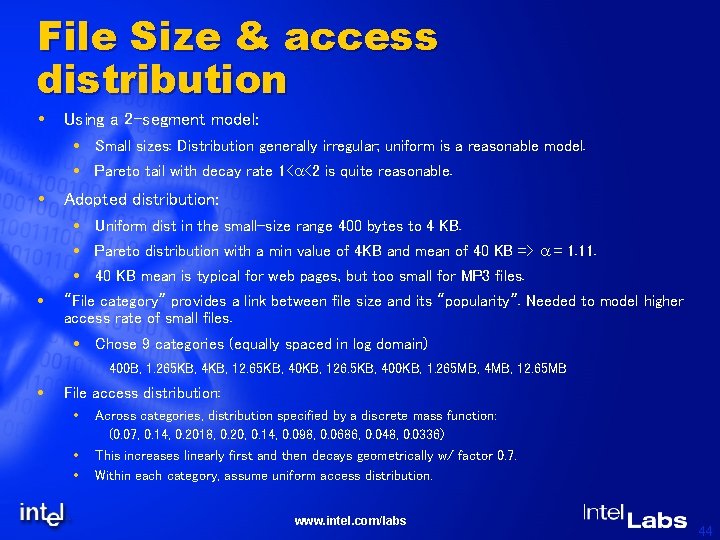

File Size & access distribution
- Using a 2-segment model:
  - Small sizes: the distribution is generally irregular; uniform is a reasonable model.
  - A Pareto tail with decay rate 1 < α < 2 is quite reasonable.
- Adopted distribution:
  - Uniform distribution in the small-size range of 400 bytes to 4 KB.
  - Pareto distribution with a minimum value of 4 KB and a mean of 40 KB => α = 1.11.
  - A 40 KB mean is typical for web pages, but too small for MP3 files.
- "File category" provides a link between file size and its "popularity".
  - Needed to model the higher access rate of small files.
  - Chose 9 categories (equally spaced in the log domain) with boundaries 400 B, 1.265 KB, 4 KB, 12.65 KB, 40 KB, 126.5 KB, 400 KB, 1.265 MB, 4 MB, 12.65 MB.
- File access distribution:
  - Across categories, the distribution is specified by a discrete mass function: (0.07, 0.14, 0.2018, 0.20, 0.14, 0.098, 0.0686, 0.048, 0.0336).
  - This increases linearly first and then decays geometrically with factor 0.7.
  - Within each category, assume a uniform access distribution.
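The Pareto shape quoted above follows from the standard mean of a Pareto distribution, mean = α·x_min/(α − 1), which rearranges to α = mean/(mean − x_min). The short sketch below checks that value and draws a file category from the slide's mass function; the helper names are assumptions made for the example.

```python
import random

# Access probabilities of the 9 file categories, copied from the slide.
CATEGORY_PMF = [0.07, 0.14, 0.2018, 0.20, 0.14, 0.098, 0.0686, 0.048, 0.0336]

def pareto_shape(mean, x_min):
    """Shape alpha of a Pareto distribution with the given mean and minimum."""
    return mean / (mean - x_min)

def sample_category(rng):
    """Draw a category index 0..8 according to CATEGORY_PMF."""
    return rng.choices(range(len(CATEGORY_PMF)), weights=CATEGORY_PMF, k=1)[0]

alpha = pareto_shape(40.0, 4.0)   # sizes in KB: mean 40 KB, minimum 4 KB
category = sample_category(random.Random(1))
```

Here `alpha` evaluates to 40/36, about 1.11, in line with the slide, and the mass function sums to 1.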

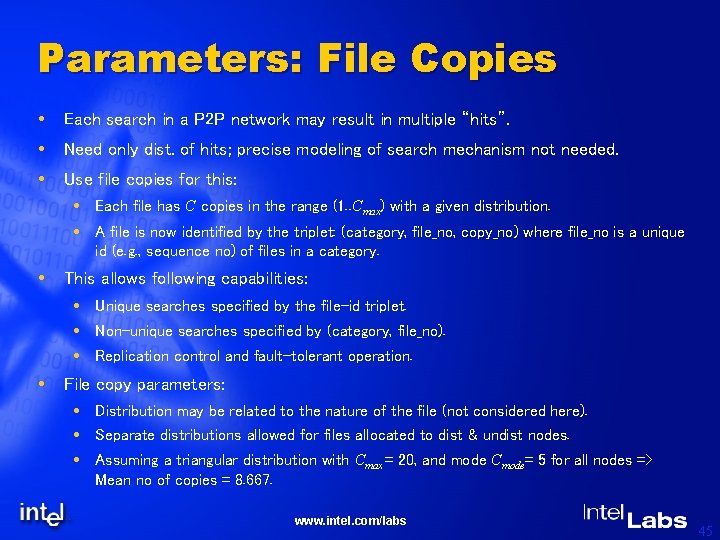

Parameters: File Copies
- Each search in a P2P network may result in multiple "hits".
  - Only the distribution of hits is needed; precise modeling of the search mechanism is not.
- Use file copies for this:
  - Each file has C copies in the range (1..Cmax) with a given distribution.
  - A file is now identified by the triplet (category, file_no, copy_no), where file_no is a unique id (e.g., sequence number) of files in a category.
- This allows the following capabilities:
  - Unique searches specified by the file-id triplet.
  - Non-unique searches specified by (category, file_no).
  - Replication control and fault-tolerant operation.
- File copy parameters:
  - The distribution may be related to the nature of the file (not considered here).
  - Separate distributions are allowed for files allocated to distinguished and undistinguished nodes.
  - Assuming a triangular distribution with Cmax = 20 and mode Cmode = 5 for all nodes => mean number of copies = 8.667.
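The quoted mean can be checked against the standard formula for a triangular distribution, treating the number of copies as a continuous variable on [1, Cmax] with mode Cmode:

```latex
\[
  E[C] \;=\; \frac{C_{\min} + C_{\max} + C_{\mathrm{mode}}}{3}
       \;=\; \frac{1 + 20 + 5}{3}
       \;=\; \frac{26}{3} \;\approx\; 8.667 .
\]
```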

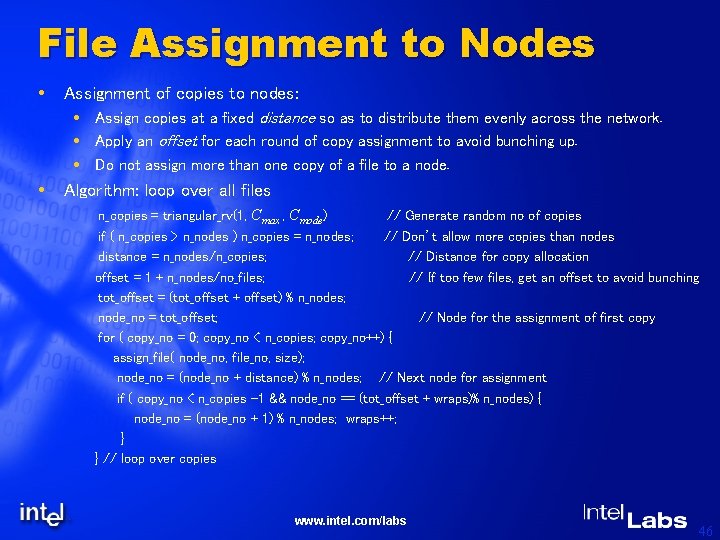

File Assignment to Nodes
- Assignment of copies to nodes:
  - Assign copies at a fixed distance so as to distribute them evenly across the network.
  - Apply an offset for each round of copy assignment to avoid bunching up.
  - Do not assign more than one copy of a file to a node.
- Algorithm:

      loop over all files
          n_copies = triangular_rv(1, Cmax, Cmode)       // Generate random no of copies
          if (n_copies > n_nodes) n_copies = n_nodes;    // Don't allow more copies than nodes
          distance = n_nodes / n_copies;                 // Distance for copy allocation
          offset = 1 + n_nodes / no_files;               // If too few files, get an offset to avoid bunching
          tot_offset = (tot_offset + offset) % n_nodes;
          node_no = tot_offset;                          // Node for the assignment of first copy
          for (copy_no = 0; copy_no < n_copies; copy_no++) {
              assign_file(node_no, file_no, size);
              node_no = (node_no + distance) % n_nodes;  // Next node for assignment
              if (copy_no < n_copies - 1 && node_no == (tot_offset + wraps) % n_nodes) {
                  node_no = (node_no + 1) % n_nodes;
                  wraps++;
              }
          }                                              // loop over copies
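The assignment loop above can be sketched as runnable Python. Some details are assumptions, since the slide leaves them open: `wraps` is reset to 0 for every file, `tot_offset` starts at 0, the triangular draw is rounded to the nearest integer, and `assign_file` is replaced by recording the chosen node numbers in a dictionary.

```python
import random

def assign_files(n_nodes, n_files, c_max=20, c_mode=5, seed=0):
    """Return {file_no: [node numbers holding a copy]} for all files."""
    rng = random.Random(seed)
    placement = {}
    offset = 1 + n_nodes // n_files         # per-file shift to avoid bunching
    tot_offset = 0
    for file_no in range(n_files):
        # Random number of copies, never more than the number of nodes.
        n_copies = int(round(rng.triangular(1, c_max, c_mode)))
        n_copies = max(1, min(n_copies, n_nodes))
        distance = n_nodes // n_copies      # spacing between successive copies
        tot_offset = (tot_offset + offset) % n_nodes
        node_no = tot_offset                # node for the first copy
        wraps = 0                           # assumption: reset for each file
        nodes = []
        for copy_no in range(n_copies):
            nodes.append(node_no)           # stands in for assign_file(...)
            node_no = (node_no + distance) % n_nodes
            # If the walk came back to its starting point, step one node over.
            if copy_no < n_copies - 1 and node_no == (tot_offset + wraps) % n_nodes:
                node_no = (node_no + 1) % n_nodes
                wraps += 1
        placement[file_no] = nodes
    return placement

placement = assign_files(n_nodes=100, n_files=50)
```

Because the spacing is the integer quotient of nodes by copies, the copies of one file land on distinct nodes, which is the third requirement listed on the slide.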

Query Characteristics
- Assumptions:
  - No queries (searches) are started from distinguished nodes, since these nodes are essentially "servers".
  - Identical query arrival process at each undistinguished node.
- Arrival process model:
  - An on-off process with identical Pareto distributions for the on and off periods: $P(X > x) = (x/T)^{-\gamma}$ for $x > T$.
  - Assume T = 12 secs and γ = 1.4, which gives E(X) = 30 secs.
  - Constant inter-arrival time of 4 secs during the on period, no traffic during the off period.
  - Total traffic at a node is the superposition of arrivals from all reachable nodes.
  - Approximately a self-similar process with Hurst parameter H = (3 - γ)/2 = 0.8 when the number of reachable nodes is large.
- Query properties:
  - Each query specifies a file (category, file_no) with given access characteristics.
  - The results shown do not specify copy_no => multiple hits are possible for each query.
  - A query percolates for h "hops" (h = 3 can cover 95% of the nodes for the chosen graph).
  - If a query arrives at a node more than once, it is not propagated.
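A minimal generator for the on-off arrival process of a single node is sketched below. It assumes the classical Pareto form with survival function (x/T)^(-γ) for x > T, sampled by inverse transform, and it assumes that the first query of each on period is issued at the start of that period; the function names are hypothetical.

```python
import random

def pareto_period(t_min, gamma, rng):
    """Draw a period length X with P(X > x) = (x / t_min) ** -gamma, x > t_min."""
    u = 1.0 - rng.random()                # uniform on (0, 1]
    return t_min * u ** (-1.0 / gamma)

def on_off_arrivals(horizon, t_min=12.0, gamma=1.4, gap=4.0, seed=0):
    """Query arrival times in [0, horizon) for one undistinguished node."""
    rng = random.Random(seed)
    t, arrivals = 0.0, []
    while t < horizon:
        on_end = t + pareto_period(t_min, gamma, rng)
        a = t
        while a < min(on_end, horizon):   # constant spacing while "on"
            arrivals.append(a)
            a += gap
        t = on_end + pareto_period(t_min, gamma, rng)   # silent "off" period
    return arrivals

def hurst(gamma):
    """Hurst parameter of the aggregate traffic, H = (3 - gamma) / 2."""
    return (3.0 - gamma) / 2.0

arrivals = on_off_arrivals(10000.0)
```

Superposing such streams from many nodes is what leads to the approximately self-similar aggregate mentioned on the slide.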

File Retrieval
- Query response:
  - A query reaching a node generates a found/not found response, which travels backwards along the search path.
  - The querying node runs a timer Tu; all responses after the timeout are ignored.
  - Currently there is no concept of retrying timed-out requests.
  - Distribution of Tu: triangular in the range (3, 14) secs with mean 8.0 secs.
- File retrieval:
  - Randomly choose one of the positively responding nodes for file retrieval.
  - Requested file(s) are obtained directly (i.e., do not follow the response path).
  - The retrieved file may optionally be cached at the requesting node.
- File cache flushing:
  - Used as a way of modeling dynamic changes in tier-3 nodes (which are not represented).
  - A cache flush represents a tier-3 user disconnecting and being replaced by another statistically identical tier-3 node.
  - Number of cycles before cache flushing: Zipf with min = 30, max = 120 and α = 1.0.
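The timeout and source-selection rules above can be sketched as follows. The slide gives only the range and the mean of the timer; the mode used here is derived from the triangular mean formula (mean = (low + high + mode)/3), and the tuple layout of a response and the function names are assumptions made for the example.

```python
import random

def timer_mode(low, high, mean):
    """Mode of a triangular distribution with the given range and mean."""
    return 3 * mean - low - high

def draw_timeout(rng, low=3.0, high=14.0, mean=8.0):
    """Sample the query timer Tu in seconds."""
    return rng.triangular(low, high, timer_mode(low, high, mean))

def pick_source(responses, timeout, rng):
    """Choose a node to fetch the file from, or None if no usable hit.

    responses -- list of (node, delay_secs, found) tuples; responses that
                 arrive after the timeout are ignored.
    """
    on_time = [node for node, delay, found in responses
               if found and delay <= timeout]
    return rng.choice(on_time) if on_time else None

rng = random.Random(0)
replies = [("B", 2.0, True), ("C", 9.0, True), ("D", 1.0, False)]
```

With a 5-second timer only B is both positive and on time, so `pick_source(replies, 5.0, rng)` selects B; the file would then be fetched from B directly rather than along the response path.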

Simulation Results

Major Observations

Conclusions & Future Work
- Covered in the tutorial:
  - Introduced major developments relevant to P2P computing.
  - Introduced sample middleware functionality to support P2P applications.
  - Introduced a taxonomy for classifying P2P computing applications and environments.
  - Discussed major research issues to be resolved.
  - Proposed a random graph model for P2P networks and studied its properties.
  - Studied some performance issues for P2P deployments using detailed simulation of file-sharing applications.
- Potential future work:
  - Further refinement of middleware functionality and taxonomy as newer P2P applications emerge.
  - More comprehensive performance studies, particularly going beyond simple file sharing.

Backup

Goals
- Define Peer-to-Peer.
- General idea about the Peer-to-Peer applications and frameworks.
- Identify the requirements of Peer-to-Peer applications.
- Examine a taxonomy for Peer-to-Peer.
- Performance. (Not clear what we write here)

Ad-hoc Collaborative computing
- Several applications, e.g., telemedicine, military planning, video-conferencing, document editing.
- A group of peers discover one another and form an ad-hoc network.
- Peers set up the necessary communication channels (perhaps secure) and distribute objects.
- Peers do arbitrary real-time computation, perhaps involving multiparty synchronization.
- Results are collected and the network is disbanded.

JXTA
- At the highest abstraction, defines a set of protocols:
  - Peers and peer groups: an arbitrary grouping of peers; group members share resources and services.
  - Services: a basic set is defined (e.g., discovery, membership, access control, resolver, communication, etc.).
  - Pipes: unidirectional, asynchronous communication channels. A peer can dynamically connect to or disconnect from any existing pipe within the peer group.
  - Messages: arbitrarily sized, with source and destination addresses in URI form.
  - Advertisements: a "properties" record needed for name resolution, availability, etc. Specified as an XML document.

P2P Services
- Basic
  - Network services
    - Core communication functionality.
    - Enable communication on various network topologies, such as direct or via firewalls.
    - Enable communication in the face of intermittent connectivity.
  - Event and exception management services
    - Publish and subscribe model.
  - Storage services
    - Low-level file services.
  - Metadata services
    - Generic mechanism for publishing and obtaining metadata for
      - devices
      - resources (files, CPU, memory, etc.)

P2P Services
- Security services
  - Identification
  - Authentication
  - Access control
  - Integrity
  - Confidentiality
  - Audit trail
- User and group management services
- Resource management and placement services
- Advanced
  - Naming
  - Search
  - Discovery
  - Administrative
  - Auditing
  - File services


Web Sites of Interest
- Napster (http://www.napster.com)
- Gnutella (http://gnutella.wego.com)
- Freenet (http://freenet.sourceforge.net)
- JXTA (http://www.jxta.org)
- Avaki Corp (http://www.avaki.com)
- Legion (http://legion.virginia.edu)
- Globe (http://www.cs.vu.nl/~steen/globe)
- Globus (http://www.globus.org)
- Microsoft .NET (http://www.microsoft.com/net)

Web sites of interest
- Peer-to-Peer Working Group (http://www.p2pwg.org)
- CenterSpan (http://www.centerspan.com)
- vTrails (http://www.vtrails.com)
- SETI@Home (http://setiathome.ssl.berkeley.edu)
