SWAT: Designing Resilient Hardware by Treating Software Anomalies

Man-Lap (Alex) Li, Pradeep Ramachandran, Swarup K. Sahoo, Siva Kumar Sastry Hari, Rahmet Ulya Karpuzcu, Sarita Adve, Vikram Adve, Yuanyuan Zhou
Department of Computer Science, University of Illinois at Urbana-Champaign
swat@cs.uiuc.edu

Motivation
• Hardware failures will happen in the field
  – Aging, soft errors, inadequate burn-in, design defects, …
  Need in-field detection, diagnosis, recovery, repair
• Reliability problem pervasive across many markets
  – Traditional redundancy (e.g., nMR) too expensive
  – Piecemeal solutions for specific fault models too expensive
  – Must incur low area, performance, power overhead
Today: low-cost solution for multiple failure sources

Observations
• Need to handle only hardware faults that propagate to software
• Fault-free case remains common, must be optimized
Watch for software anomalies (symptoms)
  – Hardware fault detection ~ software bug detection
  – Zero to low overhead “always-on” monitors
Diagnose cause after symptom detected
  – May incur high overhead, but rarely invoked
SWAT: SoftWare Anomaly Treatment

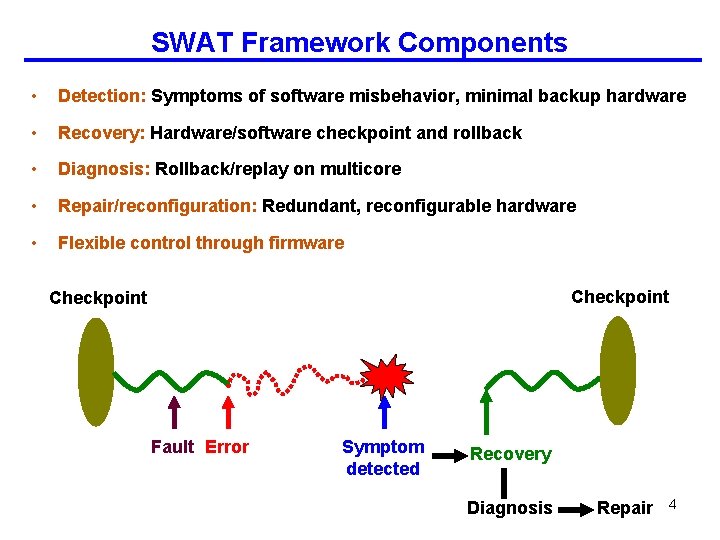

SWAT Framework Components
• Detection: Symptoms of software misbehavior, minimal backup hardware
• Recovery: Hardware/software checkpoint and rollback
• Diagnosis: Rollback/replay on multicore
• Repair/reconfiguration: Redundant, reconfigurable hardware
• Flexible control through firmware
[Figure: timeline with labels Checkpoint, Fault, Error, Symptom detected, Recovery, Diagnosis, Repair]


SWAT Framework Components
1. Detectors w/ simple hardware [ASPLOS ’08]
2. Detectors w/ compiler support [DSN ’08a]
3. Trace-Based Fault Diagnosis [DSN ’08b]
4. Accurate Fault Models [HPCA ’09]
[Figure: timeline with labels Checkpoint, Fault, Error, Symptom detected, Recovery, Diagnosis, Repair]

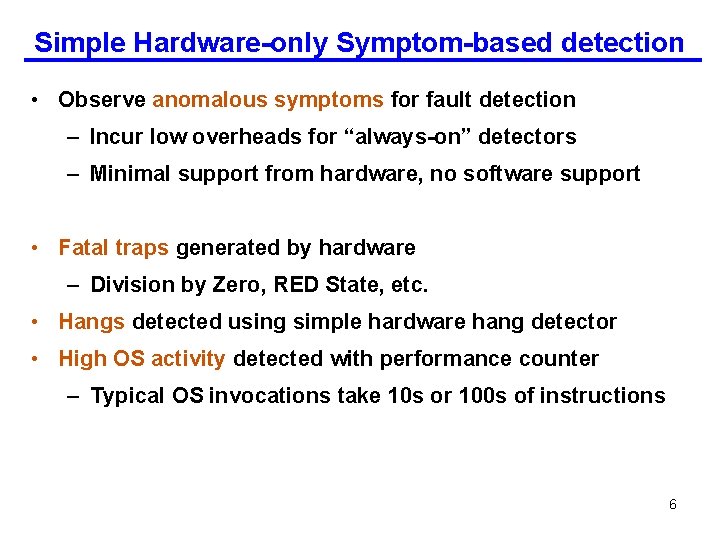

Simple Hardware-only Symptom-based Detection
• Observe anomalous symptoms for fault detection
  – Incur low overheads for “always-on” detectors
  – Minimal support from hardware, no software support
• Fatal traps generated by hardware
  – Division by Zero, RED State, etc.
• Hangs detected using simple hardware hang detector
• High OS activity detected with performance counter
  – Typical OS invocations take 10s or 100s of instructions
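The three symptom classes on this slide can be pictured as one cheap check over a few counters. The following is a minimal sketch, not the detectors from the talk: the `Sample` fields, the two thresholds, and the notion of "instructions since progress" are all hypothetical stand-ins, since the slides give no concrete values or mechanisms.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical thresholds; the slides do not give concrete numbers.
HANG_WINDOW = 1_000           # instructions without progress before flagging a hang
OS_INSTR_THRESHOLD = 10_000   # far above the "10s or 100s" typical of an OS invocation


@dataclass
class Sample:
    """One snapshot of the (assumed) monitor state."""
    fatal_trap: Optional[str]    # e.g. "division_by_zero", "red_state", or None
    instrs_since_progress: int   # assumed input to a simple hang detector
    contiguous_os_instrs: int    # assumed performance-counter reading


def detect_symptom(s: Sample) -> Optional[str]:
    """Return a symptom label, or None when execution looks normal."""
    if s.fatal_trap is not None:
        return f"fatal_trap:{s.fatal_trap}"
    if s.instrs_since_progress >= HANG_WINDOW:
        return "hang"
    if s.contiguous_os_instrs >= OS_INSTR_THRESHOLD:
        return "high_os"
    return None
```

In the fault-free case every check falls through and `None` is returned, which mirrors the goal of keeping the always-on path nearly free.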

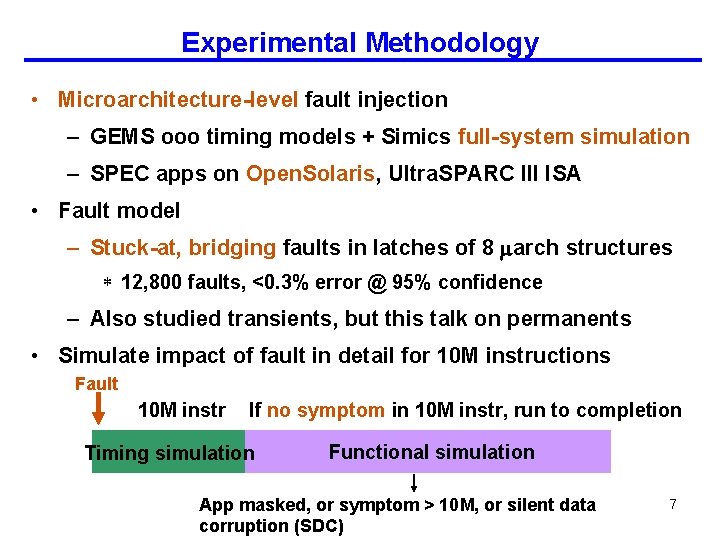

Experimental Methodology
• Microarchitecture-level fault injection
  – GEMS out-of-order (ooo) timing models + Simics full-system simulation
  – SPEC apps on OpenSolaris, UltraSPARC III ISA
• Fault model
  – Stuck-at, bridging faults in latches of 8 arch structures
    * 12,800 faults, <0.3% error @ 95% confidence
  – Also studied transients, but this talk is on permanents
• Simulate impact of fault in detail for 10M instructions
[Figure: fault injected, then timing simulation for 10M instr; if no symptom in 10M instr, run to completion in functional simulation; outcome is app masked, or symptom > 10M, or silent data corruption (SDC)]
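To make the two fault models concrete, here is a minimal sketch of how a stuck-at or bridging fault could be applied to a latch value held as an integer. The function names are hypothetical, and the wired-AND/wired-OR behavior is one common textbook abstraction of a bridge, assumed here rather than taken from the talk.

```python
def stuck_at(value: int, bit: int, stuck: int) -> int:
    """Force one bit of a latch value to 0 or 1, whatever was written."""
    mask = 1 << bit
    return value | mask if stuck else value & ~mask


def bridge(value: int, bit_a: int, bit_b: int, wired_and: bool = True) -> int:
    """Short two bits together: both take the AND (or OR) of their fault-free values."""
    a = (value >> bit_a) & 1
    b = (value >> bit_b) & 1
    shorted = (a & b) if wired_and else (a | b)
    cleared = value & ~((1 << bit_a) | (1 << bit_b))
    return cleared | (shorted << bit_a) | (shorted << bit_b)
```

Note that `stuck_at(0b1010, 1, 1)` returns the input unchanged: the fault is present but not activated by this value, which is one way a fault ends up masked.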

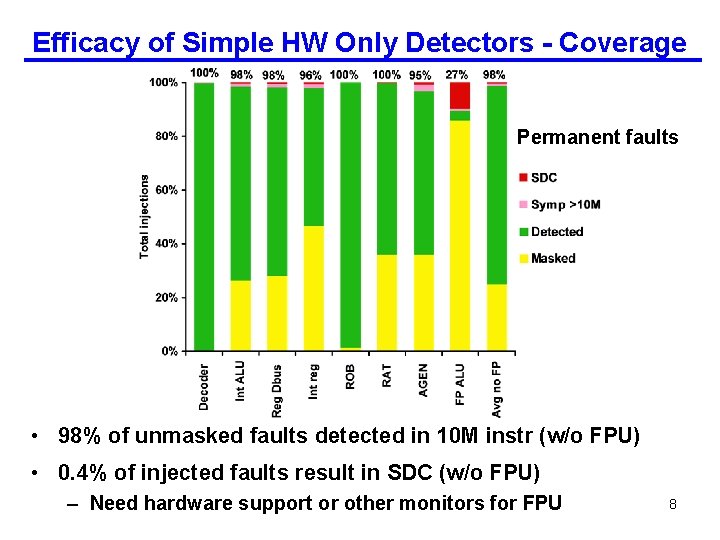

Efficacy of Simple HW-Only Detectors: Coverage
Permanent faults
• 98% of unmasked faults detected in 10M instr (w/o FPU)
• 0.4% of injected faults result in SDC (w/o FPU)
  – Need hardware support or other monitors for FPU

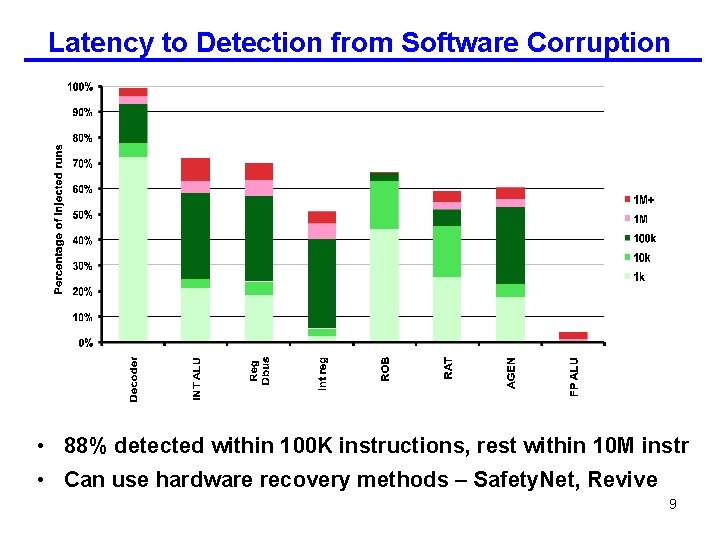

Latency to Detection from Software Corruption
• 88% detected within 100K instructions, rest within 10M instr
• Can use hardware recovery methods
  – SafetyNet, ReVive

Conclusions So Far
SWAT approach feasible and attractive
• Very low-cost hardware detectors already effective
  – 98% coverage, only 0.4% SDC for 7 of 8 structures
• Next
  – Can we get even better coverage, especially SDC rate?



Improving SWAT Detection Coverage
Can we improve coverage, SDC rate further?
• SDC faults primarily corrupt data values
  – Illegal control/address values caught by other symptoms
  – Need detectors to capture “semantic” information
• Software-level invariants capture program semantics
  – Use when higher coverage desired
  – Sound program invariants require expensive static analysis
  – We use likely program invariants

Likely Program Invariants
• Likely program invariants
  – Hold on all observed inputs, expected to hold on others
  – But suffer from false positives
  – Use SWAT diagnosis to detect false positives on-line
• iSWAT invariant detectors
  – Range-based value invariants [Sahoo et al., DSN ’08]
  – Check MIN ≤ value ≤ MAX on data values
  – Disable invariant when diagnosed as a false positive
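A range-based likely invariant can be sketched in a few lines: widen the range over training values, flag anything outside it at run time, and allow the check to be switched off once diagnosis decides a violation was a false positive. The class and method names below are hypothetical, not iSWAT's actual interface.

```python
from typing import Optional


class RangeInvariant:
    """MIN <= value <= MAX, learned from training runs (a likely invariant)."""

    def __init__(self) -> None:
        self.min: Optional[int] = None
        self.max: Optional[int] = None
        self.enabled = True

    def train(self, value: int) -> None:
        """Widen the range to include a value observed during training."""
        self.min = value if self.min is None else min(self.min, value)
        self.max = value if self.max is None else max(self.max, value)

    def violated(self, value: int) -> bool:
        """True if the value falls outside the trained range and the check is active."""
        if not self.enabled or self.min is None:
            return False
        return not (self.min <= value <= self.max)

    def disable(self) -> None:
        """Called when diagnosis classifies a violation as a false positive."""
        self.enabled = False
```

Because the range only reflects observed inputs, a legitimate value on a new input can trip the check, which is why the disable path is part of the design.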

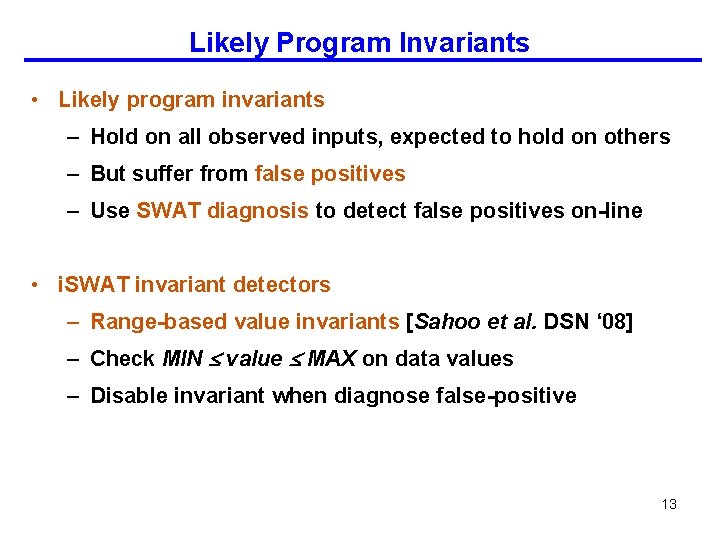

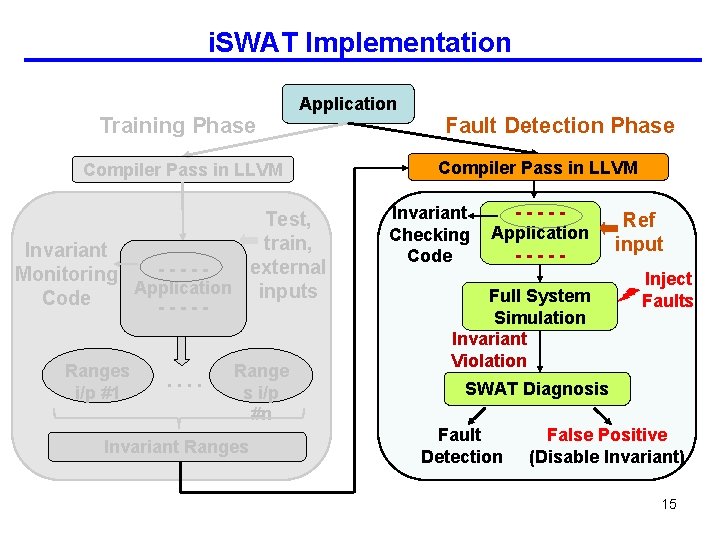

iSWAT Implementation
[Figure, training phase: the application goes through a compiler pass in LLVM that adds invariant monitoring code; it is run on test, train, and external inputs; the ranges from i/p #1 … i/p #n are combined into invariant ranges]
[Figure, fault detection phase: the application goes through a compiler pass in LLVM that adds invariant checking code; it runs in full-system simulation on the ref input with injected faults; an invariant violation is passed to SWAT diagnosis, which reports either fault detection or a false positive (disable invariant)]

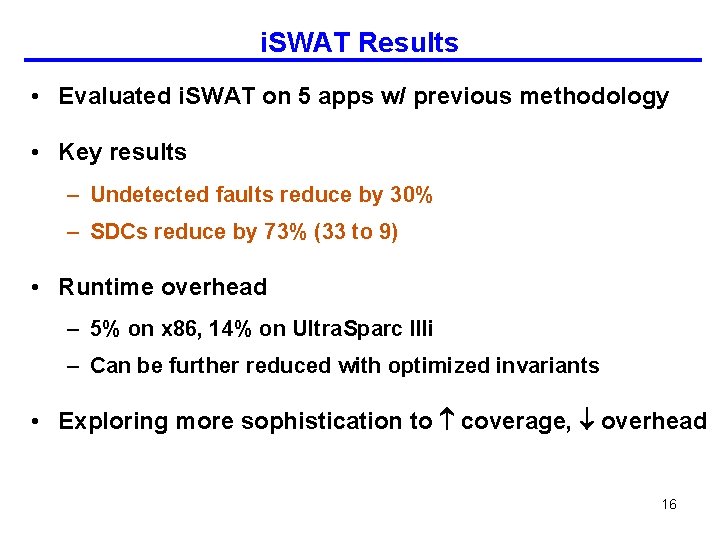

iSWAT Results
• Evaluated iSWAT on 5 apps w/ previous methodology
• Key results
  – Undetected faults reduced by 30%
  – SDCs reduced by 73% (33 to 9)
• Runtime overhead
  – 5% on x86, 14% on UltraSPARC IIIi
  – Can be further reduced with optimized invariants
• Exploring more sophistication to improve coverage, overhead
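As a quick check of the SDC figure, the relative reduction follows directly from the two counts on the slide (33 before, 9 after):

```latex
\[
\frac{33 - 9}{33} = \frac{24}{33} \approx 0.727 \approx 73\%
\]
```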



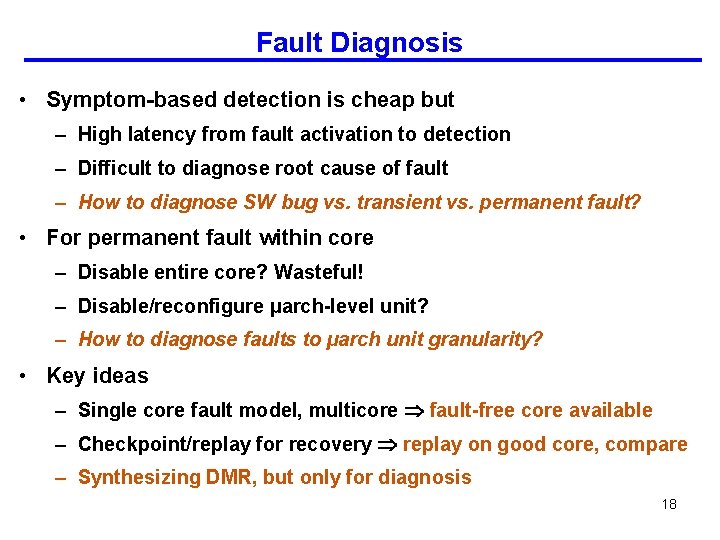

Fault Diagnosis
• Symptom-based detection is cheap but
  – High latency from fault activation to detection
  – Difficult to diagnose root cause of fault
  – How to diagnose SW bug vs. transient vs. permanent fault?
• For permanent fault within core
  – Disable entire core? Wasteful!
  – Disable/reconfigure µarch-level unit?
  – How to diagnose faults to µarch unit granularity?
• Key ideas
  – Single-core fault model on a multicore, so a fault-free core is available
  – Checkpoint/replay already exists for recovery, so replay on good core and compare
  – Synthesizing DMR, but only for diagnosis

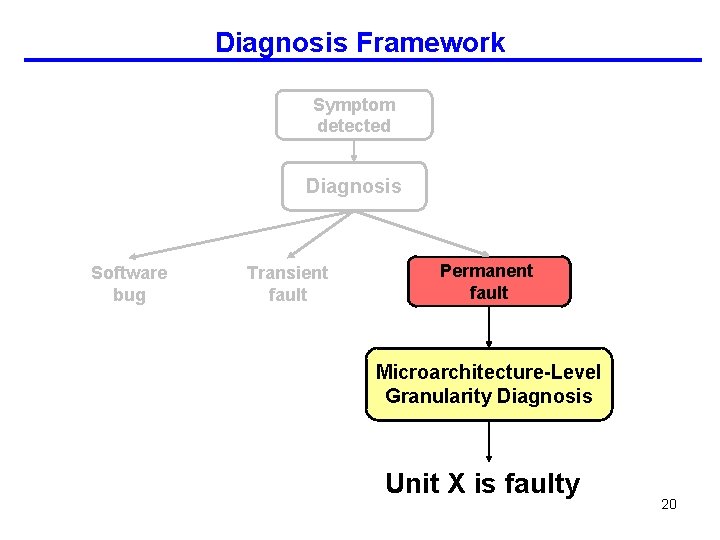

SW Bug vs. Transient vs. Permanent
• Rollback/replay on same/different core
• Watch if symptom reappears
Decision flow after a symptom is detected:
1. Rollback and replay on the faulty core
   – No symptom: transient h/w fault or non-deterministic s/w bug; continue execution
   – Symptom: permanent h/w fault, deterministic s/w bug, or false positive (iSWAT); go to step 2
2. Rollback/replay on good core
   – No symptom: permanent h/w fault, needs repair!
   – Symptom: false positive (iSWAT) or deterministic s/w bug (send to s/w layer)
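The two-step replay decision on this slide maps onto a short function. This is a sketch under assumptions: each callable is a hypothetical hook that rolls back to the checkpoint, replays, and returns `True` if the symptom reappears; the returned labels are illustrative strings, not names from the talk.

```python
from typing import Callable


def diagnose(replay_on_faulty_core: Callable[[], bool],
             replay_on_good_core: Callable[[], bool]) -> str:
    """Classify a detected symptom by replaying from the checkpoint.

    Each callable returns True if the symptom recurs during its replay.
    """
    if not replay_on_faulty_core():
        # Symptom vanished on the same core: nothing persistent to repair.
        return "transient_hw_fault_or_nondeterministic_sw_bug"
    if not replay_on_good_core():
        # Symptom follows the core, not the software.
        return "permanent_hw_fault"
    # Symptom follows the software even on a fault-free core.
    return "deterministic_sw_bug_or_false_positive"
```

The second replay only runs when the first one reproduces the symptom, so the more expensive cross-core step stays off the common path.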

Diagnosis Framework
[Figure: Symptom detected → Diagnosis → software bug, transient fault, or permanent fault; permanent fault → microarchitecture-level granularity diagnosis → “Unit X is faulty”]

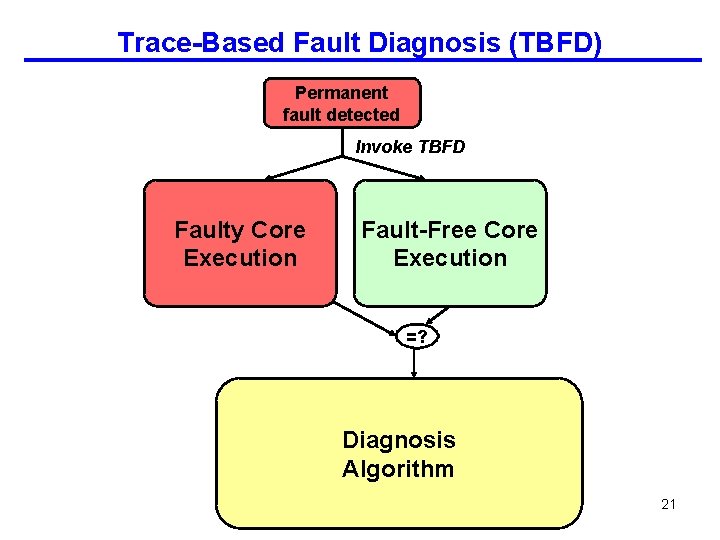

Trace-Based Fault Diagnosis (TBFD)
[Figure: Permanent fault detected → invoke TBFD → rollback faulty core to checkpoint → replay execution, collect info; the result is compared (=?) against fault-free core execution and passed to the diagnosis algorithm]

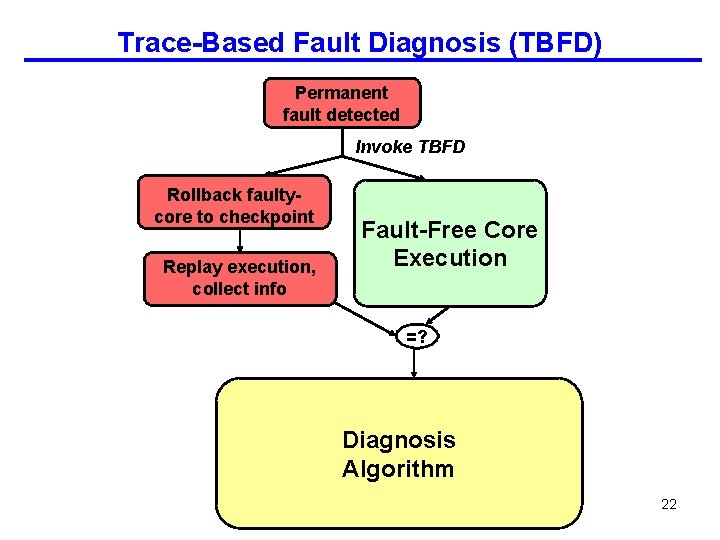

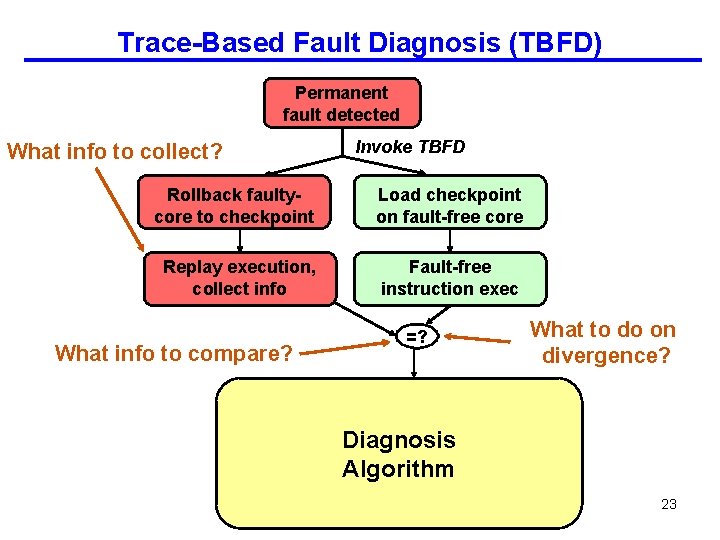

Trace-Based Fault Diagnosis (TBFD)
[Figure: Permanent fault detected → invoke TBFD. Faulty core: rollback to checkpoint, replay execution, collect info. Fault-free core: load checkpoint, fault-free instruction exec. The two are compared (=?) and passed to the diagnosis algorithm]
Questions raised: What info to collect? What info to compare? What to do on divergence?

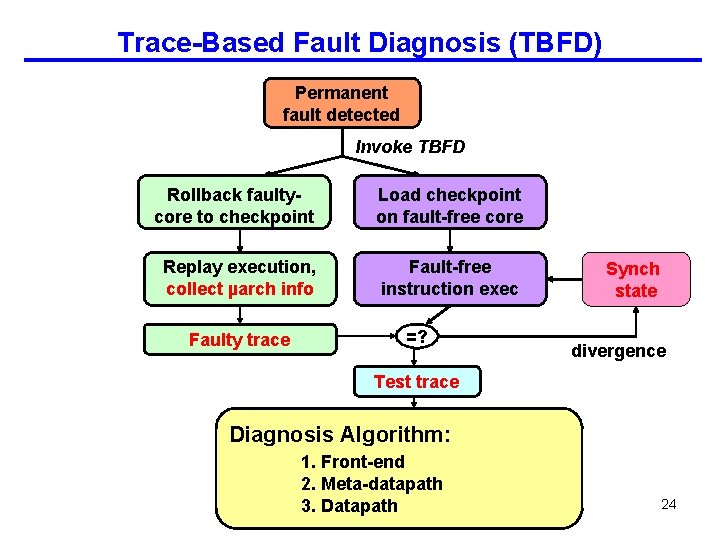

Trace-Based Fault Diagnosis (TBFD)
[Figure: Permanent fault detected → invoke TBFD. Faulty core: rollback to checkpoint, replay execution, collect µarch info (faulty trace). Fault-free core: load checkpoint, fault-free instruction exec (test trace). The traces are compared (=?); on divergence, state is synchronized]
Diagnosis algorithm:
1. Front-end
2. Meta-datapath
3. Datapath
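The compare step can be illustrated with a deliberately simplified sketch. It is not the TBFD algorithm from the talk: the `Retired` record, the unit names, and the "count units used by mismatching instructions" heuristic are all assumptions made for illustration. It also assumes state was synchronized after each divergence during trace collection, so every mismatch reflects a fresh fault activation rather than earlier corruption.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Retired:
    """One retired instruction from the faulty core's replay (hypothetical format)."""
    pc: int
    result: int
    units: Tuple[str, ...]   # µarch resources the instruction used, recorded during replay


def suspect_units(faulty_trace: List[Retired],
                  good_results: List[int]) -> List[Tuple[str, int]]:
    """Rank µarch units by how often they were used by mismatching instructions."""
    counts: Counter = Counter()
    for rec, good in zip(faulty_trace, good_results):
        if rec.result != good:          # divergence from fault-free execution
            counts.update(rec.units)
    return counts.most_common()
```

A unit that shows up in every mismatch is the natural first candidate for repair or reconfiguration; units that appear only once are more likely bystanders.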

Diagnosis Results
• 98% of detected faults are diagnosed
  – 89% diagnosed to unique unit/array entry
• Meta-datapath faults in out-of-order execution mislead TBFD



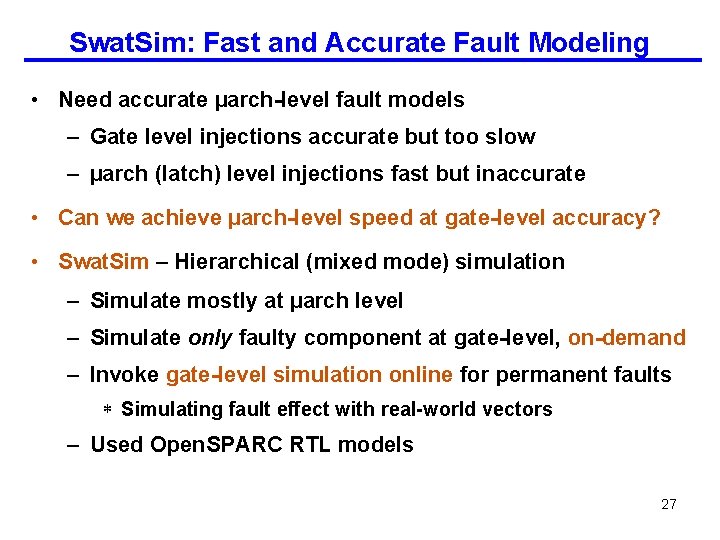

SwatSim: Fast and Accurate Fault Modeling
• Need accurate µarch-level fault models
  – Gate-level injections accurate but too slow
  – µarch (latch) level injections fast but inaccurate
• Can we achieve µarch-level speed at gate-level accuracy?
• SwatSim
  – Hierarchical (mixed-mode) simulation
  – Simulate mostly at µarch level
  – Simulate only faulty component at gate level, on demand
  – Invoke gate-level simulation online for permanent faults
    * Simulating fault effect with real-world vectors
  – Used OpenSPARC RTL models

SWAT-Sim: Gate-level Accuracy at µarch Speeds
[Figure: µarch simulation of an instruction (r3 ← r1 op r2). Faulty unit used? No: continue µarch-level simulation. Yes: the inputs are sent as stimuli to gate-level fault simulation, the output response (r3) is returned with the fault propagated to the output, and µarch simulation continues]
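The dispatch in this figure can be sketched as a single branch: stay in the fast model unless the instruction uses the faulty unit. Everything below is a toy stand-in under stated assumptions; the real gate-level side is an RTL simulation, whereas `gate_level_adder_stuck_bit0` is a hypothetical substitute that just forces output bit 0 to 1.

```python
from typing import Callable

Op = Callable[[int, int], int]


def simulate_op(unit: str, a: int, b: int, faulty_unit: str,
                uarch_model: Op, gate_level_model: Op) -> int:
    """Use the fast µarch model unless the instruction runs on the faulty unit."""
    if unit != faulty_unit:
        return uarch_model(a, b)      # common case: µarch-level speed
    return gate_level_model(a, b)     # on demand: detailed model of the faulty unit


def uarch_add(a: int, b: int) -> int:
    """Fault-free functional model of an adder."""
    return a + b


def gate_level_adder_stuck_bit0(a: int, b: int) -> int:
    """Toy stand-in for gate-level simulation: output bit 0 is stuck at 1."""
    return (a + b) | 1
```

With these toy models, `2 + 2` on the faulty unit yields 5, while `2 + 3` still yields 5 because the fault is masked for that input; this input dependence is what a fixed µarch-level fault model can miss.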

Results from SwatSim
• SwatSim implemented within full-system simulation
  – GEMS+Simics for µarch simulation
  – NCVerilog + VPI for gate-level ALU, AGEN from OpenSPARC models
• Performance overhead
  – 100,000X faster than gate-level full processor simulation
  – 2X slowdown over µarch-level simulation
• Accuracy of µarch fault models using SWAT coverage/latency
  – Compared µarch stuck-at with SwatSim stuck-at, delay
  – µarch fault models generally inaccurate
    * Accuracy varies depending on structure, fault model
    * Differences in activation rate, multi-bit flips
• Unsuccessful attempts to derive more accurate µarch fault models
Need SwatSim, at least for now


Summary – SWAT Works!
1. Detectors w/ simple hardware [ASPLOS ’08]
2. Detectors w/ compiler support [DSN ’08a]
3. Trace-Based Fault Diagnosis [DSN ’08b]
4. Accurate Fault Models [HPCA ’09]
[Figure: timeline with labels Checkpoint, Fault, Error, Symptom detected, Recovery, Diagnosis, Repair]

Summary: SWAT Advantages
• Handles all faults that matter
  – Oblivious to low-level failure modes & masked faults
• Low, amortized overheads
  – Optimize for common case, exploit s/w reliability solutions
• Holistic systems view enables novel, synergistic solutions
  – Invariant detectors use diagnosis mechanisms
  – Diagnosis uses recovery mechanisms
• Customizable and flexible
  – Firmware control can adapt to specific reliability needs
  – E.g., hybrid, app-specific recovery (TBD)
• Beyond hardware reliability
  – SWAT treats hardware faults as software bugs
    * Long-term goal: unified system (hw + sw) reliability at lowest cost
  – Potential applications to post-silicon test and debug

Ongoing and Future Work
• Complete SWAT system implementation
  – Recovery and firmware control w/ OpenSPARC hypervisor/OS
  – Multithreaded software on multicore: initial results promising
• More aggressive detection
  – More aggressive software reliability techniques
  – H/W assertions to complement software (w/ Shobha Vasudevan)
• Modeling
  – Comprehensive SwatSim w/ OpenSPARC RTL for more h/w modules
  – Off-core faults
• Validation on FPGA (w/ Michigan) using Leon-based system
  – Would be nice to have state-of-the-art multicore SPARC system
• Post-silicon debug and test
• Engagements with Sun
  – Student summer intern w/ Dr. Ishwar Parulkar, teleconferences, visits
